Abstract

This article describes The Evidence of Impact Model that was recently developed to support the analysis of short- to medium-term impact arising from Participatory Action Research and Developmental Evaluation approaches that were used within the Evidence for Change initiative. Evidence for Change was a team capacity building initiative that supported rapid, bottom-up, evidence informed change in health and social care environments. To explain the need for The Evidence of Impact Model and outline how it was developed, a variety of similar models are explored and the challenges of capturing impact arising from Evidence for Change are discussed. The resulting new model based on the existing concept of micro, meso and macro levels of impact is outlined in detail with supporting qualitative evidence. The authors conclude that The Evidence of Impact Model provides an effective lens for exploring impact at a variety of levels within an emerging and complex environment.

Introduction

Recent radical reforms outlined within health and social care policy mandate the development of new ways of working across the health and social care sector in England (Department of Health, 2010; Health and Social Care Act, 2012; National Health Service, 2014, 2017). To keep pace with such reforms there is also a need to expand existing impact assessment frameworks, and explore new impact assessment metrics and models. For example, additional measures and mechanisms are needed to assess the impact of the increasing use of qualitative research to support contextualised decisions, public and patient involvement in the co-production of health service redesign (Batalden et al., 2015; Cook, 2012; SCIE, 2015) and the degree to which health inequalities are being addressed (Marmot, 2010; Whitehead, 2014). However, the health and social care sector is not alone in this endeavour: research organisations across the globe are also grappling with how to redefine and broaden their research impact metrics to include social and economic outcomes (Adam et al., 2018).

Participatory Action Research (PAR; Cook, 2012; de Koning and Martin, 1996; Reason and Bradbury, 2008; Ritchie et al., 2014) and Developmental Evaluation (DE; Patton, 2011) approaches offer the potential to support health and social care reforms. This is because they involve participants in the co-production of initiatives and research and utilise an iterative process that enables learning, development and change.

The difference between PAR and DE is subtle. PAR, founded by Paulo Freire in 1982, is predominantly focused upon challenging systems to tackle the inequalities and disadvantages created by historical societal structures of power and wealth. The research undertaken is led by the participants with the support of researchers (de Koning and Martin, 1996; Reason and Bradbury, 2008; Ritchie et al., 2014). DE, on the other hand, as described by Patton (2011), was developed to enable innovation in a time of flux and uncertainty by using iterative and purposeful reflection on processes and outcomes to create services or products that are fit for purpose. While participants are heavily involved in DE, the evaluation is guided by evaluators working as part of the initiative design team.

Evidence for Change (EfC), as described in Harper and Dickson (2019), was developed in 2015 to support health and social care reform in the North West of England. This capacity building initiative enabled multidisciplinary health and social care teams to use scientific knowledge to inform and develop their practice. Given the rapidly changing landscape in health and social care, it was decided that a DE approach would be used to create EfC and that PAR would be used to support the teams that were seeking to create and implement rapid, bottom-up change (Balogun and Hope Hailey, 2004).

Careful consideration was given to finding an appropriate tool to support the identification and analysis of data that informed the EfC evaluation. This was because the majority of impact assessment tools have historically been designed to support more traditional projects where the aims, outcomes and milestones are declared at the outset and used to judge mid and endpoint progress, the so-called ‘distance travelled’. This differs significantly from projects developed through PAR or DE, as the aims and intended outcomes will have been purposely renegotiated during the project’s lifecycle (Patton, 2011; Waterman et al., 2001). Therefore, the EfC evaluators (LH and RD) faced the dilemma of what, when and how to evaluate the impact of EfC. The EfC evaluators also noted the views of Fagen et al. (2011) who warned that the premature measurement of impact arising from DE can stifle innovation. However, the EfC evaluators decided that the rapid, bottom-up change approach used with the EfC teams necessitated the capture and analysis of the short- to medium-term impacts of EfC.

The concept of using levels to measure impact has been widely used within the evaluation of learning, development and capacity building. For example, Kirkpatrick (1996) and Kirkpatrick and Kirkpatrick (2005) explain how to evaluate training outcomes at four levels (reaction, learning, behaviour and results). Kirkpatrick’s model, originally developed in 1959, focuses upon the individual, the job and the organisation. Kaufman and Keller (1994) expand Kirkpatrick’s work to include a fifth level of impact (societal). Saunders (2007, 2011), by contrast, adapts the concept of five levels of impact to include analysis of long-term strategic impacts. Cooke (2005) also uses the concept of levels to measure impacts, including those arising from PAR projects, for individuals, teams, organisations and networks within health and social care.

The EfC evaluators saw merit in exploring levels of impact as a potential means to support their evaluation. Therefore, a priori models with the following potential were considered: (a) the ability to use qualitative data to assess impact across a variety of levels and (b) sufficient flexibility to capture the impact of current government health and social care reforms.

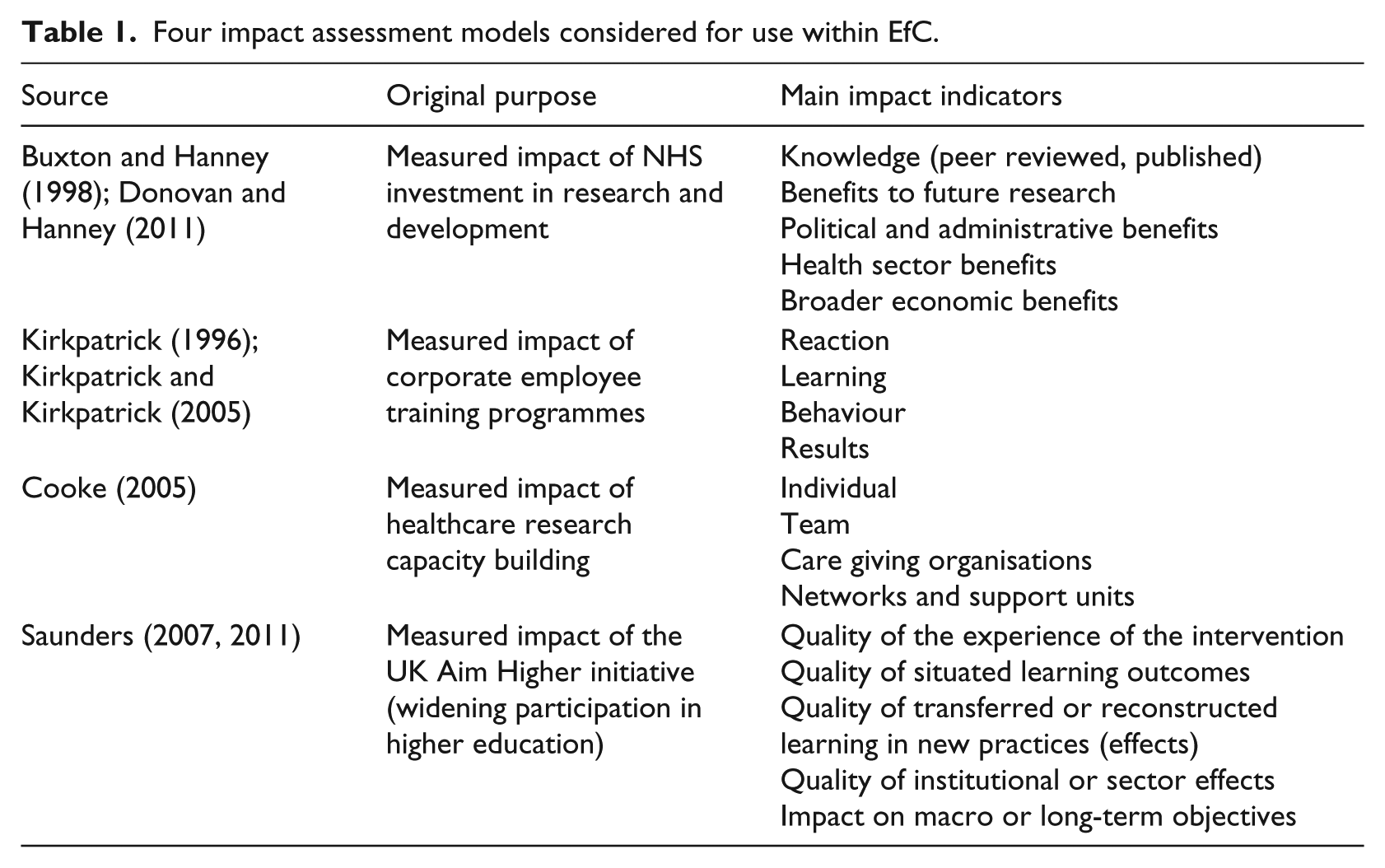

All models within Table 1, except Saunders’ model, were prevalent within the health and social care literature. None of the models identified exactly matched the requirements of the EfC evaluation. For example, all of the models lacked the capacity to explore multidisciplinary, multiagency team impact. In addition, the following key points were noted by the EfC evaluators:

Buxton and Hanney’s (1998) framework was unsuitable as it focused on economic, research and political impacts, with no provision for exploring individual learning.

Kirkpatrick’s (1996) and Kirkpatrick and Kirkpatrick’s (2005) model was unsuitable as it focused on quantitative assessment of private sector corporate training and development.

Cooke’s (2005) framework was unsuitable as it focused on infrastructure, policy, funded national networks and academic impact. The framework also reflected the government policy of that time, rather than current health and social care reforms.

Saunders’ (2007, 2011) model was unsuitable as it lacked sufficient detail to support in-depth analysis of qualitative data.

Table 1. Four impact assessment models considered for use within EfC.

This article therefore aims to (a) outline why the EfC evaluators chose to create a new model to support their work in analysing the impact of the EfC capacity building initiative and (b) explain The Evidence of Impact Model and how it was developed and implemented.

Methods

To create a workable impact assessment model, a ‘best-fit framework’ approach (Booth and Carroll, 2015) was used. From the available models (Table 1), Saunders’ (2007, 2011) model offered the most potential as it explored learning, action and change at micro, meso and macro levels arising from a public sector change initiative. Saunders’ model was therefore selected as a ‘best-fit, single framework’ model (Booth and Carroll, 2015) to support the creation of a new and workable impact assessment model for use within the EfC initiative. A DE approach (Patton, 2011, 2016) was used to guide the model’s creation in real time alongside the development of the EfC initiative, and data collected for the endpoint impact assessment of EfC were used to further refine the model. As outlined in Harper and Dickson (2019), the EfC endpoint impact assessment was informed by data from focus groups, line manager interviews and an online anonymous questionnaire.

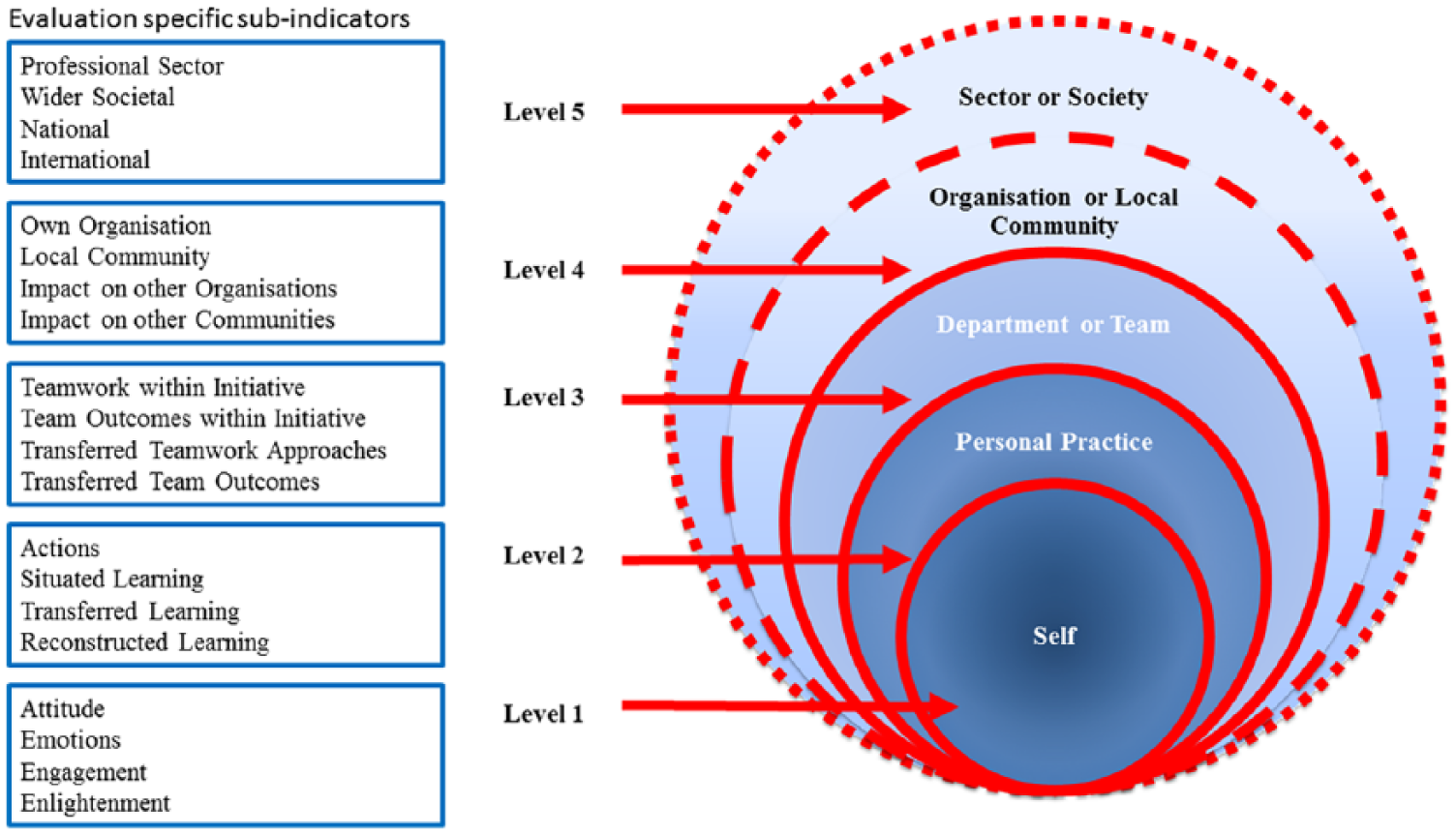

The Evidence of Impact Model was developed in two stages. First, five new main indicators (Figure 1) were developed to reflect the EfC initiative, current policy papers and feedback from EfC’s key stakeholders. Second, four evaluation-specific sub-indicators (Figure 1) were developed for each level of the model. This was achieved by a process of deductive and inductive analysis (Braun and Clarke, 2006; Gale et al., 2013). Starting with deductive analysis, the raw EfC data from the EfC endpoint impact assessment were coded against the main indicators from The Evidence of Impact Model (Figure 1). Next, key topics within each level were derived by inductively analysing the refined data and these topics were used to create the four evaluation-specific sub-indicators shown within each level of the model (Figure 1). The data were then recoded against these newly identified evaluation-specific sub-indicators to reveal even finer complexities at each level.

Figure 1. The Evidence of Impact Model.

The model’s development was conducted by one researcher (LH) and checked by a second researcher (RD) as well as an external independent evaluator from Lancaster University.

The Evidence of Impact Model was designed to capture the short- to medium-term impacts arising from EfC using qualitative research methods. Therefore, impact was captured between 1 and 9 months after the initiative had concluded, allowing sufficient time for actions and reflections to occur. The methods used to conduct the EfC evaluation are outlined in Harper and Dickson (2019).

Ethical considerations – everyone taking part in the follow-up evaluation of EfC gave their informed consent. Ethical approval from the University of Liverpool was also granted to subsequently involve the team members’ line managers (Reference no: IPHS-1516-147) (Harper and Dickson, 2019).

Results

The model has five distinct levels (Figure 1), starting from the microlevel of individuals (Levels 1 and 2), rising through to teams (Level 3) and organisations or local communities (Level 4) and finally impacting at the macrolevel (Level 5) to demonstrate professional sector or societal change. Four topics within each level are identified, as seen in Figure 1. Furthermore, sub-topics for each level are also discussed within the following text and, for illustrative purposes, examples of text that helped to define the model are provided in Table 2. As Saunders’ (2007, 2011) model shares some similarities with Kirkpatrick’s (1996) model, references to relevant learning concepts within Kirkpatrick’s model have also been noted in the following text.

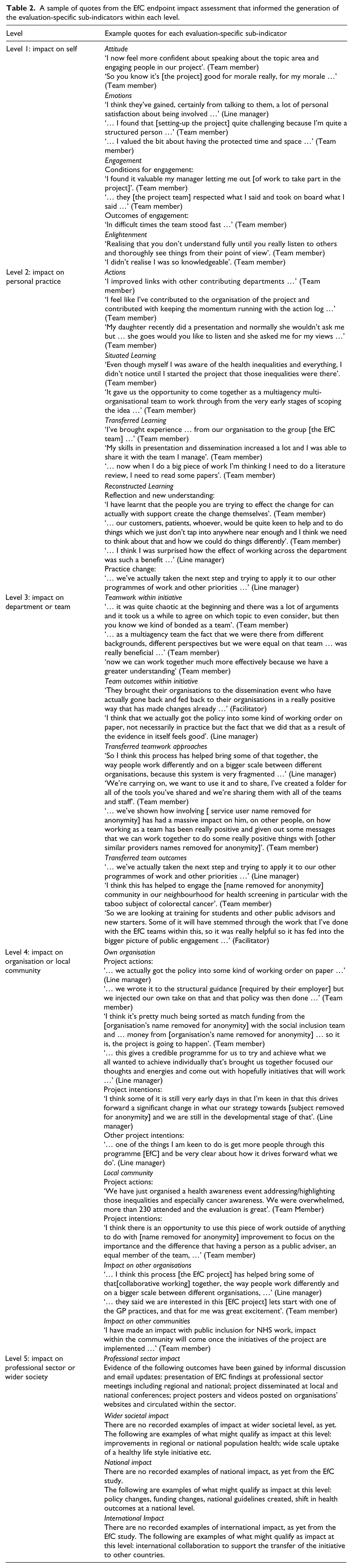

Table 2. A sample of quotes from the EfC endpoint impact assessment that informed the generation of the evaluation-specific sub-indicators within each level.

The indicators within each level of the model are the following:

Level 1 – Self. This level seeks to explore the personal impact of the initiative upon the individual participant. Level 1 is about the individual’s own thoughts and observations; however, the individual may not necessarily transfer their learning into action or practice.

The four evaluation-specific sub-indicators within Level 1 are as follows:

Attitude – for example, increased or decreased confidence, motivation or morale.

Emotions – for example, enjoyment, personal satisfaction, feeling challenged or feeling valued.

Engagement – within EfC there were two sub-sets of engagement:

Conditions for engagement, for example, feeling supported and respected, or having policies and processes that enabled engagement.

Outcomes arising from engagement, such as increased collegiality, networking or being enabled to shift perceptions (either their own or those of others).

Enlightenment – for example, being able to see things from a new perspective, gaining new insights or gaining an enhanced understanding.

Level 2 – Personal practice. This level seeks to explore the degree to which an individual applies their learning from the initiative.

The four evaluation-specific sub-indicators within Level 2 are as follows:

Actions – this refers to the direct action taken by participants as a result of being involved in the initiative. For example, increases or decreases in networking, communication, teamwork, use of skills and knowledge in the workplace, community or home.

Situated learning – this refers to learning that arose from the initiative’s intended design and is therefore situated within the learning opportunity offered by the initiative (Kirkpatrick, 1996; Saunders, 2007, 2011). For example, a planned learning outcome within EfC was being able to identify and tackle health inequalities. Support was provided within the workshops for participants to engage with this key concept. Situated learning was therefore deemed to have occurred if participants reported either identifying or tackling health inequalities within their team’s project.

Transferred learning – this refers to an individual being able to transfer learning from one context to another (Kirkpatrick, 1996; Saunders, 2007, 2011). For example, within EfC this included:

Participants who learnt from EfC and transferred their learning to a different project or another part of their role either at work, socially or at home.

Participants who transferred their existing knowledge and skills from elsewhere to support the development of their EfC team or project.

Reconstructed learning – this refers to an individual who gains new insights through critical reflection and uses these insights to develop and deploy a new course of action to solve a problem (Bray et al., 2000; Egan, 1998; Kolb, 1984). Within EfC, reconstructed learning was observed as a two-step process:

Step 1: reflection leading to new understanding. For example, one participant realised that the public members of the team were central to shaping the project to ensure it was accepted by the local community.

Step 2: practice change. For example, the participant noted in Step 1 used their experience, knowledge and new insights gained through EfC to adapt organisational policy and procedure to enable members of the public to be involved in future projects.

Level 3 – Department or Team. This level seeks to explore the degree to which departmental or team behaviours, actions or practices have changed and spread as a result of the initiative.

The four evaluation-specific sub-indicators within Level 3 are as follows:

Teamwork within initiative – this refers to evidence of the team working together as a team of equal peers. For example, evidence of team bonding, the sharing of emotional support, knowledge, expertise, responsibilities and resources.

Team outcomes within initiative – this refers to evidence of outcomes that arose from the team’s project as a result of their teamwork. For example, finding and analysing evidence to inform new organisational policy and developing a tool to increase local community communication.

Transferred teamwork approaches – this refers to evidence that team members transferred their teamwork skills and knowledge to other teams that they worked with beyond their EfC project. For example, networking and collaborating with others, using teamwork principles in other teams, preparing and supporting others to change by sharing information.

Transferred team outcomes – this refers to evidence that team members influenced outcomes in other teams by applying their learning from EfC. For example, informing wider practice improvement, persuading other organisations and community members to donate time and resources to the project, and using robust evidence to inform commissioning decisions in other teams.

Level 4 – Organisation or local community. This level explores whether changes have taken place at organisational and/or local community level and whether these changes have spread beyond the organisation or local community.

The four evaluation-specific sub-indicators within Level 4 are as follows:

Own organisation – this refers to changes that have been made at an organisational level as a result of the initiative. The examples provided by the organisations involved in EfC fell into three categories:

Actions and changes that took place to support the EfC project, for example, writing organisational work procedures and routinely using evidence to make commissioning decisions associated with the project.

Applying learning from the EfC project elsewhere within the host organisation, for example, adopting EfC project tools and policies.

Future project intentions, such as the intention to roll out the EfC project more widely across the organisation and the intention to involve the public in future projects.

Local community – this refers to changes within the local community that have taken place as a result of the initiative. The examples provided by the teams involved in EfC fell into three categories:

Local community project actions, where actions were taken up by the local community as part of the project, such as local community engagement in raising awareness of taboo health subjects.

Other local community project actions, where actions or developments were transferred from the initiative and applied to other projects within the local community, such as the adoption of resources from the EfC project within other local community projects.

Local community project intentions, such as an expression of willingness by the local community to be involved in future projects.

Impact on other organisations – this refers to actions that have taken place in other organisations not connected to the initiative but as a direct result of the initiative. For example, an organisation not involved in EfC hosted a joint health awareness event with one of the EfC teams.

Impact on other communities – this includes actions that take place in other communities beyond the initial scope of the project but as a direct result of the initiative. There were no examples of impact on other communities within the EfC evaluation.

While future intentions cannot be counted as direct impact, they are important to recognise, especially in cases where the endpoint impact data are collected soon after the initiative completion date. The rippling out effects of the initiative are likely to be seen within Level 4. For example, not only were EfC teams impactful within their own project but they also had positive impacts on other projects within their organisations and their local communities.

Level 5 – Professional sector or wider societal. This level explores whether changes have taken place across a profession or across a wide sector of society as a result of the initiative.

The four evaluation-specific sub-indicators within Level 5 are as follows:

Professional sector – for example, changes made to national health and social care guidelines or changes to professional conduct.

Wider societal – for example, changes within a wide section of the community at regional or national level.

National – for example, laws, government policies, collective national action such as changes in habits or activities.

International – for example, collective global action, international agreements, international laws and international research.

There is a purposeful overlap between the wider societal evaluation-specific sub-indicator and the national evaluation-specific sub-indicator, as they both include behaviour change at national level. However, the wider societal evaluation-specific sub-indicator focuses upon the collective actions of people mainly at regional level, whereas the national evaluation-specific sub-indicator focuses upon national policy.

For illustrative purposes, examples of text that helped to define the indicators within The Evidence of Impact Model are provided in Table 2.
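For evaluators who manage coded excerpts in software, the five levels and their evaluation-specific sub-indicators described above could be held in a simple lookup structure. The following is a minimal sketch only and was not part of the EfC evaluation: the names `MODEL`, `check_code` and `tally_by_level` are hypothetical, and the excerpts in the usage example are placeholders rather than EfC data.

```python
# Levels and evaluation-specific sub-indicators as listed in the Results text.
# The structure and all function names are illustrative assumptions.
MODEL = {
    1: ("Self",
        ["Attitude", "Emotions", "Engagement", "Enlightenment"]),
    2: ("Personal practice",
        ["Actions", "Situated learning", "Transferred learning",
         "Reconstructed learning"]),
    3: ("Department or team",
        ["Teamwork within initiative", "Team outcomes within initiative",
         "Transferred teamwork approaches", "Transferred team outcomes"]),
    4: ("Organisation or local community",
        ["Own organisation", "Local community",
         "Impact on other organisations", "Impact on other communities"]),
    5: ("Professional sector or wider societal",
        ["Professional sector", "Wider societal", "National",
         "International"]),
}


def check_code(level, sub_indicator):
    """Raise ValueError if (level, sub_indicator) is not defined in MODEL."""
    if level not in MODEL:
        raise ValueError(f"Unknown level: {level}")
    if sub_indicator not in MODEL[level][1]:
        raise ValueError(
            f"{sub_indicator!r} is not a sub-indicator of Level {level}"
        )


def tally_by_level(coded_excerpts):
    """Count excerpts per level; each excerpt is (level, sub_indicator, text)."""
    counts = {level: 0 for level in MODEL}
    for level, sub_indicator, _text in coded_excerpts:
        check_code(level, sub_indicator)
        counts[level] += 1
    return counts


if __name__ == "__main__":
    # Placeholder excerpts for illustration only; not quotations from EfC.
    sample = [
        (1, "Attitude", "placeholder excerpt A"),
        (1, "Emotions", "placeholder excerpt B"),
        (3, "Transferred team outcomes", "placeholder excerpt C"),
    ]
    for level, count in tally_by_level(sample).items():
        print(f"Level {level} ({MODEL[level][0]}): {count}")
```

A tally of this kind only summarises how much evidence was coded at each level; assigning an excerpt to a level and sub-indicator remains a matter of qualitative judgement by the evaluators.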

Discussion

When mapping the EfC endpoint impact evidence to The Evidence of Impact Model, it was clear that the volume of evidence at each level tapered out from Level 1 to Level 4 with a significant drop at Level 5. This is possibly due to two factors:

First, EfC participants were keen to share their learning and to explain the impacts and outcomes that arose for themselves and their teams at Levels 1, 2 and 3. The participants therefore provided rich and thick data to support the evaluation at these levels (Gale et al., 2013). However, the teams took different approaches to engaging with local communities and this may have limited impact or limited knowledge of impact at Level 4.

Second, the endpoint data were gathered between 1 and 9 months after EfC concluded. At this point, most teams were still engaged with their EfC project and it is possible that there was insufficient time for actions at Levels 4 and 5 to be implemented and outcomes to be evaluated.

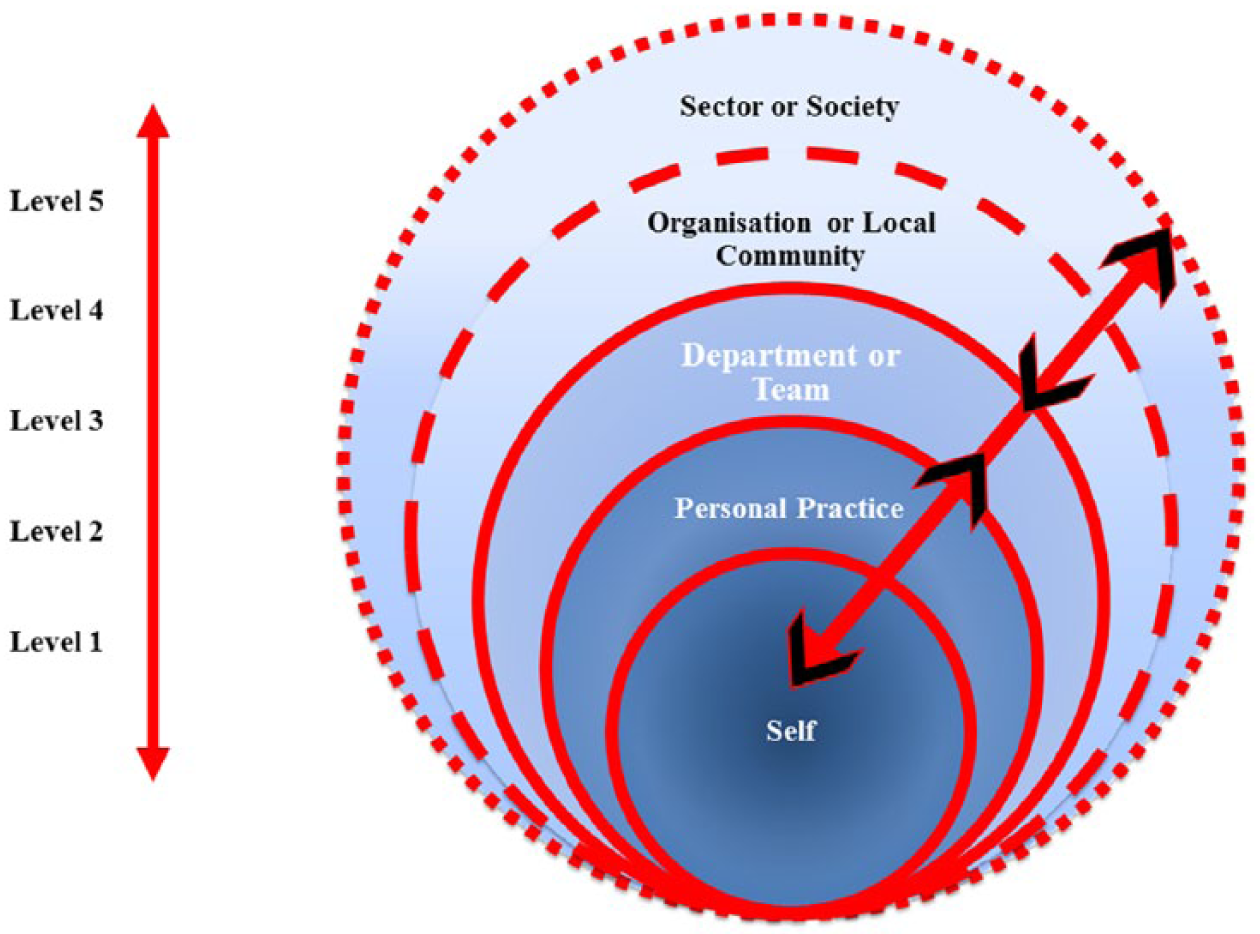

By using The Evidence of Impact Model, the EfC evaluators found that learning and impact within EfC were built incrementally over time and were multidimensional and interdependent. Learning and change were not simply created at Level 1 radiating outwards in one direction like a domino effect, as is the case with Kirkpatrick’s (1996) model and to some degree Saunders’ (2007, 2011) model. For example, analysis of the EfC data suggested that the motivation and morale of an individual (Level 1) may be greatly influenced by recognising their own contribution to their team’s or community’s success (Levels 3 or 4). This newly bolstered or diminished morale for the individual (Level 1) may also impact upon the individual’s ability to act (Level 2) and their ability to influence change within their team (Level 3). Conversely, impact created by the team (Level 3) may impact simultaneously upon both the individual involved (Levels 1 and 2) and the organisation or local community (Level 4). In this example, impact is two-way or more, pushing both inwards and outwards within the model, as demonstrated in Figure 2.

Figure 2. The multidimensional aspects of learning and impact.

The multidimensional aspect of impact is something that Cooke (2005) comments on, outlining that impacts at one level can have synergistic or detrimental effects on another. Similar findings of the impacts upon an individual’s confidence that may support them to act are reported by Waterman et al. (2001) and explained within experiential learning theory (Kolb, 1984). In a similar vein, the EfC evaluators found it important to consider the interconnectedness of the evaluation-specific sub-indicators within each level of the model. This helped to reveal some of the causal effects leading to the positive impacts reported by the EfC participants as discussed in Harper and Dickson (2019).

The raft of measures outlined by the National Health Service (2014, 2017) recognises the need for both local and national action in order to effectively change systems and working practices to cope with rising health and social care demands and to tackle health inequalities. PAR methods support and enable social change (Cook, 2012; de Koning and Martin, 1996; Reason and Bradbury, 2008; Ritchie et al., 2014) and DE methods support development in emerging and complex environments where there are many shifting variables (Patton, 2011). These approaches therefore offer the potential to support the proposed changes within health and social care. However, assessing the impact arising from PAR and DE projects and initiatives is problematic as the initial project aims are often subject to adaptation and change in order to cater for the uncertainty of the emerging environments in which the projects are situated (Patton, 2011; Waterman et al., 2001). The EfC evaluators’ search of the literature confirmed a gap in impact assessment metrics and models that cater for such projects, which led to the decision to create The Evidence of Impact Model.

None of the models previously discussed in the literature were considered suitable for use in the EfC evaluation. Therefore, using a ‘best-fit, single framework’ approach (Booth and Carroll, 2015), the EfC evaluators chose Saunders’ (2007, 2011) model to provide a starting point for developing The Evidence of Impact Model. The concepts that were considered important were the ability to demonstrate impact from micro (individual), through meso (organisational) to macro (societal) change (Saunders, 2007, 2011) and the concepts of situated and transferred learning (Kirkpatrick, 1996; Saunders, 2007, 2011). In addition to these concepts, consideration of current policy papers and stakeholder feedback, together with the analysis of real-time EfC data, enabled the development of a workable impact assessment model that was appropriate for the evaluation of impact arising from the EfC initiative.

The resulting Evidence of Impact Model differs from existing models in three key aspects. First, the model enabled a deep exploration of the impact of EfC upon the personal and professional lives of the individual participants. The process also enabled the individuals involved to recognise the impact of EfC upon themselves. Second, the model considered the degree to which learning was applied, rather than measuring what was learnt; this enabled the EfC evaluators to explore in depth the level and scope of impact. Third, the model explored impact on teams and local communities and this was important within the health and social care context of EfC.

Strengths and limitations

There are three key strengths to using The Evidence of Impact Model. First, the model was created and tested live, in real time, through the use of DE and a unique opportunity to work with teams of health and social care practitioners and service users who wished to co-create practice change. Second, the model provides main and evaluation-specific sub-indicators to support the capture and analysis of impact, including micro, meso and macro level impact focusing upon individual, team, organisational and local community learning and change. Third, the model offers a mechanism to explore the causal effects of the multidimensional aspects of learning and change within a complex system.

The Evidence of Impact Model has only been tested once on a small study sample, with only one snapshot of impact taken between 1 and 9 months after the completion of the EfC initiative. The model only demonstrated positive impacts, which were based on data arising from the evaluation of EfC. As noted in the ‘Methods’ section of this article, appropriate steps were taken to mitigate against possible influences and biases. However, the model may be further developed in the future to include indicators that will capture negative impacts, as well as broader or more context-specific indicators capable of assessing impacts in different settings.

Conclusion

The Evidence of Impact Model provides a tool for capturing and analysing the short- and medium-term impacts arising from team-based capacity building within the context of health and social care reform. The use of this model provided a lens to examine impact at a variety of different levels within an emerging and complex environment, especially looking at rapid, bottom-up change (Balogun and Hope Hailey, 2004). The Evidence of Impact Model was used to demonstrate how the EfC capacity building initiative impacted on the personal and professional lives of the participants, as well as on their work environment and practice.

The development and use of the model allowed the EfC evaluators to analyse what was happening on the ground within each EfC team’s change project. The analysis of the data against the evaluation-specific sub-indicators also enabled the EfC evaluators to understand the multidimensional relationship of impact at many levels. Using the model also revealed that topics and themes at Levels 3 and 4 were influenced by the participants’ political and social surroundings, which reflected changes taking place within the health and social care system.

Using a model such as The Evidence of Impact Model, which links service providers, service users, the local community and researchers, represents an important step in the journey towards supporting agendas for change. This is because it enabled providers, communities and individuals to better understand the environment within which they lived and operated and to recognise the impact that their singular and collective actions had upon their environment. Moreover, involvement in the assessment of impact was an important process that supported their learning, action and change.

Currently, there is considerable demand for evaluators to demonstrate the impact of the initiatives that they are evaluating. The authors believe that the use of The Evidence of Impact Model provides evaluators with a structure with which to do this. The model is new and the authors look forward to learning about the experiences of evaluators as they use, test, expand and develop The Evidence of Impact Model in their future work.

Footnotes

Acknowledgements

The authors wish to thank the participating teams, their line managers and organisations, and the EfC facilitators, EfC subject experts and EfC administrator for their contributions. They also wish to thank Eleanor Kotas, University of Liverpool, for her support with searches to inform the literature review, and Paul Davies and Murray Saunders, Lancaster University, for their methodological support and guidance.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This article represents results of research funded by the National Institute for Health Research Collaboration for Leadership in Applied Health Research and Care North West Coast (NIHR CLAHRC NWC), Liverpool Reviews and Implementation Group and University of Liverpool Institute of Population Health Sciences. The views expressed are those of the authors and not necessarily those of the NHS, NIHR or the Department of Health and Social Care.