Abstract

This article contributes to Developmental Evaluation practice by highlighting the impact of embedding Developmental Evaluation principles into the learning approach of a capacity building initiative named Evidence for Change. The health and social care teams participating in Evidence for Change were operating within a complex environment that required radical change to tackle health inequalities and to provide modern, cost-effective, evidence-informed, high-quality care for all. A gap in the literature around capacity building models for knowledge mobilisation led the authors to explore Developmental Evaluation as a potential approach. Naturally occurring evidence was used to develop Evidence for Change, and questionnaires, focus groups and structured interviews were used to assess its impact. Results demonstrated that Evidence for Change supported evidence-informed practice change and learning for individuals, teams, organisations and local communities. The article concludes that embedding Developmental Evaluation principles into Evidence for Change was instrumental in providing an innovative capacity building model for effective knowledge mobilisation in health and social care.

Introduction

Patton (2016) outlines eight essential Developmental Evaluation (DE) principles to guide evaluators working with those who seek to navigate complexity in order to create and innovate. This article aims to illustrate the impacts and outcomes of embedding these eight DE principles into the learning approach of a capacity building initiative named Evidence for Change (EfC). DE was also used to create EfC; this process, together with the results of the qualitative impact evaluation, is discussed to explain how the principles were embedded into EfC and how the outcomes arose.

Developed in 2015, EfC was designed to support multidisciplinary teams who wished to use evidence to improve health and social care provision, with a focus on reducing health inequalities. EfC was funded by the newly formed Collaboration for Leadership in Applied Health Research and Care North West Coast (CLAHRC NWC). CLAHRCs were established nationally to bring together local health and social care providers, commissioners and universities; their role was to develop and conduct health research and to support the translation of findings into improved patient outcomes.

Background context: why was DE chosen?

DE provides a helpful approach to use with real-time projects in a time of uncertainty, flux and change, where the way forward is not known and is likely to constantly change. The role of DE is to support the ongoing development, innovation and change required to enable such projects to adapt to the emerging and complex environments in which they are situated (Dozois et al., 2010; Gamble, 2008; Patton, 2011).

Within England’s health and social care system, where the EfC teams were situated, large-scale service transformation was required to tackle the health and wellbeing gap, the care and quality gap, and funding and efficiency challenges (National Health Service, 2014). As part of this shift in service delivery, providers were mandated to develop new models of care informed by both scientific evidence and service users’ needs and views. This was to be achieved by building new relationships with patients and communities (Department of Health, 2010; National Health Service, 2014) in order to involve them in co-producing services (Batalden et al., 2015; Social Care Institute for Excellence (SCIE), 2015). Integrated care was one of the new models to be developed and implemented. This model requires multiagency, multidisciplinary teams to work together to provide often complex interventions that improve population health and reduce health inequalities.

Knowledge mobilisation (the transfer of scientific evidence into health and social care practice) is currently slow and problematic. Research has demonstrated that this phenomenon, also known as the ‘evidence to practice gap’, is costly because it delays the implementation of the most effective and efficient treatments and care (Grimshaw et al., 2012; Lau et al., 2016; Ward et al., 2012). Knowledge mobilisation is reported to be hampered partly by organisational barriers and by issues arising from working between and across health and social care professions (Grimshaw et al., 2012). This does not bode well for developing effective evidence-informed care or for developing integrated care.

Given the requirement to use evidence-informed practice and the known difficulties in knowledge mobilisation, the EfC developers (L.M.H. and R.D.) sought a capacity building model to support practitioners to find and use evidence. The health and social care sector recognises the need to align professional education and capacity building programmes with the changing global health and social care landscape (World Health Organization (WHO), 2010). An alignment that supports integrated care, however, requires moving away from traditional capacity building models, which cater for individuals and support silo (uni-professional) working, towards capacity building provision for multidisciplinary and integrated teams. Further supporting a team focus, it has been suggested that a team-based approach may help to tackle the ‘evidence to practice gap’ (Kislov et al., 2017; Lau et al., 2016). Within the literature, however, there is a scarcity of capacity building models that support team-based knowledge mobilisation. The EfC developers therefore decided to create EfC from scratch to help meet this need.

Because of the turmoil and upheaval within the system and the unknown needs of the yet-to-be-created EfC teams, a DE approach was selected to support the development of EfC. In addition, early in the preformative (development) phase of EfC, the developers realised that if the EfC teams were to innovate to create an impactful practice change, then they too could benefit from using a DE approach. Thus, in an attempt to constructively align (Biggs, 2003) their teaching and learning methods with the newly emerging environment, the developers also incorporated the DE principles into EfC’s approach to learning.

The EfC teams were located in the North of England, where socio-economic health inequalities have a more severe impact on population health than in the rest of the country (HIAT, 2017; Whitehead, 2014). Therefore, in addition to involving service users in the co-production of service developments informed by scientific evidence, the EfC teams were also focused on the difficult issue of reducing health inequalities. DE was thus deemed an appropriate approach to support the EfC teams, as they were operating in a complex, rapidly emerging environment that required service and practice redesign on many levels.

As EfC was new, the developers decided to conduct a qualitative impact evaluation to establish the short- to medium-term impacts and outcomes of the initiative. A qualitative design was selected because the EfC developers felt it would enable them to explore the changes happening for the teams and their services, however small. The EfC developers also wanted to understand, from the participants’ perspective, what, if anything, they had gained personally and collectively from their involvement. While conducting a qualitative impact evaluation to assess medium-term impacts is not necessarily in keeping with a DE approach (Fagen et al., 2011), it has proven useful, as discussed in this article.

Methods

Two evaluation approaches were used. First, DE was used both to create EfC and to underpin the learning approach used within EfC to support the EfC teams. Second, a qualitative impact evaluation was conducted to establish the endpoint outcomes and impacts of EfC.

Practical steps in creating EfC

Prior to EfC’s launch (June 2014–April 2015), policy documents, published literature and stakeholder feedback were gathered and analysed by the EfC developers to create a flexible capacity building framework for EfC. The framework was used to advertise EfC to local health and social care providers. Data from the completed application forms and team selection interviews were analysed to refine the framework, develop workshop 1 and the online portal, and select and brief the EfC facilitators.

During EfC’s lifespan (May–December 2015), EfC was incrementally and conjointly developed together with the qualitative impact evaluation. EfC was tested and developed in real-time using feedback and feedforward (Ferrell and Gray, 2016) collected during and after each workshop from the participating teams, together with observations by the EfC facilitators and developers of the teams’ developments, discussions and dilemmas.

EfC team members and EfC facilitators were invited to take part in the pre-launch and lifespan stages of EfC’s development. The participants’ line managers and EfC funders were invited to contribute to the pre-launch stage of EfC’s development.

Using DE principles to create EfC

To ensure EfC was fit for purpose, it was piloted by the participating teams for whom it was designed and tested live within the complex environment in which those teams were operating. This enabled the EfC developers to understand and interpret development through the complexity lens (Patton, 2016).

The EfC developers had a non-standard DE role: while they were embedded within the design team, they were not independent evaluators focusing solely on the evaluative elements of the developmental work. Instead, they held multifaceted roles. They were the EfC designers and leaders and, as such, were key decision makers in the development and delivery of EfC. As EfC is a capacity building initiative, the EfC developers were also strategic learning partners (Preskill and Beer, 2012), supporting the participating teams in evaluative thinking (Lam and Shulha, 2015). Finally, they were also insider researchers (Robson, 2000) responsible for evaluating the impacts of EfC. Partly because of this complex and potentially conflicted role, and partly to support their own learning in relation to evaluation, the EfC developers enlisted a highly experienced evaluator from Lancaster University as an evaluation mentor and critical friend (Costa and Killick, 1993).

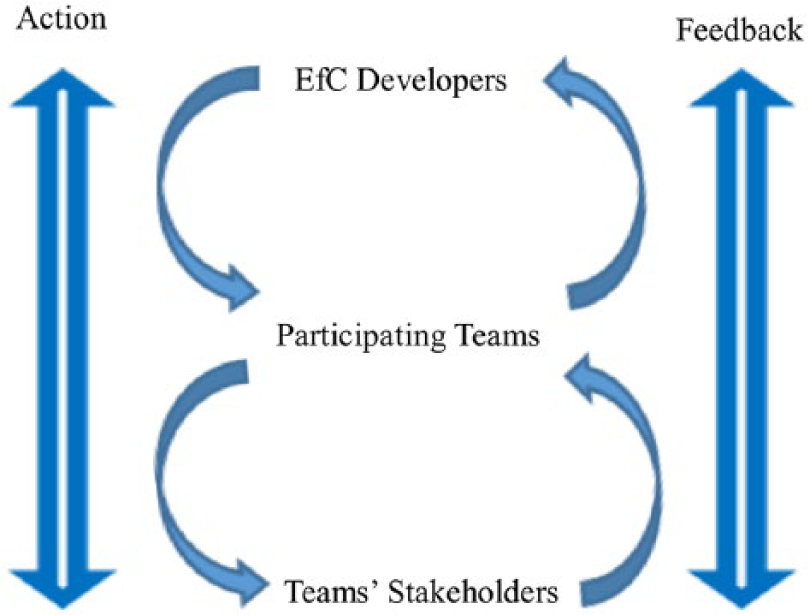

The EfC developers were the intended users of the DE data; therefore, the timing and coherence of DE feedback needed to fit with their requirements and deadlines. However, as depicted in Figure 1, the participating teams were the intended users of EfC; the EfC developers therefore needed data collection methods that would not disrupt the teams’ activities yet would collect sufficient and timely data to shape EfC so that it was fit for purpose. To achieve both goals, the EfC developers planned regular opportunities within the workshops to ask probing questions and seek feedback. Workshop activities were designed to elicit feedback about team projects throughout the workshop day. At the end of each workshop, team members were asked for feedback on the workshop (what worked or did not?) and, importantly, feedforward (what would help next time?) (Ferrell and Gray, 2016). Observations made by EfC facilitators were used to elucidate and validate participants’ feedback and progress. The EfC developers’ meetings between workshops provided an opportunity to discuss the evaluation feedback and to reflect on progress against the flexible capacity building framework. The findings informed the design of the forthcoming workshop and shaped the facilitator support offered outside of the workshops. The initiative and the evaluation were therefore interwoven, interdependent, iterative and co-created, and made use of the naturally occurring evidence available (Patton, 2016). As depicted in Figure 1, the systems thinking principle was applied to EfC’s design by continually testing developments live in the field and using immediate feedback from both the EfC teams and their stakeholders.

Figure 1. The continual sequence of action and feedback that took place between the EfC developers, the participating teams and the teams’ stakeholders.

Embedding DE principles into EfC’s learning approach

EfC’s design utilised elements of Blended Learning (Glazer, 2012), which combined online and workshop support for learning; Work-Based Learning (Basit et al., 2015; Roodhouse, 2010), which involved learning from and for work; and Experiential Learning (Kolb, 1984), which involved learning, adapting and developing through reflection on a sequence of learning experiences. Regular opportunities were also provided for Team Reflexivity (Schippers et al., 2015), which involved the team in reflecting on how they were working and acting together. As the teams directed and led their own evidence-informed change projects, elements of Participatory Action Research (Reason and Bradbury, 2008) and Collaborative Inquiry (Bray et al., 2000) were also incorporated into EfC. While elements of these teaching, learning and research approaches are reflected in the following narrative, the focus is on the DE principles that were embedded into EfC’s learning approach.

The teams participating in EfC were required to bring a key issue or challenge from their practice to address, and this formed the basis of their live project. In keeping with DE, the teams’ projects were developed step by step, in real time, as EfC unfolded.

Each team had a leader who was a health or social care practitioner responsible for the project. Teams were asked to be multidisciplinary and multiagency, with all members, including the public representatives, acting as equal peers. The members were selected on the basis of being intended service users (e.g. patients, health and social care practitioners, commissioners) who represented various levels of decision making from across the system. The teams were encouraged to think systemically, involving a cross section of stakeholders in order to consider the interrelationships, perspectives, boundaries and social systems (Patton, 2016) within which they wished to make an impactful practice change.

To support systemic thinking, EfC was sequenced to allow regular time in the workplace for teams to test in practice the ideas they developed in the workshops and to receive ongoing, timely feedback from a variety of stakeholders (Patton, 2016). To enable evaluation rigour and utilisation (Patton, 2016), time was provided in the next workshop to share feedback, to reflect collectively on impacts and to inform team decisions. The practical exercises were designed to enable the taught content (theory) to be applied directly to the teams’ projects. At the end of each workshop day, each team devised an action plan to steer the next phase of its workplace activity. Team members also met regularly between workshops to move their project forward.

Alongside identifying organisational, stakeholder and local evidence, the teams were supported to identify, analyse and evaluate scientific peer-reviewed evidence. The objective was that teams would develop their projects by continuously interrogating the evidence base, while taking into account the local context by testing out their designs in practice.

EfC focused on flexibility and learning; therefore, where necessary, teams were encouraged to change plans as they progressed, to ensure that their projects and the project evaluation data they gathered were suitable for the intended use by the intended users (Patton, 2016). For example, the teams were not pushed to set aims and objectives too early. Instead, they were encouraged to have intended aims and a general sense of direction, to be open and receptive to their stakeholders’ feedback and to their own observations, and to be willing to adapt so that their projects stayed in step with any changes in the environment. Teams therefore regularly reviewed and adapted their project aims and objectives to ensure they aligned with their changing environments.

The concluding dissemination event was an opportunity for teams to consolidate their learning and to formally share their results with their stakeholders. For this event, the teams created both an academic poster and a 40-minute presentation. In keeping with the innovation niche principle (Patton, 2016), the dissemination event provided the EfC teams with an opportunity to explain how the processes and concepts they were developing were innovative.

The EfC teams did not have an embedded evaluator as part of their team; instead, each team was assigned a facilitator provided by CLAHRC NWC. The facilitators were academic subject experts whose role was to facilitate team learning. They supported team discussion and reflection within the workshops by asking strategic and evaluative questions (Lam and Shulha, 2015) and kept teams focused throughout the day. Facilitators were also responsible for providing information, network contacts and mentoring support outside of the workshops, as and when required. The facilitators supported the teams but were not embedded within them.

Qualitative impact evaluation methods

The qualitative impact evaluation, designed during EfC’s lifespan, was conducted post-initiative to determine EfC’s outcomes and impacts. Between January and September 2016, impact data were gathered from online participant questionnaires using open questions and Likert-type scales (piloted before issue with a cross-sample of participants), individual team focus groups, EfC facilitator focus groups, structured interviews with participants’ line managers, and team videos, posters, presentations and reports. Focus groups and interviews were conducted by an interviewer and a note taker, audio-recorded, transcribed verbatim and anonymised. Learning from EfC was shared with teams, facilitators, the funder and wider audiences via reports, posters, a video, conference presentations and discussions.

Data analysis

Overarching indicators based on Saunders’ (2007, 2011) model were adapted and developed by the authors to measure impact arising from learning and change across five levels. The newly developed indicators were: Level 1 (Self), Level 2 (Personal Practice), Level 3 (Team or Department), Level 4 (Organisation or Local Community) and Level 5 (Professional Sector or Wider Society).

During the preformative phase of EfC, the five overarching indicators were used repeatedly by the developers to ascertain the effectiveness of EfC as it unfolded. This process informed the decisions about the next stage of EfC’s development. During the qualitative impact evaluation, the overarching indicators were used to aid the thematic analysis (Braun and Clarke, 2006; Ritchie et al., 2014) of the endpoint impact data.

Using an iterative process, EfC developer L.M.H. uploaded the qualitative impact evaluation data gathered post-initiative to Atlas.ti (2016–2017) and coded them against the overarching indicators using deductive analysis (Braun and Clarke, 2006; Ritchie et al., 2014). This process enabled data to be assigned to each of the five levels. To explore the relationships within and between the five levels, the data were then refined further by inductive analysis (Braun and Clarke, 2006; Ritchie et al., 2014) to identify new codes within the data at each level. Using these new codes, the data within each level were then recoded and analysed to illuminate topics and sub-topics. Validity, accuracy and consistency of coding were checked by EfC developer R.D. and the evaluation mentor. The different perspectives provided by the four groups of participants (team members, facilitators, EfC developers and line managers) were used to elucidate and confirm meaning arising from the qualitative impact data.

Ethical considerations

Informed consent was gained from all those taking part in EfC. Ethical approval from the University of Liverpool was granted to subsequently involve the team members’ line managers (Reference Number IPHS-1516-147). All data relating to the evaluation are securely stored at the University of Liverpool and all quotes have been anonymised to protect the identity of the participants.

Results

The outcomes of using DE both to create EfC and to underpin EfC’s learning approach

EfC was created from scratch and built incrementally over 8 months using a DE approach to guide the development work. Shaped by the analysis of the EfC pre-launch data, EfC’s flexible capacity building framework incorporated an allocation of 2 days per month in the workplace to conduct a project and consult with stakeholders, interspersed with five one-day workshops and a dissemination event. An online portal, housing workshop materials and university library access, was also provided. Each team was supported within and outside of the workshops by a facilitator, who was an academic subject expert. Using stakeholder feedback and observations during EfC’s lifespan enabled the just-in-time development of the workshop content and the tailoring of support provided outside of the workshops.

Four teams came forward to take part in EfC. Each team brought a live project brief to work on, as follows: Team 1, improve self-esteem for dyslexic teenagers in an area of high deprivation; Team 2, change organisational policy and practice around self-injury harm minimisation; Team 3, reduce premature death from bowel cancer in an inner-city Black, Asian and Minority Ethnic community; and Team 4, reduce ambulance callouts in elderly care.

None of the teams taking part in EfC dropped out; all four teams were newly formed and multidisciplinary and three were also multiagency. Three teams remained the same in composition and size, whereas one team steadily grew in size over the duration of the project. This is a significant outcome as capacity building and professional development programmes are known to experience dropout. Each team also accessed and analysed scientific and local evidence in order to make a successful evidence-informed practice change. This is also a significant outcome, because the mobilisation of knowledge into health and social care practice is commonly known to be problematic (Grimshaw et al., 2012; Lau et al., 2016; Ward et al., 2012).

The reported outcomes for the four teams were as follows. Team 1 joined forces with another team within their organisation that was conducting similar work, and contributed the evidence base they had developed within their EfC project, which helped to shape their organisation’s work going forward. Team 2 used the evidence base generated within their EfC project to write an organisational policy and work procedure, which they presented to their board for consideration. Team 3 used the evidence base generated within their EfC project to gain funding for the continuation and evaluation of their project, and have since piloted their EfC project in 11 general practitioner (GP) surgeries. Team 4 were persuaded by their public adviser members to change the focus of their project, which they successfully achieved; they used the evidence base generated within their EfC project to create a tool for supporting communication between care home residents, carers, family and practitioners, which was adopted and used by their local community. The results therefore demonstrate that EfC was fit for purpose.

A significant non-linear outcome (Patton, 2011) of EfC was the capacity development of CLAHRC NWC through the development of the EfC facilitators. The facilitators reported developing a deeper understanding of the complexity of the health and social care environment in which CLAHRC NWC partners were operating, developing new networks and developing the ability to undertake future DE. The facilitators’ learning was also put to good use in incrementally developing a similar new initiative for CLAHRC NWC, named ‘the Partner Priority Programme’, as well as contributing to the development of public engagement policies and practices.

Results of the qualitative impact evaluation

The response rates to the online questionnaires were as follows: team members 50 per cent (

Qualitative impact findings

Analysis of the qualitative impact evaluation data highlighted five interconnected themes, developed through capacity building, which enabled successful EfC team outcomes. These were teamwork, involving public as equal peers, increased communication, learning and development, and increases in positive attitudes and emotions. In addition, the role of organisational support was acknowledged as being important.

Theme 1: teamwork. Team members described the early stage of establishing the project and developing roles within the team as difficult, ‘chaotic with a lot of arguments’ (Team member), and spoke of ‘meandering around’ (Team member); over time, however, members reported that their team bonded. They increased their awareness of each other’s skills, developed a shared understanding, shared tasks and responsibilities, felt supported by the team and stated that the team stuck together during difficult times. This feedback reflects the reality of working developmentally (Patton, 2011): of not having rigidly set plans to work to, and of the complexity of involving stakeholders from across the system. As the work progressed, team members felt it was important to work as equal peers and that having a variety of views helped them to gain new insights, to think through ideas and to shape their projects.

… as a multiagency team, the fact that we were there from different backgrounds, different perspectives but we were equal on that team …, we were all coming at things from slightly different perspectives and it was really helpful just having that time and space to focus on a piece of work … (Team member)

… it was impressive that you know there were people from multiple levels in different organisations and they worked extremely collaboratively and extremely respectfully together, there didn’t seem to be a hierarchy as such within teams. (Facilitator)

The team leader’s role was highlighted as being central to the team’s success. Team leaders were seen as being responsible for selecting team members with relevant skills, developing and supporting the team, being open to new ideas, enabling flexibility and change within the project, and keeping the team moving forward. It is possible that the team leaders were acting as informal developmental evaluators, leading their teams through a cycle of action and reflection in order to incrementally develop their projects.

… he (the team leader) had thoughts about the whole programme, the overall tasks needed to be achieved to lead the programme and he chose the professionals or the people to be involved … (Team member)

… this project has certainly made me think, and I think others think around how important it is to get the right people involved and engaged right from the beginning. (Team member)

The diverse membership achieved within each team enabled the teams to connect with a wide range of stakeholders from across the system within which they operated, thus each team was able to communicate more efficiently and effectively with their stakeholders. Each team’s collegiality appeared to have a positive impact on team members’ motivation and productivity. Team members also reported cascading their learning to others outside of the team by using their teamwork skills, sharing information, raising awareness and challenging perceptions, which helped prepare the ground for change.

Theme 2: involving the public as equal peers. Each team took a different approach to public involvement. One team consulted with practitioners, parents and young people to gain a variety of service user perspectives; one team involved an expert by experience to guide their work; and another involved six public members in order to represent the multicultural local community. This team and one other also facilitated wider public engagement through their public members.

Evaluation feedback indicated that team members who were practitioners felt they had learnt about the role and the value of involving the public. Several practitioners reported having become disconnected from their service users as they moved up the management ladder, and said they now understood the importance of reconnecting with them. They explained how valuable the public members’ contributions were in shaping the project and in helping the team to communicate with wider service user groups.

It was a good reminder of the benefits of going to care settings to see the work in practice and to feel what difference the work is making, as I sometimes feel removed from this. (Team member)

Overall I would say it was brilliant and especially the invaluable contribution of the public advisory panel members when they came on board … (Team member)

All practitioners stated how much they had enjoyed the public involvement aspect of the project and most stated they had plans to embed public involvement into their future practice.

In terms of public engagement I guess, it now seems preposterous to continue any kind of project that’s around healthcare provision without involving the people who use the service … (Team member)

Conversely, team members who represented the public explained that they were apprehensive to begin with and not sure of how they might contribute to the team. However, with the team’s support, they felt respected and listened to, which helped them to engage and contribute. They stated they had gained confidence in communicating with practitioners and learnt about the services they were supporting.

… I initially started apprehensive because I thought you know as a public member, not being a professional, am I able to contribute anything? What is it that I can contribute? And it wasn’t until I started on the project … one of the proudest moments was when I was at one of the workshops and … actually I did mention something and everyone turned round and said yeah you know that is something that we were thinking of as well … We think of professionals from a public point of view as hierarchy and you feel you can’t really communicate but I felt that, that barrier as well has gone down. (Team member)

Both parties felt that public involvement helped to shape their project so that it was more likely to be fit for purpose. One team completely redesigned their project and refocused their aims based upon their public member’s advice.

… I was surprised I think by the extent to which our PPI’s (public member’s) involvement shaped the project … I knew he would be a really key person in the team but I don’t think I realised how much it would shift what we did … (Team member)

I have learnt that the people you are trying to effect the change for can actually, with support, create the change themselves. (Team member)

Theme 3: increased communication (including networking and stakeholder feedback). Participants reported increased two-way communication between the team and a variety of stakeholders on many levels, including national government agencies, clinical commissioning groups, senior executive boards, local community members and family. Team members explained how some meetings about their projects were difficult and challenging with many questions asked. They also outlined the importance of gaining respect from community leaders and clinicians so that the door for future communication could be left open.

… well it’s got out to the community which I think is the most important part to influence you know … the community members involved, they’ve gone into their communities and spread the word, that’s probably going to be the most effective part of the whole thing, not the phone calls to the patients, probably word of mouth within the communities, so by getting, engaging with the community I think that could have the greatest long term impact really … (Team member)

Team members expressed how surprised and motivated they were when they heard positive feedback from senior managers and local communities about their project. They explained how this feedback helped them to reflect on and shape their plans. They outlined how this affected their perceptions of their own abilities, leading to an increase in confidence, and explained how they used their learning to influence and challenge other people.

We have just organised a Health Awareness Event addressing, highlighting those inequalities and especially cancer awareness. We were overwhelmed, more than 230 attended, and the evaluation is great. (Team member)

Team members and line managers outlined how a variety of media enabled them to reach a wider audience; in particular, they noted the impact of short videos, posters, reports and community workshops.

… certainly I’ve had feedback from councillors, executive directors and head of safeguarding and the general feedback is this is fantastic, this is fabulous, you need to show this (the video), … so it’s starting to spread … I think films are very powerful … (Line manager)

Theme 4- learning and development (including skills and knowledge development). Team members and facilitators reported four key aspects to their learning:

Learning through experience (Kolb, 1984) within the workplace by taking part in the EfC project. Team members reported developing skills and knowledge in team leadership, inter-professional learning, project management, community engagement and designing projects with a utilisation focus.

For the first time being involved in something that’s got an opportunity to influence more widely across an organisation has had a personal impact for me, that’s the first time I’ve done that and to realise that you can do that is really good. (Team member)

What has been most valuable, I think doing a presentation to the committee meeting (name removed for anonymity), the three of us, although it was a very tight meeting schedule and we were slotted in and to get unanimous support after that, that was very powerful … (Team member)

Applying learning directly within the situation, or situated learning (Kirkpatrick, 1996; Saunders, 2007), for example, applying workshop content within their EfC project. Team members and facilitators reported that EfC supported them to identify health inequalities in their own community, develop multi-professional teamwork skills, use evidence searching and analysis skills, plan change, create an academic poster, gain awareness of the health and social care context in which they were operating, and meaningfully engage the public in a project.

It gave us the opportunity to come together as a multiagency multi-organisational team to work through from the very early stages of scoping the idea to, with your support doing the various things from understanding around health inequalities, the work around how we would search and critically analyse the research that’s happened, right through to all the things around looking at stakeholders communications … (Team member)

Gaining new personal perspectives and shifting perceptions. Team members and their line managers explained that team members had realised how knowledgeable they had become and that they were having an impact, that they were learning from others and were able to understand things from another perspective, and that their own morale and motivation had improved through being involved in the whole project and seeing the positive outcomes of their work.

… from a management point of view, that kind of increasing the efficacy of the department and the team is a key thing for me, … from an individual point of view, it is that element of seeing an impact from something that he’s (team member) done … (Line manager)

Transferring learning from one environment to another to benefit a different piece of work (Saunders, 2007). For example, team members reported informing wider practice improvement, engaging other partners within their project, using robust evidence to inform commissioning decisions, and informing policy and practice on public engagement.

I learned about encouraging change in my organisation and I encouraged learning through evidence. (Team member)

Theme 5- increases in positive attitudes and emotions. Team members’ confidence, motivation and morale were reported to have increased, which appeared to have positive impacts on their ability to undertake actions and to challenge others. Team members and facilitators unanimously described the enjoyment and satisfaction they gained from being involved in the team and in the project. There is evidence that team members, including the public members and facilitators, continued with their project work, their engagement and their networking after EfC concluded.

I now feel more confident about speaking about the topic area and engaging people in our project. (Team member)

… the staff that were involved in the programme (EfC), I think it strengthened their belief in what they were doing and now they have the evidence to say what they were doing, what they were thinking, what they were taking out into practice was right because actually some of this is going on in practice so they’re now kind of doing that with a lot more confidence … (Line manager)

I think they’ve (two team members) gained, certainly from talking to them, a lot of personal satisfaction about being involved in the programme (EfC) … (Line manager)

Organisational support was additionally seen as key by many team members. Of particular note was protected time to work together and undertake the project, line manager support, organisational buy-in and support from other collaborating organisations who provided resources.

I think in terms of authority to act though the fact that we have that level of buy-in from that level in the organisation, from your (line manager) level of seniority in the organisation, that has made the world of difference, because had I not had (line manager name removed for anonymity) to go to then I probably couldn’t make those things happen. (Team member)

In summary, the five themes reveal that the diverse membership of each team enabled the team to gain a shared understanding of the problem, to support each other, and to communicate with and gain feedback from a wide range of stakeholders from across the system where change was required. This helped to reduce communication barriers between stakeholders and led to an increase in networking and feedback. Team members were able to apply their learning directly within their EfC project, to learn by experience, to gain new perspectives and even to transfer their learning beyond their EfC project. This process led to an increase in positive attitudes and self-belief, which enabled team members to challenge others, take action and pave the way for change.

Discussion

What do the findings mean for knowledge mobilisation in health and social care?

EfC brought together academics, health and social care practitioners and service users to co-produce (Batalden et al., 2015; Marshall et al., 2014; SCIE, 2015) evidence-informed practice change. This was in keeping with the requirements of health and social care reforms (National Health Service, 2014, 2017) and the aims of EfC’s funders (CLAHRC NWC). The qualitative impact evaluation demonstrated that by being involved in EfC, the teams were starting to reduce barriers between and across the professions and between professionals and service users (Grimshaw et al., 2012). In addition, by engaging their public members as equal peers, the multidisciplinary teams increased their ability to communicate with a cross section of stakeholders from within the system where change was required. By using evidence to inform their decisions, the teams also demonstrated that they were starting a journey towards reducing the evidence to practice gap (Grimshaw et al., 2012; Lau et al., 2016; Ward et al., 2012).

As the environment was both complex and shifting, the iterative DE process enabled team members to engage with key stakeholders, incrementally test out ideas in practice, receive timely feedback and adapt their plans in order to take account of newly emerging information and previously overlooked service user needs. Taking account of service user needs is important within health and social care as it helps to ensure services are fit for purpose and helps towards reducing health inequalities by creating services that are accessible and suitable for service users (Whitehead, 2014).

What has been learnt from the qualitative impact evaluation of EfC?

The results of the qualitative impact evaluation demonstrate that the teams upheld the co-creation, systems thinking and timely feedback principles as applied within their complex environments (Patton, 2016). The steps that supported the EfC teams’ positive outcomes are shown in Box 1.

Box 1. Three steps leading to positive EfC team outcomes.

Step 1- The projects tackled by the EfC teams arose from organisational goals. These goals may have been challenging in some way and the department(s) responsible for achieving each goal felt they needed extra support to help them tackle the work, which is why EfC appealed to them. Therefore, each team’s overarching project aims were aligned with their organisation’s goals.

Step 2- Team leaders gained line manager approval to join EfC and gathered a multidisciplinary team including public members to work on the project as equal peers.

Step 3- The team communicated among themselves and with key stakeholders from across the system to design plans and develop ideas. Teams were not pushed to set aims and objectives too early in the project, and EfC was set up to allow flexibility and learning, which enabled teams to change plans as they went in order to adapt to the emerging environment.

The continual communication with multiple stakeholders at many levels, as shown in Box 1, enabled three interconnected processes that facilitated change:

It prepared the ground for the project to be accepted within the organisation, as line managers and service users heard about the work as it progressed; this kept the project at the forefront of key stakeholders’ attention and enabled them to give feedback and contribute ideas.

It allowed teams to test ideas step-by-step and for the project to be adjusted as it unfolded, to ensure it was fit for purpose.

Feedback gained helped to build team members’ confidence in what they were doing; their enthusiasm was developed and shared, and their motivation increased as they received approval and saw the positive outcomes of their work.

What do the findings mean for capacity building?

It is plausible that the constructive alignment (Biggs, 2003) of EfC’s learning approach with the environment in which the teams were operating supported the positive outcomes noted in the qualitative impact evaluation. The alignment was achieved in two ways. First, the DE approach enabled the EfC developers to explore stakeholder requirements in order to create a flexible capacity building framework. The framework, together with team and facilitator feedback, was used in real time to design each workshop to suit the teams’ emerging needs. Second, by using DE principles to shape the workshop structure, influence team composition and guide the learning processes, the EfC developers embedded the DE principles into EfC’s learning approach. This provided a guide to support the teams in developing their own projects. The key elements of DE that supported the teams to learn and adapt within their complex environments were: engaging teams of equal peers who represented stakeholders from across the system where change was required, thus enabling systems exploration and thinking; sequencing support for the teams around their real-time projects, enabling them to test ideas and adjust plans as they went; and actively encouraging and providing opportunities for the teams to gather evidence and reflect upon impacts from a variety of diverse perspectives before planning the next step, thus encouraging co-creation by incorporating DE processes into the change process. This article therefore adds to modern learning theories by explaining how DE principles can be used to underpin learning and capacity building approaches that support teams to innovate and change within complex environments.

What do the findings mean for DE practice?

The role of DE is to support the ongoing development and change required to enable projects to adapt to emerging and complex environments (Dozois et al., 2010; Gamble, 2008; Patton, 2011). Because of the adaptation required within such complex environments, a standard DE approach, a recipe for how to conduct a perfect DE, is not feasible. As Patton (2011) outlines, the adaptive developmental evaluator is a bricoleur, using whatever can be found to fit the job. In this vein, the EfC developers created their own way forward in the development of EfC. For example, unlike in the cases outlined by Lam and Shulha (2015) and Lawrence et al. (2018), the EfC developers were simultaneously the evaluators, developers and initiative leads, and so held multifaceted roles. To limit bias and provide objectivity, an experienced evaluation mentor was employed to question assumptions and to check and validate the EfC developers’ findings. The use of EfC facilitators also provided a level of cross-checking and exploration to balance the views of the EfC developers. Feedback, fact checking and evidence provided by the EfC teams and their line managers further supported the EfC developers to think and act objectively, both in developing EfC and in conducting the qualitative impact evaluation. The role the EfC developers played may not be standard practice for DE, but in this case it nevertheless led to successful outcomes.

It has been suggested that evaluation utility can be greatly enhanced by in-depth knowledge of both DE and the field being changed (Lam and Shulha, 2015). Given the shortage of experienced DE experts and the limited funding available at the time, it was not feasible to embed a DE specialist within each team. The EfC teams, however, accessed DE expertise from the EfC developers and gained in-depth and previously unexplored knowledge of the field by involving public members together with a variety of practitioners. Considering the qualitative impact evaluation feedback and the positive outcomes achieved by the teams, it is possible that the EfC team leaders, guided by the EfC initiative, became informal developmental evaluators, leading their own teams steadily forward in the incremental development of their evidence-informed projects. This raises the question of whether specialist DE experts need to be embedded within each project, or whether, through a capacity building initiative such as EfC, they could be sufficiently effective while taking a more devolved role.

The adaptive cycle outlined by Patton (2011) involves four sequential stages: creation, improvement, conservation and, finally, termination. This sequence is, however, questionable in an environment that is constantly adapting to meet the changing, growing and accelerating health and social care needs of a diverse population. In such circumstances, fixing the parameters and co-ordinates of a capacity building initiative like EfC would make the initiative inflexible and unable to respond to the needs of the participants. This accords with Patton’s (2016) later work, in which he suggests that, for an initiative to remain current in a changing world, the developers may opt for an ongoing cycle of creation. The EfC developers likewise argue that they will need to continue using DE, for two key reasons. First, EfC is a capacity building initiative designed to support health and social care teams to develop evidence-informed practice change in complex environments. The teams that apply to take part in EfC will always bring new change projects with them, and for that reason the DE principles will remain embedded within EfC’s learning approach. Second, because the needs of future EfC teams are unknown and are likely to change with each cohort, and because being immersed in DE is part of the learning experience for the teams, the EfC developers intend to continue to use a DE approach to create and develop future iterations of EfC. This suggests, therefore, that EfC will remain in the creation and development stage until no further teams come forward to take part, at which point it will simply cease without a conservation or termination phase. This factor is likely to need careful consideration in the scaling-up of EfC.

This article also provides a useful example of the benefits of assessing the short- to medium-term impacts of an initiative developed through DE. Fagen et al. (2011) warn that premature summative evaluation can suppress innovation but also suggest DE may be a precursor to a cycle of formative and summative evaluation undertaken once the initiative is established. However, by establishing the short- to medium-term impacts of EfC across five levels, the developers were able to explore the strengths, limitations and unexpected outcomes that unfolded from DE.

Conclusion

By aligning with the environment, the capacity building initiative supported individual and team learning and enabled all four participating teams to achieve evidence-informed practice change in a short timescale with scant resources. Exploration of the data revealed that embedding the DE principles within the capacity building learning approach was fundamental to the teams’ successes.

EfC provides an exciting capacity building model to support effective knowledge mobilisation in health and social care. Undertaking a qualitative impact evaluation of the short- to medium-term outcomes of EfC, analysed against the five overarching indicators noted in the methods section, enabled the exploration of the underlying processes that led to success.

Strengths and limitations

The strengths of this research are that, by conjointly developing a qualitative impact evaluation during the development stages of EfC, the developers were able to capture and report the short- to medium-term outcomes that arose. In addition, using the five overarching indicators to support deductive and inductive thematic analysis (Braun and Clarke, 2006) of the qualitative impact evaluation data enabled the identification of the concepts and processes that led to success.

The limitations of this research are that it was a small-scale study conducted over a relatively short timescale (16 months including follow-up), with only one round of follow-up. This means that it was not possible to ascertain the impacts on local population health outcomes or, in the longer term, the degree to which evidence-informed decision making as a practice had been embedded into the everyday practices of the participating organisations or local communities.

Recommendations

We recommend further research into the processes and impacts of EfC by conducting a larger scale research project with broader reach to assess the generalisability of the findings. We recommend that population health outcomes are considered and that endpoint follow-up with participants is conducted at punctuated intervals extending beyond 9 months.

We also recommend that evaluators conjointly develop a qualitative impact evaluation during the preformative stages of their initiative, so that they are best placed to capture the arising short- to medium-term impacts.

Footnotes

Acknowledgements

The authors wish to thank the participating teams, their line managers and organisations, and the EfC facilitators and EfC administrator for their contributions. Thanks also go to Paul Davies and Murray Saunders, Lancaster University, for their methodological support and guidance.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This article represents results of research funded by the National Institute for Health Research Collaboration for Leadership in Applied Health Research and Care North West Coast (NIHR CLAHRC NWC) and the Liverpool Reviews and Implementation Group, University of Liverpool. The views expressed are those of the authors and not necessarily those of the NHS, NIHR or the Department of Health and Social Care.