Abstract

We argue that everyday creative tasks that involve generative artificial intelligence (GenAI) are tacitly performed within computational test environments that not only enable and constrain human agency but also shape the epistemic conditions that allow us to ascertain what constitutes creativity itself. Without making such taken-for-granted conditions explicit, society will remain unable to critique them. To make these conditions explicit, we combine elements of cultural anthropology, science and technology studies and the pragmatic sociology of critique to develop an alternative framework for describing such tasks processually as testing creativity. We then apply this framework to a case study of a university student’s use of GenAI to create an image based on digital ethnographic observations. Adapting descriptive categories from 20th century experimental creativity tests, such as fluency, flexibility, elaboration, and originality, we draw attention to specific qualities of judgement exercised by the student as part of his performance of this everyday creative task. We show how GenAI-mediated creativity is a layered, processual activity, enabling more critical engagement with its entanglements and implications. By reframing creative tasks with GenAI as testing creativity, we open space for critique and re-description of the conditions under which digital creativity is enacted.

Introduction: Creative tasks in test environments

What kind of task does a person perform when they prompt generative artificial intelligence (GenAI) to create an image? In the case of generating and editing images with such tools, our common sense description of GenAI image-making as a traditional creative process – that is, anchoring it in artistic or visual discourse – may limit or inhibit our understanding of what is, in fact, a more hybrid type of task. What is at stake in such an understanding of creative tasks is the taken-for-grantedness of their reality, their ‘states of affairs’ (Boltanski, 2011: p. 103), and therefore, our ability to critique these conditions, or challenge how these digitally mediated tasks prefigure their undertaking (Dieter, 2024). By describing creative tasks with GenAI using the same language as other artistic practices, we may overlook not only some key differences from these practices but also the different social realities in which these tasks are performed. One such social reality is how ‘the introduction of data- and compute-intensive technologies’ like GenAI into everyday environments has reconfigured them into ‘test environments’ (Marres, 2025: p. 8) which, as we intend to show, include creative tasks.

In the following case study, we explore in detail how an individual undertakes a creative task within such a test environment to demonstrate how a different kind of descriptive language – one that makes testing explicit – can be used to describe its performance and render this reality more explicit. Our case follows Gabriel (pseudonym), a fourth-year student in his 20s with no previous GenAI experience, who is studying Criminal Justice at a university in Texas, USA. Gabriel responded to our call for student participants, shared as posters on the university’s online learning management system and on physical bulletin boards across the university. After Gabriel signed up for our IRB-approved study and provided informed consent, he was asked to answer a brief survey for basic demographic information. Similar to our previous digital ethnography fieldwork (Lesage et al., 2025), Gabriel was tasked with recording himself ‘performing the digital skill’ (p. 1397) of modifying a television poster with the help of OpenArt (n.d.), a GenAI image-making platform where ‘AI art enthusiasts’ can express their ‘creativity and imagination’. Gabriel recorded the task with Zight, a screencast software he used on his laptop in his bedroom, capturing his screen activity, webcam, and microphone in a single stream. 1 As he was the first participant to sign up for our study, we selected his recording to pilot our chosen descriptive framework. Our goal in this paper is not to suggest that Gabriel’s experiences are reflective of a broader sample or population, but to demonstrate that how one describes the performance of creative practices with GenAI, and by extension how one understands these creative practices, may benefit from approaching them processually as testing rather than as artistic production.
We argue that drawing on the descriptive language of creativity testing may be better suited to critique aspects of its entanglements, layered within testing of three aspects of creative tasks: their agency, truth, and reality (Boltanski, 2011; Latour, 1987). As a first step towards describing the proposed creative task, we argue for a framework that allows us to consider two different dimensions of creativity: one morphogenetic, the other epistemic. Below, we explain why both dimensions of creativity are important for describing this task and subsequently propose how testing creativity may provide the necessary descriptive language that combines both dimensions.

For Ingold (2022), any engagement in any task can be ‘creative’ in that a task is an extension of vitalist energies, of life becoming, within an environment. He defines tasks as socially embedded, human-driven activities closely affiliated to the identities of those who perform them. Tasks are defined in terms of their objectives, rather than any specified set of instructions or rules, but these objectives are far from independently prescribed and emerge through an ‘agent’s involvement within the current of social life’ (p. 409). This conception of tasks is morphogenetic in that they become ‘moments when a series of forces, capacities, and predispositions intermesh to make something else occur, to move into a state of self-organization’ (Fuller, 2005: p. 18). Image-making is morphogenetic to the extent that it entails intermeshing human capacities and technical forces that are emergent rather than predetermined (Deleuze and Guattari, 1988; Fuller, 2005; Ingold, 2013). Gabriel’s engagement with a media environment that includes OpenArt for the ‘transposition from image to object’ (Ingold, 2013: p. 22) is not simply an execution of rules or procedures; he sets out to complete an everyday image-making task whose outcome emerges through the creative act itself.

What remains missing from the above morphogenetic dimension is a consideration of how creativity has historically developed an epistemic dimension that enables and constrains its affective structure, cultivating regimes of novelty, creative teamwork, and innovation (Reckwitz, 2017). How one sets out to engage in a creative task like image-making – how one sets the purpose of such a task – is socially embedded in discourses and materials that give order to its epistemic conditions. In other words, a morphogenetic account of a creative task is incomplete without considering how creativity also historically constitutes a ‘dispositif’ that structures the intermeshing of humans and technologies. For Foucault (1980: p. 195), the ‘dispositif’ is a ‘formation which has as its major function at a given historical moment that of responding to an urgent need’. The creativity dispositif shapes the drives and desires that power creative tasks, but also brings to bear the institutions, knowledge, and meanings associated with a task. This forms through both aestheticization and ‘specific, non-aesthetic complexes’, shaping modern conditions such as economisation, mediatisation, and rationalisation (Reckwitz, 2017: p. 9). Being creative (McRobbie, 2016) is a dispositif that drives aestheticization in response to the ‘purpose-driven and normative rationalization processes in modernity’ (Reckwitz, 2017: p. 205). Through our description of the creative task below, we will combine these two dimensions to show how creativity is both a morphogenetic process and a dispositif that is a constituent part of the existing, dominant social order. Gabriel’s creative task is therefore potentially ‘doubly’ creative in that it is an emergent act, and yet this emergence is performed within a reality (or dispositif) that enables and constrains what creativity can and should be.

Testing creativity

Having claimed that performing a creative task with GenAI involves a tension between two conceptions of creativity – one as a processual becoming that unfolds through its performance and another as an epistemic reality constructed by institutional actors – we need some kind of descriptive language that would allow us to experiment with this claim. How can we describe the interplay of both types of creativity without losing sight of one or the other? The answer, we believe, lies in the appropriation of a descriptive language devised for an earlier attempt to test creativity.

At one level, this claim sits uncomfortably with a morphogenetic dimension of task. Ingold (2022: p. 409) characterises testing as ‘entirely artificial’ and disembedded from involvement in social life. As we intend to show in this section, the sociology of testing reveals how testing does not merely involve the execution of rules or procedures but can also be conceived, like any other task, as a socially embedded performance that develops in response to an environment through practice.

Drawing from pragmatic sociology, we situate testing as a task that stands out, entangled in normative and political functions, yet contingent on valuation criteria (Boltanski, 2011; Boltanski and Chiapello, 2005; Boltanski and Thévenot, 2006; Stark, 2020). Boltanski and Thévenot (2006) introduce testing to enunciate how actions, objects, and people are constituted through material arrangements. Contrary to formal tests – what Ingold refers to as ‘artificial’ – pragmatic sociology registers testing in everyday life as profound, even if mundane (Boltanski, 2011). The logics of testing now format every aspect of life (Boltanski, 2011; Fürst et al., 2024; Marres, 2025; Marres and Stark, 2020a, 2020b). The result is that our fragmented social world operates as the ‘scene of the trial’ (Boltanski, 2011: p. 25) where ordinary actors continuously suspect, wonder, and submit to the world of tests (Boltanski and Thévenot, 2006).

Testing can also serve as a bridge between morphogenetic and epistemic dimensions of creativity. Testing creativity emerged as a key development that bridged the creativity dispositif’s crystallisation in the early 20th century and precipitated its subsequent crises in the 1960s and 70s. Drawing from early psychometric work, Guilford (1950, 1967) combined the abilities of intelligence and creativity to measure ‘divergent thinking’. While Guilford’s (1950) psychometric research drew on Lewis Terman’s eugenic project of identifying ‘exceptional talent’ (Reckwitz, 2017), he doubted that intelligence tests like IQ (what he termed ‘convergent thinking’) were adequate for measuring creativity.

Guilford, careful not to situate his concepts of creativity and divergent thinking synonymously, influenced a ‘battery of tests’ generated by E. Paul Torrance that led to the development of the Torrance Tests of Creative Thinking (TTCT), originally titled the Minnesota Tests of Creative Thinking (Alabbasi et al., 2022; Torrance, 1966; Torrance and Gowan, 1963; Yamamoto, 1964). The TTCT includes a verbal test (TTCT-V) to measure ‘outbox imagination’ and figural tests (TTCT-F) to more comprehensively measure ‘creative potential’ (Kim, 2017: p. 304). While the TTCT-F adopts Guilford’s components of divergent thinking (i.e. fluency, flexibility, elaboration, and originality), Torrance described the TTCT as broader in scope than Guilford’s divergent thinking tests (Alabbasi et al., 2022). Although the TTCT has been re-normed four times (1974, 1984, 1990, and 1998), occasionally due to criticism of earlier iterations, these creativity tests have remained widespread and influential over the last 70 years or so (Kim, 2006).

This epistemic transformation of creativity into something that can be measured and tested, and as a process that can involve technical devices and scientific methods, has contributed in important ways to the conception of a generalised creativity in the second half of the 20th century by popularising perceptions that anyone has the potential to be creative. It also embedded creativity into a regime of scientometric evaluation and management, instrumentalizing creativity as a social and economic resource (Baer, 1993). Despite its significance, the TTCT has been singled out for its lack of reliability and validity as an empirical test (Weiss et al., 2021): ‘Practically speaking, our results raise serious questions about what the TTCT-F measures’ (Warne et al., 2022: p. 22). Baer (2011a: p. 316) even suggests the field would be ‘better off without the Torrance Tests, at least in the ways they are most commonly used’. Researchers have attempted to overcome some of these limitations by using multiple, diverse, and inclusive measures (Baer, 2011b; Kim, 2006) or by adopting the TTCT’s figural language for other types of tests.

What we propose to draw from the TTCT is how its measures can serve as ‘qualities of judgement’ for testing creativity as a task. In our analysis of Gabriel’s image-making with OpenArt, we use the TTCT subscales (see below) as a guide for describing how the creative process is performed, and if or how Gabriel and OpenArt work together on a task. Rather than use each of these measures to quantitatively score the creative process, we propose adapting them as descriptive qualities for how creativity unfolds as testing. Given their importance in the constitution of the creativity dispositif, we argue creativity tests like the TTCT may provide a useful descriptive language to portray how creativity testing is embedded in the performance of GenAI-mediated tasks. As Ge and Hou (2025) helpfully point out, distinct ideas are generated through divergent thinking, while evaluation and refinement occur through convergent thinking. In the context of their work, GenAI platforms and abstract visual stimuli have been found to deepen creative performance. Similar to this work, we adapt subscales from the TTCT to help us investigate Gabriel’s creative task:

• Fluency: The number of words or drawings (or any other type of content) produced during a task, usually measured within a set amount of time (Ge and Hou, 2025).
• Flexibility: Similar to Guilford (1950), we define flexibility as the ability to respond to changing conditions.
• Elaboration: An ‘ability to develop and elaborate on ideas’ or images (Kim, 2006: p. 4).
• Originality: As a measure related to the ‘novelty of ideas’, originality is an indicator of ‘creative quality’ (Ge and Hou, 2025) through the number of unusual, or original, objects or ideas mobilised in a test (Kim, 2006). As we will see in the case of Gabriel and OpenArt, this measure usually relies heavily on comparison to other executions of the same task, making it difficult to apply to this individual case, but we will briefly address it below.

Results

Using the TTCT-F’s descriptive qualities, we now return to our case study and examine how Gabriel and OpenArt are ‘put to the test’ as a creative task (Marres and Stark, 2020a: p. 423). As we intend to demonstrate through our analysis, testing creativity can involve testing for different things at once. We address three of these, agency, reality and truth, which are encapsulated as three interrelated questions that each emphasise (in italics) a different aspect of what is being tested in the process: Can Gabriel and OpenArt create an image by engaging in this task? Have Gabriel and OpenArt created an image by engaging in this task? Have Gabriel and OpenArt created a good image by engaging in this task? We adapt various elements of the sociology of testing 2 (Bijker et al., 1987; Boltanski and Thévenot, 2006; Boltanski, 2011; Callon, 1986; Marres and Stark, 2020a, 2020b; Pinch, 1993) to help us answer each of these questions, starting with a description of testing agency by drawing on Science and Technology Studies (STS) before moving on to Boltanski’s (2011) truth and reality tests.

Agency: Can Gabriel and OpenArt act together to create an image by engaging in this task?

Gabriel sits at a desk in his bedroom, getting ready – the starting point of any task (Ingold, 2013) – by launching a screen capture tool on his laptop called Zight. It is because Gabriel undertakes this creative task with the help of Zight that we, as researchers, can experience a feeling of co-presence in the accomplishment of the task (see Ingold, 2022; Lesage et al., 2025). Gabriel initially launches Google in his web browser to search for an image. Speaking into his laptop as he searches, he explains the purpose of his task: ‘The movie poster I’m going to edit is from one of my favorite animes I ever watched called Attack on Titan’. 3 Selecting an image from the first season of the show which Gabriel saves to his desktop, he searches Google (‘dall e 3’) and clicks on the first sponsored link for a GenAI platform called OpenArt that claims to be similar to OpenAI’s DALL·E 3: ‘I’m going to use this editing tool to see what I can do’. He drags the image of the television poster from his desktop to the ‘Drag and Drop or Click’ field in OpenArt.

As Gabriel sets out to modify the image, he tests OpenArt’s capacity to adjust the main character on the front of the poster to assess whether a certain technological assemblage works to produce an image. In this test, both human and technology come together to exchange and enhance each other’s capacities to act (Latour, 1999). In following Gabriel’s tests, we take seriously people’s capacity to critique and to justify their actions in critical moments (Boltanski, 2011), including those involved in testing OpenArt. The attempt to work together – to see if it is even technically feasible for Gabriel and OpenArt to create an image – is a test of strength.

For STS scholars, ‘tests of strength’ or ‘trials’ 4 offer a way of conceptualising how scientific controversies are resolved and how technologies are designed and used outside of the laboratory (Callon, 1986; Latour and Woolgar, 1979; Martuccelli, 2015). When engaging in a test of strength, Gabriel is ‘faced with spokespersons and what (or whom) these persons speak for’ (Latour, 1987: p. 78). In this way, Gabriel’s trial embodies projections – or proxies that stand for something – about the operations of OpenArt’s technology (Pinch, 1993; Stark, 2020). Over time, technological objects like OpenArt install and sustain agreements that become ‘difficult to dismantle’ (Boltanski, 1990: p. 92). The result is that people find it difficult to call into question the validity of these technologies and the assumptions that underpin their designs in our everyday lives. Even simple technologies, like doors, or more complex ones like GenAI features, ‘test’ a user’s knowledge and ability to use them (Latour, 1992; Pinch, 1993). Trials can elicit new performances from humans and non-humans, such as how Gabriel and OpenArt work together to produce an image, revealing both possibilities and limitations about the ‘attachments that emerge between the users and their new technologies’ (Lehtonen, 2003: p. 363).

One way to encapsulate how Gabriel engages in a test of strength is through his use of the phrase ‘Let’s see’ or ‘Let me see…’. He uses a version of this phrase seven times in the first 3 minutes of the task (17 times in all over 11 minutes and 30 seconds) as a way to qualify his actions. The frequent use of the phrase also suggests that Gabriel is at a stage of ‘setting out’, or the moment performance begins (Ingold, 2022; Lesage et al., 2025). Gabriel is open to ‘seeing’ what emerges from the morphogenetic process of working with OpenArt (consistent with a Latourian interpretation) because the result of testing is not predetermined and emerges from the ‘agencement’ of humans and technologies.

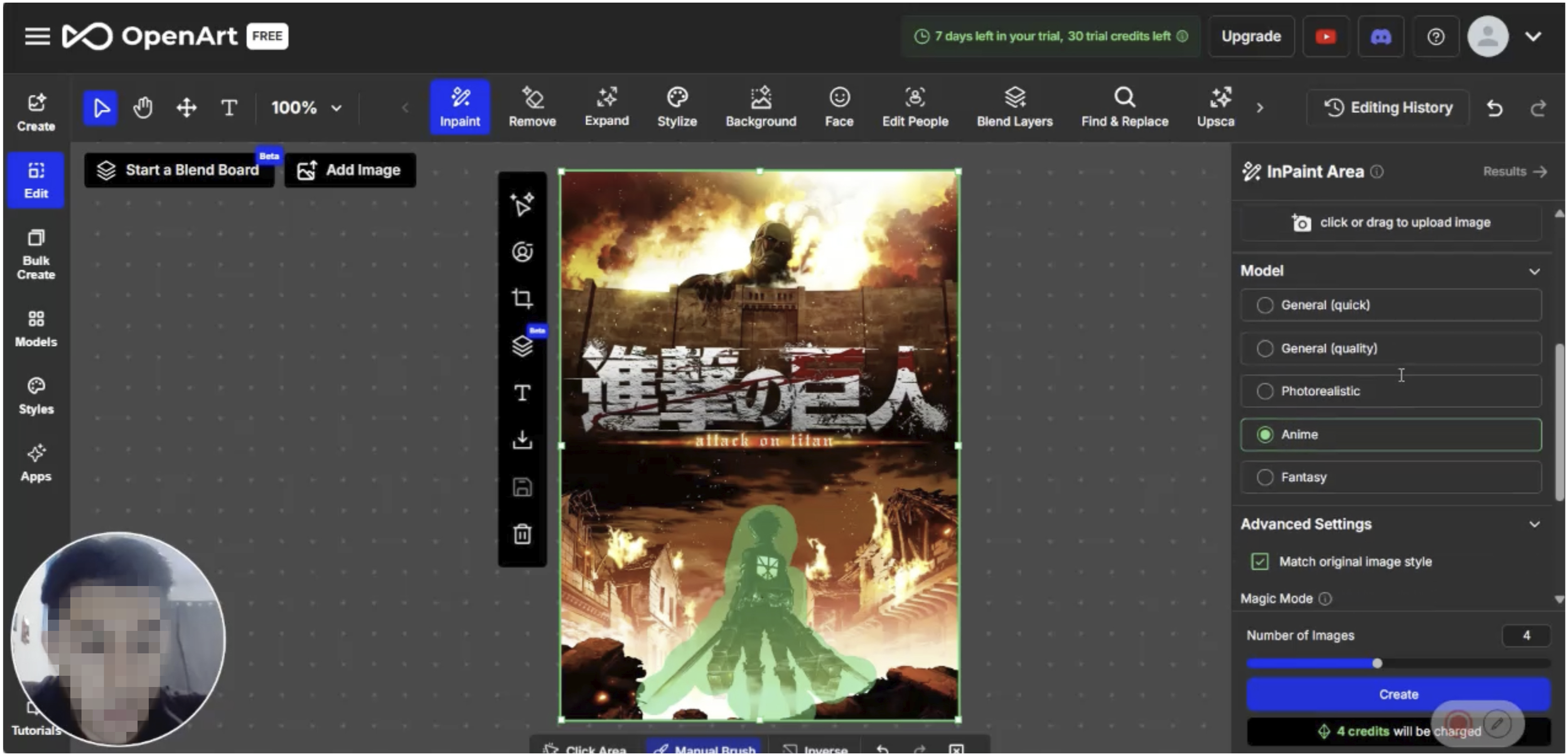

Using the InPaint feature on the right-hand side of the interface, Gabriel highlights the main character from the show with the manual brush, while saying, ‘I guess I’m going to try to make him look a little different’ (Figure 1). Similar to how prompts are used in TTCT to test a research subject’s ability to perform a creative task (Alabbasi et al., 2022), Gabriel uses this prompting feature to test OpenArt’s capacity to create. After entering a textual description of his idea (‘Eren jaeger [sic] founding titan, having a sad look on his face’) and selecting some menu items on OpenArt’s interface, Gabriel clicks the ‘create’ button on the feature window and states: ‘Let’s see what this creates’.

Figure 1. Gabriel working with OpenArt’s ‘InPaint’ feature to produce an image, starting with a modification of the main character on the front of the poster.

At this point, a circular animation appears on the ‘create’ button, conveying to Gabriel that the application is processing his prompt. Gabriel briefly pulls his head back, then curls his fingers together and rests his chin on them as he waits for the machine to generate a result. Soon, the interface shifts to a different graphic animation of an infinity sign swirling over a greyed out window with the words ‘Making wonders…’ written beneath the graphic. When the graphic appears, Gabriel uncurls his fingers and focuses his attention on the screen stating, ‘Ok… let’s see’. As the infinity sign continues to spin, Gabriel scrolls down the feature window, showing four identical versions of the same greyed out window with a spinning infinity sign and the same text. At this point, a tinge of impatience appears on his face as he states, ‘Let’s see if we get anything’. After clicking ‘create’, it takes 58 seconds before Gabriel sees the first image, and approximately a minute and a quarter before all four images are visible. It is here that the descriptive language of testing creativity can again be helpful: while the number of images to be generated is set to four, Gabriel is testing OpenArt’s fluency – how much time it takes to generate this fixed number of images until they begin to appear in each of the four vertically aligned boxes. 5

Once one of the images appears, Gabriel shifts to judging what could be characterised as OpenArt’s ability to elaborate a corpus of images. As Gabriel hovers the mouse over the first image to appear, he smiles and states, ‘Ok, that’s… a little too much’. He scrolls to the next image and dismisses it with a sceptical ‘hum’. One of the windows is still ‘Making wonders…’. He scrolls to the top window and states in a slightly less dismissive way: ‘hum… that’s not bad’. Then he scrolls back down to assess the last image that was still loading, but quickly dismisses it with a ‘No’. He returns to the top image stating, ‘I guess this one looks kind of cool. It looks… yeah, I like this one’ while clicking the ‘select’ button that appears beneath the image. Key to this moment in the creative process was OpenArt’s ability to provide elaborations of the prompts that Gabriel provided.

While the above description represents less than 2 minutes in Gabriel’s execution of the creative task, we see how fluency and elaboration can describe qualities of judgement that enable him to find the ‘intelligibility of the actions and events’ (Suchman, 2007: p. 179) as a creative task that works towards the production of an image. Gabriel is testing to ‘see’ what OpenArt can create with the image-making task at hand. While he is not using the descriptive language of TTCT creativity tests for this engagement, being attuned to qualities of fluency and elaboration helps us describe and understand the dynamics of how he tests creativity with OpenArt as a test of strength. Had OpenArt not been fluent enough, or had Gabriel deemed its elaborations insufficient for the purposes at hand, this test of strength would likely have been judged a failure. Returning to our original question of whether Gabriel and OpenArt can pragmatically work together to achieve this task, the result is a resounding yes, as Gabriel can sit there, articulate an intended outcome, and OpenArt can ‘perform’ its role as a creative tool in a way that suits the given situation.

Truth: Have Gabriel and OpenArt created an image by engaging in this task?

As we’ve established above, the material process of creating the image contained enough of a morphogenetic condition to warrant being creative as it was generated through a process of interweaving human capacities and technical forces whose outcome wasn’t predetermined. However, testing needs to be understood as more than a test of strength because of its institutionalisation, and, as we will show later, its banalisation as part of everyday life (Marres and Stark, 2020b). We must therefore return to the epistemic dimension of the creative process through what Boltanski (2011: p. 103) would describe as a truth test – instances of confirmation ‘endowed with a semantic function’. While not critical, truth tests are encouraging as they tautologically confirm existing states of affairs and their related symbolic orders (Boltanski, 2011). Such testing is usually a mandated examination imposed by a powerful state or corporate institution, serving as an apparatus of control and domination that reaffirms this institution’s place within the established social order. In this case, the truth being tested is whether this task is indeed creative.

Traditional art worlds (Becker, 1982) or creative industries may serve as a useful starting point for identifying the institutions through which such testing might confirm creativity. But Gabriel isn’t a recognised artist. He doesn’t hold a creative occupation or job (Ashton, 2022) like working in a gallery or other established artistic institution. Gabriel is, however, working with the GenAI image-making platform OpenArt. Far from an abstract entity – what Boltanski (2011: p. 84) refers to as a ‘bodiless being’ – GenAI platforms embody institutions as ‘spokespersons’ that confirm a particular form of creative truth. Institutions serve to confirm specific constructions of truth, making creativity ‘inextricably bound’ to the institutionalisation of the task (Boltanski, 2011: p. 51). It is instructive to consider if and how OpenArt is designed as a platform, with institutional infrastructures and interfaces (Schober, 2022: p. 156) that remediate a discourse of creativity and the conventions of image-making practices.

In terms of discourse, some of the words and phrases incorporated in the interface denote and/or connote creativity. When Gabriel first enters the interface, OpenArt greets him: ‘Hi Gabriel! Ready to get creative?’ Above the greeting, there is a banner that says, ‘Unlock a year of limitless creativity with all annual plans’. Below the greeting, OpenArt guides users to select a primary task: image-making or storytelling. Filters, sketching, image-blending, and sticker generation are in an OpenArt tab called ‘Creative Concepts’. When Gabriel is waiting for images to load, the text accompanying the animations states that ‘wonders’ are being made. This kind of discourse symbolically aligns the process to a popular conception of creativity.

Its use of this creativity discourse also helps OpenArt exhibit qualities of flexibility – shifting in response to changing conditions – for Gabriel’s image-making task by referencing different terminology from a variety of image-making practices. This flexibility is exemplified when Gabriel tests what options are available for modifying the image with ‘InPaint’. Below the ‘Prompt’ box, there is a ‘Negative Prompt’ field where users can describe items they wish to exclude from their image, and an ‘Image Guidance’ area where people can upload images and allow AI to ‘fill in’ space. ‘Magic Mode’ allows people to adjust prompt adherence, steps, mask grow, and mask blur. While Gabriel leaves these features untouched, he does test OpenArt’s flexibility with the model category feature. With the main character still highlighted, and his prompt written (see above), Gabriel scrolls through various model categories, from ‘General’ to ‘Photorealistic’ to ‘Fantasy’, before selecting the ‘Anime’ category.

Flexibility with OpenArt is partly the result of having been designed to provide a ‘glut’ (Lesage, 2018) of features and functions that remediate the discourse and established cultural conventions for image-making. Gabriel’s specific image-making task is facilitated in part by his choice to work on a poster from an anime series – anime being a popular Japanese animation genre that is prefigured in the interface’s feature glut through the ‘anime’ model button. Gabriel can draw a categorical association between his chosen image, its genre, and the names of features in the platform that align with this genre. The popularity of anime, and this specific anime series, also facilitates the creative process when the interface corrects the spelling in Gabriel’s prompts. For example, when Gabriel writes a prompt to modify the character (see above), the platform’s ‘familiarity’ – its access to an extensive library of training data and other informational resources – makes it possible for OpenArt to autocorrect Gabriel’s spelling of the anime character from ‘eren jeager’ to ‘Eren Jaeger’. OpenArt’s familiarity with anime and this series is visible on the homepage, where there is an area to ‘see what others are creating’. A simple search returns hundreds of created artworks with tags for ‘anime’, ‘Attack on Titan’, and ‘Eren Jaeger’ (see Figure 2).

Figure 2. Gabriel tests OpenArt’s flexibility through model features exemplifying glut (i.e. General, Photorealistic, Anime).

The way generative art can be produced with the click of a button, as Gabriel does above in his generation of the ‘Attack on Titan’ poster, may lead us to think originality is no longer relevant. In this context, it is helpful to consider how the definition of originality has shifted over the last 75 years and how this shift affects how quality is judged in image-making practices. The definition of originality that Torrance (1988) used to develop the TTCT relied on more romanticist and modernist interpretations of the term that dominated in mid-20th century America, emphasising aesthetic invention and novelty (Reckwitz, 2017). As a test, the TTCT treated originality as a quality that could be quantitatively assessed by comparing the different answers given by test respondents, attributing more value to ‘statistically infrequent ideas’ (Kim, 2006: p. 5). A more thorough exploration of the historical shifts that led away from this dominant conception of originality is beyond the scope of this paper, but one relevant shift is the emergence of a digitally enabled remix culture (Bardzell, 2007; Lessig, 2008; Manovich, 2007).

Deeply entwined in the structural conditions of mediatisation (Reckwitz, 2017), remix culture is an example of the expansion of the creativity dispositif to include digitally mediated experiments with creative tools as creativity. How Gabriel and OpenArt create a remixed image constitutes a widely recognised creative practice, even if it isn’t performed by an anime artist for its use in an anime feature. The vernacular process of combining and recombining images taken from popular culture becomes a ‘true’ creative act. In this way, the production of images with OpenArt may be a ‘unique’ recombination, but that hardly makes the image ‘original’ (Voigts, 2021), at least according to the original definition developed with the TTCT. This very remix, as a recognised creative practice, undermines the foundations of ‘statistical infrequency’. Instead, with GenAI, originality becomes something that emerges from the novel interplay of easily accessible content taken from popular culture – what we might call ‘statistically frequent ideas’. The more frequent the ideas, the more likely OpenArt has sufficient data to enable the recombination of materials into ‘new’ images. In light of the above discussion, the answer to the question for testing truth is that Gabriel and OpenArt have indeed created an image. If this were earlier in the historical development of the creativity dispositif, maybe this creative task would not have passed a truth test of creativity. 6

Reality: Have Gabriel and OpenArt created a good image by engaging in this task?

Testing should not only be understood as a task whose outcome is entirely predetermined by powerful institutional actors. While testing can broadly refer to the ‘way reality is shaped’ (Bogusz, 2014: p. 135), testing reality puts disputes to the test. Unlike testing truth, testing reality entails ‘tests exploited by critique’ (Boltanski, 2011: p. 103). Critique can ‘take advantage’ of reality tests in two ways: (1) through evidence that supports claims; and (2) through the ability to locate ‘inconsistencies between the logics governing different tests in different spheres of reality’ (Boltanski, 2011: pp. 106–107). Whereas truth tests reinforce social order, reality tests confirm or establish the ‘order of critique’ (Boltanski, 2011: p. 106). Gabriel engages with these forms of critique as he sets out to create a good image with OpenArt.

Once Gabriel is finished modifying the main character (see above), he sets out to change the background of the image. In the toolbar, Gabriel changes his selection from ‘InPaint’ to ‘Background’. This change tests OpenArt’s flexibility to adjust to the changing conditions of creativity. Rather than removing the background, Gabriel selects the option in OpenArt to ‘change’ the background, speaking to us as he selects the button: ‘I’m going to try to make it look more current, just like the show looks today’. Placing his cursor over the text box, he types a prompt that describes the most current season of the show: ‘colosal [sic] titan six of them, families running away, homes being destroyed’. After typing the prompt, Gabriel says, ‘Okay, that’s kind of realistic of the show’. In this case, the object (colossal titan) is made to stand out better with a ‘realistic’ background, in such a way that it would qualify as a work of art (Fürst et al., 2024).

After OpenArt autocorrects Gabriel’s spelling of the word ‘colossal’, he clicks the ‘select’ button to create the images. After four images appear, he scrolls through the options, saying: ‘There has to be something’. Gabriel intently looks at one of the images. There is a single colossal titan, but no ‘families running away’ or ‘homes being destroyed’ as he described in his prompt. Displeased, he says, ‘Nah. It kind of looks off’. He removes the ‘Background’ tool and returns to ‘InPaint’, where he highlights the bottoms of the image to the left and right of the main character. He writes a prompt: ‘people running away, titans from attack on titan anime’. Gabriel’s prompt once again tests OpenArt’s ability to elaborate, this time explicitly within the confines of the anime genre. He changes the model type to ‘Anime’ and clicks ‘Create’: ‘Let’s see if that’s enough’. After OpenArt returns the first background image, Gabriel says, ‘Yeah, okay. That’s kind of what I want’. Unlike the ‘Background’ tool, ‘InPaint’ has successfully added people running away in the background of the image.

Gabriel selects the second image that OpenArt produces to look closely at it, but quickly dismisses it because there is a character in the background that looks identical to the main character on the front of his image: ‘That kind of looks like him’. Eventually, Gabriel selects the first image option. It displays two people running away on the right, and two characters embedded into the scene on the left-hand side. He leans toward the screen on his laptop to inspect the image. After a few seconds, he sits back a bit and states, ‘It’s perfect’.

As he considers the image he is creating with OpenArt, Gabriel sets out to make one final modification to the background. He selects the ‘InPaint’ feature and draws a green rectangle in the sky, just below the titan and slightly above the main character’s head. He types one final prompt: ‘airplanes crashing and burning’. This time, in addition to the ‘Anime’ model, he uses an ‘Advanced Setting’ option to ‘Match original image style’. Once again, OpenArt generates four images. The first aeroplane looks cartoonish: ‘It kind of looks funny’. Gabriel turns to the second image, which shows what appears to be a commercial airliner on fire: ‘Nah, it looks weird’. He finally selects the third image, which contains an aeroplane with fire emerging from the jet: ‘Yeah, that looks good. I like it’.

With testing reality, there is always the potential of ‘disruptive effects’ that reveal ‘dimensions of reality that might be called forgotten’ (Boltanski, 2011: p. 106). One reality that is tacitly tested in this creative task is its commoditization. The extent to which OpenArt elaborates on any creative task – how many different versions of an image it generates – is contingent on the platform’s business model for resource allocation. At the start of the task, OpenArt reminds Gabriel that he has 30 credits and 7 days left in his free trial. As Gabriel selects ‘Create’ for the first time (see tests of strength), the interface reminds him that the cost to produce four images is ‘4 credits’. The number of credits taken off matches how many images OpenArt creates each time Gabriel clicks ‘select’. OpenArt’s commoditization of elaboration through credits helps explain why Gabriel keeps the default setting to four images throughout the task. As Gabriel and OpenArt work together, his credit balance reduces from 30 to 26 to 14. Gabriel does not challenge the reality of the commoditization of elaboration. It is unclear what would have happened had he reached the end of his credits before he could have completed the image to his satisfaction. He spends his last credit as he adds the aeroplane to the top of the movie poster stating: ‘How do we create it?’ He then saves the image, downloads it, and says, ‘Okay, that looks good’, and closes Zight (see Figure 3).

Figure 3. The final image created by Gabriel and OpenArt.

The only judges of this reality test, at the moment of the task’s completion, are Gabriel and OpenArt. If Gabriel decides the image is good, there are no other agents in place to challenge the reality of this judgement. It is at this point that we reach the methodological limits of the approach taken, given that the task itself is prompted by the researchers rather than emerging from a more ‘natural’ situation in which conditions of evaluation would contribute to the implementation of the reality test. To the researcher, it remains unclear what criteria Gabriel applies to ascertain that the result ‘looks good’. Meanwhile, OpenArt does not share with us if or how it has judged the image created.

On one level, this test reinforces the reality of a certain kind of general yet bureaucratically technicist and insular creativity that some have accused the TTCT of fostering (Baer, 1993): individuals (in this case, Gabriel) are free to apply whatever aesthetic or other criteria they see fit to make an image, but within artificial situations that are isolated from their everyday experiences. Meanwhile, a separate institutional apparatus (in the case of the TTCT, the researchers conducting the test; in this case, OpenArt, its designers, and we the researchers who witness the task) is able to apply its (or our) own independent assessment criteria without having any direct bearing on the execution of the task, while nevertheless testing realities that rely on its very performance.

Conclusion: A critical question for testing creativity

In the introduction, we claimed that what is at stake in how we collectively describe and understand creative tasks is their ‘states of affairs’ (Boltanski, 2011: p. 103) and, by implication, if or how we can critique their performance. We then demonstrated how to describe creative tasks involving GenAI as testing creativity in order to produce a different perspective on their states of affairs. To achieve this description, we adopted and adapted measures from 20th century creativity tests – fluency, flexibility, elaboration, and originality – and applied them as qualities of judgement for testing creative agency, truth, and reality.

If creative tasks with GenAI can be described as extensions of a long tradition of intelligence and creativity tests as well as extensions of the kinds of computational tech trials described by Marres (2025), the most complicated question that remains to be asked is: does this new descriptive language provide helpful points of entry for a critique? A preliminary answer to this question is possible by returning to a consideration of the morphogenetic and epistemic dimensions of creativity defined above.

Considering the morphogenetic dimension of the creative task sets up a way to clarify some of the asymmetries (Fuller and Goffey, 2017) between human and machine. The case has certainly allowed us to describe a certain kind of co-creative process (Oppenlaender, 2022) in which human and machine intermesh to create an image. But what also becomes apparent is that there exist asymmetries in this intermeshing, including how GenAI works as an intermediary between humans and the repository of cultural resources through which it is ‘trained’. The example of Gabriel’s use of the ‘anime’ category in OpenArt illustrates this well. GenAI instrumentalises a rapport to entire assemblages of visual culture into a single feature that Gabriel can test through qualities of judgement like fluency and flexibility. However, these qualities seem to be focussed on the feature’s timely execution as a technical feat rather than as a meaningful engagement with the way this repository was assembled or how aspects of remixing it have been configured prior to its execution. More importantly, there also exists a tacit asymmetry with respect to what, if any, qualities of judgement are used by OpenArt to evaluate Gabriel’s use of its service: what data does OpenArt collect from this intermeshing and how are these data analysed in ways that enable and constrain the performance of future creative tasks?

With respect to the epistemic dimension, the critical implication raised by this case study is to draw attention to the confluence of, on the one hand, what Marres (2025) calls the increasing implicitization of computational test environments in everyday life with, on the other hand, the creativity dispositif. It is possible that we have entered a new phase in the development of the creativity dispositif in which these computational test environments become the dominant setting for reaffirming creative truth and reality. From a methodological perspective, this possibility raises questions about researchers’ complicity in fostering this kind of confluence as part of their research designs and what kinds of methodological interventions could facilitate the kinds of ‘emergence testing’ (Marres, 2025: p. 14) that foster more critical scrutiny. One potential avenue would be to explore how the qualities of judgement like fluency, flexibility, elaboration, and originality could be reimagined and reconfigured as explicit practical categories for counter-testing and evaluation.

The empirical limitations of this single case constrain our ability to answer this question further. Nevertheless, our objectives in this paper were to draw attention to a taken-for-granted reality for engaging in creative tasks with GenAI – how its performance is embedded in computational test environments – and to demonstrate how the language of testing creativity might provide a foothold to begin the difficult work of better describing this reality and, in turn, developing tools for its critique.

Acknowledgements

The authors would like to thank the members of the Digital Democracies Institute’s Creative Labor, AI and Democracy working group for their comments on early drafts of this paper.

Ethical considerations

This study, Searching for Digital Skills (

Consent to participate

The participant underwent an informed consent process before participating in the study.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The ethnographic data from the study is not available to the public and may not be shared due to IRB protocol.