Abstract

Artificial Intelligence (AI), and particularly Generative AI such as ChatGPT, has recently attracted media attention, both for its potential negative impacts (e.g. job losses, promotion of misinformation) and positive impacts (e.g. enhanced efficiency, easy access to information) on various sectors of society. We explore science journalists’ views of AI and how they are adopting and using these new technologies. A survey methodology allowed exploration of differences between journalists in Brazil, India and the UK. The study found widespread adoption of AI tools for relatively straightforward tasks (e.g. transcription, writing support). Although there is an appetite for more sophisticated use (e.g. data analysis), fears around the accuracy of AI appear to temper adoption. Significant differences were seen in the adoption of AI tools across countries, with Brazilian science journalists more widely adopting tools to support writing in a second language and journalists in India and Brazil making more widespread use of sophisticated tools than those in the UK. British journalists appeared more reluctant to adopt AI tools. The study captures a transition in science journalism practices; increases in the capabilities of AI are changing working practices. Our findings indicate that the complexity of AI and its potential lack of compatibility with journalistic values, such as accountability, are at least partially responsible for the lack of use of AI for more sophisticated journalistic tasks.

Keywords

Introduction

Artificial Intelligence (AI) has been described as the ‘...Industrial Revolution of knowledge work…’ due to the way it has transformed how data are analysed and synthesised and how content is created (PwC, 2024: 3). PricewaterhouseCoopers’ 2024 AI Jobs Barometer reported that adverts for AI jobs are growing 3.5 times faster than all other jobs globally, and jobs requiring AI skills carry a 25% wage premium in some sectors (PwC, 2024). In their essay, Dodds et al. (2025) argue that advances in AI technology are leading to long-term changes in journalistic working practices and a transformation in what it is to be a journalist. AI-based news anchoring is already rapidly transforming Asia’s electronic mediascape, catering to a multilingual landscape. After China’s AI news anchor debut in 2018, India, Indonesia, Taiwan, Malaysia, and Kuwait followed suit in 2023 (Kaur, 2023), with newsrooms using virtual anchors to present weather and agriculture issues (Choudhury, 2025; Kaur, 2023).

Individual journalists and news organisations are expected to be answerable for the content they produce and the methods they use. However, the algorithms behind generative AI (genAI), and their potential to obscure where information has been sourced from, make editorial processes more opaque (Porlezza and Schapals, 2024; Moran and Shaikh, 2022). There are also concerns that AI in journalism may perpetuate biases and amplify the spread of disinformation (Porlezza and Schapals, 2024), as well as broader fears about the erosion of editorial quality (Beckett, 2019; Moran and Shaikh, 2022). At the same time, the news-reading public is concerned about AI use in news production. A recent Reuters Institute for the Study of Journalism survey found that 47% of European respondents and 52% of US respondents said they were uncomfortable with news mainly produced by AI with some human oversight (Newman et al., 2024).

Even in today’s fragmented digital world, traditional media are still an important source of news about science among the public (Department for Business, Energy and Industrial Strategy, 2019; Weitkamp, 2014). Science journalists cover topics in which public awareness is important, such as health issues and climate change, topics which have also been subject to concerns about mis- and disinformation (Piatek et al., 2024). Science journalists also cover topics about which there is political and/or ethical debate, such as genetic modification and AI technologies. In an increasingly polarised online environment, science journalists have an important role to play in enabling evidence-informed debate. They also act as watchdogs, holding scientists and scientific institutions to account (Fahy and Nisbet, 2011). Given their role in society, the working practices of science journalists are worthy of attention. Furthermore, the topic of AI falls within the science journalist’s beat, and this group may be expected to be better informed about the technology than journalists in general, yet studies suggest that science journalists make limited use of sophisticated AI tools (Dijkstra et al., 2024). In this context, Tatalovic (2018) points to an emerging practice of using AI tools to produce science press releases and simple science news stories, arguing that science journalists remain largely unaware of such practices and are failing to engage effectively with the potential ways that AI could enhance reporting. While there is a growing body of literature exploring the use and perceptions of AI by journalists in general, research into AI use and perceptions of AI specifically by science journalists is lacking.

It is against this background that this research presents the results of an online survey distributed among science journalists in the UK, India, and Brazil, investigating their use and perceptions of AI. This survey provides an initial exploration of the editorial tasks in which AI is being employed by science journalists on three continents, as well as the journalists’ thoughts on the opportunities and risks that AI use in science journalism presents.

Literature review

Although science journalism is a distinct news beat and may be expected to have commonalities of practice across different countries, nuances of culture and practice may shape the ways and speeds with which science journalists adopt new technologies. For example, science journalists working in languages other than English may often need to undertake a double translation role, translating the English of scientific discourse into their native language and at the same time translating the language of science into the language of their readers. These journalists may adopt AI tools that provide translation, including of audio content (e.g. NotebookLM), in greater numbers than journalists operating mainly in English. Furthermore, AI tools are addressing challenges faced by multilingual countries, such as India. AURA, an AI-powered conversational research tool from the JournalismAI project, for example, is being adapted to work with regional languages such as Marathi, Hindi, and Bengali (Maseko, 2025). On the other hand, many AI tools are initially produced in English, presenting a challenge for those needing to access such tools in other languages where they may be either absent or of lower quality (Kshetri, 2024). Financial resources available to science journalists and publishers in middle-income countries could also affect the adoption of AI technologies. For this reason, we adopted E.M. Rogers’ Diffusion of Innovation theory (DoI) as a paradigm through which to study science journalists’ adoption and views of AI in Brazil, India and the UK.

Rogers (2003) argues that when new innovations (ideas, technologies and practices) emerge, those who might benefit from these technologies must decide whether and how to adopt them. To do so, they must first be aware that the technology exists, and this in turn requires communication of those innovations to potential adopters. DoI identifies four key components: the innovation (in our case AI tools suitable for journalism), communication of the innovation, the channels through which the innovation is communicated and the time it takes to spread within the social system (in this case science journalism). DoI suggests that individuals’ perceptions of the relative advantage of a technology affect how readily it is adopted, and this may be particularly true at the ‘awareness’ stage when science journalists, for example, must ‘be knowledgeable of an innovation before decision making’ (Zhang and Feng, 2018: 1283).

Given the key role of communication in the adoption of technologies, and the fact that AI technologies themselves fall within the scope of many science journalists’ ‘beats’, it seems likely that science journalists will be aware of these technologies. Familiarity with them may be greater in contexts where they are seen to offer specific advantages (e.g. language translation in India and Brazil) or where such technologies might be more visible (e.g. India where an AI anchor has been deployed (Kaur, 2023)). Familiarity, of course, may not always be positive and the adoption of AI tools by science journalists offers both opportunities and risks. In the UK, for example, there has been much public discourse on the risks associated with AI, particularly in relation to job losses. Public concern about products and services that use AI is notably higher in the UK than in India and Brazil (Ipsos, 2024). This negative reporting might also be expected to influence the views of science journalists toward such technologies. In contrast, while journalists in India display a level of scepticism towards AI technologies, they are pressured by both the state and start-up companies to adopt these technologies. This reflects in part India’s emphasis on developing its technology sector, where it seeks to be an innovation leader. The discourse around AI in Brazil has been balanced to date, with a focus on both benefits and risks.

Rogers identifies five attributes that affect the speed of adoption of new technologies: their perceived relative advantage compared with existing approaches; their compatibility with the existing needs and values of adopters; their complexity (how easy they are to understand and use); the opportunity to try and test the innovations; and the degree to which the end products of the technology can be observed by others. In the context of science journalism, AI may offer economic and practical advantages by, for example, reducing repetitive tasks (e.g. transcription), as well as creative advantages, such as enabling new forms of journalism (e.g. interrogation of large data sets). In India, mandated use of technology may increase its adoption. Regarding compatibility, challenges may arise if the use of AI tools raises ethical or legal concerns, while tools which meet defined needs (such as transcription) may be seen as compatible with journalistic norms and values. Adoption of technologies tends to increase if they can be trialled, even if on a limited basis. With many AI tools initially offered free, or with limited-feature free versions, the industry has sought to make it easy for journalists to experiment with such tools. Finally, the black-box nature of the computational science underpinning AI technologies means that while they may be simple to use, the hidden mechanics of how they work are often complex and opaque. Together, these five features paint a complex picture of the likelihood that science journalists will adopt AI tools and one worthy of unpicking.

While some of the more sophisticated AI technologies may be adopted at the organisational level (for reasons of cost), many of the simpler AI tools (such as Otter) are more readily available to individuals. Rogers (2003: 136) suggests that such innovations ‘are generally adopted more rapidly than when an innovation is adopted by an organization’. The rate of adoption of AI tools by individuals is affected by how they find out about such tools. Rogers argues that tools which receive media attention are more widely adopted than tools which require interpersonal communication. The current media landscape tends to talk about AI in general and rarely specifically discusses tools that might be useful in professional capacities, suggesting that journalists will learn about specific tools through interpersonal networks, slowing the rate at which technologies are adopted.

Drawing on DoI, we therefore identify the following research question:

AI tools: Opportunities and challenges

Given the key role of technology affordances in DoI, we sought to understand both the opportunities and challenges associated with the adoption of AI technologies for journalists. Rogers (2003) notes that the relationship between the need for an innovation and the adoption of that innovation is not straightforward, and he argues that these technologies often widen socioeconomic gaps. Likewise, a focus on quality in science reporting may raise concerns about the quality of AI-generated materials, particularly as worries around misinformation and AI hallucinations bring such issues to the fore (though perhaps less so in Brazil, where the media discourse around AI appears more balanced). For example, Schäfer (2023) points to concerns about the speed and volume of errors, produced in a context of diminished transparency yet with an appearance of greater reliability, raising concerns about a rise in fabricated scientific information and a dilution of true knowledge.

Several studies highlight the potential utility of AI tools, for example, to increase efficiency and productivity in the newsroom (Guanah et al., 2020; Guenther et al., 2025; Noain-Sánchez, 2022; Noor and Zafar, 2023). Noain-Sánchez (2022) found that adopting AI tools could increase job satisfaction by freeing journalists from repetitive tasks and allowing them to focus on qualitative and investigative reporting. Likewise, Guanah et al. (2020) argue that automated journalism can offer benefits, particularly in terms of time-saving, reducing bias, and increasing accuracy, while Jamil (2020) notes the potential of AI to assist with producing multilingual stories and reducing errors in texts. Noain-Sánchez (2022) argues that AI tools have a role to play in fact-checking and in the detection of deep fakes, an aspect also discussed by Beckett and Yaseen (2023) who found journalists expect AI to influence fact-checking and disinformation analysis in the future. In a European study, Dijkstra et al. (2024) identify science journalists as holding a ‘mildly positive’ view of the potential of AI. A similar picture emerges amongst Pakistani TV journalists (Noor and Zafar, 2023).

Beckett (2019) identified a range of challenges to the widespread adoption of AI tools in journalism, including financial limitations and low internet penetration rates, particularly in some parts of the Global South, the relatively low availability of AI tools in languages other than English and a fear of job losses. A follow-up survey published four years later (Beckett and Yaseen, 2023) found the social and economic benefits of AI use in journalism were still concentrated in the Global North. Guanah et al. (2020) point to a need for Nigerian journalists to adopt AI tools to avoid being left behind in a world of automation, while Jamil (2020) particularly points to lack of economic resources and poor availability of local data as challenges to journalists in Pakistan. In India, while the use of AI news anchors in local languages is increasing, they are also criticised for reinforcing gender bias (Choudhury, 2025). This aligns with a study reporting the pervasive nature of gender biases in AI news imagery across digital media (Chen et al., 2024). Other concerns raised include a risk that AI will limit creativity and skill development (Noor and Zafar, 2023). Wu (2024) found that journalists are using their agency in determining how AI is being used in newsrooms, adopting practices such as verifying and editing AI outputs to maintain journalistic standards. This leads to our second research question:

Ethics and threats to journalism

Strong journalistic norms may raise particular ethical concerns with the adoption of AI tools, as well as concerns around legal issues. Such concerns may reduce the perceived compatibility of AI tools with journalistic practice. However, given the governmental pressure to adopt AI technologies in India, we are interested to understand whether there are differences between countries in science journalists’ perceptions of the compatibility of AI technologies with journalistic roles.

Questions around the ethical use of AI emerge as a concern for journalists (e.g. Gutiérrez-Caneda et al., 2024; Noor and Zafar, 2023) and journalism (González Esteban and Sanahuja, 2023), particularly in the ways that AI technologies may affect core tenets of journalism, such as truth and independence. Diakopoulos (2019) points to the new skills journalists will need to make effective, legal, and ethical use of AI, while Ufarte Ruiz et al. (2021) identify the need for journalists to consider issues around authorship and transparency when making use of genAI. To this end, González Esteban and Sanahuja (2023) and Mahony and Chen (2024) point out the importance of journalists understanding the algorithms used by AI, including their potential to perpetuate or introduce bias. Further, there is a need for the creation of ethical guidelines that cover aspects such as data privacy, content manipulation, and algorithmic biases (Aissani et al., 2023; Gutiérrez-Caneda et al., 2024). As Noor and Zafar (2023: 1646) explain, ‘As newsrooms navigate this technological shift, prioritizing transparency, accountability, and societal well-being is crucial for maintaining the ethical standards that underpin journalism’s role in society’. At the same time, concerns have been raised about the replacement of journalists by AI-created news (Aissani et al., 2023) and associated job losses (Beckett, 2020; Guanah et al., 2020; Jamil, 2020; Peña-Fernández et al., 2023). Given this backdrop, we sought to explore journalists’ perceptions of ethical and legal issues relating to the use of AI, leading to our third research question:

Methods

AI has the potential to transform the way science is communicated (Schäfer, 2023), by informing the way practitioners, such as science journalists, work (Dijkstra et al., 2024) or by being used by the public for seeking information (Greussing et al., 2025). This study focused on the adoption of AI tools by science journalists for several reasons. Firstly, as a technology itself, AI is a subject that falls within the beat of many science journalists and so this group may be expected to be familiar with both technological and societal debates about AI technologies. Further, science journalism is also an area of journalism that may adopt more sophisticated data analysis tools (e.g. for trend spotting, data-driven journalism) as the beat often encompasses complex interactions between science, technology and society. These factors could lead science journalists to be early adopters of this technology.

Journalistic practices are shaped by the social and political contexts in which journalists work, which differ between countries (Hanitzsch et al., 2025). At the same time, there is some international consistency in terms of who might be classified as a science journalist and there is a single world federation for these specialists (see e.g. https://wfsj.org/) which helps to provide some alignment in the job role across different countries. This facilitates a comparative study of science journalism practices and perceptions in relation to AI in different countries.

Consistent with a comparative study, this research adopted a quantitative survey methodology to enable direct comparison between countries. Survey questions were designed to shed light on the way that journalists are adopting AI and to allow comparison across countries (Brazil, India and the UK), drawing on diffusion of innovation theory (Rogers, 2003), as outlined above. They were developed through our work with science journalists (N = 42) engaging with the project through a variety of means and by drawing on the extant literature on journalists’ use of AI, including surveys of general journalists undertaken as part of the Journalism AI project (https://www.lse.ac.uk/media-and-communications/polis/JournalismAI). A draft survey was reviewed by the project team (comprising academics and practising science journalists) as well as the project advisory group (practising journalists).

The final survey comprised 20 questions, some of which included multiple statements. Six-point Likert-like rating scales were used (Strongly Disagree to Strongly Agree) to force respondents to choose a position, rather than allowing a neutral response. The survey also included closed questions (yes, no and unsure/don’t know or ‘prefer not to say’ options), multiple choice (tick all that apply) and open questions. A definition of AI was offered at the start of the survey:

‘For the purposes of this survey, artificial intelligence (or AI) can be defined as the process of “creating computing machines and systems that perform operations analogous to human learning and decision-making” (Castro and New, 2016: 2). It includes machine learning (where a system is able to use experience to improve) and natural language processing (interaction between computers and humans using human languages, including chatbots)’.

Respondents were asked to rate their agreement with statements about the uses and impacts of AI on science journalism. These covered seven indicator areas identified in previous studies and our wider work with science journalists: Opportunities presented by AI tools (e.g. Currently available AI tools can effectively identify trends in data by time periods, geography or demographics); Utilitarian features of tools (e.g. A large volume of data can be analysed effectively and summarised in a matter of minutes or seconds by AI tools alone); Quality issues linked to AI tools (e.g. AI tools can be used to assess the credibility of news); Threats posed by AI tools (e.g. AI and automation is a threat to science journalists’ jobs); Inclusivity issues (e.g. AI is a greater threat to science journalists jobs in the Global South than in the North); Legal issues (AI tools could increase the risk of libel) and Ethical issues (I am concerned about ethical issues that may arise from using AI tools).

In all cases, respondents were asked to consider the statement specifically in relation to science journalism. A separate section of the survey presented a set of statements relating to training in the use of AI tools (using the same Likert-like scale). Multiple choice questions asked about journalists’ familiarity with the use of AI in news settings, including which tools they personally use and how they use AI tools. Open questions explored in more detail: how journalists use the specific AI tools they had previously indicated they use; what, if any, biases AI tools might address; journalists’ hopes for the use of AI tools in science journalism; journalists’ fears about the use of AI tools in science journalism. A final section of the questionnaire explored demographic factors. These included: the topic areas covered by respondents; the length of time respondents had worked in science journalism; employment status (e.g. work for media organisation, work freelance, work as illustrator, with open comment boxes to explain some answers) and separately full/part time employment status; gender and whether they consider themselves to be from a minoritised group. We also collected information on the country in which their work is primarily published.

Once the questions had been agreed in English by the research team, they were shared with science journalists advising the project (2) for review and further comment. A few minor wording issues were addressed and the questions were then input into Qualtrics to create an online survey. The English language survey was translated into Portuguese by LM and LN. Both surveys were distributed electronically. The English language survey was distributed by: Association of British Science Writers (inserted in their newsletter); Science Journalism Association of India; through the personal contacts of the research partners, including to journalists involved in earlier phases of the project, and through the social media accounts of the project staff and associated organisations. All social media posts and more general promotion of the survey highlighted that respondents should work as science journalists or science writers. The Portuguese language survey was distributed by the Brazilian Network of Journalists and Science Communicators, to stakeholders and via social networks. Both surveys were distributed and collected data during the same time period (29/2/2024–15/4/2024) to avoid the potential for breaking news on AI to impact respondents differently if they were responding in different time periods (no such news was identified that might have affected the responses). The survey contained an embedded information and consent form, alongside information on data protection. Ethical approval was received from the Research Ethics Committee of the University of the West of England Bristol [HAS.23.02.066].

In total, 103 responses were received to the English language survey and 109 to the Portuguese language survey. However, on cleaning the data, a large number of blank responses were encountered. Entirely blank responses were removed, but partial responses were retained within the data set if the respondent had fully answered the first question. Once completely blank responses were removed, 47 English language and 66 Portuguese language responses remained. Where no information was provided about the country in which the respondent worked, we used the IP address of the respondent to determine where the survey had been completed. We used this as a proxy for the country in which the respondent’s work is primarily published (14 responses, comprising seven from Brazil, four from India and three from the UK), recognising that this might introduce errors.
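
The cleaning rules described above can be sketched in a few lines of Python. This is an illustrative sketch only, not the procedure actually run on the Qualtrics export: the field names (`q1`, `country`, `ip_country`) are hypothetical, and it assumes that a partial response lacking an answer to the first question is not retained.

```python
from typing import Optional

# Hypothetical field names; the real export columns are not given in the text.
FIRST_QUESTION = "q1"
COUNTRY_FIELD = "country"
IP_COUNTRY_FIELD = "ip_country"
# Metadata fields that are not survey answers.
NON_ANSWER_FIELDS = {IP_COUNTRY_FIELD}


def is_blank(value: Optional[str]) -> bool:
    """Treat None and whitespace-only strings as unanswered."""
    return value is None or value.strip() == ""


def clean_responses(rows: list[dict]) -> list[dict]:
    """Drop blank responses, keep partial ones with a first answer, resolve country."""
    kept = []
    for row in rows:
        answers = {k: v for k, v in row.items() if k not in NON_ANSWER_FIELDS}
        if all(is_blank(v) for v in answers.values()):
            continue  # entirely blank response: removed
        if is_blank(row.get(FIRST_QUESTION)):
            continue  # assumption: partial response without a first answer is dropped
        cleaned = dict(row)
        # Use the IP-derived country only as a proxy, and flag that it was used.
        cleaned["country_from_ip"] = is_blank(cleaned.get(COUNTRY_FIELD))
        if cleaned["country_from_ip"]:
            cleaned[COUNTRY_FIELD] = cleaned.get(IP_COUNTRY_FIELD)
        kept.append(cleaned)
    return kept
```

Flagging the rows in which the country was inferred from the IP address keeps the proxy visible, so that any sensitivity check can exclude those responses.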

Estimating the number of science journalists globally is challenging given that there is no certification or global list of science journalists (Massarani et al., 2021). However, a substantial number of journalists cover the science ‘beat’: the Association of British Science Writers (ABSW) in the UK describes itself as a network of over 700 professional science journalists and communications professionals (ABSW, undated) and a global survey of science journalists attracted over 600 responses (Massarani et al., 2021). Therefore, it is not possible to determine the extent to which insights from this research can be considered representative of the community of science journalists. Instead, we argue that what is presented here are exploratory insights into the use and perceptions of AI by science journalists in three different countries.

Quantitative data were analysed using a combination of SPSS and Excel. The data set was checked, and missing data were double-checked to ensure they were not an artefact of the data importation process (data were imported separately into SPSS and Excel and also checked in Qualtrics). The English language and Portuguese language data were merged into a single file and analysed as a full dataset. This allowed further comparisons to be made between countries (UK, India and Brazil), by gender and by career stage. Statistical analysis was carried out in SPSS using Pearson’s chi-squared test and the Kruskal-Wallis H test for significance, with post-hoc analysis using Dunn’s pairwise test.
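
For readers unfamiliar with the Kruskal-Wallis H test, the following minimal Python sketch shows how the statistic is computed from ranked ratings. It is offered as an illustration under stated assumptions, not as a reproduction of the SPSS analysis: the function names are our own, the ratings in the usage note are invented, and the p-value helper applies only to the three-group case, where the chi-squared approximation has two degrees of freedom.

```python
import math
from itertools import chain


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis_h(groups):
    """Kruskal-Wallis H statistic with the standard correction for ties."""
    if any(len(g) == 0 for g in groups):
        raise ValueError("every group needs at least one observation")
    pooled = list(chain.from_iterable(groups))
    n = len(pooled)
    if n < 2:
        raise ValueError("need at least two observations")
    ranks = average_ranks(pooled)

    # Sum over groups of (rank sum squared / group size).
    total, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        total += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12.0 / (n * (n + 1)) * total - 3 * (n + 1)

    # Tie correction: 1 - sum(t^3 - t) / (n^3 - n), t = size of each tie group.
    counts = {}
    for v in pooled:
        counts[v] = counts.get(v, 0) + 1
    correction = 1 - sum(t ** 3 - t for t in counts.values()) / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all observations are identical")
    return h / correction


def asymptotic_p_three_groups(h):
    """Asymptotic p-value for exactly three groups (chi-squared, 2 df)."""
    return math.exp(-h / 2)
```

A usage example with invented six-point ratings for one statement, grouped by country:

```python
ratings = {
    "Brazil": [5, 6, 5, 4, 6],  # illustrative values only, not survey data
    "India": [4, 3, 5, 4, 4],
    "UK": [2, 3, 3, 4, 2],
}
h = kruskal_wallis_h(list(ratings.values()))
print(f"H = {h:.2f}, asymptotic p = {asymptotic_p_three_groups(h):.3f}")
```

Because six-point ratings produce many tied values, the tie correction matters here. A significant H indicates only that at least one country differs, which is why pairwise post-hoc comparisons are then needed.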

Results

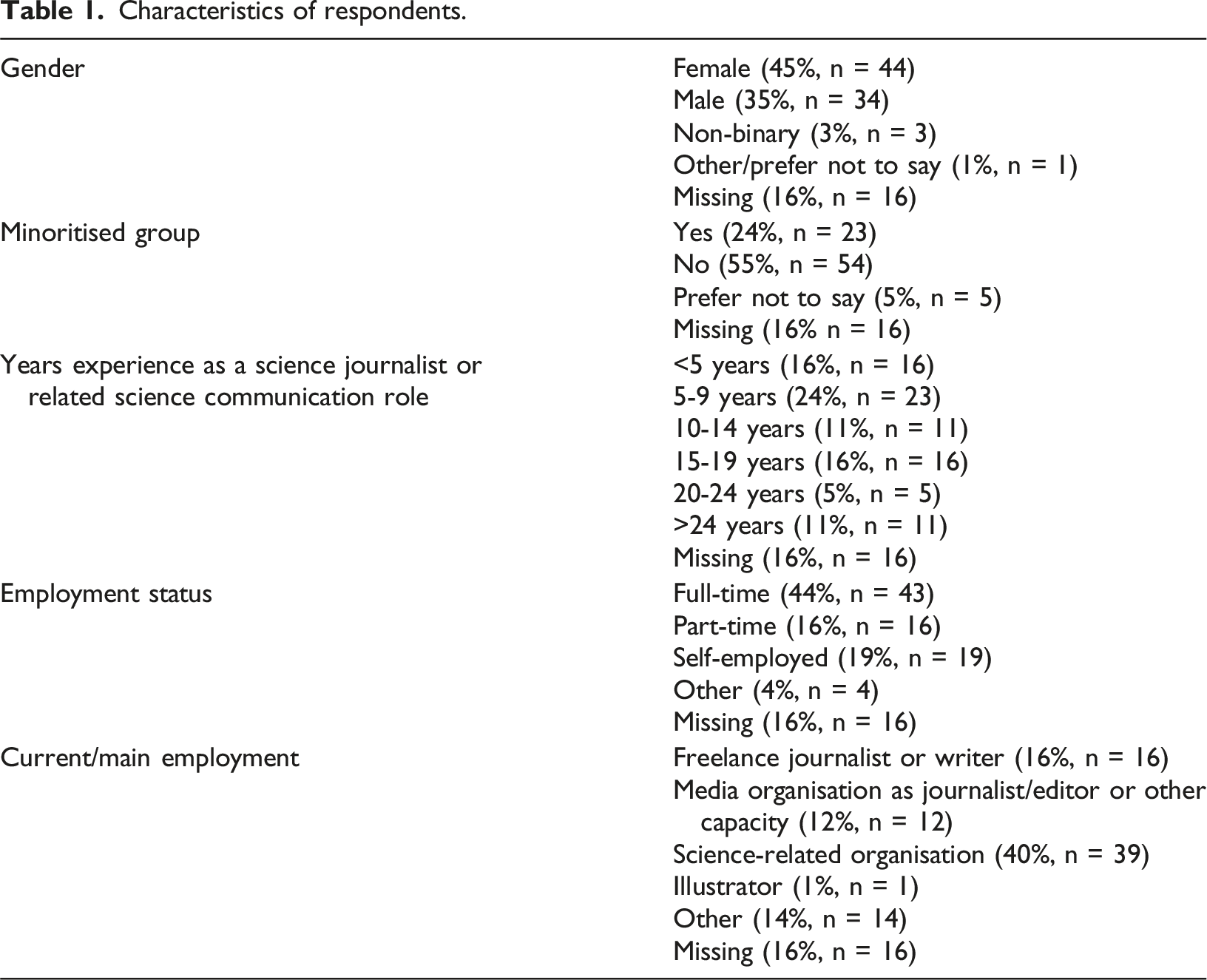

Characteristics of respondents.

Journalists’ use of AI tools

To gain some understanding of the tools currently used by respondents, we asked about their use of four common AI tools, also offering the option for journalists to indicate other tools in an open box. Of the respondents, 62% had used ChatGPT (n = 61), 9% had used Otter (n = 9), 6% had used Firefly (n = 6) and 1% had used Copy.ai (n = 1). Twenty-one respondents skipped this question, while 35% (n = 34) indicated that they used other tools, including Gemini, Perplexity, DALL-E, Grammarly, DeepL, Trint and Claude.

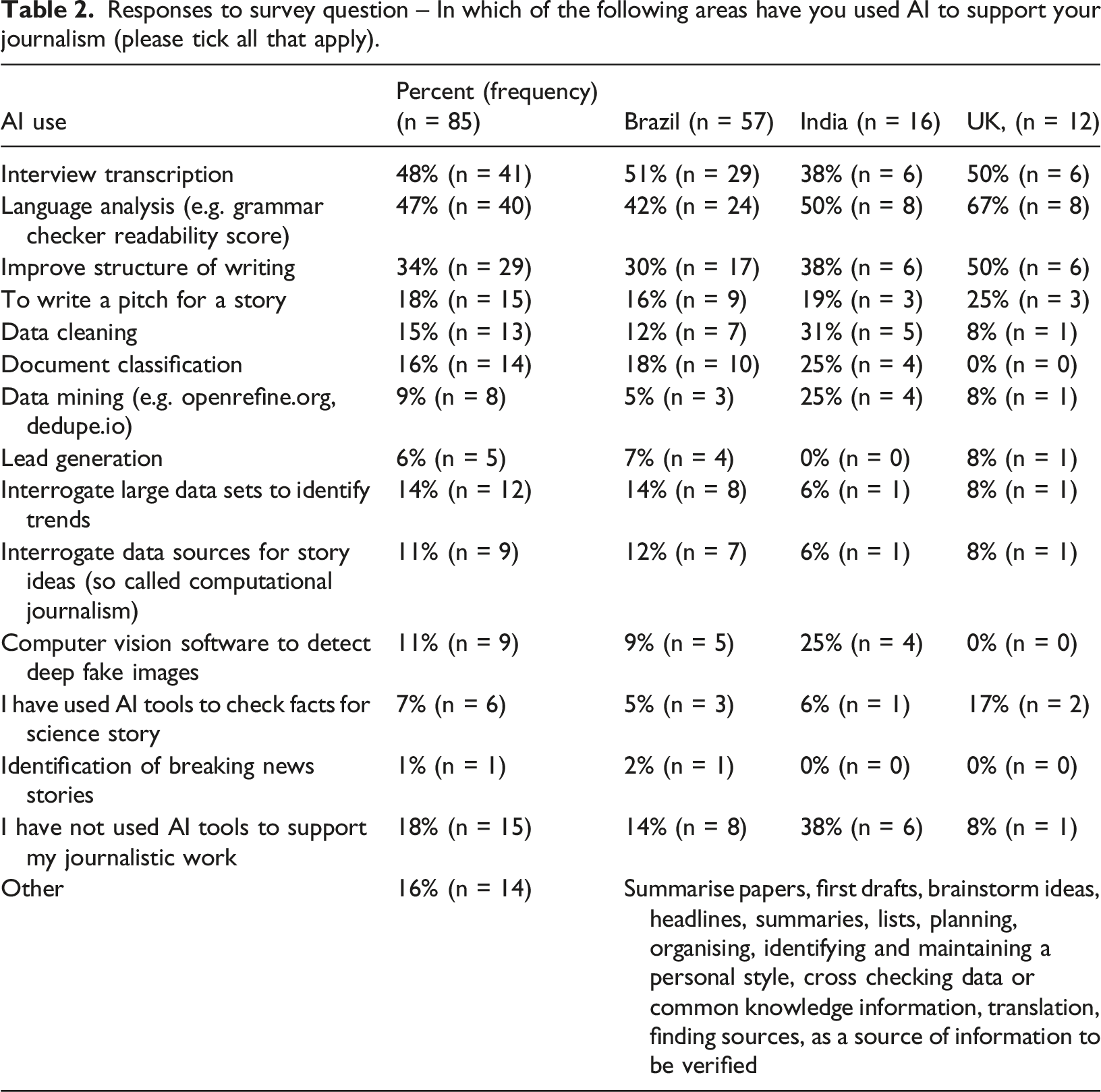

Responses to survey question – In which of the following areas have you used AI to support your journalism (please tick all that apply).

Views on the use of AI tools in journalism

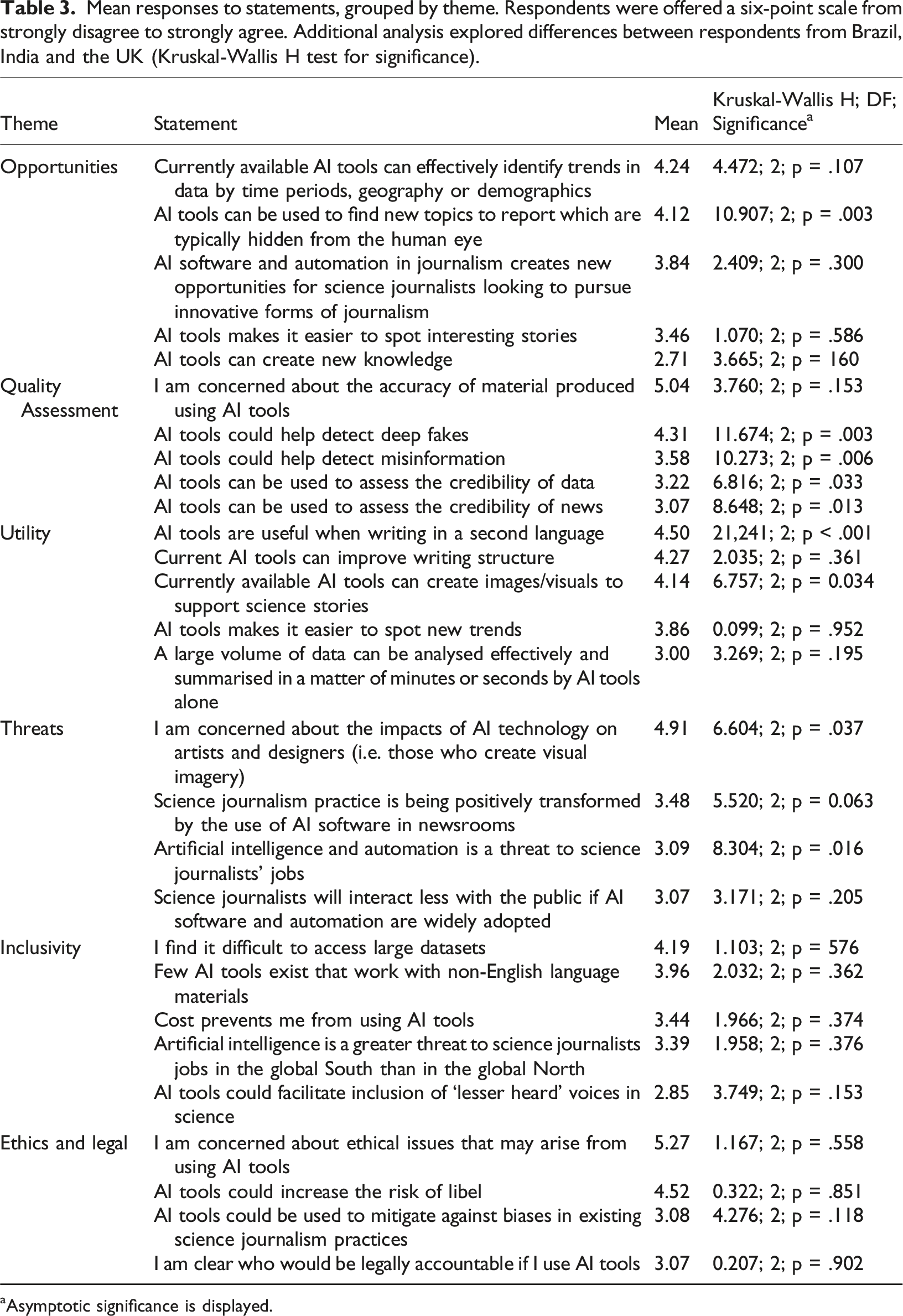

Mean responses to statements, grouped by theme. Respondents were offered a six-point scale from strongly disagree to strongly agree. Additional analysis explored differences between respondents from Brazil, India and the UK (Kruskal-Wallis H test for significance).

a. Asymptotic significance is displayed.

Our respondents rated the utility of AI tools aimed at writing support highly, indicating they are useful when writing in a second language, for structuring writing and for creating visuals to support stories. Post-hoc analysis indicates that Brazilian respondents agreed more strongly than UK or Indian respondents that AI can support writing in a second language, and UK respondents were significantly less likely than Brazilian respondents to agree that AI tools are useful for creating visuals. Although reporting lower use of AI tools for data analysis or trend spotting, respondents also felt current tools could facilitate such activities. Participants were particularly concerned about ethical issues arising from AI use. They were also concerned about the risk of libel and unclear about legal accountability when using AI tools.

Post-hoc analysis identified significant differences between countries with regard to most of the statements addressing quality issues, although there was considerable agreement across countries with the statement ‘I am concerned about the accuracy of material produced using AI tools’. Regarding the potential of AI to detect deep fakes and misinformation, in both cases post-hoc analysis indicates that Brazilian respondents were significantly more likely to agree with the statement than Indian or UK respondents. UK respondents were also significantly more likely than Brazilian respondents to disagree with the statements that AI can assess the credibility of data or news. Indian respondents were also significantly less likely than Brazilian respondents to agree that AI can assess the quality of news.

Discussion

This exploratory research provides a snapshot of how AI has been integrated into the working practices of science journalists in three countries. Addressing RQ1, our survey echoes the findings of studies of generalist journalists (Beckett and Yaseen, 2023), indicating that AI is predominantly used by science journalists for relatively simple tasks such as interview transcription and language analysis (including checking grammar), and to a lesser degree to improve the structure of writing. AI is being used by fewer science journalists for more complex tasks. However, there is evidence that science journalists in India are early adopters of more advanced uses of AI, with a higher proportion of respondents using it for data cleaning and data mining. Science journalists in Brazil also appear to be using it for sophisticated tasks, such as the interrogation of large data sets to identify trends and story ideas, to a greater extent than journalists in India and the UK. Turning to RQ2, in a similar vein to the science journalists interviewed by Dijkstra et al. (2024), survey respondents were broadly optimistic about how AI can be used for journalistic tasks such as finding new topics to report, which may not have been spotted otherwise, and identifying trends in data. They also recognised AI’s ability to support writing in a second language. In terms of challenges, the science journalists in all three countries share the concerns of participants in other studies (e.g. Diakopoulos, 2019; Manfredi-Sánchez and Ufarte-Ruiz, 2020) about the accuracy of material produced by AI. Overall, science journalists in the UK appear to be less optimistic about the capabilities of AI than their counterparts in India and Brazil. Addressing RQ3, there was considerable concern across the countries about the ethical issues AI tools present, similar to findings in research with non-specialist journalists (Gutiérrez-Caneda et al., 2024; Noor and Zafar, 2023) and, to a slightly lesser extent, about the risk of libel.

Across all countries, AI is being used by science journalists where it offers a clear relative advantage over previous working practices (Rogers, 2003), such as the significant time saved by AI transcription of interviews compared with manual transcription. The backdrop of increasing workloads of science journalists globally (Massarani et al., 2021) means that time-saving tools are likely to be particularly welcome. Science journalists’ use of AI tools is much less evident for tasks such as data mining and analysis, where the complex and opaque mechanics of how AI works make it difficult for journalists to understand and check the analytical process. The ‘black box’ nature of AI tools means that they do not align with the institutional and individual values that shape journalists’ working practices, including accuracy and objectivity (Leonhardt, 2025) and accountability (Nishal and Diakopoulos, 2025).

The more sophisticated uses of AI by journalists in Brazil and India and their more positive views on its potential use mirror differences in public perceptions of AI between countries, with members of the public in Brazil and India less apprehensive about AI in products and services than those in the UK and more optimistic about AI’s ability to reduce disinformation online (Ipsos, 2024). It is noticeable, however, that a higher proportion of science journalists in India than in Brazil and the UK said they have not used AI in their work. The modest sample size in this research means that further research is needed to determine whether the concerns of some (such as Beckett, 2019; Jamil, 2020) about potential disparities in access to AI tools between the Global North and Global South are evident in science journalism.

One aspect of the survey where there were notable differences between countries in science journalists’ perceptions of AI was their level of concern about the influence of AI on job security. While our findings are similar to those of others (Beckett, 2020; Guanah et al., 2020; Jamil, 2020; Peña-Fernández et al., 2023) in terms of concerns about job losses among journalists, it was in the UK where this was of the greatest concern. In contrast, within the general population in India and Brazil, more people think AI will replace their current job than in the UK (Ipsos, 2024).

Conclusions

This survey provides a snapshot of a moment in the transition in science journalism practices due to the rapid increase in the capabilities of AI in recent years; science journalists appear to be using their agency to determine the extent to which they are integrating AI into their ways of working. The extent and speed of the transition in science journalism working practices will depend on the extent to which science journalists’ questions of accuracy, ethics and legal responsibility, common across countries, are addressed.

In the countries studied here, AI is predominantly being used for simple tasks such as transcription, though science journalists in India and Brazil are using AI in more advanced ways and appear to be slightly more open to more sophisticated uses than their counterparts in the UK. Nevertheless, sophisticated uses of AI are relatively infrequent. Rogers’ (2003) diffusion of innovation theory has provided a useful framework with which to explore AI use and perceptions among science journalists. Our findings indicate that the complexity of AI and its potential lack of compatibility with journalistic values, such as accountability, are at least partially responsible for the lack of use of AI for more sophisticated journalistic tasks.

Further research should explore whether it is these characteristics of AI, complexity and a potential lack of compatibility, that predominantly determine the extent to which AI is used by science journalists in different countries, or whether contextual factors, such as differences in public perceptions and acceptance of AI between countries, have the biggest influence. There may also be other contextual factors influencing the adoption of AI, such as differences in access to scientists and resources between science journalists in the Global North and Global South (Nguyen and Tran, 2019). The fact that traditional media are still an important source of science news, combined with AI’s potential to radically change journalists’ working practices, highlights the importance of understanding the factors mediating its adoption, and how these differ across the Global North and South.

This study points to the need for further research that would enable science journalists and their employers to navigate the ethical questions AI use presents, including understanding audiences’ perceptions of AI-generated material about science. We add to the calls of others (Aissani et al., 2023; Gutiérrez-Caneda et al., 2024) to create ethical guidelines for the use of AI in journalism. There is arguably a particular need for this in the reporting of science, given the way ‘science fact’ is being increasingly contested online and the consequences of this in public health and other domains.

Acknowledgements

We would like to thank the Association of British Science Writers, the Science Journalism Association of India and Rede Brasileira de Jornalistas e Comunicadores de Ciência (RedeComCiência) for support with organising workshops, recruitment to workshops and distribution of surveys to members.

Ethical considerations

Ethical approval was received from the Research Ethics Committee of the University of the West of England Bristol [HAS.23.02.066].

Consent to participate

The English language version is provided below. The Portuguese survey contained the same ethics information, except that it refers to ‘no Instituto Nacional de Comunicação Pública da Ciência e Tecnologia, sediado na Fundação Oswaldo Cruz, no Brasil’ in addition to the University of the West of England and Indian Institute for Science Education and Research, Pune as participants.

Consent for publication

By submitting this information you are consenting for your questionnaire answers to be included in the study. Data Protection Privacy Notice: All data will be treated as personal under the UK Data Protection Act 2018 and the UK General Data Protection Regulation 2016 (GDPR). The data controller for this project will be the University of the West of England, Bristol, UK. Your personal data will be processed only for the purposes outlined in this questionnaire. The legal basis that we will rely on to process your personal data is that it is necessary for the performance of a task carried out in the public interest. Personal, identifiable raw data will only be processed for the duration of the study and subsequent analysis of results. Your personal data, provided in this questionnaire, is not shared with our partners or third parties. What are your rights? You have a number of qualified rights including a right to access your personal information. Please visit the University Data Protection webpages for further information in relation to your rights. Any requests or objections should be made in writing to the University Data Protection Officer.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work received funding from The British Council [IND/CONT/G/22-23/22] to EW, AR and SS and from the National Council for Scientific and Technological Development (CNPq, 465658/2014-8) and Carlos Chagas Filho Foundation for Research Support of the State of Rio de Janeiro (FAPERJ, E-26/200.89972018) to LM. LM also wishes to thank CNPq for the Productivity Scholarship 1B and Faperj for the grant ‘Cientista do Nosso Estado’ (Scientist of our state).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Artificial intelligence (AI) and science journalism international survey

You have been invited to participate in a study exploring the skill needs of science journalists. This study is focussing on digital skills (including AI) and this survey specifically asks about your hopes and fears about, and current use of, AI tools. This study will contribute to the development of new teaching and learning materials that will support students studying at undergraduate and postgraduate levels at the University of the West of England, Bristol, UK and the Indian Institute for Science Education and Research, Pune, India. The data gathered will be collected and analysed by Dr Emma Weitkamp, with support from the wider project team. The data we collect will be processed, stored and shared in accordance with the UK General Data Protection Regulation, by Dr Emma Weitkamp. This means that your data will not be identified in any reports or publications and any data extracts will be carefully reviewed to ensure you are not identifiable. Any sensitive or identifiable data will be kept confidential, and only aggregated and pseudonymised data will be shared within the research team. The information gathered will be used to develop teaching and learning materials and may be reported in academic and practitioner facing publications. Participation is voluntary; you do not have to participate in this study if you do not wish to. We have not identified any potential risks associated with participating in this study. Care has been taken with the wording of questions to minimise risks. However, you can discontinue the study at any time by simply closing your browser. You may ask for your contribution to be withdrawn from the study by 15 March 2024 and you will be asked for a memorable word within the questionnaire to facilitate this. The questionnaire will take approximately 30 minutes to complete, and it is entirely your choice as to whether to complete it or not.
When you click the final arrow after the memorable word field at the end of the survey, you give your consent for any answers you have given to be included in the study. Additional information on Data Protection is also provided at the end of the survey. If you have any questions on the questionnaire or would like more information on the study, please contact Emma Weitkamp via email

Data Availability Statement

The data are available on request to the corresponding author.