Abstract

The global proliferation of internet-distributed video-on-demand (VOD) services has left in its wake a rich but scattered corpus of research into the catalogs and interfaces of these services. Using empirical methods and sources including scraping, observation, digital simulations, and third-party datasets, researchers have found many ways to study VODs, their content, and their recommendations. Our article provides a critical review of this research landscape. We describe the evolution of two key methods: catalog analysis and interface analysis. We then explain how these methods intersect with each other and also with audience research. Throughout, we assess the value and limitations of various methods, showing how they fit within a wider research landscape that involves multiple ‘ways of knowing’ VOD. The practicalities and politics of access to VOD data are considered throughout.

Introduction

The global proliferation of internet-distributed video-on-demand services (VODs) has left in its wake a rich but scattered corpus of research into the catalogs and interfaces of these services. Using empirical methods including scraping, observation, digital simulations, and quantitative analysis of third-party datasets, researchers have investigated issues related to the structure, curation, and use of VODs. Within this literature, two critical themes emerge. The first is diversity – referring both to the diversity of titles in VOD catalogs (content diversity), and the differentiated audience consumption of VOD content (exposure diversity). The second is prominence, including the related concept, discoverability – referring, respectively, to the relative visibility of content within the interface and its accessibility through search, promotion, and recommendation.

To investigate these interconnected issues, academics, regulators, and market researchers have developed different research approaches. These include retrofitting research methods initially developed in fields such as broadcasting research; devising techniques for manual and automated data collection from catalogs and interfaces; and developing new concepts and theories needed to interpret these data. Such work contributes to a vibrant international research enterprise dedicated to understanding the online video ecology.

Much of this research explicitly challenges the common assumption that VODs are black boxes impenetrable to academic inquiry. On the contrary, researchers have shown that VODs offer a wide range of semi-public data including titles, genres, metadata, recommendations, artwork, promos, ads, and interfaces. Such data provide useful insights into content diversity and exposure diversity; as such, they provide useful context for current policy debates about audiovisual diversity, recommendation bias, and other related issues. However, such data are often difficult to gather and interpret, and the research literature on these topics is highly fragmented. As a result, researchers may struggle to access current best practices in VOD research methods, increasing the risk of redundancy and replication.

To address this problem of knowledge, our article provides a critical survey of the current state of VOD research methods, focussing on VOD catalog and interface analysis. We consider the value and limitations of these methods for debates about audiovisual diversity, discoverability, and platform regulation. Our analysis is informed by our own experiences researching VODs, including hands-on experience with several of the methods discussed below, and by our professional observations of empirical research approaches used within government. We reflect here on what we have learnt about the limitations of different approaches, and the benefits of combining them in different ways.

The article proceeds as follows. First, we describe the evolution of catalog analysis and interface analysis as methods for studying VODs, building on earlier published review articles (Lobato, 2018; McKenzie et al., 2023). We then explain how these methods intersect with each other and with audience research. Throughout, we critically assess the value and limitations of each method, showing how it fits into a wider research landscape that involves multiple ‘ways of knowing’ VOD.

VOD research is already a vast field and we do not attempt to cover everything in this review. We note some important provisos. First, we have focused on what we believe to be the most widely cited English-language studies in VOD interface and catalog research, as well as those that have introduced novel methods of data collection or analysis. In doing this, we are mindful that these inclusion criteria inevitably skew the sample toward research from national markets where the VOD ecology is most developed (i.e. high-income countries), as well as studies published in prominent English-language journals. Of course, other valuable studies exist that are not discussed here – and many more will be published in coming years. Our examples should therefore be considered illustrative rather than exhaustive. Second, we focus specifically on VODs for film and TV content, notably SVODs (subscription VODs) and BVODs (broadcaster VODs). We exclude studies of social video platforms, such as YouTube and TikTok, which have both a different structure and a separate research literature. Third, our article focuses on VOD research from screen, film, media, and media industry studies, where small-n and qualitative studies are preferred, as well as policy research by regulators and other institutions seeking to understand actually-existing VOD market dynamics. However, we have excluded simulation-based, experimental, and behavioural-science approaches (see McKenzie et al., 2023 for an excellent overview).

VODs as research objects

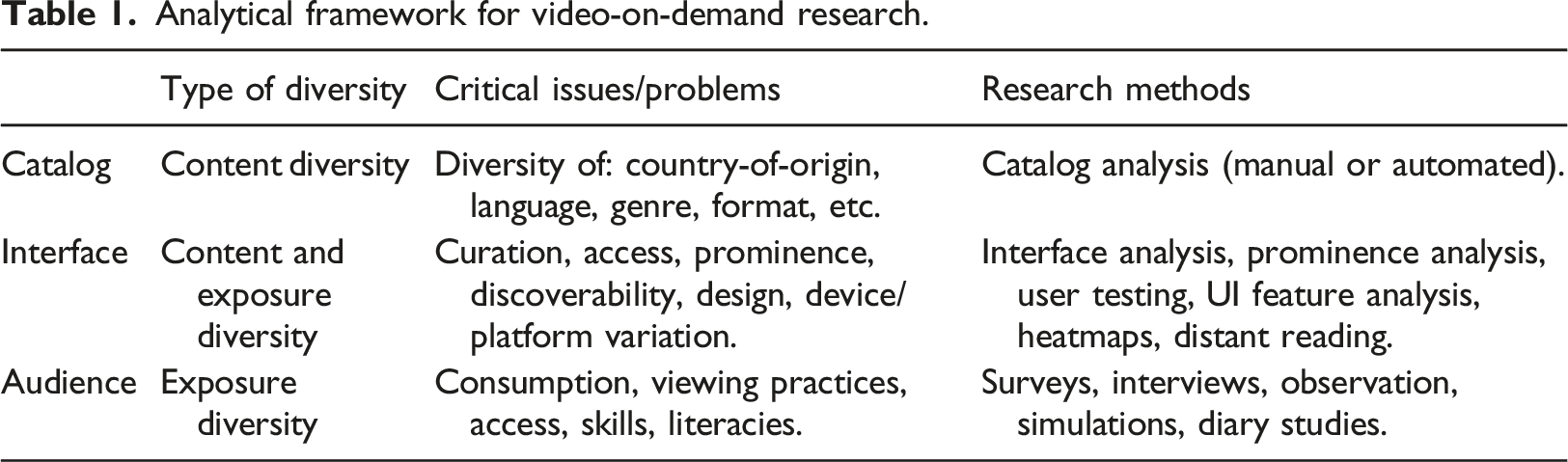

Analytical framework for video-on-demand research.

Each of these three elements ties directly to specific policy debates. Analysis of catalogs is integral to cultural policy and specifically to the policy mechanism of content quotas (e.g. the 2018 revision of the EU’s Audiovisual Media Services Directive requires a minimum 30% share of European content in SVOD catalogs). Analysis of interfaces is crucial for policy analysis of algorithmic diversity, algorithmic bias, recommendations, nudging, visibility, prominence, promotion, and discoverability. These two supply-side perspectives are complemented by the demand-side perspective of audience research, which provides essential insight into the actual consumption practices of populations (exposure diversity). VOD research is therefore multifaceted, and each element provides only one part of a larger picture. No single method captures all these elements. For a holistic perspective it is necessary to combine multiple methods. We now consider each of these methods in turn.

Catalog analysis

The most straightforward method for studying VODs is catalog analysis, a form of content analysis. Catalog analysis derives from an earlier tradition of broadcast schedule analysis (Katz and Wedell, 1977). It is also related to contemporary forms of schedule analysis, which approach the schedule as a site of televisual branding, business models, temporal flow, and audience engagement (Bruun, 2019: 16-17). Building on these traditions, catalog analysis can be used to understand the composition and characteristics of SVOD libraries, and the curatorial logics that inform those libraries.

Through catalog analysis, researchers investigate issues such as catalog size, origins, genre mix, languages, and categorisation logics. Such knowledge is useful for understanding how VODs perform in terms of content diversity, and the degree to which each service is offering local content, national content, regional content, public-interest content, linguistically diverse content, and other content categories. In this way, the method is a helpful lens for assessing ‘concerns [about] cultural imperialism, the audiovisual trade balance, and cultural diversity’ (Larroa, 2023: 666).

Catalog analysis can assist researchers to understand competitive dynamics in the marketplace. For example, Lotz et al. (2022: 514) used catalog analysis to explore ‘what [specific SVOD] services offer, how the offerings of the services differ, and how those offerings compare with linear, ad-supported services’. Iordache et al. (2023: 362) used the same method to ‘identify strategies of complacency, resistance, differentiation, diversification, mimicry or collaboration’ within VOD markets. At scale, this method can also be used for longitudinal and transnational analyses that compare catalogs in different countries. Aguiar and Waldfogel (2018) analysed global Netflix catalogs sourced from UNOGS (Unofficial Netflix Online Global Search) to develop a novel way of measuring cross-border content availability (‘value-weighted geographic reach’).

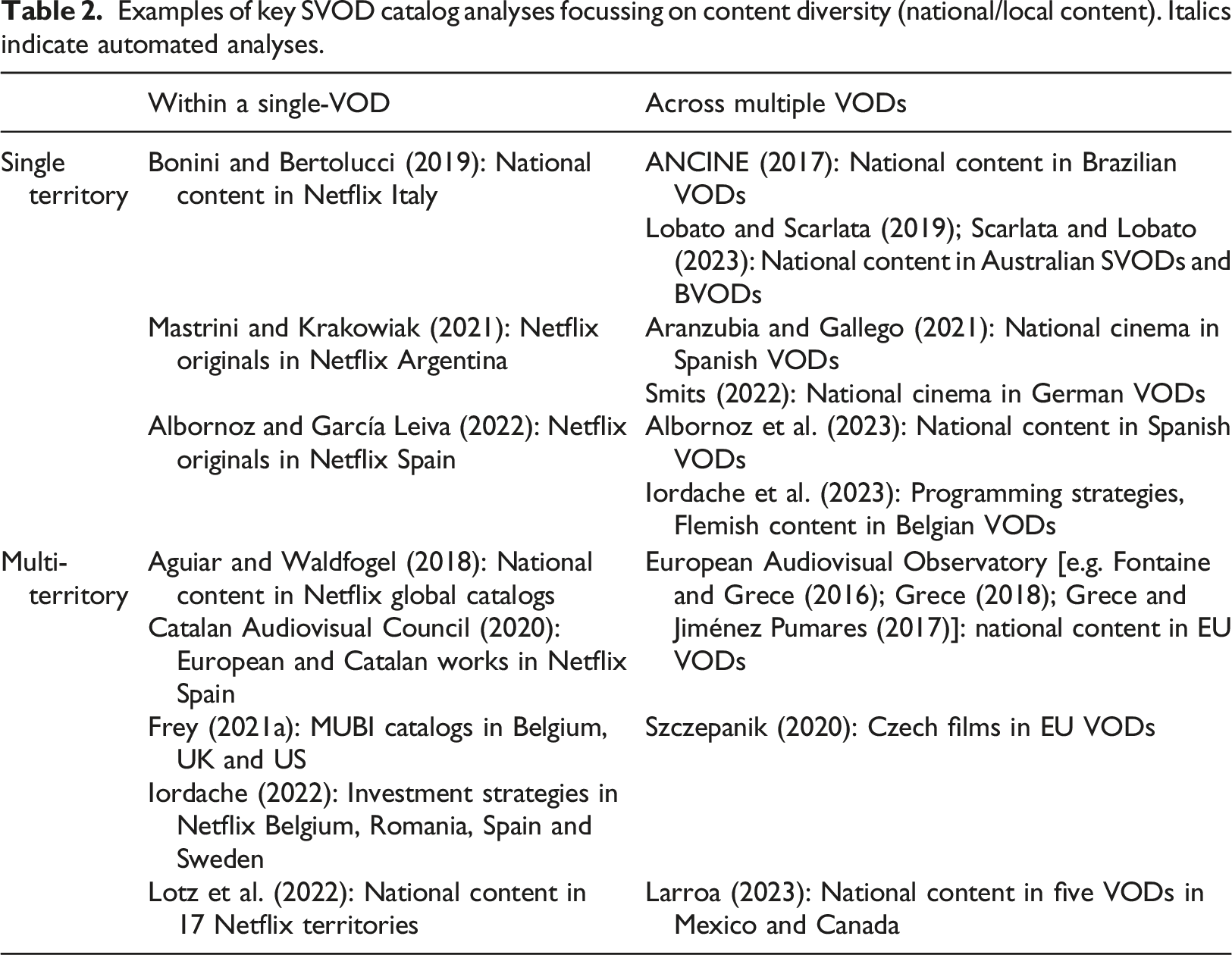

Examples of key SVOD catalog analyses focussing on content diversity (national/local content). Italics indicate automated analyses.

The European Audiovisual Observatory (EAO), an EU-funded research centre, pioneered many of the scraping methods now used to analyse VOD catalogs (e.g. Grece, 2018). During the 2010s, when European media policy was grappling with the impact of Netflix and other US-based SVODs on audiovisual diversity in Europe, the Observatory’s catalog studies uniquely clarified the direction of transnational content flows in SVODs. These methods were then adapted by other scholars within Europe and worldwide. The research of the EAO also informed the work that went into developing the revised Audiovisual Media Services Directive, which increases obligations to promote European works for non-linear services.

Private providers specialising in catalog research have also emerged, notably the UK-based commercial data analytics firm Ampere Analysis, which worked with the Observatory and became its main data provider. Ampere’s proprietary SVOD database, which can be licensed for scholarly use, allows researchers to track SVOD licensing with attention to variables including national origin, language, and producer. Using Ampere data, Lotz et al. (2022) were able to explore and compare seventeen national Netflix libraries. Ampere has also been used by regulators; for example, Ofcom commissioned Ampere to analyse the volume of content, types of content, and the lifecycle of titles in UK VODs (Ampere Analysis, 2019). These commercial research platforms are priced for corporate clients and designed for commercial applications such as demand modelling, catalog benchmarking, and marketing analysis, which may explain why these tools are rarely used in academic research.

As an alternative, it is possible to study VOD catalogs using free third-party indexes such as JustWatch. While useful, these indexes may contain errors or display restrictions. Another limitation is that coding can be complex due to inconsistent classification; for example, ‘definitions of [country of] origin vary from country to country and defining local culture has always been problematic and a topic of debate’ (Larroa, 2023: 672). Within jurisdictions themselves there may be multiple ways to define what is local, and researchers and agencies may not always adopt the same definitions as VOD providers. Assigning countries of origin to catalog titles in a way that conforms to legislative definitions of national content is therefore a complex task. Additionally, how researchers and VODs tag content may be inconsistent, and catalog analysis may be impacted by the researcher’s ability to visually recognise national or other types of content.

Another approach is to gather data manually through the VOD interface (manual catalog analysis). This is a low-cost, accessible method which does not require specialist resources or expensive infrastructure. All that is required for a manual analysis is access to a VOD, a search and classification taxonomy, a spreadsheet to record findings, and time. Because the work of manual catalog analysis involves many hours of browsing and searching within a VOD interface, it requires the researcher to get ‘up close and personal’ with the catalog. This intimacy can lead to surprising insights; for example, identifying unusual clusters of titles in specific genre niches, particular patterns of licensing that reveal previously unknown commercial strategies, or discoverability quirks related to visibility, indexing, and retrieval of titles in search (Lobato and Scarlata, 2019).

A methodological divide thus characterises the catalog studies literature. On one side: automated methods designed for speed, scale, and transnational and multi-service comparison, which are used by quantitatively-inclined scholars as well as policymakers requiring hard evidence to inform government decision-making; on the other: manual methods, which are labour-intensive, inductive, contextual, and favoured by scholars in the humanities.

Over time the scope of catalog research has expanded to include other issues beyond national content. Recent catalog studies consider topics such as the proportion of auteur/festival cinema in catalogs (Farchy et al., 2019), age and exclusivity of content (Lobato and Scarlata, 2019), SVOD investment strategy (Iordache, 2022), the categorisation of queer media (Monaghan, 2023), IP sources of Netflix originals (Cuelenaere, 2024), and pricing of content (Suzor et al., 2017). These examples show the range of different issues and topics that can be usefully studied using a catalog analysis method, combined in some cases with targeted textual or content analysis. We expect, and hope, that these methodologies – like national content analysis – will grow over time to permit a much wider range of research possibilities that can be addressed through the catalog, allowing a multifaceted understanding of the politics of access and representation in VOD.

National regulators have also built their own publicly available tools to track catalogs. For example, Australia’s Bureau of Communication, Arts, and Regional Research (BCARR) built an online dashboard related to SVOD consumers, content, and production in 2021-2022, to monitor local content levels over time (BCARR, 2022). BCARR used Ampere data to show the number of titles and share of Australian content within eight SVODs (both Australian and foreign owned) between 2015 and 2021. The adoption of catalog research methods by governments represents the migration of this empirical technique into the sphere of public policy and public knowledge infrastructure.
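The core calculation behind this kind of local-content tracking can be sketched in a few lines. The example below is a minimal illustration only, not BCARR's or Ampere's actual method: the service names, titles, and country codes are invented, and it assumes each catalog row has already been coded with a single country of origin, which (as noted above) is itself a contested step.

```python
from collections import defaultdict

# Hypothetical catalog rows: (service, year, title, country_of_origin).
# All values are invented for illustration; real data would come from
# a licensed database, a third-party index, or manual coding.
CATALOG = [
    ("ServiceA", 2020, "Title 1", "AU"),
    ("ServiceA", 2020, "Title 2", "US"),
    ("ServiceA", 2020, "Title 3", "US"),
    ("ServiceA", 2020, "Title 4", "GB"),
    ("ServiceA", 2021, "Title 1", "AU"),
    ("ServiceA", 2021, "Title 5", "AU"),
    ("ServiceA", 2021, "Title 2", "US"),
    ("ServiceA", 2021, "Title 6", "US"),
    ("ServiceB", 2021, "Title 7", "AU"),
    ("ServiceB", 2021, "Title 8", "US"),
]


def local_share(rows, local_country):
    """Return {(service, year): (local_titles, total_titles, share)}."""
    totals = defaultdict(int)
    local = defaultdict(int)
    for service, year, _title, origin in rows:
        key = (service, year)
        totals[key] += 1
        if origin == local_country:
            local[key] += 1
    return {
        key: (local[key], totals[key], local[key] / totals[key])
        for key in totals
    }


shares = local_share(CATALOG, "AU")
for (service, year), (n_local, n_total, share) in sorted(shares.items()):
    print(f"{service} {year}: {n_local}/{n_total} titles ({share:.0%})")
```

Counting titles is only one possible unit of analysis; the same structure could count hours of content instead, and the result will depend heavily on which definition of local content is used to code the origin column.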

In summary, various forms of catalog analysis now exist including manual, automated, single-SVOD, multi-SVOD, single-territory, and multi-territory approaches. While much work has focused on content diversity and national content, the scope of research has expanded to consider other issues including genres/formats, pricing, representational diversity, and IP sources. The limitations of catalog analysis have also become apparent, including its time-intensive and resource-intensive nature, a tendency towards simple counting at the expense of more integrated and contextualised analyses, and its inability to deal with discoverability and exposure. As Farchy et al. (2022: 423) observe, ‘the mere presence of a title in a catalog does not necessarily imply that the user can discover it [and] the abundance of online content does not automatically create diversity in access to content’. For these reasons, attention is increasingly turning to a different empirical method for studying VOD: interface analysis.

Interface analysis

To explore discoverability, prominence, and personalisation in VOD services, a different set of methods is needed. As Bideau (2022) observes, the VOD interface is the logical site for such research:

Streaming services today give access to such a large selection of contents that [catalog analysis] methods are no longer relevant. Indeed, what is the use of measuring diversity in a catalogue that no one user ever sees in its entirety? Surely the more relevant space within which to study cultural diversity is the user’s screen and the subset of works from the catalogue that appear on it.

While Bideau’s argument about catalog analysis may be debatable, his point about the analytical importance of the interface is undeniable. Yet, as Johnson (2017: 124) observes, there are still few ‘established methodologies in TV studies for studying interfaces’, and data collection remains difficult given the impossibility of observing users’ screens at scale. How, then, are researchers studying VOD interfaces?

Several methods have emerged in recent years. The first of these involves

A second approach is quantitative or qualitative research into

A third approach is to study specific

A fourth approach is to analyse

Experimental methods for studying personalised recommendations: simulations and bots

With the rise of prominence and discoverability as policy concerns, the need for robust data on VOD recommendations is widely acknowledged. But recommendations are the hardest element of a VOD to study, because of their dynamic and personalised nature. As Kelly (2021: 269) notes, personalisation ‘presents the biggest methodological challenge for media studies scholars’.

How then to study recommendations? The existing literature suggests some possibilities. Bideau and Tallec (2022) studied Netflix recommendations by simulating and testing the effects of different viewing behaviour. Using Raspberry Pis (tiny single-board computers), they trained bots to watch Netflix for three hours each evening for eight days. Each bot was assigned a unique and contrasting taste profile; for example, a fan of Japanese content versus a fan of European content. Bideau and Tallec then studied Netflix’s recommendations for each ‘user’, noting every title that was promoted on the home screen during the test period. They found that watching only European content on Netflix tends to result in an increase in recommendations for European content on the home screen, and vice versa. Concerns about ‘invisibility by way of the algorithm’, though, seem to be unfounded: 92% of European titles in the catalog were recommended at least once to one or more bots. Bideau and Tallec concluded that ‘overall, there does not seem to be many “dormant” titles buried deep within the catalog’.
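Two measures are implicit in findings of this kind: the share of a catalog subset that is recommended at least once to any simulated user, and the share of each user's home-screen recommendations drawn from that subset. The sketch below illustrates both under stated assumptions. It is not Bideau and Tallec's code; the bot names, title identifiers, and log structure are hypothetical.

```python
# Hypothetical inputs: the set of European titles in the catalog, and the
# titles each bot saw promoted on its home screen during the test period.
european_catalog = {"E1", "E2", "E3", "E4", "E5"}
home_screen_log = {
    "bot_european": ["E1", "E2", "E3", "U1"],
    "bot_japanese": ["J1", "J2", "E1"],
    "bot_mixed": ["E4", "U1", "J1"],
}


def coverage(catalog_titles, log):
    """Share of catalog titles recommended at least once to any bot,
    plus the set of titles that were never recommended."""
    seen = set()
    for titles in log.values():
        seen.update(titles)
    recommended = catalog_titles & seen
    return len(recommended) / len(catalog_titles), catalog_titles - seen


def share_per_bot(catalog_titles, log):
    """Share of each bot's home-screen impressions drawn from the subset."""
    return {
        bot: sum(title in catalog_titles for title in titles) / len(titles)
        for bot, titles in log.items()
    }


coverage_rate, never_recommended = coverage(european_catalog, home_screen_log)
per_bot = share_per_bot(european_catalog, home_screen_log)
print(f"Coverage: {coverage_rate:.0%}; never recommended: {sorted(never_recommended)}")
for bot, share in per_bot.items():
    print(f"{bot}: {share:.0%} of home-screen titles are European")
```

Keeping the two measures separate matters for interpretation: high coverage across all bots can coexist with very uneven per-bot shares, which is the pattern the personalisation findings describe.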

Researchers without these computational resources may also consider licensing data from specialist research firms, including MTM, Looper Analysis, Arvester and AQOA, which have all developed automated methods to capture VOD recommendations. Like the catalog analysis tools developed by Ampere Analysis, these services may be expensive and/or come with restrictions on the use of data. For this reason, they tend to be used primarily by corporate and government clients (e.g. Fontaine, 2021).

Fortunately, reverse-engineering of recommendation algorithms can also be achieved manually through ‘speculative experimentation and playing around’ (Bucher, 2018: 60). An example is Pajkovic’s (2021) study of Netflix recommendations, which used fake user profiles to mimic different personas including a ‘die-hard sports fan’, a ‘culture snob’, and a ‘hopeless romantic’. Pajkovic found that the recommendations for each profile became increasingly genre-specific. In contrast, a fourth ‘genre disruptor’ persona led to a ‘highly diverse homepage absent of an easily distinguishable taste identity’ (ibid: 224). Using a similar approach, Česálková (2023) examined curation and discoverability of classic movies on Netflix (Czech Republic) via three experimental user profiles that searched, selected, and watched with varying degrees of intensity. She then tracked the evolution of each profile’s menu and its saturation of classic movies over time, observing a clear correlation.

These approaches to capturing VOD recommendations suggest interesting possibilities for research. Of course, there are also limitations to consider. The cost and complexity of capturing or simulating recommendations in SVOD services mean that it is very difficult to extrapolate findings to a population level. Another consideration is that recommendations, even though they provide another vital piece of the exposure puzzle, do not actually tell us about audience consumption. Hence there is still the need to consider some form of audience research as part of an integrated approach to studying VODs.

Intersections with audience research: joining the dots between supply and exposure diversity

While supply-side research methods are useful for studying content diversity, they cannot fully address the question of exposure diversity. To understand exposure diversity in VOD, researchers need to find some way of asking actual people about how they use VODs – or observing such use.

In a recent contribution to the methodological debate, Frey (2024: 133) argues for a more nuanced stage of research into VOD recommendation and its effects on exposure diversity, advocating for a return to audience research. Observing that ‘the technological basis of recommender systems is a poor predictor of consumption outcomes’, Frey argues that researchers need to investigate ‘[what] audiences actually watch’ as well as what is promoted to them on the home screen. Shifting the focus to audiences in this way also requires that we question deterministic effects-models and focus instead on audience practices:

Discussions of film and media diversity, perhaps in a hangover from the days of limited media options and the three-to-four-channel linear television landscape, often imply a passive consumer whose horizons will be either broadened or narrowed by a respectively benevolent or malevolent programmer – with this metaphor now being extended and essentialized to the benevolent curator and the malevolent algorithm. […] As we speak about VOD users we must be careful to assume neither a rational-agent, perfectly informed ‘active’ user nor an absolutely ‘passive’ corporate-technology victim (ibid).

This attention to audience engagement with recommendations can be seen in Frey’s book Netflix Recommends (2021b), which includes a fascinating empirical chapter on audience discovery entitled ‘How real people choose films and series’. Frey conducted two nationally representative surveys in the US and UK, followed by 34 qualitative interviews in the UK. These found ‘no compelling evidence to show that algorithmic recommender systems are the most important or most widely used form of suggestion’ (ibid: 132-133). Instead, they revealed a diverse variety of online and offline factors – including recommendations from friends, the opinions of others watching, the popularity of titles on the platform, and genre searches – that shape content selection.

Another study using a combination of survey and interview methods to explore VOD discovery is the ‘Routes to Content’ project (Johnson et al., 2020a; 2020b; 2022; 2023). Based on a multi-year study of streaming behaviours in the UK involving a national survey and follow-up interviews, Routes to Content explores ‘how people find and make decisions about the television content that they choose to watch’ (2020a: 1). Challenging assumptions about the deterministic power of algorithmic recommendations, Johnson et al. found that UK audiences have an average of eight different ways to discover content, including word-of-mouth, traditional advertising, and social media. In other words, while the VOD interface plays a role in discovery, it is only one of many factors. This finding was echoed by a separate study on VOD discovery in Australia based on a national survey (Lotz and McCutcheon, 2023). Lotz and McCutcheon found that around half of the time Australian viewers already knew what they wanted to watch before opening a VOD, leading the authors to suggest that ‘concerns about algorithmic priority and display [in the interface] may be overstated’ (ibid: 7). Varela Martínez and Kaun (2019: 209-210) similarly found that discovery was strongly shaped by ‘practices outside the platform: sharing trailers, commenting with friends, and promoting certain shows’, while audience research by Lüders and Sundet (2022) again demonstrated that the ‘experiential affordances of watching online TV are relationally contingent on technical materiality, viewer agency, and social context’ (ibid: 347). This growing corpus of qualitative audience research is challenging the often deterministic discourse about algorithmic effects in VOD usage in at least two ways. It foregrounds the importance of offline and social communication, reminding us that interfaces and algorithms are not all-powerful, and it draws attention to the agency and diversity of individual users as they negotiate interfaces.

Audience scholars have also used creative ethnographic methods to capture the nuances of VOD use. Varela Martínez and Kaun (2019: 201-202) combined a walkthrough of the Netflix interface with ‘think aloud’ interviews with heavy Netflix users in Singapore to investigate usage and perceptions of the recommendation algorithm. As the users moved around the interface, the authors asked them to ‘reflect on what they were doing and why’ (ibid). During their thirteen interviews with Malaysian and Indonesian audiences, which sought to understand patterns of ‘roaming’ across the media landscape, Hill and Lee (2022: 99) asked participants to physically ‘draw maps that imagine the psycho-social terrain of their media landscape’. These visualisations uncovered the trope of the ‘Netflix Park’: a gated community of subscribers that is at once open and sociable, but also aggressive and territorial. By foregrounding the full range of cultural and social variables in VOD use, these studies helpfully shift debates about interfaces away from a deterministic effects paradigm toward a more contextual and integrated analysis.

Audience research is also an important tool for regulators and governments, which use a range of methods (including annual surveys and market research) to understand how populations use VODs. While the aim of this research is typically to identify emerging harms or assess impacts and vulnerabilities among populations, these studies often contain fascinating insights into exposure diversity. Audience research on content discovery conducted by Canadian government agencies in 2016 – which found that word-of-mouth was the most important discovery mechanism – informed that country’s policy approach to digital discoverability in VODs (Canada Media Fund, 2016a; 2016b). Other government research has used surveys to understand decision-making and discovery by TV audiences, as part of regulatory investigations into VOD (Ofcom, 2023; Social Research Centre, 2022). Here participants reflect on challenges of discovery and navigation in VODs and what methods they use to make choices. While much of this research explores behaviours, attitudinal survey research has also been conducted by some regulators to assess community expectations for VOD regulation (Ofcom, 2023).

These studies show the importance of integrating audience research with interface and catalog research. Using surveys, interviews, and other methods, audience researchers have added important nuance to claims about the deterministic power of algorithms to shape viewer behaviour. Indeed, most of these studies have confirmed the importance of offline discovery practices (word-of-mouth and social recommendation) over algorithmic recommendations. Rather than confirming the audience as free agents or cultural dupes, audience research tends to reveal a wide array of different usage and discovery behaviours whose analysis can advance theory-building about exposure diversity. However, such research should not fall into the trap of simply describing or celebrating audience agency, as has occurred in previous waves of audience studies. Instead, the focus needs to be on explaining the dynamics of audience agency and choice in relation to the structuring power of the VOD catalog, the interface, and recommendations. Indeed, in both academic and government literature, consideration has been given to broader institutional and industrial factors at play in diversity, discoverability, and prominence (Albornoz and García Leiva, 2019; Canada Media Fund, 2016b).

Discussion: Futures of VOD research

In this article we have surveyed the key empirical methods that can be used for researching VODs. Across catalog research, interface analysis, and audience research, scholars have deployed various techniques including scraping, manual content analysis, simulations, surveys, interviews, and distant reading. There now exists considerable depth and expertise in each of these methods, indicating a maturing research enterprise.

It is important for scholars working in the field to build on this existing knowledge rather than reinvent the wheel. With so many useful models for catalog analysis, interface analysis, and audience research to draw on, researchers have access to many interesting exemplars. We hope our review will save time and effort by pointing researchers in some interesting directions. We believe the methods discussed here offer an appealing and robust alternative to more ad hoc, impressionistic studies of VODs.

Empirical research on VODs has been siloed to date, with many different specialisations that do not always talk to one another. As a result, certain aspects of catalog-interface-audience interaction remain under-researched. For example, catalog research studies have rarely sought to integrate audience research. Similarly, audience research has been mostly silent on the question of catalogs – though it has had more to say about interfaces. A few brave scholars have attempted to bridge these gaps through multi-method work that considers catalogs and/or interfaces and/or audiences in an integrated way within single publications (e.g. Frey, 2021b on audiences and interfaces; Broocks and Studnicka, 2021 on catalogs and audiences), or across different projects by a research team (e.g. Johnson, 2019; Johnson et al., 2020a on audiences and interfaces), or in conjunction with ratings analysis (Thurman et al., 2023). Nonetheless, it remains the case that most research has tended to look at a single element of VOD in isolation.

This fragmentation is understandable given the need to develop depth and expertise in specific areas of VOD research, not to mention the well-known risks faced by interdisciplinary or mixed-method research. However, there remains a need for larger-scale, mixed-method research that can look at VODs in an integrated way. The analytical pay-off here would be to explain how different elements of a VOD (catalog, interface, and audience) interact to produce different kinds of diversity or homogeneity effects, across both content and exposure. Such an analysis might also explore whether the diversity dynamics of VOD differ from other areas of the cultural industries where content and exposure diversity have been more extensively excavated, such as news and television (Napoli, 2009). How to integrate these research efforts is perhaps a long-term challenge for the field – something to consider as we consolidate expertise within each field of VOD research.

As the technology landscape evolves and new topics come into focus, the priorities for VOD research will shift accordingly. Already, we can see certain topics barely covered in the literature that are now becoming more important. One example is the recommendation and promotion functions of streaming devices – for example, when a smart TV ingests VOD recommendations onto its home screen, which then becomes a primary user interface. Such device interfaces are often difficult to study using existing methods, as they require a different data collection protocol.

Another challenge – always present in audience research – is how to scale up studies of exposure diversity to include more than a small sample of participants. Here, the possibility of data donation is promising. Data donation, used already in social media and advertising research (Angus et al., 2024), allows citizens to donate their viewing histories to researchers for further analysis; these histories can be requested under the General Data Protection Regulation (GDPR) in the form of a downloadable spreadsheet. A current project by Karin van Es and Dennis Nguyen of Utrecht University (in progress) is testing the viability of this method to study Netflix usage.
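To indicate how a donated viewing history might feed an exposure-diversity measure, the sketch below parses a small spreadsheet-style export and summarises the country-of-origin mix of what was watched. Everything specific here is an assumption made for illustration: the column names do not reflect any real VOD's export schema, the title-to-origin lookup stands in for researcher coding, and Shannon entropy is only one of several diversity indices that could be used.

```python
import csv
import io
import math
from collections import Counter

# Hypothetical donated export. Column names are assumptions for this
# sketch, not the schema of any actual VOD data download.
DONATED_CSV = """title,date
Show A,2024-01-02
Show A,2024-01-03
Film B,2024-01-05
Show C,2024-01-09
"""

# Hypothetical researcher-coded lookup from title to country of origin.
ORIGIN = {"Show A": "US", "Film B": "FR", "Show C": "US"}


def origin_counts(csv_text, lookup):
    """Count viewing events per country of origin; uncoded titles are 'unknown'."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return Counter(lookup.get(row["title"], "unknown") for row in reader)


def shannon_entropy(counts):
    """Shannon entropy in bits; 0 means all viewing falls in one category."""
    total = sum(counts.values())
    return -sum(
        (n / total) * math.log2(n / total) for n in counts.values() if n
    )


counts = origin_counts(DONATED_CSV, ORIGIN)
entropy = shannon_entropy(counts)
print(dict(counts), f"entropy = {entropy:.3f} bits")
```

Because the unit here is the viewing event rather than the title, the same donated file could be compared against a catalog-level or home-screen-level measure for the same service, which is one way of connecting supply and exposure diversity.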

Another potential source for research on interfaces and catalogs is the data VODs provide to government as part of regulatory undertakings or official inquiries. For instance, major SVODs report voluntarily to Australia’s national media and communications regulator (the Australian Communications and Media Authority) on the number of titles and hours of available Australian content and on how they make Australian content discoverable. SVODs also provide information on catalogs and interfaces in their submissions to public inquiries. These sources of information, while often brief or inconclusive, may offer valuable insights into catalog and interface curation.

Finally, there is the opportunity to partner with industry on VOD projects. Many of the questions for which academics commonly seek answers (How do people use VODs? Which recommendations do they see?) are already familiar to those on the inside of VODs, such as product managers and interactive designers, who often have more data at their fingertips than they have time to analyse. Partnering with industry can be beneficial for both sides, but it may require an academic researcher to prove the value of their research for the business challenges of their VOD partner, and there may be limitations embedded in how the researcher can share findings. Such partnerships may well be the only way that academics can feasibly access VOD data, especially certain forms of interface and usage data.

The practical challenges for access to data under all of these scenarios remain substantial. Nonetheless, we hope that these possibilities present opportunities for scholars, regulators and other research professionals to move beyond the black-box stage of research and explore the many different ‘ways of knowing’ VOD that are now possible.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Australian Research Council (FT190100144).