Abstract

In August 2019, a mass shooter in the United States posted a violent manifesto to the anonymous forum 8chan prior to his attack. This was the third such incident that year and afterwards hosting and security services conceded to calls to drop 8chan as a client, pushing 8chan to the margins of the accessible internet. This article examines the deplatforming of 8chan as a public relations crisis, contributing to understanding ‘governance by shock’ (Ananny and Gillespie 2016) by examining who is shocked and their power to turn shock into online regulation. Online platforms and media attention created opportunities to study how the deplatforming was justified, drawing on the theoretical framework of economies of worth (Boltanski and Thevenot 2006) and controversy mapping methods. The examination finds: (1) that this case of deplatforming indicates the openness of infrastructure-as-a-service companies to external challenges over content, rather than hegemonic control. (2) That regulatory gaps, including the broadness of U.S. free speech laws, made these companies, rather than legal processes, the relevant authority. (3) That framing responsibility as following the law – as Cloudflare attempted to do – misunderstands the importance of normative principles, voluntary measures, and contestation in governing online content, underselling the value of policy-making at other levels. The success of the campaign to deplatform 8chan affirms the significance of PR crises in the regulation of online content, rewarding deplatforming as a political tactic for civil society groups and online networks pushing for governance in regulatory gaps. However, the significance of normative enforcement in this case underlines the difficulties of this semi-voluntary style of governance. 
While normative opposition to violence contributed to 8chan’s deplatforming, other normative oppositions contribute to deplatforming vulnerable users, as in the moral panics that drive the deplatforming of sexual content (Tiidenberg 2021) and feed suspicion over the ideological application of deplatforming. The ambivalence of PR crises as a strategy for influencing platform governance underlines the need for clarity in policy-making at multiple levels.

On August 3rd, 2019, 23 people were killed in a mass shooting in El Paso, Texas, U.S.A. The killer posted a violent manifesto to the anonymous forum 8chan prior to his attack – the third shooter to post their plans to 8chan that year. The site had hosted the manifestos of the shooter in the attack on two mosques in Christchurch, New Zealand, in March, and the shooter who attacked a synagogue in Poway, California in April (Conger, 2019). 8chan allowed users to post to the site anonymously and prided itself on enforcing few limitations on content. In the face of mounting pressure, including being called to testify before the U.S. Congress, 8chan’s leadership doubled down on its intention to continue hosting ‘constitutionally protected hate speech’ (Kelly, 2019). However, calls for accountability extended beyond 8chan itself. Web infrastructure companies that enabled 8chan to stay online, particularly security services provider Cloudflare, became objects of public outrage after the Christchurch shootings, but it was not until August and the shooting in El Paso that Cloudflare cut ties with 8chan, which failed to find stable new providers for security and hosting. The site eventually and intermittently resurfaced under a new name, 8kun, serviced by VanwaTech and a Russian service called Media Land, LLC, best known for hosting crimeware used for phishing and spam attacks (Gallagher 2019), marking its fall from the standard, indexed web to the harder-to-access fringes.

The case of 8chan and Cloudflare exemplifies the rise of deplatforming as a political tactic directed at websites, as well as individuals and political groups, but it is not the first time Cloudflare was the target of a deplatforming campaign. In 2017, Cloudflare was identified in a ProPublica investigation as providing services that allowed the neo-Nazi site the Daily Stormer to remain online (Schwencke, 2017). After other services, such as GoDaddy, dropped the site and individuals affiliated with the Daily Stormer began claiming that Cloudflare was ‘one of them’, Cloudflare CEO Matthew Prince discontinued service to the Daily Stormer, saying publicly that he felt unable to participate in larger discussions about the appropriate power of internet infrastructure companies ‘with people calling us Nazis’ (Conger, 2019). Contemporary reporting also suggests that pressure on Cloudflare employees and heightened attention from the media, as well as ‘a ton of people…yelling at us on Twitter’ (Matthew Prince, in Johnson, 2018), including respected figures in the internet community, played a role in the decision to remove service to the Daily Stormer. There are few alternatives to the protection services provided by Cloudflare (Suzor, 2019) and without them the Daily Stormer has been relegated primarily to the dark web (Jardine, 2019). The removal of the Daily Stormer, like 8chan, was not associated with a change in overall policy at Cloudflare.

In deplatforming campaigns, the goal is to limit the site or individual’s access to audiences and to mark the content as abnormal or unacceptable by constraining its use of mainstream services and sources of funding, such as hosting and advertising. Deplatforming mirrors the ‘no platform’ politics of university campuses, where student groups challenged efforts by openly fascist or racist groups ‘to portray themselves as legitimate parts of the political discourse’ (Smith 2020: 216) by limiting and protesting those groups’ access to university spaces – spaces which confer legitimacy through their social status. During deplatforming campaigns, activist groups pressure platforms, advertisers, and other service providers to withdraw services from targeted offenders for violating platforms’ terms of service or broader social definitions of acceptability. While the actual deplatforming is done by social media platforms and infrastructure service providers in their roles as online content ‘governors’ or ‘custodians’ (Gillespie, 2018; Klonick, 2017), examination of deplatforming events reveals the critical role played by external groups, such as activists (progressive and conservative), advertisers, and influential individuals, mirroring the social shaming (Klonick, 2017) and ‘normative reinforcement’ performed by networked groups (Marwick, 2021).

Deplatforming echoes challenges in other contexts between the legal right to operate and social licence, dynamics that have been prominent in globalized consumer goods production (Ruggie, 2020). In internet and platform governance, the tensions between the internet’s libertarian past and the social licence of major intermediaries such as social media platforms have been under scrutiny almost since their inception (Flew, 2021). Recent years have seen an increase in pressure on tech moderation as part of a ‘techlash’ that includes renewed governance efforts by national governments and civil society groups (Flew, 2021; Suzor, 2019), but also pressure on and from commercial interests such as advertisers (Braun et al., 2019; Kumar, 2019). However, while individual webpages and social media companies have been heavily scrutinized, there has been little robust discussion of how infrastructural services such as hosting, domain registrars, and security services should respond to issues of content, raising questions about their legitimacy as regulators (see Figure 1, Donovan, 2019). These services are not visible to users at most times and their power and even their business models are rarely discussed outside of reporting and activism covering their controversial clients. While some are well-known – GoDaddy for domain hosting, for example – the industry itself is not transparent.

Figure 1. Content Moderation in the Tech Stack (Donovan, 2019).

Concerns about legitimacy in infrastructural content moderation echo longstanding questions within internet governance scholarship about the power of commercial actors in online infrastructure and the relationship between content governance online, the rule of law, and human rights (for instance, Musiani et al., 2016; Suzor, 2019). These questions have become more urgent as deplatforming events become more visible and politicized as either anti-free-speech ‘cancel culture’ or necessary controversies that fill the vacuum where responsible content governance should be. To analyze the development and resolution of the deplatforming of 8chan, this article examines the online networks publicly pressuring Cloudflare and other companies to take action against 8chan, how that pressure was framed, and how the companies themselves responded. It asks what the circumstances in which companies chose to deplatform 8chan reveal about the practices of deplatformization and their intersection with online content moderation. Treating these deplatforming cases as PR crises, it argues that rather than an expression of platform hegemony, deplatforming events reflect external pressures on internet infrastructure services to conform to the social norms of influential publics. In this way, the article contributes to understanding ‘governance by shock’ (Ananny and Gillespie 2016) by examining who is (publicly) shocked and what influence they have to turn shock into online policy.

Online platforms and media attention created opportunities to study the deplatforming of 8chan as a test of public justification, drawing on the theoretical framework of economies of worth (Boltanski and Thevenot 2006) and online controversy mapping methods. The examination finds: (1) that this case of deplatforming indicates the openness of infrastructure-as-a-service companies to external challenges over content, rather than hegemonic control. (2) That regulatory gaps, including the broadness of U.S. free speech laws, made these companies, rather than legal processes, the relevant authority. (3) That framing responsibility as following the law – as Cloudflare attempted to do – misunderstands the importance of normative principles, voluntary measures, and contestation in governing online content, underselling the value of policy-making at other levels. The success of the campaign to deplatform 8chan affirms the significance of public relations crises in setting the terms of online content moderation, rewarding deplatforming as a political tactic for civil society groups and online networks pushing for governance in regulatory gaps. However, the significance of social norms and ‘public moral criticism’, or shaming (Klonick 2017; Marwick 2021: 2), in this case underlines the difficulties of this semi-voluntary style of governance. While widely shared social norms of opposition to violence contributed to 8chan’s deplatforming, other normative oppositions contribute to deplatforming and endangering vulnerable users, as in the moral panics that drive the deplatforming of sexual content (Tiidenberg 2021). The ambivalence of PR crises over perceived moral violations as a strategy for influencing platform governance emphasizes the need for robust policy-making at multiple levels.

Literature Review

Removing 8chan from key services that allowed it to be easily accessible online is a case of what Van Dijck et al. (2021) refer to as deplatformization. Deplatformization is similar to the deplatforming of individual users for violation of a platform’s rules or standards (Rogers 2020), but applied to entire platforms or sites that are removed from the infrastructural services that allow them to be accessible across networks (Van Dijck, et al., 2021). These removals happen ‘in a gray area where responsibility is unregulated’ (Van Dijck, et al., 2021: 12). In the case of 8chan’s removal, Cloudflare did not have a particular policy against the content on the site. Instead, the controversy over 8chan and its use of Cloudflare’s services existed in a regulatory gap between national, international, and corporate policy-making efforts. Like deplatforming, deplatformization is primarily associated with efforts to restrict far right organizing by denying individuals, media outlets, and platforms access to audiences and infrastructure (Mirrlees, 2021; Van Dijck, et al., 2021). However, similar tactics have sometimes been used by other groups to force journalists, activists, and members of marginalized groups off platforms (Suzor 2019). Deplatforming is also the term used to describe the significant restrictions placed on sexual content and the effects these restrictions have on sex workers, educators, and activists in platformized spaces (Tiidenberg, 2021). Van Dijck, et al. (2021) argue that deplatformization is indicative of the hegemonic control wielded by Big Tech platforms, particularly the members of the GAFAM (Google, Amazon, Facebook (now Meta), Apple, and Microsoft), and reflective of the restructuring of much of online life around the infrastructure, economic model, and governmental frameworks of platforms (Poell, et al. 2021). The power of this platformization is to allow large platforms to push smaller platforms to the margins of the accessible internet.
Those deplatformed, including representatives for 8chan, often argue that deplatforming violates their right to free speech, as understood in U.S. legal contexts. Acknowledging this concern, Van Dijck et al. (2021) and Freelon et al. (2020) note potential negative democratic implications of attempting to govern ‘ideologically diverse polities’ (Freelon et al., 2020: 3) in a situation where deplatforming or deplatformization is an active political strategy. While the particular power of Big Tech platforms raises novel policy questions, examined in more detail below, the politics of attempting to deplatform the extreme right are preceded by decades of anti-fascist organizing under the banner of ‘no platform’.

‘No platform’ originated in the United Kingdom. The tactic – and long-running policy of U.K. student unions – was used to challenge efforts by explicitly fascist and racist groups to ‘portray themselves as legitimate parts of the political discourse’ (Smith, 2020: 216) by denying them access to space on university campuses, picketing or disrupting their speeches, or petitioning for speakers to be disinvited from venues and events. Targets of protest in the United Kingdom often characterized all efforts to challenge their speech as instances of no platforming and argued that it denied them rights to free expression (Smith, 2020). Targets of deplatforming similarly invoke free speech rights to contest their treatment, suggesting (on smaller social media platforms that tolerate more extreme content) that the content moderation practiced by mainstream social media platforms does not respect those rights (Donovan et al., 2019; Jasser, 2021). This argument is complicated in commercial spaces, since free expression is a right that restricts actions by the state, rather than by private companies. Companies are free to moderate their content as they choose, explicitly protected from liability for moderation choices by Section 230 in the United States and similar legislation elsewhere – policies set during the internet’s ‘libertarian past’ (Flew and Gillett, 2021: 9) now complicated by the dominance of online platforms in global communication (Suzor, 2019). The free speech argument is also complicated because deplatformization includes, and is sometimes restricted to, demonetization – the restriction of access to revenue from ads, sponsorship, merchandise sales, or amplification from algorithms – rather than the ability to post particular content (Mirrlees, 2021; Van Dijck, et al., 2021). 
In that way, the stakes of deplatforming are not always the ability to speak, but rather the ability to mix with, and profit from, ‘the rest of us’ (Shane Burley in Renton 2021: 122) on the largest and most well-known platforms. This is not to deny the impacts of deplatforming on those targeted, but to emphasize that events that currently fall under the label of deplatforming or deplatformization can be viewed as an evolution of strategies to contest the legitimacy of organized groups by depriving them of resources and markers of social acceptance. The history of the ‘no platform’ movement underlines the importance of looking at deplatformization in the context of multiple rights, as well as the need to examine the social groups that shape how platform power is applied (Caplan et al., 2020). Activist publics are central to both the politics of ‘no platform’ and the deplatforming of online figures, groups, and sites. The role of networked publics, which Dutton and Dubois (2015) call the ‘Fifth Estate’ but which also fit Marwick’s (2021) description of ‘morally motivated networked harassment’, is overlooked in the discussion of deplatforming and deplatformization in Rogers (2020), Van Dijck et al. (2021), and Renton (2021), but appears to play a significant role in the deplatforming of 8chan.

The intense public pressure that surrounded 8chan’s deplatforming exposes some boundaries in online content moderation, indicating the kinds of content and circumstances that are publicly indefensible for commercial actors. The rules made during controversies like these are sometimes called ‘governance by shock’ (Ananny and Gillespie, 2016) and critiqued for being ‘ad hoc, incremental, and mutable’ (Flew, et al., 2019: 39) as well as likely influenced by external factors, such as parliamentary hearings. However, rulemaking in response to crises and challenges also informs the more enduring parallel governance structures that frame content moderation on some platforms, such as Facebook, which has pursued novel policy structures in the face of sustained criticism from the public, regulators, academics, and the media (Klonick 2017, 2019). Outside of platforms, much of the key infrastructure of internet governance is under the control of nominally multistakeholder processes that privilege private companies (DeNardis, 2014). This industry-led governance environment provides little opportunity for individual appeals over content or process (Gorwa, 2019) and in many environments, such as domain names and copyright enforcement, seems to be more accessible to corporate actors than the wider public (Karanicolas, 2020). The companies that hold much of this power can be identified and pressured when they are visible as a point of regulation (Mueller, 2015), putting them at the centre of intense controversies over content, including activist efforts targeting infrastructural elements of internet service, such as wifi and web hosting (Lovink, 2020). Given the significance of these private actors, internet governance scholars have scrutinized them closely, identifying the challenges of creating legitimacy around governance decisions in an environment that includes overlapping legal jurisdictions, terms of service requirements, and public pressures (Klonick, 2019; Suzor, 2019).
While there is a ‘regulatory turn’ toward increasing ‘nation-state and supranational regulation’ in internet governance (Flew and Gillett, 2021: 9), within the frameworks of national law and policy, individual companies will continue to have power over content rules (Suzor, 2019). In this complex environment, controversies such as those that surround the deplatforming of 8chan act as one forum for negotiating the legitimacy of governance decisions with potentially far-reaching consequences for content moderation online.

The deplatforming of 8chan reflects ongoing developments in corporate governance and activism, in the governance of internet infrastructure, and renewed pressure on businesses by networked social activists. To incorporate those developments, this article examines the businesses involved in this case and their ability to define and engage with political discourses and harms in a media environment that facilitates new forms of ‘everyday politics’ (Highfield, 2017) and communicative power for networked publics (Dutton and Dubois, 2015; Marwick, 2021). This article considers questions of content and strategic communications concerns in the privatized, but not advertising-supported, environment of internet infrastructure-as-a-service companies. It treats companies and their public relations concerns as part of the ‘functional public sphere’ (Edwards, 2018) that includes strategic and professional motivations alongside the political interests of citizens, civil society, and others. Public relations work has moved toward the role of ‘social brokers’ (Cronin, 2018: 107), forging new relationships that seek to redress, in part, democratic practices that lag behind the formation of publics. In this environment, corporate social responsibility is frequently paired with outright advocacy, which is then mediated through corporate channels such as social media and blogs (Aronczyk, 2013). Dutton and Dubois (2015: 60) note that at the time they were writing ‘networked individuals are challenging the information practices of major Internet companies, such as Facebook and Google, and leading them to alter their approaches to protecting the privacy of their users and many other information policies, driven by what can easily be identified as Fifth Estate accountability’. 
Since then, the pattern of public pressure, mediated in part by social media platforms, on businesses to force changes to their policies or those of their affiliates has only increased, often pushing corporations beyond self-responsibility and towards wider advocacy (Gaither, et al., 2018; Hill, 2020). This increase mirrors growth in internal pressure from employees over political issues (Reyes, 2021) within a framework of critique and justification (Edwards, 2020). While self-mediated and proactive corporate social advocacy can blur the definition of activism, reactive and reluctant corporate social advocacy provides insight into boundaries of acceptable content and moderation and its implications for commercial content governance. For internet governance, these developments include stakeholders and corporate social responsibility concerns in what is sometimes called the ‘social license to operate’ (Flew and Gillett, 2021). By treating the deplatforming of 8chan as an example of a public relations controversy with content governance implications, this article incorporates networked publics into examining deplatforming in the context of internet governance.

Theoretical framework and methods

This article’s research approach maps the controversy in the 8chan case, incorporating a theoretical framework taken from Boltanski and Thevenot’s (2006[1991]) orders of worth. Controversy mapping methods include identifying the primary actors, constructing a timeline, and examining the resolution of an identified controversy, and are compatible with frameworks of justification (Patriotta, et al., 2011). Controversy mapping has been used to track sociocultural controversies, such as GamerGate, online (Burgess and Matamoros-Fernandez, 2016) and has been identified by Scholzel and Nothhaft (2016: 64) as a useful tool for understanding the web-enabled ‘shit storms’ of modern public relations controversies. At the same time, this approach is limited by a lack of access to Cloudflare employees who might describe the company’s motivations directly. Cloudflare and the other companies are subject to the kinds of ‘visibility management’ (Flyverbom, et al., 2016) practiced within organizational communication, but a controversy map reliant on publicly available information is not able to access the exact motivations behind the actions taken by Cloudflare. Rather, this article documents public efforts to hold Cloudflare responsible for the content of its clients’ sites and the ways those efforts appear to be rewarded by Cloudflare’s actions and those of other infrastructure services providers, in ways consistent with current understandings of public relations practices of managing external public critique (Hill, 2020). Boltanski and Thevenot’s (2006) economies of worth provide frameworks for identifying the types of arguments forwarded by different actors, whether and how they are adopted by other actors in the network, as well as the compromises involved as the controversy is negotiated, in keeping with what Edwards (2020) has identified as the tension central to corporate acts of transparency.
These orders are used to justify the value or legitimacy of an actor or an action and include civic (common over the individual), fame (influence, attention from others), market (competition), domestic (individual responsibility to key relationships), inspired (spiritual or transcendent), and industrial (efficiency).

This project relied on both digital and manual methods for data collection. The digital portion of this research made use of Sysomos software to gather data and perform initial categorization of the collected social media data. Sysomos is social listening software designed primarily for use by marketing professionals, and so reflects the priorities of this professional group, particularly an interest in identifying networks of communication and influential members of those networks. Data collected included lists of news stories associated with the keyword searches outlined below, posts from social media platforms, particularly Twitter, Tumblr, and forums such as Reddit, and maps of online communities that formed on Twitter. In addition to the collected social media statements, I manually added items, such as public statements, videos, or news reports, referred to by sources, as well as statements from Cloudflare and 8chan themselves. The collected social media posts and accompanying documents were grouped according to the identified networks and coded in NVivo, qualitative analysis software that facilitates organizing and analyzing documents, using a combination of deductive and inductive coding. The deductive codes were based on Boltanski and Thevenot’s economies of worth. Inductive codes were created to track emerging themes such as the importance of past incidents.

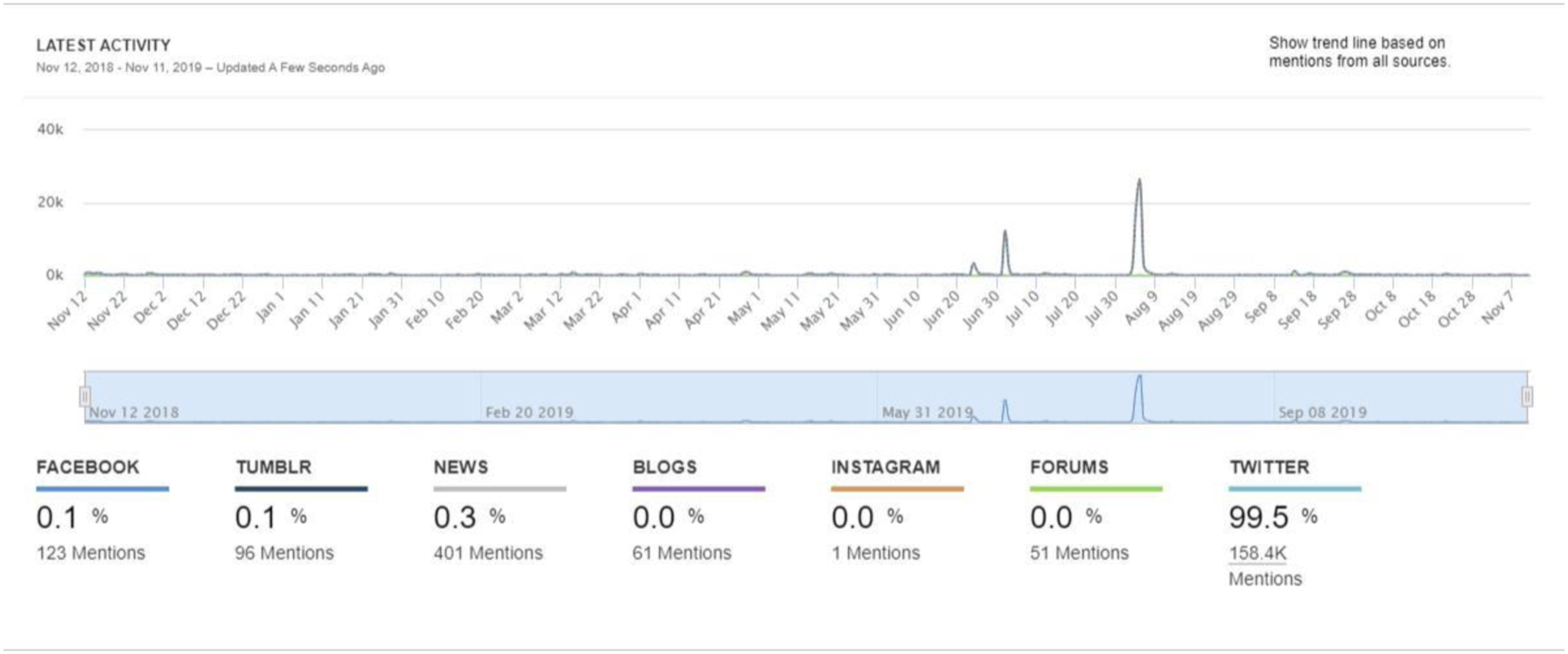

For Cloudflare, social media communication during the controversy was collected using the keywords ‘@Cloudflare’, ‘cloudflare’, ‘8chan’, and ‘elpaso’. Sysomos software was used to graph social media engagement over a 12-month period including the decision to deplatform 8chan. The collected media communication for the Cloudflare dataset included 108,441 posts, primarily from Twitter (69,314 from 48,155 distinct users), but including posts from forums (9426), Tumblr (3404), news sources (3476), and blogs (288). The analysis below focuses primarily on the Twitter data, supplemented by posts from other media.

Results

During the controversy over 8chan, activity directed at Cloudflare was strikingly higher than the baseline activity directed at either company. The charts below (Figure 2) give a sense of how quickly and intensely attention focused on the business and how quickly that attention dissipated once connections to 8chan were cut.

Figure 2. Cloudflare Social Media Engagement, November 12, 2018–November 12, 2019. The two earlier spikes, in June and early July, are related to two separate outages of the company’s services (Whittaker, 2019).

The online controversy attracted commentary from four main groups: deplatforming activist groups, online alt-right networks, tech journalists, and mainstream journalists. There was substantial overlap between the tech journalism and mainstream journalism categories, and they are treated as a single group in the analysis that follows. Cloudflare and 8chan were added to the resulting three groups to examine whether and how central figures in the controversy responded to the arguments put forward by other actors.

Sleeping Giants and activists

This group included communication from other civic groups, such as the Anti-Defamation League, but centred on Sleeping Giants, a social media activist group that operated an anonymous Twitter account that reported sites that host hate speech, misogyny and other extremist views to their advertisers in order to demonetize those outlets. By exposing the connections between mainstream brands and extreme content, Sleeping Giants works to contest the legitimacy of targeted sites as recipients of profits from ‘normal’ businesses – a strategy that echoes ‘no platform’ organizing, even as it raises uncomfortable questions about the role of these businesses in content moderation (Braun et al., 2019). Sleeping Giants had been pressuring Cloudflare over providing services to 8chan since the Christchurch shooting in March of 2019 and had been blocked by Matthew Prince, Cloudflare’s CEO, earlier in the year. Sleeping Giants’ messages were widely circulated, amounting to four of the 15 most circulated posts in the Twitter sample (12.7% of the total sample, or 8791 Tweets) and worked to paint 8chan itself as a bad actor, while making the infrastructure that kept 8chan online visible to the public with statements such as ‘8chan, where three racist mass killers have posted their screeds, is protected by @Cloudflare, hosted by @tucows and is monetized by @amazon. If you’re doing business with a site that helps people spread violent racist ideologies, you are just as culpable. Full stop’. Their tweets were some of the few that directly engaged with Cloudflare’s market value, asserting that it was ‘ridiculous’ that a company ‘worth 3.5 billion’ would work with 8chan (Sleeping Giants, 2019) and attributing their eventual decision to cut ties with 8chan to an upcoming IPO. 
The group did not engage with legal or process arguments, instead underlining how Cloudflare’s business put it in a position to protect 8chan, profit from its activities, and potentially demonstrate solidarity with some groups over others – contrasting Cloudflare’s action in this case with earlier decisions to stop providing service to Switter, a platform used by sex workers (contemporary reporting suggests that Switter may have lost services because of the passage of FOSTA-SESTA, a U.S. law that made online intermediaries liable for content related to sex trafficking). Discussion in this group emphasized Cloudflare’s provision of services as matters of choice, positioned the company as morally responsible for protecting known bad actors, but not vulnerable groups such as trans people (in the case of Kiwi Farms, which facilitated the harassment of trans individuals) and sex workers (Switter), and publicized Cloudflare’s connection with bad actors, past and present.

Right-wing Twitter and 8chan users

The right-wing community the Sysomos software identified on Twitter at first reads like a who’s-who of the U.S. right-wing. Members include Donald Trump, Breitbart, Tucker Carlson, the GOP (the Twitter handle of the Republican Party), Fox News, and other prominent right-wing figures. However, on closer inspection, these individuals were included in the network after being invoked by other speakers. Only Breitbart, BNONews, and the Daily Caller were communicating directly in this sample. The rest of the tweets in this group invoked the right-wing figures listed above without eliciting a response. However, the absence of involvement by these key figures did not dampen the level of engagement around the topics this group discussed. Accounts in this community had tens of thousands of followers. Moreover, four of the 15 most circulated tweets in the sample came from this group, amounting to 9551 of the 69,313 total tweets collected, or 14% of the total sample for Twitter. This group was the only part of the Twitter sample strongly critical of Cloudflare’s decision. Those tweets include conspiratorial references to ‘deep state’ activity, shifts in Cloudflare’s board membership, and efforts to silence Q, a cult figure on 8chan’s message boards. No other groups referenced the conspiratorial nature of this line of discussion, which, combined with the most prominent ‘members’ of this network only being invoked by other speakers, suggests that the ideas discussed were still part of a relatively isolated fringe at the time. During the controversy, these ideas appeared to have limited influence outside of this network and these topics were not taken up by other actors in the sample.

Journalism

After the mass shooting in El Paso on August 3rd, 2019, media outlets very quickly publicized the connection between 8chan and Cloudflare and began to ask whether Cloudflare would stop providing services to 8chan. For example, within a day of the El Paso attack, tech journalist Julia Carrie Wong (2019) noted the similarities to the Daily Stormer incident and declared that she had ‘reached out to CEO @eastdakota to see if the company might change its mind about providing services to 8chan in the wake of today’s attack’. Media were the largest group in the sample and primarily made up of established tech journalists, such as Aaron Sankin of the Markup and Kate Conger at the New York Times, and a small number of academics and politicians. The tweets and articles circulated by this group were overwhelmingly coded as domestic and civic (with 130 items coded as both), reflecting a debate over whether legal standards or harm reduction was the legitimate civic principle in this case and the overall preoccupation with Cloudflare’s responsibility in providing services to 8chan. There was also a smaller discussion of the effectiveness of deplatforming in reducing hate speech, with journalists highlighting academic and activist voices that argued that deplatforming was both efficient (faster than the courts) and legitimate (hate speech and incitement not being protected categories of speech) balanced against ‘slippery slope’ arguments that deplatforming some sites would create further pressure on infrastructure services to moderate online content. Mainstream media covered similar themes to tech reportage with heavier emphasis on 8chan’s founder, Fredrick Brennan. Brennan no longer controls 8chan and told the New York Times on August 4th that he would like to see the site shut down – a quote circulated by multiple sources in the sample.

A significant portion of this network recirculated articles on the deplatforming of the Daily Stormer and online hate to provide context to the 8chan case, including Sankin’s (2019) ‘The Dirty Business of Hosting Hate Online’ on Gizmodo and Johnson’s (2018) ‘Inside Cloudflare’s Decision to Let an Extremist Stronghold Burn’ on Wired. For example, Talia Lavin shared their article ‘Neo-Nazis of the Daily Stormer Wander the Digital Wilderness’, written in 2018 for the New Yorker, with the comment ‘The likely path 8chan will travel after Cloudflare dropped them’. Likewise, Donie O’Sullivan of CNN circulated predictions that ‘8chan will go offline for a week and then move to BitMitigate, which now protects The Daily Stormer after Cloudflare dumped them in 2017’. The circulation of these reports in 2019 publicized Cloudflare’s ability to impact sites like 8chan, demonstrated the growth in understanding Cloudflare’s relationship to content moderation, and directed attention to what might happen next.

In addition to journalists contextualizing the ongoing case, a vocal section of users circulated tech journalism as a framing device for arguments that Cloudflare had not done enough and should ‘get no credit for this’. Specifically, this subgroup called out Cloudflare for providing service to sites such as Kiwi Farms and for previously forwarding the details of complaints, including information identifying those complaining, to 8chan, with predictable negative effects. This last complaint was the third most quoted tweet in the sample, circulated 3836 times, and served to depict Cloudflare as either naïve about the damage done by some of its clients or complicit with it. In this network, Cloudflare’s power over 8chan, its significance in maintaining access to extremist content, and its history of removing an infamous site as a client reinforced the visibility of the company as a point of content regulation that could impact 8chan and a moral agent in the controversy.

8chan and Watkins

During the period after Cloudflare removed 8chan as a client, 8chan communicated to say that the site was down and they would try to restore service. It was not until August 6, 2019, after 8chan had exhausted its options, that its owner, Jim Watkins, released a YouTube video claiming that ‘common sense will prevail’ and that the El Paso shooter had originally uploaded their manifesto to Instagram – a claim that was quickly contradicted by Instagram (Somos, 2019). On the same day, Jim Watkins was summoned to appear before the U.S. Congressional Committee on Homeland Security, an appearance that took place on September 5th, 2019. Before the hearings, Watkins released a statement through his lawyer committing to continue to host ‘constitutionally protected hate speech’ and to work with law enforcement. He also speculated that social divides stemmed in part from the ‘celebration and exaggeration of victimhood’ (Watkins and Barr, 2019). Watkins’ testimony has not been made public (Kelly, 2019). This line of argument seemed to suggest that legal limits focused on individual rights (a very narrow civic/legal argument) were a more appropriate logic to justify 8chan’s lack of moderation than the frame of organizational responsibility and civic concerns over solidarity and risk to which critics held the platform. Watkins and 8chan did not communicate within the collected sample, suggesting that the site and its owner operate outside of the communities of activists, media, and tech companies with which Cloudflare engages and in which it is visible as a potential regulator.

Cloudflare

On the evening of August 4th, Cloudflare’s lawyer, Douglas Kramer, was quoted in Vice’s Motherboard describing Cloudflare as ‘largely a neutral utility service’ and saying it had no intention of removing 8chan as a client. At 9:44 p.m. the same day, a blog post by CEO Matthew Prince appeared on Cloudflare’s website, announcing that Cloudflare would no longer provide service to 8chan.

Within the coding framework, Cloudflare’s responses were overwhelmingly concerned with civic issues, especially the law and legality of Cloudflare’s actions, and domestic issues, such as Cloudflare’s organizational responsibility in pursuit of its stated mission to contribute to ‘a better internet’. Cloudflare’s written statement about removing services for 8chan hinged on defining the site as directly responsible for enabling loss of life, and unwilling to take responsibility for what happens on their platform – ‘lawless by design’ (Prince, 2019). In contrast to civic ideals of due process and the rule of law, Cloudflare depicted its own decision to drop 8chan as selfish, as taking ‘the heat off us’ and ‘solving our own problem’ at the expense of clear principles, and potentially even at the expense of law enforcement’s ability to track potential dangers. This line of argument suggests that, outside of the lack of policy directives that would make it clear when content should be removed, Cloudflare’s ‘problem’ is being publicly associated with bad actors, and that severing the relationship by deplatforming one of those actors is a solution. In addition, it positions 8chan as irresponsible in ways that forced Cloudflare to take responsibility for it – a similar argument to how activist groups painted Cloudflare as culpable because its services kept 8chan online.

Little of the discussion around Cloudflare invoked profits, the market, or its competitors. Market motivations may have operated indirectly, through concerns about reputation and media scrutiny. Content control is not a central aspect of Cloudflare’s business model. Prince has suggested that one of the reasons that social media companies have more legitimacy to moderate content is the advertising business model (‘it’s an easier question for [Facebook and YouTube], because they’re advertising-supported companies. If you’re Procter & Gamble, you don’t want your ad next to terrorist content, and so the business model and the policy line up’ (Matthew Prince, in Johnson, 2018)). However, the increasing visibility of Cloudflare as a point of regulation for extreme sites may have changed how content is evaluated. Cloudflare’s August 2019 Registration form in preparation for its initial public offering (IPO) listed association with its clients and their content – along with their responses to those clients – as risk factors to their business (Cloudflare, Inc., 2019).

Beyond Cloudflare: Voxility and Tucows

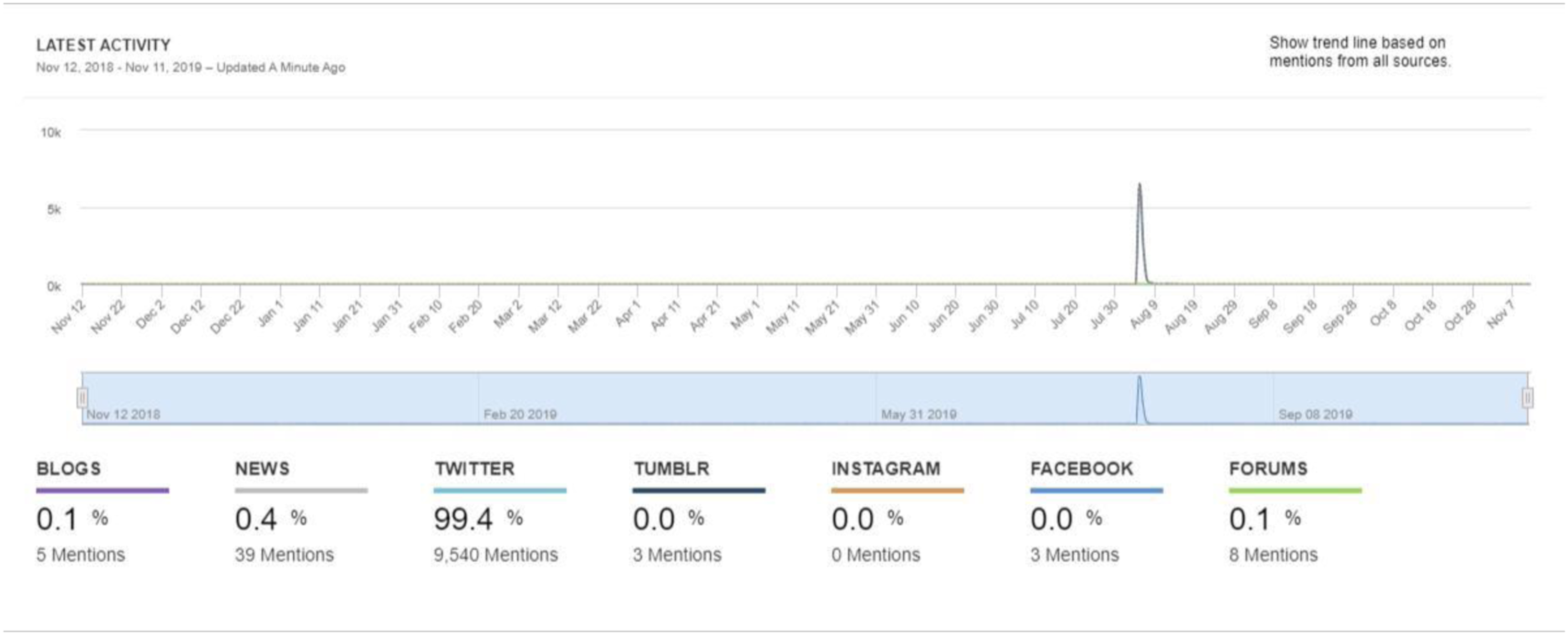

The ability of typically invisible elements of internet infrastructure to become visible points of regulation through association with an infamous site went beyond Cloudflare. During 8chan’s deplatforming, motivated networks of activists and journalists followed the movements and contacted any company that became associated with the site. After Cloudflare stopped providing services to 8chan, a company called BitMitigate, which has become famous for sheltering extreme sites when they are deplatformed, stepped in on August 5th, 2019 and 8chan was briefly accessible. Alex Stamos, of the Stanford Internet Observatory, soon alerted the world via Twitter that BitMitigate actually owned very little of its own infrastructure and the servers hosting and protecting 8chan were owned by Voxility, an infrastructure-as-a-service company based in San Francisco. Social media attention to Voxility skyrocketed (see Figure 3) and Voxility promptly stopped providing service to BitMitigate, explaining in later interviews that (a) it was the second issue they had had with BitMitigate hosting content that violated their terms of service (the first was with BitMitigate hosting the Daily Stormer) and (b) they understood it as an issue of hate speech, which they would not tolerate on their servers. This view was underlined in Voxility’s online interactions with some of the thousands of users who turned their attention to the company with messages such as ‘Stop hate’ to which Voxility replied, ‘We did!’ (see Figure 4). Voxility did not publicly express any of the concerns about due process, rule of law, or legitimacy that characterized Cloudflare’s response. Rather, they publicly stated that their position was a matter of hate speech and public safety, civic concerns about a democratic community: ‘We do not tolerate hate speech.
This is a firm stand from our team and we count on the support of our community online and via social media platforms in keeping the internet a safer place’ (Maria Sirbu, Voxility’s vice president of business development, in Makuch, 2019). The online scrutiny directed at Voxility was substantially less than that directed at Cloudflare, but more than Voxility ordinarily received. Alex Stamos made the connection between Voxility, BitMitigate, and 8chan public in a Tweet at 11:02 a.m. on August 5th, 2019. By 11:55 that same morning, he had updated the thread to note that Voxility had cut BitMitigate off. By 2 p.m. the amount of traffic directed at Voxility had plummeted, and within 3 days traffic concerning Voxility and 8chan had dropped to nearly nothing.

Figure 3. Voxility Social Media Engagement, November 12, 2018–November 12, 2019.

Figure 4. Stop Hate. We did!

Similarly, Toronto-based hosting service Tucows became subject to significant media attention when 8chan was identified as receiving hosting services tied to the company. Tucows stopped hosting 8chan between August 4 and 5, 2019. While headlines initially characterized this development as an intentional part of 8chan’s deplatforming, 8chan appeared to have been transferred from Tucows’ network of domain name resellers independently, though the company publicly clarified that they were ‘definitely not in the business of wanting to be associated’ with 8chan (Buck, 2019). Voxility and Tucows were part of a series of stops along the way for 8chan, which appeared on networks owned in the United Kingdom and by Chinese companies such as Tencent before being removed from each network once they were made aware of 8chan’s presence. The site briefly reappeared as ‘8kun’ using a Russian hosting service and security from Vanwanet (a U.S. company) later in 2019 (Gilbert, 2019) and was periodically accessible online and through dark web servers in the years following. After posts associated with the attack on the U.S. Capitol were found on 8kun, the site lost protection services again (Paul, et al., 2021), largely because journalists and activists continued to report its presence to companies providing services to the site (Krebs, 2022). While Cloudflare was the most high-profile and drawn-out element of the deplatforming of 8chan, the afterlife of the controversy demonstrates how internet infrastructure companies were made visible as points of regulation and targeted by networked publics.

Discussion

Cloudflare’s services are vital to controversial websites that want to stay easily accessible online. There are few alternatives to the security services it provides (Suzor, 2019) and some of those alternatives are even less inclined to host controversial sites. Though these companies sometimes depict themselves as closer to utilities or common carriers than media platforms, there are no legal restrictions on them exercising a moderation role – rather, companies are explicitly protected when they do moderate content. Once connected to 8chan and the El Paso shooting in popular media outlets, it was very difficult for Cloudflare and subsequent companies to justify why they would continue providing services to 8chan. This difficulty mirrors broader developments in public contestation of corporate narratives of social responsibility (Edwards, 2020), examined in this article within the theoretical framework of Boltanski and Thevenot’s (2006/1991) economies of worth. This article demonstrates how well-connected individuals and groups, such as Sleeping Giants and Alex Stamos, consistently made the connection between the targeted site and its technical support systems, how this story was picked up by media outlets and journalists, and how, in the absence of another credible authority, Cloudflare struggled to justify taking a neutral position toward the site. Rather than demonstrating the hegemony of key platforms and their allies, the deplatforming of 8chan appears to demonstrate the openness of tech policy to public contestation and normative arguments over propriety.

Deplatforming campaigns work when targeted intermediaries accept arguments that certain sites and content are abnormal – too far outside accepted approaches to content moderation. In this way, deplatformization campaigns targeting alt-right and alt-tech platforms again echo the politics of no platform as they contest the legitimacy of the alt-right by disrupting their business operations (Mirrlees, 2021). However, as Van Dijck, De Winkel, and Schafer (2021) note about the social media platform Gab, and as many have noted about the persistence of 8chan (Conger, 2019) and the Daily Stormer (Jardine, 2019: 1), sites that are removed from mainstream infrastructural services typically continue to exist in the margins of the mainstream internet. Being deplatformed under public pressure is not always a threat to the ability to speak and publish. Rather, it disrupts access to mainstream audiences, advertising revenue, and the legitimacy that comes from association with the services of mainstream providers. In some cases, the disruption of access to services contributes to the ‘isolation of the far right’ (Freelon, et al., 2020: 1201) from the rest of the political spectrum, particularly as these groups develop parallel technical ecosystems (Dehghan and Nagappa, 2022; Donovan, et al., 2019), and feeds disputes over the ideological alignment of deplatforming efforts. However, deplatforming campaigns are not exclusively aimed at right-wing groups. For example, sexual content is often central to public pressure over internet governance, most recently demonstrated by the backlash against Pornhub, which focused attention on payment intermediaries (Tusikov, 2019). At other times, the right-wing itself has benefitted from contesting how content moderation is applied, as in the case of conservative accounts that were exempted from moderation on Facebook over concerns of public accusations of bias (Solon, 2020). 
Parsing the ideological messiness of deplatforming events is complicated by the strong incentives that the alt-right and alt-tech have to maintain their connections to mainstream platforms and services (Donovan, et al., 2019) and to deny that hate speech and violent rhetoric are the reason that their content is being constrained – in essence, to insist that they are engaging in legitimate political speech or legally protected content moderation, rather than acting as an extreme fringe or protecting violent communities.

Cloudflare’s decision to refuse service to 8chan – and a similar decision in 2022 in a crisis over the harassment forum Kiwi Farms (Prince, 2022) – exposes the degree to which governance of content online continues to be contingent on the decisions of for-profit businesses, even in the early period of a regulatory turn (Flew, 2021). This article asked what the circumstances in which companies chose to deplatform 8chan reveal about the practices of deplatformization and its intersection with online content moderation. The deplatformization of 8chan reveals how regulatory gaps or ‘gray areas’ (Van Dijck, et al., 2021) are filled by infrastructure-as-a-service companies under public pressure from activists, media, and influential individuals who make these services visible as enablers and potential regulators of identified problematic online content. These regulatory gaps are more apparent in the U.S., where Section 230 is the law, and where platform governance has followed the norms of U.S. law (Klonick, 2017), though efforts to demonetize right-wing media using similar tactics have been exported to numerous non-U.S. contexts (Braun, et al., 2019). The resolution of this controversy – an uneasy compromise of individual and organizational responsibility, rather than a legal or a policy shift – demonstrates that the regulatory gaps that fuel deplatforming controversies remain open.

Deplatforming 8chan was not an arbitrary decision by Cloudflare or the other companies involved. Rather, it was the result of a political process that included networked publics, NGO and activist pressure, and reputational concerns that influenced how and when Cloudflare acted. The deplatforming campaign succeeded in August, though groups, including Sleeping Giants, had been pressuring Cloudflare over 8chan to no effect since the Christchurch shooting in March. In March, Facebook’s livestreaming policies were the focus of moderation efforts, rather than 8chan and its infrastructural supports. In August, 8chan and its service providers had little competition for attention. The communities examined as part of this controversy overwhelmingly framed the case of deplatforming 8chan as a referendum on Cloudflare’s judgement. Cloudflare was simultaneously praised for its choice, castigated for not doing more, and argued to have overstepped. The overlap between framing the controversy in terms of Cloudflare’s responsibility for its business relationships – a domestic frame, in Boltanski and Thevenot’s (2006) terms – and civic concerns is in keeping with an established compromise. In Boltanski and Thevenot’s framework, the domestic and the civic come together in applying individual good sense and respectful behaviour in civic environments. This frame is reinforced in Boltanski and Chiapello (2005), in which the ‘new spirit of capitalism’ responds to social criticisms by incorporating new practices of responsibility and workplace identity. In this case, that new spirit seems manifest in Voxility’s ability to present itself as proactive and responsible, in touch with current sensibilities surrounding online threats.
In contrast, Cloudflare’s decision to deplatform 8chan without creating an ongoing policy appeared motivated by threats to its reputation and open to interpretation by various publics, lacking, as Suzor (2019) argues about the deplatforming of the Daily Stormer in 2017, convincing due process. Both companies have abuse departments whose mandate includes copyright violations, attacks and phishing, as well as malicious activity such as child pornography and direct threats of violence. Voxility states that any clients engaging in such activity ‘will be blocked immediately’ (https://www.voxility.com/contact-abuse). Cloudflare instead gives contact information for offending websites if complainants submit a ‘substantially complete abuse report’ (https://www.cloudflare.com/abuse/). Neither company makes public their terms of service, definitions of abuse, or routes for appeals. In place of due process internal to the companies or imposed by a legal system, the deplatforming of 8chan resembled the ‘pluralistic accountability’ identified by Dutton and Dubois (2015) or the enforcement of norms via ‘morally motivated networked harassment’ identified by Marwick (2021).

There was virtually no appeal, by Cloudflare or by the journalists covering the story (with the exception of Aaron Sankin), to any authority or source of legitimacy except for (U.S.) law. Cloudflare presented 8chan as lawless, and themselves as lawless in their response. Prince expressed a desire for boundaries to be set through ‘due process of law’ (Prince, 2019). He rejected the idea that Cloudflare had the ‘legitimacy’ to determine ‘what content is good and bad’. Nor could Cloudflare be ‘transparent and consistent’ in its policies because what it does is not (ordinarily) visible to users. In making this argument (and similar ones regarding Kiwi Farms in 2022), Prince was contrasting Cloudflare’s services to those of social media platforms, whose transparency to users can be debated (e.g. by Gehl, 2014; Gillespie, 2018). Prince’s preoccupation with transparency as a principle of legitimate governance is shared by scholars of digital constitutionalism, who place it alongside stability, consent, and equal application in their analysis of the terms of service of digital platforms (Suzor, et al., 2018). However, Prince’s statement seems to discount the growing public recognition of Cloudflare’s role in supporting online content, as well as the company’s ability to set up a more robust system around content or engage policymakers about responding to issues such as hosting violent manifestos.

In his reporting on the topic, Aaron Sankin noted that Cloudflare continued to provide services to ‘56 other hate groups’, aside from those associated with 8chan (Sankin, 2019). Sankin interviewed members of organizations that track hate groups – such as the Anti-Defamation League (ADL) and the Southern Poverty Law Center – to identify groups associated with hate speech and their online hosts. In media interviews about the deplatforming of 8chan, representatives of the ADL suggested that while infrastructure companies did not have the expertise in house to decide what content was dangerous and what was not, they could develop legitimate policy responses to online hatred in partnership with groups with that expertise (ADL, 2019). In addition to suggesting that any hegemonic banishment of hate groups by Cloudflare and other infrastructural services remains fragmented and incomplete, the ability to identify known hate groups online suggests policy options beyond waiting for new laws. Other companies, including digital platforms and payment intermediaries, have created rules against hate speech and a range of other harms independently and in partnership with civil society organizations. They have increased their use of those powers in reaction to controversial events and media attention such as the Unite the Right march in Charlottesville in 2017, though not without controversy and inconsistency (Tusikov, 2019). There are also initiatives such as the Ranking Digital Rights Index, Global Disinformation Index, The Christchurch Call, and a growing body of work on digital constitutionalism (Suzor, et al., 2018) that Cloudflare could have consulted between 2017 and 2019 to add legitimacy to its decision-making processes around content.
The lack of internal policy-making or engagement with available international and civil society initiatives about where to draw a line on content left Cloudflare open, as a private company that knowingly provided services to controversial and dangerous actors, to the public challenges that came from 8chan and other deplatforming events.

Conclusions

Arguing that Cloudflare and other companies were participating in a process of political contestation or ‘pluralistic accountability’ (Dutton and Dubois, 2015) when they removed 8chan from their services is not to say that these controversies are the answer to wider questions of content moderation online. Indeed, as Marwick (2021: 4) demonstrates, the ‘attack vectors’ of normative enforcement in these kinds of public pressure campaigns can as easily follow the well-worn paths of sexism, racism, and homophobia. That path is identifiable in the moral panics that drive the deplatforming of sex (Tiidenberg, 2021). Likewise, the tools of participatory culture are as available to conspiracy theorists as they are to anyone else (Marwick and Partin, 2021). Rather, deplatforming events like 8chan point to the openness of businesses to public challenge in certain circumstances. In this sense, content moderation resembles other international environments, such as global finance and consumer goods production, in following an ad-hoc multistakeholder process (Raymond and DeNardis, 2015), but also in incorporating norm-setting to restrain or direct private action. Treating deplatforming as an aberration from legal rules or a one-sided exercise of private power neglects the importance of these multistakeholder processes in influencing who and what is a target of deplatforming and in responding to the absence of other policy or regulatory frameworks.

Acknowledgements

I would like to thank the University of Leicester, Toronto Metropolitan University, Canada’s Social Sciences and Humanities Research Council, the Michael Smith Foreign Study Supplement, and Mitacs Globalink for funding this research.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Social Sciences and Humanities Research Council of Canada (Grant no. 752-2018-2077).