Abstract

Voice assistants (VAs) like Alexa have been integrated into hundreds of millions of homes, despite persistent public distrust of Amazon. The current literature explains this trend by examining users’ limited knowledge of, concern about, or even resignation to surveillance. Through in-depth, semi-structured interviews (n = 16), we explore how young adult Alexa users make sense of continuing to use the VA while generally distrusting Amazon. We identify three strategies that participants use to manage distrust: separating the VA from the company through anthropomorphism, expressing digital resignation, and occasionally taking action, like moving Alexa or even unplugging it. We argue that these individual-level strategies allow users to manage their concerns about Alexa and integrate the VA into domestic life. We conclude by discussing the implications these individual choices have for personal privacy and the rapid expansion of surveillance technologies into intimate life.

Introduction

While discussions about artificial intelligence (AI) in the home often gesture toward the future, AI is already deeply entwined with domestic life. With over 200 million devices sold worldwide (Rubin, 2020), Alexa, Amazon’s voice assistant (VA), is the world’s most popular type of household AI. Alexa is portrayed as human-like and often sympathetic in commercials (Amazon, 2021), on social media (Wang, 2020), and in the news (Larson, 2016). At the same time, there is increasing concern among users and non-users about Alexa ‘listening’ (Lau et al., 2018) and general public anxiety regarding surveillance by large technology companies (Auxier et al., 2019).

In this article, we use the example of Alexa to investigate the ways in which consumers navigate uncertainties inherent in their interactions with VAs. Launched in 2014, Alexa was the first smart home VA in a market that Amazon still dominates (Turow, 2021), with a 69% share in the U.S. (Bishop, 2021). Amazon is leading the expansion of smart home technologies, thereby creating an infrastructural network of surveillance through products like Ring, its video doorbell and home security system (Bridges, 2021). While advertisements promote a fantasy of the domestic sphere as an oasis from market forces (Strengers and Kennedy, 2020), the collision between public and private is inevitable when VAs usher large companies into the home (Goulden, 2021), allowing them to advertise their products and potentially sell data to third parties (Iqbal et al., 2022; Maalsen and Sadowski, 2019).

Existing research has highlighted consumers’ lack of trust in smart technologies (Cannizzaro et al., 2020) and VAs in particular (Olson and Kemery, 2019). This lack of trust is well-founded, given that high-tech firms have long been secretive about the way they treat user data. In the cases that have received journalistic attention, it is clear that highly personal data has been used for the financial gain of technology companies—often to the detriment of users (Véliz, 2021). In 2019, 79% of Americans expressed concern that tech companies could be misusing consumer data (Auxier et al., 2019). Despite this clear public distrust, sales of VAs are increasing (Kinsella, 2022).

This research sets out to understand this puzzling combination of widespread distrust and widespread adoption. We find that some users may distrust tech companies deeply, while nevertheless integrating VAs into their daily lives. Drawing on in-depth, semi-structured interviews with Alexa users from the United States (n = 16), we observe that users managed Alexa’s intrusive and unsettling presence in the domestic sphere using three strategies that are both accommodating and resistant: anthropomorphism, ‘digital resignation’, and taking action.

Theoretical framework

Technology and privacy in the home

Trust is a central concern in the literature on the interactions between humans and domestic technologies. Large technology companies, including Amazon and Google, leaders in the US VA market, have purposefully nurtured information asymmetries between themselves and their customers, obscuring details about how they use customer data (Turow, 2021; Véliz, 2021). Perhaps as a result of this, these companies now face high levels of public distrust (Auxier et al., 2019). Lawsuits centering on Alexa ‘listening’ (Corkery, 2021), reports of employees secretly transcribing recordings (Valinsky, 2019), and discrepancies between Amazon’s privacy policy and its actual practices (Iqbal et al., 2022) indicate that this distrust is well-founded. The increase in use alongside distrust gives rise to what some scholars have called a ‘privacy paradox’ (Norberg et al., 2007), that is, the observation that consumers report misgivings about privacy violations while continuing to disclose large amounts of personal data. Of course, what is considered a violation of privacy in the first place is dependent on social norms, legal precedent, and the divisions between the private and public spheres – what Nissenbaum (2004) calls privacy as ‘contextual integrity’. In our paper, we focus on privacy violations as understood in a contemporary Western, and more specifically US, context. In this section, we will highlight previous research on the tradeoffs that individuals make around privacy, data collection, and surveillance by large technology firms.

First, individuals may be aware of the costs to their privacy but be willing to trade this off against the promised benefits of digital technologies. Neff and Nafus (2016) observe that many users of self-tracking devices allow companies to collect large amounts of personal data in return for personalized insights, such as the ability to keep track of workout statistics. Similarly, West (2019: 29) argues that ‘Amazon’s desire and capability to watch and listen to its customers is increasingly presented as a feature, rather than a bug, even to consumers themselves’. Amazon uses the promise of greater safety and convenience to get into users’ homes, collecting huge amounts of personal data in the process. Smart homes may also be a part of what Gilliard and Golumbia (2021) call ‘luxury surveillance’ wherein through devices like Apple Watches, the rich can opt into monitoring that is otherwise imposed on others. Though Amazon products like the Echo Dot are relatively affordable, smart home technology still carries an association with luxury (Spigel, 2005), which may lead users to reframe surveillance as a benefit.

Other studies argue that VA users are simply misinformed about how their data is stored, collected, and sold. In a comparative study of users and non-users, Lau and colleagues (2018) found that while non-users expressed privacy concerns about surveillance, users had fewer worries. Both Lau et al. (2018) and Gruber et al. (2021) found that most VA users had a limited understanding of the actual privacy risks and did not understand how their VAs stored data. This lack of awareness may have contributed to their willingness to adopt their VAs. While lack of awareness may certainly play a role in decisions around privacy, accounts that foreground it as the primary explanation risk neglecting the multi-faceted, contextual nature of the decisions that individuals make around privacy.

Draper and Turow’s (2019) concept of ‘digital resignation’ provides a compelling alternative to a more simplistic ‘privacy paradox’ framework. They argue that when surveillance by large corporations seems inevitable, resignation is a rational response. In contrast to the cost-benefit calculation outlined in ‘trade off’ explanations, individuals subject to ‘digital resignation’ feel that they do not have a choice, and their adoption of digital devices, including VAs, does not indicate their broader approval of ‘surveillance capitalism’ (Zuboff, 2019). While digital resignation is an individual-level response, it is cultivated on the institutional level by large corporations, which benefit from consumers’ feelings of futility. Rincón et al. (2021) found some evidence supporting this, as most of their study participants reported ‘immense distrust’ of Amazon and Google, despite frequently using their devices. Similarly, Vitak et al. (2023) observed signs of resignation among focus group participants, but also found that users made different risk calculations based on perceived levels of invasiveness – their responses were not one size fits all.

All three explanations underemphasize the role of trust as a complex social process. Trust has been defined in multiple ways. The narrowest definitions see trust as reliance on the performance or functionality of a device. That is, if ‘I trust this VA to do its job’, I rely on the VA to decipher my voice instructions and act upon them within the confines of its capabilities (see Kerasidou, 2017, for a detailed discussion of the differences between trust and reliance). Others offer a broader definition of trust, which holds that trusting relationships generally share three common characteristics: (1) the trusting person’s vulnerability, (2) the voluntariness of their choice to put trust in the relationship, and (3) the trusting person’s expectation of good will, that is, that the entity they put their trust into will act in a way that is conducive to their welfare (Kerasidou, 2017; Schilke et al., 2021). This definition sees trust itself as an act of vulnerability – the act of trusting an entity, be it a person, company, or machine, puts an individual at risk of negative consequences if their trust is violated.

The other two characteristics of this definition, voluntariness and good will, are complicated when individuals are faced with making choices about whether or not to trust potentially untrustworthy sources – what Hamill and colleagues (2019) call ‘trusting the untrustworthy’. When a source is perceived as untrustworthy, individuals must make a different kind of choice. Voluntariness becomes dependent on the necessity of the relationship (e.g., a doctor providing essential services) and good will may be hoped for rather than expected – however, individuals still make decisions to trust under less than ideal circumstances. Such decisions are often discussed in relation to situations such as potentially faulty medicine (Hamill et al., 2019) or relationships formed in secretive environments (Gambetta, 2011). We argue that the relationships between consumers and VAs, albeit with much lower stakes, may also be an example of individuals making complex decisions about their trust in potentially untrustworthy actors.

Closely related to ‘trusting the untrustworthy’ is distrust, which can be defined as a lack of confidence in an agent, the assumption that an agent might cause an individual harm, or the general failure to establish a trusting relationship (Cook and Santana, 2020; Grovier, 1994 in Kramer, 1999). While distrust is often framed as negative and socially corrosive, Cook and Santana (2020) argue that, with respect to institutions, distrust can help to moderate power. The literature points to a general distrust of big technology companies and their approach to data collection. Steedman et al. (2020) observe in their study of individual attitudes towards data collection by a large news organization that the process of trusting an institution under these conditions is often messy and contradictory – individuals form ‘complex ecologies of trust’ rather than simply trusting or distrusting a company and all its products.

We are interested in how these sociological perspectives on trust and distrust are reflected in users’ relationships to their VAs. By collecting large quantities of interpersonal data that make individuals more observable to large technology companies (Goulden et al., 2018), VAs give rise to privacy risks. Users adopting VAs make themselves more vulnerable to data breaches. VAs are far from ubiquitous and their adoption is, ultimately, voluntary. Eschewing a VA does not yet result in the non-user’s inability to carry on with their daily lives in a way that not using a smartphone does. 1 This paper therefore focuses on the component of trust that is the least obvious in our context: it will explore how the expectation of good will features in human-VA interactions, as well as the ways in which individuals make complex decisions about trust under less than ideal circumstances.

Anthropomorphism and trust of voice assistants

People have little trust in the large technology companies (Auxier et al., 2019) and the companies are keenly aware of the trust problem they face. As Foehr and Germelmann (2020) argue, anthropomorphism can be one pathway to trust for individuals who use VAs, and technology companies have certainly embraced anthropomorphic design. Scholars have argued that companies like Amazon, Google, and Apple have attempted to make VAs appear more trustworthy by humanizing (and often feminizing) them (Humphrey and Chesher, 2021). Strengers and Kennedy (2020) coined the term ‘Big Mother’, which refers to the way in which technology companies create a regime of surveillance and data capture within the home through the promise of feminized, maternal care (Sadowski et al., 2021). Turow (2021) calls this process ‘seductive surveillance’ and Lingel and Crawford (2020) argue that Alexa’s construction as a feminized secretary offers a false sense of security, soothing users’ potential anxieties about surveillance.

Humanizing VAs is an effective strategy for Amazon and other companies, and an essential step for users to imagine good will in their relationships with VAs. Pitardi and Marriott (2021) found that users who heavily anthropomorphized VAs were more likely to trust them. Additionally, they found that most participants separated the company from the machine and blamed many of their anxieties on the former, a dynamic that we explore further in our analysis. Foehr and Germelmann (2020) identified consumers’ perceptions about the personality of the technology’s voice interface as one of four paths to user trust. In a study of user reviews, Purington et al. (2017) found that anthropomorphism significantly predicted user satisfaction (though not necessarily trust), and Brause and Blank (2020) observed that some participants used their VAs for companionship, potentially indicating increased levels of trust. However, anthropomorphizing a VA does not necessarily indicate trust in and of itself, and it could simply be the users’ response to the communicative logic of the device, a pattern that has been explored by many social scientists.

Epley and colleagues’ (2007) widely cited three-factor theory of anthropomorphism provides a framework for the concept and argues that people often anthropomorphize non-human agents as a way to find and impose structure amidst uncertainty. The phenomenon is typically viewed in the literature from one of two perspectives: (a) a psychological perspective, focusing on individual psychological states (e.g., Epley et al., 2007; Li and Sung, 2021; Pitardi and Marriott, 2021) or (b) a more structural perspective, where authors analyze how companies maintain gendered, racialized design norms, but without investigating individual experiences (e.g., Lingel and Crawford, 2020; Phan, 2019; Woods, 2018). In this paper, we examine anthropomorphism as an individual-level sociological process that connects with the broader question of how people manage uncertainty and distrust of large companies in the home. Anthropomorphism of VAs may be a way of dealing with tech-related uncertainty and distrust in the home; while not synonymous with trust, it might be a pathway to trust (Foehr and Germelmann, 2020), or a way of managing distrust.

Our approach allows us to make better sense of how individuals relate to VAs by accounting for the messy, often contradictory ways in which individuals trust (and distrust) institutions (Steedman et al., 2020). Building on existing literature that links anthropomorphizing technology and user trust (Foehr and Germelmann, 2020), we sought to answer the following research questions:

1. How and why do users anthropomorphize Alexa?

2. What is anthropomorphism’s relationship to trust?

3. How do users see the relationship between Alexa and Amazon?

Through in-depth, semi-structured interviews, we find that anthropomorphizing helps users separate Alexa, a potential agent of goodwill, from Amazon, a company which they distrust.

Methods

This article draws on 9 months of fieldwork (November 2020–July 2021) conducted online by the first author, with data collected via semi-structured interviews with 16 Amazon Alexa users. 2 From November through December 2020, the first author conducted a series of informal pilot interviews with Alexa users recruited through social media. These conversations are not included in the sample, but rather informed the direction of study and the eventual interview guide.

We pursued a purposive sampling strategy (see Online Appendix A), choosing to focus on childless individuals in their early 20s to early 30s. There is significant literature on the unique relationships formed by older adults (e.g., Duque et al., 2021; Pradhan et al., 2019) and young children (e.g., Sakr, 2021; Turkle et al., 2006) with household AI, as well as the complex negotiations that parents undergo regarding data collection and privacy as it relates to their children (Barassi, 2020a, 2020b). Although VAs are more popular with young people (Auxier et al., 2019), many of the interview studies cited here have samples that skew older; more research on younger users is necessary. In order to explore this population and avoid the complicated territory of how children and older adults interface with household AI, we chose to focus on young, childless adults. Finally, we focused on users in the United States, which has the highest VA market share (Statista Research Department, 2020). Focusing on one country context was appropriate given the context-sensitive nature of the way privacy is understood.

Participants were recruited via social media, with snowball sampling employed once several points of entry were obtained. The final sample (n = 16) consisted of young adults, ages 22–31, seven men and nine women (see Online Appendix A). The first author conducted interviews until saturation was reached, that is, until the same themes began to appear again and again in response to the interview questions. Participants were heterogeneous in terms of occupation and fairly homogeneous in terms of race, with 12 white and four Asian participants. Several participants held tech- or IT-related jobs, and participants had differing levels of technical understanding of their devices. All participants held at least a college degree, which is relevant as a large-scale survey of privacy attitudes indicated that individuals with a bachelor’s degree are more likely to be concerned about surveillance than those with lower levels of educational attainment (Auxier et al., 2019). Most participants obtained their first Alexa device somewhat unintentionally, for example as a gift from a family member or through an Amazon promotion. While all participants had incorporated Alexa into their daily lives, they ranged from hesitant users who only tolerated the VA due to a partner, to smart home aficionados. The homogeneity of education, race, and age in our sample, though a limitation in some respects, 3 also helped us draw comparisons regarding gender, occupation, and technical knowledge of Alexa.

Interviews were conducted virtually from March to July 2021. Given that VAs require Internet connectivity, virtual interviews were not an access issue for this population, and this allowed us to speak to participants across the United States. The interview schedule was developed partly through observing which themes organically came up when asking broad, open-ended questions in the pilots, such as ‘How do you feel about Alexa?’ or ‘How do you use Alexa in your daily life?’ We drew on Gerson and Damaske’s (2020) tactic of using broad, general questions paired with more specific probes, which we organized around themes that came up in the literature review and during the pilots, such as anthropomorphism and data collection (see Online Appendix B). Interviews ranged from 30 min to 2.5 h, averaging 1 h. We used a grounded theory approach to analyze the data (Corbin and Strauss, 2008). The first author coded transcripts and memos in NVivo, beginning with an exploratory round of coding to identify themes and recurring images and writing detailed memos throughout the coding process. After the exploratory round of coding, they returned to the literature and identified a smaller, more specific set of codes that focused on how individuals managed distrust. Three main codes were identified, each with several sub-codes. Finally, the first author conducted a second round of coding with the finalized codebook.

Challenges in operationalizing anthropomorphism

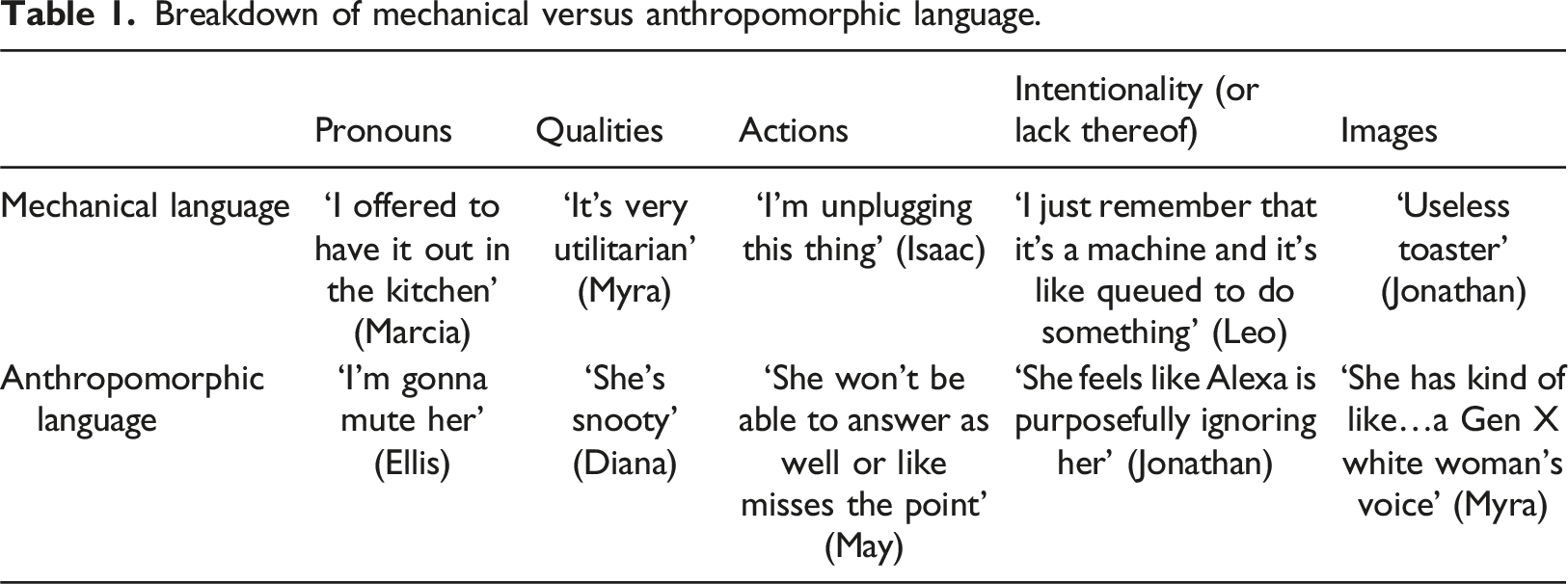

During the pilot interviews, anthropomorphism emerged as a frequent theme, and it became clear that we needed a systematic method of observation. Operationalizing anthropomorphism is challenging (Bartneck et al., 2009). Some researchers operationalize anthropomorphism or ‘personification’ as using gendered pronouns to refer to the technology (Lopatovska et al., 2018; Purington et al., 2017). However, this assumes that pronoun use is both stable and consistent with other potential indicators, while evidence from interview studies shows unstable pronoun use, even when users report ‘personalizing’ the VA (Rincón et al., 2021; Skjuve et al., 2021). While the use of gendered pronouns does not necessarily indicate anthropomorphism, we argue that it is one aspect of anthropomorphic language, which we have outlined below. Survey questions and scales are also common; a widely used psychological scale for measuring anthropomorphism includes questions such as ‘The agent has free will’ and ‘The agent has consciousness’ (Waytz et al., 2010). However, Gruber and colleagues (2021) note that surveys can present challenges for understanding how people use and relate to technology. Indeed, in our pilot interviews, we observed a more complicated picture of anthropomorphism, with participants displaying unstable pronoun use and anthropomorphism despite being aware that the device lacked emotions or consciousness. Additionally, no studies to our knowledge have looked specifically at when and how individuals do not anthropomorphize, using mechanical language instead (e.g., ‘machine’ or ‘computer’).

Breakdown of mechanical versus anthropomorphic language.

Results

Participants used three strategies to manage their distrust of Amazon while continuing to use Alexa. First, many anthropomorphized Alexa and established a ‘relationship’ with it, thus separating it conceptually from the impersonal and untrustworthy Amazon. Second, a number of interviewees showed signs of digital resignation. Finally, individuals set certain personal boundaries regarding Alexa and took action to contain or even unplug the VA when those boundaries were violated.

‘She’s not listening, they are’: Anthropomorphism

All participants anthropomorphized Alexa to some extent. As detailed above, we coded for instances of mechanical and anthropomorphic language (see Table 1 for a breakdown) and found that all participants used a mix of human and mechanical language, sometimes within the same sentence. Across interviews, participants tended to use mechanical language when discussing distrust of Amazon and anxieties about data collection, and anthropomorphic language when talking about domestic frustrations and daily life.

Our findings highlight a fundamental lack of trust in Amazon among the participants. Nine participants strongly disliked Amazon, six had ‘complicated’ or ‘mixed’ feelings about the company, and only one expressed uncomplicated positive feelings. Some noted that despite owning Alexa, they tried not to shop on Amazon, with one participant claiming that it made her feel ‘grimy’ (Myra). Nearly all admitted not knowing much about Amazon’s data practices and expressed some concern about how the company was using their data. Participants often separated Amazon from Alexa by anthropomorphizing the VA and employing mechanical language when talking about their distrust of the company.

The image of ‘Alexa listening’ was a salient example. All participants mentioned this concern. However, most references to listening either framed Amazon as the listener (‘they’re listening’) or used object pronouns to talk about Alexa (‘it’s listening’). Object pronouns were used to discuss ‘listening’ even when the participant had previously used gendered pronouns to refer to Alexa. Tanya stated, ‘I feel like it’s listening. Like, even when they’re like, “Oh no, you have to activate it,” it’s definitely spying for sure’. Even when ‘it’ pronouns were used, participants still invoked Amazon as the source of distrust.

Many participants talked about Amazon directly, referring to ‘them’. Hao stated, ‘it’s always, like, kind of there at the back of my mind. Kind of like, you know, when I’m talking to Alexa, Jeff Bezos kind of, like, listens in’. Louis used mechanical language, remarking, ‘it would shock me tremendously if every single microphone that I had in my house is not constantly recording me and trawling for any sort of data that they can sell’. In these instances, the emphasis was not on Alexa as a human-like entity listening, but on either Alexa’s mechanical qualities, Amazon the company, or both. Importantly, most instances of ‘them’ or ‘it’ listening were characterized by marked distrust.

When participants used anthropomorphic language to separate Amazon from Alexa, they talked about their affection for ‘her’—even if they had recently expressed distrust of Amazon. Kristin spoke about Alexa as a human-like companion: ‘I secretly love it…I ask her about the weather, and, like, I literally refer to her as “her” and…she’ll say, “have a good day, [Kristin]”…like sometimes I will say, “you too, Alexa”’.

Just as anthropomorphism proved unstable among the interviewees, even when they clearly inscribed some human-like qualities onto Alexa, the separation between Amazon and Alexa created a clear tension for some participants. When asked whether she thought about Amazon in relation to Alexa, May replied, ‘I just kind of think of her as her own thing, but maybe...I need to?’ However, across the interviews a clear pattern of anthropomorphism as a way to separate Amazon from Alexa persisted, summed up explicitly by Jonathan:

Jonathan: So it’s, like, in this household we hate Jeff Bezos, but we separate Alexa from Jeff Bezos….

Interviewer: Ah, okay. [Both laugh.] That makes a lot of sense.

Jonathan: It’s not her fault.

‘I feel like it’s in vain to resist’: Digital resignation

We found no evidence that participants were wholly unconcerned about Amazon’s data collection practices, as suggested by some previous studies, but digital resignation (Draper and Turow, 2019) was a recurring response. First, interviewees discussed corporations’ data collection activities as both certain and ubiquitous. Second, many initially proclaimed a lack of concern but later contradicted themselves, expressing fears and doubts. Finally, all participants but one agreed that they did not approve of Amazon collecting data but they saw no other option and did not consider Alexa per se to be the primary problem.

Many participants spoke with absolute certainty of Amazon ‘listening’ through Alexa, with phrases like ‘definitely’, ‘obviously’, ‘certainly’, and ‘for sure’. Jo summarized this sentiment well: ‘people who are like, “oh my god, they’re listening!” I’m like, “well, we know this”’. There was also a clear sense of the ubiquity of data collection today extending far beyond just Amazon: ‘It’s everywhere’, Jonathan stated, ‘it’s owned, bought, sold, traded, bid for. That seems very obvious’. Some participants expressed distrust of other devices, particularly their phones, as well as other companies besides Amazon. Lucia remarked, ‘I’m pretty sure my phone is doing everything also…like Apple and Amazon…I imagine they’re both ruthless’. There was a sense among many participants that Amazon was certainly collecting their data for nefarious purposes, but so were many other companies – the problem was everywhere, not just limited to Alexa.

Some participants followed these statements with a proclaimed lack of concern (‘it doesn’t bother me’, said Myra), but upon deeper probing, most revealed not just anxiety but a feeling of futility regarding a system they felt was deeply entrenched. Lucia made clear her dislike and distrust of both Apple and Amazon. It was not that she didn’t worry or care about data collection, rather that ‘I feel like it’s in vain to resist’. Ellis, who had worked for large tech companies, admitted, ‘I don’t dive into privacy policies too much because, you know, I am pessimistic’. Several other participants worked in tech-adjacent fields, and they too expressed a general pessimism around the inevitability of data collection. Tanya expressed a lack of options, stating, ‘it kind of feels like you either agree to it, or you don’t, and your only option if you don’t is you get rid of it’. However, one of the potential reasons why participants didn’t just ‘get rid of it’ was a broader sense of resignation: why get rid of Alexa, if that data would just be collected anyway? As Myra observed, ‘I know that technically, like, she listens and takes data from me, but so does my phone, so does my TV, so does everything’.

For our participants, Alexa did not feel like the source of the problem, nor was the feeling of resignation limited solely to Amazon. That is, Alexa as an entity in the home did not appear to be the object of their distrust; rather, the blame was shifted to tech companies and the general conditions of surveillance capitalism in the United States. As Jonathan said, ‘she [Alexa] doesn’t feel particularly a problem. That’s really it. She’s just another arm of a general problem’. Here, Alexa acted as the ‘arm’ – the human-like, but relatively blameless extension of the actions of tech companies.

‘The last straw’: Taking action, drawing lines

The third way we observed our participants managing distrust was by taking action and drawing personal lines around their use of Alexa. Participants kept Alexa out of specific rooms, drew lines regarding the tasks they would allow Alexa to perform, unplugged, and in one case stopped using the VA altogether. Through these actions, participants coped with their lack of trust in Amazon by managing Alexa’s role in the home.

One strategy involved deliberately keeping Alexa out of specific rooms. These choices were characterized by distrust, or a more general desire to keep the VA away from more intimate parts of their lives. Three participants noted that they would not want Alexa in the bathroom or shower. Tanya reflected, ‘I kept thinking about getting another Alexa for the bathroom. I was like, “is that too much? Is that too intimate?”’ Marcia’s roommates prevented her from putting Alexa in their shared kitchen. Referring to this decision, Marcia said, ‘everybody in the house is like, “no, like, that’s creepy, like, I don’t want it listening in the kitchen”’. Notably, the objection was specific to Alexa, and did not reveal a general distrust of household AI – the roommates also owned a yeedi, a robot vacuum, which they allowed to roam throughout their home collecting some personal data (yeedi, 2020), but notably not ‘listening’.

Most participants drew lines around the tasks they saw as ‘too intimate’ for Alexa to perform. Participants were asked open-ended questions about things they would and would not want Alexa to do, regardless of what the VA is currently capable of. Many of these tasks, both actual and imagined, involved physical contact, emotions, relationships, and personal decision-making. These lines were often contradictory and malleable. For example, Jonathan told us:

I don’t want Alexa to, like, give me a massage, that feels weird. But if Alexa could turn on my massage chair…if I had one, that would be okay. Like, I wouldn’t want them to give Alexa arms…unless you put the coffee in the coffee machine! Then it’s different!

Though Jonathan spoke partly in jest, it was clear from his discomfort that intimate tasks like touching would be a step too far (at least presently). This reflected a clear desire among some participants to keep Alexa (and Amazon) separate from certain areas of intimate life.

Unplugging Alexa due to privacy concerns was a rare occurrence; only three participants reported ever having done so. Michelle’s experience indicates that this action was done out of distrust and uncertainty about the VA’s presence in her home:

Michelle: We were having a hard patch in our relationship at one point and I didn’t feel comfortable talking about that with Echo on. And that was the only time that we really did that.

Interviewer: What was the motivation for not wanting Echo on?

Michelle: For some reason that was really in the forefront of my mind, like, “can we just turn it off?” I couldn’t tell you why, but it was there and it was a presence.

Although Michelle couldn’t articulate a precise reason for switching off Alexa, her indication that it was a ‘presence’ during this tough conversation could highlight anxiety about both Alexa being ‘too human-like’ and the lack of information she possessed about what would be done with any (highly personal) data collected in that moment. This small action was a means by which she managed this uncertainty.

Because our participants were all frequent Alexa users, quitting the device was not something we expected to observe. However, Isaac had, just prior to the interview, switched from Alexa to Apple HomePod. He stopped using Alexa when it served him an Amazon ad. Of the decision, he said, ‘I was thinking about switching anyway and now that was just what I pointed at…like, that’s the last straw, like, I’m unplugging this thing’. With this ad, Alexa crossed a line, providing evidence that Amazon was using the VA to promote its own interests. Similarly, Leo recounted a moment when he considered removing Alexa from his home, after it suggested an Amazon-related feature: ‘I remember once very clearly that it said, “Hey, did you know I can get”, and I was like, that’s crossing a line. You don’t talk to me. No, no, no, no’. When prompted about why it felt like Alexa crossed a line, Leo responded, ‘I don’t want ads. Not in my house… if it can sit there and listen to me, that’s fine... If it started doing that more often, that would be the end of it’.

Leo was willing to take action when Alexa introduced clear reminders of Amazon into the home, via encouragements to make purchases within the Amazon marketplace. His attitude implies a feeling that the home should represent an oasis from Amazon’s advertising efforts. Threatening to remove the device was a way to manage his distrust of the company, even if he did not end up following through.

Discussion

The specter of ‘Alexa listening’ presents an interesting sociological puzzle. The public is clearly concerned about data collection, yet demand for VAs continues to rise. In this paper, we argue for the relevance of a broader concept of trust that relies on individuals expecting good will from an entity they choose to trust. Our findings suggest that some individuals may manage distrust of Amazon by anthropomorphizing, converting the VA into a figure that is ‘trustworthy enough’. Managing distrust of Amazon and creating a (somewhat) trusting relationship with Alexa were interrelated processes. We describe three ways in which our interviewees managed what they perceived as untrustworthy tech within the home: resigning themselves to the potential risks, establishing a ‘relationship’, and taking steps to draw boundaries. Individuals mixed these approaches, changing between them depending on the situation. All our interviewees anthropomorphized Alexa some of the time, and this humanization of Alexa enabled some of them to talk about it as a possible partner that they would not automatically distrust.

Draper and Turow (2019) argue that the puzzle of widespread technology adoption against a background of low trust can be understood through digital resignation. Among our participants, there was a sense that data collection – not just by Amazon but by large corporations generally – was inevitable and ubiquitous. Draper and Turow drew on Fisher’s (2009) idea of ‘capitalist realism’ to argue that part of what makes the corporate cultivation of digital resignation so effective is its ability to make consumers feel that they have no other choice. In line with this argument, our interviewees showed signs of digital resignation when they talked about data collection as both obvious (‘we know this’) and unavoidable (‘in vain to resist’).

Of course, resignation is not the same as indifference; it is a rational response to the feelings of futility cultivated by Amazon and other corporations. Some scholars have argued that VA users lack significant privacy concerns (Lau et al., 2018), don’t fully understand the privacy risks they face (Gruber et al., 2021), or view surveillance as a ‘service’ (West, 2019). In contrast, we find that our participants distrust Amazon and display a general pessimism around the safety of their personal data. Several of our participants worked in or adjacent to the technology and/or advertising sector; this may have added to their sense of pessimism around data collection’s inevitability. Some feel digital resignation, which we argue is an accommodating strategy; it allows users to live with uncertainty and takes distrust of technology firms like Amazon as a given. Resignation, however, was not the only way to cope.

More often, our interviewees turned to anthropomorphism to separate Alexa, the VA, from Amazon, the company they distrusted and often deeply disliked. The fact that anthropomorphic design encourages trust among VA users is well-documented in the literature (e.g., Foehr and Germelmann, 2020; Pitardi and Marriott, 2021; Seymour and Van Kleek, 2021). While Amazon encourages users to think of Alexa as human-like and relatable, our interviewees did not have any doubts that Alexa was a machine. However, they often used anthropomorphic language, and talked affectionately about the VA in its domestic capacities, while switching to mechanical language when talking about Amazon (and particularly their privacy concerns).

Contrary to previous studies, many of the most salient observations about anthropomorphism came from the use of mechanical language. Alexa became a machine when associated with Amazon (‘it’s listening’) and anthropomorphic when disconnected from the company (‘it’s not her fault’). The latter quote highlights, if not good will, at least the lack of attribution of ‘bad will’ to Alexa, in contrast to Amazon. In cases of both anthropomorphic and mechanical language, anthropomorphism worked as a protective layer – a way for individuals to distance the VA from the company and construct a fiction of Alexa’s good nature. Participants varied in how much they constructed this layer, but the moments when they were reminded of Amazon were often characterized by mechanical language and distrust. Research on individuals trusting potentially untrustworthy medicines shows that relationship-building is a key mechanism that helps individuals solve, or at least alleviate, a pressing trust problem, arguably through a belief that someone they have a relationship with will be more likely to be favorably disposed towards them (Hamill et al., 2019). Anthropomorphizing Alexa helped our participants imagine a ‘relationship’ with the VA, thus allowing themselves to trust in an absence of ‘bad will’ on Alexa’s behalf, even if this trust, like our participants’ anthropomorphism, was unstable. The instability of this perceived relationship was key; when participants distrusted the VA or talked openly about Amazon’s potential data collection, they spoke about Alexa as a machine, rather than an anthropomorphic ‘presence’ in their home.

We argue that in a low-trust context defined by stark information asymmetry, anthropomorphism acts as a tool to help individuals manage their distrust of the VA as it becomes increasingly entwined in intimate life. Unlike other low-trust contexts studied by sociologists, such as patients choosing to use medicine that could be fake (Hamill et al., 2019) or criminals needing to cooperate (Gambetta, 2011), our participants did not face illness or prison as a potential consequence of misplaced trust. Many said that they had ‘nothing to hide’ or ‘nothing to fear’ from Alexa collecting data. However, they still perceived a cost to their choices, expressing significant disillusionment with the broader system of surveillance capitalism when pressed. To ‘trust’ the potentially ‘untrustworthy’ device enough to let it into their homes, our interviewees reimagined their VA as an anthropomorphic entity that one could persuade to be trustworthy enough by building a relationship with it. Like resignation, this was an accommodating strategy; participants reshaped distrust of Amazon rather than confronting it directly. And like resignation, this strategy appears to be reinforced by Amazon, through gendered, anthropomorphic design (Lingel and Crawford, 2020; Strengers and Kennedy, 2020; Sweeney, 2021).

Finally, when accommodating strategies would not suffice, some individuals engaged in ‘privacy-guarding’ behaviors (see Draper and Turow, 2019) by banning Alexa from certain rooms, choosing not to use it for specific tasks, or unplugging it. When participants restricted Alexa, they often used mechanical language. Echoing other studies that find privacy-guarding behaviors to be rare (e.g., Lutz and Newlands, 2021), we observed few instances of this. Michelle’s insistence that her partner ‘turn off’ the VA during an argument was an interesting but uncommon example; action was taken only in a vulnerable moment of domestic intimacy. In Marcia’s case, the choice to ban Alexa from the kitchen was made at the request of others. Moreover, the boundaries our interviewees set in their interactions with Alexa were often inconsistent, malleable, and/or contradictory. Except for Isaac’s decision to get rid of Alexa, even these resistant strategies were ultimately ways to manage distrust and continue using the VA.

We have argued here that trust is not a simple barrier to use for individuals who have incorporated Alexa into their homes. Rather, our participants mostly disliked and distrusted Amazon, while nevertheless using Alexa. Sometimes our interviewees felt resigned, certain that if Alexa didn’t collect their data, another device surely would. More often they resorted to anthropomorphism to separate the company from the VA, deeming Alexa ‘trustworthy enough’ while continuing to distrust Amazon. These individual-level responses are reinforced by the company’s actions, namely Amazon’s anthropomorphic design and advertising and its opaque, shifting policies regarding personal data. While these strategies should be tested on larger, generalizable datasets of Alexa users, they offer a potential explanation to our initial sociological puzzle: why do sales of VAs continue to soar despite the clear public distrust of big tech companies?

These strategies also raise important concerns. Most of our participants took steps to make themselves more comfortable with Alexa’s ‘listening’, which helped them cope with their distaste for Amazon’s labor practices, environmental destruction, and corporate greed. But resigning themselves to surveillance, anthropomorphizing Alexa, or taking individual actions to manage distrust obviously did little to reduce Amazon’s profits or its broader expansion into intimate life. Greater user comfort with ‘Alexa listening’ could result in a lack of appetite to take substantive steps both to protect privacy on the individual level and to imagine any kind of collective resistance to the severe human and environmental toll associated with the production of the device itself (Crawford and Joler, 2018). Yet, as Steedman and colleagues (2020: 829) write, ‘changes are needed at the macro level of the data ecosystem in order to engender greater trust, not in individual institutions or in relation to individual users’. It is difficult to imagine such macro-level changes taking place without greater collective resistance.

Limitations and future research

There are several limitations to this study, many reflecting opportunities for future research. First, the sample size was small; however, as Hennink and Kaiser (2022) surmise from an empirical review of the literature, small qualitative samples can be successful at achieving saturation, especially when the aims of the study are focused. Another limitation was that our sample was young, college-educated, and predominately white. The first author, who conducted recruitment, fits this demographic, which likely affected who responded to the recruitment posts and which networks they were circulated among. Subsequent snowball sampling may have perpetuated this. Given recent critiques of Alexa’s ‘default whiteness’ (e.g., Phan, 2019) as well as the unique challenges faced by Black users (e.g., Harrington et al., 2022), further research should focus on how people of color trust and/or distrust Alexa. Single individuals who lived alone were also underrepresented, which could have impacted how participants talked about Alexa given the social nature of anthropomorphism (Epley et al., 2007).

Several of the study participants lived with individuals who disliked Alexa and sometimes refused to interact with the VA. Few of these individuals were willing to speak with us. However, further investigation into the experiences of these reluctant users could provide an interesting case study of how personal lines are drawn and negotiated within the home. Though this sample was limited to childless interviewees, further research could focus on how the presence of children in the home affects how parents relate to Alexa, following work by Barassi (2020a, 2020b).

Given that this is a small, limited sample, further qualitative and quantitative research is necessary. Online ethnographies could explore user forums, Facebook groups, and subreddits. Content analysis of ads, news articles, and Amazon’s public-facing materials could examine media coverage and Amazon’s own portrayal of Alexa. Finally, an analysis of generalizable country-level datasets with questions about trust in technology, attitudes towards VAs, and feelings about large companies like Amazon is a crucial step in testing and triangulating the theory we have outlined.

Conclusion

Amazon is currently expanding Alexa’s functionality into many more segments of the private sphere. The company provides integrations with over 100,000 different brands of household devices like smart ovens and coffeemakers (Statista Research Department, 2021), offers eldercare features to check in on aging parents (Amazon, 2022), and can even define familial relations through the affordances of its household membership structures (Goulden, 2021). With its Ring Security System, Amazon continues to push the limits of home surveillance (Bridges, 2021). In this paper, we outlined some of the strategies that individuals use to manage their distrust of Amazon, via their interactions with Alexa. If one necessary component of trust is good will (Kerasidou, 2017; Schilke et al., 2021), anthropomorphism may be one way that trust is formed under less-than-ideal circumstances. Unfortunately, the individual strategies that we have identified, whether accommodating or resistant, will not provide meaningful answers to the problems of surveillance capitalism; rather, they will simply continue to facilitate Amazon’s rapid expansion into intimate life.

Supplemental Material

Supplemental Material for It’s not her fault: Trust through anthropomorphism among young adult Amazon Alexa users by Elizabeth Fetterolf and Ekaterina Hertog in Convergence.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study is supported by European Social Fund; ES/T007265/1

Supplemental Material

Supplemental material for this article is available online.

Notes

Author biographies

project that scopes new technologies’ potential to free up time now locked into unpaid domestic labor and measures how willing people are to introduce these technologies into their private lives.

References
