Abstract

The paper analyzes social interactions among crowdworkers on Discord. The crowdtesting platform under study uses this social media platform to offer its crowdworkers various opportunities for work-related and private communication and to host events that encourage learning practices. The paper investigates to what extent the interactions on Discord can be analyzed as social learning practices and can be understood as a specific form of solidarity among crowdworkers. In an explorative online ethnographic study, two learning-related channels of the platform company’s Discord server were observed: the question channel, in which testers can ask for help, and the quiz channel, in which a testing-related quiz event takes place. Additionally, interviews with moderators and crowdtesters were conducted. The observation of learning practices on Discord makes clear that testers mostly use the social media tool for situational and functional information exchange, such as helping each other with bug classifications or solving technical problems. Testers mostly provide each other with brief information that can be applied directly in the work context. Further information is mainly shared by moderators, who offer supplementary explanations as a means of self-help. The study highlights that a form of weak cooperative solidarity emerges among testers as they support each other via Discord to fulfill individual work tasks. This differs from resistant solidarity in other contexts of platform work because, in the observed case, platform workers’ solidarity is not directed against the platform company.

Keywords

Introduction

Crowdwork delineates a new form of work – that is, work offered (outsourced) to an undefined number of people (the crowd) through an intermediary digital infrastructure (platform) (Howcroft and Bergvall-Kåreborn, 2019: 23). Usually, crowdworkers have the status of ‘self-employed agents. They are not employed […] and can freely choose their working time and location’ (Durward et al., 2020: 68). Crowdwork can be distinguished from on-site gig work, where tasks are performed locally, for example, delivery services (Wood et al., 2019: 57). For this study, we therefore define crowdwork as ‘paid crowdsourced work where the delivery of service occurs entirely online’ (Margaryan, 2019: 251), and we particularly examine a testing platform. As a subtype of crowdworking platforms, crowdtesting platforms allow workers to test software, websites, or applications (Durward and Blohm, 2018).

During a research project on learning opportunities on crowdworking platforms, we conducted exploratory interviews with crowdworkers on different crowdwork platforms. We were particularly struck by one testing platform because crowdworkers reported using an internal communication space that was not found on other investigated platforms. This testing platform uses a social media server on Discord, on which community members can communicate with one another. Community interaction structures in crowdwork have previously been examined as a medium for self-regulation and information exchange among the crowd (Gerber, 2021) but also for social learning practices (Cedefop, 2020). In crowdtesting, social interaction is particularly interesting against the backdrop of the competitive logic of crowdwork platforms (Stefano, 2015; Anwar and Graham, 2021; Wood et al., 2019; Leicht et al., 2017). This led us to questions about social learning practices and potential solidarity among crowdtesters. Regarding solidarity in the platform economy (which includes on-site and off-site work), our study offers insights into how solidary practices can emerge in digital communication spaces that are implemented and controlled by the platform company. This platform-initiated communication space is characterized by guidelines provided by the platform company and by the sanctioning of violations, with consequences on both the Discord server and the testing platform.

In the Global North, crowdwork is usually a ‘small side-job’ (Schmidt, 2017: 24) for the majority of crowdworkers (Berg and Rani, 2021). They participate not only for the rather low remuneration but are ‘also motivated intrinsically by social exchange, learning, or the task itself’ (Durward et al., 2020: 69). Different types of learning practices in crowdwork and their extent in interactions on platform-initiated social media channels have not yet been examined.

These observations of the interactions on Discord against the backdrop of the competitive work environment on the testing platform, as well as previous research on learning practices of crowdworkers and social media as a learning tool, led to the following research questions:
- Which interaction practices can be observed on the server and to what extent can they be categorized as social learning practices?
- To what extent can these social learning practices be understood as a form of solidarity among crowdworkers?

To examine these questions, we used an online ethnographic approach, observing the platform’s Discord server and supplementing the observation with data from interviews with crowdtesters and platform moderators. To present our findings, our paper is divided into eight sections. We begin with a brief overview of previous research on social exchange among crowdworkers (part 2), followed by a theoretical background on social learning practices and solidarity (part 3). Afterward, the field of research is introduced (part 4), followed by our methodical approach (part 5). Thereafter, we present and discuss our findings (parts 6 and 7) before concluding our study (part 8).

Exchange and social learning practices among crowdworkers

Crowdwork is often described as an atomized and isolated work environment, as workers cannot meet each other locally because the work is performed entirely digitally (Anwar and Graham, 2021; Heiland and Schaupp, 2021; Wood et al., 2019; Yao et al., 2021). Unlike in the on-site gig economy and due to the digital labor organization, crowdworkers do not have the opportunity to meet each other physically (Johnston, 2020). Nevertheless, there is an ongoing debate about the extent and form of exchange among crowdworkers. A growing body of literature emphasizes that digital communication and exchange among crowdworkers exist (Dissanayake et al., 2021; Gerber, 2021; Gray et al., 2016; Ma et al., 2021; Yin et al., 2016).

Estimates of the extent of crowdworkers’ exchange vary strongly: some studies estimate that nearly 60% of crowdworkers use online forums (Yin et al., 2016: 1296), whereas others suggest 20–30% (Margaryan et al., 2020: 27). Crowdworkers use diverse communication channels like online forums, social media groups, or even digital one-to-one communication to interact (Margaryan et al., 2020; Wood et al., 2018; Yin et al., 2016). While the use of social media groups (e.g. Facebook) is of great importance for online freelancers, crowdworkers on microtask platforms tend to use online forums (Soriano and Cabañes, 2020; Wood et al., 2018; Yin et al., 2016). Most of the studies on crowdworkers’ peer-to-peer exchange focus on Amazon Mechanical Turk (MTurk), one of the biggest microtask platforms (Gray et al., 2016; Ma et al., 2021; McInnis et al., 2016; Savage and Jarrahi, 2020; Wang et al., 2017; Yin et al., 2016). At MTurk, workers created various exchange opportunities in the form of online forums or browser extensions like Turkopticon (Irani and Silberman, 2013; Yin et al., 2016). Previous literature concludes that communication among crowdworkers is primarily functional and serves as an opportunity for work-related knowledge and information sharing (Dissanayake et al., 2021; Gerber, 2021; Gray et al., 2016; Jin et al., 2021; Wang et al., 2017). Crowdworkers seek exchange particularly to cope with task-, platform-, or client-related questions or uncertainties (Gerber, 2021; Gray et al., 2016; Yin et al., 2016).

Furthermore, in this peer-to-peer exchange crowdworkers’ learning practices can be found (Cedefop, 2020; Margaryan, 2016, 2019; Margaryan et al., 2020). Besides individual learning practices like learning through trial and error, performing new tasks, or attending courses and tutorials, crowdworkers interact with peers to fulfill tasks, ask for advice, receive feedback, or use online forums (Cedefop, 2020; Margaryan, 2016, 2019). Similar to other online communities, not only active participants benefit from exchange, knowledge sharing, and peer-to-peer learning but also passive participants, who are just observing the interactions without contributing anything themselves (‘lurking’) (Gerber, 2021; Ma et al., 2021).

Solidarity in the form of unionizing behavior and collective organization is rather rare in crowdwork (Gerber, 2021; Wang et al., 2017). This contrasts with research on exchange in the on-site gig economy, where exchange is often connected with collective organizing and unionization to improve working conditions (Heiland and Schaupp, 2021; Karanović et al., 2021; Tassinari and Maccarrone, 2020; Yao et al., 2021).

As there are no platform-internal communication opportunities available for crowdworkers on many platforms, exchange usually takes place on platform-external and self-initiated channels (Margaryan et al., 2020; Yin et al., 2016). In this case, exchange becomes necessary because platforms provide little information or workers are faced with challenges of working conditions, which lead to uncertainty or dissatisfaction (Gray et al., 2016; Irani and Silberman, 2013; Schwartz, 2018). Nevertheless, some platform companies provide communication opportunities themselves. These exchange structures constitute management and community building strategies (Gerber, 2021). According to Gerber (2021), communication spaces on crowdwork platforms differ especially in the complexity of technical infrastructure on the one hand and the degree of moderation and regulation on the other.

Theoretical background

To examine social learning practices and to understand whether these practices are a form of solidarity among crowdworkers, we refer to the following theoretical assumptions.

Social learning practices

In our study, we examine learning in the digital workplace from a praxeological perspective focusing on social learning practices in an online community. This perspective differs from theories that assess learning mainly on an individual level. The behaviorist perspective mainly focuses on learning through controlling individual behavior, cognitivist scholars assess individual cognition as a result of learning, and constructivist researchers investigate subjective knowledge construction (Grotlüschen and Pätzold, 2020). We take up a rather sociocultural approach to learning, introduced for example by Vygotsky, building on the idea that socioeconomic contexts influence learning (Grotlüschen and Pätzold, 2020). In digital research, the connectivist and praxeological perspectives emerged as helpful for understanding learning as social practices embedded in digital networks. In those environments, what matters is the flow of information rather than the possession of knowledge (Siemens, 2005; Wenger, 2011).

Connectivism proposes that learning takes place in and through connections between entities. Those connections form networks and ‘enable us to learn more’ (Siemens, 2005: 6). Connectivist learning networks consist of participating human and non-human actors who nurture the connections to enable continual learning and to ensure up-to-date information exchange (Siemens, 2005). One way of operationalizing the connectivist approach of distributed knowledge and learning is the concept of Communities of Practice (Boitshwarelo, 2011). They can be defined as an ‘activity system about which participants share understandings concerning what they are doing and what that means in their lives and for their communities’ (Lave and Wenger, 1991: 98). In those communities, learning does not take place through instruction and replication of a knowledgeable practice but through participating in the community (Lave and Wenger, 1991), which is defined by three characteristics:
• It is a joint enterprise with a shared domain of interest, which need not be acknowledged as expertise by people outside the community.
• Members show mutual engagement and take part in shared interactions like activities, discussions, and information sharing.
• They share a certain repertoire of resources like shared experiences, tools, and ways of solving a problem (Wenger, 1998, 2011).

Typical practices within those communities are solving problems, requesting information, or seeking experience from others (Wenger, 2011: 2). In digital environments, online Communities of Practice emerge that enable their participants to transcend time and space through communication technology. Common examples are online forums, question-and-answer channels, instant messaging, and discussion and social groups (Abedini et al., 2021). From a praxeological point of view, people in such Communities of Practice learn in close connection with their practices and activities. Therefore, knowledge is acted out in practice and thereby both produced (learned) and reproduced in the actions that form the practice (Hager et al., 2012: 3).

In addition to social learning practices, the context of learning in crowdwork needs to be considered. Therefore, we take into account recent research on workplace learning, which describes learning in the context and process of work, is gaining importance in ever-changing digital work environments (Sauter and Sauter, 2013), and forms an inherent part of crowdworkers’ practices (Margaryan, 2019). Workplace learning holds certain potential as a flexible form of learning that spares organizational financial resources, since it occurs in the course of work that employees perform anyway. It also carries certain risks: it depends on the work processes and resources provided by the organization, narrows learning to the experiences made in the course of work, and generates random learning results (Dehnbostel, 2019). Workplace learning is mainly associated with informal learning activities that take place in the process of work (Sauter and Sauter, 2013) without necessarily following any learning objectives. It is distinguished from formal and non-formal learning, which describe systematic educational activities with a learning target. Whereas formal learning is offered by formal institutions, non-formal learning is offered by actors outside the formal education system to a certain subgroup (Coombs and Ahmed, 1974).

Since crowdwork is a rather recent phenomenon, research on learning among crowdworkers is rare (Harteis et al., 2022). Nevertheless, research suggests that learning is an inherent part of crowdwork, as new crowdworkers or unfamiliar task requirements make learning necessary (Kittur et al., 2013). Margaryan (2016) suggests that not only online freelancing but also microtasking is a form of ‘learning-intensive’ (Margaryan, 2016: 1) crowdwork, since workers pursue learning goals during their work. She also highlights the importance of social learning among crowdworkers, distinguishing ‘learning with and from other people’ (Margaryan, 2016: 3). In the context of crowdwork, learning with others refers to learning while collaborating, and learning from others involves sharing experiences and information.

Bearing in mind that crowdwork environments individualize workers and are highly competitive, it needs to be explained why social learning practices in crowdwork are likely to happen at all. The concepts of cooperation and solidarity help to understand the contextual factors leading to social learning practices.

Solidarity and cooperation

Cooperative interactions are a key element of social learning practices that are understood primarily as an exchange of information and experiences. Cooperation can be expressed as working together with others to achieve an individual goal (Jaeggi, 2001; Griesemer and Shavit, 2023). Jaeggi (2001) emphasizes a strong link between cooperation and solidarity. In contrast to cooperation, solidarity is not only non-instrumental but also characterized as a reciprocal and symmetrical relationship between equals, as opposed to the asymmetrical or one-sided relationships between unequals found in hierarchical or charitable relations (Jaeggi, 2001: 291–292). In solidary relationships, people stand up for each other and help each other, and they have shared challenges and experiences (Jaeggi and Celikates, 2017: 39). Therefore, solidarity is often connected to common goals and interests as well as a shared identity (Bayertz, 1999; Jaeggi, 2001). Solidarity is generally based on a collective identification as a group, which often manifests itself in an identification as a ‘we’ in distinction to a ‘them’ that has evolved through mutual practices and relations (Morgan and Pulignano, 2020; Jaeggi, 2001). As solidarity is based on shared practices and identification processes, it has certain references to Communities of Practice (Sharratt and Usoro, 2003). It must be noted that collective identity is often not experienced but rather imagined (Polletta and Jasper, 2001: 284). However, this mutual identification can also be merely an identification with a particular situation or a common cause and not with a strong joint identity (Jaeggi, 2001: 299).

To complement the aforementioned definition of solidarity and to sharpen its relation to cooperation, different manifestations of solidarity can be described following Althammer (2019). He first distinguishes whether solidarity consists of unidirectional and sacrificing help (altruistic) or is based on reciprocal and mutual advantage (cooperative). Second, he differentiates whether it addresses a specific or an abstract target group. One manifestation is cooperative solidarity, which helps to sharpen our concept connecting cooperation and solidarity. The term refers to ‘the willingness of self-interested actors to work together in order to attain a specific goal’ (Althammer, 2019: 16). This type of solidary behavior refers to a specific group or person but is reciprocal. Solidarity is thus based on mutuality. Cooperation is understood in the sense of mutual support. A common goal may be pursued but does not need to be. A sub-category, adversative solidarity, comes close to resistant solidarity and exists when cooperative solidarity is expressed in a formation of interests against others, for example, in labor disputes. Adversative solidarity refers to solidarity interactions that lead to collective actions or collective mobilizations (Atzeni, 2010).

For online contexts, Felix Stalder developed the concept of digital solidarity (Stalder, 2013). He conceptualizes digital solidarity as a practice of sharing without a direct return (Stalder, 2013: 56). Because working practices are increasingly organized in networks due to the complexity and differentiation of knowledge, workers are connected through and depend on these knowledge-sharing networks (Stalder, 2013: 15–17). Thus, like connectivist learning theories, Stalder emphasizes the importance of knowledge access and sharing. His understanding of solidarity as weak networks, based on Mark Granovetter’s concept of ‘weak ties’ (Stalder, 2013: 44), is especially useful in loose online contexts because it refers to the volatility of interactions and the fact that people’s communication practices depend on and are structured by platform infrastructures over which they have no control (Stalder, 2013: 43–44).

Field of research

Our research focuses on a German crowdwork platform company providing a testing infrastructure for an international crowd. The platform offers crowdtesting tasks, mainly about testing hard- and software (Mrass and Peters, 2017: 15). In the range between simple, standardized microtasks (mostly clickwork) and more complex, pre-knowledge based macrotasks (e.g. freelance jobs) (Gerber and Krzywdzinski, 2019: 122), we consider testing a kind of ‘mesotask’ as it does not fit completely into either category. Testing differs from other forms of crowdwork because crowdworkers usually not only compete for jobs but also compete in the work process. Many tests are organized on a ‘first come, first served’ principle. This means that only the tester who submits a bug first is paid. Crowdtesters are invited to a test case, and a limited number of testers can participate during a certain time frame (Blohm et al., 2016: 49). After completion, the testers send reports including a description of ‘how a test was performed and what happened during the test’ (Wang et al., 2021: 1). The reports are then checked by platform workers and sent to the customer (Blohm et al., 2016: 48). If the report is accepted, the worker receives remuneration (Zogaj et al., 2014: 379). One common test type on testing platforms is searching for bugs. A bug represents an undesired defect in the soft- or hardware (Tan et al., 2014).

In our case study, the testing platform offers several communication and learning tools. Besides a chat feature for testers during a test case and an information blog providing articles about organizational testing basics, the platform company uses a Discord server. Discord is an online communication platform, primarily known in the gaming scene (Arifianto and Izzudin, 2021: 96). It hosts so-called servers consisting of multiple persistent chat rooms and voice channels (Arifianto and Izzudin, 2021: 95). Discord is also utilized for social and business communication as well as for learning activities (Anderson and Kanuka, 1997; Barnad, 2021). As shown by Arifianto and Izzudin (2021), those learning activities are supported by its attractive interface related to a ‘fun and competitive atmosphere, just like when playing games’ (Arifianto and Izzudin, 2021: 98). Online communication spaces like Discord are important for workplace related learning because they ‘enable access to diverse resources, such as job-related knowledge, task advice, strategic information, and social support’ (Zhang and Venkatesh, 2013: 702).

The case of our crowdtesting platform is special because the platform promotes interactions with and among crowdworkers and uses Discord as a means of community building. This is of particular interest as the crowdtesters compete with one another on the other platform, where the actual work takes place. What is particularly interesting here is that crowdworkers use the server even though this is unpaid time for them and is not otherwise incentivized. It must be considered and reflected that the server is provided and moderated by the testing platform. The testing platform provides several channels on the Discord server dedicated to particular interests, for example, social exchange and psychological or informational support. For communication on the server, there are rules provided by the testing platform; for example, it is not allowed to share insider information from test cases and customers or to discuss bug rejections. Disregarding these rules can lead to a ban from both platforms – the testing platform and the Discord server. This shows the immense influence of the testing platform on the communication practices on the Discord server, which limits the interaction practices that can be observed there.

The server is managed by moderators. Moderators are not employees of the platform but self-employed and often experienced testers themselves, who receive an additional fixed remuneration for their moderation tasks on Discord. Their tasks include making sure that the testers meet the community guidelines, providing learning formats or information, and supporting open questions or discussions. Moderators have the authority to delete messages or ban testers from Discord if they do not comply with the provided rules. This shows the powerful role of moderators, as they can influence interaction practices and habits. They therefore enforce the interests of the testing platform, as it is their task to ensure compliance with the communication rules, which distinguishes them from other testers.

In our case, we had access to ten persistent chat rooms on the Discord server, which have all been established by the platform. Two of those can be understood as learning related, because they are focused on dealing with information around testing. Therefore, they were the focus of our research which aimed to examine learning related interaction practices. Those two channels include the Quiz Channel providing a quiz format every two weeks, and the Question Channel for asking for advice.

Methodical approach

Our methodological approach is characterized by an explorative design as platform moderated social exchange is a research field which has not been examined in detail yet. For data collection, we observed the Quiz and the Question Channel on the Discord server, using online ethnography over a period of eight weeks. With reference to different ethnographic approaches (Breidenstein et al., 2013) and approaches for the ethnography of virtual and online research fields (Kozinets, 2020; Pink et al., 2014; Hine, 2000, 2015) we developed a case specific approach. Approaches of digital ethnography are characterized by their aim to examine cultural practices and experiences that are entangled with digital spaces (Kozinets, 2020: 14). They are based on the assumption that social phenomena are not only displayed in what study participants are able to remember and reproduce in spoken language, often recorded in interviews or survey studies, but especially in social practices which can be observed in the research field. Simultaneously, ethnography is based on the idea of an inner social order of the research field built by the production and reproduction of social practices which leads the explorative research process. Therefore, the study of social phenomena cannot be separated from the research field and its inner logic (Breidenstein et al., 2013: 42–44).

The ethnographic study we conducted is characterized as a participant observation (Breidenstein et al., 2013: 47–50) but with a low amount of active participation. With reference to Hine (2015: 57), our participation can be understood in the sense of lurking, which means that we read the interactions but did not actively participate.

Furthermore, we did not interact as the observation was not disclosed to the Discord users, and we did not want to influence the interactions. This is also the basis for our ethical considerations, based on the procedure scheme for research ethics in netnography developed by Kozinets (2020: 179). Our aim was to collect investigative data, and the Discord server can be considered a semi-public sphere, as anyone can gain access after registration and completion of the entry tests on the testing platform. Additionally, platform representatives were informed about our research purposes. We did not investigate any sensitive topics or vulnerable groups in this study and made sure that data security and anonymization standards were met (Kozinets, 2020: 179).

For data collection and analysis, we followed the approach of Breidenstein et al. (2013). We took screenshots and field notes and merged them into detailed observation protocols. To ensure intersubjective traceability, all individual observations were discussed in the research group consisting of five researchers. These protocols were openly coded according to the approach of grounded theory (Glaser and Strauss, 1967; Breidenstein et al., 2013: 142–147). In the next step, these codes were discussed together, related to each other, and thus coded axially (Strauss, 1998: 101). Finally, analytical topics were developed on this basis according to Breidenstein et al. (2013: 134–138). These topics are characterized by their double relevance: they are relevant in the field itself as well as regarding the scientific discourse and the research question.

In addition to this ethnographic data, we draw on interviews with two community moderators and with seven testers, previously conducted in the context of the project. The interviews with community moderators were focused on organizational aspects of the Discord server. In the interviews with testers, their use of the various exchange options and motives for community exchange were addressed. These interviews are used as a complement to the ethnographic material to gather contextual and non-observable information.

Results

Since the focus of the paper is on digital ethnographic insights, the ethnographic results are presented descriptively and will be discussed in the next chapter with reference to the presented theoretical concepts and the qualitative interviews. In this chapter, we begin by presenting the general observed interactions and roles, followed by a detailed description of the different types of interactions examined on the two Discord channels.

Communication on Discord takes place in English. Testers and moderators interact in written form or by posting smileys or animated pictures (GIFs). Besides the form, interactions differ in terms of personal constellations, content, and the course of interaction depending on the channel.

The following personal interaction constellations could be observed: (1) between two testers, (2) among a group of testers, (3) between a tester and a moderator, and (4) among a group of testers and a moderator.

On Discord, roles can be assigned to users which are shown through badges in the public profile. We observed that moderators act as advisors answering testers' questions and providing further information for self-help. At the same time, they are animators trying to motivate the testers to participate in different community events. Moreover, they are authorities, immediately pointing out inappropriate utterances and rule violations.

Nevertheless, most users on the Discord server are testers. They are globally distributed, which is indicated by badges disclosing their role affiliations as well as their type of specialization on certain testing tasks. Having multiple or specialized roles marks a more experienced tester, which indicates that there are different levels of experience among the testers. As the observed interactions differ on the two channels, we present the detailed results separately for the Quiz Channel and the Question Channel.

Quiz Channel

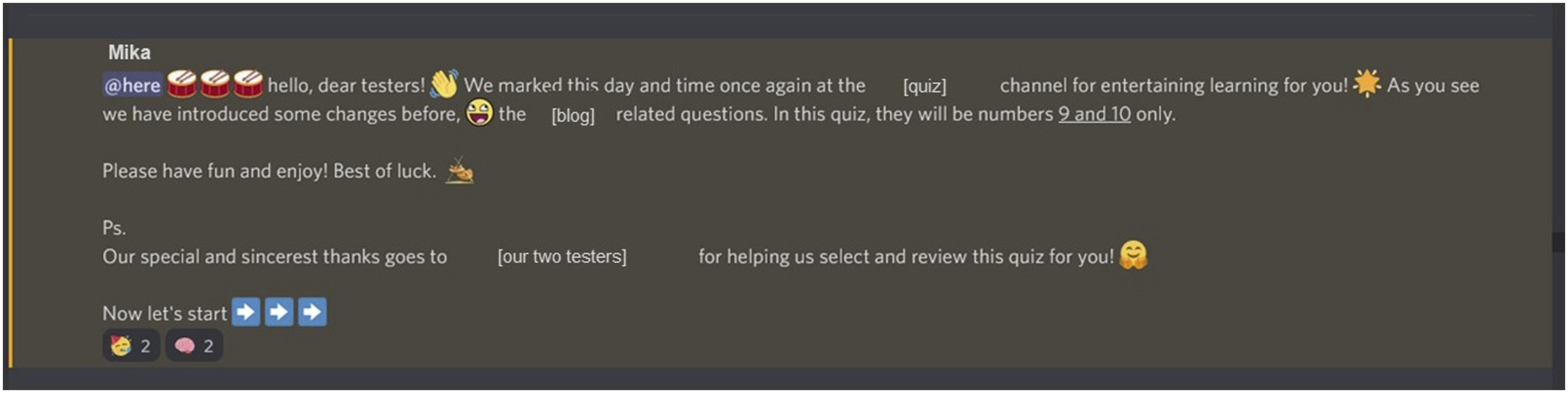

On the Quiz Channel, a quiz event takes place every other week. The quiz is organized by the platform company and led by moderators. It contains multiple-choice questions concerning realistic test cases related to the actual workflow of testing and the work infrastructure. The platform company implemented the quiz event explicitly for learning purposes. It is intended to serve as a practical and playful preparation for future test cases. The quiz starts with a brief introduction by one of the moderators (see Illustration 1). It takes place as follows: A question and four possible answers as well as a screencast showing the test case are posted. Thereafter, the participants can submit their answer within a given period of time. Participants can see which answers have been selected by the other participants, adding a social aspect to the quiz. After the time has expired, the correct answer, an explanation, and a link to the information blog are posted. After each question, the current ranking of participating testers and their quiz points is presented.

Introduction of a quiz event (Protocol07_01, l. 8)

For the most part, the interactions in the Quiz Channel deal with questions concerning the quiz cases. The observation shows that a casual atmosphere is created during the quiz. The moderators aim to motivate the participants by posting emojis and animated pictures (GIFs) or cheering for right answers (see Illustration 2). In the channel, the moderator’s role as animator becomes apparent, as the interactions are dominated by motivational posts and animating gamification elements, which can be described as a form of entertaining learning.

Illustration 2: GIF reaction (Protocol03_03, l. 19).

The moderators’ contributions make up a large portion of the interactions, and there are almost never any tester-tester interactions in this event. Nevertheless, some testers verbalize their learning effects. For example, one tester posts: ‘Thanks for the interesting quiz. It seems a bit difficult to distinguish low or high bugs! :)’ (Protocol01_01, l. 169). This comment indicates that the quiz is a way to practice bug classification for at least some users, and that participants do not simply guess the correct answer.

Additional comments also indicate that testers apply their work experience to the quiz: ‘I already did a bug like that, so this time I didn’t fall for it haha’ (Protocol03_03, l. 116). This comment states that the tester knows the correct answer from his/her experience. By providing the participants with links to the information blog of the testing platform, the testers also have the opportunity to explore topics in greater detail, acquire new knowledge, and possibly apply the acquired knowledge in future test cases.

Follow-up questions show that some participants want to understand the test content in depth. During one quiz event, a tester reacts to a presented bug with a question: ‘Does this happen with only this color or all of them?’ (Protocol07_04, l. 61). After the solution has been posted, he/she shares two further thoughts on this case. Here, it is clear that the tester is trying to understand how the bug classification has been derived. Such in-depth discussions of the bugs and solutions illustrate that learning seems to take place in these practices.

Question Channel

The Question Channel offers the opportunity for testers to ask or answer testing-related questions. Compared to the Quiz Channel, there is a more frequent, almost daily social exchange. Asking a question requires basic English language skills, giving relevant context information, defining the problem, and meeting the social standards on the platform, which are given either explicitly through community guidelines or implicitly through certain communication styles that were observed. Unclear wording, mostly related to insufficient English skills, can make mutual understanding more difficult. The communication style is rather asynchronous; answers are usually provided within a few hours. In some cases, the answer is given within minutes and short synchronous dialog sequences emerge. Both testers and moderators answer the questions. While the more experienced testers in particular often answer questions of seemingly less experienced testers, the moderators step in when no or incorrect answers are provided. Most of the time, answers trigger reactions by testers in the form of emojis. A common behavior is to say ‘thank you’ for a provided answer.

We were able to identify the following contents covered in the questions:

The first content type, bug classification, is the most common in this channel. Testers ask for information on a specific task-related problem. They briefly describe a testing scenario including a found bug and ask for the bug type. It is noticeable that some testers are often unclear about how to classify a bug, even though this is the main work component on the testing platform.

The second question type, technology-related information or problems, refers to questions in which testers ask for helpful tools or describe technical problems in the test context. These questions are about information that goes beyond a specific test case and can be applied in further test cases or contexts. Like the questions on bug classification, these questions are also of a functional nature because they are based on a real task problem.

The third question type, organizational issues, is less common in the channel. These questions mostly relate to platform processes like the payment. Dissatisfaction and conflicts are rarely expressed in these questions although they sometimes refer to faulty processes on the platform, which at least have the potential of leading to conflicts. In some of these cases, however, moderators intervene and refer to other private channels in the Discord community. This shows that moderators act as advisors and authorities.

Besides these three content types, we observed different courses of interaction in the channel, which can be typologized as follows:

The first type, brief and functional answers, is given on a specific task-related problem. Typically, testers give brief bug classifications like ‘functional (high) I think’ (Protocol03_04, l. 151). The answering tester provides no further explanation. This is an example of a short question-answer dialog and is frequently found in question dialogs regarding bug classifications. The answer serves direct problem solving because it can directly be applied by the asking testers in a certain test case. If a moderator gives the answer, he or she typically provides explanations and links to further resources. Nevertheless, testers normally do not request an explanation.

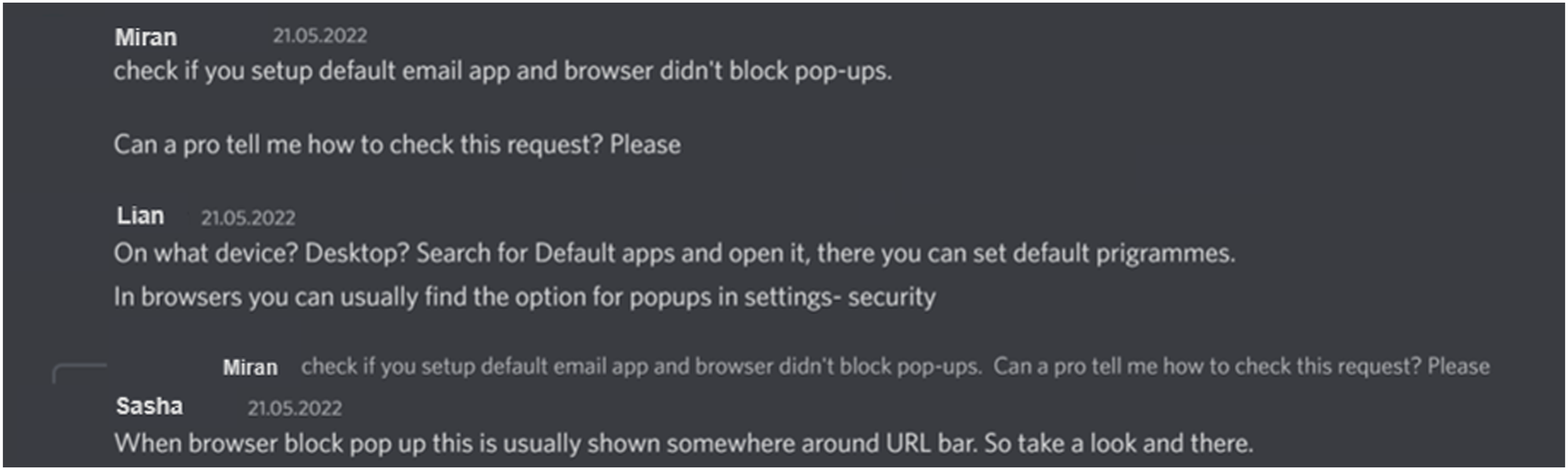

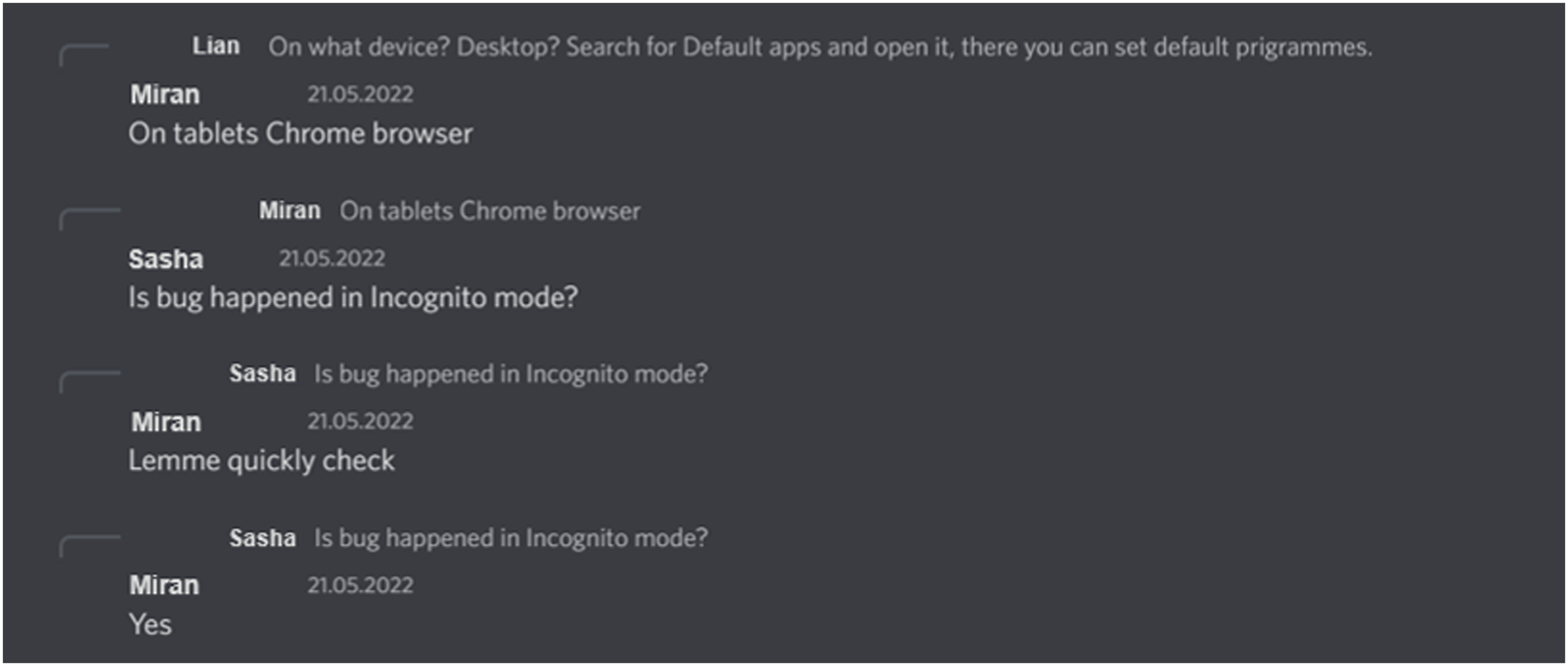

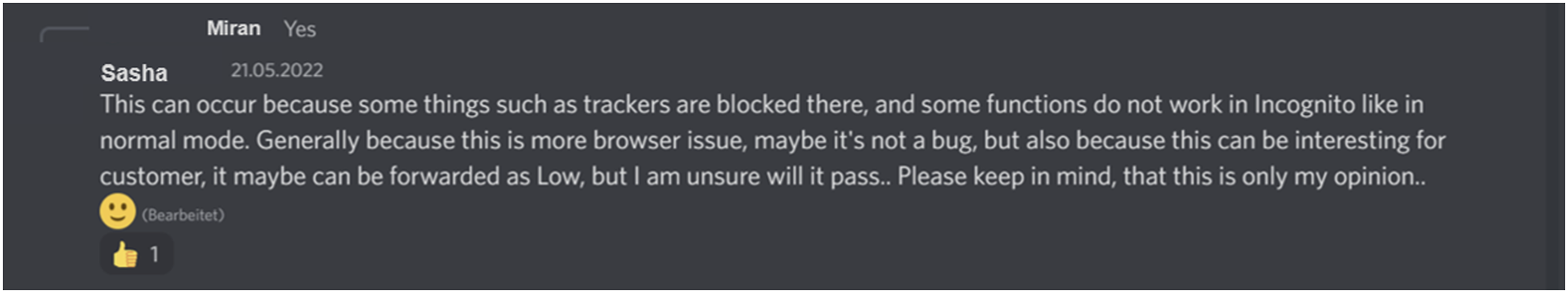

The second interaction type is the comprehension question. In these cases, a comprehension question referring to the original question is asked because more context information is needed to answer it. This rare interaction type is used by both testers and moderators. An example is a conversation between two testers called Miran and Lian and a moderator called Sasha (see illustrations 3, 4, and 5).

Illustration 3: Sample question and answers in question channel, Part 1 (Protocol03_03, l. 171).

Illustration 4: Sample question and answers in question channel, Part 2 (Protocol03_03, l. 177).

Illustration 5: Sample question and answers in question channel, Part 3 (Protocol03_03, l. 180).

The question refers to a testing task. Miran evidently does not know how to complete the requested steps and asks for advice from a ‘pro’, likely meaning professional or experienced testers on the platform. The dialog continues with Lian asking for more details to understand the problem. Even though Miran answers Lian’s comprehension question, it is moderator Sasha who replies to Miran’s message and explains what he/she must do.

Miran’s questions lead to a dialog of nine iterations on clarifying the problem, ending in the assessment that it is uncertain whether the reported bug will be accepted or not.

This conversation shows that context information is important for giving a correct answer, while also pointing to a fundamental issue on the platform concerning bug classifications. Those classifications do not seem to be totally objective. There is room for interpretation by the test case supervisors, who accept or reject a bug, leading to higher or lower income for the testers. Furthermore, this conversation illustrates a significant observation on the Discord server: as soon as a moderator gets involved in a conversation, testers do not participate anymore. Even though Miran was referring to Lian’s question, he/she did not post anything thereafter.

The third type of answers, containing further explanations and links, is usually given by moderators, rarely by other testers. An example is the following interaction: a tester called Lou has a problem with a new device on the testing platform. Moderator Marlin gives a short explanation: ‘Hey [Lou]! It must be the native show taps feature, it cannot be just an app that “shows them” - If it’s an iOS device, you don’t have to show any gesture [Link to the testing platform’s information blog]’ (Protocol04_04, l.32).

The fourth type of interaction, feedback, is also part of longer conversations. In some cases, the testers provide feedback on whether the bug has been accepted or rejected by the supervisor as proposed in the answers given on Discord. These feedback loops give the tester who answered the opportunity to check whether his/her answer was correct. This is all the more important as bug classifications can be subjective to a certain degree because they depend on the supervisors’ assessment. Thereby, potential learning opportunities are offered. One example is tester Luca posting after a bug classification: ‘Thank you for helping me, also the [supervisor] rejected the bug’ (Protocol05_05, l. 146). Feedback loops like this take place among testers but can only rarely be found.

The fifth type of interaction, responding with an emoji, could be observed in each of the interactions. Emoji reactions were given by users who were part of the interaction, but also by those who did not actively participate by writing a post of their own. This indicates that there is another common interaction practice on the server: testers lurking and reading interactions of other Discord users.

Furthermore, one-way interactions in the form of unanswered questions could be observed. In these cases, the question is ignored in the following postings. Such cases are not common, but they happen occasionally. In most of them, the testers do not repeat their question. During our ethnographic observation, it was not possible to conclude why these posts were not answered.

Overall, the interaction constellations as well as the question content and courses of interaction form the basis of potential learning practices or solidary behavior which we discuss in the following section.

Discussion

Interaction practices as social learning practices?

Information exchange and interaction practices could be observed on the two explored Discord channels. Both channels can be seen as an expression of the underlying learning practices that are part of a testers’ Community of Practice. At the same time, the community is strongly guided by the platform principles represented through the role of the moderators. The connections between the actors necessary for learning and gathering up-to-date information are therefore nurtured intentionally by the platform while at the same time serving testers’ aims to support their personal problem solving in their test tasks.

The Quiz Channel offers a standardized learning format organized by the platform with the explicit goal of learning, in which moderators promote testers’ learning practices. Even though there is hardly any interaction and exchange among testers, the Quiz Channel seems to be used intentionally for learning, either by simply taking part in the quiz or (although less commonly) by discussing quiz topics with others. This discussion in the quiz event is an interactive social learning practice guided by the moderators and mainly consisting of conversations between testers and moderators. In some answers and reactions of testers, explicit reflection on the quiz content and learning intentions could be observed.

Social interactions and more complex dialogs among crowdworkers mainly take place on the Question Channel as testers ask the community for help regarding work- and task-related questions. If learning happens, it seems to be a byproduct, suggesting that it can be characterized as informal. Interactions are mostly asynchronous question-answer sequences and resemble the communication in online forums. Questions are often related to specific individual tasks and mainly deal with bug classifications. Other questions, less focused on a specific task, concern technology-related information, for example, the use of technical devices, or platform-related organizational issues (e.g. payment problems). In one of the interviews, a tester describes his usage behavior as follows: “So, they have a large, large Discord server, where you can exchange information, yes, just about current tests, or what does not work, that there is also often the technology of the test platform does not work or the test product itself does not work at all. That also happens more often. And then to ask, hey, is it me, am I too stupid, or do my devices not fit or work, is that the same for you?” (Frank, tester: ll. 518–522)

The channel is mostly used in a functional and situational way by the testers to deal with current problems. The questions have a concrete, work practice-related reference and serve to quickly solve a task-related problem. These questions are formulated in such a way that the answers they are looking for can be applied directly in the test case. This user behavior is also beneficial for the testing platform because many questions are not sent to the testing platform but solved in the community.

As shown, the question-answer sequences between testers are mostly short, and the information is accepted with no further discussion or exchange. These brief, functional interactions indicate that, in our case, peer-to-peer learning mainly consists of short-term information exchange and can therefore be interpreted as a specific work practice-related form of learning. Here, testers learn not primarily by following specific learning objectives but by reaching their work objectives, thus learning incidentally as a form of informal workplace learning (Sauter and Sauter, 2013).

Regarding the question of whether the testers use Discord to learn and to understand what they do or just to get a quick answer to a question to get the job done, one of the moderators responded: “Both of them. (laughs) And of course, to learn to know what they should do next time when they have such situation. And also, to provide, to get a quick answer on their questions. So, because at our platform every second matters, so both of them.” (Finjas, moderator: ll. 459–462)

During the observation of Discord, the specific characteristics of these learning practices became clear. Work-related learning here takes the form of a kind of advanced trial-and-error learning. Since testers receive feedback from test case supervisors (e.g. whether the bug classification is correct), they know whether the answer was correct, and they can use this as a heuristic in similar future tasks.

Longer and more complex interactions are mainly guided by the moderators. As shown in chapter 6, the moderators’ answers usually differ from those of other testers. They regularly explain the answer in much more detail than the testers, and often add information sheets or links to the information blog to their answer. It is mostly the moderators who, with their answers, provide more general in-depth information to facilitate learning processes and to help the testers help themselves. Moderator Sasha describes his/her way of working as follows: “Usually, I help other testers by providing them answer because I’m, to say, more experienced than others […] I always give reasons why I have provided such answer. Usually there are questions about basic stuff which are described in our [information blog], and I just answer them in [a] clear way and then provide the link to the [information blog] article where they can find more about their question” (Sasha, moderator: ll. 18–43).

The statement and observational results also indicate that the moderators try to encourage the testers to reflect on the answer and thus to learn. In most cases, the moderators provide directly applicable answers and give general information as well as case examples. The information blog and sheets do not directly relate to the current task context and the associated application context. Evidently, moderators, as executives of the controlled community structures (Gerber, 2021), promote learning opportunities. This indicates, on the one hand, that controlled community structures in crowdwork, which go beyond a merely self-initiated organization of the crowd and use a moderation system, can promote additional learning opportunities. On the other hand, it also shows that due to the special role of the moderators and to the fixed themes of the channels, the community exchange is structured and bounded. It mainly serves the platform’s goal, which is to actively foster knowledge sharing among the tester community, even by paying experienced testers for taking on the role of moderators.

Nevertheless, there are also rare cases in which testers give feedback on whether the answer was correct or not, thereby offering learning opportunities. In these cases, another loop of information exchange takes place and offers the original answerer the possibility to acquire new knowledge about the information he/she gave. But this seems to be rather exceptional. In addition, the manifold emoji reactions indicate that learning does not only consist of active participation in interactions, but that it can also take place by observing postings and passively participating in the community (lurking). For example, one interviewee explained: ‘But I almost never communicate with other testers. But I like to read what they write’ (Alma, tester: ll. 239–240).

In summary, except for explicit learning events organized by the platform, mainly functional and situational social learning practices can be observed on Discord. Inspired by Margaryan (2016), who emphasizes the differences between learning with and from other people, we found both forms of learning while also identifying their concurrence. Furthermore, we see the testers’ behavior as an expression of a Community of Practice, especially through the informal learning practices related to problem solving in the Question Channel, which build upon the knowledge of more experienced testers and moderators. These interactions are an expression of a shared domain of interest, namely the domain of software testing. Through sharing established problem-solving strategies, testers gain the opportunity to develop a shared repertoire, especially for tasks related to bug classifications. This repertoire can be developed both by testers actively taking part in the interaction and by passively lurking participants. At the same time, the community is strongly guided and kept alive by the moderators, who are paid for their activities by the platform. This makes this Community of Practice part of the platform organization to a certain degree, serving the platform’s aim to distribute knowledge among the testers. Connections between the actors needed for knowledge distribution and learning in a connectivist understanding seem to be fostered and sometimes even kept alive by the platform. This supports the idea of Gerber (2021) that communities in crowdwork can be used as management instruments.

Social learning practices as a form of solidarity among workers?

The second question is to what extent these observable social learning practices can be interpreted as a form of solidarity among crowdworkers. Our observed tester-moderator interactions cannot be defined as solidary as there is no symmetrical relation, and it is not a non-instrumental behavior as community moderators are working for the platform and getting paid for their activity. It thus contradicts central characteristics of solidarity following the definition used in this paper (Jaeggi, 2001: 291–292). For solidarity, only tester–tester interactions with no moderators involved are relevant as their relationship can be considered as reciprocal, symmetrical, and non-instrumental. The answering testers have no direct benefit from answering questions or providing information to others, and could get into a demand situation themselves.

As the Quiz Channel was dominated by moderators and testers rarely interact there, no solidary practices were observed in it. In the Question Channel, there is room for solidary practices, as interactions among testers serve to provide workplace-related mutual support. Tester–tester interactions are based on providing information and helping others to fulfill their tasks, which can be seen as an act of solidarity. In particular, providing fellow testers with essential information about bug classifications or technological issues allows them to fulfill their individual tasks and contributes to the questioners’ income.

The rather situational, functional use of the Discord server for information exchange and the observed tester relations correspond to what Stalder describes as digital solidarity in the form of knowledge sharing in weak networks (Stalder, 2013: 11). Based on the concepts of Stalder (2013) and Althammer (2019), we propose the term weak cooperative solidarity to describe this kind of solidarity among testers. Weak cooperative solidarity is characterized by its weak and functional character because it is based on loose relations in an online forum and on mutual support among crowdworkers to fulfill an individual need. Social media seems to reinforce the mutual identification as a tester at least to a certain degree, because one could find oneself in the same situation and would be glad of help in a similar situation. Although it is unclear to what degree there is an explicit shared identity with common goals or interests, testers identify with the testing situations of others and help them to achieve their individual goals. In addition, the conducted interviews indicate that no strong direct competition is perceived, as one interviewee explains: ‘But it does feel-, well, I don’t have there-, it’s not a competitive thing for me’ (Jonas, tester: ll. 1303–1304).

The weak, functional networks of testers described above are not based on in-depth exchange processes. Apparently, not every tester is interested in strong social ties and learning practices (at least in these two channels). One of the testers explained that it is not a matter of finding friends, but rather of getting the work done: ‘No, no, no, no, no, I am more old-fashioned and I do not look for friends online’ (Frank, tester: l. 526).

Because the channels are limited in terms of content, there is almost no interaction that goes beyond these limitations. If something is posted that is thematically not in line with the guidelines or that shows dissatisfaction, for example, with bug report rejections, the moderators point out that the post does not fit into that channel, delete the post, or channel the dissatisfaction through quick referrals to other points of contact. As a result, hardly any dissatisfaction with the testing platform is expressed, or it is channeled quickly. On the platform’s Discord server, no adversative or resistant solidarity among testers could be observed. Thus, the form of solidarity found among testers on a platform-initiated infrastructure can be distinguished from the solidarity among workers of the on-site gig-economy (Heiland and Schaupp, 2021; Cant, 2019) or other exchange forms in crowdwork like at Amazon Mechanical Turk (Irani and Silberman, 2013), where self-initiated exchange is mostly characterized by a common resistance against the company or power imbalances. In our case, solidary practices are characterized by their supportive and functional character, because testers help each other fulfill their tasks. Reasons why other forms of solidarity do not emerge could be related either to the controlled and regulated community space or to the testers’ attitudes.

Conclusion

The aim of our study was to examine the interaction practices on the Discord server, to elaborate whether these practices can be understood as crowdworkers’ social learning practices, and to figure out to what extent there are forms of solidarity among those social learning practices.

First, we were able to show that platform-controlled social learning practices take place on the Discord server provided by the platform company. Most testers use the server in these channels in an equally situational and functional way to solve their work problems, as evidenced by the large number of observed short question-answer sequences. Moderators are meant to guarantee the quality of answers and solutions; they supplement these sequences with further information and thereby guide the interaction and learning practices on the server. The exchange practices can be characterized as an expression of a Community of Practice among the testers. At the same time, the activities within the community are strongly guided by the moderators, who are paid by the platform to nurture knowledge exchange on the Discord server.

Second, we were able to show that weak cooperative solidarity can be found in tester-tester interactions. This form of solidarity is very functional; it is mostly about short, quick, and directly applicable support. This is a notable result, especially against the background of the competitive setting on crowdwork platforms. However, due to the described functionality of the interactions, learning is not necessarily directly in focus, but it can certainly be a possible by-product.

Solidarity among testers is mostly seen in terms of specific work assignments where they support each other and not in terms of a collective resistance to improve working conditions. With this study design, we could not determine to what extent this is because the testers are more satisfied with the working conditions. This is to be investigated in further research on solidarity in crowdwork. Weak cooperative solidarity, in contrast to resistant solidarity, can be used by the platform company for better task performance, thus benefitting the platform company. This distinguishes the community exchange from other forms of communication among crowdworkers that do not take place on platform-driven community structures (Soriano and Cabañes, 2020; Wood et al., 2018; Yin et al., 2016).

In addition to our findings, further research needs to be conducted on learning practices on social media in the context of crowdwork. Our online ethnographic approach has proven to be a viable method to analyze social learning practices on social media and is likely to be applicable in other contexts as well. Nevertheless, the phenomenon of crowdwork is still evolving, and in this study only one case on social learning practices and solidarity could be examined. Future research should further test the results of this case study concerning their applicability and robustness in other contexts. There, special attention should be paid to the complexity of social practices in crowdwork and the interrelatedness of the stakeholders involved. Additional research can be conducted to gain further insights into the motivation of crowdworkers to help each other via social media tools. Furthermore, the role of the platform company ought to be scrutinized in more detail, as the findings in our study indicate that the way in which it structurally influences the interaction among crowdworkers (e.g. by deleting posts) might also affect their learning opportunities.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research paper is funded by dtec.bw – Digitalization and Technology Research Center of the Bundeswehr. dtec.bw is funded by the European Union – NextGenerationEU.