Abstract

In this study, I examine the extent to which voters apply consistent moral and democratic standards to political misinformation, and how these standards interact with perceived misinformation in the campaign. Drawing on an original survey experiment during the 2024 U.S. presidential campaign, I randomly assigned respondents to read one of ten fact-checked misleading statements made by either Kamala Harris or Donald Trump in a nationally televised debate. I found that individuals were more likely to justify, excuse, and downplay the democratic harm of misinformation when it came from their preferred candidate. While this tendency was evident across both partisan groups, the tendency to downplay the democratic harm of co-partisan misinformation was particularly noticeable among Trump supporters. Moreover, the main effect was stronger among respondents who perceived misinformation as widespread during the campaign. Finally, I demonstrate that willingness to accept misinformation varies by policy area, with greater justification for misleading statements on immigration, abortion and gun rights among Trump supporters, and trade and tax among Harris supporters. These findings highlight how partisan loyalty can distort moral judgment and underscore the challenges misinformation poses for democratic accountability.

Introduction

The spread and acceptance of misinformation is not only a cognitive or partisan phenomenon but also a moral one (Effron and Helgason 2022). In an ideal world, citizens would weigh politically misleading statements against questions of justification, acceptability, and harm for democratic processes. In reality, however, there is mounting evidence that voters often overlook norm violations and moral transgressions when these are committed by elected officials they support, yet respond strongly when similar actions come from the opposing side (Bhatti et al. 2013; Karv and Kim 2022; Littvay et al. 2024; Simonovits et al. 2022; Walter and Redlawsk 2019; Wolsky 2022). Furthermore, partisans tend to misperceive their political opponents as lacking basic moral values relative to their own group (Puryear et al., 2024). While the extent to which such double standards in evaluating individual politicians and political parties undermine democratic processes, particularly politicians’ accountability to the public (Cagle and Davis 2024; Fang and Thal 2024), remains open to debate (Birch and Allen 2015), voters’ tendency to apply inconsistent moral judgments to political misconduct poses serious challenges for various micro- and macro-phenomena, from democratic norms to social cohesion within political entities (Clayton et al., 2021; Schedler 2019). This may be particularly alarming in highly polarized societies, where the perceived benefits of party loyalty and in-group favoritism can outweigh the value of maintaining consistency in moral judgment (see Barber and Pope 2019; Fang and Thal 2024 for a discussion).

This study seeks to make several contributions to the growing body of work that examines voters’ reactions to politicians’ deviations from the standard of truth-based discourse. First, it tests a recent finding on moral flexibility by applying it to the case of the 2024 U.S. presidential election. Second, moving beyond the single-item measures common in prior research, it uses multiple dependent variables to capture different aspects of moral judgment, which paves the way for a more nuanced analysis of the political underpinnings of moral flexibility. Specifically, the paper examines whether co-partisan misinformation is justifiable, morally acceptable, defensible as a political strategy, harmful to democratic discourse, and influential in shaping individuals’ voting behavior. Third, the study further explores the conditions under which partisan moral flexibility strengthens, with particular emphasis on perceptions of misinformation prevalent in the system. Finally, although preliminary, the paper also shows that individuals’ willingness to justify co-partisan misinformation may be dependent on issue areas, suggesting that voters may have a stronger tendency to justify misinformation in certain policy areas more than others.

To that end, I fielded an original survey experiment in the U.S. prior to the 2024 presidential election. Participants were randomly assigned to read false or misleading statements made by Kamala Harris or Donald Trump during the September 2024 presidential debate, accompanied by a note that fact-checkers had later confirmed the statements as inaccurate. I expect respondents exposed to misinformation from their preferred candidate to be more likely to view the statement as justified, morally acceptable, and strategically defensible than respondents exposed to misinformation from the opposing candidate (i.e., Moral Judgment Hypothesis). Additionally, I argue that the treatment group respondents will be less likely to believe the misleading statement by their preferred candidate undermines democratic discourse or affects vote choice, compared to respondents in the control group (i.e., Democratic Standards Hypothesis).

Although my argument relies heavily on the universalistic assumptions proposed in the literature on partisan motivated reasoning (Bolsen et al., 2014; Dickson and Yildirim, 2025; Lodge and Taber, 2013), I contend that voters’ willingness to tolerate misinformation by their preferred candidate is moderated by their beliefs regarding the prevalence of misinformation in the 2024 U.S. presidential campaign (i.e., Contextual Justification Hypothesis). That is, co-partisan misinformation may be perceived as less of a violation by voters who believe that misinformation is widespread during the election period. I find strong support for my hypotheses. Interestingly, the effect of treatment assignment does not differ significantly between partisan groups for various aspects of moral flexibility, except for perceptions of democratic harm. This is largely in line with past scholarship (e.g., Littvay et al. 2024; Strandberg et al., 2020; Walter and Redlawsk 2019). Unlike past studies, however, I also find that Trump supporters show a stronger tendency than others to minimize the extent to which co-partisan misinformation is perceived to undermine democracy. Finally, I demonstrate that willingness to accept misinformation varies by policy area, with greater justification for misleading statements on immigration, abortion and gun rights among Trump supporters, and trade and tax among Harris supporters.

In what follows, I first briefly review existing work on partisan moral flexibility and derive my formal hypotheses based on the theoretical discussion. I then introduce my survey and empirical methodology, present results from regression analyses, and conclude with a discussion of implications of my findings.

Theory and hypotheses

In his seminal work, Haidt (2012, p. 107) famously characterized morality as something that binds and blinds: “In moral and political matters we are often groupish, rather than selfish. We deploy our reasoning skills to support our team, and to demonstrate commitment to our team.” This implies that strong group-oriented moral commitments, such as loyalty to one’s political party, not only bind people together but also often blind them to wrongdoing within the group. Although Haidt (2012) argues that conservatives rely more heavily on the loyalty foundation compared to liberals, extant research shows that individuals on both ends of the ideological spectrum display similar behaviors when it comes to in-group favoritism and confirmation bias (Janoff-Bulman and Carnes 2016; Littvay et al. 2024; Strandberg et al., 2020; Walter and Redlawsk 2019). Specifically, studies show that both Democrats and Republicans respond disproportionately more negatively when a politician of the other party violates a moral foundation (Walter and Redlawsk 2019, 2023). In a similar vein, Morais et al. (2020) find that unethical behavior by a president is perceived as more acceptable by co-partisans on both sides. A study by Graham and Svolik (2020) shows that voters of both parties are more likely to downplay the importance of violations of democratic norms when their favorite candidates are responsible for such violations.

Comparative research suggests this pattern is not unique to the U.S. public. In an experimental study from Spain, Anduiza et al. (2013) find that respondents judge the exact same offense more harshly when the responsible politician is a political rival. Similarly, an analysis of 18 sub-Saharan African countries by Chang and Kerr (2017) reveals that individuals with clientelistic networks tend to be more tolerant of corruption. Based on a sample of Norwegian citizens, Schönhage and Geys (2024) show that politicians’ blame avoidance behaviors in times of allegations of misconduct tend to be more effective among voters favoring the politician’s party. A growing consensus contends that partisan identity outweighs all other social factors in shaping Americans’ attitudes toward others (Norman and Green 2025), and that individuals are more tolerant of violations of the norm of fact-grounding, especially if doing so serves the party loyalty norm (Hull et al. 2024).

While the evidence for individuals’ tendency to tolerate, or even support, moral violations in line with broader partisan goals is well documented, less is known about the justification strategies involved in such behavior. In recent work, Kim et al. (2024) argue that voters who engage in loyalty-signaling by supporting co-partisans’ false statements can seek to justify their support through two mechanisms: factual flexibility and/or moral flexibility. According to this account, some voters may knowingly accept or even appreciate factually false statements when they believe the statements express a deeper political or moral ‘truth’ aligned with their values or group identity. The authors conclude that while moral and factual flexibility are closely related, partisan moral flexibility adds significant predictive power to individuals’ tendency to believe misleading statements (i.e., factual flexibility).

In short, I argue that individuals will evaluate misinformation more favorably when it originates from their preferred presidential candidate. Specifically, I expect respondents exposed to a statement made by their preferred presidential candidate to view such statements as justified, morally acceptable, and strategically defensible at greater rates compared to others. I also argue that they are less likely to see these statements as harmful to democratic discourse or consequential for their vote choice. My expectations reflect the idea that partisanship shapes both moral and democratic evaluations of political behavior.

In developing these expectations, it is important to consider the distinct dimensions along which individuals may evaluate political misinformation. Normative judgments can take the form of assessing whether a statement is justifiable given the circumstances, morally acceptable according to personal or societal standards, or strategically defensible in advancing political goals. Beyond these moral dimensions, individuals may also evaluate the systemic implications of misinformation, such as its potential to harm democratic discourse, and the personal, behavioral consequences, such as whether it would influence their likelihood of voting for the candidate. While these dimensions are conceptually related, they capture different mechanisms through which partisanship might shape evaluations, and they need not all be affected with equal strength.

Moral Judgment Hypothesis: Respondents exposed to misinformation from their preferred candidate will be more likely to view the statement as justified, morally acceptable, and strategically defensible than respondents exposed to misinformation from the opposing candidate.

Democratic Standards Hypothesis: Respondents exposed to misinformation from their preferred candidate will be less likely to believe the statement undermines democratic discourse or affects their vote choice, compared to respondents in the control group.

While partisans may be generally inclined to excuse misleading behavior by their preferred candidate, I argue that this tendency is unlikely to be uniform across individuals. One factor that may shape the strength of partisan moral flexibility is how pervasive individuals believe misinformation is within the broader political environment. When voters perceive misinformation to be widespread, they may be more inclined to rationalize its use as a necessary tactic (or at least a common one), thus justifying similar behavior by their own side. In this view, co-partisan misinformation becomes less of a violation and more of a strategic response in an already corrupted informational landscape. To test this possibility, I examine whether the effect of co-partisan misinformation exposure on moral and democratic judgments is moderated by individuals’ beliefs about the prevalence of misinformation in the 2024 U.S. presidential campaign.

Contextual Justification Hypothesis: The effect of co-partisan misinformation exposure on individuals’ moral and democratic evaluations will be stronger among those who perceive misinformation to be highly prevalent in the 2024 U.S. presidential campaign.

Data and methods

I draw on an original survey experiment with approximately 1000 respondents in the United States in the lead-up to the 2024 presidential election. The survey, conducted between October 31 and November 4, was administered by Qualtrics, which recruited respondents through its online panel. The primary objective was to examine whether exposure to misleading political statements made by one’s preferred candidate influences individuals’ moral judgments, democratic attitudes, and potential application of double standards in evaluating political behavior. I added a speeding check set at one-half of the median completion time, which automatically terminated respondents who were not responding thoughtfully. In addition, to ensure appropriate targeting, the survey began with questions about age and country of residence. Respondents younger than 18 or residing outside the US were screened out. The resulting sample is fairly representative of the general population in terms of formal education, urban-rural residence, and racial background, although women are somewhat overrepresented (see Appendix C for survey characteristics).

At the beginning of the survey, respondents were asked which candidate they intended to vote for in the upcoming election, with the response options being Donald Trump, Kamala Harris or ‘undecided/other’. Following this, each respondent was randomly assigned to one of ten treatment groups. These groups were structured around five misleading statements made by Vice President Kamala Harris and five made by former President Donald Trump during the September 10, 2024 presidential debate. Each respondent was shown one of these statements, which had been fact-checked and judged false or misleading by FactCheck.org. I chose to use multiple misleading statements per candidate (five each) for an important reason: I aimed to avoid issue salience effects that could arise if different candidates were associated with statements about inherently different policy domains. Specifically, respondents were assigned to one of ten experimental groups, each centered on a statement related to (i) immigration, (ii) gun ownership, (iii) abortion, (iv) the Affordable Care Act, (v) the US Capitol attack, (vi) trade deficit, (vii) unemployment, (viii) a second statement on abortion, (ix) election threat, or (x) sales tax, with the first five attributed to Donald Trump and the latter five to Kamala Harris.

This approach ensures that my measure of treatment effects is not driven by the specific content of a single issue, but rather by the broader concept of a misleading claim made by a favored political figure. An example of such a treatment is as follows:

In the Presidential Debate on September 10, 2024 between Vice-President Kamala Harris and former President Donald Trump, Trump said that immigrants were eating residents’ cats and dogs. Fact checkers later pointed out that this statement was false or misleading. However, some suggest this statement was made to draw attention to the issue.

For analytical purposes, I constructed a single binary treatment variable to capture co-partisan exposure to misleading information. Respondents who read a misleading statement made by the candidate they preferred (i.e., Democrats reading a Harris statement or Republicans reading a Trump statement) were coded as treated; all others served as the control group. I then conducted regression analyses to examine the effect of this treatment on key outcomes designed to capture respondents’ moral and democratic evaluations of political behavior. I asked (i) whether the candidate’s statement was justified, (ii) whether spreading such information was morally acceptable, (iii) whether the ends justified the means in making the statement, (iv) whether such statements undermine democratic discourse, and (v) the extent to which such statements impact the likelihood of voting for this candidate. My goal in selecting justifiability, acceptability, and defensibility, alongside perceived effects on democracy and vote choice, was to capture distinct yet related dimensions of moral flexibility in political judgment. These measures allow me to assess whether individuals apply consistent moral and democratic standards, or whether those standards shift when evaluating misleading behavior by a co-partisan candidate. For each question, respondents were asked to choose one of five options: not at all (1), not very (2), a little (3), somewhat (4), and extremely (5).
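The coding rule for the binary treatment variable can be sketched in a few lines of Python. This is a minimal illustration rather than the replication code: the string labels and the function name `copartisan_treatment` are hypothetical, and the sketch assumes that candidate preference is taken from the pre-treatment vote intention item, so that ‘undecided/other’ respondents always fall into the control group.

```python
# Hypothetical labels; the survey's actual variable coding is not reported in the text.
TRUMP, HARRIS, OTHER = "trump", "harris", "undecided_other"


def copartisan_treatment(vote_intention: str, statement_speaker: str) -> int:
    """Return 1 if the respondent read a statement by their preferred candidate, else 0.

    Assumption: preference is measured by stated vote intention, so
    undecided/other respondents can never be coded as treated.
    """
    if statement_speaker not in (TRUMP, HARRIS):
        raise ValueError(f"unknown speaker: {statement_speaker!r}")
    if vote_intention not in (TRUMP, HARRIS, OTHER):
        raise ValueError(f"unknown vote intention: {vote_intention!r}")
    return int(vote_intention == statement_speaker)


# Made-up respondents: (vote intention, speaker of the assigned statement).
respondents = [
    (TRUMP, TRUMP),    # co-partisan exposure: treated
    (HARRIS, TRUMP),   # opposing candidate: control
    (OTHER, HARRIS),   # no preferred candidate: control
    (HARRIS, HARRIS),  # co-partisan exposure: treated
]
coded = [copartisan_treatment(vote, speaker) for vote, speaker in respondents]
print(coded)
```

Collapsing the ten statement groups into one indicator in this way keeps the comparison focused on who made the statement relative to the respondent's preference, not on the statement's topic.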

To assess the success of the randomization procedure, I conducted balance checks at two levels of treatment assignment. First, I estimated a multinomial logistic regression predicting assignment into the ten individual treatment groups (five by each candidate) using a set of covariates. Second, I estimated a binary logistic regression predicting assignment into the collapsed co-partisan treatment condition (i.e., whether respondents were exposed to a misleading statement made by their preferred candidate). Across both models, the results indicate that none of the key covariates significantly predicted treatment assignment at conventional levels. These findings suggest that randomization was successful, which strengthens confidence that any differences in outcomes can be attributed to the experimental manipulation rather than pre-existing differences across groups. I provide these results in Table B1, Table B2 and Table B3.

Since control variables are not needed in the presence of a successful randomization, I begin by reporting simple bivariate regressions where the only predictor is the treatment variable. As a robustness check, I also report multivariate models using some of the commonly utilized control variables, which include perceived misinformation in election campaigns, gender, age, education, trust in the media, vote choice, ideology, self-efficacy, and attention to politics (see Table C1). I present the descriptive statistics of key variables in Appendix A.
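To make the bivariate specification concrete, the following Python sketch shows that an OLS regression on a single 0/1 predictor recovers the difference in group means as its slope and the control-group mean as its intercept. The responses are made up for illustration and are not the study's data; the function name `bivariate_ols` is likewise hypothetical.

```python
from statistics import mean


def bivariate_ols(treatment, outcome):
    """Fit outcome = intercept + slope * treatment by least squares.

    Returns (intercept, slope). With a 0/1 treatment, the slope equals the
    treated-minus-control difference in mean outcomes.
    """
    t_bar, y_bar = mean(treatment), mean(outcome)
    sxy = sum((t - t_bar) * (y - y_bar) for t, y in zip(treatment, outcome))
    sxx = sum((t - t_bar) ** 2 for t in treatment)
    slope = sxy / sxx
    intercept = y_bar - slope * t_bar
    return intercept, slope


# Made-up responses on the 1-5 scale (NOT the study's data).
treatment = [1, 1, 1, 1, 0, 0, 0, 0]
justified = [4, 3, 5, 4, 2, 1, 2, 3]

intercept, slope = bivariate_ols(treatment, justified)
treated_mean = mean(y for t, y in zip(treatment, justified) if t == 1)
control_mean = mean(y for t, y in zip(treatment, justified) if t == 0)

print(f"slope = {slope:.2f}, difference in means = {treated_mean - control_mean:.2f}")
print(f"intercept = {intercept:.2f}, control mean = {control_mean:.2f}")
```

Under successful randomization, this difference in means is an unbiased estimate of the average treatment effect, which is why covariates are optional in the main models.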

Results

Main results

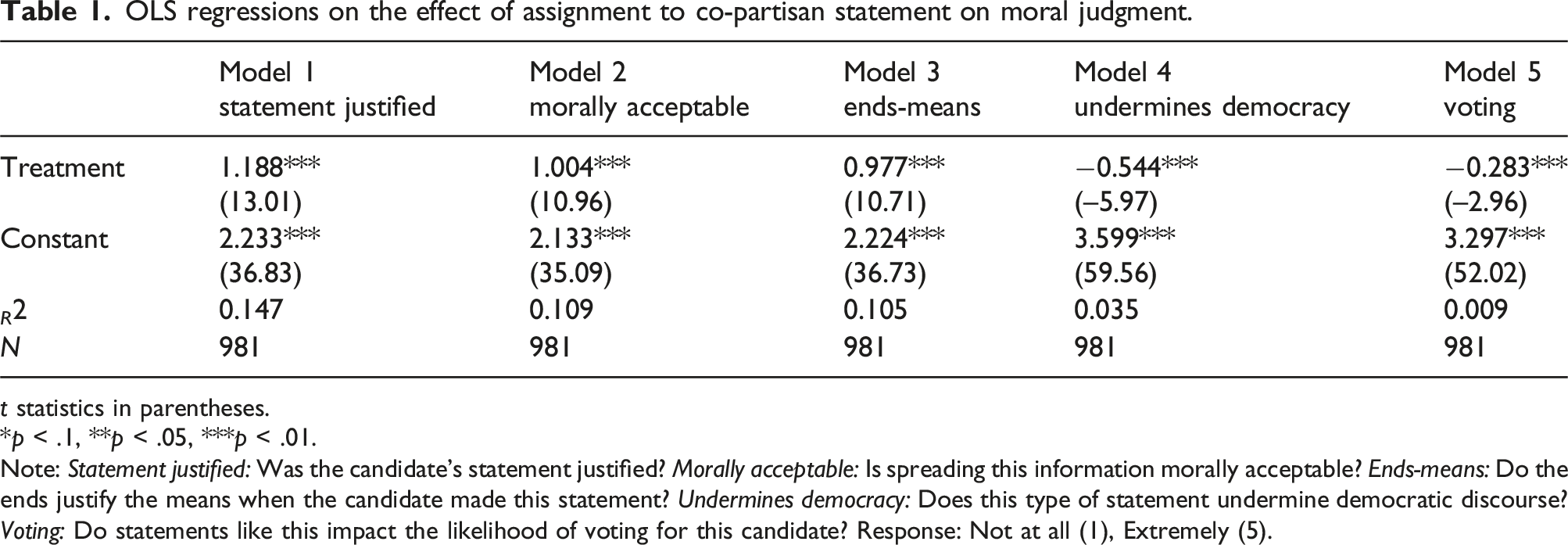

OLS regressions on the effect of assignment to co-partisan statement on moral judgment.

t statistics in parentheses.

*p < .1, **p < .05, ***p < .01.

Note: Statement justified: Was the candidate’s statement justified? Morally acceptable: Is spreading this information morally acceptable? Ends-means: Do the ends justify the means when the candidate made this statement? Undermines democracy: Does this type of statement undermine democratic discourse? Voting: Do statements like this impact the likelihood of voting for this candidate? Response: Not at all (1), Extremely (5).

Model 4 examines the extent to which treatment assignment affects responses to the question “Does this type of statement undermine democratic discourse?”, whereas Model 5 focuses on the question “Do statements like this impact the likelihood of voting for this candidate?” In both models, the treatment variable carries a negative sign and reaches statistical significance at p < .01. This indicates that respondents in the treatment group, on average, expressed significantly weaker beliefs that misinformation by presidential candidates undermines democratic discourse and that such behavior would affect their likelihood of voting for the candidate, compared to those in the control group. However, it is important to note that the effect sizes are considerably smaller than the coefficients presented in Models 1, 2 and 3. This is also reflected in the substantially lower explained variance in Models 4 and 5 compared to the earlier models.

To further aid interpretation, I examined the likelihood of answering each question with “extremely” across treatment and control groups. The differences are both statistically and substantively significant: moving from the control to the treatment group increases the probability of saying “extremely” by two- to threefold in Models 1, 2, and 3, whereas it decreases the likelihood in Models 4 and 5 by 25% and 38%, respectively. While the former represent substantively important differences, the latter two are arguably modest in size. I present other quantities of interest in Table C9.
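The kind of comparison reported above can be illustrated with a short Python sketch. The text does not state how the probabilities were estimated, so the sketch assumes raw sample proportions as a stand-in; the data are made up and the function names are hypothetical.

```python
def share_extremely(responses, top=5):
    """Share of respondents choosing the top category ("extremely" = 5)."""
    return sum(1 for r in responses if r == top) / len(responses)


def relative_change(control_share, treated_share):
    """Proportional change in the share when moving from control to treatment."""
    return (treated_share - control_share) / control_share


# Made-up responses on the 1-5 scale (NOT the study's data).
control = [5, 5, 1, 2, 3, 4, 2, 3, 1, 2]   # 2 of 10 answer "extremely"
treated = [5, 5, 5, 5, 5, 4, 3, 2, 4, 3]   # 5 of 10 answer "extremely"

p_control = share_extremely(control)
p_treated = share_extremely(treated)

print(f"control share: {p_control:.2f}, treated share: {p_treated:.2f}")
print(f"fold change: {p_treated / p_control:.1f}")
print(f"relative change: {relative_change(p_control, p_treated):.0%}")
```

Reporting both the fold change and the relative change helps separate large proportional shifts from a small baseline (as in Models 1 to 3) from more modest reductions (as in Models 4 and 5).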

Heterogeneous effects

I now turn to the analysis of heterogeneous effects. I argue that perceptions of the prevalence of misinformation in the 2024 US presidential campaign moderate the effect of treatment assignment on moral justification for misinformation. I contend that those who perceive greater prevalence of misinformation will have heightened moral justification for misinformation. I estimated new models including an interaction between the treatment and misinformation variables, and present the marginal effects estimates in the appendix due to space constraints.
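One way to write the moderation model is sketched below. The notation is introduced here for illustration and does not appear in the original: $Y_i$ is one of the five outcomes, $T_i$ the co-partisan treatment indicator, and $M_i$ perceived prevalence of misinformation. The sketch assumes the same linear (OLS) form as the main models and omits control variables.

```latex
% Interaction model (illustrative notation; controls omitted)
Y_i = \beta_0 + \beta_1 T_i + \beta_2 M_i + \beta_3 \,(T_i \times M_i) + \varepsilon_i

% Conditional effect of treatment at a given level of perceived misinformation
E[Y_i \mid T_i = 1, M_i] - E[Y_i \mid T_i = 0, M_i] = \beta_1 + \beta_3 M_i
```

Under this specification, the Contextual Justification Hypothesis corresponds to $\beta_3 > 0$ for the justification, acceptability, and ends-means outcomes, and to $\beta_3 < 0$ for the democratic harm and vote choice outcomes, where the main treatment effect is negative.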

Figure C2 confirms that respondents exposed to co-partisan misinformation are more forgiving, especially when they believe misinformation is widespread. Those who believe that misinformation was prevalent during the presidential election campaign were more likely to say that misleading statements by presidential candidates were justified, morally acceptable, and instrumentally defensible, and less likely to see them as undemocratic or vote-damaging. While control group respondents did not seem affected by misinformation beliefs when it comes to statement justification, moral acceptance, and instrumental defensibility, those who held misinformation beliefs were more likely to agree that misinformation by presidential candidates undermines democratic discourse and influences voting behavior. These results provide clear evidence that the partisan double standard documented in the previous section is amplified by perceptions of a misinformation-rich environment, which is consistent with theories of motivated reasoning and asymmetric moral judgment.

Do Republicans display stronger moral justifications for misinformation than Democrats? To address this, I estimate models testing the interaction between treatment condition and partisan identification, with full results presented in the online appendix (see Table C2). As seen in the table, the interaction term is not statistically significant in any of the models except for Model 4, which is largely in line with findings from recent scholarship (e.g., Littvay et al. 2024). In other words, respondents who intended to vote for Harris and those who intended to vote for Trump did not differ significantly in their moral justifications for misinformation. However, a note on the finding from Model 4 in Table C2 is warranted. The negative and statistically significant (p < .01) interaction indicates that Trump voters who were exposed to Trump’s misleading statement were especially unlikely to view it as undermining democratic discourse. To put it differently, the tendency to downplay the democratic harm of co-partisan misinformation is particularly pronounced among Trump supporters.

I further explore how the treatment effect varies across separate issue categories concerning misleading statements by presidential candidates. Recall that the respondents were exposed to one of ten statements by a presidential candidate. This raises the possibility that the results are driven by one or more salient policy areas. Using subsamples based on individual statements, I present linear regression models in the appendix (Tables C3–C7). As seen in the tables, the treatment effects are not uniform across issues, and some coefficients are much larger than those in the pooled models, which suggests that statements on some issue areas are more likely to trigger moral flexibility than others. For Trump supporters, immigration, abortion, and gun rights consistently show larger treatment coefficients compared to the pooled estimates. By contrast, Harris supporters show less consistent patterns: while the treatment effects tend to be weak or null in many issue areas, they reach substantive and statistical significance especially in areas like trade and sales tax. Across all moral dimensions, however, it is notable that for Harris voters, the models predicting perceived influence of misinformation on vote choice fail to produce statistically significant treatment effects. In other words, my results regarding influence on vote choice are mainly driven by Trump supporters. Importantly, however, I proceed cautiously in interpreting these findings given the relatively small sample size (about 100 respondents per statement).

Conclusion

In this study, I sought to address whether voters apply consistent moral and democratic standards when evaluating misleading political statements. Drawing on a pre-election survey experiment conducted in the U.S. in the lead-up to the 2024 presidential election, I exposed approximately 1000 respondents to false or misleading statements made by either Kamala Harris or Donald Trump. Regression analysis reveals strong evidence of partisan moral flexibility: Respondents in the treatment group, namely, those exposed to co-partisan misinformation, were significantly more likely to justify the candidate’s statement, to view the spread of that misinformation as morally acceptable, and to endorse the notion that the ends justify the means. They were also significantly less likely to believe that such behavior undermines democratic discourse or would impact individuals’ likelihood of voting for the candidate. These results remained robust when controlling for demographic, attitudinal, and political variables. Further analysis revealed that the effect of partisan alignment was moderated by perceptions of misinformation in the political system: those who perceived misinformation to be widespread were even more likely to excuse misleading statements by their preferred candidate. Importantly, while I found no meaningful differences between supporters of Harris and Trump in four of the five aspects of moral flexibility investigated in this research, I found that Trump supporters exposed to a misleading statement from him were particularly unlikely to see it as harmful to democratic discourse. Finally, I found that voters were more willing to justify co-partisan misinformation on certain policy issues, such as immigration, gun rights and abortion among Trump supporters, than on others.

These findings have important implications. First, my findings provide further evidence that partisanship can override commitments to moral consistency in political reasoning. The willingness to justify misleading statements from one’s preferred candidate or political party, even when they have been explicitly labeled as false, demonstrates some of the challenges of sustaining democratic norms. Second, the stronger effect among respondents who see misinformation as widespread implies that weakened standards in evaluating the factual accuracy of political statements can create a self-reinforcing cycle of moral flexibility, and distrust in political actors. In such a context, accountability may become more fragile, and partisan identity may continue to distort voters’ moral standards.

This study is not without limitations. While I used multiple misleading statements to reduce issue-specific bias, the treatment still relied on a single moment, the 2024 presidential debate, which may not generalize to other political contexts or time periods. Perhaps more importantly, because the selection of policy issues in this study varied between the candidates, with Harris’s statements focusing more on economic issues, the generalizability of the findings to other political contexts requires further scholarly attention. For example, I showed that, among Trump supporters, statements on such issue areas as immigration, abortion, and gun rights are more likely to trigger moral flexibility than others. This means that we are unable to speculate about how Trump supporters would react to co-partisan misinformation about areas that are relatively less salient and polarizing. Future research employing a larger sample size and using a larger selection of issues might prove useful in furthering our understanding of how the nature of policy issues interacts with the justifiability of misinformation regarding them.

Future research can build on the paper’s findings in several ways. First, longitudinal studies examining whether moral flexibility in response to misinformation is stable over time or contingent on particular campaign dynamics might prove useful. Partisan moral flexibility, as well as the strength of attitudes, may be weaker outside campaign periods. Second, comparative work could explore how these dynamics operate in different institutional and cultural settings, particularly in multiparty or less polarized systems. Finally, future studies should consider the role of elite cues, social media environments, and varying fact-checking interventions in shaping voters’ tolerance for falsehoods.

Supplemental Material

Supplemental Material for Moral justification for misinformation: Evidence from a survey experiment during the 2024 U.S. presidential campaign by Tevfik Murat Yildirim in Party Politics.

Footnotes

Acknowledgments

I thank Zach Dickson and Hande Eslen-Ziya for their comments on an earlier version of this manuscript. I am also grateful to the anonymous reviewers for their insightful suggestions, which greatly improved the paper.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study is financially supported by the Research Group on Populism, Anti-Gender, and Democracy, Faculty of Social Sciences, University of Stavanger.

Data availability statement

Replication materials (data and code) are available from the author’s Harvard Dataverse.
