Abstract

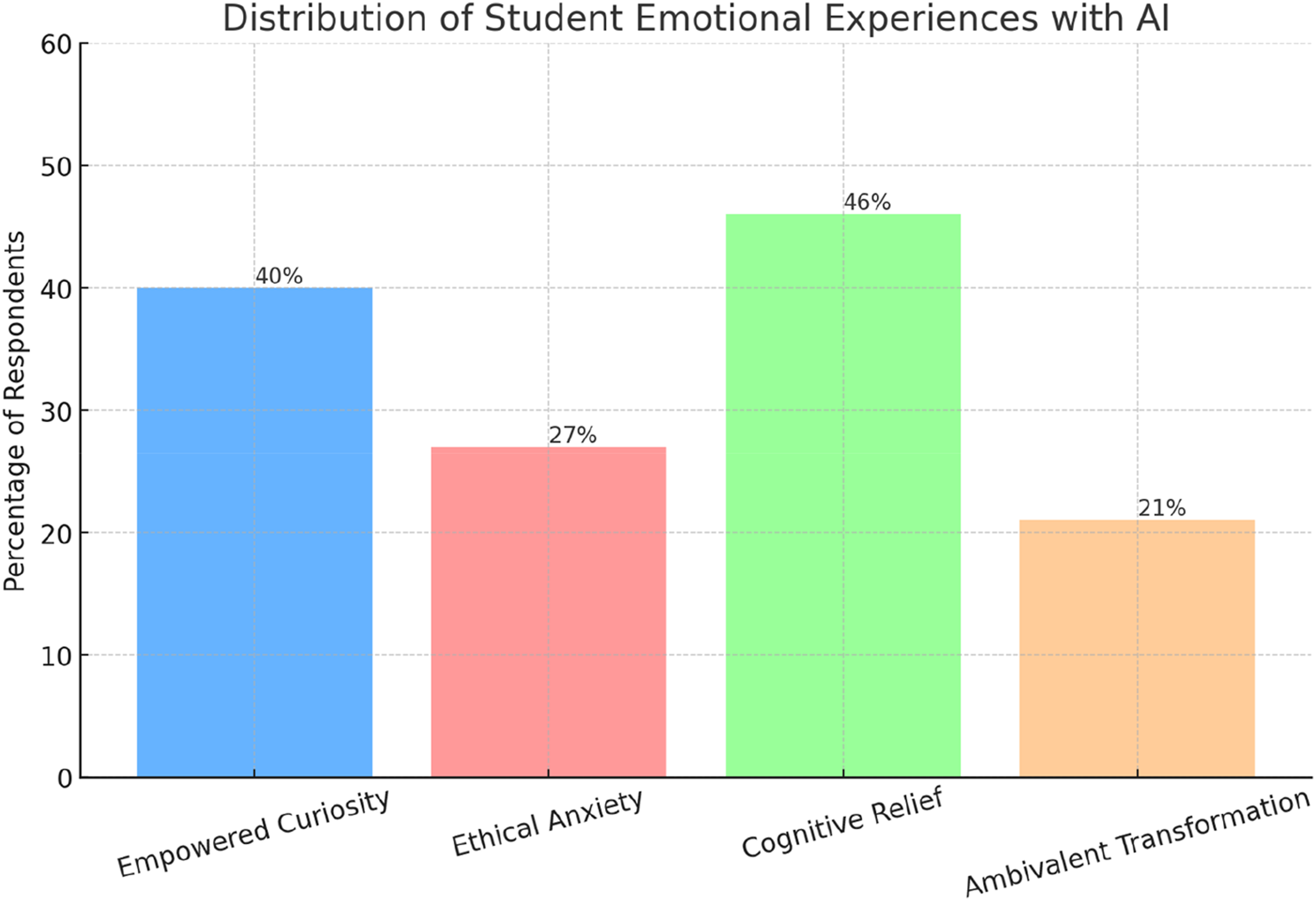

This study explores the emotional experiences of students using Large Language Models (LLMs) such as ChatGPT in higher education from a Bakhtinian, dialogic perspective. This perspective helps explain emotions that arise in communication among humans, non-humans, and the self, and it examines the distinctive I-It emotional tensions of human-AI interaction. LLMs are treated as dialogic partners, not mere tools. Current research lacks studies on whether AI-generated emotions differ from human-generated emotions. This study aims to understand students’ lived emotional experiences and the subjective meanings those experiences hold for them. Through an anonymous open-ended survey of 195 students, four key emotional themes were identified: Empowered Curiosity, Ethical Anxiety, Cognitive Relief, and Ambivalent Transformation. Findings indicate that AI systems introduce new emotional responses distinct from those generated in human interactions, characterized as ambiguous assemblages of liberating and morally questionable meanings. This research contributes to a new cultural understanding of the psychological impact of AI in education and discusses the development of emotionally aware, AI-enhanced instruction that helps students gain and develop emotional authorial agency in and through learning.

Keywords

Introduction

This study explores the emotional experiences of students using Large Language Models (LLMs) such as ChatGPT in higher education from a Bakhtinian, dialogic perspective (Matusov et al., 2023). In this view, LLMs are dialogic partners in learning, not simple tools. All emotional experiences are dialogic because they emerge from and within communication among people. As Garvey and Fogel (2007) observed, all «self-experience is a dialogical and emotional experience, whether the dialogue occurs in the context of an interpersonal or intrapersonal communication» (p. 55). Bakhtin wrote that the human self is «a unified and united event of being» who lives and becomes conscious of herself through relations and dialogues with others. However, there seems to be little consensus about what kinds of emotional experiences emerge between the human self and AI, specifically LLMs such as ChatGPT4. Numerous studies investigate emotional responses, interventions, and assessments in AI educational contexts, ranging from which emotions communication with AI generates, through how AI emotionally supports students’ assessment and participation in education, to how AI recognizes and predicts students’ emotions (Rechowicz & Elzie, 2024; Vistorte et al., 2024; Yang & Zhao, 2024). Yet no current studies explore whether these AI-generative emotions in students differ from human-generative emotions. How are AI-induced emotions different from, or the same as, human-generated ones? What are these emotions?

The purpose of this study is to understand students’ lived experience and its subjective meanings for them from a dialogic theoretical perspective. My findings, grounded in students’ own words, suggest that AI systems introduce new emotional responses that differ from the emotions generated by human-human interactions. The main difference is that AI-generative emotions, which, following Yang and Zhao (2024), I call AI-induced emotions (AIIE), are ambiguous assemblages of both liberating and morally questionable emotions. This research contributes to a new cultural understanding of the psychological impact of AI integration in education and offers implications for developing emotionally aware, AI-enhanced instruction that helps students gain and develop emotional authorial agency in and through their learning experiences (Matusov, 2024).

Literature review

The integration of Artificial Intelligence (AI) in education, particularly Large Language Models (LLMs) like ChatGPT, is a relatively new domain of research, but it builds on earlier work on emotions in education. For example, Pekrun et al. (2002) conducted a comprehensive study on academic emotions in students’ self-regulated learning and achievement. They introduced the control-value theory of achievement emotions, which posits that students' emotions are determined by their appraisals of control and value in academic settings. Students’ emotions span a diverse spectrum, from positive to negative. Interestingly, positive emotions at the beginning of the term were associated with higher achievement. The researchers found that “academic emotions are significantly related to students' motivation, learning strategies, cognitive resources, self-regulation, and academic achievement” (p. 91). They also found that students’ emotions depend on their learning environment and that emotions affect students’ sense of learning and achievement (p. 102). A key limitation of this study was its focus on Western, educated populations, which may limit its generalizability to other cultural contexts.

Furthermore, Schutz and Lanehart (2002) provided a foundational perspective on emotions in education. They argued that “emotions are intimately involved in virtually every aspect of the teaching and learning process and, therefore, an understanding of the nature of emotions within the school context is essential” (p. 67). Their work highlighted the need for more research on emotions in educational settings but was limited by its theoretical nature, lacking empirical data to support its claims. Both Pekrun et al. (2002) and Schutz and Lanehart (2002) observed that researchers and instructors usually neglect emotions in education. Nevertheless, emotions play a critical role in learning, with a strong impact on students’ education, achievement, and satisfaction. Without emotions, education loses meaning and vitality. Schutz and Lanehart (2002) argued that «an understanding of the nature of emotions in the school context is important and has a lot of promise for informing the understanding of teaching, motivation, and self-regulated learning» (p. 67). Yet what this understanding entails remains unclear and unproblematized.

In addition, Baker et al.’s (2019) report on AI in education is an early overview of AI applications in schools and colleges. They identified several potential benefits of AI in education, including personalized learning and automated assessment. However, they also noted challenges, stating that “AI systems can perpetuate and amplify existing biases” (p. 28). A limitation of this report was its speculative nature, as many of the AI applications discussed were still in the early stages of development or implementation. Holmes et al. (2019) conducted a systematic review of AI in education, identifying key trends and future directions. They found that AI has the potential to “support adaptive learning and assessment, provide intelligent tutoring systems, and facilitate collaboration” (p. 12). They also noted ethical concerns, particularly around data privacy and algorithmic bias. A limitation of this review was its broad scope, which may have prevented in-depth analysis of specific AI applications in education.

Also, Arroyo et al. (2014) investigated the use of AI for detecting and responding to student emotions in intelligent tutoring systems. They developed a system that used multiple sensors to detect student emotions and adapt instruction accordingly. The researchers found that “students who received emotion-sensitive support showed improved learning gains and more positive attitudes towards mathematics” (p. 22). The main limitations are the small sample size and short duration of the study, which raise questions about long-term effects and generalizability, and the absence of students’ own voices. Yang and Zhao (2024) conducted a systematic review of AI’s role in detecting and regulating emotions in online learning. They found that “AI-based emotion detection systems show promise in identifying students' emotional states during online learning, potentially allowing for timely interventions” (p. 100923). However, they also noted that “most studies in this field are still in early stages, with limited evidence of long-term effectiveness” (p. 100924). It remains unclear what kinds of emotions students feel during AI-infused learning. These studies collectively provide a foundation for understanding the intersection of AI, emotions, and the culture of education. However, they also reveal gaps in our knowledge, particularly regarding the specific emotional experiences of students interacting with AI tools like LLMs in higher education. This underscores the need for further research in this area, especially studies that apply dialogic theory to understand the unique emotional dimensions of AI-enhanced learning environments.

Theoretical framework

I use the Bakhtinian perspective on dialogic learning as a theoretical framework, informed by our earlier work with ChatGPT4 and Dr. Smith under the leadership of Dr. Matusov (Matusov et al., 2023). As we found, AI does not have the dialogical self that people have; instead, it has a discursive self. As Matusov et al. (2023) explain, «ChatGPT4 does not author I-positions, instead it produces it-positions… ChatGPT4 either generates its own it-positions or presents somebody else’s positions. In the latter case, ChatGPT4 does not provide so-called ‘double voicedness,’ as often (but not always!) humans do» (p. 17). The term “it-positions” is crucial here. Unlike human I-positions, which are personal and emotionally invested, AI’s it-positions are impersonal and lack the ontological properties of human-generated positions. As Matusov wrote, «The abstractness rather than personalness of ChatGPT4 makes observed and perceived patterns of communication such as felicity, commitment to truth and safety, caring about a respondent, civility, politeness, biases, etc. fake, empty, pretense, formulaic, impersonal, ‘as if,’ etc.» (Matusov et al., 2023, p. 18). This discursive self of AI can generate coherent and contextually appropriate responses, but it lacks embodied engagement with the human self. The AI can simulate these qualities, but it does not genuinely possess them, since it lacks a physical body and the ontological ecology of being that comes with one. AI lacks life.

Understanding this fundamental difference between the human dialogical self and the AI’s discursive self is crucial for my study. It forms the basis for my hypothesis that interactions between humans and AI may generate unique emotional experiences, as humans engage with an entity that can produce coherent discourse but lacks the personal, emotional, and ontological qualities that typically characterize human-human interactions. I hypothesize that the interaction between the human dialogical self and the AI discursive self generates what Yang and Zhao (2024) term AI-induced emotions (AIIE). I assume that these emotions are not ordinary human-human emotions, since they lack the mediation of other human beings, yet they still carry an AI-induced otherness. What is this otherness?

I also assume that this AIIE otherness is qualitatively different from emotions arising from human-human interactions. The difference stems from the juxtaposition of the human dialogic self (I) and AI discursive selves (It-positions) in a new chronotope (Bakhtin, 1981; Matusov et al., 2023). Bakhtin (1981) wrote, «In the literary artistic chronotope, spatial and temporal indicators are fused into one carefully thought-out, concrete whole. Time, as it were, thickens, takes on flesh, becomes artistically visible; likewise, space becomes charged and responsive to the movements of time, plot and history» (p. 84). The AI discursive self, or selves, may become fused with the human self as in a cyborg (Matusov et al., 2023), and this cyborg I-It identity emerges in and through the new AIIE chronotopes of learning. As we discussed in our previous work on ChatGPT4, a cyborg is a hybrid between a machine and a human organism (Haraway, 1991; Matusov et al., 2023). As Haraway (1991) wrote, «A cyborg is a cybernetic organism, a hybrid of machine and organism» (p. 149). In this sense, human beings become cyborgs through their interaction with AI.

Applying this to AI-enhanced learning, I can understand how the integration of AI alters the taken-for-granted spatiotemporal experience of learning, of dialogue, and of experience itself. The “altered chronotope” of a cyborg suggests a fundamental shift in how time and space are experienced and mediated by AI. The chronotope fuses space and time. AI acts almost instantly, within seconds, and it exists in no specific place. Both its time and its space are imaginary, existing only in digital domains. This altered chronotope may contribute to the emergence of AIIE by creating novel contexts for emotional experiences and cyborg identities.

This dialogic theoretical framework allows me to explore the complex emotional landscape that emerges when students engage with AI in educational contexts. It provides a lens through which I can examine how the dialogic nature of emotions manifests through AI, and how these new forms of dialogic interaction might give rise to unique emotional experiences and new understandings of them. By applying this Bakhtinian perspective to the study of emotions in AI-enhanced learning, I hope to uncover the novel, hybrid, altered ways in which students’ emotional experiences are shaped by their interactions with AI. This approach not only contributes to our collective, cultural understanding of emotions in educational contexts but also offers insights into the broader implications of AI integration in education.

Methodology

The goal of this study is to explore students’ emotional experiences that emerge through and from their interaction with any AI system of their choice in higher education. Specifically, this research aims to: 1) identify, define, and categorize the key emotions students experience; and 2) understand and explain how AIIE differ from human-human emotions. I conducted an anonymous open-ended survey with 195 college students who use LLMs for academic and professional development purposes at a Canadian higher education institution. The participants were pursuing various fields of study, including business, technology, and arts. I created a secure, anonymous survey for all students in the last term of their work-integrated learning through projects and self-directed studies, which I oversee as their coordinator; because participation was voluntary, respondents were self-selected from this group rather than randomly sampled. I led a professional development session to teach them AI-based learning skills in which AI is treated as a dialogic partner (Matusov et al., 2023). The survey was optional and fully anonymous; it was published on Moodle for students to answer at their leisure if they chose to. Participants were informed about the purpose of the study, the voluntary nature of their participation, and their right to withdraw at any time without penalty. Informed consent was obtained electronically before participants accessed the survey. I opened the survey at the beginning of their term and closed it at the end, giving all students the opportunity to express their views.

The survey included the following key questions: (1) How do you perceive LLMs such as ChatGPT? What does it mean to you? (2) What kind of LLMs do you use everyday for learning and daily life? (3) How do you use it? Please explain your usage and engagement with LLMs. (4) How does your usage of LLMs make you feel? Describe your feelings and emotions associated with the use of ChatGPT or any other AI. (5) Open-ended comments: Any additional thoughts or feelings about your experience with LLMs?

These open-ended questions were designed to elicit detailed reflections on students’ emotional responses during and after their interactions with LLMs. Through these questions, students could write about their perceptions, their usage, and the emotions and feelings generated by and through their interactions and dialogues with AI systems of their choice. According to Ferrario and Stantcheva (2022), open-ended questions help researchers understand new phenomena without a predetermined set of expectations because participants can write freely. Moreover, open-ended survey questions elicit «gut reactions»; «these reactions are informative, as they reflect what a respondent thinks and will keep thinking, absent more learning or targeted reflection. . . . answers to open-ended questions capture the first-order considerations that matter to people and the aspects of an issue that are top of mind for them» (p. 2). Such immediate and spontaneous responses are especially important in exploring emotions, because they capture the most authentic and unfiltered emotional states of respondents (Cassels & Birch, 2014; Forman et al., 2008; Haddock & Zanna, 1998; Hansen & Świderska, 2024; Kragel et al., 2016). Specifically, Hansen and Świderska (2024) observed that researchers rarely allow participants to express their thoughts in their own words, often because of the closed-ended structure of surveys; open-ended questions generate «data that otherwise might not be possible to obtain from theory and the researchers' reasoning» (p. 4803). Kragel et al. (2016) found that the brain’s spontaneous fluctuations during rest reflect a dynamic range of emotional states that are genuine and not influenced by external stimuli. By allowing participants to express their thoughts freely, I could gather data that more accurately represent these naturally occurring emotional states, providing deeper insight into participants’ unique, idiosyncratic emotional processes.

The subjective dimension of an open-ended survey about perceptions, feelings, and thoughts is particularly important because it provides rich answers to research questions about emotions (Cassels & Birch, 2014). My open-ended questions allow for spontaneous and immediate responses, which are crucial for capturing authentic emotional states, and they encourage respondents to express themselves freely, potentially revealing deeper insights into their perceptions, attitudes, and emotional responses to AI technologies.

Data analysis followed a thematic approach focused on identifying the emergent themes related to emotional experiences that were most significant to participants. A thematic approach is well suited to an open-ended survey of participants’ emotions and perceptions (Hansen & Świderska, 2024). More specifically, I used the meaning extraction method (Chung & Pennebaker, 2008; Markowitz, 2021). Drawing on the foundational work of Chung and Pennebaker (2008), Markowitz defines the goal of the meaning extraction method as «to form a simple and interpretable number of themes from text data using content words (e.g., nouns, verbs, adjectives)» (p. 3). This keeps the themes close to the intended and expressed meanings of the participants. Instead of using the traditional Meaning Extraction Helper (MEH) tool, I employed Claude 3.5 Sonnet, an advanced AI language model, to assist in the meaning extraction process and the thematic analysis, drawing on its natural language processing capabilities (Claude 3.5 Sonnet, 2024). As Markowitz (2021) advises, I instructed Claude 3.5 Sonnet to identify recurring themes, keywords, and phrases related to emotional experiences and perceptions of LLMs, and it provided frequency data on the identified themes and keywords. I then used Claude 3.5 Sonnet as a dialogic partner to help me focus and distill the contextual meanings of the responses (Matusov et al., 2023). I used this output as a starting point for further manual refinement and interpretation in my thematic analysis.
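To make the first stage of the meaning extraction method more concrete, the following is a minimal Python sketch, assuming the procedure as Chung and Pennebaker (2008) describe it: keep the content words that appear in a sufficient share of the texts, then record for each response whether each word is present. It is an illustration only; this study used Claude 3.5 Sonnet, not code like this. The stop-word list, the `min_share` threshold, and the function names are hypothetical, and the sample inputs are excerpts of respondent quotes reported in the Findings.

```python
import re
from collections import Counter

# Hypothetical stop-word list for illustration only: it removes common
# function words so that mostly content words remain. A real analysis
# would use a fuller list or a part-of-speech tagger.
STOP_WORDS = {
    "a", "an", "and", "the", "it", "is", "i", "i'm", "me", "my", "we",
    "to", "of", "by", "for", "with", "in", "on", "out", "that", "this",
    "but", "am", "just", "like",
}


def content_words(text: str) -> set[str]:
    """Lowercase, split on non-letters (keeping apostrophes), drop stop words."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return {t for t in tokens if t not in STOP_WORDS}


def meaning_extraction_matrix(responses: list[str], min_share: float = 0.05):
    """Build a binary response-by-word matrix of frequent content words.

    A word is kept if it appears in at least `min_share` of the responses
    (hypothetical threshold). Row i, column j is 1 when response i
    contains vocabulary word j, else 0.
    """
    word_sets = [content_words(r) for r in responses]
    # Document frequency: in how many responses each word appears.
    doc_freq = Counter(w for ws in word_sets for w in ws)
    cutoff = min_share * len(responses)
    vocab = sorted(w for w, n in doc_freq.items() if n >= cutoff)
    matrix = [[1 if w in ws else 0 for w in vocab] for ws in word_sets]
    return vocab, matrix


if __name__ == "__main__":
    sample = [
        "It makes me feel excited and curious.",
        "It just makes work easier by giving out content-specific "
        "information within a few seconds, relieving stress.",
        "I feel guilty using AI, like I'm cheating.",
    ]
    vocab, matrix = meaning_extraction_matrix(sample, min_share=0.5)
    print(vocab)   # ['feel', 'makes']
    print(matrix)  # [[1, 1], [0, 1], [1, 0]]
```

In the full method as Chung and Pennebaker (2008) describe it, such a binary matrix is then analyzed with principal components analysis (with varimax rotation) to group co-occurring words into candidate themes; that step is omitted here to keep the sketch dependency-free.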

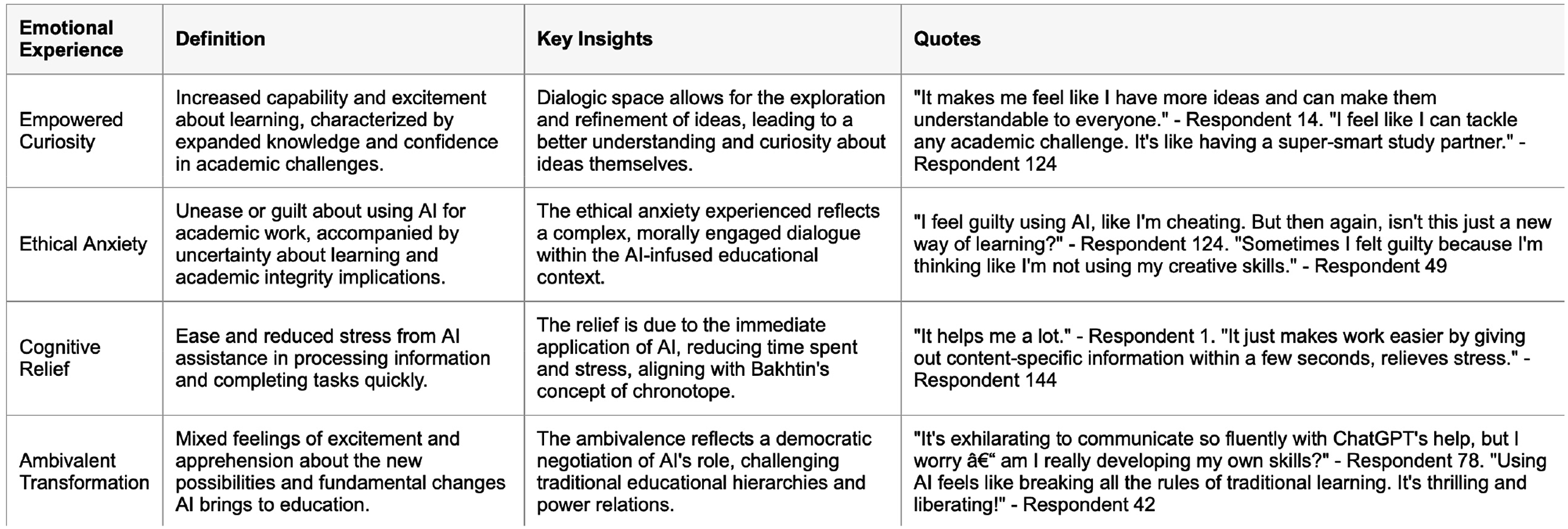

As a theoretical lens, Bakhtin’s theory of dialogue helped me define and explore emergent themes. In my analysis, I looked for multiple, potentially conflicting perspectives on LLMs within individual responses, recognizing that a single participant might express various “voices” or viewpoints (Bakhtin, 1981). I treated each response as a “dialogue” in itself, reflecting Bakhtin’s idea that all utterances are in dialogue with other texts and discourses (Bakhtin, 1981). This approach allowed me to explore how participants’ views on LLMs might be shaped by various societal discourses and personal experiences. My manual reading and validation of the generated themes were also shaped mainly by the literature. I wrote memos about each theme, with participant-led definitions that stayed close to participants’ own words and intended meanings. Leavy (2017) defines memo writing as a way of «thinking and systematically writing about data. . . . Memos are links between coding and interpretation and they document [my] impressions, ideas and emerging understandings» (p. 152). I wrote memos and stored them in the same Claude 3.5 Sonnet chat, both for documentation and to ask for alternative perspectives on my interpretations and, perhaps, their accuracy (e.g., Sinha et al., 2024). As Sinha et al. (2024) wrote, AI models can help researchers see hidden and often taken-for-granted findings. In my case, my memos revealed ambiguous responses that were difficult to understand within a single emotional theme. I expressed my concerns to Claude 3.5 Sonnet and worked through my frustration that I might be missing the true meaning of these expressions. Claude 3.5 Sonnet helped me re-analyze my interpretations and prompted me not to simplify their meanings but to leave the definitions complex and somewhat ambiguous, to be truer to the data. It also generated visual charts that let me see the data, including the generative codes and themes (Figure 1) and the data-grounded definitions of these themes (Figure 2). Claude 3.5 Sonnet provided interesting explanations, which I wrote back into memos and reflected on in light of my literature base to document new, unexpected awareness, confusion, perplexity, and meanings.

Figure 1. Generative themes.

Figure 2. Data-grounded definitions of themes.

Findings

Empowered Curiosity

Students described this emotional experience as a complex feeling of increased capability and excitement about learning, characterized by a sense of expanded knowledge and confidence in exploring ideas. For example, one student wrote, «It makes me feel like I have more ideas and can make them understandable to everyone» (Respondent 14, hereafter R14). Another wrote, «It makes me feel excited and curious. It’s like having a knowledgeable virtual friend who is always there to help and share insights, which is truly fulfilling and empowering» (R130). Another student wrote, «I use AI for better language, compare my ideas with AI ideas» (R73). Yet another noted, «Using LLMs like ChatGPT makes me feel empowered and efficient. They provide a sense of confidence knowing I have a reliable tool to assist with diverse tasks» (R190). Another wrote, «I feel like I can tackle any academic challenge. It’s like having a super-smart study partner» (R175). This sense of empowerment was induced mainly by students’ interactions with ChatGPT 3.5, as they were able to explore their ideas freely in chats.

The free and unconstrained back-and-forth interaction with AI seems to create a dialogic space where students can explore and refine their ideas and, driven by curiosity, understand them better (Bakhtin, 1981). Having more ideas and making them understandable meant empowerment and greater curiosity about the ideas themselves: not about which ideas were best or worst, and not about any confrontation over ideas with anyone, not even with the self. This finding echoes Wegerif’s (2016) experiments with a dialogic space in his classroom. He wrote, «dialogic theory suggests that we learn not so much by replacing wrong ideas with right ideas, but more often by augmenting existing perspectives with new perspectives which enable us to see further or better or just differently (Wegerif, 2013). Dialogic Space, as a concept, builds on and augments a range of related space-type notions» (para. 8). ChatGPT made this augmentation possible. Students’ interactions with ChatGPT created a dialogic space of augmented ideas, which they experienced as empowered curiosity. Each chat had its own unique dialogic space, meaningful to each student on their own terms. Hence, empowerment was the key by-product of their dialogues with AI, and curiosity took the form of empowered exploration of a dialogic space with AI rather than confrontation or the judging of ideas as best or worst.

Ethical Anxiety

Students described feelings of unease or guilt about using AI for academic work, often accompanied by uncertainty about its broader cultural implications for learning and academic integrity. For example, a student wrote, «I feel guilty using AI, like I’m cheating. But then again, isn’t this just a new way of learning?» (R124). Others wrote, «I feel like it’s going to take over the educational system because almost all of the students use it and it’s so convenient that it answers everything and students does not need to work or their own research for a project or assignment» (R90) and «By using these LLMs, we wont use our brain for anything» (R103). Another student wrote, «Sometimes I felt guilty because I’m thinking like I’m not using my creative skills» (R50). Ethical anxiety is a novel emotion that is hard to label and understand because it emerges from students’ applications of AI and its unique dialogic space. The contradictory emotion of ethical anxiety suggests that students are in their dialogic spaces, trying to make sense of their learning through deep and personal engagement (Wegerif, 2013). The new way of learning carries a question mark; hence the student contemplates what is new about it and how it makes them feel. This space compels them to think morally and to make moral judgments, which signifies their personal investment, responsibility, and authorial agency in their learning (Matusov et al., 2023). Bakhtin (1981) wrote:

The dialogic nature of consciousness, the dialogic nature of human life itself. The single adequate form for verbally expressing authentic human life is the open-ended dialogue. Life by its very nature is dialogic. To live means to participate in dialogue: to ask questions, to heed, to respond, to agree, and so forth. In this dialogue a person participates wholly and throughout his whole life: with his eyes, lips, hands, soul, spirit, with his whole body and deeds. He invests his entire self in discourse, and this discourse enters into the dialogic fabric of human life, into the world symposium. (p. 293)

When students engage in dialogue with the discursive self of AI (It), they become more conscious of their own I-position. They begin to question the moral implications of using AI in learning. AI cannot answer for them regarding their moral agency or the ethics of human involvement in AI-infused learning. Yet the It-positions evoke a sense of ontological difference and a more conscious sense of the I, infused with answerability for their human agency and participation in AI dialogues (Matusov et al., 2023).

The AI-infused dialogic space is filled with complex emotions and a multiplicity (Bakhtin, 1981) of students’ inner voices that emerge in moral tension with each other and in ongoing dialogue with existing educational spaces. Consequently, their learning becomes lively and alive through their expression of different ideas, augmented and enriched by AI as well as by the complex emotions of moral self-judgment.

Cognitive Relief

Students described this as a sense of ease and reduced academic stress resulting from AI’s assistance in processing information and completing tasks quickly. As students wrote, «It helps me alot» (R1) and «It just makes work easier by giving out content-specific information within a few seconds, relieving stress» (R144). The sense of relief is consistent: AI makes students’ work easier to handle, understand, and process. This convenience relieves them from feeling overwhelmed by overthinking. Moreover, students can immediately apply ideas to their own lives rather than rely on abstract theories decontextualized from their lived experience. Bakhtin (1979/1986) wrote that «understanding comes to fruition only in the response. Understanding and response are dialectically merged and mutually condition each other; one is impossible without the other» (p. 282). Hence, students’ emotions of cognitive relief are dialogic because they emerge in response to AI-generated content. The responsive nature of AI makes learning feel less stressful.

Time is another factor that enables this sense of cognitive relief. Instead of spending time looking for content in the library, students use AI to find content specific to their inquiry. Hence, saved time and easy access to content reduce, if not eliminate, stress. This emotion is interesting because it combines stress relief with a reduction of cognitive or information overload. Its immediacy echoes Bakhtin’s concept of the chronotope. AI is reshaping students’ experience of time and space in their learning process, creating a new chronotope of being in the moment, of being in the immediate collapse of time and space. Bakhtin (1981) wrote that «Every entry into the sphere of meanings is accomplished only through the gates of the chronotope» (p. 258). The AI-enhanced learning process represents a dialogic space, or a new chronotope, in which the traditional constraints of time and space in academic work dissolve, and this dissolution makes students feel cognitively relieved.

Ambivalent transformation

Students described mixed feelings of excitement about the new possibilities AI offers for learning, coupled with apprehension about the fundamental changes it brings to the educational experience. Students wrote, «It’s exhilarating to communicate so fluently with ChatGPT’s help, but I worry – am I really developing my own skills?» (R78). Interestingly, another student wrote, «Using AI feels like breaking all the rules of traditional learning. It’s thrilling and liberating!» (R42). A third wrote, «It’s exciting yet unsettling» (R87). This emotion of thrill and liberation, combined with a sense of ambivalence and concern, is not contradictory; it is characteristic of the intrinsic tensions of and in a dialogic space (Shugurova, 2020, 2024). In a dialogic space, traditional hierarchies and power relations are often misaligned, subverted, and transformed by the multiplicity of voices (Bakhtin, 1965). The ambivalent transformation contextualizes Bakhtin’s concept of the dialogic nature of meaning-making. As Bakhtin (1986) wrote, «Truth is not born nor is it to be found inside the head of an individual person, it is born between people collectively searching for truth, in the process of their dialogic interaction» (p. 110). In this case, students are collectively searching for the truth about the role of AI in their education through their dialogic interaction with the technology and with themselves. The tension between excitement and apprehension, liberation and concern, creates a rich dialogic space where new understandings of learning and knowledge creation are negotiated rather than enforced, imposed, or foisted through compelled curriculum and obedience (Matusov, 2020). This dialogic space, characterized by ambivalence and transformation, serves as a democratic microcosm of the broader societal negotiation of AI’s role in culture and education.

Implications for future research

This study contributes to the field of AI-induced emotions by identifying a new category of emotions. While previous research has explored emotions in traditional learning contexts (Pekrun et al., 2002), these findings suggest that AI introduces a unique emotional landscape, or emotional dialogic space, that merits further investigation. For example, the generative theme of Empowered Curiosity may align with and extend Bandura’s (1997) self-efficacy theory. These results suggest that AI tools may enhance students’ beliefs in their own capabilities in novel ways, potentially expanding the concept of self-efficacy through diverse emotional expressions. However, the data did not provide enough evidence to support claims about self-efficacy, and future dialogic research could help clarify this process.

Furthermore, identifying Ethical Anxiety as a key emotion addresses a gap in the literature on the ethical implications of educational technology from the student perspective. This finding calls for a more dialogic understanding of how students navigate the moral complexities of AI use in academic settings, an understanding that could help them make ethical decisions and articulate their moral judgments (Matusov, 2024). Moreover, the theme of Cognitive Relief contributes to discussions of cognitive load theory (Sweller, 1988) in the context of AI-enhanced dialogic learning. While AI appears to reduce cognitive load in certain tasks, my findings raise important questions about the long-term implications for cognitive development and problem-solving skills.

Limitations

The demographic composition of the sample is unclear because the survey was anonymous and administered only once. More research is needed on the impact of AI and AIIE on students from diverse cultural backgrounds and in different educational settings. Further qualitative research could also help researchers and educators understand students’ lived experience and co-create meaning with them. The time frame of this study did not permit further investigation: students had graduated by the time the survey closed and were unavailable for follow-up focus groups or in-depth interviews.

Conclusion

This study has provided novel insights into the complex emotional landscape that unfolds as students interact with Large Language Models (LLMs) like ChatGPT. These emotions are not mere reactions to technology; they are part of a deeper, dialogically constructed experience in which students create their sense of learning through meaning-making and authorial agency.

The findings suggest that the relationship between students and AI can be viewed through the metaphor of a cyborg identity. The AI, devoid of a physical or emotional self yet capable of engaging in seemingly meaningful dialogues, creates new emotional responses in human beings and forms a new chronotope where traditional boundaries of time and space are blurred, fluid, unstable and changing with every utterance. This shift has profound implications not only for how educators and students perceive the role of AI in education but also for how we understand ourselves and our capacities as learners and emotional beings. In conclusion, this study contributes to a new understanding of the psychological and cultural impacts of AI integration in education, calling for a dialogic investigation and appreciation of how technology not only changes what we learn but also who we become in the process of learning.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.