Abstract

The debate about lethal autonomous weapons systems (LAWS) characterises them as future problems in need of pre-emptive regulation, for example, through codifying meaningful human control. But autonomous technologies are already part of weapons and have shaped how states think about human control. To understand this normative space, I proceed in two steps: first, I theorise how practices of designing, of training personnel for, and of operating weapon systems integrating autonomous technologies have shaped normativity/normality on human control at sites unseen. Second, I trace how this normativity/normality interacts with public deliberations at the Group of Governmental Experts (GGE) on LAWS by theorising potential dynamics of interaction. I find that the normativity/normality emerging from practices performed in relation to weapon systems integrating autonomous technologies assigns humans a reduced role in specific use of force decisions and understands this diminished decision-making capacity as ‘appropriate’ and ‘normal’. In the public-deliberative process, stakeholders have interacted with this normativity by ignoring it, engaging in distancing or positively acknowledging it – rather than scrutinising it. These arguments move beyond prioritising public deliberation in norm research towards exploring practices performed at sites outside of the public eye as productive of normativity. I theorise this process via international practice theories, critical security studies and Science and Technology scholarship to draw out how practices shape normativity, presenting ideas of oughtness and justice, and normality, making something appear normal via collective, repeated performances.

Artificial intelligence (AI) technologies are spreading at pace. They create foundational political and ethical challenges because of machine bias, opacity and obscuring accountability across contexts as diverse as government administration, policing, healthcare systems, law, the political economy and surveillance (Chesterman, 2021; Dafoe, 2018). One of the most concerning developments is the weaponisation of AI technologies. This development is often associated with lethal autonomous weapon systems (LAWS) 1 integrating autonomous or AI technologies into sensor-based targeting without human intervention or assessment (ICRC, 2021: 5). What is at stake here is whether humans remain in control over using force.

Since 2017, 2 a Group of Governmental Experts (GGE) at the UN Convention on Certain Conventional Weapons (CCW) has discussed LAWS. Human control is at the centre of this debate as a response to legal questions (can LAWS be used in adherence to international humanitarian law) 3 and ethical/moral questions (should LAWS be used) (Heyns, 2016). The International Committee of the Red Cross (ICRC) has, for example, argued that retaining human control is necessary ‘to protect civilians and civilian objects, uphold the rules of international humanitarian law and safeguard humanity’ (ICRC, 2021). While the GGE has a discussion rather than a negotiation mandate, we can see normativity on human control, in the sense of understandings of appropriateness, emerging here. In 2019, states parties included ensuring human–machine interaction as one of their 11 Guiding Principles on LAWS (UN-CCW, 2019: Annex III). GGE meetings have shown continuous agreement about retaining human control. But what counts as an ‘appropriate’ quality of human control remains open. There is likewise no consensus on moving towards negotiating new international law and enshrining human control as a legal principle. The GGE debate allows for the study of how normativity on human control emerges as part of public deliberations (Bahcecik, 2019; Bode, 2019; Carpenter, 2011; Garcia, 2019; Rosert and Sauer, 2021). But there is an undercurrent to this process. The debate on LAWS characterises such systems as future problems. This is remarkable because it does not acknowledge how states have already used weapons with automated and autonomous features in their targeting functions for decades. 4 In air defence systems (ADS), guided missiles, loitering munitions or active protection systems, motor and cognitive tasks in targeting have already been ‘delegated’ to machines and circumscribe human control (Boulanin and Verbruggen, 2017). So, what shapes the normative space on human control and LAWS?

To address this question, I proceed in two steps: first, I theorise how practices of design, of training personnel for and of using weapon systems with autonomous features, which I analyse via the example of ADS, shape normativity on human control. Second, I examine how this normativity emerging in and through practices, in the process of doing things, interacts with public deliberations about normativity at the GGE. I therefore distinguish between two interlinked processes shaping normativity on LAWS: a practice-based and a public-deliberative one. 5 Taking this perspective reveals that LAWS are part of a more historical and complex normative space than the one that is visible at the GGE. As I demonstrate, the normativity emerging from practices has a minimal quality: it assigns humans a reduced role in specific use of force decisions and understands this diminished decision-making capacity as ‘appropriate’ and ‘normal’. I use the term normativity to highlight its relational constitution rather than referring to a seemingly stable ‘norm’ (Gadinger, 2022; Hofferberth and Weber, 2015; Pratt, 2020, 2022).

In making these arguments, I contribute to International Relations scholarship in three ways: first, by distinguishing between practice-based and public-deliberative processes as sources of normativity, I add both to research in between international practice theories (IPT) and norm research (Bernstein and Laurence, 2022; Bode and Karlsrud, 2019; Gadinger, 2022; Hofius, 2016; Lesch, 2017; Ralph and Gifkins, 2017) and to critical security studies, such as the Paris School, that recognises ‘the productive power of practice’ (C.A.S.E Collective, 2006: 458). Drawing on Science and Technology Studies (STS) insights that technology is deeply social, I argue that practices can be productive of both normativity and normality. Practices can therefore shape ideas of oughtness and make something appear normal. This focus on practices works to counter a prioritisation of public, deliberative forums as sites where normativity emerges that has long shaped norm research (Acharya, 2004; Deitelhoff and Zimmermann, 2020; Keck and Sikkink, 1998; Rosert, 2019). Focusing on how normativity emerges through the performance of practices at sites unseen and preceding public deliberation therefore makes visible ‘previously obscured patterns and processes’ (Bernstein and Laurence, 2022: 77).

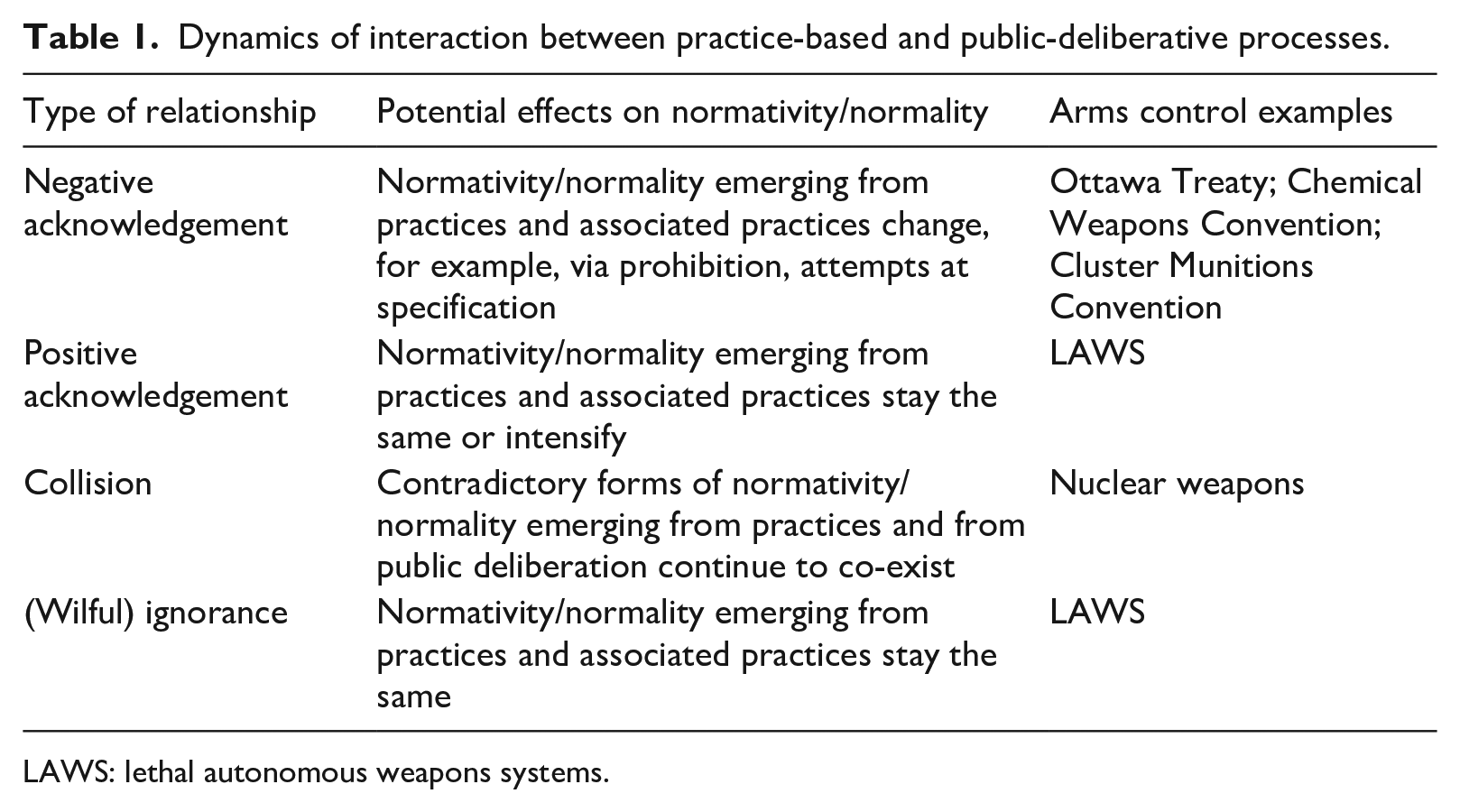

Second, I take research on practices and norms further by proposing four types of interaction between practice-based and public-deliberative processes and their effects on normativity: positive acknowledgement, negative acknowledgement, collision and (wilful) ignorance. This inductive conceptualisation is based on a close study of LAWS and other arms control/disarmament processes.

Third, by investigating the trajectory of normativity on human control, I make a substantial contribution to the literature on LAWS that has covered four strands of inquiry: (a) the extent to which using LAWS may be compatible with or challenge international law (e.g. Crootof, 2015; Heyns, 2016); (b) their effects on strategic stability and the balance of power (Altmann and Sauer, 2017; Payne, 2021); (c) the ethical consequences of their development (Asaro, 2019; Renic, 2020; Schwarz, 2019); and (d) their potential effect on international norms broadly understood (Bode and Huelss, 2018, 2022; Garcia, 2016; Huelss, 2020; Rosert and Sauer, 2021; Williams, 2021). While some policy studies concentrate on human control (Boulanin et al., 2020), there is no systematic, analytical study examining what shapes normativity in this space.

Following an interpretive methodology, I collected and analysed data through four qualitative methods. I focus on ADS as a type of weapon integrating autonomous technologies for two reasons: first, their development since the 1960s offers a long trajectory of how practices manifest, unfold and come to shape normativity; and second, with 89 states operating ADS, such systems have proliferated globally (Boulanin and Verbruggen, 2017: 37).

The remainder of the article is structured as follows: first, I outline how practice-based processes that precede public deliberation produce normativity/normality and how practice-based and public-deliberative processes interact, and present my methodology. Second, I examine how normativity on human control initially emerges in the performance of practices of designing, training personnel for and operating ADS. Third, I analyse how such normativity interacts with that shaped in the public-deliberative process at the GGE (2017–2022). I demonstrate how existing weapons integrating autonomous technologies in targeting, including ADS, have either been side-lined or positively acknowledged. As a result, the public-deliberative process validates the normativity/normality emerging from the practice-based process without scrutinising its potentially undesirable content. Finally, I draw conclusions and sketch avenues for further research.

Practices, public deliberation and normativity on human control

To track normativity, norm research has typically focused on the point of its public deliberation. Constructivist models have turned to public discussion, deliberation, communication, critique and discourse as ‘trails of communication that can be studied’ (Björkdahl, 2002: 13) to understand emerging norms (Finnemore and Sikkink, 1998; Keck and Sikkink, 1998; Risse et al., 1999). Critical norm research interested in how norms are contested and localised also predominantly captures deliberative, public processes (Acharya, 2004; Bower, 2015; Zimmermann, 2017). As contestation is ‘the range of social practices, which discursively express disapproval of norms’ (Wiener, 2014: 1), it is crucial whether actors have access to the forums where the constitutive debate about norms happens (see Wiener, 2018). But Wiener’s (2014) understanding of contestation, for example, also holds that ‘while mostly expressed through language not all modes of contestation involve discourse expressis verbis’ (p. 1). This can include contestation expressed through practices such as neglect, negation or disregard (Wiener, 2014: 7), but has only been explored in limited ways (Stimmer and Wisken, 2019). Furthermore, norm research has not analysed the normative impact of practices preceding public deliberation and performed outside the public sphere.

Understood as ‘socially meaningful patterns of actions’ (Bicchi and Bremberg, 2016: 394), practices can capture the ‘process[es] of doing something’ (Adler and Pouliot, 2011: 7) by which normativity/normality is constituted in the first place, is sustained or changes (Bueger, 2018; De Franco, 2016; Gadinger, 2022; Nicolini, 2013; Pratt, 2022). 6 Taking practices to be productive of both normality and normativity adds to the research projects associated with both the Paris School of critical security studies and norm research/IPT. Drawing on Foucault, the Paris School has emphasised the constitution of the ‘normal’ as a site of struggle resulting from practices of exclusion and regulation (Bigo, 2002; C.A.S.E Collective, 2006). But they do not speak to questions of normativity, that is, expressions of oughtness and justice. Conversely, scholarship at the intersection of norm research and IPT investigates normativity without considering dynamics of normality, that is, the consequences of the process by which something becomes defined as ‘normal’ or ‘commonplace’. Ontologically, practices and normativity are not separable as the content of ‘norms’ only manifests itself in how practices are performed to enact and sustain them (Frost and Lechner, 2016a; Gadinger, 2022; Neumann, 2002; Wiener, 2009). 7

Building on this for the case of LAWS, practices produce normativity/normality that precedes public deliberation in two ways: first, practices of designing weapon systems with autonomous features embed normative, value-laden choices into the very technologies animating such systems (Adler-Nissen and Drieschova, 2019; Bijker and Law, 2010; Latour, 2005; Pouliot, 2010). This builds on STS scholars who consider technology as deeply social and a reservoir of practices (Bijker and Law, 2010; Franklin, 1999). It contrasts with a positivist view on the process of knowledge creation via experimentation and problem-focused refinement as the basis of the natural sciences and technological development. In focusing on practices of design as sources of normativity on human control, I follow STS scholarship in troubling the boundary-work between technological factuality and value decisions. The target profiles used by weapon systems integrating autonomous technologies require the generation of computational models that translate complex social activities into a problem to be solved as well as an intended goal (Gillespie, 2014). Such design practices encode value-laden choices into the very programming of targeting.

Second, practices of training personnel for and of using weapon systems integrating autonomous technologies in warfare are likewise based on assumptions about human–machine interaction that shape normativity/normality. Such assumptions include the level of trust put into system outputs and the practical set-up of human operators as part of the decision-making loop of weapon systems. The repeated, collective performance of a similar range of practices over time ‘makes normal’ and thereby increasingly ‘acceptable’ (Huelss, 2020). Making normal, associated with notions such as ‘the common, the ordinary, the standard, the conventional, the regular’ (Cryle and Stephens, 2017: 1), therefore interrelates with normativity in indicating incremental, collectively shifting understandings of appropriateness. When it comes to what is considered ‘appropriate’ human control, we may therefore come to see a build-up of normative/normal content through solidifying and travelling practices performed by a wide range of actors at different sites and outside of the public eye.

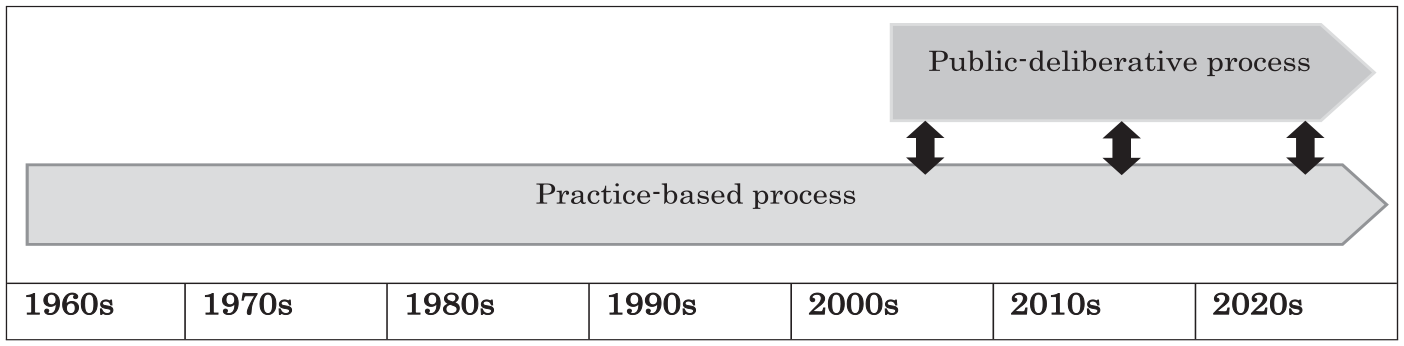

How does this normativity/normality emerging from practices interact with the public deliberation at the GGE? Figure 1 depicts how these two processes have run in parallel since the 2010s, but also how the practice-based process precedes the public-deliberative process by several decades. In this time, normativity/normality surrounding human control of weapon systems with autonomous features emerged gradually and before such technologies were recognised as coherent enough to become objects of public deliberation. 8

Figure 1. Human control: practice-based and public-deliberative processes.

While I focus on LAWS, the differences in the timelines of the practice-based and public-deliberative processes are characteristic of arms control/disarmament: states develop and use military technologies long before there is an international, public debate about their appropriateness. In the absence of public debate, practices around such weapons shape normativity. Of course, this process does not happen in a normative vacuum: states perform practices within a dense normative structure (Björkdahl, 2002; Finnemore, 1996). For example, the principles and prohibitions of international humanitarian law (IHL) present a limiting baseline. However, that baseline is ambiguous in character and individual stipulations contradict each other (Koskenniemi, 2011; Wiener, 2009). This leads to differing assessments of what constitutes ‘appropriate’ action and considerable wiggle room for states performing practices.

Once public deliberation of normativity/normality has started, there are at least four interaction dynamics in between the practice-based and public-deliberative processes that have different effects on emerging normativity: (1) negative or (2) positive acknowledgement, (3) collision or (4) (wilful) ignorance (Table 1). I drew out these dynamics inductively from studying the case of LAWS and a wider range of arms control cases. With this conceptualisation, I therefore want to make a more general analytical contribution to understanding the normative space around military technologies.

Table 1. Dynamics of interaction between practice-based and public-deliberative processes.

LAWS: lethal autonomous weapons systems.

Negative acknowledgement. In the case of negative acknowledgement, stakeholders point out the adverse consequences of normativity/normality emerging from practices. Cases in point are the 1997 Ottawa Treaty that banned anti-personnel landmines or the 1992 Chemical Weapons Convention (Price, 1995, 1998). Here, the public-deliberative processes focused on demonstrating that the practices performed by states (and other actors) who used such systems were not considered normatively ‘appropriate’, for example, due to their indiscriminate effects on civilians or the inhumane injuries they produced. While the creation of new international legal normativity has not completely ended the initial practices, their public deliberation has changed them significantly. In the case of anti-personnel landmines, for example, most states ceased their use, production and trade (International Campaign to Ban Landmines, 2021).

Positive acknowledgement. Interaction via positive acknowledgement affirms existing practices and emerging normativity/normality. This is part of what we see in the case of human control and LAWS, although the public-deliberative process is still ongoing. References to how practices shape normativity are few and far between, but, if made, actors positively affirm such practices as part of the public-deliberative process by understanding them as ‘appropriate’. In the case of positive affirmation, normativity/normality emerging from practices and the associated practices performed by states are not likely to change and may even intensify because of having been ‘sanctioned’ in public deliberation.

Collision. Collision describes a relationship where contradictory practices continue to co-exist after the public deliberation of normativity/normality. The case of nuclear weapons is a good example: the normativity/normality emerging from practices regarding nuclear weapons led to public deliberation in the form of the 1970 Treaty on the Non-Proliferation of Nuclear Weapons and the 2021 Treaty on the Prohibition of Nuclear Weapons. These public deliberations created new normativity/normality and, to a certain extent, re-shaped the normativity/normality emerging from practices, as well as the associated practices themselves (Tannenwald, 1999). At the same time, states still possess and pursue nuclear weapons and consider the normativity/normality that emerged from prior practices as ‘appropriate’: this is underlined by abstentions from the Prohibition Treaty or the debate about nuclear modernisation (Potter, 2017).

(Wilful) ignorance. (Wilful) ignorance means that actors in the public-deliberative process do not explicitly acknowledge normativity/normality emerging from practices or wilfully ignore it, including by engaging in distancing. This is another dynamic we can see in the case of LAWS. Here, also, we should not expect the normativity/normality emerging from practices to change as it has not been publicly deliberated. Of course, such practices and the normativity/normality they produce may still change over time, but this would be a slow, gradual change rather than the potentially explicit, drastic change associated with negative acknowledgement.

Methods

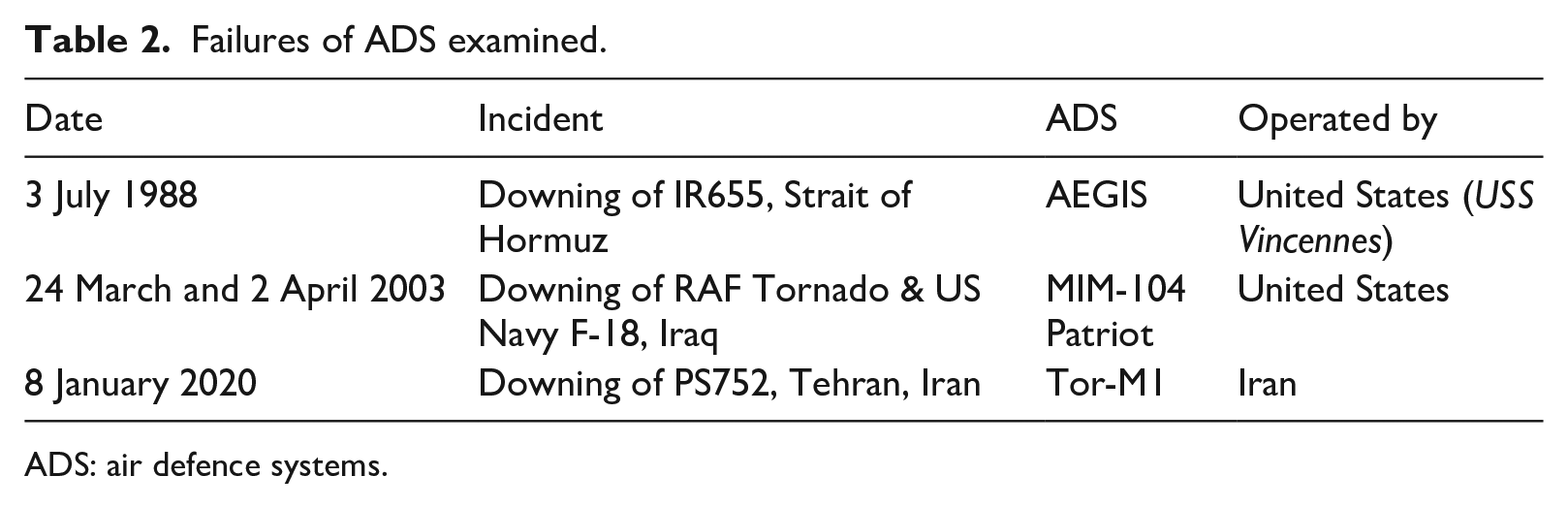

My interpretive study of how normativity/normality on human control emerges in an interplay between practices and public deliberation is based on four qualitative methods, featuring the collection of primary and the analysis of secondary data. First, I was a participant observer at six GGE meetings from 2017 to 2022. 9 Second, from 2017 to 2022, I conducted 28 interviews with states parties, civil society representatives and academics 10 either following the Chatham House Rule or as background information. 11 Third, I co-compiled a qualitative data catalogue of 28 ADS integrating autonomous technologies (Watts and Bode, 2021). Building on open-source data, this catalogue includes systems manufactured by all five recognised ‘leaders’ in developing LAWS (China, the European Union, Russia, South Korea, and the United States), as well as regional powers (Haner and Garcia, 2019; Nadibaidze, 2022; Qiao-Franco and Bode, 2023). Fourth, I examined practices performed in relation to three ADS involved in high-profile failures 12 via content analysis: Iran Air Flight IR655 (1988), Ukraine International Airlines Flight PS752 (2020) and two instances of fratricide in the Second Gulf War (2003) (Table 2). I analysed a mixture of firsthand and official reports, news coverage and literature on human-factor analysis. These failures of ADS opened rare public windows for examining how the otherwise hidden performance of practices shapes the ‘appropriateness’ of autonomous technologies. Interestingly, in none of the cases did this period of scrutiny change practices. The combination of ADS data from the qualitative catalogue and the failures allows for a long-term view on a historically unfolding trajectory of practices since the 1960s.

Table 2. Failures of ADS examined.

ADS: air defence systems.

How normativity/normality emerges in practices: ADS and human control

ADS are used to identify, track and, if necessary, engage airborne threats to protect platforms, military installations and people from attack. ADS can operate in at least two modes of human–machine interaction. In manual mode, the operators must authorise the launch of weapons and manage the engagement process. This is often referred to as ‘human-in-the-loop’ because operators identify specific targets before initiating an attack or choose from a list of targets suggested by the system. In automatic modes, the system ‘can automatically sense and detect targets and fire upon them’ (Roff, 2016). In these modes, also referred to as ‘human-on-the-loop’, operators may only be allocated a time-restricted veto in the targeting process.

While humans therefore remain in-the-loop or on-the-loop, practices of designing, of training personnel for and of operating ADS integrating autonomous technologies have fundamentally changed the quality of human control exercised over the use of force. The role of operators has been simultaneously minimised and made increasingly complex. The normativity/normality emerging from these practices has come to define an understanding of meaningless rather than meaningful human control (MHC) (Bode and Watts, 2021). It has become meaningless because the decision-making capacities of operators do not fulfil prerequisite conditions for MHC derived from literature on human factor analysis: a functional understanding of how the targeting systems ‘make’ decisions, sufficient situational understanding and the capacity to scrutinise machine ‘decision-making’ (e.g. Hawley and Mares, 2012). The following sections examine how states performing practices in relation to ADS integrating autonomous technologies have shaped this diminished quality of human control as normatively ‘appropriate’ and normal at sites outside of the public eye.

Practices of design

States started developing ADS in the 1960s in response to perceiving that the ‘reaction time, firepower, and operational availability’ of existing systems could not handle the threat posed by low-flying, anti-ship missiles (Pike, 1998). This manifested in a concern that human operators have a limited ability to conduct targeting at the speed perceived to be required. The ambition to ‘fight at machine speed’ (Doll et al., 2019) therefore motivates basic practices of design that increasingly minimise the role of human operators in favour of ‘delegating’ more and more targeting tasks to the system.

Such practices are visible in the design of all ship-mounted close-in weapon systems. Systems such as the Russian-manufactured AK-630M, the French-manufactured Crotale and the US-manufactured Phalanx that became operational in the late 1970s, and whose technologically upgraded variants remain in service with militaries today, all have ‘fully automatic’ tracking and targeting capabilities (Watts and Bode, 2021). The AK-630M, for example, ‘does not require human supervision although it can be directed from optical control posts in case of damage’ (NavWeapons, 2016). In the Crotale, all decision-making tasks in target detection and tracking ‘are automated to achieve reduced reaction-times’, while the operator is on-the-loop with a time-restricted ‘option of overriding the sensor automatically selected by the operational software’ (Army Technology, 2021). Other, short-range ADS, such as the Israeli-manufactured Iron Dome, are also capable of ‘identifying, tracking, and engaging targets without human intervention’ (Richemond-Barak and Feinberg, 2016: 494). The Iron Dome makes these engagement ‘decisions’ ‘according to undisclosed algorithms’ that calculate whether an incoming projectile is expected to land in a populated area (Cohen-Lazry and Oron-Gilad, 2016: 26).

Practices of designing autonomous technologies for ADS do not only involve the delegation of motor and sensory tasks but also of cognitive tasks in the form of automated target recognition (Watts and Bode, 2021). This has increased system complexity. For example, the US Army’s Patriot system alone runs on ‘more than 3.5 million lines of software code’ and is designed to be operated with other, even more complex ADS, such as the ship-bound AEGIS (Hawley and Mares, 2012: 4). This networked, multi-tiered cooperation between systems is typical for how ADS are designed to be used (Mizroch, 2012).

Such design practices shape normativity/normality on human control in at least two ways: first, they create significant comprehension barriers limiting the extent to which human operators can follow and understand the system’s functionality. By designing increasingly complex systems, these practices contribute to human operators’ ‘misperceiv[ing] or misunderstand[ing] the system’s behaviour’ (Hawley, 2007: 1). Second, practices of design that increase system complexity make ADS integrating autonomous technologies susceptible to failure. As part of the design process, it is not possible to test and thereby ascertain how the system and its sub-systems will behave across all possible conditions and situations: ‘Designing autonomous machines for war means accepting some degree of error in the future, and [. . .] it means not knowing exactly what that error will be’ (Atherton, 2021). Holland (2021) refers to this phenomenon as the ‘known unknowns’ of designing military systems with autonomous features. Practices of designing weapon systems integrating autonomous technologies therefore include accepting significant risks of failure – and that ‘risk can be reduced but never entirely eliminated’ (Scharre, 2016: 25).

Design practices around target profiles underline such challenges. How to programme target profiles, that is, what to categorise as intended objects of attack, is an important part of automating target recognition. Target profiles may be ‘based on weight (many landmines), heat-shape (certain sensor-fused anti-armour systems), acoustic signature (certain torpedoes), and radar signature (certain counter-missile systems)’ (Moyes, 2019: 4). Target profiles include both intended and unintended objects of attack – in other words, they tend to be widely rather than narrowly defined. This is because defining a wide target profile reduces the risk of false negatives, that is, failures to recognise a potential target because it ‘does not align precisely enough with the target profile’ (Moyes, 2019: 5). This means that civilian aircraft can fall within the target profile of ADS and it becomes the task of the operator to make determinations in specific use of force situations. This necessity of human triangulation was highlighted in the tragic downing of Ukraine International Airlines Flight PS752 by an Iranian Tor-M1 in January 2020: ‘Everything becomes an enemy to the missile – unless you can identify by sight and turn the missile off’ (Grant quoted in Ponomarenko, 2020). But the extent to which this human triangulation can be meaningful is compromised by practices of designing complex ADS. Again, such practices of design are based on accepting a diminished decision-making capacity of human operators and thereby shape normative substance on human control in this direction. In this way, they embed normative choice into the design of the technologies that animate targeting in ADS.

Furthermore, a reliance on ‘technological fixes’ in practices of design, that is, the idea that known system weaknesses could be fixed via technological practices as soon as more ‘sophisticated’ solutions become available, shapes normativity on human control. Such practices speak to the general belief inherent to weaponising AI that protracted problems in warfighting may eventually be successfully addressed through technological solutions. The design of the Patriot system’s targeting parameters, for example, had been associated with several near-miss fratricidal engagements both during the First Gulf War in the 1990s and in training exercises (Hawley, 2017: 6). Rather than communicating these known problems to the operators (US Department of Defense, 2005: 2), they were cast as software problems that could and would be quickly fixed. The two friendly fire incidents of the Patriot system in the 2003 Iraq War demonstrate the consequences of such practices.

Performing design practices that increase system complexity, programme wide target profiles and rest on a belief in technological fixes thereby shapes a minimised form of human control as normal/normative in response to the speed demanded by modern air defence. The challenges that this creates for the system’s understandability and its potential failures are treated as ‘appropriate’ and gradually normalised.

Practices of training

Practices of training personnel for operating ADS integrating autonomous technologies are based on particular assumptions about human–machine interaction that shape normativity/normality on human control. To start with, such training practices conform to a common, general ‘myth’ associated with autonomous systems: that integrating autonomy facilitates tasks conducted by human operators (Bradshaw et al., 2013: 58). In the case of ADS, a belief in this myth has manifested in both ‘reduc[ing] the experience level of their operating crews [and] the amount of training provided to the individual operators and crews’ (Hawley, 2017: 8). The experience level of the Patriot crew involved in the 2003 RAF Tornado fratricide underlines this: ‘the person who made the call [. . .] was a twenty-two-year old second lieutenant fresh out of training’ (Scharre, 2018: 166). The potential inadequacy of training is mentioned in reports published after prominent failures of ADS: press reports after the PS752 disaster referred to ‘a badly-trained and inexperienced crew’ (Bronk, 2020) that lacked the necessary experience to properly operate the system in determining whether unidentified planes were civilian or military (Peterson, 2020). Training practices display a mismatch between positive assumptions about what integrating autonomous technologies means for the human operator and what it actually means to prepare personnel for operating complex ADS under stressful combat situations. The normative effect of such training practices is that human operators of ADS may lack the understanding and expertise needed to potentially overrule targeting prompts by the system. These training practices therefore contribute to shaping a diminished decision-making capacity of operators as an ‘appropriate’ form of human control.

Furthermore, training does not only concern ‘understanding’ the system but also sanctions particular trust regimes in human–machine interaction as ‘appropriate’ and normal. Training practices that lead to either over-trusting or under-trusting the system have consequences for normativity/normality on human control. Over-trust or ‘automation complacency’ (Parasuraman and Manzey, 2010) refers to the tendency to over-rely on automation and to accept the outputs provided by autonomous systems uncritically. In training practices, ‘Patriot crews are trained to react quickly, engage early and to trust the Patriot system’ (Ministry of Defence, 2004: 3). These practices manifested in setting greater store by the veracity of system outputs than by the operators’ own critical ability to triangulate.

Operators of ADS may also encounter situations of under-trust. This appears to have played a role in the AEGIS-equipped USS Vincennes shooting down Iran Air Flight IR655 in July 1988. The AEGIS system’s instrumentation was correctly displaying that IR655 was ascending rather than descending in altitude, thereby dis-associating the track from being identified as an attacking fighter jet. The operators disregarded this information. But the situation was also not as clear-cut: the operators misread information about IR655 because of how it was displayed on their screens. IR655 had initially been assigned the radar track number (TN) 4474 but, while it was in flight, this was changed automatically by the AEGIS system to TN4131 to match its designation with a guided missile frigate in the area, the USS Sides. Senior personnel on board the USS Vincennes were apparently not aware of this change. The original TN4474 had also been ‘re-assigned to an US Navy A6 making a carrier landing in the Arabian Gulf’ (Maclean, 2017: 29). Later reports found that senior personnel on the USS Vincennes were unfamiliar and uncomfortable with the computer-assisted exercise of their roles demanded by operating in interaction with AEGIS (Barry and Charles, 1992).

To ensure MHC, training practices should therefore enable operators to step in where the system fails. For this, operators would need a productive balancing of trust and distrust 13 to know when to trust the system and when to question its outputs (Hawley and Mares, 2012: 7). This is a case of human judgement which operators must get exactly right: as discussed, both too much and too little trust in the system can create problems. Current training practices do not work in this direction but instead shape a diminished decision-making capacity for operators of ADS as an ‘appropriate’ form of human control. The problematic character of such training practices has become known after ADS failures and has, in the case of the Patriot fratricides, for example, triggered significant review exercises. However, even such reviewed practices still featured issues that had been previously identified as problematic and therefore did not lead to significant changes in ADS training overall (Hawley, 2007: 7).

Practices of operation

As I have argued, practices of design and practices of training structure what kind of practices operators can perform and shape normativity/normality on human control. There are three further types of practices performed to operate ADS with effects on normativity/normality.

First, operators do not have sufficient situational awareness in specific use of force situations as they have been relegated from active controllers to passive supervisors in the process of delegating more and more tasks to autonomous technologies in ADS (Bode and Watts, 2021). As passive supervisors, operators typically find themselves in situations of underload or overload (Hawley et al., 2005; Kantowitz and Sorkin, 1987; Parasuraman and Manzey, 2010). For the long hours of monitoring the system, they are underloaded with tasks as the system is ‘doing its work’. When the system is used in high-pressure combat situations, they find themselves overloaded with tasks of triangulating objects that the system identified as targets and deliberating on whether to release force (Bode and Watts, 2021). The change of their role to that of passive supervisors is tantamount to a loss of situational awareness, defined as ‘the perception of elements in the environment [. . .], the comprehension of their meaning, and the projection of their status in the near future’ (Endsley, 1995: 36). As many tasks have been ‘delegated’, operators are left without anything useful to do until they are called upon to perform practices of operation in specific use of force situations. But it is unclear how they are supposed to perform these practices competently when they often lack a functional understanding of the system’s targeting process and the necessary time to re-gain situational awareness.

Second, humans are expected to perform practices of operating modern ADS at machine speed. This significantly reduces the time operators have to decide whether to authorise the machine-prompted use of force or not. In the case of PS752, the short-range radar of the Tor-M1 meant that operators had 10 seconds ‘to interpret the data’ and to decide whether to fire or not (Harmer quoted in Peterson, 2020). For the Patriot, operators only have a few seconds to veto the machine’s targeting decision (Leung, 2004), while in the case of the Iron Dome, operators have ‘five seconds to decide’ (Rogoway, 2018).

Third, operators perform practices of operation in high-pressure combat situations, exacerbating the already problematic structure of human–machine interaction. The failures of ADS I analysed happened in the context of international tensions when the militaries involved were on high alert. This clearly had an impact on how operators could perform practices of operation. Of course, high-pressure combat situations of this kind are the default in times of conflict and war, which is when weapon systems are expected to be used. These pressures should therefore be counted as a normal part of how practices of operation can be and are performed.

In sum, decades-long practices of designing, of training personnel for and of operating ADS integrating autonomous technologies have shaped normativity/normality around human control that circumscribes the critical mental space of operators. This takes the shape of counting a diminished, reduced role of humans in specific use of force decisions as ‘appropriate’ and ‘normal’. This normativity/normality results from an incremental process mobilised through solidifying and travelling practices performed by various states that operate ADS.

Interaction between practice-based and public-deliberative processes: human control as a positive obligation?

My focus in this section is specifically on identifying how the practice-based and the public-deliberative processes interact in shaping normativity/normality on human control. I analyse the extent to which stakeholders at the GGE explicitly reflect on normativity/normality emerging from practices of designing, training personnel for and operating weapon systems integrating autonomous technologies. Analytically, I therefore only capture a part of the public-deliberative process at the GGE. Stakeholders have posited many more arguments and framings in relation to human control over LAWS, for example, that delegating use of force decisions to machines threatens human dignity by reducing humans to data points, that we should consider human control as distributed across the entire life-cycle of weapon systems and that even LAWS operated without human control in specific use of force situations may increase compliance with IHL. These framings are, however, united by their focus on the future and by active distancing from trajectories of existing weapon systems integrating autonomous technologies.

I have identified two dynamics of interaction between how normativity/normality on human control emerges in between practices and public deliberation: first, (wilful) ignorance or the absence of explicit public-deliberative interaction and distancing by presenting LAWS as a problem of the future; and second, positive acknowledgement of practices associated with ADS (and other weapon systems integrating autonomous technologies) as representing a ‘gold standard’ of MHC.

(Wilful) ignorance and distancing

More often than not, existing weapon systems integrating autonomous technologies in targeting have been conspicuous by their absence in the GGE debate on LAWS. This curious silence inspired my research interest in investigating how significant practices of designing, training personnel for and using such systems are for what states consider as an ‘appropriate’ quality of human control.

In addition, stakeholders engage in distancing by presenting LAWS as a future problem. On occasion, states parties mention how ‘precursors’ of LAWS already exist and that ‘failure to take pre-emptive action [. . .] poses the risk of such weapons being fully developed and deployed in the future’ (Permanent Mission of Sri Lanka, 2017). Presenting potential new international law on LAWS as a ‘pre-emptive’ or ‘preventive’ course of action has appeared recurrently in statements delivered by states parties in GGE debates since 2017. 14 States parties highlight that when applied to weaponry and warfare, autonomous technologies ‘have the potential to challenge the most basic principles of human rights, international humanitarian law and international law’ (Permanent Mission of Austria, 2017, author’s emphasis) and that the ‘GGE should continue to consider issues related to their potential development and regulation’ (Permanent Mission of the European Union, 2017, author’s emphasis). Not only states parties but also some civil society stakeholders have emphasised their ‘goal of pre-emptively banning fully autonomous weapons’ (Campaign to Stop Killer Robots, 2017, author’s emphasis).

Many states parties are further careful to locate the problem of LAWS in the future, emphasising that ‘fully autonomous systems do not exist yet’ (Permanent Mission of the European Union, 2017, author’s emphasis), that LAWS are ‘future weapon systems’ (Permanent Mission of New Zealand, 2017), a ‘possibly emerging new technology of warfare’ (Permanent Mission of Austria, 2017) or by simply referring to the ‘potential emergence of LAWS’. 15

Some states parties have explicitly highlighted that ‘we want to deal with the questions raised by the development and use of

This continuous future framing distances the public-deliberative process from practice-based normativity. In fact, this future focus is even suggested by the full GGE title: ‘Group of Governmental Experts on emerging technologies in the area of LAWS’. Characterising the technologies that sustain LAWS as emerging rather than emerged marks out the potential development of LAWS as a radically novel departure (Rotolo et al., 2015). But looking at the trajectories of existing weapon systems like ADS demonstrates that such technologies are already here, they are already used and they are already having an effect (Drew, 2021).

Pushing the issue of LAWS to the future also takes the urgency out of the GGE. It makes any potential regulation or a ban on LAWS ‘premature when the implications are so unclear’. 18 Mentioning uncertainty as an obstacle in potentially regulating LAWS was particularly prominent in the early years of the GGE (2017–2018), 19 but is still present, if to a lesser extent, in 2021 and 2022. In some, rare instances, GGE stakeholders have pointed out that developing LAWS is not an issue for the distant future, evidenced by announcements about ‘fully autonomous fighter jets, tanks, submarines, naval ships, border protection systems, and swarms of small drones’ that ‘have not been deployed as yet but that will not take long’ (ICRAC, 2017). LAWS ‘are not science fiction anymore’. 20 The ICRC and civil society actors such as Article 36 and Pax have likewise drawn attention to the reality of sensor-based targeting (Article 36, 2021; ICRC, 2021; Kayser, 2021). Such arguments sustain a sense of urgency as ‘technological advances in these weapon systems continue to outpace the substance of our debates’. 21 Yet, these references are marginalised at the GGE.

Positive acknowledgement

In the few instances that stakeholders at the GGE have explicitly addressed weapon systems integrating autonomous technologies, often referred to as ‘semi-autonomous’, 22 these are argued to operate under MHC. ADS have been framed as representing a ‘gold standard’ of human control because they have an operator either in-the-loop or on-the-loop. The Netherlands has used the Goalkeeper ADS as an example for ‘how MHC is exercised’, while also drawing attention to ‘similar systems [that] are in use by various high-contracting parties’ (Permanent Mission of the Netherlands, 2021: 4). More specifically, the Netherlands highlights how ‘MHC has been exerted inter alia through its designing and testing phase, the article 36 review of the system and the training of personnel and operating the system’ (Permanent Mission of the Netherlands, 2021: 4). Similar arguments have been made in the context of ADS by other states parties. 23

These arguments shelve any substantive public discussion of practices performed in relation to ADS as sources of emergent normativity as they are supposedly already operated under MHC. Moreover, not only states parties perform such positive acknowledgement, but also some civil society actors such as the Campaign to Stop Killer Robots. For some civil society actors, avoiding an in-depth discussion of existing systems may be a pragmatic choice motivated by not wanting to feed the anxiety of states parties that they seek to convince of moving towards new international law to govern this space. In other words, there is resistance to discussing the issue of LAWS in concrete terms: ‘if autonomy is too widely defined, many types of weapon systems in existence would fall under this rubric’. 24

In other cases, states parties such as the United States do not mention ADS specifically, but propose human–machine interaction practices for the GGE to endorse ‘drawn from US military practice in developing and using autonomous and semi-autonomous weapon systems’. 25 Current practices are therefore taken to represent ‘appropriate’ understandings of human control as states parties consider their current use of weapon systems in adherence to IHL: ‘Examining the use of autonomous functions in existing weapon systems will help clarify how IHL applies’. 26 The United States expanded on this in March 2022 by putting forward a joint proposal on Principles and Good Practices on Emerging Technologies in the Area of LAWS together with Australia, Canada, Japan, the Republic of Korea and the United Kingdom (Australia et al., 2022). This takes the experiences that states have gained in using weapon systems integrating autonomous technologies purely as representing ‘good practices’ that other states can draw on. 27 A draft version of the GGE’s 2022 report circulated by the Chair notably also referenced ‘good practices of human-machine interaction’ as a topic to be discussed further (GGE on LAWS, 2022: 22 d).

Similarly, other states parties have delivered presentations about existing weapon systems integrating autonomous technologies to GGE meetings in an effort to highlight that state practices of using such weapon systems remain under human control. At the GGE meeting in August 2018, the Swedish delegation, for example, presented the 155-mm anti-armour BONUS ‘smart’ munition. The BONUS carrier shell contains two sub-munitions on winglets that are released over the battlefield and scan a target area for target objects via heat signatures and radar-acquired target profiles. 28 Human operators are responsible for selecting the target area and identifying legal targets. The autonomous technologies integrated in the munition were portrayed to serve the purpose of directing the warhead to a designated target, but once the BONUS shell is launched, human operators have no way to control or stop it. 29 A member of the US delegation responded to the Swedish presentation by stating that ‘in this case, the intention of the commander is enhanced by autonomous features. The attack is more discriminate and more discrete. There is much more focus on the actual targets rather than bombarding a large area’. 30 This statement again underlines the US position that human–machine interaction in weapon systems integrating autonomous technologies in targeting is unproblematic (Permanent Mission of the United States, 2018). The goal of such presentations is therefore not to invite critical reflection of practices, but to underline how they represent ‘good practices’. By arguing that current systems integrating autonomous technologies operate under MHC, such statements dismiss that LAWS even represent an added problem: ‘Nothing changes, LAWS are not a game-changer’. 31 The prevailing understanding among states parties is that the only thing that may be gained from studying existing systems integrating autonomous technologies is ‘best practices’ for how MHC can be successfully exercised. Yet, such statements wilfully ignore the challenges to the meaningful exercise of human control that such practices already shape.

What do these public-deliberative practices of (wilfully) ignoring, distancing LAWS from existing systems integrating autonomous technologies and positively acknowledging current human control practices mean for normativity on LAWS? Fundamentally, this undercuts efforts to potentially regulate LAWS through codifying a positive obligation towards human control. Current public deliberation does not scrutinise normativity on human control shaped in practices performed in relation to existing systems, such as ADS. Furthermore, by distancing existing weapons integrating autonomous technologies from the GGE debate, stakeholders act to legitimise such systems because they are not LAWS. More so, stakeholders also positively acknowledge current practices of using weapons integrating autonomous technologies as representing MHC. But what is positively acknowledged here is not a high quality of direct, human control in specific use of force situations. In fact, studying ADS shows that even direct human control (either in-the-loop or on-the-loop) does not automatically make human control meaningful because of the complexity of human–machine interaction. And this is not a surprise, as research in human factor analysis has demonstrated these issues for years. It is just a surprise that these research findings have virtually no platform in the debate on LAWS.

Taking a practice-internalist perspective (Frost and Lechner, 2016b) may go some way towards capturing why stakeholders do not scrutinise existing practices for their normative shaping effects on human control. 32 An internalist perspective aims to understand what a practice means to the practitioners performing it (Frost and Lechner, 2016b: 303). Many of the GGE stakeholders, in particular the delegates, but also some of the civil society representatives and academics, come to the debate from a background in IHL or use IHL as their main reference point for assessing potential challenges represented by LAWS. Its embedding within the CCW also limits the GGE debate to IHL and most states speak to and support this limited purview. In IHL, the use of ADS has not been considerably challenged, although ADS integrating autonomous technologies have been used in armed conflict for decades. This can also be evidenced by the absence of any significant legal and security studies scholarship on ADS (Richemond-Barak and Feinberg, 2016: 472). The lack of scrutiny may therefore be associated with stakeholders not perceiving ADS as particularly problematic from an IHL perspective.

It nonetheless influences normativity/normality surrounding LAWS: by not scrutinising whether the direct control exercised by humans over weapon systems integrating autonomous technologies is actually meaningful, this positive affirmation masks how practices of design, training and operation structure human–machine interaction in such a way that operators at the bottom of the chain of command bear the responsibility for any failures. ‘Human error’ was typically highlighted as a major factor in high-profile failures of ADS. But such failures were grounded in complexities of human–machine interaction resulting from the ever-greater delegating of cognitive tasks to the system. This corresponds to Elish’s (2019) argument that operators can become ‘moral crumple zones’: ‘the human in a highly complex and automated system may become simply a component – accidentally or intentionally – that bears the brunt of the moral and legal responsibilities when the overall system malfunctions’ (p. 41).

Conclusion

In response to an empirical puzzle surrounding what gets to count as ‘meaningful’ human control over weapon systems, I proposed examining how such normativity arises from two separate yet interlinked processes of a practice-based and a public-deliberative nature. This argument uses insights from IPT, critical security studies and STS to counter the public-deliberative bias in IR norm research. I argue that normativity/normality on human control initially emerges in the process of doing things, namely in practices of designing, training personnel for and operating weapon systems integrating autonomous technologies performed at sites not accessible to the public. Furthermore, I propose four dynamics of interaction between practice-based and public-deliberative processes that have shaping effects on both emerging normativity/normality and on how and which practices are performed. Building on varied and novel primary and secondary data, I analysed first how practices of designing, training personnel for and operating ADS have incrementally shaped normativity/normality on human control. This normativity/normality designates a diminished decision-making capacity of humans as ‘appropriate’ in specific use of force situations. Furthermore, I argue that normativity/normality on human control is shaped via two dynamics of interaction between the practice-based and the public-deliberative processes: (wilful) ignorance and distancing, as well as positive acknowledgement. These dynamics positively affirm the normativity/normality emerging from practices of design, training and operation rather than scrutinising it, thereby undercutting public, deliberate efforts to retain MHC over the use of force.

This endeavour opens at least four avenues for further research on normativity in IR: first, to what extent are the dynamics in between practice-based and public-deliberative processes that I identified for the case of LAWS and human control representative of how wider social processes shape normativity? As noted, there is evidence that normativity/normality in other regulatory processes of military technologies, such as nuclear weapons or anti-personnel landmines, can be studied via such dynamics of interaction. But the phenomenon of normativity/normality initially emerging in practices (performed at sites unseen) and only becoming the object of public deliberation at a later point appears to be of an even more general nature. In International Relations, developing global problems may often trigger responses by actors in practices that are not publicly deliberated. The normative/normal shaping effect of practices can run under the analytical radar, as it were.

Second, what role do technologies play in the performance of practices and, consequently, in shaping normativity/normality? Normativity/normality on human control is not being shaped by human designers, commanders and operators in isolation but in close enmeshment with technologies. There is much intellectual space to explore this further in conversation with STS concepts (Bellanova et al., 2020, 2021; Faulkner, 2001; Hoijtink and Leese, 2019; Hoijtink and Planqué-Van Hardeveld, 2022), such as configuration, affordances or sociotechnical imaginaries (Franklin, 1999; Suchman, 2012). There is already some overlap between one prominent STS approach, actor-network theory, and IPT (Adler-Nissen and Drieschova, 2019; Leander, 2013; Pouliot, 2010). But this has so far only been explored with respect to a limited range of material artefacts. It has not resulted in innovative studies of the wider role that technology could occupy within IPT. Acknowledging technologies does not only expand the modes that are relevant for studying emerging normativity/normality, but also the sites of norm research by highlighting operational spaces and practices hitherto unseen by scholars and practitioners.

Third, where is the agency in processes shaping normativity/normality? Focusing on the role of technologies may lead readers to vest agency in such objects. But, rather, and in line with STS scholars and wider practice theories, I argue that human agency is dispersed but very much present in these processes. Technologies do not ‘naturally’ become important reference points by themselves, but because of processes of social meaning-making. They are carriers of practices, often not necessarily in intended ways. Thinking about agency also raises the question of who benefits from the type of normativity/normality that emerges in practice-based processes being performed at sites unseen by the public. States using such weapons benefit from such unseen and inaccessible processes because they have more room for manoeuvre. At the same time, the kind of pressure complex human–machine interaction puts military personnel under is not necessarily in the interests of those states. However, it seems to be currently often taken as an ‘unavoidable risk’ in the grand scheme of things.

Fourth, what do these dynamic interactions between processes of emergent normativity/normality mean for international law? My focus seeks to capture normativity beyond legal norms – that is understandings of appropriateness that do not necessarily originate in, nor speak to, international law. But it is important to track how they relate to international law, nonetheless. There are two potential scenarios: first, such processes may come to change, over time, how certain core provisions of international law are understood (Maas, 2019); or second, they may continue to run in parallel to legal standards. In this second scenario, the law would remain technically ‘intact’, but is de facto undercut and therefore loses in importance.

As these open questions illustrate, work at the analytical intersection of STS, IPT, critical security studies and norm research is likely to be an increasingly innovative and fruitful field of research to help IR scholars study the normative consequences of ever more technologically mediated practices in the social world – and the human relationship with technology.

Footnotes

Acknowledgements

I gratefully acknowledge the work of Tom Watts in putting together the data catalogue on automation and autonomy in air defence systems. I am further grateful for feedback I received when presenting drafts of the paper at the Sandhurst Defence Forum, the IFSH (Institut für Friedensforschung und Sicherheitspolitik, University of Hamburg) research seminar, the European International Society for International Law’s Working Group on Law and Technology, the 2021 Conference of the European International Studies Association (EISA) and by members of the AutoNorms team (Hendrik Huelss, Anna Nadibaidze, Guangyu Qiao-Franco and Tom Watts). The manuscript was also significantly strengthened by engaging with feedback offered by two anonymous reviewers.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This research has received funding from the European Union’s Horizon 2020 research and innovation programme under grant agreement No. 852123 (AutoNorms).