Abstract

Background:

Disability accrual in multiple sclerosis (MS) is highly variable and challenging to predict, complicating personalised care. Integrating machine learning (ML) with patient-reported outcomes (PROs) and clinician-assessed outcomes (CAOs) may support tailored interventions.

Objectives:

To develop and validate an interpretable ML model for predicting disability accrual trajectories in MS.

Methods:

A multicentre data set of 1,176 MS patients with up to 8 years of follow-up was used. A random forest model was trained to predict disability accrual at 2, 3, 4, and 5 years, using baseline clinical variables, PROs and initial risk class as predictors. Model performance was assessed using accuracy, area under the curve, and survival analysis.

Results:

437 patients composed final cohort. The model predicted disability changes with an accuracy of 0.82 (95% CI: 0.77–0.86) at 2 years and 0.73 (95% CI: 0.66–0.80) at 5 years. Initial risk class and baseline Expanded Disability Status Scale (EDSS) were the most influential predictors. Survival analysis confirmed model’s ability to effectively capture the time-dependent patterns of disability accrual events at the population level (log rank p > .05).

Conclusions:

The model offers robust and interpretable predictions that may support clinical decision-making using routine clinical data.

Keywords

Introduction

Multiple sclerosis (MS) is a chronic, demyelinating autoimmune disease of the central nervous system (CNS). Its marked clinical heterogeneity and various disability progression trajectories present major challenges for prognostication, 1 and although MS has been classified into a few main clinical phenotypes2,3 – most notably relapsing-remitting MS, primary progressive MS, and secondary progressive MS – this stratification has limited utility in guiding individualised treatment and predicting outcomes. Therefore, selecting the most appropriate treatment strategy at the individual level is far from straightforward, despite the availability of a wide range of tailored therapeutic options 4 (disease-modifying therapies [DMTs], symptomatic drugs, motor and cognitive rehabilitation, physical activity, etc.). Timely and appropriate therapeutic decisions can significantly influence long-term quality of life, affecting physical, cognitive, and emotional well-being, as well as the trajectory of disability accrual. Within this framework, an additional level of complexity arises from the different perspectives of clinicians and people with MS (pwMS): clinicians may evaluate progression by observing sustained worsening of the disease stage or previously controlled symptoms; pwMS may interpret progression as the cumulative impact of the disease on their daily lives, concerns regarding long-term medication, or anxieties about later-life care. 5 A minor change in EDSS (e.g. from 1.5 to 2) can significantly affect individual’s quality of life, yet it is often overlooked in clinical trials. 6 These different viewpoints underscore the multifaceted nature of disease progression and highlight the importance of considering multiple perspectives in healthcare. The PROMS Initiative, a collaborative endeavour by the European Charcot Foundation and the Multiple Sclerosis International Federation, strives to amplify the influence of pwMS input on MS care and establish a cohesive perspective on patient-reported outcomes (PROs) for diverse stakeholders. 7 This paradigm suggests the possibility of incorporating both active and passive data collection on MS-specific functional domains to enrich traditional measures by fully capturing the experience of pwMS. 8 Integrating subjective and objective indicators of functional status and monitoring MS fluctuations over time can help interventions and treatments overcome hurdles in Phase II and Phase III clinical trials, ultimately improving patient access to care. As the understanding of MS progression continues to evolve, there is an increasing need to investigate whether a combination of PROs and clinician-assessed outcomes (CAOs) can more comprehensively monitor disease development.9,10

The ability to track the variety of data and employ it confidently in clinical practice may require support, which is increasingly provided by artificial intelligence (AI) and machine learning (ML). 11 These technologies are regarded as valuable tools for enhancing patient care. 12 Nevertheless, implementing such technological strategies in clinical practice remains challenging. 13 On one hand, many approaches have yet to meet the necessary clinical standards in terms of accuracy and specificity. On the other hand, most algorithms tend to prioritise performance and predictive capacity, often at the expense of interpretability. 14 Indeed, greater predictive accuracy frequently comes with reduced explainability, which in turn can undermine the trust and confidence of medical professionals in applying these tools in real-world settings. Therefore, this paper aims to address this gap by developing and validating an ML model that places clinical interpretability at the forefront of each step in the prediction process. This study contributes to the growing effort to develop predictive models based on real-world data, 15 as it relies exclusively on routinely collected PROs and CAOs to forecast MS-related disability trajectories.

Materials and methods

Participants were a sample of 1176 pwMS enrolled in the context of the currently large, multicentre, and prospective PROMOPRO-MS initiative (grant FISM 2013/S3). Using a set of performance measures (PM), PROs and CAOs about the domains typically affected by MS, the medical staff of clinical centres in Genoa, Vicenza, and Padua routinely monitored pwMS at intervals of about 4 to 6 months from January 2014 to March 2023. All participants gave written informed consent prior to study entry in accordance with the revised Declaration of Helsinki. 16 The Regional Ethics Committee of Azienda Ospedaliera ‘San Martino’ (Genoa, Italy) (N: 023REG20l4) approved this study.

Data

Specifically, the following data were accessible.

CAOs:

Expanded Disability Status Scale (EDSS) for assessing the degree of neurologic impairment in MS (range 0–10).

Functional Independence Measure scale (FIM) for estimating the level of functional independence in daily activities (range 18–126).

Montreal Cognitive Assessment (MoCA) for evaluating global cognitive status across multiple domains (range 0–30).

PROs:

ABILHAND questionnaire for measuring perceived difficulty in performing manual daily activity and upper limb functionality (range 0–46).

Hospital Anxiety and Depression Scale (HADS) for measuring anxiety and depressive symptoms (range 0–42).

Life Satisfaction Index (LSI) for assessing overall satisfaction with life domains (range 0–22).

Modified Fatigue Impact Scale (MFIS) for assessing the impact of fatigue on physical, cognitive and psychosocial functioning (range 0–84).

Overactive Bladder Questionnaire (OAB-Q) for evaluating bladder-related symptoms and their impact on quality of life (range 8–48).

PM:

Symbol Digit Modalities Test (SDMT) for assessing information processing speed (score 0–110).

Supplementary Materials provide full references for all instruments.

Inclusion and exclusion criteria

The study protocol was rather loose regarding the follow-up rate (ranging from 2.6 months to 8.2 years) and data collection procedures. PwMS were invited to be administered all CAOs, PROs and PMs, but were not required to answer all questions; no person was excluded due to other health conditions. Pharmacological treatment was recorded, but it was not used as an exclusion criterion, nor was rehabilitation therapy. There were no inclusion criteria other than a confirmed diagnosis of MS.

Medical staff training

To ensure consistent and reliable data collection across centres, all medical staff underwent standardised training on administering each outcome measure included in the PROMOPRO-MS project. 17 Periodic retraining sessions were conducted to reinforce protocols and maintain accuracy over time. A dedicated research team member oversaw data quality control, verifying consistency and adherence to standardised data management procedures. 18

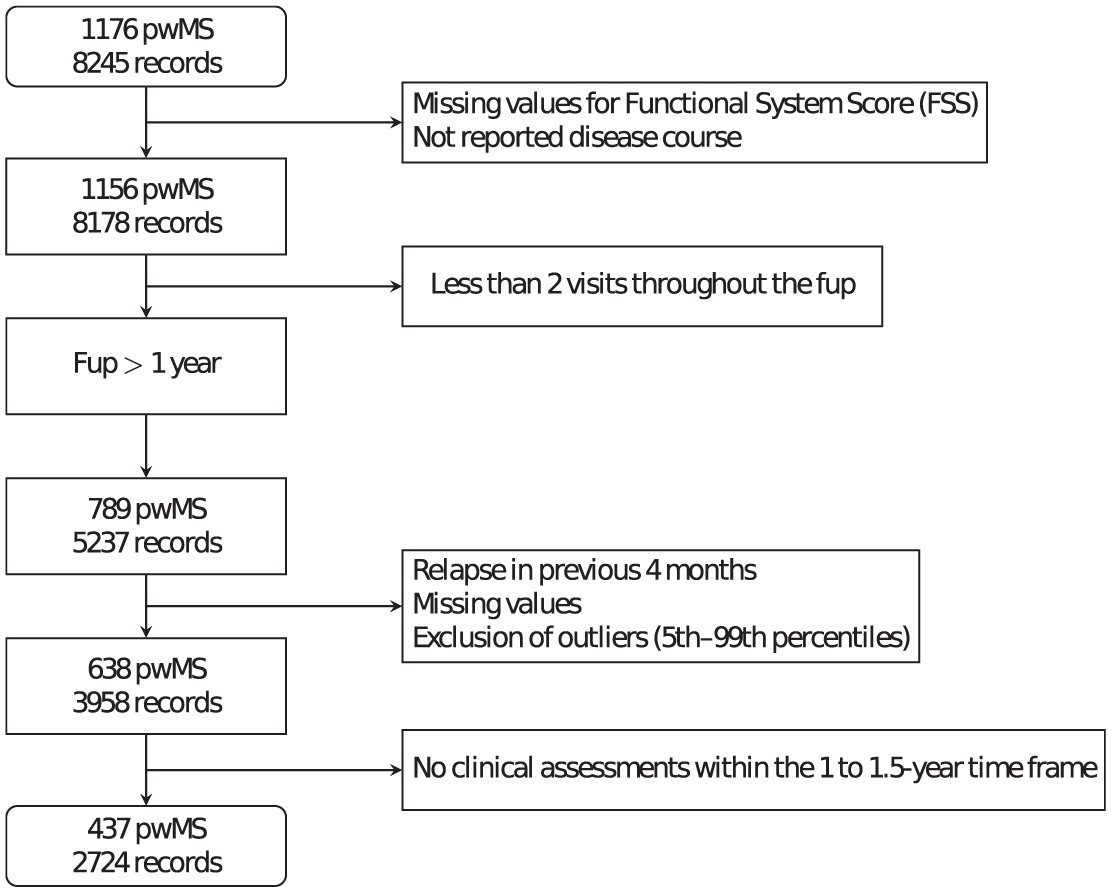

Data set pre-processing

To ensure maximum interpretability and focus specifically on the accumulation of disability unrelated to acute disease phases, we adopted a conservative approach for the whole data pre-processing (Figure 1). A record was excluded if a relapse had occurred within the four-month period preceding that assessment; if the disease course was not reported; if the EDSS score fell outside the 5th to 99th percentile range (to exclude outliers); or if any Functional System Score was missing, since these constitute the essential components for calculating the EDSS. In addition, we excluded individuals with less than one year of follow-up, fewer than two clinical visits during this period, or lacked a clinical record within the six months following the first year of monitoring, as all these conditions were required to satisfy model input criteria. Comorbidities were originally recorded in the database, and due to their considerable heterogeneity, we defined a summary variable to point out the potential presence of concurrent diseases (see Supplementary materials for more specifications).

Flow chart illustrating the data processing and selection steps, from the initial cohort (1176 pwMS) to the final data set used for analysis (437 pwMS).

Model overview

The goal of the model is to predict the trajectory of disability accumulation in pwMS in an interpretable and clinically accessible way.

Prediction timeline

Four separate models with identical structure were trained to generate predictions at fixed time points T: 2, 3, 4 and 5 years after the first visit. For each time point, the clinical record closest to T (within ± 0.5 years) was selected.

Model input

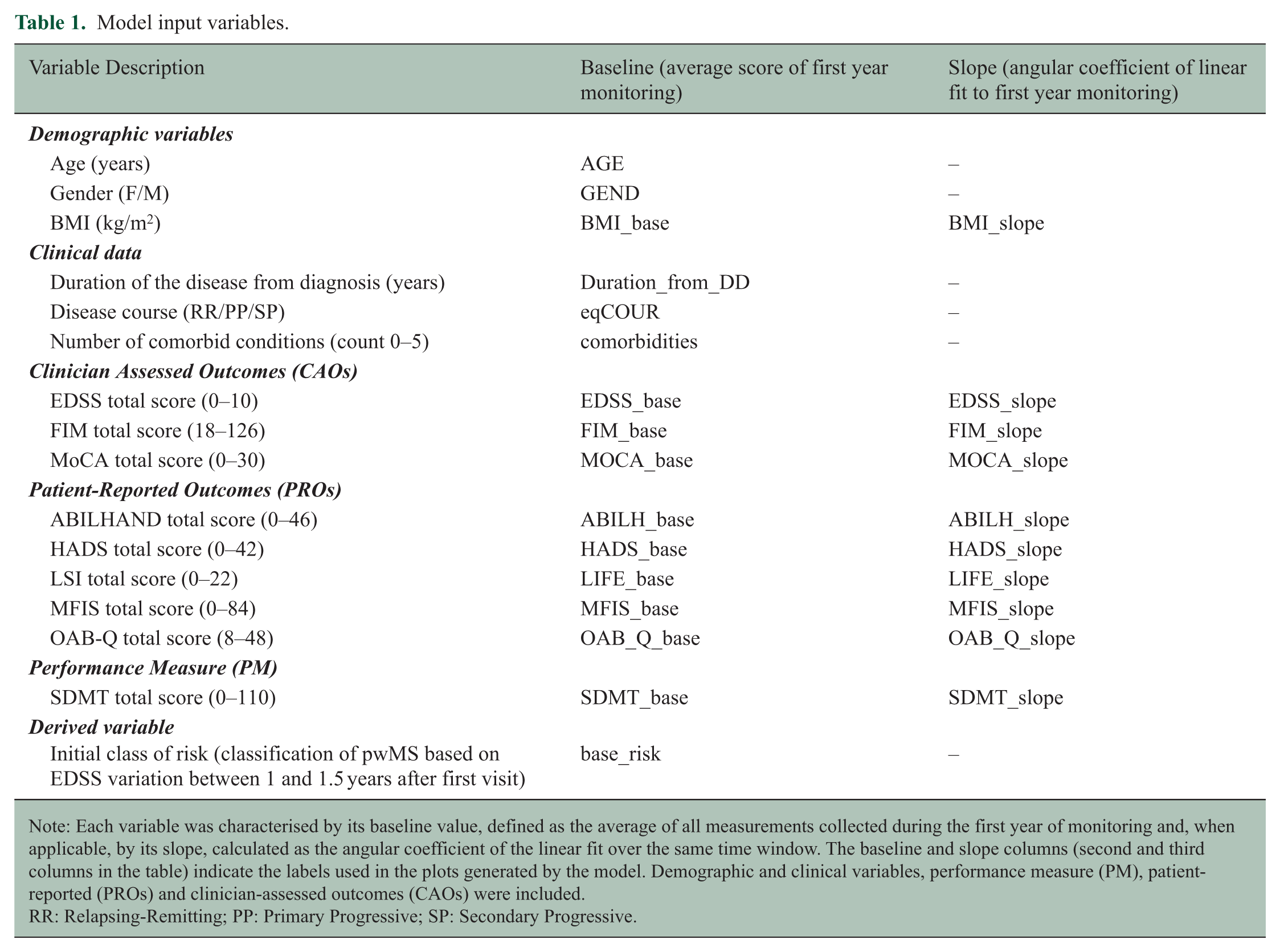

The input features comprised baseline values and, when available, the slopes of total scores for PROs, CAOs, and PM, in addition to relevant clinical and demographic variables (see Table 1 for details). For each patient, the baseline score was defined as the average of the assessments performed during the first year of monitoring. A linear model was fitted to these points, and the resulting slope (angular coefficient) was used as an indicator of change over time. In addition, we included the initial risk class (base_risk), estimated from the period between 1 and 1.5 years after the first visit. This early risk label summarises short-term disease evolution and serves as an additional predictor.

Model input variables.

Note: Each variable was characterised by its baseline value, defined as the average of all measurements collected during the first year of monitoring and, when applicable, by its slope, calculated as the angular coefficient of the linear fit over the same time window. The baseline and slope columns (second and third columns in the table) indicate the labels used in the plots generated by the model. Demographic and clinical variables, performance measure (PM), patient-reported (PROs) and clinician-assessed outcomes (CAOs) were included.

RR: Relapsing-Remitting; PP: Primary Progressive; SP: Secondary Progressive.

Model output

At each follow-up T, the model classifies the patient into one of two risk categories, based on the cumulative variation of EDSS over time (ΔEDSS). More precisely, for each time point, the cumulative EDSS change from the baseline score (EDSS_base) was divided by the number of years passed, yielding an annualised cumulative rate of EDSS change. PwMS with ΔEDSS > 0 were assigned to the ‘severe risk’ class, indicating faster disability accumulation, whereas pwMS with ΔEDSS ⩽ 0 were assigned to the ‘negligible risk’ class, indicating a more stable course of disability.

Predictive analysis

We selected the random forest (RF) algorithm for the predictive analysis. The following parameters were defined: 200 weak learners (decision trees), uniform prior probabilities across classes, interaction curvature for the predictor selection criteria, and Gini index for the split criterion at each node. To maximise accuracy, we used leave-one-out cross-validation.

Feature importance (FI) was assessed to identify non-informative variables for the prediction task. The model performance was evaluated by several metrics (accuracy, specificity, sensitivity, and F1-scores). With Receiver Operating Characteristic (ROC) curves, we compared the discrimination ability of the model in function of time, considering the ‘severe risk’ class as the positive outcome.

Survival analysis

A survival analysis was completed. Computing Kaplan–Meier curves enables us to determine the likelihood of developing disabilities as a function of time. Comparing the actual and simulated survival curves provides a qualitative assessment of the model’s performance, allowing us to see if it tends to underestimate or overestimate risk in comparison to real data. We defined the event of interest as the transition into the ‘severe risk’ class; we identified censored data as those without further follow-up or those that never shifted to this category. The two survival curves were compared using the log-rank test. A non-significant result implies a considerable agreement between the observed temporal sequence of events and the predicted pattern.

Additional task

Additional models were developed:

EDSS model including DMT as covariate.

EDSS model with 2 years of baseline as input.

Three additional models trained independently to test internal consistency, maintaining identical parameters and structure but differing in outcome scale (FIM, MFIS, LSI).

The results focus solely on the main EDSS model with 1 year of baseline for readability. See Supplementary Materials for results from all additional models described above.

Results

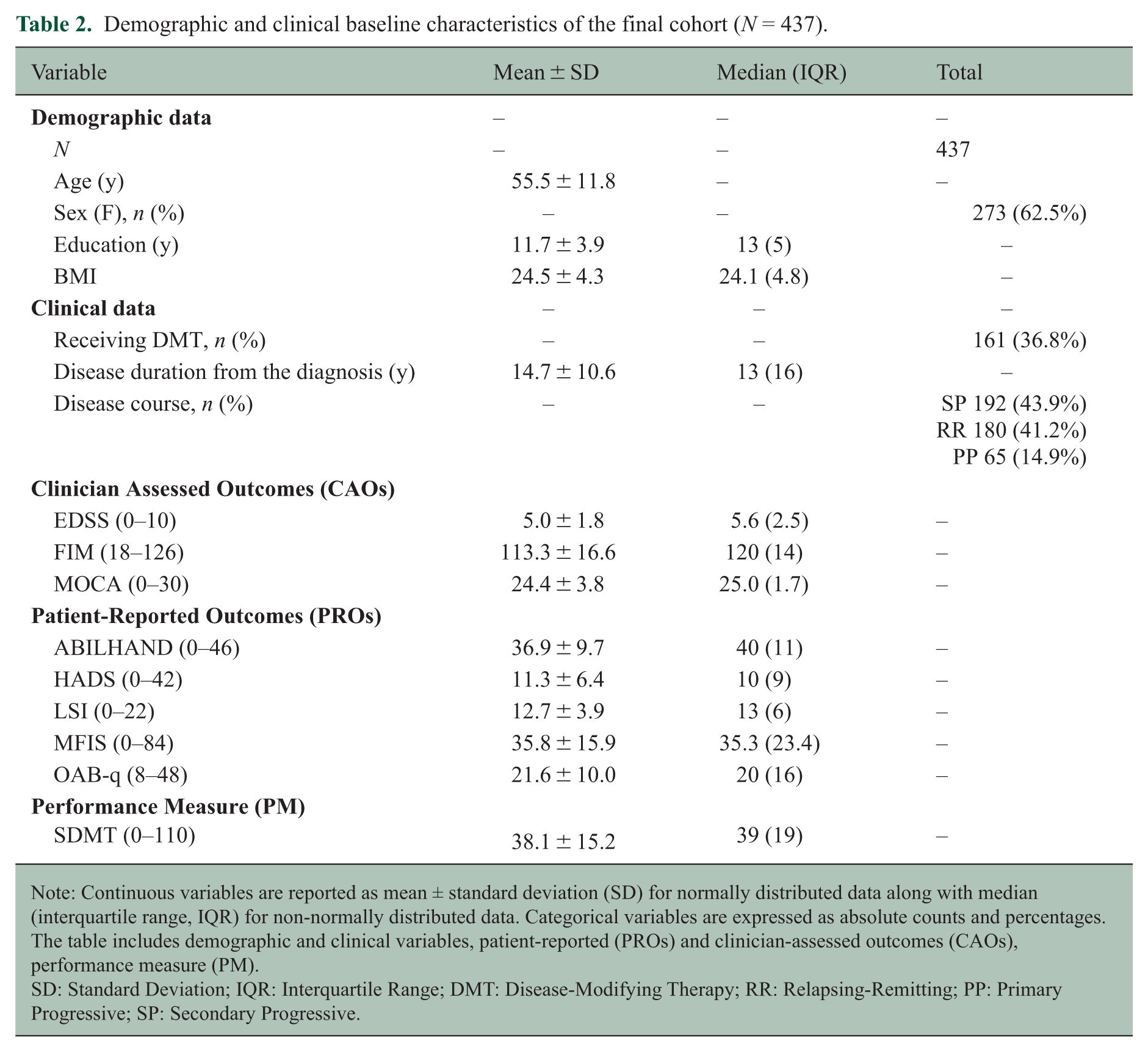

The final database consists of 2724 clinical records collected from a cohort of 437 pwMS (F: 62.5%; age (SD): 55.5 (11.8); EDSS (SD): 5.0 (1.8); disease duration (SD): 14.7 (10.6)). Table 2 provides an overview of the baseline demographic and clinical characteristics of the final sample included in the analysis.

Demographic and clinical baseline characteristics of the final cohort (N = 437).

Note: Continuous variables are reported as mean ± standard deviation (SD) for normally distributed data along with median (interquartile range, IQR) for non-normally distributed data. Categorical variables are expressed as absolute counts and percentages. The table includes demographic and clinical variables, patient-reported (PROs) and clinician-assessed outcomes (CAOs), performance measure (PM).

SD: Standard Deviation; IQR: Interquartile Range; DMT: Disease-Modifying Therapy; RR: Relapsing-Remitting; PP: Primary Progressive; SP: Secondary Progressive.

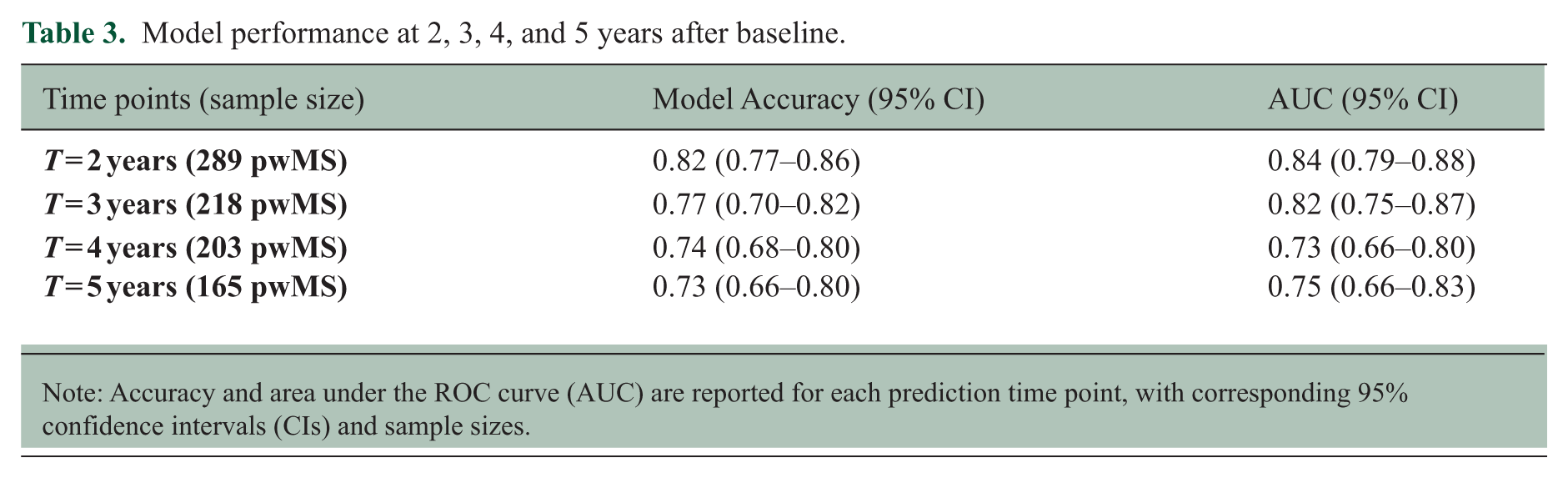

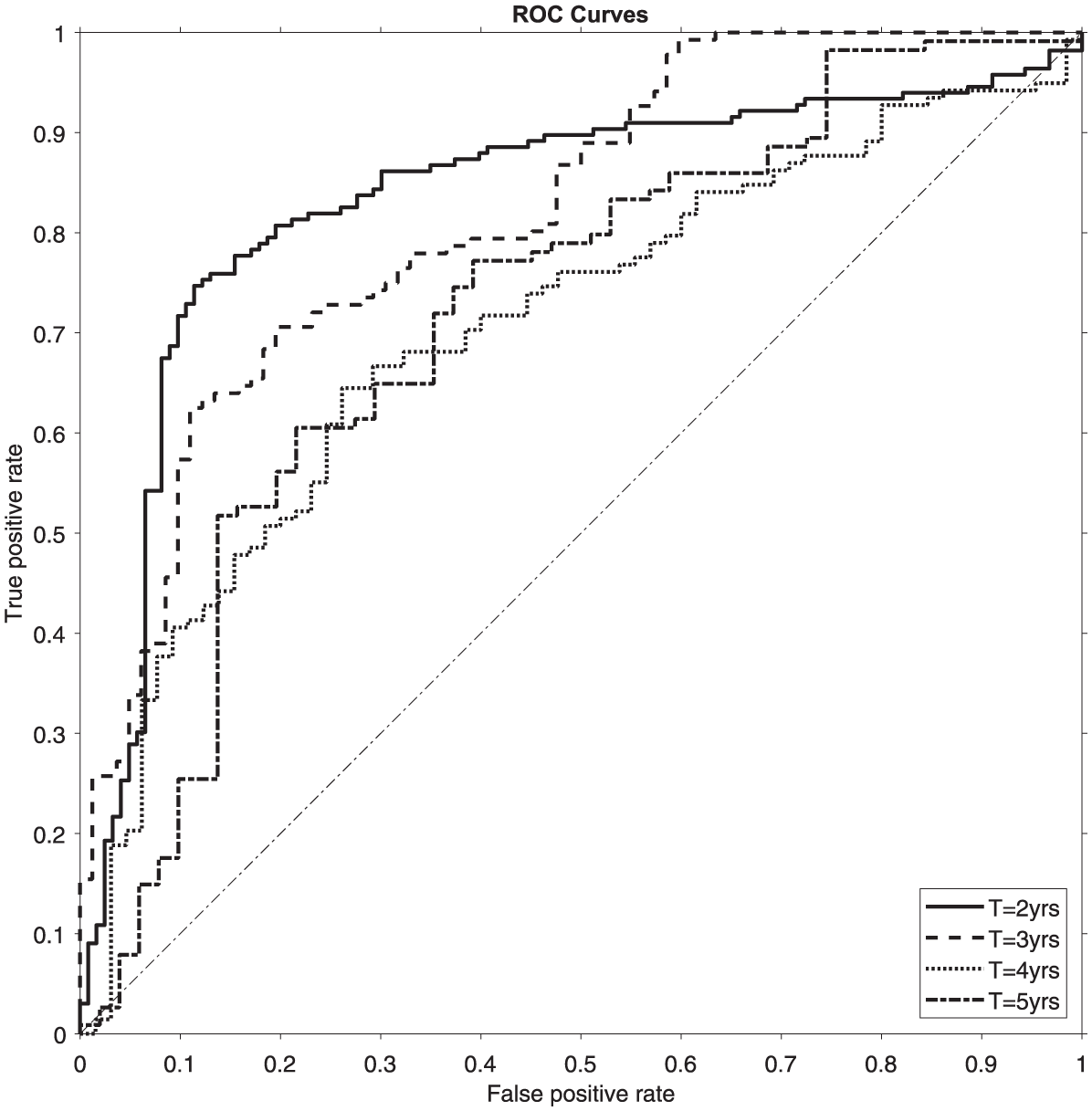

The results demonstrate the model’s strong discriminatory ability between high and low risk patients, with the algorithm correctly identifying the risk category for approximately 82% of patients at the initial time point. Accuracy gradually declined over time but remained 73% at year 5. Table 3 summarises the model’s predictive performance across different time points. ROC curves (Figure 2) show a similar trend, with area under the curve (AUC) values decreasing from 0.84 (95% CI: 0.79–0.89) in year 2 to 0.75 (95% CI: 0.66–0.84) in year 5. The percentage distribution of predictions, other metrics for model performance assessment, statistical significance of predictors are detailed in Tables S.2-S.3, Figure S.2 (Supplementary material).

Model performance at 2, 3, 4, and 5 years after baseline.

Note: Accuracy and area under the ROC curve (AUC) are reported for each prediction time point, with corresponding 95% confidence intervals (CIs) and sample sizes.

ROC curves for two (solid lines), three (hatched lines), four (fine hatched lines) and five (dash-dot lines) years. The dashed bisector represents the performance of a random classifier. The corresponding AUC values are 0.84, 0.82, 0.73 and 0.75, respectively (see Table 3 for 95% confidence intervals).

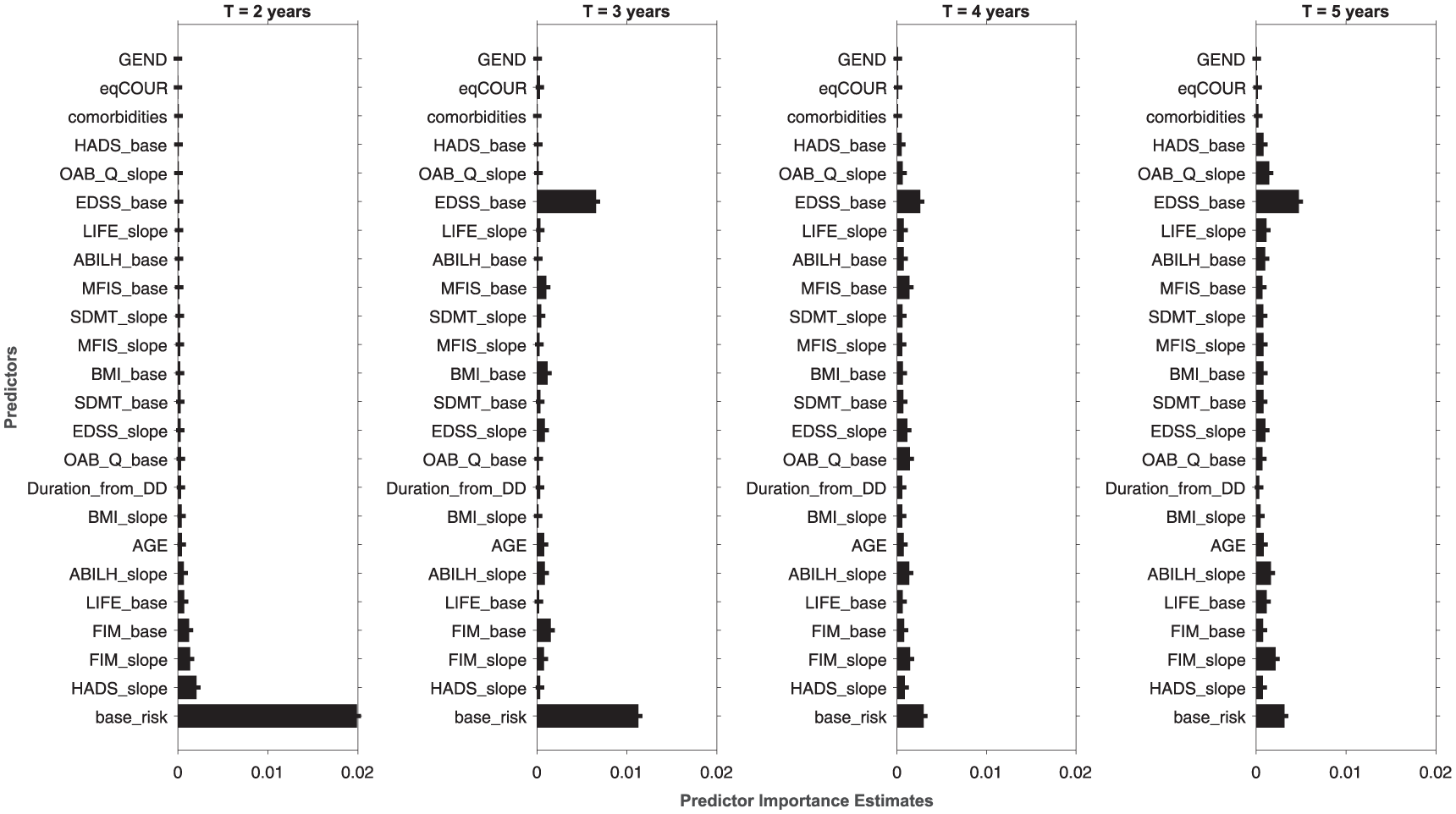

The FI analysis reveals that the initial risk class is the most influential predictor at time points closer to the baseline. However, as time progresses, the baseline EDSS score becomes increasingly important, ultimately assuming the lead. As time goes by, the role of many other PROs and CAOs becomes comparable to the leading predictors. Notably, the contributions of gender, comorbidities, and disease course are minimal across all models. Figure 3 depicts the temporal trend of variable importance, and the order of features in the last three plots is fixed based on the first model’s ranking, allowing for a more direct comparison.

Feature importance rankings for the classification models at 2, 3, 4 and 5 years. The histograms display the relative contribution of each predictor across all time points. For T = 3, 4, and 5 years, the ordering of the features is based on the ranking obtained from the first model (T = 2 years) to enable direct visual comparison. Thresholds of statistical significance are reported in Figure S.2 (Supplementary Material).

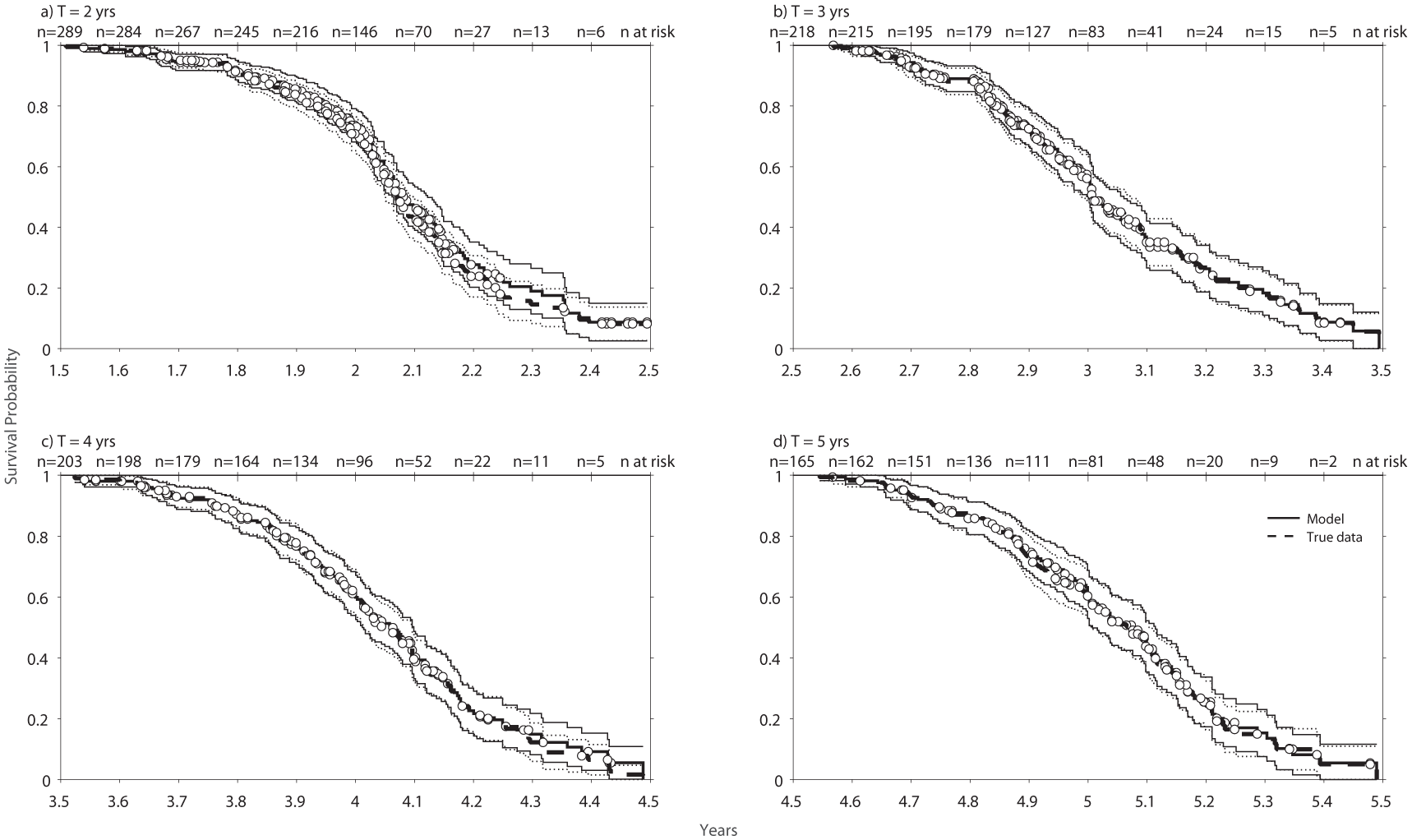

Figure 4 presents the survival analysis results for the model at 2 (a), 3 (b), 4 (c) and 5 (d) years. White dots represent the censored data. The number of patients composing the risk set decreases over time, as shown in the upper section of each graph. The dashed curves represent the probability of disability accrual based on actual events, while the solid lines illustrate the probability estimated by the model’s predictions. In all comparisons, the log-rank test provided non-significant results (p > 0.05), supporting agreement between the observed and model-predicted survival curves. Thus, no statistically significant differences were detected between the curves based on actual events and those generated from predicted ones.

Kaplan–Meier survival curves for the model at different time points. The hatched line represents the actual probability of belonging to the severe-risk class, while the solid line indicates the probability estimated by the model. Dots represent censored data points. Panel (a) shows curves at T = 2 years, with hazard rates of 5.6 (actual) and 5.5 (predicted), yielding a hazard ratio (HR) of 1.05 (95% CI: 0.84–1.31). Panel (b) presents results at T = 3 years, with hazard rates of 5.3 (actual) and 5.2 (predicted), HR = 1.06 (95% CI: 0.83–1.35). Panel (c) displays curves at T = 4 years, with hazard rates of 10.5 (actual) and 6.8 (predicted), HR = 1.05 (95% CI: 0.82–1.33). Panel (d) displays curves at T = 5 years, with hazard rates of 7.3 (actual) and 7.0 (predicted), HR = 1.01 (95% CI: 0.78–1.33).

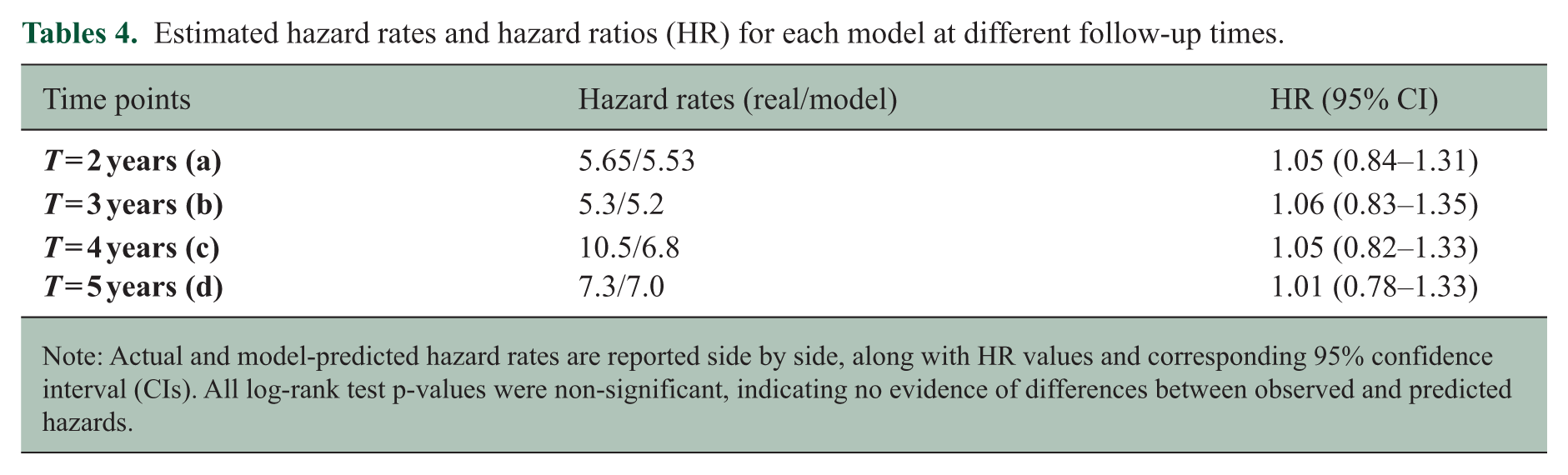

The hazard rates resulting from the model-predicted events (Table 4) show an upwards trend with time, rising from 5.53 to 7.0, indicating that the odds of accumulating disability increase as follow-up progresses. However, the model’s predicted event rates are lower than observed rates, indicating a small underestimation of risk.

Estimated hazard rates and hazard ratios (HR) for each model at different follow-up times.

Note: Actual and model-predicted hazard rates are reported side by side, along with HR values and corresponding 95% confidence interval (CIs). All log-rank test p-values were non-significant, indicating no evidence of differences between observed and predicted hazards.

Discussion

This study highlights the potential of our model as a trustworthy support tool for providing personalised risk profiles that reflect the impairment experienced by pwMS. The findings suggest that the model effectively captures the underlying data structure, assisting clinical judgement through objective, data-driven predictions.

In particular, it identifies pwMS who are likely to get worse over the next few years with an expected decreasing accuracy over time; furthermore, despite being primarily designed for individual-level predictions, it effectively captures population trends in survival analysis, with increasing hazard rates over time beyond baseline. This study extends the prediction window to 5 years post-baseline, thereby increasing its potential applicability in clinical practice. In doing so, it addresses a limitation of the pioneering work by Brichetto et al., who first demonstrated the potential of ML to extract meaningful information from PROs and CAOs by predicting MS phenotype transitions.19,20 However, their model was restricted to forecasting transitions within four months from baseline, reducing its broader applicability.

To achieve adequate predictive performance with the available data, the task was simplified to a binary classification. Ideally, baseline EDSS should inform both input features and output classes, since the impact of an EDSS change depends on its baseline value. This refinement, however, was beyond the capability of the current PRO- and CAO-based algorithm. In addition to the previous observation, a further limitation affecting the model’s clinical readability relates to the absence of formal confirmed disability progression (CDP). This consideration supports our choice to employ a definition of cumulative EDSS variation across time points. This strategy stabilises fluctuations by capturing the overall trajectory of disability change, 21 thereby providing a more consistent outcome measure for the model. Although this definition does not fully capture the clinical construct of CDP, it represents a step towards approximating it within the boundaries imposed by the available data.

Overall, although the integration of quantitative measures will likely be required to address more granular and complex predictive tasks, the present work provides a solid foundation for future developments in this direction. 22

It prioritises an explainable and interpretable approach, 23 primarily relying on an RF algorithm rather than more performant but opaque neural network models. This option provides insight into the model’s internal decision-making process by enabling FI analysis while accounting for interactions between variables, as well as the possibility to trace every decision step, if eventually required in clinical contexts. All these advantages are not easily achievable with occlusion techniques or other interpretability tools typically applied to neural networks.

This study suggests that the EDSS trajectory within the baseline surrounding time frame is sufficient to predict short-term disease evolution, with the initial risk class serving as the primary predictor at T = 2 years. In the long term, the baseline EDSS, which is not part of the original risk class, becomes progressively more influential. At later time points, some PROs (ABILHAND, OAB, and MFIS) retain significance for prediction alongside baseline EDSS and baseline risk class (Figure S.2). This suggests that PROs and CAOs do contribute to disability prediction, albeit in a less prominent sense. We might hypothesise that the useful insights gained from self-reported data are difficult to incorporate into a predictive framework, reducing their overall effectiveness. Nonetheless, these variables do not operate as confounders but rather provide supplementary and clinically relevant insights.

Indeed, these data have huge epidemiological value but also introduces significant limitations for a predictive task as the one developed in this paper. Since the data partially include self-reported measures, they ensure the incorporation of patients’ self-perception of the disease but may also bias the results. While it is cost-effective and minimally invasive, which is especially valuable for monitoring chronic disease, it also exposes us to intrinsic fluctuations, errors, and a lack of quantitative details. 24 Similarly, self-reported and performance data tend to result in random errors, as they are affected by factors like mood and environment, rather than systematic biases.

These issues confer additional relevance to the robustness and plausibility of the model, which are further supported by the results obtained for FIM, MFIS, and LSI. Each model consistently prioritises the outcome scale’s initial risk class as a key predictor, combined with either its baseline value or the slope within the first year (Supplementary material, Figure S.3 and S.4).

Another limitation concerns the characteristics of the study sample, which included pwMS with relatively high age and long disease duration. Due to sample size limits, it was not possible to train distinct models for younger and older individuals. However, future work could focus on stratified analyses based on age or other relevant factors to increase the informative value of these measures. It is also worth noting that, while the model underwent robust internal validation, the lack of standardised MS monitoring protocols makes it difficult to identify or reproduce an independent data set suitable for external validation. These defects, however, suggest that future improvements for including these types of data within the prediction of disability progression should entail quantitative data such as those gathered through wearable devices and passive sensor monitoring, 25 to enhance predictive accuracy and potentially guide therapeutic decisions and the design of tailored rehabilitation programmes. 26 In addition, better stratification of the sample could optimise model performance. With these refinements, the current study contributes to the ongoing and highly relevant discussion regarding the application of AI in neurology and MS by proposing an appropriate way for addressing typical issues of real-world data.

Supplemental Material

sj-docx-1-msj-10.1177_13524585251411093 – Supplemental material for Patient-reported outcomes as predictors of disability evolution in Multiple Sclerosis: An interpretable machine learning approach

Supplemental material, sj-docx-1-msj-10.1177_13524585251411093 for Patient-reported outcomes as predictors of disability evolution in Multiple Sclerosis: An interpretable machine learning approach by Federica Di Antonio, Giampaolo Brichetto, Andrea Tacchino, Ludovico Pedullà, Jessica Podda, Erica Grange, Alice Bollini, Margherita Monti Bragadin, Valeria Prada, Matteo Bauckneht, Silvia Morbelli and Andrea Chincarini in Multiple Sclerosis Journal

Footnotes

Acknowledgements

We thank all PwMS followed as outpatients at the Rehabilitation Service of Genoa (Italian MS Society, AISM) for participating in this research, and Maria Madera and Giulia Bignone for their support during enrolment.

Author Contributions

GB, AC and FDA designed the study. FDA and AC analysed the data. All authors discussed the data analysis and interpreted the results. FDA, GB and AC wrote the first draft of the manuscript. All authors critically revised the manuscript for publication.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: F. Di Antonio, G. Brichetto, A. Tacchino, L. Pedullà, J. Podda, M. Monti-Bragadin, E. Grange, A. Bollini, V. Prada are part of RAISE Innovation Ecosystem. All other authors declare no conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: the study was conducted within the currently funded PROMOPRO-MS project (grant FISM 2013/S3), which originally supported the data collection. It also received funding from the European Union–NextGenerationEU and by the Ministry of University and Research (MUR), National Recovery and Resilience Plan (NRRP), Mission 4, Component 2, Investment 1.5, project ‘RAISE–Robotics and AI for Socio-economic Empowerment’ (ECS00000035). S. Morbelli and A. Chincarini are supported by a grant from Italian Ministry of Health (RF-2018-12366238).

ORCID iDs

Data Availability

The data set is available from the corresponding author upon reasonable request.

Supplemental Material

Supplementary material for this article is available online.