Abstract

Background:

Existing metrics of patient-reported cognitive difficulties in multiple sclerosis (MS) are lengthy, lack psychometric rigor, and/or fail to query prevalent expressive language deficits.

Objective:

Develop a brief, psychometrically robust metric of patient-reported cognitive deficits that includes language items: the Multiple Sclerosis Cognitive Scale (MSCS).

Method:

Exploratory factor analysis (EFA) was conducted on 20 Perceived Deficits Questionnaire (PDQ) items plus five newly developed language questions in a large MS sample and matched respondents without neurologic disease. Independent confirmatory principal components analysis (PCA) assessed EFA factor structure. Reliability of the new scale and subscales, and relationships with objective cognitive impairment and cognitive change, were assessed.

Results:

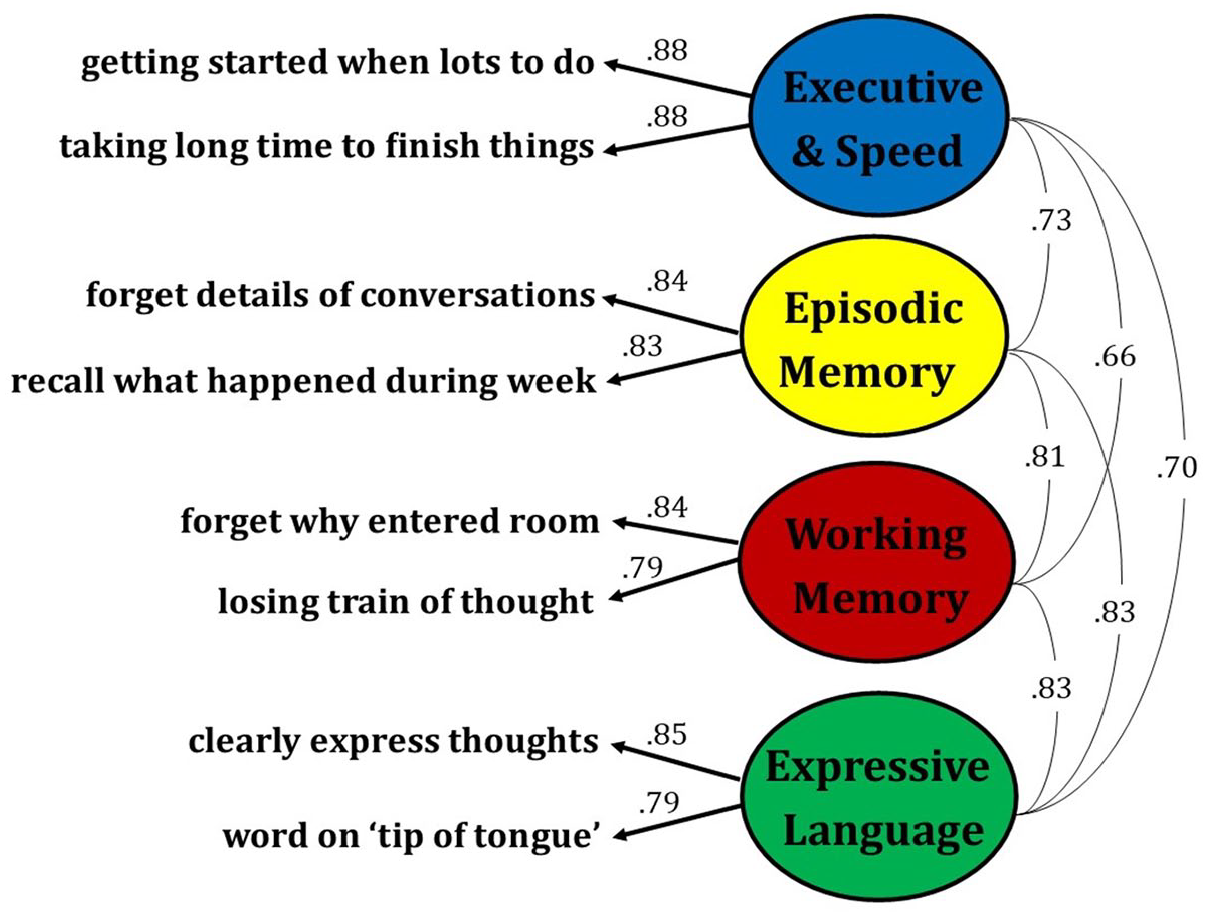

EFA in patients (n = 502) and controls (n = 350), item analyses, and confirmatory PCA in an independent sample (n = 361 patients; 150 controls) supported construction of an eight-item scale with four two-item subscales: Executive/Speed, Working Memory, Expressive Language, and Episodic Memory. Internal consistency and test–retest reliability were excellent for the total MSCS (α = 0.93, ICC = 0.95) and good for each subscale (αs: 0.83–0.87; ICCs: 0.86–0.92). MSCS showed medium-size links to cross-sectional objective cognitive impairment (η2 = 0.06) and cognitive change over time (η2 = 0.07); the traditional PDQ did not (η2s = 0.01 and 0.02).

Conclusion:

The brief MSCS is a psychometrically robust, reliable, and valid metric of patient-reported cognitive deficits in MS that holds promise for improving assessment of MS cognitive dysfunction.

Introduction

Cognitive decline is common in multiple sclerosis (MS); 1 it is therefore important to have psychometrically validated, clinically feasible patient-report tools to screen for cognitive deficits. One option is the Perceived Deficits Questionnaire (PDQ), 2 which is a 20-item scale developed in 1990 with four a priori subscales (attention/concentration, planning/organization, retrospective memory, prospective memory). The PDQ has limitations: subscales were not validated by factor analytic studies, there are no questions about expressive language deficits although word-finding difficulty is frequently reported by patients, 3 and the relatively long length of the PDQ reduces clinical feasibility due to patient burden, especially when included within a wider collection of questionnaires (e.g. fatigue, mood, and physical disability). Another option is the MS Neuropsychological Questionnaire (MSNQ), 4 which is a 15-item scale developed in 2003 without cognitive subscales; items focus heavily on attention/executive function and memory without assessment of word-finding difficulty. It is also notable that the MSNQ and PDQ were developed over two and three decades ago, respectively. Others have more recently recommended Neuro-QoL short forms including a brief questionnaire assessing communication difficulties; 5 however, even these lack questions on word-finding difficulty. This study aims to develop a brief, reliable, clinically useful measure of patient-reported cognitive difficulties with cognitive subscales validated with factor analytic techniques.

Methods

Sample

The Corinne Goldsmith Dickinson Center for Multiple Sclerosis at Mount Sinai Hospital is a tertiary care center with a catchment area encompassing the racially/ethnically diverse New York Metropolitan Area. In 2018, we established a clinic aiming to perform cognitive screenings as standard of care for all patients at our center; as such, we have shown that demographic and disease characteristics do not differ between the patients completing these cognitive screenings and a random sample of patients cared for at our MS Center. 6 The 20-item PDQ plus five language questions were completed by patients from August 2018 through August 2021. We performed an Institutional Review Board (IRB) approved retrospective chart review of clinical and patient-reported cognitive data from all patients aged 18 to 65 years and diagnosed with relapse-onset MS from 1995 onward who completed cognitive screenings. Self-reported cognitive difficulty was also collected from demographically matched persons without neurologic conditions through an IRB-exempt anonymous electronic capture questionnaire; links to this survey were sent to patients at the MS Center who were encouraged to share the link with friends and/or post on social media at their discretion.

PDQ plus language questions

The PDQ is a 20-item self-report inventory assessing frequency of cognitive difficulties as never (0), rarely (1), sometimes (2), fairly often (3), or very often (4) over the last 4 weeks. The PDQ has four a priori subscales (with five items each) assessing attention/concentration, planning/organization, retrospective memory, and prospective memory, but there is no assessment of language difficulty. Our development of five language questions to add to the PDQ was informed by clinical experience with patients and confirmed by retrospective chart review of a self-report remote electronic capture (REDCap) questionnaire completed by consecutive patients (meeting aforementioned inclusion criteria) during a 12-month period. Patients were asked “Do you have any concerns about your current cognition?” If yes, an open-field question displayed: “In a few words, please describe the cognitive problem(s) that you experience.” These questions were completed prior to completion of the PDQ to avoid biasing results. Three independent raters (see acknowledgements) reviewed all free-text responses to identify the presence or absence of self-reported language difficulty and then to group the reported difficulties into like categories.

Statistical approach

Exploratory factor analysis (EFA) was performed using R version 4.2.1 and the psych package. 7 Goodness of fit was evaluated using root mean square error of approximation (RMSEA: ⩽.05 good, ⩽.08 acceptable, ⩽.10 marginal) and Tucker–Lewis Index (TLI: ⩾.95 good, ⩾.90 acceptable, ⩾.85 marginal). The goal was to develop an empirically derived brief cognitive screener; as such, for each factor we selected for the new brief screener the two items with the highest factor loadings and highest internal consistency (Cronbach’s alpha: ⩾.90 excellent, ⩾.80 good, ⩾.70 acceptable). Next, confirmatory PCA was performed for selected items in an independent sample of patients and controls.
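As a rough illustration of the internal-consistency criterion, the sketch below computes Cronbach's alpha for a pair of items and labels it with the thresholds stated above. It is plain Python rather than the R psych package used for the analyses, and the response values are invented for demonstration only.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of item columns (one list of scores per item)."""
    k = len(items)
    n = len(items[0])
    # Total score per respondent across the k items.
    totals = [sum(col[i] for col in items) for i in range(n)]
    sum_item_var = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - sum_item_var / variance(totals))


def label_alpha(alpha):
    """Label alpha using the cut-offs given in the text."""
    if alpha >= 0.90:
        return "excellent"
    if alpha >= 0.80:
        return "good"
    if alpha >= 0.70:
        return "acceptable"
    return "below acceptable"


# Hypothetical 0-4 responses from eight respondents to two items.
item_1 = [0, 1, 2, 3, 4, 2, 1, 3]
item_2 = [0, 1, 2, 4, 4, 2, 2, 3]
alpha = cronbach_alpha([item_1, item_2])
print(round(alpha, 2), label_alpha(alpha))
```

With only two items per factor, alpha depends entirely on the correlation between the pair, which is why the item pairs were compared on this statistic.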

Results

Sample

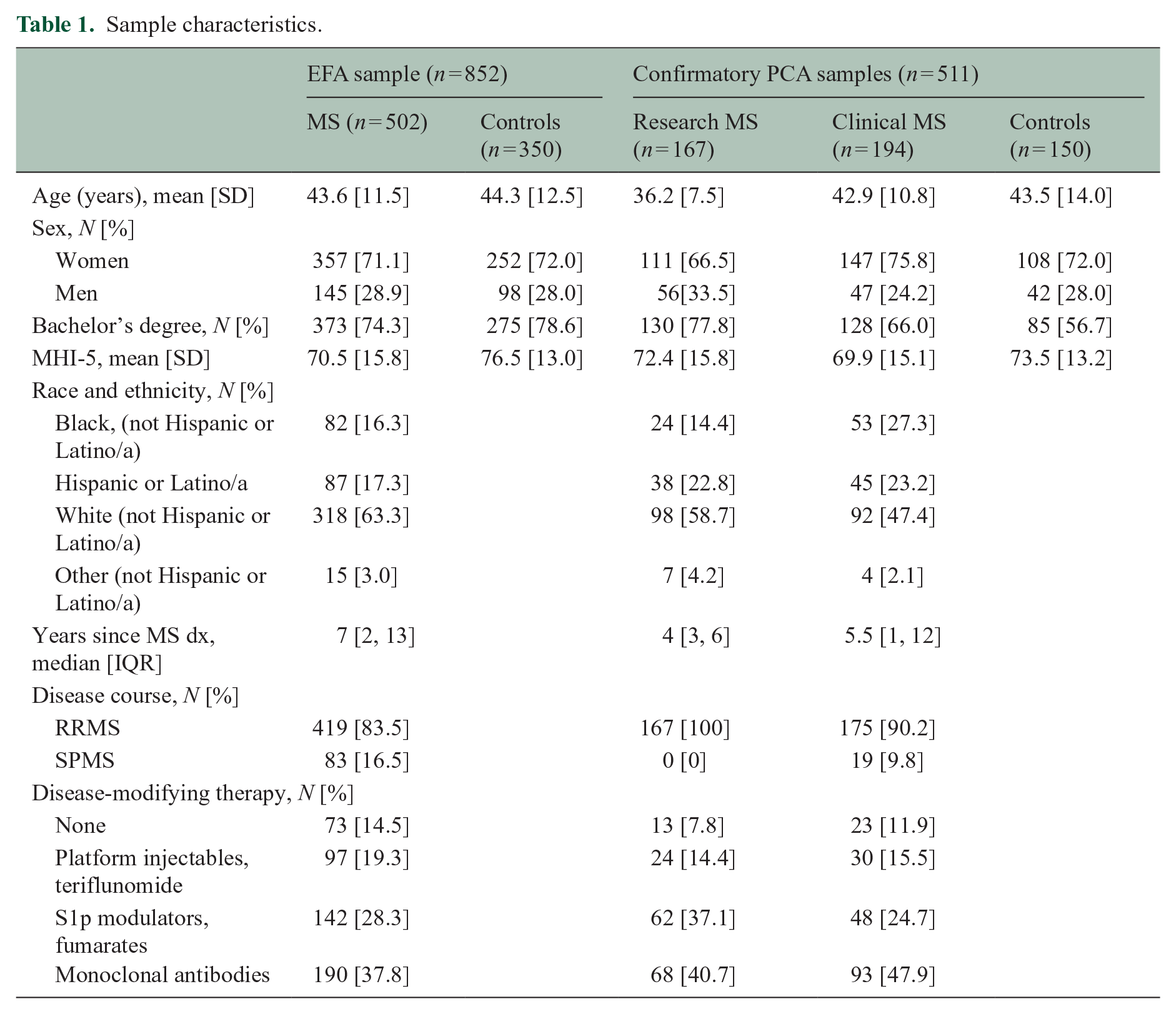

Data on the 25-item self-report cognitive questionnaire were captured from 502 persons with MS and 350 demographically matched control respondents for the EFA (Table 1).

Table 1. Sample characteristics.

Language questions

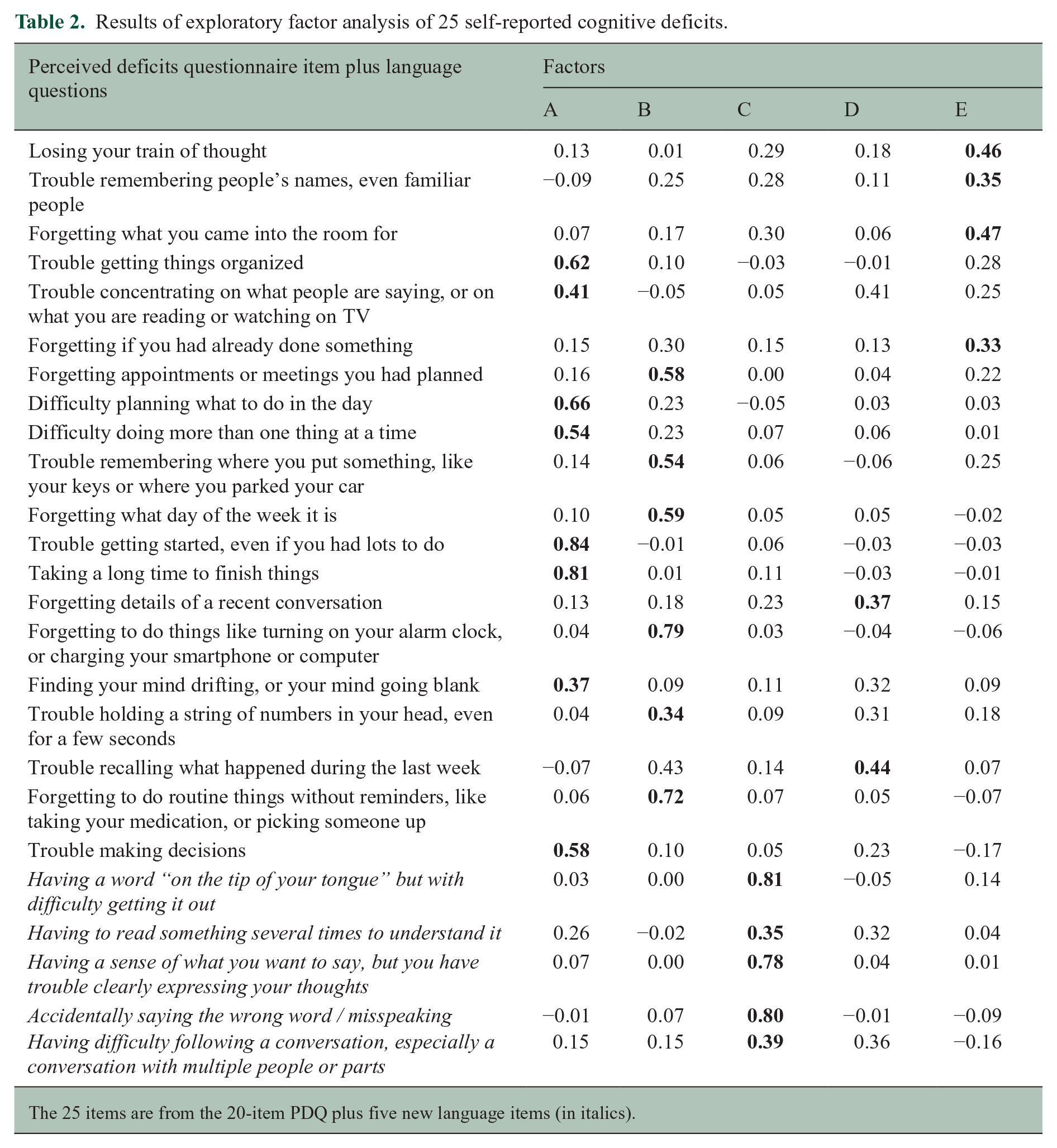

Data were captured from a subset of 319 consecutive patients with MS for validation of language questions. Of 319 patients, about half (51%, n = 163) endorsed “yes” to having concerns about cognition; free-text responses were reviewed by three independent raters to identify the presence or absence of self-reported language difficulty (interrater agreement was excellent, Kappa [95% confidence interval (CI)] of 0.85 [0.76, 0.93]). Language deficits were considered present if all three raters (n = 54) or two of three raters (n = 10) coded it present; language difficulty was reported by 39% of patients endorsing concerns about cognition (n = 64 of 163), and 20% of all patients regardless of endorsement of cognitive concerns (n = 64 of 319). Analysis of the 64 responses identified (a) word-finding difficulty (n = 46) with descriptions of trouble retrieving known words (i.e. “tip of the tongue” phenomenon), (b) difficulty clearly expressing thoughts (n = 13), (c) using the wrong word or misspeaking (n = 10), (d) difficulties comprehending text or discourse (n = 6), and (e) other and/or vague responses (n = 6; e.g. “speech problems,” misspelling). Analyses support use of the five language questions (last five items in Table 2).
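The consensus rule and the reported percentages can be reproduced with a few lines of Python. This is only a sketch: the rating tuples are reconstructed from the counts given above (54 unanimous, 10 two-of-three), and the split of the remaining 99 responses between one vote and no votes is an invented placeholder.

```python
def consensus_present(ratings, min_votes=2):
    """Language difficulty counts as present when at least two of three raters coded it."""
    return sum(ratings) >= min_votes


def percent(part, whole):
    """Percentage rounded to the nearest whole number."""
    return round(100 * part / whole)


# One tuple of three 0/1 ratings per free-text response (163 responses in total).
ratings = (
    [(1, 1, 1)] * 54    # all three raters coded language difficulty
    + [(1, 1, 0)] * 10  # two of three raters coded it
    + [(1, 0, 0)] * 5   # hypothetical: a single rater coded it (not counted)
    + [(0, 0, 0)] * 94  # hypothetical: no rater coded it
)

n_language = sum(consensus_present(r) for r in ratings)
print(n_language)                     # 64
print(percent(n_language, 163))       # 39 (% of patients with cognitive concerns)
print(percent(n_language, 319))       # 20 (% of all patients)
```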

Table 2. Results of exploratory factor analysis of 25 self-reported cognitive deficits.

Note: The 25 items are from the 20-item PDQ plus five new language items (in italics).

EFA

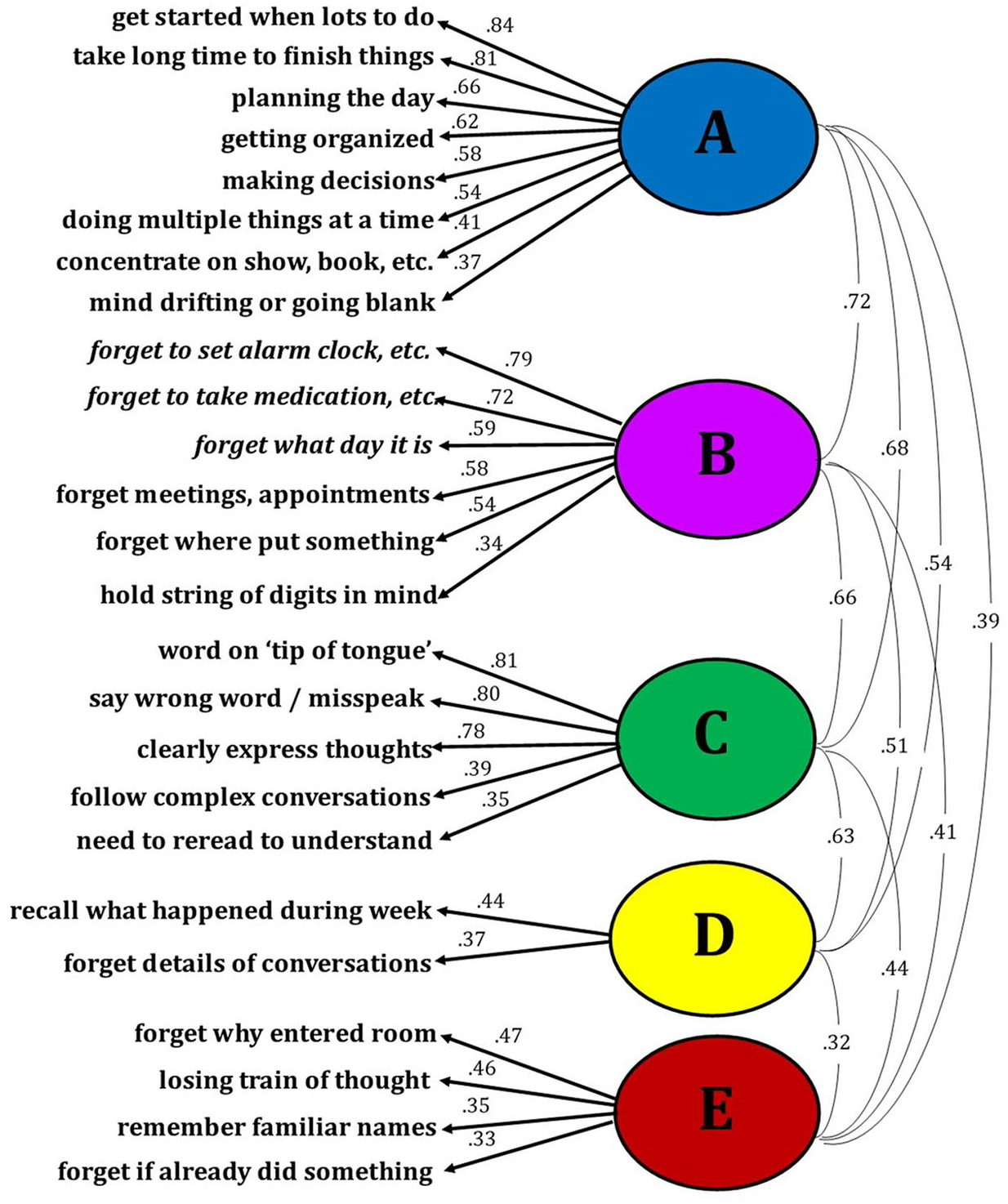

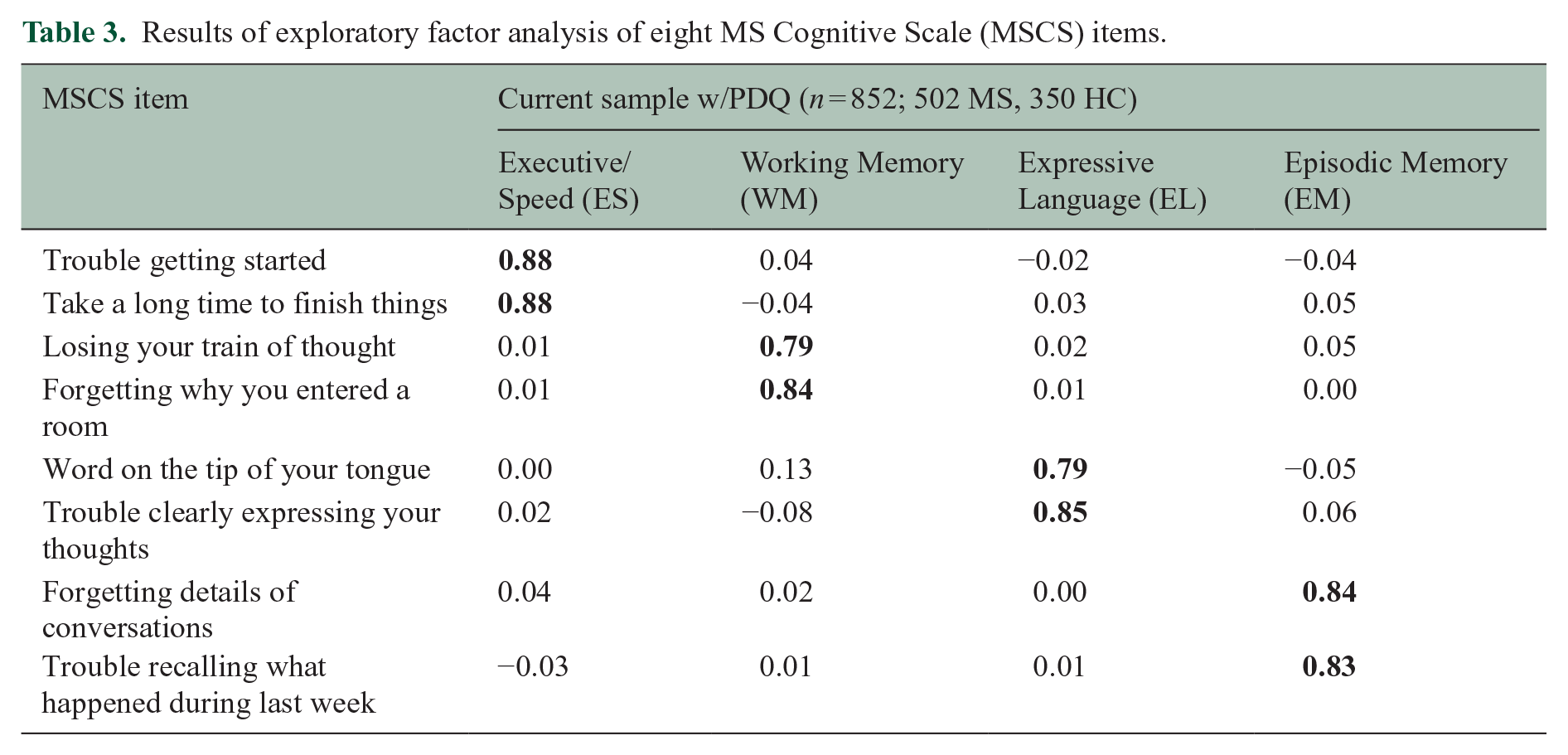

Responses to the 25 questions by 852 consecutive respondents (502 patients, 350 controls) were analyzed with EFA. The Kaiser–Meyer–Olkin measure of sampling adequacy was .98, suggesting excellent factorability. Results from the parallel analysis, in concordance with the scree plot, suggested that a five-factor solution with oblimin rotation had excellent fit (root mean square error of approximation [RMSEA] = .05, TLI = .962; Table 2, Figure 1).

Of the four items with highest loadings for each factor, we identified the two with the highest internal consistency (Cronbach’s alpha). For factor A, internal consistency was good for “get started when lots to do” and “take long time to finish things” (α = 0.88). For factor B, internal consistency was acceptable for “forget to take medication, etc.” and “forget meetings, appointments” (α = 0.79). For factor C, internal consistency was good for “word on ‘tip of tongue’” and “clearly expressing thoughts” (α = 0.85). For factor D, internal consistency was good for “forgetting details of a recent conversation” and “trouble recalling what happened during the last week” (α = 0.85). For factor E, internal consistency was good for “forget why entered room” and “losing train of thought” (α = 0.85).

Internal consistency was good for all two-item pairs across factors except factor B. Further inspection revealed possible floor effects for all four items with highest loadings for factor B, with <10% of all respondents endorsing “fairly often” or “very often.” These items are four of the five items of the PDQ prospective memory subscale; means for this scale were much lower than all other PDQ subscales in the original publication. 2 Only one of the other 21 questions showed a possible floor effect (“follow complex conversations”). To derive a brief scale with the best reliability and clinical relevance, we excluded factor B. EFA with the remaining eight items yielded four factors (Table 3, Figure 2), which are best characterized as Executive/Speed, Episodic Memory, Working Memory, and Expressive Language.
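A minimal scoring sketch for the resulting eight-item scale follows. The item keys are hypothetical shorthand for the item wordings quoted above, and the assignment of item pairs to named subscales is an assumption inferred from item content, not a published scoring key. The total is taken as the mean of the eight 0–4 responses, which is how the MSCS is summarized in the validity analyses; taking each subscale as the mean of its two items is likewise an assumption.

```python
from statistics import mean

# Hypothetical item keys; the pairing of items with subscale names is assumed.
SUBSCALES = {
    "Executive/Speed": ("get_started", "slow_to_finish"),
    "Expressive Language": ("tip_of_tongue", "expressing_thoughts"),
    "Episodic Memory": ("recent_conversation", "last_week"),
    "Working Memory": ("why_entered_room", "train_of_thought"),
}


def score_mscs(responses):
    """Return (total, subscale scores) from a dict of item key -> response (0-4)."""
    for items in SUBSCALES.values():
        for item in items:
            if responses[item] not in (0, 1, 2, 3, 4):
                raise ValueError(f"response for {item!r} must be an integer 0-4")
    subscale_scores = {
        name: mean(responses[item] for item in items)
        for name, items in SUBSCALES.items()
    }
    total = mean(responses[item] for items in SUBSCALES.values() for item in items)
    return total, subscale_scores


example = {
    "get_started": 3, "slow_to_finish": 2,
    "tip_of_tongue": 4, "expressing_thoughts": 3,
    "recent_conversation": 1, "last_week": 1,
    "why_entered_room": 2, "train_of_thought": 2,
}
total, subscales = score_mscs(example)
print(total, subscales)
```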

Figure 1. Exploratory factor analysis of 25 self-reported cognitive deficits.

Table 3. Results of exploratory factor analysis of eight MS Cognitive Scale (MSCS) items.

Figure 2. Exploratory factor analysis of the eight MSCS items.

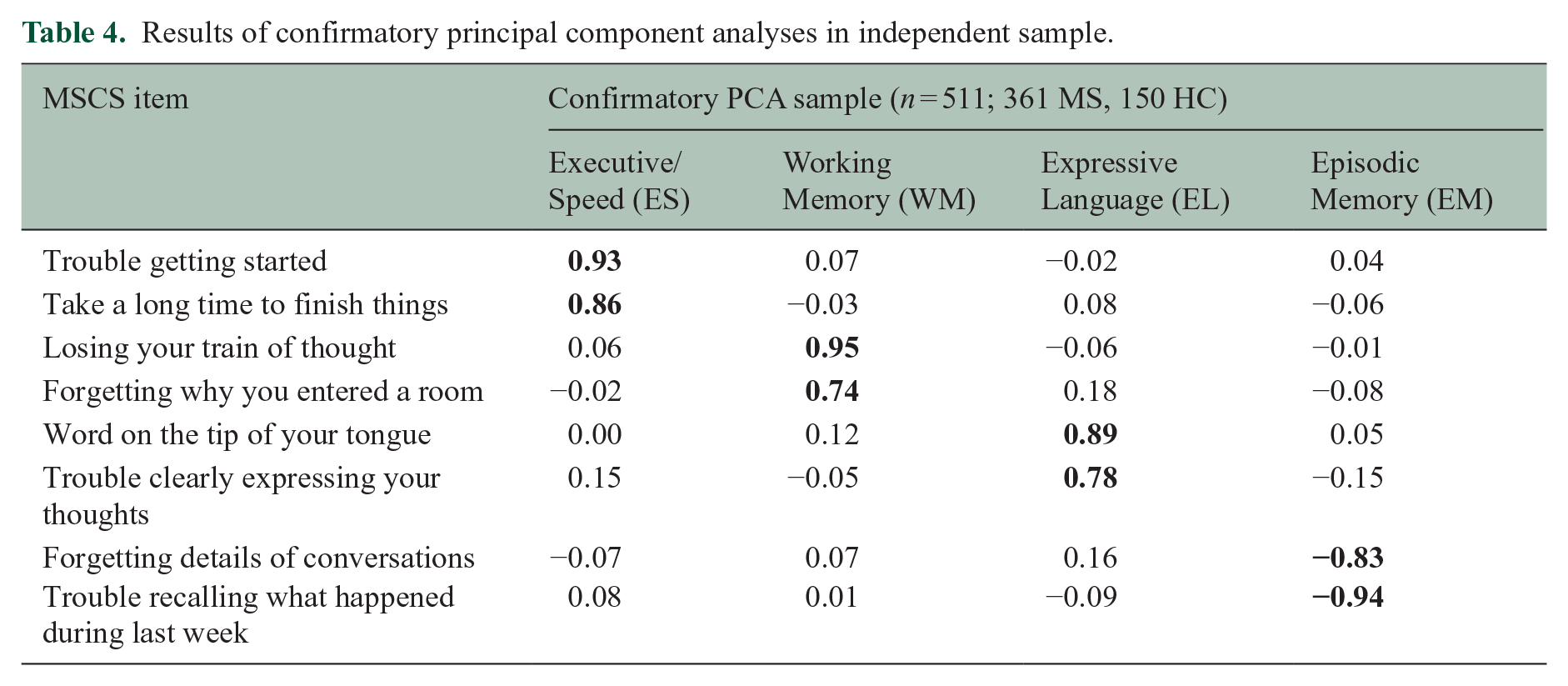

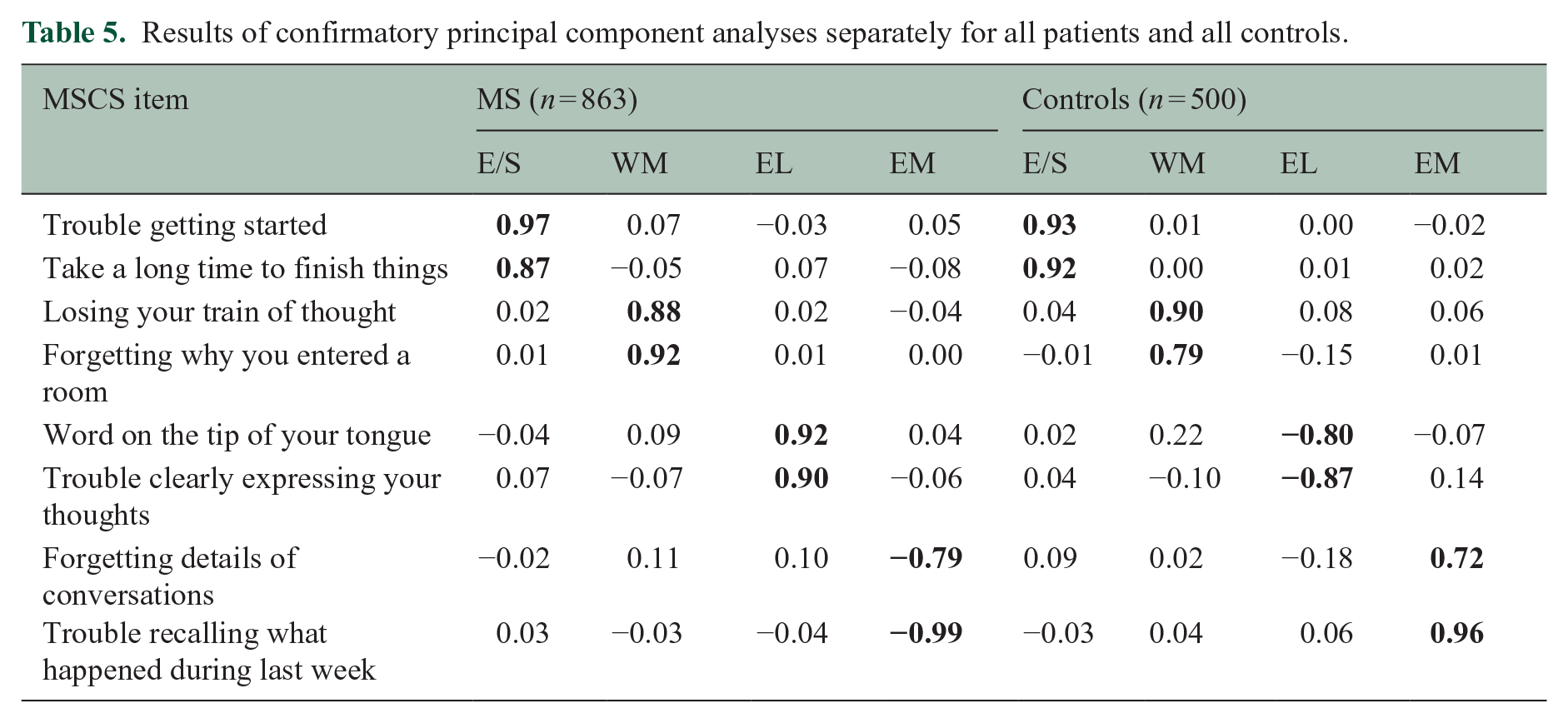

Confirmatory Principal Components Analyses

To replicate and verify the robustness of the scale, confirmatory principal components analyses (PCAs; four components, oblimin rotation) with the eight selected items were performed in an independent sample combining a research sample of 167 persons with relapsing-remitting MS who completed the full 25-item survey, a clinical sample of 194 patients with MS who completed the brief scale, and a sample of 150 respondents without neurologic conditions who completed the brief scale. As shown (Table 4), confirmatory PCA in this independent sample supported the factor structure of the eight-item MSCS, which was also shown when performing separate PCAs for all patients (n = 863) and all controls (n = 500; Table 5).

Table 4. Results of confirmatory principal component analyses in independent sample.

Table 5. Results of confirmatory principal component analyses separately for all patients and all controls.

Reliability

Internal consistency (Cronbach’s alpha) of the eight-item scale among patients who completed the brief form (n = 194) was excellent for the total MSCS (α = 0.93) and good for each subscale (Executive/Speed, α = 0.85; Episodic Memory, α = 0.85; Working Memory, α = 0.83; Expressive Language, α = 0.87). Test–retest reliability was assessed with intraclass correlation coefficients (ICC; two-way mixed analysis of variance [ANOVA]; absolute agreement between single scores) 8 for the brief form in an independent sample of 40 consecutive patients with MS (mean [SD] age: 45.7 [12.3] years; 31 women, 19 men; 62.5% White non-Latino; inter-test interval median [interquartile range (IQR)]: 2 [1, 3] days). Reliability (ICC [95% CI]) was excellent for the total MSCS (0.95 [0.90, 0.97]) and good to excellent for subscales (Executive/Speed: 0.92 [0.85, 0.96]; Episodic Memory: 0.91 [0.83, 0.95]; Working Memory: 0.86 [0.75, 0.92]; and Expressive Language: 0.88 [0.70, 0.94]).
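For readers unfamiliar with the absolute-agreement, single-score ICC, the following sketch computes it from the two-way ANOVA mean squares as (MSR − MSE) / (MSR + (k − 1)·MSE + k·(MSC − MSE)/n), where MSR, MSC, and MSE are the mean squares for subjects, sessions, and error. It is a plain-Python illustration of the standard formula, not the software used in the study, and the scores are invented.

```python
def icc_absolute_single(scores):
    """ICC for absolute agreement between single scores.

    scores: one row per patient, one column per testing session.
    """
    n, k = len(scores), len(scores[0])
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between patients
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between sessions
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical test and retest scores for five patients.
identical = [[0, 0], [1, 1], [2, 2], [3, 3], [4, 4]]
shifted = [[0, 1], [1, 2], [2, 3], [3, 4], [4, 5]]  # retest is uniformly 1 point higher
print(icc_absolute_single(identical))
print(icc_absolute_single(shifted))
```

The second example shows why absolute agreement is the stricter choice: a uniform shift between sessions leaves the rank order intact but still lowers the coefficient.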

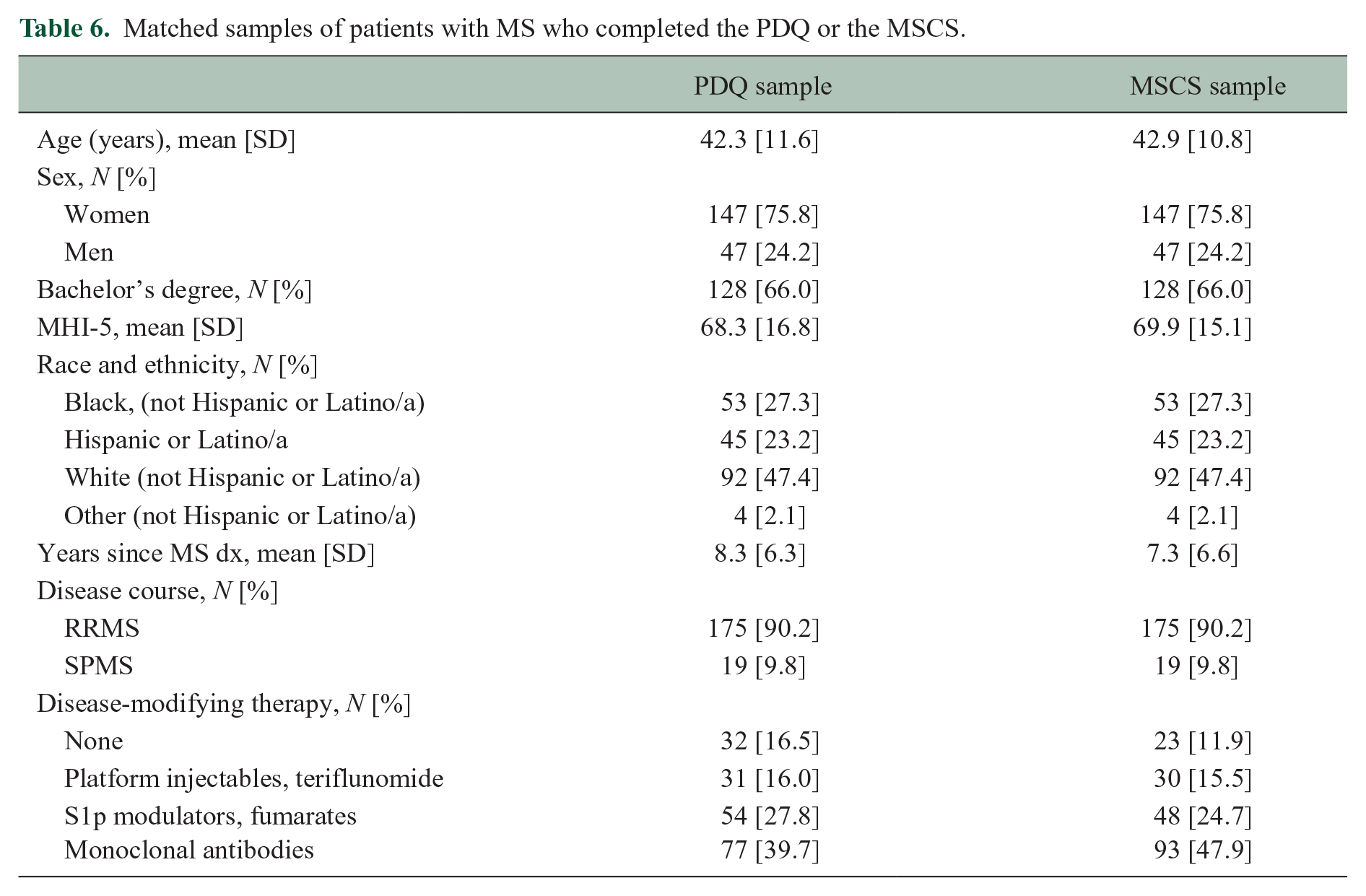

Construct validity: link to cross-sectional objective cognitive performance

A brief screening battery adopted for MS (BICAMS) 9 consists of a high-sensitivity information processing task (Symbol Digit Modalities Test, SDMT) 10 and measures of word-list learning and object-location memory. Our modified version of this battery includes SDMT, word-list total learning on the Hopkins Verbal Learning Test, Revised (HVLT-R), 11 and object-location memory on CANTAB Paired Associate Learning (PAL; tablet-based task not affected by sensorimotor ability, see online Supplement). 12 Task performance data were available for 502 consecutive patients who completed the full 20-item PDQ (August 2018 through August 2021) and 194 consecutive patients who completed the MSCS (which replaced the PDQ after August 2021). Raw scores were converted to age-adjusted norm-referenced z-scores relative to each test’s healthy standardization sample, which were then used to characterize impairment on each task as performance at least 1.5 standard deviations below the normative mean (z score ⩽ −1.5). Patients were categorized as having impairment on 0, 1, or 2+ tests. We then matched patients from the larger PDQ sample to the smaller MSCS sample for age, sex, race/ethnicity, education, MS phenotype, time since diagnosis, and mood (MHI-5), resulting in extremely well-matched samples of (a) 194 patients who completed the full 20-item PDQ and (b) 194 patients who completed the MSCS (Table 6).
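The impairment classification described above amounts to a simple threshold rule, sketched here in Python. The normative mean and standard deviation in the example are placeholders, not values from any test manual.

```python
def z_score(raw, norm_mean, norm_sd):
    """Norm-referenced z-score for a raw test score."""
    return (raw - norm_mean) / norm_sd


def impairment_level(z_scores, cutoff=-1.5):
    """Classify a patient as impaired on 0, 1, or 2+ tests (z <= cutoff counts as impaired)."""
    n_impaired = sum(z <= cutoff for z in z_scores)
    return "2+" if n_impaired >= 2 else str(n_impaired)


# Hypothetical z-scores for SDMT, HVLT-R, and PAL.
print(z_score(40, norm_mean=55, norm_sd=10))   # -1.5 with these placeholder norms
print(impairment_level([-1.5, 0.2, -0.3]))     # impaired on one test
print(impairment_level([-2.0, -1.6, 0.4]))     # impaired on two tests
```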

Table 6. Matched samples of patients with MS who completed the PDQ or the MSCS.

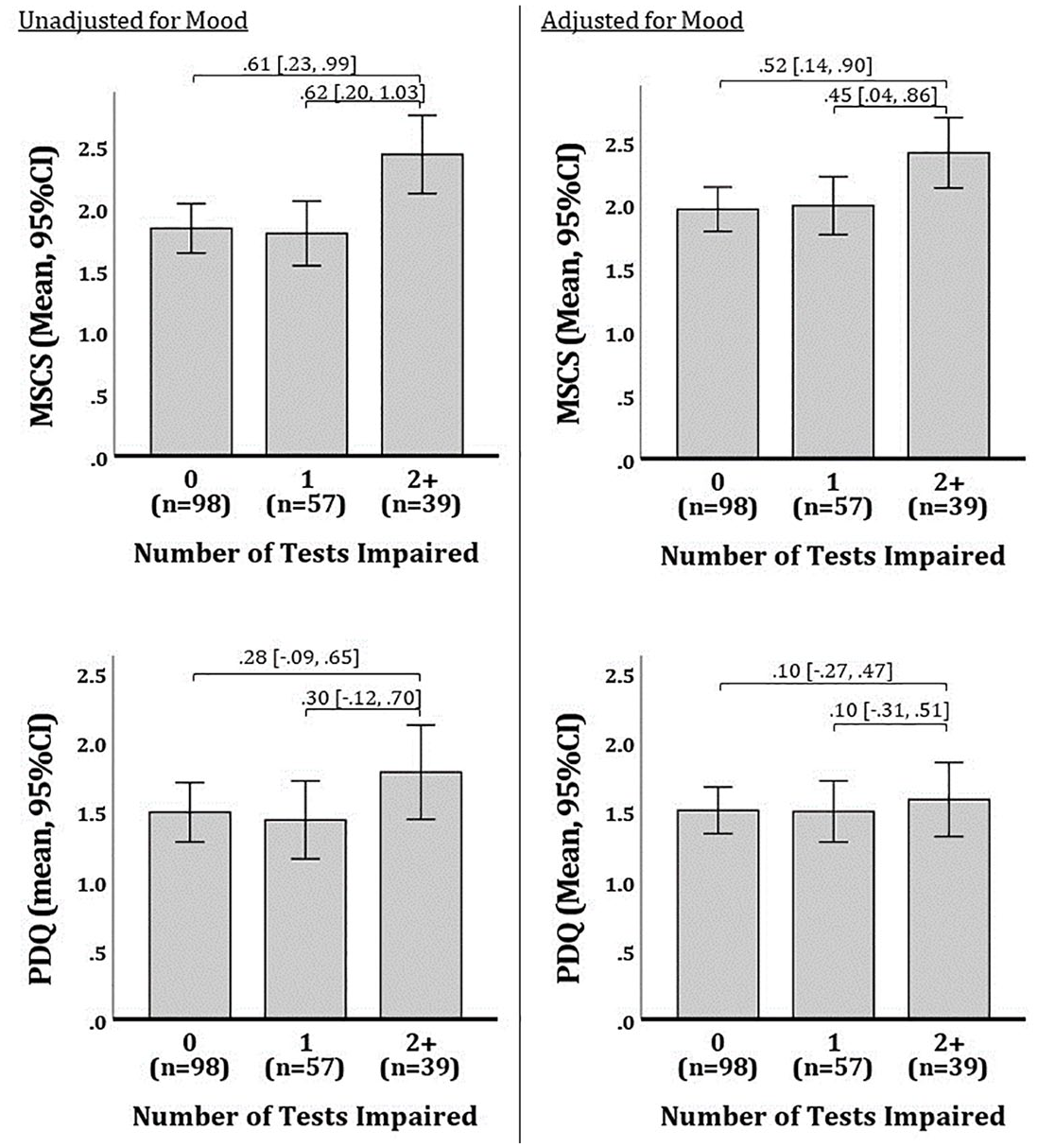

One-way ANOVAs tested differences in the PDQ (mean of 20 items) and the MSCS (mean of eight items) across patients with impairment on 0, 1, or 2+ tests. As shown (Figure 3), MSCS differed across levels of cognitive impairment (F[2, 193] = 5.85, p = 0.003; η2 [95% CI] = 0.06 [0.01, 0.13]) whereby patient-reported difficulty was worse among patients with 2+ impaired tests than those with ⩽1 impaired test. In contrast, there was no difference in PDQ across levels of cognitive impairment (F[2, 193] = 1.34, p = 0.265; η2 [95% CI] = 0.01 [0.00, 0.06]). One-way ANOVAs were repeated after adjusting MSCS and PDQ for mood (MHI-5, using GLM). Again, as shown (Figure 3), there were differences in MSCS across levels of cognitive impairment (F[2, 193] = 3.83, p = 0.023; η2 [95% CI] = 0.04 [0.00, 0.10]), but PDQ did not differ across levels of impairment (F[2, 193] = 0.15, p = 0.864; η2 [95% CI] = 0.00 [0.00, 0.02]).
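To make the effect-size reporting concrete, the sketch below computes a one-way ANOVA F statistic and η2 (between-group sum of squares over total sum of squares) in plain Python, and shows the algebraic shortcut η2 = F·df1 / (F·df1 + df2). The group data are invented; applying the shortcut to the F values and degrees of freedom as printed above reproduces the reported η2 values to two decimals.

```python
def one_way_anova(groups):
    """Return (F, df_between, df_within, eta_squared) for a list of groups."""
    values = [x for g in groups for x in g]
    grand = sum(values) / len(values)
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between = len(groups) - 1
    df_within = len(values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_sq = ss_between / (ss_between + ss_within)
    return f_stat, df_between, df_within, eta_sq


def eta_squared_from_f(f_stat, df_between, df_within):
    """Recover eta squared from a reported F statistic and its degrees of freedom."""
    return f_stat * df_between / (f_stat * df_between + df_within)


# Hypothetical self-report scores for patients impaired on 0, 1, and 2+ tests.
f_stat, df_b, df_w, eta_sq = one_way_anova([[1, 2, 3], [2, 3, 4], [5, 6, 7]])
print(f_stat, df_b, df_w, eta_sq)

# Shortcut applied to the statistics as printed in the text.
print(round(eta_squared_from_f(5.85, 2, 193), 2))  # MSCS, unadjusted
print(round(eta_squared_from_f(1.34, 2, 193), 2))  # PDQ, unadjusted
```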

Figure 3. Differences in patient-reported cognitive difficulty across levels of cognitive impairment in matched samples completing the PDQ or MSCS.

Construct validity: representativeness of scale to cognitive difficulties in MS

To evaluate whether the scale overlooked any prevalent cognitive difficulties, we reviewed open-ended descriptions of cognitive difficulties by 464 patients (aforementioned 163 responses used to develop language items, plus an additional 301 responses from subsequent patients). As detailed within the online Supplement, no other prevalent cognitive difficulties were identified beyond attention/executive function, working memory, expressive language, and episodic memory.

Responsiveness to change in objective cognitive performance

To examine responsiveness of the MSCS to change in cognition over time (relative to the PDQ), retrospective chart review identified a consecutive sample of 120 patients with annual follow-ups who completed the full PDQ at their first visit (V1) and second visit (V2), and completed the MSCS at their third visit (V3; characteristics at V1: mean [SD] age 45.5 [11.7] years; 72.5% female; 65.0% non-Latino White; 23.3% progressive course; median [IQR] 6.5 [1.5, 13.0] years since diagnosis). Patients completed aforementioned cognitive tasks (SDMT, HVLT-R, CANTAB PAL) at each visit (alternate forms used as appropriate); performance was converted to normative z-scores and averaged into composite z-scores for each time point. Cognitive change scores were derived as V2 minus V1, and V3 minus V1. Changes in PDQ and MSCS raw scores were derived as V2 minus V1, and V3 minus V1, respectively (V1 MSCS derived from its eight items within larger item pool). All values were winsorized (1.5*IQR) to avoid undue impact of outliers; there was no skewness (all <±0.45) or kurtosis (all <±0.31) for any values. Dependent t-tests showed no difference in cognitive change (mean [SD]) between V2-V1 (0.07 [0.45]) versus V3-V1 (0.10 [0.47]; t[119] = 0.56, p = 0.575, d = 0.05), and no difference in patient-reported cognitive change on PDQ V2-V1 (0.06 [0.45]) versus MSCS V3-V1 (0.07 [0.54]; t[119] = 0.16, p = 0.874, d = 0.01). Pearson correlations examined associations between (a) V2-V1 PDQ change and cognitive change, and (b) V3-V1 MSCS change and cognitive change. Cognitive change was not related to PDQ change (r = −0.14, p = 0.115), but it was related to MSCS change (r = −0.23, p = 0.011); findings were maintained when re-analyzed as partial correlations adjusting for changes in mood (MHI-5; PDQ: r = −0.18, p = 0.057; MSCS: r = −0.21, p = 0.025).
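The change-score pipeline can be sketched as follows. The winsorizing rule shown (clamping values beyond 1.5 × IQR from the quartiles back to those fences) is one common reading of "winsorized (1.5*IQR)" and is an assumption, as is the quartile method; the data are invented.

```python
from math import sqrt
from statistics import mean, quantiles


def change_scores(baseline, follow_up):
    """Follow-up minus baseline for each patient."""
    return [b - a for a, b in zip(baseline, follow_up)]


def winsorize_iqr(values, k=1.5):
    """Clamp values lying beyond k * IQR from the first and third quartiles."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    low, high = q1 - k * (q3 - q1), q3 + k * (q3 - q1)
    return [min(max(v, low), high) for v in values]


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


# Hypothetical example: one extreme value is pulled back to the upper fence.
print(winsorize_iqr([1, 2, 3, 4, 5, 6, 7, 8, 100]))

# Hypothetical composite z-scores and self-report scores at two visits.
cog_change = change_scores([0.1, -0.4, 0.3, 0.0], [-0.2, -0.3, 0.5, -0.6])
report_change = change_scores([1.0, 2.0, 1.5, 0.5], [1.5, 2.0, 1.0, 1.5])
print(pearson_r(winsorize_iqr(cog_change), winsorize_iqr(report_change)))
```

A negative correlation is the expected direction here, since lower cognitive z-scores over time should accompany higher self-reported difficulty.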

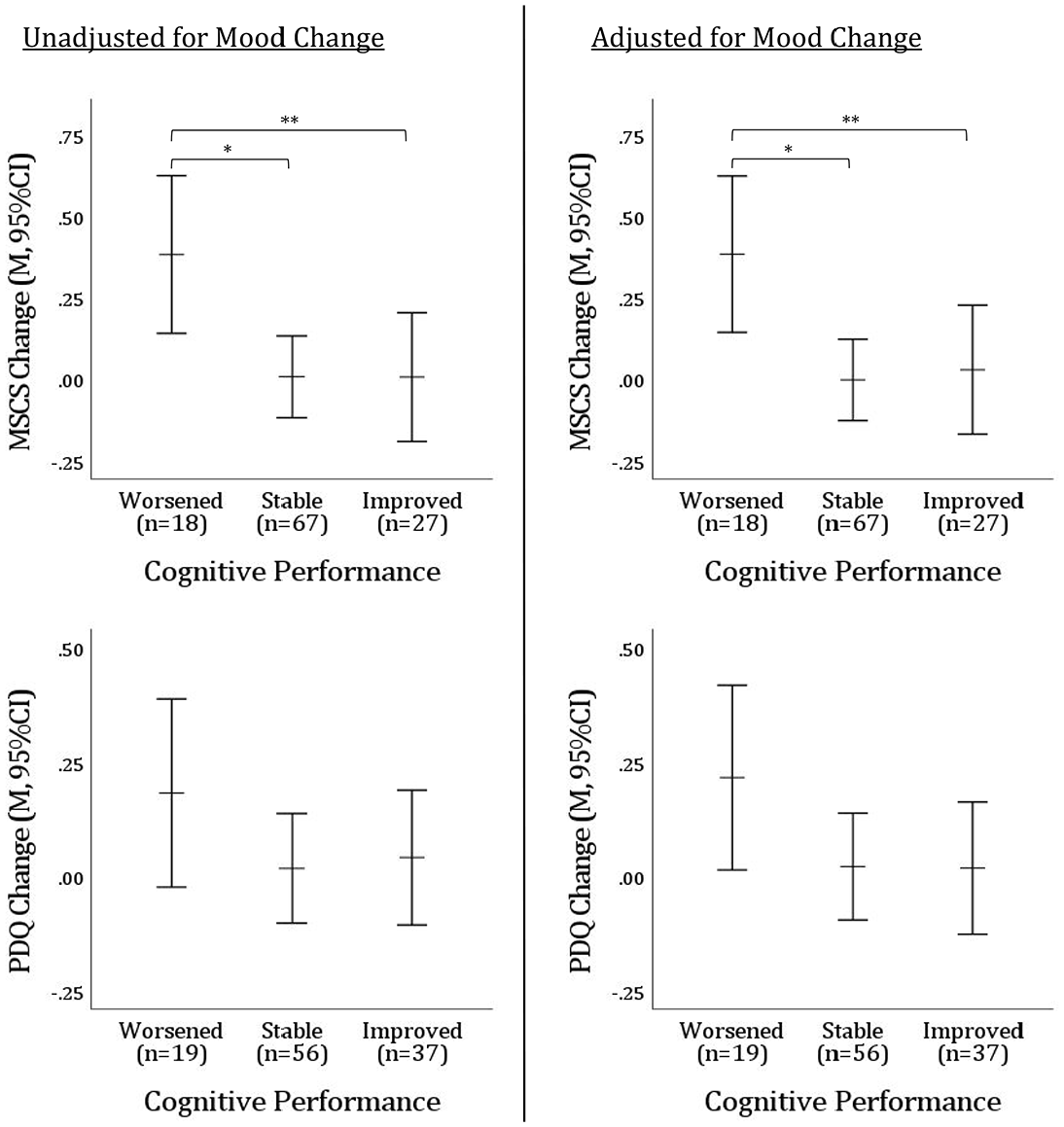

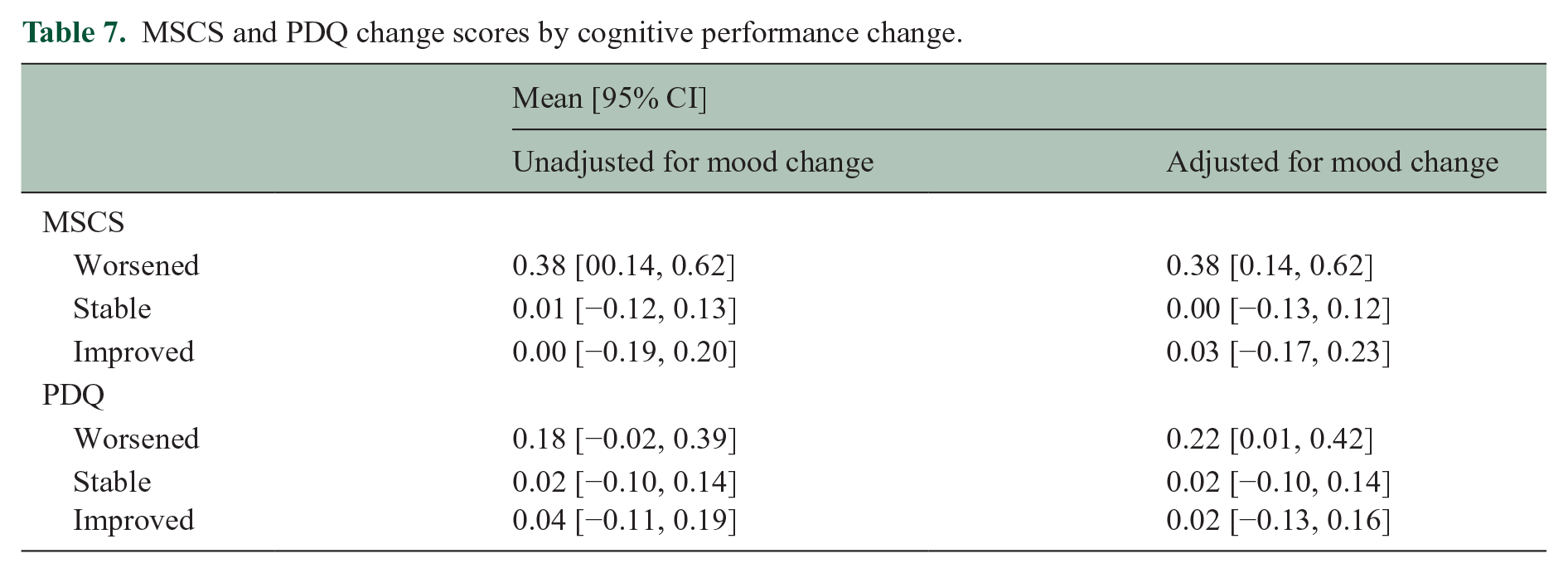

Finally, ANOVAs tested differences in PDQ change and MSCS change across patients classified as cognitively worsened, stable, or improved on tasks (SDMT, HVLT, PAL) for V2-V1 and V3-V1, respectively (worsened: ⩾1 SD lower on ⩾1 task; improved: ⩾1 SD higher on ⩾1 task; stable: <1 SD change on all tasks). Eight patients (four in each comparison) were excluded due to mixed cognitive change (i.e. worsened on one task, improved on another). As shown (Figure 4, Table 7), MSCS change (F[2, 109] = 3.97, p = 0.022, ηp2 = 0.07) but not PDQ change (F[2, 109] = 0.39, p = 0.387, ηp2 = 0.02) differed across cognitive change groups; this pattern remained even when re-analyzed with analyses of covariance (ANCOVAs) adjusting for changes in mood (MHI-5; MSCS: F[2, 108] = 4.10, p = 0.019, ηp2 = 0.07; PDQ: F[2, 108] = 1.53, p = 0.221, ηp2 = 0.03).
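The grouping rule above can be written as a short function. This sketch assumes each task's change is already expressed in normative z-score (SD) units and returns "mixed" for the pattern that led to exclusion.

```python
def classify_change(z_changes, threshold=1.0):
    """Classify per-task z-score changes as worsened, improved, stable, or mixed."""
    worsened = any(c <= -threshold for c in z_changes)
    improved = any(c >= threshold for c in z_changes)
    if worsened and improved:
        return "mixed"  # excluded from the ANOVAs
    if worsened:
        return "worsened"
    if improved:
        return "improved"
    return "stable"


# Hypothetical changes on SDMT, HVLT, and PAL for four patients.
print(classify_change([-1.2, 0.1, -0.4]))  # worsened
print(classify_change([0.3, 1.0, 0.2]))    # improved
print(classify_change([0.5, -0.9, 0.0]))   # stable
print(classify_change([-1.1, 1.3, 0.0]))   # mixed
```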

Figure 4. Differences in patient-reported cognitive change on MSCS versus PDQ across levels of objective cognitive change.

Table 7. MSCS and PDQ change scores by cognitive performance change.

Discussion

We report good reliability and validity for the Multiple Sclerosis Cognitive Scale (MSCS), a new eight-item patient-report cognitive questionnaire with four factor analytically derived subscales (executive/speed, working memory, expressive language, episodic memory). The MSCS is provided in Appendix 1. MSCS showed medium-sized cross-sectional and longitudinal relationships to objective general cognitive impairment and cognitive changes, which remained statistically significant even when adjusting for mood. In contrast, the traditional PDQ was unrelated to objective cross-sectional impairment or longitudinal change with and without adjusting for mood, despite having 2.5 times as many items as the MSCS. It may be that patients respond more thoughtfully when there are fewer items and that MSCS items better represent the cognitive problems experienced by persons living with MS, especially given assessment of expressive language difficulty (which is missing from existing patient-report cognitive scales).

Development of the MSCS aligns with the Consensus-based Standards for the Selection of Health Measurement Instruments (COSMIN Taxonomy of Measurement Properties). 13 Herein, we have demonstrated that the MSCS is reliable, as indicated by good test–retest reliability and good internal consistency, with a factor structure established using EFA and verified independently using PCA. The full-scale MSCS score is also construct valid, as evidenced by its link with objective cognitive performance and its responsiveness to change in cognitive performance over time. The next step is to evaluate additional measurement properties of the scale, for example cross-cultural validity and content validity of the subscales (i.e. relationships with objective estimates of cognitive performance in these domains). Published norms were used to quantify cognitive impairment and the design was retrospective, introducing limitations that could be overcome with a matched healthy control group and prospective design in future work.

The MSCS holds promise as a brief, reliable, psychometrically robust self-report scale with good links to objective cognitive impairment and responsiveness to cognitive change. Widespread adoption of valid and reliable patient-reported outcomes in research and clinical settings will help advance the field toward meaningful interventions.

Supplemental Material

Supplemental material, sj-docx-1-msj-10.1177_13524585241309805 for Multiple Sclerosis Cognitive Scale (MSCS): A brief psychometrically robust metric of patient-reported cognitive difficulty by James F Sumowski and Joshua Sandry in Multiple Sclerosis Journal

Footnotes

Appendix 1: Multiple Sclerosis Cognitive Scale (MSCS)

Please check the box to indicate how frequently during the past month you experienced:

Acknowledgements

The authors thank the patients, faculty, and staff of the Corinne Goldsmith Dickinson Center for Multiple Sclerosis. Special thanks to Jordyn Anderson, PsyD, Hanaan Bing-Canar, PhD, and Emily Dvorak, PhD for their work as independent raters of responses.

Data Availability

Data are available from the corresponding author upon request.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded in part by the National Institute of Child Health and Development (NICHD) within the National Institutes of Health (NIH; R01 HD082176 to JFS).
