Abstract

Background:

Monitoring of cognition in multiple sclerosis (MS) is critical. Traditional cognitive testing is resource intensive and insensitive to subtle changes. Digital tests could address this need; however, their long-term usability remains unexplored.

Objectives:

To determine the long-term acceptability and feasibility of digital cognitive measures in MS.

Methods:

Participants with relapsing or secondary progressive MS were prospectively enrolled. MSReactor, a web-based test evaluating processing speed, attention and working memory, was performed 6-monthly for up to 36 months. Patient acceptability, anxiety, depression and quality of life were collected concurrently. Correlations between test acceptability, psychosocial measures, physical disability and cognition were analysed using Spearman’s correlation.

Results:

This study included participants with complete data at 12 (n = 601), 24 (n = 280) and 36 (n = 317) months. Attrition after 12 months was low (3.5%). Acceptability of MSReactor was high, although interest and enjoyment decreased slightly. Minor correlations were observed between reduced acceptability and increased anxiety, depression and disability and lower quality of life.

Conclusion:

Long-term cognitive monitoring was highly acceptable. We identified characteristics, such as increased anxiety, that were associated with reduced acceptability. Patients with these characteristics may benefit from support to maintain monitoring. These findings underscore the potential for integrating such tools into MS care.

Introduction

Cognitive impairment can affect up to 65% of people living with multiple sclerosis (pwMS), with processing speed and episodic memory often affected.1,2 This can significantly reduce quality of life (QoL) and ability to perform daily activities, such as employment. 3 Monitoring cognitive decline, particularly for early cognitive change, has been identified as a priority in MS management. 4 This would allow the inclusion of cognitive assessment in routine care and the evaluation of the effectiveness of therapies in slowing cognitive decline.

Although sensitive in detecting cognitive impairment, standard neuropsychological tests are less responsive to changes in mild cognitive impairment.4,5 An ideal cognitive monitoring test should be brief, easy to administer, assess multiple cognitive domains 6 and have minimal practice effects.7,8 Importantly, the test should be acceptable to the patient to maintain high levels of adherence. Computer-based tests meet many of these requirements and may enable precise tracking of cognitive performance. Previous studies have shown that computerised cognitive batteries are useable and reliable for people of different age groups, both healthy and impaired.9,10

MSReactor is a web-based, reaction time test that efficiently assesses some of the most affected cognitive domains in pwMS, psychomotor (processing) speed, visual attention and working memory. 11 In earlier work, 11 MSReactor testing was feasible to implement in MS clinics, and task performance correlated with the Symbol Digit Modalities Test (SDMT).12,13 MSReactor was well received by participants at baseline testing, with 96% being happy (or neutral) with repeat testing and only 1% indicating that the test made them feel very anxious. A recent review on smartphone-based cognitive monitoring in MS found that although the reported acceptability of these tests was generally high, there were significant attrition rates in longitudinal testing. 14 Despite the increasing number of computerised cognitive tests available, 4 no studies have formally evaluated the long-term acceptability or feasibility for pwMS. Our study aims to evaluate the long-term acceptability of digital cognitive monitoring in pwMS using MSReactor over 36 months.

Methods

Standard protocol approvals, registrations and patient consents

Local human research ethics committees (Melbourne Health and Alfred Health) approved study designs for the prospective studies contributing to the larger cognitive monitoring programme. All participants provided written informed consent prior to any data collection.

Participants and recruitment

All participants were enrolled in a larger cognitive monitoring programme, comprising two prospective studies as previously described.11,15 Adults diagnosed with relapsing-remitting MS or secondary progressive MS were prospectively enrolled between February 2016 and December 2019 across four tertiary clinics in Australia. One study restricted enrolment to those with an Expanded Disability Status Scale (EDSS) of below 4, while the other had no restriction. Patients with significant upper limb, visual or cognitive impairment (as determined by the treating clinician) that could interfere with participation in the computerised MSReactor test were excluded.

Study design

We pragmatically included all participants who had completed at least 12 months of MSReactor testing with surveys at baseline and 12 months, and had an EDSS recorded by a neurologist within a 6-month window of the 12-month timepoint. Data from 24- and 36-month timepoints were included if available. All participants completed the MSReactor computerised test on an iPad® tablet during their routine clinic visit. Participants were then presented with an electronic survey to assess the acceptability of the MSReactor cognitive tasks. In addition, participants completed surveys to assess anxiety, depression and QoL. MSReactor tasks, acceptability and psychosocial surveys were completed at baseline and approximately every 6 months.

MSReactor testing

MSReactor is a digital cognitive battery comprising three tasks, as previously described. 11 Briefly, psychomotor (processing) speed (simple reaction time (SRT)), visual attention (choice reaction time (CRT)) and working memory (one-back (OBK)) are assessed using reaction time tasks. Each task requires the participant to respond to stimuli on the screen as fast as possible. Mean reaction times for each task are recorded in milliseconds. Task results are uploaded to the database (http://www.msreactor.com/), where they are automatically collated with previous tests and presented on a results page.

Acceptability, QoL, anxiety and depression surveys

Acceptability of the MSReactor platform was assessed by five questions asking participants to assess the test they just completed: ‘How interesting did you find the test?’; ‘Did you enjoy doing the test?’; ‘Did it make you feel anxious?’; ‘Would you be happy if you were asked to do it again?’ and ‘How do you feel about the duration of the test?’. QoL was measured using the Multiple Sclerosis International Quality of Life (MusiQoL) questionnaire, 16 with higher scores reflecting better QoL. Anxiety and depression were quantified using the Penn State Worry Questionnaire (PSWQ) and the Patient Health Questionnaire-9 (PHQ-9), with higher scores reflecting increasing anxiety and depression, respectively.17,18

Data analysis

Descriptive data are presented as mean and standard deviation (SD) or median and interquartile range (IQR). Frequency data are presented as proportions.
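As a minimal sketch of these summaries, the snippet below computes the mean, SD, median and IQR using only Python's standard library. The EDSS values are hypothetical and are not study data. The `inclusive` quartile method is an assumption, since the quartile definition used in the analysis is not stated.

```python
import statistics


def describe(values):
    """Return mean, sample SD, median and IQR bounds for a list of numbers."""
    # quantiles(n=4) returns the three quartile cut points (Q1, Q2, Q3).
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return {
        "mean": statistics.mean(values),
        "sd": statistics.stdev(values),
        "median": statistics.median(values),
        "iqr": (q1, q3),
    }


# Hypothetical EDSS scores, for illustration only.
edss = [1.0, 1.5, 2.0, 2.0, 2.5, 3.0, 4.0]
summary = describe(edss)
```

Skewed or ordinal measures such as the EDSS would typically be reported with the median and IQR, and approximately symmetric measures with the mean and SD.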

Acceptability Likert-type scales ranged from negative (0) to positive response (10) for the duration, happy to repeat and enjoyment questions; and from positive (0) to negative (10) for the interest and testing anxiety questions. A dummy variable collapsed the acceptability responses to a variable with five ordinal levels for analysis. Depression, anxiety and QoL were scored as per the literature.16–18 The proportion of acceptability responses for each question was calculated and compared to the observed response frequencies across both studies (n = 1138) at baseline testing using chi-square statistics for the 12-, 24- and 36-month timepoints.
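The comparison against baseline can be sketched as a chi-square goodness-of-fit test, with the baseline response proportions acting as the expected distribution. The code below is an illustration under stated assumptions, not the study's analysis code: the cut points in `collapse_to_five_levels` are hypothetical (the actual binning is not reported), the proportions and counts are invented, and the closed-form p-value is valid only for an even number of degrees of freedom (five response levels give df = 4).

```python
import math


def collapse_to_five_levels(score):
    """Map a 0-10 Likert-type score to an ordinal level 0-4.

    The cut points are hypothetical; the study's binning is not reported.
    """
    cut_points = (1, 3, 6, 8)  # assumed upper bounds of levels 0-3
    for level, upper in enumerate(cut_points):
        if score <= upper:
            return level
    return 4


def chi_square_gof(observed_counts, expected_proportions):
    """Return the chi-square goodness-of-fit statistic and its p-value."""
    total = sum(observed_counts)
    expected = [p * total for p in expected_proportions]
    stat = sum((o - e) ** 2 / e for o, e in zip(observed_counts, expected))
    df = len(observed_counts) - 1
    if df % 2 != 0:
        raise ValueError("closed-form p-value requires even degrees of freedom")
    # For df = 2m the survival function is exp(-x/2) * sum_{k<m} (x/2)^k / k!.
    half = stat / 2
    p_value = math.exp(-half) * sum(
        half ** k / math.factorial(k) for k in range(df // 2)
    )
    return stat, p_value


# Hypothetical baseline proportions and follow-up counts (not study data).
baseline_proportions = [0.02, 0.05, 0.50, 0.23, 0.20]
followup_counts = [5, 15, 160, 70, 50]
stat, p_value = chi_square_gof(followup_counts, baseline_proportions)
```

A small p-value would indicate that the follow-up response distribution differs from the distribution observed at baseline.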

The correlation of MSReactor acceptability measures with anxiety, depression, QoL and disability (EDSS) at 12, 24 and 36 months was calculated using Spearman’s correlation coefficient (Spearman’s Rho ρ). Confidence intervals (CIs) of the coefficient were calculated by bootstrap (n = 1000). The correlation between acceptability measures and baseline MSReactor task performance and change in reaction time since baseline was assessed similarly.
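To illustrate the approach, the sketch below computes Spearman's ρ as the Pearson correlation of tie-averaged ranks and derives a percentile bootstrap CI from 1000 resamples of participant pairs. The percentile method, the fixed seed and the example data are assumptions; the source states only that CIs were bootstrapped with n = 1000.

```python
import math
import random


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; returns NaN if either input has zero variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return float("nan")
    # Clamp to [-1, 1] to absorb floating-point rounding.
    return max(-1.0, min(1.0, sxy / math.sqrt(sxx * syy)))


def spearman_rho(x, y):
    return pearson(average_ranks(x), average_ranks(y))


def bootstrap_ci(x, y, n_resamples=1000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for Spearman's rho, resampling pairs."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]
        rho = spearman_rho([x[i] for i in idx], [y[i] for i in idx])
        if not math.isnan(rho):  # skip degenerate resamples
            estimates.append(rho)
    estimates.sort()
    lower = estimates[int((alpha / 2) * len(estimates))]
    upper = estimates[int((1 - alpha / 2) * len(estimates)) - 1]
    return lower, upper


# Hypothetical testing-anxiety levels (0-4) and PSWQ scores, not study data.
anxiety_level = [0, 0, 1, 1, 1, 2, 2, 2, 3, 3, 4, 4]
pswq = [30, 42, 35, 28, 50, 45, 38, 55, 48, 60, 52, 66]
rho = spearman_rho(anxiety_level, pswq)
ci_low, ci_high = bootstrap_ci(anxiety_level, pswq)
```

An interval that excludes zero corresponds to the "CI does not cross zero" criterion used when reporting associations in the Results.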

The feasibility of long-term testing and identification of factors that may reduce attrition over time was assessed by comparing the month 12 timepoint characteristics of participants who withdrew after at least 12 months of testing to those who remained in the study. We performed univariate logistic regression modelling with withdrawn status as the dependent variable, and independent variables included depression, anxiety and QoL scores; EDSS score; age at the 12-month timepoint; sex; acceptability responses at 12 months; baseline task performance and change in task performance over the initial 12 months of testing.
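A univariate logistic regression of this kind can be sketched as follows. This is an illustrative Newton-Raphson fit for a single predictor, not the software used in the study; the function name, the fixed iteration count and the PHQ-9 and withdrawal values are all hypothetical. The returned z is the Wald statistic for the slope.

```python
import math


def fit_univariate_logistic(x, y, n_iter=25):
    """Fit P(y = 1) = 1 / (1 + exp(-(b0 + b1 * x))) by Newton-Raphson.

    Returns (b0, b1, z), where z = b1 / SE(b1) is the Wald statistic.
    Assumes the data are not perfectly separable.
    """
    b0, b1 = 0.0, 0.0
    for _ in range(n_iter):
        # Score vector (g0, g1) and information matrix [[h00, h01], [h01, h11]].
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, yi in zip(x, y):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * xi)))
            w = p * (1.0 - p)
            g0 += yi - p
            g1 += (yi - p) * xi
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        # Newton step: beta += H^{-1} g, using the explicit 2x2 inverse.
        b0 += (h11 * g0 - h01 * g1) / det
        b1 += (h00 * g1 - h01 * g0) / det
    # Var(b1) is the slope entry of H^{-1}; H is taken from the last
    # iteration, which matches the final estimate once the fit has converged.
    se_b1 = math.sqrt(h00 / det)
    return b0, b1, b1 / se_b1


# Hypothetical 12-month PHQ-9 scores and withdrawal flags (not study data).
phq9 = [2, 3, 4, 5, 6, 8, 9, 10, 12, 14, 15, 18]
withdrawn = [0, 0, 0, 1, 0, 0, 1, 0, 1, 1, 0, 1]
b0, b1, z = fit_univariate_logistic(phq9, withdrawn)
```

Each candidate variable (for example PHQ-9, MusiQoL, EDSS or baseline reaction time) would be fitted separately in this way, with withdrawn status as the outcome.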

Each timepoint (12, 24 and 36 months) was analysed cross-sectionally, due to the potential for missing longitudinal data caused by interruptions in clinic-based testing because of the COVID-19 global pandemic.

Results

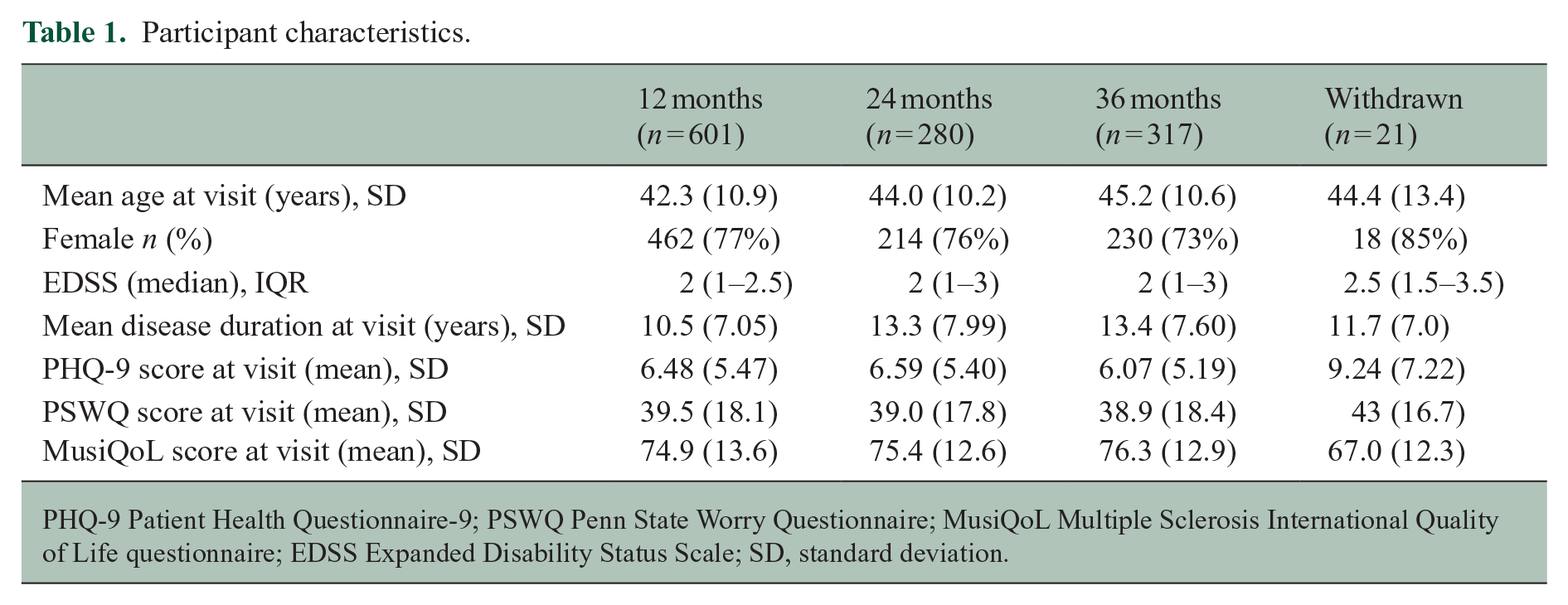

From studies in the wider programme, 601 participants had performed at least 2 MSReactor testing sessions with complete surveys and a recorded EDSS at the 12-month timepoint. At the 24- and 36-month timepoints, 280 and 317 participants had complete data, respectively. Twenty-one participants included in the 12-month analysis subsequently withdrew from the study. Of these, 18 participants withdrew between months 12 and 24; 2 withdrew between 24- and 36-month follow-up; and 1 withdrew participation after 36 months. Overall, the attrition rate for participants who completed over 12 months of MSReactor testing was very low at 3.5% (21 out of 601 participants). Characteristics of the study participants can be found in Table 1.
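The attrition figure follows directly from the withdrawal counts reported above, as this short check shows (variable names are illustrative):

```python
# Withdrawals among the 601 participants included at the 12-month timepoint.
withdrawals = {"12-24 months": 18, "24-36 months": 2, "after 36 months": 1}
cohort_at_12_months = 601

total_withdrawn = sum(withdrawals.values())
attrition_pct = 100 * total_withdrawn / cohort_at_12_months
print(f"{total_withdrawn} of {cohort_at_12_months} withdrew: {attrition_pct:.1f}%")
# prints "21 of 601 withdrew: 3.5%"
```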

Table 1. Participant characteristics.

PHQ-9: Patient Health Questionnaire-9; PSWQ: Penn State Worry Questionnaire; MusiQoL: Multiple Sclerosis International Quality of Life questionnaire; EDSS: Expanded Disability Status Scale; SD: standard deviation.

Acceptability of MSReactor cognitive screening

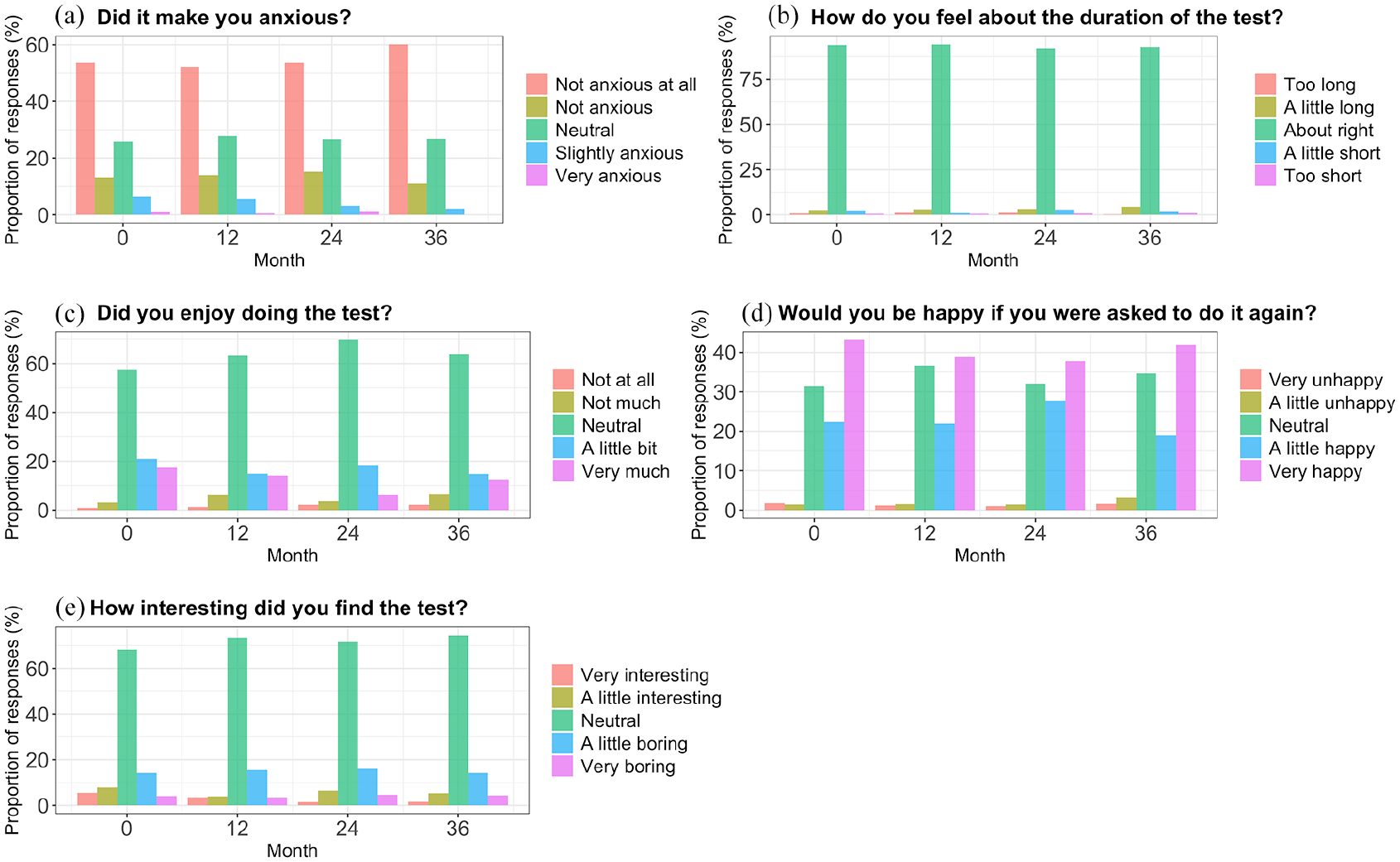

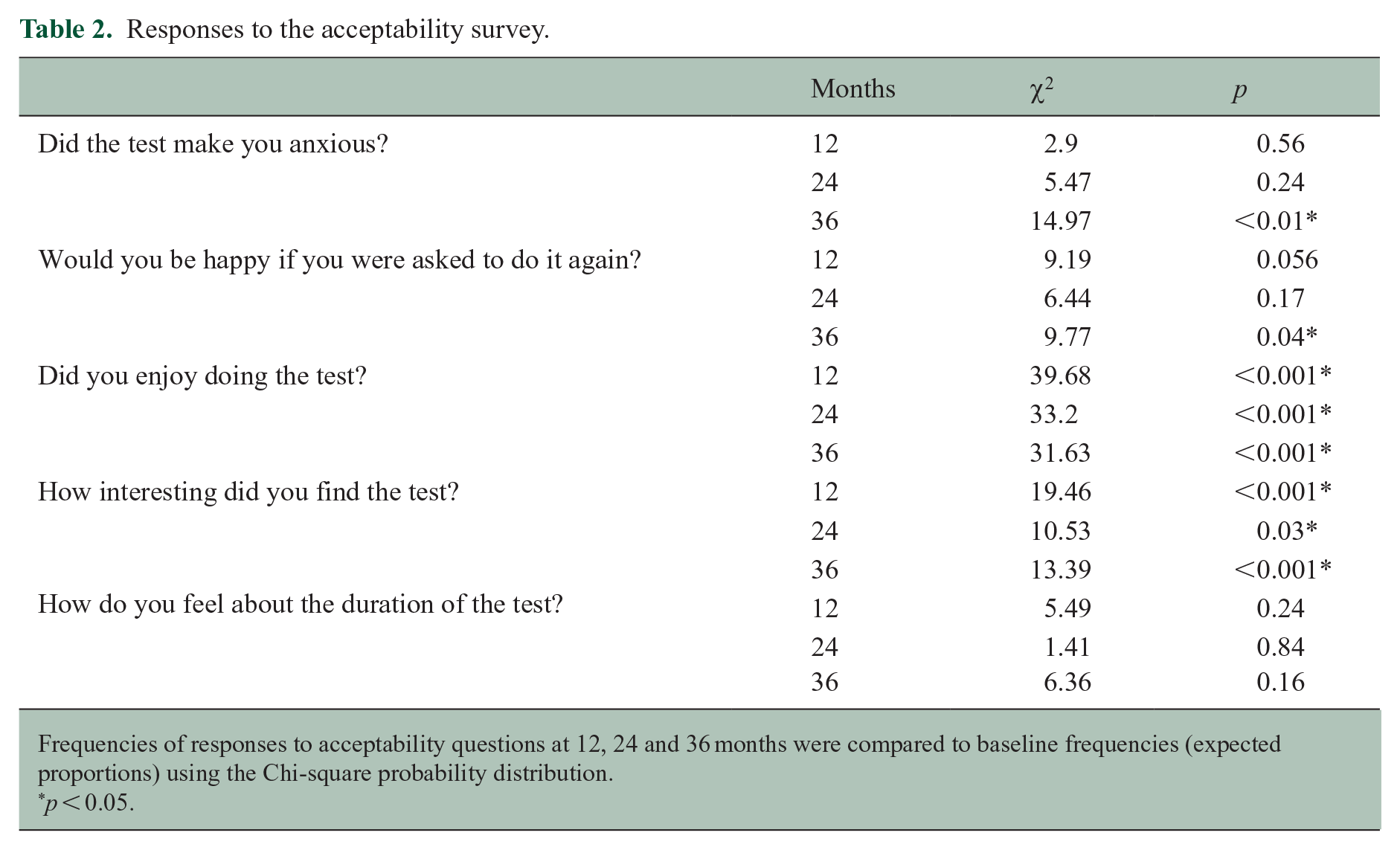

Overall, the acceptability of the MSReactor tasks remained high at each timepoint investigated. The proportions of survey responses for the ‘enjoyment of the tasks’ and the ‘interest of the tasks’ differed at 12, 24 and 36 months compared to the baseline test (Figure 1). When examining the frequencies of responses for the enjoyment and interest of the tests, we note a shift in proportions from positive responses towards a more neutral response. At 36 months, we observed a similar finding when we asked participants if they would be happy to repeat the tests. Similarly, at 36 months, there was a reduction in participants who felt anxious while performing the test. Overall frequencies of acceptability responses, as well as frequencies from each of the included studies, can be found in Appendix 1 and chi-square statistics in Table 2.

Figure 1. Graph of acceptability responses at baseline (0), 12, 24 and 36 months. All responses are in relation to the test just completed at each timepoint. (a) Participant responses to the question ‘Did it make you anxious?’, (b) Participant responses to the question ‘How do you feel about the duration of the test?’, (c) Participant responses to the question ‘Did you enjoy doing the test?’, (d) Participant responses to the question ‘Would you be happy if you were asked to do it again?’ and (e) Participant responses to the question ‘How interesting did you find the test?’.

Table 2. Responses to the acceptability survey.

Frequencies of responses to acceptability questions at 12, 24 and 36 months were compared to baseline frequencies (expected proportions) using the Chi-square probability distribution.

p < 0.05.

Acceptability correlations with psychosocial measures

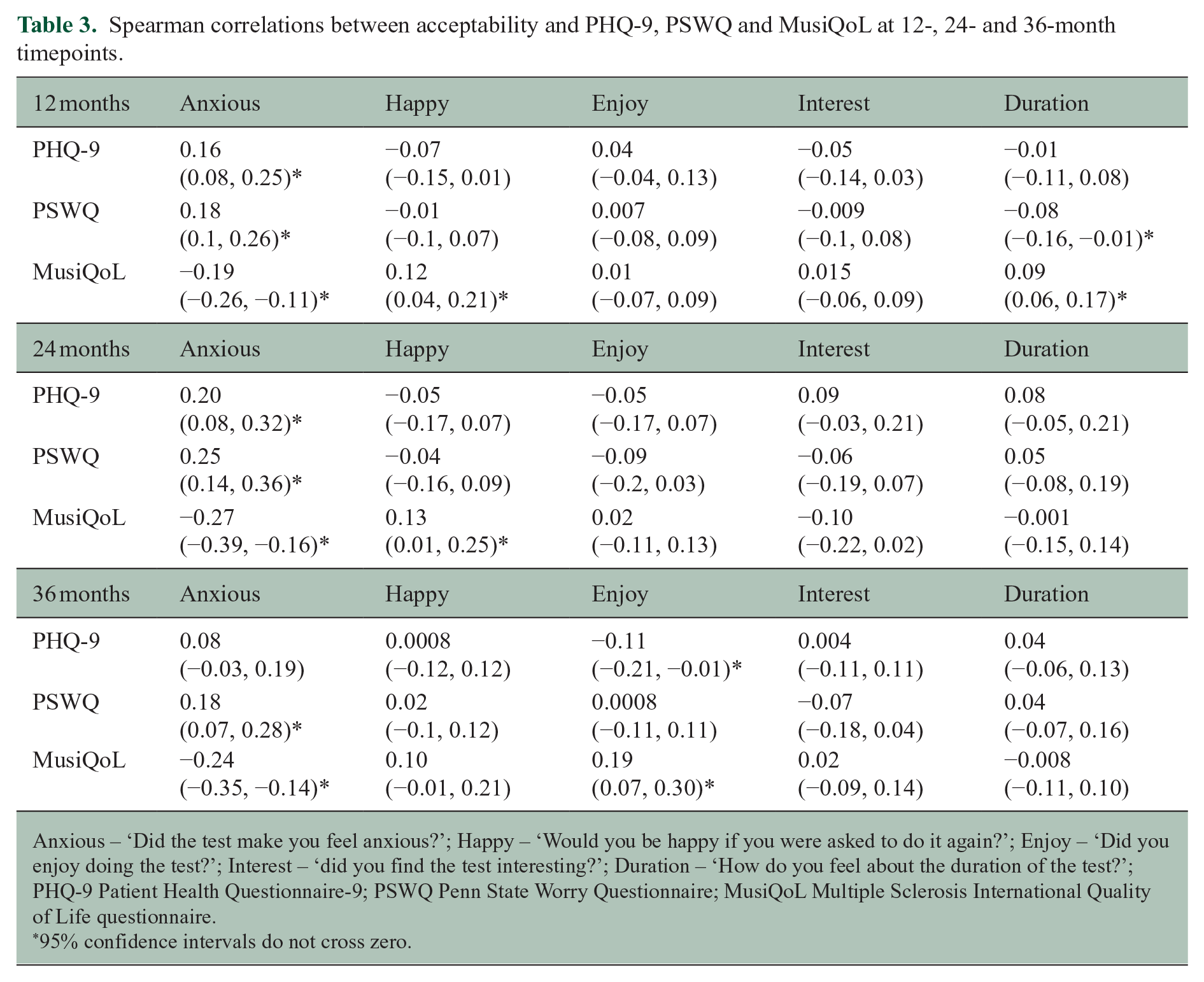

We observed evidence of minor associations between some acceptability responses and self-reported depression, anxiety and QoL (Table 3).

Table 3. Spearman correlations between acceptability and PHQ-9, PSWQ and MusiQoL at 12-, 24- and 36-month timepoints.

Anxious – ‘Did the test make you feel anxious?’; Happy – ‘Would you be happy if you were asked to do it again?’; Enjoy – ‘Did you enjoy doing the test?’; Interest – ‘Did you find the test interesting?’; Duration – ‘How do you feel about the duration of the test?’; PHQ-9: Patient Health Questionnaire-9; PSWQ: Penn State Worry Questionnaire; MusiQoL: Multiple Sclerosis International Quality of Life questionnaire.

95% confidence intervals do not cross zero.

The correlations between testing anxiety and PSWQ, PHQ-9 and MusiQoL scores at 12 and 24 months were weak, with CIs that did not cross zero. In addition, we observed minor associations between testing anxiety and PSWQ and MusiQoL scores at 36 months of testing. In general, a higher level of testing anxiety was weakly associated with higher self-reported anxiety (PSWQ) and depression (PHQ-9) scores and lower QoL (MusiQoL).

Lower MusiQoL scores were weakly associated with reduced willingness to repeat the tests at 12 and 24 months of testing, with the opinion on the duration of the tests at the 12-month timepoint, and with the reported enjoyment of the tests at the 36-month timepoint. Depression scores were also weakly associated with enjoyment of the tasks at the 36-month timepoint.

Participants who withdrew from the study had higher PHQ-9 scores (z = −2.306, p = 0.02) and lower MusiQoL scores (z = 2.671, p = 0.007) at the 12-month timepoint compared to those who remained in the study. Participants who withdrew from the study were also more likely to report a lower willingness to complete another test at the 12-month timepoint (z = 2.739, p = 0.006). We found no associations between psychosocial measures and attrition at 24 or 36 months.

Acceptability correlations with disability and cognitive performance

We found evidence of minor associations between disability level and testing anxiety at 12 months (ρ = 0.10, 95% CI = 0.01, 0.18), opinion on the duration of the test at the 24-month timepoint (ρ = 0.15, 95% CI = 0.03, 0.26), interest in the test (ρ = −0.16, 95% CI = −0.27, −0.05) and willingness to complete another test (ρ = 0.13, 95% CI = 0.02, 0.24) at the 36-month timepoint.

At the 12-month timepoint, testing anxiety was weakly associated with the psychomotor function, attention and working memory task baseline scores (SRT baseline ρ = 0.13, 95% CI = 0.05, 0.21; CRT ρ = 0.12, 95% CI = 0.04, 0.20; OBK ρ = 0.13, 95% CI = 0.05, 0.21, respectively), but not with change in reaction times over 12 months. The association between willingness to repeat the tests and baseline psychomotor speed (ρ = −0.11, 95% CI = −0.19, −0.03) and baseline working memory (ρ = −0.14, 95% CI = −0.22, −0.06) performance was weak. Willingness to repeat the test was weakly associated with working memory task changes over 12 months (ρ = 0.11, 95% CI = 0.03, 0.18). The baseline attention task performance (ρ = −0.08, 95% CI = −0.16, −0.01) weakly correlated with participant opinion on the duration of the tasks.

The change in the psychomotor (ρ = 0.13, 95% CI = 0.01, 0.25) and working memory (ρ = −0.14, 95% CI = −0.26, −0.01) tasks over 24 months showed a minor association with testing anxiety and task enjoyment, respectively.

At the 36-month timepoint, change in the attention task since baseline was weakly correlated with participant enjoyment of the tasks (ρ = −0.11, 95% CI = −0.23, −0.001). There was a weak relationship between willingness to complete another test and baseline working memory performance (ρ = 0.11, 95% CI = 0.01, 0.21) and the change in working memory task performance (ρ = −0.15, 95% CI = −0.26, −0.04) at 36 months.

Patients who withdrew from the study were more likely to have a slower baseline reaction time in the psychomotor (z = −3.11, p = 0.002), attention (z = −3.713, p = 0.0002) and working memory (z = −2.704, p = 0.007) tasks.

Discussion

This novel study evaluated the long-term acceptability and feasibility of digital cognitive tests integrated into MS outpatient clinics. This study, the longest-running digital cognitive biomarker acceptability study in MS, shows that the acceptability of using a digital tool to monitor cognitive function in pwMS remained very high over 3 years. While the participants initially enjoyed and found the MSReactor tasks interesting, their interest became more neutral over time; however, the magnitude of change was minimal. Furthermore, at 36 months, we observed slightly increased proportions of participants who were neutral or less inclined to repeat the MSReactor tasks compared to baseline. Despite this minimal increase in the proportion of participants who were unhappy to repeat testing, over 60% of participants were still happy to continue testing after 36 months. This is further reflected in the very low attrition rate we observed following the 12-month timepoint. These observations are concordant with those found by Midaglia et al., 19 in which more than 60% of participants with MS expressed a desire to continue using the Roche FLOODLIGHT app, albeit after only 24 weeks of testing. Testing anxiety was lower after 36 months of testing, when compared to initial testing. No participants reported they felt highly anxious while performing the cognitive tasks at the final timepoint in this study. Although testing anxiety did not explicitly show an association with higher levels of attrition, participants who felt anxious may have chosen not to test at these later timepoints. This issue is further discussed in the limitation section. We did not observe changes in patient satisfaction relating to test duration. These findings are important as they demonstrate the long-term acceptability of MSReactor, despite a neutral trend in enjoyment and interest during the tests. 
However, they also highlight the need to maintain interest and enjoyment to ensure good long-term motivation and adherence to testing. One way to maintain enjoyment and interest in the computerised tests could be to follow a framework of system design principles more closely. 20 This could include more ‘gamification’, changing stimuli, rewards for achieving milestones or additional in-app support to maintain motivation.

Testing anxiety was consistently, but weakly, associated with self-reported depression, anxiety and QoL. Higher levels of anxiety and lower QoL were weakly related to testing anxiety over 3 years. Likewise, higher levels of self-reported depression were associated with testing anxiety at 12 and 24 months. Although associations were weak, these results identify a group of pwMS who may need additional support and reassurance during testing to reduce anxiety, a potential issue highlighted recently by Morrow and Hancock. 21 This is consistent with our observation that pwMS who reported increased depression and reduced QoL at 12 months were also more likely to withdraw from testing in the subsequent years. Nevertheless, the very weak correlations between acceptability measures and self-reported depression, anxiety and QoL make it unlikely that these factors would significantly hinder long-term digital screening of cognitive function.

The level of disability also did not significantly impact the subjective acceptability of the computerised cognitive tests, with only very weak correlations observed with negative responses to testing anxiety, opinion of duration of the tests and willingness to repeat the tests at 12, 24 and 36 months. Despite the low number of participant withdrawals, we observed a statistically non-significant trend towards a higher median EDSS score in participants who withdrew from the study after their 12-month test (p = 0.07). Participants remained happy to repeat testing despite the weak association between testing anxiety and increasing EDSS. This suggests that increasing disability may not affect long-term cognitive monitoring. However, the low median EDSS of the included cohort should be noted, and further research in pwMS with higher disability is warranted. This remains an important finding, however, as our earlier work showed that pwMS with a higher level of disability at baseline were more likely to experience a worsening cognitive trajectory. Hence, these participants may benefit the most from ongoing screening. 22

We observed weak correlations between cognitive performance, some acceptability measures and study withdrawal. Most consistently, slower baseline reaction times were associated with greater testing anxiety at 12 months. There was also an association between decreased willingness to complete another test and slowed one-back test performance at 12 months. These associations may reflect cognitive difficulties that lead to test anxiety and to reluctance to continue with cognitive monitoring, or to study withdrawal. However, this was reassuringly not reflected in participants’ enjoyment of testing. Our previous work has shown that people with MS with existing cognitive difficulties are more likely to experience worsening performance on neurocognitive tests. Therefore, it is particularly important to identify this group early to keep them engaged with cognitive monitoring.

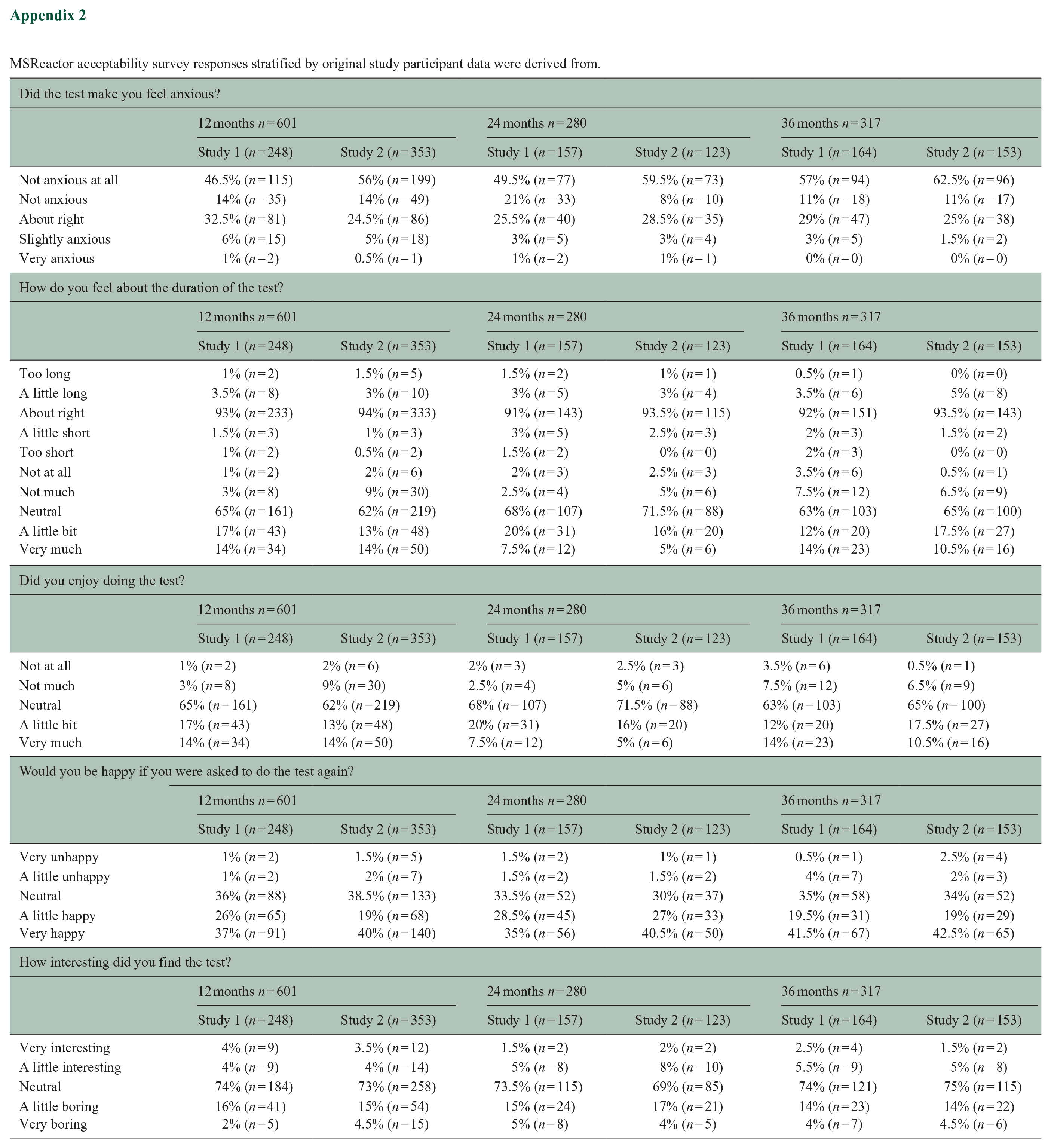

There were some limitations to this study. The participants in this study were part of a larger ongoing and pragmatic cognitive monitoring initiative to embed computerised monitoring of cognitive function into ‘real-world’ MS outpatient care. As such, the COVID-19 pandemic affected data collection for the acceptability surveys conducted during clinic visits. The likely result of this is seen by fewer participants who completed acceptability surveys at the 24-month timepoint, with the resumption of face-to-face contact by the 36-month point. Although we have attempted to characterise the participants at each timepoint and those who withdrew from the study, we cannot exclude possible selection bias that may arise from participants who did not complete surveys within the intervals and were consequently not included in this analysis. IT issues, participant refusal to complete a test (because of anxiety or time constraints, for example) or clinic operational needs could be reasons for the non-completion of surveys, and the included studies did not record these reasons. One study from which data were derived restricted the inclusion of EDSS to below 4. Thus, we must interpret the EDSS findings cautiously despite the acceptability responses being similar across studies (Appendix 2). In addition, we excluded participants with significant pre-morbid cognitive impairment from this study, so we cannot comment on the long-term acceptability in this population. Finally, this study did not measure cognitive domains not assessed by MSReactor, or other factors such as remote-testing, fatigue and technological aptitude, that may impact the acceptance of computerised cognitive monitoring.

Overall, the MSReactor platform remained highly acceptable for extended periods of cognitive monitoring. We highlight characteristics of pwMS who may benefit from extra support in maintaining motivation for regular cognitive monitoring. However, integrating validated and sensitive digital cognitive testing into MS clinical practice is feasible and could potentially change the way we monitor changes in cognitive function in all pwMS.

Footnotes

Appendix

MSReactor acceptability survey responses, stratified by the original study from which participant data were derived.

Timepoint totals: 12 months, n = 601; 24 months, n = 280; 36 months, n = 317.

| Did the test make you feel anxious? | 12 months, Study 1 (n = 248) | 12 months, Study 2 (n = 353) | 24 months, Study 1 (n = 157) | 24 months, Study 2 (n = 123) | 36 months, Study 1 (n = 164) | 36 months, Study 2 (n = 153) |
|---|---|---|---|---|---|---|
| Not anxious at all | 46.5% (n = 115) | 56% (n = 199) | 49.5% (n = 77) | 59.5% (n = 73) | 57% (n = 94) | 62.5% (n = 96) |
| Not anxious | 14% (n = 35) | 14% (n = 49) | 21% (n = 33) | 8% (n = 10) | 11% (n = 18) | 11% (n = 17) |
| About right | 32.5% (n = 81) | 24.5% (n = 86) | 25.5% (n = 40) | 28.5% (n = 35) | 29% (n = 47) | 25% (n = 38) |
| Slightly anxious | 6% (n = 15) | 5% (n = 18) | 3% (n = 5) | 3% (n = 4) | 3% (n = 5) | 1.5% (n = 2) |
| Very anxious | 1% (n = 2) | 0.5% (n = 1) | 1% (n = 2) | 1% (n = 1) | 0% (n = 0) | 0% (n = 0) |

| How do you feel about the duration of the test? | 12 months, Study 1 (n = 248) | 12 months, Study 2 (n = 353) | 24 months, Study 1 (n = 157) | 24 months, Study 2 (n = 123) | 36 months, Study 1 (n = 164) | 36 months, Study 2 (n = 153) |
|---|---|---|---|---|---|---|
| Too long | 1% (n = 2) | 1.5% (n = 5) | 1.5% (n = 2) | 1% (n = 1) | 0.5% (n = 1) | 0% (n = 0) |
| A little long | 3.5% (n = 8) | 3% (n = 10) | 3% (n = 5) | 3% (n = 4) | 3.5% (n = 6) | 5% (n = 8) |
| About right | 93% (n = 233) | 94% (n = 333) | 91% (n = 143) | 93.5% (n = 115) | 92% (n = 151) | 93.5% (n = 143) |
| A little short | 1.5% (n = 3) | 1% (n = 3) | 3% (n = 5) | 2.5% (n = 3) | 2% (n = 3) | 1.5% (n = 2) |
| Too short | 1% (n = 2) | 0.5% (n = 2) | 1.5% (n = 2) | 0% (n = 0) | 2% (n = 3) | 0% (n = 0) |

| Did you enjoy doing the test? | 12 months, Study 1 (n = 248) | 12 months, Study 2 (n = 353) | 24 months, Study 1 (n = 157) | 24 months, Study 2 (n = 123) | 36 months, Study 1 (n = 164) | 36 months, Study 2 (n = 153) |
|---|---|---|---|---|---|---|
| Not at all | 1% (n = 2) | 2% (n = 6) | 2% (n = 3) | 2.5% (n = 3) | 3.5% (n = 6) | 0.5% (n = 1) |
| Not much | 3% (n = 8) | 9% (n = 30) | 2.5% (n = 4) | 5% (n = 6) | 7.5% (n = 12) | 6.5% (n = 9) |
| Neutral | 65% (n = 161) | 62% (n = 219) | 68% (n = 107) | 71.5% (n = 88) | 63% (n = 103) | 65% (n = 100) |
| A little bit | 17% (n = 43) | 13% (n = 48) | 20% (n = 31) | 16% (n = 20) | 12% (n = 20) | 17.5% (n = 27) |
| Very much | 14% (n = 34) | 14% (n = 50) | 7.5% (n = 12) | 5% (n = 6) | 14% (n = 23) | 10.5% (n = 16) |

| Would you be happy if you were asked to do the test again? | 12 months, Study 1 (n = 248) | 12 months, Study 2 (n = 353) | 24 months, Study 1 (n = 157) | 24 months, Study 2 (n = 123) | 36 months, Study 1 (n = 164) | 36 months, Study 2 (n = 153) |
|---|---|---|---|---|---|---|
| Very unhappy | 1% (n = 2) | 1.5% (n = 5) | 1.5% (n = 2) | 1% (n = 1) | 0.5% (n = 1) | 2.5% (n = 4) |
| A little unhappy | 1% (n = 2) | 2% (n = 7) | 1.5% (n = 2) | 1.5% (n = 2) | 4% (n = 7) | 2% (n = 3) |
| Neutral | 36% (n = 88) | 38.5% (n = 133) | 33.5% (n = 52) | 30% (n = 37) | 35% (n = 58) | 34% (n = 52) |
| A little happy | 26% (n = 65) | 19% (n = 68) | 28.5% (n = 45) | 27% (n = 33) | 19.5% (n = 31) | 19% (n = 29) |
| Very happy | 37% (n = 91) | 40% (n = 140) | 35% (n = 56) | 40.5% (n = 50) | 41.5% (n = 67) | 42.5% (n = 65) |

| How interesting did you find the test? | 12 months, Study 1 (n = 248) | 12 months, Study 2 (n = 353) | 24 months, Study 1 (n = 157) | 24 months, Study 2 (n = 123) | 36 months, Study 1 (n = 164) | 36 months, Study 2 (n = 153) |
|---|---|---|---|---|---|---|
| Very interesting | 4% (n = 9) | 3.5% (n = 12) | 1.5% (n = 2) | 2% (n = 2) | 2.5% (n = 4) | 1.5% (n = 2) |
| A little interesting | 4% (n = 9) | 4% (n = 14) | 5% (n = 8) | 8% (n = 10) | 5.5% (n = 9) | 5% (n = 8) |
| Neutral | 74% (n = 184) | 73% (n = 258) | 73.5% (n = 115) | 69% (n = 85) | 74% (n = 121) | 75% (n = 115) |
| A little boring | 16% (n = 41) | 15% (n = 54) | 15% (n = 24) | 17% (n = 21) | 14% (n = 23) | 14% (n = 22) |
| Very boring | 2% (n = 5) | 4.5% (n = 15) | 5% (n = 8) | 4% (n = 5) | 4% (n = 7) | 4.5% (n = 6) |

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship and/or publication of this article: D.M., J.J. and C.Z. declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article. M.G. is currently working on observational studies funded by Biogen and Roche. Y.C.F. received travel compensation from Biogen. He also receives research funding support from National Health and Medical Research Council, Multiple Sclerosis Research Australia and Australian and New Zealand Association of Neurologists. D.D. is founder & former shareholder of CogState Ltd. He has received honoraria from Biogen, Novartis and Merck. T.K. served on scientific advisory boards for the MS International Federation and World Health Organization, BMS, Roche, Janssen, Sanofi-Genzyme, Novartis, Merck and Biogen, the steering committee for the Brain Atrophy Initiative by Sanofi-Genzyme, and has received conference travel support and/or speaker honoraria from WebMD Global, Eisai, Novartis, Biogen, Roche, Sanofi-Genzyme, Teva, BioCSL and Merck, and research or educational event support from Biogen, Novartis, Genzyme, Roche, Celgene and Merck. J.L.-S. received travel compensation from Novartis, Biogen, Roche and Merck. Her institution receives the honoraria for talks and advisory board commitment as well as research grants from Biogen, Merck, Roche, TEVA and Novartis. M.B. served on scientific advisory boards for Biogen, Novartis and Genzyme, and has received conference travel support from Biogen and Novartis. He serves on steering committees for trials conducted by Novartis. His institution has received research support from Biogen, Merck and Novartis. K.B. has received honoraria for presentations and/or educational support from Biogen, Sanofi-Genzyme, Merck, Roche, Alexion and Teva. She serves on medical advisory boards for Merck and Biogen. B.T. 
received funding for travel and speaker honoraria from Bayer Schering Pharma, CSL Australia, Biogen and Novartis, and has served on advisory boards for Biogen, Novartis, Roche and CSL Australia. H.B.’s institution has received compensation for advisory boards or lecture fees from Novartis, Biogen, Merck, UCB Pharma and Roche. His institutions receive research funding from Novartis, Biogen, Merck, Roche, the NHMRC of Australia, The Medical Research Future Fund (Australia), Monash Partners, the Trish MS Foundation, The Pennycook Foundation and MS Australia. He receives personal compensation as the Managing Director of the MSBase Foundation and from the Oxford Health Policy Forum Brain Health Initiative. A.v.d.W. served on advisory boards for Novartis, Biogen, Merck and Roche and NervGen. She received unrestricted research grants from Novartis, Biogen, Merck and Roche. She is currently a co-principal investigator on a co-sponsored observational study with Roche, evaluating a Roche-developed smartphone app, Floodlight-MS. She has received speaker’s honoraria and travel support from Novartis, Roche, Biogen and Merck. She serves as the chief operating officer of the MSBase Foundation (not-for-profit). Her primary research support is from the NHMRC of Australia and MS Research Australia.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This study was funded by Novartis Australia (grant no. CFTY720D2418T), Biogen (IMPROVE-MS) and an MS Australia postdoctoral fellowship (21-3-040). The funders played no role in the study design, data collection, analysis and interpretation of data or the writing of this manuscript.

Ethical Considerations

This study was approved by the Melbourne Health Human Research Ethics Committee (HREC/16/MH/326, HREC/16/MH/297). All participants provided written informed consent prior to enrolment in the study and any data collection.

Data Availability Statement

The data sets used and/or analysed during the current study are available from the corresponding author on reasonable request.