Abstract

This paper aims to address the dark side perspective on digital control and surveillance by emphasizing the affective grip of ideological control, namely the process that silently ensures the subjugation of digital labour, and which keeps the ‘unexpectedness’ of algorithmic practices at bay: that is, the propensity of users to contest digital prescriptions. In particular, the theoretical contribution of this paper is to combine Labour Process with psychoanalytically-informed, post-structuralist theory, in order to connect to, and further our understanding of, how and why digital workers assent to, or oppose, the interpellations of algorithmic ideology at work. To illustrate the operation of affective control in the Platform Economy, the emblematic example of ride-hailing platforms, such as Uber, and their algorithmic management, is revisited. Thus, the empirical section describes the way drivers are glued to the algorithm (e.g. for one more fare, or for the next surge pricing) in a way that prevents them, although not always, from considering genuine resistance to management. Finally, the paper discusses the central place of ideological fantasy and cynical enjoyment in the Platform Economy, as well as the ethical implications of the study.

Keywords

‘Puppeteers will rarely behave as having total control over their puppets. They will say queer things like: ‘their marionettes suggest them to do things they will have never thought possible by themselves’ (. . .) The hand still hidden in the Latin etymology of the word ‘manipulate’ is a sure sign of full control as well as a lack of it. So who is pulling the strings? Well, the puppets do in addition to their puppeteers’. (Latour, 2005: 59–60)

Introduction

This essay makes the case for the importance of attending to ideology and its affective grip in studies of digital control. It is well established that ideologies preside over the control of organizational practices (e.g. Braverman, 1974; Edwards, 1979; Knights and Murray, 1992; Leonardi and Barley, 2010; Levine and Rossmoore, 1995; Markus, 1983; Markus and Pfeffer, 1983; Thomas, 1994), yet the dark and unexpected side of such processes has thus far remained underexplored.

Digitalization enables new ways of working, developing products and services or mobilizing crowds (e.g. Bauer and Gegenhuber, 2015; Constantiou and Kallinikos, 2015; Mount and Garcia Martinez, 2014). A Platform Economy is emerging, which is generating a more connected society in which people can potentially share apartments through Couchsurfing, dine socially through Vizeat and commute together via Blablacar carpools (Ritzer and Jurgenson, 2010; Sundararajan, 2016). With the development of Big Data, new algorithms and cloud computing, platform owners have attained a status comparable to, or even more powerful than, factory owners in the early industrial revolution (Kenney and Zysman, 2016). Nevertheless, mainstream and constructivist research in technological matters have conspicuously failed to address certain difficult ideological and ethical questions; in particular, critical research has shed light on the threat of substituting algorithms for participatory, open-ended and egalitarian organizations (Linstead et al., 2014; Ossewaarde and Reijers, 2017; Srnicek, 2017; Taplin, 2017; Zuboff, 2019). Put simply, the main aim of this paper is to bring the issue of politics back into the discussion about the digitalization of work. This is currently of particular relevance, as the founders of major internet companies such as Amazon, Etsy, Google and Salesforce are openly libertarian; they oppose taxes, which they usually depict as ‘confiscatory’, and do not strongly believe in politics and democracy (Taplin, 2017). A pure and recent account of such ideology can arguably be found in an issue published in Cato Unbound, a libertarian journal (Friedman et al., 2009). 
Here, Peter Thiel, a co-founder of PayPal and a former philosophy student at Stanford University, states: ‘I no longer believe that freedom and democracy are compatible’, because the system of universal suffrage subjects individual entrepreneurs ‘to the unthinking demos that guides so-called “social democracy”’ (Friedman et al., 2009).

In this paper, the notion of the ‘Platform Economy’ is foregrounded, rather than that of the ‘Sharing Economy’, as this emerging phenomenon, despite its associated buzzwords and entrepreneurial successes, is not socially minded per se; rather, platforms rely on the monetization of networking and consumer assets (Rogers, 2015). This aspect is especially problematic, as dark side research has documented how digitalization can facilitate new forms of isolation (Turkle, 2011), exploitation and precariousness (Beverungen et al., 2015; Fuchs, 2010), and may also lead to the emergence of workplace conflicts (Upchurch and Grassman, 2016). Thus, algorithmic capitalism has emerged as a subject of public and political contestation (Taplin, 2017), particularly after the boom and bust of the 1990s and the 2008 crisis (Srnicek, 2017). The proliferation of platforms has led to increased knowledge production and transparency (Hansen and Flyverbom, 2015), and new forms of ideologies have emerged (Lustig et al., 2016), in order to manage workers (e.g. evaluating job performance, matching fares and hiring and firing employees). Nevertheless, a psycho-affective account of this phenomenon is crucial, as the human (or corporate) hand behind the algorithm is invisible (Beer, 2017; Zuboff, 2019). In particular, one needs an approach which foregrounds the subjectivity of the users and the fantasy frames to which they are tied.

While it is clear that digitalization differs from management, are old ideologies being utilized by capital, or are new ideologies being constructed in an era of digitalization? To address this core question, a novel engagement with the psychoanalytic notion of fantasy can fundamentally assist in the portrayal of these new ideologies, as well as forms of resistance to them which may not be passive, aggressive and cynical, but rather pro-active, detached and ethical. As Linstead et al. (2014) argue, dark side findings often emerge as an accidental by-product of other, more regular, activities. For instance, corporate search engines such as Google ideologically conceal their primary function, which is to generate online advertising (Mager, 2012). In this paper, algorithmic ideology is arguably the process through which the ‘unexpectedness’ of digital practices is avoided. Drawing on psychoanalytically-informed, post-structuralist theory, this unexpectedness can be defined as the contingent emergence of political events which may potentially disrupt digital authority (e.g. privacy invasion scandals involving secretive organizations, such as the National Security Agency or Cambridge Analytica). An empirical enquiry into this phenomenon seems crucial if one is to avoid any accusation of technological determinism (Orlikowski and Barley, 2001), idealism (Leonardi and Barley, 2010) or conspiracy theory (Latour, 2005), that is, assuming, without any formal evidence, that control takes place behind the scenes.

The structure of this paper is as follows. Firstly, the dark side of digitalization will be reviewed and distinguished from traditional management; in particular, the labour process tradition will be reinvigorated to foreground how ideological control governs technology at work. This will then lead to a closer scrutiny of contemporary post-structuralist theory (Butler, Zizek and Glynos) in order to make sense of the new ideologies, enacted in behaviours and rituals, that have emerged in the era of digitalization. To provide a real-life example of this theoretical contribution, empirical observations of the public discourse of platform-based transportation companies such as Uber, Deliveroo and Lyft, as well as the online ethnography of drivers (via YouTube and the virtual forum Uberpeople.net), provide an exemplification of the political and ethical implications associated with algorithmic management. The discussion will evaluate the implications of fantasmatic control for our understanding of algorithmic management, and foreground the issues of cynical resistance and organizational ethics. Finally, the conclusion will address this paper’s limitations and possible avenues for future research.

The dark side of digital control

A key question of this paper is whether control in the digital era is different from earlier traditional forms of monitoring (e.g. work measurement, time and motion studies, piece rate payment systems), or if it is the same, albeit in an intensified and more extensive form (e.g. Rosen and Baroudi, 1992). To address this underexplored, yet crucial, issue, the phenomenon of algorithmic ideology and the underpinning place of fantasy will be specifically addressed.

Algorithmic management and ideological control

Algorithmic management can be defined as: ‘software algorithms that assume managerial functions and surrounding institutional devices that support algorithms in practice’ (Lee et al., 2015: 1). The substitution of algorithms for humans in managing business operations is intended to instruct, track and assess a mass of workers who are not directly employed, so that they provide a standardized service (Beer, 2009; O’Connor, 2016). Due to their superior analytical capacities, algorithms are replacing tasks that were once seen as the responsibility of middle (and even upper) managers (Möhlmann and Zalmanson, 2017). In other words, these new tools allow management to nudge and manipulate the users’ choices (Hansen and Jespersen, 2013). Such practices of digital control have been adopted in relation to subway engineers (Hodson, 2014), warehouse workers (McClelland, 2012), Starbucks baristas (Kantor, 2014), UPS delivery personnel (Davidson and Kestenbaum, 2014), as well as new crowd-sourced workers on platforms such as Uber, Task Rabbit and Amazon mTurk (Hassan et al., 2013). To take one example, scheduling technologies are being used on Hong Kong’s subway system to reduce unexpectedness (i.e. ensuring stability and predictability) and to manage the necessary engineering work (Hodson, 2014), thereby saving time and money. The Artificial Intelligence overseer manages the nightly engineering work, and bombards workers with signs that state productivity goals and the work schedule. Urgent, unexpected repairs can be added manually, yet the algorithm remains subject to experts’ approval. Nevertheless, the interest of dark side research in algorithmic management remains in its infancy relative to the wide coverage of this phenomenon in the popular press (e.g. Hill, 2015; O’Connor, 2016; Porter, 2015; Scheiber, 2017; White, 2015).

Thus, more critical approaches have centred their concerns on control and resistance in the workplace under changing technologies (Fleetwood and Ackroyd, 2004; Thompson and O’Doherty, 2009). The downsides of ‘algorithmic management’ include surveillance (Zuboff, 2015), people analytics (Bersin et al., 2016; Hansen and Flyverbom, 2015) and behavioural prediction (Angrave et al., 2016; King, 2016). Furthermore, warehouse businesses such as Amazon, Deutsche Post DHL, Staples, Dell or Walmart are becoming increasingly reliant on so-called ‘wage slaves’ (Dubal, 2017). As a result, low-paid, older temp workers may suffer from physical pain, chronic insomnia and mental pressure induced by the online-shipping machine (McClelland, 2012). At Starbucks, workers, including single mothers, may learn their schedule no more than three days before the start of a workweek, plunging them into urgent and unsolvable logistical hassles (Kantor, 2014). Besides, software scheduling does not take place in a political vacuum. Flexibility of schedules (i.e. built-in accommodating hours) between employers and managers has a darker meaning for low-income workers, who are more exposed to unstable hours or pay cheques. Legislators and activists are indeed proposing laws to make scheduling decisions less unilateral (Kantor, 2014). In fact, such struggles remind us that algorithms, in practice, are inextricably blended with ideologies which are always open to negotiation and contestation (Mager, 2012). Arguably, search engines, such as Google, tacitly depend on the contingent buy-in of a network of content providers and users who agree to disregard the dark side of their business practices, such as the collection and use of personal data, advertising schemes, privacy violations and collaborating with secret services (Mager, 2012). 
Another example of such conditional assent is the repair teams of Hong Kong’s subway system, who have a high level of responsibility, and therefore feel reluctant to relinquish part of their power to the algorithm. While people ordinarily trust algorithms, such as PageRank, without thinking, they may nevertheless go through a phase of mistrust when the algorithm radically transforms their own mundane work practices. According to Adel Sadek, a transport engineer at the University of Buffalo in New York, such distrust also occurred when the same AI solution used in Hong Kong was implemented in the U.S. ‘People get scared when you talk to them about AI’, he argues. ‘A Department of Transport official is responsible for lives, they want to see how the decisions are being made’ (Hodson, 2014).

By contrast, those who manufacture and study scheduling software seem to naively – or opportunistically – endorse the algorithmic hype: ‘It’s like magic’ said Charles DeWitt, Vice President for business development at Kronos, which supplies the technology for Starbucks and many other companies (Kantor, 2014). Scheduling technologies are indeed a powerful way of bolstering profits, and companies purposefully incorporate ‘science’ to cut labour costs. This is the digital re-activation of an old debate in the managerial literature. While Taylor and Mayo investigate how management might best control work practices in order to meet a set of particular objectives (Holt and Den Hond, 2013), industrial relations and labour process studies, by contrast, challenge precisely the ‘scientific’ ideology at the root of management studies (e.g. Jermier et al., 1994; Knights, 1990; Thompson, 1990). A particularly interesting way of analysing the contemporary scheduling problem and the managerialist ideology of control is via technological deskilling (Braverman, 1974; Noble, 1978), a process through which managers intentionally seek to deskill workers by introducing numerical control technologies in factories: the more complex the work process becomes, the less the workers understand it. Thus, as an inevitable consequence of a managerial ideology rooted in Taylor’s Scientific Management, managers extend their own power positions while degrading the workers’ agency – and thereby their capacity for resistance. Labour Process Theory (LPT) has been criticized, for example, by Orlikowski and Barley for telling a deterministic story, and for not being strictly ‘materialist’ (Orlikowski and Barley, 2001: 150). 
While Braverman’s conceptual tools are helpful to understand how technology is inextricably embedded with ideological control and, perhaps inevitably, fulfils a managerial agenda, his view remains somewhat deterministic, as it fails to explain how and why workers accept such invisible forms of control. Clearly, Braverman’s definition of skills underestimates the importance of human agency (Mackenzie, 1984), namely the informal skills and tacit know-how which escape organizational control.

As the focus of this paper is now on how and why workers assent to such algorithmic ideology, it is timely to turn to Burawoy’s legacy, which is well-equipped conceptually to address the lacuna of LPT. In particular, managing unexpectedness – namely, ensuring the workers’ psychological incorporation into ideological processes – becomes a central feature of control-building (Meiksins, 1994). Burawoy’s work is of particular importance, as it foregrounds the subjective process through which social actors strategize their own subordination (Burawoy, 1985). In other words, employees engage in a Faustian pact to accept conditions of subordination for the sake of pay-offs in terms of identity, financial standing and job security. They adapt their feelings, lifestyles, domestic situations, the decision to have children, their work exertions and their relation to management to what the companies offer: as a result, the level of consent grows, and the consequences of not consenting (e.g. loss of identity, exit costs) are simultaneously perceived as greater (Deetz, 1998, 2003). On the shop floor, ideological consent is rendered possible by the informal game of ‘making out’, through which workers manipulate productivity in order to secure a targeted percentage output (Burawoy, 1979). When the chances of winning are too high, the game degenerates into boredom, but when the uncertainty is too great, the game generates frustration. To function effectively and keep the operators engaged without diminishing returns, ‘making-out’ needs to keep the workers’ excitement intact, otherwise the game descends into a crisis of motivation or legitimation. In the context of work, rules are not arbitrary, and management has to persuade workers to deliver a surplus by tolerating, or even constituting, the game as the social structure in the factory (Burawoy, 2012). 
The key point here is that supervisors turn a blind eye when operators ‘make out’, and this spontaneous game becomes the trick through which control is contrived in the factory (Anteby, 2008a; Burawoy, 1979). As Anteby observes in an aeronautics plant, contemporary forms of control tap the employee’s active engagement (2008), a process which requires an implicitly negotiated leniency between management and workers, namely a managerial tolerance of illegal work practices which enhance the workers’ desired identities. Anteby’s study shows that officially forbidden, yet tolerated, work practices are also conducive to identity enactment: they constitute organizational grey zones (Anteby, 2008a, 2008b). The study of illegal work practices (i.e. those that are not merely prohibited, but forbidden by rule, e.g. bribery attempts, daily break schedules, etc.) proves to be more informative than the study of legal practices (such as formal feedback, task performance and role modelling), because participants reveal their preferred identities as they probably choose to engage more freely in these practices than in those that are legal.

The fantasmatic attachment to digital interpellations

Following Burawoy’s seminal work, psychosocial relations have become a key locus of investigation for organizational researchers interested in the dark side of ideological control (e.g. Casey, 1995, 1996; Du Gay, 1996; Watson, 1994). In particular, Carlisle and Manning (1994) assert that organizational actors are ideological animals who ascribe value to the practices of organizations. For these authors, the authoritative character of an ideology is what makes it central to the motivational engagement of social actors. What is critical in these studies are the processes of ideological (re)formation (Alvesson and Willmott, 2002) and the readiness of users to subjugate themselves to (or to resist) identity-constituting practices (Coombs et al., 1992).

While ideology does not replace the ‘hard’ technological facts, it explains how these facts practically come to be (Carlisle and Manning, 1994). A distinctive novelty of ideological control in the Platform Economy is that it takes place at a profoundly personal level. Althusser’s classic work is particularly enlightening here, as it incorporates the understanding of ideology as a personal interpellation: ‘Hey you there!’ (Althusser, 1971). Althusser argues that subjects are interpellated by dominant and institutional discourses which subject them, by hailing them (i.e. like a policeman in the street). In other words, interpellation places the subject within a discursive context. From that post-Marxist perspective, interpellation systematically reinforces and reproduces the status quo (Purvis and Hunt, 1993). Thus, perhaps a limitation of the Althusserian notion of interpellation is that it has the connotation of a policeman in the European context of the late 1960s, which may sound somewhat anachronistic to a digitalized (Western) audience. However, Butler, in Excitable Speech, argues that subjects are not meant to accept the traumatic or excitable words by which they are interpellated, named and recognized (Butler, 1997). There is instead a two-way negotiation between the dominant discourse of the institution and the ongoing identification of subjects, who may always be in a position to reject the interpellation, or deal with it, and choose their own words. Furthermore, what appear to be neutral technologies are not necessarily affect-free; digital interpellations recall Butler’s claim that administrative inscriptions (e.g. gender or race categories) may not have a speaker and may act insidiously (Butler, 1997). Likewise, algorithmic notifications have no subject, but constitute the subject when operating. In other words, Butler’s revision can be formulated as an ethics of consent wherein the subject is the agent of his own subjection (Davis, 2012). 
In that respect, one may dynamically seek to gain a critical distance between one’s self and one’s interpellations, thereby challenging the status quo (Lampert, 2015). The subject’s political agency resides in this unexpectedness, namely its potential capacity for queer parody (Butler, 1993), creativity (Endrissat et al., 2016), feminist rewriting (Fotaki et al., 2014) or emancipation (Huault et al., 2014) from the linguistic control of the organization.

Crucially to us, ideology materializes when social actors misrecognize the unexpected side of the digital practices in which they are involved. Thus, ideology is characterized by ‘its power to transfix subjects’ (Glynos, 2001: 192), which it exerts by seductively promising ‘control over the uncontrollable’ (Fotaki, 2010: 714). Psychoanalytical theorists alert us to the dark side of this ideological closure as it represents an attempt to cover up the ‘lack in the Other’ (Lacan, 2006), an essential desire for recognition, primarily constitutive of the process of discursive construction. As a result, psychoanalytically-informed studies have turned to desire-based explanations of actors’ ideological attachment to organizational discourses (Fotaki and Kenny, 2014; Fotaki et al., 2014; Glynos, 2001). From that perspective, ideology speaks more specifically to the social actors’ affective attachment to organizational discourse (Glynos, 2001). This affective identification with discourse leads Glynos to relate the grip of ideological interpellation to its fundamentally fantasmatic origins: ‘The crucial point is the psychoanalytic thesis, that it is precisely its fantasmatic character that sustains the grip of an ideological formation. (. . .) What we should be on the lookout for are specific phenomena or opinions, that tend to resist official public disclosure, that prefer to be kept secret’ (Glynos, 2001).

The crucial aspect is that ideological control takes place primarily at a subjective-affective level (Fotaki et al., 2014), involving a desiring subject that is constantly absorbed, yet frustrated, by a secretive and fulfilling narrative. Fantasy, as understood in the clinical sense, does not designate imagined scenes of wish-fulfilment, but rather characterizes what fundamentally sustains the subject’s psychic realm by indicating to it ‘how to desire’ (Zizek, 1989: 118). More precisely, fantasy regulates the subject’s orientation and works as the ‘glue’ (Glynos, 2001: 210), which accounts for why some interpellations have more ‘sticking power’ (Arnaud and Vidaillet, 2018) than others. Such ideological fantasy structures enjoyment, and it sustains the subject, which is understood as a subject of lack, namely a desiring subject which is not reducible to need or demand. The internalization of fantasmatic control can be fundamentally maintained if it is kept private from ‘public official discourse’, not merely public discourse (Glynos, 2001).

From such a psychoanalytical perspective, it is not the content of ideology which is the core focus of interest, but rather its ‘persuasive performance’ (Carlisle and Manning, 1994: 685). It occurs not in our heads, but in practice itself. For that reason, ideological fantasy cannot be reduced to false consciousness; rather, it is a performative and physical organization of signifying practices embodied in rituals and actions (Eagleton, 1991). This dialectic between fantasmatic control and resistance typically takes the form of cynical jokes, gossip, craft pieces, graffiti, cartoons and stories (Anteby, 2008; Contu, 2008; Gabriel, 1995). One telling example is how those performing ‘dirty work’ (Tracy and Scott, 2006), or even prisoners, build self-esteem through the imaginary constitution of fantasized identities which subvert the organization’s legitimacy, but which nevertheless facilitate the construction of valued identities (Brown and Toyoki, 2013). Such cynical subjects, who ostensibly reject the values of the organization, nonetheless enact even the most repelling of discourses because of their failure to understand that the fantasy is embedded in their own social practice (Willmott, 1993). Thus, the actors’ preservation of a desirable image of the self is crucial to their acceptance of managerial control as being somewhat legitimate (Carlisle and Manning, 1994; Shamir, 1991). In that respect, corporate discourses promising a greater sense of autonomy in contemporary organizations are not without a seductive appeal (Filby and Willmott, 1988). Nevertheless, these discourses insidiously introduce a ‘vicious circle of cynicism and dependence’, one which numbs the actors’ ability to directly confront the extant power relations (Willmott, 1993: 518). 
More precisely, Willmott finds that the mere possibility of engaging in ‘the playful ironicizing’ of the corporate ideology is interpreted by many as evidence of the company’s commitment to openness and freedom of expression (Willmott, 1993: 537). As a result, voluntary forms of control may eventually transpire to be more effective than constraint, because they produce the consent of workers (Eccles and Nohria, 1992; Feldman and Khademian, 2000; Soeters, 1986), without being perceived as patronizing or humiliating (Willmott, 2005).

To recapitulate, ideologies in the Platform Economy are no longer facilitated by deskilling technologies or negotiated leniency, as was the case in the managerial era; they are more intimately embedded in the actors’ unconscious and personal identification with digital interpellations. To trace the internalization of affective control in the context of platforms, one needs to analyse digital discourses which render visible those moments when users cope with interpellations and struggle with their own fantasmatic attachment. The following empirical section addresses this research route.

A tale of control and resistance: Algorithmic management in ride-hailing companies

To illustrate how studies of digital control profit from greater familiarity with the ideology involved in the affective identification with interpellations, one of the most significant technical developments in new ways of working will now be used as a core example, namely algorithmic management in the context of ride-hailing companies.

Thus, the data generated for the purpose of this paper are a mix of primary and secondary research data. The primary data are derived from documentary analysis of websites and advertising, as well as online ethnography (i.e. observations tailored to the study of communities created through computer-mediated social interaction) of a virtual forum involving Uber drivers (Uberpeople.net). The motivation for targeting internet-based communications is that online fora typically play a central role amongst workers, such as the film industry union involved in the visual effects industry (VFX News, 2013), or that representing cabin crews against British Airways (Taylor and Moore, 2013). The specific drivers were selected as they discuss the effects of surge pricing and share their feelings about, and interpretations of, this issue. If one is to observe unexpected events that might emerge out of algorithmic management, focussing on micro-localized discourse, such as mundane online conversations between drivers, is a methodological imperative (Alvesson and Karreman, 2000). Secondary data include ethnographic or in-depth journalistic studies, involving new, hybrid workers. These individuals are solo-workers whose boundaries between work and private spheres are blurring (Murgia and Pulignano, 2019), in addition to the fact that some simultaneously drive for both Uber and its competitors, such as Lyft.

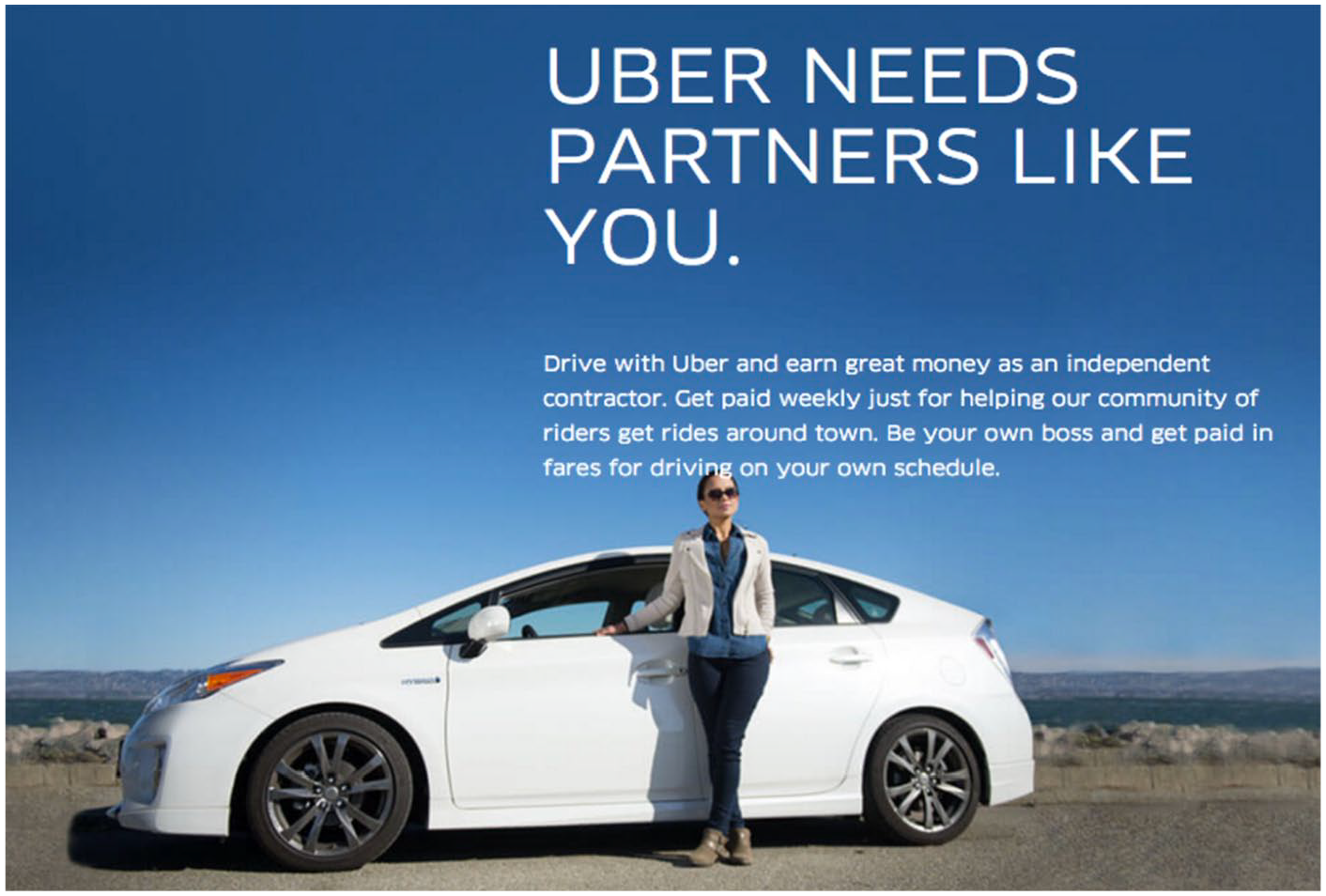

At the global level of corporate ideology, Uber’s and Lyft’s discourses propagate cultural and glamorous values, such as entrepreneurial risks and high rewards (Griffith, 2015). For instance, Uber’s marketing team develops and displays online biographical videos and success stories of heroic ‘Uberpreneurs’. An Uber advertisement illustrates the ambiguity of the Platform Economy by combining a seductive invitation to join a ‘community of drivers’ and to create a ‘partnership’, utilizing the very individuated posture of a female driver, as well as providing a highly-personalized interpellation: ‘be your own boss’, ‘choose your own schedule’, ‘Uber needs partners like you’ (Figure 1):

Figure 1. Uber’s interpellation in action (Allyn, 2005).

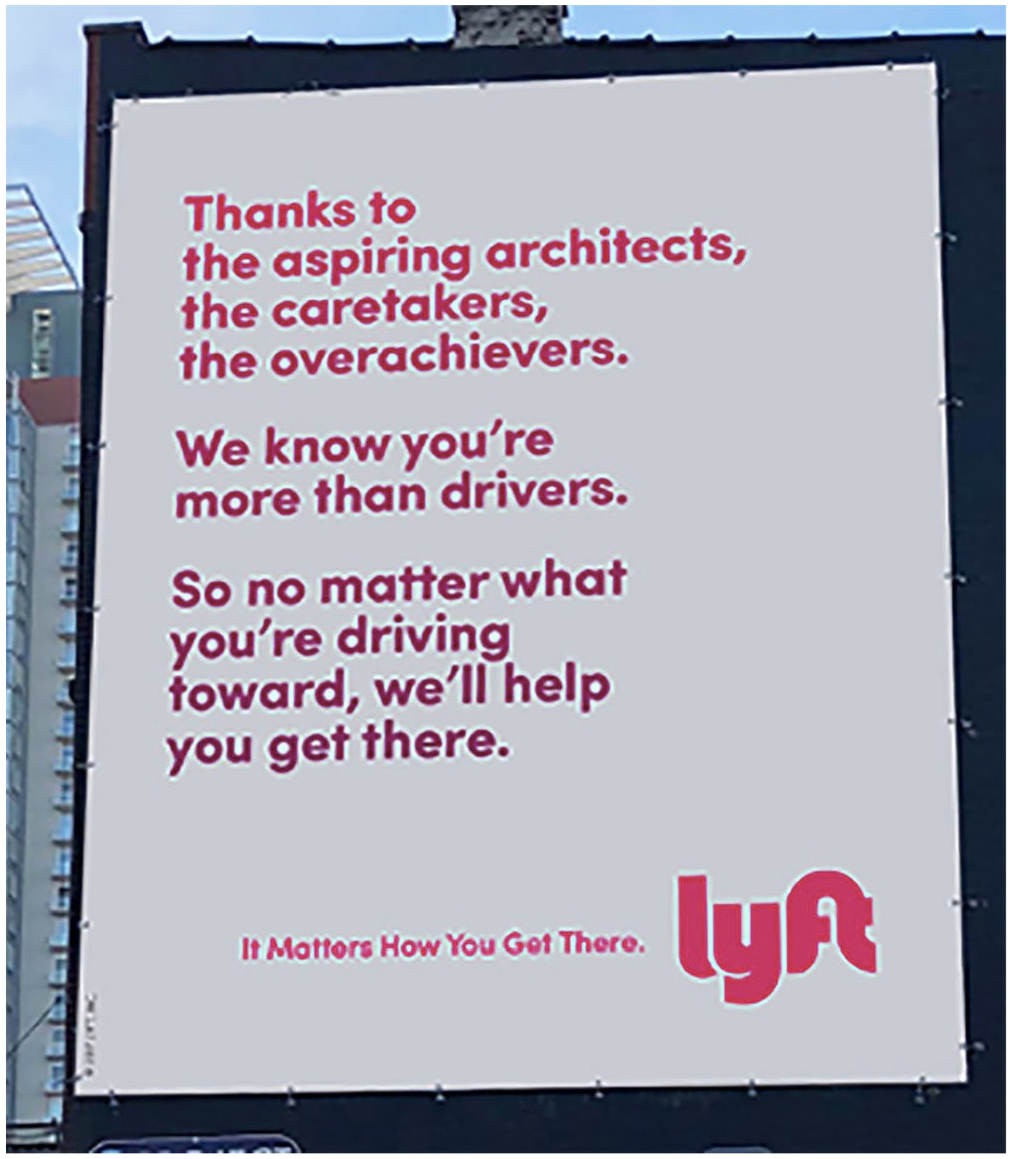

Uber’s corporate discursive formations such as ‘Uberpreneur’ or ‘partners’ create an image of a balanced and prosperous collaboration between drivers and Uber’s management. However, field researchers have cast doubt on the inclination of drivers to identify with the myth of the driver as an entrepreneur (Rosenblat and Stark, 2016). Rather, what were once traditional, stable and fixed employment relationships have turned into precarious, temporary, nomadic forms of work (Kalleberg, 2009, 2011; Vallas, 2015). Platforms constantly position themselves with regard to their users, clients, advertisers and policymakers, making strategic claims about what they do whilst masking the precarious realm of lower-income workers (Gillespie, 2010). As a result, interpellations are ‘larger than life’ and seek to make the workers feel valued, as illustrated in the advertising hoarding below by Lyft, which lists the numerous alternative identities of its drivers, who may also work as architects, caretakers, activists, mothers, craftsmen, poets, breadwinners, etc. (Figure 2):

Figure 2. Lyft’s interpellation in action (Nudd, 2017).

Such gratifying discourse publicly acknowledges who the drivers are, or desire to be, outside of driving, and thereby presents Lyft as a caring, socially-minded company (Nudd, 2017). Yet such public gratitude also serves to mask the fact that these drivers are neither officially recognized, nor paid, as workers. The novelty is that such informal congratulations now form part of the corporate communication of platform companies. This imaginary duplication of interpellations seduces and flatters the self while silently depriving workers of their social and legal status as ‘employees’ or ‘workers’, and removing from them any chance of challenging the existing power structure. Deliveroo also mobilizes such obsequious discursive interpellations by referring to its ‘riders’, never its ‘drivers’ or ‘staff’. Another example is the use of ‘kit’ or ‘branded clothing’, instead of ‘uniform’ (Hills, 2017).

What is more, the affective and seductive interpellations are not only used to conceal the reality about their formal status; they can also play out as goal-oriented behavioural design, one which implicitly prescribes appropriate behaviours. For instance, algorithmic interpellations also take the form of badges, which function as motivational incentives (Adar, 2019). As Uber drivers are not officially employees, and thus cannot be fired, congratulatory badges are used to define the company’s gold standard without appearing to be overly managerialist. Winning a reward such as ‘Night Hero’ can enhance a driver’s mood and motivation at work. The following examples illustrate the norms of how a ‘perfect driver’ should behave (Figure 3):

Uber badges for drivers (Adar, 2019).

Good drivers should know their way around town, be cool, entertaining, have up-to-date tastes in music as well as being charming conversationalists. Moreover, Lyft goes a step further by introducing personalized profiles in order to add a touch of customization, so clients and drivers can be ‘friends’ (Vivion, 2015). For instance, clients can inform the Lyft driver about their musical preferences in advance via their personal profile. The proclaimed objective is to ‘foster a deeper community’. Nevertheless, such normalizing discourse operates as an interpellation, as it forces the drivers, consciously or otherwise, to position themselves in relation to these expectations, and to set the standards for the client. Arguably, algorithmic interpellations function as a fantasy screen intended to produce ideological consent for such non-standard work arrangements, also known as gig work (Moisander et al., 2017).

Thus, the subjectivity of the workforce, and its potential resistance, is constantly solicited and neutralized (Solman, 2017; Surowiecki, 2014). The dark side of such flattering techniques has been fully documented, and such processes can only be observed at the micro-level of localized discourse. For instance, Rosenblat has recently described the particular regime of automated management under which Uber drivers or Deliveroo’s couriers experience labour (Rosenblat, 2019; Rosenblat and Stark, 2016). Algorithmic control is central to this process: Deliveroo’s algorithm tracks their couriers individually. As one employee put it (Scheiber, 2017): It was all day long, every day – texts, emails, pop-ups: ‘Hey, the morning rush has started. Get to this area, that’s where demand is biggest’. It was always, constantly, trying to get you into a certain direction.

Other examples include calibrating notifications and encouragements (‘You’re almost halfway there, congratulations!’), as well as the messaging (e.g. intentionally adopting a female persona for texting drivers, given the overwhelmingly male driver population) that Uber sends at strategic moments. For example, when drivers try to log off, they are alerted that demand is high in their location at that exact time (Rosenblat and Stark, 2016; Scheiber, 2017). Drivers describe precisely the way in which the app starts to govern their every move: In that state, they are literally just listening to the sounds [of the driver’s apps]. Stopping when they said stop, pick up when they say pick up, turn when they say turn. You get into a rhythm of that, and you begin to feel almost like an android (Hook, 2017).

In the extracts above and below, algorithmic interpellations and personalized management techniques lead the drivers to experience anxiety (e.g. trouble thinking clearly, suspiciousness, inappropriate emotions). Algorithmic management proves to be stressful by its very design, as the possibility of lost income for low ratings, high cancellation rates, or low acceptance rates is combined with financial and employment insecurity (Bartel et al., 2019). Thus, this type of non-standard ‘gig’ work also negatively impacts health through sleep deprivation, musculoskeletal disorders and urinary disorders. Furthermore, many ride-share drivers comprise an immigrant workforce facing multiple risk factors, including shift work, low pay and threats of violence (Davidson et al., 2018).

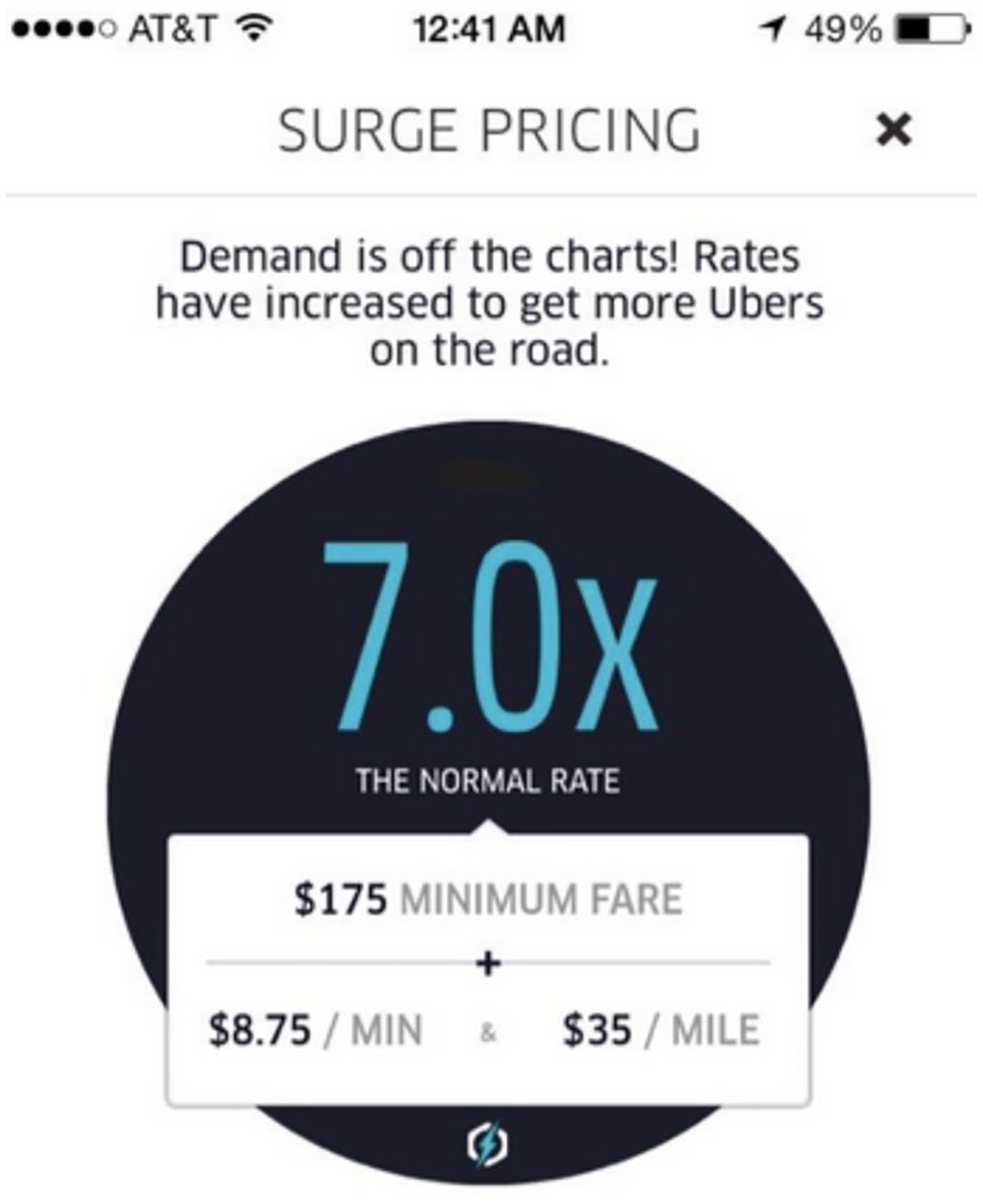

In this paragraph, the operation of surge pricing is specifically highlighted and analysed in a novel way: by contrast with the scheduling technologies reviewed above, an algorithm might be deployed specifically to trigger unexpectedness. The basic argument behind surge pricing is that those times when people most want a ride also happen to be the times when it is most risky to drive, for example in rush hours, snowstorms or on New Year’s Eve (Surowiecki, 2014). Thus, Uber’s network needs to be built with such rush hours in mind; a flexible labour supply model and surge pricing can closely match supply with demand throughout the day (Cramer and Krueger, 2016). Crucially, Uber’s interpellation here arguably resembles – metaphorically, at least – a slot machine, by providing the driver with a sudden and exciting duplication of possible financial gains. This phenomenon triggers a thrilling sense of urgency and an immediate injunction to identify with the algorithm. This obligation to position oneself is exemplified below (Figure 4):

Surge pricing (Mishra, 2016).

In practice, however, such algorithmic interpellations (‘Demand is off the charts!’) have in fact generated multiple complaints and scepticism; typically, surge pricing has frequently been pejoratively labelled as a ‘price-gouging’ practice (Popper, 2013). Parameters such as bad weather, special events, rush hour or very high demand may, in real time, turn out to be inherently ambiguous (Solman, 2017). A defining feature of these techniques is the information asymmetries between app designers, owners and the service providers: ‘Those who offer the infrastructure for labour but no stability or benefits to accompany it’ (Gregg, 2015). In that respect, the online fora where drivers socialize, ask each other questions and exchange tips, know-how and tactics, potentially constitute important sites of self-reflection and resistance (Lee et al., 2015). Uber’s reliance on black-boxed algorithms is certainly not transparent, unlike eBay or Airbnb, where the supply of goods is well-known (Chen et al., 2015). Uber claims that surge pricing increases driver supply. Contrastingly, data collection, which would need to be corroborated, seems to reveal that the feature merely serves to redistribute existing supply while pricing out some customers (Diakopoulos, 2015). According to Chen et al. (2015), spatial dynamics play out, and passengers can obtain lower prices by exploiting differences between surge areas (e.g. Times Square in Manhattan vs. an adjacent street).

As exemplified by the following online discussion thread from one of Uber’s major international fora, drivers commonly discuss the extent to which the algorithm controls them. Nevertheless, they show various degrees of self-identification with the narrative of algorithmic certainty and authority (Gillespie, 2010). Typically, price-fixing requires an agreement between sellers or buyers in order to coordinate pricing for the mutual benefit of the traders. As can be seen in the extract below, algorithmic pricing becomes the locus of scrutiny by the platform users:

It doesn’t matter if they manually manipulate it or automatically manipulate it. They manipulate it. Everyone knows that.

It is not like it is government regulated.

You work for Über, you get manipulated.

Those who may be a little smarter than the average, manipulate Über in return.

But don’t ever, ever call your pax. That is against the rules. ☺

It transpires that Uber’s forum features numerous statements expressing suspicion about the way the algorithm is potentially instrumentalized. In the above extract, the driver explicitly posits an opposition between ‘manual’ and ‘automatic’. This dichotomy is artificial as, ultimately, Uber inevitably has the last word. Corporate interests here are clearly opposed to governmental interests, yet Uber seems to ultimately benefit from the worried complicity of the workers. From the perspective of the driver, if being manipulated by Uber is preferable to working within a regulated industry, this probably means that they perceive the government as naïve and excessively formalistic. Interestingly, the driver above recognizes a potential agency on the part of the drivers, as well as a two-way negotiation, claiming somewhat cynically: ‘Those who may be a little smarter than the average, manipulate Uber in return’. However, in this case, Uber’s automated regime is eventually uncontested: ‘Don’t ever call your pax. That is against the rules. ☺’ The driver here is adopting an attitude that one might usefully describe as derisory (Faÿ, 2008), as indicated by the smiley and the ambiguous obedience, that is, acknowledging the dark side of manipulation on the one hand, while protecting the status quo on the other hand. In fact, nicoboy is even being relatively docile here by emphasizing, ironically, that directly calling a pax is strictly prohibited, and would not constitute an appropriate behaviour. Derision here forms the psychic mechanism through which the potential unexpectedness of digital organizing is kept at bay (Faÿ, 2008).

Data and information provide the basis of algorithmic management; users are literally and constantly interpellated by the app’s discourse, and are impelled to accept or reject injunctions to follow certain instructions. The following example demonstrates how drivers respond to and interpret Uber’s claims, and how parameters are set, including surge multipliers, surge zone and window size:

Uber always claimed that surge is demand triggered, aka lack of drivers in an area of high pax demand. They claim it is a natural, fully automatic process. Extending or reducing window times or locking in surge multipliers (even via constantly, manually updated ‘algorithms’) means it is no longer a natural ‘supply and demand’ process and places that process well in the ‘scumming drivers’/’price fixing’ process. They may be ‘algorithms’ at work, but Sara write those algorithms, constantly updating them to suite her needs. The ‘algorithm’ is Uber’s secret source, used to manipulate drivers and pax. No one except Uber can take a peek under the hood. Enough said.

When trying to make sense of their situation, the drivers personalize and fantasize the algorithm. It seems unclear in the extract above whether ‘Sara’ refers to a human being or a female bot, revealing the confusion in the mind of the drivers. The expression ‘suite her needs’ (sic) illustrates the fact that ideology is always an intersubjective process, demonstrating an allegiance to somebody, not a robot. Furthermore, drivers engage in practices of ‘rule discovery’, analogous to rule discovery in gaming, in an attempt to uncover the rules of the ‘secret algorithm’ by which they are governed, as one driver put it (Allen-Robertson, 2017). This uncertainty leads drivers to talk of conspiracy, to self-denigration and to concerned assumptions about the presence of a hidden agency disregarding the ‘scumming drivers’. The use of words such as ‘manually’, ‘suspect’, ‘manipulate’, ‘secret source’ and ‘take a peek under the hood’ clearly confirms that it is becoming increasingly difficult to ignore the human hand in the steering of algorithms (Beer, 2017). Thus, the opposition between ‘scumming drivers’ and ‘price fixing’ neatly characterizes the ambiguity associated with the use of technology. The biased nature of machine-based processes generates a fundamental concern amongst the drivers of being robbed by the corporation. This feeling is captured in the following extract:

I was in a 1.3x boost zone and they paid me with a 1.2x. Small difference but I’m sure they’re ripping off thousands of drivers. Also, my toll reimbursements are now missing more often than before. They are stealing that also.

While this suspicion is not based on any solid evidence, the lack of clarity and transparency is nonetheless translated into a frustration that is highly visible in the forum and discussions. For good or bad reasons, this opacity generates worries and a sense of helplessness, which is duplicated and reinforced by a fantasmatic surplus: ‘I’m sure they’re ripping off thousands of drivers’. It transpires that dynamic pricing is frequently perceived as a manipulative device by the drivers, one which accentuates the precariousness of their position, and which is translated emotionally as a neurotic sense of unfairness, of being ‘stolen’ from and, more generally, that the corporation is lying.

As a result of the anxiety generated by the lack of evidence, combined with the intimate conviction of theft, drivers frequently suggest taking screenshots in order to demonstrate and materialize such manipulation, arguing that Uber might thus be impelled to reimburse them: ‘Screenshot or it never happened lol’. Derision and laughter are associated with a relatively desperate attempt to resist the interpellation, characterized by their feeling of helplessness, namely fighting a corporate army with rudimentary weapons. The Customer Support Representatives, officially intended to provide drivers with a positive customer experience, are frequently criticized, if not contested, in the forum, as exemplified by the following extract:

I received a request at Universal studio 2.1x. After I finished a ride. Price of the trip wasn’t anything close to my 16 mile trip. I checked and 1.7x was applied. I immediately called the support rep. Their response was surge dynamically changes.. therefore.. even though request was at 2.1x, it can change based on the pickup location and surge changes. So I asked them I should not believe what request is in the beginning. She said you shouldnt because surge pricing changes dynamically. Lol. I am so over with their lies.

As exemplified above, the online forum is characterized by numerous complaints and individual rumblings of discontent about customer pricing associated with surges. In the example above, the data collected by the driver appear to be precise and credible, and demonstrate a significant discrepancy between the anticipated surge multiplier of 2.1× and the multiplier of 1.7× actually applied, leading to frustration and negative energy. Here the driver ‘immediately’ calls the rep, showing both a highly affective self-investment in the algorithmic ideology as well as a sense of urgency. Of particular interest here are the words used by the sceptical drivers, never technical or rational, but rather belonging to the subjective and ideological register of belief and the falsehood of Uber’s discourse.

It must be noted that their feelings are not individualistic but contagious, fuelling a collective resentment which is shared on a larger scale. For instance, the complaints section of the online forum is introduced with the following appeal: ‘Let it all out. Someone must feel for you and your gripes’. Moreover, this highly affectively charged relationship (a blend of paranoia and defensive feelings) also takes place between riders and drivers. Typically, drivers share YouTube videos when riders seek to cadge a free ride, or act in a racist, homophobic or humiliating way. The following screenshot provides one such example (Figure 5):

YouTube video: someone tries to get a free ride (Ryan Is Driving, 2018).

In the video above, viewed by millions of people on YouTube, the driver keeps smiling derisorily, repeating to the threatening rider – a woman, who tried to cancel her order during the ride, in order to evade paying – that she is being filmed. Some riders, including the woman above, use the ratings as a threat to damage the reputation of the driver. Some riders also threaten to report the driver to Uber (or here, mistakenly, to Lyft). In the case above, the rider keeps insulting the driver, who tries to remain calm in the face of what seems to be an obviously unjustified provocation. This kind of episode, which illustrates the daily difficulties of a driver, contributes to the creation of a highly aggressive and obsessive frame of mind of ‘being screwed over’.

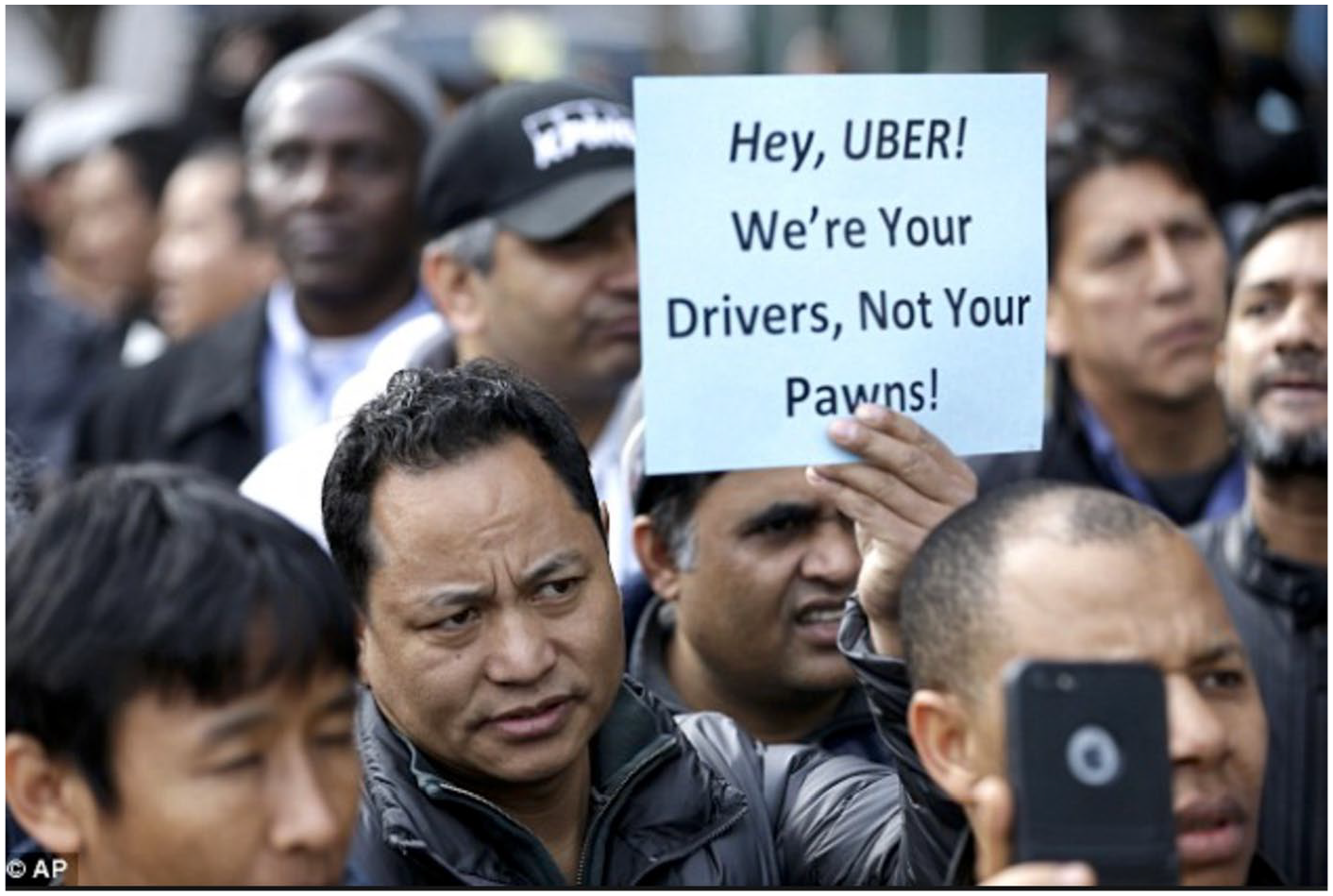

There is indeed an element of control – indirect yet powerful – in the circulation of an ideological fantasy among the drivers, delivery persons and other (new) workers. However, in this new work environment, opportunities remain for users to organize themselves collectively. From this perspective, algorithmic management is a tale of control and resistance, albeit one in which there are nevertheless opportunities for collective action. For instance, a protest outside the UberEATS office in London involved drivers chanting: ‘We are people, not Uber’s tools!’ (O’Connor, 2016). Another example is illustrated by the following picture, which depicts a conflicting interpellation in action, when exasperated drivers demonstrate over fare cuts outside Uber’s New York headquarters (Figure 6):

Uber drivers demonstrate in New York in January 2016 (Cao, 2016).

Put simply, these drivers are ostensibly protesting against this practice of algorithmic management which cynically treats them like objects (‘pawns’), rather than subjects, via Uber’s cheap exhortations and surge pricing opportunities. As a response to the digital interpellations (the notifications, etc.) they, in turn, challenge the management behind the algorithm. According to the director of the New York Taxi Workers Alliance: ‘Every city needs to take a deeper look at what happens when you let Wall Street-backed corporations use billions of dollars in capital to lock workers into a prison of poverty’ (Pager and Palmer, 2018). Some trade unions argue that algorithms exercise such a degree of control over workers that companies should now treat them as regular employees, with standard rights (Chapman, 2016). Until recently (Uber Technologies, 2014), Uber described itself in its partner agreement with drivers as a platform company – namely, a mere neutral intermediary – not a transportation company, thereby creating a grey zone in which it operates (Strochlic, 2015). However, companies such as Deliveroo, DoorDash and Uber are currently involved in legal battles in several countries over whether drivers should be classified as standard employees, rather than independent contractors. The former categorization may have implications for millions of workers, and would make such firms responsible for paying a minimum wage and making social security contributions (Conger, 2019). In Europe, Uber has appealed against a decision by a British labour tribunal that drivers must be classified as workers (including benefits or minimum pay and holidays). In California, legislators approved a landmark bill that requires companies such as Uber and Lyft to treat drivers and delivery-persons as employees (Conger and Scheiber, 2019).

Beyond cynical resistance? The ethics of crossing the fantasy

The main objective of the empirical section has been to identify and deconstruct the dark side of fantasmatic attachment to algorithms. In particular, corporate advertising and personalization techniques have been presented as subtle discursive and material interventions where the subjectivity of the workforce can be interpellated and disarmed. The contribution of this paper to the dark side literature of digitalization is threefold: critical, psychosocial and ethical.

The paper contributes to critical approaches to the dark side of algorithmic control by engaging with the new forms of ideology that are being constructed in an era of digitalization. In this regard, this paper seeks to connect, rather than oppose, the classical Neo-Marxist and post-Marxist works of Althusser and Butler. By theorizing and illustrating ideological interpellation in the context of management through algorithms, this novel theory revitalizes the established, and relatively old, research tradition of labour process theory (e.g. Braverman, 1974; Burawoy, 1979; Noble, 1978). However, it does so by specifically investigating a new form of organizing and the new context of digitalization. Braverman’s well-known thesis about the degradation of work has been hastily marginalized by constructivists for being idealistic and deterministic (Leonardi and Barley, 2010; Orlikowski and Barley, 2001). Nevertheless, contemporary technology not only offers itself as an aid to workers, as in Braverman; the ideological grip is now part of the zeitgeist and takes place at a public level, beyond the workplace, through the glamorous promotion of Californian values. Besides, platforms’ interpellations to be an entrepreneur or a perfect driver present themselves with a beatific veneer (Baker, 2014; Fleming, 2017; Griffith, 2015), whose calmness and bliss pay little attention to the drivers’ personal struggles, material conditions and juridical status. Furthermore, surge pricing apparently functions in a relatively similar way to Burawoy’s ‘making-out’ (1979) – an organized pendulum between boredom and frustration – which yokes the drivers even more closely to the algorithm. Yet, the finding here differs from Burawoy’s ethnographic study, as the game today is immediately structured and organized by workplace technologies, whereas in Burawoy’s factory, the game emerged informally and spontaneously, before being ‘tolerated’ by management. 
Thus, ideologies in the era of digitalization differ fundamentally from management, as technology now implements the game without requiring any direct confrontation between workers and managers. Thus, surge pricing techniques have been identified as an artificial technique – as they are potentially humanly manipulative – of affective nudging. Traditionally, gurus and executives have developed strategies to capture the minds and hearts of employees by infantilizing the workforce (Willmott, 2005), and this attempt has become ever more central with the erosion of traditional and bureaucratic forms of control. Nowadays, somewhat insidiously, such identity management (e.g. Alvesson and Willmott, 2002; Willmott, 1993), is increasingly conducted via algorithms and their game mechanics. Personalized technologies now directly organize the desires of their subordinated workers, effectively depriving the workforce of any proof of symbolic acknowledgement (i.e. any authoritative counterpart to whom they can truly express and voice their concerns, or appeal to in order to improve their work practices). In the data above, Uber’s Customer Support Team in effect replaces such authoritative counterparts, when frustrated drivers seek to decipher the hidden reality behind surge pricing.

Another, psychosocial contribution of the Uber case is to engage closely with the intimate interaction between technological interpellation and subjectivity. More precisely, the data of the present study illustrate existing conceptualizations of cynicism as a reproductive mechanism and self-defeating ideology (Fleming and Spicer, 2003; Willmott, 1993). Thus, a worker does not need to be fanatically supportive or blindly obedient to endorse an ideology. Cynical employees may even experience a thrilling sense of autonomy by transgressing their job’s prescriptions. Indeed, previous studies have thoroughly documented how drivers, in practice, engage in guessing, resisting, switching and gaming the Uber system (Möhlmann and Zalmanson, 2017). Nevertheless, they still perform their professional role, perhaps even more acutely than if they did not identify with these prescriptions, such as the McDonald’s employee wearing a ‘McShit’ tee-shirt under her uniform (Fleming and Spicer, 2003). Likewise, in the online forum studied above, drivers distance themselves from algorithmic authority, while nonetheless reproducing the power relations from which they seek to escape (Du Gay and Salaman, 1992; Willmott, 1993). For example, jokes, smileys and derision are frequently used to make frustration acceptable to peers – that is, other drivers. At a conscious level, drivers are well aware that algorithms do not exert a direct and coercive form of control. Nevertheless, the fantasmatic mechanism enables the internalization of control: workers act as though the ‘secret algorithm’ were controlling them. Consequently, most of the drivers studied in this paper act derisorily – they ostensibly preserve themselves from the fantasy, and merely use it as a means to (in their own words) ‘manipulate Uber’ in return (i.e. they should try to squeeze as much out of Uber before Uber ‘cons’ them).
The innovation of the empirical findings here is the re-materialization of the (post-structuralist) account by incorporating the agency of algorithms, which is closely related to their exchange processes with data, parameters and implementations, such as surge pricing. Those are not merely symbols or signs, but non-human, technological actants which seemingly reinforce the disenchantment of the drivers in a way that effectively pre-empts any contestation. While this process is not intentional (i.e. there is no ‘evil’, omniscient puppet-master duping the innocent masses ‘from above’), the observations here illustrate that its persuasive performance is, potentially, more efficient than traditional control mechanisms.

Thus, the drivers’ attachment to the algorithm is sustained by an ideological fantasy which structures cynical enjoyment (Fotaki, 2009; Grugulis and Knights, 2001; Knights and Willmott, 1990). Can one now explicitly relate this process to the dramatic rise of ride-hailing platforms? As seen above, cynicism has been conceptualized as an ideological adjunct of power, which paradoxically protects – and perhaps even fuels – the enactment of the business models of digital platforms such as Uber or Deliveroo. To a certain extent, deviant and transgressive acts which express the genuine identity of the subjects do indeed fuel the inadvertent success of algorithmic management by infusing it with verve (Fleming and Spicer, 2003). Nonetheless, fostering a cynical culture might well eventually produce an unexpected and out-of-control backlash against the organization. In our example, the algorithm is fantasized as being all-powerful and a source of worries, which leads to regressive online discussions and conspiracy theories. According to psychoanalytical theorizing, such fantasy is indeed particularly intense when subjects experience the imaginary scenario of the ‘theft of enjoyment’ (Chang and Glynos, 2011; Miller, 1994). In the present case, Uber’s management is depicted as a cabal conspiring to preserve power, while silently stealing money from honest drivers. When fully immersed in this fantasy, the paranoid-cynical driver becomes fanatical about details, harbouring an imaginary suspicion of some dark, obscene enjoyment behind the public platforms (Huang, 2007). More precisely, the paranoid-cynical subject circles around the hidden presence of a ‘subject supposed to enjoy (the organic Wholeness)’ (Zizek, 1997) or to possess the secret source of absolute enjoyment, which is retroactively constructed to be stolen, and which can never be directly confronted.
In that respect, the idyllic imagery of public advertising and flattering interpellations operates seductively – rather than naively – precisely because it silently covers an infernal downside, exemplified by ‘dodgy’ personalized tricks (the dirty-sticky algorithm), combined with the nightmare of corporate robbery. Such double-edged fantasy – unwittingly combining beatific and horrific undersides – provides ideology with a certain vigour, escalating to a fatal and disastrous resolution from a public perspective (Pignot, 2015). (See Levin (2017) for the list of scandals leading to the resignation of Uber’s co-founder Travis Kalanick.) Fittingly, Kalanick was caught on camera yelling like a despot at his own driver: ‘Some people don’t like to take responsibility for their own shit’. This image of a tyrannous personality is also amplified by the exacerbated fantasy of the ‘theft’ of sexual enjoyment, as Uber’s HR Department has been accused of perpetuating an internal culture of sexism and harassment (Lopatto, 2020). Another example involves a top Uber executive who had illegally obtained the medical records of a woman raped by an Uber driver, allegedly in order to cast doubt upon her account (Levin, 2017). By taking blog posts or online fora seriously, this paper extends existing essays which have suitably re-labelled the Sharing Economy as the ‘Taking Economy’: information asymmetry involves a company extracting more and more value from participants, while continuing to enjoy the appearance of a socially-minded company (Calo and Rosenblat, 2017). In that regard, the approach adopted here also includes less official forms of disclosure such as gossip, rumours, ‘bitching’ and conspiracy theories, which are traditionally denigrated and considered as morally suspect (Birchall, 2011; Hansen and Flyverbom, 2015).

While cynical subjects are still imprisoned within Uber’s ideological fantasy, what is the place of ethics in such reflection? As Zizek argues (1989), cynical subjects are ideologically blinded, not in the sense of naivety or ‘false consciousness’, but insofar as they fundamentally ignore the ‘lack in the Other’ sustaining their social and material circumstances. The ‘big Other’ is a concept that speaks not only to private actors within a cenacle (influential persons such as investors, shareholders, business partners), but also to an unassimilable, radical alterity whose perception generates outcomes and may have potentially dramatic consequences. With the advent of social media, public relations (PR) scandals reveal significant disruptions between a business organization and its public (e.g. customers, employees), which can stimulate extensive media coverage. Perhaps depressingly, some cynical drivers are fatalist by conspiring to ignore the presence of a superior intermediary instance which might recognize them at a public-official level: ‘Everyone knows that. It is not like it is government-regulated’ as one driver put it, for example. By doing so, they consider unexpectedness as a pure impossibility, which does not function materially (Zizek, 1991) and protects the competitive advantage of the company – e.g. relative to regular taxis, which are regulated. Thus, accounting for organizational ethics consists of rendering visible those unexpected moments in which subjects are not entirely gripped in a discourse that glosses over alternative interpretations of their work practices. In this regard, ‘true materialism’ occurs when actors ethically acknowledge ‘chanciness’ without concealing it with a hidden meaning (Zizek, 1991: 52). Other employees or drivers act differently, and report unfair treatment online in blog posts or by sharing videos (e.g.
sexual harassment by managers, wrongdoings of passengers), but these generally remain isolated cases; indeed, sometimes such actions may even negatively impact their authors’ lives (Lopatto, 2020). In such moments (e.g. a blog post calling out sexual harassment at Uber, or a YouTube video demonstrating passengers’ misconduct), things get out of control – that is, a crack emerges in the dominant discourse, which can be challenged and re-signified. As ideological control is the process through which contingency is made invisible (Glynos, 2001), taking an ethical stance is contrastingly related to a deep engagement with unexpectedness. At a psychic level, Zizek frequently argues that such confrontation involves what Lacan terms the ‘crossing of the fantasy’ (e.g. Zizek, 1997), namely that drivers stop seeking an imaginary agency. Ethical acts of resistance are inherently radical, as they ‘present themselves as outrageous breaks with all that seems reasonable and acceptable’ in our liberal world (Contu, 2008: 377). From that perspective, cynicism and irony are contestable, as they typically trigger the emergence of ‘decaf resistance’, namely a form of manifestation which lacks its critical and radical content, and ‘threatens and hurts nobody’ (2008: 370). While this paper has identified the cynical significance of workers’ fantasmatic attachment to platforms, the ethical value of this enquiry is that workers learn to adopt scepticism and a detachment from the fantasy, and to give up chasing an imaginary agency in control behind the app.

A final question now remains unaddressed: how can unexpectedness emerge in the decollectivizing workplace (Thompson, 2016) – beyond individual acts of whistleblowing, for example? In reality, of course, cynical acts of resistance at the workplace remain self-centred agential practices that achieve prominence at the expense of broader, collective struggles (Thompson, 2016). This essay therefore subscribes to, and enriches, Ossewaarde and Reijers’s view on the cynicism of the digital commons; exchanges in the Platform Economy (e.g. via BeWelcome, Couchsurfing and Airbnb) are mediated by explicit and implicit price mechanisms, embedded in the technology design, which constitute an illusory form of commoning, and which eventually foster monetary and non-emancipatory practices (Ossewaarde and Reijers, 2017). Nevertheless, collective mobilizations become especially urgent in the ‘Taking Economy’, as Airbnb hosts do not hesitate to gather in the streets and protest against governmental attempts to protect the integrity of cities and local economies (Hickey and Cookney, 2016). Using a disclosing device is not straightforward, however; the surveillance-aware nature of workers’ engagement leads them to use pseudonyms and avatars to fully display their feelings and ideas (Moore and Taylor, 2013). Furthermore, Greaves (2015) observes that users of digital fora act like fatalist ‘digital proletarians’ who contribute to the dispersion of critical energy. Arguably, their worsening conditions may generate self-isolation, neurotic attachment and self-destructive contestation – including self-denigration (the ‘scumming drivers’), sabotage (Sprouse, 1992) or even suicide (Dejours and Bègue, 2009). In that respect, personal, critical commentary can potentially trigger broader mobilizations, for example by migrating to public fora such as Twitter and Facebook (Richards and Kosmala, 2013).
In the context of ride-hailing platforms, ethical practices take the form of public demonstrations and multiple trials, where drivers suddenly become aware of their capacity to confront algorithmic authority and refuse to be treated like robots or pawns. Unexpectedness takes its revenge and relieves the anxiety of closure when drivers become collectively aware of the lack underpinning their engagement with algorithmic management, and when alternative practices and regulations become possible. Although one must resist the temptation to make predictions, such collective mobilizations are more likely to destabilize the socio-symbolic network, thereby promoting new norms and regulations for the Platform Economy.

Conclusion

In this paper, the main objective has been to identify and deconstruct the dark side of fantasmatic attachment to digital organizations. The main conceptual contribution is that the persuasive performance of algorithms may potentially go deeper than traditional control mechanisms. The notion of ideological fantasy is crucial here, as it helps us to understand the double-edged, ambiguous narratives that tie the drivers to the organization. The empirical section shows that such processes materialize thanks to algorithmic features such as surge pricing, which acts as an ambiguous instrument of control. Alternatively, Uber drivers can ‘manipulate the app’ in return by exploiting technology too (screenshotting or posting videos or stories on blogs, fora and YouTube). While algorithmic control draws on fantasmatic logic to produce cynical enjoyment, this energetic impulse reaches its limits when it becomes excessive, generating irreparable damage in terms of public relations (e.g. the CEO’s resignation). The Uber case enables the demonstration of this contribution by showing how unexpectedness can emerge in the decollectivizing workplace. The key lesson for gig workers is that they need to learn to cultivate scepticism and a detachment from the vicious circle of cynical enjoyment and the fantasy of an all-powerful organization, and to find tricks to publicly reveal its arbitrary dimension.

Last but not least, this paper opens avenues for future research. Firstly, the case of algorithmic management at Uber, Lyft or Deliveroo is utilized here for illustrative purposes only. The interpretations of the category of respondents are exploratory and, admittedly, quite thin, and are not aimed at generalizability: more data should be generated by conducting further ethnographic observations and interviews in different contexts to present an accurate view of the circumstances (e.g. the social embeddedness of the people studied; their imperatives to work; their domestic situations) and the ideals of the respondents. As Burawoy argues, workers cannot choose a different game: they only have a choice about the manner of their subservience. Arguably, considering their material and other socio-cultural reality affects the narratives which actors construct for themselves, in terms of their ideological attachments, ethics and concerns. Secondly, while this paper has drawn on the affect-based theory of ideology, future theoretical work could usefully extend research into the ideological fantasy of digital platforms by drawing more specifically on the corpus of psychoanalytical studies of organizations (see Arnaud, 2012, for a review). Thus, another limitation of this paper is probably that, for reasons of space, it does not engage extensively with the nascent literature on psychoanalytically-informed discourse analysis (e.g. Cederström and Spicer, 2014; Kärreman and Levay, 2017), which has much to offer regarding the interrelationship between the materiality of affect and that of information technology. Thirdly, a psycho-affective lens on algorithmic ideology seems particularly promising in terms of analysing how information (e.g. the hashtags, texts, online feeds and fake news that populate the web) materializes in organizations.
An acknowledgement of unexpectedness is thus crucial, as it contributes to bringing ethics back into performative process studies of social and material work practices (Dale, 2005; De Vaujany and Vaast, 2014; Scott and Orlikowski, 2012). In that respect, this approach shares with De Vaujany and Mitev (2017) the aim of shifting the focus to the performativity of information, rather than technologies, by adopting temporal, semiotic and material perspectives. However, this paper provides notions capable of capturing information’s potential to directly control subjectivities, whereas practice-based researchers and actor-network theorists typically ask different questions. This paper constitutes an ethical and political endeavour to further explore and question how information signifies in new work practices, and how it becomes intimately and materially intertwined with every individual or collective activity.

Footnotes

Authors’ Note

Edouard Pignot is now affiliated with Léonard de Vinci Pôle Universitaire, France.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.