Abstract

The emergence of journal quality lists such as that issued by the UK’s Association of Business Schools (ABS) has instigated a wave of ‘journal list fetishism’ throughout the business school sector. Business school deans and research managers have become fixated on whether the publication records of current staff and new applicants include the requisite number of ‘hits’ in the best ranked journals. Little attention is paid to additional measures of research quality, or to the broader context within which the research has been produced. This paper examines the current fetishizing of the ABS guide in general, and the magical ‘4’ rating in particular (the symbolic token for top journals). It begins by looking at how ‘trust in numbers’ may have assisted the uptake of the ABS guide through developing a perception of ‘trustworthiness’ and then raises questions regarding the current fetishizing of ‘4’ ratings using additional data within the ABS guide.


Fetish items are revered and imbued with power by their worshipers, vastly beyond what would appear rational in the eyes of non-believers. Many factors may explain the existence and use of fetish objects by individuals, but an individual’s fixation with such an object is likely to be strengthened significantly if there is ‘objective evidence’ to support their belief or trust in the power of that object. In many areas of modern life, numbers are given greater weight than ‘subjective’ opinions because they are viewed as being more objective. This ‘trust in numbers’ (Porter, 1995) can help generate or strengthen a perception of trustworthiness in a system or metric.

This paper examines the fetishizing of journal quality lists, with a particular focus on the UK’s Association of Business Schools (ABS) Academic Journal Quality Guide, and the role of ‘trust in numbers’ in helping to reinforce the fetish power of the list. Special attention is paid to the indicator for top-tier journals (a ‘4’ rating), which has become the ultimate fetish token for UK academics, imbued with a magical power in the minds of research managers and a source of both pain and pleasure to research staff attempting to publish in such journals (Willmott, 2011: 435). The notion that some Platonic ideal of research quality exists, let alone that it can be measured using a single discrete metric, will be anathema to many within the research community. Nevertheless, many at the managerial level within UK business schools seem to have accepted that journal ranking guides provide an authoritative source from which to infer the quality of published individual research items. For example, shortly after the ABS guide first appeared the then Dean of Warwick Business School commented that ‘A comprehensive Guide of this kind … has long been needed’ and described the guide as ‘authoritative’ (Harvey et al., 2008: 1).

The provision of a plethora of additional data in both the 2009 and 2010 editions of the ABS guide gives a reassuring ‘numerical objectivity’ to the ratings. This paper begins by examining how provision of these additional data helps foster a perception of trustworthiness among users of the guide using a framework employed previously by Jeacle and Carter (2011) to examine hotel ratings. The second stage of the analysis involves an examination of those cases (individual journals and subject areas) where there is a notable disconnect between the ABS ratings and the quality signals from the additional data presented in the guide. These analyses should encourage research managers and other decision makers who currently place a high degree of trust in the guide’s ‘4’ ratings to critically re-evaluate their views.

The remainder of the paper is structured as follows. The next section discusses the usage of the ABS guide and questions the degree to which its ratings have been fetishized by some within the academic community. This is followed by a section examining how the ABS guide may be viewed within the framework of trustworthiness. Next is a section in which the quality signals from the ABS ratings are compared to those from the additional metrics provided in the third edition (2009) and fourth edition (2010) of the ABS guide. The last section, prior to the conclusion, discusses several additional issues: the use of the ABS guide in structuring research assessment submissions and the need for additional research into how lists are influencing resources and career paths within the business school community.

Measuring research quality and fetishizing journal lists

The impact of journal quality guides

Concerns regarding the potentially damaging consequences of using journal quality guides have been raised by a number of business-related academics in recent years (e.g. Adler and Harzing, 2009; Giacalone, 2009; Hoepner and Unerman, 2012; Hussain, 2010, 2011; Macdonald and Kam, 2011; Mingers and Willmott, 2010; Nkomo, 2009; Özbilgin, 2009; Rafols et al., 2012; Willmott, 2011). These concerns range from the stifling of new journals and research methods, to the domination of powerful cliques among editorships of well-established journals who act as gatekeepers and whose power is enhanced by rating lists. Willmott (2011) characterizes the current obsession with journal rankings as ‘journal list fetishism’. His paper focuses primarily on the ABS guide and draws attention to the potentially damaging consequences of its over-zealous use by research managers.

The ABS guide is currently in its fourth edition and is edited by Harvey et al. (2010).

Journals are rated 1 to 4, with a ‘4’ indicating the top journals in their field. The fetishizing of the ABS guide’s ‘4’ rating can be observed among both research staff and research managers. Both groups imbue the ‘sign of 4’ with almost magical properties, such that researchers are prepared to twist and contort their work into an appropriate format for ‘4’ rated journals, even if they do not believe that this is the best way to present the research, while research deans view such publications as a powerful indicator for assessing promotions, tenure and remuneration. The impact of the ABS guide on UK academics in both pre-1992 and post-1992 universities is well illustrated via extensive quotes in the recent survey by Nedeva et al. (2012). These reiterate Willmott’s (2011) concern that the guide has distorted the publication patterns of UK academics. The following quotes are extracted directly from Nedeva et al. (2012: 349–350): [I] feel I have to try and get things in higher ranked journals even when I don’t think they’re the best ones for the topics. The ABS booklet is infuriating! Several excellent journals in my field are unlisted, while others are given absurdly low ranks … I feel I have to follow the ABS rankings otherwise I won’t get research support.

Those publishing in unlisted or poorly rated journals are likely to find themselves under significant pressure either to change their target journals (as indicated in the quotes above) or to accept cuts in their personal research allowances even if they are generating a notable volume of research. Direct evidence on this point is given in a personal account by Sangster (2011: 576), who has held various chairs in accounting since the 1990s: I was omitted from a list of possible and probable entrants to the UK’s 2014 Research Excellence Framework (REF) exercise … The reason for that omission was that none of the outlets for my research was rated above 2 in the ABS Journal Quality Guide … At a meeting with departmental staff, it was announced that my colleagues could continue to develop as researchers but that they would not be entered in the 2014 REF unless they published in journals ranked at levels 3 or 4 in the ABS Guide. My research team disintegrated immediately … .

This statement will resonate with many UK academics whose research managers rely primarily or solely on the ABS guide in making crucial decisions regarding individual academics’ inclusion or exclusion from research assessment exercises, the appointment and promotion of staff and the redefining of some staff as ‘teaching only’. Surveying practices across six UK business schools (Nottingham, Bangor, University of the West of England, Swansea, Oxford and Warwick) Nedeva et al. (2012: 348–349) report that: Hiring (including probation procedures), promotion and the distribution of resources constitute three core institutional practices. Our study indicates that the ABS list of journals is used by business and management schools to inform decisions regarding all three of these.

They note that while Oxford’s Saïd Business School used a range of lists and benchmarking tools (unspecified), business schools at Nottingham, Bangor, University of the West of England, Swansea and Warwick relied on the ABS guide to inform tenure, promotion and research allocation decisions.

Is there a Platonic ideal?

Underpinning much of the rationale for the existence of journal ranking lists is the belief that there is some Platonic ideal of research quality that the lists are attempting to measure. For those who follow this belief, measuring this Platonic ideal is the Holy Grail to which their lists and metrics aspire.

An obvious limitation to this belief, of course, is that within the social sciences academics use a range of different paradigms to frame their research papers. While in the physical sciences research tends to be conducted under the prevailing accepted paradigm until it is replaced by some new paradigm, within the social sciences a wide range of paradigms co-exist within the academic literature. It should be noted that even in the physical sciences paradigm shifts do not always occur as a clean break and so rival views can co-exist over periods of time (Galison, 1999; Galison and Stump, 1996). However, the co-existence of many paradigms is clearly a greater issue in the social sciences, including business disciplines. Since the language of one paradigm may not translate easily into the language of another, and rational evaluation may be near impossible, these competing paradigms may be viewed as incommensurable (Kuhn, 1996). To what extent can it be appropriate to attempt a comparison of research quality across such a diverse set of subjects and methodologies as are housed within business schools?

A second problem is that research, particularly within business disciplines, has many characteristics—how should these be weighted? As Milne (2000, 2002) points out, research quality is multi-dimensional in its characteristics and so any attempt to summarize these characteristics into a single figure will inevitably be excessively simplistic. Even if all academics shared the same paradigm, it would have to be acknowledged that research papers are likely to vary across characteristics such as the impact on business practice, the development of theory and methodology, the impact on policy decisions, the impact on teaching and learning design, etc. Since each paper would have a distinctive profile across these characteristics (even assuming that such characteristics could be unambiguously measured) such variations cannot be reflected meaningfully in a single metric.

Another limitation to the use of journal quality guides is that the quality of individual articles (however measured) can deviate notably from the perceived quality of the journals in which they appear. Evidence of this comes from a number of studies that examine citation counts, although it should be noted that the use of citation counts as a measure of quality has also received much criticism. Examining the citation data for articles published in three biochemical journals during the 1980s, Seglen (1997) shows that journal impact factors published in the SCI Journal Citation Reports correlate poorly with actual citations for individual articles, and that journal impact factors are often skewed by a small number of highly cited articles. Similar results have been found within the social sciences. For example, Oswald (2007) conducts a survey on the citations of papers published in six differently ranked economics journals. Oswald (2007: 25) reports that the most heavily cited papers in middle-ranked journals are cited far more often than the least cited papers in the elite journals.

Further concerns are raised by Baum (2011), who finds that within the field of organizational studies, journals with high citation-based impact factors often obtain their high impact factors on the back of a small number of highly cited papers, so once again there is evidence that impact factors are unlikely to be representative of the citations of most articles within a ‘top’ journal. Macdonald and Kam (2011) delve further into this issue to look at those who publish in the top management journals and find that this ‘skewed few’ tend to be the same ‘few’, and that self-citation and mutual citation are widespread.
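The arithmetic behind this skew can be illustrated with a minimal sketch. The citation counts below are invented purely for illustration and are not taken from Seglen (1997), Oswald (2007) or Baum (2011). The simple mean over one set of articles is used here only as a rough stand-in for a citation-based impact factor, which in practice is calculated over specific publication and citation windows.

```python
from statistics import mean, median

# Hypothetical citation counts for ten articles in a single journal.
# These figures are invented for illustration only.
citations = [0, 1, 1, 2, 2, 3, 3, 4, 60, 124]

# Simplified stand-in for an impact factor: the mean citations per article.
journal_mean = mean(citations)

# The median describes the citations received by the "typical" article.
typical = median(citations)

# Count how many articles are cited less often than the journal-level mean.
below_mean = sum(c < journal_mean for c in citations)

print(f"mean = {journal_mean}, median = {typical}")
print(f"{below_mean} of {len(citations)} articles fall below the journal mean")
```

In this invented case two heavily cited articles lift the journal-level mean well above the median, so the journal-level figure says little about most of the individual articles, which is the pattern the studies above describe.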

It must be acknowledged here that the ABS guide does not rely solely on citation-based impact factors in determining journal quality. Nevertheless, all four editions of the ABS guide (2007–2010) include citation-based metrics alongside the ABS ratings. Indeed, in the introduction to the fourth edition of the ABS guide (Harvey et al., 2010: 1–15) the editors state that ‘4’ rated journals ‘generally have the highest citation impact factors within their field’ while ‘3’ rated journals ‘generally have fair to good citation impact factors relative to others in their field’. The editors then go on to state that: ‘The editors … believe in principle that all higher graded journals—3 and 4—should carry a citation impact factor’. So clearly citation data are viewed as being important by the guide’s editors.

Journal quality guides and fetishism

Despite the problems of quantifying research quality, research managers and deans are still keen to employ journal quality lists to assess staff and inform resource allocation decisions. Of particular concern among many research deans in elite institutions is the identification of ‘top international’ research, which is signalled by a journal’s ‘4’ rating on the ABS Guide. Willmott (2011) comments specifically on this issue: Lists become fetishized when the publication outlet, the fetish object, assumes an importance greater than the substantive content and contribution of the scholarship. In common with other fetishisms, an awesome power is attributed to an object … For the scholar, the fantasy object is the top journal ‘hit’ whose attainment affirms an imagined scholarly virtuosity. (Willmott, 2011: 430)

Willmott (2011: 438) draws a parallel with the use of a ligature applied to the throat in asphyxiation-based acts of autoeroticism. He suggests that the degree of risk, coupled with acts of ‘self-torture’ in the tantalising anticipation of a satisfying pay-off may be likened to the self-imposed struggle of wrestling with a manuscript to render it compliant with the form of scholarship required by a targeted top-tier (‘4’ rated) journal. In this case the anticipated pay-off is the top-tier journal ‘hit’. He extends the metaphor by suggesting that in the same way that a ligature restricts blood flow, so the journal list restricts the type of research that is viewed as top-tier.

Willmott (2011: 438) acknowledges that some readers may consider this metaphor to be somewhat far-fetched, but his use of the word ‘fetish’, viewed within an anthropological setting as referring to ‘an object that is believed to have magical powers and thus attracts excessive and irrational investments’ (Böhm and Batta, 2010: 348), is one that many readers will recognize in relation to the ABS guide and ‘4’ ratings in particular. The subject in this setting is the individual who has fallen under the spell of the journal list. For example, the ABS guide has created a subset of ‘star’ academics whose research papers appear in journals carrying the magical ‘4’ rating. For some of these academics, their perceptions of themselves have become highly intertwined with the ABS guide. In their survey of UK academics Nedeva et al. (2012: 351) identify one senior academic whom they describe in the following terms: [He saw] his whole identity as defined by the ABS Guide, his soul measured by it. He has, in a Foucauldian sense, enfolded himself in the discourse of journal rankings and they define him.

To these individuals the ABS guide provides a quantification of their top-tier research status, by which they define themselves. Ten years ago such individuals could say ‘I’m a leading figure in my field’, but this might not have meant much to academics from other subject groups, or even to those within their own subject area if it was broad. Now they can say ‘I’m a four star professor’ and immediately gain the respect of like-minded individuals within UK business schools, including many deans and research managers. Of course, not all professors who publish in top rated journals will see their identity wrapped up in ABS ratings or need the ABS ratings to provide a sense of validation for what Willmott (2011: 430) refers to as ‘imagined scholarly virtuosity’, but those who do are most likely to be susceptible to, and to perpetuate the phenomenon of, journal list fetishism. Such individuals are also likely to be reluctant to accept critiques of the ABS guide since the guide’s ratings are vital to how they see themselves.

Trust and journal ranking lists

A framework for analysing ‘trust’

The current paper argues that the phenomenon of journal list fetishism is due in part to the perception of trustworthiness encouraged by the ABS guide. If trust in a guide’s ratings is very weak among the academic community then the fetish power of the guide will likely be limited. There would be less satisfaction from a top-tier journal ‘hit’ if there is widespread scepticism about the top-tier ranking of a particular journal. To extend Willmott’s (2011) asphyxiation-based metaphor, the weaker the trust in a journal quality guide the weaker the effectiveness of the ligature and the resulting gratification from a ‘hit’.

If trust plays a role as an antecedent to the journal list fetishism identified by Willmott (2011), how may it have developed? Mayer et al. (1995: 712) define trust as: … the willingness of a party to be vulnerable to the actions of another party based on the expectation that the other will perform a particular action important to the trustor, irrespective of the ability to monitor or control that other party.

Given the degree to which research, promotion and tenure decisions are currently being made solely or primarily on the basis of ABS guide ratings, the above statement appears a reasonable description of how universities are willing to make themselves vulnerable to the judgements of the ABS editorial panel. In the build-up to the UK’s research assessment in 2014, known as the Research Excellence Framework (REF), universities are investing vast amounts of money to buy in ‘four star’ professors. To the extent that the cost of these investments is known and considerable in financial terms, and that the resulting REF outcomes are unknowable at the time the investment is made, universities are making themselves vulnerable to the judgements of the guide.

How can we begin to formally assess the role of trust with regard to the widespread adoption of the ABS guide? Free (2008: 630) notes that there is inadequate understanding of the antecedents of trust with regard to the organizational context. An important contention of his thesis is that: … a complex phenomenon like trust cannot be universally defined to suit any theoretical purpose. Rather, definitions should be elaborated to fit specific research issues and study aims … (Free, 2008: 630)

However, he proposes that investigations of trust should consider two broad dimensions: personal/interpersonal forms of trust (Mayer et al., 1995) and systems trust (Giddens, 1990, 1991). This framework is employed by Jeacle and Carter (2011) to examine the rating and ranking of hotels by visitors to the TripAdvisor website. Clearly the ranking of hotels by anonymous private citizens is not identical to the ranking of journals by the expert advisors and editors of the ABS guide. However, both Jeacle and Carter (2011) and the current paper are concerned with analysing the trust of users in a process by which individual opinions are converted into quality ratings for one set of organizations (hotels/academic journals) by another organization (TripAdvisor/ABS guide).

Drawing upon the suggestions of Free (2008), Jeacle and Carter (2011) identify three major antecedents to personal trust that can be applied to explain the trust of external users in the hotel ratings reported by TripAdvisor. These are labelled ‘ability’, ‘benevolence’ and ‘integrity’.

Ability is described as ‘skills, competencies, and characteristics that enable a party to have influence within some specific domain’ (Mayer et al., 1995: 717). The importance of domain-specificity is also noted by Free (2008: 295).

Benevolence, within the context of trustworthiness, is the belief of the trustor that the trustee wishes to help, aside from any opportunistic profit or ego-driven motive (Jeacle and Carter, 2011; Mayer et al., 1995).

Integrity is based on the understanding that the trustee is adhering to a set of values or principles with which the trustor concurs (Mayer et al., 1995: 718).

Free (2008: 633) acknowledges that within many real-world scenarios these three factors are likely to be interrelated and points to Tomkins (2001) as evidence that benevolence ‘takes more time to emerge’ and so ability and integrity may play a greater role in establishing trustworthiness during the initial period of a relationship between trustor and trustee.

While these three factors are important antecedents for establishing trustworthiness, there is also a role for systems-related trust, as described by Giddens (1990, 1991). The two factors highlighted by Free (2008) and Jeacle and Carter (2011) are:

Expert systems and calculative regimes, in which there is a formalized system for processing opinions, often involving quantified data. In the modern world quantification is often seen as a method to give conclusions a more scientific and objective look, enhancing trustworthiness (Free, 2008: 633).

Symbolic tokens, which have a standard value across locations and time, thus allowing users (trustors) to make informed judgements. Jeacle and Carter (2011) look at the ‘1’ to ‘5’ ratings given to hotels on the TripAdvisor web site, which are similar in concept to the ‘1’ to ‘4’ quality ratings given to journals in the ABS guide.

The next step is to look at the ABS guide in the light of these antecedents to trustworthiness.

Analysing trustworthiness: the case of the ABS guide

The perception of benevolence is encouraged by the ABS guide’s editors in the introduction to the 2010 edition of the guide, in which they state that the guide is ‘primarily to serve the needs of the UK business and management research community’ (Harvey et al., 2010: 1). This kind of statement, which uses words like ‘serve’ rather than ‘instruct’, helps foster a belief that the guide has been constructed free of any egocentric profit motive and so enhances the perception of trustworthiness. The imprimatur of the ABS logo reinforces this belief and lends the guide a type of subject-wide authority that it would lack if it had been published under the names of individual academics.

Since its first edition, the ABS guide has emphasized its reliance on domain specialists in the determination of journal ratings, and has stressed that these expert opinions are part of an expert system designed to minimize biases and ensure an appropriate representation of all major subsets within the domain of business-related academic journals. The process by which journal ratings are obtained encourages positive perceptions of both ability and integrity in the minds of trustors.

In their Academic Journal Quality Guide: Context, Purpose and Methodology Harvey et al. (2007) explain the process by which they established ratings in the first version of the ABS guide, which appeared in 2007. They note that the process was multi-stage and involved obtaining expert assessments from domain specialists, examining broader contextual factors (e.g. journal links with research associations, the standing of a journal’s editorial board members, the journal’s track record) together with citation indices and the ‘blind testing’ of ratings using three or four reviewers from different universities.

In the introduction to the 2010 edition of the ABS Academic Journal Quality Guide (Harvey et al., 2010: 1–15) the editors make reference to their examination of a new set of information sources: grade point averages estimated from the outcomes of the UK’s Research Assessment Exercise (RAE) in 2008, a range of citation-based impact measures, plus ratings on ten other international journal quality lists. These data, or summary statistics of these data, are now presented in the 2010 edition of the ABS guide.

[These data were] … considered by the ABS Guide’s advisory committee, often with the benefit of external comment and feedback from scholarly associations in particular specialist areas. Running alongside these formal methods of consultation, presentations outlining the methods employed in the compilation of the ABS Guide were made at several Business and Management Studies conferences and at 10 university business school seminars. Feedback was sought from the audiences at these events and was fed back into the development process for the ABS Guide. (Morris et al., 2011: 566)

The role of expert domain specialists in the grading process strengthens the perception of ability. With regard to integrity, the apparent collegial nature of the process suggests that the principles and values underpinning the ABS ratings are commonly understood and widely accepted.

The degree to which the ABS guide’s ratings are driven by expert opinions analysed within an expert system and calculative regime is further emphasized by the extensive presentation of quantitative metrics for journal quality alongside their own rating for each journal. The first three editions of the ABS guide (2007–2009) included journal ratings from six UK business schools: Warwick, Imperial College, Cranfield, Kent, Aston and Durham. In addition to these, three different citation metrics were also included. The fourth edition of the ABS guide appeared in 2010 and has replaced the six individual schools’ ratings with a range of new metrics: RAE2008 submission figures for each journal, together with an estimated grade point average (GPA) based on RAE2008 outcomes; a ‘World elite count’ metric indicating how frequently a journal is rated top-tier across a range of international journal quality guides; and four citation-based impact metrics, standardized by subject field.

The presentation of such a large amount of quantitative data gives the impression of a highly scientific, quantitative and ‘objective’ process underpinning the ABS’s own rating for each journal. The ‘fetish of calculation’ within organizations has long been recognized (see Bloomfield, 1991). Free (2008) notes how quantification of particular characteristics can be used to enhance trustworthiness: Giddens (1991: 89–90) acknowledges lay persons’ respect for science and technical specialism. Of particular relevance here is the way that calculation is widely seen as desirable in social and economic interaction. In his engaging attempt to account for the prestige and power of quantification in the modern world, Porter (1995) characterizes calculation as a project to standardize reasoning and present impersonal standards of ‘objectivity’. According to Porter, quantification is primarily a technology of distance and a means by which conclusions can be rendered more ‘objective’ and ‘trustworthy’. (Free, 2008: 633, emphasis added)

The provision of a plethora of additional metrics within the various editions of the ABS guide is likely to help foster a sense of objective trustworthiness.

Although this paper draws on a framework used by Jeacle and Carter (2011), which in turn draws on the work of Free (2008) and Giddens (1991), it is important to note that their study focused on the power of numbers to lay people (i.e. members of the public visiting the TripAdvisor website), not business school academics. It could be argued that academics are better informed about the potential misuse of numbers than lay people. Nevertheless, there are at least two reasons why the existing framework is still likely to be relevant to academics. Firstly, while many of us may have enough subject-specific expertise to question a guide’s ratings within our own subject field, this is unlikely to be the case when examining ratings within other subject areas or between subject areas. Would someone specializing in econometric theory really trust their own judgement in ranking journals within human resources or in comparing human resource journals to those in tourism and hospitality? This uncertainty means that academics will often refer back to the ABS guide when assessing academics from outside their own subject field, in a manner not much different from how members of the public use the hotel ratings on TripAdvisor’s website.

Secondly, the level of quantitative skills possessed by individuals varies considerably within the UK business school community, and even among those who possess such skills there is a surprising lack of familiarity with the additional metrics that are provided within the ABS guide. So even though they have the intellectual skills to critique any possible misuse of numbers, without having acquired any knowledge about the ABS guide’s additional metrics they effectively have no more insight than colleagues with no quantitative skills. Hence, the relationships between the public and its use of TripAdvisor’s ratings, and academics and their use of the ABS guide, can be seen in a similar light.

Does usage of the guide indicate trust or merely ease-of-use?

The question of whether the ABS guide is ‘trusted’ or merely ‘used’ by research managers is clearly of relevance to the current paper. The guide’s ease-of-use and the transparency of its usage for promotion decisions, etc. (e.g. setting grade point average targets for staff) make it an attractive tool for decision-making. Within large institutions and organizations, managers often rely on metrics to assess performance. This is particularly the case where managers may not be experts in the specific fields or activities of individual employees. This scenario has increasingly become the norm in many UK universities during the last two decades with the incorporation of small, relatively autonomous subject departments into large business schools (e.g. Khalifa and Quattrone, 2008: 70). Journal lists such as the ABS guide offer research managers a simple solution to the problem of assessing and ranking a large number of heterogeneous research outputs (see Jain and Golosinski, 2009; Worrell, 2009).

Ease-of-use may be a significant contributory factor in the uptake of the guide but it is unlikely to be a sufficient condition. It should be remembered that many business school deans are under pressure to improve research quality. It is therefore unlikely that a guide would be adopted if deans had no trust in its judgements. This is not to say that their trust in the guide is absolute, merely that trust is likely to play a role in explaining its uptake. Certainly there is anecdotal evidence to support the view that some major decision-makers respect the authority of the guide’s judgements. In their survey of UK business schools, Nedeva et al. (2012: 344–345) quote extensively from comments made by the then dean of Warwick Business School at the time of the ABS guide’s launch in 2007, in which the guide is described as ‘comprehensive’ and ‘authoritative’. In addition, it is certainly the opinion of the ABS editors themselves that trust plays a role in the usage of their guide, as evidenced by their comments in the introduction to the fourth edition of the guide referring to ‘those who trust the ABS Guide in making often otherwise extremely difficult judgements about research quality’ (Harvey et al., 2010: 11–12).

The next section presents empirical evidence that questions the current fetishizing of the ABS guide’s ‘4’ rating. The analysis is based on a range of additional data that the ABS guide’s editors have included in the third and fourth editions of the guide, and the potential anomalies that it reveals may not be obvious to a casual reader of the guide.

Trust in numbers: evidence from the ABS guide

How data are presented in the ABS guide

To those who believe that it is meaningless to try to assess the quality of individual research by reference to citations and other quality metrics assigned to particular journals, the ABS guide serves no useful purpose. However, it is clear that many research managers are actively employing the guide to assess research quality in this manner. This section of the paper may be of special interest to them. The intent here is to question the degree of trust that they appear to have in the ABS guide’s ratings and to make them think carefully about the potential injustices and damaging consequences of a heavy-handed use of the guide in determining individuals’ workloads and career paths.

Both the third edition (Kelly et al., 2009b) and fourth edition (Harvey et al., 2010) of the ABS guide present a plethora of additional quality metrics alongside the guide’s own rating for a journal. As has already been mentioned, the 2009 edition of the guide presented individual recommended ratings from six UK business schools. The 2010 edition has replaced these with a range of new metrics including a World elite count that indicates the number of times a journal appears in the top tier of a set of international journal quality guides. 4 These international lists are drawn mostly from Europe and Australia. Only one list derives from the US (the University of Texas Dallas database, which is effectively a list of 24 elite journals rather than a full set of journal ratings), and no lists are drawn from any part of Asia despite the rapid growth of this region as a producer of academic research (Au, 2007).

The 2010 edition of the guide includes a grade point average (GPA) for each journal estimated from the actual RAE2008 ratings for UK business schools and their submissions. In the introduction to the 2010 edition, the editors describe the estimation process used. Weights of 1, 2, 3 and 4 are applied to the proportions of output in each of the four quality categories in RAE2008, with a weighting of 1 (4) allocated to the lowest (highest) quality grading. The mean score for a journal is the mean GPA for outputs awarded to the institutions citing the journal in RAE 2008. This kind of RAE-based estimation is always potentially hazardous and various estimation methods can be employed (see Beattie and Goodacre, 2006; Mingers et al., 2009). Several points are worth noting here. Firstly, the estimation process is complicated by the fact that not all articles in a particular journal are rated identically by the RAE panel (see Ashton et al., 2009). Secondly, non-journal submissions create problems for the estimation process: should all book chapters be treated the same as, say, reports for governmental bodies, for example? These factors introduce errors into the estimation process.
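The weighting scheme described above can be illustrated with a short sketch. It is not the ABS editors’ actual procedure: the function names and the example quality profiles are hypothetical, the four proportions are assumed to sum to one, and the journal score is assumed to be an unweighted mean across the institutions that submitted the journal.

```python
from math import isclose
from statistics import mean

# Weights for the four quality categories, lowest category first
# (1 for the lowest grading, 4 for the highest, as described in the text).
WEIGHTS = (1, 2, 3, 4)


def institution_gpa(proportions):
    """Grade point average for one institution's quality profile.

    `proportions` holds the share of output in each of the four
    categories, lowest first. Assumption: the shares sum to 1.
    """
    if len(proportions) != len(WEIGHTS):
        raise ValueError("expected one proportion per quality category")
    if not isclose(sum(proportions), 1.0, abs_tol=1e-9):
        raise ValueError("proportions are assumed to sum to 1")
    return sum(w * p for w, p in zip(WEIGHTS, proportions))


def journal_gpa(profiles):
    """Estimated GPA for a journal.

    Assumption: an unweighted mean of the GPAs of the institutions
    that submitted outputs from the journal.
    """
    return mean(institution_gpa(p) for p in profiles)


# Hypothetical profiles for two institutions that submitted the same journal.
example_profiles = [
    (0.10, 0.30, 0.40, 0.20),
    (0.00, 0.20, 0.50, 0.30),
]
estimated = journal_gpa(example_profiles)
```

Under these assumptions the journal’s score is inherited from the overall profiles of the submitting institutions, not from the grades awarded to its own articles, which is one way in which the estimation errors noted above can arise.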

The 2010 edition of the ABS guide also includes citation-based measures, standardized by subject field. Quartile scores (1–4) are given for each journal based on its subject-adjusted impact factor. The 2008 impact factor of a journal is the number of current year citations to items published in that journal during the previous two years, divided by the number of citable items published in the journal during those two years. The Five Year impact factor covers the previous five years. In both cases the impact factors are standardized by subject, and are measured in terms of standard deviations from the mean for their subject area. A quartile score of 4 (1) means that a journal is in the upper (lower) quartile on the basis of impact factor. While attempting to standardize citations by subject area is understandable given the different citation rates across different subject areas, a journal’s subject-adjusted impact factor can be severely affected by the subject area to which it has been allocated. This is not always a clear-cut decision. There are many journals that could reasonably be allocated to two or three different ABS subject categories, giving potentially quite different quartile rankings.
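The sensitivity of a subject-adjusted score to subject allocation can be illustrated with a minimal sketch. The impact factor values below are invented for illustration, and the quartile rule (based on the share of journals in the subject with a lower impact factor) is an assumption, since the exact rule applied in the ABS guide is not reproduced here.

```python
from statistics import mean, pstdev


def standardized_if(raw_if, subject_ifs):
    """Impact factor in standard deviations from the subject mean.

    Assumption: the population standard deviation of the subject's
    journals is used as the scaling factor.
    """
    sigma = pstdev(subject_ifs)
    if sigma == 0:
        raise ValueError("subject impact factors show no variation")
    return (raw_if - mean(subject_ifs)) / sigma


def quartile_score(raw_if, subject_ifs):
    """Quartile score from 1 (lower quartile) to 4 (upper quartile).

    Assumption: the score depends on the share of journals in the
    subject area whose impact factor lies below `raw_if`.
    """
    share_below = sum(1 for x in subject_ifs if x < raw_if) / len(subject_ifs)
    return min(4, int(share_below * 4) + 1)


# Hypothetical impact factors for two subject areas; the journal of
# interest (raw impact factor 2.0) could plausibly be placed in either.
low_citation_field = [0.4, 0.6, 0.8, 1.0, 1.2, 1.5, 1.8, 2.0]
high_citation_field = [1.0, 2.0, 2.5, 3.0, 3.5, 4.0, 5.0, 6.0]
journal_if = 2.0

score_low = quartile_score(journal_if, low_citation_field)
score_high = quartile_score(journal_if, high_citation_field)
```

In this invented example the same journal falls in the upper quartile of the low-citation field and the lower quartile of the high-citation field, even though its raw impact factor is unchanged.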

The suggestion in the current paper is that the provision of these additional metrics gives the ABS guide a more scientific presentation and so fosters a perception of trustworthiness through trust in numbers. Is there evidence to support this suggestion? In the 15-page introduction to the fourth edition of the guide (Harvey et al., 2010: 1–15) the editors describe the usefulness of the new additional metrics that had been introduced (e.g. RAE2008 grade point average, World elite count). They also provide some analyses based on these metrics (although not the same analyses provided here, obviously) commenting that: ‘One way of assessing the validity of the ABS scheme is to assess its consistency or reliability in relation to other quality indicators’ (Harvey et al., 2010: 8). Having conducted several analyses comparing their own ratings and the additional metrics, the editors then state in the conclusion: The data analysis presented in this introduction gives support to those who trust the ABS Guide in making often otherwise extremely difficult judgements about research quality across a disparate set of sub-fields within the business school community. (Harvey et al., 2010: 11–12, emphasis added)

This provides strong direct evidence that the guide’s editors view these additional metrics as being useful in their own right and also that they play a role in establishing the guide’s trustworthiness among decision-makers within the business school community.

Of course, if ratings mean different things across different subjects then the role of the ABS guide as a tool for research managers and deans would be seriously compromised and the current fetishizing of the ‘4’ rating would become highly questionable. Some empirical evidence is presented on this issue next.

Fetishizing the ‘sign of 4’: evidence from the ABS guide

In many UK business schools the ABS ‘4’ rating has become the fetishized symbolic token. An important dimension to symbolic tokens is that they are considered to be ‘media of exchange which have standard value, and thus are interchangeable across a plurality of contexts’ (Giddens, 1991: 18). This is one of the most important dimensions to the ABS guide. Users must trust that a ‘4’ rating means the same thing across all subject groups, for example. The ABS guide’s discrete ratings may be likened to the symbolic tokens identified by Jeacle and Carter (2011) in regard to the ‘1’ to ‘5’ ratings given to hotels by TripAdvisor. They quote from Cassell (1993: 29) who points out that the power of symbolic tokens can ‘only operate when agents trust the value of symbolic tokens’. This provides a strong rationale for the ABS editors to establish a high degree of trust in their guide’s ratings.

As has already been noted above, the ABS guide’s editors suggest that examining the quality signals from additional metrics is one way to assess the trustworthiness of the guide’s ratings (Harvey et al., 2010: 8, 11–12). The degree to which disconnections occur between the ABS ratings and the additional metrics that the ABS guide presents can be most readily observed through the RAE2008 estimated grade point average (GPA) and the World elite count measures, both of which are presented in the 2010 edition of the guide. 5 The four citation metrics are standardized by subject field so variations between fields are less easy to identify. The following analysis is illustrative only. It is not intended to prove that one journal or subject area is better than another or to recommend changes in ratings for individual journals. However, it raises some interesting potential anomalies.

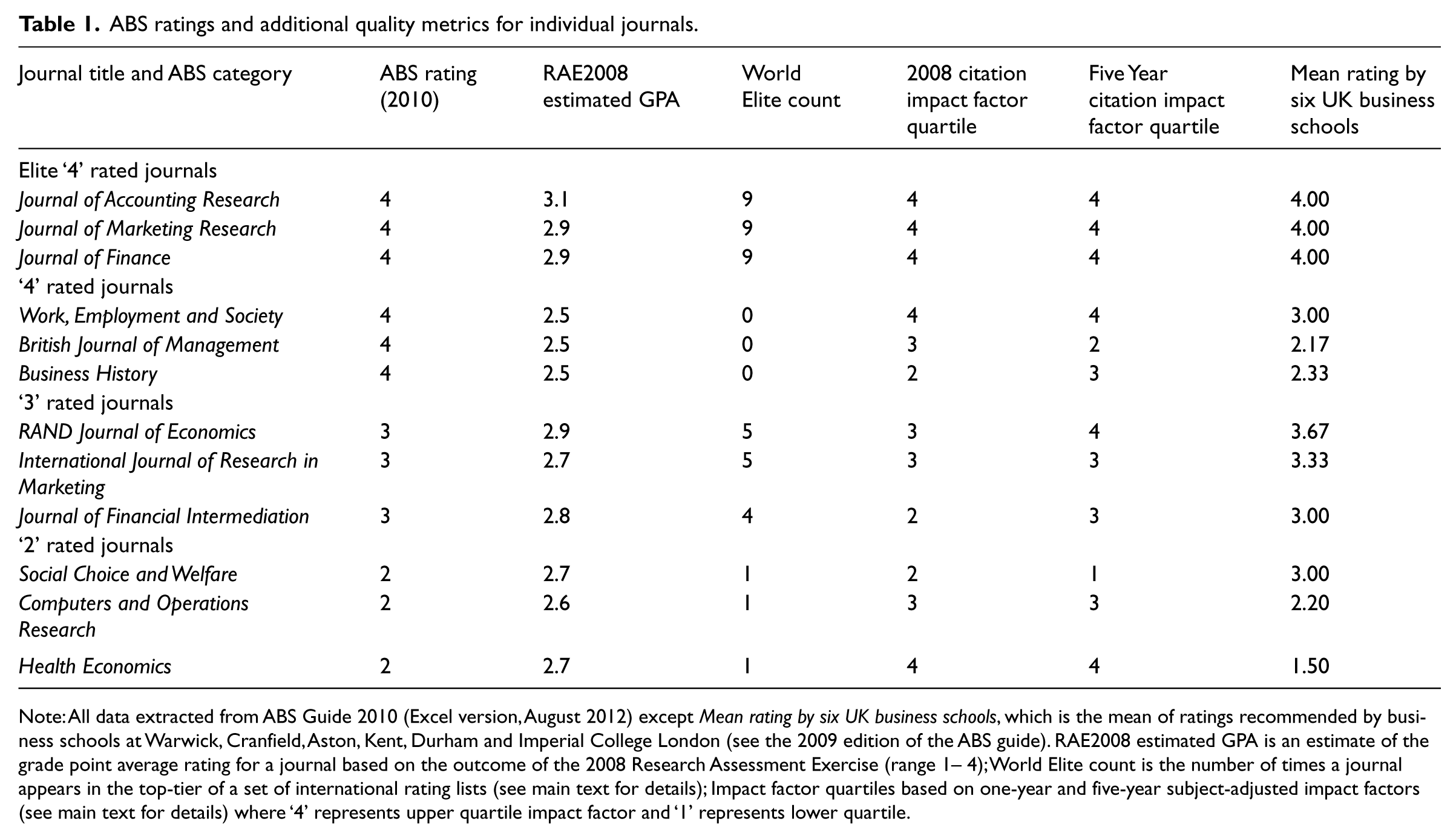

Table 1 focuses on the overlaps in the additional quality metrics between subsets of journals rated from ‘2’ to ‘4’ on the ABS guide.

Table 1. ABS ratings and additional quality metrics for individual journals.

Note: All data extracted from ABS Guide 2010 (Excel version, August 2012) except Mean rating by six UK business schools, which is the mean of ratings recommended by business schools at Warwick, Cranfield, Aston, Kent, Durham and Imperial College London (see the 2009 edition of the ABS guide). RAE2008 estimated GPA is an estimate of the grade point average rating for a journal based on the outcome of the 2008 Research Assessment Exercise (range 1–4); World Elite count is the number of times a journal appears in the top tier of a set of international rating lists (see main text for details); Impact factor quartiles based on one-year and five-year subject-adjusted impact factors (see main text for details) where ‘4’ represents upper quartile impact factor and ‘1’ represents lower quartile.

The first three journals in Table 1 are among the top-performing journals across all subject areas on the basis of the additional metrics in the ABS guide and are included here merely as an upper-bound reference point. These ‘elite’ journals show no notable disconnect between the additional metrics given by the ABS guide and the ABS guide’s ‘4’ rating. This can help promote a certain trust in the ‘ability’ and ‘integrity’ of the ABS guide and its systems for those users who do a quick ‘calibration check’ using a handful of the most well-known academic journals.

However, not all ‘4’ ratings appear to be so well supported by the additional metrics. The superiority of ‘4’ rated journals such as Business History and the British Journal of Management over ‘3’ rated journals like the RAND Journal of Economics seems very difficult to justify on the basis of the additional metrics. More importantly, even some high-performing ‘2’ rated journals appear to perform at a comparable level to the lower-performing ‘4’ rated journals.

This disconnect between the additional metrics presented in the 2010 edition of the ABS guide and the eventual ABS rating for these journals can also be seen in earlier versions of the guide. The third edition of the ABS guide was published in 2009 and presented recommended ratings from six UK business schools. While the RAND Journal of Economics received four ‘4’ recommendations and two ‘3’ recommendations, the British Journal of Management received only one ‘3’ recommendation and five ‘2’ recommendations. Business History fared little better with two ‘3’ recommendations and four ‘2’ recommendations. Table 1 presents the mean score from these six business schools for those cases where a rating was given. 6

The overlap in the values of the additional metrics between some ‘4’ and ‘2’ rated journals is a potentially significant finding given the vast difference in how ‘2’ and ‘4’ outputs are currently viewed by research managers within UK business schools. Many ‘2’ rated journals are specialized and so are unlikely to score highly on citation measures but are highly influential in their specialist fields. Despite this, the draconian consequences for UK academics of publishing in ‘2’ rated journals are reported by Sangster (2011: 576). This is the inevitable result of research managers fetishizing the ABS ratings guide. They are blind to quality signals from any other source in the same way that the fetishist becomes obsessed with the power of the fetishized object alone.

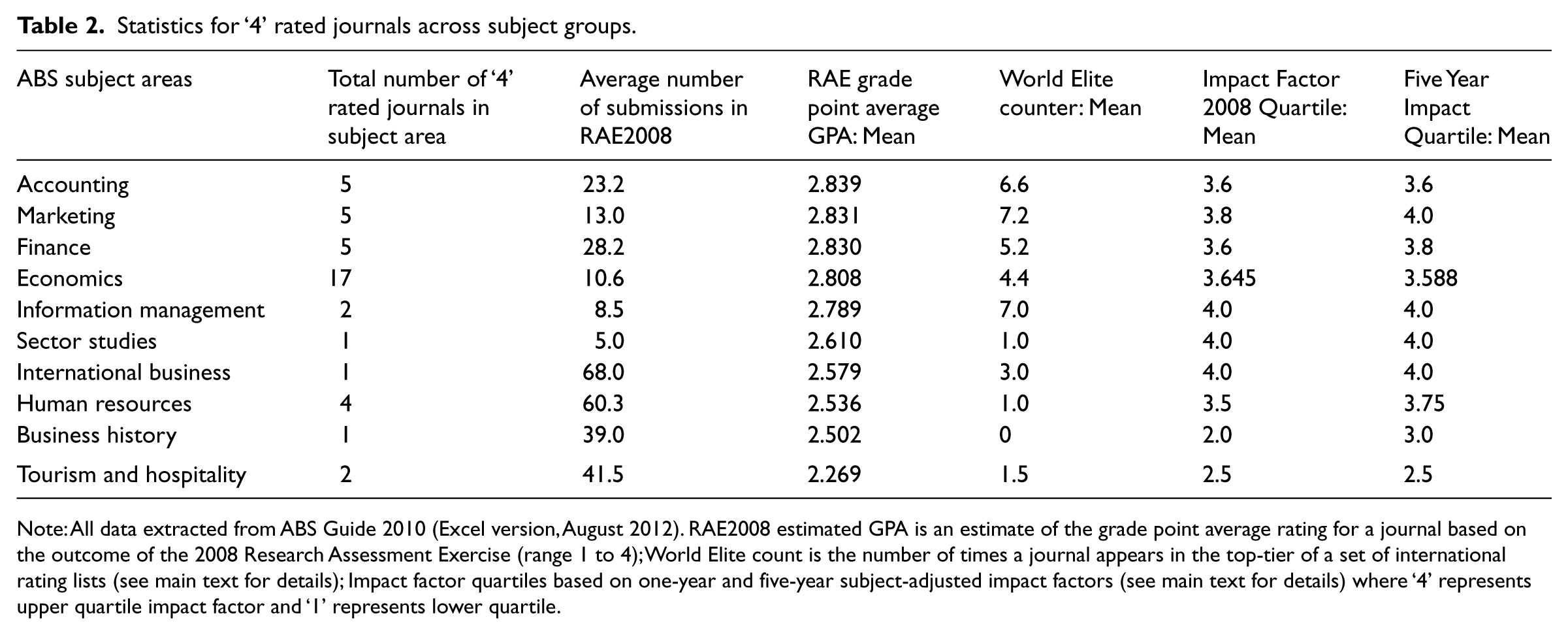

Further concerns regarding the fetishizing of the magical ‘sign of 4’ can be seen in Table 2. At present most research managers treat the ‘4’ rating as a common token of top-quality journals. This is an essential characteristic for any symbolic token: it must mean the same thing across different contexts (Jeacle and Carter, 2011: 301). So can we trust the ‘sign of 4’ as a common measure of quality across all subject groups? This is difficult to prove either way, since there is no universally accepted measure of quality for journals. However, for those who believe in the quantification and measurability of journal quality, the quality signals from the additional metrics presented in the ABS guide should offer food for thought.

Table 2. Statistics for ‘4’ rated journals across subject groups.

Note: All data extracted from ABS Guide 2010 (Excel version, August 2012). RAE2008 estimated GPA is an estimate of the grade point average rating for a journal based on the outcome of the 2008 Research Assessment Exercise (range 1–4); World Elite count is the number of times a journal appears in the top tier of a set of international rating lists (see main text for details); Impact factor quartiles based on one-year and five-year subject-adjusted impact factors (see main text for details) where ‘4’ represents upper quartile impact factor and ‘1’ represents lower quartile.

In Table 2 all ‘4’ rated journals in each subject group are examined on the basis of the mean value for each additional quality metric in the 2010 edition of the ABS guide. Whilst all the constituent journals are ‘4’ rated, the additional quality metrics vary widely by subject group. The average ‘4’ rated journal in subjects like accounting, finance and marketing generates high values across the additional quality metrics and exhibits relatively low numbers of ‘hits’ by UK academics entered for RAE2008. On the other hand, the average ‘4’ rated journal in areas such as tourism and human resources generates much weaker results regarding additional quality metrics and tends to have a much higher number of ‘hits’ by UK-based academics. 7

Users need to trust that ‘4’ symbols mean the same thing across different contexts, i.e. across journals and subjects. At the very least, the results here raise concerns regarding this point for those who believe that research quality can be effectively represented by a single discrete metric. Is the presentation of so many different quality metrics in the ABS guide designed to exploit ‘the prestige and power of quantification in the modern world’ (Free, 2008: 633) in an attempt to foster the illusion of ‘objectivity’ and so enhance perceived ‘trustworthiness’?

Of course, even if faced with objective evidence that challenges a belief system it is in the nature of fetish worshipers to find some way of denying the evidence. This is likely to be particularly strong in the case of the ‘four star professor’ whose whole identity and self-image are intertwined with the ABS ratings. Cluley (2011: 787) comments on this phenomenon more generally: Žižek (2009: 61) claims that ‘what fetishism gives body to is precisely my disavowal of knowledge, my refusal to subjectively assume what I know’. He (2009: 65) explains that ‘the fetish is the embodiment of the Lie which enables us to sustain the unbearable truth’. Here, British psychotherapist Adam Phillips (1993: 93) confirms that: ‘Just as it is the sign of a good theory that it can be used to support contradictory positions, it is the sign of a good fetish that it keeps incompatible ideas alive’.

Unfortunately, the kind of ‘four star professor’ referred to by Nedeva et al. (2012: 351) is often likely to be an influential individual within his/her school. This suggests that there could be significant inertia with regard to any attempts to move away from the current usage of journal ranking guides for decision-making purposes.

Clearly the business school community includes a heterogeneous set of opinions. Within any community or society it is possible for a belief system to be dominant without every member being absolutely convinced by that belief system. All that is required is for a sufficiently large or influential proportion of the community to have a sufficient level of trust or belief in order for that system to be the dominant source of authority. The increasing tide of articles criticizing the use of journal ranking guides is evidence of critical opinion within the business school community but the current widespread and mechanistic use of such guides by many decision makers should be a matter for concern to all members of the community.

Additional issues and limitations

The ABS guide and research assessments

Even research managers who acknowledge that the ABS guide may sometimes ‘get it wrong’ for individual journals seem to believe that the ABS guide will ‘get it right’ on average. Indeed, one of the major selling points of the ABS guide is its suggested ability to explain outcomes from the previous Research Assessment Exercise (RAE 2008) even though research panels have stated explicitly that they will not be using formal rating lists in the assessment of the next exercise, REF 2014. So what is the evidence on predictive ability for the ABS guide?

Taylor (2011) presents plots of actual RAE2008 outcomes versus ABS-predicted performance for both Business and Management Studies (BMS) and Economics and Econometrics (E&E) and reveals some significant deviations between predictions and realizations even among well-established universities: [For BMS] we see that Durham and Queen Mary have substantially higher predicted research output scores than Leicester or Warwick even though their actual (RAE-determined) scores are very similar … Even more striking contrasts are evident for E&E departments … While the (RAE-determined) research output scores for Manchester and Exeter are very similar, for example, Exeter has a far higher predicted score.

Nevertheless, the ABS guide ratings appear to possess some significant explanatory power for RAE2008 outcomes (Kelly et al., 2009a; Taylor, 2011). However, journal ratings from various guides tend to be positively correlated. For example, Harvey et al. (2008: 10) show strong positive rank correlations between the ABS ratings and those for individual university lists. Thus, it is likely that many other rating guides will also possess significant explanatory power even though they may have very different ratings for certain subsets of business journals. 8
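The point that two guides can be positively rank-correlated overall while still disagreeing sharply on individual journals can be illustrated with a small sketch. The two rating lists below are invented and do not come from any actual guide; the correlation is a Spearman coefficient computed as a Pearson correlation on tie-averaged ranks, which is one common way of handling the many tied ratings that a four-point scale produces.

```python
from math import sqrt


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1  # mean of positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def spearman(x, y):
    """Spearman rank correlation with tie-averaged ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical ratings for the same ten journals in two guides. The guides
# agree on the first eight journals and disagree by two grades on the last two.
guide_a = [4, 4, 3, 3, 2, 2, 1, 1, 2, 4]
guide_b = [4, 4, 3, 3, 2, 2, 1, 1, 4, 2]
rho = spearman(guide_a, guide_b)
```

In this invented example the coefficient remains clearly positive even though two of the ten journals move between a ‘2’ and a ‘4’, the difference that matters most to the individual researchers concerned.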

It is possible for a journal quality guide to generate a relatively high measure of explanatory power for RAE outcomes across a large sample of journals but still have significant errors in specialist niche journals that may not appear frequently in RAE submissions. Since most academics publish in only a small number of journals most of the time, usually reflecting their subject specialism, negative ratings bias for a number of specialisms within subject areas is likely to have a devastating impact on individual researchers. Are these individuals, whose research careers are unjustly ended, to be considered nothing more than an acceptable level of ‘collateral damage’? Willmott (2011: 431) identifies the areas of sustainability (Wells, 2010), business communication (Rentz, 2009), tourism (Hall, 2011) and feminist multidisciplinary research (Meriläinen et al., 2008) as having suffered in this manner. Recent empirical evidence has also been presented with regard to the treatment of accounting history journals relative to business history journals (Hoepner and Unerman, 2012), and multidisciplinary journals relative to single-subject journals (Rafols et al., 2012).

Future research: a case for UK surveys

While this study presents an argument based on the observable characteristics of the ABS guide and what we know about trust and quantification, a limitation is that it does not involve any survey of the usage of the ABS guide across UK business schools or the views of staff within these institutions. For most of us the growing power of the ABS guide as a tool for research managers has been a matter of common observation across recent years. Most research-active staff within UK schools will now be familiar with the ABS guide’s ratings for the research outlets that they target, a fact demonstrated in the recent UK survey by Nedeva et al. (2012). Indeed the ABS editors themselves have noted that their guide ‘has been widely adopted as a policy tool in UK business schools’ (Kelly et al., 2009a: 2) and that the wide-reaching aims of the ABS guide include the shaping of publication patterns.

Given the extensive usage of the ABS guide across many UK institutions it would clearly be beneficial for further in-depth surveys to be conducted, building on the work of Nedeva et al. (2012) and also providing empirical evidence on some of the issues raised in this paper. Questions could attempt to assess the degree to which the widespread adoption of the ABS guide by research managers and deans reflects different antecedents of trust and the degree to which ease-of-use has played a role in the guide’s uptake.

On a practical note it is worth pointing out that the ABS guide is not free of typographical errors. One of the most glaring, which was quickly acknowledged and corrected by the ABS editors, was the sudden reclassification and demotion of the Journal of Accounting and Public Policy from a ‘3’ to a ‘1’. This was rectified but what about smaller changes for other journals between the 2009 and 2010 editions (e.g. movement of Abacus from ‘2’ to ‘3’ and of Social Choice and Welfare from ‘3’ to ‘2’): are these all intentional or are some of these typos too? A similar point can be made with regard to the downloadable Microsoft Excel® versions of the 2010 edition of the ABS guide. The data for the RAE2008 estimated GPA in the initial (May 2010) version available from the ABS website are different from those presented in a more recently (August 2012) downloaded version, even though both relate to the 2010 edition of the ABS guide. 9 Similar issues afflict the impact factor quartile metrics. A survey could assess the degree to which users of the guide are aware of these changes and how they respond to them.

Conclusion

This paper is concerned with the fetishizing of the ABS journal quality guide within the UK business school community and the role that trust may play in establishing and reaffirming this fetish. The first part of the analysis illustrates how particular characteristics of the guide may help to generate a perception of trustworthiness with reference to specific antecedents of trust identified by Free (2008) and Jeacle and Carter (2011). The second part of the analysis examines empirical evidence relating to specific ratings for journals and subject fields. Of particular concern is the degree to which the guide’s ‘4’ ratings are a common token of top international quality across journals and subject groups.

Those who believe that attempts to measure and quantify the quality of research are inherently unwise or meaningless will not need any convincing as to the inappropriateness of using journal ranking guides to assess research quality. However, for those who believe in the measurability of research quality through the use of metrics, the issues raised in this paper should act as a wake-up call against placing blind trust in the use of any single quality metric as a tool for managing research. As has already been pointed out in a number of recent studies (e.g. Hussain, 2010, 2011; Nedeva et al., 2012; Rafols et al., 2012; Sangster, 2011) the rigid application of the ABS guide by deans and research managers has the potential to kill off many new research areas, multidisciplinary methodologies and specialist research fields. This would be a manifestation of the ‘perversion of scholarship’ referred to by Willmott (2011: 429) and could damage the long term growth and enrichment of the academic environment for a generation. When it comes to assessing the quality of academic research there is no such thing as ‘the ABSolute truth’.

Author biography

Simon Hussain is presently Reader in Accounting at Newcastle University. Previous work on the assessment of research quality within UK business schools has appeared in British Accounting Review and Accounting Education: An International Journal. Address: Newcastle University Business School, 5 Barrack Road, Newcastle upon Tyne, NE1 4SE, UK.