Abstract

Artificial intelligence is transforming literature review in management learning, offering new opportunities for knowledge synthesis while raising epistemological and methodological challenges. This study categorizes artificial intelligence into predefined-function and machine-learning artificial intelligence and compares their strengths and weaknesses in literature review processes and quality to draw insights for management learning. Artificial intelligence can improve literature review quality in multiple ways, although it also poses risks. We identify the main artificial intelligence limitations in critical thinking and domain knowledge, which are unique human capabilities. Thus, we reconceptualize and argue for human–artificial intelligence collaboration based on artificial intelligence literacy and literature review purposes for management learning. We emphasize the importance of critical inspection of artificial intelligence to mitigate overgeneralization and hallucination of knowledge. Artificial intelligence can be an augmentation tool for efficiency and inspiration in management learning. Future research can explore human application processes and contexts, artificial intelligence evolution, and methodological advancements.

Introduction

Literature review (LR) is fundamental to management learning, critical in synthesizing knowledge, fostering innovative thinking, and guiding research development (Kraus et al., 2022; Lim et al., 2022). LR deals with controversy and conceptual complexity as a major contribution (Kunisch et al., 2023; Wright and Michailova, 2022). Beyond compiling existing studies, LR manifests how individuals construct, interpret, and apply knowledge (Chia and Holt, 2008; Chiva and Alegre, 2005). The process of conducting LR is not only an academic practice of knowledge synthesis but also an essential component of management learning, affecting how knowledge is created, communicated, and integrated into management practice (Sharma and Bansal, 2020; Wright and Michailova, 2022).

Over the past two decades, LR has evolved significantly in its philosophical foundations, roles and purposes, actionable plans, reporting methods, supporting technologies, and the expectations placed upon it (Alvesson and Sandberg, 2020; Kunisch et al., 2023; Wagner et al., 2022). Among these innovations, artificial intelligence (AI) has emerged as a transformative force, particularly in large-scale text analysis, automated data extraction, and content synthesis (De La Torre-López et al., 2023; Morgan, 2023; Wagner et al., 2022). With the rapid development of machine learning, text mining, and relevant AI technologies, the appropriate application of AI is believed to enhance work effectiveness and strengthen rigorous research (Barros et al., 2023; Inala et al., 2024; Johnson et al., 2021). How AI aids and harms knowledge synthesis and LR writing remains a concern (De La Torre-López et al., 2023; Wagner et al., 2022). A deeper understanding of AI in LR benefits management learning, including expanding the theoretical frameworks on learning and disseminating knowledge, reconceptualizing and improving human-AI collaboration, and paving the way for effective management learning in the future (Barros et al., 2023; Johnson et al., 2021).

Although past studies have mentioned AI in management, most articles examined AI functions instead of critical debates on the AI foundational infrastructure and principles (Antons et al., 2021). The discussion of the AI foundation can supplement past studies on preventing AI risks and maximizing AI function by optimized utilization (De La Torre-López et al., 2023; Wagner et al., 2022). Through engaging in critical debates, people can gain insight into the AI foundation and types to understand how AI can collaborate with humans to synthesize knowledge (Wagner et al., 2022). Discussing the foundational aspects of AI and its relationship with LR allows people to extend theoretical considerations and envision AI’s practical and conceptual influence on management learning, which constitutes our main contribution.

Corresponding to this background, we analyze AI for knowledge synthesis, including LR processes and quality criteria. We prioritize the AI foundation with common AI tools over specific AI algorithms and experimental AI tools, emphasizing the principles of AI application in LR. This approach aims to contribute to management learning by enabling readers to apply AI based on an understanding of the AI foundation. Moreover, we explore the unique strengths and weaknesses of humans and AI. We reconceptualize human-AI collaboration in LR by discovering why and how AI can assist or hinder researchers doing LR and considering its implications for management learning.

Our conceptual article is based on management and technology literature. We apply AI to search for relevant articles across disciplines and to generate alternatives and counterarguments for us to reflect on. We also apply AI to check spelling (details in Supplement 1).

We begin by introducing AI as the foundation for our discussion. Then, we apply an LR process framework to examine AI’s unique contributions and limitations. Subsequently, we discuss the quality criteria for LRs to explore the further implications of AI. Based on our analysis, we critically debate the relationships among humans, AI, and LR tasks, focusing on management learning and future research directions (Barros et al., 2023; Davis et al., 2025).

Artificial intelligence

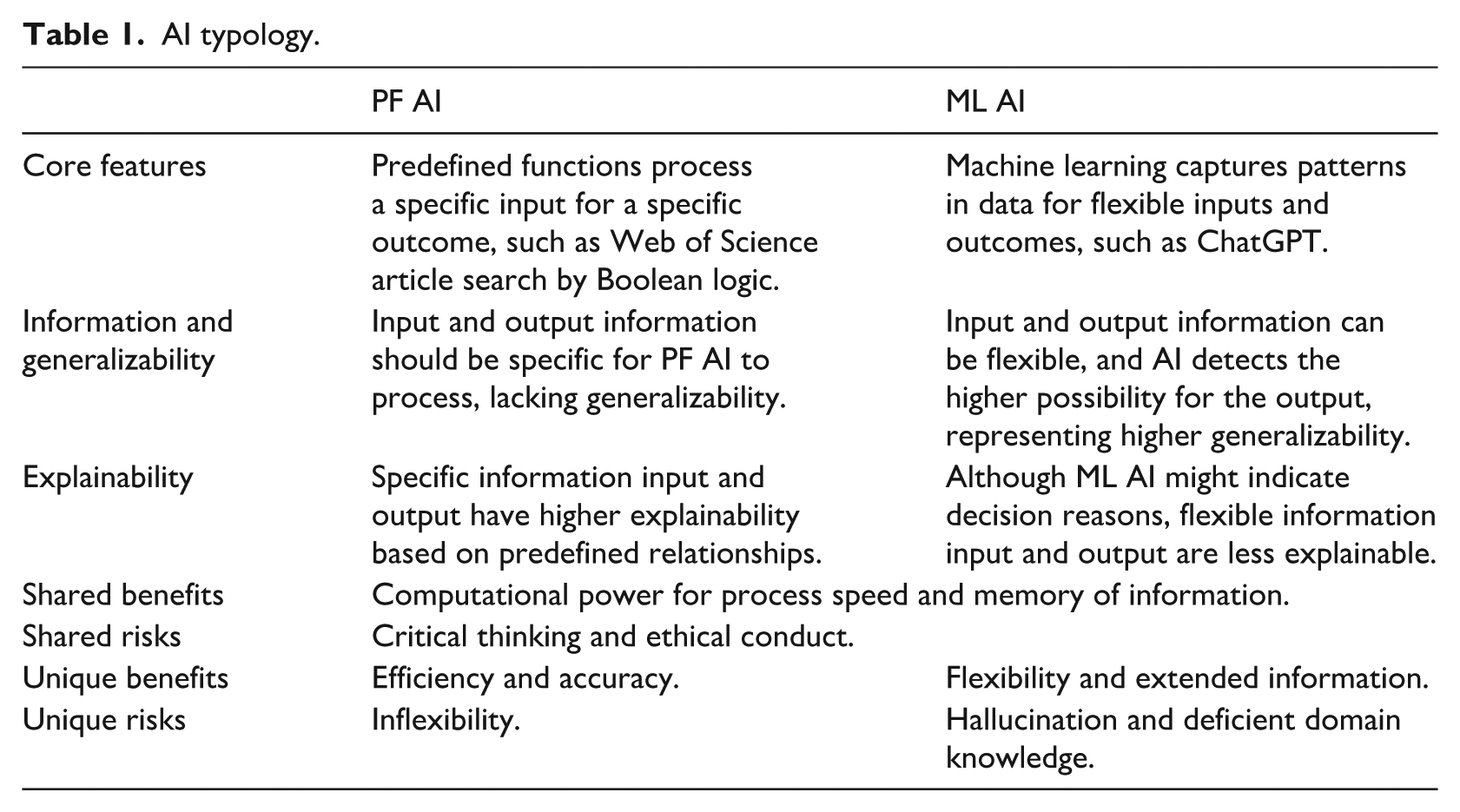

In this section, we outline our perspective on AI and explain AI in accessible terms for readability. We introduce an AI typology of predefined-function AI (PF AI) and machine learning AI (ML AI) to articulate the unique contribution of AI to LR (Table 1). We provide the principles of AI application and apply the typology to analyze AI’s function in different LR processes in the next section.

Table 1. AI typology.

AI is often used as a general term for technologies that exhibit cognitive abilities and are frequently described in humanized words (Niu et al., 2024; Prince, 2023). Most AI can be constructed by predefined functions or machine learning methods (Lee, 2023; Stuart and Norvig, 2022). For PF AI, humans specify certain input-output relationships and program the AI to “memorize” and “reproduce” them (Lee, 2023; Stuart and Norvig, 2022). When users enter a specific input, PF AI will give the predefined answer, such as expert systems for doctors to search symptom-medicine relationships (Johnson et al., 2021; Stuart and Norvig, 2022). PF AI cannot produce meaningful responses if users ask questions outside predefined parameters, representing a deficiency in the generalizability of relevant concepts (Stuart and Norvig, 2022).
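The input-output behavior of PF AI described above can be illustrated with a minimal sketch. The rule table and the function name `pf_ai_answer` are hypothetical and serve only as an illustration; real PF AI tools encode far richer expert rules. The sketch shows the two properties discussed: a predefined input returns the predefined answer, and an input outside the predefined scope returns nothing meaningful.

```python
from typing import Optional

# Hypothetical predefined rules: every entry is written by a human in advance.
PREDEFINED_RULES = {
    "systematic review": "Follow a reporting framework such as PRISMA.",
    "reference format": "Use a reference manager to apply the citation style.",
}


def pf_ai_answer(user_input: str) -> Optional[str]:
    """Return the predefined answer for a known input, otherwise None."""
    key = user_input.strip().lower()  # normalize, but do not interpret meaning
    return PREDEFINED_RULES.get(key)


# A predefined input reproduces the stored answer.
print(pf_ai_answer("Systematic review"))
# A flexible, natural-language question falls outside the predefined scope.
print(pf_ai_answer("How should I review the literature systematically?"))
```

The second call returns `None` even though the question is conceptually close to a stored rule, which is the deficiency in generalizability noted above.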

In contrast, most AI tools perceived as superior to traditional automation are based on machine learning, as they can handle flexible inputs and generate diverse outputs (Lee, 2023; Niu et al., 2024). Within ML AI, generative AI (GenAI) can flexibly generate text, graphs, sounds, or speech. Several prominent GenAI tools are based on large language models (LLMs, a machine learning technique) capable of processing natural language for diverse functions. For instance, ChatGPT can “understand” and “reply” to users relatively naturally because it can follow the fundamental rules of language (OpenAI et al., 2024). Some ML AI tools can process language without LLMs and GenAI (e.g. recurrent neural networks), and our discussion of ML AI is not limited to LLMs (Chang and Lin, 2011; Lee, 2023). We intend to provide more generalizable principles for ML AI.

Although ML AI seems functional, whether ML AI is explainable has triggered heated debate (Bilal et al., 2025). Some data details might be ignored or treated as deviants because ML AI learns the general trend of data (Huang et al., 2024; Lee, 2023). In this sense, ML AI might not be fully explainable since it lacks expert-defined answers or full knowledge of the data. However, ML AI can incorporate infrastructure for explainability by signifying decisions and reasons similar to automatic tools (Bilal et al., 2025; R. Wang et al., 2024). Moreover, ML AI can be largely shaped by users’ feedback or natural language, which explains ML AI’s outcome (Bilal et al., 2025; R. Wang et al., 2024). The explainability of ML AI keeps improving.

Regarding the benefits and risks of PF and ML AI, their shared benefits are the computational power for speedy processing and memory for big data (Putta et al., 2024; Wagner et al., 2022; Walker et al., 2022). AI can memorize research steps and information being processed, which helps researchers synthesize information without omitting important details (De La Torre-López et al., 2023; Drori and Te’eni, 2024; R. Wang et al., 2024). Thus, researchers can save time and energy for innovation and critical thinking that can enrich the contribution of LR. LR processes and conduct can be adjusted for efficiency and high-quality LR with the assistance of AI (Drori and Te’eni, 2024; Wagner et al., 2022).

Regarding shared risks, AI tools currently are less capable of critical thinking, defined as (re)thinking logically without relying on prevailing social norms for a novel understanding (Hu et al., 2023; Huang et al., 2023; Larson et al., 2024). We do not discuss human agency in critical thinking since it excludes AI critical thinking by definition. Although some research studies have observed AI abilities similar to critical thinking, such as conducting behavior similar to self-reflection and correction, generating seemingly critical feedback, engaging in overthinking similar to humans, and reevaluating assessment processes without human agency, researchers are uncertain about how people can reliably measure true AI critical thinking free from AI hallucination and incorrect but novel understanding (Hu et al., 2023; Lu et al., 2024; Piché et al., 2024; Xu et al., 2024; Yan et al., 2024). For common ML AI tools, AI “understands” the content by a general pattern from data, and commercial ML AI would choose higher-probability answers for broadly applicable understanding instead of critical answers (Lee, 2023; OpenAI et al., 2024). In contrast, regarding PF AI, the predefined input and output are designed by humans, so PF AI only represents what humans design for AI. PF AI only replicates human decisions rather than employing critical thinking for judgment and debate (Stuart and Norvig, 2022). Therefore, AI’s critical thinking ability is doubtful through the analysis of both PF and ML AI.

Although we emphasize AI functions for LR, we still provide some insights into the ethical use of AI in LR (Barros et al., 2023). For example, can AI be an author when AI helps in LR? Many journals require disclosure of AI utilization to enhance transparency. Moreover, AI can be biased, such as the predefined functions in PF AI not designed by ethical domain experts and ML AI learning from biased data with unfair feedback from users (Prince, 2023; Stuart and Norvig, 2022). Some data and feedback might be obtained in an unethical manner without consent. Although AI can be conceptualized as representing people, it might obtain unethical data and reflect the biases of people. Additionally, algorithms can impact the AI output (e.g. imperfect decoding strategies), resulting in some algorithms generating more readable but less accurate results (Huang et al., 2023). Researchers are required to investigate how AI can produce output (Barros et al., 2023). Furthermore, LR articles may be tailored to specific research contributions, and the intentional elimination or elaboration of certain content should be decided by the humans responsible for the knowledge (Barros et al., 2023; Lindebaum and Fleming, 2024; Wagner et al., 2022).

Next, we compare PF with ML AI by their unique benefits and risks. PF AI can imitate people’s work, thereby reducing the workload for individuals (Stuart and Norvig, 2022). Moreover, PF AI generates an accurate outcome when the knowledge of predefined functions is correct (Stuart and Norvig, 2022). For example, many researchers use Web of Science to search and select articles for LR, which is efficient and accurate (Antons et al., 2021). Similarly, PF AI can be proficient in domain knowledge when predefined functions are designed by domain experts (Stuart and Norvig, 2022). In contrast, PF AI can hardly process flexible input beyond its scope, manifesting unique risks (Lee, 2023).

Concerning ML AI, flexibility and extended information provision can be the unique benefits (Lee, 2023). ML AI captures the main trend of data and links concepts together as a concept map, which enables it to process various inputs and generate various outcomes (Prince, 2023). ML AI might inspire researchers by providing relevant concepts instead of a predefined outcome (Chen et al., 2025; Wang et al., 2025). However, how current AI can understand uncommon metaphors and conduct logical inference is contentious (R. Liu et al., 2025; Niu et al., 2024). Researchers have not reached a consensus on how humans can test AI for these specific capabilities or what can be used to assess them in various situations (Yan et al., 2024). Although AI can somewhat comprehend complex concepts or metaphors, this does not guarantee an understanding equivalent to that of laypeople or professionals, which can inspire future research (Chen et al., 2025; Xu et al., 2024).

Moreover, ML AI might choose a higher-probability answer, which might not be the same as the correct or predefined answers (Lee, 2023; Prince, 2023). ML AI might connect concepts based on general language and information rather than an intentional design and specific knowledge domain (Májovský et al., 2023). This can bring about hallucination, where AI generates plausible but factually incorrect or nonsensical information in specific research contexts (Huang et al., 2023; Májovský et al., 2023; Xu et al., 2024). AI hallucination can be represented by deficient internal knowledge of AI, external changes beyond AI’s scope, or problematic outcome generation processes (Huang et al., 2023; R. Liu et al., 2025). Multiple factors drive AI hallucination, including misinterpreting the focus of a question, lacking necessary knowledge, contextual misalignment, and inappropriate abstraction (Huang et al., 2023).
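The idea that ML AI tends to return the most probable answer rather than the correct or most critical one can be sketched as follows. This is a toy illustration under stated assumptions, not a description of how any particular tool decodes its output: the candidate answers and their scores are invented for the example, and `softmax` and `most_probable` are hypothetical helper names.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    exps = {k: math.exp(v - highest) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}


def most_probable(scores):
    """Pick the candidate with the highest probability."""
    probs = softmax(scores)
    return max(probs, key=probs.get)


# Hypothetical scores: the widely repeated claim scores highest,
# regardless of whether it is correct in a specific research context.
candidate_scores = {
    "widely repeated general claim": 2.0,
    "domain-specific claim": 1.0,
    "rare counterargument": 0.1,
}

print(most_probable(candidate_scores))
```

The selection depends only on the scores, so a domain-specific or critical answer is returned only if the learned patterns happen to rank it first.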

Furthermore, domain knowledge can be uncommon knowledge with critical debates tailored for specific purposes and even contradict general knowledge (Larson et al., 2024; Lindebaum and Fleming, 2024; Wright and Michailova, 2022). Although new AI agent techniques intend to combine multiple specialized AI models for domain knowledge, training AI agents is controversial regarding data quality and optimization (Putta et al., 2024; Xu et al., 2024). For example, AI cannot grasp the foundational rules of language without sufficient data (OpenAI et al., 2024; Prince, 2023). However, when trained on vast datasets, AI learns general patterns that are broadly applicable with flexibility rather than specialized knowledge (Huang et al., 2023). These examples indicate the trade-off between accuracy and flexibility in the current stage of AI technology.

In summary, we recognize current AI’s features and limitations. Debates continue on how AI can learn general language rules without becoming overly general on concepts (Huang et al., 2023; Xu et al., 2024). Despite techniques developed to strike a balance between generalizability and specificity, challenges persist due to the big data requirement, algorithms for conflicting information, and the complexities in conceptualizing social phenomena (Chang et al., 2024; Putta et al., 2024; Yan et al., 2024).

We have elaborated on AI’s main characteristics and limitations, which will be further analyzed with LR to inspire management learning. AI can be theoretically functional by optimized utilization, which shapes the principles of AI utilization (Lee, 2023; Stuart and Norvig, 2022). In the following sections, we introduce AI tools and discuss AI’s functions and limitations in LR processes and quality.

Literature review processes with AI

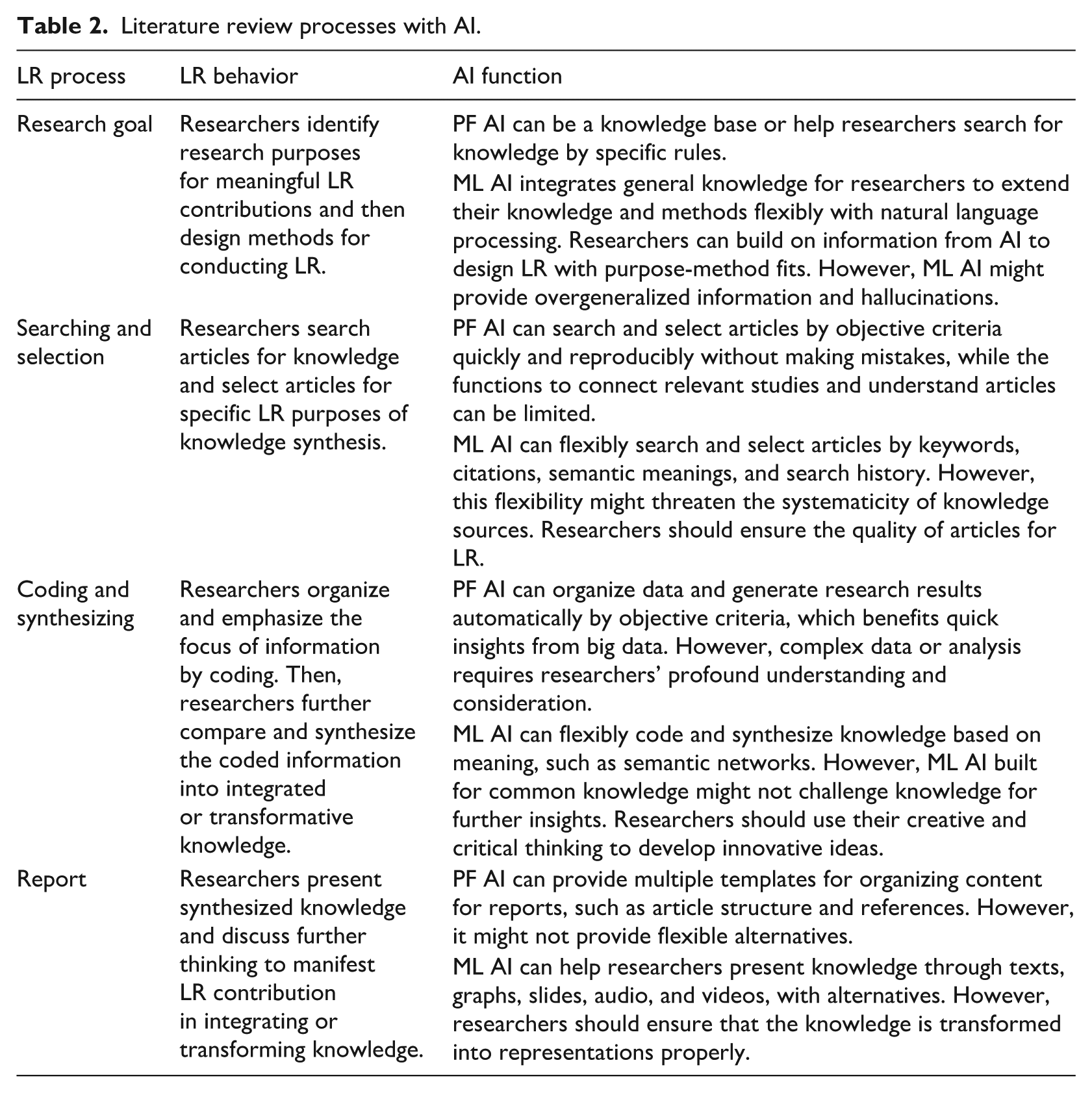

Complex, circular, iterative processes of LR can be modeled for our discussion on LR with AI (Hart, 2018; Xiao and Watson, 2019). We will describe the main LR processes for knowledge synthesis and explore how AI functions within these processes (Table 2). Although we provide example AI tools, our discussion focuses on the principles of our AI typology rather than the technical details of using an AI tool.

Table 2. Literature review processes with AI.

Research goals

Research goals are targets for researchers to achieve in LR (Hart, 2018; Xiao and Watson, 2019). Researchers must articulate the reasons for a specific LR, such as timely and urgent requirements, the criticality of debates, ecological changes, and the implications for future research (Aguinis et al., 2020; Parmigiani and King, 2019). By considering the reasons for LR, researchers can highlight the unique contribution of an LR article, such as classifying (Kunisch et al., 2023), representing (Kunisch et al., 2023), problematizing (Alvesson and Sandberg, 2020), configuring, aggregating (Kunisch et al., 2023), integrating (Cronin and George, 2020), interpreting (Elsbach and van Knippenberg, 2020), and explaining (Kunisch et al., 2023). Researchers need to elaborate on how the research can provide new insights or transform prior thinking. The contribution of LR would shape the behavioral conduct of an LR article to bring about unique LR outcomes (Kunisch et al., 2023; Wright and Michailova, 2022). Purpose-method fit emphasizes the alignment of research purposes with appropriate methods for design, execution, and analysis for LR (Edmondson and McManus, 2007; Knight et al., 2022). Some frameworks guide purpose-method fit for meaningful LR, such as PRISMA, SPAR-4-SLR, PAGER, and RAMESES (Bradbury-Jones et al., 2022; Lim et al., 2022; Siddaway et al., 2019; Xiao and Watson, 2019). Based on the considerations of research goal and methods, the rest of the steps of collecting and selecting articles, coding and synthesizing information, and reporting LR can be structured to be rigorous (Hart, 2018; Xiao and Watson, 2019).

The initial stage of LR is relatively conceptual, involving the construction of meaningful research goals for knowledge synthesis. Theoretically, PF AI’s predefined rules can be the domain knowledge as the foundation of research (Stuart and Norvig, 2022). Practically, some PF AI might help researchers search relevant research resources, such as the initial identification of relevant studies by Scopus, Web of Science, and Google Scholar (Antons et al., 2021). However, when researchers need to expand and compare diverse knowledge, PF AI might be constrained by fixed input and outcomes for a limited scope of information (Stuart and Norvig, 2022).

In contrast, ML AI with natural language and conceptual knowledge can be useful in several aspects. For example, ChatGPT, Claude, Scite, Gemini, Jasper Chat, Llama, Copilot, and Mistral, which are similar to advanced chatbot systems, can help researchers extend their research domain and coverage through flexible input and output (De La Torre-López et al., 2023; Wagner et al., 2022). Currently, ML AI can possess extensive knowledge and access new information online (OpenAI et al., 2024). ML AI can inspire conceptual and theoretical thinking when general knowledge is needed (Wagner et al., 2022). For example, researchers used AI for research hypotheses with automatic experimental tests in biochemistry (Chen et al., 2025; King et al., 2009). AI could propose some possible research agendas based on the semantic meanings of key concepts and by integrating existing research’s suggestions (Wang et al., 2025). Humans might gain quick insights from AI and keep reflecting on social situations for critical thinking and impactful LR (Chen et al., 2025; Larson et al., 2024; Lindebaum and Fleming, 2024).

However, when researchers delve into uncommon or controversial topics without sufficient elaboration and evidence, ML AI might underperform due to an insufficient knowledge base (Chen et al., 2025; De La Torre-López et al., 2023; Xu et al., 2024). AI might generate highly probable proposals evaluated by AI knowledge instead of real-world situations, which might reduce the contribution of AI (Huang et al., 2024; Huang et al., 2023). Also, AI might not debate the topic critically due to the design for generalizable AI results (Lee, 2023). Moreover, the match between changeable humans, research, resources, and social situations for purpose-method fit is complex (Huang et al., 2023). AI might struggle to generate purposeful research questions with relevant methods to accomplish complex research projects in social settings for humans (Johnson et al., 2021). Therefore, although ML AI is functional, humans should collaborate with AI to reduce mistakes.

Articulating research goals in LR directly impacts management learning by shaping how knowledge is synthesized and applied. AI might assist management researchers in identifying and prioritizing information and challenges (Chen et al., 2025; Stuart and Norvig, 2022). However, AI alone can lack the contextual sensitivity to determine which topics are most relevant for contemporary business environments (Lee, 2023; Prince, 2023). Thus, we argue for critical engagement with AI-generated recommendations to ensure methodological fit in complex organizational phenomena. Refining AI-assisted research goals to ensure alignment with dynamic managerial realities should be the emphasis in management learning.

Searching and selection

Common literature sources include journals, online databases, conferences, industry reports, governmental data, and personal references (Adams et al., 2017; Harari et al., 2020). Researchers invest time and resources to search for articles, which are then analyzed and synthesized into knowledge as the contribution of LR (Hiebl, 2023). Researchers select articles thoughtfully by considering trade-offs between research scope and article quality using content criteria (e.g. concept relevance, theoretical framework, method, and data) or non-content criteria (e.g. publisher, date of publication, author, journal review methods, journal, and language) (Adams et al., 2017; Hiebl, 2023). For example, researchers conducting qualitative systematic LR might focus on a comprehensive search for articles and on selecting articles by multiple criteria, such as conceptual relevance, recency, and peer review (Hiebl, 2023).

PF AI tools such as Google Scholar, Web of Science, and Scopus help researchers search for articles quickly based on exact inputs (Antons et al., 2021). For example, researchers can search titles, abstracts, keywords, and content by words (e.g. “exact word” and term* with word extension) combined with the Boolean operators “AND” or “OR” (Kraus et al., 2022). For instance, researchers can search for the exact terms “artificial intelligence” AND “management learn*” in abstracts using Scopus. PF AI offers multiple filter functions for selection, such as categories of article types, publication years, language use, academic subjects, and institutions. PF AI is generally considered reliable due to minimal machine bias and strict adherence to human instructions (Harari et al., 2020). PF AI can reduce time spent on repetitive tasks, allowing researchers to focus on creative and critical activities. However, PF AI generally cannot read the content in detail to provide further information for researchers to select articles intentionally (Antons et al., 2021).
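The exact-term search with word extension and Boolean operators can be sketched in a few lines. This is a minimal illustration of the matching logic only, assuming a simplified syntax in which a trailing `*` extends a word; it does not reproduce the actual query engine of Scopus or any other database, and the sample abstracts and function names (`term_to_regex`, `matches_all`, `matches_any`) are hypothetical.

```python
import re
from typing import Dict, List


def term_to_regex(term: str) -> "re.Pattern":
    """Turn a search term into a case-insensitive pattern.

    A trailing * allows any word ending (learn* matches learn, learning, learners).
    """
    wildcard = term.endswith("*")
    core = re.escape(term.rstrip("*"))
    suffix = r"\w*" if wildcard else ""
    return re.compile(r"\b" + core + suffix + r"\b", re.IGNORECASE)


def matches_all(text: str, terms: List[str]) -> bool:
    """Boolean AND: every term must appear in the text."""
    return all(term_to_regex(t).search(text) for t in terms)


def matches_any(text: str, terms: List[str]) -> bool:
    """Boolean OR: at least one term must appear in the text."""
    return any(term_to_regex(t).search(text) for t in terms)


# Hypothetical abstracts used only to demonstrate the filter.
abstracts: Dict[str, str] = {
    "A": "Artificial intelligence is transforming literature review in management learning.",
    "B": "Management learners benefit from reflective practice.",
    "C": "Artificial intelligence supports automated data extraction.",
}

query = ["artificial intelligence", "management learn*"]
and_hits = [key for key, text in abstracts.items() if matches_all(text, query)]
or_hits = [key for key, text in abstracts.items() if matches_any(text, query)]
print(and_hits, or_hits)
```

The filter follows the instructions literally: it never reads beyond the surface form of the words, which mirrors both the reliability and the limitation of PF AI search noted above.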

ML AI tools capture user experience and conduct automatic analysis for researchers. AI can assist in finding relevant articles based on search history, related terms, flexible descriptions, and citations (Chen et al., 2025; De La Torre-López et al., 2023). Moreover, ML AI can link various papers based on information on citations, authors, and institutions, which extends the search for relevant knowledge and helps detect the relationships among articles (Chen et al., 2025; Wagner et al., 2022). For example, researchers compared traditional keyword search with AI-augmented article search based on AI knowledge maps across three LR topics. They found that ML AI could enhance both search comprehensiveness and accuracy (Ma et al., 2022). Additionally, ML AI based on language models can read the article to extract information for researchers to select useful articles in LR (Chen et al., 2025; De La Torre-López et al., 2023). For instance, researchers compared human review with ML AI review using different prompts and review frameworks on three aspects. Results indicated that the differences between human and ML AI reviews were not significant (Drori and Te’eni, 2024). Various ML AI tools exist, including Elicit, Scite, DistillerSR, Connected Papers, Research Rabbit, Litmaps, and Semantic Scholar.

Although AI seems to be useful, it is necessary for researchers to examine ML AI’s article search and selection since academic articles are generally advanced and complex (Johnson et al., 2021). AI may not deeply understand articles with intricate implications, uncommon metaphors, and complex contexts for some specific topics of LR (Wagner et al., 2022). Moreover, the information extracted from common ML AI might not apply specific analytic frameworks or critical thinking to contribute to LR purposes (Drori and Te’eni, 2024; Wagner et al., 2022). Common ML AI might provide a biased evaluation of articles due to its data quality, processing strategies, and output constraints (Huang et al., 2023; Lee, 2023).

In summary, AI can enhance efficiency in literature search and selection in various ways, inspiring management learning to shift from manual retrieval to deeper analytical engagement in literature (Antons et al., 2021; Chen et al., 2025). However, AI lacks contextual understanding, requiring people to assess its outputs critically. Effective management learning can integrate AI’s function with human critical thinking, fostering human analytical skills and methodological rigor with higher efficiency (Larson et al., 2024; Lindebaum and Fleming, 2024). Management learning should cultivate AI literacy and responsible application to improve LR efficiency.

Coding and synthesizing

Through coding and synthesizing, researchers transcend existing knowledge limits via adjudication (clarifying and strengthening the foundation of what is known) and redirection (changing the thinking about a topic) (Cronin and George, 2020). Coding transforms diverse original article information into meaningful and organized information using induction, deduction, or mixed methods for purpose-method fit (Köhler et al., 2022). For example, researchers can apply grounded theory to code and capture the main themes in studies.

During synthesis, researchers compare and integrate this organized data from coding into a concise, synthesized, and transcendent knowledge structure (Kastner et al., 2012; Suri and Clarke, 2009). For example, researchers can reconceptualize factor relationships based on coded information to reduce redundant arguments and construct a parsimonious but explainable model. Researchers intentionally design the degree of synthesis for specific research contributions (Lim et al., 2022). For instance, a problematization review might highlight critical points for counterarguments or alternative understandings, rather than aggregating results from numerous studies (Alvesson and Sandberg, 2020). Coding and synthesis need information storage and retrieval to ensure LR quality.

Researchers can consider inductive, deductive, and abductive reasoning for coding and synthesizing. The current default setting of PF and ML AI tools would mostly be inductive (e.g. cluster analysis) (De La Torre-López et al., 2023). Deductive reasoning requires researchers to provide theoretical frameworks, necessitating explicit input to enable PF and ML AI’s deductive functions (Wagner et al., 2022; Walker et al., 2022). While ML AI might perform abductive reasoning to find highly probable solutions, few measures have assessed ML AI to ensure its performance in this aspect (Chen et al., 2025; Putta et al., 2024). Coding and synthesizing require critical thinking of trade-offs between more abstract theories and more objective evidence (Larson et al., 2024; Lindebaum and Fleming, 2024).

ATLAS.ti, MAXQDA, NVivo, RobotReviewer, Paper Digest, WebPlotDigitizer, Graph2Data, DistillerSR, Rayyan, EPPI-Reviewer, ASReview, NotebookLM, and Covidence are relevant AI tools in this area (Wagner et al., 2022). Most of them originated as PF AI tools and have gradually been equipped with ML AI modules. PF AI can help researchers code the data by objective criteria, such as the detection, location, and marks of key terms (Antons et al., 2021). Researchers can obtain a basic record of coding processes and results for further manual coding and reading. PF AI can organize information by identical code across articles, such as categorizing articles into groups by keywords (Wagner et al., 2022). Therefore, PF AI can be efficient and reliable but deficient in flexibility in automatic processing.
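Coding by objective criteria, as described above, can be sketched as a keyword-based procedure that detects key terms, records their locations, and groups articles by shared code. This is a simplified illustration rather than the method of any tool named above; the codebook, the article snippets, and the function names `code_text` and `group_by_code` are hypothetical.

```python
import re
from collections import defaultdict

# Hypothetical codebook: each code is defined by exact key terms chosen by researchers.
CODEBOOK = {
    "ai": ["artificial intelligence", "machine learning"],
    "learning": ["management learning"],
}


def code_text(text, codebook):
    """Detect key terms and return {code: [(start, end, matched_text), ...]}."""
    result = {}
    for code, terms in codebook.items():
        spans = []
        for term in terms:
            for match in re.finditer(re.escape(term), text, re.IGNORECASE):
                spans.append((match.start(), match.end(), match.group(0)))
        if spans:
            result[code] = sorted(spans)
    return result


def group_by_code(articles, codebook):
    """Categorize article identifiers into groups by the codes they contain."""
    groups = defaultdict(list)
    for article_id, text in articles.items():
        for code in code_text(text, codebook):
            groups[code].append(article_id)
    return dict(groups)


# Hypothetical article snippets.
articles = {
    "a1": "Machine learning changes management learning.",
    "a2": "Artificial intelligence in reviews.",
}

print(code_text(articles["a1"], CODEBOOK))
print(group_by_code(articles, CODEBOOK))
```

The recorded spans give researchers a basic, reproducible record for later manual coding. A synonym or paraphrase that is absent from the codebook would be missed, which reflects the limited flexibility of PF AI.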

In contrast, ML AI can code the data flexibly by detecting the meaning of the article even for multilingual contexts (Morgan, 2023; Prince, 2023). ML AI can automate some data analysis, such as semantic network analysis, statistical analysis of quantitative coefficients, and cluster analysis of terms, word frequency, and highlighting key terms (Chen et al., 2025; De La Torre-López et al., 2023). It surpasses PF AI by capturing semantics with other linguistic characteristics and extracting quantitative estimation results (Chen et al., 2025; Lee, 2023). However, ML AI cannot replace humans’ efforts to understand sentences deeply, especially philosophical and metaphorical ones (Johnson et al., 2021). Humans should inspect and adjust the code from ML AI and ensure a meaningful synthesis for specific research purposes.

For example, researchers conducted experiments to test human and ML AI coding on two datasets (Morgan, 2023). The results indicated that ML AI performed reasonably well regarding concrete and descriptive themes. However, ML AI was less successful at locating subtle and interpretive themes (Morgan, 2023). Another study indicated that a semi-automated workflow assisted by ML AI did not appear to affect the precision rate while substantially reducing the median extraction time and slightly reducing the recall rate compared to a manual workflow (Walker et al., 2022). Other researchers conducted three experimental studies and revealed that ML AI’s coding performance patterns could be similar to humans’ patterns. However, ML AI could be more capable of processing concepts with higher concreteness, objectivity, and specificity (X. Liu et al., 2025). When the content included inference in contexts, and data had temporal relationships, ML AI required special treatment for higher performance levels parallel to humans (X. Liu et al., 2025).
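For readers less familiar with the precision and recall rates mentioned above, the following sketch shows how the two measures are commonly computed when AI-extracted items are compared with a human-coded reference set. The item identifiers are invented for illustration and are not data from the cited studies; `precision_recall` is a hypothetical helper name.

```python
def precision_recall(extracted, reference):
    """Compare extracted items with a reference set.

    precision = correct extractions / all extractions
    recall    = correct extractions / all reference items
    """
    correct = len(set(extracted) & set(reference))
    precision = correct / len(set(extracted)) if extracted else 0.0
    recall = correct / len(set(reference)) if reference else 0.0
    return precision, recall


# Hypothetical example: four items coded by humans, three extracted by a tool.
human_reference = {"d1", "d2", "d3", "d4"}
tool_extracted = {"d1", "d2", "d5"}

precision, recall = precision_recall(tool_extracted, human_reference)
print(round(precision, 2), round(recall, 2))
```

In this example, two of the three extracted items are correct, and two of the four reference items are found. A workflow can therefore keep its precision while losing some recall if it extracts fewer items overall, which matches the pattern reported for the semi-automated workflow.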

Regarding subjective understanding or intersubjectivity issues, in contrast with PF AI replicating human decisions, how ML AI can truly represent human considerations and concerns of phenomena is controversial (OpenAI et al., 2024; Prince, 2023). This is also a critical debate in LR conducted by humans about the contribution of consistent and objective knowledge synthesis or a more critical transformation of thinking (Alvesson and Sandberg, 2020; Wright and Michailova, 2022). Synthesizing knowledge by reconceptualizing variable relationships and challenging underlying assumptions of studies is not the focus of ML AI now (Lee, 2023; Wagner et al., 2022). AI may face hallucinations when the content exceeds its knowledge scope, and integration criteria are vague, requiring numerous decisions on information loss and integration levels (Huang et al., 2023). Researchers should use their creative and critical thinking to generate innovative ideas.

Overall, AI can enhance efficiency by automating coding and synthesis, allowing researchers to focus on critical analysis and creative synthesis (Wagner et al., 2022). However, we argue for deeper engagement in debates and meanings as AI struggles with complex theoretical reconceptualization. Active human guidance of AI tools is essential to ensure that knowledge synthesis aligns with evolving managerial theories and environments (Antons et al., 2021). Management learning can foster analytical rigor, enhance reflexivity, and promote innovative thinking in LR with continuous human learning and human-AI collaboration (Chen et al., 2025).

Report

The reporting stage describes LR processes and provides evidence of reliability (Lim et al., 2022). A report describes how the research is conducted and how the article is written (Siddaway et al., 2019). Synthesized results and contributions of LR are discussed in the report, which emphasizes understandable and concise content for consolidating knowledge or transforming thinking rather than overwhelming databases and unorganized information (Cronin and George, 2020; Kunisch et al., 2023). A better report facilitates audience engagement and thus exerts its impact on society. Researchers might refine the writing style for higher impact and accessibility for readers (Lim et al., 2022; Sharma and Bansal, 2020).

Researchers strive to present results through texts, graphs, and videos for higher understandability. PF AI might not directly generate alternative texts, graphs, and videos for LR since the predefined function is for specific outcomes instead of rephrasing or generating new things based on existing resources (Stuart and Norvig, 2022). PF AI might be designed with templates for organizing texts, graphs, and videos (Stuart and Norvig, 2022). For example, Zotero, Mendeley, EndNote, RefWorks, and Citavi help researchers manage references and apply the correct format of citations.

In contrast, ML AI, including Claude, Gemini, Jasper Chat, Llama, ChatGPT, DistillerSR, Rayyan, Notion AI, and Duet AI, can generate texts for researchers, which reduces the workload of drafting (Wagner et al., 2022; Z. P. Wang et al., 2024). Researchers can rewrite AI-generated material into quality text, and AI can assist in translating or rephrasing content to mitigate language barriers (Lee, 2023; Májovský et al., 2023). Although these ML AI tools might be functional in language tasks, LR, as a concept-heavy type of article, requires researchers to carefully ensure clear and precise description (De La Torre-López et al., 2023). Midjourney, Visme, Stable Diffusion, Leonardo AI, Draw.io, and Dall-E generate illustrative graphs for researchers to showcase their ideas in more understandable ways. Runway, Pika, HeyGen, Leonardo AI, Stable Video Diffusion, Synthesia, Make-A-Video, and Descript can help researchers generate and edit videos, which are prevalent in various academic works, such as presentations, introductions to research, and video abstracts.

Research outcomes can be presented in different ways, and multiple methods can be applied to improve the presentation (Kraus et al., 2022). This indicates that research can be made more accessible or understandable for different audiences (Siddaway et al., 2019). However, this also reflects the ambiguity of presenting research results. AI might need to choose among multiple possible reports. On the one hand, this echoes the flexibility of reports; on the other hand, AI might generate some incorrect or nonsensical reports (Xu et al., 2024). Researchers need to ensure report quality and avoid hallucination.

For example, empirical studies discovered that ML AI could create a highly convincing article regarding word usage, sentence structure, and overall composition (Májovský et al., 2023). AI-generated articles could include standard sections such as introduction, materials and methods, results, discussion, and even data sheets (Májovský et al., 2023). Other researchers found that ML AI could generate text, tables, and diagrams for biomedical review papers (Z. P. Wang et al., 2024). However, they highlight the need to improve ML AI to understand intricate concepts and process complex content step-by-step (Májovský et al., 2023; Z. P. Wang et al., 2024).

Management learning can recognize the power of AI to produce alternative presentations, which underscores the importance of AI literacy. AI can enable clearer communication through text, graphs, and multimedia, making research more accessible (Chen et al., 2025). However, AI application requires critical human oversight to ensure accuracy, coherence, and relevance (Wagner et al., 2022). Management learning should emphasize the inspection of AI tools and the refinement of AI-generated content to maintain theoretical depth and avoid misinformation.

Summary

We discuss various AI functions and limitations in LR processes, allowing people to reflect on these critical considerations of AI and enhance their understanding of AI applications for improving knowledge synthesis. We can conceptualize the general benefits of AI as speed and accuracy in objective tasks (Niu et al., 2024; Wagner et al., 2022). PF AI can process repetitive work faster than experts can and without errors (Inala et al., 2024). Humans can collaborate with AI on objective work to improve efficiency, which can be the new focus for management learning.

Conversely, AI currently cannot complete creative and complex tasks in LR with high-quality and consistent outcomes (Huang et al., 2023). These tasks need not only time but also mindful consideration (Kunisch et al., 2023; Lim et al., 2022). Even humans cannot accomplish these tasks only by working longer. Although ML AI attempts to synthesize concepts through a deep understanding of descriptions and metaphors, ML AI is still deficient in challenging the underlying assumptions of studies for creativity and critically reflecting on existing studies (Lu et al., 2024; Xu et al., 2024). Humans can collaborate with AI to provide detailed information for ML AI to learn from and gain AI feedback to rethink LR critically.

Our exploration of AI intends to inspire management learning to improve efficiency in LR processes and allow researchers to focus on critical and creative thinking and contribution. Management learning should recognize the strengths, weaknesses, opportunities, and risks of LR with AI for further learning and knowledge synthesis (Lindebaum and Fleming, 2024). Improving AI literacy and encouraging appropriate human-AI collaboration can be crucial for future management learning (Larson et al., 2024). To explore this in detail, the following section discusses AI in LR by quality criteria for improving LR.

Quality criteria of literature review with AI

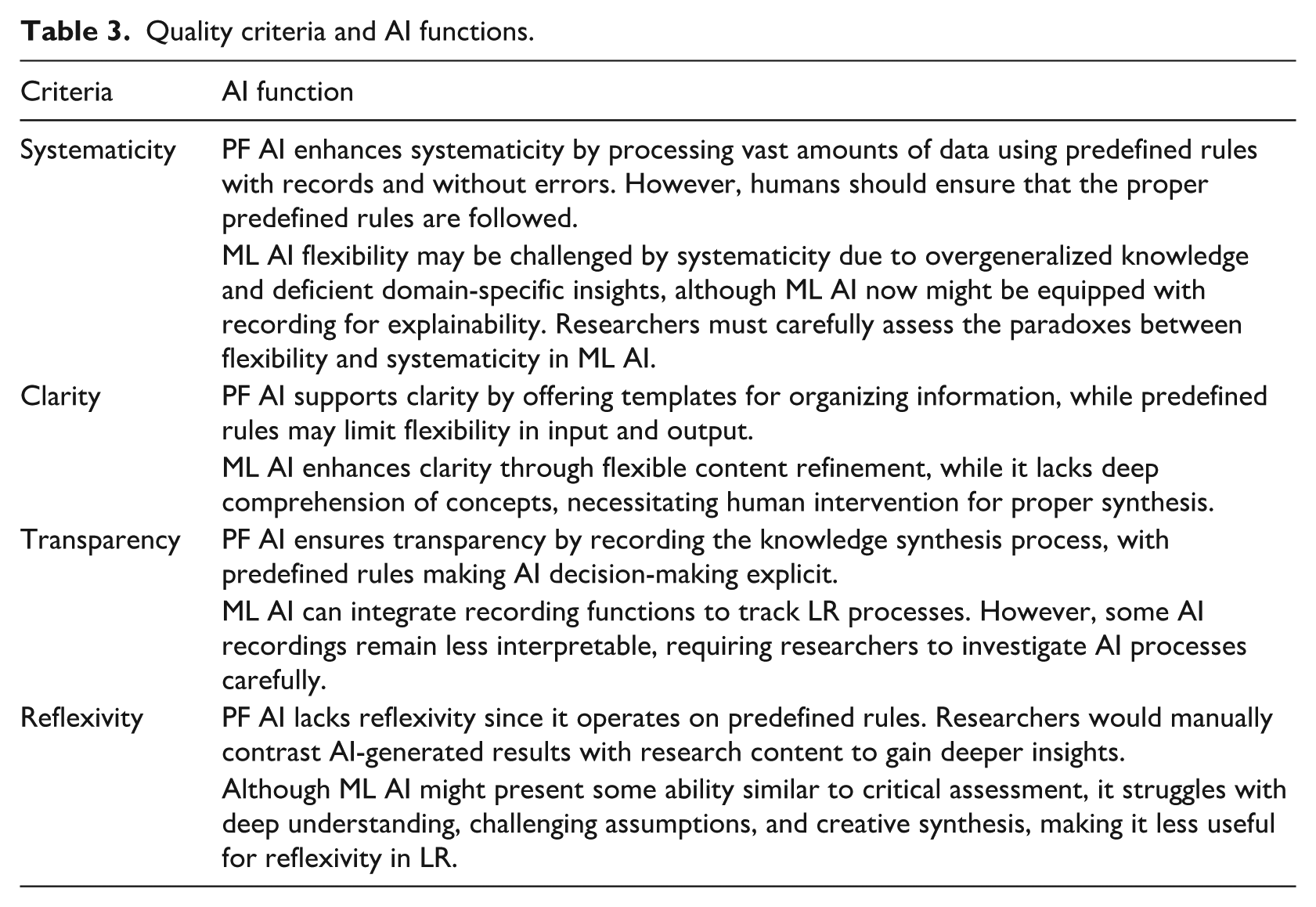

Extensive prior research has explored LR quality criteria to facilitate continuous improvement in LR quality (Aguinis et al., 2018; Simsek et al., 2021). Systematicity, clarity, transparency, and reflexivity can be regarded as four essential quality criteria of LR, as integrated from multiple studies (Kunisch et al., 2023; Lim et al., 2022). In this section, we analyze AI functions and limitations based on LR quality criteria, aiming to discuss how AI can enhance or undermine LR quality (Table 3). For conciseness, we avoid repeating the discussion of AI from the previous section on LR processes.

Table 3. Quality criteria and AI functions.

PF AI can enhance systematicity by processing massive amounts of data without errors, increasing the likelihood of including appropriate articles and analyzing data properly (Stuart and Norvig, 2022). Moreover, researchers can set specific criteria for AI to detect content, organize relevant information, and generate documents using reliable methods without manual errors (Inala et al., 2024; Walker et al., 2022). However, AI cannot guarantee systematicity independently, since it may inadequately address criteria for selecting articles, organizing content, or generating reports unless humans predefine appropriate functions.
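As an illustration of such predefined criteria, the minimal sketch below screens article records with explicit rules and records the reason for every decision. The record fields, keywords, and year threshold are hypothetical assumptions chosen for demonstration and do not represent any specific tool.

```python
from dataclasses import dataclass


@dataclass
class Article:
    title: str
    year: int
    abstract: str


def screen(article, keywords, min_year):
    """Apply explicit, researcher-defined inclusion rules to one record.

    Returns (included, reason) so that every decision can be reported.
    """
    if article.year < min_year:
        return False, f"published before {min_year}"
    text = f"{article.title} {article.abstract}".lower()
    matched = [k for k in keywords if k.lower() in text]
    if not matched:
        return False, "no keyword matched"
    return True, "matched: " + ", ".join(matched)


# Hypothetical records and criteria chosen by the researcher.
records = [
    Article("AI in literature review", 2023, "Machine learning for synthesis."),
    Article("Reflexivity in management", 2012, "Accumulative approaches."),
    Article("Team dynamics", 2021, "A survey of project teams."),
]
decisions = [screen(r, ["literature review", "reflexivity"], 2015) for r in records]
for record, (included, reason) in zip(records, decisions):
    print(record.title, "->", "include" if included else "exclude", f"({reason})")
```

Because the rules are explicit, the same input always yields the same decisions. The sketch also reflects the limitation noted above: a relevant article phrased differently from the keywords would be excluded unless researchers refine the criteria.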

In contrast, the flexibility of ML AI might pose challenges for systematicity. Although ML AI has started to be equipped with explainable elements for indicating its decision-making, not every decision can be fully interpreted by humans (Bilal et al., 2025; R. Wang et al., 2024). ML AI might mistakenly apply general knowledge rather than focus on domain knowledge, which might contaminate knowledge (Bilal et al., 2025; Lee, 2023). Although AI might help researchers improve efficiency and record critical decisions, the logic and reasons for meaningful LR should be examined by humans (Wagner et al., 2022). Researchers must ensure systematicity by deeply understanding article content and metaphors and ensuring purpose-method fits with AI.

PF AI can help to increase clarity by providing templates and structure as frameworks, such as templates for gathering information, structuring articles, and formatting citations, although these functions might lack flexibility for input and outcomes (Stuart and Norvig, 2022; Walker et al., 2022). Therefore, PF AI might organize information without further synthesis for clarity.

In contrast, ML AI can flexibly refine article content, graphs, and video data, benefiting clarity (Chen et al., 2025; Z. P. Wang et al., 2024). However, AI cannot entirely replace the critical synthesis inherent in LR. AI lacks the depth to profoundly understand complex concepts and metaphors to offer new perspectives, especially in contexts requiring critical analysis (Wright and Michailova, 2022; Xu et al., 2024). ML AI might mistakenly eliminate important complexity for simplicity (Lee, 2023; Májovský et al., 2023). Therefore, researchers should mindfully consider synthesis before using AI to generate illustrations and reports. AI might generate multiple possible answers for humans to choose from and modify (De La Torre-López et al., 2023).

PF AI generally aids transparency when it can record the knowledge synthesis process. Moreover, PF AI is predefined, so the rules for AI decision-making are explicit for transparency (Stuart and Norvig, 2022). People can replicate PF AI’s processes and results, and ML AI has started to integrate recording functions. Researchers can refer to ML AI’s records to describe the entire LR process, enhancing transparency by documenting detailed steps and results (Bilal et al., 2025; R. Wang et al., 2024). However, ML AI processes can sometimes appear opaque due to difficulties in interpreting some less intuitive ML AI records (Bilal et al., 2025; Wagner et al., 2022). Researchers should ensure that AI processes are transparent, similar to tools with interpretable workflows.

PF AI can only generate results based on predefined rules without reflection, so PF AI might benefit from human reflexivity (Stuart and Norvig, 2022). Researchers might use PF AI to contrast research content and predefined rules for further insights. Although ML AI might provide diverse information, current common ML AI focuses on general concepts instead of domain knowledge and uncommon debates (Lee, 2023). Therefore, ML AI might also be less useful regarding reflexivity and elements of deep understanding, critical thinking, and creative synthesis (Xu et al., 2024). For example, although ChatGPT can connect to the Internet, it was trained on a limited amount of data for its main functions. Most AI is not designed for reflexivity and cannot challenge underlying assumptions for LR.

In the previous section, LR processes for AI applications were discussed. In this section, analyzing AI’s impact on LR quality for LR value offers new insights for management learning. Different types of AI can enhance LR quality in different ways based on their unique characteristics, such as various kinds of recording, templates, and content organization (Wagner et al., 2022; Z. P. Wang et al., 2024). However, AI’s role might remain supportive instead of substitutive since AI might require human input for purpose-method fits, and has limitations in reflexivity (Hu et al., 2023; Huang et al., 2023). These functions and limitations highlight the complementary nature of AI and human cognition for efficiency and social reflection (Niu et al., 2024). To foster management learning, educators should balance the utilization of AI with the cultivation of critical and reflective thinking, equipping learners to harness technology for efficiency while engaging deeply with complex organizational challenges for accuracy and inspiration.

Discussion: human and AI in LR

Reflecting on AI function and human behavior for LR processes and LR quality can give people new insights into human-AI collaboration for knowledge, which can be essential in management learning (Barros et al., 2023). We have discussed some implications for management learning above, such as AI literacy and recognition of the advantages and disadvantages of AI. In this section, we reflect on the relationships between human, AI, and LR tasks to provide further thinking about LR with AI in management learning (Davis et al., 2025).

AI: paradoxes between flexible and automatic work

Generally, AI can enhance LR processes by providing vast knowledge and rapid automation for some specific purposes (Chen et al., 2025; Z. P. Wang et al., 2024). We explore the AI function for LR, echoing the call for efficient and proper application of AI in management (Barros et al., 2023). AI can map LR topic domains through its extensive knowledge, search articles quickly, integrate concepts swiftly, and generate content based on research results (Wagner et al., 2022). PF AI ensures quality LR results while lacking flexibility, in contrast to ML AI, which can be used flexibly but raises concerns of inaccuracy and hallucinations (Stuart and Norvig, 2022). Technologists keep developing AI to be more user-friendly and functional (Durante et al., 2024; Xi et al., 2023). When people understand AI deeply, these tools can be applied intentionally for effective and quality LR.

Management learning should emphasize the paradoxes of AI in LR, such as the trade-offs between general knowledge and domain insights, result accuracy and urgent need regarding time, and automatic work and human thinking with flexible information exchange with AI (Tables 2 and 3) (Lee, 2023; Stuart and Norvig, 2022). For example, PF AI for automation might induce laziness and irresponsibility but allow learners and educators to allocate more time to critical analysis and interpretation rather than exhaustive data collection (Inala et al., 2024; Lindebaum and Fleming, 2024). Additionally, in management studies, concepts can be abstract, complex, contentious, and profound. Even ML AI might not provide deep insights into management studies (Lindebaum and Fleming, 2024; Xu et al., 2024). This limitation underscores the essential need in management learning to develop learners’ critical thinking and analytical skills (Larson et al., 2024). Educators should emphasize the evaluation of AI outputs to identify potential inaccuracies or biases and make mindful judgments. Moreover, the results from ML AI risk overgeneralization and hallucination (Huang et al., 2023; Larson et al., 2024). However, learners might reflect on these outputs and develop a niche area for further study as an innovation. By critically engaging with such areas, learners can advance management knowledge and practice (Sharma and Bansal, 2020). The paradoxes of using AI in LR can be further explored to inspire thoughtful decision-making.

Moreover, AI might stimulate the evolving demands of management learning, where proficiency in leveraging technology is increasingly essential (Barros et al., 2023). Even though AI functions with risks of mistakes, humans can still learn from these possible alternatives. Management learning should emphasize human-AI collaboration instead of replacement (Barros et al., 2023). Humans and AI both make mistakes in exploring innovation and transformation, while humans and AI can provide each other with new opportunities to be mutually augmented (Johnson et al., 2021; Lee, 2023). For example, researchers have collaborated with AI to discover possible biochemical structures, which were further tested and validated for innovation. Future studies can further explore the relationships between evolving AI, LR practice, and the change in research conduct (Durante et al., 2024). Although people might be capable of using AI, the processes of using AI and the influences of AI on research processes might be different across people, which leads to different risks and benefits for researchers and research outcomes. Future studies can explore psychosocial, environmental, ergonomic, and technological issues on humans engaging in technology use (Chen et al., 2025; Larson et al., 2024; Lindebaum and Fleming, 2024).

LR types: reflexivity and fit

LR contributes to knowledge synthesis and fosters innovation based on existing evidence. AI can do simple information gathering, but it lacks critical thinking in challenging underlying assumptions and deeply analyzing metaphors (Májovský et al., 2023; Wagner et al., 2022). In contrast, researchers expect some kind of LR with critical evaluation and meaningful combination, which needs unique human ability and enriches management learning (Lindebaum and Fleming, 2024; Maclean et al., 2012; Wright and Michailova, 2022). Problematization and reflexivity manifest unique human capabilities and values in conducting LR. Problematization review seeks to provide alternatives for existing understanding based on rational thinking and problematized cases, facilitating the development of new ideas and theories surpassing traditional paradigms (Alvesson and Sandberg, 2020). Reflexivity can be achieved by three main approaches, including accumulative (e.g. accumulating resources and embracing opportunities), reconstructive (e.g. overcoming constraints and learning from adversity), and mixed methods (e.g. developing strategic focuses) (Maclean et al., 2012). Reflexivity encourages critical thinking and re-evaluation of existing understandings of phenomena, with a particular focus on challenging and reimagining our current ways of thinking about them, which encourages open discussion, diverse feedback, and interdisciplinary communication (Alvesson and Sandberg, 2020; Maclean et al., 2012; Wright and Michailova, 2022). For management learners, this process cultivates the ability to question established norms and to innovate, which is vital in adapting to the ever-changing management reality.

When LR aims at reflexivity, humans’ unique value can be manifested, and AI cannot replace humans but can interact with humans during LR processes (Chang et al., 2024; Chen et al., 2025; Lindebaum and Fleming, 2024). This interaction highlights the importance of educators emphasizing human-AI collaboration in management learning to bring an opportunity to improve knowledge synthesis. By teaching learners how to integrate AI tools with their analytical skills, management learning can enhance the depth and quality of their research (Barros et al., 2023; Chen et al., 2025; Larson et al., 2024). Future LR might comprise further critical thinking of the concepts and larger quantitative data for synthesis.

Moreover, purpose-method fit can be applied to evaluate AI for LR, and using AI might not be a cutoff of yes or no but a multidimensional spectrum (Edmondson and McManus, 2007; Knight et al., 2022). People might utilize AI to a degree concerning their LR methods and contribution, and both the advantages and disadvantages of AI can be fairly assessed (Hart, 2018; Lim et al., 2022). The implication for management learning is that learners should be trained to discern when to leverage AI’s strengths and when to rely on human intellect, which is discussed in this study. Incorporating this understanding into management curricula can better prepare learners to navigate the complexities of knowledge (Chen et al., 2025; Larson et al., 2024; Lindebaum and Fleming, 2024). Future studies can explore the relationships between LR types and AI, examining how LR keeps pace with AI and developing methods to maximize AI contribution while minimizing risks.

Human: capability changes and relationships with AI

Human-AI collaboration might improve LR quality to meet increasing quality expectations. We argue that AI can benefit LR and be our friend to help improve LR rather than our enemy inducing laziness and recklessness (Chen et al., 2025; Davis et al., 2025; Raisamo et al., 2019). Human-AI collaboration is a complex process, with the effort required to reach an improved LR by accessing a broader range of articles, allowing researchers to reflect deeply, and facilitating the management of ideas and ambiguous results in research (King et al., 2009; Kunisch et al., 2023; Larson et al., 2024). Our discussion of AI for LR processes and quality can serve as a guideline. In management learning, AI literacy can be essential for human-AI collaboration, such as providing appropriate prompts to AI (Barros et al., 2023; Lindebaum and Fleming, 2024). Moreover, fostering a positive perception of AI as a collaborative partner can encourage learners to embrace technological advancements without losing human agency (Larson et al., 2024). The psychological influences in AI applications, such as social norms, motivation, and emotion, can be further explored (Larson et al., 2024). These mindset shifts might enhance people’s ability to leverage AI effectively while maintaining critical engagement (Larson et al., 2024; Lindebaum and Fleming, 2024).

Moreover, management learning should emphasize purpose-method fits, explaining why using a specific type of AI is reasonable, enhances effectiveness, and reduces risks. Utilizing AI in LR based on human capabilities and AI functions can transform the processes of human behavior in LR. AI can be prioritized in human-AI collaboration, such as computational literature search, and AI can also be supportive and complementary, such as AI providing information for problem reconstruction (Inala et al., 2024; Wang et al., 2025). Also, AI can be a parallel process to human LR work, which can be applied as a new way of comparing results and getting inspiration from AI’s work. Researchers can redesign their workflow for specific purposes of AI utilization (Chen et al., 2025; De La Torre-López et al., 2023). Future studies can further explore AI literacy and AI application behaviors.

Furthermore, AI can facilitate our rethinking of the meaning of critical thinking and reflection. Humans and AI face a similar issue of hallucination since they both can generate plausible but incorrect answers (Huang et al., 2023; Larson et al., 2024). By critical thinking, humans might provide new and uncommon insights similar to plausible answers. However, these insights can still be a hallucination before the discovery of the ultimate truth. This highlights the importance of learning from the paradox between criticality and accuracy (Maclean et al., 2012). For management learning, analyzing why and how AI generates hallucinations can reflect human processes to prevent human mistakes, such as the judgment of hallucination by what criteria, how researchers can deal with environmental changes, and how the ambiguity of concepts leads to unstable assessments (Huang et al., 2023; Xu et al., 2024). Through the examination of hallucinations from both AI and humans, people can be more cautious about the risks behind thought-provoking critical thinking. Critical thinking can be a continuous effort to prevent bias and reflect on assumptions and social phenomena (Larson et al., 2024; Maclean et al., 2012). Hallucination can also be a source for people to reexamine knowledge and inspire profound thinking on some issues without superficial conclusions (Xu et al., 2024). Management learning should pursue continuous reflection on both human and AI outcomes.

Overall, human-AI collaboration dynamically shapes research and management learning. AI enhances efficiency by automating LR tasks, enabling learners to focus on critical analysis and interpretation. Conversely, human judgment refines AI outputs and addresses complexities and ambiguities that AI cannot fully grasp. We provide the principles of using AI in LR processes for higher LR quality. By navigating AI’s strengths and limitations, management learners gain the tools to innovate and reflect deeply to strengthen their knowledge.

Limitations

Although we discuss some prominent considerations of ethics, in-depth discussion and frameworks for analyzing ethics are needed (Barros et al., 2023; Chen et al., 2025; Lindebaum and Fleming, 2024). How people employ AI responsibly and how the application of AI influences the whole ecosystem can be further explored. For example, we lack knowledge of AI’s impact on learners’ well-being, the fairness of AI accessibility in developing countries, and the power of data control in management learning.

Moreover, although we explore AI typology for functions and limitations, we do not provide technical guidance on a specific AI tool for a specific LR purpose. Various tools have different characteristics for unique functions, which can influence LR practical behavior (Chen et al., 2025; De La Torre-López et al., 2023). Further exploring specific situations for specific guidance can benefit management learning in practice.

We acknowledge the continuous development of AI (Chen et al., 2025; Walker et al., 2022; Z. P. Wang et al., 2024). We anticipate that future AI tools will integrate multiple technological elements, such as predefined functions, human feedback modules, and continuous learning. Technologists keep developing AI agents that combine techniques, including logic flow, reasoning, and action tools, to perform specific tasks (Durante et al., 2024; Xi et al., 2023). Furthermore, AI might bridge the gap between researchers and other tools, such as statistics and documentation tools (Chen et al., 2025; OpenAI et al., 2024). Additionally, by combining AI elements, technologists have started to train more specific models for specific tasks, similar to experts solving specific problems in relevant areas (Lu et al., 2024; OpenAI et al., 2024; Putta et al., 2024). However, we acknowledge that we do not address human agency issues (Larson et al., 2024; Lindebaum and Fleming, 2024). Although AI might generate outcomes similar to humans, it does not involve human mental processing.

Conclusion

Constructing, interpreting, and applying knowledge is essential in management learning, manifesting the value of knowledge. AI is transforming LR and knowledge synthesis, enhancing efficiency while raising critical questions about the role of human cognition. Although AI can automate search, synthesis, and reporting, it lacks the ability to engage in deep critical thinking, challenge assumptions, and generate original theoretical insights. Our analysis of AI in LR processes and quality criteria reveals the unique value of AI based on AI typology. Also, we indicate that AI cannot fully replicate people’s ability to reflect, problematize, and synthesize knowledge. We argue for human-AI collaboration that balances technological efficiency with intellectual depth for meaningful research. For management learning, this underscores the need to refine research training on AI literacy, strategic human-AI collaboration for specific knowledge synthesis, and human reflexivity in AI-assisted work.

This article inspires people to think: In an era where AI can rapidly process massive amounts of information, what remains as unique human capabilities in knowledge synthesis? What can be the future emphasis of management learning? Future studies can explore AI’s impact on learning curriculum, behaviors, and impacts. Ultimately, AI’s value is not replacing human insight but facilitating people to rethink how we generate, evaluate, and apply knowledge to empower management learning to evolve in the face of technological transformation.

Supplemental Material

sj-docx-1-mlq-10.1177_13505076251377550 – Supplemental material for Advancing knowledge synthesis in management learning: Literature review assisted by artificial intelligence

Supplemental material, sj-docx-1-mlq-10.1177_13505076251377550 for Advancing knowledge synthesis in management learning: Literature review assisted by artificial intelligence by Young Chan in Management Learning

Footnotes

Acknowledgements

I am grateful to Hung-yi Lee and Yun-Nung Chen from the National Taiwan University for their knowledge of artificial intelligence. I appreciate the feedback from Yinshang Wang and Salilporn Kassie Soubsawwong at University of Leeds and Lu Han from Central China Normal University. I also express my appreciation to the editor and anonymous reviewers.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: University of Leeds Jisc for open access.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

No new data generated.

References
