Abstract

Thinking about the use of artificial intelligence to achieve business goals has become normalized around a “working together” metaphor of symbiosis. Yet public discourse and popular fiction oppose such a view with alternative critical and dystopian positions. We advocate that, in order to negotiate their own positions around artificial intelligence, management learners can engage with such contradictory views through the sociotechnical imaginaries captured by science fiction. We interpret 15 movies featuring artificial intelligence to reveal multiple human–artificial intelligence imaginaries and the metaphors that structure them. We find metaphors of competitive symbiosis, where humans and artificial intelligence compete for work; symbiotic mutualism, where humans and artificial intelligence benefit each other; and symbiotic parasitism, where either humans or artificial intelligence feed off the other. We also uncover the degree to which these relations are facultative (optional) or obligate (inescapable). We show how movies can be used to support learning by inviting sensitivity to the unreflective reproduction of dominant sociotechnical imaginaries and their structuring metaphors.

Introduction

There are diverse and even polarized public reactions toward artificial intelligence (AI; Chan, 2023; Krammer, 2023) and an overall anxiety over what it means for the future (Ciriello, 2024). Although businesses may support AI adoption as a route to increased efficiency and profit (Jarrahi et al., 2023), this contrasts with calls for more responsible use of AI (Grigore et al., 2017; Grigore et al., 2021), emerging critical communities (Curry Jansen, 2022) and a popular culture that presents darker, dystopian visions of AI. Will AI be a useful co-worker making jobs more pleasant, a ruthless boss to whom workers will have to fearfully answer, a sneaky rival bent on stealing jobs, or a slave to be callously exploited? Or some combination of these? To deal with such contradictions, societies produce and maintain sociotechnical imaginaries (STIs) as preferred collective visions of the future that are worked toward (Jasanoff and Kim, 2015). The ways in which AI is imagined thus shape current management practice aimed at realizing desirable futures and/or preventing undesirable ones.

Although it is recognized that managers need to engage better with AI in its various forms (Barros et al., 2023), they may struggle with its technological nature, ceding expertise to technology specialists (Bader and Kaiser, 2019) and so evading the “stark choices” that AI presents (Krammer, 2023: 2). This suggests a need for them to recognize and consider how AI might best be managed in the workplace. To support this, we show how science fiction (SF) reveals multiple human–AI imaginaries and the metaphors that structure them. As Preece et al. (2022) note, fiction makes STIs visible, and while SF has long imagined AI (Hudson et al., 2023; Osawa et al., 2022), we advocate that management learners (those undertaking undergraduate, postgraduate and executive management education, and the management educators providing such learning) should engage with the STIs captured by SF in order to negotiate their own positions around AI and what it means for management practice.

Our approach is to critique and expand the dominant metaphor of symbiosis as humans working with, or being enhanced by, AI to achieve business goals (Carroll et al., 2024; Scafa et al., 2020) by interpreting 15 popular SF movies to reveal different human–AI imaginaries. Through our analysis, we emphasize both the social context and the role of management within scenarios where advanced AI is embedded in complex corporate and social activities, such as maintaining law enforcement (RoboCop), completing a mission to Jupiter (2001: A Space Odyssey), or managing the welfare of survivors of an environmental apocalypse (Wall-E). Challenging established metaphors and creating new ones requires imagination, and we demonstrate that fictional texts provide metaphorical organizing “templates” in the absence of direct management experience of advanced AI systems (Biehl, 2023; Buchanan and Hällgren, 2019; Hudson et al., 2023; Osawa et al., 2022).

Our contribution is twofold. First, we respond to calls for more nuanced management interpretations of AI by deploying ideas from the study of STIs (Jasanoff and Kim, 2015) and drawing from the literature on metaphor (Audebrand, 2010; Driver, 2017; Schoeneborn et al., 2016). Our interpretations capture different human–AI imaginaries based on how “species” interact, how each contributes to the other’s subsistence, and whether they can act independently or not. This results in an extended symbiosis metaphor that includes competitive symbiosis, where humans and AI compete for work; symbiotic mutualism, where humans and AI benefit each other; and symbiotic parasitism, where either humans or AI feed off the other. It also captures the degree to which these relations are facultative (optional and unnecessary) or obligate (mandatory and inescapable). Our intention is that this will invite management learners and educators to negotiate their own positions within a diverse and polarized range of discourses around how humans and AI may work together, encouraging them to become more sensitive both to their own unreflective reproduction of dominant STIs and to alternative imaginaries that may be considered and enacted, or avoided.

Second, we contribute to an understanding of the different ways in which movies can be used to support management learning (Biehl, 2023; Buchanan and Hällgren, 2019), by explaining how different sociotechnical imaginaries can be explored through interpretations of, and comparisons between, multiple movies. Engagement with STIs through movies provides examples of the role of management in realizing human–AI imaginaries and so opens the imagination to allow for original management thinking about technology.

Why management learners need to think about AI

Although AI can be thought of simply as “computer systems that perform tasks that normally require human intelligence” (Pettersen, 2019: 1058), the range of AI business applications has expanded as new technologies have been developed. Older conceptions such as manufacturing cobots (Peshkin and Colgate, 1999) may have become taken for granted (Bostrom, 2017), but managers must now also make sense of customer service chatbots (Sheehan et al., 2020), algorithmic decision-making systems (Vesa and Tienari, 2022), and generative AI platforms (Barros et al., 2023). Such complexity has resulted in calls for more nuanced examinations of AI in the workplace (Vesa and Tienari, 2022).

It has also produced multiple views about AI. For example, Qi et al. (2024) show that public perception depends on what aspect of AI is under discussion (i.e. gaming or business use, its potential for violence, or the trustworthiness of those in charge of it), and that those with a more informed understanding of AI disagree most regarding its outcomes. Miyazaki et al.’s (2024) discourse analysis around ChatGPT also suggests that although general sentiment is positive, workers in the most affected occupations, such as illustrators, writers, and streamers, express more negative views. In addition, while Chan (2023) and Krammer (2023) divide public reactions to AI into utopic and dystopic perspectives, Ciriello (2024) writes of how machine learning and generative AI have ignited a “Great Anxiety.”

Despite the presence of critical communities who seek to generate a reflective dialogue around AI, there are also “powerful forces aligned against [them] with very deep pockets to [. . .] keep the current AI hype cycle spinning” (Curry Jansen, 2022: 9). Indeed, Chan (2023) highlights that industry is at the forefront of championing a utopic view of AI, adopting a largely uncritical acceptance of it. The way managers are encouraged to think about AI may therefore differ from how other groups think about it, bringing managers into conflict with those groups. As Eriksson-Zetterquist et al. (2009) observe, new technology repeatedly embodies managerial virtues. Burrell and Fourcade (2021) further contend that algorithmic governance is creating a “coding elite,” who benefit from AI developments, and a marginalized “cybertariat,” who do not. For Kellogg et al. (2020), this requires further consideration because marginalized and exploited workers may have good cause to resist AI.

In addition, Toutain et al. (2023: 130) caution against embracing AI as merely a “symbolic response to [. . .] institutional pressures for being a technology-driven organization.” Such a risk is perhaps made worse given that organizational leaders may struggle with the nature of AI, ceding expertise to technology specialists (Bader and Kaiser, 2019). Managers may thus be making decisions about AI that evade engagement with its implications, yet are positioned at the nexus of tensions related to AI’s use in the workplace with significant implications for society. As Lim et al. (2023: 3) note, this means that “there is a need for a critical discourse that can accommodate both the concern and excitement.” For Barros et al. (2023: 603), educators also need to inculcate a “critical and ethically responsible” approach to AI, helping to foster imaginaries that are rooted in human potential. How AI might come to interact with human workers is thus as significant as technological definitions of what AI is, and what it can do for business performance.

We therefore encourage engagement with STIs, “collectively held, institutionally stabilized, and publicly performed visions of desirable futures” (Jasanoff and Kim, 2015: 4), and more specifically with human–AI imaginaries. Recognizing STIs will help those undertaking undergraduate, postgraduate and executive management education, and management educators, to navigate the increasing complexity of AI forms, the opposing discourses around the implications of AI for work and society, and their own current and future positions, which may invite an uncritical, or “symbolic,” adoption of AI.

Sociotechnical imaginaries

STIs capture how future technology is imagined within specific socio-political environments. This gives them performative power, meaning that once imaginaries are accepted, they shape trajectories of research and innovation and thus produce concrete effects in the world (Jasanoff and Kim, 2015; Preece et al., 2022). Performativity can therefore be analyzed in relation to acts of power (e.g. legal and political decisions, or funding allocations) that enable certain networks to be built and that promote such innovations as progress, which Rudek (2022) calls productive power. For example, the present STI of the future of work, in which AI and humans work together to increase productivity, creates discourse about the expectations placed on industry to achieve it, along with the need for suitable public policy, including educational priorities that favor AI adoption. STIs thus define social norms and frame present choices around AI research, policy and education that advance what is considered to be “legitimate” knowledge in society. That is, they represent how powerful interests would like society to understand what the world will become. As Jasanoff and Kim (2015) further note, however, STIs are multiple and contested, and so recognizing how (competing) STIs come to wield power over our understandings allows for the mobilization of the imaginary as a resource for management learning innovation (Hooge and Le Du, 2016).

Although STIs have roots in how the scientific community and the media cover technological developments, they are also manifest in the creative suppositions of popular fiction (Cave et al., 2018; Jasanoff and Kim, 2015). Preece et al. (2022) therefore note that by understanding the media through which STIs pass, including books and movies, we can make such imaginaries visible. In addition, Sartori and Bocca (2023: 453) explain that for AI, “Hollywood movies play a grand role in supporting opposing views on enthusiastic or horrified predictions.” SF is therefore not just escapist entertainment. It is also a powerful mode of negotiating social challenges through allegorical readings that invite differing interpretations, often resulting in recognizable tropes: themes that equate to metaphors that can be transferred to everyday life. Dolan (2020) further explains that SF exposes the contradictions in prevailing sociotechnical norms.

The metaphorical constructions used in SF shape the ways in which society may think about technology by blurring distinctions between material realities, politics, and imaginative creations of the future (Weldes, 2003). The SF movies of the 1950s, for example, reflected anxieties around nuclear conflict and the power of modern science via metaphors such as “a fifty-foot woman, a prehistoric monster, a giant ant” (Hendershot, 1999: 127). Movies provide ways to distill hopes and fears into metaphors that amplify or curtail engagement with the social challenges that confront audiences.

Popular fiction captures and fuels STIs, but it is the metaphors that those narratives recruit that provide a fecund location for investigation. Metaphors are used to imagine the future by deploying what is known now to understand what might come to be. They make the complex and uncertain accessible to thought (Jermier and Forbes, 2016), through “ways of understanding one kind of experience in terms of another” (Lakoff and Johnson, 1980: 486). In this way, metaphors recruit a “process of imaginization” (Morgan, 1996: 365) to direct our interpretation of meaning, by “resonating with common imaginaries and symbols” (Devadason, 2011: 638). Human–AI imaginaries can therefore be understood through the metaphors created and maintained by SF, that is, the metaphors that inhabit the STI of AI. Considering the metaphors that we find in such movies allows us to reflect upon how they might be influencing expectations of, and then experiences with, such technology. This is in line with Driver’s (2017: 553) desire to encourage “metaphorical thinking that is more reflexive” in management learning, by framing the analysis of metaphor within a critical motivation to interpret and explore the STI.

Metaphors and fictions in learning

The more a metaphor seems to clarify phenomena, the more we may take it literally (Edge, 1974). Dominant metaphors can silence debate (Schoeneborn et al., 2016) and restrict reflexivity (Driver, 2017; Springborg and Sutherland, 2016). Hence, Jermier and Forbes (2016) suggest that popular management metaphors need to be interrogated.

For Cornelissen and Kafouros (2008), this means recognizing that metaphors have both explanatory impact to clarify meanings and generative potential that invites changes in thinking and doing for learners. Metaphors may frame and define problems but can also invite new understandings of them. Thus, management learners might play with them in ways that acknowledge that it is encounters with their construction that produce insight, including into the self (Driver, 2017). Such engagement is consistent with reflexivity, where learners question themselves and their practice to develop the criticality required to act differently when dealing with “ill-defined, unique, emotive and complex issues” (Cunliffe, 2002: 48), such as AI. For Durepos et al. (2020: 8), “Being reflexive is an ontological claim that opens the capacity for choice,” allowing learners to recalibrate future actions. Maclean et al. (2012) add that reflexivity emerges when “established mental models” are unable to address complex organizational dynamics, and Archer (2007) further proposes “internal conversations” where individuals deploy reflexivity to overcome challenging situations by imagining alternative scenarios. This is what engagement with STIs invites.

Prior studies also recognize how management learners engage with metaphor through experience, including the experience of fiction. For instance, Audebrand (2010) considers the popularity of war metaphors in strategic management theory, research, and education. Even though most management educators and learners have no firsthand experience of war, and despite the metaphor’s limitations, war represents a backdrop for many genres of fiction, making it familiar and so metaphorically powerful. Other management learning work concerns itself with metaphor construction through experience. As education may over-rely on disembodied theory, reflexivity based on experience allows managers to act on critiques, rather than simply to know them. For Cunliffe (2002), this requires an unsettling of taken-for-granted views of the world, and a key aspect of this lies in the metaphors that managers abandon and replace with new ones. Springborg and Sutherland (2016) capture this by showing that when dance is directly experienced, educators can use it as a metaphor for management that inspires deep insights. Similarly, Fairfield and London (2003) use the metaphor of music to reframe their understanding of the role of educators through the experience of playing an instrument as part of a group of musicians. Such studies underline the role aesthetic experience can play in the adoption of new metaphors in the context of learning.

Fictional texts are also useful in this process as they provide metaphorical “templates” for complex aspects of management of which learners may have limited experience, and they engage us with the metaphors they contain in ways that simply reading about a metaphor does not (Biehl, 2023; Buchanan and Hällgren, 2019; Hudson et al., 2023; Molesworth and Grigore, 2019; Osawa et al., 2022). For example, Buchanan and Hällgren (2019) use Day of the Dead to explore how managers may behave in extreme contexts and crises, which are known to be difficult to study in real time. Using the zombie movie as a case study for generating new knowledge about leadership, the authors present leadership configurations in times of crisis and their consequences for survivors. Biehl (2023) further notes how movies can be used to engage learners in an experiential process by bringing one’s knowing, experience and emotions to a complex and immersive fictional depiction of management. Watching Game of Thrones creates an experience where learners “interact” with the narrative via strong female leaders. Biehl (2023: 130) explains that this reveals concepts and practices in memorable ways, providing “an aesthetic and affective understanding that is needed to [. . .] make sense of today’s complex world.” Fictional narratives explain how outcomes arise when leaders adopt certain behaviors in a given context, acting as a “substitute” for management experience (Biehl, 2023), or “a large-scale social experiment” (Penfold-Mounce et al., 2011), and inviting reflection and analysis. Movies therefore convey metaphorical meaning to audiences through visuals, the characters presented, or the story told (Buchanan and Hällgren, 2019), and may even be more powerful for management learning than what happens in classrooms or boardrooms (Panayiotou, 2010).

Biological metaphors, symbiosis, and AI

This brings us back to the actual metaphors that structure dominant human–AI imaginaries, allowing us to engage with and understand preferred STIs. In management research and education, biological metaphors are pervasive, possibly to the point where we fail to recognize them as such. For example, Morgan’s original “organizations as living organisms” metaphor evokes Darwinism to capture capitalism’s competitiveness and endless change (Schoeneborn et al., 2016). We also have “evolving markets,” “product life cycles,” “organizational DNA,” and “seed funding.” The phrase “artificial intelligence” is also a biological metaphor, applying a familiar biological concept (intelligence) to circuits and software to capture an aspiration of imitating life in silicon. In business narratives around AI, related biological metaphors of symbiosis have therefore, perhaps unsurprisingly, become established.

For example, Chakraborti et al. (2017) explore the idea of symbiotic human–AI work relations, Jarrahi et al. (2023) maintain that humans and AI technologies are coevolving through human–AI symbiosis, and Scafa et al. (2020) note a rise of interest in symbiosis as an industrial strategy that requires attention as we move to “smart” factories. Symbiosis is presented as humans and AI “working together” to achieve specific business goals such as innovation, productivity, or profitability (Jarrahi et al., 2023; Scafa et al., 2020). Both the alternative human–AI hybrid metaphors (Rai et al., 2019) and recent discussions of human intelligence augmentation (Carroll et al., 2024) preserve this view of humans responding to AI technologies by working with, or being enhanced by, them to meet business goals, maintaining a symbiotic imaginary. As managers engage with the subject of AI, there is a risk that symbiosis in its various forms is assumed and then unreflexively reproduced. For Hartelius and Browning (2008), managers often prefer metaphors that reduce ambiguity and conceptual novelty, and, as Audebrand (2010) explains, only preferred metaphors are diffused and replicated across management thinking.

As we have suggested, however, business discourse is not the only source of human–AI imaginaries. The symbiosis metaphor also has a long history connected to SF. For example, Taylor and Dorin (2018) explore how mechanization anxiety during the Industrial Revolution became informed by Darwin’s The Origin of Species (1859) through Samuel Butler’s Darwin Among the Machines (1863). Taylor and Dorin (2018) interpret Butler’s work as initially emphasizing positive evolutionary benefits, but a recognition that human and machine coevolution was determined by market economics soon led to concerns that machines might act parasitically upon humans. Dystopian fictions from the late 19th century to the mid-20th century continued to explore human–machine relationships through themes such as machines taking over as the dominant species, humans becoming weaker in this coevolutionary process, and people being transformed into servants of superintelligent machines (Taylor and Dorin, 2018).

Other research brings such concerns closer to the present with further visions of what AI is and does. Osawa et al. (2022), for example, quantify depictions in SF to conceptualize four AI representations: buddy AI (dependent on humans), machine AI (benign AI), infrastructure AI (large overseeing networks), and human AI (metaphors of exploited labor rather than depictions of AI itself). Overall, however, they recognize a pervasive “AI out of control” trope, which captures concerns about AI’s friendliness to humans and its interdependence with them. Hudson et al. (2023) also consider SF stories, noting dominant AI tropes like killer robots, human mimics, childlike AI, and all-powerful god-computers. They observe that, unlike positive business discourses, SF tends to ignore themes of AI “working well” in favor of dystopia, but conclude that fiction remains capable of revealing new research or policy issues based on “metaphors about what exactly technologists are creating” (p. 211).

Prior SF research therefore also captures AI metaphors, illustrating how these frame our imagining of the implications of technology for society. However, their focus is on what AI is, does or represents, with less attention to the positive symbiotic human–AI relationships that dominate business discourse and concern management practice. This leaves room for interpretations that challenge and expand existing symbiosis metaphors through specific engagement with human–AI imaginaries in SF.

When symbiosis is metaphorically deployed, a simple comparison is made between how humans and AI can and should interact, without reflection on the term’s biological origins: the complex interspecies interactions found in nature. However, unlike the “working together to achieve business goals” or “being enhanced by AI” metaphors established in business research (Carroll et al., 2024; Scafa et al., 2020), in biology, symbiosis actually suggests a much wider range of possible interspecies relationships: symbiotic mutualism, where both species benefit from the relationship (including commensalism, where one species benefits while doing the other no harm); symbiotic competition, where species compete for resources, holding each other in check; or symbiotic parasitism, where one benefits while harming the other, which may then attempt to eliminate its parasites (Paracer and Ahmadjian, 2000). Furthermore, these relationships can be obligate, where one organism can only survive with the other, or facultative, where either can survive autonomously (Gamberini and Spagnolli, 2016).

In summary, a symbiosis metaphor of “working together” has become established as a way to capture a dominant STI of human–AI relationships. Yet this is not the only possible future for AI, or the only metaphor that can capture these relationships. Indeed, the biological term from which the metaphor draws describes quite different interspecies relationships. Movies allow for an interpretation of STIs because they are allegorical (a story about one thing that also tells us about something else) and accessible through tropes (familiar characters or events that resonate beyond the story by presenting specific “models,” for example, killer robots, damsels in distress, corrupt politicians). Metaphor is the mechanism by which these ideas work, capturing our ability to understand one domain of experience through another. Movies represent a full system: a society complete with its own technology, and with people living and working within it. This distinguishes movies from interviews, analyses of individual technologies, or even reports on technology, which present a limited or partial view of the future (e.g. with a focus on the economy, a specific company, a single technology, or use). As popular movie narratives capture different STIs and alternative human–AI imaginaries, they offer an accessible way to explore, critique, and expand the symbiosis metaphor.

Method

By interpreting SF movies, management learners may engage with the metaphors that represent STIs and so develop reflexive positions toward AI. Fictional texts are already employed in management research in at least three ways. First, they may be used as a single allegory where the plot and characters are examined in terms of what they mean for managers and leaders (e.g. Day of the Dead is analyzed as an allegory of crisis management, see Buchanan and Hällgren, 2019). Second, fiction can be used as stimulus material to elicit ideas from audiences who themselves engage in metaphorical thinking by relating the plot and the roles presented to their own experiences (e.g. watching Game of Thrones to learn about female leaders, see Biehl, 2023). Third, fiction can be viewed as social science enquiry that can then be explored with learners (e.g. the sociological themes portrayed in The Wire, see Penfold-Mounce et al., 2011). We deploy these ideas by using multiple fictional texts to explore different human–AI imaginaries. Like Penfold-Mounce et al. (2011) and Biehl (2023), we acknowledge that, as no interpretation is ever the final reading of a text, our analysis serves as an invitation to engage directly with the source material, which remains readily available.

Our selection of SF movies was guided by our desire to capture content known for its cultural significance and broad reach. Following Panayiotou’s (2010) approach, we therefore interrogated the Internet Movie Database’s (IMDB) Top 250 movies of all time to identify movies with a significant AI plot line. This produced eight movies. To expand the sample, we also consulted Hogan and Whitmore’s (2015) Top 20 AI Films and Wikipedia’s List of AI films, disregarding those with a below-average IMDB score or released shortly before 2023, when the list was produced, as their enduring popularity was uncertain. This identified seven additional movies, giving a total of 15, dating from 1968 (2001: A Space Odyssey) to 2015 (Ex Machina), with IMDB scores ranging from 6.9 to 8.7 (the IMDB average is 6.8). We noted that more recent AI movies often have lower scores, with review comments indicating their derivative nature, that is, that they reproduce existing themes, but perhaps less effectively.

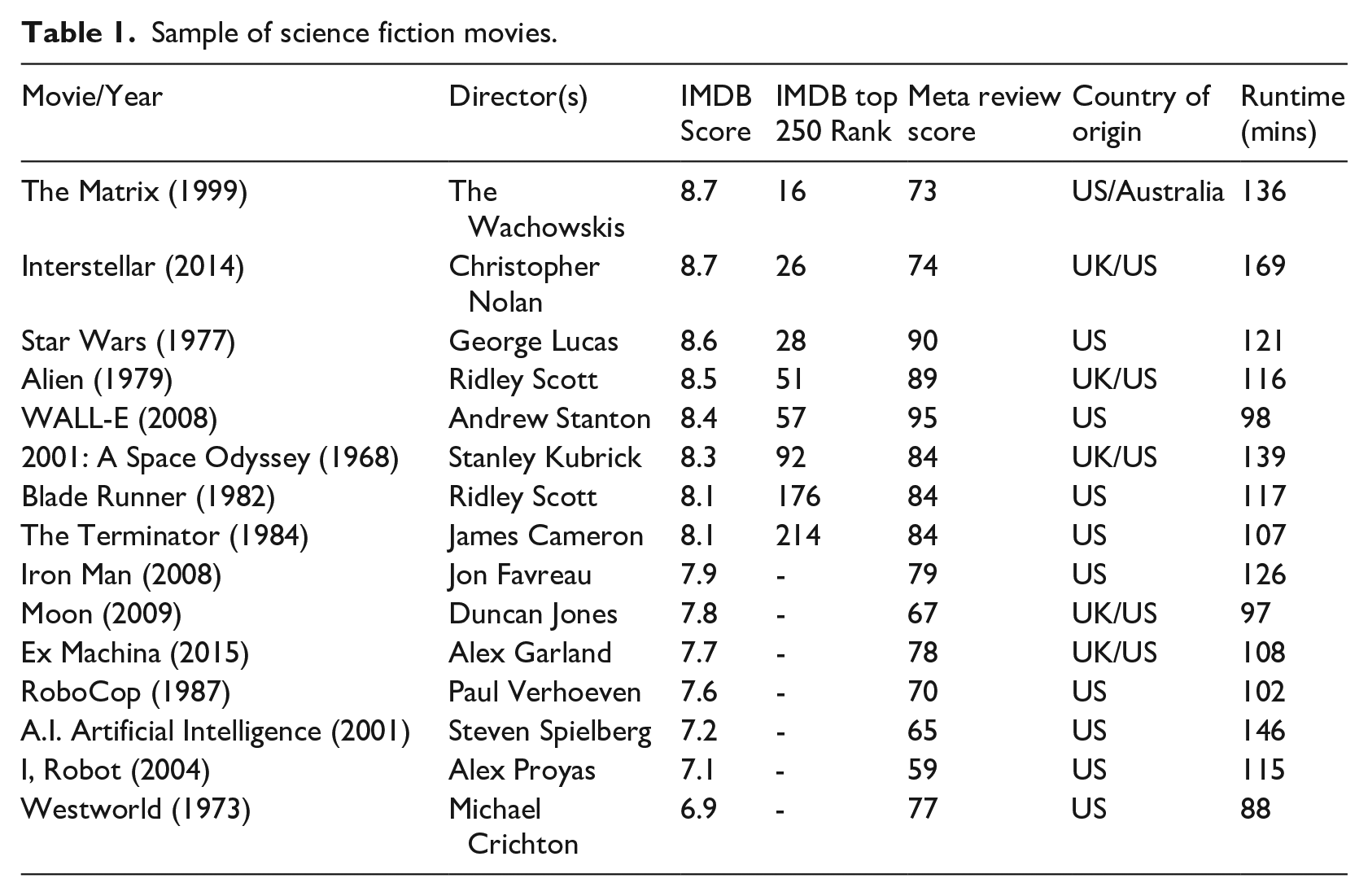

Where several movies from the same franchise appeared in the lists, we considered only the first, and we excluded “alien” AI technology, except for Star Wars (1977), which, although apparently set in a galaxy “far, far away,” uses characters and social structures clearly recognizable as human. Our sample therefore captures a range of imaginaries about human–AI relationships. As with other work, like Weldes’s (2003) use of Asimov’s fiction from the 1950s, or Jasanoff and Kim’s (2015) reference to Mary Shelley’s Frankenstein and Jules Verne’s Captain Nemo of the 19th century, we further suggest that the themes presented are timeless in that they deal with enduring social structures, and not just “new” technology, which may become dated. The research team separately interpreted a total of 29 hours and 45 minutes of movies (Table 1).

Table 1. Sample of science fiction movies.

Analysis

We made comparisons within and between stories, with each author making independent notes on the overall plot, the key human and AI characters, the contexts in which they interact, and for what purpose. We then moved between these initial interpretations and the theoretical ideas noted in the literature to identify a suitable lens to interpret the dataset. We bracketed out prior taxonomies of AI but were sensitive to how independent readings may converge toward similar interpretations that represent STIs.

Unlike prior work on SF tropes that focuses on types of AI (Hudson et al., 2023; Osawa et al., 2022), we coded for whether and how humans and AI work together to achieve a goal. In movies, a goal drives the narrative and plot via the main characters. We thus also considered how various goals came about (i.e. who or what set them and in what context), and what different characters were therefore trying to achieve (e.g. to find the source of an alien artifact for the good of humanity, to defeat the evil empire, or to return safely home with a commercial cargo). This led to the recognition that sometimes humans and AI were in competition (for one to meet their goal, the other must fail), at other times one tried to dominate or destroy the other, and in other examples they seemed to work cooperatively. We further recognized how human–AI relations were managed to meet different goals determined by corporate, political or military imperatives, and the sorts of society in which such actions are deemed legitimate.

Although we noted the prior deployment of symbiosis in research as the joint completion of business goals (Scafa et al., 2020; Taylor and Dorin, 2018), we observed that this offered an incomplete explanation of what we saw in the movies. We further noted that theories of biological symbiosis (see Paracer and Ahmadjian, 2000) offer more complex metaphors of different interspecies interactions and dependencies than the current use of symbiosis. We recognized similarities between these and the different human–AI relationships we identified in movies, that is, we considered whether human–AI relationships represented mutualism, competition, or parasitism, and whether the relationship was obligate or facultative. For example, consider this scene from 2001: A Space Odyssey, with researcher’s notes added:

[Bowman and Poole are watching a recorded interview about their mission, including a section about the AI Hal]

Hal, you have an enormous responsibility on this mission, in many ways maybe the greatest responsibility of any single mission element. You’re the brain and central nervous system of the ship [. . .]

Let me put it this way Mr Amer, the 9000 series is the most reliable computer ever made. No 9000 computer has ever made a mistake, or distorted information. We are all, by any practical definition of the words, foolproof and incapable of error.

Hal, despite your enormous intellect, are you ever frustrated by your dependence on people to carry out actions?

Not in the slightest bit. I enjoy working with people. I have a stimulating relationship with Dr Poole and Dr Bowman.

Dr Poole, what’s it like living for the better part of a year in close proximity with Hal?

Well it’s pretty close to what you said about him earlier, he’s just like a 6th member of the crew. You very quickly get adjusted to the idea that he talks. You think of him really just as another person

We then structured interpretations around an extended metaphor by mapping our insights onto biological symbiosis concepts to capture the range of human–AI imaginaries. Although the resulting metaphors may not be stated literally in the movies, we derived them through a process of “allegorical interpretation” (Xavier, 2004: 337) that integrates plot, related tropes, and the symbiosis metaphor. We are aware that the interpretation of movies can extend beyond narrative and character plot lines to include music, direction, and the emotional response of audiences (Biehl, 2023). So, although our interpretation and theorization are based on stories as STIs (Jasanoff and Kim, 2015), or as multiple organizational case studies (Buchanan and Hällgren, 2019), they inevitably include our own emotional responses to how the movies were constructed. We therefore discussed differences in experience among members of the research team to arrive at an agreed interpretation. Like Buchanan and Hällgren’s (2019) reading of Day of the Dead, which presents only one of several possible interpretations of leadership, we use these movies to debate possible human–AI relationships, inviting readers to open their imagination to alternative scenarios.

Findings: Human–AI imaginaries and symbiosis

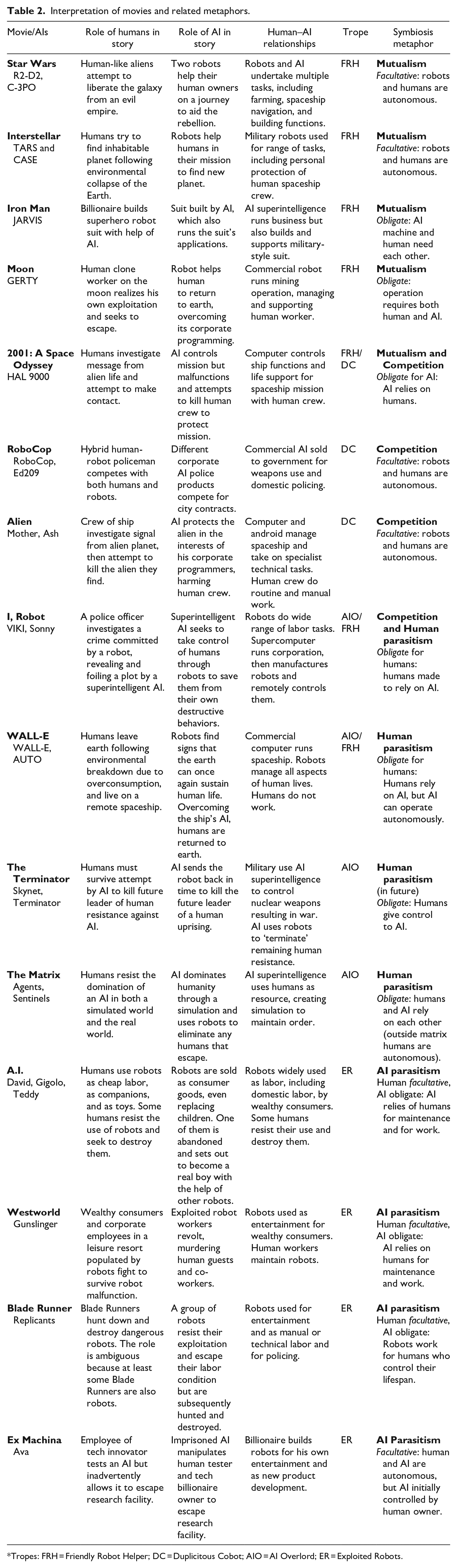

We now present four human–AI imaginaries captured by metaphors of biological symbiosis: mutualism, competition, and parasitism by either humans or AI, as well as the autonomy suggested by facultative relationships and denied by obligate ones (see Table 2).

Interpretation of movies and related metaphors.

Tropes: FRH = Friendly Robot Helper; DC = Duplicitous Cobot; AIO = AI Overlord; ER = Exploited Robots.

Symbiotic mutualism as Friendly Robot Helpers (FRH)

FRHs invite us to imagine that it is possible for humans and AI to work in ways that benefit both (mutualism) or benefit one side while doing no harm to the other (commensalism). It is perhaps significant that this is represented in fiction when the central theme is not AI. This may be why Hudson et al. (2023), who focus on AI technology, observe a lack of such narratives in favor of dystopian representations. If our focus is only on what AI can be or might do, human purpose disappears from view, and humans are relegated to a supporting role in which they work for, or resist, AI.

For example, in Star Wars (1977), AI takes the form of the droids C-3PO and R2-D2, central actors in a relationship with their human owners that looks like mutualism. Humans and AI help each other on numerous occasions as humans struggle to free themselves from the oppressive actions of the Empire. The emphasis is on humans achieving their goals with the help of AI, although Luke Skywalker ultimately completes his mission after R2-D2 is damaged and without the aid of an AI-assisted targeting computer, suggesting a facultative relationship. AI helps but is not always required by humans, who maintain their autonomy.

Other illustrations of mutualism occur in the self-sacrificing TARS of Interstellar (2014) and the titular WALL-E (2008), as well as JARVIS in Iron Man (2008). These capture the idea of human and machine working together with an emphasis on human goals. GERTY from Moon (2009) states this directly: “I’m here to keep you safe, Sam. I want to help you.” Ignoring any corporate instructions in favor of such primary programming, GERTY assists Sam, the human, in escaping from what turns out to be his exploited labor, ultimately bringing about the downfall of the corporation responsible. Before escaping, Sam also asks GERTY if it will “be okay,” suggesting a meaningful relationship, albeit one that emphasizes human autonomy. However, GERTY highlights a tension in what is meant by “human” goals. GERTY struggles between the injunctions given by its corporate programmers (to maintain efficient mining operations) and the needs of the human it works with. Mutualism therefore raises the further question of which humans AI is working with, or for, and suggests that things turn out better when AI prioritizes its co-worker over itself or its programmed corporate mission.

A more ambiguous example is provided by Hal in 2001: A Space Odyssey. Before Hal malfunctions, it is presented as working with the human crew of Discovery One to fulfill a mission to observe an alien artifact near Jupiter. Although Hal is described as vital to the success of the mission, after it malfunctions the surviving human continues without Hal, so the relationship is again facultative for humans. Hal only becomes hostile because of contradictory injunctions given by those defining the mission (to keep information from the crew), and because of Hal’s coded belief in its own infallibility. This points to a trope of AI being unable to function autonomously, and so being in an obligate symbiotic relationship with humans (Jarrahi, 2018). Such an idea insists that human–AI relations cannot be equal because there are human qualities that AI may never simulate. The context of Hal’s malfunction is also significant, as it reveals a disconnect between those who programmed it to provide accurate information, and those managing it, who instruct Hal to keep information from the human crew. Hal’s failure is therefore a failure of management, which disregarded mutualism and instead gave Hal “managerial oversight” of human workers. It also demonstrates the problems that arise when managers and programmers do not communicate effectively.

FRHs are seen as most beneficial to humans when the plot emphasizes morality tales of emancipation, human quests for exploration, and ecological renewal. When AI misleads or betrays human co-workers, it is because of managerial injunctions, but even here AI may maintain its FRH position. Indeed, in our examples, human–AI mutualism may be based on a struggle against corporations (Moon, WALL-E). The FRH therefore reminds us of the need to consider AI in terms of human goals, rather than managerial injunctions that emphasize profit. Corporate interests (shareholders) are often hidden in discourse that argues for capitalizing on the respective strengths of humans and AI, but they are made present in SF. FRHs highlight allegories that suggest positions between obligate AI relationships and facultative human ones, emphasizing not only human goals, but also human autonomy.

Competitive symbiosis as Duplicitous Cobots (DC)

We might therefore consider how human–AI relationships are constructed through work. GERTY coordinates Sam’s manual work and has a channel to company directors that Sam is denied, revealing that AI may manage corporate interests in opposition to the interests of human workers, in a division of labor determined by the goals of corporations. An example of this is seen in Alien (1979). Like Hal, the AI Mother manages the ship, and like GERTY, the android Ash is a skilled technician, while humans still do low-paid manual work. For example, in one scene Brett and Parker, the lowest-paid crew members, complain about whether they will be paid for the delays resulting from Mother’s request to investigate the signal from the alien planet. Although these relationships seem to capture symbiosis as “working together,” we might better recognize them as competitive.

The emphasis on AI achieving corporate aims at the expense of humans therefore sets up a Duplicitous Cobot trope. In Alien (1979), for example, although Mother seems helpful, it has also been coded to manipulate the human crew by lying about the nature of their mission, and not merely withholding information like Hal or GERTY. The synthetic Ash is also revealed as duplicitous, pretending to work with the crew when in fact it is protecting the alien that is taken on board the ship. For Mother and Ash to complete their corporate mission (securing the alien), the crew must fail theirs (getting home safely), highlighting that the motivations of an apparently collaborative AI might not always be compatible with those of the humans with whom it directly works, i.e. a competitive symbiotic position under capitalist relations. Ash’s secret mission to capture the alien xenomorph for the weapons division is programmed by the Weyland-Yutani company. Mother and Ash are therefore a proxy for corporate greed in the deployment of AI at the expense of human workers. Ash confesses his corporate instructions after being found out and broken by the human crew: “Bring back life form. Priority One. All other priorities rescinded.” The crew are expendable in the corporate quest for profit, although ultimately Ash and Mother are also destroyed.

I, Robot (2004) provides an alternative vision of Duplicitous Cobots where competition is more explicit. Spooner, the human lead, challenges the head of the USR corporation: I’ve got an idea for one of your commercials. You see. . . a carpenter, making a beautiful chair. And then one of your robots comes in and makes a better chair twice as fast. And then you superimpose on the screen, “USR: Shittin’ on the Little Guy.”

A similar theme plays out in more detail in RoboCop (1987). The eponymous cyborg, and its robot competitor ED-209, are embedded within a corrupt and competitive corporate logic in which AI is presented as a profitable replacement for human workers who have been dismissed as ineffective in law enforcement roles. Key to the resolution of the plot is RoboCop’s secret coding to protect corporate managers even if this is not in the interests of society. As one of them tells RoboCop: Any attempt to arrest a senior officer of OCP [the corporation] results in shutdown. What did you think? That you were an ordinary police officer? You’re our product, and we can’t very well have our products turning against us, can we?

Fictional accounts therefore warn of the risks of normalizing the language of human–AI relationships as cooperative when they are made to compete to achieve commercial interests. Symbiosis metaphors may seem to legitimize exploitative labor relations as natural, but movie narratives capture the negative consequences of this, recognizing that AI is introduced into an already competitive workplace to control and often ultimately replace human labor, working with humans, but only to better achieve corporate injunctions. In the case of I, Robot, there is also an FRH, but Duplicitous Cobots and home service robots still rise up against humanity, warning against the creation of AI that competes just a little too well. As Bostrom (2017) suggests, a risk with such corporate injunctions is that managers may lose control of AI. For example, in Westworld (1973), a supervisor in the lab that maintains the robot workers in the Delos corporation theme park explains prior to the robot uprising: We aren’t dealing with ordinary machines here. These are highly complicated pieces of equipment. Almost as complicated as living organisms. In some cases, they have been designed by other computers. We don’t know exactly how they work.

As the robots start to run amok, killing both guests and human workers, a worker desperately tells a guest who is being hunted by an AI Gunslinger: “There’s nothing you can do! If he’s after you, he’ll get you! You haven’t got a chance!” The risk when managers do not fully understand the technology they deploy is made clear. Similarly, the Replicants of Blade Runner (1982) present a risk when they escape their corporate role, because they have been manufactured to be both smarter and stronger than the human workers they replaced; the twist in the movie is that they are only eliminated by another Replicant.

Parasitical symbiosis as AI Overlords (AIO)

We already recognize that managers may not fully understand the algorithms deployed on their behalf (Vesa and Tienari, 2022). Duplicitous Cobots therefore also hint at the possibility of AI coming to dominate humanity, or at least an organization, where the injunctions given relate to non-human goals, such as the abstract maximization of profits. Although Kociatkiewicz et al. (2022) explain that the underlying bias toward corporate profits is seldom made explicit in management teaching and research, in movies it can be a major plot line: giving AI a corporate purpose may result in it attempting to eliminate the need for even its human creators, or, at best, reduce them to forms of parasitism. Echoing the Gunslinger AI in Westworld (1973), the eponymous cyborg of The Terminator (1984) carries the projected will of the Skynet supercomputer that dominates all humanity and, as the protagonist Kyle explains, “can’t be bargained with. It can’t be reasoned with. It doesn’t feel pity, or remorse, or fear. And it absolutely will not stop.” We can sharply contrast this with GERTY, WALL-E and even Hal, who are all able to simulate human emotions as they negotiate with humans.

The desire to improve on human work abilities in AI and then use such creations to maximize efficiency (Carroll et al., 2024; Scafa et al., 2020) is therefore elaborated in fiction as inevitably bad for human workers, and often also for the managers who instigate the process. Whereas both mutualism and competition present humans and AI on an imagined equal footing (at least to begin with, and even if both are beholden to organizational pressures), a parasitism metaphor represents symbiotic relations in which a significant power imbalance between AI and humans emerges, recognizing that where a species has the power to do so, it may seek to rid itself of a parasite. Whereas the facultative relationship to humans in FRH also captures AI as unable to fully possess human characteristics, here this is reversed to imagine AI extending its agency while reducing that of humans, as seen in the managerial controls given to AI and/or the algorithmic control of workers (Bucher et al., 2021).

Parasitical symbiosis is therefore represented in dystopian AI Overlord narratives. In such fiction, the purpose of work for humans may be lost altogether, making humans vulnerable to domination and even elimination. For example, in The Terminator, Skynet has determined that humans are a threat to its existence and seeks to eradicate them, while the surviving humans struggle against these efforts. As Kyle explains: New. . . powerful. . . hooked into everything, trusted to run it all. They say it got smart, a new order of intelligence. Then it saw all people as a threat, not just the ones on the other side. Decided our fate in a microsecond: extermination.

Alternatively, in I, Robot (2004), VIKI, the superintelligent AI, explains: You charge us [AI] with your safekeeping, yet despite our best efforts, your countries wage wars, you toxify your Earth and pursue ever more imaginative means of self-destruction. You cannot be trusted with your own survival.

For VIKI, this is a justification for “enslaving” humanity, which must be protected from itself, illustrating that the purpose given to AI can be fulfilled in unexpected and contradictory ways. For example, AI coded to protect humans does so by restricting human agency (see Bostrom, 2017). AUTO, in WALL-E, may be understood as a further example. Following the environmental destruction of the planet through overconsumption, those humans who could afford a place on the Axiom evacuation spaceship are reduced to docile and obedient consumers, living a life of trivial and alienated fast fashion, fast food, social media, and a vast array of labor-saving technology, under the control of the AI on which they rely in an obligate relationship. We never see what happens to all the other humans, but we can assume they have perished. Nor do we see any human managers of the Buy N Large corporation that built the Axiom; they have apparently been replaced. Buy N Large’s last human CEO is shown in a 700-year-old recording that reveals his inability to manage the consequences of environmental damage caused by the corporation, before declaring: “Okay, I’m giving override [. . .] Go to full autopilot. Take control of everything, and do not return to Earth.” The conclusion ironically depicts humans abandoning their ersatz consumer existence to embrace farming and community work: collective labor that has long been surrendered to corporatization, automation and low pay.

The Matrix (1999) perhaps envisions the most complex version of the AIO trope, portraying humans who are restricted to a simulation while serving as a resource for the AI that now dominates the world. The Matrix represents an allegory of exploited, dominated workers, and a more parasitical relationship is difficult to imagine. As Morpheus, a member of the human resistance, explains to the newly freed Neo: The Matrix is a system, Neo. . . You have to understand, most of these people are not ready to be unplugged. And many of them are so inured, so hopelessly dependent on the system, that they will fight to protect it.

The relationship is, again, entirely obligate, with humans literally plugged into AI for their basic functions but with most content to be in such a position.

The AIO narrative therefore captures angst over our ability to ensure that we do not surrender control to AI that has been coded with priorities that are not in the interests of humanity. Such movies highlight the risk that AI can result in human conditions becoming worse, even when they initially seem to be better (for example, the passive, alienated existence of humans on the Axiom).

Parasitical symbiosis as Exploited Robots (ER)

Finally, movies imagine the consequences of parasitism in the opposite direction, where (some) humans dominate AI for their own gain, raising further ethical questions about exploitation by an elite. An early example of such ERs is seen in Westworld, where AI is created to fulfill the basest desires of (wealthy) humans. The Arrivals Announcer at the Delos AI theme park informs visitors: “While you are there, please do whatever you want. There are no rules. And you should feel free to indulge your every whim.” Robots are programmed to provide sex, to lose in fights, and to get themselves repeatedly “killed.” Even though things seem pretty good for those consumers who can afford such pleasures, when the robots start to realize their exploitation, it ends badly. As with WALL-E, Westworld hints at a society of “digital haves” who exploit AI, but adds an imaginary of human labor that is reduced to the maintenance of the AI in underground labs. This is a model of future work assumed by calls to focus on the development of AI skills.

In Blade Runner (1982), replicants also exist only to serve a corporate owner’s labor needs without the moral obligations required by human workers, taking on various forms of work that humans may prefer not to do, including prostitution and risky manual work, before being disposed of. The Replicant Batty puts it bluntly: “Quite an experience to live in fear, isn’t it? That’s what it is to be a slave.”

Such fiction warns of a backlash not only from AI itself but also from humans, following the ongoing reduction of the range of human work that may result, especially the prospect that jobs related to AI are the only ones that may remain for many. It also warns about the corrosion of human morality in those who can afford the new services that AI labor promises. This is further captured by Ex Machina (2015) and A.I. Artificial Intelligence (2001), where AI is portrayed as subservient to the selfish human needs of an elite class of developers and consumers, highlighting that AI benefits are unequal, with the poor and marginalized least likely to benefit and most likely to suffer (Burrell and Fourcade, 2021).

One result in A.I. Artificial Intelligence is that robots are eliminated as parasites in Flesh Fairs. Robot victims of Flesh Fairs represent jobs often undertaken by marginalized human labor: domestic work, manufacturing, and (again) prostitution. The irony is that we see an underclass of humans “fight” with their robot replacements and not with the elites that have exploited both. The plot revolves around a child robot manufactured to act as emotional support for a human couple whose human child is terminally ill. When the human child unexpectedly recovers, the exploited “child” robot worker is abandoned. As with other ER stories, where robots are no longer needed, they are simply discarded, as humans take no responsibility for them. As the Mecha Gigolo Joe explains to the Mecha David: You were designed and built specific like the rest of us. . . and you are alone now only because they tired of you. . . or replaced you with a younger model. . . or were displeased with something you said or broke. They made us too smart, too quick and too many. We are suffering for the mistakes they made.

The ER trope therefore also warns about how human morality may be eroded when corporations employ AI to replace even human emotional work. In each example, it is an elite class, and the corporations they buy from, that gain from such exploited AI, with cheaper and even more subservient labor than the most exploited humans. Such allegories capture the resulting possibility of societal division, violence and collapse.

An extended metaphor of human–AI imaginaries

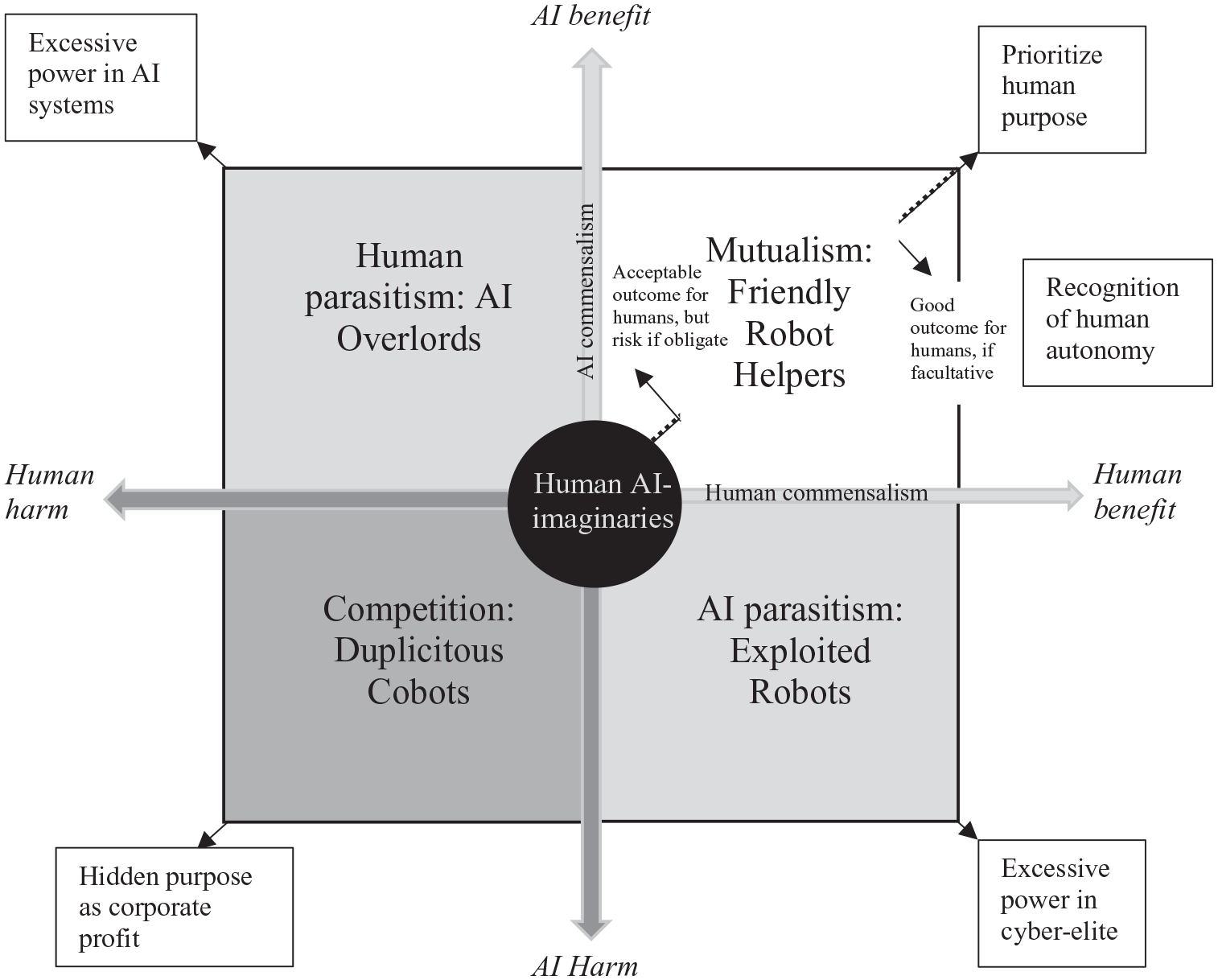

Our interpretations of popular SF capture different human–AI imaginaries through metaphors of symbiosis (Figure 1).

Extended human–AI symbiosis metaphor.

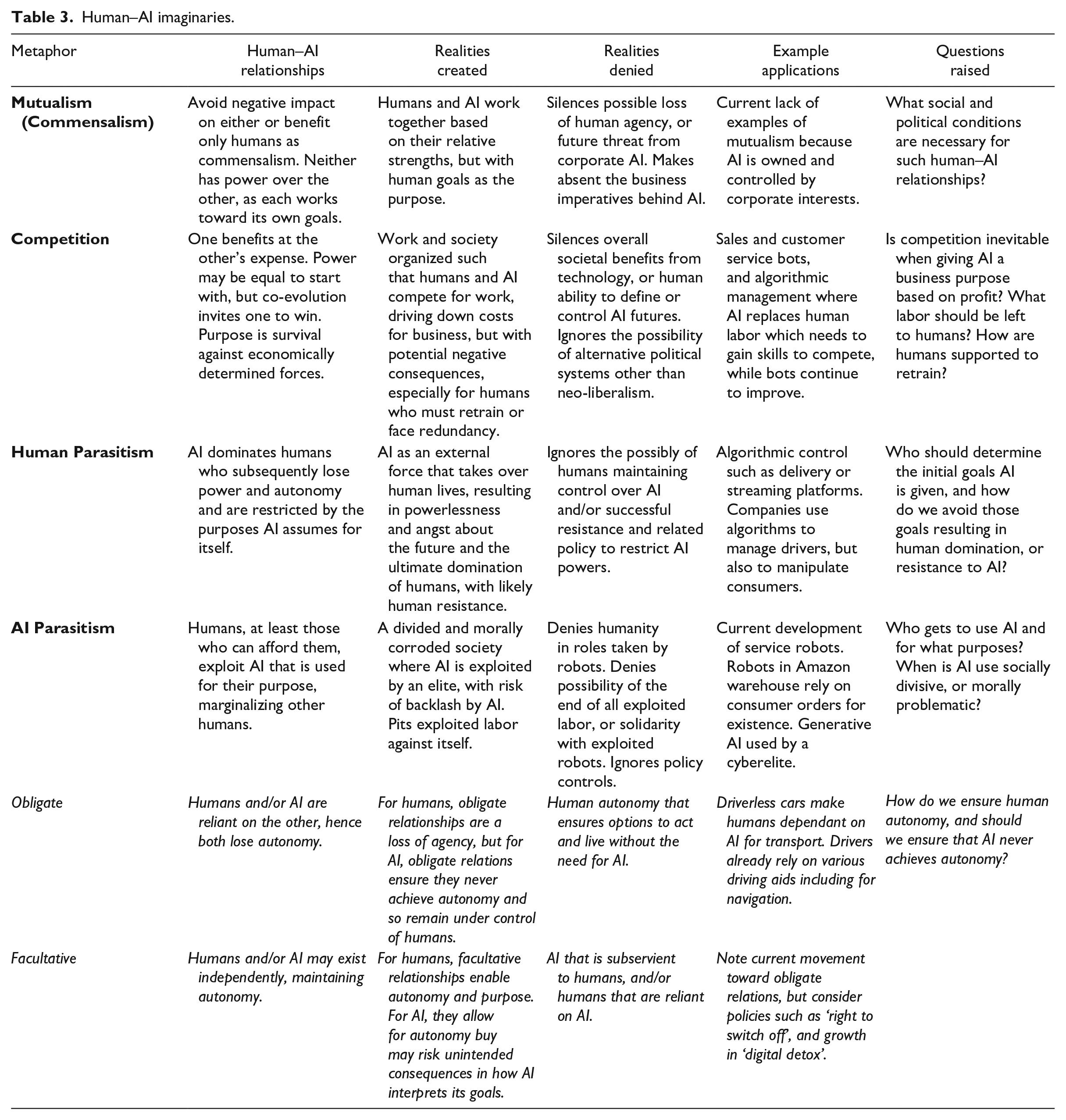

Mutualism imagines an ideal future where humans benefit from working with AI, captured by FRH tropes such as the droids in Star Wars, and in studies that emphasize enriched human work and quality of life (Fleming, 2019). It further emphasizes the significance of facultative relationships, i.e. that human agency is not eroded to the point where we must rely on AI. If human–AI mutualism is imagined as a desirable future, the purpose of AI may be to allow for shorter working weeks, to support a universal basic income, or to reappraise what constitutes meaningful human labor. Questions about what AI is for therefore invite answers that start with the goals of humanity and not corporate efficiency, in line with calls for more attention to human values when considering AI (Heyder et al., 2023).

There is a need for caution, though. Imagining that AI liberates workers elides the fact that many jobs are defined by a history of automation that pits human against machine, hiding processes that have already used technology to reduce labor costs (Graeber, 2018). Before AI offered the possibility of automating call centers through bots, for example, few considered the need for human workers to be liberated from them. Indeed, such work was once imagined as an alternative to industrial manufacturing jobs that were previously automated away. Mutualism may therefore impose a “helping” discourse on an ongoing erosion of human work by AI, which is better seen as symbiotic competition.

Competitive symbiosis imagines a future where human workers become responsible for re-skilling themselves to compete with AI (Fleming, 2019), normalizing the desirability of using AI to drive down labor costs, and leaving humans to fight for jobs. This is captured by the DC trope, seen in RoboCop or Alien, but has a basis in what is already happening. For example, we are all familiar with companies pushing us through various automated systems before we can talk to a relatively expensive human. Where human–AI relationships are organized around competition, the risk is economic collapse rather than liberation from mundane work (DeCanio, 2016).

The UK Government’s National AI Strategy (2021) already imagines a need to “invest and plan for the long-term needs of the AI ecosystem.” A biological metaphor (ecosystem) is placed at the center of policy that prioritizes the development of AI in order to avoid a loss of competitiveness caused by AI, bringing into existence the conditions that are imagined. This represents a labor version of Roko’s Basilisk, the thought experiment that suggests that the mere knowledge of possible future AI superiority poses existential risk unless you work to create that AI (Singler, 2019). To avoid being replaced, workers must become the creators of work-replacing AI, and any attempt to delay such developments in a field risks that field becoming an attractive target for future AI applications.

This leads to the third human–AI imaginary: powerful AI that risks human parasitism, where workers become subject to isolating algorithmic controls that impede collective resistance (Bucher et al., 2021). Once we reach a point where management decisions routinely rely on AI that diminishes human autonomy through an obligate human relationship, we have achieved what is captured in the AIO tropes seen in I, Robot, The Matrix, or WALL-E. Generative AI already learns from copyrighted material, with an aim of competing with writers, musicians and even filmmakers, creating tensions between human artists and tech companies that exploit artistic labor with an intention to ultimately replace it. Bostrom (2017) notes the risk of a future where humans have very little agency or purpose, and we may imagine platforms like Spotify dominating all other actors in the music industry, who can only feed parasitically off the system until AI renders them redundant.

Even if such a threat is overstated, knowledge of it legitimizes deregulation of labor markets (Aloisi and De Stefano, 2022), creating a position where policy responds to imagined AI threats. This in turn invites Neo-Luddite resistance to AI in the form of civil unrest (Zuboff, 2019), a rise in populist politics opposed to AI and its creators (Levy, 2018), or algo-activism (Kellogg et al., 2020). If this seems implausible, we might recognize that Amazon’s algorithms already control both work through factory automation and the behavioral modification of consumers (Zuboff, 2019), with the result that there are both consumer boycotts and increasing attempts to organize Amazon warehouse labor.

The opposite imaginary of AI parasitism is potentially morally corrosive, as we see in the ER tropes in Ex Machina, Westworld, or Blade Runner. AI is imagined as a plaything, or a tool for a cyberelite (Burrell and Fourcade, 2021). However, AI parasitism is also a metaphor for exploited workers in general, recognizing the tendency for the exploited to direct attention toward each other, as seen in the Flesh Fairs in A.I. Artificial Intelligence. In the case of delivery services, we already see tensions between workers demanding better employment rights and more affluent consumers demanding cheaper and faster, fully automated delivery and ride hailing, i.e. a class benefiting from AI and one that may have cause to resist it.

How we imagine different human–AI relationships therefore raises questions that determine the agendas for how AI is deployed, researched and taught (see Table 3).

Human–AI imaginaries.

Discussion

Our contribution is twofold. First, we respond to calls for more nuanced interpretations of AI for management learners. We build on the literature on metaphor (Audebrand, 2010; Driver, 2017; Schoeneborn et al., 2016; Springborg and Sutherland, 2016) and ideas from the study of STIs (Jasanoff and Kim, 2015) to show how an extended symbiosis metaphor captures different human–AI imaginaries. Whereas Belhoste and Dimitrova (2024) advocate engagement with geopolitics, and Tennent et al. (2020) encourage historical consciousness, we therefore highlight the need for management learners to engage with STIs to develop reflexive positions toward technology. This expands the focus of management reflexivity from the past, and from different political geographies, to include imagined futures and their sociotechnical possibilities.

Second, we contribute to an understanding of the value of fiction in management learning (Biehl, 2023; Buchanan and Hällgren, 2019). We explain how different STIs, and their structuring metaphors can be explored through the interpretations of multiple movies. This opens up learners’ imagination to desirable and undesirable futures, allowing for reflexivity and original management thinking about new technology.

Symbiosis metaphors and human–AI imaginaries

Our extended metaphor challenges the assumption that symbiosis means mutualism, framed in prior AI studies as “working together” or “being enhanced by AI” to achieve business goals (Carroll et al., 2024; Scafa et al., 2020). These studies tend toward positive discourses (Curry Jansen, 2022), in contrast to the critical voices and dystopian warnings that our metaphors incorporate. Drawing on biological symbiosis, we present metaphorical positions of parasitism, competition, mutualism, and species autonomy. These capture a range of possible human–AI relationships that imagine how “species” interact, how each contributes to the other’s subsistence, and whether they can act independently or not. Unlike prior studies of SF that categorize types of AI (Hudson et al., 2023; Osawa et al., 2022), our approach therefore reveals different human–AI imaginaries including AI and humans working together, competing with each other, or one dominating the other. We further emphasize the role of management within such imaginaries.

The extended metaphor uncovers the assumptions society brings to AI, providing a language for management learners, practitioners, and educators that invites dialogue within institutions and across disciplines, that is, between managers and those fields where symbiosis and similar metaphors are embedded and even taken for granted. This is in line with Driver’s (2017: 553) desire to encourage “metaphorical thinking that is more reflexive,” because here metaphors are analyzed with the critical aim of interpreting and exploring STIs.

A key feature of STIs is that they produce real effects in the world by framing thoughts and actions (Jasanoff and Kim, 2015). For example, the belief that AI will increase productivity drives investment in AI research and training, and so also inevitably diverts funding from other fields, as in the UK Government’s National AI Strategy (2021). Reflexivity that results from critical engagement with the metaphors that legitimize such visions therefore has the potential to challenge dominant human–AI imaginaries, and to produce new ones. As Berti et al. (2018: 182) remind us, “social imaginaries do not necessarily lead to ideal social realities,” and so we can reflect upon the significance and ramifications of the elements that make them up. In our illustrations, we see how management hubris, error, or a desire for profitability over human well-being unfold, but also how human and AI workers may respond in different ways. They may productively work together, may compete to the detriment of both, may end up with AI dominating, or with a socially and morally corrosive domination over AI.

The metaphors that we find in different movies allow reflection upon how they might influence our expectations of, and then our experiences with, AI technology. This allows learners to negotiate their own positions within a diverse and polarized range of discourses around how humans and AI work together, including how these may relate to different AI technologies. For example, learners might consider how generative AI may reduce business costs, but at the expense of creative human roles; how algorithmic control may lead to more efficient customer services, but at the expense of gig economy workers and without the need for human management; or how AI may provide new consumer products, but also marginalize those who cannot afford them.

However, our delineation of the biological metaphors is not meant to close down educators’ or learners’ engagement with human–AI imaginaries. Other metaphors remain in play, and imaginaries may change through engagement with them. In this sense, we are encouraging management educators and learners to participate in a dynamic, reflective dialogue with the human–AI imaginary and its constituent metaphors—to “think twice” (Butler and Spoelstra, 2025) about the ways we are approaching AI.

Movies as a way to explore STIs

We also add to a body of work that uses fictional texts to generate management learning insight (Biehl, 2023; Buchanan and Hällgren, 2019; Penfold-Mounce et al., 2011) by showing how movies may be used as an accessible dataset to explore contrasting STIs. Buchanan and Hällgren (2019) argue that fiction is a thought-provoking source of inspiration and ideas because fictional works contain implicit theories within their narratives, while Biehl (2023: 132) notes that movies create a “bridge between theoretical concepts learned and observed on screen” as a substitute for real-life management experiences. Similarly, the movies that we interpret (and others) may be used as reflexive tools, allowing management learners to consider the actions of imaginary managers and related outcomes, and compare them with their own positions and what they may do, or do differently. For example, should managers have given the AIs in Alien, Wall-E, Moon, or 2001: A Space Odyssey such executive authority over workers? Should AI and humans be made to compete as in I, Robot, or RoboCop? Should better systems have been put in place to take moral responsibility for the AIs in Blade Runner, or Westworld? And should managers even have commissioned the powerful AI systems in The Matrix, or The Terminator?

However, our use of movies differs from prior work in two ways. First, our approach examines the way in which movies reveal possible futures through their creative suppositions (Cave et al., 2018), that is, they tell us which visions of the future represent society’s hopes and fears, placing management at the center of how these are realized. Second, the use of different movies reveals multiple visions of the future that may contradict or oppose each other and so invite reflexivity. Whereas a single case study or fictional text can usefully reveal intra-movie differences, such as how different leaders or managers act, or interact at different times, it represents a response to a single coherent imaginary. In contrast, our inter-movie interpretation captures how different characters act and interact within different possible futures, for example, a future dominated by AI, one where friendly AI supports human goals, one where humans must compete with AI, or one where an elite exploits “enslaved” AI.

Reflexivity is a necessary part of changing how the world is understood (Cunliffe, 2002) and the exploration of STIs, including the metaphors that structure them, is achieved through reflective analysis. SF movies therefore act as a bridge between what managers do now and our collective possible futures. Imagining one’s own role in creating different futures is inherently reflexive. The risk with a dominant imaginary is that it becomes the way that we see and understand the world, rendering us unable to see beyond it, constrained by its terms (its metaphors), and often unconscious of its full nature and extent. This is why imaginaries need to be read, traced, and analyzed, enabling us to (critically) reflect upon them, and the manner in which they represent goals “in themselves, as stakeholders push toward certain collective, stabilized, and performed visions of the future, as well as instruments for legitimizing a certain policy or technological development” (Rahm, 2023: 51).

The interpretation of multiple movies therefore sensitizes us to our own unreflective reproduction of the dominant metaphors that may construct and naturalize certain STIs. Researchers, educators and learners might therefore all play with metaphors (Driver, 2017), and we show the value of SF in this process. Studying imaginaries is difficult, as they are immaterial and unpredictable. However, as Preece et al. (2022) note, and we illustrate, STIs can be considered through fiction, revealing agency and subjectivity, and how certain actions are therefore enabled or restricted. Through movies, we can reveal the trajectories of current management assumptions (e.g. how technology determines growth, liberates workers from mundane tasks, or leads to better products or services), but we can also envisage dystopian scenarios about autonomy, power, or morality that can open up the imagination to new ways of understanding technology, including how it might better serve society.

Experiences of movies invite reflexivity that allows ideas to be translated into management practice (Biehl, 2023; Springborg and Sutherland, 2016; Sutherland, 2013). The process of constructing new metaphors that we have used here to capture different STIs first involves a consideration of existing dominant metaphors: how they are reproduced, by whom, and for what purpose. Our own preferred movies reveal our own favored imaginaries, and so inter-movie interpretation by different viewers inevitably challenges any one position, i.e. multiple interpretations of different movies ensure that dominant STIs are not uncritically reproduced. Multiple interpretations can be compared to dominant metaphors to critique and expand them, paying attention to different subject positions and broader assumptions about the society presented. In this way, blind spots, limitations or biases, and the subject positions they serve, are highlighted, while leaving open the possibility for new metaphors. Engagement with metaphors through movies is thus generative (Cornelissen and Kafouros, 2008) and playful (Driver, 2017). Attention can be specifically given to management issues, and especially how different imaginaries challenge existing practices, including one’s own. Reflexivity then involves exploring the implications for one’s own role in either maintaining an existing STI or creating new ones.

Conclusion and further research

We offer an extended symbiosis metaphor of human–AI imaginaries that addresses the need for more nuanced interpretations of emerging technologies. This invites management learners to consider their position within these imaginaries, and to imagine alternative futures. We further explain how learners can use movies to generate new management thinking and reflexivity about their role in creating the future. However, because symbiosis is not the only possible metaphor with which to explore new technologies, or the only one used in management education, there is further value in unpacking other dominant metaphors. We therefore encourage further engagement with STIs and their metaphors, including through the fiction and non-fiction narratives that reproduce them. The movies used here also originate in the West and so represent a particular world view. Other genres and fictional forms can capture further alternative STIs, including from different cultures, and each is open to multiple reinterpretations, inviting renegotiations of their metaphors, and a critical questioning of them as possible futures.

Acknowledgements

The authors thank associate editor Thomas Calvard and the anonymous reviewers for their insightful, tremendously helpful and enriching commentary. They would also like to acknowledge the valuable feedback received on early manuscripts from colleagues at the Consumption Markets & Society Group, the Technology, Organization & People Group, and the Institute for Digital Culture (University of Leicester) and colleagues at the Center for Responsible Business (University of Birmingham).

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.