Abstract

This article examines Australia's Online Safety Amendment (Social Media Minimum Age) Act 2024 as a case study in digital policy formation, focusing on the socio-political processes that shaped its development. Framing the debate through Celia Lury's concept of ‘problem spaces’, the article explores how the issue of youth and social media use is constructed through ‘givens’ (perceived risks), ‘goals’ (protection), and contested ‘operators’ (policy solutions). Drawing on public opinion surveys and stakeholder submissions to a parliamentary inquiry, the article finds widespread public concern about online harms, especially for minors, but disagreement about how best to address them. While the Act's age restriction was broadly supported by the public and a majority of stakeholders, critics warn of unintended consequences and advocate alternative measures including media literacy and platform accountability. The case reveals broader tensions in digital governance between public demand for action, legislative responsiveness, and competing visions of youth digital rights.

Digital policy as problem space

In November 2024, the Australian Federal Parliament passed the world's first legislation restricting access to social media for individuals under the age of 16. The legislation is to come into effect on 10th December 2025 and will restrict young people's access to designated social media platforms, including Facebook, Instagram, X, Snapchat, YouTube and TikTok. The Online Safety Amendment (Social Media Minimum Age) Act 2024 requires designated platforms to put ‘reasonable’ system controls in place that restrict access for under 16s, or else face fines of up to AUD$50 million.

The legislation was supported by both major parties – the Australian Labor Party and Liberal-National Party Coalition – but faced opposition from independents and the Greens. As well as being championed by Labor Prime Minister Anthony Albanese, it was strongly supported by Labor State Premiers, Peter Malinauskas, Premier of South Australia, and New South Wales Premier Chris Minns. Their advocacy was influenced by NYU Professor Jonathan Haidt's book The Anxious Generation (2024), by Facebook whistleblower Frances Haugen who argued that her former employer deliberately sought to ‘hook’ young people on their platforms, and by U.S. social psychologist Jean Twenge (2017). There is considerable debate about the evidential underpinnings of the proposal. A number of academics, both in Australia and internationally, have presented evidence which supports restrictions on young people's access to social media (Capraro et al., 2025; Leigh and Robson, 2025; Moshel et al., 2024; Shin et al., 2022). At the same time, many academics and others have questioned such assumptions, including leading youth advocacy groups such as the Australian Child Rights Taskforce and Project Rockit, digital advocacy organisations such as Reset.Tech Australia (Dawkins and Farthing, 2024), and leading research centres such as the ARC Centre of Excellence for the Digital Child, ARC Centre of Excellence for Automated Decision-Making and Society, and the Young and Resilient Research Centre (Meese et al., 2024). In October 2024, an open letter was signed by 140 signatories, including leading social media academics, youth advocacy groups and tech sector non-government organisations (NGOs), that condemned the age restrictions as not supported by available evidence, ignoring the benefits of the online environment for young people, and being too blunt an instrument to address risks associated with social media use (Australian Child Rights Taskforce, 2024).

In this article, we are less concerned with advocacy for or against the legislation, than with understanding how and why particular digital policy and governance regimes come into place. We understand the question of young people and social media as what Celia Lury has termed a problem space, defined as: A representation of a problem in terms of relations between three components: givens, goals, and operators. ‘Givens’ are the facts or information that describe the problem; ‘goals’ are the desired end state of the problem – what the knower wants to know; and ‘operators’ are the actions to be performed in reaching the desired goals. (Lury, 2021: 2)

Drawing upon a diverse array of theorists, including John Dewey, Herbert Simon and Donna Haraway, Lury argues that ‘a problem is not simply found but must be composed and represented’ (Lury, 2021: 30), and that ‘the focus of making or composing problems’ has a shifting relationship to ‘the surrounds, environments or contexts – the spaces – in or with which problems emerge’ (Lury, 2021: 23–24). From Lury's perspective, the ‘problem’ of young people and social media is one where, as John Dewey observed, ‘facts are evidential and are tests of an idea in so far as they are capable of being organised with one another … Some observed facts point to an idea that stands for a possible solution. The idea evokes more observations’ (Dewey, quoted in Lury, 2021: 27).

This can give the public debate around such issues a somewhat slippery quality and generate a degree of inconclusiveness in academic debates on the subject, since a focus on one topic is always opening up others, and refuses to be bounded by the parameters of the initial debate. For advocates of social media age restrictions, the connection between deteriorating mental health outcomes for people under 18 and the rapid growth in use of mobile phones and social media platforms appears clear-cut. But such arguments are challenged by the possibility that the evidence gathered may point towards other factors being determinative, including greater preparedness to report mental health issues, concerns among young people about the future, and the long-term mental health impacts of COVID-19 lockdowns. There is also the issue of privacy implications arising from any attempts to monitor the age of Internet users, and the question of whether age assurance technologies for young people can only work if complemented by other measures that would apply to all Internet users, such as digital IDs.

Critics of measures such as social media age restrictions point towards the emancipatory possibilities of digital childhood, rejecting ‘moral panics’ about how technologies are damaging young people (Bruns and Rodriguez, 2024). At the same time, these arguments face the challenge that global tech giants have long been alleged to be actively manipulating social media algorithms to maximise attention and reaction among young people in ways that can be detrimental to their well-being. Such allegations have been made by industry insiders such as whistleblower and former Meta senior executive Frances Haugen and the former Director of Global Public Policy at Meta, Sarah Wynn-Williams (2025). Such accounts are not easily dismissed, highlighting the tensions that can arise when conflicting definitions of the givens compete for public legitimacy. The arguments against the implementation of age restrictions on social media are not against greater regulation of social media platforms per se. Rather, they point towards a ‘third way’ between minimal self-regulation and state regulation, with a greater role for NGOs and academic researchers in platform governance (Flew, 2021: Ch. 5; Gorwa, 2019).

In this article, we examine what renders youth use of social media as a problem space by looking at how its parameters are shaped through public discourse, opinion polling, and public policy processes. These communication processes increasingly mark as a given that social media platforms are a risk for young users and that there is a goal to ensure these users are protected from harm, though operators of how this can be achieved remain contentious – with some disagreement over whether it can be achieved at all. A central contention in this problem space is that while any kind of regulation – particularly regulation with broad international implications – is difficult, the direction of public sentiment is one of growing scepticism towards tech companies, that manifests itself in support for greater government regulation of social media platforms. Examining international policy and research, relevant polling data, and submissions to government enables a deeper understanding of the problem space, how responses are being crafted, and what can be expected moving forward.

Social media in Australia: from promotion to restriction

Debates about young people and the potential harms of the online world are as old as the Internet itself. The cornerstone legislation that shaped Internet policy and industry development in the United States was called the Communications Decency Act 1996 because it contained a clause to criminalise the distribution through interactive computing services of ‘obscene or indecent’ material to people known to be under 18. These provisions were struck down by the U.S. Supreme Court in Reno v. ACLU in 1997, as being an unconstitutional abridgement of First Amendment rights.

At the same time, the issue of whether access to certain kinds of online content can and should be subject to age restrictions has never gone away. The failure to enact a national X18+ classification category in Australia for pornography has meant that sexually explicit content has sat in a perpetual legal grey area between R18+ content (restricted for children but freely available for adults) and prohibited content, referred to as Refused Classification (RC) (Australian Law Reform Commission, 2012). In the context of online content regulation, there has long been de facto benign neglect at a governmental level towards content that may be unsuitable for young people. There has instead been a reliance upon content distributors and platform companies to set rules themselves, which typically defaulted to U.S. regulatory models that have a considerably higher ‘free speech’ threshold than most other countries, including Australia.

Until the 2010s, this largely permissive approach to online content in Australia combined with policies to encourage people, and particularly young people, to become more involved online. Young people were often seen as being particularly adept at navigating the online world, and the problem was that people who called for greater regulation of the Internet simply did not fully understand the medium and its capabilities (Joint Select Committee on Cyber-Safety, 2011).

Over the course of the 2010s, however, a variety of concerns have gained strength about negative aspects of the online experience, such as online harassment, cyberbullying, non-consensual image sharing, and online hate speech leading to physical violence, denigration, discrimination and other forms of harm arising from online interactions (Humphry et al., 2023; Livingstone and Blum-Ross, 2020).

The Online Safety Act 2021, and the establishment of the Office of the eSafety Commissioner, were designed to address such concerns in different ways to the Australian Communications and Media Authority, which was originally designed to deal with broadcasting and telecommunications policies and regulations. The Office's strategy of working informally with service providers to resolve complaints through ‘safety by design’ principles is aligned with a broader policy shift from formal regulation, associated with nation-states, public bureaucracies, formal laws, and command-and-control management, towards more informal governance frameworks associated with multiple stakeholders, networked relationships, and collaborative arrangements. Mark Bevir has described such governance models as shifting from ‘a focus on the formal institutions of states and governments [to] recognition of the diverse activities that often blur the boundaries between state and society’, in order to better deal with policy issues which ‘are hybrid and multijurisdictional with plural stakeholders who come together in networks’ (Bevir, 2011: 2).

A turn towards social media age restrictions may appear to be a reversion towards the more formal model of explicit regulation by nation-states through legislation, and at odds with such models of self-regulation and multistakeholder governance (Nash et al., 2017). But there has been growing discontent among citizens in liberal democracies with models based on quasi-self-regulation (Flew, 2022). Daniela Stockmann has observed that ‘the … predominant governance approach – a combination of the multistakeholder approach as practiced in international institutions with self-regulatory frameworks at the national level – were not effective. Scholars have identified a regulatory deficit whereby self-regulatory laws and regulations lag the way technology companies operate’ (Stockmann, 2023: 4).

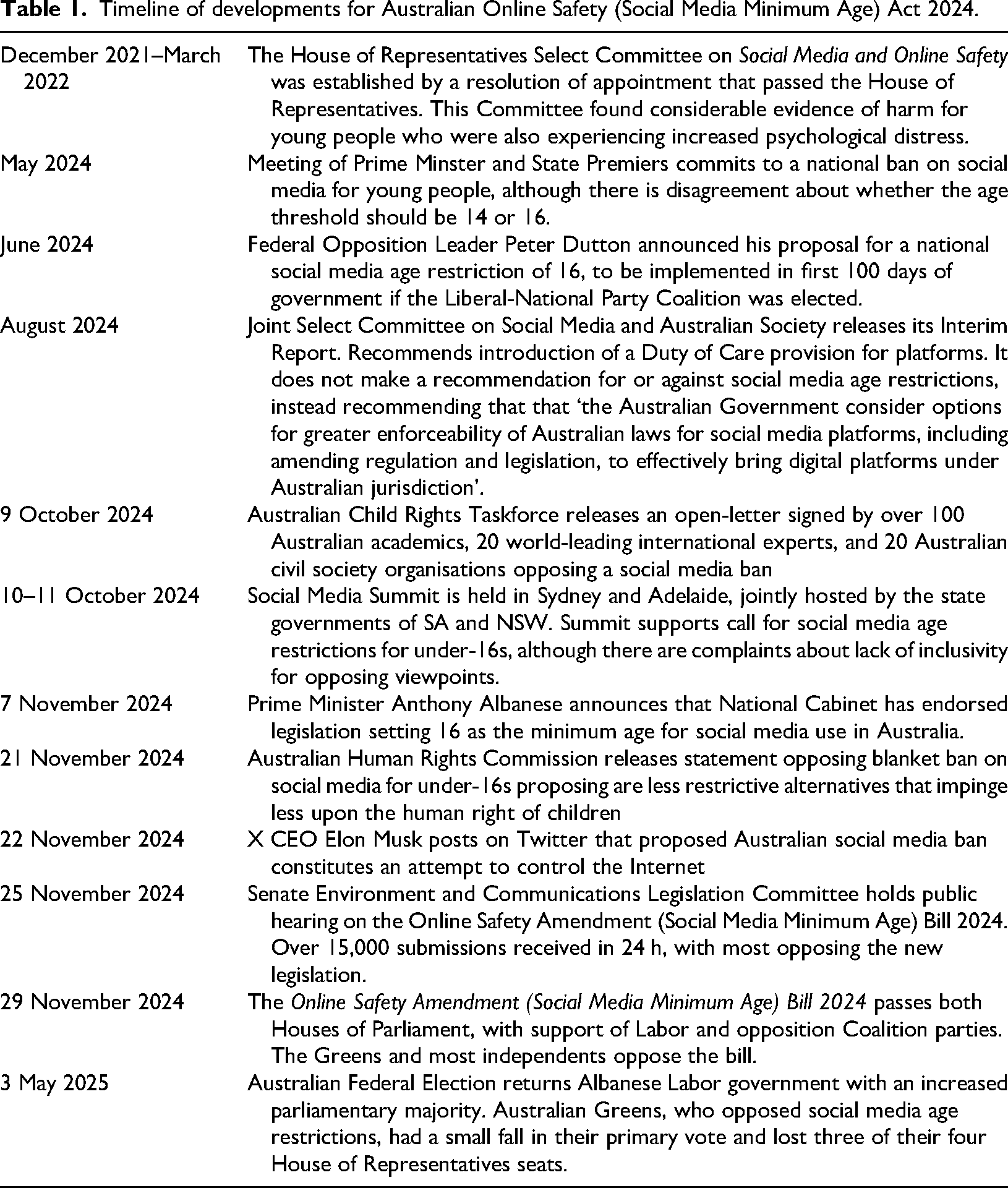

The Australian path towards social media age restrictions moved surprisingly quickly. As Table 1 shows, the idea of national social media age restrictions only emerged as a serious policy option in May 2024, but by November 2024 it had been passed into law. This occurred despite considerable criticism from academics, child rights organisations, tech companies – including X, whose owner Elon Musk mused on whether it was an attempt to censor the Internet – and the Australian Human Rights Commission. After the May 2025 Australian Federal Election, which saw Labor re-elected with a significantly increased parliamentary majority, Prime Minister Albanese spoke in support of the legislation at the United Nations General Assembly, where the policy was endorsed by European Commission President Ursula von der Leyen (Speers, 2025).

Timeline of developments for Australian Online Safety (Social Media Minimum Age) Act 2024.

In the next section of this article, we examine public opinion surveys on social media regulation, which can include age-based restrictions but also incorporate a range of other concerns. A clear message coming from these surveys is a strong community expectation that the Australian Government should ‘do something’ about the harmful influences of social media, particularly upon young people. When people are asked directly whether they are for or against such age restrictions, support is significant and apparently growing. But whether people would support different measures aimed at a similar goal is an open question.

Public opinion surveys

In order to identify how social media regulation has been framed in popular discourse, we have conducted a small-scale meta-analysis (Shelby and Vaske, 2008) of publicly available opinion poll and survey data conducted in Australia between January 2019 and October 2024, a period covering the 2019 and 2022 Australian federal elections, as well as early campaigning for the 2025 election. The criteria for inclusion were that a polling or survey question had to specifically focus on social media regulation, including questions asking:

- If greater social media regulation was needed;
- If so, who should be responsible for regulation (governments, companies, etc.); and
- If a specific regulatory approach was suitable or effective.

We excluded from the dataset questions that focused on the regulation of other digital technologies such as search engines, content streaming, and artificial intelligence, or which focused on social media usage or uptake. Questions that offered open text responses, or that provided unbalanced response options to respondents, were also excluded from the dataset. This led to a focus on surveys conducted by four organisations: Essential Media; YouGov; The Australia Institute; and Reset.Tech Australia. These organisations made their data and methodologies publicly available and have demonstrated a consistent engagement with questions of social media regulation throughout the 2019–2024 period.

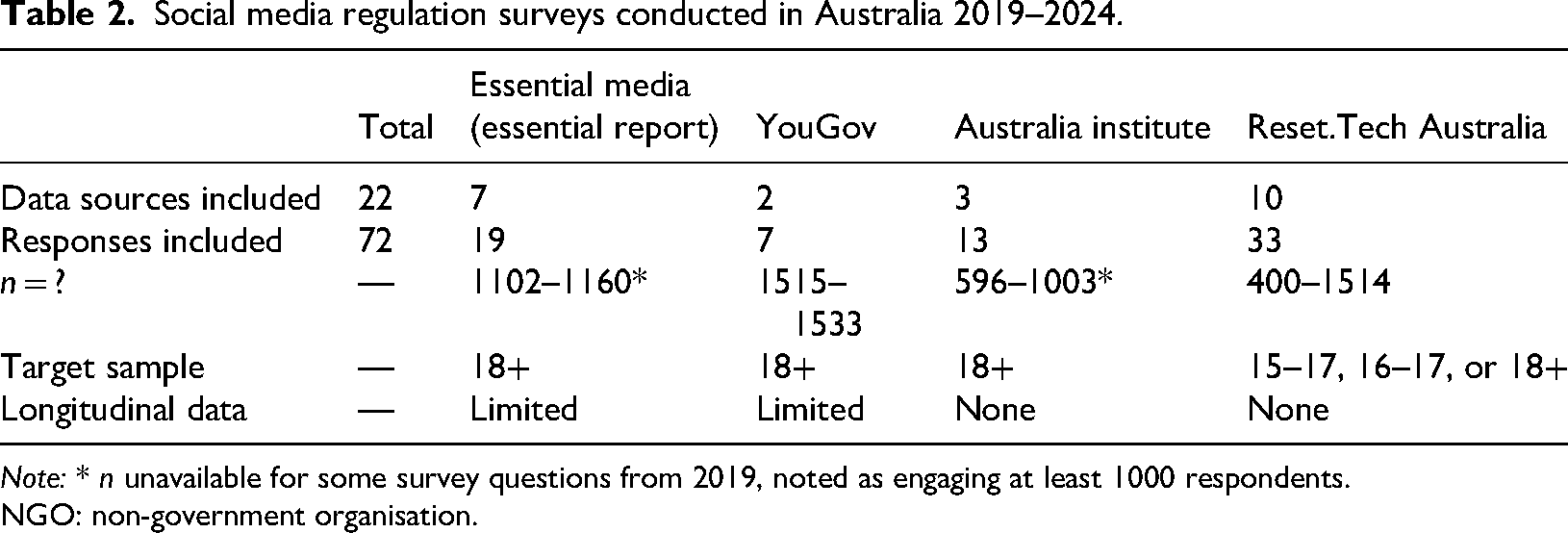

In total, these organisations published 22 reports that included survey questions meeting the inclusion criteria. Within these reports we initially identified 72 questions that met the criteria listed above: 19 from Essential Media's Essential Report, seven from reports published by YouGov, 13 from reports published by The Australia Institute, and 33 from Reset.Tech. Of these organisations, Reset.Tech was the only one to conduct research with teenagers aged 15 to 17. Of the four data sources, only Essential Report and YouGov included examples of longitudinal data, where near-identical questions were posed months or a year apart. The reports and questions included from each organisation are summarised in Table 2.

Social media regulation surveys conducted in Australia 2019–2024.

Note: * n unavailable for some survey questions from 2019, noted as engaging at least 1000 respondents.

NGO: non-government organisation.

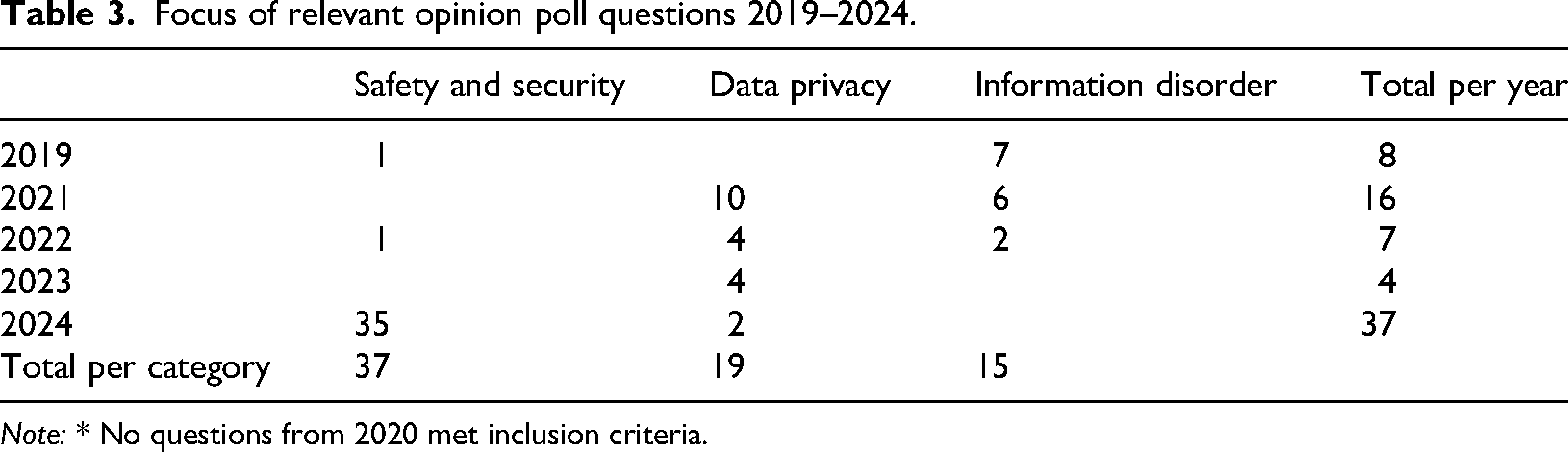

To conduct the meta-analysis, both the polling questions and the resulting responses were coded into categories and frames using thematic content analysis (Lune and Berg, 2017) to create a quantifiable dataset. Polling questions were first coded for topic of regulation, then for question framing, focus of regulation, target actor, and target audience. Responses to the included set of questions were also coded for the dominant position expressed in the survey result. Three principal topics of regulation were identified in the initial coding of polling questions: information disorder, data privacy, and safety and security, the last of which included questions relating to social media age restrictions. We found that of the 72 questions included in the initial analysis, more than half (n = 37) related to the topic of safety and security (Table 3). This coincides with increased media coverage and public debate within Australia at the time surrounding the then-proposed social media age restrictions, framed around protecting children from harmful content (Manfield, 2024; Middleton and Taylor, 2024). For this study, we have focused on these 37 safety and security related questions and responses.

Focus of relevant opinion poll questions 2019–2024.

Note: * No questions from 2020 met inclusion criteria.

As opinion polls and surveys aim to identify the public's position on a topic, questions were then coded for how they were framed to respondents, providing insight into how some of the ‘givens’, ‘goals’ and ‘operators’ have been scaffolded in what we have identified as the social media problem space. We identified three framing categories: problem salience, solution support, and solution effectiveness. Of the 37 questions included in the analysis of safety and security related polling only three were coded to the ‘goals’-oriented solution effectiveness category, all of which were included in polls conducted by Essential Media and related to the proposed social media age restrictions. More often, questions were framed as ‘operators’-oriented solution support (n = 23), with the remainder categorised as ‘givens’-oriented problem salience (n = 11).

To better understand how the ‘operators’ have been framed within opinion polling, questions were also coded by the type of regulation proposed and who would be most affected if it were to be introduced. This coding resulted in four primary categories: platform accountability; end-user criminal offence; end-user age restrictions; and platform ban. The overwhelming majority of questions were coded to the platform accountability category. Questions coded to this category asked respondents for their position on whether social media platforms were sufficiently regulated, or if further action was needed to ensure that their services did not directly or indirectly harm their users. A total of 23 out of the 37 questions were coded to this category, with seven of these explicitly referencing external regulators or new legislation. Although the majority of questions analysed fell under this category, it is notable that no such ‘operators’ were proposed by government during this period.

Questions coded as end-user criminal offence referenced proposed legislation that would criminalise specific actions that end users might engage in on social media platforms and accounted for five of the questions in the dataset. These questions referenced proposed legislation on issues such as doxing, posting sexualised deep fake images, and financial scams, with an anti-doxing bill passing both houses in 2024 (Privacy and Other Legislation Amendment Bill 2024, n.d.). The end-user age restrictions category included eight questions that directly related to the Australian government's then-proposed social media age restrictions, while one question was coded as platform ban: a question polling levels of support for a ban on TikTok similar to the one that had been proposed in the United States at the time.
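The coding counts reported above can be checked with simple arithmetic. The following is a minimal sketch only, not the authors' analysis code: the counts come from the text, while the variable and function names are hypothetical and chosen purely for illustration. It confirms that both the framing categories and the regulation-type categories account for all 37 safety and security questions, and expresses each regulation type as a share of that total.

```python
# Counts are taken from the text; all names below are illustrative assumptions.
SAFETY_QUESTIONS = 37

framing_counts = {
    "problem salience (givens)": 11,
    "solution support (operators)": 23,
    "solution effectiveness (goals)": 3,
}

operator_counts = {
    "platform accountability": 23,
    "end-user age restrictions": 8,
    "end-user criminal offence": 5,
    "platform ban": 1,
}


def is_partition(counts, expected_total):
    """True if the category counts add up to the expected number of questions."""
    return sum(counts.values()) == expected_total


def shares(counts):
    """Each category as a percentage of all coded questions, to one decimal place."""
    total = sum(counts.values())
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


framing_ok = is_partition(framing_counts, SAFETY_QUESTIONS)
operators_ok = is_partition(operator_counts, SAFETY_QUESTIONS)
operator_shares = shares(operator_counts)

print(framing_ok, operators_ok)
for label, pct in operator_shares.items():
    print(f"{label}: {pct}%")
```

On these figures, platform accountability questions make up roughly 62% of the safety and security questions, compared with roughly 22% for end-user age restrictions.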

The final stage of analysis was conducted on the responses to the questions included in the study, which were coded for the dominant position or dominant preference taken by respondents. Across the 37 polling questions reviewed, the majority of respondents surveyed supported greater regulation of social media regardless of how the question was framed or worded. For the most part, this support took the form of a strong majority, which we define as greater than 50% agreement with or support for an issue. This included polling published by YouGov just two days prior to the Australian Government passing the social media age restriction legislation in November 2024 that showed a significant increase in support for the bill (77% overall support) compared to their previous polling in August (61% overall support) (YouGov, 2024).
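The threshold used for coding responses can be expressed as a simple rule. The sketch below is illustrative rather than the procedure actually used in the study: the 50% cut-off and the two YouGov figures are from the text, while the function and variable names are hypothetical.

```python
STRONG_MAJORITY_THRESHOLD = 50  # per cent, the cut-off stated in the text


def is_strong_majority(support_pct):
    """True if support is strictly greater than the 50% threshold."""
    return support_pct > STRONG_MAJORITY_THRESHOLD


# YouGov (2024) overall support for the age restriction, as cited in the text.
yougov_support = {"August 2024": 61, "November 2024": 77}

change_in_points = yougov_support["November 2024"] - yougov_support["August 2024"]
all_strong = all(is_strong_majority(pct) for pct in yougov_support.values())

print(f"Change: {change_in_points} percentage points")
print(f"Strong majority in both polls: {all_strong}")
```

Because the comparison is strict, a result of exactly 50% would not count as a strong majority under this reading of the rule.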

As with many large-scale surveys, the questions included for analysis tended to be short, referred to general ideas, themes and issues around social media regulation, and did not ‘lead’ respondents in their wording (Rasinski, 2008). In addition, Essential Research (n.d.) has noted how opinion poll questions are typically formulated after a review of recent media coverage on topical issues. However, the notable emphasis within these opinion polls on ‘operators’ such as legislative proposals and other external regulatory actions suggests that the ‘givens’ and the ‘goals’ of the youth and social media governance problem space had taken a back seat to calls for action through laws and regulations, as well as registering dissatisfaction with social media platforms’ record on protecting their users from potentially harmful content. This suggests a top-down approach to agenda setting at play (Kleinnijenhuis and Rietberg, 1995), especially where politicians attempt to set the agenda through media releases and press conferences announcing their legislative proposals.

Review of submissions to Joint Select Committee on Social Media and Australian Society

One way in which we can get a higher-level understanding of different perspectives on policy questions is through document analysis of submissions to public inquiries. This allows for stakeholder analysis to understand how public opinion can aggregate upwards into the perspectives of organised groups or individuals sufficiently engaged with an issue to actively participate in such inquiries. Submissions to public inquiries provide a window into how and why different groups would approach a matter of public concern, with an eye to influencing relevant public policy. As a policy research method, Flew and Lim (2019) have noted that ‘stakeholder analysis conceives of society as a set of organised and competing interests and identifies the role of the state and policy-making institutions as one of reconciling these competing interests towards shared goals. Critical to stakeholder analysis is the importance of dialogue and deliberation: it is through the process of engaging others, and through listening to the voices of others, that shared perspectives on issues can be developed, that combine expert knowledge and group consensus’ (Flew and Lim, 2019: 541).

The Joint Select Committee on Social Media and Australian Society provided an opportunity to leverage these insights to better understand the givens, goals and operators behind the amendments to the Online Safety Act setting a minimum age for social media use. The Committee invited submissions during June 2024, and released its Final Report, Social Media: The good, the bad, and the ugly in November 2024 (Joint Select Committee on Social Media and Australian Society, 2024). The Committee's Terms of Reference invited submissions on five issues:

- (a) the use of age verification to protect Australian children from social media;
- (b) the decision of Meta to abandon deals under the News Media Bargaining Code;
- (c) the important role of Australian journalism, news and public interest media in countering mis and disinformation on digital platforms;
- (d) the algorithms, recommender systems and corporate decision making of digital platforms in influencing what Australians see, and the impacts of this on mental health; and
- (e) other issues in relation to harmful or illegal content disseminated over social media, including scams, age-restricted content, child sexual abuse and violent extremist material.

The Joint Select Committee received 217 submissions in total over this period, from 200 organisations.

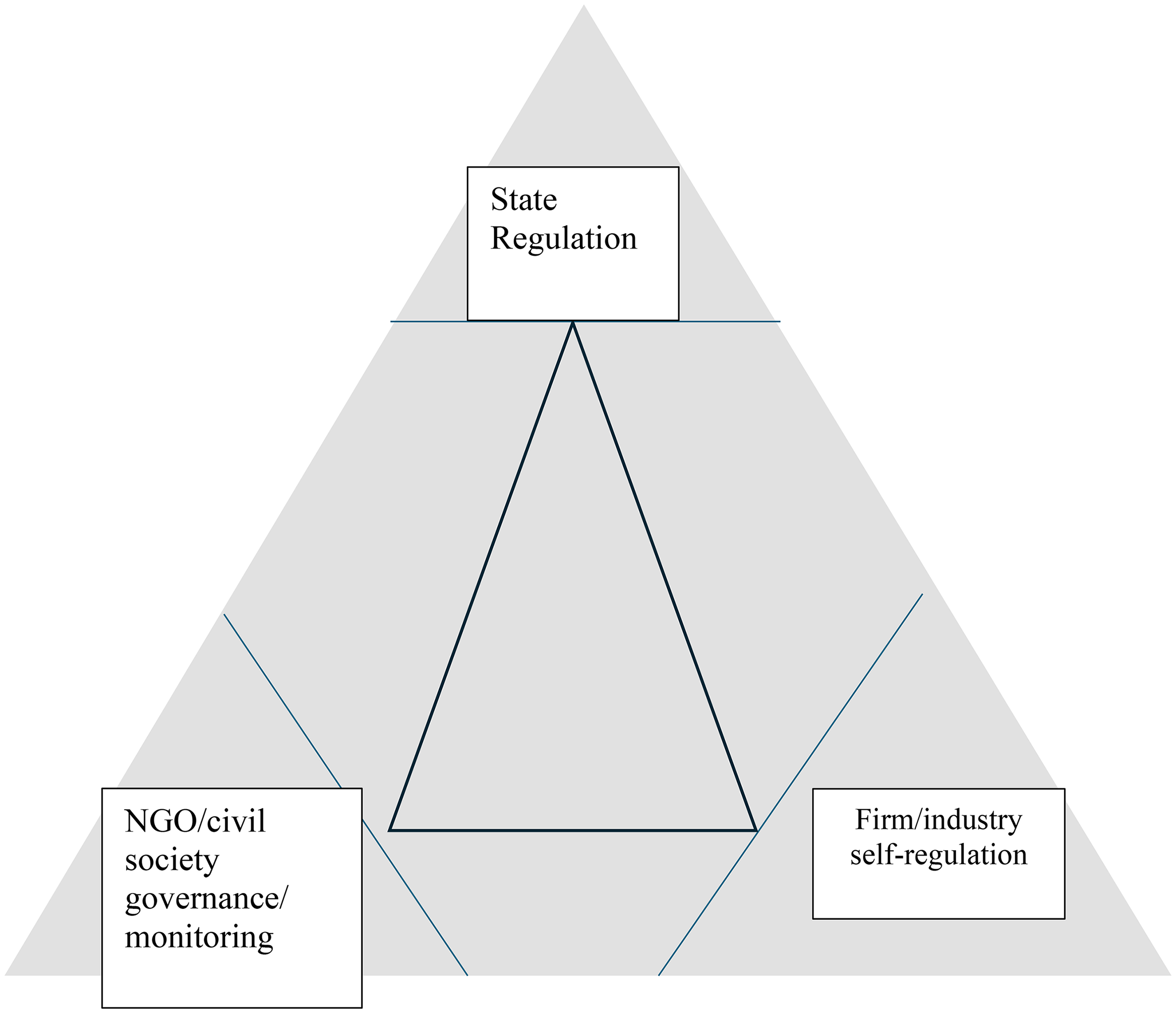

In order to develop a taxonomy to analyse these submissions, we drew upon Robert Gorwa's (2019) platform governance triangle (see Figure 1). Gorwa identified the state, the firm and NGOs as the three ‘nodal points’ of platform governance and sought to place different governance models within this tripartite arrangement. Traditional state regulation based on parliamentary legislation and oversight by government agencies sits at the top of this triangle, while firm and/or industry self-regulation sits at the bottom right. NGOs and civil society groups sit at the bottom left of the triangle. The triangle is designed to capture hybrid forms of governance, as they are common in the tech sector (e.g. state-firm co-regulation, or multistakeholder governance models that make civil society an actor). But the governance triangle also provides a way of mapping the various stakeholders to public inquiries across the state-firm-NGO triangle, while noting that other actors are significant in such engagements, including individual contributors and academics. The latter are broadly within civil society, although their inputs are typically distinct from those of NGOs.

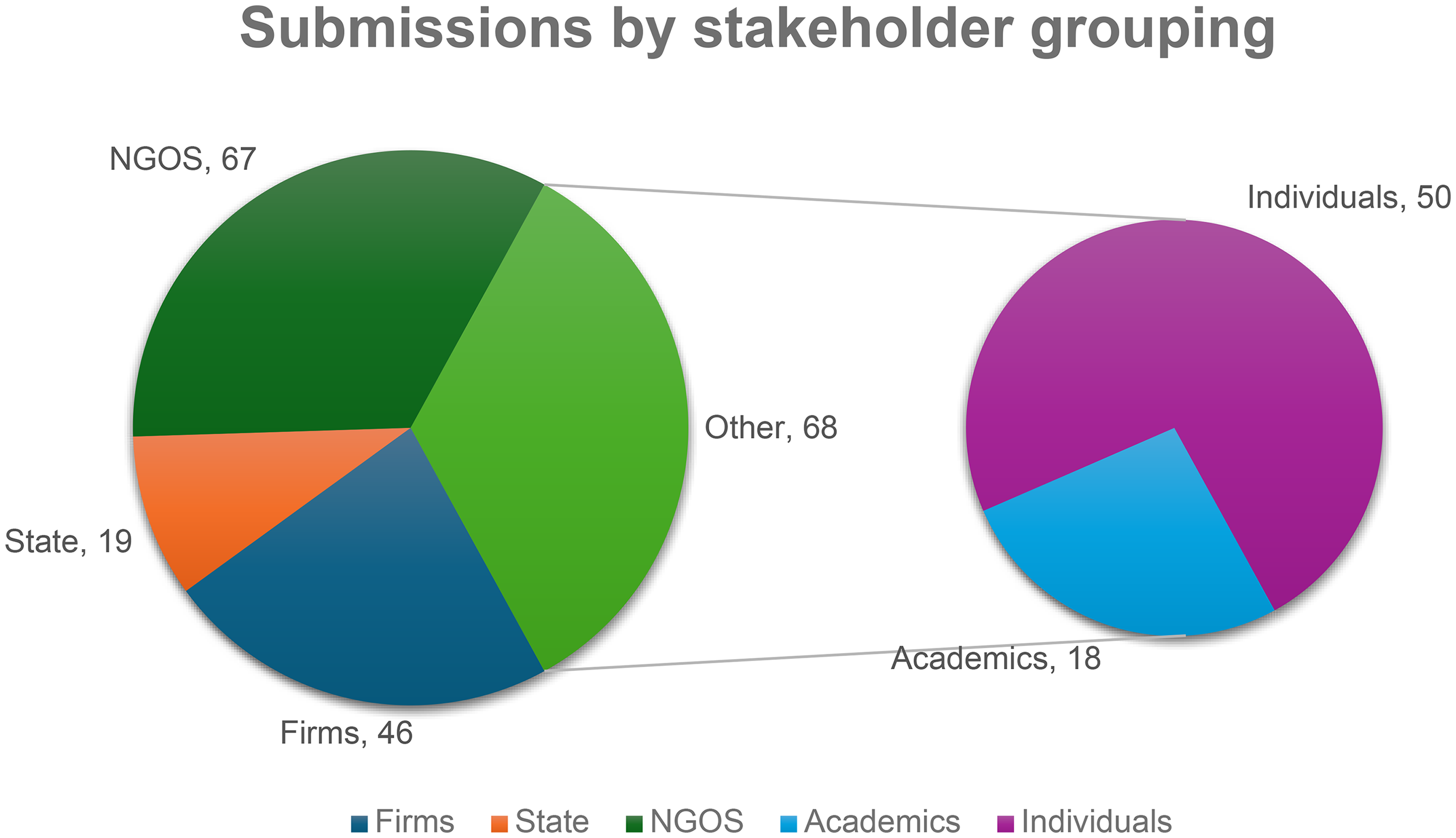

There was an identifiable relationship between the views in each submission and the positioning of the contributors in terms of Gorwa's platform governance triangle. In this case, firms included platform companies, media companies, industry associations as well as other tech companies and companies that are sector-adjacent, such as legal firms representing corporate interests. State actors included agencies with direct responsibility for social media legislation such as the Australian Communications and Media Authority and the eSafety Commissioner, state governments, organisations such as the Attorney-General's Department and the Australian Human Rights Commission, and individual parliamentarians such as Allegra Spender MP. NGOs, one of the largest groupings of submission contributors, comprised a wide range of actors from inside and outside of Australia, and included tech policy and advocacy groups such as Digital Rights Watch and Reset.Tech Australia, youth groups, mental health advocacy groups, groups associated with particular illnesses such as eating disorders, and religious organisations. We found that this taxonomy could account for about two-thirds (66%) of overall submissions, as shown in Figure 2. There were also a large number of other submitters who claimed no affiliation with any of these larger groupings. This group included academics and universities, as well as individual citizens. Finally, 10 contributors submitted attachments that consisted of reference materials, letters of support, or alternative file types; these attachments were coded alongside the authors’ original submission unless they were explicitly flagged as the contribution of another organisation.

Gorwa's platform governance triangle.

Breakdown of submissions to the joint select committee by stakeholder grouping.

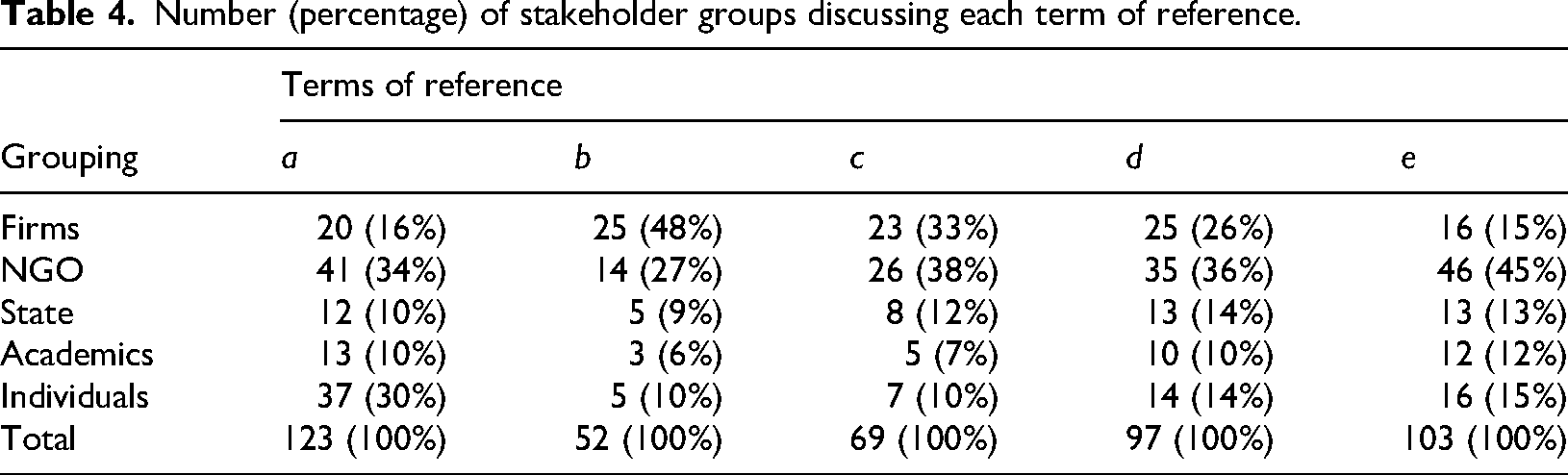

A preliminary investigation of the submissions sought to identify the salience of safety and security risks – particularly among young users – across the collection of submissions, in order to find how they provided support for or argument against age restrictions. An important point to be noted is that not all submissions were engaged, or primarily engaged, with the question of age restrictions on social media. While it was the first of the terms of reference, submission authors touched at various points on all of them, with some categorically flagging a contribution to each item. There was a significant divergence depending on their relative position in the governance triangle (see Table 4). Issues related to the future of the News Media Bargaining Code (b) and the role of journalism in countering misinformation (c) were largely engaged with by firms, whereas the vast majority of individuals and academics raised age verification as a key issue.

Number (percentage) of stakeholder groups discussing each term of reference.

However, reading these results through Lury's problem spaces framework (2021) reveals how these different stakeholders can substantially converge on shared ‘givens’ and ‘goals’, yet diverge on the ‘operators’ or policy solutions proposed. For instance, for those submissions raising concerns about safety and security, there was a broad agreement about the overarching ‘given’ of the problem space: 82% of all submission authors expressed or reported concern about social media being a site of risk for safety and security, and this was the case across all submission categories. The claims that underpinned this framing were diverse, with issues flagged including abuse, fraud, bullying, sexual exploitation, disinformation, and other harmful impacts. These were seen as not only having implications for young people but also the elderly and the broader population. Some submissions discussed both the harms and benefits of social media.

Individual citizens were most likely to raise social media as a cause for harms such as suicide and fatal eating disorders, as well as a facilitator of bullying and image-based abuse. Statements claiming that ‘Vulnerable students are easily found and linked into criminal or radical groups because they find a sense of belonging that is not easily accessed at school or in their family’ (Anonymous, 2024b) were indicative of such framing. Platform companies generally acknowledged the existence of risks but were more likely to attribute social problems to other factors. For example, the industry group DiGi argued that ‘Online harms are multi-faceted social problems that cannot be fixed with technical and legal safeguards alone’ (Digital Industry Group Inc., 2024). NGOs, state, and academic contributions tended to focus specifically on establishing givens of harm or benefit through providing data, often through statistics and surveys including those discussed earlier in this article. While all stakeholder groups shared a perception that social media was a vehicle for harms for young users, the basis and subsequent severity of the claim was contested, particularly by firms in industries potentially affected by new legislation.

Conversely, defining the ‘goal’ of the problem space saw broad and specific agreement. Whether submissions were from firms, NGOs, state, academics or other citizens, mitigating harm and protecting users featured extensively across submissions. Even the specific language overlapped, with 67 submissions employing variations of the phrase ‘protect Australian children’. While this phrasing could be reflecting the first term of reference, more submissions described alternative but overlapping goals. These include ‘counter online child exploitation and abuse’ (Attorney-General's Department (Australia), 2024), creating safer online spaces, and keeping children safe, which share a goal of mitigating or preventing the givens of social media harms and vulnerable youth. Even submissions that do not describe particular online harms or negative impacts for youth posit a similar goal: ‘We are committed to being part of the solution for online safety’ (Snap Inc., 2024). Differences in the details and evidence behind submissions’ givens generally did not result in divergent goals.

Where there was agreement on the goals of the problem space as well as some shared sense of its givens, the ‘operators’ or proposed policy solutions were a site of considerable contestation, particularly in relation to the grouping of submissions’ authors. Notably, the Committee proposed its own solution through the Terms of Reference inquiring about ‘the use of age verification to protect Australian children from social media’ (Joint Select Committee on Social Media and Australian Society, 2024), which acted to frame subsequent contributions. However, there was no requirement that submissions follow the Committee's guidance, and contributors presented a variety of concerns: 123 submissions (61.5%) discussed age verification, age limit, or age assurance policies, practices, or approaches (Terms of reference 1), with 74 submissions (37%) indicating their support. The 49 submissions (24.5%) that stated opposition to an age-based restriction proposed other policy measures, including investing in digital media literacy, greater content moderation, and measures to promote greater platform accountability such as a ‘digital duty of care’.

Support for age verification restrictions tended to relate to where the authors were positioned in Gorwa's platform governance triangle. In particular, the proposals of NGO and firm contributors diverged substantively from those of individual citizens. Of those that discussed age-based restrictions, only 16% of submissions came from firms, and of these no platforms supported this proposal. By contrast, 30% of submissions on this topic came from individuals and, of these, 84% identified age-based restrictions as their preferred policy response. Their support could be unambiguous and urgent: ‘Let's start moving towards healing our families and protecting the generations ahead, any action is better than nothing’ (Anonymous, 2024a). By contrast, the submissions arguing against the approach framed it as being ineffective, insufficient, or without a sufficient basis of evidence. These submissions set a high bar for such regulation, particularly that it not generate unintended consequences: ‘we ask that full and proper consideration is given to ensure that any future regulations do not have unintended consequences’ (NSW Service for the Treatment and Rehabilitation of Torture and Trauma Survivors, 2024).

Overall, the submissions demonstrate how the problem space behind social media platforms and age restrictions is shaped less by differences in defining the problems or the goals, and more by competing perspectives among divergent stakeholders about the proposed solutions. For supporters of the restrictions, particularly individual citizens, unforeseeable consequences are an acceptable sacrifice to protect youth and mitigate harms arising from social media platforms depicted as an open channel of risk. For critics of the proposed restrictions, unintended consequences (e.g. privacy implications) and children's rights are the principal reasons given as to why this course of action should not be undertaken, with content moderation and media literacy programmes presented as alternatives (Australian Child Rights Taskforce, 2024; Bruns and Rodriguez, 2024; Dawkins and Farthing, 2024; Meese et al., 2024). NGO, state and academic submissions provide evidence on both sides of the ledger, although a small majority (60%) support the proposal. These results align with the results of the surveys in depicting a public seeking action, but they also indicate that public demand is just one force shaping the problem space driving the debate.

Conclusion

The case of Australia's Online Safety Amendment (Social Media Minimum Age) Bill 2024 provides a compelling and timely insight into the complexities of digital policy formation and social media governance in the 21st century. Rather than representing a straightforward response to empirical evidence or even a consensus within expert or public opinion, the legislation instead illustrates how social concerns are shaped, negotiated, and operationalised within what Celia Lury describes as a ‘problem space’ – a dynamic terrain formed through the interaction of ‘givens’, ‘goals’, and ‘operators’. In this problem space, the question of whether to set restrictions on social media access for those under the age of 16 emerged not merely as a matter of technical feasibility or risk assessment, but as a deeply politicised process reflecting broader transformations in public trust, governance, technological power, and the responsibilities of the state. In particular, the repeated failure of industry self-regulation and the perceived lack of social responsibility on the part of major platform companies came up against the question of whether young people have inherent rights to access social media and whether such access is a net positive for them.

At the heart of the legislative momentum lies a convergence of societal anxieties. These include escalating mental health challenges among young people, declining trust in global technology companies, and a perceived failure of self-regulation in mitigating online harms. As our analysis of opinion polling data indicates, a clear majority of Australians are supportive of increased regulatory measures targeting social media platforms, particularly when framed around the imperative of protecting children. However, such support is often broad and unspecific and is expressed more as a demand for government action to ‘do something’ than a clear endorsement of any policy mechanism. This gap between popular sentiment and legislative precision helps explain why the debate around the Online Safety Amendment (Social Media Minimum Age) Act has been marked by contention and division.

While there is a shared perception across most stakeholder groups that social media can pose significant risks to young users, this consensus dissolves when discussing how these harms should be addressed. The ‘givens’ – mental health deterioration, online exploitation, disinformation, and addictive design – are acknowledged across a range of stakeholders, as are the ‘goals’ of enhancing child safety in online environments. However, the ‘operators’ – the specific interventions proposed – are the site of fierce dispute. For some, banning access below a particular age threshold offers a clear, enforceable response that demonstrates leadership and empowers parents. For others, such a measure is blunt, potentially counterproductive, and reflective of a broader trend of reactive policymaking that fails to adequately balance harm mitigation with children's rights to participation, expression, and digital literacy.

Indeed, the legislation appears emblematic of a broader shift in governance models. Australia's digital policy posture has until recently leaned towards platform self-regulation, with institutions like the Office of the eSafety Commissioner intended to facilitate safer digital environments through collaboration and best practices. The turn towards legislated age-based restrictions on social media access signals a reversal of this trend – a reassertion of sovereign legislative power reminiscent of broadcast-era models of content control. This shift can be seen as a response to what Daniela Stockmann has termed a ‘regulatory deficit’, in which self-regulatory frameworks fail to keep pace with the societal impacts of rapidly evolving digital technologies (Stockmann, 2023). It is perhaps notable that the response of governments outside of the United States to the Australian proposal has been largely positive.

Our review of submissions to the Joint Select Committee on Social Media and Australian Society identifies a spectrum of views, from those demanding urgent action to those more critical of restrictions on young people's access to social media. Individual citizens often expressed deep frustration and concern about youth exposure to harmful online content, frequently supporting age restrictions as a necessary, if imperfect, protective measure. Conversely, NGOs, academics, and some state actors expressed hesitance about the bluntness of the legislative proposal. The diversity of these perspectives illustrates a central tension in contemporary digital policy: how to regulate effectively in the absence of full consensus, perfect evidence, or clearly demarcated authority. Digital platforms are transnational, agile, and opaque, often evading the slow-moving mechanisms of national law. The social effects of their products – especially in terms of child development and mental health – are difficult to isolate from broader socio-economic and cultural trends. Yet policymakers are under immense pressure to act swiftly and visibly, especially in response to public concern amplified through media coverage, advocacy campaigns, and high-profile incidents.

As the discussion continues, and as similar legislative responses are considered in other jurisdictions such as Denmark, France, New Zealand and the European Union, there are risks across both sides of the current policy divide. For those advocating for age-based restrictions on access to social media, the risks associated with unintended consequences are considerable. Age verification measures may be inaccurate or ineffectual, running the risk of widespread non-compliance with the law, or negative publicity that will attract criticism of the government. Young people blocked from platforms such as Facebook, Instagram and TikTok may move to less regulated spaces where the risks are potentially greater. The belated inclusion of YouTube in the list of sites to which access would be restricted indicates the difficulties in clearly defining what constitutes ‘social media’ (McGuire and Smith, 2025), as does the question of whether gaming platforms such as Roblox may be included.

The nature of the policy debate has also revealed challenges for the critics of such legislation. One obvious issue is how to distance their own critiques of such proposals from those of the tech companies themselves, the U.S. government under Donald Trump, or advocates of a ‘libertarian Internet’ who see questions of what young people access online as largely matters for parents to address rather than tech companies or the state. It is important to note that critics of social media age restrictions in Australia have presented alternatives. For instance, Bruns and Rodriguez proposed that ‘Instead of attempting to legislate how to exclude children from social media, efforts should be going towards incentivising and regulating tech companies into developing high-quality digital experiences for children’ (Bruns and Rodriguez, 2024). We have noted how the Australian eSafety Commissioner has promoted ‘Safety by Design’ principles for platform developers to adopt that can provide guidance to tech companies on best practices for designing children's digital experiences. The independent review of the Online Safety Act 2021, conducted by Delia Rickard PSM, a former Deputy Chair of the Australian Competition and Consumer Commission, proposed a ‘digital duty of care’ that would legally require online platforms, such as social media companies, to take reasonable steps to protect users from foreseeable harms.

These various proposals offer a ‘third way’ for social media governance between the limited platform self-regulatory measures and state intervention. They are not necessarily mutually exclusive with social media age restrictions. But by themselves, they run the risk of being overly reliant upon the goodwill and sense of social responsibility of the companies themselves (Flew, 2022). It is also apparent that major U.S. tech giants such as Apple, Meta and Alphabet are viewing fines issued by regulators as part of the costs of doing business in particular jurisdictions, such as the European Union, while X (formerly Twitter) is simply refusing to pay many of the fines that have been issued to it. In the wake of the re-election of President Donald Trump in November 2024, U.S. tech companies have also become increasingly assertive about legislation in other countries that they view as discriminatory towards their operations, and have been strongly lobbying the Trump Administration to use the lever of trade tariffs to eliminate such perceived barriers to services trade (Koster, 2024).

This article has drawn upon Celia Lury's concept of problem spaces to understand the complexities and potential contradictions of policy formation around social media governance, using the public debate around Australia's Online Safety Amendment (Social Media Minimum Age) Act 2024 as a case study. Through analysis of public opinion surveys on the topic, and stakeholder submissions to the 2024 Joint Select Committee on Social Media and Australian Society, we found general agreement on the ‘givens’ of the issue as being the potential of online environments to present risks and the potential for harms for young people, and the ‘goal’ of reducing such risks and potential harms through some form of government action. The question of ‘operators’ is far more complex and contested. Most participants in this debate, other than the tech companies themselves, view firm or industry self-regulation as being limited at best, and a form of ‘counter-regulation’ at worst. Our evidence indicates that, while age-based restrictions generate controversy and contention among experts, such policies also have considerable public support. Their emergence on legislative agendas marks the degree to which public policy towards digital platforms has been shifting in the direction of more direct nation-state regulation since the late 2010s.

Footnotes

Acknowledgements

We would like to acknowledge the support of the Mediated Trust ARC Laureate team, including Wenjia Tang, Louisa Shen, Rob Nicholls and Justin Miller.

Ethical approval and informed consent statements

Research on the Mediated Trust project is approved by The University of Sydney Human Research Ethics Committee Project Identifier: 2024/HE001049.

Funding

This research has been supported by the Australian Research Council through the Laureate Fellowship programme (Grant No. FT230100075).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The research in this article is derived from publicly available data sources indicated in the paper.