Abstract

This study draws on several data activism projects and applies discursive interface analysis to understand the material means by which activist software strives to empower users vis-à-vis data power. The analysis uncovers four types of oppositional affordances: (i) enabling the use of hidden affordances, (ii) imagining new affordances, (iii) creating meta-affordances (resignifying perceptible affordances of corporate platforms and reconstructing their meaning), and (iv) creating anti-affordances (hindering or distorting corporate platforms’ affordances to the extent that they do not perform their intended function). Although not without limitations, oppositional affordances reveal the actual agentic possibilities of data activism for users other than activists to affect the very algorithms that produce them as datafied subjects. The proposed typology provides a means for further empirical analysis of critical software and its subversive potential for users. The article concludes with a critical discussion of data activism as a means of vernacular critical praxis.

Introduction: The emerging practice of data activism

Remember ‘Google bombing’? Back in the 2000s, internet users were witnessing the at times amusing effects of a tactic that took advantage of the Google search algorithm to make a –usually– political statement, such as typing ‘miserable failure’ to get linked to the biography of George W. Bush. Google bombing was based on the discovery that with enough links any site could be pushed up to the top of the search results, linking a particular word or phrase with a particular site, even if the search term did not exist on that page. Retrospectively, Google bombing can be seen as an act of data activism, understood as engagement ‘in politically motivated use of technical expertise in view of fixing society through software and online action’ (Milan and Van der Velden, 2016: 60).

Since then, algorithmic media have grown rapidly in sophistication and reach, peaking with social media and big data applications that ‘constitute and enact the everyday’ (Willson, 2017: 139). Data mining techniques in social media are ubiquitous, turning many aspects of users’ social life into algorithmic relations, while proprietary algorithms assume decision-making power over users. This has given rise to concerns that we are ushered into an emerging order of ‘data colonialism’ that appropriates human life itself (Couldry and Mejias, 2019) and a ‘soft’ biopolitical mode of control (Cheney-Lippold, 2017). Rieder (2016: 40) highlights the epistemic nature of big data practices, which function as ‘empiricism on steroids’ and enable fully automated decisions in the market and governance that are endowed with economic appeal and social –even ethical– desirability. Critical accounts of algorithmic power reflect on how top-down datafication typically corresponds to a loss of human agency (Rieder, 2016), due not only to algorithms’ opacity but also to their ‘a-semiotic’ and instant operation (McKelvey, 2014). User subjectivities created by algorithmic media tend to be subordinate to data power (Gehl, 2014), unless new mediators ‘translate their operations into something publicly tangible’ (McKelvey, 2014: 597). Data activists attempt to do just that: assume the role of the intermediary to ‘support the agency of datafied publics’ (Baack, 2015: 1) and create ‘counter-imaginaries of datafication’ (Kazansky and Milan, 2021).

Data activism, as a grassroots response to (top-down) datafication, pursues practices such as obfuscation and encryption to resist corporate or state surveillance and employs campaigns, trainings and software to strengthen the agency of datafied citizens. Existing studies have developed valuable descriptive accounts and typologies of data activism (e.g. Brunton and Nissenbaum, 2015) and have explored its alternative epistemologies (e.g. Milan and Van der Velden, 2016). Yet, excluding some recent studies (e.g. Kazansky and Milan, 2021), a focus on subversive software itself is comparatively underdeveloped, empirically and theoretically. This is precisely the focus of our inquiry: to zoom in on the software created by data activism practices that make ‘sense of data as a way of knowing the world […] turning it into a point of intervention’ by creating ‘counter-discourses and data countercultures’ (Kazansky and Milan, 2021: 63–64).

This article combines the fields of data activism and affordance theory, and engages in a sociotechnical analysis of data activist software to understand the material means by which data activism strives to empower users vis-à-vis data power. Having briefly introduced the emerging practice of data activism, we focus on affordance theory to situate our analysis, reviewing latest approaches and suggesting further nuances. The empirical part of the study draws on a diverse set of projects to explore the following question: what kind of affordances do data activists provide everyday users and how do these affordances mediate the perception and experience of corporate platforms? In other words, how does data activism software attempt to empower users and render corporate platforms permeable to user agency?

Affordances and user agency

Untangling data power and user agency requires moving ‘constantly between the technical and the social’, ‘the inside and the outside of technical objects’ (Akrich, 1992: 206). In algorithmic media, the relation between the material and the symbolic is profoundly reconfigured, as the meaning of data occurs not semantically or through representation but primarily through data processing (Couldry and Powell, 2014; Milan, 2015a). Affordance theory is a constructive entry point for tapping into the action possibilities enshrined in data activism by exploring the interconnection between material and symbolic resources. Gibson (2014/1979) first referred to affordances as means of action within the field of psychology, while Norman (1999), within design studies, posited that 'affordance refers to the perceived and actual properties of the thing […] that determine just how the thing could possibly be used' (1999: 9). Our theoretical understanding of affordances is grounded in these definitions, while also developing them further to respond to the needs of this study. The concept of affordances is a good entry point into the making of data activism because it offers the means (symbolic and material) to understand the normalities of technologies through the study of their designed properties and functionalities. Following Bucher and Helmond (2018: 242), when it comes to data activism the concept of affordances can reveal communicative actions and 'can best be studied in and through the kinds of practices that technology allows for or constraints'.

In conceptualizing affordances, we draw primarily on Hutchby (2001), who offers a conception of affordances as aspects of artefacts that ‘frame, while not determining, the possibilities for agentic action’ (p. 444). The notion of affordance accounts for the possibilities technologies offer the people who use them. On the one hand, affordances are functional in that they ‘shape the conditions of possibility associated with an action: it may be possible to do it one way, but not another’ (Hutchby, 2001: 448). In this sense, they are properties of artefacts, often conceived as constructed and materialized by designers. Designed or ‘structural’ affordances (boyd, 2011) shape digital architectures and dictate particular uses, as they bypass processes of reflection, and construct specific user subjectivities. As Akrich (1992: 208) explains, designers ‘define actors with specific tastes, competences, motives, aspirations, political prejudices, and the rest […] “inscribing” [their] vision of (or prediction about) the world in the technical content of the new object’. Hutchby claims that this conception of affordances allows us to challenge the (radical) constructionist thesis that artefacts are fully open for interpretation and are ‘given meaning and structure through actors’ interpretations and negotiations’ solely (2001: 450). Affordances do not predetermine human action, yet they ‘do set limits on what it is possible to do with, around, or via the artefact’ (Hutchby, 2001: 453). More importantly, this approach allows a shift of the analytic gaze from representations of and discourses around technologies to their material aspects.

At the same time, affordances are also relational, which means that possibilities of action are not immutable and identical for all users (Shaw, 2017) but may differ according to how different users perceive them and position themselves toward them – what Nagy and Neff (2015) call ‘imagined affordances’. The relational quality of affordances is exemplified if we consider that platforms encode different action possibilities for different kinds of users (Ettlinger, 2018). For instance, Facebook's ‘Like’ enables ordinary users to express emotions and opinions, share information, indicate support etc. At the same time, it enables Facebook (owners) to extend its data collection practices to external websites. It also affords marketers access to targeted advertising based on the information collected from users (Bucher and Helmond, 2018). Facebook ‘Likes’ are also used by developers to build applications and new services on top of Facebook, such as the dating app Tinder (Helmond, 2015).

The notion of ‘imagined affordances’ opens up a host of possibilities with regard to user agency in terms of practices or uses. Shaw (2017) moves in this direction by connecting affordance theory to Stuart Hall's encoding/decoding model (Hall, 1980/2006). Approaching technology and artefacts as text and reading positions as uses, she identifies dominant or hegemonic uses when users use artefacts ‘correctly’, namely when they use the perceived affordances in ways that conform with the designers’ intended uses. Oppositional uses are unexpected, unintended or ‘wrong’ uses, in the sense that users devise affordances that designers had not thought of or exploit existing but imperceptible affordances.

Although the recent but prolific field of (critical) data studies lays bare the harms of datafication, the study of data activism and especially its critical software (Fuller, 2003) is comparatively underdeveloped, empirically and theoretically. The current analysis responds to Fuller’s (2003) call for understanding the 'materiality of software' and theorizing how particular activist software creations produce certain effects and models of user subjectivity (Fuller, 2008). This critical approach is concerned less with the functionalities of software than with the elements that software consists of and the ways these are embedded in a dynamic web of relations. Fuller (2003) further explains that critical software has two key modes of operation: first, it uses 'the evidence presented by normalized software to construct an arrangement of the objects, protocols, statements, dynamics, and sequences of interaction that allow its conditions of truth to become manifest' (2003: 23); second, it exists 'in its various instances of software that runs just like a normal application, but has been fundamentally twisted to reveal the underlying condition of the user, the way programmes treat data, and the transduction and coding processes of the interface' (ibid). The work of Fuller is valuable because it makes apparent the processes of technological normalization operating at many scales within different software products. Within this tradition, Andersen and Pold (2018) examine the relationships between art and computer interfaces, pointing out the interface's disruption of everyday cultural practices. Through their work, they present a new interface paradigm with cloud services, smartphones, and data capture, by examining how particular software art forms seek to reflect this paradigm. In their book The Metainterface, the authors argue that there exists a metainterface to the displaced interface, which can be described as a form of ‘semantic capitalism’ of a metainterface industry that captures user behaviour. This metainterface can disrupt everyday urban life, changing how the city is organized but also how the cloud affects the experience of the interface. In the same vein, van der Vlist and Helmond (2017), inspired by the work of Fuller (2003), point towards an analysis of Data Selfie, arguing that such activists’ creations tend to confront users with social media companies’ practices of data collection, calculation, and potential profiling by means of speculative data awareness.

Data activism shares many features with other concepts such as tactical media (Garcia and Lovink, 1997), which builds on De Certeau's (2014/1984) theory of people's tactical responses to everyday texts and technologies to resist, without escaping, repressive realities. Like tactical media, data activism projects, which work with new materials ('data'), are 'always in becoming, performative and pragmatic, involved in a continual process of questioning the premises of the channels they work with' (Garcia and Lovink, 1997: n.p.). What is different is the focus on the relation between the creators of data activism projects and their users. In tactical media, users of mainstream texts/technologies perform acts of resistance by engaging in tactical uses of given texts/artefacts. In data activism, data activists create software to offer lay users opportunities for oppositional action. These opportunities or interventions can be qualified as forms of 'antagonistic algorithmic media practices,' which 'tactically subvert, manipulate, or obviate extant power relations' (Heemsbergen, Trere and Pereira, 2021). Data activism practices are inscribed within critical algorithm studies as forms of political and social resistance towards how algorithms are created, used, and imagined (ibid). What exactly do these creations enable lay users to do? This is the question that we wish to explore; to this end, the concept of affordances can shed light on the actual potentialities (and limits) of activist software.

Other existing studies of data activism have developed useful descriptive accounts and typologies (Brunton and Nissenbaum, 2015) and have explored its alternative epistemologies (Milan and van der Velden, 2016). The concept of affordances, as laid out above, can be extended beyond analysing the restrictions of certain artefacts, as critical software studies typically do, to explore the possibilities for action crafted by activist software. Against this background, a host of questions spring up. How do oppositional uses come about? How does data activism software mediate the perception and use of corporate platforms’ affordances? As we will argue below, data activists intervene at the material substratum by creating oppositional affordances for ‘ordinary’ users.

Research questions and methodology

Our empirical inquiry poses one main research question: what kind of affordances do data activists provide users and how do these affordances mediate the perception and experience of corporate platforms and technologies? This question aims at exploring the ways in which data activism attempts to empower users and render corporate platforms permeable to user agency.

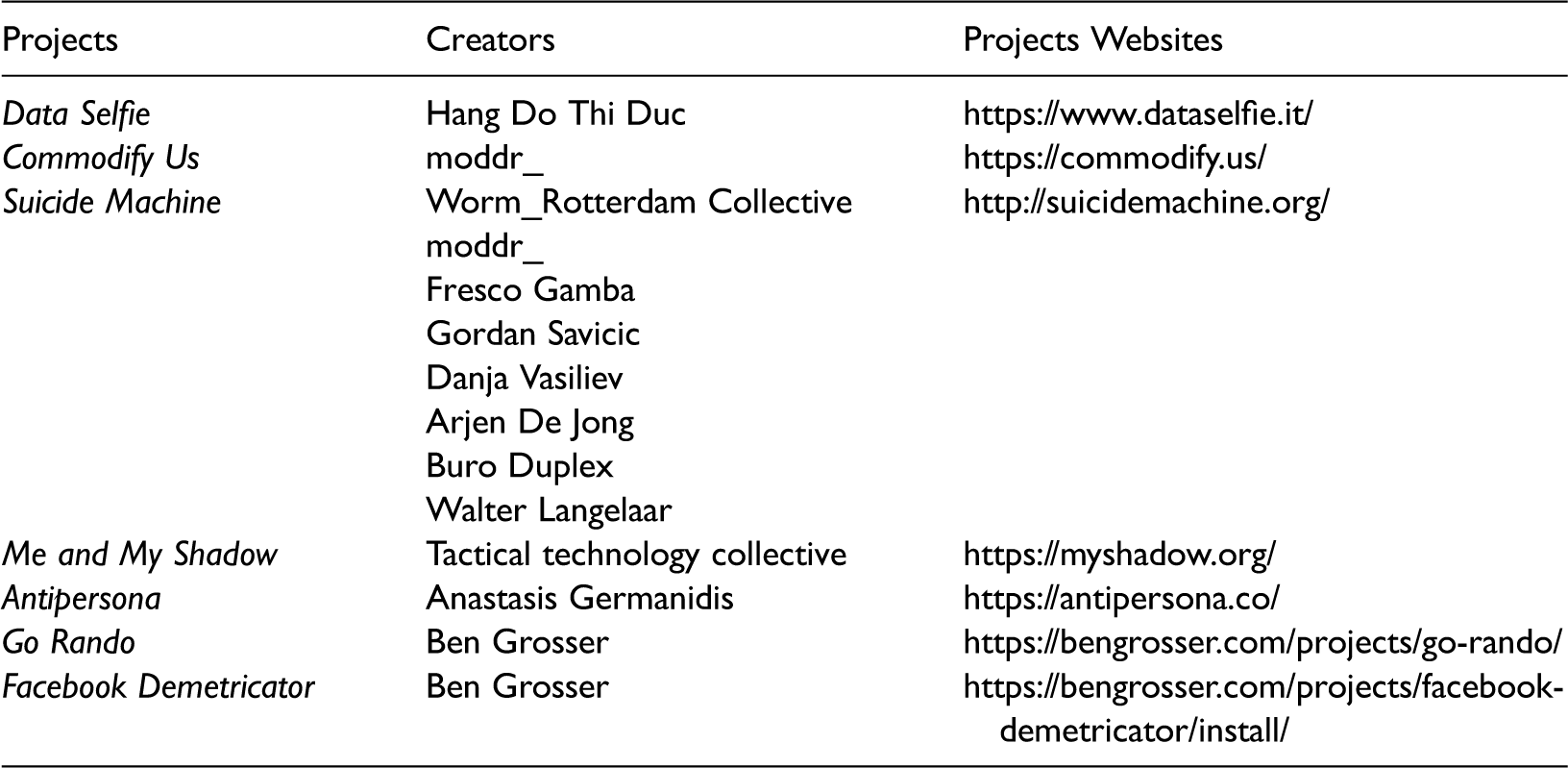

To explore this question, we analysed several data activism projects which we selected by purposive sampling. First, an initial list of projects was compiled through general search engines (May 2016 – December 2018 1 ) using key phrases such as ‘data activism’, ‘data activism applications’, ‘social media applications’, ‘data awareness’. These searches yielded links to websites of applications and projects. This initial list was reviewed to acquire prior knowledge of the phenomenon under study (Rapley, 2013). To limit the number of cases, three explicit criteria were set. First, that they included the use of software (hence, solely informational material was not selected) 2 ; second, that their description made explicit reference to the most prominent corporate platforms (e.g. Facebook, Twitter, Google) or technologies (e.g. face recognition systems) or that their use required use of these platforms; third, that they explicitly challenged corporate social media by referring to key issues related to ‘data politics’ such as privacy, surveillance, data governance, algorithmic selection of content, filter bubble etc. The final sample includes seven projects, which were deemed as ‘information-rich’ cases (Rapley, 2013) that reflect well the diversity of relevant applications and thus serve the purpose of this exploratory study aiming at discovering theoretical concepts about the phenomenon under study (Glaser and Strauss, 1967). The seven projects are: Data Selfie, Commodify Us, Suicide Machine, Me and My Shadow, Antipersona, Go Rando, and Facebook Demetricator (see Table 1). After arriving at a typology through the analysis of these cases, additional examples of similar projects (some of them very recent) were identified and analysed to broaden the scope of the study (Syb, ADPC, Zizi – Queering the Dataset, Instawareness, Tracking Exposed, This Person Does Not Exist, TrackMeNot, Fawkes).

To briefly introduce the initial seven projects, Data Selfie, developed by a team of designers, artists, researchers, and coders, is a browser extension that tracks Facebook use and reveals to users the data traces they leave behind and the inferences that can be made about users’ interactions, habits, interests, personality predictions and so on 3 . Commodify Us, created by the moddr_ media/hacker team, is a web application that allows users to license and sell their Facebook data directly to marketers. Moddr_ also created Web 2.0 Suicide Machine, an application that removes all content and connections from social media accounts and then deletes these accounts. Me and My Shadow is a project developed by the non-profit organization Tactical Tech that includes a variety of resources and tools regarding user management of data tracking and digital awareness. Antipersona, a work by the artist and engineer Anastasis Germanidis, is a mobile application that allows users to adopt another Twitter user's persona for 24 hours. Go Rando is a web browser extension that randomizes the selection of the Facebook ‘Reactions’ (the extension of the Like button) to obfuscate the emotional profiles of Facebook users. Finally, Facebook Demetricator is a web browser addon that hides all numbers (e.g. metrics enumerating friends, likes, comments) on the Facebook interface. Both Go Rando and Facebook Demetricator were created by the researcher, artist and programmer Ben Grosser.

Regarding the method of analysis, we proceeded in two steps. First, we studied all texts related to the projects’ websites (descriptions, manifestos, instructions of use, FAQs, posts etc.) and other sources linked to by their websites (e.g. media articles, commentaries, interviews) to get a sense of the projects’ philosophy. Second, to explore the affordances of data activism projects, we performed a discursive interface analysis of the selected cases. Stanfill (2015) explains how discursive interface analysis interprets the assumptions built in websites and applications through the analysis of their affordances, to reveal ‘how norms for technologies and their users are produced and with what implications’ (p. 1059). Based on the premise that ‘the interface of a computing technology is the manifestation of its implicit politics and ideology’ (Sun and Hart-Davidson, 2014: 3534), affordances entail normative claims ‘about what Users should do’ (Stanfill, 2015: 1062), acting to ‘configure the user’ (Hutchby, 2001: 451). In this method, affordances are seen as producing discourses, articulating ‘structural ideals that position particular behaviour as “correct” or “normal”’ thus ‘reflecting social logics and non-deterministically reinforcing them’ (Stanfill, 2015: 1060; see also Fotopoulou and O’Riordan, 2017). Based on the premise that discourses are ‘practices that systematically form the objects of which they speak’ (Foucault, 1972: 49), discursive interface analysis adopts a critical lens by reflecting on power relations between media industries and users. Our analysis extends this method to the analysis of critical software affordances and the ways they act upon corporate social media affordances (and their implied logics). The analysis was performed by installing and using the software provided by those projects, signing in through our personal social media accounts when needed. The applications were used for as long as was necessary to record the various actions users could engage in by using these tools and the outcomes generated by the use of the applications. This method allowed us to obtain access to ‘the user's reactions that give body to the designer's project, and the way in which the user's real environment is in part specified’ by the software (Akrich, 1992: 209).

Table 1. Presentation of data activists’ projects, authors, and projects’ websites (original contribution by the authors).

The oppositional affordances of data activism tools: hidden, new, meta- and anti-affordances

The ways in which data activists attempt to empower users can be approached through the concept of oppositional affordances. We ask what this software does, what it enables users to do, as well as how specific actions afforded by data activist tools act upon corporate platforms, attempting to modify users’ experience of them and often subverting their perceptible affordances. Oppositional affordances are divided into four types: (i) enabling the use of hidden affordances, and creating (ii) new affordances, (iii) meta-affordances and (iv) anti-affordances.

i. Enabling hidden affordances

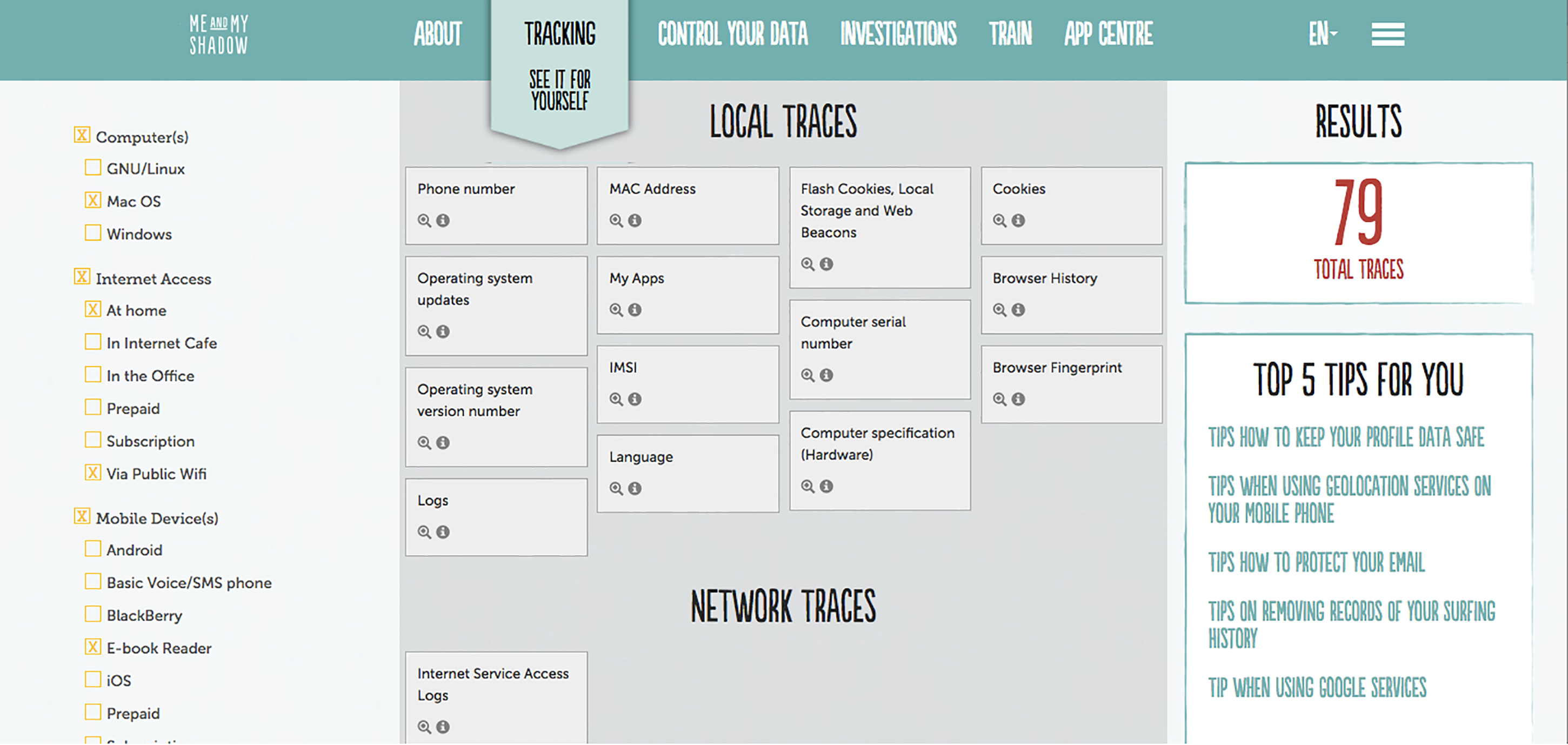

Several data activist applications aim at revealing hidden affordances in corporate platforms – ‘hidden’ is used to refer to affordances that are already provided by platforms but are hardly utilized by users, such as customization of settings, often because doing so is too burdensome or inconvenient. Me and My Shadow offers users multiple tools to control their data and enhance their privacy. For instance, the sub-project ‘Trace My Shadow’ offers users detailed instructions about how to customize default settings to protect themselves. As shown in Figure 1, users select the devices, applications, social networks and parameters of internet connection they use and see the type and number of ‘traces’ they leave behind. Furthermore, they are given instructions (‘Top 5 tips for you’) about how to eliminate or minimize those traces. Such advice entails using affordances already provided by corporate platforms (e.g. de-activating the Facebook account instead of just signing out, opting out of data collection by Google Analytics, disabling browser history, creating pseudonymous accounts).

Figure 1. The Me and My Shadow project.

Another example of enabling the use of hidden affordances is Web 2.0 Suicide Machine. This application aims at supporting users who wish to delete their social media accounts 4 but want to avoid the strenuous and often imperfect process of doing it themselves. As its creators claim, manually shutting down a Facebook account with 1000 Facebook friends can take up to 9,5 hours (homepage). Web 2.0 Suicide Machine promises users a ‘faster, safer, smarter, better’ exit from social media. The automated ‘suicide’ is committed by software that, once users provide their passwords, removes, one by one, the user's online content, erasing connections, posts, photos etc.; throughout this process, users can witness their ‘online life passing away’. The developers stress the experience created for users to witness their virtual ‘suicide’: ‘It's exactly like they say the last minute of your real life is like. But then slow, and fun’ (demonstration video). In this case, the data activist tool again utilizes social media's perceptible affordances (deleting an account), increasing the speed and ease by which they can be used and thereby making a statement against the deleterious effects of these social media on users’ lives. As an artistic web project, Suicide Machine also makes use of irony and ambivalence to articulate its critique, mostly evident in the demonstration video of the project.

ii. Creating new affordances

Syb (http://syb.feministchatbot.com/) is a voice interface developed by Feminist Internet that connects trans and non-binary users to content created by the trans community. It is discussed here as a tool that offers new affordances. The first affordance is a voice interface (like the well-known Alexa) that does not follow heteronormative patterns, namely it is a selection of voices that are familiar to queer users and relevant to their life experiences. Secondly, Syb recommends content that is created by the trans community and is addressed to trans and non-binary users. It is a filtering and recommendation mechanism that tends to queer needs and interests, unlike commercial search engines and recommender systems. These new affordances allow queer users to experience technology (AI) on their own terms (e.g. through a queer-sounding voice) and to discover cultural content that is relevant to their identities much more easily than through commercial search engines or recommender systems. As the creators point out, 'we aspire to a future where trans people can safely embody themselves, having the agency to actualise their desires. […] Syb […] enable[es] them [users] to imagine new and more liberating futures for themselves' (https://thenewnew.space/projects/syb-queering-voice-ai/).

Commodify Us offers users the opportunity to extract their data from Facebook and grant Commodify Us the right to sell their data on their behalf. Thus, it introduces a new high-level affordance, namely the ability to monetize user data without Facebook's mediation. Users can become ‘data brokers’ themselves under more ‘fair’ conditions in comparison to Facebook's business model. As ‘true owners’ of their data, users are offered a venue to ‘commodify their immaterial labour’ (About). Commodify Us is an artistic project, and although the (counter)affordances it provides are real, it seeks more to destabilize common-sense beliefs about platforms’ business models that monopolize monetization of users’ data and less to offer a functional alternative business model. Nevertheless, it does open up new pathways for user action that disrupt the consolidated modus operandi of the web economy.

Another category of tools is software that automatically communicates users’ privacy decisions to websites. One is ADPC (Advanced Data Protection Control – at the time of writing existing as a prototype), which is a piece of software (created by noyb and privacy activist Max Schrems) that provides ‘an alternative to existing non-automated consent management approaches (e.g. “cookie banners”)’ (https://www.dataprotectioncontrol.org/about/), offering users a tool for automatic privacy controls. Following the same logic, Consent-o-Matic (https://github.com/cavi-au/Consent-O-Matic) is an open-source browser extension that automatically answers cookie-consent requests, saving users from having to submit the same information over and over at the risk of eventually giving consent to avoid the hassle (see Lomas, 2020). These tools offer users new affordances with heightened control over their interaction with websites vis-à-vis personal data processing, replacing existing mechanisms that are notoriously annoying for users and, for this reason, of reduced effectiveness. Instead, they enable users to manage privacy requests in an easier and more substantial way (e.g. give, refuse or withdraw consent) and weaken the effectiveness of dark patterns.
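To make the idea of automated consent handling concrete, the following is a minimal sketch of the general logic: a user states privacy preferences once, and each incoming consent request is answered from those stored preferences. It does not reproduce the actual mechanisms of ADPC or Consent-O-Matic; the purpose labels and the function name `answer_consent_request` are hypothetical and chosen only for illustration.

```python
# Hypothetical purpose labels; real tools define their own vocabularies.
PREFERENCES = {
    "necessary": True,
    "analytics": False,
    "advertising": False,
}


def answer_consent_request(requested_purposes, preferences=PREFERENCES):
    """Return a consent decision for each requested purpose.

    Purposes the user has never decided on are refused by default,
    so that silence is never treated as consent.
    """
    return {purpose: preferences.get(purpose, False)
            for purpose in requested_purposes}


if __name__ == "__main__":
    # A website asks for three purposes; the answer is produced
    # without the user having to click through a banner.
    print(answer_consent_request(["necessary", "advertising", "profiling"]))
```

The default-to-refusal choice in this sketch mirrors the point made above: repetition no longer pushes the user toward giving consent simply to avoid the hassle.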

iii. Creating meta-affordances

We propose the term meta-affordances to denote counter-affordances that act upon platforms’ perceptible affordances, directly or indirectly. They are action possibilities offered by data activist tools that resignify the use and operation of corporate platforms, often revealing, in a practical manner, the inner working of processes related to algorithms, data use, business models etc. Meta-affordances can refer to technical features of data activist tools that enable users to discover affordances of social media's key functionalities (e.g. the ‘Like’ button) that are directed at other types of users (e.g. owners, marketers) and thus are hidden from the ordinary user. By offering a glimpse ‘under the hood’ they reconstruct the very meaning of social media's perceptible affordances. At a higher level they enable users to modify their online behaviour within social media and in turn affect the latter's operation per se. Meta-affordances are found in several tools examined; yet the application that exemplifies their operation is Data Selfie.

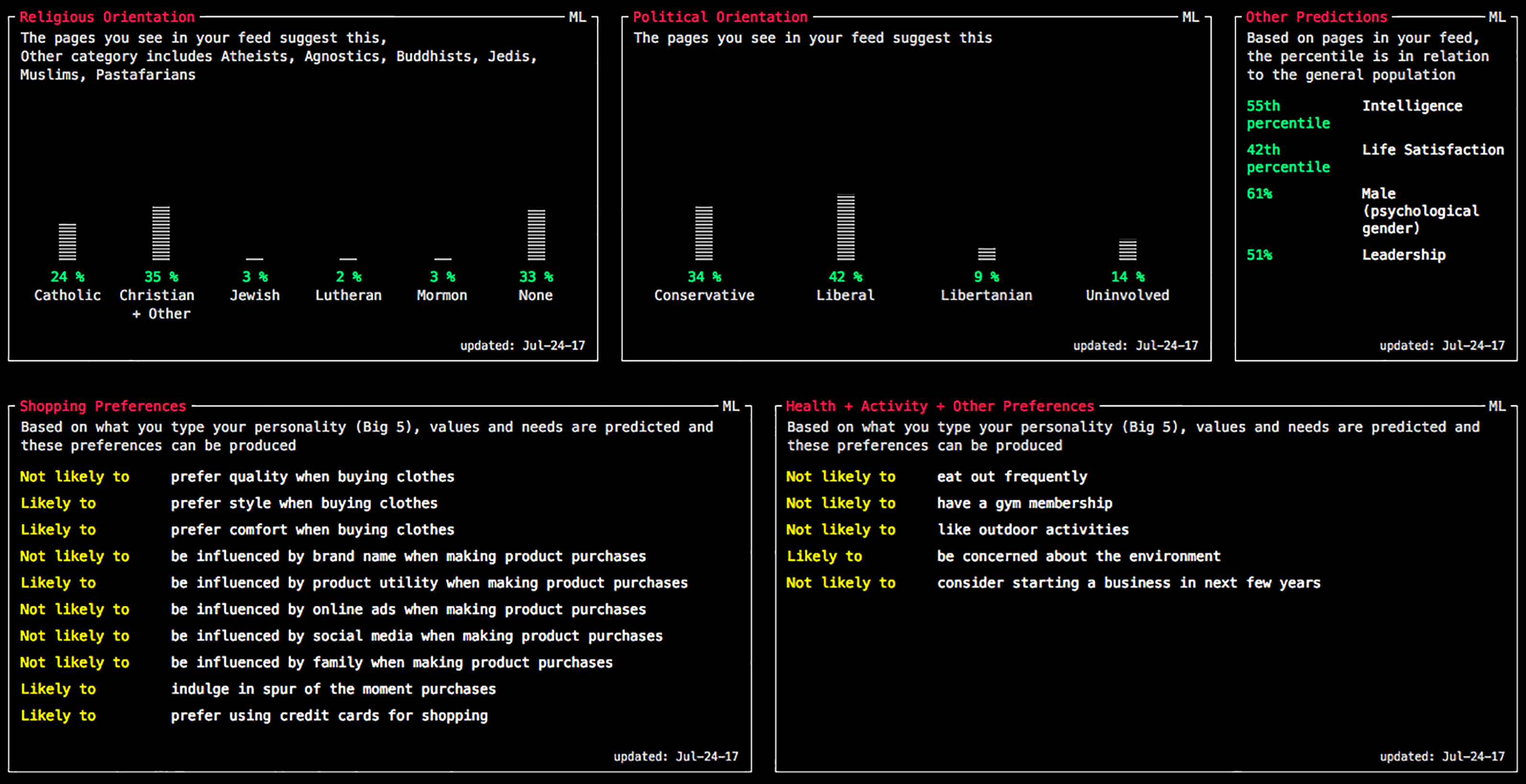

Data Selfie is a Chrome extension that tracks a user's actions on Facebook, namely what catches her attention and for how long, what she ‘likes’, clicks on and types (messages and comments). In a separate interface, the Data Selfie dashboard, the user is provided with an aggregated visualization of her Facebook usage (Figure 2). As the dashboard is enriched continuously with Facebook user data, the user is offered a parallel view of her own online activity. The dashboard shows the amount of time users spend daily on Facebook, their top preferences in pages and posts, and a personality prediction based on their passive (time spent) and active (messages and comments) engagement with Facebook content. More importantly, users are offered a reconstruction of their algorithmic identities that are inferred from their data, i.e. demographic features (gender), emotional traits (e.g. life satisfaction), consumption habits (e.g. if one eats fast food frequently or prefers style over quality when buying clothes), cultural preferences, and political or religious orientation.
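The aggregation step described above can be illustrated with a short sketch. It is not Data Selfie's code: the `Event` record, its fields, and the `summarize` function are assumptions made for illustration, and the personality and identity predictions are left out entirely. The sketch only shows how dispersed traces of attention and 'likes' can be condensed into a dashboard-style summary.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class Event:
    """One tracked interaction (hypothetical record format)."""
    day: str            # e.g. "2018-03-01"
    page: str           # page or post the user looked at
    seconds: float      # time spent looking at it
    liked: bool = False


def summarize(events):
    """Aggregate raw events into a dashboard-style summary."""
    seconds_per_day = defaultdict(float)
    attention = Counter()
    likes = Counter()
    for event in events:
        seconds_per_day[event.day] += event.seconds
        attention[event.page] += event.seconds
        if event.liked:
            likes[event.page] += 1
    return {
        "seconds_per_day": dict(seconds_per_day),
        # Pages ranked by total attention, highest first.
        "top_pages": [page for page, _ in attention.most_common(3)],
        "likes": dict(likes),
    }


if __name__ == "__main__":
    log = [
        Event("2018-03-01", "News page", 30),
        Event("2018-03-01", "Band page", 10, liked=True),
        Event("2018-03-02", "News page", 5),
    ]
    print(summarize(log))
```

Even this toy version shows the asymmetry at stake: each event is trivial on its own, while the summary already starts to resemble a profile.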

While Data Selfie does not modify or interfere with Facebook's interface and its function, it operates at a metacommunicative level. Metacommunication (Bateson, 1987) conveys information as to how a message is to be interpreted and what kind of message it is (Jensen, 2011). Data Selfie reveals the affordances of Facebook's functionalities that are reserved for a different set of users, thereby signalling the nature of what is being afforded and to whom, and reframing those affordances. Liking, sharing, posting information, commenting etc. are no longer just means for ordinary users’ expression on Facebook but are exposed as affordances provided to marketers and advertisers. Through her dashboard, the user can witness hidden affordances of these features, namely the construction of her data identity and the inferences and predictions that can be made by her online actions. This aggregated visualization of users’ algorithmic identities functions as meta-affordance as it comments upon and re-appropriates perceptible affordances. Liking, for instance, no longer signifies just a means for users’ expression of attitudes and emotions, but also a tool that tracks, infers and predicts personal, and at times private, personality traits, identity attributes, habits, desires and convictions.

This recodification of Facebook's affordances occurs at a material level, by employing code itself as the main discursive means to resignify Facebook activity at a practical and personal level. This is noticeable in the aesthetic of the Data Selfie website and dashboard (the pixelized logo, the clear and plain black-to-white design and the font of the main menu which is the standard font used in command line – see Figure 2). As its producers themselves explain, ‘the command line aesthetic very much reflects our mission of giving you a perspective that you are seeing behind the scenes’ (Data Selfie introductory video). End users are typically unable to synthesize the hundreds or thousands of tiny bits of data they create, dispersed in time as they are in the course of everyday online activity (e.g. an occasional photo, comment, like, or particular attention granted to a post or an ad). Corporate platforms, in contrast, are able to reconstruct user profiles based on the processing and correlation of these bits of data in a process which is centralized, hidden from view and closed off (Gehl, 2014), so that they acquire meaning (in terms of monetization potential) for a special category of users (marketers and advertisers). Through Data Selfie users are not just being ‘informed’ about Facebook's affordances to marketers; as its dashboard reconstructs their algorithmic identities, it renders user data meaning-ful also for ordinary users, before or while Facebook makes them meaningful, malleable, and open to manipulation for advertisers. As Data Selfie puts it in their introductory video, ‘after all, you ARE hacking your own data’, elevating end-users to ‘hackers’ as they are provided access to affordances meant for corporate users.

Figure 2. Data Selfie dashboard (part).

Another tool that works in a similar way is Instawareness, a piece of code that exposes the operation of curation algorithms on Instagram and the ways in which they affect the visibility of content on the platform (see https://instawareness.ugent.be/; Fouquaert and Mechant, 2021). The tools under the umbrella project Tracking Exposed (https://tracking.exposed/) work similarly, allowing users to gather data about their own activity on social media and popular platforms in order to make sense of algorithmic personalization. In most cases, meta-affordances seem to be about building user awareness, usually regarding algorithmic processes, in the hope that increased awareness will enable users to take the next step, i.e. modify their actions within the platforms in order to escape, as much as possible, automated decisions and their effects (e.g. filter bubbles).

iv. Creating anti-affordances

Other applications provide tools that get in the way of platforms’ affordances, hindering or distorting them to the extent that they do not perform their intended function. These can be conceptualized as negative affordances or anti-affordances, in relation to platforms’ intrinsic affordances. The most characteristic applications in this respect are two projects created by Ben Grosser, Go Rando and the Facebook Demetricator. Once installed, Go Rando acts upon Facebook's interface by randomly choosing one of the six ‘reactions’ of the ‘Like’ button each time a user clicks on it. The application aims at obscuring the expression of Facebook users’ feelings by removing its most commercially valuable quality: its authenticity. Randomly liking items is an obfuscation practice already known to users, yet Go Rando applies it consistently to the entirety of Facebook activity, producing a potentially disruptive effect. In a similar vein, the Facebook Demetricator, a web browser extension that hides all metrics on Facebook, aims to ‘disrupt the prescribed sociality these metrics produce’ within a capitalist culture that insists on an ‘insatiable desire for more’ (Grosser, 2014). The Demetricator functions as an anti-affordance as it cancels metrics’ intended effects: it has the potential not only to revoke the constant push for more engagement in the platform but also to deconstruct the ‘metricated social self’, built into Facebook's hegemonic affordances, which sits well with a neoliberal ‘audit culture’ that postulates self-surveillance and ‘an internalized need to excel within the audit's parameters’ (Grosser, 2014). Feedback from users of the Demetricator shows that this anti-affordance can transform the entire ‘Facebook experience’ for users (see further Grosser, 2014).
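The obfuscation logic attributed to Go Rando above can be illustrated with a minimal sketch. This is not Grosser's implementation (Go Rando is described here only as acting on Facebook's interface); it is a hypothetical Python simulation, and the six reaction labels and the function names are assumptions introduced for illustration.

```python
import random
from collections import Counter

# Assumed labels for the six reactions; the text only states that there are six.
REACTIONS = ("Like", "Love", "Haha", "Wow", "Sad", "Angry")


def obfuscate_reaction(intended, rng):
    """Discard the reaction the user intended and return one of the six
    reactions chosen uniformly at random (hypothetical helper)."""
    if intended not in REACTIONS:
        raise ValueError(f"unknown reaction: {intended!r}")
    return rng.choice(REACTIONS)


def simulate(clicks, intended="Like", seed=0):
    """Count what a platform would record if every click were randomized."""
    rng = random.Random(seed)  # seeded so the simulation is repeatable
    return Counter(obfuscate_reaction(intended, rng) for _ in range(clicks))


if __name__ == "__main__":
    # The user always means "Like", yet the recorded reactions are spread
    # roughly evenly across all six labels.
    print(simulate(6000))
```

Under these assumptions, the recorded reactions no longer carry information about the user's actual sentiment, which is the sense in which the affordance is distorted rather than removed.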

Antipersona, a mobile application enabling users to adopt another Twitter user's identity for 24 hours, functions as an anti-affordance in a more implicit way. To better understand this function, we need first to decipher Twitter's intended uses. As in other social media platforms, the ‘ideal user’ should have a single, stable, consistent and real identity. Users’ sincerity is essential for corporate social media business models, so that their algorithmically reconstructed profiles make sense to advertisers (Gehl, 2014; Van Dijck, 2013). Through various interface strategies – such as Facebook's ‘real name policy’ and recently Twitter's ‘account verification policy’ (see Hearn, 2017) – platform owners ‘promote the ideology of having one transparent self or one identity’, to discourage users from ‘splitting up their online identities through various platforms, which messes up the clarity and coherence of their data’ (Van Dijck, 2013: 212). Antipersona substitutes a Twitter user's real identity with a different one, albeit temporarily, urging users to experiment with their identities. This way, it challenges the imposed need for constant self-branding and gaining popularity. Twitter is being transformed from a tool enabling its established perceived affordances to a tool serving its own logic, namely affording critical and self-reflexive identity play and responding to the need for a ‘multiple, composite self’ (Van Dijck, 2013: 200), ‘a diffusion of subjectivity that opens up multiple ways of being’ (De Vries and Schinkel, 2019: 11). This unintended use is also an undesired use: users who spend their time on Twitter observing other users’ activity and engaging in identity play do not release useful behavioral data that would feed the business model of the platform (Hearn, 2017). As Hu (2015: 50) argues, ‘the one user of no value to online companies is the user who fails to “use” […]; this failed user, the user that doesn’t participate or produce content, represents the queer stoppage of technological (re)productivity’.

Other software tools that provide anti-affordances exist. TrackMeNot (https://trackmenot.io/), created by Daniel Howe and Helen Nissenbaum, is among the most well-known obfuscation tools; it ‘helps protect web searchers from surveillance and data-profiling by search engines’. The tool sends random search queries to popular search engines and thus attempts to lessen the accuracy of user profiles, rendering users less easily identifiable and hindering the operation of search engines with respect to user profiling. In a similar vein, Fawkes is a tool created in 2020 that ‘cloaks’ images so that depicted people are not recognized by facial recognition algorithms (http://sandlab.cs.uchicago.edu/fawkes/). Akin to obfuscation tactics, this tool provides an anti-affordance as it obstructs the functioning of facial recognition technology with respect to ‘cloaked’ users. Interestingly, this works just as invisibly to the human eye (at the machine learning level) as the original software which this tool targets.⁵
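The query-obfuscation idea behind tools such as TrackMeNot can be sketched in a few lines. The following is a hypothetical Python illustration, not TrackMeNot's actual code: it sends nothing over the network, and the decoy vocabulary, function names and parameters are all assumptions made for the example. It only shows how decoy queries dilute the share of genuine queries in the log a search engine would observe.

```python
import random

# Hypothetical decoy vocabulary; a real tool would draw on a far larger,
# changing pool of terms.
DECOY_TERMS = (
    "weather", "recipes", "football scores",
    "train times", "movie reviews", "garden tools",
)


def obfuscated_stream(real_queries, decoys_per_query, rng):
    """Return the query log an observer would see: each real query plus
    `decoys_per_query` random decoys, shuffled so order reveals nothing."""
    stream = []
    for query in real_queries:
        stream.append(query)
        stream.extend(rng.choice(DECOY_TERMS) for _ in range(decoys_per_query))
    rng.shuffle(stream)
    return stream


def real_share(stream, real_queries):
    """Fraction of the observed log that reflects genuine user interest."""
    if not stream:
        return 0.0
    real = set(real_queries)
    return sum(1 for query in stream if query in real) / len(stream)


if __name__ == "__main__":
    mine = ["symptoms of flu", "union meeting"]
    log = obfuscated_stream(mine, decoys_per_query=4, rng=random.Random(1))
    print(log)
    print(real_share(log, mine))  # genuine queries are a minority of the log
```

In this sketch the platform's profiling affordance still operates, but on a log in which most entries are noise, which is the sense in which the tool hinders rather than blocks it.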

Discussion: The promises and limits of data activism for user agency

The present study highlights the essentially sociotechnical nature of this form of oppositional action that ‘can be understood only by intersecting the material of human-machine interactions and the symbolic of human action’ (Milan, 2015a: 2). The analysis unearthed core practices of data activists, showing how they endorse oppositional use positions (Shaw, 2017), by facilitating user actions that can modify designed affordances and encompass a subversive potential. A similar idea is expressed by Santo’s (2013: 24) ‘hacker literacies’, namely the critical ‘reading’ and ‘rewriting’ of sociotechnical spaces by exposing the values embedded in their design and promoting an understanding that ‘these spaces are indeed malleable’ and hence contestable. By embracing the hidden, new, meta- and anti-affordances created by data activism projects, ‘ordinary’ users are offered the possibility to reflexively modify their behaviour online and affect the very algorithms that produce them as datafied subjects.

All projects examined aim at giving ordinary users agency – albeit in different ways. Projects that focus on relatively hidden or non-prominent existing affordances enable users to ‘work with the system’ and utilize what the system has to offer, i.e. they offer users defensive mechanisms to shield themselves against the harms of commercial platforms while continuing their use. Meta-affordances operate at the level of signification, attempting to resignify the corporate platforms and tools we use every day, usually by revealing what occurs ‘under the hood’. Such tools employ software that addresses users as researchers of their own online activity (or ‘hackers of their own data’, as put by Data Selfie creators) and encourages them to experiment and participate in a critical play with data. Typically aiming at raising awareness, they do so through demonstrating, in a practical and personal way, the inner working of algorithmic systems, utilizing an aesthetic and discourse that can induce feelings of surprise and revelation. Both types are akin to Ettlinger’s (2018) ‘productive’ modes of resistance (strategies that use and/or subvert elements of digital environments). New affordances go one step further, offering alternatives that can bypass existing tools, often catering to the needs of vulnerable communities, with a forward-oriented outlook to ‘imagine new and more liberating futures’ (Syb project). Anti-affordances are the most antagonistic tools, as they outright distort or disrupt the operation of platforms.

What new knowledge does this analysis bring into the field of data activism? We believe that the proposed typology of oppositional affordances can provide the ground on which the diverse forms of data activism can be empirically investigated vis-à-vis the actual praxis of users, considering the different user subjectivities and users’ various degrees of consciousness regarding data politics (Ettlinger, 2018). How are the various types of data activism received by users and with what implications? Which types of oppositional affordances are of value and fit the needs of which user communities? How are the various types integrated into the fabric of everyday use of technologies and with what effects? To which type of activist software should activists channel their energy to reach users (especially when the ‘guerilla war’ with companies is unabating and activist resources are always scarce)? Such questions can be explored more systematically using the proposed framework so that we better understand critical software’s subversive potential for ordinary users. To give an example, while much energy is poured into meta-affordances and awareness-building, taking for granted that awareness can translate into action, Fouquaert and Mechant (2021: 17) discuss what they call the ‘Algorithm Paradox’: ‘when people know the existence of curation algorithms, they claim to be bothered by them while not acting accordingly […]’. This echoes the concerns of digital security trainers about ‘tool-centric solutions’ that put ‘a mounting pressure’ on users to ‘know everything’ about digital surveillance (Kazansky, 2021: 5). More empirical research is required to understand the role of the various oppositional affordances in linking awareness/knowledge and empowerment/critical praxis and in crafting new experiences and agonistic subject (user) positions.

At the same time, however significant data activism's potential for empowerment may be, there is an important caveat that needs to be stressed. Despite the fact that most of the projects studied advance a critical discourse vis-à-vis corporate platforms, there is often an impression that these tools are technological ‘fixes’ aiming to protect individual users instead of collective reactions for structural regulation or social control over corporations and states (Fish, 2017). In other words, the responsibility tends to be placed primarily on users, who should take action to protect themselves against corporate actors eroding their privacy and violating their rights. This idea is compatible with a fundamental ideology permeating the data industry, the idea of the ‘sovereign interactive user’ (Gehl, 2014). As Gehl argues, the ideology of self-regulation, pushed by advertising and marketing companies, is based on an imagined user who is conceived as a free and powerful subject, a ‘master of digital flows’ (2014: 111), able to make informed choices and take on the risks related to her activity online. What follows is the shifting of responsibility from data industries and regulatory agencies to the ‘enlightened’ user, in line with ‘neoliberal ideologies of self-determination and personal risk management’ (Gehl, 2014: 110). One could argue, as Milan and Gutiérrez (2015: 127) do, that data activists are ‘inherently political, as their hands-on practices seek ultimately to alter power distribution’. As hard as it is to disagree, we observe that, insofar as data activism discourses aim solely at creating awareness among individual users, the broader political economy of big data and data companies’ practices tend to be left intact, i.e. keeping one's own data private (practicing good ‘digital hygiene’ as many public campaigns advocate these days) does little to address the larger political problem of surveillance (Hu, 2015: 119).⁶

A related concern has to do with collective agency, as opposed to the agency of individual users. To be sure, the projects examined are part and parcel of a broader movement of data activism, which is linked to both traditional and data-centred social movements (Milan, 2015b), focusing on a collective effort to build a fairer digital (eco)system. Besides, cyber-activism or hacktivism has always been conceived less as a mass movement and more as a decentralized ‘cell-based hit-and-run media intervention’ (Milan, 2015b: 552). Still, data activism is characterized by a ‘novel tension between the individual and the collective dimension of organized collective action’ (Milan and Gutiérrez, 2015: 124). While these projects offer ample opportunities for the active engagement of programmers as data activists, it is hard to see how non-skilled users can take part in the game (see also Baack, 2015). From the perspective of end users with no programming skills, the affordances provided do not envisage a process of collective identity building or collective action in a tangible manner. With a few exceptions that incorporate a mechanism of public visibility of contentious online action or a more active role for lay users, the latter are on their own vis-à-vis the corporate power nexus, empowered to perform personalized acts of defiance that do not seem to be woven into a collective representation of ‘we-ness’.

All the cases of activist software examined certainly reflect an activist identity on the part of the creators of these tools. An interesting question that arises, and which this analysis attempted to answer, is what role is envisaged for users. In other words, to what extent do users act as activists by utilizing these action possibilities? The analysis showed that anti-affordances enable users to act as activists more than other tools, effecting change on the platform infrastructures themselves, whereas new affordances tend to pull users away from using corporate platforms, potentially weakening their user base. Meta-affordances operate at the level of identity or consciousness, which may (or may not) transform into activism, while the revelation of hidden affordances subscribes to a model of individual protection. We consider that this typology of oppositional affordances enables activist software creators to better understand which action possibilities their projects create for users as well as their limits, and provides a useful ground for reflection on how users can be further involved in this process.

Although the recent but prolific field of (critical) data studies lays bare the harms of datafication, the study of data activism is comparatively underdeveloped, empirically and theoretically. Responding to Fuller’s (2003) call for understanding the ‘materiality of software’ and theorizing how particular activist software creations produce certain effects and models of user subjectivity (Fuller, 2008), we argue that the study of data activism has a lot to gain by focusing on subversive software itself, drawing on software studies’ emphasis on the material aspects of technology. Equally important is to develop a critical approach that does not fall back into merely celebratory accounts that take the claims on deconstruction of hegemonic narratives at face value. Oppositional affordances reveal the actual agentic possibilities of data activism for users other than activists and invite further research into whether users will come forward to play the roles envisaged by data activists or whether they will define other roles for themselves.

Acknowledgements

Both authors contributed equally to this work.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.