Abstract

Automated medical diagnosis has become crucial and provides significant support to medical doctors. Thus, there is a demand for deep learning (DL) and convolutional networks that analyze medical images. Dermatology, in particular, is one of the domains that AI specialists have recently targeted to introduce new DL algorithms or enhance convolutional neural network (CNN) architectures. A significantly high proportion of studies in the field are concerned with skin cancer, whereas research on other dermatological disorders remains limited. In this work, we examined the performance of 6 CNN architectures, namely VGG16, EfficientNet, InceptionV3, MobileNet, NasNet, and ResNet50, on the top 3 dermatological disorders that frequently appear in the Middle East. Image filtering and denoising were applied to enhance image quality and increase architecture performance. Experimental results revealed that MobileNet achieved the highest performance among the CNN architectures and can classify the disorders with high accuracy (95.7%). Future work will focus on proposing a new methodology for deep learning-based classification. In addition, we will expand the dataset with more images that cover new disorders and variations.

Introduction

Disease diagnosis and health care services have long been in high demand and have faced significant challenges. Cost, time, and location barriers limit the quality of service, and patients cannot always receive the services they require. Skin diseases affect approximately 1.9 billion people. 1 These diseases need follow-up with dermatologists, and most last for a long time, which makes the aforementioned barriers more challenging. Technology plays a crucial role in providing smarter and more powerful solutions that can overcome these barriers. Artificial intelligence (AI), machine learning (ML), and deep learning (DL) offer a wide range of robust solutions.2-4 Specifically, dermatological conditions rely on morphological features and can be diagnosed from images of the patient. This automated diagnosis is mainly based on recognizing visual patterns. Skin imaging tools and technologies come in a variety of styles and designs and have become crucial for the clinical diagnosis of skin diseases.

AI has been developed over the last few decades for many different applications in medical science. It began as rule-based induction, in which rules are extracted from a set of observations and represent local patterns in the data. Medical image analysis is a crucial step in diagnosis. ML, as a branch of AI, has been utilized for classification, object detection, segmentation, and image generation. Various methods and algorithms have been applied to medical image analysis, such as support vector machines (SVMs), decision trees, and regression. 5 Moreover, artificial neural networks (ANNs) have been utilized in the last few decades in various areas of medical science.4,6 Although their use in dermatology remains relatively limited, ANNs and other ML models were mainstream for quite a long time until the emergence of DL.7-13

Deep learning is a subset of machine learning methods that uses multiple layers to extract higher-level features from the raw input data. It is based on networks that are capable of learning from data that are unstructured or unlabeled. It is therefore also called deep neural learning or deep neural networks. 14 It has been used in a variety of domains, including medicine. Litjens et al. 15 discussed DL methods for medical image analysis and covered their concepts, techniques, and architectures. Tian and Fu 16 reviewed 77 articles focused on DL in medical image classification, detection, segmentation, and generation. They divided the methods into supervised learning, weakly supervised learning, and unsupervised learning, and stated that the main difference between these 3 learning schemes is the proportion and granularity of the annotated labels that drive the models. Common deep neural networks are mainly represented by convolutional neural networks (CNNs), recurrent neural networks (RNNs), and generative adversarial networks (GANs).

According to most of the literature on this subject, current DL models applied to dermatology face several challenges and limitations. Cullell-Dalmau et al. 17 described one source of misclassification: if an image does not belong to any of the training classes, the model will still assign it to one of the known categories. They also discussed the importance of considering detailed image metadata, which becomes more challenging when a large number of images are used for training. As they stated, this calls for a quality test that automatically assesses whether an image meets such quality standards; this is also supported by Haenssle et al. 18 Moreover, the quality and standardization of dermatological images affect DL model performance, since most dermatological diseases share common features that make classification harder.

The rest of this paper is structured as follows. In Section 2, we present the DL techniques that have been used for skin cancer and some limited research on dermatological disorders. Section 3 describes the contributions of this work as we discuss the 6 main CNN architectures included in this analysis. Additionally, we describe our data used with the 6 CNN architectures. The method used for image enhancement and filtering is also discussed in this section. Section 4 discusses the results obtained. Section 5 discusses the limitations and challenges and analyzes the results. Finally, Section 6 concludes the paper and presents an outlook for future research.

Related Works

Recently, deep learning has made considerable progress in skin tumor and skin cancer detection and classification.19,20 Brinker et al. 21 reviewed CNNs that classify images of skin cancer and showed that the most common approach is to use a CNN pretrained on another large dataset and then fine-tune its parameters for the classification of skin cancers, which usually achieves the best performance with the currently available limited datasets. A significantly high proportion of studies in the field are concerned with skin cancer.14,22-24 Esteva et al. 19 first called attention to deep convolutional neural networks (DCNNs) and showed that they can classify skin disease, especially keratinocyte carcinoma and melanoma, with a level of competence comparable to that of board-certified dermatologists. This extensive interest is due to the public availability of large skin cancer datasets. Additionally, superior performance is achieved in the detection of skin cancer because the lesion area can easily be detected in images.

However, there is limited interest in dermatological diseases such as psoriasis, eczema, alopecia, and vitiligo. Most automated classification methods developed for dermatological diseases are based on supervised techniques. These methods depend on ground-truth data and are difficult to generalize, since skin images vary in skin tone, type, color, image lighting, and image contouring. 5 These difficulties limit their adoption for dermatological imaging. Moreover, the image features vary in this type of image, and the existing methods and techniques require different levels of engineering and filtering to reach a well-fitted model. 25 Nevertheless, convolutional deep learning methods have outperformed classical machine learning techniques for dermatological image classification. Yasir et al. 26 proposed a method based on computer vision techniques and image processing algorithms for feature extraction. They used an ANN to identify the diseases after training and testing. This method detected 9 different types of skin diseases with an accuracy rate of 90%. Kumar Patnaik et al. 27 examined the effect of InceptionV3, Inception-ResNet-v2, and MobileNet on color skin images, and the architectures successfully predicted skin disease based on maximum voting across the 3 networks. Sae-Lim et al. 28 used MobileNet for skin lesion classification and compared it with their proposed modified MobileNet. DenseNet and ResNet architectures have also been examined with images collected using mobile phone cameras, and the accuracy of the models reached 80%. Facial disorder detection has been examined by Goceri. 25 Dermatological disease classification problems using CNNs have been discussed recently in Liu et al. 1 and Göçeri. 8

Material and Method

Dataset

The dataset was collected from 2 sources. The first source is Dermnet, which was founded by Thomas Habif, MD, in 1998 in Portsmouth, NH. It is one of the largest independent photodermatology sources for the purpose of medical education. It also provides descriptions of a wide range of skin conditions through innovative media. The second source is offered by the Department of Dermatology at the University of Iowa. It offers a repository covering several topics, such as cosmetic dermatology, skin remedies, and both photo and retinoid therapies. Images with the following diagnoses were included in this study: eczema, atopic, and psoriasis. We focus on these diagnoses since they are the most common in the Middle East and Saudi Arabia.29-32

Image filtering and noise reduction

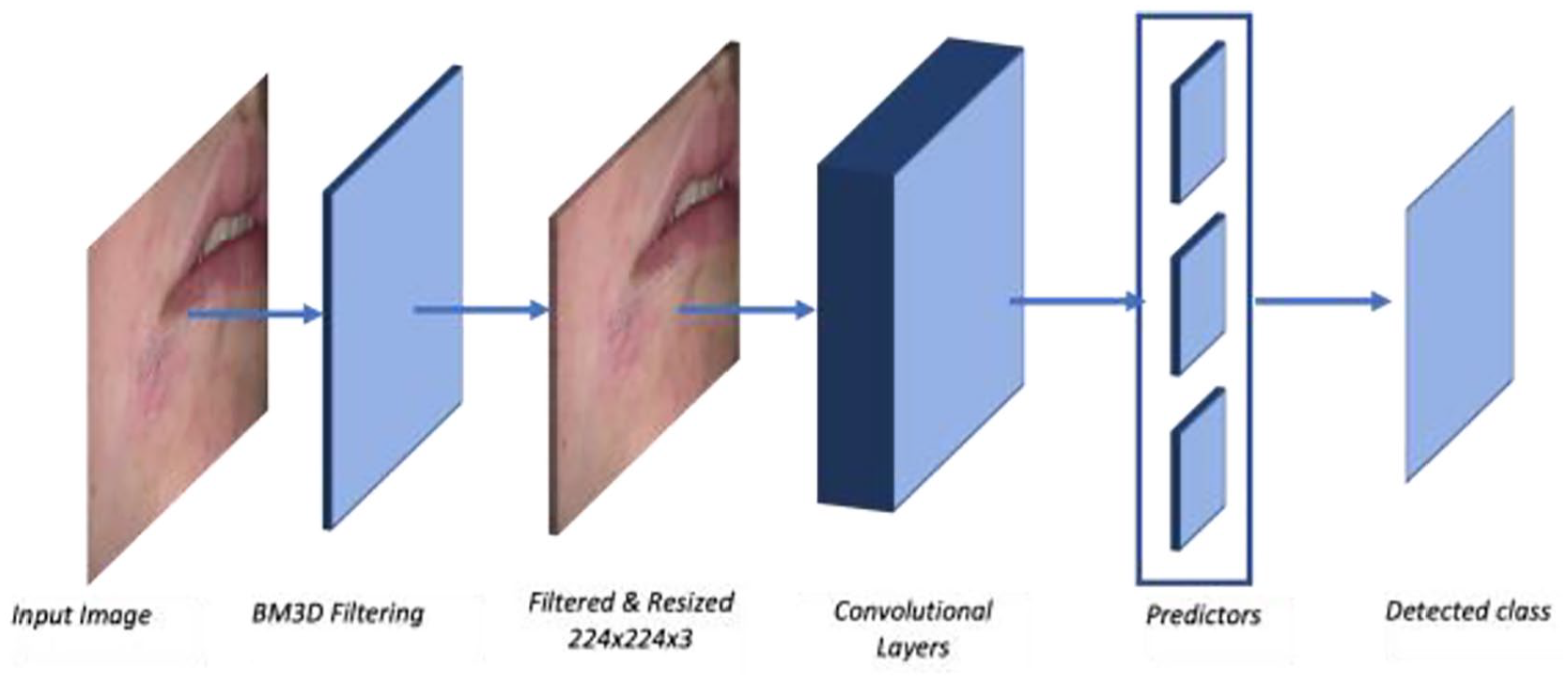

The dataset contains a total of 6723 images, of which 1934 are used for testing. The images were labeled with 3 classes: eczema, atopic, and psoriasis. Image filtering is a crucial step in image processing because the quality of the input images affects the final results; it may include modifying or enhancing the original images. In particular, filtering an image aims to emphasize certain features or remove others. Image processing operations implemented with filtering include smoothing, sharpening, and edge enhancement. In this work, the block-matching and 3D filtering (BM3D) algorithm was adopted to enhance image quality. It is considered one of the current state-of-the-art methods for image denoising and has been reported to achieve better noise removal results than other existing algorithms.33,34 Several studies have applied BM3D to deep learning for image classification.35,36 Figure 1 shows the result of applying BM3D filtering to a sample image. The images in the dataset were regenerated and resized to 224 × 224. The images have the same aspect ratio and therefore do not need padding.

Figure 1. Result of applying BM3D filtering: (a) image before applying BM3D and (b) image after applying BM3D.

Deep learning models for classifications

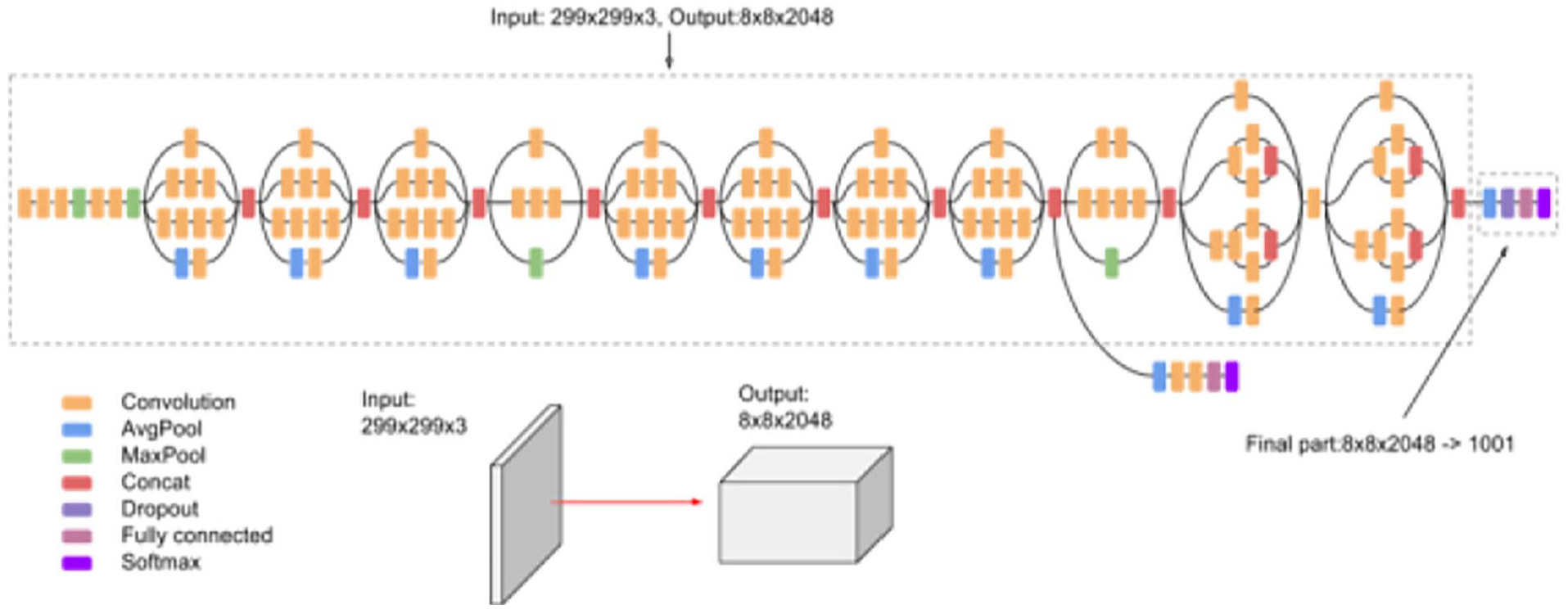

In this stage, classification was performed by employing different pretrained models for image classification. VGG is the first model considered in this study. It was proposed in 2014 by Simonyan and Zisserman 37 to test the effect of layer depth on classification efficiency and accuracy. The target was to evaluate the robustness of the model with up to 16 weight layers. The model was efficient for its time and was pretrained on datasets from other contexts. The second model is Inception-v3 (see Figure 2), introduced by Google; it is the third version in the Inception family of deep convolutional architectures.38,39 It is based on parallel connected layers instead of a stacked layer architecture. 40 The Inception deep convolutional architecture was developed as GoogLeNet and later named Inception-v1. 38 The architecture was then enhanced in Inception-v2 by including batch normalization. Inception-v3 followed Inception-v2 with improvements in terms of factorization and fewer parameters, resulting in a 42-layer deep network. The third model applied in this study is the residual network, or ResNet, which is presented as a network-in-network architecture. 41 The ResNet model is a block-based architecture in which layers are stacked on top of one another to form a block. In the residual blocks (see Figure 3), a shortcut connection adds the input of a block to its output, which eases the training of very deep networks. The fourth model is MobileNets, introduced by Howard et al. 42 as a class of CNNs with lightweight architectures. Its basic building block has 2 layers, one for filtering and the other for combining. This architecture reduces the computation and model size in comparison with the standard convolution architecture. As stated in Howard et al., 42 the depthwise convolution applies a single filter to each input channel, and the pointwise convolution performs a 1 × 1 convolution to combine the outputs of the depthwise convolution.

We have included NasNet 43 as the fifth model in our evaluation. The name stands for neural architecture search network, which is designed to learn the model architecture directly and automatically from the dataset. It searches for an architectural building block on a small dataset and then transfers the block to a larger dataset. The last model is EfficientNet, 44 which scales up CNNs using a compound coefficient that scales the network depth, width, and resolution together with fixed scaling coefficients. The optimized EfficientNet architecture relies on a baseline network built by performing a neural architecture search using the AutoML MNAS framework. 44
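The saving that the depthwise and pointwise layers of MobileNets offer over a standard convolution can be made concrete by counting weights. The following is a minimal sketch, not taken from the study: the kernel size and channel counts are illustrative assumptions, and bias terms are ignored.

```python
def standard_conv_params(kernel_size, in_channels, out_channels):
    """Weights in a standard convolution: one k x k filter per (input, output) channel pair."""
    return kernel_size * kernel_size * in_channels * out_channels


def depthwise_separable_params(kernel_size, in_channels, out_channels):
    """Weights in a depthwise convolution followed by a 1 x 1 pointwise convolution."""
    depthwise = kernel_size * kernel_size * in_channels  # one k x k filter per input channel
    pointwise = in_channels * out_channels               # 1 x 1 convolution combining channels
    return depthwise + pointwise


# Illustrative layer sizes (assumed for this example, not from the paper).
k, c_in, c_out = 3, 32, 64

standard = standard_conv_params(k, c_in, c_out)
separable = depthwise_separable_params(k, c_in, c_out)

print(f"standard convolution:  {standard} weights")
print(f"depthwise + pointwise: {separable} weights")
print(f"ratio: {separable / standard:.3f}")
```

For these sizes the separable layer needs roughly one eighth of the weights; in general the ratio equals 1/c_out + 1/k², so the saving grows with the number of output channels.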

Figure 2. Inception-v3 model. 39

Figure 3. Residual learning: a building block. 41

In summary, the classification process for the CNN architectures in this work passes through several stages, summarized in Figure 4. The process first takes the image to be classified as input, and then BM3D filtering and denoising are applied. The filtered image is resized to 224 × 224 × 3 to prepare it for the CNN architecture. The convolution layers in Figure 4 stand for whichever of the 6 CNN architectures included in this study is being evaluated. The output of the CNN architecture is sent to the predictor, which predicts the image class. The last step reports the detected class.

Figure 4. The process of image classification using the CNN architecture.

Experiment and Result

In this section, quantitative evaluations of the results obtained from the CNN-based architectures are presented. We performed hyperparameter tuning to obtain a high-performing model. Table 1 reports the results for 50 epochs, 10 steps per epoch, and 5 validation steps, and Table 2 reports those for 50 epochs, 10 steps per epoch, and 10 validation steps. Finally, for Table 3, we increased the number of epochs to 100 with 10 steps per epoch and 5 validation steps. Figure 5 shows sample lesion images for the 3 diseases.
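The 3 training schedules can be written down as a small configuration to make the training budget explicit. This is a sketch under assumptions: it presumes the reported values correspond to the `epochs`, `steps_per_epoch`, and `validation_steps` arguments of the Keras `Model.fit` method, where one step draws one batch. The batch size is not stated in the text, so `BATCH_SIZE` below is a hypothetical value.

```python
# Training schedules as described in the text, one per results table.
SCHEDULES = {
    "Table 1": {"epochs": 50, "steps_per_epoch": 10, "validation_steps": 5},
    "Table 2": {"epochs": 50, "steps_per_epoch": 10, "validation_steps": 10},
    "Table 3": {"epochs": 100, "steps_per_epoch": 10, "validation_steps": 5},
}

# Hypothetical batch size; the paper does not report one.
BATCH_SIZE = 32


def training_budget(schedule, batch_size):
    """Return the total number of training batches and images drawn over a run."""
    batches = schedule["epochs"] * schedule["steps_per_epoch"]
    return {"training_batches": batches, "images_drawn": batches * batch_size}


for name, schedule in SCHEDULES.items():
    budget = training_budget(schedule, BATCH_SIZE)
    print(name, budget)
```

Under this reading, doubling the epochs from 50 to 100 doubles the number of weight updates, while changing the validation steps only changes how many batches are used to estimate validation accuracy.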

Table 1. Accuracy obtained by the CNN-based architectures applied to the dermatology dataset at epochs = 50, val_step = 5, steps = 10.

Table 2. Accuracy obtained by the CNN-based architectures applied to the dermatology dataset at epochs = 50, val_step = 10, steps = 10.

Table 3. Accuracy obtained by the CNN-based architectures applied to the dermatology dataset at epochs = 100, val_step = 5, steps = 10.

Figure 5. Sample lesions for the 3 diseases: (a) eczema lesion, (b) atopic lesion, and (c) psoriasis lesion.

Evaluation measures

The evaluation process of the pretrained models designed in this work considered some evaluation measures that have been extensively applied to measure the quality of the models. The results obtained from the pretrained models were evaluated according to accuracy, precision and sensitivity values. Equations (1)-(3) show how to calculate the values of these measures:

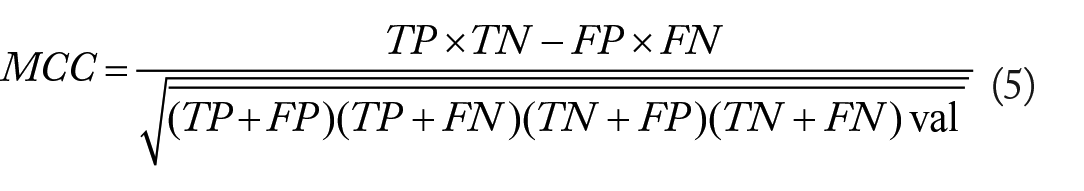

where, for a given disease class, TP (true positive) is the number of images of that disease that are correctly assigned to it, FP (false positive) is the number of images of other diseases that are incorrectly assigned to it, TN (true negative) is the number of images of other diseases that are correctly not assigned to it, and FN (false negative) is the number of images of that disease that are incorrectly assigned to another disease. We included the F1 score, which is the harmonic mean of precision and recall (sensitivity), to measure the performance of the classification. The Matthews correlation coefficient (MCC) was also included to evaluate the performance of the models. The F1 score and MCC were computed with equations (4) and (5):
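For reference, the standard definitions of the 5 measures in terms of TP, FP, TN, and FN are written out below. The equation numbers follow the order in which the text names the measures, which is an assumption about the original numbering.

```latex
\begin{align}
\text{Accuracy} &= \frac{TP + TN}{TP + TN + FP + FN} \tag{1} \\
\text{Precision} &= \frac{TP}{TP + FP} \tag{2} \\
\text{Sensitivity} &= \frac{TP}{TP + FN} \tag{3} \\
F_1 &= 2 \cdot \frac{\text{Precision} \cdot \text{Sensitivity}}{\text{Precision} + \text{Sensitivity}} \tag{4} \\
\text{MCC} &= \frac{TP \cdot TN - FP \cdot FN}{\sqrt{(TP + FP)(TP + FN)(TN + FP)(TN + FN)}} \tag{5}
\end{align}
```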

Evaluations for CNN-based architectures

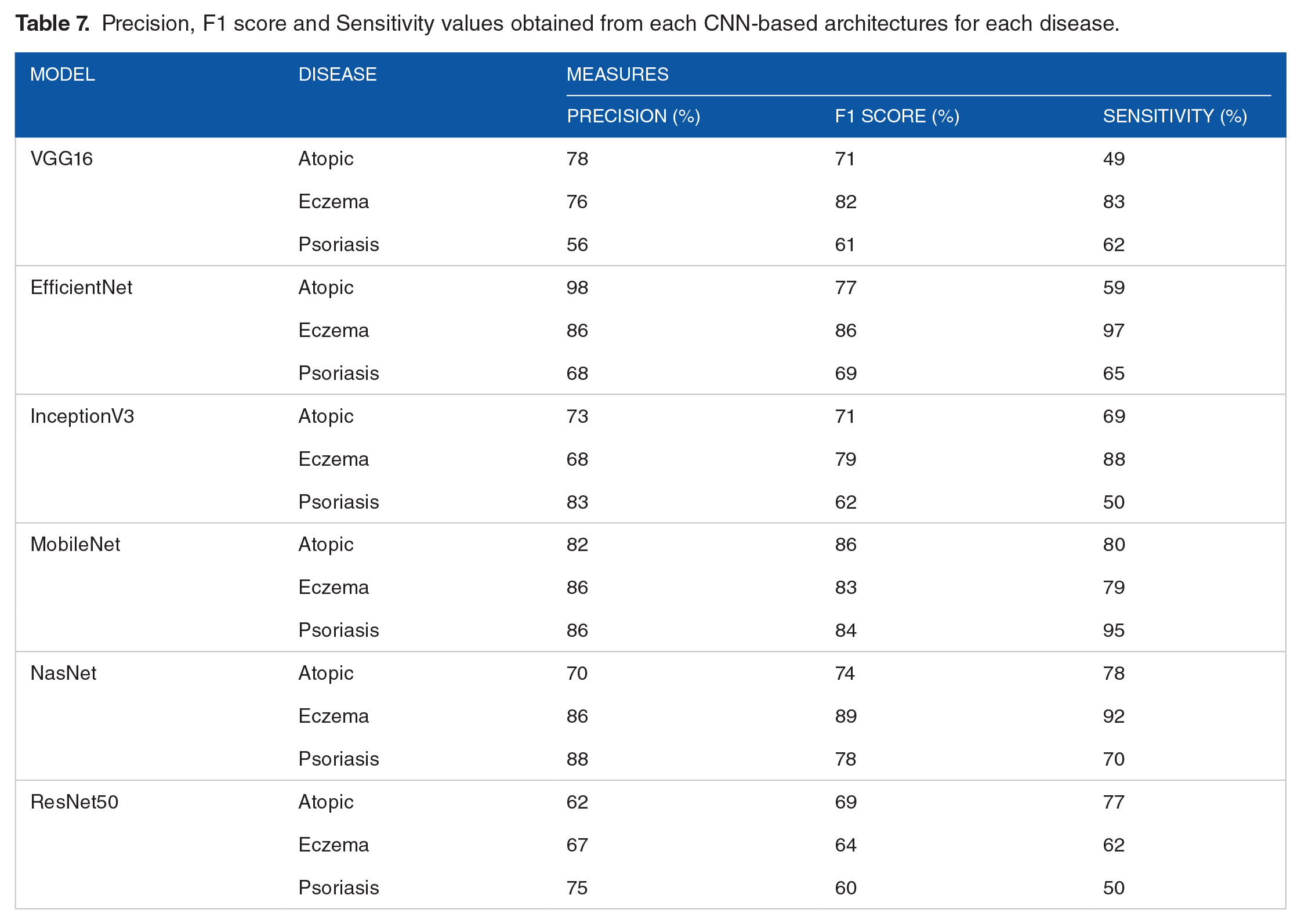

In this work, we addressed an image classification problem for dermatological diseases using a number of pretrained CNN-based architectures. To measure the efficiency and compare the performance of the architectures, we performed several evaluations. The 6 models were evaluated using different hyperparameters and the same image sets in the training and testing stages. All networks were tested using the Keras Python interface for ANNs with TensorFlow as the backend. The accuracy for all tested hyperparameter settings is shown in Tables 1 to 3. The comparison of these networks in terms of accuracy, precision, F1 score, MCC, and sensitivity values at different hyperparameter settings is shown in Tables 4 to 6. The networks were also tested on each dermatological disease included in this study (atopic, psoriasis, and eczema); the results are shown in Table 7.
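Per-disease values of the kind reported in Table 7 can be derived from a 3-class confusion matrix by treating each disease in turn as the positive class. The sketch below illustrates that calculation only; the confusion matrix counts are made up for the example and are not results from this study, and the function names are hypothetical.

```python
CLASSES = ["atopic", "eczema", "psoriasis"]

# Hypothetical confusion matrix (rows: true class, columns: predicted class).
# The counts are invented for illustration and are NOT results from the study.
CONFUSION = [
    [45, 3, 2],   # true atopic
    [4, 40, 6],   # true eczema
    [1, 5, 44],   # true psoriasis
]


def safe_div(numerator, denominator):
    """Divide, returning 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def per_class_metrics(matrix, index):
    """One-vs-rest counts and metrics for the class at the given index."""
    total = sum(sum(row) for row in matrix)
    tp = matrix[index][index]
    fn = sum(matrix[index]) - tp                  # class images assigned elsewhere
    fp = sum(row[index] for row in matrix) - tp   # other images assigned to this class
    tn = total - tp - fn - fp
    precision = safe_div(tp, tp + fp)
    sensitivity = safe_div(tp, tp + fn)
    f1 = safe_div(2 * precision * sensitivity, precision + sensitivity)
    return {"tp": tp, "fp": fp, "tn": tn, "fn": fn,
            "precision": precision, "sensitivity": sensitivity, "f1": f1}


def overall_accuracy(matrix):
    """Fraction of all images on the diagonal of the confusion matrix."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    return safe_div(correct, sum(sum(row) for row in matrix))


for i, name in enumerate(CLASSES):
    m = per_class_metrics(CONFUSION, i)
    print(f"{name}: precision={m['precision']:.3f} "
          f"sensitivity={m['sensitivity']:.3f} f1={m['f1']:.3f}")
print(f"overall accuracy={overall_accuracy(CONFUSION):.3f}")
```

Averaging the per-class values gives macro-averaged scores; whether the averages in Tables 4 to 6 were computed this way is not stated in the text.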

Table 4. Average accuracy, precision, F1 score, MCC, and specificity values obtained by the results of the CNN-based architectures applied at epochs = 50, val_step = 5, steps = 10.

Table 5. Average accuracy, precision, F1 score, MCC, and specificity values obtained by the results of the CNN-based architectures applied at epochs = 50, val_step = 10, steps = 10.

Table 6. Average accuracy, precision, F1 score, MCC, and specificity values obtained by the results of the CNN-based architectures applied at epochs = 100, val_step = 5, steps = 10.

Table 7. Precision, F1 score, and sensitivity values obtained from each CNN-based architecture for each disease.

Discussion

This work aims to examine and better understand the effect of DL on dermatological disorders. Most current works are directed at skin cancer classification based on DL. The challenge in medical analysis, and in dermatology in particular, lies in the availability of the data themselves. DL architectures are data-driven, so data are crucial. In addition, local data for Middle Eastern dermatological disorders are limited. Accordingly, we limited the analysis to photographs of 3 classes of dermatological disorders (atopic, eczema, and psoriasis) that commonly appear in the Middle East. Six CNN architectures were examined to classify these classes of dermatological disorders and test their performance. To measure the performance, (i) data were collected with predetermined classes, (ii) image filtering and noise reduction were applied using BM3D,33-36 (iii) 6 pretrained models with hyperparameter tuning were used, and (iv) evaluation and testing were performed.

Comparative evaluations of performance are shown in Tables 1 to 3. We base our discussion on the setting epochs = 100, val_step = 5, steps = 10, which achieved the maximum performance. As shown in Table 3, the MobileNet architecture 42 produced high accuracy for training (99.3%) and testing (95.7%), and its loss reached the minimum value (0.09). This indicates that the MobileNet architecture produced the highest performance among the 6 architectures. Table 6 shows the precision, F1 score, MCC, and sensitivity values of the 6 architectures. The MobileNet architecture also produced the maximum values for these evaluation metrics. The precision value of MobileNet was 94.3%, which indicates that 94.3% of the images assigned to a disease class actually belonged to that class. In the same context, Table 7 shows the precision, F1 score, and sensitivity values of each disease class for all 6 architectures. The MobileNet architecture produced the highest values for most dermatological disorders and evaluation metrics. Only the EfficientNet architecture produced higher values for atopic, while NasNet produced values for eczema and psoriasis close to those of MobileNet. The EfficientNet result for atopic may be due to the compound coefficient that EfficientNet uses to scale up networks and to the structure of atopic photographs. Overall, the second highest performance was obtained by the EfficientNet architecture.

The aforementioned results are significant; however, examining the correlation between the true and predicted labels across the 3 classes is also crucial. The MCC measures the correlation between the true and predicted classes and ranges from -1 to +1. The higher the correlation between true and predicted values, the better the prediction. This metric is well suited to symmetric classification, where no class has higher importance than the others. In our analysis, the results show positive correlations for all architectures (see Table 6). The MobileNet architecture reaches 95.4% (an MCC of 0.954), which is close to 1, the value that indicates perfect positive correlation. EfficientNet follows with 91.2%, which indicates that the predicted class and the true class are strongly correlated.
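The range of the MCC can be checked with a few extreme cases. The following sketch uses the binary form of the coefficient with made-up counts; it is an illustration of how the value behaves, not the multi-class computation that may have been used in the study.

```python
import math


def mcc(tp, fp, tn, fn):
    """Binary Matthews correlation coefficient from confusion counts."""
    numerator = tp * tn - fp * fn
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # By convention, return 0.0 when any marginal total is zero.
    return numerator / denominator if denominator else 0.0


# Illustrative counts (assumed for this example, not from the paper).
perfect = mcc(tp=50, fp=0, tn=100, fn=0)     # every prediction correct
inverted = mcc(tp=0, fp=100, tn=0, fn=50)    # every prediction wrong
chance = mcc(tp=25, fp=50, tn=50, fn=25)     # predictions unrelated to truth

print(perfect, inverted, chance)
```

A value of +1 corresponds to perfect agreement, -1 to total disagreement, and 0 to predictions that carry no information about the true class, which is why a value such as 0.954 indicates strong but not perfect agreement.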

Conclusion and Future Works

Interest in DL and CNNs in the medical sciences has increased recently. Several studies and experiments have been performed in different disciplines, including dermatology. While DL and CNNs have been successfully adopted for skin cancer, their use remains limited for dermatological disorders such as atopic, eczema, and psoriasis. In this work, we measured the performance of 6 CNN architectures on a dataset of dermatological disorder images. DL architectures are data-driven, so data are crucial, and image augmentation has been applied in some works 45 to increase the number of images. We did not use image augmentation in this work. The results show that the MobileNet architecture outperformed the other 5 architectures. EfficientNet was next in terms of accuracy and other evaluation metrics. Despite the strong performance of CNN architectures in dermatological image analysis, they face various challenges because of their data-driven nature. This is increasingly challenging since the diagnosis of dermatological disorders relies on image analysis and computer vision. In addition, most dermatological disorder images share common features, which makes preprocessing crucial. In this work, image filtering and denoising were applied through BM3D noise removal and edge enhancement.

Future work for this analysis can take several directions. Collaborations between computer science practitioners and dermatologists will open novel data-driven solutions for dermatological disorder detection and diagnosis. This domain still requires further enhancements in terms of data availability, real clinical experiments, and robust automated diagnosis systems. One crucial point in adopting this kind of approach is its acceptance by patients and physicians. Patient privacy, data ethics, and trustworthiness are critical issues that lead to reluctance. Other enhancements may include more powerful CNN architectures in computer vision for dermatological disorders. Communities of dermatologists and computer vision specialists must work together to achieve this goal. This kind of collaboration will further explore opportunities that are cost effective, remotely accessible, and accurate. DL and CNNs have demonstrated the capability of achieving highly accurate diagnoses in the classification of dermatological disorders. However, real datasets are still limited, and most of the data currently available are intended as illustrations for medical students. To proceed and succeed in this direction, initiatives should be launched to offer a large dermatological disorder dataset while preserving patient privacy. This will have a positive effect on the proposed algorithms and on experiments that are tested in real life.

Footnotes

Acknowledgements

The author extends her appreciation to the Innovation and Emerging Technologies Center at Digital Government Authority for funding this work. She also would like to thank Ms. Fatima Alogayyel for her valuable support in the technical part and experiments.

Funding:

This work was supported by the Innovation and Emerging Technologies Center at Digital Government Authority.

Declaration of Conflicting Interests:

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Author Contributions

All work was done by the single author, Lulwah AlSuwaidan.