Abstract

Background:

Interest is growing in the use of Artificial Intelligence (AI) technologies in health care. Health AI innovations have been explored in a range of clinical contexts, yet their implementation into routine practice remains challenging. The aim of this study was to understand the factors that influenced the implementation of AI innovations into routine practice in Australian healthcare organisations, from the perspective of implementers.

Methods:

The study used a qualitative methodology. AI implementers were identified via an environmental scan of publicly available information, combined with passive snowballing. In-depth research interviews were undertaken between November 2021 and June 2022. Interviews were audio recorded and transcribed into text for data analysis. Transcripts were inductively coded by the researchers, followed by deductive categorisation of the data using the Consolidated Framework for Implementation Research (CFIR).

Results:

The study identified 11 different AI innovations being introduced in Australian healthcare organisations, and a total of 12 implementers working on the implementation of these innovations were recruited to participate in the study. Factors influencing the implementation of AI innovations into routine practice were identified across all five domains of the CFIR, but the innovation and implementation process domains were emphasised the most in the data. Implementers faced many barriers to integrating their innovations into practice, including challenges with stakeholder engagement, data access and other technical hurdles, resourcing constraints and lengthy timeframes for implementation.

Discussion:

The number of Health AI solutions being implemented in routine practice in Australian healthcare organisations is small relative to the uptake of innovation seen in research and industry. This gap is likely a reflection of the length and complexity of the implementation process for Health AI solutions, and barriers that need to be overcome as part of this process.

Introduction

Background and Rationale

Healthcare organisations are increasingly adopting digital technologies to support health professionals and the delivery of health services. 1 Information systems such as Electronic Medical Records (EMRs) and Electronic Health Records (EHRs) are foundational digital technologies in this sector and are designed to optimise individual clinical care. 2 EHRs, EMRs and other clinical information systems are designed to collect both structured and unstructured data. These systems have the potential to aggregate data and allow generation of insights which can transcend individuals to inform health organisations and systems. Yet, the data is typically collected without a built-in aggregation and analytical function. Thus, health data is often collected in abundance but seldom used.

Increasingly, Artificial Intelligence (AI) applications are being applied to clinical and healthcare delivery settings to question, enhance and understand the rich data sets in health information systems. 3 AI describes intelligence demonstrated by machines, in contrast to the natural intelligence displayed by humans. 3 Specific branches of AI include Machine Learning (ML), which seeks to understand how systems can learn from data, identify patterns and make choices with minimal human intervention. 4 The use of Natural Language Processing (NLP), in which computational techniques learn, understand and produce human language content, is also increasing in healthcare. 5 ML and NLP are being applied to electronic data sets in healthcare across the globe. 6 NLP has a unique utility for handling large amounts of unstructured data in healthcare informatics systems and there is increasing interest in its application. 7 Over the last 2 years, user-friendly Generative AI solutions have also become more widely available. This type of AI uses algorithms to utilise data across the web and generate images, audio and text when prompted. 8,9 Though Generative AI chatbots have mostly been applied in healthcare to manage administrative tasks, conduct research and assist with medical education, there are emerging opportunities in clinical settings. 10

Despite the potential of AI, there are many barriers to implementation. Concerns have emerged about data security and undetected bias in AI technologies, alongside the trialability, adaptability and costs of larger AI implementations in organisations. 11 The implementation of emerging technologies in healthcare is notably underexplored in the literature. Focus has instead primarily been placed on developing and testing AI innovations, and there is a gap in understanding how to embed AI into real world practice. 12 A recent review of the literature on implementation of health technologies revealed the complexity of the technology and the process. 13 At a systems level, external barriers include lack of strategic efforts, recognised standards, policies or incentives and leadership engagement from development to implementation. 13 Challenges related to technical integration can also hinder the implementation process at an organisational level, for example where adopted AI models require constant retraining and updating prior to use in workflows. 13 Importantly, there is a steady emphasis on not replacing human clinical judgement as AI decision-making remains unsatisfactory and suboptimal at an individual level. 14

Some research has explored facilitators for AI implementation. These include policies and legislation on standards and guidelines around the use of technology with respect to data sharing and data privacy. 15 Alongside policies, measuring both medical and economic impact is important in implementation. 16 Studies also show that age and career level do not predict adoption. Rather, it is the exclusion of clinicians from the development process of AI systems that limits adoption, 17 particularly the lack of human-centred design in digital developments. 18

Evidence-based implementation frameworks are underutilised in providing structured insights into the implementation of AI innovations in the health sector. The Consolidated Framework for Implementation Research (CFIR) has been applied to understand aspects of Health AI, including as a theoretical foundation to understand healthcare leaders’ expectations, as well as the advantages of these innovations for health professionals, patients and organisations. 11 The CFIR has strengths compared to other commonly utilised implementation frameworks, particularly the ability to provide a more comprehensive perspective on implementation than determinant-based frameworks. 19 Other studies utilising the CFIR have explored key themes in successful implementation of AI in healthcare, with innovation as a consistent theme. That is, the AI software that is implemented is comparable to or surpasses humans, which in turn decreases diagnostic times, reduces errors and enhances patient outcomes. 14 There is however very limited application of CFIR to understand the perspectives of Health AI implementers on the factors that influence short- and long-term adoption in the healthcare system.

Aims and Objectives

The aim of this study was to understand the factors that influenced the implementation of AI innovations into routine practice in Australian healthcare organisations, from the perspective of implementers. The objectives were to:

1) Describe the characteristics of Health AI innovations that have been implemented routinely in practice by Australian healthcare organisations.

2) Explore the factors that influence adoption of Health AI innovations at different stages of the development and implementation process.

3) Explore facilitators to implement AI tools into routine practice in healthcare organisations.

Methodology

Study Design

The study is underpinned by the CFIR and is an observational study with qualitative data collection and thematic analysis, focusing on the implementation of dedicated Health AI innovations in Australian healthcare organisations. The Consolidated Criteria for Reporting Qualitative Research (COREQ) 20 checklist (Supplemental Material 1) was the reporting framework used in this manuscript.

Study Setting, Participants and Recruitment

Australian healthcare organisations, public or private, that had implemented or were in the process of implementing AI innovations into routine practice were the focus of the study. To be included, the Health AI innovation being implemented had to be developed specifically for addressing a health problem (an enterprise solution); applications that were translated from general use contexts into healthcare (consumer solutions) were excluded. Eligible Health AI innovations also had to be designed to ingest structured or unstructured data from clinical information systems, such as PDFs, imaging and clinical notes. Health AI innovations used for population health that were fully patient facing and were not being used by health services were excluded from this study, as were consumer platforms that were not designed for use in a health context but may have been applied in that context. AI innovations that collected other forms of electronic health data, such as information in health apps or wearable devices, were excluded.

A purposeful sample was recruited for the study, comprising participants who were involved in the implementation of Health AI in relevant organisations. They could have any role within the implementation team, and multiple members of the same implementation team were eligible to participate.

Recruitment was informed by an environmental scan of publicly available information on AI innovations being implemented in healthcare, with key contacts identified. 15 The environmental scan involved reviewing the media releases of Australian public health services organisations to identify examples of Health AI innovations being implemented in practice, alongside reviewing conference proceedings from major Australian Digital Health and Health Informatics societies. Information was reviewed from 2016 to 2021 (the time of the scan). A member of the research team extracted information from each website on individual Health AI innovations and used it to aggregate a list of innovations and an implementation contact for each. This list subsequently underwent a two-step review to attempt to identify any missing implementations and incorporate them into it. The first step was a review by the Australian Health Research Alliance (AHRA) data committee, who had representation from most major public hospitals in Australia. The second step involved inviting representatives from jurisdictional government agencies responsible for Digital Health strategy to review the list.

Individuals identified through the environmental scan were emailed an invitation to participate in an interview, or to identify the suitable implementer in their team. No participants declined to participate in an interview, but a number of individuals identified via the environmental scan did not respond to a request for interview after two follow ups.

In order to do a third check that no major Health AI implementations were missing from the study, passive snowballing was used. This involved asking anyone interviewed about other Health AI implementations they were aware of, and for an introduction to a contact on the implementation team to request an interview.

Data Collection and Procedures

Semi-structured interviews undertaken between November 2021 and June 2022 were used to collect study data. The interviews were conducted by a single researcher (AJ) with extensive experience with qualitative methods and undertaking research interviews. The researcher had expertise in implementation of health technologies and their adoption in the health workforce. They did not have any clinical qualifications, nor did they have computer science, engineering or other ICT related qualifications that would give them a deep technical knowledge of AI. Interviews were conducted over the phone, took approximately 60 minutes and were audio recorded.

A structured guide (Supplemental File 2) was used with questions focused on aspects of the Learning Health System (LHS) framework. 21 LHSs are environments where knowledge generated from health data can be embedded into routine practice in order to improve processes and outcomes. An LHS framework was used to inform the individual interview guide questions so that the interviews would touch on aspects of the framework in the context of Health AI innovation implementation. Two researchers with experience with the LHS framework (HT, TS) reviewed and provided feedback on the questions, and this feedback was used to create the final guide used in the interviews. The interviews specifically explored the nature of the AI innovations the participant was involved in implementing, their implementation stage, the barriers encountered during implementation, and factors that enabled successful implementation.

Interview audio was transcribed into text, fully de-identified and cleaned to address any errors in transcription prior to analysis.

Data Analysis

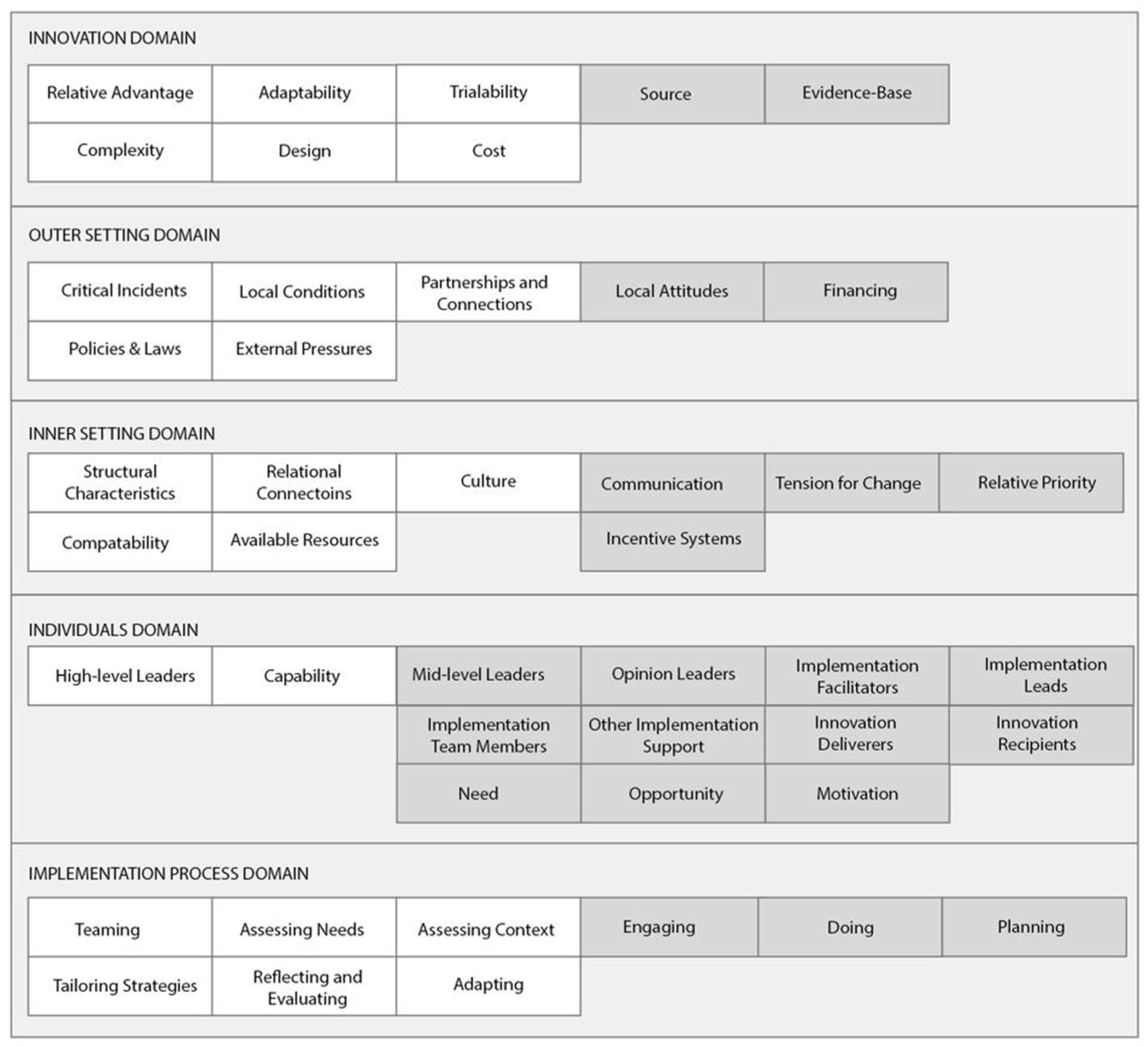

Thematic analysis was undertaken using Braun and Clarke’s approach. 22 Inductive co-coding was undertaken for 25% of the transcripts by two researchers, who then iteratively developed a codebook and initial themes based on this process. The remaining transcripts were coded by one researcher (AJ) using the codebook. Coded data was categorised using the CFIR 6 in order to get a comprehensive understanding of the points at which barriers and enablers occurred when implementing AI innovations into routine practice. Codes were then iteratively classified into the five key domains within the CFIR, and the relevant constructs within them, until agreement was reached on the final categorisation. Exemplar quotes were extracted from the data to illustrate key constructs.

Results

A total of 12 implementers agreed to participate in interviews across 11 different Health AI innovations. Table 1 describes the AI innovations discussed by the implementers and how they were identified.

Table 1. Brief Descriptions of Artificial Intelligence Applications Being Implemented in Australian Healthcare Organisations and Their Key Characteristics. 15

Applications identified with (*) were identified through the environmental scan.

Findings are presented according to the CFIR framework. Implementers identified considerations across all domains of the CFIR, but the innovation and implementation process domains were emphasised. This may reflect the implementation stage of the AI innovations, with most being pre-implementation or in very early stages of implementation, with few examples of mature implementations of innovations.

Table 2 provides an overview of the CFIR domains and constructs mapped against exemplar quotes for the study. Figure 1 illustrates the spread of data across CFIR domains and constructs.

Table 2. A Matrix of CFIR Domains and Constructs Aligned with Illustrative Participant Quotes.

Figure 1. The spread of data across CFIR domains and constructs.

Innovation Domain

The innovation domain focuses on features of the intervention being implemented. It has eight constructs, six of which aligned with data analysed for this study. The six constructs are: Relative Advantage (the extent to which the innovation is better than others available); Adaptability (the ability of the innovation to be modified, tailored or refined to fit implementation context); Trialability (the ability to test the innovation on a small scale); Complexity (how complicated the innovation is and the alignment of complexity to its scope); Design (how well designed and packaged the innovation is); and Cost (innovation purchase and operating costs).

Relative Advantage

Overwhelmingly participants identified the need to demonstrate value as a key enabler. There were multiple ways that value could be demonstrated including improved patient outcomes, reduced length of stay and better clinical documentation. Demonstrating value was identified by one participant as essential to encouraging organisations to invest in the innovation. Actionability of insights generated by the innovation was also key to demonstrating value, with a product needing to clearly enhance current practice.

Developing AI solutions that were tailored to specific needs and contexts was an important motivator identified by participants for their initial development. This included AI solutions to address common health service issues that required slightly different solutions at different organisations. Many applications were developed to address clinical problems that were difficult for the workforce to manage, such as identifying sepsis presentations, reducing treatment wait times or tailoring a treatment pathway to a specific patient population.

AI innovations were also focused on reducing burden on the health system. This included reducing wait times for patients to access services, reducing referrals to certain services by identifying patients that could be managed at home or in different clinical settings and monitoring patient deterioration.

AI innovations were also used to limit care burden by providing health professionals quick and accessible guidance on how to manage patient care. Several AI innovations were developed to either augment or overcome limitations of human cognition. This includes supporting decision making in contexts where diagnosis is uncertain, identifying potentially confounding factors that could lead to patient readmission or risk screening in complex populations.

There were also opportunities for AI solutions to support health professionals in decision making. Participants noted AI could provide early alerts for action. Some participants were developing AI solutions to support management or remote monitoring, potentially benefiting health professionals and patients. One participant noted their solution could identify nuances in patient presentations, which could support management.

Adaptability

Most participants described a development process that was adaptable and often tailored to changing project needs. In some instances, the adaptation was due to workforce expectations of how the AI solution would work. In other cases, the iterative process was used to refine and enhance the algorithms in the solution as more or better data became available.

Trialability

Several participants identified the need to address technical and data quality issues through trial and error as an innovation enabler. A significant consideration was potential bias in the AI derivation data set. Relatedly, data input errors were noted as problematic for Health AI implementation. One participant noted that there was a need to benchmark the performance of the AI solution prior to implementation.

Testing and validation were important considerations in the iterative process of developing AI innovations. The purpose was to pilot the innovation at the implementation site, but also to understand how it would work across sites.

Complexity

Participants noted complexity at different stages of innovation development and during implementation, particularly in building the model or algorithms. The complexity of model development could be underestimated by implementers as technical knowledge was varied or limited. Even in instances when the initial model development was straightforward, training the AI was often hard and time consuming.

Designing AI innovations to communicate key information clearly to innovation recipients was identified as important, as was making visible how the AI innovations worked in order to minimise the “black box” effect. This was not always possible. Hence there was a need to explore how to visualise outputs to maximise usability of the application, with a focus on those that are clinically meaningful.

When reflecting on learnings from development of AI innovations, many participants indicated data access and ongoing management was important. There were a range of challenges related to data that made development more difficult. Most participants noted that accessing data was hard, and once obtained, data limitations were significant. Delays in data access and the burden of data access were noted. One participant indicated it was important the innovation recipients understood what using the innovation involved to engage them in adoption.

Design

Participants discussed a multi-step process for designing their innovation to ensure it was accessible to innovation recipients. Active engagement of innovation recipients was often the first step in this process both to inform the initial design and get iterative feedback. Iterative codesign with innovation recipients was key.

Once the problem had been refined, participants described the need to “digitise” the problem through the innovation. Another important consideration was whether to use a commercial product or a bespoke solution. Most participants used a bespoke solution, though two had invested in a commercial application. A common thread was that the platform developed or chosen was used because it fit the problem best and was often the only option available.

Participants reflected on the importance of developing a user interface for the AI solution that made it easy for innovation recipients to interact with. Whilst apps were a popular interface, visualisation of data to scaffold feedback of AI insights was also a user interface approach participants reported using. One participant noted the importance of developing a prototype or early conceptualisation before investing in a widescale build.

Cost

Most participants found it difficult to fully articulate the total cost, including licensing for the platform and associated resources such as staff time, of developing their AI innovation. Development costing was hard to capture because development of the innovation was long term, often undertaken over several years, and different people were engaged in the project at different times making it difficult to get a clear picture of resourcing. The dollar figure estimated also varied from participant to participant as a result.

Several participants indicated that cost of the innovation was less of a challenge than protected time for development. One participant felt the cost of developing the innovation was one of the smaller challenges in mainstreaming this technology in healthcare.

Outer Setting Domain

The outer setting domain captures the external factors that influence implementation beyond those within the implementation setting. It has ten constructs, five of which aligned with data analysed for this study. The five constructs are: Critical Incidents (large scale and/or unanticipated events that disrupt implementation); Local Conditions (economic, environmental, political and/or technological conditions that influence implementation); Partnerships and Connections (external networks that connect with the inner setting); Policies and Laws (the regulatory landscape and its influence on implementation); and External Pressure (other external factors that influence implementation).

Critical Incidents

Almost all interviewees described impacts of the pandemic. Most commonly projects were unable to access key personnel, including limited capacity of clinical staff due to clinical demands. Some projects had funding reduced or diverted, and some projects were put on hold with the intention to continue implementation at a future point.

Local Conditions

Lack of access to technical experts to build and maintain AI innovations was identified as a major challenge, as was the difficulty for those with non-technical backgrounds of upskilling to build and train algorithms themselves.

Partnerships and Connections

Participants identified effective communication and engagement as key for successful Health AI implementation. Most participants reflected that better engagement with a wider breadth of stakeholders, both inside the implementation site and externally, could have strengthened their projects. Relatedly, several participants noted that more effective communication with the stakeholders that were engaged with the projects would have been beneficial.

Beyond this, having supports to scale the solution across jurisdictions or settings enabled broader adoption. These supports varied, and included researching the different approaches across settings so a plan could be made to address them, and building structures to support shared knowledge transfer about what worked and what did not work.

Policies and Laws

Participants discussed a range of governance considerations related to Health AI implementation. Frequently it was noted that there was a lack of policy or clear guidelines on the use of these technologies and a need to establish governance frameworks for use of AI in the health system. Often governance frameworks were specifically discussed in the context of technical governance and data privacy and security.

Many participants were early in implementation and were working within a formal ethics and governance framework for implementing their projects. Two participants specifically noted they weren’t sure what the process would be to transition from that context to a governance model for operationalising use of Health AI. There was a sense from participants that the process was time consuming and had many steps. One participant noted that ethics and governance when operationalising Health AI was an important factor to consider as part of the implementation process.

It was considered important for innovations to ensure privacy of data by de-identifying patient information or limiting the amount of information collected about patients, to minimise the risk of a privacy issue. Consent was also a topic that participants raised as a governance challenge for implementing AI innovations.

External Pressure

Multiple participants identified a need to identify clear opportunities for AI to add value in healthcare, rather than just focusing on areas of interest for the project development team. Relatedly, there was a need to understand the influence of Health AI on the entire care pathway to ensure gains at one point do not cause challenges at another.

Inner Setting Domain

The inner setting domain explores the features of the organisation where the innovation is being implemented and how they influence implementation. It has fourteen constructs, five of which aligned with data analysed for this study. The five constructs are: Structural characteristics (infrastructure components that support performance of the inner setting); Relational connections (formal and informal relationships and networks within the inner setting); Culture (shared values, beliefs and norms across the inner setting); Compatibility (how the innovation fits with workflows, systems and processes); and Available resources (resources available to implement and deliver the innovation).

Structural Characteristics

A number of participants identified the complexity of health data, and also the challenges of integrating this complex data set into AI innovations as a major challenge of implementation. Even just accessing the data to input into an individual algorithm could be tricky.

If data could be accessed, participants frequently cited the reliability of data as an issue as well as missing data or insufficient data sets for training. Another quality issue was errors in coding health data sets. Finally, several participants discussed the challenge of getting access to health data in a timely manner, as well as time spent curating and cleaning the data to make it usable for the AI innovations.

Relational Connections

Engaging appropriate partners and coordinating stakeholders was identified as the most significant challenge in this domain by participants. A number of projects relied on collaboration with academic partners in addition to technical and clinical experts. Intertwined with this issue were limitations on funding to support engagement of key partners on the project. One interviewee noted:

Participants identified communication across diverse trans-disciplinary teams as a major challenge. This was a particularly difficult issue to solve with barriers around communicating the nuances of the clinical problem within the team, and also developing a shared lexicon of technical terms across team members.

Culture

Participants noted that acceptance of the innovation varied across organisations, and it could be difficult to obtain support from leadership in some instances. A number of participants identified resistance to using the innovation from frontline health professionals who were not involved in the development or implementation team. This resistance could stem from health professionals’ perceptions that the application was time consuming to use, that it didn’t lead to greater compliance from patients with treatment, or that it required them to re-think established workflows.

Compatibility

Participants discussed a range of challenges integrating AI innovations into the digital health ecosystem in an organisation including complexity of the Information Communication Technology (ICT) landscape in health care organisations, integration with existing infrastructure, differences in digital maturity across sites in rural and metropolitan areas, and identifying optimal points in workflows to implement the innovation.

In instances where the AI innovation was a commercial product, interviewees identified issues related to ongoing costs for accessing the app and how it integrated into existing payment systems. Bespoke solutions could also be challenging to integrate because they created additional work for ICT teams on top of their duties providing day to day technical support.

Identifying the optimal point in a workflow to implement the solution within an organisation was an enabler of scaling Health AI implementation, alongside reflecting on whether the planned implementation point in workflows was the optimal one.

Available Resources

Most interviewees noted that human resourcing was not available to support ongoing refinement of AI innovations and their implementation into routine practice. Resourcing constraints took several forms, including lack of availability of clinical domain experts and difficulty accessing team members at key project points. This made it difficult to obtain advice on clinical governance for the project or implementation at specific sites such as regional locations.

Participants also discussed significant challenges accessing budget to successfully resource and implement major Health AI projects. Some projects received small amounts of seed funding at the start but had no pathway to obtain further funding for implementation. Other projects started with sufficient funding, but due to the extended timeline of the project struggled to get further cash injections.

Individuals Domain

The individuals domain describes the roles, characteristics and perceptions of people involved in the implementation process. It has nine constructs, two of which aligned with data analysed for this study. The two constructs are: High-level leaders (people with a high level of authority) and Capability (characteristic where individuals have the competence, knowledge and skills to use the innovation).

High-level leaders

Ensuring high-level leaders received periodic updates on successes in the project facilitated ongoing buy-in and enabled access to potential funding sources. One participant identified CEO support and buy-in as a key enabler of successful AI adoption.

Capability

The need to actively engage innovation recipients and build their capability to use AI innovations emerged as a strong enabler of workforce adoption of these tools. Engaging innovation recipients, typically clinicians, over the life of the AI innovation development process was a key technique to increase support for the tool when it was implemented in practice.

Implementation Process Domain

The implementation process domain focuses on the processes used to implement the innovation. It has nine constructs, five of which aligned with data analysed for this study. The five constructs and one sub-construct are: Teaming (Intentionally collaborating on interdependent tasks to support innovation implementation); Assessing needs (Collecting information about priorities, preferences and needs of people); Assessing context (Collecting information to identify and appraise barriers and facilitators to implementation of the innovation); Reflecting and evaluating (Collecting and discussing information about the implementation process); Reflecting and evaluating – Adapting (Modifying the innovation or the inner setting for optimal fit and integration into workflows) and Tailoring strategies (Choosing implementation strategies to address barriers and leverage facilitators in the implementation setting).

Teaming

Participants identified teaming as a challenging process when developing AI solutions for implementation in healthcare. A key hurdle that had to be overcome was ensuring clear communication between different individuals in implementation teams, which were frequently made up of researchers, clinicians and technical experts.

Many participants identified collaboration and partnership as a factor that needed to be considered as part of the development process for Health AI. There was a wide variety of key partners that needed to be engaged to support project delivery, including researchers with specialised expertise, data scientists, consultants and government agencies. Multiple participants noted that the key role of collaborations was to provide opportunities to learn from individuals or to cross check AI innovation methods. Partnering with health services was specifically identified as a key part of the development process for AI solutions, as was engaging with vendors to ensure the solution aligned well with the needs of the innovation recipients.

Assessing Needs

Interviewees were asked to reflect on the role of consumers in informing the development and implementation of their Health AI solution. The majority of participants had not actively engaged consumers in this process, usually because the innovation was only used by the workforce.

If consumers were engaged as part of the implementation, it was usually to iterate on the core functionality of the Health AI solution. This could be through workshops or surveys about the solution to obtain consumer perspectives. For some projects consumers’ needs weren’t actively assessed as part of the implementation, but consideration had been given to informing them an AI solution was being used to support care delivery. Although not specific to consumers, participants noted needs assessment was an important part of the Health AI implementation process, particularly ensuring the innovation met the needs of the organisation.

One participant reflected that sometimes simple solutions could be as effective as more complex ones in the Health AI space.

Assessing Context

Participants identified a number of contextual factors, including the ethics and governance processes they had to navigate. Many innovations were pre- or early-stage implementations and still in a research phase, potentially linked to ethics and governance challenges. Overall, the process of assessing context for implementing AI innovations was found to be complex and time consuming, with a major challenge being variation in processes and data access requirements across sites.

Integration into existing infrastructure and processes was highlighted as necessary for data access, but it was time-consuming and required linkages between Health AI development teams and health service ICT teams. Further, participants noted that it was necessary to identify context-specific protocols, both clinical and technical, as part of implementing Health AI into routine practice.

Reflecting and Evaluating

When reflecting on the implementation process, participants described the goal of improving automation and integration of their Health AI solutions to enable sustained use. Integration with EHRs and EMRs was identified as an important factor for integration of AI solutions into workflows.

Automated data entry was also identified as something that could sustain innovation implementation and support wide-scale adoption in future. Participants also noted the important role of evaluation and testing in progressing the implementation process for their innovation. Ongoing testing and evaluation of algorithms was noted as important for real-world use or to reduce errors.

Many participants identified the need to be able to demonstrate benefits and evaluate impact of Health AI if it is to be widely used. A major opportunity for evaluation was to demonstrate enhanced clinical decision making of health professionals. Some participants also wanted to demonstrate improved accuracy of diagnosis, or a reduction of waste in the system. It was also noted that a potential benefit in future would be to evaluate the utility of AI to remotely monitor patients, rather than having a health professional at the bedside at all times.

Adapting

When considering goals for future implementations of the Health AI solution, many participants identified options to extend and adapt it to support new health service challenges. Participants also identified the potential to adapt core algorithms to be used for different health conditions. Several participants saw opportunities to adapt their algorithms for health service monitoring and identifying service improvement opportunities or other quality and safety reviews.

Tailoring Strategies

Although the Health AI solutions participants had worked on were often at different implementation stages, the need to tailor strategies to integrate the systems into health workflows came up consistently. Participants discussed the importance of establishing processes for maintaining the Health AI solutions and ensuring their sustained use. These strategies could include monitoring existing workflows and mirroring them in the interfaces of the AI solution, ensuring the technology was easy to maintain by ICT teams in healthcare organisations and checking accuracy of the models on real-world data. One participant identified the need to be persistent in the implementation of Health AI and have a clear implementation strategy.

Discussion

Findings from this study illustrate that there are a variety of factors that influence the adoption of AI innovations in healthcare workflows. By utilizing the CFIR,6 implementation barriers and facilitators were noted across all domains, reflecting the complexity of Health AI integration into routine healthcare. Whilst implementers acquired a depth of knowledge about these barriers and facilitators in developing and implementing their innovation, coproduction was often limited, and lessons were learnt retrospectively and not applied to streamline implementation. Interestingly, few innovations had an existing evidence-base on efficacy prior to implementation. This, alongside a lack of stakeholder and clinician engagement, technical barriers on data access and utility, and limited capability, likely contributed to the considerable time and resourcing typically required to implement these innovations into routine practice. Notably, many of the innovations were in the early stages of implementation, leading to a focus on lessons learned related to the implementation process. The implementers had fewer insights into the strategies to engage stakeholders post-implementation and how to support scalability and long-term sustainability.

This study highlights the diversity of AI innovations that are being implemented into routine practice by health care organisations. AI innovations were being developed to improve clinical screening, support clinical decision making, streamline clinical documentation, and enable remote patient monitoring. Whilst different clinical information was used by the AI innovations, a significant number used imaging or photographic data, aligned to advancements in the field.23,24 Few innovations used natural language processing techniques to interpret unstructured clinical data, despite rising interest and availability,8 although this may rapidly evolve. Despite the diversity of innovations, barriers in implementation showed some consistency. The barriers occurred across all CFIR domains and were interconnected such that one issue compounded a later one. For example, a lack of understanding of the value add of the Health AI solution at the Innovation stage could subsequently lead to challenges in the Inner Setting, where the workforce was resistant to adoption or struggled to integrate innovations into their workflows. This finding correlates with what is known about the difficulties of major digital transformation projects, which have been shown to require approaches that overcome intertwined and complex problems if they are to be achieved successfully.25 Whilst digital health implementation is known to be difficult, AI implementation amplifies existing challenges for several reasons. Firstly, AI is a term that describes a broad range of algorithms, models and innovations, and implementation can look very different and have unique pitfalls depending on the specific type of Health AI being deployed. Secondly, unlike other forms of digital health, AI has the capacity to autonomously undertake many human tasks and is much more technically sophisticated than other types of digital health. These characteristics have the potential to create new risks and safety issues that need to be considered as part of implementation, which can in turn make deployment more resource and time intensive. Thirdly, safe use of Health AI systems typically requires a combination of high health and digital literacy, which can be problematic in a context where there are recognised capability gaps for both consumers and the workforce.26

Applying the CFIR to understand the factors that influenced Health AI implementation revealed the unexpected finding that external factors (those in the Outer Setting Domain of the CFIR) have a significant influence on the adoption process. It was anticipated that the innovation itself and the implementation process would have a more significant influence. This finding is important as it emphasises the extent to which influences that implementers cannot control can hinder Health AI implementation. This suggests that planning, from the outset, strategies to overcome the barriers that implementers can manage may be particularly important to mitigate the risk that manageable factors derail innovation implementation. The Health AI regulatory and governance landscape emerged as a significant external factor that could influence successful implementation. A notable finding was that many implementers had given little consideration to governance and policy, typically because their innovations were at an early stage of implementation and still being undertaken within a research context. Much focus was on data governance and regulation, with less on clinical governance. The global Health AI regulatory landscape is complex, with varied responses from regulators on regulation of disruptive Health AI innovations.27,28 There is a growing number of international frameworks to guide governance and safe use of AI in health contexts,29-31 but there seems to be a gap in policy development and implementation with those deploying Health AI.

Despite the challenges faced implementing Health AI into routine practice, applying the CFIR enabled this study to identify common facilitators across CFIR domains, including a need to: (1) be adaptable (innovation and implementation domains); (2) have access to both financial and human resources (innovation and inner setting domains); (3) be able to demonstrate benefits of the innovation (innovation and implementation process domains); and, potentially most importantly, (4) utilise people well through collaboration and fostering strong partnerships (Outer Setting, Inner Setting, Individuals and Implementation Process Domains). Finally, a key strategy for successful implementation of AI innovations into routine practice was to prospectively develop an implementation plan considering barriers and enablers from the outset.

Limitations

This study is limited as the methodology used may not have been adequate to identify all AI applications being implemented in Australian healthcare organisations. To address this limitation, a governance committee reviewed findings from the environmental scan and had opportunities to suggest AI applications that were missing from the results. The study methodology also included very specific eligibility criteria: AI innovations must have been made with a health problem in mind, and they had to be ingesting data from clinical information systems. As a result, AI innovations being used in healthcare in contexts beyond health services are not included in the study, and the challenges faced by their implementers may vary. A purposeful sample was used to recruit participants, and as such a power calculation to justify the sample size was not done. The sample size is also relatively small, limiting generalisability of findings. As noted in the methods, the researcher undertaking the interviews did not have ICT-related qualifications, which may have influenced the extent to which they explored more technical factors influencing implementation of Health AI innovations. Finally, the timing of data collection meant that the study was conducted before Generative AI tools such as ChatGPT became widely available to the general public, and the extent to which NLP innovations were being used by health care organisations may have been more widespread had the study been conducted later.

There are many opportunities for future research in this space, as there is currently a relatively small base of literature on the facilitators of widespread adoption of AI innovations in healthcare. This study illustrated that the innovation itself is inadequate as a driver of adoption by health services without quality implementation, but further research into what quality implementation looks like would be beneficial. Finally, the pace of change with AI innovation is rapid, as demonstrated by major disruptions in the AI space over the life of this project. Future researchers should explore the adoption of more recent AI innovations such as Generative AI by health services to understand how widely these tools are used and the safety and quality implications.

Conclusions

Adoption of Health AI solutions in Australian healthcare organisations appears limited, likely reflecting the lack of evidence-based interventions with established efficacy and the complexity of successfully implementing an AI innovation into health service workflows. Barriers included a lack of engagement, limited capability, and inconsistent data access processes. Facilitators for successful implementation across the CFIR domains included: (1) planning the approach to implementation from the outset, (2) investing in strong collaborations and partnerships over the life of the project, (3) obtaining buy-in for the innovation from key stakeholders by demonstrating impact, (4) ensuring sufficient resourcing for the project to support the full implementation timeframe, and (5) taking an agile and adaptable approach to both the unexpected implementation hurdles and the technology itself.

There is an urgent need for improvements in Health AI implementation strategies. Development of funding strategies that provide long term implementation approaches and focus on innovations that are considering scalability from the pre-implementation stage could significantly improve sustainable Health AI implementation. Further, successful implementation relied heavily on successful partner engagement over the lifespan of the project, so organisations have a key role in identifying and nurturing key strategic partnerships to increase the likelihood of successful Health AI implementation. Finally, AI developers and implementers should be more proactive in disseminating information on successful Health AI innovations at events and forums with high attendance from the health sector, to make successful implementations more visible, reduce replication of similar implementations and share lessons of implementation failure and success.

Supplemental Material

Supplemental material, sj-docx-1-his-10.1177_11786329251392423 and sj-docx-2-his-10.1177_11786329251392423, for Implementers Perspectives on the Routine Use of Artificial Intelligence in Health Services: A Qualitative Study Using the Consolidated Framework for Implementation Research (CFIR) by Anna Janssen, Kavisha Shah, Helena Teede and Tim Shaw in Health Services Insights

Footnotes

Acknowledgements

The authors wish to thank all participants who gave up their time to be interviewed for this study. The authors also wish to thank Anna Marinic and other members of the Monash Partners Academic Health Science Centre team who provided ongoing support for this project.

Abbreviations

AI: artificial intelligence

NLP: natural language processing

ML: machine learning

EHD: electronic health data

EMR: electronic medical records

ICT: information communication technology

Ethical Considerations

Permission to conduct this study was granted by The University of Sydney Human Research Ethics Committee: Protocol: HREC 2021/ETH00416.

Consent to Participate

Participants provided written, informed consent to participate in this study.

Consent for Publication

Participants provided consent for anonymised data to be included in this publication as part of the consent to participate in the study.

Author Contributions

Author AJ wrote the first draft of the publication. Authors AJ and KS were involved in study design and data collection. Authors HT, TS, AJ and KS were involved in study design and manuscript review. All authors have read and approved the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project is supported by the Australian Government’s Medical Research Future Fund (MRFF) as part of the Coronavirus Research Response- 2020 Rapid Response Digital Health Infrastructure through Monash Partners (ID RRDHI000027).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The dataset templates for the study are available from the corresponding author on reasonable request.

Contributions to the Literature

• Mapping and detailed description of the types of Health AI innovations being implemented into routine practice in Australian healthcare organisations.

• Demonstration of the utility of the Consolidated Framework for Implementation Research for understanding the barriers and facilitators to AI implementation in routine practice in healthcare.

• Identification of the common barriers that are faced when attempting to implement Health AI in practice in health services.

• Recommendations on the potential facilitators of Health AI implementation into routine practice in health care organisations.

Supplemental Material

Supplemental material for this article is available online.
