Abstract

This Method Note presents an integrative review of 117 Research on Evaluation (RoE) studies published in the American Journal of Evaluation (2014–2024). We combined thematic analysis with a structured quality appraisal using established critical appraisal tools. Findings show that the RoE literature focused primarily on evaluation activities and professional issues, with far fewer studies examining evaluation outcomes or contexts. Qualitative descriptive designs were most common, whereas experimental designs were rare. Overall methodological quality was moderate to high, but with recurring weaknesses, including limited theoretical grounding and reflexivity in qualitative studies, limited attention to confounding and sampling issues in quantitative studies, uneven quality between qualitative and quantitative strands in mixed-methods studies, and limited application of systematic review techniques. We discuss implications for improving RoE research, such as broadening study foci and adopting more rigorous design and reporting standards to strengthen the evidence base for evaluation practice.

Defining Research on Evaluation

Research on Evaluation (RoE) is broadly concerned with studying evaluation itself—its theories, methods, practice, and professional work—with the aim of strengthening evaluation knowledge and, in turn, improving evaluation practice (Henry & Mark, 2003; Mark, 2008). At the same time, the field has repeatedly noted that we still lack a strong, cumulative evidence base about evaluation processes and what evaluations accomplish in real-world settings, which is why calls for more RoE have been so consistent (Alkin, 2003; Henry & Mark, 2003; Mark, 2008).

A persistent challenge, however, remains definitional. Recent work shows that RoE has been defined in multiple, partially overlapping ways, and that these differences matter because they shape what gets counted, synthesized, and learned from (Arbour, 2025; Aston et al., 2025; Linnell & Stachowski, 2025). For example, Aston et al. (2025) summarize several widely used definitions over the past decade and note that the field is not unified around a single definition. Arbour (2025) similarly cautions against treating current RoE definitions as definitive and argues that excluding nonempirical work may be unnecessarily limiting depending on the purpose of the inquiry. We recognize that an exclusively empirical definition can omit conceptual and methodological contributions that shape how evaluation is studied and practiced. We nonetheless made a narrower choice here because our aim was to characterize observable research designs, methods, and appraisal indicators in published studies.

For this review, we adopted Coryn et al.'s (2016) RoE definition because it is explicitly empirical and therefore aligns with our goals: describing methodological approaches and appraising methodological quality using formal critical appraisal tools. We are therefore not attempting to resolve the broader question of what RoE should include. We acknowledge the ongoing definitional debate and return to its implications when interpreting our findings (e.g., Arbour, 2025).

Background

Although RoE scholarship has expanded, prior syntheses show an uneven distribution of topics and methodological approaches. Vallin et al. (2015) cataloged American Journal of Evaluation (AJE) RoE studies from 1998 to 2014 and noted considerable imbalance in subject focus. Coryn et al. (2017) reviewed 14 journals (2005–2014) using Henry and Mark's (2003) agenda and Mark's (2008) taxonomy and similarly found that most RoE studies were descriptive, with comparatively fewer examining evaluation outcomes or evaluation contexts. Galport and Galport (2015), analyzing a subset of Vallin et al.’s sample, documented methodological shifts but did not assess study rigor. Collectively, these reviews suggest RoE has often leaned heavily toward descriptive approaches and has given less attention to evaluation consequences and contexts relative to evaluation activities. A recent meta-RoE update (Linnell & Stachowski, 2025) continues this line of work and shows growth in RoE volume.

What has been missing across most of this mapping work is systematic attention to how well RoE studies are executed and reported. Coryn et al. (2017), for example, explicitly excluded quality assessment and expressed concern about the strength of conclusions drawn from a largely descriptive and unappraised evidence base. Brandon and Singh (2009) offered one of the few early attempts to examine methodological quality in evaluation-use studies; however, their assessment relied on a narrow set of content-validity-focused criteria and drew on a broad body of sources (including narrative reflections and case accounts), limiting its applicability to appraising the rigor of empirical RoE studies. As a result, this remains a critical gap: without systematic attention to rigor, the field risks drawing conclusions from uneven evidence (Brandon & Singh, 2009; Donaldson, 2022, 2025; Villalobos et al., 2025).

Other disciplines have moved toward clearer standards for transparent reporting and structured appraisal of bias (e.g., Preferred Reporting Items for Systematic Reviews and Meta-Analyses [PRISMA]-style guidance and design-appropriate risk-of-bias tools). Similar attention is needed in RoE. This need has also been reiterated in recent RoE-focused scholarship, including a 2025 New Directions for Evaluation special issue that highlights ongoing definitional challenges and calls for clearer standards to support higher-quality RoE and more accurate meta-RoE (Aston et al., 2025).

This integrative review addresses these needs by examining what topics recent RoE studies have explored and evaluating how well those studies were conducted. We focus on RoE articles published in AJE from 2014 to 2024, asking: (1) What themes and subjects do they investigate, and how have these trends shifted over time? (2) What research methods and designs are most commonly used? (3) What is the methodological quality of these studies, as judged by established appraisal criteria?

Methods

Sample Selection and Inclusion Criteria

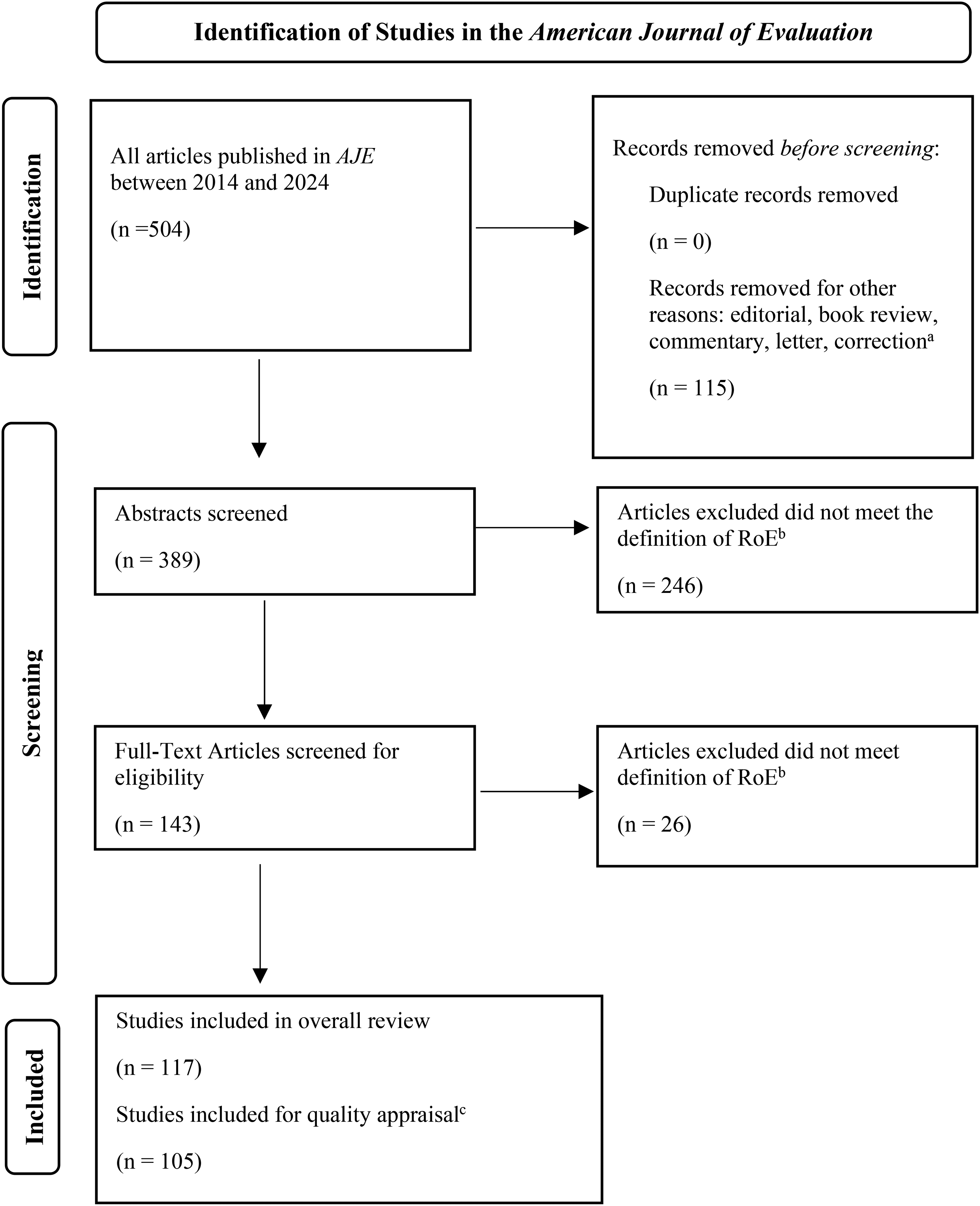

AJE was selected because of its central role in RoE scholarship and to maintain continuity with prior reviews (e.g., Coryn et al., 2017; Galport & Galport, 2015; Vallin et al., 2015). We began with a census of all items published in AJE between 2014 and 2024 (n = 504). Records categorized by the journal as non-article content (e.g., editorials, book reviews, commentaries, letters, and corrections) were removed before screening, leaving 389 abstracts for review (Figure 1).

Figure 1. Selection process for identifying RoE studies in AJE. Note. (a) Records categorized by AJE as editorials, book reviews, commentaries, letters, or corrections were excluded prior to screening. (b) Studies excluded are those that do not meet the operational definition for empirical RoE. Narrative reflections and case studies without an explicit methods section or systematic data collection were also excluded. (c) Measurement and tool validation studies and simulations were excluded from quality appraisal as suitable critical appraisal tools were not available for those types of studies. AJE = American Journal of Evaluation; RoE = Research on Evaluation.

Given ongoing definitional variation in the field, we adopted Coryn et al.'s (2016) definition of RoE as “any purposeful, systematic, empirical inquiry intended to test existing knowledge, contribute to existing knowledge, or generate new knowledge related to some aspect of evaluation processes or products, or evaluation theories, methods, or practices” (p. 161). This definition aligned with our focus on methodological quality appraisal and guided our screening decisions.

Articles were included if they (1) were published in AJE between January 1, 2014, and December 31, 2024; (2) met this definition of RoE; and (3) reported a defined dataset and a systematic method for data collection and analysis. This process yielded 117 included studies. Of these, 105 were eligible for methodological quality appraisal; 12 were retained in the review but excluded from appraisal because no suitable checklist was available for those designs (e.g., simulations and tool validation studies). Figure 1 summarizes the selection process, and the full list of included studies is provided in the Supplemental material.

To apply the definition consistently, we used decision rules refined through pilot coding. Studies were classified as empirical RoE when they presented a clearly defined qualitative, quantitative, or mixed dataset and described a systematic analytic or data collection procedure. Narrative reflections and illustrative case accounts without those features were excluded. Case studies were included when authors specified case selection, data sources, and an analytic approach. Embedded studies were included only when they were framed as producing transferable knowledge about evaluation theory or practice rather than assessing program outcomes alone. Tool development and validation studies were included in the review but not in the quality appraisal.

Coding Framework and Thematic Classification

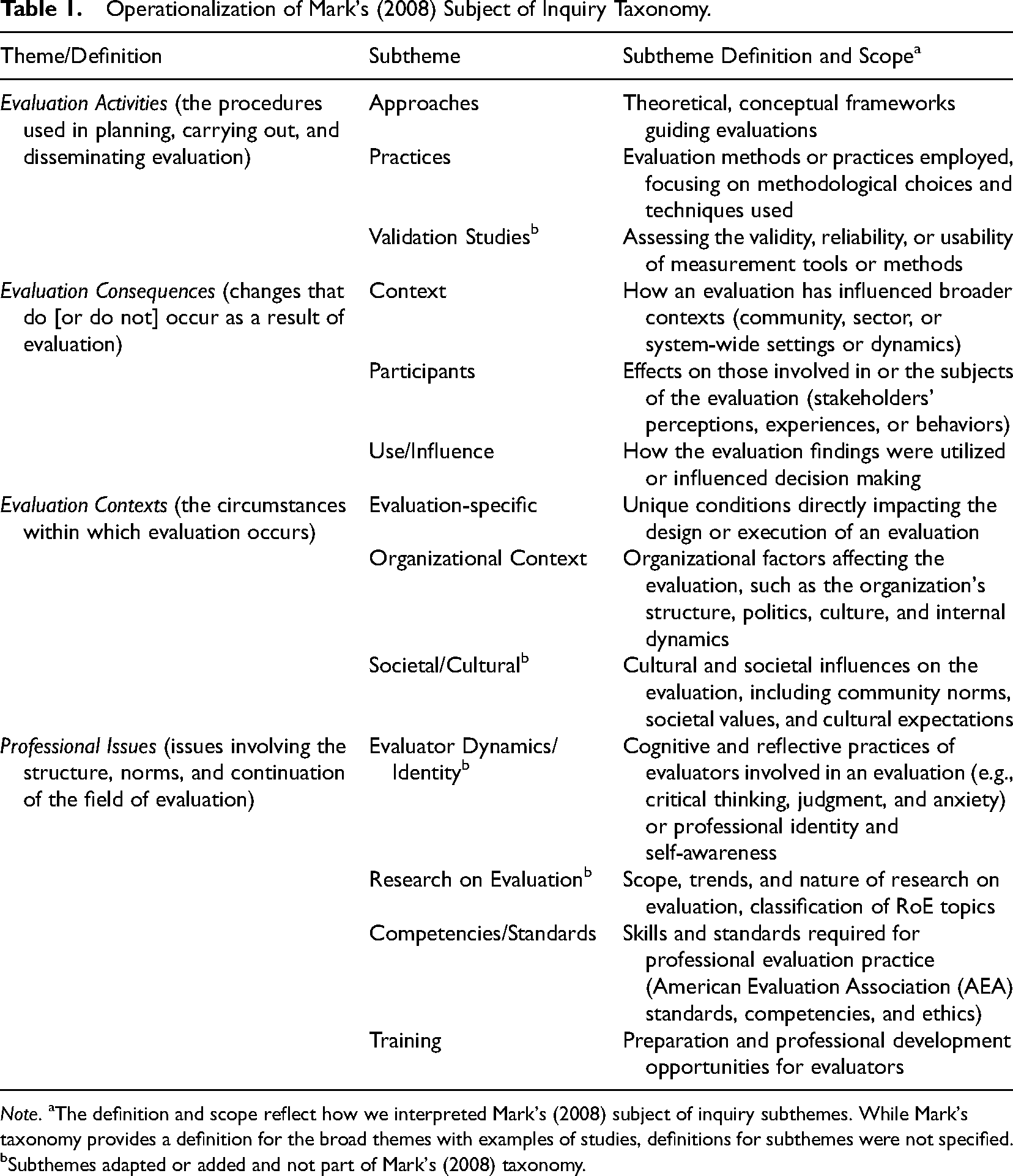

Lessons learned from a pilot study on a subset of this sample (2014–2021; 74 studies) informed the coding framework and decision rules. In the pilot, the lead author and a senior RoE researcher independently coded small design-diverse sets of articles to operationalize key variables and refine decision rules, resolving discrepancies through consensus. All 117 studies were thematically classified using Mark's (2008) subjects-of-inquiry taxonomy. Because our aim was not only to describe study focus but also to examine research design and quality, we relied on the subjects-of-inquiry taxonomy and did not use Mark's (2008) modes-of-inquiry taxonomy, which did not align with the study design distinctions needed for the critical appraisal tools. Using the subjects-of-inquiry taxonomy, we assigned each article to one primary theme and subtheme (Table 1). Mark (2008) provides illustrative subthemes; we adopted these and added several additional subthemes to better capture recurrent topics in our sample and reduce reliance on “other” categories noted by Coryn et al. (2017). Although theme categories were not mutually exclusive, each study was coded to a single theme and subtheme that best captured its primary objective or main research question; overlaps were addressed during pilot consensus discussions. We acknowledge that assigning a single category may underrepresent overlap among subjects of inquiry in the RoE literature, an issue also highlighted by Coryn et al. (2017).

Disciplinary domains (e.g., education and healthcare) were coded using adapted categories from Vallin et al. (2015) and Brandon and Singh (2009). Each study was also classified by methodological approach (qualitative, quantitative, mixed-methods, or review), and we noted whether primary or secondary data were used. For analyses of publication trends, publication year was coded as the year an article was first published online by the journal. As a result, this analytic year may differ from the final issue year shown in the reference list for a small number of articles.

Study Design Classification and Methodological Quality Appraisal

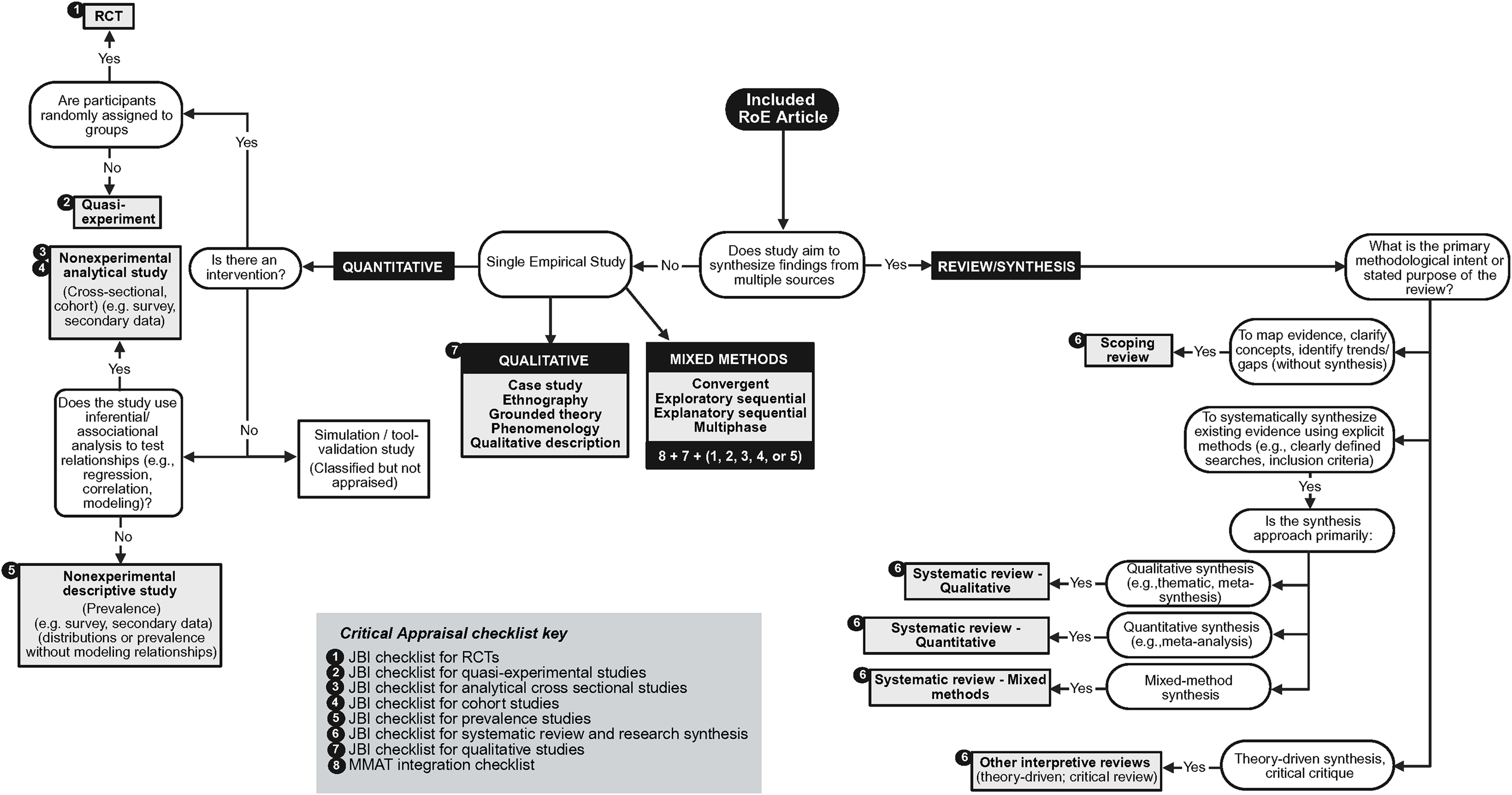

We used a decision tree (Figure 2) to guide classification of study designs and selection of appraisal checklists. Studies were classified using typologies adapted from the Joanna Briggs Institute (JBI) levels of evidence framework because JBI offers design-specific guidance and appraisal tools that align with the wide range of qualitative, quantitative, and review designs represented in this RoE sample (Aromataris et al., 2024). Studies were coded as qualitative, quantitative, mixed-methods, or review based on methodology and stated intent. Qualitative studies without a specific label were coded as qualitative descriptive when they reported qualitative data without an explicit theoretical or methodological framework. Quantitative studies were grouped as experimental (e.g., randomized controlled trials and quasi-experiments) or nonexperimental; nonexperimental studies were further classified as analytical (e.g., regression or correlation models) or descriptive (e.g., distributions or prevalence without modeling). Review and synthesis studies were assigned based on the review's primary purpose and reported procedures, given inconsistent and evolving terminology for reviews (Grant & Booth, 2009). Using JBI guidance, some articles described by their authors as “systematic reviews” were reclassified as scoping reviews because their main aim was to map a topic area (e.g., describe trends, summarize what has been studied, and identify gaps) rather than to synthesize findings in a way that supports conclusions about effectiveness or outcomes. For scoping reviews, JBI does not require critical appraisal of included studies. For that reason, any checklist items related to appraising included evidence were marked N/A for scoping reviews and were not counted in the scoring denominator. This was done so that scoping reviews were not penalized for omitting a step that is not expected for that design. 
In contrast, systematic reviews that synthesized findings were expected to report critical appraisal of included studies.
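The classification rules described above can be illustrated with a short Python sketch. This is not the authors' decision tree (Figure 2): the function names, the boolean flags, and the `primary_aim` values are hypothetical stand-ins for coder judgments that, in the review, required reading each article.

```python
def classify_quantitative(is_rct_or_quasi_experiment: bool,
                          uses_statistical_modeling: bool) -> str:
    """Group a quantitative study using the categories named in the text.

    Both flags are hypothetical coder judgments, not fields from the review.
    """
    if is_rct_or_quasi_experiment:
        # e.g., randomized controlled trials and quasi-experiments
        return "experimental"
    if uses_statistical_modeling:
        # e.g., regression or correlation models
        return "nonexperimental analytical"
    # e.g., distributions or prevalence without modeling
    return "nonexperimental descriptive"


def classify_review(primary_aim: str) -> str:
    """Assign a review type from its primary purpose, not the author's label.

    `primary_aim` is a hypothetical code: "map" (describe trends, summarize
    what has been studied, identify gaps) or "synthesize" (combine findings
    to support conclusions about effectiveness or outcomes).
    """
    if primary_aim == "map":
        return "scoping review"
    if primary_aim == "synthesize":
        return "systematic review"
    return "other review"


# An article labeled "systematic review" by its authors but aimed at mapping
# a topic area would be coded as a scoping review under these rules.
print(classify_review("map"))
print(classify_quantitative(False, True))
```

Keying the review type to purpose rather than to the author's label mirrors the reclassification described above. A real coding protocol would also record the text evidence behind each judgment.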

Figure 2. Flowchart for Study Design Classification and Critical Appraisal Checklist Assignment. Note. Developed by the authors based on Hong et al.'s (2018) study-design selection algorithm. Checklist items noted as not applicable (N/A) to a given design were excluded from scoring denominators. A link to each critical appraisal checklist is available in Appendix A.

To assess methodological quality across the range of designs represented in this RoE literature, we used applicable JBI critical appraisal checklists and the Mixed Methods Appraisal Tool (MMAT) integration criteria. We used the MMAT because, unlike design-specific checklists alone, it explicitly appraises the integration of qualitative and quantitative components in mixed-methods studies. Mixed-methods studies were appraised using the relevant JBI checklist(s) for the qualitative strand, the relevant JBI checklist for the quantitative strand, and the MMAT integration criteria. We calculated a composite mixed-methods score as the average of the three component scores (equal weights) and then applied a cap based on the MMAT principle that overall quality cannot exceed the weakest strand (Hong et al., 2018). Used together, these tools provided a consistent framework for appraising the RoE studies in this review.
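The composite calculation for mixed-methods studies can be sketched as follows. The text does not specify exactly how the cap was applied, so this sketch makes an explicit assumption: the equal-weight mean of the three component scores is capped at the lower of the qualitative and quantitative strand scores. The function name and the 0–100 scale are illustrative choices.

```python
def composite_mixed_methods_score(qual: float, quant: float,
                                  integration: float) -> float:
    """Equal-weight mean of three component scores (0-100), then capped.

    ASSUMPTION: the cap is the weaker of the two strands (qualitative and
    quantitative). The source states only that overall quality cannot
    exceed the weakest strand; the exact rule used may differ.
    """
    mean_score = (qual + quant + integration) / 3
    weakest_strand = min(qual, quant)
    return min(mean_score, weakest_strand)


# A strong qualitative strand and strong integration cannot lift the
# composite above a weaker quantitative strand.
print(composite_mixed_methods_score(90, 60, 90))  # mean is 80, capped at 60
# When integration is the weak point, the plain mean already sits below the cap.
print(composite_mixed_methods_score(80, 80, 20))  # mean is 60, cap of 80 not binding
```

Under this reading, the cap binds only when one strand is markedly weaker than the other two components; otherwise the composite is the plain average.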

The 105 eligible studies were appraised using the applicable JBI checklist (8–13 items; “yes” = 1, “no/unclear” = 0; “not applicable” excluded) and, for mixed-methods studies, the MMAT integration criteria (5 items; “yes” = 1, “no/can’t tell” = 0). For each study, we calculated a total score as the percentage of applicable criteria rated “yes.” Because JBI does not prescribe quality cutoffs, we drew on prior systematic reviews that used cutoffs ranging from 70% to 80% to indicate high quality (e.g., Akl et al., 2021; Varmaghani et al., 2024). We therefore classified overall quality as high (≥80% of criteria met), moderate (50–79%), or low (<50%).
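The scoring rule above can be sketched in a few lines of Python. The function names and the rating strings are illustrative assumptions, and the example ratings are invented for demonstration rather than taken from any appraised study.

```python
def appraisal_score(ratings: list[str]) -> float:
    """Percentage of applicable checklist items rated "yes".

    Items rated "n/a" are dropped from the denominator; every other
    non-"yes" rating ("no", "unclear", "can't tell") counts as 0.
    """
    normalized = [r.strip().lower() for r in ratings]
    applicable = [r for r in normalized if r != "n/a"]
    if not applicable:
        raise ValueError("no applicable checklist items to score")
    met = sum(1 for r in applicable if r == "yes")
    return 100.0 * met / len(applicable)


def quality_band(score: float) -> str:
    """Map a percentage score to the review's three quality bands."""
    if score >= 80:
        return "high"
    if score >= 50:
        return "moderate"
    return "low"


# Hypothetical scoping review: two items about appraising included evidence
# are marked N/A, so the denominator is 4 rather than 6.
ratings = ["yes", "yes", "yes", "unclear", "n/a", "n/a"]
score = appraisal_score(ratings)
print(score, quality_band(score))  # 75.0 moderate
```

Excluding N/A items from the denominator is what keeps a design from being penalized for omitting a step it is not expected to report.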

Although the JBI and MMAT checklists provided a clear structure, several criteria still required judgment (e.g., whether components were “adequately described” or whether there was “congruity” across the question, methodology, and analytic approach). To support consistency, we relied on an appraisal guide that required a brief written rationale tied to text evidence and included design-specific examples. For example, for the qualitative checklist item assessing congruity between the research methodology and the research question or objectives, we coded “Yes” when the question and approach were clearly aligned (e.g., an ethnographic question paired with observation or interviews); “No” when the stated approach and the question and methods were misaligned (e.g., a “grounded theory” claim without theory-building procedures); and “Unclear” when methodological detail was insufficient to determine alignment. For review studies, we judged search strategies as “appropriate” only when databases, search terms, and inclusion criteria were reported in sufficient detail to be reproducible. Consistent with our decision rules, we used N/A only when a criterion was not applicable.

Data Synthesis and Consistency Checks

The lead author conducted data extraction and scoring for the full sample using the finalized decision rules and design-specific appraisal guidance. To support intra-rater consistency over the course of coding, the lead author periodically rechecked earlier coded articles at multiple points, with emphasis on borderline classifications and outliers, and verified decisions against the written rules and checklist instructions. Unresolved questions were discussed with the senior RoE researcher, and resulting clarifications were applied consistently. Findings were summarized using descriptive statistics. Frequencies and percentages were used to describe thematic categories, study designs, and data sources, and means and standard deviations were used to summarize critical appraisal scores. Appraisal findings were interpreted descriptively, with attention to patterns that recurred within study designs. Figures were created using Microsoft Excel and Python, with Python figures produced using matplotlib and seaborn (Hunter, 2007; Waskom, 2021).
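The descriptive summaries mentioned above (frequencies, percentages, means, and standard deviations) can be sketched with the Python standard library. The labels and scores below are made-up illustrations, not data from the review, and using the sample standard deviation is an assumption because the text does not say which form was reported.

```python
from collections import Counter
from statistics import mean, stdev


def frequency_table(labels: list[str]) -> dict[str, tuple[int, int]]:
    """Return {label: (count, percentage rounded to a whole number)}."""
    counts = Counter(labels)
    total = len(labels)
    return {label: (n, round(100 * n / total))
            for label, n in counts.most_common()}


def summarize_scores(scores: list[float]) -> tuple[float, float]:
    """Return (mean, sample standard deviation) of appraisal scores."""
    return mean(scores), stdev(scores)


# Hypothetical coded data, for illustration only.
approaches = ["qualitative", "qualitative", "qualitative", "review"]
print(frequency_table(approaches))
print(summarize_scores([70, 80, 90]))
```

Because percentages are rounded to whole numbers, column totals in such a table may not sum to exactly 100%.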

Results

Thematic Trends

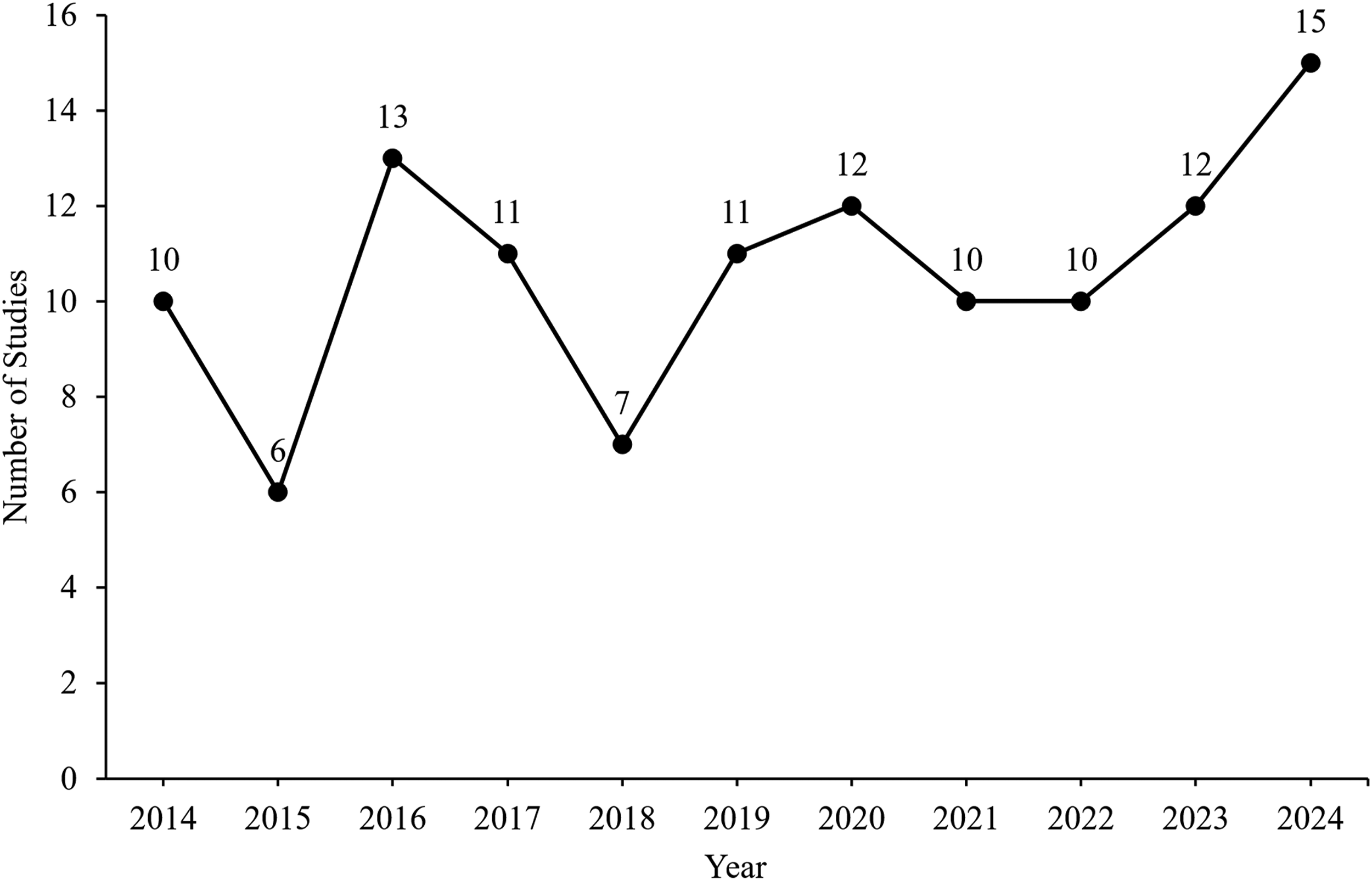

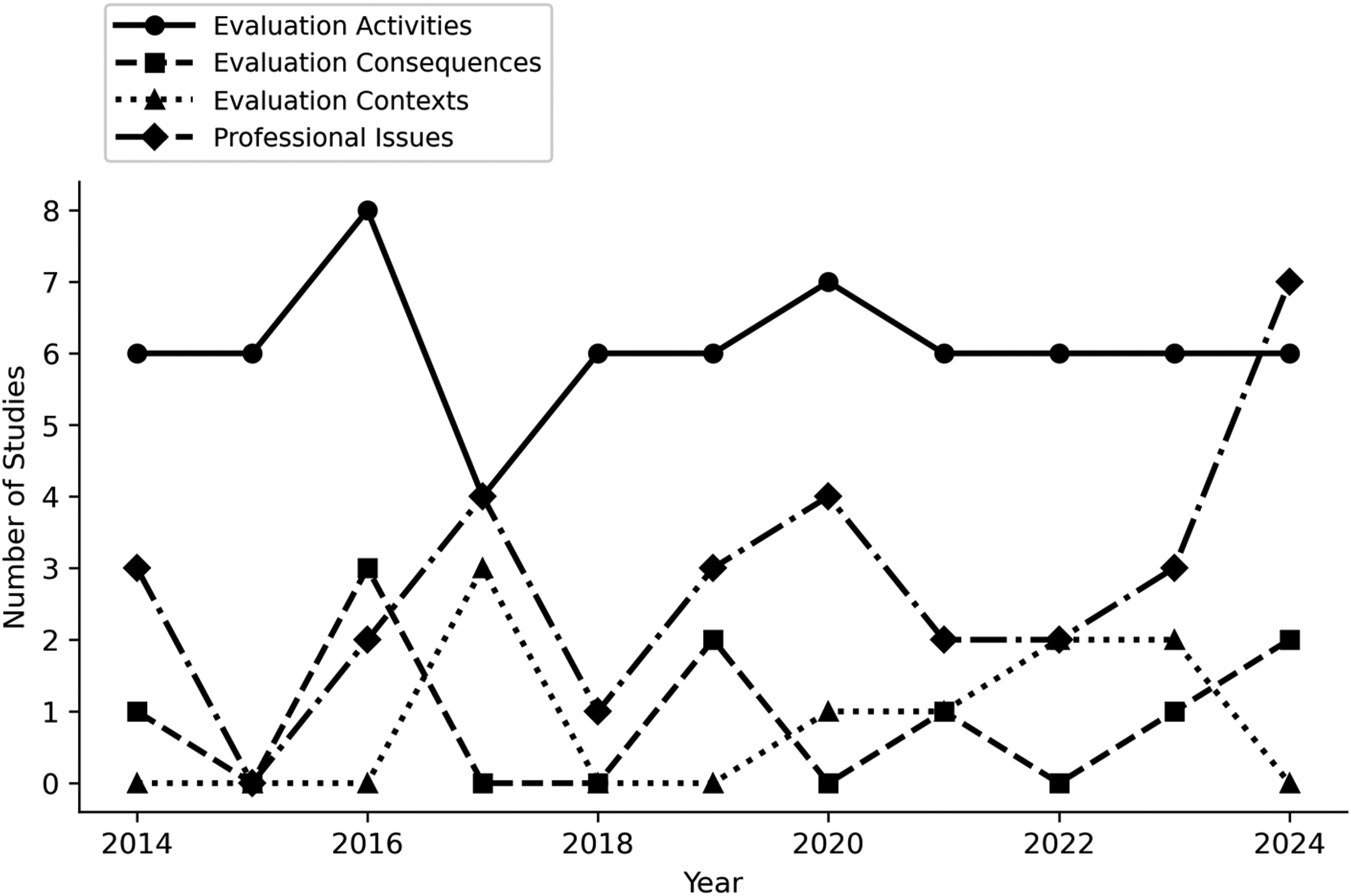

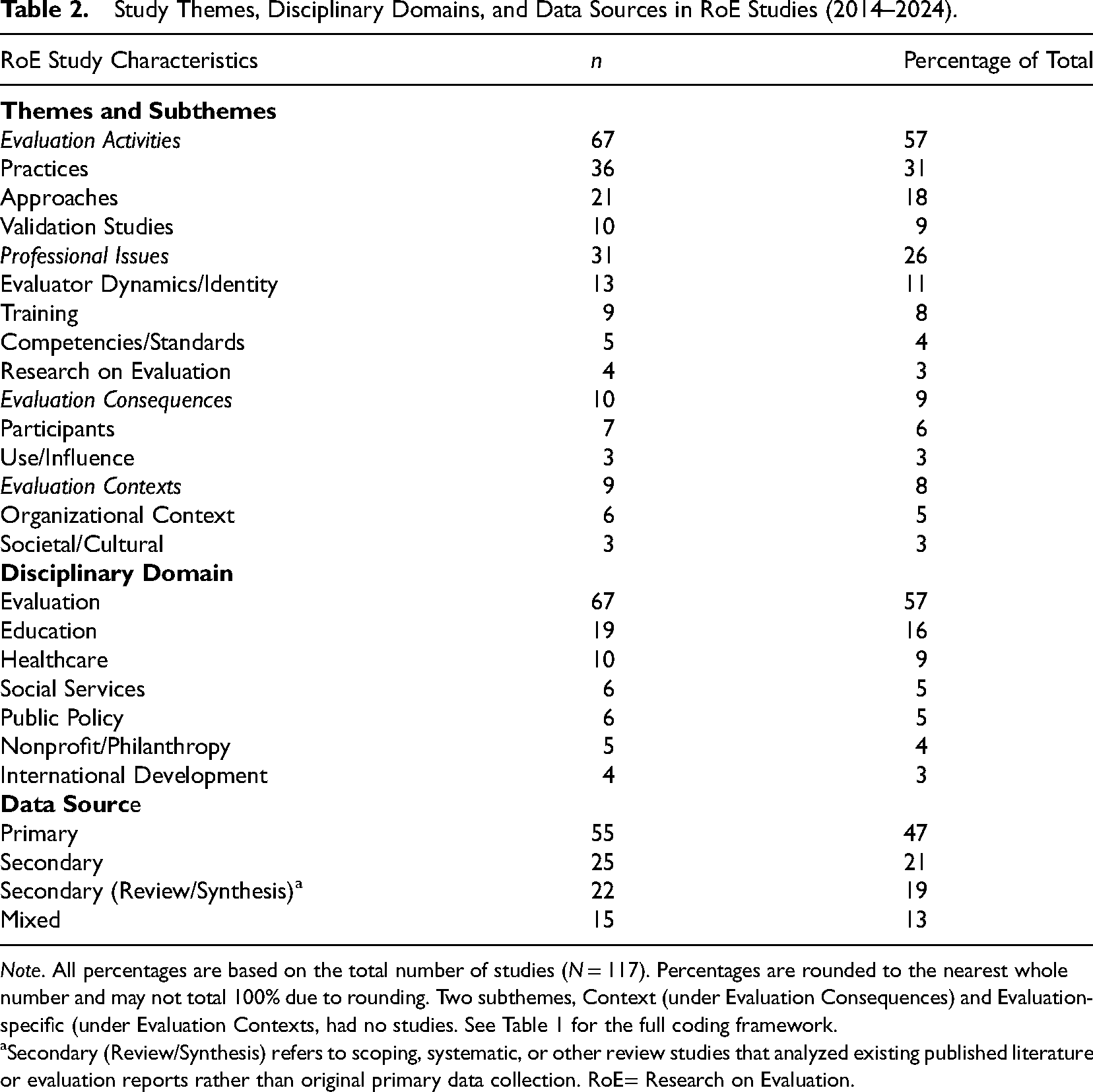

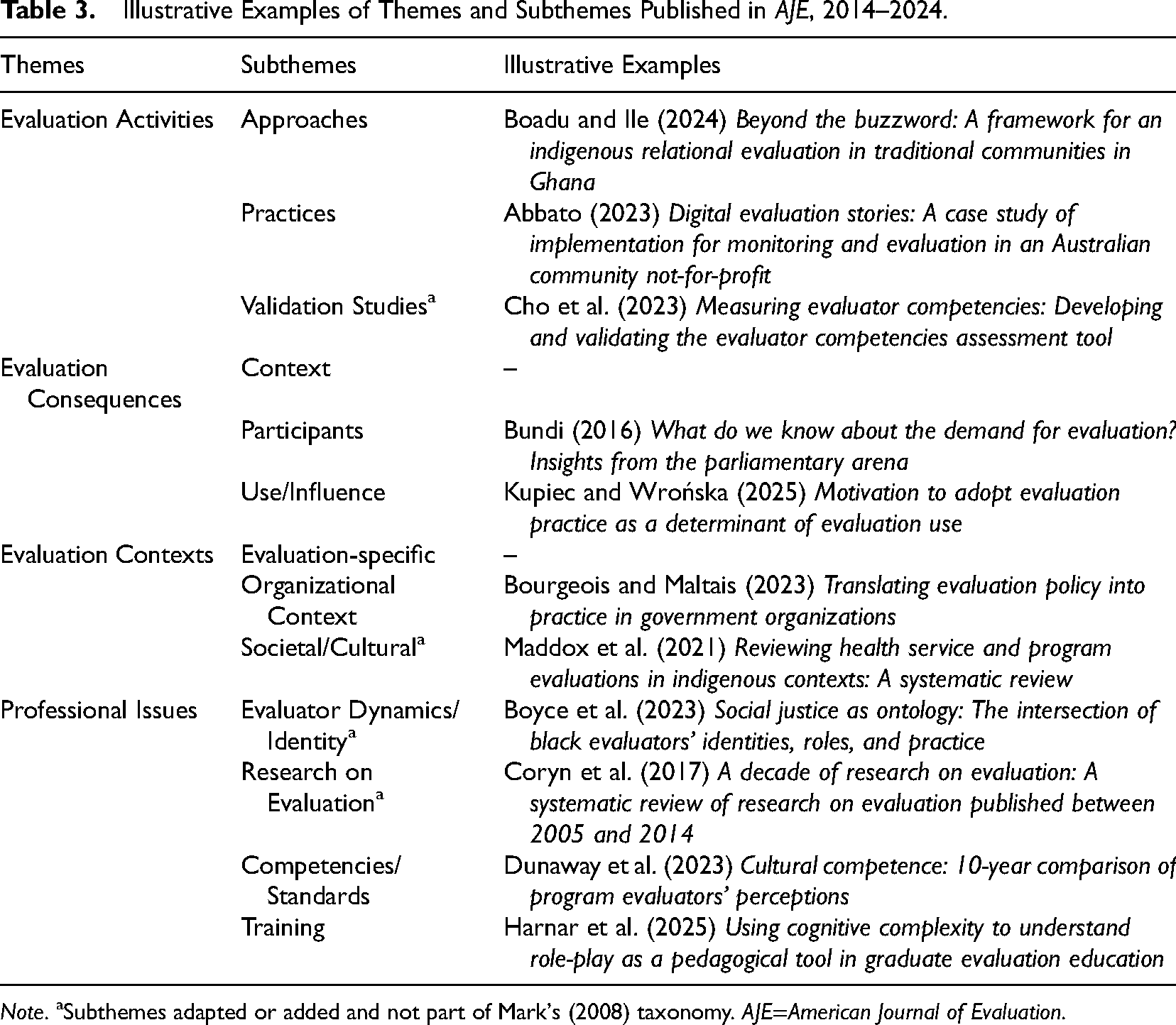

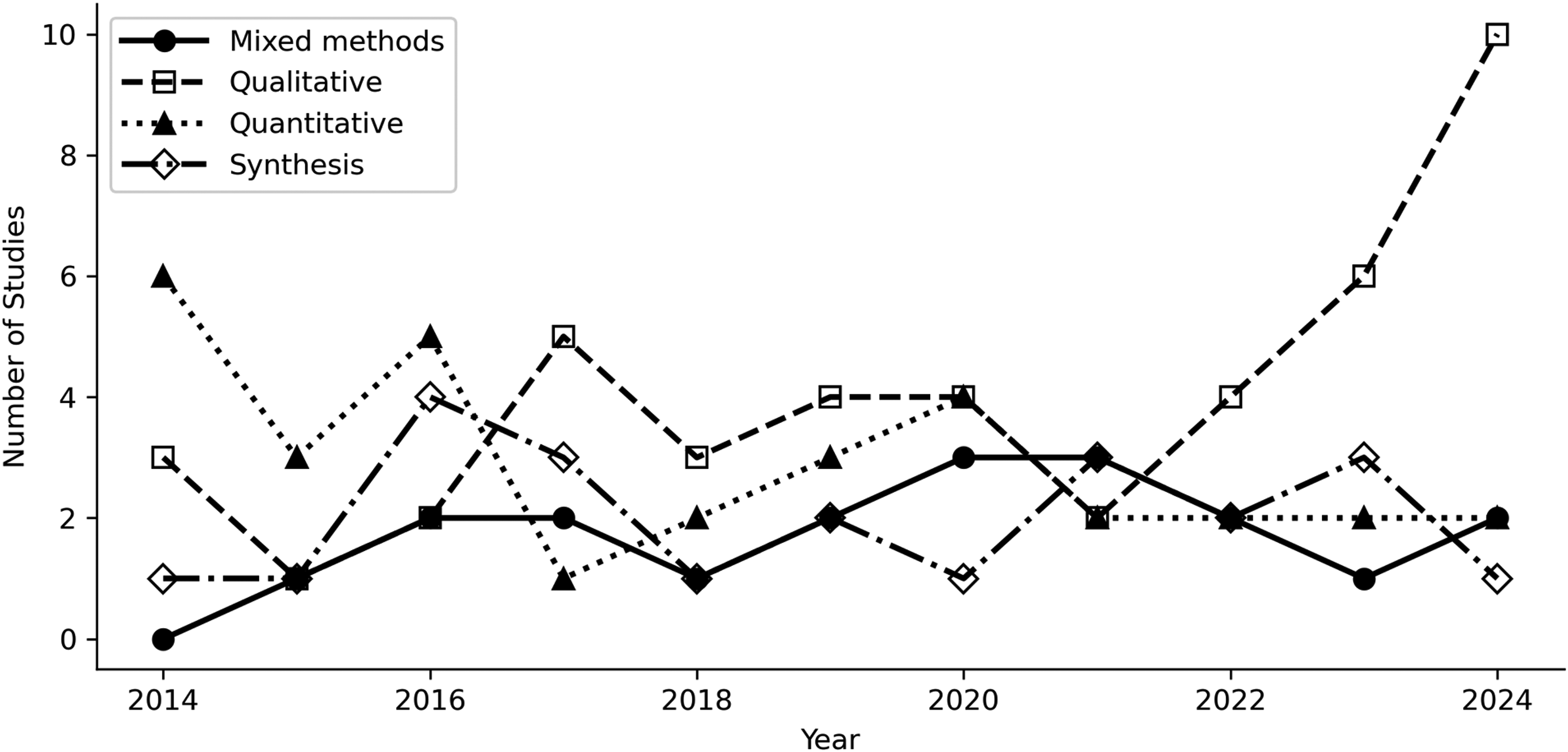

From 2014 to 2024, RoE publication counts fluctuated across years rather than showing a uniform upward trend, although RoE remained a visible and recurring area of inquiry in AJE (Figure 3). The majority of the 117 studies focused on Evaluation Activities (procedures and methods of evaluation, about 57% of studies) or Professional Issues (evaluators’ roles, competencies, or the profession itself, about 26%). Within Evaluation Activities, most studies examined practices and overarching approaches (31% and 18%), with relatively few tool validation studies (9%). Within Professional Issues, studies centered around evaluator dynamics and identity and training (11% and 8%), with fewer studies examining competencies or standards or research that mapped trends and scope of RoE. In contrast, only a small fraction examined Evaluation Consequences (e.g., use or influence of evaluation findings, 9%) or Evaluation Contexts (how organizational or social context affects evaluation, 8%) (Figure 4). These findings indicate that RoE in the past decade has continued to emphasize how evaluations are done and the evaluator community, while questions about the outcomes and broader impact of evaluation remain underexplored (see Tables 2 and 3 for the distribution of themes and subthemes and illustrative examples).

Figure 3. Publication trend over time (N = 117).

Figure 4. Evaluation themes over time (N = 117).

Table 1. Operationalization of Mark's (2008) Subject of Inquiry Taxonomy.

Note. (a) The definition and scope reflect how we interpreted Mark's (2008) subject of inquiry subthemes. While Mark's taxonomy provides a definition for the broad themes with examples of studies, definitions for subthemes were not specified.

Subthemes adapted or added and not part of Mark's (2008) taxonomy.

Table 2. Study Themes, Disciplinary Domains, and Data Sources in RoE Studies (2014–2024).

Note. All percentages are based on the total number of studies (N = 117). Percentages are rounded to the nearest whole number and may not total 100% due to rounding. Two subthemes, Context (under Evaluation Consequences) and Evaluation-specific (under Evaluation Contexts), had no studies. See Table 1 for the full coding framework.

Secondary (Review/Synthesis) refers to scoping, systematic, or other review studies that analyzed existing published literature or evaluation reports rather than original primary data collection. RoE = Research on Evaluation.

Table 3. Illustrative Examples of Themes and Subthemes Published in AJE, 2014–2024.

Note. (a) Subthemes adapted or added and not part of Mark's (2008) taxonomy. AJE = American Journal of Evaluation.

Disciplinary Domains

More than half of the RoE studies fell under the disciplinary domain of evaluation (57%). Studies were coded into this category when they examined evaluation in general rather than in a specific sector. Education (16%) and healthcare (9%) were the most common applied settings, while social services, public policy, nonprofit or philanthropy, and international development appeared less frequently (3%–5% each).

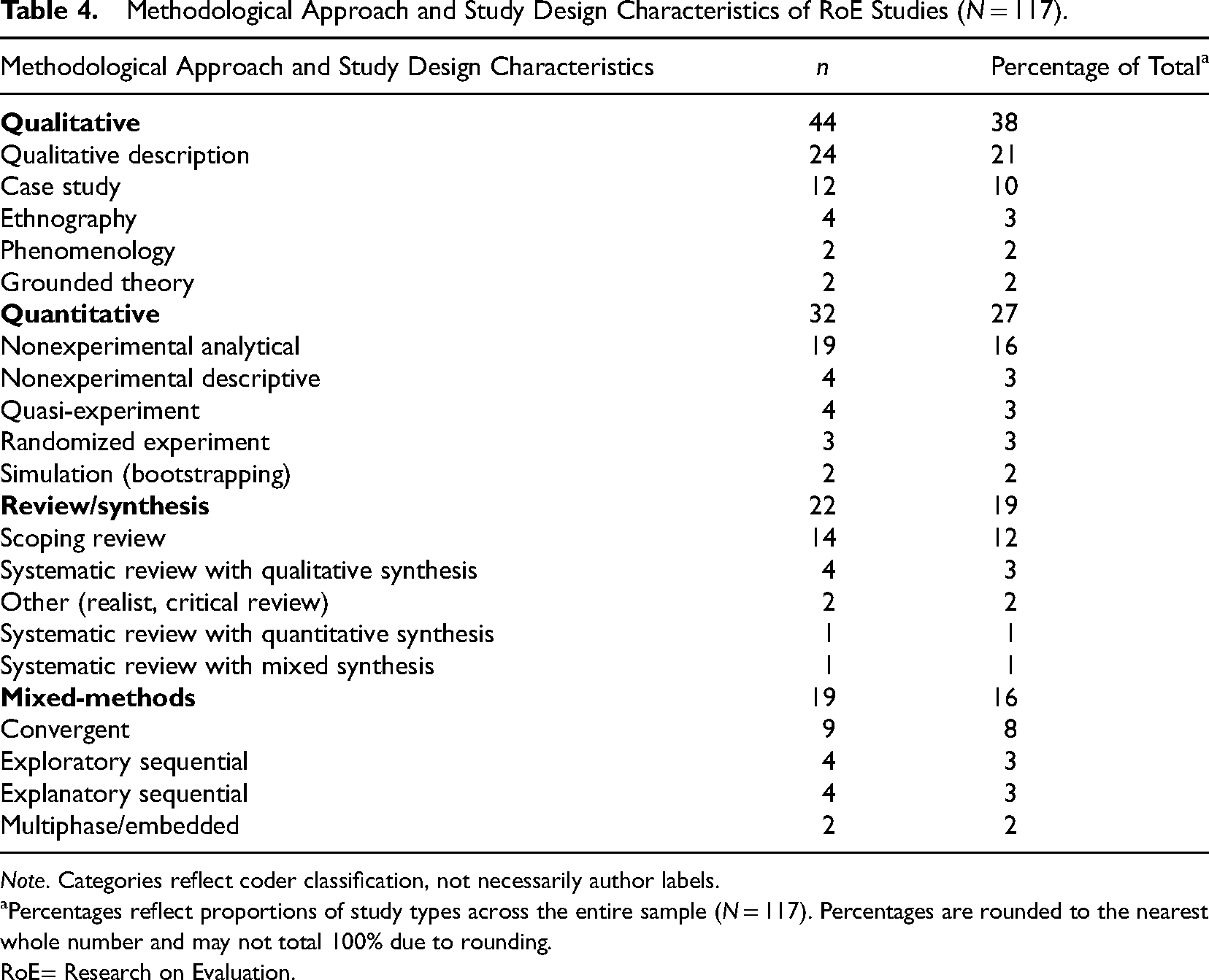

Methodological Approaches

Among the 117 RoE studies, qualitative methods were the most prevalent. Purely qualitative studies accounted for 38% of the sample, with simple qualitative descriptive designs being especially common. Quantitative studies comprised about 27%, most of which were nonexperimental surveys or correlational studies (analytical cross-sectional designs); only a handful of experimental or quasi-experimental studies (6% combined) were published in the entire period. Mixed-methods studies represented 16% of the sample, typically integrating a qualitative component with a quantitative survey. Review studies made up 19% and were usually scoping or mapping reviews; very few performed formal meta-analysis or meta-evaluation (see Figure 5). Fewer than half of the RoE studies relied solely on newly collected primary data (47%); the remaining 53% drew wholly or partly on secondary sources. Secondary use took three main forms: reanalysis of existing datasets such as surveys or administrative records (21%), use of secondary synthesis data from scoping or systematic reviews (19%), and mixed designs that combined primary and secondary data (13%). Qualitative and mixed-methods studies were more likely to collect primary data, whereas quantitative studies more often analyzed existing datasets. Taken together, this pattern suggests that RoE has relied more on available information than on generating new data to study evaluation practice (see Tables 2 and 4).

Figure 5. Methodological approaches over time (N = 117).

Table 4. Methodological Approach and Study Design Characteristics of RoE Studies (N = 117).

Note. Categories reflect coder classification, not necessarily author labels.

Percentages reflect proportions of study types across the entire sample (N = 117). Percentages are rounded to the nearest whole number and may not total 100% due to rounding.

RoE = Research on Evaluation.

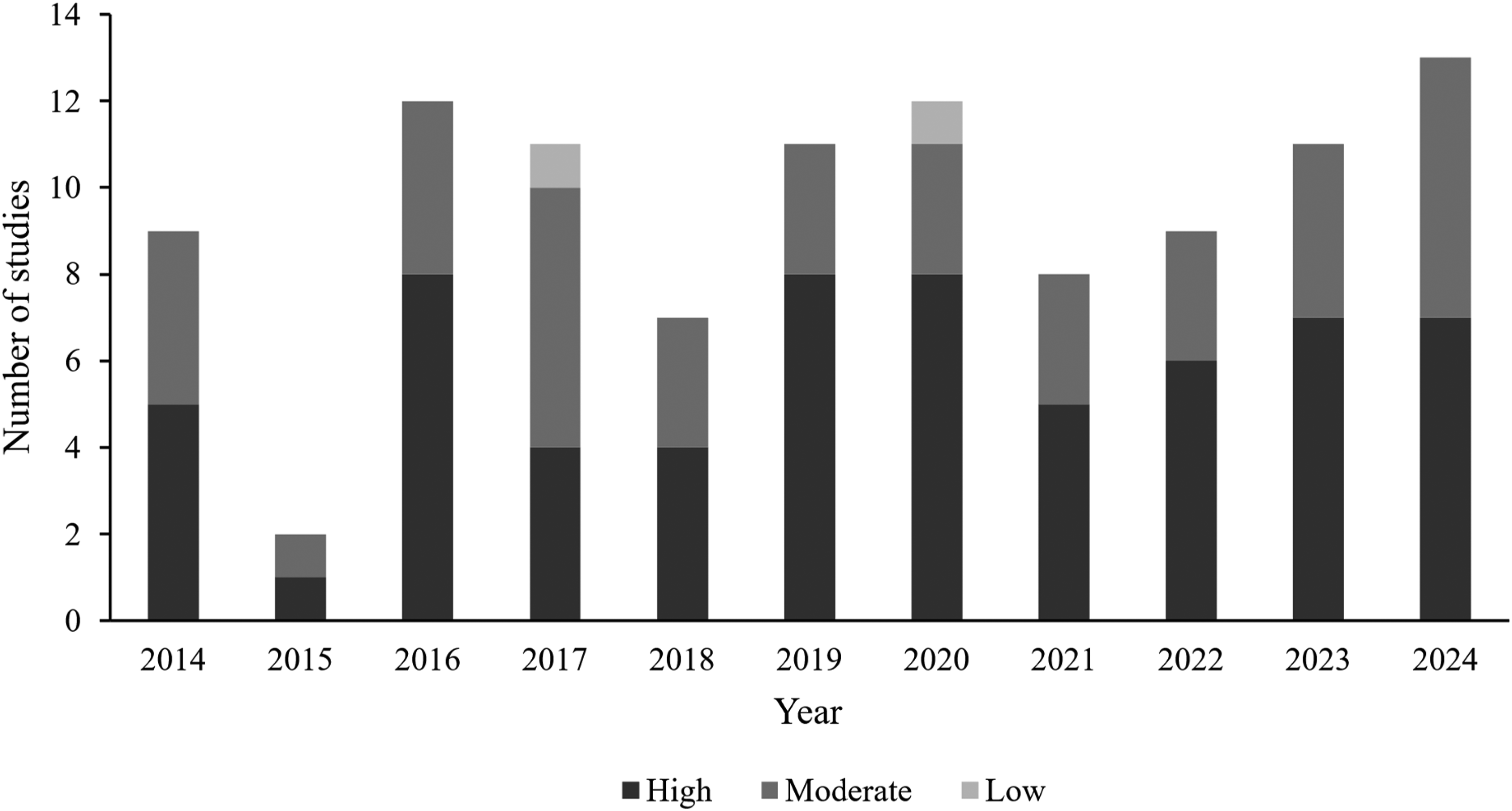

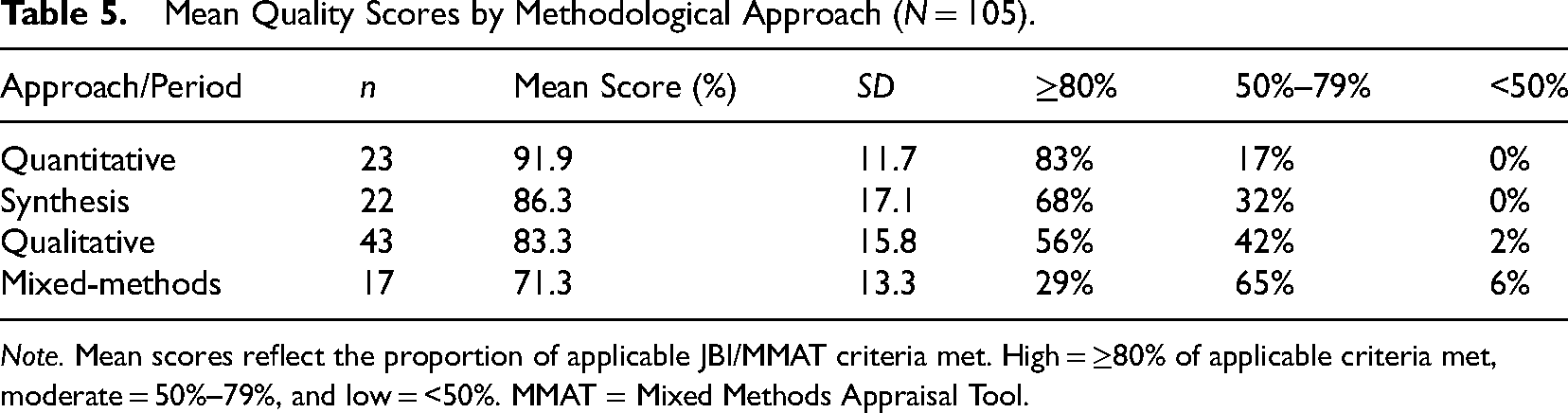

Methodological Quality

Overall, the quality of RoE studies was moderate to high. Of the 105 appraised studies, 60% met at least 80% of the appraisal criteria (high quality), and 38% were in the 50% to 79% range (moderate quality). Only 2% fell below 50%. Descriptively, appraisal scores were higher for studies published from 2018 to 2024 than for those published from 2014 to 2017, suggesting methodological quality in RoE studies may have improved somewhat over time (see Table 5 and Figure 6). However, our appraisal identified several recurring methodological weaknesses across studies. Many qualitative studies did not explicitly state their theoretical or epistemological framework and lacked reflexivity (e.g., no discussion of the researcher's perspective or bias). Common issues in quantitative studies included insufficient information on how confounding variables were addressed and a lack of justification for sample size or discussion of response bias. Mixed-methods studies often showed imbalance in quality between the qualitative and quantitative components (the qualitative strand was frequently weaker), and integration of findings was sometimes superficial. Most of the 22 review studies were classified as scoping reviews and other interpretive reviews, for which formal critical appraisal of included studies is not expected. Of the six studies classified as systematic reviews, where critical appraisal is a standard expectation, only two reported appraising the quality of included studies. Notably, two scoping reviews conducted appraisal, although not required to do so. Overall, formal appraisal of included evidence remained uncommon across RoE syntheses.

Figure 6. Quality rating over time (N = 105).

Table 5. Mean Quality Scores by Methodological Approach (N = 105).

Note. Mean scores reflect the proportion of applicable JBI/MMAT criteria met. High = ≥80% of applicable criteria met, moderate = 50%–79%, and low = <50%. MMAT = Mixed Methods Appraisal Tool.

Discussion

Our review provides an updated picture of RoE in AJE over the past decade and reveals both areas of progress and areas for improvement. On the one hand, RoE scholarship maintained a visible presence in AJE over the review period, reflecting continued attention to building a more evidence-based understanding of evaluation. This pattern aligns with recent meta-RoE work showing a marked increase in RoE across evaluation journals (Linnell & Stachowski, 2025). At the same time, recent RoE scholarship is a useful reminder that these counts depend on how RoE is defined and how borderline cases are handled (Arbour, 2025; Aston et al., 2025). For this review, we used Coryn et al.'s (2016) empirically anchored definition because our goals included classifying study designs and appraising methodological quality, which required included studies to present a defined dataset and a systematic method. This choice likely made our screening more conservative and may help explain why our AJE-only count for overlapping years is lower than that reported in a recent meta-RoE synthesis (Linnell & Stachowski, 2025). Linnell and Stachowski (2025) noted that their operationalization may have differed from Coryn et al. (2017) in applying that approach to a different body of studies, which further underscores how definitional and screening decisions can affect what gets counted over time. Against that backdrop, differences between our AJE-only RoE counts and those reported in broader meta-RoE reviews are not surprising, especially for borderline articles in which methods are not reported in sufficient detail to support design classification or appraisal.

We found that RoE studies continue to concentrate on how evaluations are conducted and on the evaluation profession itself, mirroring earlier findings (Coryn et al., 2017). This pattern is also consistent with the recent meta-RoE update by Linnell and Stachowski (2025), in which most published RoE remained descriptive and centered on evaluation activities. The persistent underrepresentation of studies on evaluation outcomes and context suggests that fundamental questions about the effects of evaluation or the influence of context remain insufficiently addressed. This imbalance echoes long-standing concerns that the evaluation field lacks evidence of its impact (Henry & Mark, 2003), leaving gaps in our collective understanding of what evaluations accomplish and under what conditions.

On the other hand, we noted some positive developments. A greater proportion of recent RoE studies are “evaluation-general” (not tied to specific sectors). Some recent studies have begun to tackle contemporary issues such as racial equity, social justice, and power dynamics in evaluation (e.g., Boyce et al., 2023), and others have proposed principles for centering equity in evaluation criteria (Teasdale et al., 2025). These contributions suggest that RoE is beginning to engage with the broader sociopolitical contexts that shape evaluation work. Although these studies remain a minority, they reflect an important evolution in the field's priorities.

Methodologically, our analysis confirms that RoE has been dominated by descriptive and nonexperimental research designs. Qualitative and cross-sectional studies are prevalent, whereas experimental approaches remain rare. This may reflect the complex and context-dependent nature of evaluation practice, but it also points to missed opportunities for methodological innovation. There has been a slight shift toward more sophisticated quantitative analyses (e.g., regression-based studies) compared to earlier decades, but experimental and longitudinal designs are still scarce. The heavy reliance on secondary data is a practical choice but underscores the importance of improving the quality and accessibility of evaluation datasets for research purposes.

Importantly, by systematically appraising study quality with established critical appraisal tools, this review offers an empirical assessment of the rigor of RoE publications in AJE as a focal sample and, to our knowledge, represents one of the first applications of formal critical appraisal tools to this body of RoE studies. The generally moderate-to-high appraisal ratings are encouraging, suggesting that many RoE studies met a substantial proportion of the applicable methodological criteria. Furthermore, the improvement in average appraisal scores in recent years is an encouraging sign that methodological standards may be improving.

At the same time, checklist-based appraisal does not eliminate judgment calls. Several JBI and MMAT criteria require interpretation (e.g., whether key elements are “adequately described” or whether there is “congruity” between the research question, methods, and analysis), so we relied on explicit decision rules and coded conservatively when reporting was incomplete. This means some recurring “weaknesses” likely reflect reporting gaps as much as methodological shortcomings, reinforcing the importance of transparent write-ups and fuller use of supplemental materials when appropriate.

However, the recurring shortcomings we identified point to concrete areas for enhancement. In qualitative RoE studies, researchers should more transparently report their methodological framework and incorporate reflexivity (Patton, 2015); doing so will add credibility and depth to qualitative findings. In quantitative studies, authors need to pay closer attention to design transparency, clearly reporting how they handled potential biases (e.g., confounding or sampling issues) and the validity evidence for their measures. For mixed-methods studies, it is critical to ensure that both qualitative and quantitative components are executed with rigor and that they are truly integrated (Greene, 2008); mixed-methods designs in RoE would benefit from following established frameworks and explaining how the two strands inform each other. Finally, evidence synthesis in RoE would benefit from closer alignment between review purpose and review methods. Because most review studies in our sample were scoping or mapping reviews, their primary aim was to describe the landscape of RoE rather than to evaluate the strength of evidence. Formal appraisal of included studies was nonetheless uncommon even among systematic reviews, where it is a standard expectation. Reviews should advance beyond simple narrative summaries by applying systematic review techniques (e.g., protocols, dual coding, and quality appraisal of sources), which would strengthen the reliability of conclusions drawn from multiple evaluation studies. Requiring or at least encouraging such practices (e.g., via journal guidelines akin to PRISMA) could improve the quality of RoE reviews. These shortcomings point to specific areas where training, mentorship, and editorial expectations could raise the bar.

Implications

The findings of this review have several implications for the evaluation community. First, RoE scholars should broaden the scope of inquiry to address the evident gaps in our knowledge. There is a need for studies that investigate what difference evaluations make (evaluation consequences) and how contextual factors shape evaluation practice and use. Such research may involve more complex designs, potentially including experiments, longitudinal case studies, or embedded research approaches where researchers collaborate with evaluation teams in real time (Jackson et al., 2021). By expanding into these areas, we can ensure that evidence about both processes and outcomes informs evaluation theory and practice. Second, there is a clear mandate to enhance methodological rigor in RoE studies. Graduate programs and professional development for evaluators might emphasize qualitative methodology (e.g., study design coherence, reflexive practice) and mixed-methods integration skills more strongly (Azzam & Jones, 2025), since our review indicates these are common weak points (Christie et al., 2014; LaVelle & Donaldson, 2010). Strengthening training in these areas will prepare future RoE researchers to conduct more rigorous studies. It may also be valuable to develop evaluation-specific research standards or appraisal tools that account for the unique aspects of studying evaluation and evaluation practices. Third, funders and institutions can support RoE by integrating research components into evaluation projects, allocating resources to systematically study how evaluations are conducted and used in various settings (Donaldson, 2022, 2025; Donaldson et al., 2015). This could generate more primary data for RoE and promote a culture of self-reflection in evaluation practice.

Fourth, because our sample is drawn entirely from AJE, we focus these implications on AJE authors and editorial practices specifically. Authors should be encouraged to fully document their methods, even when space is limited. Although AJE already allows and encourages online appendices, many studies in our sample still omitted key details that could have been provided via supplemental files or repositories. In line with open science practices, we recommend that authors submit detailed coding frameworks, extended results, and appraisal decisions via platforms like the Open Science Framework (Linnell & Tilton, 2024). AJE could strengthen and normalize the use of these online supplements. This may be particularly relevant given that mixed-methods studies had the lowest composite mean appraisal scores in our sample, and several appraisal criteria depend heavily on what the authors report (“no/unclear” may sometimes reflect incomplete reporting rather than absence of the practice). One practical, low-burden step AJE could consider is prompting authors to name their study design in the title or abstract (e.g., “case study,” “quasi-experiment,” “systematic review,” or “scoping review”). In this review, study designs were not always labeled. Clear design labeling would make studies easier to find, interpret, and synthesize, and it aligns with widely used reporting guidance (e.g., Strengthening the Reporting of Observational Studies in Epidemiology for observational studies and PRISMA for systematic reviews) that asks authors to identify study design in the title or abstract (von Elm et al., 2007; Page et al., 2021).

Finally, the field would benefit from more formal reporting standards tailored to RoE synthesis and design studies. For example, review studies could be asked to follow adapted PRISMA-style checklists, including a transparent search protocol, inclusion criteria, and, where appropriate, critical appraisal of included sources. Establishing such expectations, perhaps as an author resource page or checklist for RoE submissions, could further advance transparency and cumulative learning in evaluation science. More broadly, recent RoE scholarship has also emphasized the value of adopting RoE reporting standards to strengthen quality and the accuracy of RoE syntheses, an area where editorial guidance and clearer expectations can help (Aston et al., 2025). By encouraging these practices, publication outlets like AJE can improve the transparency and quality of RoE reporting and, in turn, the usefulness of RoE findings for advancing the field. High-quality RoE is one pathway to more exemplary evaluations (Donaldson, 2022, 2025; Villalobos et al., 2025).

Limitations

This review has limitations. We examined RoE articles from a single journal over a defined time span, which may limit generalizability to other outlets or recent developments beyond 2024. Because our inclusion criteria followed an explicitly empirical definition of RoE, conceptual and methodological contributions that inform RoE but do not report empirical findings were not captured. Additionally, some types of RoE studies (e.g., simulation-based research and tool development) could not be appraised with existing checklists and therefore were not formally appraised here. The appraisal tools used (JBI and MMAT) may not capture all nuances of RoE studies, and some judgment was required in applying their criteria. In interpreting appraisal results, it is important to distinguish methodological shortcomings from reporting omissions; our ratings reflect what was reported in the published articles and may underestimate methodological quality when procedures were conducted but not described. The cut points used to summarize appraisal ratings and the composite scoring approach for mixed-methods studies were pragmatic choices intended to support descriptive comparison across designs and should not be interpreted as definitive thresholds. Because the JBI and MMAT tools differ in content, emphasis, and number of applicable criteria across study designs, cross-design comparisons of percentage scores should be interpreted as approximate descriptive summaries rather than directly equivalent measures of methodological quality. In particular, because mixed-methods composite scores were capped by the weakest strand, mean appraisal differences across design types should be interpreted cautiously. Finally, while the codebook and decision rules were pilot-tested through independent coding and consensus on a subset of articles, full-sample extraction was conducted by a single coder. Formal interrater reliability assessment was not feasible, and some classification error may remain.
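
To make the composite-score caveat concrete, the sketch below illustrates how a weakest-strand cap can pull a mixed-methods score down. It is an illustration under stated assumptions, not our scoring procedure: the function names, the rating labels ("yes", "no", "unclear", "n/a"), and the example ratings are hypothetical, and the percentage is assumed to be the share of applicable criteria rated "yes."

```python
from typing import Sequence


def percent_met(ratings: Sequence[str]) -> float:
    """Percentage of applicable criteria rated 'yes'.

    Assumption: 'n/a' criteria are dropped from the denominator, and
    'no' and 'unclear' both count as not met.
    """
    applicable = [r for r in ratings if r != "n/a"]
    if not applicable:
        raise ValueError("no applicable criteria to score")
    met = sum(1 for r in applicable if r == "yes")
    return 100.0 * met / len(applicable)


def capped_composite(strand_scores: Sequence[float]) -> float:
    """Composite score capped by the weakest strand (the minimum)."""
    if not strand_scores:
        raise ValueError("at least one strand score is required")
    return min(strand_scores)


# Hypothetical ratings for one mixed-methods study.
qual_score = percent_met(["yes", "yes", "no", "unclear", "yes"])  # 3 of 5 met
quant_score = percent_met(["yes", "yes", "yes", "no", "n/a"])     # 3 of 4 met
composite = capped_composite([qual_score, quant_score])

print(qual_score, quant_score, composite)
```

Under this rule the stronger strand never raises the composite, so a mixed-methods study can only score as well as its weaker strand, whereas single-design studies face no such cap. This is one reason the mean differences across design types should be read cautiously.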

Conclusion

Over the past decade, RoE has gained momentum, shedding light on many aspects of evaluation practice and the evaluation profession (Donaldson, 2022, 2025; Villalobos et al., 2025). Our integrative review of AJE publications from 2014 to 2024 shows that RoE has predominantly explored “how we evaluate” (methods, processes, and people), with comparatively little attention to “what evaluation accomplishes” in terms of outcomes and influence. By introducing a quality appraisal perspective, we found that while RoE studies are generally conducted with moderate-to-high rigor, there are consistent deficiencies in reporting and methodology that need to be addressed. Moving forward, broadening the focus of RoE and improving the methodological transparency and rigor of studies will help build a more credible, actionable knowledge base for the evaluation field. In line with Mark's (2008) vision, investing in rigorous RoE ultimately strengthens evaluation practice itself, ensuring that as evaluators, we continually evaluate and improve our own work through evidence.

Supplemental Material

Supplemental material, sj-docx-1-aje-10.1177_10982140261438466 for Research on Evaluation Studies Published in AJE: An Integrative Review of Themes, Methods, and Quality by Selam Stephanos and Stewart I. Donaldson in American Journal of Evaluation

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Appendix A

References
