Abstract

Evaluation capacity building (ECB) continues to attract the attention and interest of scholars and practitioners. Over the years, models, frameworks, strategies, and practices related to ECB have been developed and implemented. Although ECB is highly contextual, the evolution of knowledge in this area depends on learning from past efforts in a structured approach. The purpose of the present article is to integrate the ECB literature in evaluation journals. More specifically, the article aims to answer three questions: What types of articles and themes comprise the current literature on ECB? How are current practices of ECB described in the literature? And what is the current status of research on ECB? Informed by the findings of the review, the article concludes with suggestions for future ECB practice and scholarship.

Introduced twenty years ago, evaluation capacity building (ECB) has evolved, and continues to evolve, through both conceptual and empirical work. Models, frameworks, and measurement tools focused on evaluation capacity and ECB have been published over time, and evaluation scholars and practitioners have sought to develop and implement practices and strategies meant to increase evaluation capacity in a broad range of organizations. King (2007) refers to an ECB continuum that can take various forms and shapes, depending on stakeholder needs and organizational context.

Evaluation capacity building is rooted in the literature on evaluation utilization and organizational learning and merges these two strands by focusing on how evaluations are conducted and used in organizations. ECB is the process

This definition continues to apply to contemporary ECB theory and practice. However, since it was first introduced, our understanding of ECB has continued to evolve, and many of the elements contained within the original definition can now be nuanced and demonstrated through published empirical work. To be sure, and as we document as part of our review, the number of published articles on ECB has continually grown over the past decades.

The purpose of the present article is to take stock of and describe the ECB literature in evaluation journals. The position we hold is that the constant and increasing interest in ECB as both an area of practice and scholarship indicates that ECB may have reached sufficient maturity to warrant an integrative review (Snyder, 2019) that takes stock of what has been learned in the past twenty years. Specifically, the article aims to answer three questions: What types of articles and themes comprise the current literature on ECB? How are current practices of ECB described in the literature? And what is the current status of research on ECB? It is our hope that addressing these questions will serve to inform and guide future research efforts and ECB practice.

The article is structured as follows. The first section provides a brief overview of past efforts to synthesize and classify ECB practices and research. The second section describes the review methodology, including search strategy, screening, review, and coding process, as well as basic characteristics of the included articles. In the third section, we summarize the main findings of our review, including the methodological and conceptual evolution of the ECB literature pertaining specifically to case applications and research on ECB. Recommendations for future practice and research on ECB are presented to offer new and unexplored lines of inquiry.

Prior Reviews of the ECB Literature

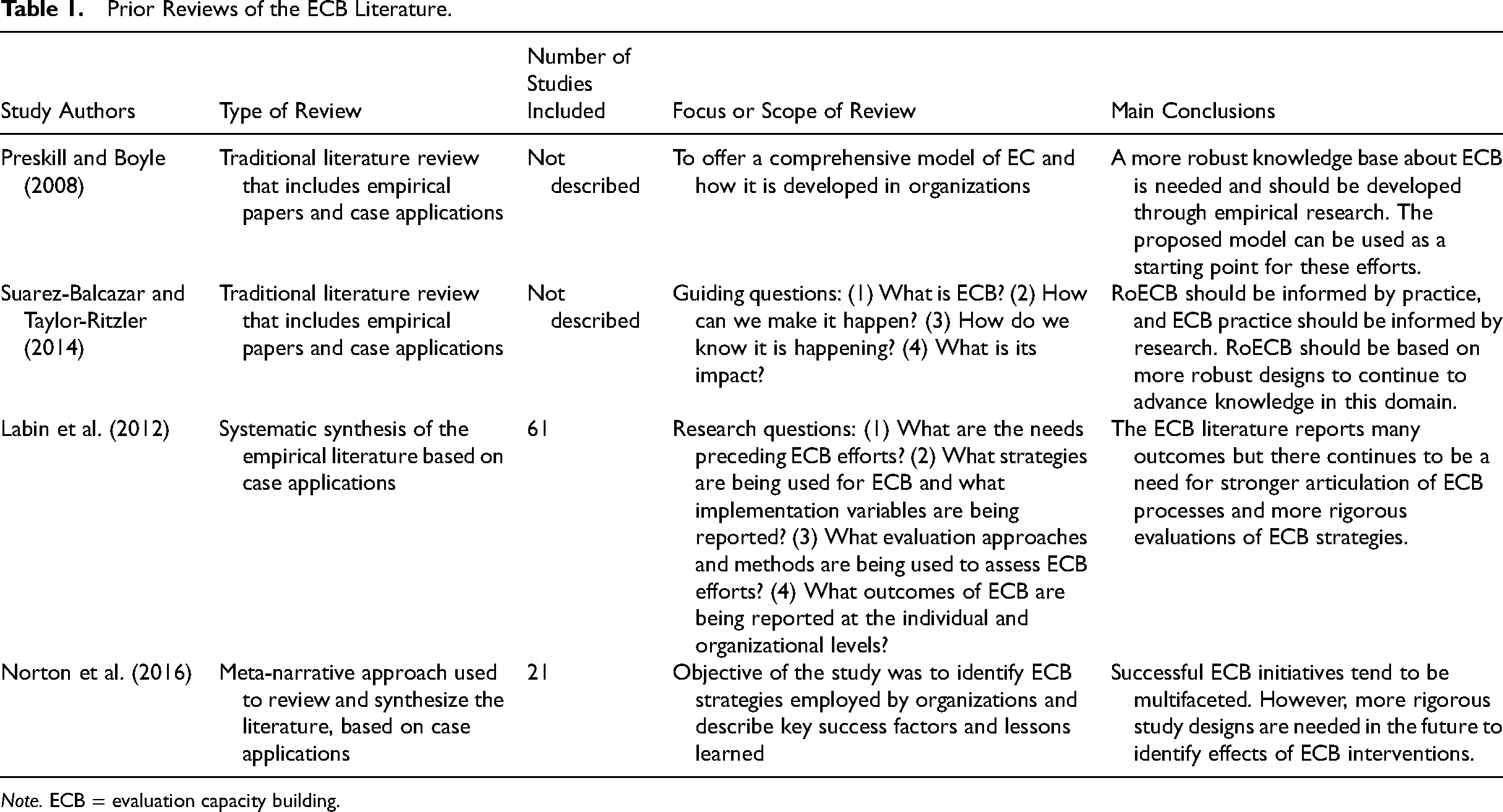

Over the past two decades, evaluation practitioners and scholars have increasingly recognized the importance of establishing an empirical base for ECB, resulting in a steady stream of published conceptual and empirical contributions. Several reviews have been conducted of the existing body of literature to capture key findings related to ECB practices and outcomes and to describe its evolution. Some of these literature reviews were conducted to ground empirical work or case studies of specific ECB interventions, while others focused on building models or frameworks that describe ECB and its constituent elements. Four of these reviews merit particular attention here as precursors of the analysis presented in this article. These four reviews are summarized in Table 1.

Table 1. Prior Reviews of the ECB Literature.

The first two reviews, by Preskill and Boyle (2008) and Suarez-Balcazar and Taylor-Ritzler (2014), are narrative reviews that include various types of publications. Neither review describes its methodology or specifies the number of papers reviewed. Both reviews focus on describing ECB and its components and conclude that a stronger research base about ECB is needed. The two other reviews, conducted by Labin et al. (2012) and by Norton et al. (2016), employ more structured review approaches and specify the number of studies included in their samples. Both reviews specifically focus on case applications (or strategies) of ECB, their implementation, and their outcomes. Once again, both reviews point to the need for further research and specifically highlight the need for rigorous studies focusing on the outcomes of ECB interventions.

These four reviews set the stage for our own work by highlighting the importance of learning from past efforts to carve a path forward for ECB research and practice. Based on the findings from these reviews and the continuously increasing number of publications focusing on various aspects of ECB, we propose that the field of ECB still requires an empirical, structured analysis of the literature on ECB interventions (case applications) as well as on ECB research (RoECB) to produce a comprehensive picture of the current knowledge base.

Method

The present study is based on an integrative review of peer-reviewed articles on ECB published in major evaluation journals between 2000 and 2019. Integrative reviews seek to “assess, critique, and synthesize the literature on a research topic in a way that enables new theoretical frameworks and perspectives to emerge” (Snyder, 2019, p. 335). They are particularly useful when studying mature topics and enable the inclusion of both experimental and non-experimental studies (Hopia, Latvala, & Liimatainen, 2016); however, they do not include gray literature as do scoping reviews, which tend to focus on identifying gaps in the research literature and/or determining whether a systematic review may be warranted (Arksey & O’Malley, 2005).

Search Strategies

The search strategy for the review involved both a manual and an electronic search strategy. First, we conducted a manual search of relevant articles in the following eight evaluation journals:

We then carried out an electronic search for relevant articles in the same eight journals through Scopus, using the search terms “evaluation capacity” and “evaluation capacity building”. This search yielded 364 articles. We included articles published in English or French. For this study, we excluded gray literature, including doctoral dissertations, theses, technical reports, and white papers, from the sampling frame. After removing duplicates from the manual and electronic searches, a total of 420 articles were identified for further screening.

Screening of Titles and Abstracts (Level 1)

The screening process was structured around three stages. In the first stage, we screened titles and abstracts. To ensure consistency among reviewers, three inclusion criteria were applied: articles had to (1) be published between 2000 and 2019, (2) be written in English or French, and (3) include the terms “evaluation capacity” or “evaluation capacity building” in the title, abstract, or keywords. In addition to these criteria, publications in the form of editor's notes, final synthesis chapters as presented in issues of

Each article was screened by two reviewers during a process that lasted approximately two months. Reviewer dyads were assigned specific articles and screened the title, abstract, and keywords of the articles independently to make the first decision for inclusion. Dyads then discussed any divergent decisions with a view to including articles that held any potential relevance, so that they could be included in the full-text screening. These dyad discussions resolved all but 79 decisions, which were brought to the entire group and discussed together to reach a final decision regarding inclusion. After this initial step, 293 articles were advanced to the full-text screening review.

Screening of Full-Text Articles and Classification (Levels 2 and 3)

The second and third stages of screening occurred simultaneously over the course of three months. During the second stage of screening, each full-text article was independently reviewed by two reviewers (dyads) to determine whether the primary focus of the article was on EC or ECB. In order to prepare the team for this work, all members reviewed the same five articles and discussed their findings together, to align interpretations. Then, 30 articles were assigned to all dyads, who reviewed the full-text articles and discussed any inconsistencies in their inclusion/exclusion decisions. Ten articles were found to produce differing decisions and were discussed by the entire team until a decision could be reached. Once this was done, articles included for further review were assigned to dyads, who then reviewed the papers and discussed their findings. Three rounds of independent review were completed in this way and in total, 38 inconsistent decisions were brought back to the group for further deliberation. In total, 86 articles were excluded with specific rationales and 160 were included for the third screening stage. Another 47 articles were identified as being “ECB adjacent” (described in the findings section).
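The full-text screening counts reported above can be checked with simple arithmetic. The following minimal sketch is illustrative only: the variable and function names are hypothetical, the figures are those reported in the text, and it does not represent the tooling used for the review.

```python
# Illustrative tally of the full-text screening flow.
# Names are hypothetical; the counts are those reported in the text.
FULL_TEXT_SCREENED = 293  # articles advanced from title/abstract screening

full_text_outcomes = {
    "excluded": 86,
    "included": 160,
    "ecb_adjacent": 47,
}


def outcomes_account_for_all(screened: int, outcomes: dict[str, int]) -> bool:
    """Return True when the outcome counts sum to the number screened."""
    return sum(outcomes.values()) == screened


def share(count: int, total: int) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


if __name__ == "__main__":
    # Confirm that every screened article has exactly one recorded outcome.
    print(outcomes_account_for_all(FULL_TEXT_SCREENED, full_text_outcomes))
    # Show each outcome as a share of the full-text pool.
    for label, count in full_text_outcomes.items():
        print(label, share(count, FULL_TEXT_SCREENED))
```

The same kind of check applies to the coding stage, where the 73 case applications and 43 research articles sum to the 116 coded articles.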

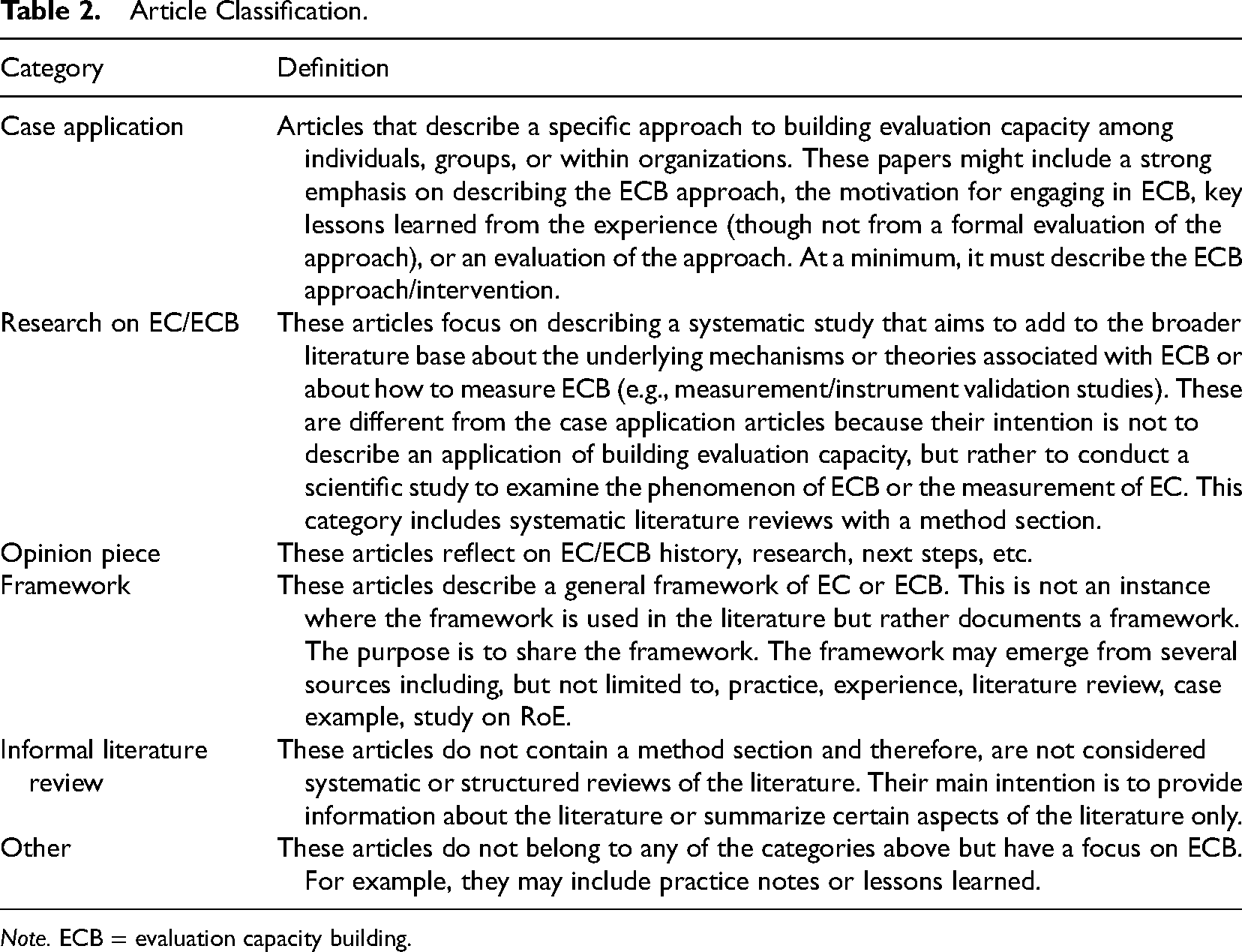

During the full-text review, the dyads also classified the 160 included articles according to six types of publications: case application, research on EC/ECB (RoEC/ECB), opinion piece, framework, informal literature review, and other publication. Articles were independently reviewed by two reviewers. Discrepancies in classification were discussed with the research team and decided by consensus. We developed a specific definition for each category to examine the articles and classify them in a consistent manner (see Table 2 below). The categories are not mutually exclusive, and therefore, some articles are classified into more than one category. Articles that could not be classified within one of these categories were marked as “other.”

Table 2. Article Classification.

Coding and Analysis

To facilitate coding, a Qualtrics data extraction form was developed to analyze the 116 articles. The Qualtrics data form consisted of three coding modules that were created to extract data from the included articles. The first coding module captured the

The second coding module developed for the

Team members used the second module to code all 73 case application articles. Two variables (publication year and presence of a logic model/theory of change) were coded by article (

The third coding module was developed for

To ensure the quality of the coding modules, as well as to identify potential ambiguity and variability within and across coding categories, two rounds of pilot tests were conducted. In each round, each author was assigned the same three articles. Each researcher coded all three articles independently and any differences in coding were reconciled through a group discussion. Based on the feedback from the two pilot rounds, the coding modules were finalized. Researchers were then randomly assigned articles and completed independent coding of 73 case applications and 43 research on EC/ECB articles. Any questions encountered during independent coding were brought back to the group for discussion and joint decision-making. The coding process was conducted over a two-month period, during which four meetings were held to discuss emerging findings and coding issues. Descriptive statistics were used to analyze the extracted information from the 116 ECB articles.

Results

This section presents the main findings of the review and is structured according to the main research questions.

What Types of Articles Comprise the Current Literature on ECB?

The current literature on ECB can be described in various ways. The first set of results describes the defining characteristics of the articles reviewed.

Classification of articles

As described previously, of the 160 articles reviewed, 73 were classified as case applications, and 43 as RoEC/ECB. The remainder of the articles were classified into the other categories presented in Table 2: 24 articles were classified as opinion pieces, 10 as frameworks, 10 as informal literature reviews, and five as “other.”


Forty-seven articles excluded from the review were categorized as “ECB adjacent” because the topics were not EC or ECB but rather a closely related topic that may strengthen an individual or organization's ability to effectively do and use evaluation. These topics included: evaluation policies; evaluation frameworks; knowledge translation, brokering or management; participatory evaluation approaches; and evaluative thinking (we return to these articles in the discussion). Notably, more than half of ECB adjacent articles (

Source of publication

Articles on EC/ECB have steadily increased in the last two decades. Most articles were published in journals from the United States and Canada with one-quarter published in the

Origin of articles

Of the 73 articles describing case applications, 64 represented unique cases since some were described in more than one article. Most of the articles reviewed featured case applications or studies conducted in northern or western countries. The majority of case applications (

How are Current Practices of ECB Described in the Literature?

Case applications describing an ECB approach provide insight into existing practices and strategies that are effective for building capacity. To that end, it can be useful to think of case applications as ECB interventions that are designed for a particular organizational context and audience(s) to meet specific needs. Describing which types of strategies or mechanisms of change are effective for achieving certain outcomes in particular settings contributes to the knowledge base about what works in which contexts.

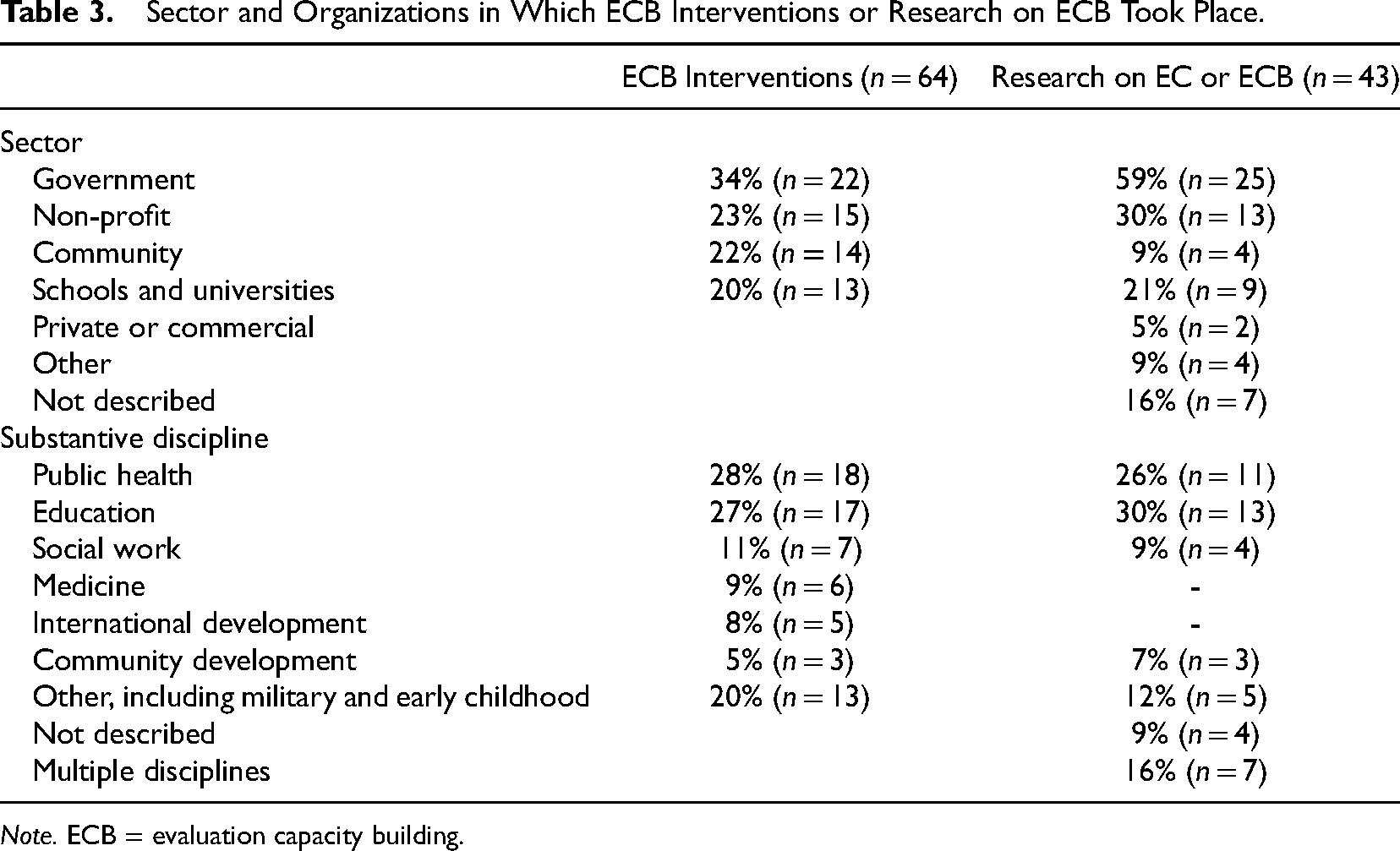

ECB interventions by sector and discipline

The distribution of ECB interventions by sector and substantive discipline is outlined in Table 3. We found that the majority of published ECB interventions were conducted in government and non-profit or community-based organizations, within the fields of public health and education. Public health cases were implemented relatively equally across government agencies, non-profits, and community-based organizations, whereas education ECB interventions occurred primarily within K-12 schools or universities, as may be expected. While cases from public health and education have remained consistent over time (

Table 3. Sector and Organizations in Which ECB Interventions or Research on ECB Took Place.

ECB intervention participants

We also found that the majority of case applications aimed to build evaluation capacity among frontline staff. More than three-quarters of the target audiences for ECB were staff members (80%,

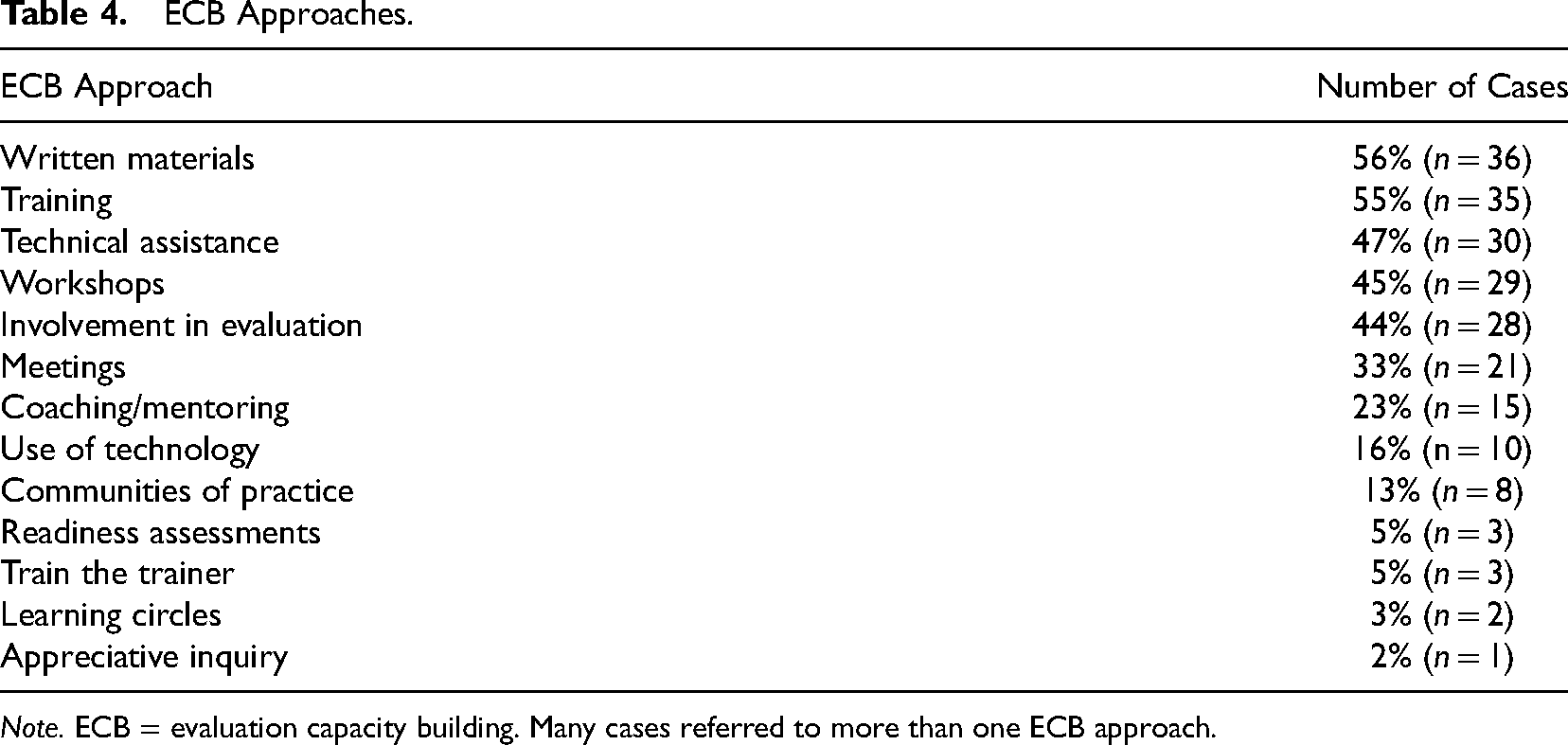

Approaches and strategies to build evaluation capacity

The types of ECB interventions used for building capacity continue to be dominated by more direct approaches (i.e., participants engage in an activity directly aimed at changing cognition or behavior such as participating in a workshop or receiving coaching) rather than indirect approaches (i.e., participants engage in a participatory evaluation process as the mechanism of change). More than half of case applications described a direct form of ECB (52%,

Table 4 outlines the specific approaches and strategies practitioners used in the case applications reviewed, based on the wording used by the authors to describe them. Written materials on evaluation comprise more than half of the strategies practitioners used for capacity building; however, great variation exists in what constitutes “written materials”. For instance, manuals, evaluation guides, toolkits, and workbooks on how to conduct evaluation (Compton et al., 2008; Lachance et al., 2019; Nagao et al., 2005; Satterlund et al., 2013) as well as case studies (Lawrenz et al., 2018) and sample evaluation reports (Karlsson et al., 2008; Satterlund et al., 2013) to promote learning about evaluation were all identified in the articles reviewed.

Table 4. ECB Approaches.

While use of written materials, training and workshops, technical assistance, and involvement in evaluation have remained fairly consistent across time, there is an increasing trend in the use of other strategies for building capacity. The use of communities of practice ranged from peer-to-peer exchanges to share evaluation information and troubleshoot challenges (Goodyear, 2011) to encouraging evaluation reflection and support within and across programs (Janzen et al., 2017; Parsons et al., 2016) and co-writing journal articles (Janzen et al., 2017).

One case used technology to create “interactive web-based systems to guide evaluation design, data collection, data entry and analysis” (Naccarella et al., 2007, p. 233) and another used an online searchable evaluation instrument database to support evaluation projects in multiple sites combined with an “evaluation peer exchange” consisting of a “moderated electronic list-serv in which evaluators and project PIs can talk amongst themselves, asking questions, sharing information, and troubleshooting common issues in evaluation” (Goodyear, 2011, p. 101). However, creative use of technology for building capacity remains an area with potential given the explosion of technological innovations and their use in evaluation, particularly considering the recent COVID-19 pandemic.

Other interventions did not fit into the categories found in Table 4 but provide interesting avenues for further study. These include the “catalyst-for-change” technique used by Nelson and Eddy (2008), in which leadership (i.e., a school principal) served as a change agent for infusing evaluation into the working processes of a school; data dialogues (Karlsson et al., 2008) and listening sessions (Milstein et al., 2002); briefing sessions (Dinh et al., 2015); and development of networks between existing and emerging evaluators (Kosheleva & Segone, 2013). Olejniczak (2017) used a “game-based workshop” to assist evaluators in understanding the relationship between research evidence and the policy cycle and the role of knowledge brokering in increasing use of evidence in public policy (p. 559).

Interestingly, all but two case applications used more than one strategy to build capacity, which is increasingly recommended in the ECB literature (e.g., Bourgeois et al., 2018; Preskill & Boyle, 2008).

Evaluation of ECB outcomes in case applications

The effect of the ECB interventions is rarely described. However, the proportion of evaluated case applications has increased slightly over time, from none in 2000 to 50% in 2019. It is noteworthy that most case applications did not include a logic model or theory of change of the ECB intervention (78%, n = 50), yet evaluations of the interventions are on the rise. In evaluation, including a logic model, theory of change, or other visual description of the intervention is common practice to help understand the causal mechanisms of change, yet in ECB, practitioners do not adopt this same practice to a large extent. Only five of the 34 case applications that evaluated the ECB intervention reported developing a logic model/theory of change of the ECB intervention.

Of the 34 cases that evaluated the ECB intervention, 28 articles included a description of the evaluation's main areas of focus and results. Common areas of focus for these evaluations included individual evaluation knowledge, skills or behaviors, and attitudes toward evaluation. More than half of the evaluations of ECB interventions that measured individual changes showed evidence of increased evaluation knowledge and skills and positive attitudes toward evaluation. However, several showed mixed results in which participants cited challenges applying the skills they learned in practice when the ECB intervention did not include an applied component (i.e., where participants were involved in the evaluation process) or other process use strategy. For instance, Dickinson and Adams (2012) noted that public health practitioners who participated in a series of workshops followed by mentoring and coaching reported increased confidence in implementing evaluation and felt well-supported in their organizations but struggled to apply the concepts learned in practice. Similarly, Ploeg, de Witt, Hutchison, Hayward, and Grayson (2008) noted that research mentees in community care settings were able to learn new evaluation skills and fostered positive relationships with their evaluation mentors but struggled to apply their skills given challenges and constraints in their organizational context.

All of the interventions with mixed results used direct approaches for building capacity, with the exception of one case (Taut, 2007) which used both direct and indirect approaches. In general, ECB interventions that incorporated an indirect approach (either alone or coupled with one or more direct approaches) tended to report increases in evaluation skills in greater proportion compared to ECB interventions that used only direct approaches. This finding highlights the importance of learning by doing and involving participants in the evaluation process to ensure transfer of learning from ECB strategies to workplace settings.

Our findings also indicate that evaluations of ECB interventions often showed mixed results, with lack of resources cited as the most common obstacle to ECB success. For example, Hilton and Libretto (2017) reported that staff in military psychological healthcare settings who participated in designing and implementing program evaluation were able to analyze data and use the evaluation tools provided, but lacked human resources and sufficient time for data entry to be used for evaluative purposes. Valenti et al. (2017) noted that substance abuse programs serving LGBQ populations that received evaluation-related technical assistance reported enhanced capacity and rigor of evaluations within their organizations, but continued to face challenges such as lack of available data on their focus population and finding safe spaces where youth felt comfortable participating in the evaluation process.

What is the Current Status of Research on ECB?

Research studies that empirically examine the underlying mechanisms of ECB or measure aspects of EC provide evidence that can help refine current knowledge and build the evidence base for “what works.” While we reviewed roughly half as many research studies as case applications, their number nearly doubled within the last decade. Fifteen studies on EC or ECB were published in 2010 or before compared to 28 studies from 2011 onward.

Research studies by sector and discipline

As with the case applications, most research studies on ECB occurred within government and non-profit or community-based organizations, as outlined in Table 3. The number of research studies across all levels of government increased substantially beginning in 2008, from one study to 17, accounting for 46% of all studies on EC/ECB from 2008 to 2019.

Another similarity with case applications was the substantive areas where the research took place. Half of the research studies on ECB took place in the areas of education/higher education and public health (Table 3). However, unlike case applications, where a larger proportion of studies took place in these two disciplines, nearly one-quarter of research on EC/ECB occurred in public administration. Moreover, while there is an increase in the number of research studies across all types of disciplines over time, studies in public health, education, and public administration each tripled after 2010.

Study participants

We also found that, similar to case applications, a large proportion of research studies involved staff as key participants. However, unlike cases, the study populations identified in the research articles were more diverse and included a variety of participants, ranging from evaluators to policymakers and organizational leaders. More specifically, study populations included staff (47%,

Study purposes and designs

Most of the studies on ECB published over the past twenty years tend to be either descriptive (47%,

In alignment with study purposes, 95% (

Focus of ECB studies

While descriptive or exploratory studies were predominant over the last two decades, study objectives have shifted from individual EC to organizational EC. In the early 2000s, most studies attempted to understand individual evaluation capacity primarily and identify factors that affected evaluation capacity (Arnold, 2006; Newcomer, 2004; Trevisan, 2002). For example, Trevisan (2002) described specific EC for school counselors and Arnold (2006) assessed 4-H educators’ knowledge and skills in program evaluation. While most studies examined individual EC, some studies attempted to identify organizational factors that affect individual EC. For instance, Gibbs et al. (2002) described individual beliefs and attitudes toward evaluation practice at community-based organizations and attempted to identify organizational factors such as resources, leadership, and staff capacity. Additionally, Newcomer (2004) described the efforts made to strengthen individual EC, particularly evaluation managers who oversaw evaluation contracts in federal agencies and assessed organizational culture that affected evaluation capacity.

In the late 2000s, studies on ECB started investigating organizational evaluation capacity more explicitly. Many studies attempted to describe organizational culture and the landscape of evaluation capacity at different types of organizations such as government departments, community-based organizations, or nonprofit organizations (Bourgeois & Cousins, 2008; Carman, 2007; McGeary, 2009; Preskill & Boyle, 2008; Volkov, 2008). Carman (2007) described evaluation activities and capacity of community-based organizations by proposing research questions such as, “what types of activities do community-based organizations conduct to evaluate their programs?” and “who has the primary responsibility for conducting evaluation activities?” (p. 62). Volkov (2008) investigated ECB practices at a nonprofit organization by conducting a qualitative case study through interviews and document review. Some studies emphasized both individual and organizational EC (Alaimo, 2008; Cousins et al., 2008; Fleischer et al., 2008). Cousins et al. (2008) explored the perceptions of internal evaluators regarding the evaluation capacity of their organizations and assessed different perspectives across different types of organizations (i.e., government, non-government organizations). In addition, Alaimo (2008) examined executive directors’ roles and actions taken to develop evaluation capacity in their organizations and analyzed the relationship between leadership and organizational learning culture.

Another noticeable trend identified after 2010 is a growing number of studies that attempted to develop an evaluation capacity framework and create an evaluation capacity assessment tool to measure evaluation capacity (Bourgeois et al., 2013; Bourgeois et al., 2016; Cheng & King, 2017; Labin et al., 2012; Nielsen et al., 2011; Taylor-Ritzler et al., 2013; van Voorst, 2017). Nielsen et al. (2011) developed a conceptual model of evaluation capacity and validated a corresponding Evaluation Capacity Instrument to measure evaluation activity, organizational structure and processes, human capital, evaluation technology and models. Similarly, Bourgeois et al. (2013) created an evaluation capacity building measurement tool, based on the framework of EC developed and tested by Bourgeois and Cousins (2013). Also, Cheng and King (2017) reviewed existing ECB frameworks and tested dimensions of EC in the Taiwanese context.

In the late 2010s, more efforts to validate measurement tools were made (Gagnon et al., 2018; Schwarzman et al., 2019). For instance, Gagnon et al. (2018) examined the construct validity of the Evaluation Capacity in Organizations Questionnaire (ECOQ). Similarly, Schwarzman et al. (2019) developed and validated an Evaluation Practice Analysis Survey (EPAS) to examine evaluation capacity at different levels (i.e., individual, organizational, systems). Interestingly, around the same period, some studies attempted to build upon prior research to advance knowledge in ECB. For instance, van Voorst (2017) attempted to measure the organizational EC of Directorates-General in the European Commission using the Nielsen et al. (2011) ECB model. Moreover, Gagnon et al. (2018) adopted the framework developed by Cousins et al. (2008) and tested its construct validity using the ECOQ. Bourgeois et al. (2016) adapted the organizational evaluation capacity assessment instrument developed by Bourgeois et al. (2013) to measure the organizational EC of local public health units.

Strengths and Limitations

As with any study, there are inherent strengths and limitations to the review we present in this publication. The most important limitation is our omission of the gray literature. Many ECB practitioners do not have the time or resources to submit lessons learned for publication in evaluation journals. These reflections are as important as the insights that are included in the published literature; they may be captured in various online resources, technical reports, or conference presentations. Alternatively, these lessons from the field may be wholly undocumented, which is our suspicion. Nevertheless, these are important omissions and may have impacted our findings. For instance, it may be the case that we found a larger number of ECB case applications in government settings compared to non-profit and community-based organizations simply because of differing levels of resources (e.g., funding, staff time) available in these sectors to support summarizing and publishing lessons learned.

Additionally, we intentionally included a select number of evaluation-focused journals for this review. Our decision was largely informed by the journals included by other evaluation scholars in performing reviews of similar type and scope (Coryn et al., 2017; Labin et al., 2012) as well as the accessibility of these journals through indexed databases. Our decision to restrict the current review to such journals is a strength of the study in that it homes in on the status of scholarship and practice within our substantive literature base. As such, it provides a snapshot in time about where our collective interests and intentions are currently focused and could be easily repeated in the future to document changes in our field. This approach certainly has its drawbacks as well, since we did not capture ECB publications in discipline-specific journals. Given the proportion of articles we found that were situated within the fields of education and public health, journals within these substantive domains may be a good starting point for scholars who wish to expand upon the current review.

Several strengths also underlie this review, two of which we feel were particularly important in upholding the quality of this research. First, our team is composed of individuals who have engaged deeply in ECB scholarship and practice in the past decade or are currently engaged in ECB scholarship as part of their ongoing studies. As such, we started with a high level of knowledge about this literature base and were able to readily examine and actively discuss what to include, exclude, or retain for future examination as well as how to categorize and extract information from existing publications with an eye toward what might be helpful for both ECB practitioners and scholars.

Second, as described in the methods section, our team followed a structured and thoughtful approach in conducting this review. Over the course of 18 months, we examined and re-examined articles to ensure we were consistently and appropriately understanding their content. Articles were reviewed by at least two members of the team (see methods section) and it was not unusual for us to bring articles to our regular team meetings for discussion. During this time, the entire team engaged in a real-time discussion to determine whether to include/exclude an article, how to categorize the article, or what information should be coded and extracted.

Discussion

After more than 20 years of discussion in our field about ECB, the purpose of this review was to increase our collective understanding of the types of articles and themes that comprise the extant literature on ECB, to describe common and emerging ECB approaches, and to examine the current state of research on ECB. Our study contributes to advancing knowledge on evaluation capacity and ECB by synthesizing, for the first time, the characteristics and outcomes of case applications of ECB and research on ECB. We believe that considering both types of publication alongside one another supports a more integrated understanding of how research affects practice and vice versa. The other significant contribution that this study makes to the literature is its broad-ranging, twenty-year timeline. No other study of ECB provides a historical description of the field while delving into some of the key milestones reached.

The first observation of note is the steady increase, and cumulative number, of publications since 2000. Much of this increase is due to publications that describe the approaches that practitioners use to build evaluation capacity across a wide range of organizations (

These numbers suggest that a deep dive into this literature was warranted; further, they offer new insights not previously covered in other literature reviews of EC or ECB. For instance, neither the Preskill and Boyle (2008) nor the Suarez-Balcazar and Taylor-Ritzler (2014) review included methodological notes; although these narrative reviews provide an overview of the field, a more structured approach offers replicability and more specific boundaries. These are key characteristics of the other two reviews outlined at the start of this paper (Labin et al., 2012; Norton et al., 2016), but these studies focused on case applications of ECB and on a more limited number of papers than ours. Our study, therefore, extends and expands on this work to provide a more comprehensive picture of the extant literature on EC and ECB and lays a new foundation for the next decade of research and practice.

ECB Practice

ECB case applications describe both the approaches that practitioners have used to build evaluation capacity and the types of evaluation capacities that they have tried to influence through their ECB work. Taken together, these descriptive findings point to important opportunities for future practice and scholarship, which we highlight in the following sections.

ECB Approaches

Our findings suggest that the approaches most frequently used by ECB practitioners are relatively limited in scope. Preskill and Boyle (2008) described several types of ECB approaches practitioners might use as part of their Multidisciplinary Model of ECB—internships, written materials, technology, meetings, appreciative inquiry, communities of practice, training, involvement in evaluation, technical assistance, and coaching (p. 445). We found that practitioners tend to rely on only a subset of these strategies—they most frequently delivered ECB directly to participants in face-to-face settings and used written materials, training, technical assistance, workshops, and intentional involvement in evaluation. It may be that these approaches are simply the most logical “go to” options for building capacity, or that specific approaches are preferred in certain settings, given that the case applications we reviewed were mainly concentrated in the public (government) sector in the disciplines of public health and education.

These most commonly used approaches provide only a partial picture of what evaluation capacity builders have used in recent years. For instance, almost half of the case applications we reviewed (42%) used an online platform among other approaches. This suggests that the ongoing evolution of evaluation as a field of practice, especially considering the COVID-19 pandemic and other uncertainties, requires a diversity of ECB approaches to foster the development of critical thinking and evaluative thinking (Patton, 2018). The need to build EC in organizations does not disappear when contexts change dramatically, and in fact, we believe that ECB is even more important in such contexts. The inability of evaluators to engage with stakeholders and collect data in person, for example, may require organizations to become more involved in the act of conducting evaluations, thereby increasing the need to build their evaluation capacity. Our hope is that investigations of these alternate approaches will appear over the next few years, given the quick pivot to online engagement caused by the pandemic, so that practitioners can learn more about how to conduct online ECB interventions as well as their potential effectiveness.

Our findings show that a one-size-fits-all approach to ECB is not warranted—organizations differ with respect to existing knowledge, skills, and valuing of evaluation; the level of motivation to engage in evaluation; and the infrastructural support for evaluation (e.g., existing data in terms of availability and quality, staff to engage in and conduct evaluation, policies and procedures that are supportive of evaluation) (Fierro & Christie, 2017). As such, we believe that ECB practitioners should have a wide array of approaches to select from so they can appropriately tailor their ECB strategy to each organization. Fortunately, we found

Further, although our findings are limited to journal publications, we recognize and emphasize that ECB practitioners need to have ready access to these approaches through a variety of traditional and alternative means that include journal publications, the grey literature, conference presentations, videos, online materials, repositories, social networks, and other modern approaches to information sharing and dissemination. We encourage the evaluation community to develop innovative ways to disseminate these approaches to ECB practitioners and funders of ECB who may not have ready access to journals.

Most of the case applications we reviewed were from North America, with almost no representation of lessons learned from highly populous areas of the world. This finding is potentially a result of the journals we selected for inclusion; however, we do see evidence that these journals publish scholarship and practice from across the globe. Based on our review as well as our experience, we believe that future research on ECB should extend beyond reports coming from the United States, Canada, and Australia and include other international publications to highlight and learn from approaches to ECB that are culturally responsive.

Effectiveness of ECB: early observations

Given the breadth of literature now available on ECB, and specifically ECB case applications, it is possible to begin examining the effectiveness of various ECB approaches. Unfortunately, our study shows that not all ECB approaches can be considered in such analyses, since just over half (53%) of the papers that we reviewed included an evaluation of the ECB initiative described. This observation is consistent with findings from the literature review conducted by Labin et al. (2012), which included articles from 1998–2008 from seven of the eight journals we reviewed. Nevertheless, we did identify some clear patterns from 28 publications that practitioners can incorporate into their design of future ECB approaches.

Early evidence suggests that incorporating techniques which provide participants with an opportunity to learn by doing and to readily transfer what they learn into the workplace is key to altering organizational evaluation practice. ECB strategies that included an indirect approach as part of the strategy (i.e., engagement in a participatory evaluation process) were more likely than those using only direct approaches to report that participants were able to apply the evaluation skills they acquired. Evaluations with mixed results regarding effectiveness suggest that participants find it challenging to transfer their learning into the workplace when an applied component is missing from the ECB approach. These findings are in alignment with what we know from adult learning principles (Chaplowe & Cousins, 2016; Preskill & Boyle, 2008).

ECB outcomes and pathways to intended change

It is noteworthy that few case applications—only 23 of the 73 we reviewed—included a logic model or theory of change even as evaluations of ECB approaches are on the rise. ECB is in essence an

As noted in the definition provided by Stockdill et al. (2002), the end goal of ECB is to promote change at an organizational level. However, our findings show that ECB approaches most often focus on changing individual-level knowledge, skills, behaviors, and attitudes associated with evaluation. As described by Preskill and Boyle (2008), individual-level changes have the potential to contribute to organizational-level change that can sustain evaluation practice. However, we believe that it is important to consider

Research on EC and ECB

Our review suggests that research on EC/ECB is reaching an important point in its level of maturity. Most of the studies that we reviewed were descriptive or exploratory in nature (84%), which is in alignment with the early stages of research in other disciplines of the social sciences (Greene, 2021). We found that the content of these studies has changed over time in ways that we

More recent scholarship has also moved toward developing evaluation capacity frameworks and creating (and validating) evaluation capacity assessment instruments. Here we see a movement in the research that is also indicative of a more mature field: frameworks and instruments of EC are challenging to develop absent a broader literature base that describes the constructs that comprise EC and factors that may affect sustainable and effective evaluation practice in organizations. Such scholarship makes clear strides toward providing practitioners with tools that they can use to do the very tailoring of ECB approaches we suggested earlier in this discussion, and to measure their outcomes using validated instruments.

Future directions for RoEC/ECB

Despite the volume of empirical studies available on EC/ECB, we believe that there is much room for growth. In particular, we suspect the field has reached a point where there are now opportunities to test several hypotheses or surface new relationships that describe how practitioners might effectively contribute to enhancing specific types of EC. For example, previous work has recognized the importance of adult learning theory in ECB (Preskill & Boyle, 2008), but we did not encounter a study examining adult learning theory and its use for facilitating the types of change we hope for in ECB (e.g., effective translation of what is learned into the workplace and sustaining effective practice). There are several other opportunities available to examine theory in practice, such as drawing upon theories of leadership (Lopez, 2018), organizational change, systems thinking (Gates et al., 2021), and theories of motivation (Swope, 2021). Scholars might employ an array of methods to identify new relationships or examine the application of existing theories in practice, including, but not limited to, traditional social science study designs, qualitative comparative analysis (QCA), or process tracing.

We came across many topics that were “close cousins” to ECB which we denoted as “ECB-adjacent” and did not examine deeply as part of this review. We retained these articles (

Conclusions

The ECB literature base has grown extensively over the past two decades, both in terms of volume and scope. There is now much for practitioners and scholars to build upon, including case applications, research studies, theoretical or conceptual frameworks, and other literature reviews. Immediate next steps for the field include finding means to share the lessons embedded in this literature with practitioners who do not have ready access to journals and ensuring that the lessons learned about ECB from practitioners and scholars across the globe who do not have incentives or resources to publish are integrated effectively into our knowledge base. ECB practitioners should intentionally use the tools of the evaluation trade—namely logic models, theories of change, and other visual approaches—to ensure that they are thoughtfully reflecting upon the change they would like to make through their ECB intervention(s) and potential mechanisms for this change.

Our findings point to a need to extend the thinking in ECB in the next decade. We can do so in many ways, including identifying connections with related but currently divergent areas of scholarship and practice (e.g., evaluation policy, knowledge translation) to enhance our approaches. We can also extend our scholarship by surfacing insights about how ECB approaches contribute to meaningful change through hypothesis testing (leveraging existing social science theory) and promising methodologies such as QCA and process tracing.

The potential of ECB as an organizational development strategy touches on more than “just” evaluation: it can also influence organizational values reflected in the use of evidence for learning and accountability, in the consideration of stakeholders’ diverse views, and in demonstrating clear outcomes and impacts. After twenty years, we see the progress that has been made in establishing ECB as an intentional activity that recognizes the importance and value of evaluation in organizations, and we are hopeful that our field will continue, through various means, to build evaluation capacity and to examine its own contributions to our organizations and our clients.

Acknowledgments

We would like to acknowledge the important financial contribution made by the Evaluation Capacity Network to this project. This paper is one of a series of articles supported through the Evaluation Capacity Network, a partnership funded by the Social Sciences and Humanities Research Council of Canada.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Social Sciences and Humanities Research Council of Canada.