Abstract

This article examines how youth develop their understanding of program evaluation through direct participation in an empowerment evaluation (EE). Based on post-evaluation interviews, we explore how EE served as an experiential strategy for building program evaluation knowledge and skills among youth. Participants described learning through hands-on activities and group dialogue, as well as encountering moments of confusion or uncertainty that limited their learning. These reflections underscore EE's potential as a strategy for teaching evaluation, particularly when youth are engaged in meaningful, developmentally appropriate ways. The findings also highlight the importance of aligning EE processes with the needs, motivations, and capacities of youth. The study contributes to a growing body of literature on youth-led evaluation and raises broader questions about how evaluation is taught, experienced, and adapted for younger audiences.

Introduction

Empowerment evaluation (EE) offers opportunities for youth aged 12‒17 to actively engage in program evaluation activities rather than serving as passive subjects. Although EE has been used to involve youth in evaluation processes, its potential as a strategy for teaching evaluation has received less attention. This study addressed that gap by examining how EE functioned as an approach to teaching youth about evaluation. We drew on post-evaluation interviews with youth who participated in an EE to explore their learning experiences, reflections, and meaning-making. In doing so, this article contributes to ongoing conversations about experiential learning in evaluation education, particularly as it applies to youth.

Background

The Importance of Teaching Evaluation to Youth

Capacity building

Formal evaluator education remains concentrated mainly in post-secondary settings (LaVelle & Donaldson, 2015), limiting opportunities for younger learners to access structured evaluation learning (Montrosse-Moorhead et al., 2019). When youth engage in evaluation, they develop a range of cognitive, interpersonal, and civic skills, including communication, analysis, problem-solving, leadership, and evaluative judgment (Checkoway et al., 2003; Chen et al., 2010; Gawler, 2005; Gokiert et al., 2024; Jacquez et al., 2013; Montrosse-Moorhead et al., 2019; Nakaima & Sridharan, 2020; Nielsen et al., 2024). Participation has also been associated with greater confidence, agency, and a sense of belonging—outcomes that support both youth development and ongoing engagement in civic life (Sabo Flores, 2008).

Equity

Because youth draw on lived experiences, their perspectives often illuminate assumptions, day-to-day dynamics, and program effects that adult evaluators may miss (Camino, 2000; Gong & Wright, 2007; Samuelson et al., 2013; Wong et al., 2010; Zeldin et al., 2017; Zeller-Berkman et al., 2015). Incorporating these insights is not only a matter of evaluation quality but also of equity, because it lets program decisions reflect the perspectives of those most directly affected. However, youth are routinely excluded from organizational learning processes and from meaningful opportunities to shape program directions (Checkoway, 2011; Zeldin et al., 2005). Teaching evaluation to youth early can therefore help redistribute voice and support more equitable program improvement processes.

Pedagogical relevance

Teaching evaluation to youth is pedagogically valuable because evaluation tasks align naturally with how young people learn. Constructivist theories emphasize that learners build understanding through interaction, interpretation, and reflection (King, 2007; Preskill & Torres, 1999; Vygotsky, 1978). Experiential learning theory describes learning as a cycle of acting, reflecting, conceptualizing, and applying ideas (Kolb, 1984). Evaluation engages youth in these same processes as they identify issues that matter to them, interpret information, weigh evidence, and articulate judgments.

Approaches That Support Youth Evaluation Learning

Participatory and collaborative approaches

Participatory and collaborative evaluation approaches provide a strong foundation for youth evaluation learning by operationalizing experiential and constructivist learning principles. In these approaches, participants engage as co-inquirers rather than as data sources, contributing to the development of evaluation questions, data collection, interpretation, and recommendations (Cousins & Chouinard, 2012; Cousins & Whitmore, 1998; Fetterman et al., 2018; Moreau & Cousins, 2012). These approaches promote learning through dialogue and shared interpretation. As participants negotiate meaning, examine evidence together, and deliberate about program quality, they engage in the kinds of analytical and interpretive work that deepen evaluative understanding (King et al., 2007; Preskill & Torres, 1999).

Process use as a mechanism of evaluative learning

The concept of process use provides additional insight into how learning occurs when youth participate in evaluation activities. Process use refers to changes in thinking, skills, or behavior that arise from engagement in the evaluation process itself, regardless of the evaluation's instrumental purpose (Patton, 1997, 2008). Empirical studies identify several factors that foster process use: clarity and structure in evaluation tasks, quality facilitation, participant readiness, and opportunities for reflection and dialogue (Amo & Cousins, 2007; Bourgeois & Cousins, 2013; Patton, 2008).

Conditions that support evaluative learning for youth

A set of well-documented conditions helps create the kinds of learning benefits associated with process use. Structure reduces ambiguity and offers a cognitive anchor that links individual tasks to a broader evaluative logic. Youth are more likely to understand what an activity is asking of them when tasks are broken into manageable components, expectations are explicit, and the purpose of each step is clear (Fox & Cater, 2011; Heath & Moreau, 2022a; Preskill & Torres, 1999). Facilitation and psychological safety further shape whether engagement becomes meaningful. Collaborative facilitation—inviting reasoning, modeling evaluative thinking, and offering responsive support—encourages risk-taking and meaning-making (Cousins & Chouinard, 2012; King et al., 2007). When youth perceive their contributions as undervalued, however, they may withdraw or offer minimal input, reducing opportunities for learning (Langhout & Fernández, 2015; Zeldin et al., 2017). Scaffolding through guided questioning, structured prompts, and practice interpreting evidence helps youth apply criteria, weigh information, and articulate judgments (Heath & Moreau, 2022b; King, 2007). Learning is further reinforced when youth see their contributions visibly incorporated into criteria, discussions, or recommendations—signaling that their perspectives matter and shaping their motivation to participate (Sabo Flores, 2008; Zeller-Berkman et al., 2015).

Developmentally responsive strategies

Research demonstrates that evaluators can create these supportive conditions by tailoring evaluation processes to the developmental needs of diverse youth participants. For younger or less experienced learners, evaluators often use visual and arts-based strategies, such as drawing, mapping, storyboarding, and photovoice, to externalize ideas and make abstract concepts more concrete (Exner-Cortens et al., 2022; Halsall & Forneris, 2016; Sabo Flores, 2008; Zeller-Berkman et al., 2015). Evaluators may also break evaluation tasks into smaller components, simplify rating processes, or offer structured prompts to support reasoning and interpretation (Heath & Moreau, 2022a). More generally, strategies such as peer-led discussions, small-group data interpretation, and opportunities to co-facilitate activities can enhance engagement by creating psychologically safe environments and reducing adult–youth power imbalances (Dare & Nowicki, 2019; Gong & Wright, 2007; Heath & Moreau, 2022b; Richards-Schuster & Plachta Elliott, 2019). Some youth-serving organizations also incorporate youth advisory councils to support authentic decision-making roles and shared ownership of evaluation priorities (Havlicek et al., 2018; Roholt et al., 2013).

EE: Structure, Distinctiveness, and Relevance

EE builds on participatory and collaborative traditions while offering a defined, three-step framework—developing a mission, taking stock, and planning for the future—that guides collaborative inquiry and decision-making (Fetterman, 2001, 2015, 2023; Fetterman & Wandersman, 2005). A central feature of EE is the role of the evaluator as a “critical friend”—a facilitator who supports stakeholders’ learning, models evaluative reasoning, and asks clarifying questions while avoiding directive control (Fetterman, 2001; King et al., 2007; Whitmore, 1998). This stance distinguishes EE from approaches such as youth-led evaluation, youth participatory action research (YPAR), or transformative participatory evaluation. These approaches typically give youth complete control over evaluation focus, pacing, and interpretation and often unfold over weeks or months (Langhout & Fernández, 2015; Montrosse-Moorhead et al., 2019; Richards-Schuster & Plachta Elliott, 2019; Sabo Flores, 2008). Whereas YPAR emphasizes critical consciousness and social action, and youth-led evaluation emphasizes youth autonomy, EE's combination of structure and guided facilitation is designed to support collaborative decision-making and capacity-building—particularly for those new to evaluation.

EE varies considerably across settings in structure, duration, and facilitator involvement (Heath & Moreau, 2022a; Miller & Campbell, 2006). Long-term youth-focused projects, such as Langhout's work with elementary-aged youth, offer extended opportunities for relationship-building and iterative inquiry (Langhout & Fernández, 2015), whereas short-format EEs require greater attention to pacing, scaffolding, and developmental readiness.

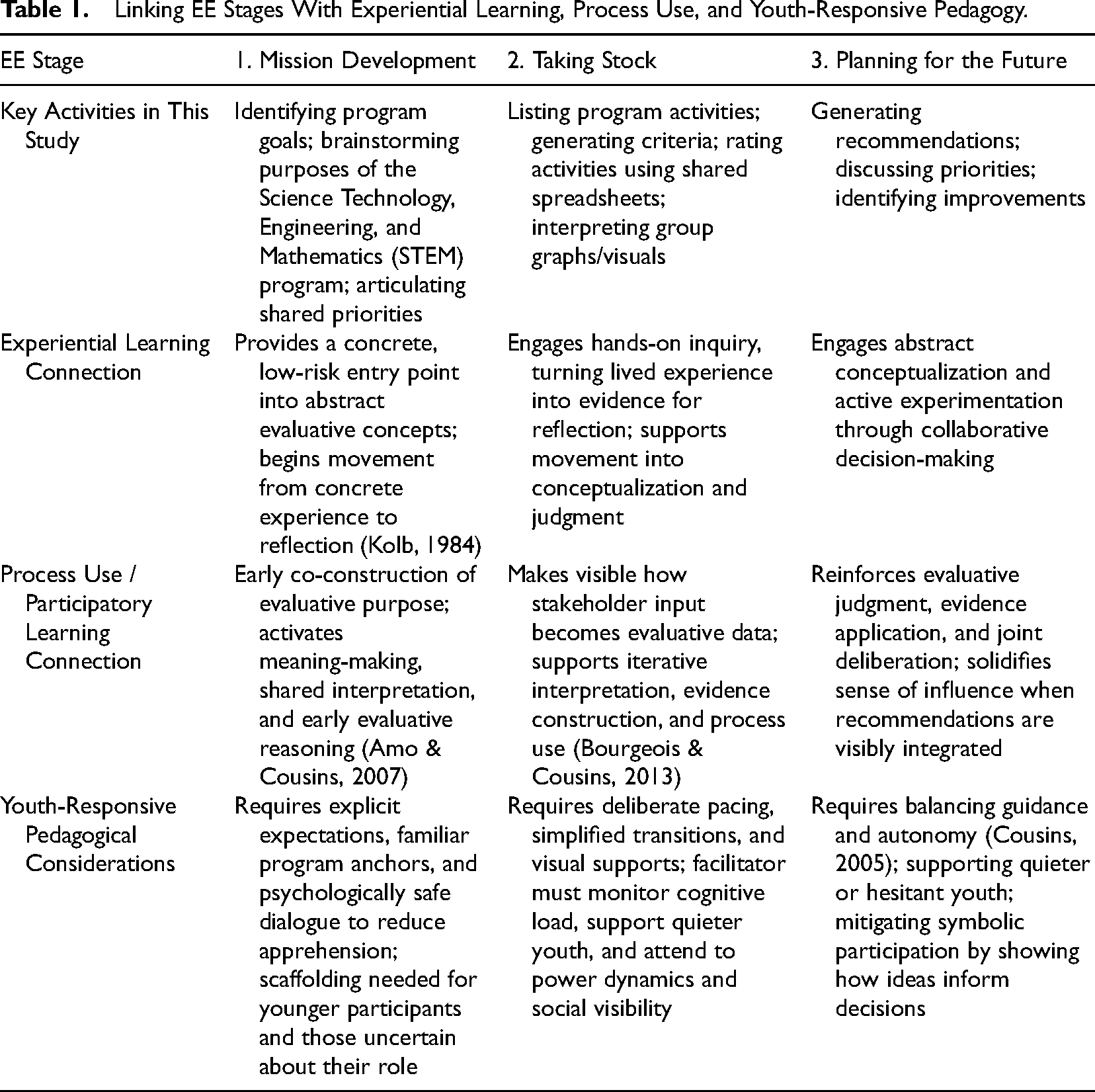

Table 1 presents a conceptual synthesis linking the three EE stages with experiential learning theory, process use, and youth-focused pedagogical considerations.

Table 1. Linking EE Stages With Experiential Learning, Process Use, and Youth-Responsive Pedagogy.

Youth Perspectives on EE as a Teaching Strategy

Although the literature documents numerous benefits of involving youth in evaluation, the evidence base varies in how directly youth perspectives are represented. Some studies draw on youth interviews, focus groups, or surveys to describe changes in skills, confidence, or agency (Jacquez et al., 2013; Samuelson et al., 2013; Zeller-Berkman et al., 2015), whereas others identify youth outcomes primarily from adult accounts, program documentation, or evaluator observations (Checkoway et al., 2003; Chen et al., 2010; Gawler, 2005; Montrosse-Moorhead et al., 2019). In EE specifically, much of the literature reflects the views of adult evaluators or program staff, who describe EE as fostering youth capacity, confidence, and engagement (e.g., Heath & Moreau, 2022a, 2022b). These patterns underscore the need to center youth voices to understand how young people themselves make sense of evaluation participation and learning.

Research on experiential and participatory evaluation further suggests that learning is often not articulated through explicit statements such as “I learned…” but is instead embedded in how participants describe what they did, noticed, struggled with, or came to see differently (Amo & Cousins, 2007; Bourgeois & Cousins, 2013; Patton, 2008; Zeller-Berkman et al., 2015). Youth may signal evaluative learning when they talk about applying criteria, questioning assumptions, weighing different forms of evidence, or revising their views in response to new information, even if they do not label these shifts as “learning.” Attending to these forms of meaning-making is therefore critical for understanding how evaluation functions as a teaching process.

Despite growing interest in EE as a strategy for engaging youth, limited research examines how young people describe their experiences of EE, how they interpret its structured stages, or what they perceive as helping or hindering their understanding in short-format implementations. Questions remain about how youth make sense of mission setting, “taking stock,” and planning for the future; how they experience facilitation and group dynamics; and under what conditions EE feels clear, meaningful, or confusing as a learning context. While longer-term YPAR and youth-led projects offer insight into youth learning within sustained participatory processes, far less is known about what youth can learn about evaluation through condensed EE experiences that reflect the time and resource constraints typical in many youth-serving organizations.

This study responds to these gaps by examining how youth develop their understanding of program evaluation through participation in a single-day EE. Drawing on post-EE interviews, we analyze how young people discussed the EE process, what they said they did and thought at each stage, and how they described moments of clarity, confusion, or disengagement. By focusing on youths’ own accounts of their experiences, this study offers insight into EE's pedagogical potential and limitations as an introductory approach to evaluation education, and into the conditions that shape whether participation becomes a meaningful evaluative learning opportunity.

Methods

This study employed a qualitative design to examine how youth made sense of evaluation through their participation in an EE. The following section describes the program context, evaluation activities, sampling procedures, and data collection and analysis methods.

Program Context

The EE engaged youth in evaluating the STEM educational outreach program they had recently completed. The program, delivered in Fall 2019 through the Faculty of Engineering at a large Canadian university, consisted of six hands-on STEM sessions offered over ten weeks. Each session focused on a different STEM area (e.g., robotics, biomedical engineering, computer programming, environmental engineering) and served students in Grades 7‒12 (approximately ages 12‒17). Younger participants (Grades 7 and 8) received more structured guidance, scaffolded tasks, and step-by-step instruction, whereas older youth (Grades 9‒12) engaged in more open-ended problem-solving and independent design work. Sessions were delivered in university engineering labs and classrooms using materials adapted from undergraduate learning activities. They were facilitated by five core outreach staff members supported by undergraduate engineering students who served as teaching assistants. Staff were responsible for curriculum development, instruction, and supervision, while teaching assistants provided technical support, one-on-one guidance, and help with equipment. Although the outreach team routinely collected attendance data for administrative purposes, the program had not previously undergone a formal evaluation.

The EE

The EE was initiated in collaboration with the Faculty of Engineering's Director of Educational Outreach. The Outreach Office sought an evaluation approach that would engage youth directly in reflecting on their program experiences and identifying opportunities for improvement. The purpose of the EE was to generate insights to support ongoing program refinement, strengthen facilitation practices, and inform future curriculum design. The primary intended users of the evaluation findings were program staff and the Outreach leadership team, who sought information to guide the design of subsequent program cycles.

The EE was facilitated by an external evaluator with approximately 15 years of experience conducting evaluations in community and government settings. Although this was their first application of EE, the facilitator had completed a one-day EE training workshop delivered by David Fetterman, the founder of EE. The facilitator had no relationship with the STEM outreach program before the EE. The EE was initiated after the facilitator approached the Program Director to propose conducting the evaluation as part of this research project.

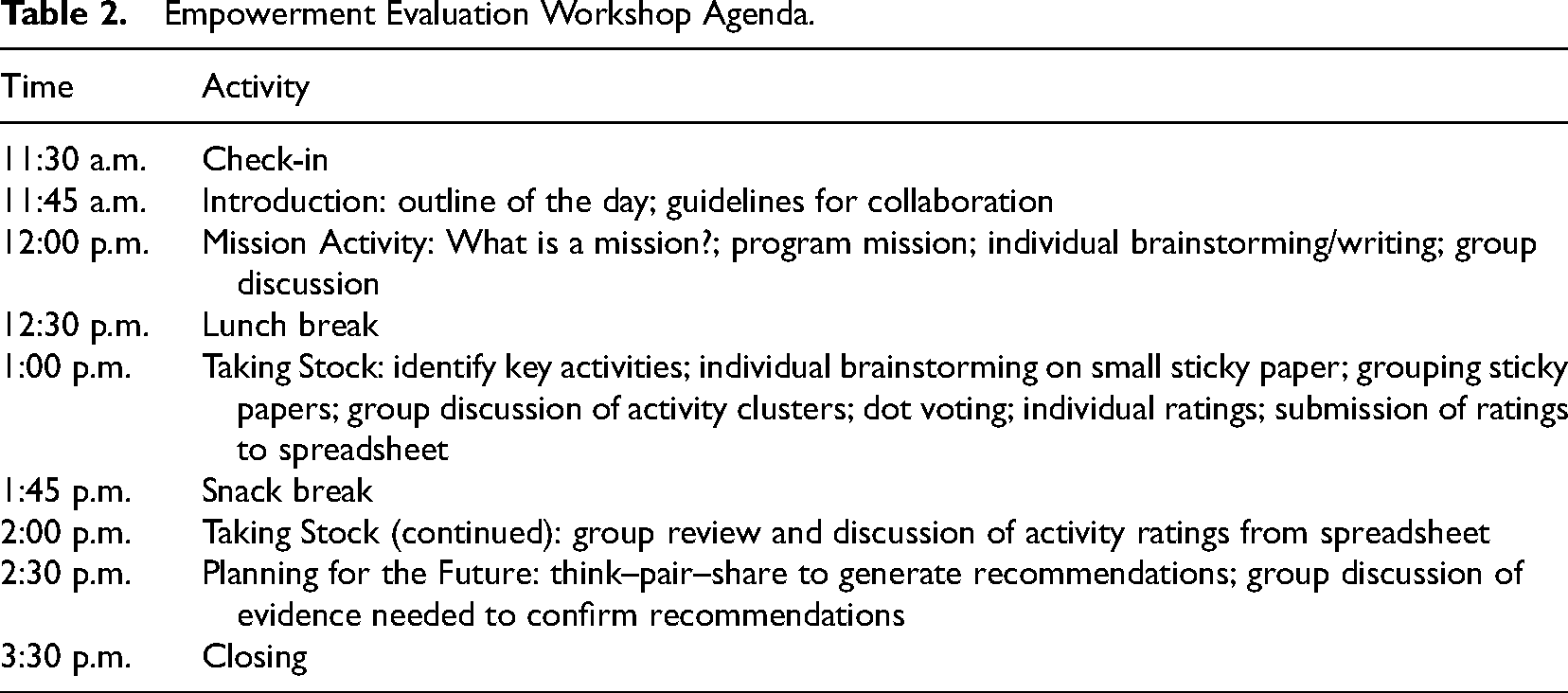

The EE was conducted as a single-day session lasting approximately 4 hours. Table 2 outlines the structured agenda for the session, illustrating how the EE was organized around three core phases of the model and aligned with established EE protocols (Fetterman, 2001, 2015; Fetterman & Wandersman, 2005).

Table 2. Empowerment Evaluation Workshop Agenda.

Youth participated in three core phases of the EE model: mission development, taking stock, and planning for the future. Activities included an initial check-in and introduction, individual and group brainstorming, categorization of program activities, dot voting, rating key activities using a shared spreadsheet, interpreting the group ratings, and generating recommendations for program improvement. Activities were completed in a structured, collaborative format in which youth generated ideas individually and then shared them in group discussions, while the facilitator guided transitions and supported equitable participation.

Although the EE structure and activities were the same for all participants, the external evaluator noted that younger youth (ages 12 and 13) sometimes required additional clarification or prompting during transitions between activities. Following the EE, 14 of the 15 youth participated in individual interviews to share their reflections on the EE experience. These interviews form the basis of the study's findings.

Sampling

To initiate the EE, all 35 youth in the fall program cohort were invited to participate in the EE and follow-up interviews through three channels: (a) an email invitation, (b) a brief 10-min information session presented by the external evaluator during the final STEM program meeting, and (c) printed information letters distributed at that same session. Through purposive sampling, 15 youth were recruited and consented to participate in the EE. A homogeneous purposive sampling strategy was used, following Patton's (2014) framework, to recruit youth who shared a common experience of participating in the same STEM outreach program and the EE. This approach was appropriate given the study's aim of developing an in-depth understanding of a single, bounded group (youth who had shared the same STEM outreach experience and EE) rather than producing statistically generalizable findings. Youth who agreed to participate in the post-EE interviews were selected through a combination of homogeneous purposive and convenience sampling, as interviews were conducted with those who had participated in the EE and were available and willing to take part.

Of the 15 youth who attended the EE, 14 participated in post-EE interviews. The five staff members and their teaching assistants from the STEM outreach program were also invited to participate in the EE and post-EE interviews through the same recruitment methods, but all declined. Although reasons for non-participation were not systematically collected, informal feedback suggested that time constraints and scheduling conflicts were the primary factors.

Interview participants ranged in age from 12 to 15 years and included five girls, one nonbinary individual, and eight boys. Ages of participants were as follows: 12 years old (

Instrument Development

The interview protocols were developed based on a review of EE literature and designed to explore youth experiences, learning outcomes, and perceptions of evaluation following their participation. Separate semi-structured protocols were created for youth and staff (though staff ultimately did not participate). Questions included open-ended prompts and follow-up probes to encourage detailed responses. The protocols were piloted with two colleagues and two youth outside the study to ensure clarity, feasibility, and age-appropriateness. Feedback from the pilot led to refinements in question wording and sequencing (Seidman, 2013).

Data Collection Procedures

Informed consent was obtained in advance, and participants were reminded of their right to withdraw at any time. Interviews were conducted in a private space at the program site, lasted approximately 20‒60 min, and were audio-recorded and transcribed verbatim. To protect confidentiality, pseudonyms were assigned using a combination of registration numbers and ages (e.g., EE1, age 13). To help reduce potential social desirability bias during the post-EE interviews, youth were reminded that their responses would remain confidential and that they were welcome to share both positive and critical reflections about the EE process and the program. Despite these steps, some social desirability bias may remain given the small, self-selected sample.

The study received approval from the University of Ottawa Social Sciences and Humanities Research Ethics Board (Protocol S-05-18-663). In accordance with institutional guidelines for minimal-risk research involving minors, youth aged 12 and 13 provided written assent while their parents provided consent, and youth aged 14 and older provided their own written consent. All participants were informed of the voluntary nature of the study, their right to withdraw at any time without consequence, and the measures taken to ensure confidentiality and anonymity throughout the data collection process.

Data Analysis

Interview transcripts were analyzed thematically using NVivo software and guided by the iterative framework described by Miles et al. (2020). Initial codes were developed inductively from the data and refined through multiple cycles of analysis to identify patterns related to youth experiences and pedagogical outcomes. Consistent with experiential and participatory evaluation research, learning in this study was interpreted through youths’ meaning-making (how they described engagement, insight, confusion, influence, or challenge) rather than through explicit statements of having “learned” particular concepts (Kolb, 1984; Patton, 2008). This interpretive approach aligns with prior work showing that evaluative understanding among novice or youth participants is often embedded in how they make sense of tasks and experiences (Jacquez et al., 2013; Samuelson et al., 2013; Zeller-Berkman et al., 2015). To enhance analytic rigor, the research team maintained detailed analytic memos throughout the coding process to document assumptions, decisions, and emergent themes (Lincoln & Guba, 1985). Thick description was employed to contextualize the findings, and detailed accounts of the EE process are provided to support the transferability of the results (Shenton, 2004).

Findings

In the post-EE interviews, youth described how they developed (or struggled to develop) their understanding of program evaluation through participation in a short-format EE. Their reflections reveal learning across three interconnected areas: (1) what evaluation is and does, (2) how evaluation unfolds as a structured process, and (3) how youth understood their role and influence within an evaluative space. Youth demonstrated evaluative learning not only when they referenced specific concepts but also when they described how they made sense of tasks, interpreted evidence, navigated structure, or positioned themselves relative to peers and facilitators. These meaning-making accounts served as indicators of conceptual, procedural, and relational learning.

Theme 1: Understanding What Evaluation Is and Does Through Engagement, Reflection, and Decision-Making

Evaluation as an improvement-oriented practice

Youth commonly described evaluation as a process focused on strengthening the program—not on assigning blame. Seeing their own experiences used to diagnose problems and generate solutions helped them conceptualize evaluation as constructive and improvement-oriented. One participant explained, “It wasn’t about bashing the program, it was about talking about how we could improve the program and make it even better by expanding what we liked and making better what we didn’t” (EE2, age 14). Many described the EE as an opportunity to “give back to the program” and support future participants. One youth articulated this sense of contribution clearly: “We provided a lot of feedback today that will help to improve the teen club, if they listen and use it to do better and improve the club” (EE9, age 15). Others linked this sense of contribution to confidence: “I liked sharing my opinion when it has the power to improve the program for others” (EE5, age 13).

Understanding how stakeholder experience becomes evidence

Youth learned that their personal experiences constituted legitimate evaluation data. They described seeing their input transformed into visual summaries and shared evidence. One youth stated, “I learned about how in evaluation our opinions about the program get counted and used to help us make decisions, and I know this because I saw it happen right in front of me in the graph that was displayed” (EE3, age 14). This same participant noticed how their words were incorporated throughout the session: the evaluator “summarized [our] words many times throughout the EE” (EE3, age 14). The immediacy of seeing their insights represented and used for group discussion made the evaluative logic tangible, helping youth understand how judgments were formed.

Recognizing limits and dependencies of evidence use

Participation in EE also made visible the boundaries of youth influence in evaluation. While youth saw their contributions converted into evidence, they also expressed concern about whether recommendations would be used: “We talked about a lot, probably too much… Now let's just hope they use them” (EE1, age 13). Others worried that program staff might view youth feedback as impractical or uninformed: suggestions could be dismissed as “just suggestions from people who don’t know what it takes to run a program” (EE7, age 13). Another questioned whether the evaluation would have impact at all: “The only thing that came out of today is a report and what's a report going to do?” (EE6, age 13). These reflections show that youth learned not only evaluation's purpose but also its contingencies—that decisions may depend on adult authority, organizational constraints, and follow-through beyond the evaluation space.

Theme 2: Understanding an Evaluation as a Structured Process Through Sequence, Scaffolding, and Pacing

Structure makes evaluation understandable and doable (and even fun!)

Many youth began the EE anticipating that evaluation would be intimidating or technical. They described evaluation as “very formal,” “serious and boring” (EE6, age 13), or “beyond [their] understanding” (EE2, age 14). Several arrived feeling “nervous and fearful… because [they] didn’t know what evaluation was” (EE2, age 14). The EE sequence (i.e., mission, taking stock, and planning for the future) helped counter these expectations. Repeated movement between individual brainstorming and group discussion throughout the EE stages appeared to build familiarity and competence. One youth reported being “surprised that [they] had so many answers” and “impressed by how [they] could rate activities and come up with ideas of how to improve them” (EE7, age 13). By the end of the session, one participant felt “not scared or intimidated by it anymore because [they] sort of knew what to do” (EE8, age 14), and another remarked that evaluation was “fun” and that they “would do it again since [they] got the hang of it” (EE10, age 14).

When structure overwhelms: Cognitive load and pace

For other youth, the same structure created pressure and fatigue. Several described feeling overwhelmed by the volume and speed of activities: “We answered questions, so many questions and gave suggestions, so many suggestions… We did other things too, but I can’t remember” (EE11, age 14). Another said they felt “dizzy from all the things they did” (EE6, age 13). Some youth reported that the purpose of the EE was “lost on them” because they were “just so focused on following all of the instructions and doing it right” (EE12, age 14). In these cases, scaffolded structure became burdensome rather than supportive, limiting opportunities for reflection and conceptual integration.

When the process outpaces readiness: Watching versus doing

A subset of youth described being able to complete tasks but not fully understanding the evaluative logic behind them. One youth explained, “I didn’t really understand much, so I provided small ideas and sometimes supported others” (EE14, age 12). Another reflected, “I really wasn’t sure what you’re looking for… but I didn’t want to say anything in front of the other kids because they would… think [less] of me” (EE13, age 12). For these participants, the structured sequence outpaced their readiness, and they shifted into observer mode: “I didn’t really know what to do so I just sort of watched and observed” (EE13, age 12).

Peers also recognized this pattern. One older youth noted that some younger participants “get challenged, get frustrated, and then just shut down… I saw the same thing here today” (EE9, age 15). Others observed that some responded with avoidance behaviors—“goofing around, distracting others… criticizing people and shooting down good ideas” (EE10, age 14; EE4, age 15). These accounts illustrate that structure enabled learning only when aligned with youths’ developmental readiness and emotional comfort. When misaligned, youth enacted the steps without integrating their meaning, limiting procedural understanding.

Theme 3: Understanding One's Role and Voice in Evaluation Through Validation, Social Dynamics, and Authentic Participation

Feeling centered rather than “talked at”

Many youth described the EE as a rare setting where their perspectives were central. They contrasted this with contexts where adults lead and youth respond. One participant explained that they “weren’t being talked at” but instead “were in charge of coming up with suggestions” (EE6, age 13). Another noted they “interacted with the evaluator” rather than being lectured to (EE4, age 15). Youth emphasized that the facilitator's role felt supportive rather than directive, with one participant noting that the evaluator “helped us talk through things instead of telling us what to say” (EE3, age 14). This reinforced their sense that youth contributions, rather than adult interpretations, were driving the process.

Collaboration as affirmation of insight and identity

For many youth, collaboration deepened understanding and provided social reinforcement. Sharing ideas with peers reduced pressure: “It was easy to think of ways to make the program better when the pressure isn’t just placed on one person” (EE4, age 15). Talking through experiences helped some youth realize what they had learned and how the program affected them (EE10, age 14). Listening to peers prompted re-evaluation of their own experiences, as one youth noted when realizing others also disliked soldering: “It feels good to hear about how others felt… now I see I wasn’t prepared to do it” (EE8, age 14). For these youth, collaboration affirmed their insider knowledge and enriched meaning-making.

Collaboration as exclusion and social pressure

For others, group work constrained participation. More assertive peers sometimes dominated: “They talked so much… I feel like they controlled the whole process and left others out because they were quiet” (EE1, age 13). Group voting also had downstream effects. When one youth's idea did not prevail early in the process, they felt sidelined for the entire session: “I felt left out for the whole day… like my opinion didn’t matter” (EE1, age 13).

Social visibility also shaped participation. Some youth described feeling monitored by peers and fearing negative judgment: “I felt like people were always around and watching me… so I figured it was better to not say anything at all and just hide” (EE1, age 13). Others felt criticized simply for enjoying social moments: “I felt so judged for enjoying time with my friends” (EE6, age 13). These accounts show that collaboration benefited many youth but simultaneously constrained those who were quieter, younger, or more socially cautious.

Authentic voice, insider knowledge, and safe expression

Youth also learned that their lived experience carried legitimate evaluative authority. One participant emphasized, “I really know what it's like to be there… something the instructors don’t really know because they’re not in our position” (EE8, age 14). Several reported that the EE offered a safer space for honest critique than day-to-day program settings. They worried that voicing concerns to staff would “hurt their feelings” or get them “in trouble” (EE4, age 15; EE6, age 13). In contrast, the EE created space to “say how I really feel… and do it the way I want” (EE8, age 14). This sense of safety helped some youth exercise a more authentic voice—and recognize evaluation as a forum where their insider knowledge mattered.

Misaligned motivations and expectations

Not all youth wanted to be involved in this way. Some participated primarily for social reasons: they “showed up for the food and to spend time with friends” (EE1, age 13) and were less interested in sustained evaluation work. Others described “not having anything to say” (EE6, age 13) or feeling unprepared for “deep heavy discussions and a lot of activities” (EE6, age 13). Some felt a sense of obligation inconsistent with their motivation: “I guess I’m here because my job now is to give back, but if you asked me what I wanted to be doing today, that's not really it” (EE5, age 13). For these youth, misaligned expectations—not conceptual difficulty—limited engagement and constrained learning about their evaluative role.

Summary

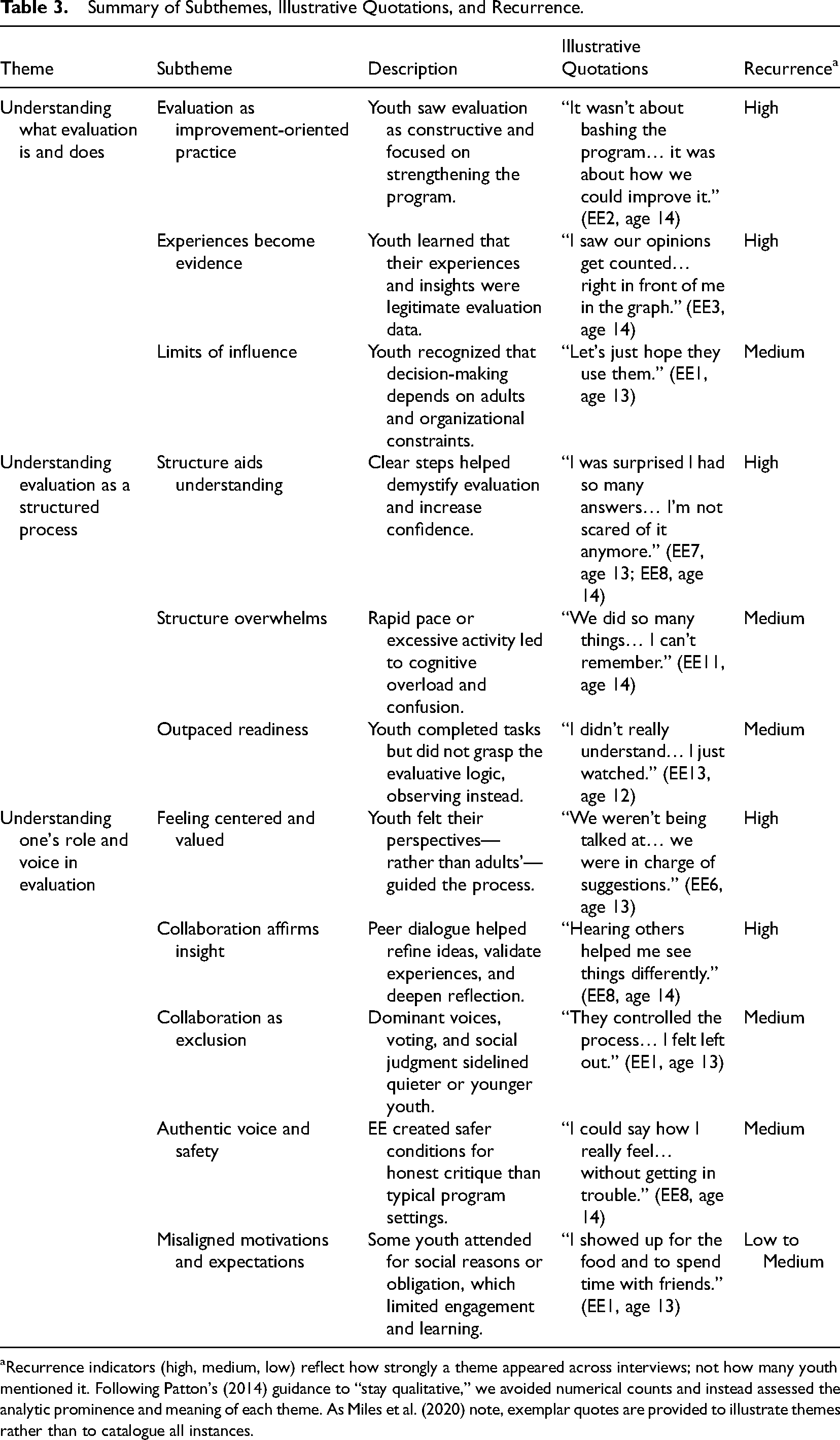

Table 3 summarizes the themes, subthemes, illustrative quotes, and recurrence. Across themes, youth demonstrated emerging understandings of evaluation's purpose, process, and relational dynamics. They learned what evaluation is by engaging in evidence generation and decision-making; they learned how it works by navigating EE's structured stages; and they learned about their role through experiences of voice, validation, exclusion, and social risk. Collectively, these reflections highlight EE's pedagogical potential (and its limitations) for supporting evaluative learning among youth in short-format contexts.

Table 3. Summary of Subthemes, Illustrative Quotations, and Recurrence.

Recurrence indicators (high, medium, low) reflect how strongly a theme appeared across interviews, not how many youth mentioned it. Following Patton's (2014) guidance to “stay qualitative,” we avoided numerical counts and instead assessed the analytic prominence and meaning of each theme. Consistent with Miles et al. (2020), exemplar quotes are provided to illustrate themes rather than to catalogue all instances.

Discussion

This study examined how youth developed their understanding of program evaluation through participation in an EE. By centering youths’ reflections, the study extends scholarship on participatory and collaborative evaluation by illustrating how young people make sense of evaluation's purpose, process, and relational dynamics in a condensed format. The findings demonstrate that EE can facilitate foundational evaluative learning, particularly when structure, facilitation, and pacing align with developmental needs, while also highlighting important limits that shape whether participation becomes meaningful, overwhelming, or unevenly distributed.

Understanding What Evaluation Is Through Engagement and Reflection

The findings reveal that youth developed an initial understanding of what evaluation is and does primarily through engagement in concrete tasks, reflection on evidence, and participation in decision-making, not through abstract explanation. This echoes experiential learning theory (Kolb, 1984), which positions concrete experience and reflective interpretation as the basis for conceptual understanding, and the process use literature (Patton, 1997, 2008), which emphasizes that learning arises through doing rather than studying.

In this study, youth understood evaluation as an improvement-oriented practice when they saw their experiences transformed into evidence and used to guide group judgments. These moments (e.g., seeing graphs update in real time, hearing their words summarized and revisited) represent early forms of evaluative reasoning: recognizing that evidence is constructed, that multiple perspectives shape judgments, and that the purpose of evaluation is to inform program improvement. This often involved an emotional trajectory—from early apprehension about evaluation to increased confidence as youth saw their ideas taken seriously and used in decision-making. Similar patterns are described in youth participatory evaluation, where youth learn through articulating strengths, weaknesses, and solutions based on their lived experience (Jacquez et al., 2013; Langhout & Fernández, 2015; Samuelson et al., 2013).

At the same time, youth recognized the evaluation's practical limitations and dependencies. Their questions about whether staff would use their recommendations reflect an emerging critical awareness of the uneven power dynamics that shape whether evaluation findings translate into action—echoing critiques that stakeholder participation can become symbolic when follow-through is unclear (Checkoway & Richards-Schuster, 2004). Thus, learning about “what evaluation is” also involved learning its constraints: evaluation is an improvement tool, but one mediated by adult authority and organizational decisions beyond the evaluation space.

Understanding Evaluation as a Process Through Structure, Sequencing, and Scaffolding

A second insight is that youth learned evaluation as a process with a recognizable sequence. EE is structured by predictable sequencing (i.e., mission development, taking stock, and planning for the future) that includes repeated cycles of individual brainstorming and group discussions. This design provided a roadmap that helped many youth connect discrete tasks to a broader evaluative logic. This aligns with literature showing that clear phases and predictable sequencing support novice evaluators by reducing ambiguity and making evaluative reasoning more transparent (Heath & Moreau, 2022a; Preskill & Torres, 1999). Youth described moving from apprehension to competence as they engaged in repeated cycles of articulating criteria, rating activities, and generating improvements, reflecting developmental steps toward evaluative judgment (Amo & Cousins, 2007).

Structure provided cognitive scaffolding, but that support had limits. When pacing was rapid, when activities accumulated without time for integration, or when task demands exceeded developmental readiness, structure became a source of cognitive overload rather than support. Youth who shifted into “watching rather than doing” exemplify what happens when structural demands exceed processing capacity. These patterns mirror concerns raised in youth participatory evaluation scholarship that participatory models can overwhelm younger or less experienced participants without deliberate pacing, explanation, and opportunities for synthesis (Samuelson et al., 2013; Zeller-Berkman et al., 2015). They also resonate with distinctions noted in the literature between short-format EE implementations, such as the single-day model used in this study, and longer-term or youth-driven versions of EE and YPAR, which offer extended time for relationship-building, iterative inquiry, and gradual conceptual mastery (Heath & Moreau, 2022b; Langhout & Fernández, 2015).

Understanding One's Role and Voice in Evaluation Through Validation and Group Dynamics

The third theme highlights youths’ learning about the relational and social dimensions of evaluation. Many youth came to understand themselves as legitimate contributors whose experiences constituted meaningful evaluation evidence. The centering of youth perspectives, the visible use of youth-generated input, and the facilitator's stance as a “critical friend” cultivated feelings of influence, authenticity, and empowerment, consistent with participatory and empowerment-oriented evaluation scholarship (Checkoway et al., 2003; Christens, 2019; Fetterman et al., 2018; Zeller-Berkman et al., 2015). For these youth, EE demonstrated that evaluation is not only technical but also relational: a context where their insider knowledge matters and where their interpretations help shape program understanding.

Yet youth also learned about the social risks and asymmetries of participation. Some felt overshadowed by more vocal peers or constrained by social judgment, reflecting well-documented dynamics in youth engagement research (Langhout & Fernández, 2015). Others felt burdened by an implicit responsibility to sustain the evaluation, revealing a tension in EE's participatory ethos: while EE aims to empower, youth may also experience pressure to perform as “good evaluators.” These tensions echo Cousins’ (2005) warning that empowerment-oriented evaluation can blur the boundary between support and control. Even when facilitators adopt a collaborative stance, youth may still interpret expectations to “keep the process going” or to “give the right answers” as subtle forms of pressure. This highlights that facilitation in EE is never neutral, and that learning opportunities depend in part on how youth experience the balance between guidance and autonomy.

These dynamics differ from those reported in longer-term YPAR and Transformative Participatory Evaluation (TPE). In those contexts, extended timelines, deeper relationship-building, and youth-driven agendas often buffer against these pressures by giving young people more time to develop trust, collective identity, and shared norms for equitable participation (Checkoway & Richards-Schuster, 2004; Langhout & Fernández, 2015; Montrosse-Moorhead et al., 2019; Wong et al., 2010). In contrast, the condensed, single-session EE format limits opportunities to renegotiate power or redistribute voice, making youth more vulnerable to the social hierarchies and performance pressures that surfaced in this study.

Several youth described moments when participation felt more performative than educational—speaking, voting, or completing activities without seeing how their ideas shaped the analytic or decision-making process. Such experiences align with longstanding critiques of tokenism in youth evaluation, in which participation becomes symbolic rather than influential (Checkoway & Richards-Schuster, 2004; Christens, 2019; Gong & Wright, 2007). These instances signal a core threat to the empowerment principle underpinning EE: when youth cannot see their perspectives integrated into decisions, the process risks undermining rather than strengthening evaluative learning. These findings underscore that understanding evaluation involves understanding power, voice, and participation. When youth experience validation and authentic influence, EE functions as a relationally empowering teaching tool. When group dynamics constrain contribution, learning is diminished, and the potential equity benefits of EE are compromised.

Cross-Theme Synthesis: Conditions Supporting and Constraining Evaluative Learning

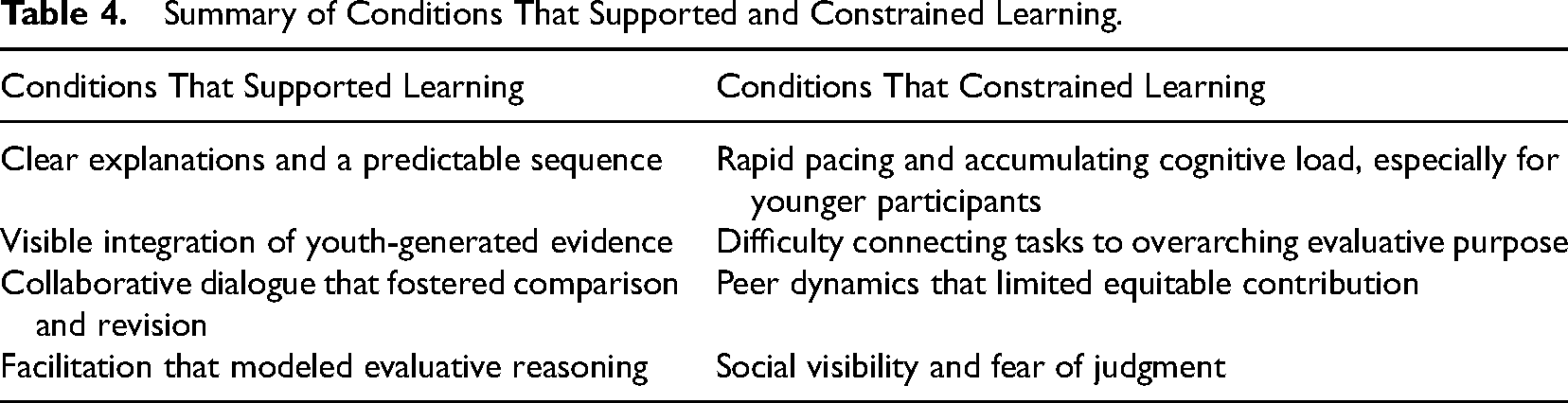

The literature identifies conditions that support evaluative learning, including structure, clarity, facilitation, psychological safety, and opportunities for reflection (Amo & Cousins, 2007; Bourgeois & Cousins, 2013; King, 2007). Table 4 summarizes these conditions and shows that each could enable learning for some youth while constraining it for others.

Table 4. Summary of Conditions That Supported and Constrained Learning.

These patterns reinforce that youth are not a homogeneous group. Their opportunities for evaluative learning varied according to developmental readiness, social position, motivation, and comfort navigating uncertainty. Consistent with the literature, participation alone did not produce learning; rather, learning emerged when structural, cognitive, and relational conditions invited youth into meaning-making.

Practical Implications for Using EE to Teach Youth About Evaluation

Integrating insights from this study with the literature suggests several design considerations for facilitators (e.g., Moreau, 2017). These considerations are intended specifically for evaluators seeking to use EE as an introductory evaluative learning strategy:

- Clarify purpose and expectations early to help youth situate each activity within the broader evaluative sequence.
- Make youth-generated evidence visible (e.g., graphs, summaries) to reinforce how participation shapes interpretation and decision-making.
- Use structured prompts, examples, and criteria to scaffold evaluative judgment, especially for younger or novice participants.
- Provide multiple participation modalities (individual, small-group, whole-group) to reduce social pressure and support quieter youth.
- Build in reflective pauses to support conceptual integration and reduce cognitive overload.
- Normalize uncertainty and invite questions to strengthen psychological safety and reduce fear of error.
- Close the loop by communicating how youth feedback will be used; this is critical for maintaining trust and avoiding tokenism.

These implications suggest that EE's pedagogical potential depends not only on its structure but on developmentally attuned facilitation that supports meaning-making, distributes voice, and creates conditions under which youth can understand both what evaluation is and how they can enact it.

Limitations and Future Research

This study reflects the experiences of youth who participated in a single, short-format EE within a university-based STEM outreach program. The EE's 4-hour, introductory structure necessarily bounded the depth and duration of evaluative learning that youth could experience. As such, the findings should be interpreted within the constraints of brief (single-day), intensive participation rather than in the context of longer-term or iterative evaluation processes typical of sustained EE or YPAR. These differences in duration and intensity limit the extent to which results can be generalized to other EE contexts.

The sample was small, self-selected, and demographically limited. Youth volunteered to participate in the EE and interviews, and may have been more motivated, reflective, or verbally comfortable than peers who declined. Additionally, aside from age and gender, demographic data were not collected due to minimal-risk ethical requirements. This limits the ability to examine how factors such as race, socioeconomic context, disability, or linguistic background shaped youths’ experiences or meaning-making. Information about youth who did not participate in the EE or interviews was also not available, constraining interpretation of potential differences between participants and non-participants.

Another limitation relates to the nature of EE practice. EE is implemented in highly diverse ways across settings, varying in facilitator involvement, adherence to the three-step model, group size, developmental readiness of participants, and intended outcomes (Heath & Moreau, 2022a; Miller & Campbell, 2006). The present study captures only one version of EE tailored to a youth audience and delivered by an evaluator relatively new to the model. Different facilitation styles or levels of evaluator involvement might produce different learning experiences, particularly given the study's emphasis on facilitation as a condition shaping youth meaning-making. This variability underscores that EE's pedagogical effects are not inherent to the model itself but are contingent on how it is enacted.

Data were drawn from post-EE interviews, which rely on self-report and may be influenced by recall limitations or social desirability bias. Although steps were taken to reduce these risks, such as conducting interviews in private spaces and reminding youth of confidentiality, some youth may have been reluctant to express criticism or uncertainty.

Despite these limitations, the study contributes insight into how youth understand evaluation through short-format EE and highlights pedagogical considerations that may be transferable to other youth-focused evaluation contexts. Future research should explore EE across more diverse youth populations, longer-term or multi-session versions of EE, and facilitation approaches designed specifically to support developmental differences. It may also be valuable to examine how early EE experiences shape youths’ participation in longer-term or intergenerational evaluation teams, where iterative cycles of inquiry and shared decision-making could build on the initial evaluative competencies surfaced in short-format settings. Comparative studies could contrast EE with other strategies for teaching evaluation, while longitudinal designs could explore whether early EE experiences influence youths’ longer-term engagement with evaluation, decision-making, or critical thinking.

Conclusion

This study examined how youth developed their understanding of program evaluation through participation in a short-format EE. By foregrounding youths’ reflections, the findings demonstrate how structured, experiential engagement can support early evaluative learning. Youth came to see how evidence is constructed, how judgments are made, and how their perspectives shape decisions. At the same time, the results show that participation alone is not sufficient. When facilitation lacked developmental responsiveness, clarity, or inclusive practices, some youth experienced confusion, social pressure, or disengagement. EE therefore holds promise as an introductory evaluation teaching strategy when implemented with intentional scaffolding, attention to psychological safety, and support for equitable participation. Under these conditions, youth can move from uncertainty toward evaluative competence and begin to see themselves not only as contributors but as emerging evaluators.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.