Abstract

This research identifies a surprising downside to using crowdsourcing to generate new product ideas: participants who do not win an idea generation contest temporarily disengage from the contest-hosting brand. When people lose a crowdsourcing contest, the experience of losing negatively affects the participants’ word-of-mouth and short-term purchase behaviors. Reframing the contest as a community activity (e.g., “Join the crowd and help us find a name for our new restaurant”) rather than a competition (e.g., “Compete with the crowd to be the one who names our new restaurant”) is found to positively affect a losing customer's subsequent engagement with the contest-hosting brand. Community framing shifts attention away from losing the contest (i.e., it reduces negative affect) and toward collectively creating a superior outcome (i.e., it increases one's perceived contribution), without changing the nature of the contest itself (i.e., participants continue to submit ideas). Community framing positively affects subsequent participant engagement, but it does not influence the effort the participant invests in the contest or the quality of the idea the participant submits. The evidence consists of lab experiments, field experiments, and a large-scale field study that measured actual purchase behavior.

Crowdsourcing is a promising approach for identifying new product ideas. In a typical crowdsourcing campaign, idea generation is outsourced to a “crowd” of anonymous participants (Afuah and Tucci 2012; Bayus 2013; Howe 2006). Campaign managers strive to maximize the size of the crowd, as quantity breeds quality (the more participants who submit ideas, the higher the likelihood that a few good ideas will emerge; Camacho et al. 2019; Nishikawa et al. 2017; Terwiesch and Ulrich 2009). One strategy for increasing the number of participants is to frame a crowdsourcing activity as a competition, wherein the winning idea is recognized and rewarded. For example, the Oreo “MyOreoCreation” campaign (2017) awarded $500,000 to the winning flavor; the Lay's “Do Us A Flavor” campaign (2017) awarded $1 million to winner Ellen Sarem; and the Doritos “Crash the Super Bowl” commercials and jingle contests (2006–2016) awarded from $400,000 to $1 million in cash or prizes, plus recognition for the winners. Further, crowdsourcing can be managerially impactful, as evidenced by the more than 200 customer-generated ideas implemented by Starbucks, and market engaging, as evidenced by the 3.8 million submissions to the Lay's “Do Us A Flavor” campaign.

Indeed, many consumer goods firms have identified successful innovations by tapping into the creative potential of their user communities. It is here where an unresolved tension exists: those who participate in a crowdsourcing contest are not only ideators and innovators but also the firm's current and, importantly, future customers. Further, a crowdsourcing contest has one winner (or a handful of winners) and many, many losers. While the winners will feel flattered, it is unclear how the losers will react. Are there any unintended consequences for the sponsoring firm when customers lose a crowdsourcing contest? To our surprise, we found no answer to this question in the literature. This is particularly notable given the strategic importance of crowdsourcing activities, not only with regard to idea generation but also from a more general business perspective. That is, crowdsourcing participants tend to be customers of the firm, demonstrate higher levels of involvement and identification with the firm, and are often recruited from the company's fan base via social media channels (Djelassi and Decoopman 2013; Franke et al. 2013; Lafferty 2012; Porter and Donthu 2008).

Against this backdrop, we set out to study how losing a crowdsourcing contest influences the crowdsourcing participants’ subsequent engagement with the sponsoring brand. Following Pansari and Kumar's (2016) conceptualization of customer engagement, we focus on word-of-mouth and purchase behaviors, reflecting both indirect and direct contributions by customers to the firm. We anticipate two possible outcomes: losing the crowdsourcing contest could (1) increase customer engagement, or (2) decrease customer engagement. First, the ancillary effects of participating in a crowdsourcing contest could be positive, even among those who do not win. As put by an industry expert: “If the end goal is to get people to engage with the brand, then crowdsourcing is the way to do it” (Heitner 2013). Second, the ancillary effects of participating in a crowdsourcing contest could be negative among participants who do not win, especially in the short term. Learning that one has not won a contest can be emotionally frustrating and disappointing despite the objectively low a priori odds of winning the contest. We find evidence for the second outcome.

We further theorize that reframing the crowdsourcing contest may help address this problem. In particular, we argue that stressing the collective “We” rather than the individual “Me” in a crowdsourcing contest will shift attention away from a single winning idea (i.e., a tribute to one participant) and toward the collective contribution of all participants (i.e., an acknowledgment of everybody's effort). Hence, framing a crowdsourcing initiative as a shared community activity (for example, “Join the Crowd and help us find a name for our new restaurant”) rather than as a competition (in this context, “Compete with the Crowd to be the one who names our new restaurant”) may positively affect customer engagement following crowdsourcing participation by reducing the negative affect associated with not seeing one's idea win and by increasing one's perceived contribution to the final outcome. Furthermore, our theory implies that the relative advantage of a community framing increases with the effort participants invest into the crowdsourcing, making the focal intervention particularly promising for situations in which crowdsourcing requires more (vs. less) effort among participants. We note that community framing, wherein each participant contributes an idea with the hope that it will be beneficial to the firm, is different from cooperative framing, wherein participants work together to create a single idea. Cooperative framing is not investigated in this article.

Background and Theory

Crowdsourcing as an Innovation Tool

Most of the existing empirical research on crowdsourcing has been conducted through an innovation lens. In particular, researchers have looked at whether, and under what conditions, crowdsourcing may yield innovative new product ideas and, in turn, commercially attractive new market offerings. For example, Poetz and Schreier (2012) compared the properties of crowdsourced new product ideas with those generated by a firm's in-house designers. They found that crowdsourced ideas scored significantly better than in-house ideas in terms of novelty and customer benefits, but lower in terms of feasibility (for similar findings, see Kristensson et al. 2004; Magnusson et al. 2003). A case study conducted in cooperation with the Japanese consumer goods brand Muji compared the sales performance of new products based on crowdsourced versus designer-generated ideas (Nishikawa et al. 2013). The surprising finding, with regard to effect size, was that three years after market introduction, crowdsourced new products performed on average five times better in terms of sales and six times better in terms of margin. Further, the better the quality of the raw crowdsourced idea, the better the market performance of the resulting new product (Kornish and Ulrich 2014). Finally, Allen, Chandrasekaran, and Basuroy (2018) studied how crowdsourcing the final design of a specific new product idea incrementally affected new product performance. They found that crowdsourcing the design positively affected sales, particularly for originally mediocre ideas, because the crowd improved the product's reliability and usability.

More closely related to our research questions, researchers have also asked what firms should do in terms of providing postcompetition feedback to participants. Piezunka and Dahlander (2019) find that many organizations do not provide any direct feedback to nonwinners (i.e., the overwhelming majority of participants); however, they also find that providing feedback increases the likelihood that losers will submit ideas in future contests. Similarly, Fombelle, Bone, and Lemon (2016) use a field study to show that future idea sharing is significantly higher among nonwinners who receive a noncommittal thank-you note as compared with no feedback. Finally, case studies presented by Djelassi and Decoopman (2013) and Hanine and Steils (2019) suggest that many firms overlook the critical role of providing feedback to contest participants. The authors advocate using a symbolic gift (e.g., small discount) to recognize each individual's effort and to “leave participants less frustrated, even those who did not win the contest” (Hanine and Steils 2019, p. 8).

The Causal Consequences of Participation in Crowdsourcing

At first glance, there are several reasons to predict a positive effect of participation in a crowdsourcing contest on participants’ subsequent engagement with the brand (i.e., purchase and word of mouth; Pansari and Kumar 2016), regardless of the outcome (i.e., win or lose). First, crowdsourcing should encourage customer engagement because the sponsoring brand signals it is customer-centric and has a genuine interest in customers’ ideas (Borle et al. 2007; Fuchs and Schreier 2011; Hoyer et al. 2010). Participants, in turn, should feel psychologically empowered and, hence, value the firm more strongly (Allen et al. 2016; Dahl et al. 2015; Fuchs et al. 2010; Liu and Gal 2011; Paharia and Swaminathan 2019). Second, industry surveys indicate that customers value a firm more strongly if it actively seeks their feedback and solicits their insights (Sullivan 2012). Third, experts anticipate a positive effect. We asked 30 participants (i.e., faculty and PhD students) in an academic workshop on innovation and consumer behavior to predict how participating in a crowdsourcing contest would influence a customer's subsequent engagement with the brand. Most participants predicted a positive effect (positive: 22/30; negative: 4/30; none: 4/30).

There are also reasons to predict a negative effect of participation in a crowdsourcing contest on participants’ subsequent engagement with the brand, especially when the participant does not win the contest. Learning about personal failure can be emotionally frustrating and disappointing despite the objectively low a priori odds of winning (Blackhart et al. 2009; DeWall and Bushman 2011; Molden et al. 2009). Instead of basking in a sea of glory, a participant faces the reality that the firm has rejected their idea because it was not good enough (Finkelstein and Fishbach 2012). We know from prior research that people fall in love with the fruits of their labor, even if that labor does not take much time, and that people are particularly proud of their ideas (Marsh et al. 2018; Norton et al. 2012). It is also established that people forge psychologically strong bonds between their sense of self and the things they create with their hands or thoughts (Franke et al. 2010; Pierce et al. 2003). Hence, negative feedback about one's creative work is often taken personally (Carver and Scheier 1998; Locke and Latham 1990).

We propose that having one's idea rejected will result in a negative emotional reaction (e.g., disappointment, rejection) that is attributed to the contest-hosting brand (Blackhart et al. 2009; DeWall and Bushman 2011; Finkelstein and Fishbach 2012; Molden et al. 2009). When a contestant loses, the failure is typically attributed to an external source (e.g., the firm did not appropriately value the idea) rather than an internal source (e.g., my idea was poor) (Bendapudi and Leone 2003; Feather 1969). Applied to crowdsourcing, we hypothesize that participants who do not win a crowdsourcing contest will temporarily disengage from the sponsoring brand owing to negative affect associated with losing (i.e., losing a contest → negative emotional reaction toward sponsor → temporary disengagement from sponsoring brand). This hypothesis is consistent with evidence that consumers disengage from a brand when the brand violates relationship norms (Aaker, Fournier, and Brasil 2004), fails to reciprocate (Palmer and Bejou 1994), or engages in disappointing behavior (Burgess and Jones 2021). Note that our theory centers on customers’ immediate (vs. long-term) response, given that the effect's underlying mechanism is emotional. Specifically, participants’ desire to psychologically disengage and distance themselves from the contest-hosting brand shall be particularly pronounced when the related feelings of disappointment, rejection, and exclusion are salient (i.e., immediately after having learned about the outcome). More formally, we predict:

The influence of losing a crowdsourcing contest on long-term engagement with the sponsoring brand will depend on many factors. For example, consider a customer participating in a crowdsourcing contest hosted by a local café. If the café has no direct competitor, and the customer passes by the café every day, craving coffee, the immediate negative effect is likely to dissipate with time. Beyond such pragmatic reasons, there are also theoretical considerations related to short- versus long-term effects. When brands engage in transgressions, we know that consumers tend to temporarily disengage from the brand (Aaker, Fournier, and Brasil 2004; Khamitov, Grégoire, and Suri 2020; Trump 2014). Yet, over time, consumers who have a relationship with the brand (and are motivated to keep that relationship intact) may forgive the brand and reengage (Fetscherin and Sampedro 2019; Tsarenko, Strizhakova, and Otnes 2019).

Framing Crowdsourcing Differently: Crowdsourcing as a Community Activity

If losing a crowdsourcing contest temporarily reduces engagement with the brand, as predicted in H1a, is there anything firms can do to mitigate these unintended consequences? We suggest that an actionable solution is to change the framing of the crowdsourcing contest. As we have indicated, crowdsourcing contests are typically framed as a competition that only one participant, or a handful of participants, can win. This framing means almost all participants identify as losers. Thus, our recommended strategy is to make losers feel like winners. In particular, we propose, as noted previously, that stressing the collective “We” rather than the individual “Me” in a crowdsourcing contest will shift attention away from a customer's own idea (i.e., the individual goal “to win”) toward the collective outcome of the campaign (i.e., the shared goal “to help the firm find an appealing idea”). Reframing a performance goal from an individual goal to a shared goal, so that the participant feels a kinship with everyone else working toward the same goal, lessens the negative impact of losing in sports (Raabe, Zakrajsek, and Readdy 2016). Similarly, research on crowdfunding suggests that cofinancing the development of a venture with one's individual monetary contribution (vs. merely buying the same product in a classic market exchange setting) increases participants’ sense of community and, as a consequence, one's feeling of having made a meaningful contribution (e.g., I can help “bring creative projects to life” [Bitterl and Schreier 2018, p. 674]). Thus, a crowdsourcing contest might be framed like crowdfunding, wherein one's individual contribution helps achieve a higher-level goal (i.e., a new product), thereby reducing any negative affect associated with not seeing one's individual idea win and by increasing one's perceived contribution to the final outcome. More formally, we predict:

It is important to recognize that framing a crowdsourcing contest as a community activity does not change the nature of the contest itself (i.e., participants continue to submit their individual ideas). Furthermore, community framing will not influence the effort the participant invests in the contest (and, consequently, the quality of the idea the participant submits). However, we reason that the relative advantage of a community framing (H2a) might increase with the effort participants decide to invest in the crowdsourcing. First, in the case of a competitively framed crowdsourcing contest, the more effort invested, the more negative losing participants’ emotional reaction toward the sponsoring brand may be. Second, and relatedly, effort might have positive effects in the context of a community framing because the joint outcome is more likely to be attributed to one's invested effort. Thus, when more (vs. less) effort is invested in a community-framed crowdsourcing contest, the affective reaction of participants might be more positive and their perception of having contributed to the final outcome more pronounced. This line of reasoning is consistent with research on self-customization, which suggests that perceived effort is often interpreted ex post on the basis of the outcome. In particular, Franke and Schreier (2010) find that a consumer’s perceived effort invested in the self-customization of a scarf design is interpreted positively (negatively) when they are satisfied (not satisfied) with the outcome. More formally, we predict:

Empirical Approach and Overview of Studies

One possible reason why there is no systematic research on the consequences of participating in a crowdsourcing contest for subsequent customer engagement is that the question is difficult to study. If we compared customers who participated in a firm's crowdsourcing contest with those who did not, the analysis would be confounded owing to a selection bias: participants and nonparticipants would not be equivalent on individual difference variables. Put differently, self-selection bias is a threat to internal validity. Similarly, a difference-in-differences approach is ineffective for testing H1a because the common trends assumption is violated (Lee and Kang 2006). The selection bias could create different preintervention trends or during-intervention interactions.

We addressed this concern by conducting a series of field experiments in which we randomly assigned study participants to experimental conditions, thereby reducing concerns about self-selection bias. Study 1a provides evidence for H1a: participation in a crowdsourcing contest temporarily reduces losing customers’ engagement with the sponsoring brand. Study 1b is a controlled lab experiment that sheds light on the underlying process and provides evidence that the engagement effect is indeed related to the participant losing (vs. winning) the crowdsourcing contest, while framing the contest as a community activity attenuates the negative effect (H1b and H2b). Studies 2a and 2b are field experiments showing that framing a crowdsourcing contest as a community activity mitigates the negative consequences of losing the contest (H2a). Study 2b also shows that the impact of competitive versus community framing increases as participants invest more effort in the crowdsourcing task (H3). Finally, Study 3 shows that the community framing effect emerges in a field study measuring actual spending with the firm (H2a).

Note that the tests of the hypotheses are conservative. Participants received a payment for their participation in Studies 1a, 2a, and 2b, which should limit the negative emotional response from not winning the contest (i.e., the tests of H1a are conservative). Further, all field studies provided the participants simple thank-you notes as a symbolic recognition of their effort (Fombelle et al. 2016; Hanine and Steils 2019; Piezunka and Dahlander 2019). Finally, in Studies 1a and 3, the sponsoring brand gave a small gift to all participants. Although a gift should mitigate any negative effects of participation in crowdsourcing, and thus went against H1a, the gift increased the ecological validity of the procedure (Hanine and Steils 2019).

Study 1a: In Search of a New Breakfast Bundle

Study 1a tested the prediction that participation in a crowdsourcing contest will negatively affect a losing customer's subsequent engagement with the contest-hosting brand (H1a). We tested this prediction in a field experiment in which participants were randomly assigned to a real-life crowdsourcing contest or one of two control conditions. The contest was hosted by an established on-campus café that wanted to identify an innovative take-away breakfast bundle for its soon-to-be-opened off-campus café. Treatment group customers participated in the contest, received feedback on the contest winner, and got a 50% off coupon for a coffee. Control group customers did not participate in the contest but also received a 50% off coupon for a coffee.

The coupon, which served as a small thank-you gift for all study participants, had a unique ID that allowed us to track each participant's experimental condition. Participants’ short-term customer engagement (H1a) (e.g., redemption behavior within 24 hours) served as a first dependent variable. Because we could track redemption behavior for a longer period of time (i.e., 30 days), a second dependent variable is the time lag between receiving and redeeming the coupon, the prediction being that customers in the treatment group (crowdsourcing participants whose ideas were not selected) would be slower to redeem their coupon. This latter measure is based on the idea that losing a crowdsourcing competition is similar to experiencing a brand transgression. A common response to a brand transgression is to temporarily disengage from the brand (Aaker et al. 2004; Khamitov et al. 2020; Trump 2014).

Method

The study used a three-cell between-subject design with (1) a treatment condition (participate in crowdsourcing contest and lose) and (2) two control conditions: aware of the crowdsourcing contest but do not participate, or unaware of the contest and thus do not participate. Two hundred twenty-one students from WU Vienna (Vienna University of Economics and Business) (Mage = 22.32 years; 60.2% female, 38.5% male, .3% gender not stated) participated in a series of studies conducted at two points in time. To minimize attrition, participants received either course credit or a small amount of money (€5) after the time 1 session and a larger amount of money (€10) after the time 2 session.

At time 1, 221 participants were invited to take part in a market research study conducted for an actual soon-to-open off-campus location of an on-campus café. Participants in all conditions learned about the café's new off-campus branch and completed a short survey that was designed to support the cover story. Next, participants were randomly assigned to one of three conditions. In the treatment condition, participants were told about a crowdsourcing contest to identify innovative “grab & go” breakfasts for the off-campus location. Participants received an information leaflet with the headline: “Compete with your ideas for the best ‘grab & go' student breakfast” (see Web Appendix A). The contest itself and rules for submitting an idea were explained, and participants submitted an idea. Finally, participants were informed that the second part of the study would occur in four weeks. At this time, they would learn about the results of the crowdsourcing competition and more information about the off-campus café. Participants in the two control conditions did not receive any information about the crowdsourcing competition. Instead, they were informed that the second part of the study would occur in four weeks, using the same language as the treatment group sans reference to the crowdsourcing competition.

Four weeks later (time 2), 218 participants were invited to the second part of the study (three participants were not invited to return because they did not follow instructions at time 1), out of which 186 participants (84.2%) returned to the lab. Importantly, we did not identify any condition-specific attrition (χ2(1, N = 218) = .68, p = .405). The lab session began with a discussion of the new “grab & go” breakfast bundles. Participants in the treatment condition were reminded about the crowdsourcing competition they had participated in. Participants in the first control condition were made aware of the crowdsourcing contest and that the new “grab & go” breakfast bundles were a consequence of this contest. Participants in the second control condition did not receive any crowdsource-related information; they only learned about the new “grab & go” breakfast bundles. Thus, the first control condition emphasized internal validity (the only difference from the treatment condition was the lack of participation in the contest), and the second control condition emphasized external validity (in reality, many customers were not aware of the crowdsourcing contest). We do not predict a difference between the control conditions but felt it prudent to include both.

Next, participants in the treatment group and first control group were informed of the winning ideas: “Juggle it!” (small balls made out of fresh dough with different fillings, served with fresh juice) and “Good Morning Waffle!” (soft Belgian waffles in a cone pocket, served with fresh coffee). Then, participants in all conditions were asked a few market research questions and subsequently thanked for their valuable input on behalf of the café. Finally, all participants were given coupons that were redeemable within the next 30 days. In particular, they received a coupon for a cup of coffee (50% off price promotion) at the on-campus café as well as coupons for the off-campus café. Due to operational issues at the off-campus café (i.e., not being open during the majority of the experiment), we could only track redemption behavior at the on-campus café.

Unbeknown to participants, each coupon had a unique ID that allowed us to track the participant's experimental condition and date of time 2 participation. We collected the redeemed coupons on each day of the 30-day redemption period. Thus, as noted previously, we could calculate participants’ short-term customer engagement (e.g., redemption behavior within 24 hours) as well as the time lag between receiving and redeeming the coupon.

Results

Data preparation

One participant was assigned to a wrong condition at time 2 and therefore excluded from the analysis. The three winners of the competition (two participants came up with the same breakfast waffles idea) were also excluded from the analysis. The final sample size was 182. The redemption rates did not vary for the two control conditions for (1) the first 24 hours, (2) the first ten days, (3) the second ten days, and (4) the third ten days (all ps > .25); hence, the two control conditions were collapsed.

Redemption rates and time to redemption—model free

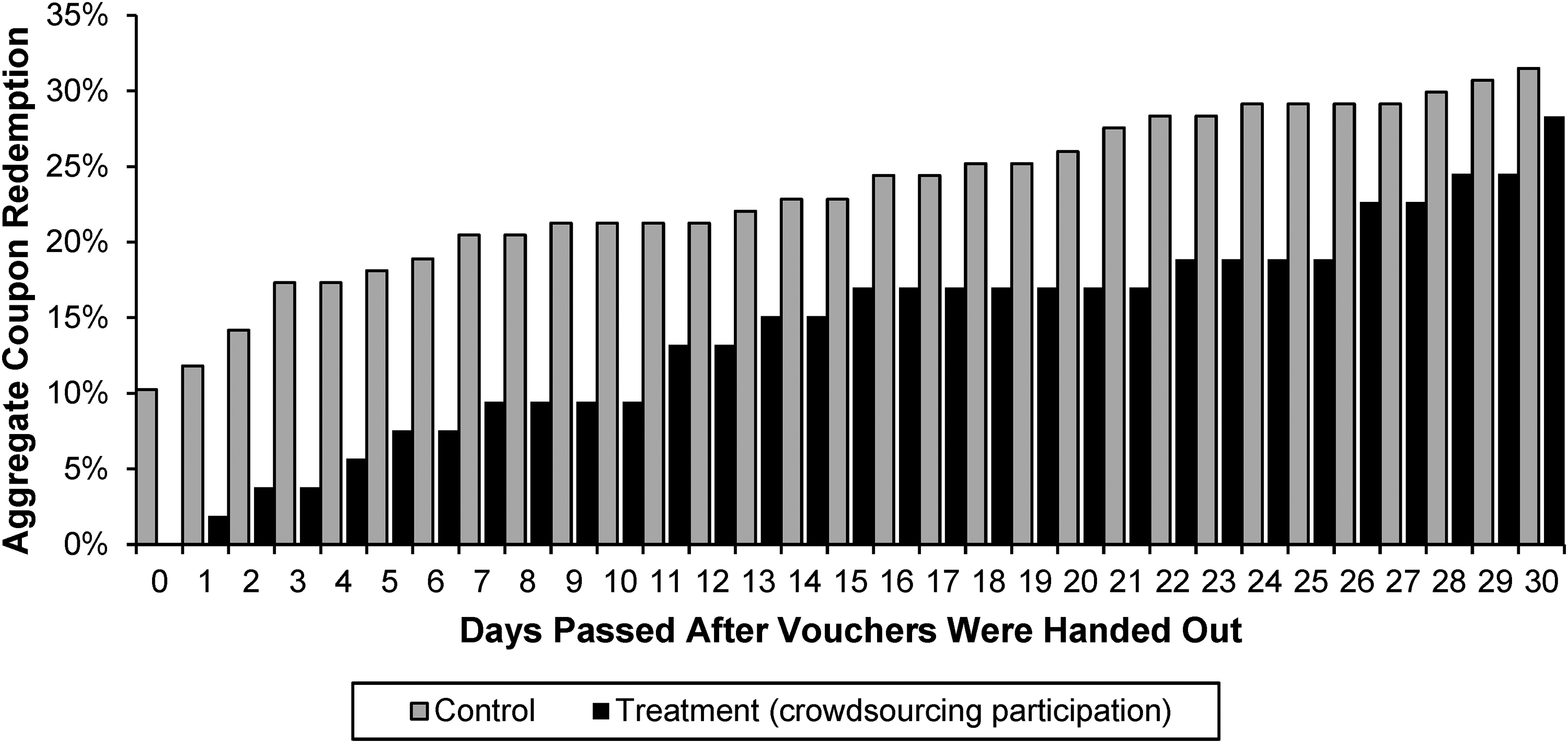

Figure 1 shows cumulative redemption rates by day. Consistent with our focal hypothesis (H1a), we find that crowdsourcing participants who lost were less likely to redeem the coupon in the first 24 hours (Ptreatment = .02 vs. Pcontrol = .12; χ2(1, N = 180) = 4.55, p = .033, φ = .16) and the first ten days (Ptreatment = .09 vs. Pcontrol = .21; χ2(1, N = 180) = 3.58, p = .059, φ = .14). The effect size was large: while only 2% of treatment group customers redeemed their coffee voucher within the first day, this percentage was six times larger in the control conditions (12%). As seen in Figure 1, however, the treatment effect decreased over time (and is insignificant in aggregate after 30 days: Ptreatment = .28 vs. Pcontrol = .32; χ2(1, N = 180) = .18, p = .672, φ = .03. This pattern of effects is, thus, in line with our hypothesis (H1a) that a customer's disengagement is particularly pronounced immediately after learning about the contest outcome.

Cumulative Redemption Rates by Condition (Study 1a).
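The redemption-rate comparisons above are 2 × 2 chi-square tests with φ as the effect size. The following minimal sketch shows how such a test can be computed. The cell counts are hypothetical and chosen only for illustration; they are not the study's data, and the function name `chi_square_2x2` is our own.

```python
import math


def chi_square_2x2(a, b, c, d):
    """Pearson chi-square (no continuity correction) for the table [[a, b], [c, d]].

    Rows are conditions, columns are redeemed / not redeemed.
    Returns the chi-square statistic, its p-value (1 df), and phi.
    """
    n = a + b + c + d
    chi2 = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    # Upper-tail probability of a chi-square variable with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(chi2 / 2.0))
    phi = math.sqrt(chi2 / n)
    return chi2, p_value, phi


# Hypothetical counts (assumption, not the reported data):
# treatment: 1 redeemed, 57 did not; control: 15 redeemed, 107 did not.
chi2, p_value, phi = chi_square_2x2(1, 57, 15, 107)
print(f"chi2(1) = {chi2:.2f}, p = {p_value:.3f}, phi = {phi:.2f}")
```

Because φ equals the square root of χ2 divided by N, the same statistic yields a smaller effect size in a larger sample, which is why both values are reported together.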

Time to redemption—hurdle model

We used a hurdle model to more formally assess whether the average person in the treatment condition indeed took longer to redeem a coupon, given that a redemption was made (Loeys et al. 2012). A hurdle model is a two-part model: a logit model on the act of redemption and a geometric distribution model on the time to redeem. The geometric distribution is appropriate for the dependent measure: the count of days until coupon redemption (Zeileis et al. 2008). In support of our theory, the hurdle model shows that participants in the treatment condition waited almost twice as long (M = 18.87 days) to redeem their coupon as participants in the control conditions (M = 10.53 days; b = .650, SE = .315, z = 2.07, p = .039, Cohen's d = .86).
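To make the two-part structure concrete, the sketch below fits a simplified hurdle model separately for each condition. It assumes that the hurdle is whether a coupon was redeemed at all and that, among redeemers, the day of redemption follows a geometric distribution on 1, 2, 3, and so on. With a single binary predictor and no covariates, both parts have closed-form maximum-likelihood estimates. The data, function names, and this simplified parameterization are illustrative assumptions; they do not reproduce the estimation reported above.

```python
import math


def fit_group(days_to_redeem, n_total):
    """Closed-form maximum-likelihood fit of the two-part model for one group."""
    n_redeemed = len(days_to_redeem)
    p_redeem = n_redeemed / n_total              # hurdle part: P(coupon redeemed)
    mean_days = sum(days_to_redeem) / n_redeemed
    p_geom = 1.0 / mean_days                     # geometric on 1, 2, ...: MLE is 1 / mean
    return {"p_redeem": p_redeem, "mean_days": mean_days, "p_geom": p_geom}


def log_likelihood(p_redeem, p_geom, days_to_redeem, n_total):
    """Log-likelihood: Bernoulli hurdle plus geometric timing among redeemers."""
    n_redeemed = len(days_to_redeem)
    hurdle = (n_redeemed * math.log(p_redeem)
              + (n_total - n_redeemed) * math.log(1.0 - p_redeem))
    timing = sum(math.log(p_geom) + (k - 1) * math.log(1.0 - p_geom)
                 for k in days_to_redeem)
    return hurdle + timing


def logit(p):
    return math.log(p / (1.0 - p))


# Hypothetical data (assumption): redemption day for each redeemer, plus group size.
treatment_days, treatment_n = [12, 20, 25, 18], 50
control_days, control_n = [1, 3, 8, 12, 6, 10], 60

treatment = fit_group(treatment_days, treatment_n)
control = fit_group(control_days, control_n)

# Condition effects on each part of the model.
hurdle_effect = logit(treatment["p_redeem"]) - logit(control["p_redeem"])
timing_effect = math.log(treatment["mean_days"] / control["mean_days"])
print(f"logit difference = {hurdle_effect:.3f}, log ratio of mean days = {timing_effect:.3f}")
```

Separating the two parts lets the condition affect the likelihood of redeeming and the delay among redeemers independently, which is the distinction the analysis above relies on.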

Discussion

Study 1a provides evidence that participation in a crowdsourcing contest negatively affects losing customers’ subsequent engagement with the contest-hosting brand (H1a). Crowdsourcing participants whose ideas were not implemented, as compared with participants in the control condition, demonstrated a significantly lower coupon redemption rate shortly after learning the outcome (i.e., 24 hours) and significantly delayed redeeming their coupon. This causal evidence controls for self-selection (a threat one would encounter with a natural crowdsourcing contest). The effect size is notable (Cohen's d = .86), particularly given the habitual nature of the behavior (buying coffee on campus) and the strong incentive (50% off). While the disengagement persisted for the first ten days, it did attenuate with time. This result is consistent with prior research showing that customers temporarily disengage from brands that commit transgressions (Aaker et al. 2004; Khamitov et al. 2020; Trump 2014).

Study 1b: Alternative Control Group and Process Insight

One might question whether the effect observed in Study 1a is indeed due to the participant losing, versus winning, the crowdsourcing contest. As in most crowdsourcing contests, there were not enough winners in this (n = 3) and the subsequent field experiments to effectively compare the losers with the winners. To address this limitation, we conducted a follow-up study in which we asked participants to imagine losing or winning a crowdsourcing contest. The study also provided an initial test of H2a by testing whether framing a crowdsourcing contest as a community activity mitigates the negative effect of losing on customer engagement. Finally, consistent with our theory, we included two process measures (negative affect and perceived contribution), with the prediction being that both mediate, in parallel, the negative effect of losing on customer engagement (H1b and H2b).

Method

Study 1b used a three-cell between-subject design with two treatment conditions (competitive frame–win; community frame–lose) and one control condition (competitive frame–lose). Five hundred thirty-one students from the University of Florida (UF) (Mage = 21.08 years; 58.4% female, 41.4% male, .2% gender not stated) participated in a single-session study in exchange for a course credit.

The procedure used a scenario designed to mimic Study 1a. Participants imagined partaking in a crowdsourcing contest hosted by an on-campus café with the aim of identifying a new breakfast bundle. Participants were randomly assigned. In the first treatment condition and the control condition, the crowdsourcing campaign was framed as a competitive contest (like in Study 1a; i.e., “Compete with other UF students to create the best ‘grab & go’ breakfast”). Half of these participants imagined that their idea was not selected (competitive frame–lose control condition) and the other half imagined that their idea won the contest (competitive frame–win treatment condition). In the second treatment condition (community frame–lose treatment condition), the crowdsourcing campaign was framed as a community activity (i.e., “Work with other UF students to generate ideas that will result in the best ‘grab & go’ breakfast”), and participants imagined that their idea was not selected.

Participants in all conditions then completed a set of four customer engagement items mirroring the dependent variable in Study 1a. The preamble read as follows: “Imagine you have just received the coupon for a cup of coffee (50% price promotion) at BRAND A's café and you could go there. To what extent do you agree with the following statements?” The choices were as follows: (1) “I will go to the café to redeem the coupon today,” (2) “Having a coffee at the café sounds like a great idea to me at this moment,” (3) “I would rather wait a couple of days before visiting the café to get my coffee” (reversed), and (4) “To be honest, I do not plan to go to this café during the next few days” (reversed). These items were measured on an eight-point scale (1 = “Strongly Disagree,” and 8 = “Strongly Agree”; α = .74).

Finally, we measured two underlying processes: negative affect and perceived contribution to the outcome. Negative affect was measured using the following: “Based on your crowdsourcing participation just described, to what extent do you agree with the following statements? When I think about the café, I feel …” (1) “Rejected”–“Accepted,” (2) “Excluded”–“Part of it,” (3) “Disappointed”–“Pleased,” (4) “Disconnected”–“Connected,” and (5) “Indifferent”–“Interested” (measured on seven-point scales; α = .92). Perceived contribution was measured using the following: (1) “I think I have contributed to the success of the crowdsourcing campaign,” (2) “My participation in the crowdsourcing activity was valuable,” and (3) “I made a positive contribution to the crowdsourcing activity” (1 = “Strongly Disagree,” and 7 = “Strongly Agree”; α = .94).

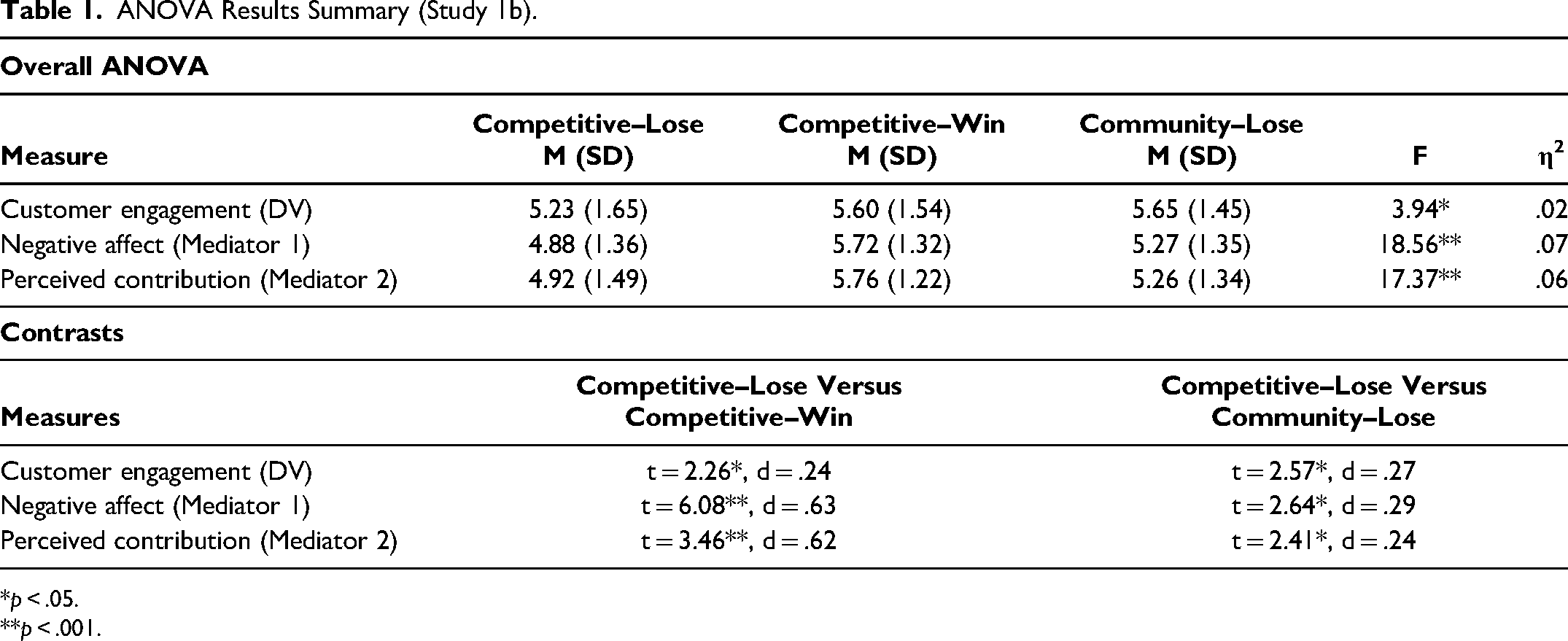

Results

The findings are consistent with our theory (see Table 1 for details). First, losing a competitively framed crowdsourcing contest leads to lower customer engagement (M = 5.23, SD = 1.65) compared with both the competitive–win (M = 5.60, SD = 1.53; t(528) = 2.26, p = .024, d = .24) and community–lose (M = 5.65, SD = 1.45; t(528) = 2.57, p = .010, d = .27) conditions. Second, losing a competitively framed crowdsourcing contest leads to more negative affect (M = 4.8, SD = 1.36) compared with the competitive–win condition (M = 5.72, SD = 1.32; t(528) = 6.08, p < .001, d = .63) and the community–lose condition (M = 5.24, SD = 1.26; t(528) = 2.64, p = .008, d = .29). Third, compared with participants in the competitive–lose condition (M = 4.92, SD = 1.49), participants in both the competitive–win condition (M = 5.76, SD = 1.22; t(528) = 3.46, p < .001, d = .62) and the community–lose condition (M = 5.26, SD = 1.34; t(528) = 2.41, p = .016, d = .24) perceived that they made a higher contribution to the outcome.
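As a rough check on how the reported effect sizes relate to the reported means and standard deviations, Cohen's d can be sketched as follows. The function name and the equal-weight pooling of the two SDs are assumptions made for illustration; per-condition cell sizes are not given, so small deviations from the published values are to be expected.

```python
import math

def cohens_d(mean_a, sd_a, mean_b, sd_b):
    """Standardized mean difference with equal-weight pooling of the two SDs.

    Equal weighting assumes equally sized cells, which is an assumption here;
    the per-condition cell sizes are not stated in the text.
    """
    pooled_sd = math.sqrt((sd_a ** 2 + sd_b ** 2) / 2)
    return (mean_a - mean_b) / pooled_sd

# Engagement: community-lose vs. competitive-lose (means and SDs as reported).
d_community = cohens_d(5.65, 1.45, 5.23, 1.65)
print(round(d_community, 2))  # 0.27
```

Under the same assumption, the competitive–win contrast on engagement comes out at about .23, close to the reported .24.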

Table 1. ANOVA Results Summary (Study 1b).

*p < .05. **p < .001.

Results of parallel mediation analysis (Hayes 2017, Model 4) contrasting the competitive–lose and competitive–win conditions (IV) on customer engagement (DV) showed a significant indirect effect through negative affect (H1b) (Mediator 1: b = .28, SE = .09; 95% CI = [.13, .48]) and a significant indirect effect through perceived contribution (Mediator 2: b = .18, SE = .08; 95% CI = [.04, .34]). Similarly, a mediation analysis (Hayes 2017, Model 4) contrasting competitive–lose versus community–lose conditions (IV) on customer engagement (DV) showed a significant indirect effect of negative affect (Mediator 1: b = .04, SE = .02; 95% CI = [.01, .10]) and a significant indirect effect of perceived contribution (Mediator 2: b = .03, SE = .02; 95% CI = [.01, .08]) (H2b).
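The logic of a parallel mediation test can be illustrated with a minimal sketch: estimate each indirect effect as the product of the path from the condition to the mediator and the path from the mediator to the outcome, then build a percentile bootstrap interval around it. Everything below is an assumption for illustration (simulated data, function names, 500 resamples, a hand-rolled least-squares solver); it is not the PROCESS macro and does not use the study data.

```python
import random

def ols(rows, y):
    """Least-squares coefficients via the normal equations (Gauss-Jordan)."""
    k = len(rows[0])
    # Augmented matrix [X'X | X'y].
    a = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(k):
            if r != col:
                factor = a[r][col] / a[col][col]
                a[r] = [u - factor * v for u, v in zip(a[r], a[col])]
    return [a[i][k] / a[i][i] for i in range(k)]

def indirect_effects(x, m1, m2, y):
    """Return (a1*b1, a2*b2) for X -> M1 -> Y and X -> M2 -> Y."""
    a1 = ols([[1.0, xi] for xi in x], m1)[1]
    a2 = ols([[1.0, xi] for xi in x], m2)[1]
    # The b paths come from one model with X and both mediators entered together.
    _, _, b1, b2 = ols([[1.0, xi, u, v] for xi, u, v in zip(x, m1, m2)], y)
    return a1 * b1, a2 * b2

def bootstrap_ci(x, m1, m2, y, reps=500, seed=7):
    """95% percentile bootstrap intervals for both indirect effects."""
    rng = random.Random(seed)
    n = len(x)
    draws = []
    for _ in range(reps):
        idx = [rng.randrange(n) for _ in range(n)]
        draws.append(indirect_effects([x[i] for i in idx], [m1[i] for i in idx],
                                      [m2[i] for i in idx], [y[i] for i in idx]))
    intervals = []
    for j in range(2):
        vals = sorted(d[j] for d in draws)
        intervals.append((vals[int(0.025 * reps)], vals[int(0.975 * reps) - 1]))
    return intervals

# Simulated (hypothetical) data: x = 0/1 condition, two mediators, one outcome.
rng = random.Random(42)
x = [i % 2 for i in range(200)]
m1 = [1.0 * xi + rng.gauss(0, 1) for xi in x]
m2 = [0.8 * xi + rng.gauss(0, 1) for xi in x]
y = [0.5 * u + 0.5 * v + rng.gauss(0, 1) for u, v in zip(m1, m2)]
ci_m1, ci_m2 = bootstrap_ci(x, m1, m2, y)
print(ci_m1, ci_m2)
```

An indirect effect is treated as significant when its interval excludes zero, which is how the confidence intervals reported above are read.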

Discussion

Study 1b demonstrates that the disengagement effect (H1a) observed in Study 1a is likely due to the negative reaction crowdsourcing participants had when their idea was not selected (H1b). In addition, framing the crowdsourcing initiative as a community activity (vs. a competition) positively affected a losing customer's subsequent engagement with the contest-hosting brand (H2a). This effect was mediated in parallel by negative affect and perceived contribution (H2b).

Study 2a: In Search for a New Product for a Merchandise Store

Study 2a was a field study that tested whether framing a crowdsourcing contest as a community activity, rather than as a competition, results in more positive customer engagement among the participants who lose (H2a). The crowdsourcing involved having customers suggest products that could be sold in a university's merchandise store. The study utilized a 2 (crowdsourcing contest participation treatment group vs. crowdsourcing nonparticipation control group) × 2 (crowdsourcing contest frame: competition vs. community activity) design. Similar to the internal-validity control group in Study 1a, the control group knew about the crowdsourcing campaign.

The key test was a comparison of participants’ engagement intentions in the two crowdsourcing participation conditions. If our reasoning is correct (H2a), engagement intentions among those participants whose ideas have not been selected should be higher in the community activity than in the competitive crowdsourcing condition. Beyond this, it is difficult to predict the pattern of results because the crowdsourcing nonparticipation control group could respond in two ways. One way is that the framing manipulation is less relevant for nonparticipation. Because they did not submit their idea, there is no experience of losing and, hence, shifting attention away from the competitive character of the contest is irrelevant. This would speak in favor of observing an interaction effect. The other way is that members of the crowdsourcing nonparticipation control group appreciate the community crowdsourcing framing because the collective element of the initiative might make them feel part of the brand community. This would speak in favor of observing two main effects: a negative effect of crowdsourcing participation and a positive effect of the community crowdsourcing framing.

Method

The study used a 2 (treatment group: crowdsourcing contest participation; control group: crowdsourcing contest nonparticipation) × 2 (crowdsourcing frame: competition vs. community activity) between-subject design. One hundred sixty-one students from WU Vienna (Vienna University of Economics and Business) (Mage = 22.29 years; 72.7% female, 27.3% male) participated in two studies conducted at two points in time in exchange for €12.

At time 1, participants were invited to complete a market research study in cooperation with the campus merchandise store. Participants completed a short survey about their life on campus (e.g., frequency of visits, involvement in nonstudy activities on campus, attitude toward the university, feeling of belonging to the university crowd, interest in and ownership of university-branded merchandise). Participants in all conditions were asked to examine the store's online catalogue; to support the cover story, they were informed that the aim of the study was to ensure that the store provides desirable products to university students.

Those in the two nonparticipation conditions were informed that they would learn more about the merchandise store at a later date (time 2). Participants in the two crowdsourcing conditions learned about a crowdsourcing campaign, hosted by the merchandise store, to identify new products that could be offered to students and other target customers. Participants in these two conditions were invited to submit an idea (one participant did not submit an idea and was excluded from further analysis). To test the effect of framing on the participants’ reaction to the crowdsourcing outcome, two versions of the invitation were created (competitive vs. community activity). In the former, the crowdsourcing was framed as a competition (i.e., “compete with the crowd and see if you can win”). In the latter, the crowdsourcing was framed as a community activity (i.e., “participate and join the collective effort of the crowd”) (see Web Appendix B).

At time 2, 154 participants (96.3%) returned to the lab. We did not identify any condition-specific attrition (χ2(2, N = 160) = .15, p = .928). Participants in the two participation conditions were reminded of their time 1 involvement in the crowdsourcing activity. Participants in the nonparticipation condition learned about the crowdsourcing initiative and were randomly assigned to one of the two framing conditions: they were exposed to either the competitive- or community-framed crowdsourcing invitation flyer. All participants were then informed of the outcome of the crowdsourcing initiative: the new university merchandise that was expected to be on the shelves by the beginning of the next semester (a transparent backpack that allows students to conveniently enter the library). The presentation was accompanied with a condition-specific subtitle under the product idea stating “crowdsourced by WU students” (community) or “by contest winners: [winners’ names]” (competitive).

Participants in all conditions were thanked for their valuable input on behalf of the university merchandise store. They were then asked to complete a short survey. In particular, they completed a set of six items indicating one's intention to engage with the university merchandise store. The items were generated based on Pansari and Kumar's (2016) conceptualization of customer engagement, reflecting both indirect and direct contributions by customers to the firm (i.e., word-of-mouth and purchase behaviors). The items were as follows: (1) “I am eager to visit the WU store sometime soon,” (2) “In this moment, I am interested in purchasing something from the WU store,” (3) “I will talk positively about the WU store with my friends and peers,” (4) “In this moment, I can easily see myself saying something positive to others about the WU store,” (5) “I would ‘like,’ ‘share,’ or ‘follow’ social media pages of the WU store,” and (6) “In this moment, I would be happy to say something positive about the WU store on social media.” These items were measured on a seven-point scale (1 = “Strongly Disagree,” and 7 = “Strongly Agree”; α = .87).

Results

Data preparation

Participants were excluded from further analysis due to a computer malfunction (six), failure to follow instructions (one), and submitting the same winning idea (four). The final sample was 148 participants.

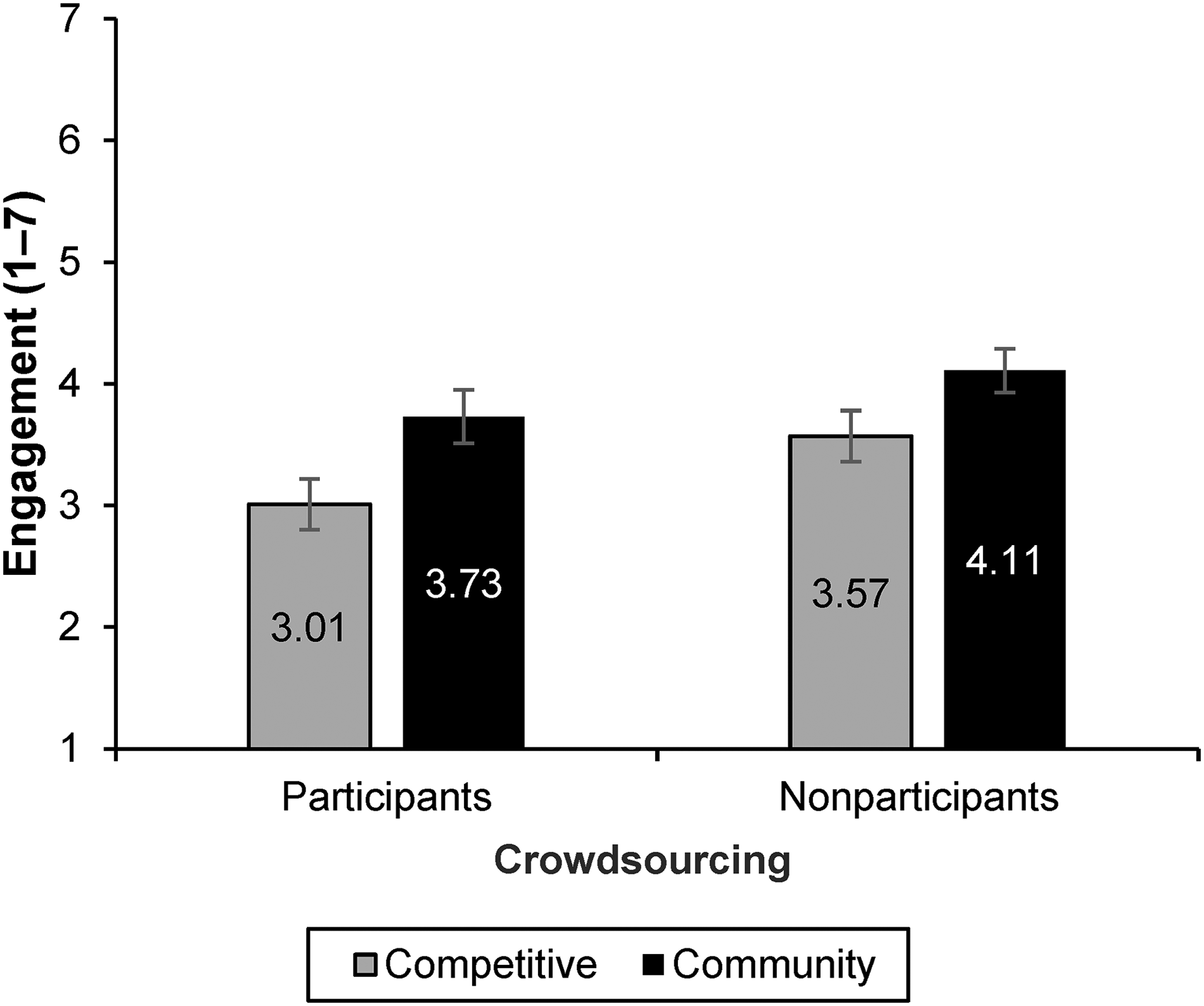

Engagement

A 2 (crowdsourcing participation vs. nonparticipation) × 2 (crowdsourcing framing: competitive vs. community activity) analysis of variance (ANOVA) found two significant main effects and a nonsignificant interaction (F(1, 144) = .177, p = .674) (see Figure 2). In particular, consistent with H1a, crowdsourcing participants (M = 3.37, SD = 1.34) expressed significantly lower customer engagement intentions compared with crowdsourcing nonparticipants (M = 3.86, SD = 1.24; F(1, 144) = 5.21, p = .024, η2 = .04). Furthermore, people in the community framing conditions (M = 3.93, SD = 1.23) expressed significantly higher customer engagement intentions compared with participants in the competitive framing conditions (M = 3.29, SD = 1.32; F(1, 144) = 9.26, p = .003, η2 = .06). A formal test of H2a found that, among those who participated in the crowdsourcing activity, framing crowdsourcing as a community effort (M = 3.73, SD = 1.29) yielded significantly higher customer engagement intentions than framing crowdsourcing as a competition (M = 3.01, SD = 1.31; F(1, 144) = 5.85, p = .017, η2 = .04).

Figure 2. Customer Engagement Intent (Study 2a).

Ancillary analysis

There was a concern that the framing of the crowdsourcing contest resulted in ideas of differing quality, which could be responsible for differences in engagement. To assess this possibility, two coders were asked to evaluate the participants’ ideas based on their originality and usability (Amabile 1996). Coders were instructed to evaluate the ideas on a three-point scale (1 = neither usable nor original, 2 = usable but not original, or 3 = usable and original). Individual coder responses were merged into an overall idea quality score (r = .68, p < .001). Importantly, we found no significant differences in idea quality between the two experimental conditions (Mcommunity = 1.83, SD = .65 vs. Mcompetitive = 1.92, SD = .71; t(70) = .521, p = .604). Thus, the framing manipulation did not affect the quality of the ideas submitted.

Discussion

Study 2a yields several important findings. First, we conceptually replicate the findings obtained in Studies 1a and 1b, respectively: participating in a crowdsourcing contest and losing negatively affects customer engagement with the contest-hosting brand (H1a). Second, we find that framing a crowdsourcing initiative as a community activity, rather than as a competitive contest, increases crowdsourcing participants’ customer engagement intentions (H2a).

It is noteworthy that we did not obtain a significant interaction effect, suggesting that the crowdsourcing framing manipulation also affects participants who merely observe versus participate in a crowdsourcing activity. Although this is not the focus of the present investigation, the finding is interesting and resonates with that of Dahl, Fuchs, and Schreier (2015), who find that nonparticipating crowdsourcing customers feel more connected with other customers by merely observing a firm's crowdsourcing efforts. Study 2a extends this line of research by suggesting that framing a crowdsourcing activity differently might further amplify such observer effects. From a customer engagement perspective, we note that observing seems better than participating irrespective of the framing; we take up this finding in the “General Discussion” section.

Study 2b: In Search for the Name for a New Restaurant

The goals of Study 2b were threefold. The first was to replicate the framing effect among crowdsourcing participants (H2a). The second was to assess to what extent the framing effect is contingent on the effort invested in the task. As indicated earlier, we anticipated that the impact of competitive versus community framing would increase as a function of one's perception of effort invested in the crowdsourcing task (H3). The third goal was to determine if we could extend the results to target new customers, as opposed to existing customers.

In contrast to the previous studies, all participants in Study 2b were invited to participate in the crowdsourcing activity. We did so to increase cell sizes and statistical power. The focal manipulation was how the crowdsourcing initiative was framed (competitive vs. community activity). The sponsor was a new Asian restaurant that was about to open on campus and, at the time of the study, was searching for a name.

Method

The study consisted of two between-subject conditions (crowdsourcing frame: competition vs. community activity) with all participants engaging in the crowdsourcing activity. One hundred ninety students from WU Vienna (Vienna University of Economics and Business) (Mage = 22.54 years; 50.5% female, 48.9% male, .5% gender not stated) participated in two studies at two points in time in exchange for €15.

At time 1, participants were invited to complete a market research study conducted in cooperation with a restaurant that would soon open on campus. Participants completed a short survey about their life on campus (e.g., frequency of visits, nonstudy activities on campus, campus restaurant usage). Participants were then randomly assigned to one of two experimental conditions. Participants in both conditions learned about the restaurant’s general approach and branding concept and then were informed about the crowdsourcing opportunity. In the competitive framing condition, the crowdsourcing activity was framed as a competition (i.e., “Compete with the Crowd to be the one who names our new restaurant”). In the community framing condition, the crowdsourcing was framed as a community effort (i.e., “Join the Crowd and help us find a name for our new restaurant”) (see Web Appendix C). Participants in both conditions were then invited to submit an idea, which all did.

To assess perceived effort, participants responded to these items: (1) “I invested a lot of time into the task,” (2) “I tried hard to come up with a good name,” and (3) “I have put a lot of effort into the task.” These items were measured on a five-point scale (1 = “Strongly Disagree,” and 5 = “Strongly Agree”; α = .83).

We also captured the time participants spent on the task. We merged perceived effort (subjective) with time invested (objective) into a standardized index. The two variables (r = .420, p < .001) tap into different facets of the effort construct; therefore, measurement error should be lower, ceteris paribus, when using a combined index. To rule out alternative explanations, we also measured the participants’ perceived quality of their idea: (1) “How much do you think others will like your idea?,” (2) “How likely do you think your idea is to be selected?,” and (3) “How good do you think your idea is?” (these items were measured on a five-point scale; α = .82). Finally, participants were told they would learn about the winning idea when they returned two weeks later.
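One simple way to build such a combined index is to z-standardize each measure and average the two z-scores. The sketch below assumes exactly that; the averaging rule, the function names, and the example values are illustrative assumptions, since the text does not spell out how the two measures were merged.

```python
import math
from statistics import mean, stdev

def zscores(values):
    """Standardize a list to mean 0 and (sample) SD 1."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]

def effort_index(perceived, seconds):
    """Average the z-scored subjective and objective effort measures."""
    return [(a + b) / 2 for a, b in zip(zscores(perceived), zscores(seconds))]

def index_sd(r):
    """SD of the average of two z-scores that correlate at r."""
    return math.sqrt((1 + r) / 2)

# Hypothetical participants: mean scale rating and seconds spent on the task.
index = effort_index([2.0, 3.0, 4.0, 5.0], [40, 95, 60, 180])
print([round(v, 2) for v in index])
print(round(index_sd(0.42), 2))  # 0.84
```

If the index is formed this way, a correlation of .42 between the two measures implies an index SD of roughly .84, which is in the range of the condition-level SDs reported for the Standardized Effort Index in the preliminary analyses.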

At time 2, 176 participants (92.6%) returned to the lab. We did not identify any condition-specific attrition (χ2(1, N = 190) = .25, p = .620). Participants in both conditions were reminded of their time 1 involvement in the crowdsourcing contest and told the winning name for the restaurant: Kung WU. The presentation of the name was consistent with the condition-specific framing: the subtitle under the restaurant name stated either “crowdsourced by WU crowd” (community) or “crowdsourced by [winner's name]” (competitive). Additionally, all participants were invited to leave their signature for the poster acknowledging their contribution to the initiative. The signature was used as a “manipulation booster” in the community condition; it was included right after the new restaurant name announcement to maintain the community feeling of name-creation. In the competition condition, the signature task was at the end of the study.

Participants in all conditions were thanked for their valuable input on behalf of the restaurant. They were then asked to complete a short survey. In particular, they completed a set of six items capturing our dependent variable: customer engagement (same measures as in Study 2a; α = .86). Additionally, participants indicated their attitude toward the name and were invited to leave their contact data so they could be invited to the restaurant's opening.

Results

Preliminary analyses

The time 2 data could not be matched to the time 1 data for four participants. Three participants received the wrong time 2 stimuli due to lab manager errors and were removed from the analysis. The winner of the competition was excluded from the analysis. The final sample was 168 participants.

The framing manipulation did not influence effort (Standardized Effort Index: Mcommunity = −.02, SDcommunity = .83 vs. Mcompetitive = .02, SDcompetitive = .86; t(166) = −.301, p = .764), the perceived quality of the idea (Mcommunity = 3.46, SDcommunity = .79 vs. Mcompetitive = 3.44, SDcompetitive = .81; t(166) = .151, p = .881), or the objective quality of the idea (Mcommunity = 1.38, SDcommunity = .30 vs. Mcompetitive = 1.37, SDcompetitive = .33; t(166) = .254, p = .800). Objective quality was assessed using a process identical to that used in Study 2a.

Engagement

Consistent with H2a, framing the crowdsourcing contest as a community activity (M = 5.07, SD = 1.24), as compared with a competition (M = 4.58, SD = 1.23), yielded significantly higher customer engagement (t(166) = 2.58, p = .011, d = .40).

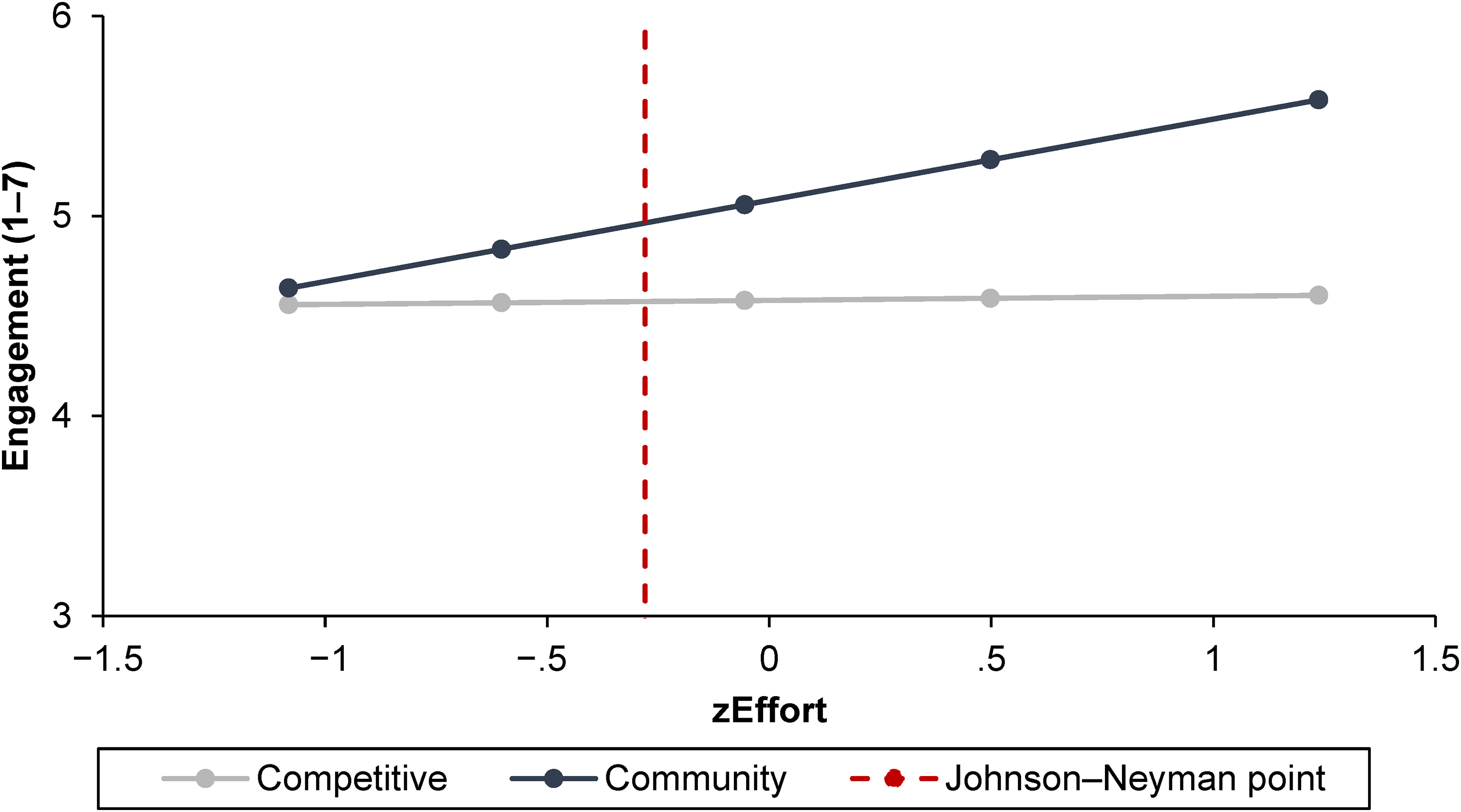

Effort as moderator

A moderation analysis (Hayes 2017, Model 1) revealed a marginally significant interaction between framing and effort on engagement (b = .39, SE = .23, t(164) = 1.72, p = .088). The respective floodlight analysis is plotted in Figure 3. The pattern of effects is consistent with our expectations (H3): the higher the effort, the more positive the framing effect (community activity > competitive contest). In particular, we find that the effect is significant for participants with effort higher than −.28 (Johnson–Neyman point; the sample mean is .00).
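For readers unfamiliar with the floodlight approach, the Johnson–Neyman point is the moderator value at which the conditional effect of framing just reaches the critical t value. A minimal sketch of that calculation follows; all inputs (coefficients, variances, covariance, critical value) are hypothetical placeholders chosen for illustration and are not the estimates from Study 2b.

```python
import math

def johnson_neyman(b_frame, b_inter, var_frame, var_inter, cov, t_crit):
    """Moderator values where the conditional framing effect sits exactly at t_crit.

    Conditional effect at moderator value w: b_frame + b_inter * w
    Its sampling variance:                   var_frame + 2 * w * cov + w**2 * var_inter
    Setting effect**2 = t_crit**2 * variance gives a quadratic in w.
    """
    qa = b_inter ** 2 - t_crit ** 2 * var_inter
    qb = 2 * (b_frame * b_inter - t_crit ** 2 * cov)
    qc = b_frame ** 2 - t_crit ** 2 * var_frame
    disc = qb ** 2 - 4 * qa * qc
    if qa == 0 or disc < 0:
        return []  # no boundary of this form exists
    root = math.sqrt(disc)
    return sorted([(-qb - root) / (2 * qa), (-qb + root) / (2 * qa)])

def conditional_t(w, b_frame, b_inter, var_frame, var_inter, cov):
    """t statistic of the framing effect at moderator value w."""
    se = math.sqrt(var_frame + 2 * w * cov + w ** 2 * var_inter)
    return (b_frame + b_inter * w) / se

# Hypothetical inputs (not the study's estimates).
params = dict(b_frame=0.50, b_inter=0.40, var_frame=0.04, var_inter=0.05, cov=0.0)
points = johnson_neyman(t_crit=1.97, **params)
print([round(p, 2) for p in points])
```

With a positive interaction, the framing effect is significant for moderator values between the two boundary points, so the lower boundary plays the role of the Johnson–Neyman point reported above.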

Figure 3. Effort Invested into Idea Generation as Moderator (Study 2b).

Ancillary analysis

A moderation analysis (Hayes 2017, Model 1) revealed a nonsignificant interaction between framing and participants’ perceived quality of their idea (b = .23, SE = .24; t(164) = .964, p = .337).

Discussion

Study 2b provides additional evidence that participation in a crowdsourcing campaign that is framed as a community activity (vs. competitive contest) positively affects a losing customer's subsequent engagement with the contest-hosting brand (H2a). Furthermore, we find support for the idea that the effort a person invests in the crowdsourcing activity moderates the focal effect (H3): the higher the effort invested, the more pronounced the framing effect (community activity > competitive contest).

Study 3: A Field Study with a Japanese Convenience Store Chain

The goal of Study 3 is to test the framing effect (H2a) from Studies 1b, 2a, and 2b in a real-life setting. We conducted a difference-in-difference field experiment utilizing a real crowdsourcing campaign. The contest-hosting brand was Lawson Inc., a major convenience store chain in Japan (Lawson 2022), which wanted to name a new mascot for one of its most popular items: fried chicken nuggets. Approximately four weeks after participants submitted their ideas, they learned the winning mascot’s name. Using loyalty card data, we tracked participants’ spending behavior during and after the crowdsourcing campaign.

Our prediction was that framing a crowdsourcing campaign as a community activity, rather than a competitive contest, should result in a more positive reaction among those participants whose ideas were not selected (H2a). Consistent with our theory (and analogous to findings in Study 1a), we expected the effect to be particularly pronounced shortly after learning about the campaign's outcome. Like in Study 1a, we studied a long postintervention period (i.e., 28 days). We expected the effect would attenuate over time because the study participants were loyal customers of the crowdsourcing brand, economically incentivized, and thanked for their participation.

Method

The study consisted of two between-subject conditions (crowdsourcing frame: competition vs. community activity) with all participants engaging in the crowdsourcing contest. Participants were 4,909 loyalty card holders (74% female, 25.7% male, .3% gender not stated). Customers participated in exchange for ten loyalty card points and the possibility of winning free fried chicken nuggets for one year.

The company announced its crowdsourcing campaign on Twitter and Facebook as well as through its electronic newsletter. The announcement invited consumers to visit the campaign website, log in with their customer ID, and participate in the contest. Participants were randomly assigned to one of the two experimental conditions. In the competition condition, the crowdsourcing task was framed as “Compete to name the new character.” In the community activity condition, the crowdsourcing task was framed as “Let's think of the name for the new character together” (see Web Appendix D). Participants were invited to submit their ideas, after which they were asked to answer questions about their gender and age group. Due to the specifics of the data collection, we do not have information on the participants who terminated participation prior to submitting an idea.

Approximately four weeks later, participants received an email reminding them of their involvement in the crowdsourcing contest and inviting them to log onto the campaign website to learn the name of the new mascot (i.e., winner announcement). Seven days after the reminder email was sent, 2,874 participants had logged onto the campaign website (i.e., knowing the winner). The reminder email, as well as the presentation of the mascot name, was consistent with condition-specific framing: the subtitle under the announcement of the mascot name stated, “Thank you very much [winner's name], the winner of the competition” or “Thank you very much for your cooperation, [list of all participants’ names].”

Results

Data preparation

We examined customer-level data on spending (in JPY) for a 56-day period: 28 days prior to knowing the winner and 28 days after it. Data were prepared by creating individually adjusted 24-hour periods based on the day and time a participant logged on to the campaign website (i.e., knowing the winner). The purchase data were sparse (participants averaged 7.34 purchases within a 28-day period), so data were aggregated into 3-day, 7-day, 14-day, 21-day, and 28-day periods before and after knowing the winner. This approach is common for sparse sales data (Janakiraman et al. 2018; Shi et al. 2017).
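The individually anchored aggregation described above can be sketched as follows. The function name, the half-open window boundaries, and the example purchases are assumptions for illustration; the actual data preparation may have differed in detail.

```python
from datetime import datetime, timedelta

def window_spend(purchases, reveal_time, days):
    """Sum spending in the `days` days before and after a participant's reveal time.

    purchases:   list of (timestamp, amount_in_jpy) tuples for one participant
    reveal_time: when this participant logged on and learned the winner
    Windows are half-open: [reveal - days, reveal) and [reveal, reveal + days).
    """
    span = timedelta(days=days)
    before = sum(amt for ts, amt in purchases if reveal_time - span <= ts < reveal_time)
    after = sum(amt for ts, amt in purchases if reveal_time <= ts < reveal_time + span)
    return before, after

# Hypothetical participant with four purchases around a hypothetical reveal time.
purchases = [
    (datetime(2020, 1, 8, 9, 0), 300),
    (datetime(2020, 1, 10, 18, 30), 450),
    (datetime(2020, 1, 11, 12, 0), 200),
    (datetime(2020, 1, 20, 8, 0), 500),
]
reveal = datetime(2020, 1, 10, 20, 0)
for days in (3, 7, 14, 21, 28):
    print(days, window_spend(purchases, reveal, days))
```

Anchoring the windows on each participant's own login time, rather than on a calendar date, keeps the "before" and "after" periods comparable across participants who learned the outcome on different days.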

Difference-in-difference method

The difference-in-differences approach compares the average performance of a condition (e.g., community activity) to the average performance of a second condition (e.g., competition) over a period of time. This method is superior to other methodologies, such as a within-participant preintervention versus postintervention analysis, because it can control for the confounding factors that can influence purchase behavior over time (e.g., seasonality, competition, promotional activities).
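In its generic two-group, two-period form, a difference-in-differences specification is commonly written as below. This is a sketch under stated assumptions: the symbols $Y_{it}$, $\mathrm{Community}_i$, and $\mathrm{After}_t$ are notation introduced here for illustration, and the coefficient numbering is chosen to agree with the β1 and β3 labels used in the analysis headings that follow.

$$
Y_{it} = \beta_0 + \beta_1\,\mathrm{Community}_i + \beta_2\,\mathrm{After}_t + \beta_3\,(\mathrm{Community}_i \times \mathrm{After}_t) + \varepsilon_{it}
$$

$$
\beta_3 = \left(\bar{Y}_{\mathrm{community,\,after}} - \bar{Y}_{\mathrm{community,\,before}}\right) - \left(\bar{Y}_{\mathrm{competition,\,after}} - \bar{Y}_{\mathrm{competition,\,before}}\right)
$$

In this form, $\beta_1$ captures any preintervention difference between the two framing conditions, $\beta_2$ captures time trends shared by both conditions, and $\beta_3$ is the difference-in-differences estimate of the framing effect.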

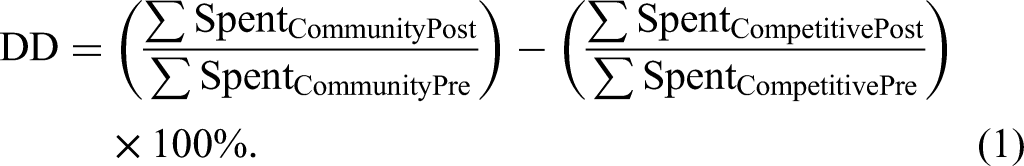

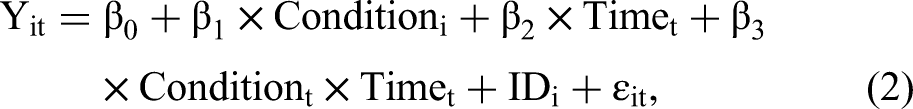

For this study, the difference-in-differences equations were:

For model-free assessment in percent:

Analysis plan

We examine the data in two ways: descriptively (i.e., model free) and by means of an exponential dispersion model. The advantage of the model-free raw data analysis is that it provides the true impact of the manipulations on sales. Raw data has external validity. The disadvantage of the raw data analysis is that it relies on highly right-skewed data (i.e., there are too many “0” observations: 13.64% and 12.94% of participants did not make any purchases in the 28-day periods before and after the winner announcement, respectively), which violates assumptions of linear regression and makes its statistical inferences unreliable. The recommended solution is to use an exponential dispersion model; specifically, a Poisson-Gamma distribution from the Tweedie family (Dunn and Smyth 2018; Hasan and Dunn 2010).
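A Tweedie distribution with a power parameter between 1 and 2 is a compound Poisson-gamma distribution: a Poisson number of purchases, each with a gamma-distributed amount. The simulation below is a minimal sketch of why this family suits spending data with exact zeros alongside a right-skewed positive part. The parameter values (purchase rate, gamma shape and scale) and function names are hypothetical and are not estimated from the study data.

```python
import math
import random

def poisson_draw(rng, lam):
    """Draw from Poisson(lam) using Knuth's multiplication method."""
    limit, count, product = math.exp(-lam), 0, 1.0
    while True:
        product *= rng.random()
        if product <= limit:
            return count
        count += 1

def simulate_spend(rng, lam, shape, scale):
    """Total spend = sum of a Poisson number of gamma-distributed purchase amounts."""
    return sum(rng.gammavariate(shape, scale) for _ in range(poisson_draw(rng, lam)))

# Hypothetical parameters: 2 purchases on average, each around 400 JPY.
rng = random.Random(0)
draws = [simulate_spend(rng, lam=2.0, shape=2.0, scale=200.0) for _ in range(20000)]
zero_share = sum(1 for d in draws if d == 0) / len(draws)
print(round(zero_share, 3), round(math.exp(-2.0), 3))
```

The share of exact zeros equals the Poisson probability of no purchases, exp(−λ), so the model accommodates non-purchasers without a separate zero-inflation component.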

Analysis β1

The data revealed different attrition by condition (χ2(1, N = 4,909) = 12.88, p < .001). Differential attrition by condition can create self-selection bias. The difference-in-difference method addresses self-selection bias as long as the common trends assumption is satisfied (Lee and Kang 2006). The placebo test confirmed that preintervention spending patterns across conditions were equivalent, p > .05, satisfying the common trends assumption.

Analysis β3—model-free

Participants in the community frame condition, as compared with the competition frame condition, increased their spending after knowing the winner. The observed effect is consistent across different time periods, but more pronounced when shorter time periods are considered. Specifically, we find a substantial difference-in-differences of +12.20% for the aggregated 3-day before–after period; +5.90% for the aggregated 7-day before–after period; +4.53% for the aggregated 14-day before–after period; +1.45% for the aggregated 21-day before–after period; and +1.31% for the aggregated 28-day before–after period.

Analysis β3—Poisson-Gamma model

The results demonstrate that participants in the community frame condition, as compared with the competition frame condition, increased their spending level after knowing the winner. The observed effect was more pronounced when shorter time periods are considered. Specifically, we find the difference-in-differences of +8.34% for the 3-day before–after period (β3 = .08, SE = .04, t = 2.06, p = .039); +5.41% for the 7-day before–after period (β3 = .05, SE = .03, t = 1.99, p = .047); +4.53% for the 14-day before–after period (β3 = .04, SE = .02, t = 2.05, p = .041); +1.58% for the 21-day before–after period (β3 = .02, SE = .02, t = .79, p = .427); and +1.21% for the 28-day before–after period (β3 = .01, SE = .02, t = .65, p = .514).
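The percentage effects and the β3 coefficients above are linked if the model uses a log link, which is the usual choice for Tweedie models but is an assumption here, as the link function is not stated. Under that assumption, a coefficient converts to a percentage change as 100 × (exp(β3) − 1). The function name below is hypothetical.

```python
import math

def pct_change_from_log_coef(beta):
    """Percentage change in expected spending implied by a log-link coefficient."""
    return 100 * (math.exp(beta) - 1)

# Rounded 3-day coefficient as reported (beta3 = .08).
print(round(pct_change_from_log_coef(0.08), 2))  # 8.33
```

The rounded coefficient yields about 8.33%, close to the reported +8.34%; the small gap is what one would expect if the published percentage was computed from the unrounded estimate.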

Discussion

Study 3 provides evidence that the predicted positive effect of framing crowdsourcing as a community activity (H2a) holds not only in a controlled setting (Studies 1b, 2a, and 2b) but also in a noisy real-life environment. In particular, we find that participants in the community frame condition spend more than participants in the competitive frame condition shortly after becoming aware of the crowdsourcing winner. The significant effect observed in the first two weeks after knowing the winner is notable, given the strong market position of the convenience store chain and the presumably habitual nature of the consumption. However, we also find that the effect is attenuated when analyzing a longer time period (i.e., 28 days).

General Discussion

Prior research highlights the positive effects of crowdsourcing. In particular, crowdsourced new product ideas are more novel, innovative, and financially successful compared with the ideas generated by in-house development teams (Nishikawa et al. 2013). Further, consumers who are aware of, but do not participate in, crowdsourcing activities perceive the contest-hosting brand to be more customer-oriented, democratic, empowering, and innovative (Dahl et al. 2015; Fuchs and Schreier 2011; Paharia and Swaminathan 2019; Schreier et al. 2012). Surprisingly, however, prior research has not investigated how participating in a crowdsourcing contest impacts the subsequent behavior of the participants. This is notable because those who participate in crowdsourcing contests are oftentimes the firm's current and, importantly, also future customers. Are there any unintended consequences on important outcome variables for the crowdsourcing firm?

We observe a backfire effect among consumers who participate in a crowdsourcing contest and lose. Specifically, we find that crowdsourcing participants whose ideas are not selected temporarily disengage from the brand. When people lose a crowdsourcing contest, the experience of losing negatively affects the participants’ word-of-mouth and short-term purchase behaviors. For example, Study 1a finds that crowdsourcing participants whose ideas for a café's new breakfast bundle were not selected were less likely to redeem their coffee coupons shortly after learning about the contest's outcome, as compared with participants who were not involved in the crowdsourcing (i.e., 9% vs. 21% redemption rate, respectively, within the first 10 days). Similarly, we find that losing participants were markedly slower to redeem their coupon (Study 1a), had a lower intent to patronize the contest-hosting firm (Study 2a), and less desire to engage in positive word of mouth about the underlying brand (Study 2a). Further, Study 1b demonstrates that lower customer engagement is mediated by negative feelings associated with not having won the contest (i.e., negative affect, perceived lack of contribution).

Our findings also provide a potential remedy for the disengagement that results from losing a crowdsourcing contest. In particular, we find that reframing the contest as a community activity (i.e., “Join the Crowd and help us find a name for our new restaurant”) rather than a competition (i.e., “Compete with the Crowd to be the one who names our new restaurant”) helps address the problem. For example, reframing the crowdsourcing activity from a competition to a community activity increased the intent to patronize the brand (Study 2b), the desire to engage in positive word of mouth (Study 2b), and spending at the contest-hosting firm's store shortly after learning about the campaign's outcome (Study 3). Community framing shifts attention away from losing the contest to collectively creating a superior outcome without changing the nature of the contest itself (e.g., participants continue to submit their ideas). As highlighted in Study 1b, community framing indeed brought about higher customer engagement intentions because the sore feelings of not having won the contest were less pronounced in the community framing condition, and participants in that condition had a stronger sense of having made a positive contribution to the final outcome.

Thus, we recommend that managers of crowdsourcing contests consider framing the contest as a community activity rather than as a competition. This recommendation is backed by our finding that the framing intervention did not affect the effort participants invested in the contest (Study 2b) or the quality of the ideas the participants submitted (Studies 2a and 2b). Furthermore, we found that the framing effect is particularly pronounced when participants invest more (vs. less) effort into the crowdsourcing task (Study 2b), suggesting that firms might benefit more from a community frame when the focal task requires more effort from participants. Future research might more systematically test the effort hypothesis using controlled experiments (i.e., where effort is manipulated) and field studies (i.e., where crowdsourcing is employed for more complex and time-consuming tasks). In addition, research could study the interrelations between the focal framing manipulation, the mediators (i.e., negative affect, perceived contribution), and the moderator (i.e., effort). For example, Study 2b suggests that effort might be beneficial for engagement in the community framing condition because more effort might imply a stronger perceived contribution to the joint outcome. Furthermore, future research may investigate how winners react to the community framing, an aspect we did not study. It is possible that the positive effect (community > competitive) observed among losers is less pronounced (maybe even reversed) among winners. Such results might matter for situations in which there are many winners (which was not the case in our studies). Relatedly, a limitation of the present research is that we randomly assigned participants to the various conditions in the experiments. While this can be considered a strength from an internal-validity perspective, it is unclear how the framing would affect consumers’ decision to participate in the crowdsourcing to begin with. On the one hand, competitive framing may recruit more participants owing to the opportunity to win rewards and recognition. On the other hand, community framing may recruit more participants owing to the opportunity to be “part of the community.” Relatedly, research is needed on how the framing affects the quality of the participants. While our investigation highlights why such questions are of interest, future research is needed to inform managers about the various implications and consequences of framing crowdsourcing differently.

Another interesting research question concerns the optimal number of winners. Theoretically, the best way to keep all participants happy is to create a setting where everyone is a winner or, at least, the number of winners is maximized. While seemingly unrealistic, this could be possible in online contexts where the cost of “producing” a winning idea is small. For example, IKEA and Gatwick Airport invited their customers to use Instagram to post brand-related visual content (e.g., home interior, terminal view). The content was then reposted on the brand's official Instagram page, quoting the creator. It is plausible that making all customers winners in a crowdsourcing contest might boost their subsequent engagement with the brand.

The studies provide evidence that losing a crowdsourcing contest results in temporary disengagement from the sponsoring brand. For example, aggregate redemption rates of the coffee coupons differed in the short term, but were equivalent after 30 days (Study 1a). Similarly, store patronage differed in the short term, but was equivalent after 28 days (Study 3). While these findings are consistent with our theory in H1a (i.e., losing participants’ desire to psychologically disengage and distance themselves from the contest-hosting brand will be particularly pronounced immediately after having learned about the outcome), they might also be interpreted to mean that managers do not need to worry too much about the consequences of losing a crowdsourcing competition. We caution the reader against drawing such a hasty conclusion. A different setting might bring about different results. With respect to Study 1a, the café had no direct competitors and was on a routine traffic route (i.e., participants walked past the café every day). With respect to Study 3, participants were loyal customers who received ten loyalty card points in exchange for participating (i.e., participating moved customers closer to reward goals, thus incentivizing future patronage). We would not be surprised if results change, such that the negative effects persist, when a crowdsourcing contest involves businesses with more direct competition, customer purchase behavior is less routine, and purchase prompts are absent. The absence of symbolic recognition for participants (e.g., a simple thank-you note) could also influence the persistence of negative effects (Fombelle et al. 2016; Hanine and Steils 2019; Piezunka and Dahlander 2019).

More broadly, we believe the severity and length of negative consequences exist along a continuum. On one end are the “sticky” consumers we investigated. These consumers presumably had a strong relationship with the contest-hosting brand and engaged in habitual purchasing (i.e., the brand feels like a friend; Fournier 1998). Sticky consumers experience disappointment at losing the crowdsourcing competition and temporarily disengage from the brand, but reengage after a period owing to prior habits, social influences, and perhaps self-serving forgiveness. On the other end of the continuum are the “switcher” consumers we did not investigate. These consumers are familiar with the contest-hosting brand but have a weak(er) relationship (i.e., the brand feels like an acquaintance; Fournier 1998). Switcher consumers will also experience disappointment at losing the crowdsourcing competition and disengage from the brand, but will have fewer opportunities to reengage with the brand owing to a lack of habits and less social influence. Thus, the negative consequences of losing a crowdsourcing competition should be more severe and long-lived for moderately, as opposed to strongly, invested consumers. We suggest that future research look into this matter more systematically.

Finally, Study 2a found that the framing manipulation also affected participants who merely observed (vs. participated in) the crowdsourcing contest. This finding is consistent with prior research showing that customers who observe a crowdsourcing competition feel more connected with other customers (Dahl et al. 2015). Our findings extend this line of research by showing that framing a crowdsourcing contest (community activity > competition) amplifies the observer effects. From a customer engagement perspective, we also found that observing seems better than participating, irrespective of the framing. Thus, publicizing a crowdsourcing initiative to nonparticipating prospective customers should be beneficial for the contest-hosting brand.

In conclusion, we argue that crowdsourcing managers are well advised to pay more attention to the management of their crowds, with an aim to optimize not only participation rates and idea quality but also customer engagement after the contest. Framing a crowdsourcing contest as a community activity, as opposed to a competition, is one way of avoiding potential relational damages among the majority of participants: those whose ideas are not winners.

Supplemental Material

Supplemental material, sj-pdf-1-jnm-10.1177_10949968231184417 for I Didn’t Win! An Overlooked Downside of Crowdsourcing? by Tatiana Karpukhina, Martin Schreier, Chris Janiszewski, and Hidehiko Nishikawa in Journal of Interactive Marketing

Footnotes

Acknowledgments

We thank WU Labs, Vienna SWBL Marketing Course V students, and Department of Marketing research assistants for their support in organizing lab studies and evaluating participants’ ideas. We thank Lawson Inc. for cooperation on Study 3. Funding from WU Vienna (Vienna University of Economics and Business) and the Austrian Federal Ministry of Education, Science and Research through the HRSM scheme supported this research.

Author Note

The present work was not done within Booking.com BV but during the first author’s prior employment at WU Vienna (Vienna University of Economics and Business).

Editor

Arvind Rangaswamy

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by WU Vienna (Vienna University of Economics and Business) and the Austrian Federal Ministry of Education, Science and Research.
