Abstract

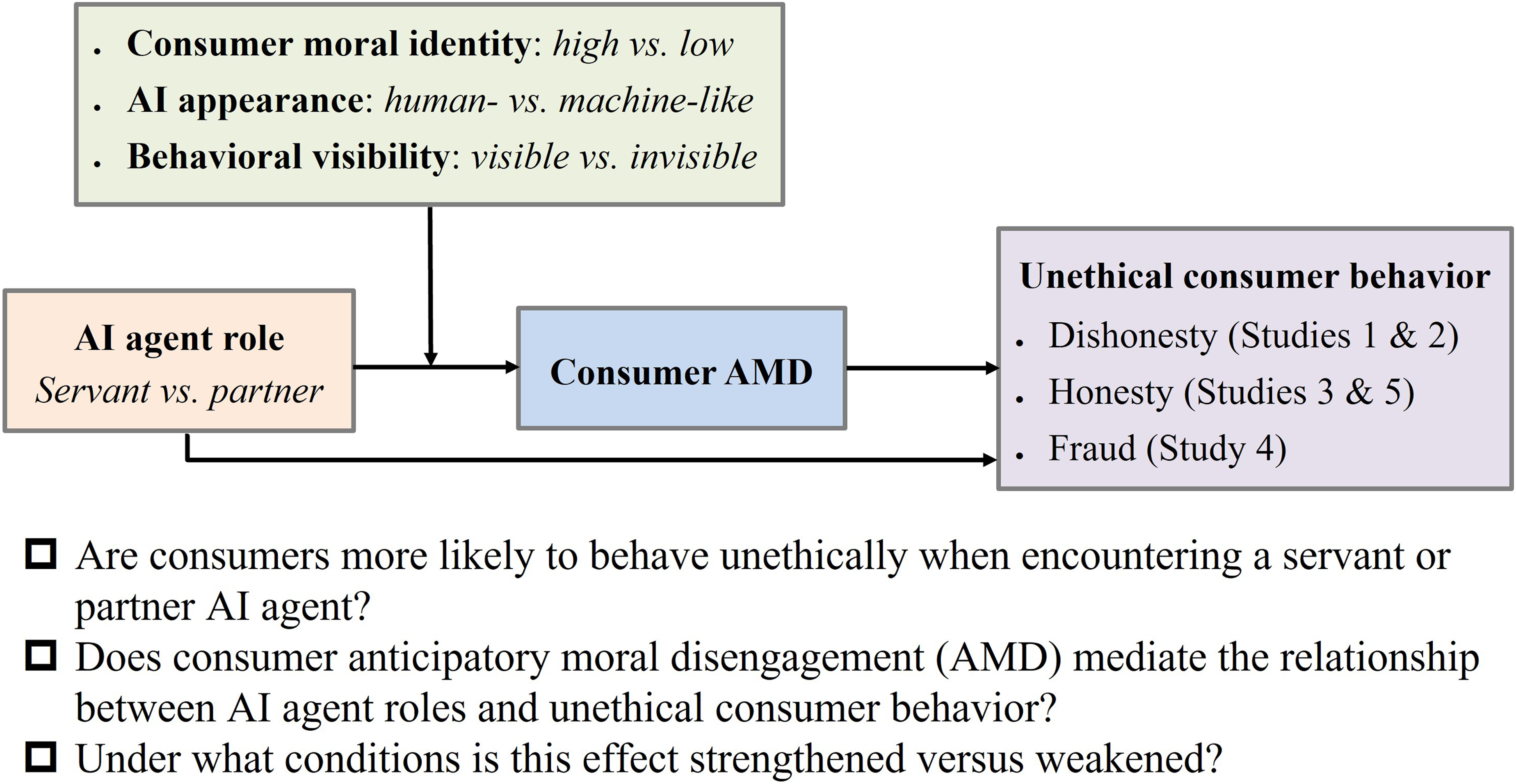

Recent research has shown that consumers tend to behave more unethically when encountering artificial intelligence (AI) agents than when encountering human agents. Nevertheless, few studies have explored how different types of AI agents affect unethical consumer behavior. From the perspective of the power relationship between AI and consumers, we classify the role of an AI agent as that of a “servant” or a “partner.” Across one field study and four scenario-based experiments (offline and online), we show that consumers are more likely to engage in unethical behavior when encountering servant AI agents than when encountering partner AI agents, because servant AI agents increase anticipatory moral disengagement. We also identify boundary conditions for this moral disengagement effect: it is attenuated (a) among consumers with high moral identity, (b) with human-like AI agents, and (c) in contexts of high behavioral visibility. This research contributes new insight to the AI morality literature and has practical implications for service agencies that use AI agents.
