Abstract

Computing technology has evolved significantly during the past five decades. As semiconductor scaling reaches physical and technological limits, it is driving many transformative changes in computing hardware. This has led to computing systems that rely heavily on multi-core processors and GPUs, and resulted in the development of specialized hardware for applications in machine learning and scientific computing. While modern hardware provides significant computing power, and therefore opportunities, it challenges many established algorithms and workflows in scientific computing: these algorithms may not be able to fully leverage modern hardware. Often, effective use of modern hardware entails revising algorithms, and even rewriting a considerable portion of existing code. Understanding technology trends in computing hardware is necessary for designing next-generation algorithms for scientific computing. This paper reviews these trends, along with their drivers, in a language that is accessible to computational and data scientists, and applied mathematicians. In this paper (Part I), we review technology evolution in general-purpose microprocessors and hardware accelerators, along with background material. In Part II (Hanindhito et al., 2026), we consider memory systems, inter-device communication, heterogeneous computing and system integration, energy consumption, and how these trends impact scientific computing.

Introduction

Motivation

Computing hardware has experienced many changes, especially during the last two decades: multi-core microprocessors are common; GPU computing is gaining traction among a broader group; multi-node computing is more routine in industry and academia due to interest in solving larger and more complex problems; there are several examples of specialized hardware being used for scientific computing; and there is renewed interest in more exotic forms of computing, such as quantum and neuromorphic computing.

Some of these changes are stimulated by the end of Moore’s law and semiconductor technology scaling: it is harder to double the processing power of modern chips every 2 years by doubling the number of transistors on a chip, at the same cost; moreover, power consumption is limiting the compute capacity of modern chips, as they typically have a higher power density, and cooling them is becoming increasingly challenging.

A forward-looking computational scientist, applied mathematician, algorithm developer, or an industry that relies heavily on scientific computing must carefully examine the impact of changes in computing hardware on their algorithms and workflows: should different algorithms be designed to better harness emerging hardware? Is it feasible to design specialized hardware for some compute-intensive workflows? What are possible consequences of inaction, i.e., using current algorithms and workflows on emerging hardware? What problems will become tractable a decade from now due to projected trends in computing hardware? Answering these questions, and quantifying their impact, are quite challenging, and may need a multi-year, multi-disciplinary effort.

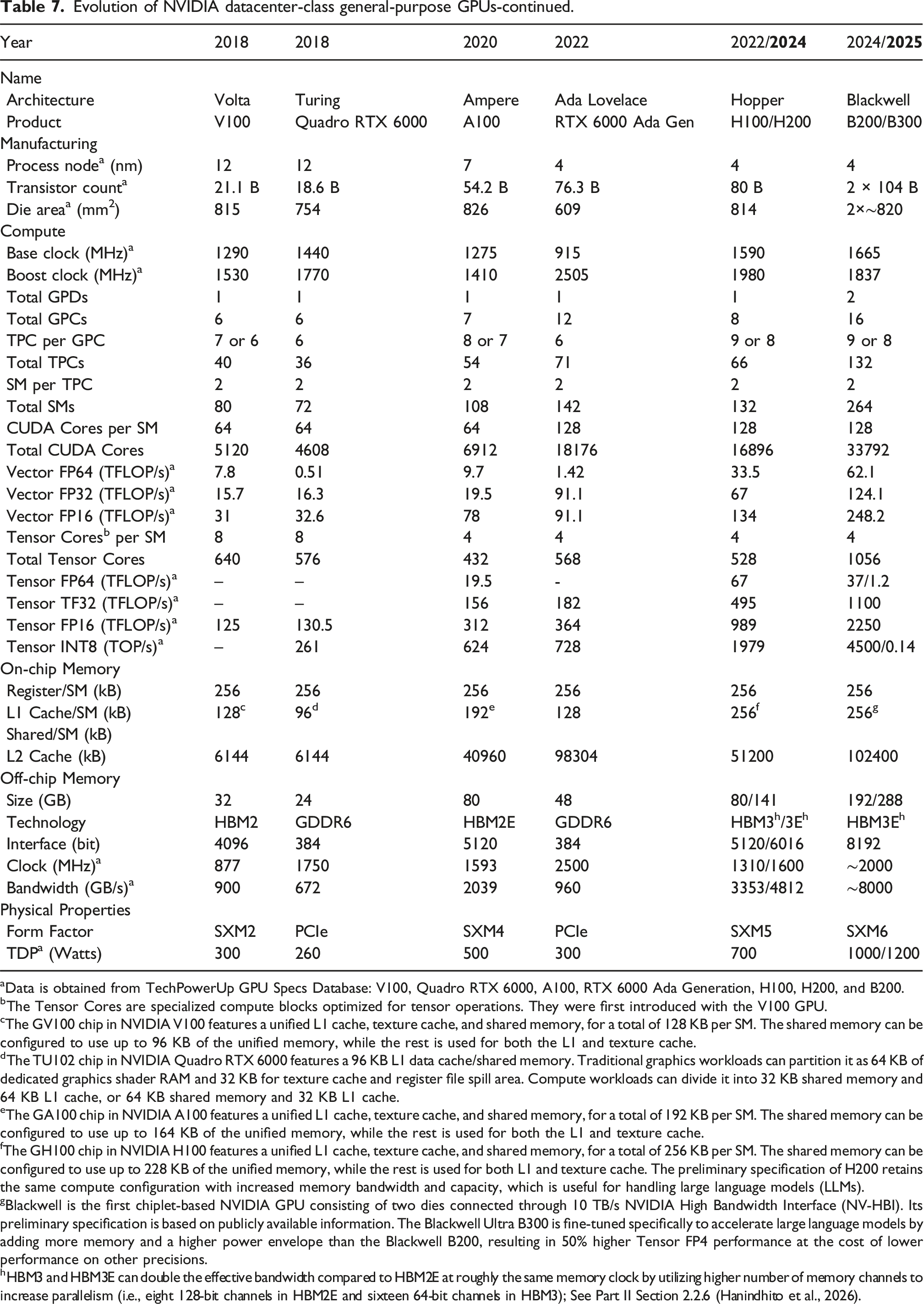

Generally speaking, computational and data scientists, and applied mathematicians, are not trained in computer architecture and technology trends in computing hardware at a level of detail that may guide strategic decision-making on future algorithms and workflows. Literature that provides more detail is often too technical, hard to read, not self-contained, or may not clearly outline the interaction between different parts and technologies.

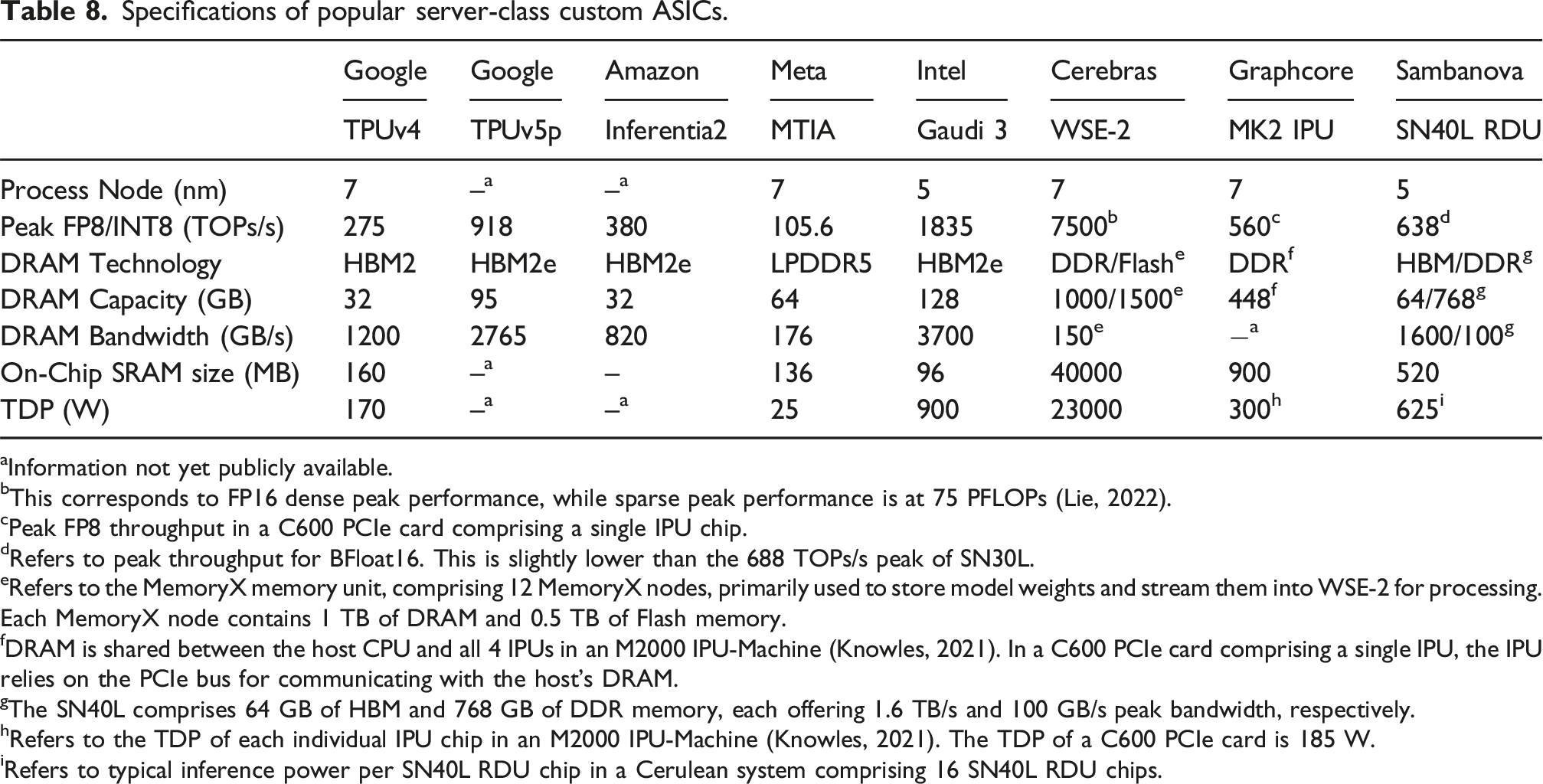

We attempt to bridge this gap: through a collaborative effort between hardware engineers, computational scientists, and applied mathematicians, we provide a holistic view of technology trends in computing hardware, 1 and highlight interactions between different components. We venture into how these trends may impact different algorithms that are commonly used in high-performance scientific computing. This effort is distinct from similar works, by providing a deep level of technical detail, while attempting to keep it accessible to a general computational scientist. By providing numerous examples and illustrations to clarify key trends, we help readers form their own judgement. To improve readability, helping readers see the big picture without getting lost in details, and keeping the paper self-contained, we provide certain details and examples in footnotes.

We believe this paper provides a clear and detailed perspective about technology trends in computing hardware to our targeted audience, along with their impacts on algorithms. These trends, along with domain knowledge, provide insights to computational scientists, and could position them to make informed decisions about designing algorithms and workflows that are expected to perform well on modern hardware, especially in industries that rely heavily on high-performance scientific computing, such as oil and gas, aerospace, defense, automotive, pharmaceutical, and finance.

Outline and summary

We begin each section by reviewing historical and current trends, along with future projections, based on publicly available industry roadmaps. We discuss how different technologies interact, and how they impact the overall performance. We end each section with takeaways, possibilities, and key challenges, along with our own opinions that are supported by the trends. In what follows, we provide a high-level summary and refer to different parts of the text for details. Acronyms used throughout the text are listed in Appendix 1.

Background material on key concepts in computing hardware, along with general trends, is covered in General technology trends and concepts in computing hardware. These concepts are repeatedly referred to throughout the rest of the paper, and therefore, we suggest readers review them before reading the rest of the text. Specifically, we highlight key characteristics of transistors, which are the fundamental building blocks of computer chips. These include the clock frequency, which impacts the switching speed and therefore runtime performance, as well as power consumption of transistors. Transistors have consistently become smaller over the years. This has allowed placing more of them in the same area, which typically results in more powerful chips. Moore’s law described the rate of transistor miniaturization at the same cost, and thus, it played a key role in setting expectations and guiding industry roadmaps. While some believe Moore’s law is losing steam, others believe it is no longer alive. Semiconductor scaling is facing physical and technological limits. Dennard scaling, which described how computer chips could keep their power consumption under control as transistors become smaller, does not hold anymore. Consequently, modern computers often need more energy to operate and therefore, energy efficiency has become a central issue in modern chip design.

As transistors become smaller, more of them are placed on the same silicon die area. This increases the possibility of defective chips as they become larger, and therefore results in lower yield. However, advances in packaging technology have resulted in larger and more powerful chips without impacting the yield, while keeping R&D and manufacturing costs under control. The small size of transistors also increases their vulnerability, which makes maintaining reliability for advanced chips increasingly challenging. Not only do modern chips have smaller transistors, but they also have thinner wires for internal connectivity. Wire scaling is challenging as it increases resistance and impacts signal integrity, and thus, has motivated the development of optical on-chip interconnects. In parallel, as semiconductor technology scaling is reaching its limits, chip designers need to find new ways to improve performance, resulting in the widespread adoption of diversification in architecture and implementation of modern chips.

Computer chips often need to communicate with off-chip components (e.g., other processors or memory) to perform computations. The communication is enabled through pins. Over the years, the number of transistors on a computer chip has grown much faster than the number of its pins. This has led to communication bottlenecks, and thus prompted substantial alleviation efforts through both hardware-centric and algorithmic approaches. The hardware-centric techniques often attempt to increase the communication bandwidth through new technologies, or by using the communication signals more efficiently, e.g., through signal modulation. The algorithmic approaches often attempt to reduce the communication at the expense of increasing local computations.

In General-purpose microprocessors, we review their evolution during the past several decades. As transistor scaling enabled placing more transistors on a chip, a significant portion of these transistors supported instruction-level parallelism, leading to more powerful, single-core chips. This strategy showed diminishing returns on performance in the early 2000s, and led to the development of multi-core processors. Since then, adding more cores to a chip generally has a greater impact on the overall performance. Indeed, sophisticated units within compute cores do not benefit many applications that enjoy less complexity (e.g., dense linear algebra). The rise of these applications led to the development of many-core processors. Future general-purpose microprocessors are becoming increasingly diverse to meet specific performance needs of various applications. This includes processors that enable a faster runtime, or processors that may not be as fast, but are more energy-efficient, as well as processors that have access to a significant amount of on-package memory.

In Hardware accelerators, we examine how these devices provide performance improvements for select applications. These applications often exhibit considerable regularity and structure, afforded by linear algebra, and a high computation-to-data-movement ratio. These conditions are typically observed in machine learning, and, sometimes, in scientific computing. Accordingly, the application’s structure can be exploited to improve how a chip utilizes silicon area and energy: more silicon can be dedicated to performing the actual computations, as opposed to units that manage on-chip flow control and data movement. In layman’s terms, well-behaved applications require less management, and therefore, the real estate that performs management can shrink to accommodate more workers. This specialization results in chips, such as GPUs, that have significantly more processing power, and consume less energy, compared to general-purpose microprocessors. Availability of well-developed and well-documented software stacks 2 has been a key enabler for the adoption of GPUs by a large group of computational and data scientists. Porting CPU code to GPUs is typically a tedious task. Often, the underlying algorithms need to be revised, and a considerable portion of the code needs to be rewritten to maximize performance. Not all workloads and algorithms are suited for GPUs, and general-purpose microprocessors are still needed for many applications. GPUs are still programmable hardware, and while efficient for some computations, they do not deliver the best performance for many applications. Given that technology scaling is reaching its limits, more aggressive hardware specialization remains among the very few options to further improve performance for the foreseeable future. Typically, a large market for the specialized hardware is needed to justify research and development costs, as well as the software support. Specialized hardware may be implemented on different fabrics (e.g., FPGA, CGRA, or ASIC), depending on performance requirements and the maturity of the targeted applications. We provide several examples of publicly known specialized hardware that are used in machine learning and scientific computing.

General technology trends and concepts in computing hardware

Concepts outlined in this part are fundamental to understanding the underlying reasons behind computing technology trends, and are repeatedly referred to in the paper. They are briefly explained to provide context, improve readability, and to keep the paper self-contained.

Clock frequency

Clock frequency has been used as a proxy for chip performance (Agarwal et al., 2000; Henning, 2000). The switching activity of the transistors is governed by a periodic pulse signal, called the clock (Xiu, 2019). The clock synchronizes the switching time of millions of transistors inside the chip (Friedman, 2001; Messerschmitt, 1990). This synchronization is vital for the chip to perform its operations correctly, including fetching data from peripheral devices (e.g., main memory (Cristal et al., 2005)), executing a stream of instructions (e.g., program code (Choi et al., 2004; Crawford, 1990)), and storing back the results. Therefore, the clock frequency, measured in Hz, determines how fast the transistors switch states between on and off, roughly translating into how many operations a chip can do per second (Messerschmitt, 1990). Several factors impact the highest possible clock frequency that a chip can run at, while maintaining the integrity of the data that flows throughout the circuitry. These are: a) intrinsic properties of the transistors, which depend on the process node 3 and manufacturing technologies (Geppert, 2002; Shahidi, 2007); b) operating voltage of the transistors (Liu and Svensson, 1993; Meijer et al., 2004); and c) the microarchitecture implementation of the chip itself (Boggs et al., 2004; Marculescu and Talpes, 2005).

Clock frequency may not always be a representative metric for performance. For instance, consider two chips with identical integer units, A and B: chip A runs at a clock frequency that is twice that of B, and B is equipped with a more advanced floating-point unit that can perform floating-point operations in 75% fewer clock cycles than A (e.g., 2 clock cycles for B vs 8 clock cycles for A). In a workload where 99% of the instructions are floating-point operations, chip B will perform nearly twice as fast as A, despite its lower clock frequency. However, in a workload with very few floating-point instructions, chip A will perform nearly twice as fast as B. Therefore, the clock frequency is not an accurate metric for comparing the performance of chips with different manufacturing technologies, operating conditions, and microarchitectures, which run diverse workloads.
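The two-chip comparison can be checked with a few lines of arithmetic. The sketch below is illustrative only: the absolute clock frequencies, the one-cycle cost of an integer instruction, and the assumption that instructions execute one after another without overlap are all hypothetical choices, not properties of any real chip.

```python
def runtime_s(n_int, n_fp, freq_hz, int_cycles, fp_cycles):
    """Execution time for a simple instruction mix with no overlap."""
    total_cycles = n_int * int_cycles + n_fp * fp_cycles
    return total_cycles / freq_hz


# Hypothetical parameters chosen to match the example in the text.
FREQ_B_HZ = 1.0e9            # chip B clock (assumed value)
FREQ_A_HZ = 2.0 * FREQ_B_HZ  # chip A runs twice as fast
INT_CYCLES = 1               # identical integer units (assumed 1 cycle)
FP_CYCLES_A = 8              # chip A floating-point latency
FP_CYCLES_B = 2              # chip B floating-point latency


def speedup_of_b_over_a(fp_fraction, n_instr=1_000_000):
    """Return t_A / t_B; values above 1 mean chip B finishes first."""
    n_fp = round(fp_fraction * n_instr)
    n_int = n_instr - n_fp
    t_a = runtime_s(n_int, n_fp, FREQ_A_HZ, INT_CYCLES, FP_CYCLES_A)
    t_b = runtime_s(n_int, n_fp, FREQ_B_HZ, INT_CYCLES, FP_CYCLES_B)
    return t_a / t_b


for fraction in (0.99, 0.50, 0.01):
    print(f"FP fraction {fraction:.2f}: t_A / t_B = "
          f"{speedup_of_b_over_a(fraction):.2f}")
```

With a floating-point-heavy mix the ratio approaches 2 (B wins), and with an integer-heavy mix it approaches 0.5 (A wins), which is why a single clock number cannot rank the two chips.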

Transistor power consumption and heat dissipation

Reducing the power consumption of modern transistors is arguably the biggest challenge in modern chip design (Dennard scaling). Electrical power consumed by transistors can be grouped into two major categories: dynamic power and static power.

CMOS 4 (transistors) consume dynamic power when switching states (Kaxiras and Martonosi, 2008; Kocanda and Kos, 2015). The majority of dynamic power consumption is due to charging and discharging of the parasitic capacitance 5 while a small portion is due to the short-circuit current. 6 The dynamic power ($P_d$) can be estimated through $P_d = C f V^2$, where $C$ is the (parasitic) capacitance that depends on the process node and manufacturing technologies, $f$ is the clock frequency, and $V$ is the operating voltage of the transistors. The operating voltage at a transistor gate should be greater than a threshold voltage ($V_t$) to turn it on (Weste and Harris, 2010). While increasing the clock frequency directly increases the dynamic power, a higher operating voltage is also required for the transistors to sustain faster switching activity and maintain the correctness of the operations (Le Sueur and Heiser, 2010). Therefore, increasing the clock frequency increases the dynamic power significantly, since the frequency enters linearly and the accompanying voltage increase enters quadratically.
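To make the combined effect of frequency and voltage concrete, the following sketch evaluates the dynamic power estimate (capacitance times frequency times voltage squared) for a baseline and for an overclocked operating point. All numerical values are hypothetical, including the assumption that a 50% higher clock needs a 20% higher voltage; real voltage-frequency curves are chip specific.

```python
def dynamic_power_w(capacitance_f, freq_hz, voltage_v):
    """Dynamic power estimate P_d = C * f * V^2, in watts."""
    return capacitance_f * freq_hz * voltage_v ** 2


# Hypothetical baseline operating point.
C_EFF_F = 1.0e-9      # effective switched capacitance (assumed)
BASE_FREQ_HZ = 2.0e9
BASE_VOLT_V = 1.0

# Hypothetical faster operating point: +50% clock, +20% voltage.
FAST_FREQ_HZ = 1.5 * BASE_FREQ_HZ
FAST_VOLT_V = 1.2 * BASE_VOLT_V

base = dynamic_power_w(C_EFF_F, BASE_FREQ_HZ, BASE_VOLT_V)
fast = dynamic_power_w(C_EFF_F, FAST_FREQ_HZ, FAST_VOLT_V)
power_ratio = fast / base  # equals 1.5 * 1.2**2

print(f"baseline: {base:.2f} W, faster: {fast:.2f} W, "
      f"ratio: {power_ratio:.2f}x")
```

Under these assumptions a 1.5x gain in clock frequency costs roughly 2.2x in dynamic power, which is the trade-off that dynamic voltage and frequency scaling exploits in the opposite direction.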

Static power is a consequence of current leakage (Butts and Sohi, 2000; Elgharbawy and Bayoumi, 2005). Ideally, when a CMOS transistor holds its state (either on, or off), no current should flow from the voltage source applied to the transistor. In reality, a small amount of current flows due to leakage. The leakage power increases as transistor size becomes smaller.

Power consumed by the transistors is converted into heat (Brooks et al., 2007; Gonzalez and Horowitz, 1996), which then must be removed to prevent damage. Cooling systems, including heat spreaders, heat sinks, and active cooling (e.g., fans) work together to remove the heat from an area on the order of square centimeters. High power density, and small surface area for thermal contact, make heat removal challenging (Mahajan et al., 2006a; Wei, 2008). For instance, the Intel Pentium 4 Netburst P68 micro-architecture (Boggs et al., 2004) was expected to reach 10 GHz by 2005 (Ogasawara, 2002). However, even at 3.8 GHz, its power density reached 105 W/cm², which is comparable to the power density of the core of a nuclear reactor (Gelsinger, 2001).

Supplying power to chips is bounded (Aygün et al., 2005; Radhakrishnan et al., 2021) due to physical limits on the amount of current that can be carried by wires and pins, from a power supply and power delivery system 7 to a chip (Lidow and Sheridan, 2003; Yazdani et al., 1997). The use of aggressive power management features, such as dynamic voltage and frequency scaling (DVFS) (Le Sueur and Heiser, 2010; Papadimitriou et al., 2019), plays an important role in keeping the chip power consumption under control. The term typical power is often used to indicate the sustained power that a modern microprocessor consumes under a long heavy load, 8 and is used to design the cooling system needed for heat removal (Ganapathy and Warner, 2008; Guermouche and Orgerie, 2022).

Transistor size and Moore’s law

Transistors are the building blocks of computer chips. They operate like a switch representing a binary value of 0 or 1 (i.e., off or on, respectively). Generally speaking, placing more transistors on a chip improves its processing power.

Gordon Moore, the co-founder of Intel, made several predictions about transistor miniaturization trends due to technology improvements in Moore (1965) and in a 1975 paper reprinted in Moore (2006). His best-known forecast (1975), which is widely known as Moore’s law, predicted a shrinking of transistor sizes that corresponds to a doubling of transistor density on chips every 2 years. When Moore made his prediction, the design and manufacturing cost of a chip was proportional to its area. Therefore, Moore’s law was intrinsically an economic forecast, stating that computer chips would become twice as powerful every 2 years, at the same price.
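The doubling rule compounds quickly, which a short projection makes visible. This is a minimal sketch of the idealized forecast only; the starting transistor count is a hypothetical value, and real products did not follow the curve exactly.

```python
def projected_transistors(initial_count, years, doubling_period_years=2.0):
    """Idealized Moore's-law projection: doubling every fixed period."""
    return initial_count * 2.0 ** (years / doubling_period_years)


# Hypothetical starting point: a chip with one million transistors.
START_COUNT = 1.0e6

for elapsed_years in (2, 10, 20):
    count = projected_transistors(START_COUNT, elapsed_years)
    print(f"after {elapsed_years:2d} years: {count:.3g} transistors")
```

Ten years correspond to five doublings (a factor of 32), and twenty years to ten doublings (a factor of 1024), which explains why many applications once gained performance with minimal algorithmic changes.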

Consequences of Moore’s law were significant: it guided technology roadmaps and became a self-fulfilling prophecy; furthermore, many applications enjoyed performance improvements proportional to transistor scaling rates while making minimal changes to their algorithms.

While chip manufacturers still go to great lengths to shrink transistor size according to Moore’s law, the economic aspects of Moore’s forecast are no longer sustained. For instance, Jensen Huang, CEO of NVIDIA, believes Moore’s law is dead (Jain and Murugesan, 2021). Transistor scaling has been challenged by physical 9 and technological limits. We highlight the technological challenges in Transistor power consumption and heat dissipation, Dennard scaling (the need for more power), Transistor count and yield, and manufacturability (lower yield due to higher transistor density on a chip), and Cost of designing and manufacturing semiconductors (skyrocketing costs of designing and manufacturing of modern chips).

The end of Moore’s law has far-reaching impacts. Since performance can no longer be improved through technology scaling, new strategies are being pursued. These are highlighted in Advanced packaging technologies (to improve yield and reduce cost), Hardware accelerators (increasing hardware diversity to maximize performance of various application groups), and Part II: Near-memory processing (NMP) and processing-in-memory (PIM) (Hanindhito et al., 2026) (moving away from von Neumann architecture).

Dennard scaling

The end of Dennard scaling has made power consumption the central issue in modern chip design. As a result, modern chips need more power to run, and their cooling has become more difficult. It has created unprecedented challenges in supplying power to supercomputing and data centers (Part II: Energy consumption of large computing centers and its implications (Hanindhito et al., 2026)).

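Dennard et al. (1974) published MOSFET scaling rules, which are widely known as Dennard scaling. Dennard described scaling relationships between transistor density, switching speed, and power dissipation due to MOSFET miniaturization. Dennard scaling states that by scaling down the transistor size by a factor of $\kappa$, the voltage ($V$), electrical current ($I$), and capacitance ($C$) will each be scaled by a factor of $1/\kappa$. Under these rules, the switching delay also scales by $1/\kappa$, so the clock frequency can increase by $\kappa$, and the power per transistor scales by $1/\kappa^2$. Since the transistor density increases by $\kappa^2$, the power density remains constant.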

Dennard scaling did not take into account the existence of a physical lower limit for the operating voltage ($V$), imposed by the threshold voltage ($V_t$) (Taur, 1999a), as well as implications of scaling down the threshold voltage (Stillmaker and Baas, 2017; Taylor, 2013). It assumed the operating voltage and the threshold voltage could continue to scale down as transistors become smaller. However, decreasing the threshold voltage in smaller transistors increases the current leakage 12 (Ahmed and Schuegraf, 2011; Kim et al., 2003), which leads to increased static power consumption (Kao et al., 2002). This makes both static and dynamic power equally important 13 when transistor size becomes very small (Sylvester and Kaul, 2001). At nanometer scales, power density can no longer be held constant. Power density increases as the transistors become smaller, causing the “power wall” (Wang and Skadron, 2013). This marks the end of Dennard scaling, which occurred in the mid-2000s (DeBenedictis, 2017; Taylor, 2013).
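The breakdown can be illustrated with the dynamic power estimate alone. The sketch below assumes the classical Dennard rules, in which voltage and capacitance each scale by 1/κ, frequency scales by κ, and transistor density scales by κ², and compares them with a hypothetical regime in which the voltage can no longer be lowered. It deliberately ignores leakage (static power), so it understates the real problem.

```python
def power_density_ratio(kappa, voltage_scales=True):
    """Relative dynamic power density after shrinking dimensions by kappa.

    A result of 1.0 means power density is unchanged from the
    previous generation.
    """
    capacitance = 1.0 / kappa          # C scales by 1/kappa
    frequency = kappa                  # f scales by kappa
    voltage = 1.0 / kappa if voltage_scales else 1.0
    power_per_transistor = capacitance * frequency * voltage ** 2
    transistors_per_area = kappa ** 2  # density scales by kappa^2
    return power_per_transistor * transistors_per_area


KAPPA = 2.0  # hypothetical shrink factor per generation

ideal = power_density_ratio(KAPPA, voltage_scales=True)
stalled = power_density_ratio(KAPPA, voltage_scales=False)

print(f"ideal Dennard scaling:  power density x{ideal:.1f}")
print(f"voltage held constant:  power density x{stalled:.1f}")
```

With ideal scaling the power density is unchanged, whereas holding the voltage constant lets it grow as κ², so designers must give up frequency gains or leave part of the chip idle to stay within a fixed power budget.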

Transistor count and yield, and manufacturability

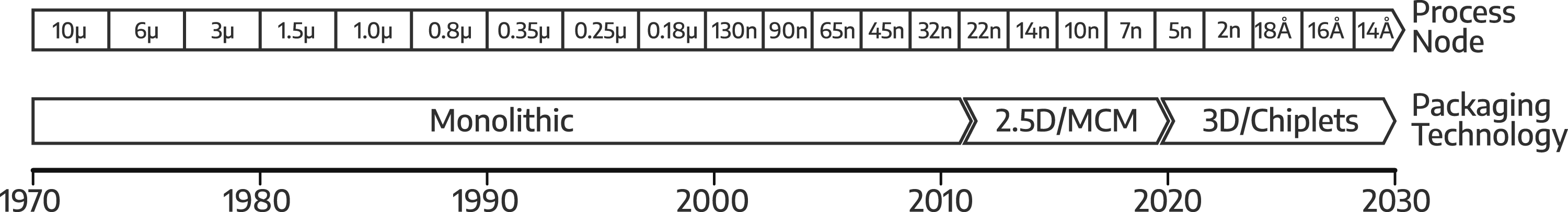

Figure 1 shows historical data on the advancement of semiconductor process nodes and a projection for the next few years. When transistor size becomes a few nanometers, it approaches physical and technological limits (Naveh and Likharev, 2000; Taur, 1999b). This makes further shrinking of the size challenging, as indicated by the slowdown in the advancement of the process node.

Due to the slowdown in transistor scaling, larger silicon dies became popular, enabling higher transistor counts. Larger dies increase the possibility of defects, thus lowering the manufacturing yield and increasing manufacturing costs (Mack, 2015; Sun et al., 2020).

Figure 1. Semiconductor process node and packaging technology trends since the 1970s, with projections up to 2030.

However, the maximum die size that can be manufactured for future process nodes is decreasing. The reticle limit, currently at 26 mm × 33 mm (858 mm²) (Lai, 2021; Suzuki, 2020), acts as the upper limit on how large a silicon die can be manufactured. The reticle limit is expected to be halved to 26 mm × 16.5 mm (429 mm²) due to the anamorphic lens array used in the upcoming process node. Advances in packaging technologies allow the compartmentalization of a chip, by using multiple smaller dies called chiplets. This improves the yield and reduces costs, while conforming to the reticle limit of future process nodes.
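The link between die area and yield can be sketched with a simple Poisson defect model, in which the probability that a die has no defects is exp(-area × defect density). This model, the defect density, and the die sizes below are assumptions introduced for illustration and are not taken from the cited works; the sketch also ignores assembly yield and the extra area consumed by die-to-die interfaces.

```python
import math


def poisson_yield(die_area_mm2, defects_per_cm2):
    """Fraction of defect-free dies under a Poisson defect model."""
    area_cm2 = die_area_mm2 / 100.0  # 1 cm^2 = 100 mm^2
    return math.exp(-area_cm2 * defects_per_cm2)


# Hypothetical values for illustration only.
DEFECT_DENSITY = 0.1        # defects per cm^2 (assumed)
MONOLITHIC_AREA_MM2 = 800   # one large die near the current reticle limit
CHIPLET_AREA_MM2 = 200      # the same logic split into four chiplets

monolithic_yield = poisson_yield(MONOLITHIC_AREA_MM2, DEFECT_DENSITY)
chiplet_yield = poisson_yield(CHIPLET_AREA_MM2, DEFECT_DENSITY)

print(f"monolithic die yield: {monolithic_yield:.1%}")
print(f"per-chiplet yield:    {chiplet_yield:.1%}")
```

Because each chiplet can be tested before packaging, a defect discards only a small die rather than the whole large one, so a larger fraction of the manufactured silicon ends up in working products.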

Advanced packaging technologies

Advances in silicon packaging 15 technologies (Figure 1) (Su et al., 2017) will play an important role in increasing the number of transistors on a package in the near future. We highlight two key technologies: Multi-Chip Modules (MCMs) (Naffziger et al., 2021) and chiplets.

An MCM uses multiple (often similar) silicon dies to form a large chip (Arunkumar et al., 2017; Burd et al., 2019; Su et al., 2017). While MCM has been around since the 1980s, its popularity has increased in recent years to overcome the low-yield challenge and reticle limit (Transistor count and yield, and manufacturability). The silicon dies communicate through wires on the packaging substrate. 16 Traditionally, the dies are connected to the wires on the package substrate using wire-bond or flip-chip technology, which is referred to as 2D packaging. However, the wires in the package substrate are orders of magnitude larger than those in the silicon dies. The thicker wires and low wire density lead to routing congestion and therefore limit the attainable die-to-die bandwidth.

To improve connectivity between the silicon dies, an interposer layer is placed between the dies and the packaging substrate. In addition to bridging the connection between the silicon dies and the packaging substrate through vias, the interposer also acts as a conduit for connections between the silicon dies. Interposers are often manufactured using silicon, 17 hence the name silicon interposer. Silicon interposers provide significantly higher wire density, allowing the implementation of high-bandwidth connections between dies. This is referred to as 2.5D packaging technology (Lenihan et al., 2013; Zhang et al., 2013), in which the silicon dies are placed side-by-side. Further advancement of this technology is referred to as 3D stacking, which allows multiple dies to be stacked on top of each other (Agarwal et al., 2022; Su et al., 2017) and connected through vias. 18 While 3D stacking technologies are promising, they face many challenges, such as thermal issues 19 (Gomes et al., 2020; Su et al., 2017).

Specialization of silicon dies is becoming more common. Accordingly, a silicon die may perform only a specific function and is thus manufactured using the process node best suited for that function. A die that results from this type of specialization and compartmentalization is called a chiplet (Loh et al., 2021; Naffziger et al., 2020). Just like in a puzzle, multiple chiplets with various functions 20 are used to build a modern System-on-Chip (SoC) MCM, which has continuously grown in popularity over the past decades. The use of chiplets is the recipe to keep Moore’s law (perhaps nominally) alive, and is expected to result in chips with a trillion transistors by 2030 (Gelsinger, 2022; Kelleher, 2022; Moore and Schneider, 2022). Note that communication interfaces between chiplets consume additional silicon area and power. Finally, the development of future scalable interconnect technologies is critical to allow for low-latency and high-bandwidth communication between chiplets (Chirkov and Wentzlaff, 2023).

Reliability and availability of computing systems

Transistor size is getting smaller due to advanced manufacturing, leading to increased transistor density on advanced chips, which enables chips to carry out increasingly complex functions. The smaller size of transistors and the rise of complexity make computer systems more susceptible to reliability issues. In this sense, reliability may be defined as a measure of success, where a computing system’s behavior conforms to its specifications over a given operating period (Shooman, 2002). Failure happens when the behavior of the system deviates from its specifications. The relative proportion of time the system meets its specifications is called availability, which depends on the duration of failures as well as the time needed to fix them.
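Availability, as defined above, can be written as uptime divided by total time. A common way to express this, used here as an assumption rather than a formula from the cited work, is in terms of the mean time to failure (MTTF) and the mean time to repair (MTTR). The numbers in the sketch are hypothetical.

```python
def availability(mean_time_to_failure_h, mean_time_to_repair_h):
    """Steady-state availability: fraction of time the system is usable."""
    uptime = mean_time_to_failure_h
    downtime = mean_time_to_repair_h
    return uptime / (uptime + downtime)


# Hypothetical system: fails on average every 999 hours, 1 hour to repair.
example = availability(999.0, 1.0)

# The same failure rate with a slower, 24-hour repair process.
slow_repair = availability(999.0, 24.0)

print(f"fast repair: {example:.4f}, slow repair: {slow_repair:.4f}")
```

The comparison shows that availability depends on how quickly faults are fixed as much as on how rarely they occur.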

An error is defined as an incorrect state of information stored in the system, whereas a fault is the cause of the error. The fault sources include component failure, equipment damage, interference (cross-talk) between wires, power disturbance, induction due to lightning, electromagnetic fields, electrical noise, and radiation (Randell et al., 1978). Radiation is a common cause of fault, especially for chips that are manufactured through smaller process nodes (Schrimpf et al., 2008), as they become more sensitive to it. Cosmic rays and alpha particles are common sources of radiation. They can cause soft errors (e.g., bit flips) in computing systems, particularly in logic and memory. A soft error is a non-permanent and non-recurring error, which corrupts information while the device itself may still function properly (Karnik and Hazucha, 2004). On the other hand, a hard error is often permanent (e.g., due to hardware failure) and may be repairable (Wang and Agrawal, 2008).

There are two approaches for achieving reliability in computing systems: fault intolerance and fault tolerance (Avižienis, 1975). Fault intolerance seeks to eliminate sources of unreliability. Since elimination of all possible sources of fault is not possible, fault intolerance reduces the probability of fault occurrence to an acceptably low level, and devises maintenance procedures should a fault occur. However, maintenance will impact system availability significantly, which may not be acceptable for some critical computing systems. On the other hand, fault tolerance tolerates sources of unreliability, and seeks to counteract the consequences by using protective redundancy. Accordingly, systems that adopt fault tolerance can continue to operate despite the existence of errors, either at full or reduced capacity and capability, until the fault is addressed. For instance, aircraft computer systems use the Multiple-Instruction Single-Data (MISD) approach, where multiple computer systems operate on the same data simultaneously. Next, the outputs are compared through a majority-voting mechanism. We remark that achieving reliability raises the cost of increasingly expensive modern computing systems.
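The majority-voting step can be sketched in a few lines. This is a software illustration of the idea only, with a hypothetical function name and hypothetical replica outputs; real avionics voters are implemented in hardware or certified software with additional safeguards.

```python
from collections import Counter


def majority_vote(outputs):
    """Return the value produced by a strict majority of the replicas.

    Raises RuntimeError when no value has more than half of the votes,
    since the fault can then no longer be masked.
    """
    value, count = Counter(outputs).most_common(1)[0]
    if 2 * count > len(outputs):
        return value
    raise RuntimeError("no majority among redundant outputs")


# Three redundant units compute the same quantity; one suffers a bit flip.
replica_outputs = [42, 42, 41]
voted = majority_vote(replica_outputs)
print(f"voted output: {voted}")
```

With three replicas, a single faulty output is outvoted and the system keeps operating, at the cost of tripling the hardware.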

Wiring, connectivity, and signal integrity

In this part, we discuss the medium through which data is transmitted, which impacts the achievable bandwidth (Bogatin, 2022; Cho et al., 2007; Saraswat et al., 2008) as it affects signal integrity. The most widely used medium for carrying electrical signals is metal wire, such as aluminum and copper. Carbon Nanotubes (CNT) are also briefly discussed as a potential replacement for metal-based wires. Optical interconnects, which have gained more traction in recent years and serve as alternatives to electrical interconnects, are explored in Optical interconnects.

A widely used metric to measure the performance of wires for carrying electrical signals is propagation delay. There are several approaches for modeling the propagation delay, based on physical characteristics of the wires, and their interaction with other materials 21 (Seckin and Yang, 2008; Zhou et al., 1988). A simple but common approach is the RC model, 22 which relies on resistance (R) and capacitance (C) of a wire to estimate the propagation delay (Qian et al., 1994; Sakurai, 1993). The resulting estimate is also referred to as RC delay (Ciofi et al., 2016; Sylvester and Keutzer, 1998). The resistance of a wire depends on its material properties and geometry (Ciofi et al., 2016; Savage, 2002). The capacitance of a wire is influenced by interactions between adjacent wires, and dielectric materials 23 (Duan et al., 2001; Ruehli and Brennan, 1975; Zhao et al., 2009).

As the number of transistors on a chip grows, the number of wires that provide connectivity between them has to increase as well (Edelstein et al., 1995). Most of the chip area has already been covered by wires, which limits space for laying out new wires. Therefore, more metal layers are being used (Gelatos et al., 1994; Koyanagi et al., 1998) to construct local, intermediate, and global on-chip interconnects. 24 Increasing the number of metal layers complicates the manufacturing processes, and has negative impacts on the capacitance and cross-talk of the wires (Duan et al., 2001; Sim et al., 2003). In principle, making the wires smaller increases wire density. However, unlike transistors, metal interconnects do not benefit from further scaling (JM Veendrick, 2017; Koo et al., 2007). Scaling negatively impacts metal interconnects: smaller wires have higher resistance, and carry less electrical current (Edelstein et al., 1995; Srivastava and Banerjee, 2004). Increased wire density also exacerbates cross-talk and capacitance effects between wires (Naeemi et al., 2006), affecting signal integrity.
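A back-of-the-envelope version of the RC model shows why shrinking wires hurts. The sketch below computes wire resistance from resistivity and geometry and multiplies it by the total wire capacitance; the proportionality constant of the delay is omitted. The wire dimensions and the capacitance per unit length are hypothetical values, and the bulk copper resistivity used here is lower than the effective resistivity of real nanoscale wires.

```python
RHO_COPPER_OHM_M = 1.68e-8  # bulk copper resistivity near room temperature


def wire_resistance_ohm(rho_ohm_m, length_m, width_m, thickness_m):
    """Resistance of a rectangular wire: R = rho * L / (W * T)."""
    return rho_ohm_m * length_m / (width_m * thickness_m)


def rc_delay_s(rho_ohm_m, length_m, width_m, thickness_m, cap_per_m):
    """RC product of a wire; the delay is proportional to this value."""
    resistance = wire_resistance_ohm(rho_ohm_m, length_m, width_m,
                                     thickness_m)
    capacitance = cap_per_m * length_m
    return resistance * capacitance


# Hypothetical 1 mm wire with an assumed 0.2 fF per micrometer.
LENGTH_M = 1.0e-3
CAP_PER_M = 2.0e-10

wide = rc_delay_s(RHO_COPPER_OHM_M, LENGTH_M, 100e-9, 200e-9, CAP_PER_M)
narrow = rc_delay_s(RHO_COPPER_OHM_M, LENGTH_M, 50e-9, 100e-9, CAP_PER_M)
longer = rc_delay_s(RHO_COPPER_OHM_M, 2 * LENGTH_M, 100e-9, 200e-9,
                    CAP_PER_M)

print(f"halving width and thickness: RC grows {narrow / wide:.1f}x")
print(f"doubling the length:         RC grows {longer / wide:.1f}x")
```

Under the simplifying assumption that capacitance per unit length stays fixed, halving both cross-sectional dimensions quadruples the RC product for the same length, and doubling the length also quadruples it because both resistance and capacitance grow with length.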

Early process nodes used aluminum (Al) for on-chip wires. However, aluminum could not meet interconnect performance requirements for process nodes beyond 180 nm (Staff, 2019; Sylvester and Keutzer, 1998). The move from aluminum to copper (Cu) in the late 1990s for metal wires (Andricacos, 1999; Theis, 2000), and the use of low-κ dielectric materials in the 2000s (Beyne, 2003), provided a one-time opportunity to improve propagation delay. 25 Despite their more complicated manufacturing process (Andricacos et al., 1998; Gelatos et al., 1994, 1996), copper wires have significantly lower resistance, 26 and higher durability, compared to aluminum wires. For a while, this allowed copper wires to be made smaller, keeping pace with transistor scaling. Eventually, however, technology scaling became more challenging for copper, due to complications in electrical conductivity, reliability, 27 latency, and power dissipation (Kapur et al., 2002; Tőkei et al., 2016). Copper reached its limits 28 at 40 nm (Kaushik et al., 2007). IBM estimated that new metal materials 29 are needed for constructing on-chip wires beyond 15 nm (Bonilla et al., 2020; Huang et al., 2020; Staff, 2019).

Replacing copper with carbon nanotubes (CNT) for on-chip wires has been proposed since the early 2000s (Joshi and Soni, 2016; Xu et al., 2022). CNT offers higher reliability than copper, due to its mechanical and thermal stability. It reduces delay for intermediate and global on-chip interconnects by about 30% (Naeemi et al., 2006; Nieuwoudt and Massoud, 2008). However, integrating CNT onto a chip is challenging, due to immature manufacturing techniques, imperfect metal-to-CNT contacts, and the high growth temperature 30 of CNT, which result in a higher probability of defects and low achievable wire density (Kaushik et al., 2014; Pasricha et al., 2010; Xu et al., 2022).

Optical interconnects

Some researchers are considering the possibility of moving away from electrical signals to optical signals and optical interconnects for on-chip communication, thus limiting the number of on-chip metal wires. Optical signals propagate at the speed of light in the waveguide material and are not limited by the RC delay of metal wires. Therefore, they can provide significantly higher bandwidth for data transmission with lower latency compared to electrical signals. Optical interconnects have also proven to be reliable, performant, and efficient for long-distance inter-node communication (Part II: Inter-node communication (Hanindhito et al., 2026)). Indeed, research on optical interconnects and silicon photonics 31 dates back to the 1980s (Soref and Bennett, 1987; Soref and Larenzo, 1986). Earlier studies showed that using optical signals for on-chip interconnects may not be effective due to the silicon area and power required by electrical-optical conversion devices (Kobrinsky et al., 2004; Sato et al., 2015). Nevertheless, optical interconnects have several merits, compared to copper wires, which make them attractive for process nodes beyond 10 nm.

Co-existence of electrical interconnects and optical interconnects on future chips is likely: electrical interconnects may be used to realize local on-chip connections, whereas optical interconnects can be used to realize intermediate and global on-chip connections (Chen et al., 2006; Cheng et al., 2016; Kapur and Saraswat, 2002; Saraswat et al., 2008). The minimum distance for which using an optical interconnect becomes more efficient than a corresponding electrical interconnect is referred to as the critical length, which gets shorter as more advanced process nodes are used (Chen et al., 2006; Kaushik et al., 2007). The critical length is estimated by using multiple metrics, which measure the performance 32 of an optical interconnect, compared to a corresponding electrical interconnect (Miller, 2009; Rakheja and Kumar, 2012).

An on-chip optical interconnect comprises several components: light-sources, modulators, wave-guides, and photo-detectors. Light-sources can be either on-chip, or off-chip lasers; they are being actively studied in the silicon photonics field (Li et al., 2022; Zhou et al., 2023). On-chip lasers, such as VCSEL, 33 are more efficient than off-chip lasers: they can be modulated at GHz frequencies, at the expense of increased power consumption, and reduced thermal dissipation (Amann and Hofmann, 2009). Off-chip lasers have low efficiency: they must be turned on almost all the time, to avoid light-generation delays; their integration is also more complex, since waveguides must be constructed to distribute the laser light to each on-chip optical modulator (Bai et al., 2011; Cadien et al., 2005; Peng et al., 2010). The modulator modulates light to encode data 34 (Section 2.14). The modulated light then travels through the waveguides (Mekawey et al., 2022; Ryu et al., 1999) across the chip. Constructing on-chip waveguides to distribute the optical signals is not an easy task. It entails utilizing low-loss materials (Cadien et al., 2005), minimizing power consumption and efficiency loss (Bashir et al., 2019; Rahman et al., 2008), and finding an efficient topology and protocol for the waveguides (Le Beux et al., 2014; Werner et al., 2017).

While on-chip optical interconnects face challenges (Bashir et al., 2019; Chen and Segev, 2021), they have advantages over electrical interconnects (Cho et al., 2008). Optical interconnects enjoy minimal cross-talk and interference, allowing them to maintain signal integrity over long distances (Turkane and Kureshi, 2017). Moreover, due to wavelength division multiplexing (WDM), 35 optical interconnects can achieve significantly higher bandwidth compared to copper wires. With further improvements in light sources, modulators, and wave-guides, higher efficiency can be achieved, resulting in significantly lower energy requirements for data movement, compared to electrical interconnects (Karabchevsky et al., 2020). In addition to being used for on-chip interconnects, optical interconnects may also become candidates for connecting chiplets (Ayar Labs Inc., 2021; Hao et al., 2021; Wade et al., 2020), replacing copper wires on PCB to bridge multiple devices with massive bandwidth (Aleksic, 2017; Sharma et al., 2021), and also becoming the backbone of disaggregated infrastructure (Part II: Disaggregated infrastructure (Hanindhito et al., 2026)).

Cost of designing and manufacturing semiconductors

Design cost 36 of next-generation chips is expected to increase significantly, as the industry moves to smaller process nodes (Li et al., 2020). Both International Business Strategy Corporation (IBS) (Hruska, 2018) and McKinsey (Bauer et al., 2020) estimated the chip design cost to be: $28.5 M for a chip in a 65 nm process node, $51.3 M for a 28 nm process node (nearly doubled), $106.3 M for a 16 nm process node (roughly doubled), and $297.8 M for a 7 nm process node (nearly tripled). The design cost of a chip in the latest 5 nm process node is estimated to be around $542 M, and the design cost for a future 3 nm process node is expected to reach $1 B. Moreover, the investment needed to build a semiconductor manufacturing facility (foundry) has also increased for smaller process nodes: $0.4 B for 65 nm, $0.9 B for 28 nm, $1.3 B for 16 nm, $2.9 B for 7 nm, $5.4 B for 5 nm, and $15 B to $20 B for 3 nm (Hruska, 2018).
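The step-to-step growth can be checked directly from the quoted design-cost figures. The short sketch below computes the ratio between consecutive process nodes; the dictionary and function names are illustrative, and the numbers are simply those stated in the text.

```python
# Design-cost estimates quoted in the text, in millions of US dollars.
DESIGN_COST_M = {
    "65 nm": 28.5,
    "28 nm": 51.3,
    "16 nm": 106.3,
    "7 nm": 297.8,
    "5 nm": 542.0,
}


def growth_factors(costs):
    """Return (from_node, to_node, ratio) for each consecutive pair of nodes."""
    nodes = list(costs)
    return [
        (prev, nxt, costs[nxt] / costs[prev])
        for prev, nxt in zip(nodes, nodes[1:])
    ]


if __name__ == "__main__":
    for prev, nxt, ratio in growth_factors(DESIGN_COST_M):
        print(f"{prev} -> {nxt}: x{ratio:.2f}")
```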

The significant cost of designing and manufacturing modern chips may impact the economic feasibility of deploying custom-chips for certain scientific computing applications, especially those that are attractive to smaller markets. An alternative is to design and build chips in older and more cost-effective process nodes, especially when the absolute highest performance is not required.

Execution model, architecture, and implementation style

Since transistor scaling can no longer be a major driver of performance, there is growing interest in designing different classes of chips that perform well for a specific application or a group of applications. In this part, we comment on the execution model, architecture, and implementation style of different types of chips, their significance, and how they impact performance.

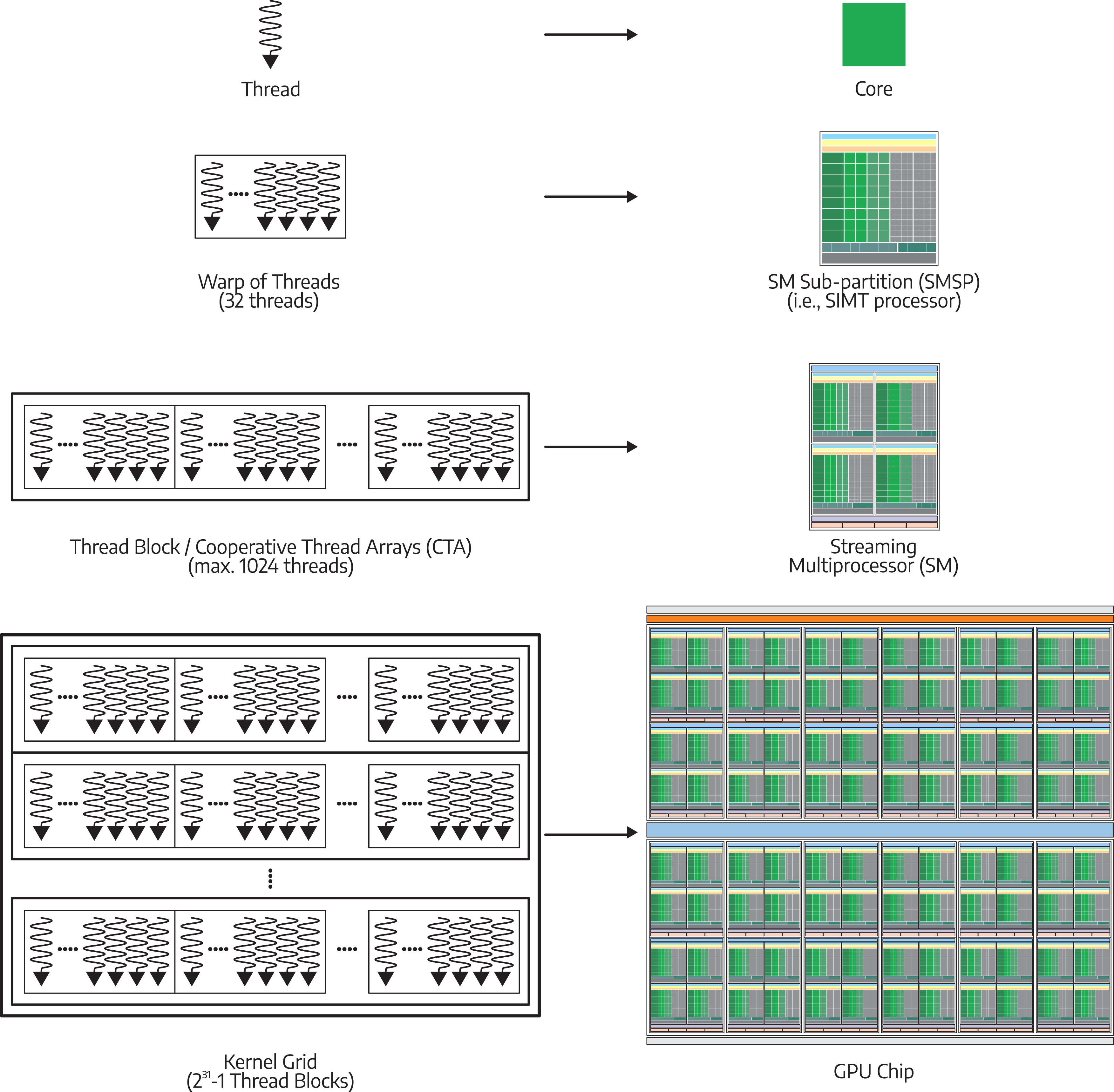

Execution model

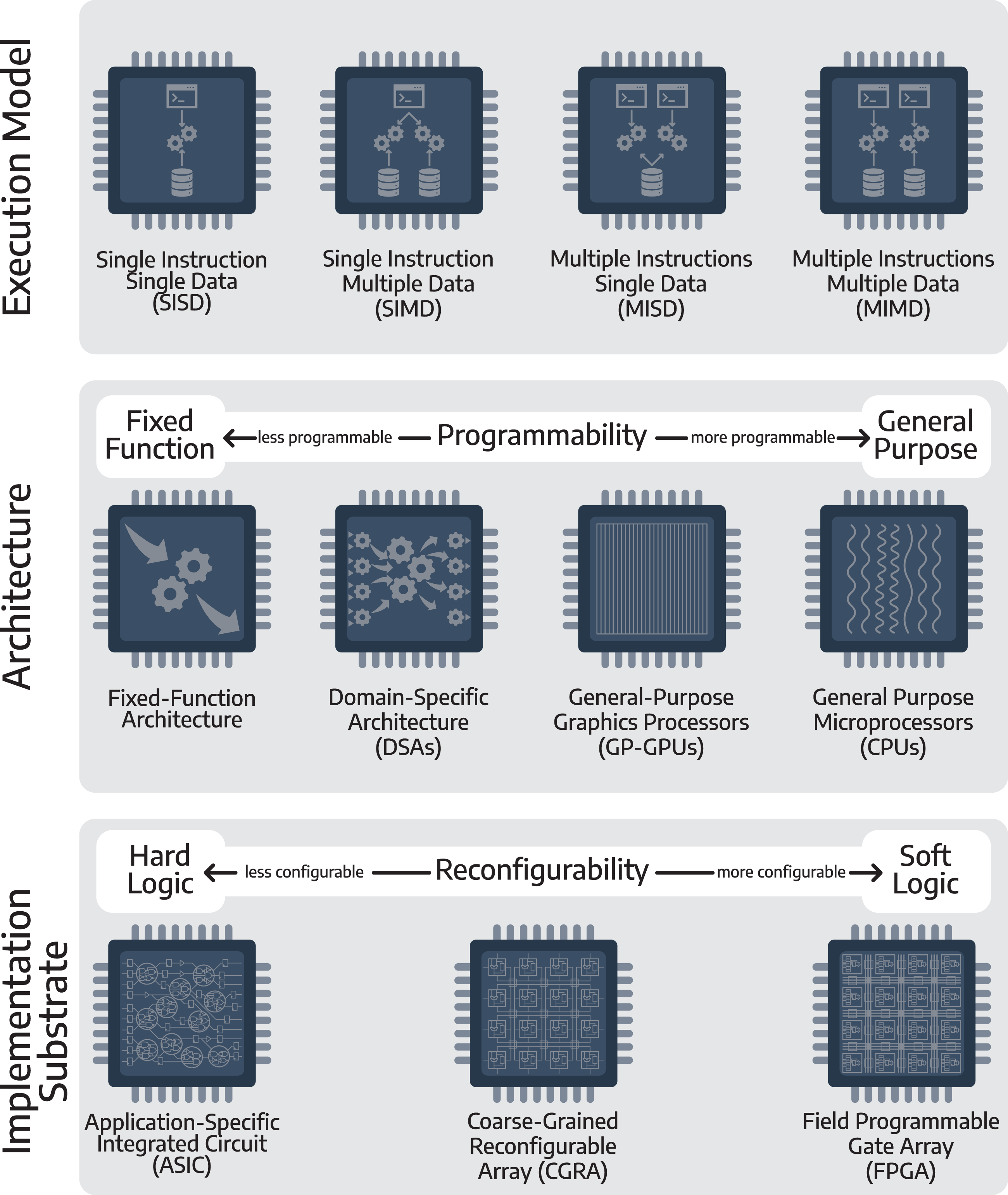

The top layer of Figure 2 shows the classification of chips based on their execution model, according to Flynn’s Taxonomy (Flynn, 1966, 1972). Single-instruction single-data (SISD) is used in single-core processors that execute a single instruction stream to operate on data, which were popular before the 2000s. Multi-core and many-core processors adopted the multiple-instruction multiple-data (MIMD) execution model, where each core can run different instructions and operate on different data. The single-instruction multiple-data (SIMD) execution model can be found in the vector units of CPUs, where each vector unit runs the same operations, and accesses a set of contiguous data to enable parallelization (Hassaballah et al., 2008; Raman et al., 2000). GPUs modify this concept, and use the single-instruction multiple-threads (SIMT) model (Fung and Aamodt, 2011; Habermaier and Knapp, 2012), where each thread executes the same instructions and execution flow. With SIMT, any difference in branch outcomes results in thread divergence. Accordingly, each group of threads with a different branch outcome is executed sequentially, resulting in reduced computational efficiency. Nevertheless, SIMD and SIMT are often more efficient than MIMD for explicitly parallel applications, since they reduce the instruction-processing overhead per data item. The multiple-instruction single-data (MISD) model is less common, and is typically used when there are reliability concerns. 37
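To make the cost of thread divergence under SIMT concrete, the following sketch simulates groups of threads that evaluate a branch. It is a simplified model, not a description of any particular GPU: the function name, the branch condition, and the group size of 4 are assumptions (real GPUs use larger groups, for example 32 threads on NVIDIA hardware).

```python
def simt_execute(values, warp_size=4):
    """Apply `x * 2 if x is even else x + 1` to each element, one group at a time.

    Returns the results and the number of instruction passes issued.
    A group whose threads all take the same branch needs one pass; a group
    with both outcomes needs two passes, executed one after the other.
    """
    results = list(values)
    passes = 0
    for start in range(0, len(values), warp_size):
        lanes = range(start, min(start + warp_size, len(values)))
        taken = [i for i in lanes if values[i] % 2 == 0]      # active mask: branch taken
        not_taken = [i for i in lanes if values[i] % 2 != 0]  # complementary mask
        if taken:
            passes += 1
            for i in taken:
                results[i] = values[i] * 2
        if not_taken:
            passes += 1
            for i in not_taken:
                results[i] = values[i] + 1
    return results, passes
```

With this model, `simt_execute([2, 4, 6, 8])` needs a single pass because all threads agree on the branch, whereas `simt_execute([1, 2, 3, 4])` needs two passes for the same amount of useful work.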

Execution model (top), architecture design of a chip (middle), and the substrate used for implementation (bottom). At the execution level, chips can have four types of models, according to Flynn’s taxonomy: SISD, SIMD, MISD, and MIMD. At the architecture level, chips can be grouped according to their programmability. A fixed-function chip only operates on a specific input to produce a specific output, based on what operation it is designed to do. A general-purpose chip can perform various operations, based on what program it is running, and hence, is programmable. Some chips exist in between. For instance, a domain-specific architecture chip targets a wider class of applications that share common operations. At the substrate level, chips can be implemented as hard-logic, soft-logic, or somewhere in the middle. Hard logic substrates, such as ASICs, have a fixed structure of logic gates and their connections, which cannot be changed once manufactured. On the other hand, soft logic substrates, such as FPGAs, provide reconfigurable logical functions and connections even after manufacturing.

Chip architecture and programmability

The middle layer of Figure 2 shows the classification of chips at their architectural level. The architecture of a chip defines the structure of logic blocks inside the chip, how they are connected to each other, and how software interacts with them to achieve the desired functionality. A chip can be fully programmable, fixed-function, or somewhere in the middle.

A programmable architecture can interpret different instructions 38 to perform different operations (Peccerillo et al., 2022). Programmable architectures target a wide range of applications and are suitable for workloads whose algorithms or protocols constantly change (Iyer, 2012). While programmability brings versatility, it comes with performance, energy, and silicon area overheads associated with interpreting and decoding instructions (Dally et al., 2020; Hennessy and Patterson, 2019). An example of a fully programmable architecture is the general-purpose microprocessor (CPU) (Section 3), which can be used for virtually any application. 39 By contrast, a fixed-function architecture is designed to perform specific computations for a specific set of inputs, which typically provides the best performance, highest energy efficiency, and smallest silicon area footprint, at the expense of losing flexibility (Tong et al., 2006). Fixed-function architectures are suitable for applications that are mature or have a short lifetime, such as cryptographic processors (Anderson et al., 2006), analog-to-digital or digital-to-analog converters, and multimedia encoding or decoding processors (Harrand et al., 1995; Tamitani et al., 1992), among many others (Peccerillo et al., 2022).

Some architectures may provide a limited degree of programmability for a specific class of applications, to balance performance, energy efficiency, and silicon area. These architectures may not be as versatile as a fully programmable architecture. However, they target a wider range of applications, compared to fixed-function architectures. Some examples include Graphics Processing Units (GPUs), which were originally designed for graphics applications and have become more programmable (Aamodt et al., 2018; Peddie, 2023b) to target a wide range of applications that have significant inherent parallelism, and Digital Signal Processors (DSP) (Lee, 1990), which are designed for signal processing applications, such as audio (Han et al., 1996), image (Chen and Chien, 2008), video (Kim et al., 2005), and sensor data (Gao et al., 2020). Domain-specific architectures (DSAs) (Section 4.2) (Fujiki et al., 2021; Halawani and Mohammad, 2024) target multiple applications within the same domain (Huang et al., 2017).

Implementation fabric

The chip architecture is then implemented on a fabric, which can be a hard-logic fabric or a soft-logic fabric (Gindin et al., 2007; Paulin, 2004; Rose, 2004) (bottom layer of Figure 2). A hard-logic fabric implements logical functions by using logic gates made from transistors on the silicon die, which cannot be modified or altered after the chip is manufactured. A hard-logic fabric provides the best performance, energy efficiency, and mass-production cost (Abdelfattah and Betz, 2012; Gindin et al., 2007). Since a hard-logic chip is not reconfigurable after manufacturing, it may also be called an application-specific integrated circuit (ASIC). Examples include CPUs released by Intel and AMD, GPUs released by Intel, AMD, and NVIDIA, and many hardware accelerators, some of which are discussed in Section 4.

A soft-logic fabric permits reconfigurability after manufacturing, by modifying the logical functions of the logic elements and their interconnections (Peccerillo et al., 2022). Field-programmable gate arrays (FPGAs) (Mencer et al., 2020) are commonly used as soft-logic fabrics that provide fine-grained reconfigurability. This allows users to reconfigure the programmable logic elements and their interconnections through a hardware description language (HDL) (Riesgo et al., 1999), such as Verilog (Dubey, 2009) or VHDL (Shahdad et al., 1985), or through high-level synthesis (HLS) from C code (Nane et al., 2016). The reconfigurability comes at a price: architectures implemented in FPGAs cannot compete with ASICs in terms of performance, energy efficiency, and silicon area footprint 40 (Boutros et al., 2018; Kuon and Rose, 2006; Zahiri, 2003). Soft-logic fabrics (e.g., FPGAs) are suitable for implementing architectures that are likely to change in a short timeframe (Gandhare and Karthikeyan, 2019; Leong, 2008), performing architectural validation before manufacturing them into ASICs (e.g., pre-silicon verification and prototyping) (Huang et al., 2011; Ray and Hoe, 2003), or manufacturing low-volume, fast-time-to-market, and short-lived products (Gandhare and Karthikeyan, 2019; Marquardt et al., 2000).

Between hard-logic (e.g., CPUs) and soft-logic (e.g., FPGAs) fabrics, Coarse-Grained Reconfigurable Arrays (CGRAs) provide some degree of reconfigurability for a specific class of applications (Prabhakar et al., 2020). In contrast to FPGAs, whose reconfigurability operates at the level of the lowest-level logical functions (i.e., boolean algebra), CGRAs provide hard-coded arithmetic and logic building blocks that are optimized for specific domains of applications and can be configured to operate in a specific arrangement to perform a larger function (Peccerillo et al., 2022). This allows CGRAs to provide increased hardware efficiency, energy efficiency, faster reconfiguration times, and increased performance, through more specialized functional units (Tan et al., 2021; Wijerathne et al., 2022), bringing CGRAs closer to the hard-logic end of the spectrum in terms of power, performance, and silicon area (Liu et al., 2019; Niu and Anderson, 2018). We highlight some chips that use CGRA in Examples of custom and specialized hardware.

Off-chip interfaces and pin limitations

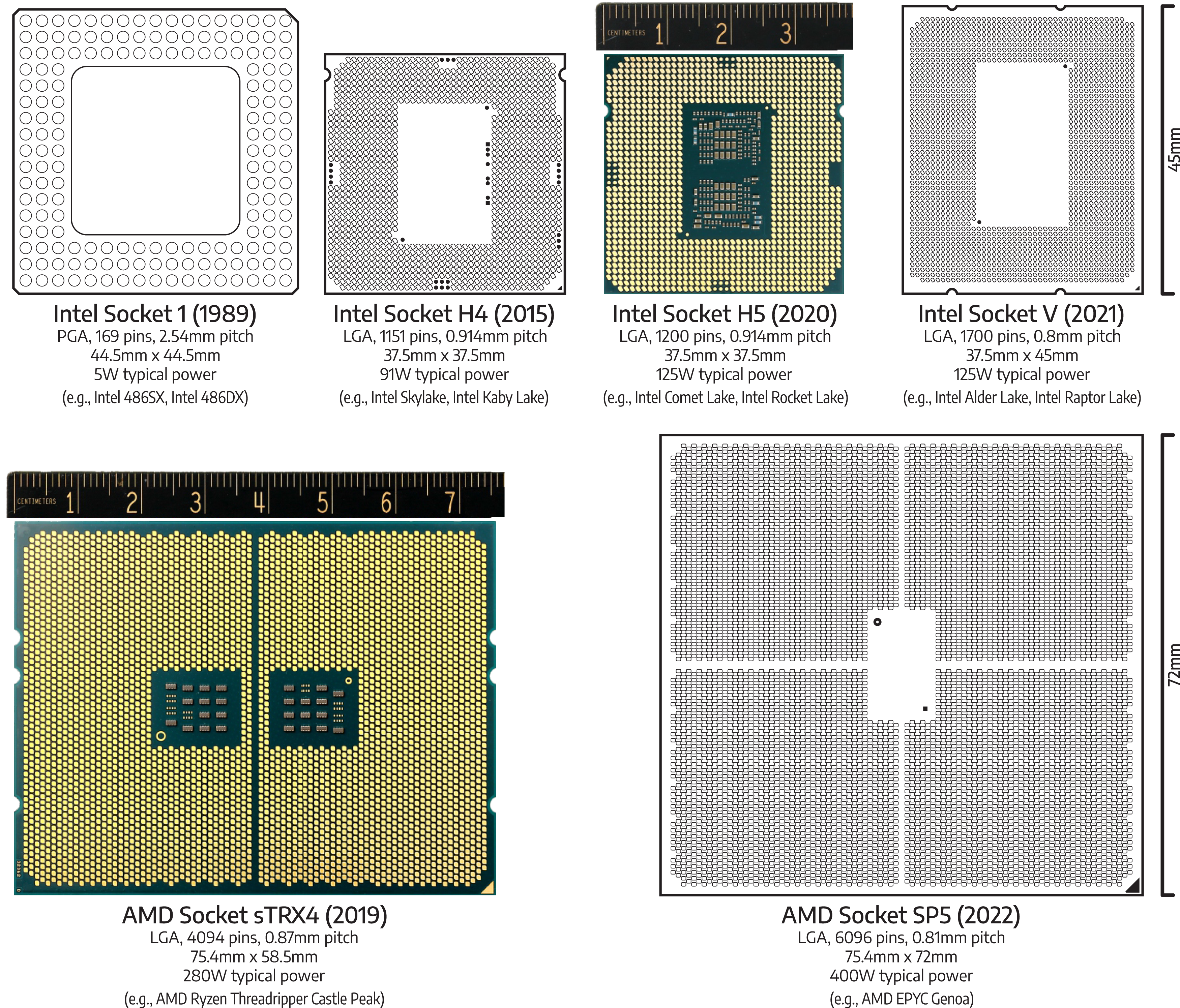

A modern chip uses hundreds to thousands of pins (Figure 3) as conduits for power delivery and data exchange with (off-chip) peripheral devices when it is mounted 41 on a motherboard. Additional pins are typically needed if a chip requires more memory bandwidth, 42 or needs more inter-device bandwidth to connect to peripheral devices 43 (Burger et al., 1996; Chen et al., 2017). Moreover, due to increasing power requirements, more pins are needed to deliver the electrical current to the microprocessor (Stanley-Marbell et al., 2011). In modern chips, about half of the pins are used to supply power, and the remaining pins are used for data exchange. 44

While the transistors have become much smaller, the pins have not enjoyed the same level of miniaturization due to physical limitations for carrying electrical current and maintaining physical strength. Adding more pins is expensive. Since neither the pins nor the pitch 45 are getting significantly smaller anymore, adding pins increases the size of the chip package. A larger package size entails a more complicated mounting mechanism, as it becomes more difficult to maintain uniform pressure for providing sufficient contact for all the pins to the socket (Chow et al., 2006).

Evolution of microprocessor socket and pin (back of the processor), drawn to scale. The Intel socket 1 is a pin-grid array (PGA) socket, containing 169 pins, with 2.54 mm pitch (i.e., the distance between the center of pins). Launched in 1989, it was designed to accommodate Intel 486 SX, Intel 486 DX, and Intel 486 DX2 microprocessors, with 1.2 million to 1.6 million transistors, operating at typical power of 5 W. Almost three decades later, Intel socket H4, which is a land-grid array (LGA) socket, containing 1151 pins, spaced at 0.914 mm, was released to support Intel Skylake and Kaby Lake microprocessors, with 1.75 billion transistors, operating at a typical power of 91 W. Intel socket H4 was followed by Intel socket H5 5 years later. It retains the same socket dimension, but has 49 more pins to deliver more power (125 W vs 91 W of typical power) to Intel Comet Lake and Rocket Lake microprocessors, which have double core counts compared to their predecessor. A year later, Intel socket V, containing 1700 pins, spaced at 0.8 mm in a land-grid array (LGA), was released to support Intel Alder Lake and Raptor Lake microprocessors, operating at a typical power of 125 W. It has more connectivity than its predecessors. AMD Socket sTRX4 has 4094 pins in a land-grid array (LGA), which has the same physical dimension as AMD Socket SP3. It was launched in 2019 to support AMD Ryzen Threadripper Castle Peak, with up to 64 cores, 30 billion transistors, and 280 W of typical power. AMD Socket SP5 is a land-grid array (LGA) socket, containing 6096 pins, spaced at 0.81 mm. It was released in 2022 to support 96-core AMD EPYC Genoa microprocessors, with 90 billion transistors, 400 W typical power, 12-channel DDR5 memory, and 128 PCI Express 5.0 lanes.

Architecture of communication interfaces

A communication interface enables communication of a microprocessor with other microprocessors, accelerators, or off-chip memory. Parallel interfaces were popular early on. The need for higher bandwidth resulted in the development and adoption of serial interfaces. We review both next, and also highlight implementation aspects.

Parallel interface

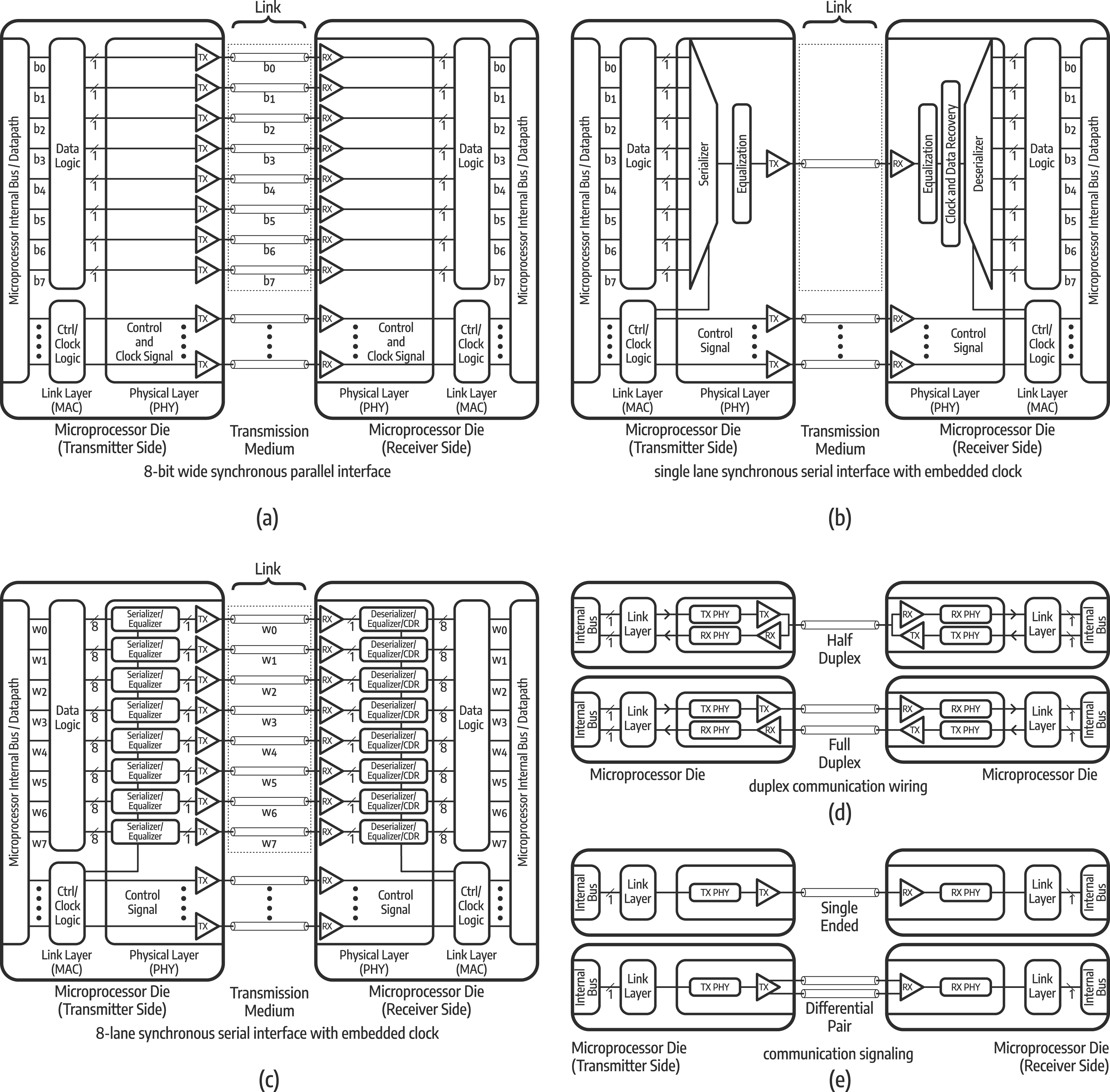

Early interfaces were implemented by using a parallel architecture (Figure 4(a)) (Giuma and Hart, 1996; Shanley et al., 1995), which transferred data by dividing it over multiple wires and sending the bits simultaneously (Dawoud and Dawoud, 2020; Roman, 1998). For instance, a 32-bit-wide parallel interface needed at least 32 wires to transfer 32 bits (4 bytes) of data in one cycle, with additional wires for controls. 46 To correctly receive the full data, the receiving end must wait until all signals have arrived (Bandyopadhyay and Cases, 2000; Lee et al., 2013), before assembling them into the complete 4-byte data. While building a wider parallel interface or raising the clock frequency to increase the bandwidth may seem intuitive, it is challenging in practice (Bandyopadhyay and Cases, 2000; Dawoud and Dawoud, 2020). A wider parallel interface entails more pins on the (already pin-limited) microprocessor (Sarmah and Azeemuddin, 2017), and uses more wires (Dawoud and Dawoud, 2020; Roman, 1998), which then makes PCB routing more difficult. That is also why early interfaces were half-duplex 47 (Figure 4(d)). Full-duplex interfaces require dedicated wires for sending and receiving data at the same time (Figure 4(d)) (Dawoud and Dawoud, 2020; Summerville, 2009), doubling the number of wires, further complicating the routing and pin allocation.

Simplified high-level overview of communication interfaces: (a) assume a word w consists of 8 bits of data b0, b1, b2, b3, b4, b5, b6, b7, which can be transmitted in parallel at the same time (i.e., during the same clock cycle) using eight data wires along with dedicated clock signal and control signal wires on the 8-bit wide synchronous parallel interface; (b) in a serial interface, a serializer takes each bit of the word and transmits it one by one per clock cycle (i.e., eight clock cycles are needed to transmit a word) using one wire along with control signal wires (i.e., in this case, the clock signal is embedded into the data signal). Although it needs more cycles to transmit the word, the serial interface can run at a significantly higher frequency compared to the parallel interface; (c) aside from increasing the clock frequency, the serial interface can have multiple lanes to increase its bandwidth. In this case, there are eight lanes, each transmitting a word at the same time (w0, w1, w2, w3, w4, w5, w6, w7). Each word is 8 bits of data (b0, b1, b2, b3, b4, b5, b6, b7); (d) a two-way communication system, either parallel or serial, can be implemented as a half-duplex or a full-duplex interface. In half-duplex, only one wire is used for sending and receiving a bit, and thus both sending and receiving cannot happen at the same time. On the other hand, in a full-duplex, two wires are used for sending and receiving a bit, and thus both sending and receiving can happen at the same time, improving bidirectional bandwidth at the expense of more wires; (e) the communication link can use single-ended or differential pair signaling. Single-ended signaling is usually used for short-distance communication since it is prone to noise, while differential pair signaling can be used for longer distances as it is more immune to noise.

Increasing the clock frequency to enable a higher data transfer rate introduces signal integrity issues (Bogatin, 2011; Wu et al., 2013), including timing skew 48 (Hu and Yuan, 2009; Lee et al., 2013), and electromagnetic interference 49 (Frenzel, 2007; Karstensen et al., 2000). When wires have different lengths, 50 or are subjected to different levels of noise and cross-talk, their signals may have different arrival times (skew). Accordingly, implementing a wider parallel interface becomes more challenging at higher clock frequencies: the tolerance window in which the receiver can wait for all signals to arrive becomes shorter, whereas the skew increases due to higher parasitic capacitance, noise, and cross-talk. These challenges led to the development and adoption of serial interfaces.
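The shrinking tolerance window can be illustrated with a small calculation. The sketch below, which is only a first-order model, expresses the skew caused by a trace-length mismatch as a fraction of one clock period. The function name and the propagation speed of 1.5e8 m/s (about half the speed of light, a typical order of magnitude for PCB traces) are assumptions, and effects such as noise and cross-talk are ignored.

```python
def skew_fraction(length_mismatch_m, clock_hz, velocity_m_per_s=1.5e8):
    """Fraction of one clock period consumed by skew from a length mismatch.

    velocity_m_per_s is an assumed signal propagation speed on the trace.
    """
    skew_s = length_mismatch_m / velocity_m_per_s
    period_s = 1.0 / clock_hz
    return skew_s / period_s


if __name__ == "__main__":
    # Hypothetical 15 mm mismatch between the shortest and longest wire.
    for clock in (33e6, 1e9, 5e9):
        share = skew_fraction(0.015, clock)
        print(f"{clock / 1e6:.0f} MHz: skew is {share:.1%} of the clock period")
```

The same physical mismatch that is negligible at tens of MHz consumes a substantial share of the period at GHz frequencies, which is consistent with the argument above.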

Serial interface

Modern communication interfaces rely on serial architectures to limit the number of pins on a processor, as well as wiring on the PCB. Since the 2000s, interfaces have been implemented through a serial architecture, 51 where each bit-line is implemented by using either one wire for a single-ended interface, or two wires for a differential pair interface 52 (Figure 4(e)) (Chen and Katopis, 2004; Mechaik, 2001). To increase the bandwidth, multiple serial lanes are used to form a wider serial interface (Figure 4(c)) (Sarmah and Azeemuddin, 2014, 2015). Unlike the parallel interface, each serial lane is individually synchronized, and multi-lane synchronization can be performed through the use of a special control byte (Wu et al., 2016). However, adding more lanes requires more wires and pins on the microprocessor, leading to increased routing complexity, and higher implementation costs (Na et al., 2017; Sreerama et al., 2018). Moreover, adding more lanes also necessitates using a dedicated physical layer for each lane, which consumes area on the silicon die, and increases energy consumption (Abdennadher et al., 2020; Rashdan et al., 2020).
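One way to picture a multi-lane serial interface is as byte striping across independent lanes. The sketch below assumes a simple round-robin, byte-wise striping scheme; the function names are hypothetical, and lane alignment through control bytes, which real interfaces need, is not modeled.

```python
def stripe(data: bytes, lanes: int) -> list[bytes]:
    """Distribute bytes round-robin across `lanes` serial lanes."""
    return [data[i::lanes] for i in range(lanes)]


def unstripe(lane_data: list[bytes]) -> bytes:
    """Reassemble the original byte order from the per-lane streams."""
    total = sum(len(lane) for lane in lane_data)
    lanes = len(lane_data)
    return bytes(lane_data[i % lanes][i // lanes] for i in range(total))
```

For example, `stripe(b"ABCDEFGH", 4)` places `b"AE"`, `b"BF"`, `b"CG"`, and `b"DH"` on the four lanes, so each lane carries a quarter of the traffic, and `unstripe` restores the original order on the receiving side.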

The physical layer of the serial interface

Serializer-Deserializer (SerDes) implements the physical layer 53 of the serial interface, 54 and its performance directly impacts communication speed. SerDes converts a parallel stream of data into a serial stream of data; once the serial stream of data has been transmitted and obtained by the receiving end, SerDes (on the receiving end) converts it back into the parallel stream 55 (Figure 4(b)) (Frenzel, 2007; Ko, 2022). SerDes resides inside the chips, and is the heart of a serial interface (Rashdan et al., 2020). SerDes also supports inter-node communication interfaces, such as Ethernet (Law et al., 2013), and Infiniband (Part II: Inter-node communication (Hanindhito et al., 2026)).
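The parallel-to-serial conversion at the core of SerDes can be sketched in a few lines. This is a purely logical model with hypothetical function names: the least-significant-bit-first ordering is an assumption, and the analog functions of a real SerDes, such as clock recovery and equalization, are not represented.

```python
def serialize(words, width=8):
    """Flatten parallel words into a bit stream, least-significant bit first."""
    return [(word >> bit) & 1 for word in words for bit in range(width)]


def deserialize(bits, width=8):
    """Regroup a bit stream into parallel words of `width` bits."""
    if len(bits) % width:
        raise ValueError("bit stream length must be a multiple of the word width")
    return [
        sum(bit << pos for pos, bit in enumerate(bits[i:i + width]))
        for i in range(0, len(bits), width)
    ]
```

A round trip such as `deserialize(serialize([5, 255, 0, 128]))` returns the original words, at the cost of `width` bit-times per word on a single lane.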

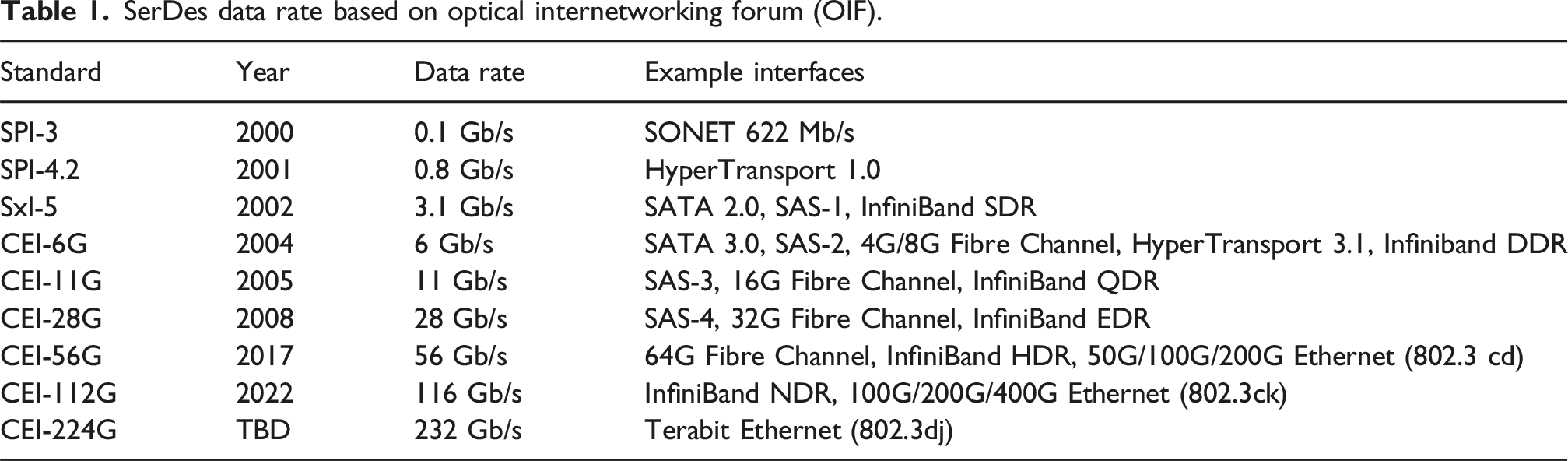

SerDes data rate based on optical internetworking forum (OIF).

Signal coding and modulation

We highlight techniques and technologies that are used to encode and modulate data for transmission through a medium (e.g., metal wires or optical interconnect). They play a fundamental role in future bandwidth improvements by allowing a signal to carry more data over a long distance. Advanced data encoding and modulation are especially important as they are among the very few options that can alleviate communication bottlenecks.

Before data can be transmitted through a medium that carries either electrical or optical signals, it is encoded through an encoding scheme. This improves spectral efficiency 56 (Rysavy, 2014; Winzer, 2012), and enables error detection and error correction (Alyaei and Glass, 2009; Fair et al., 1991; Imai and Hirakawa, 1977), which are needed for maintaining signal integrity (Mercier et al., 2010; Seshadri et al., 1993; Tzimpragos et al., 2016). Then, modulation is performed to transform the data into suitable signals for transmission over long distances. The primary goal of encoding and modulation is to increase the data rate 57 while reducing the signal rate. 58 We focus on digital modulation techniques (Smithson, 1998; Xiong, 2006) since they are widely used in intra-node and inter-node communication (Part II: Inter-node communication (Hanindhito et al., 2026)).

Digital baseband 59 modulation, also known as line coding (Guri et al., 2015; LoCicero and Patel, 2018; Matin, 2018; Rezaei et al., 2023; Teixeira and Zaharov, 2007), is used to encode data into a pattern of voltage, current, or photons for short-distance communication through metal wires or optical cables (Loan, 2007; LoCicero and Patel, 2018). The choice of line coding depends on several considerations, such as timing and synchronization capability, power efficiency and electrical characteristics, error detection and correction capability, probability of error and noise tolerance, and complexity of transmitter and receiver design (Couch, 1994; Lathi and Ding, 2022). Line coding comprises two parts: pulse shaping and block coding.

Pulse shaping

Pulse shape defines the pattern of voltage, current, or photons that transmit information at the bit level. Commonly used pulse shapes can be grouped into five categories: unipolar, polar, bipolar, multi-transition, and multi-level (Madhow, 2008; Rezaei et al., 2023). The unipolar, polar, and bipolar pulses can be either non-return-to-zero (NRZ) or return-to-zero (RZ), resulting in several combinations. 60 Herein, we only highlight line coding techniques that are used by communication technologies that are mentioned in later sections. Interested readers may consult Madhow (2008) for a detailed comparison between pulse schemes.

In NRZ, the non-zero voltage pulse maintains its voltage level throughout the bit-time. 61 Consequently, NRZ does not provide enough signal transitions to help the receiver distinguish each bit during long consecutive transmissions of the same bit value, leading to synchronization problems (Anand and Razavi, 2001; Song and Soo, 1997). RZ provides sufficient transitions by making the non-zero voltage pulse return to zero in the middle of the bit-time. This allows the receiver to distinguish each bit, in the case of long consecutive transmissions of the same bit value. 62 This comes at a cost: in addition to greater complexity, the RZ data rate is half that of NRZ for the same signal rate.
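The difference between the two pulse shapes can be seen by sampling each bit-time twice. The sketch below uses polar variants with levels +1 and -1, which is an assumption made for illustration, and the function names are hypothetical.

```python
def nrz(bits, high=1, low=-1):
    """Polar NRZ: hold the level for the whole bit-time (two half-bit samples)."""
    return [level for b in bits for level in ((high, high) if b else (low, low))]


def rz(bits, high=1, low=-1):
    """Polar RZ: hold the level for the first half-bit, then return to zero."""
    return [level for b in bits for level in ((high, 0) if b else (low, 0))]


def transitions(samples):
    """Count level changes between consecutive samples."""
    return sum(a != b for a, b in zip(samples, samples[1:]))
```

For a run of four identical bits, `transitions(nrz([1, 1, 1, 1]))` is 0, so the receiver has no edges to synchronize on, while `transitions(rz([1, 1, 1, 1]))` is 7. The price is that the RZ signal changes twice per bit-time, so it needs twice the signal rate to carry the same data.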

Due to its simplicity, NRZ is often used for bit rates up to 40 Gbps (Chen et al., 2023; Van Kerrebrouck et al., 2019). However, implementing higher-bandwidth communication interfaces (Section 2.13) necessitates finding denser pulse shapes, such as those that use Pulse-Amplitude Modulation (PAM), which uses multiple voltage levels to represent the digital symbols. For instance, PAM-4 uses four voltage levels to represent 2-bit symbols, in order to increase the modulation density. This choice increases the complexity of implementing the transmitter and receiver, and raises signal vulnerability to noise and cross-talk 63 (Chen et al., 2023; Forghani and Razavi, 2022; Van Kerrebrouck et al., 2019), resulting in a vastly higher bit error rate. 64 Increasing the operating voltage 65 to address the issue is not preferred due to higher energy consumption (Garcia et al., 2007; Müller et al., 2015). Higher-order PAM schemes, such as PAM-6 or PAM-8, are currently being developed to fulfill the needs for future data rates (Che and Chen, 2023; Hecht et al., 2022; Yue and Shekhar, 2022).
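A minimal sketch of PAM-4 symbol mapping is shown below. The Gray-coded assignment of bit pairs to the levels -3, -1, +1, and +3 is a common convention that is assumed here rather than taken from the text, and the names are illustrative.

```python
# Gray-coded mapping from bit pairs to four amplitude levels (assumed convention).
PAM4_LEVELS = {(0, 0): -3, (0, 1): -1, (1, 1): 1, (1, 0): 3}
PAM4_BITS = {level: pair for pair, level in PAM4_LEVELS.items()}


def pam4_encode(bits):
    """Map each pair of bits to one of four amplitude levels."""
    if len(bits) % 2:
        raise ValueError("PAM-4 needs an even number of bits")
    return [PAM4_LEVELS[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


def pam4_decode(symbols):
    """Recover the bit pairs from a sequence of amplitude levels."""
    return [bit for s in symbols for bit in PAM4_BITS[s]]
```

Eight bits become four symbols, so the signal rate is halved for the same data rate. With the same peak-to-peak swing, however, adjacent levels are separated by only one third of the NRZ spacing, which is why PAM-4 is more sensitive to noise.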

Block coding

Although pulses are sufficient for transmitting information at the bit level, finding a more efficient scheme is necessary to fulfill the demand for high-bandwidth communication interfaces. This is where block coding can help. In addition to higher bandwidth, block coding also provides signals with higher power efficiency 66 and self-synchronization capabilities, which NRZ and PAM alone cannot achieve. Block coding is used with both NRZ and PAM, and is being used in recent high-bandwidth communication interfaces. 67

Block coding divides the data into fixed-length blocks, and encodes each block into a slightly longer block, according to a predefined coding scheme. Popular block codes include 4B/5B (Robe et al., 1993), 2B1Q (Sugimoto et al., 1989), 8B6T (Buchanan, 1999), and 8B/10B (Wang et al., 2010). For instance, the 8B/10B block coding, used in many communication interfaces, 68 encodes 8-bit blocks (or 8-bit words) into 10-bit symbols. This increases power efficiency and provides self-synchronization capability, at the expense of 2 bits of overhead for every 8 bits of transmitted data. Denser block codes have lower overhead. These include 64B/66B 69 (Balasubramanian et al., 2011; Mohapatra et al., 2017), 128B/130B 70 (Mhaboobkhan et al., 2019; Weng et al., 2021), 242B/256B, and 512B/514B (Cideciyan et al., 2013; Teshima et al., 2008), and are used for communication interfaces that require even higher bandwidth.
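The overhead of a block code follows directly from its name. The sketch below computes the coding efficiency (payload bits divided by transmitted bits) for several of the codes mentioned above; the dictionary and function names are illustrative, and the 10 Gbps line rate in the usage note is a hypothetical value.

```python
from fractions import Fraction

# (payload bits, transmitted bits) for block codes named in the text.
BLOCK_CODES = {
    "4B/5B": (4, 5),
    "8B/10B": (8, 10),
    "64B/66B": (64, 66),
    "128B/130B": (128, 130),
    "512B/514B": (512, 514),
}


def efficiency(code):
    """Fraction of transmitted bits that carry payload data."""
    payload, transmitted = BLOCK_CODES[code]
    return Fraction(payload, transmitted)


def payload_rate(code, line_rate_gbps):
    """Payload data rate for a given raw line rate, ignoring other overheads."""
    return float(efficiency(code)) * line_rate_gbps


if __name__ == "__main__":
    for name in BLOCK_CODES:
        print(f"{name}: {float(efficiency(name)):.2%} efficient")
```

For instance, a hypothetical 10 Gbps line carries 8 Gbps of payload with 8B/10B, whereas 64B/66B and 128B/130B lose only about 3% and 1.5% of the line rate, respectively.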

General-purpose microprocessors

Central Processing Units (CPUs) are designed to support various instructions in diverse workloads, and hence, are called general-purpose microprocessors (Gelsinger, 2001) (Figure 2). By using multiple complex hardware structures, they are designed to maximize average performance 71 for a wide range of applications. While this is expensive in terms of implementation 72 and energy consumption (Bhandarkar, 1997; Blem et al., 2013), it makes programming CPUs easier for software developers.

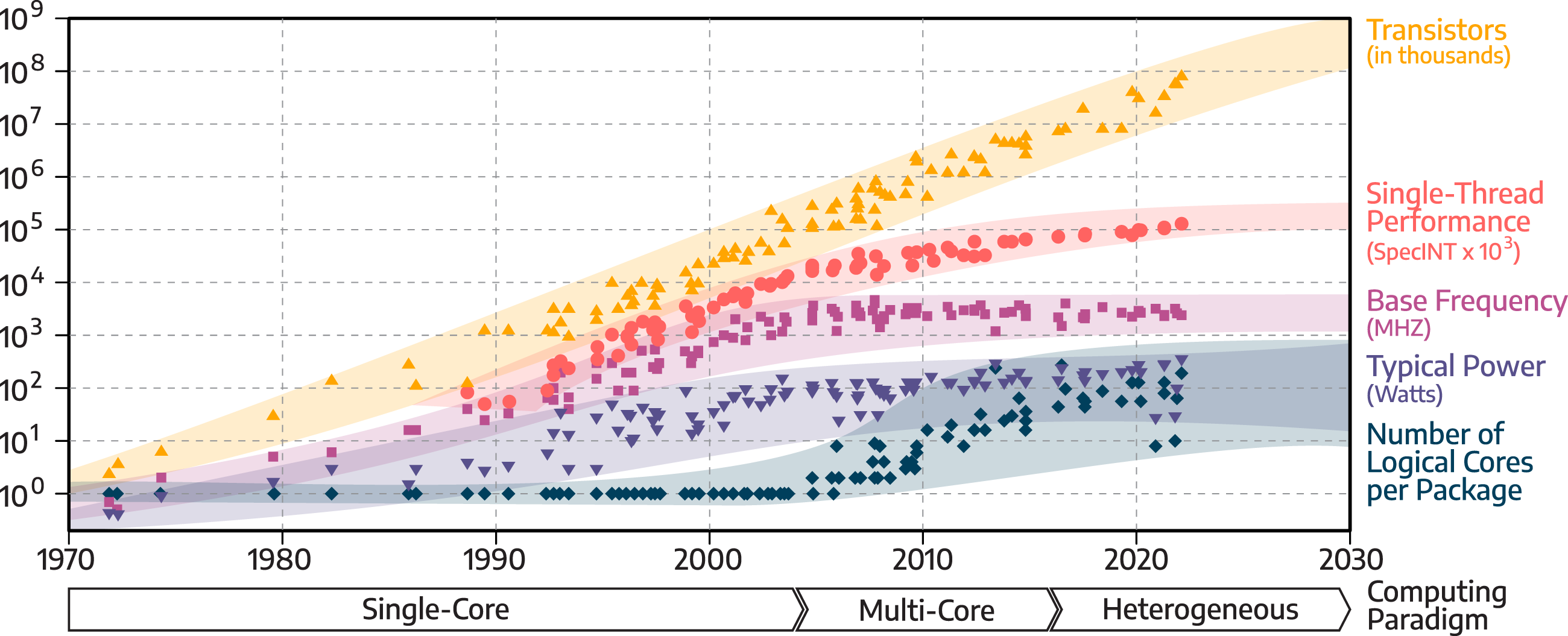

We review how technology trends in computing are impacting CPUs. In this vein, Figure 5 exhibits the evolution of key characteristics of general-purpose microprocessors during the past five decades, along with future projections. 73 These characteristics include transistor counts, single-thread performance, base clock frequency, typical power consumption, and the number of logical cores. Both the transistor counts and the number of logical cores are given per package. Moreover, the computing paradigms (Hennessy, 2021) are also shown in the figure to indicate how they evolved over the years. Among these five characteristics, the clock frequency (Leiserson et al., 2020; Xiu, 2017) is perhaps the most widely known, due to its prominence in marketing for decades.

Technology trends of general-purpose microprocessors since the 1970s, with projections up to 2030.

We discuss the key drivers of these trends. The end of Moore’s law and Dennard scaling significantly impacted the evolution of general-purpose microprocessors. Therefore, we divide the discussion of the trends into the periods before and after the end of Dennard scaling, followed by future possibilities.

Trends between 1980s and early 2000s

The clock frequency increased significantly between the 1980s and the early 2000s, as both Intel and AMD were competing to develop their speed-demon chips, in which higher clock frequency was the main figure of merit of a microprocessor (Ronen et al., 2001) used for marketing (Olukotun and Hammond, 2005). Meanwhile, transistor sizes continuously decreased with more advanced process nodes (Bohr, 2007; Gargini, 2017), from 10 μm during the 1970s to 32 nm in the late 2000s (Figure 1). During this period, Dennard scaling (Bohr, 2007; Dennard et al., 1974) still held, allowing manufacturers to raise the clock frequency while reducing the power cost of each transistor switching event, thus keeping the overall power under control. Although the increase in the clock frequency improved single-thread performance, measured through the SPEC Integer benchmark 74 (Dujmovic and Dujmovic, 1998), this design strategy came with costs. It increased dynamic power consumption (Liu and Svensson, 1994) and made thermal dissipation more challenging (Gurrum et al., 2004; Kish, 2002).
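The role of Dennard scaling in keeping power under control can be made concrete with a short sketch. It assumes the classical constant-field scaling rules (capacitance and voltage shrink by a factor κ, frequency and transistor density grow by κ and κ², respectively) and the usual dynamic power relation P ∝ C·V²·f, with the activity factor omitted for simplicity. All quantities are normalized to 1 for the starting process node, and the function name is hypothetical.

```python
def scale_node(kappa, scale_voltage=True):
    """Normalized power figures after shrinking dimensions by a factor kappa.

    scale_voltage=True  follows classical Dennard (constant-field) scaling.
    scale_voltage=False keeps the supply voltage fixed, as a simplified
    picture of what happens once voltage can no longer be lowered.
    """
    capacitance = 1.0 / kappa          # smaller transistors, less capacitance
    voltage = 1.0 / kappa if scale_voltage else 1.0
    frequency = kappa                  # shorter gate delay, higher clock
    density = kappa ** 2               # transistors per unit area

    # Dynamic power per transistor: P ~ C * V^2 * f (activity factor omitted).
    power_per_transistor = capacitance * voltage ** 2 * frequency
    return {
        "frequency": frequency,
        "power_per_transistor": power_per_transistor,
        "power_density": power_per_transistor * density,
    }


dennard = scale_node(2.0, scale_voltage=True)
fixed_v = scale_node(2.0, scale_voltage=False)

print("Dennard scaling :", dennard)
print("Constant voltage:", fixed_v)
```

Under these assumptions, the clock frequency doubles while the power density stays constant as long as the voltage scales down with the transistor dimensions. If the voltage is held fixed, the same shrink quadruples the power density.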

The advancement in the process node also allowed designers to pack more transistors in the same area, resulting in a steady increase in the number of transistors per microprocessor package between the 1980s and the early 2000s. These transistors were used to implement more complex functional units, which improved single-thread performance 75 through the out-of-order execution engine, 76 vector extensions, 77 branch predictor, 78 and improvements in the memory system (Peleg and Weister, 1991). During this period, the increase in performance and transistor count followed Moore’s law.

The end of Dennard scaling and emergence of multi-core processors

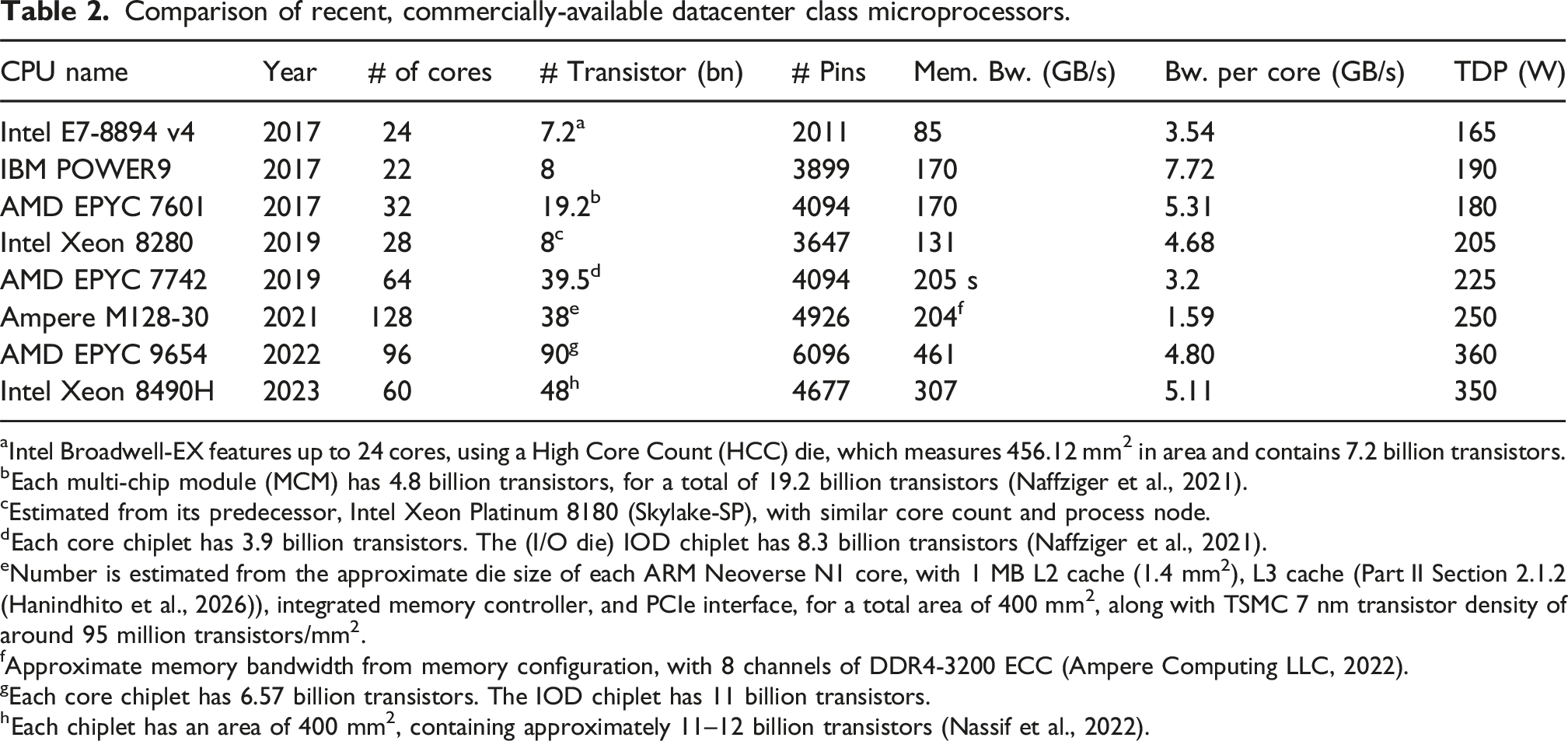

Comparison of recent, commercially-available datacenter class microprocessors.

(a) Intel Broadwell-EX features up to 24 cores, using a High Core Count (HCC) die, which measures 456.12 mm² in area and contains 7.2 billion transistors.

(b) Each multi-chip module (MCM) has 4.8 billion transistors, for a total of 19.2 billion transistors (Naffziger et al., 2021).

(c) Estimated from its predecessor, Intel Xeon Platinum 8180 (Skylake-SP), with similar core count and process node.

(d) Each core chiplet has 3.9 billion transistors. The I/O die (IOD) chiplet has 8.3 billion transistors (Naffziger et al., 2021).

(e) Number is estimated from the approximate die size of each ARM Neoverse N1 core, with 1 MB L2 cache (1.4 mm²), L3 cache (Part II Section 2.1.2 (Hanindhito et al., 2026)), integrated memory controller, and PCIe interface, for a total area of 400 mm², along with TSMC 7 nm transistor density of around 95 million transistors/mm².

(f) Approximate memory bandwidth from memory configuration, with 8 channels of DDR4-3200 ECC (Ampere Computing LLC, 2022).

(g) Each core chiplet has 6.57 billion transistors. The IOD chiplet has 11 billion transistors.

(h) Each chiplet has an area of 400 mm², containing approximately 11–12 billion transistors (Nassif et al., 2022).

While increasing the number of cores seems to be an intuitive way of improving the performance of microprocessors, several factors limit the number of cores that can be placed into a microprocessor. These are:

Memory bandwidth bottlenecks. Increasing the number of cores elevates pressure on the memory subsystems (Ahn et al., 2009; Borkar, 2007; Mandal et al., 2010; Sancho et al., 2010), as higher bandwidth would be needed to supply the required data to the cores. Insufficient bandwidth may cause cores to wait for memory accesses (Cristal et al., 2005). Microprocessors with a high number of cores usually have multiple memory channels to accommodate the demand for memory bandwidth (Sancho et al., 2010). Adding more memory channels is costly because it needs additional pins (Figure 3), which are already limited, and it necessitates more memory controllers that consume area on the silicon die. Moreover, a more sophisticated motherboard design will be needed in order to house more memory modules and maintain signal integrity.

Resource contention and core synchronization. Typically, a core needs to communicate with other cores to share data, share resources, and perform synchronization barriers. Increasing the number of cores in a microprocessor makes synchronization and resource sharing more difficult (Blagodurov et al., 2010; He et al., 2017; Zhuravlev et al., 2010). Extensive inter-core communication and resource contention can limit the attainable performance of multi-core processors (Hood et al., 2010; Xu et al., 2010).

Cache coherency. As the number of cores increases, maintaining coherency across multiple levels of cache becomes more difficult (Part II: Registers (Hanindhito et al., 2026)).

Transistor count, die size, and manufacturing yield. Adding more cores to a microprocessor increases the transistor count. With the slowdown of transistor scaling, a larger die size is then needed, which lowers the yield and increases manufacturing costs (Mack, 2015; Sun et al., 2020) (Section 2.5). To overcome these challenges, since 2017, microprocessors have started to use multi-chip module (MCM) technology 80 (Naffziger et al., 2021), followed by chiplet 81 (Loh et al., 2021; Naffziger et al., 2020) and 3D stacking technologies 82 (Agarwal et al., 2022; Beyne et al., 2021; Ingerly et al., 2019; Su et al., 2017). Figure 6 shows the evolution of packaging technologies used by modern microprocessors.

Power consumption and heat dissipation. Packing more transistors on a silicon die, together with the end of Dennard scaling (Esmaeilzadeh et al., 2011b; Wang and Skadron, 2013), has resulted in higher power consumption, which is one of the major limiting factors of adding more cores (Horowitz, 2014; Tiwari et al., 1998). To overcome this difficulty, manufacturers typically lower the clock frequency of higher-core-count microprocessors (Gepner and Kowalik, 2006), which has resulted in a slower increase in power consumption since 2005 (Figure 5). The use of dynamic voltage and frequency scaling (DVFS) (Herbert and Marculescu, 2007; Le Sueur and Heiser, 2010; Papadimitriou et al., 2019) makes up for the performance loss due to the lower clock frequency, by allowing the microprocessor to momentarily increase its power consumption to boost an individual core’s clock frequency and achieve higher single-thread performance, as long as it stays within its power and thermal envelopes. 83 This feature is especially useful for legacy applications that cannot take advantage of all the available cores (Cochran et al., 2011). Another method to balance power consumption is through heterogeneity, by combining high-performance and low-power cores. This approach reduces power when running workloads that do not heavily stress the CPU. Notable examples are the ARM big.LITTLE and Intel Alder Lake architectures (Rotem et al., 2022). Nevertheless, high-core-count microprocessors will soon require a liquid cooling system for effective heat dissipation.

Pin limitation. With the increase in power consumption, higher memory bandwidth requirements, and the need for faster connectivity to off-chip peripherals, modern microprocessors need more pins, which are expensive. 84 Figure 3 shows the pins and the package size used by several microprocessors, as well as their evolution over time. Specifically, it shows that, while the number of transistors on a chip has increased by about three orders of magnitude during the past three decades, the number of pins has increased by only about an order of magnitude, exacerbating communication bottlenecks.
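The memory bandwidth bottleneck discussed above can be quantified with a back-of-the-envelope sketch. It uses the 8-channel DDR4-3200 configuration mentioned in the table notes and assumes a 64-bit data bus per channel (ECC bits excluded). The core counts and the function name are hypothetical and serve only to show how the bandwidth available to each core shrinks as cores are added.

```python
def peak_dram_bandwidth_gbs(channels, mega_transfers_per_s, bus_width_bits=64):
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return channels * mega_transfers_per_s * 1e6 * bytes_per_transfer / 1e9


# 8 channels of DDR4-3200: 3200 mega-transfers per second per channel.
total_bw = peak_dram_bandwidth_gbs(channels=8, mega_transfers_per_s=3200)
print(f"Peak bandwidth: {total_bw:.1f} GB/s")

# Hypothetical core counts sharing the same memory configuration.
for cores in (16, 32, 64, 128):
    print(f"{cores:4d} cores -> {total_bw / cores:6.2f} GB/s per core")
```

If the memory configuration stays fixed, doubling the number of cores halves the peak bandwidth available to each core, which is why high-core-count microprocessors need more memory channels and, in turn, more pins.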

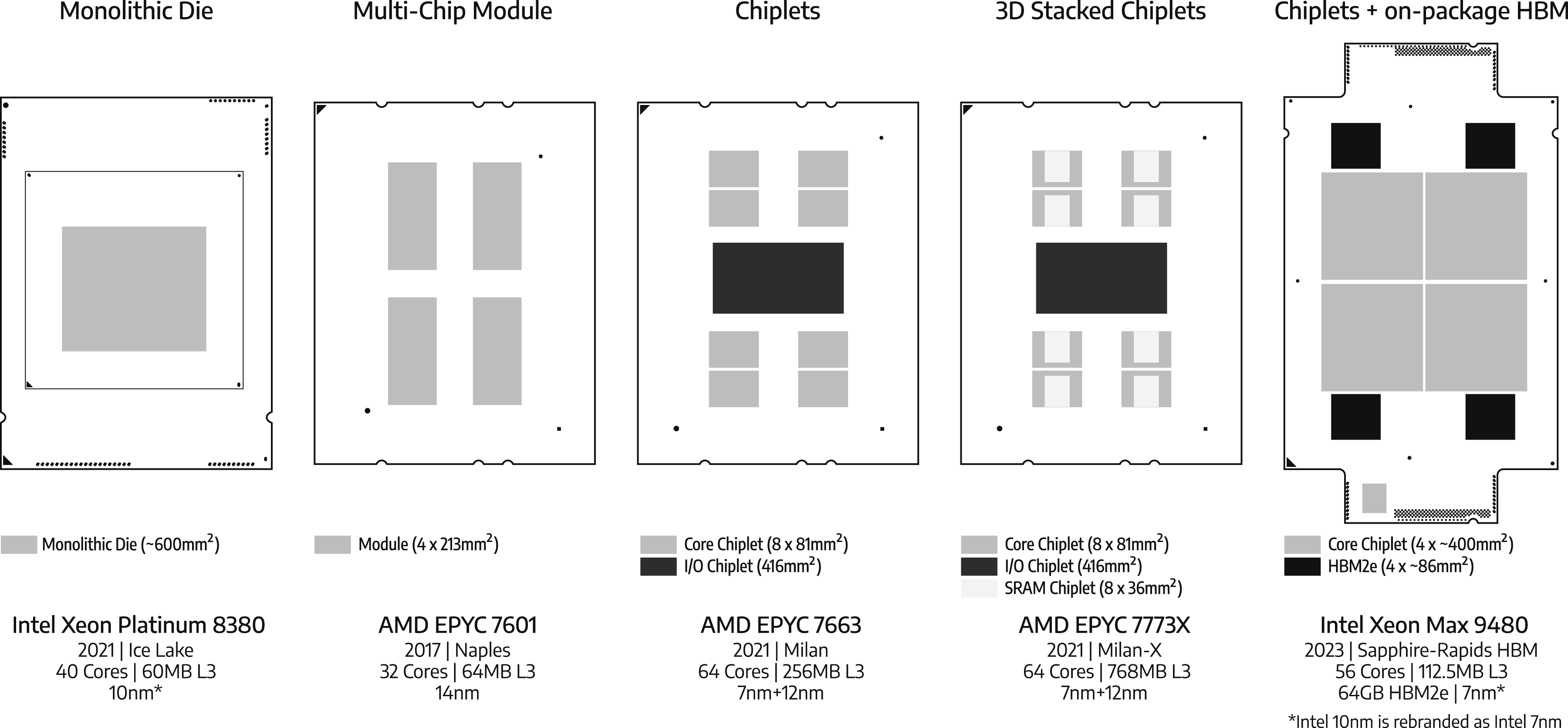

Evolution of microprocessor packaging technology, drawn to scale. Until recently, microprocessors had a single silicon die on a package. Nowadays, more transistors and more cores are placed on a microprocessor die. The slowdown in the advancement of process nodes and transistor shrinking has resulted in larger silicon die sizes, aimed at fitting more transistors on a die. For instance, the Intel Xeon Platinum 8380 is a 40-core microprocessor, implemented by using a single monolithic die, whose size is approximately 600 mm². A larger silicon die raises the probability of manufacturing defects, which lowers the yield and increases the manufacturing cost (Mack, 2015; Sun et al., 2020) (Section 2.5). To improve the yield, AMD uses multiple identical silicon dies (each die is able to function as a stand-alone die) within a single package, referred to as a multi-chip module (MCM). For instance, the 32-core AMD EPYC 7601 microprocessors have four silicon dies, each containing eight cores and having a size of 213 mm², for a total of 852 mm² per package (Naffziger et al., 2021). The next advancement in packaging technology uses multiple silicon dies, referred to as chiplets. Each chiplet may have a different functionality and process node. For instance, the 64-core AMD EPYC 7663 consists of 33 million transistors, implemented in eight core complex dies, manufactured in a 7 nm process node (8 × 81 mm² in size), and one I/O die manufactured in a 12 nm process node (416 mm² in size), for a total of 1064 mm² of silicon die in a package (Naffziger et al., 2021). Chiplets can be stacked on top of each other by using 3D packaging technologies. An example is the AMD EPYC 7773X, where an SRAM cache chiplet (in light grey) is stacked on top of each core complex die, providing triple the capacity of the last-level cache (256 MB on AMD EPYC 7663 vs 768 MB on AMD EPYC 7773X) (Agarwal et al., 2022). The 56-core Intel Xeon Max microprocessors have four chiplets (each is presumed to have a size of 400 mm²) and integrate HBM2e memory (in dark grey) on the same package (Sanca and Ailamaki, 2023).
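The relationship between die size and yield mentioned in the caption can be illustrated with a simple Poisson yield model, Y = exp(−A·D₀), where A is the die area and D₀ is the defect density. This is a sketch under stated assumptions: the Poisson model is one of several yield models in use, the defect density of 0.1 defects/cm² is a hypothetical value, and the calculation assumes that each die is tested before packaging and ignores assembly and packaging costs. The die areas are those quoted in the caption.

```python
import math


def poisson_yield(die_area_mm2, defects_per_cm2):
    """Fraction of defect-free dies under a Poisson defect model."""
    area_cm2 = die_area_mm2 / 100.0
    return math.exp(-area_cm2 * defects_per_cm2)


def silicon_per_good_package(die_area_mm2, dies_per_package, defects_per_cm2):
    """Average silicon area (mm^2) manufactured per package of good dies.

    Assumes dies are tested individually and only good dies are packaged.
    """
    good_fraction = poisson_yield(die_area_mm2, defects_per_cm2)
    return dies_per_package * die_area_mm2 / good_fraction


D0 = 0.1  # hypothetical defect density, in defects per cm^2

monolithic_yield = poisson_yield(600, D0)   # single ~600 mm^2 die
mcm_die_yield = poisson_yield(213, D0)      # one of four 213 mm^2 dies

monolithic_cost = silicon_per_good_package(600, 1, D0)
mcm_cost = silicon_per_good_package(213, 4, D0)

print(f"Monolithic die yield: {monolithic_yield:.1%}")
print(f"MCM per-die yield   : {mcm_die_yield:.1%}")
print(f"Silicon per good package: {monolithic_cost:.0f} vs {mcm_cost:.0f} mm^2")
```

Under these assumptions, each smaller die is considerably more likely to be defect-free, so the four-die package consumes less manufactured silicon per working product even though it contains more total silicon area than the monolithic design.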

Many-core processors

Applications that have inherent parallelism, which are abundant in high-performance computing and machine learning, typically have simple execution flows 85 (Mittal, 2020a; Véstias and Neto, 2014). Advanced branch predictors, aggressive speculative execution engines, or features like simultaneous multi-threading (SMT) do not typically benefit these workloads. These applications can enjoy larger performance improvements if area on a silicon die is allocated to build a large number of simple cores, instead of fewer but more sophisticated ones (Carter et al., 2013; Narayanan et al., 2015). More cores allow these applications to improve performance through parallelization (Schmidl et al., 2013; Silva et al., 2019). Many-core processors, followed by hardware accelerators, such as GPUs, have been a response to this need. We highlight the basic design philosophy of many-core processors through an example.

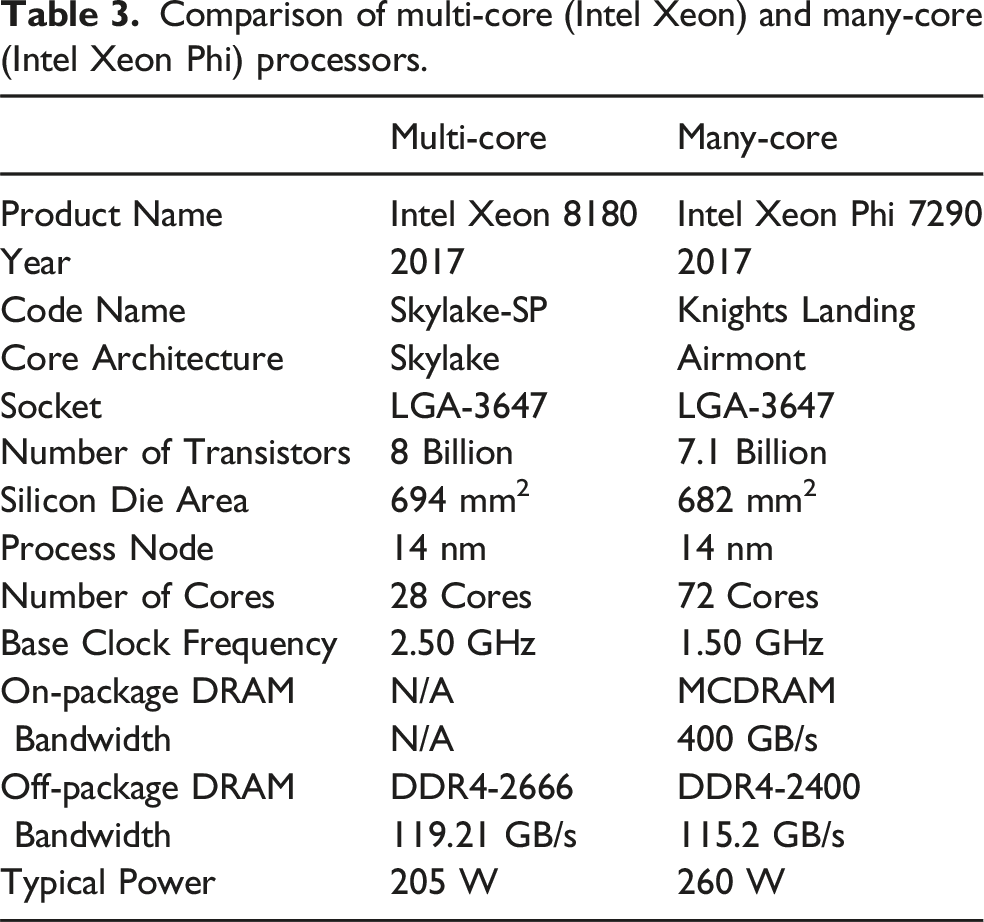

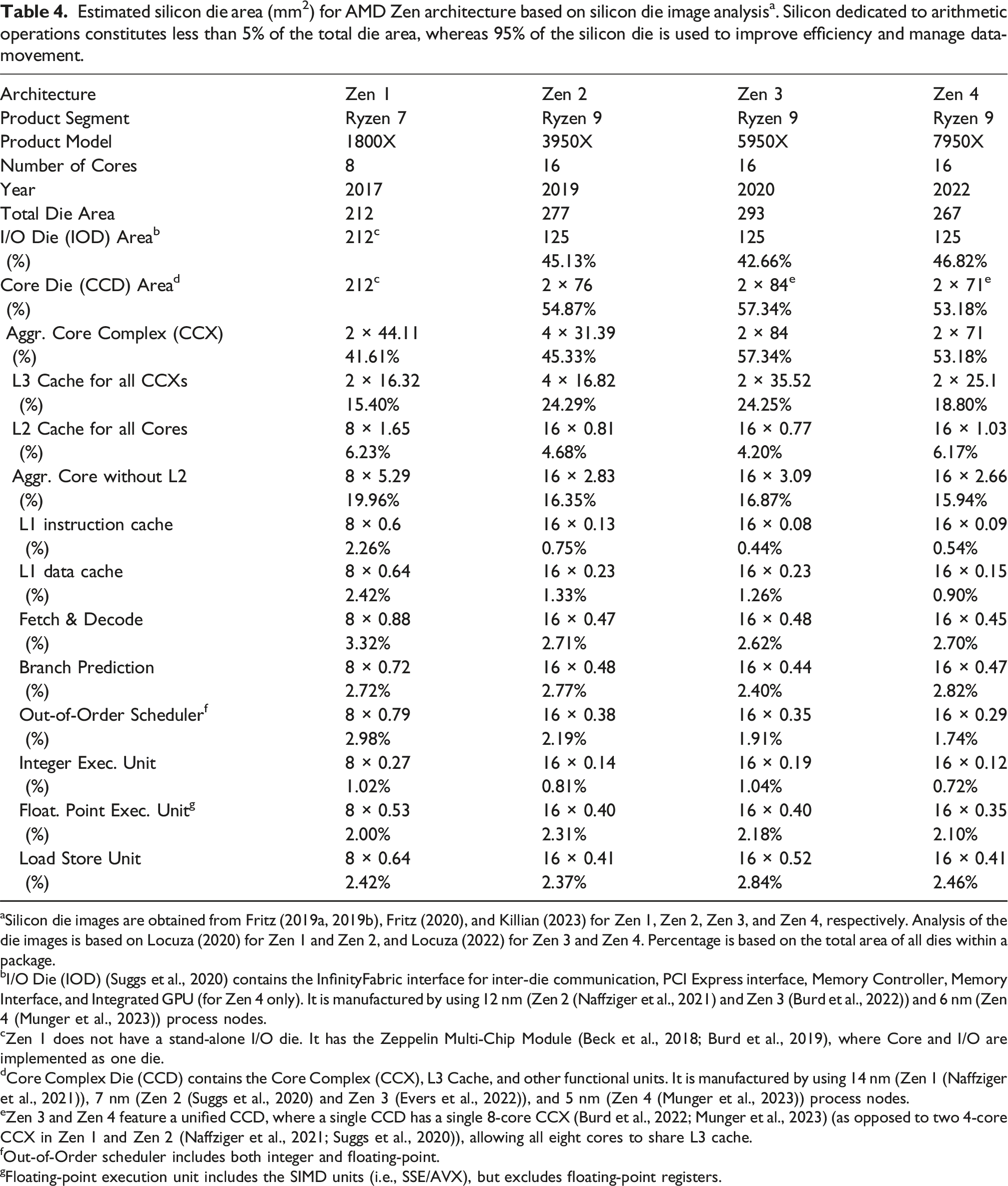

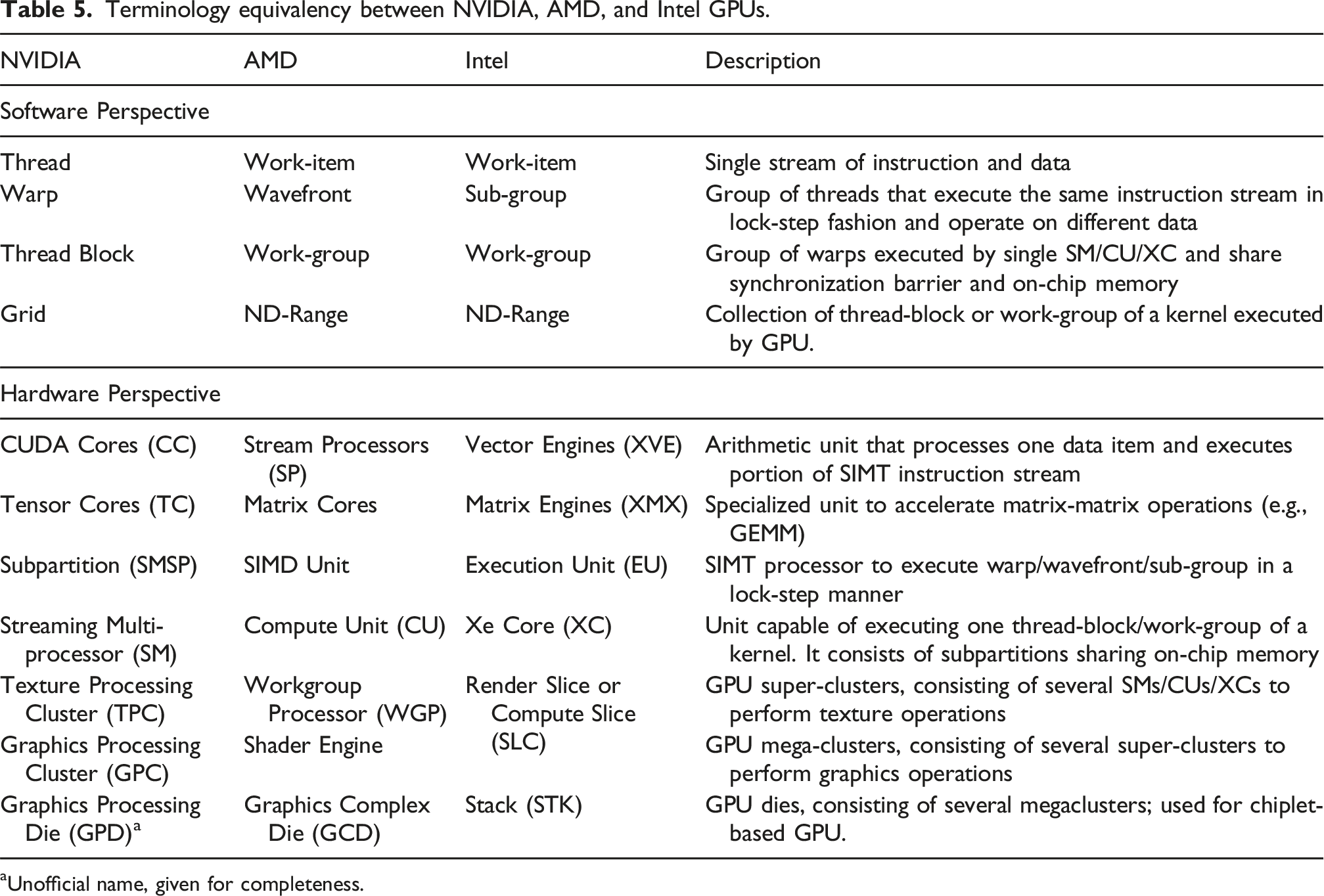

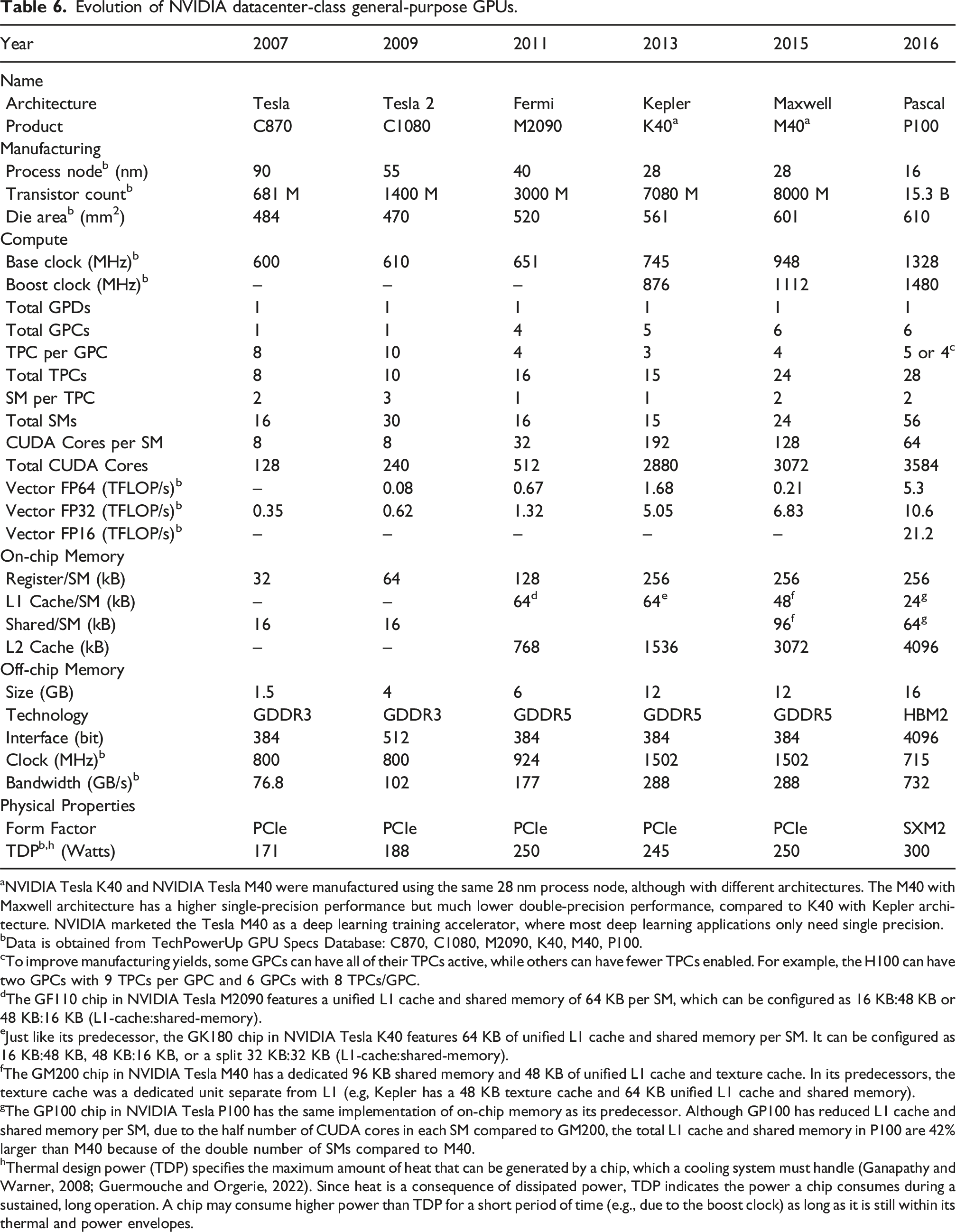

Comparison of multi-core (Intel Xeon) and many-core (Intel Xeon Phi) processors.

The last generation of Intel Xeon Phi was Knights Mill 87 (2017), which was specifically designed for accelerating AI and ML workloads (Domke et al., 2019; Georganas et al., 2018). Intel Xeon Phi offers substantial performance gains for HPC, AI, and ML workloads, due to their abundant data-level parallelism and simple execution flows (Mittal, 2020b; Shao and Brooks, 2013). However, it faced strong competition from GPUs (Mittal, 2020a), which forced Intel to discontinue the Xeon Phi product lineup in 2020. Nevertheless, the spirit of many-core computing is still alive in other areas, such as cloud computing clusters 88 that host small applications and micro-services in containerized forms (Pahl et al., 2019; Singh and Singh, 2016) for multiple tenants. These micro-services that are hosted in the cloud are typically not as computationally demanding as HPC or ML applications. Therefore, a simpler and more energy-efficient core is often preferred: many-core processors allow the cloud provider to achieve higher compute density through a higher aggregate number of cores per server rack, and to improve energy efficiency, both of which decrease the total cost of ownership (TCO). This has led to the development of microprocessors designed specifically for cloud computing clusters, such as AMD EPYC Bergamo (2023), which has 128 Zen4c cores 89 that are optimized for performance per watt. Intel is expected to release (2024) a new product lineup for cloud computing with its Sierra Forest processor, which is estimated to have 288 (energy) efficiency-oriented cores.

Several semiconductor companies are increasingly adopting ARM-based processors due to their lower power consumption, making them a strong alternative to Intel and AMD’s x86 architectures. This shift has long been evident in consumer devices: Apple’s M-series (MacBooks) and A-series (iPhones), as well as Qualcomm’s Snapdragon processors, are all ARM-based. More recently, major cloud providers have extended this trend to their infrastructures, integrating ARM processors such as Amazon AWS Graviton (Loghin, 2024), Microsoft Azure Cobalt, and Google Cloud Axion. Ampere Computing also develops ARM-based many-core server solutions such as AmpereOne, incorporating up to 192 cores, with future models expected to reach 256 and 512 cores. NVIDIA develops the Grace CPU, based on the ARM architecture (Evans, 2022), as part of its CPU-GPU heterogeneous system (Part II: System integration and heterogeneous computing (Hanindhito et al., 2026)).