Abstract

In Part I of this paper (Hanindhito et al., 2026), we argued that understanding technology trends in computing hardware is necessary for designing next-generation algorithms for scientific computing. Using language that is accessible to a general computational or data scientist, or applied mathematician, we aim to help our target readers better understand how technology trends in computing hardware are going to impact their computations, and what characteristics algorithms should exhibit to best harness modern hardware. In Part I, we covered background material, general-purpose processors, and hardware accelerators. In Part II, we review memory systems, inter-device communication, and heterogeneous computing and system integration; we also explain why energy efficiency has become a central issue in hardware design and why it cannot be ignored in cluster-sized computers. We conjecture how these changes may impact scientific computing, and offer options for leveraging modern hardware for both old and modern scientific software.

Introduction

Motivation

Computing hardware has experienced transformative technological changes during the past two decades. These changes include an increased number of cores on processors, the widespread adoption of hardware accelerators (e.g., GPUs), the development of high-bandwidth memory technologies, and the invention of new interconnects to alleviate bandwidth bottlenecks. Understanding these changes and their drivers is fundamental to harnessing modern hardware effectively, and to making strategic decisions on how future algorithms and computational frameworks should be designed.

This paper reviews technology trends in computing hardware in a language that computational scientists and applied mathematicians find comprehensible. We also highlight how these changes impact scientific computing. When it comes to possibilities for designing future algorithms and workflows, as well as improving the performance of existing software, we discuss a range of options: from those that require limited resources (e.g., limited changes to an existing source code), which may yield marginal performance improvements, to those that need significantly more resources (e.g., more intrusive changes to a software system and possibly a different hardware platform), which likely yield larger performance improvements.

Outline and summary

Background material, general-purpose processors, and hardware accelerators are covered in Part I of this paper [B. Hanindhito, A. Fathi, D. Gourounas, D. Trenev, A. Gerstlauer, and L. K. John, The International Journal of High Performance Computing Applications (2026)]. We suggest that readers review the background material before reading the rest of the text. In Part II, we cover different memory systems, various interconnect technologies, system integration and heterogeneous computing, energy consumption of computing systems and its implications, and the impacts of these changes on high-performance scientific computing applications. Acronyms used throughout the text are listed in Appendix A.

The Memory systems section considers different memory technologies, and highlights why a whole host of different options, each having different bandwidth, latency, energy consumption, and cost, are needed in scalable computing systems. SRAM is a very fast, on-chip memory, and is typically used in registers and caches. It consumes more energy compared to alternatives, and uses space on the same precious silicon die that hosts the rest of a processor. SRAM is typically managed by hardware and compilers. Recently, some hardware accelerators have allowed users to manage a portion of SRAM, if they choose to do so; effective management by users often requires detailed familiarity with the underlying algorithms, and can result in performance gains. DRAM is off-chip memory and, typically, the primary source of memory capacity for applications. Compared to SRAM, it is slower, consumes less energy, provides more capacity in the same area, and is cheaper. Thanks to advanced packaging, modern processors can stack several layers of DRAM on the same package that they reside on, yielding high-bandwidth memory. This has now become common in high-end GPUs and has started to appear on high-end CPUs as well. DRAM relies on capacitors to store information. Capacitor scaling is considerably more challenging than transistor scaling at advanced process nodes, and thus has slowed progress toward more advanced DRAMs. Separation of memory and processing units, which characterizes the von Neumann architecture, is a major source of bottlenecks. Processing-in-memory combines the memory and compute units into a single integrated unit, which results in performance gains. Non-volatile memory technologies, such as flash memory, do not need a continuous supply of energy to retain data. Compared to DRAM, they are slower, consume less energy, and are cheaper. These favorable properties have motivated their increased utilization in computing, such as integration of flash memory with DRAM to increase memory capacity and reduce power consumption.

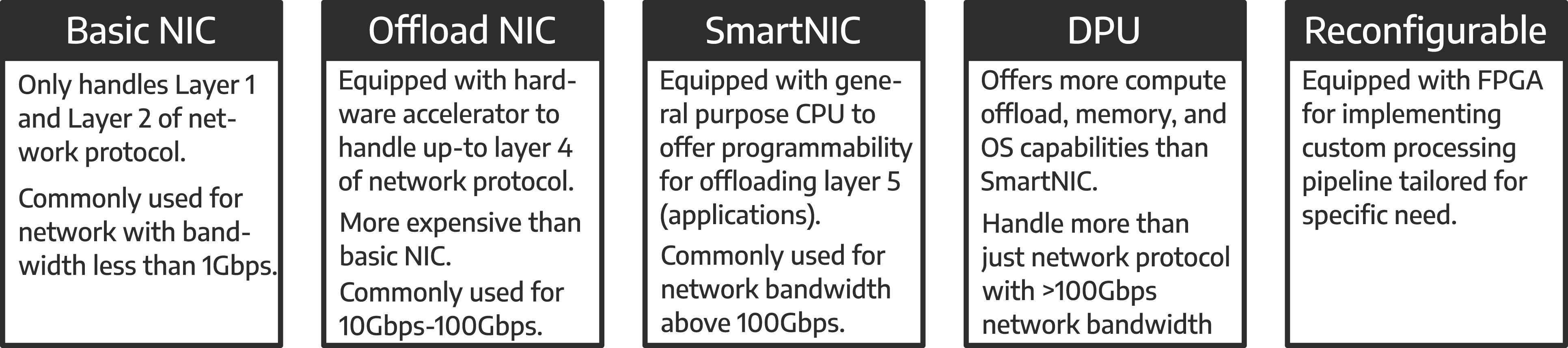

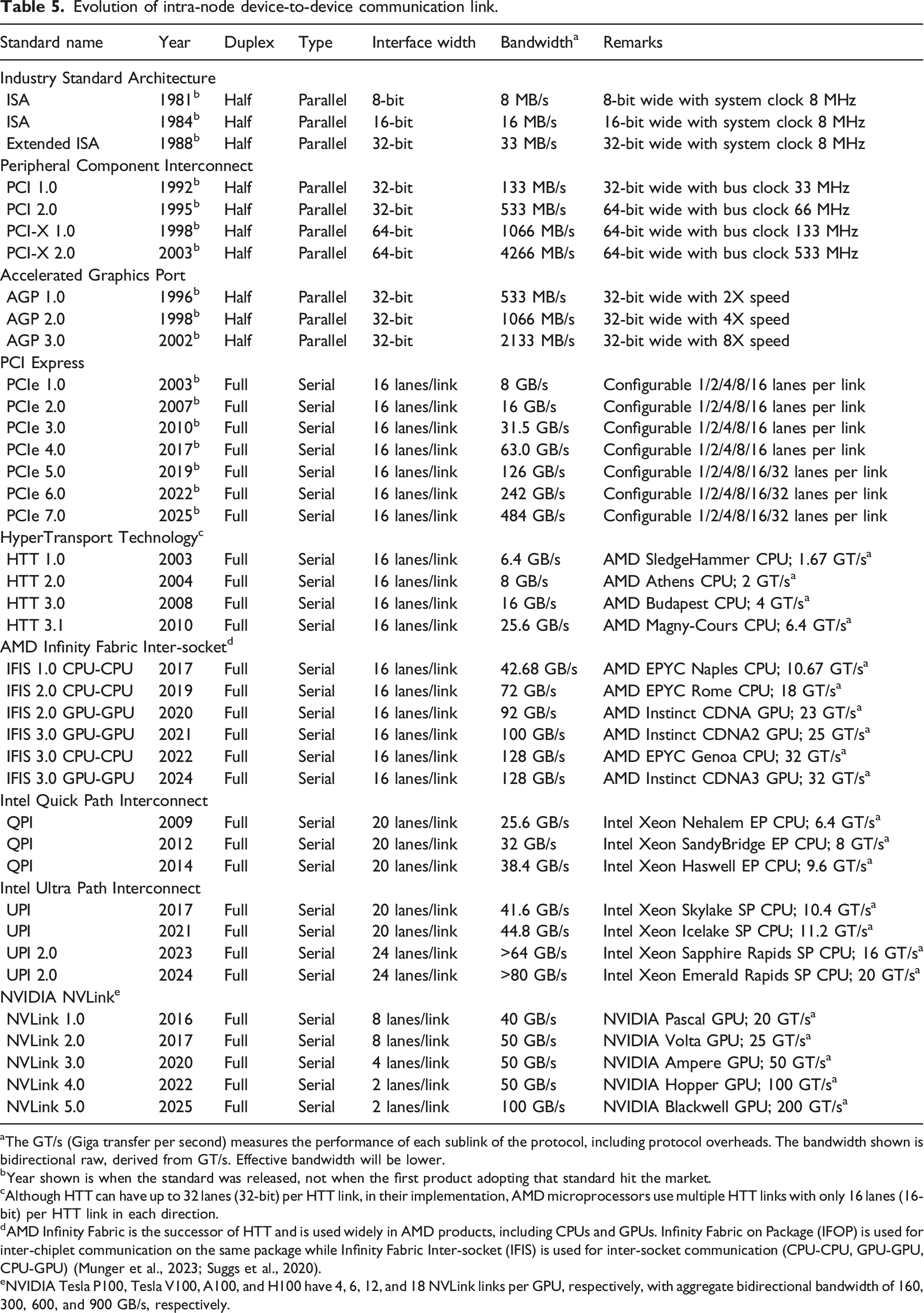

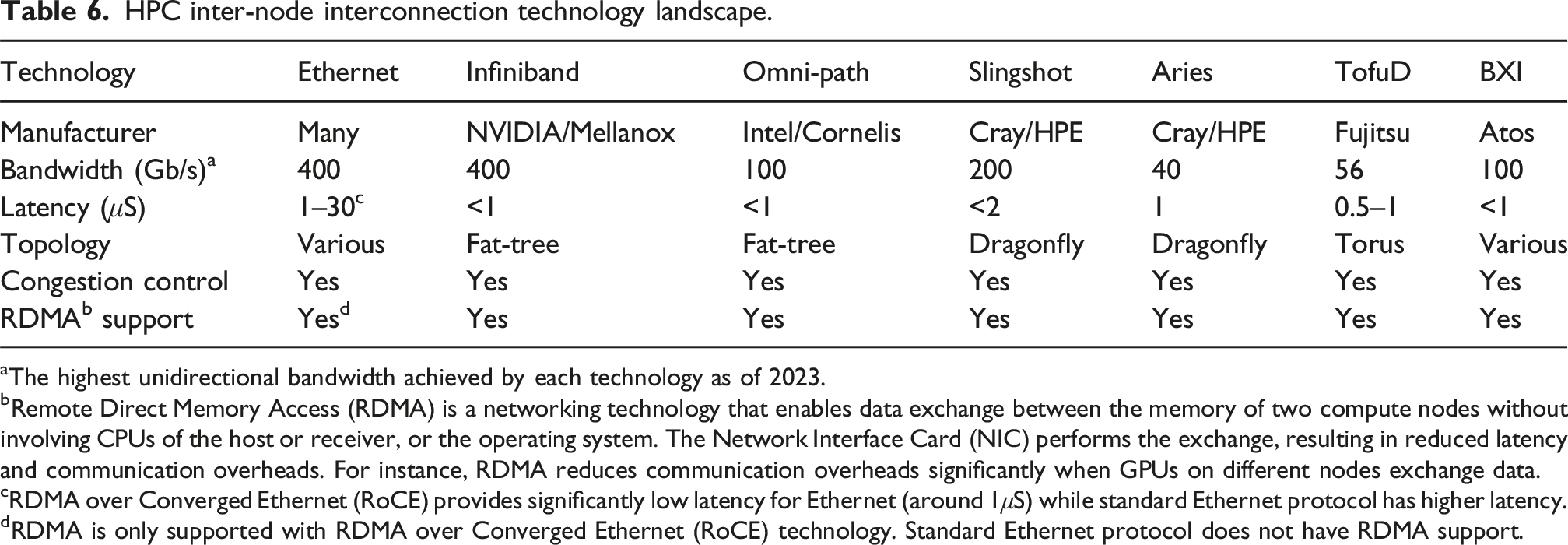

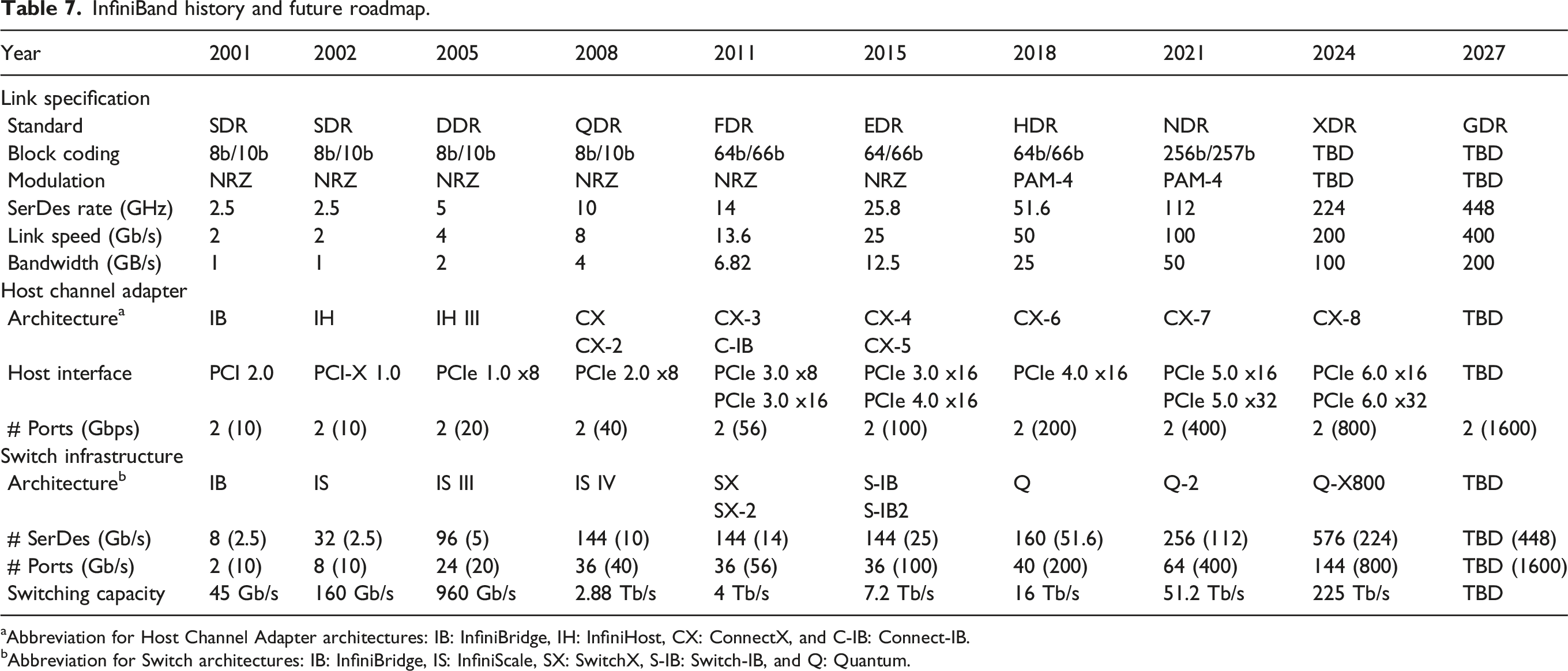

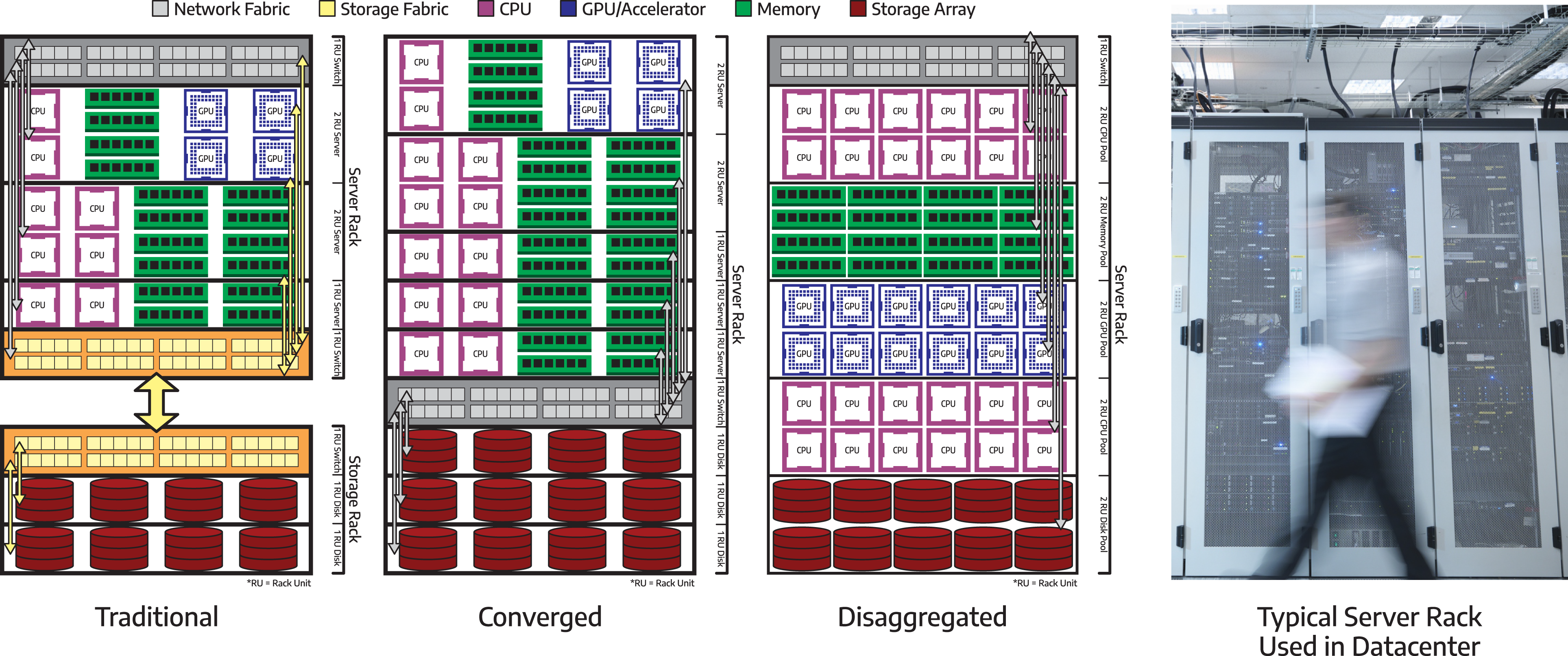

In the Inter-device communication section, we review technologies that are used for communication within a chip, as well as between different computing devices, such as processors, memory, and hardware accelerators. Communication, either within a single cluster node, or between cluster nodes, continues to be the primary bottleneck for many applications in scientific computing. This makes algorithms with a smaller communication footprint attractive to modern and emerging architectures. On-chip communication has greatly grown in complexity over the past decade, especially for Systems-on-Chip that comprise several different components that need to interact with each other. To this end, a wide variety of interconnect architectures and topologies have been suggested, each tailored to the optimization of different metrics, such as area, cost, performance, and energy. Improvements in intra-node communication depend heavily on technological advancements in serial interfaces (Part I: Architecture of communication interfaces (Hanindhito et al., 2026)), such as NVLink, aiming at increasing the communication bandwidth. Inter-node communication is arguably the weakest link in many high-performance scientific computing applications. While inter-node communication technologies, such as InfiniBand, have steadily improved over the past decades, they trail advancements in modern microprocessors. Using more advanced signal modulation schemes improves bandwidth, and is expected to play an increasingly important role in the years to come. This will put more pressure on the host microprocessor. Data processing units are hardware accelerators that are specialized to offload this burden from the host microprocessor, and are becoming more common. Advanced technologies, such as optical interconnects for inter-node communication, can enable clustering of computing devices 1 in the future. This is referred to as disaggregated computing and will increase the efficiency of using computing resources for a diverse group of applications.

Integration aspects of contemporary heterogeneous systems are explored in the System integration and heterogeneous computing section. Heterogeneous computing involves leveraging various types of computing components to enhance performance and energy efficiency, based on specific application demands. This diversity is inherent not only in System-on-Chip devices but also in cluster nodes that may consist of different types of devices. Despite offering a broad spectrum of design options and high flexibility, optimizing the performance of all components in a heterogeneous system is a complicated endeavor. Numerous methods have been proposed to address the partitioning and scheduling problems that aim to determine the optimal allocation of tasks to resources. Distinct strategies are necessary for on-chip integration as well as intra- and inter-node system integration. While advanced tool support is limited and heavily reliant on the underlying architecture, it can greatly simplify this intricate task for application developers. We also provide a detailed example of system integration for the Anton specialized chip and computing system, which targets molecular dynamics simulations.

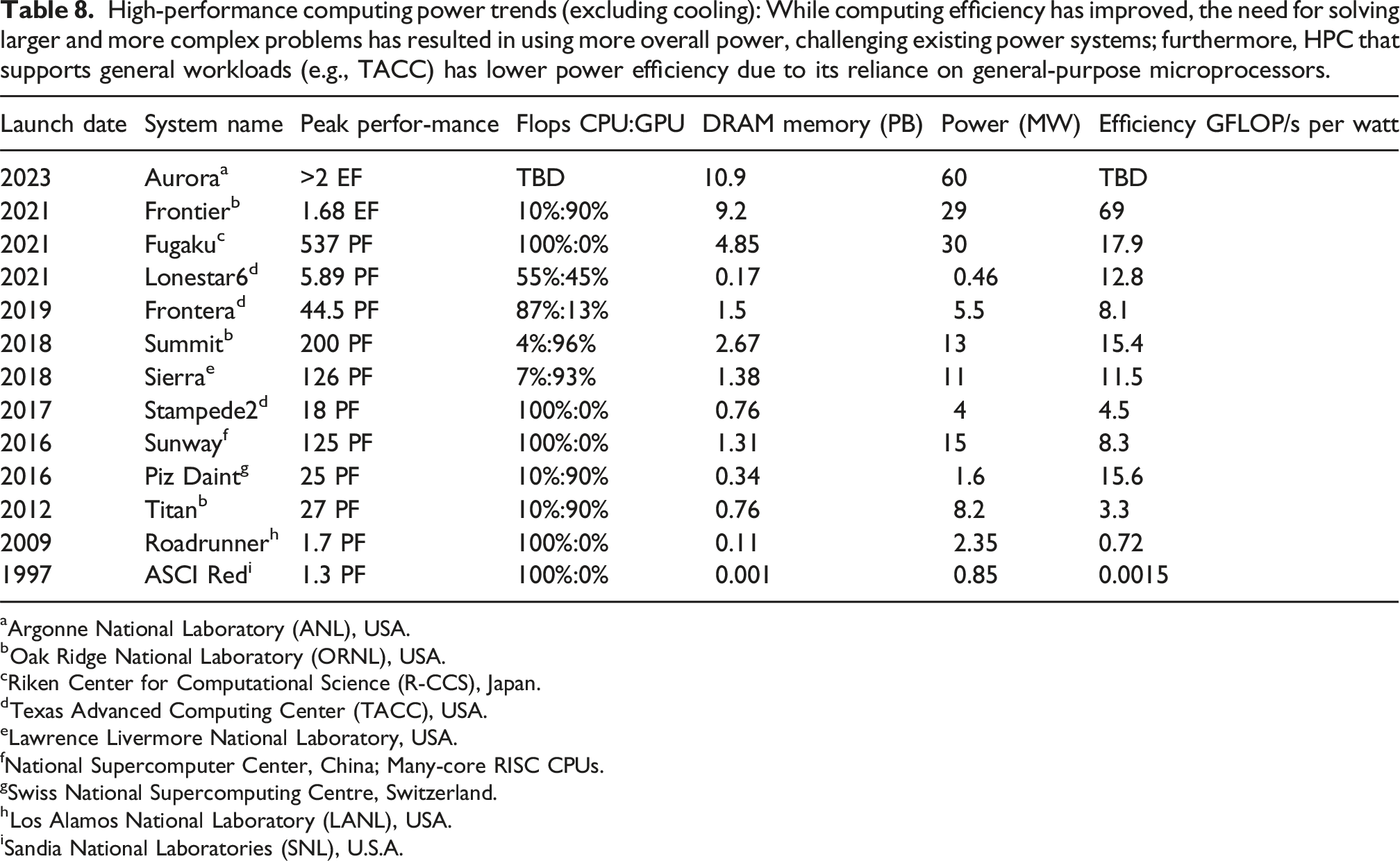

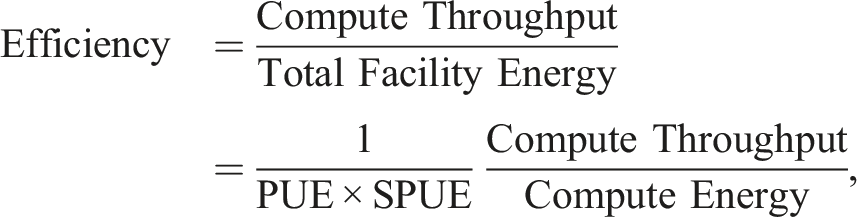

Supplying energy to supercomputing centers is becoming increasingly challenging, as we discuss in the Energy consumption of large computing centers and its implications section. While modern hardware strives to be more energy efficient, the quest to solve larger and more complex problems has led to clusters that are growing in size and total energy consumption. Some of today’s largest clusters consume as much energy as a small town. This puts pressure on the power infrastructure, and can constrain upgrading a cluster into a more powerful machine. Upgrading the power infrastructure, or placing modular nuclear power plants close to the large clusters of the future, will likely become more common. These challenges highlight why energy efficiency heavily influences many hardware design decisions, a trend that is expected to continue.

The Impacts on high-performance scientific computing section examines how the reviewed technology trends impact high-performance scientific computing. On the one hand, scientific computing is very diverse and relies on non-modular and old software for many applications. On the other hand, scientific computing has a smaller market size compared to competing computing markets, such as machine learning and artificial intelligence. At times, this makes it difficult to secure sufficient resources to modernize scientific computing software in order to effectively harness modern hardware. Moreover, a large group of research scientists who develop scientific software often prioritize productivity over performance. Furthermore, they may not be well-versed in programming alternative devices, such as GPUs. These factors hinder the adoption of specialized architectures in such groups. Modern hardware provides a wide range of possibilities to computational scientists, where productivity versus performance may be balanced according to specific needs. These include: careful selection of computing platforms for running old applications faster while making minimal changes to the software; making substantial changes to the code and revising algorithms to effectively harness modern hardware; or even designing specialized hardware and algorithms to maximize desired performance metrics.

The Outlook section attempts to envision the future of high-performance computing based on technology trends that are expected in the next decade and beyond. Chiplets will flourish since they reduce the cost of hardware design through modularization. Low-power processors allow more of them to be placed on a compute node, alleviating communication bottlenecks. Disaggregated computing will result in better utilization of computing resources. Hardware specialization for large workloads will gain traction, as it will become the only viable path for improving performance. Design cost of specialized hardware will likely decrease due to the availability of open-source and automated design tools. Algorithm specialization will become even more important, in order to fully utilize modern and emerging hardware. Hardware-algorithm co-design will become more common, since it can maximize performance gains. Once they become mature, exotic computing technologies (e.g., quantum computing) may be integrated with high-performance computing to accelerate certain workloads. Consequently, the future of computing is diverse. Moreover, since access to technology and cost of design may vary around the world, some companies may find it more economical to improve performance by using more advanced manufacturing technologies, whereas others may find hardware and algorithm customization to be a more sensible approach, particularly when access to advanced technologies is regulated.

Finally, the Frequently asked questions section collects questions that are often asked by researchers, practitioners, and decision makers. It provides a high-level summary of the possibilities in the coming years.

Memory systems

Computing performance is expected to improve due to new technologies, such as hardware accelerators (Part I: Hardware accelerators (Hanindhito et al., 2026)), chiplet packaging (Part I: Advanced packaging technologies (Hanindhito et al., 2026)), and heterogeneous computing (System integration and heterogeneous computing).

Computing memory systems should keep up with the above advances, which entails providing more bandwidth and lower latency.

A flat memory system

2

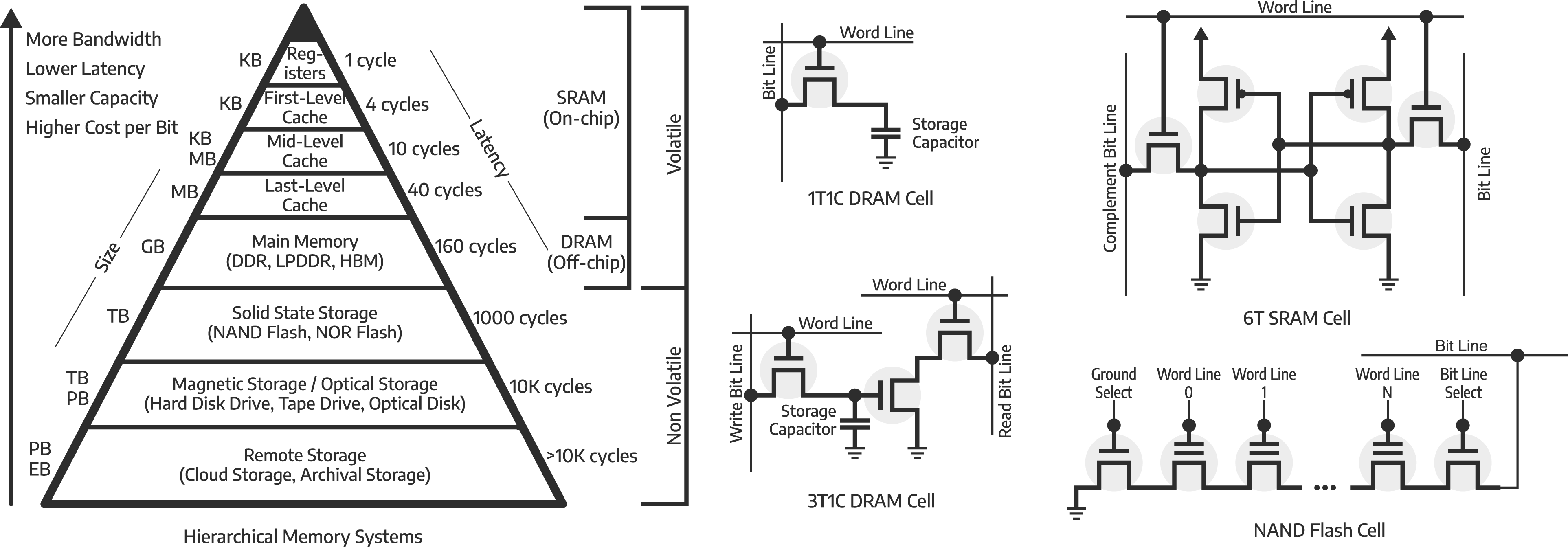

, where only one memory technology is used, would simplify both hardware and software implementation (Agarwala et al., 2000; Jacob et al., 2007). However, no single memory technology has all the desired properties: low access latency, low energy consumption, high bandwidth, large capacity, and low cost per bit (Bolotin et al., 2015; Dunning et al., 2009). Therefore, a computer system usually has a hierarchical memory structure (Rhu et al., 2013; Wang et al., 2008), as shown on the left side of Figure 1, where each level of the hierarchy is implemented with a different memory technology, raising the complexity of software and hardware design (Guo et al., 2008; Tsai et al., 2018). The hierarchy of memory systems consists of multiple memory technologies, both volatile and non-volatile. The top half of the hierarchy is volatile storage, which is implemented by using on-chip Static RAM (SRAM) and off-chip Dynamic RAM (DRAM). The registers provide very fast access, at the same level of the processing units, although, at a very limited size. DRAM provides significantly slower access, but at a significantly larger capacity. In between, multi-level cache systems are implemented to store recently-accessed data, and thus, reducing the frequency of accessing DRAM. SRAM is implemented by using 6 transistors (6T), while DRAM can be implemented either by using 3 transistors and 1 capacitor (3T1C), or 1 transistor and 1 capacitor (1T1C). The latter is preferred due to its higher density, but at the cost of a more complicated read mechanism. The bottom half of the hierarchy shows non-volatile storage technologies, consisting of flash-based storage, magnetic-based storage, and remote-based storage. The flash-based storage can be implemented by using NAND flash, consisting of transistors with a floating gate, resulting in a higher density than 1T1C DRAM. Flash has a limited number of write cycles due to endurance issues with the floating gate. 
The transistor-level operation details for DRAM, SRAM, and NAND/NOR Flash are not discussed; interested readers are suggested to consult Chapter 5 (SRAM) and Chapter 8 (DRAM) of Jacob et al. (2007), and Crippa et al. (2008) for NAND/NOR Flash.

The top-half of the pyramid (Figure 1) uses volatile memory technologies (Valero et al., 2012), which require continuous supply of power to retain data (Dao et al., 2021). The bottom-half of the pyramid uses non-volatile memory technologies and can retain data in the absence of power. Volatile memory technologies directly impact performance and energy consumption (Li et al., 2019a), as they are used to store hot data 3 . They must keep up with demands from the processing units. Non-volatile memory technologies are typically used to store cold data 4 and usually have a smaller impact on the performance of the whole computer system.

In this section, we review key memory technologies, and describe their performance characteristics. Specifically, we review static random-access memory (SRAM), dynamic random-access memory (DRAM), and near-memory processing (NMP) and processing-in-memory (PIM). Finally, non-volatile memory (NVM) and storage systems are also highlighted.

Static random access memory (SRAM)

SRAM is on-chip storage, and is a building block for registers and cache memory (Liang and Wang, 2016; Mahanta et al., 2022; Zhang et al., 2020a). It is implemented with the same kind of transistors (and therefore, the same process node technology) that are used for computing units (Huang et al., 2018; James, 2009; Lage et al., 1996). The standard form of SRAM consists of six transistors 5 (6T SRAM) (Guo et al., 2005; Margala, 1999; Weste and Harris, 2010), as shown in the right-most part of Figure 1. This structure allows for fast data reading and writing without requiring periodic refresh, hence the name static RAM (Weste and Harris, 2010).

Registers and cache memory are key components that rely on SRAM technology, and will be discussed next. Using SRAM in hardware-accelerators (Part I: Specialized and custom hardware (Hanindhito et al., 2026)) is also popular due to the lower access latency and higher bandwidth it provides (at the expense of being less energy-efficient); integration of SRAM with the silicon die is also easier, compared to DRAM and HBM. We end this part by outlining different options for programming SRAM.

Registers

Registers are very fast storage elements, close to the functional units 6 of a microprocessor (Balasubramonian et al., 2001; Cruz et al., 2000a). Registers can be as fast as the microprocessor, being able to read and write in one clock cycle (Cruz et al., 2000b; Kim and Mudge, 2003), and are responsible for storing the operands and intermediate results of instructions that are being executed. While registers are fast and favorable, microprocessors can only have a limited number of them due to the limited space available around the functional units and latency constraints 7 (Kondo and Nakamura, 2005; Mittal, 2017). GPUs have larger register files because they support a massive number of threads 8 (Gebhart et al., 2012), where each thread has its own register allocation 9 . Part I Tables 6 and 7 (Hanindhito et al., 2026) show trends of register size in NVIDIA datacenter GPUs, where it has stayed flat at 256 kB per Streaming Multiprocessor (SM) since 2013. With this limited number of registers, applications that have a large number of intermediate results experience register spilling (Chaitin, 2004; Nuth and Dally, 1995). This results in data being moved back and forth between the registers and the first level of cache (Li et al., 2016; Vizitiu et al., 2014), causing delays.
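To make the register budget concrete, the following minimal sketch divides the 256 kB register file of one SM among its resident threads. The 32-bit register width and the thread counts are illustrative assumptions, not figures from the text; real hardware also imposes per-thread limits and allocation granularity that this arithmetic ignores.

```python
REGISTER_FILE_BYTES = 256 * 1024  # per SM, as quoted in the text
REGISTER_WIDTH_BYTES = 4          # assumption: 32-bit registers


def registers_per_thread(resident_threads):
    """Registers available to each thread if the file is split evenly."""
    total_registers = REGISTER_FILE_BYTES // REGISTER_WIDTH_BYTES
    return total_registers // resident_threads


# Hypothetical numbers of threads resident on one SM.
for threads in (512, 1024, 2048):
    print(f"{threads} threads -> {registers_per_thread(threads)} registers each")
```

Under these assumptions, doubling the number of resident threads halves the per-thread budget, so a kernel with many intermediate results is more likely to spill as occupancy grows.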

Caches

Fundamental functions

Cache is a fast, hardware-managed, on-chip memory. It is used to store commonly-used data to reduce off-chip memory access latency and bandwidth demand. Cache memory operates according to temporal and spatial locality (Lee et al., 2000). While cache operations are typically managed by hardware, understanding this process helps computational scientists develop hardware-aware algorithms 10 , leading to improved performance (Christiaens et al., 1999; Cucchiara et al., 1999; Günther et al., 2006).

Data needed by a microprocessor for the first time must be fetched from off-chip memory, which incurs high access latency and energy consumption 11 . The data will then likely reside in cache for a short period of time in case the microprocessor needs to access it again. When the data is not used for a while, it will be evicted to a higher-level cache, and, eventually, to off-chip memory 12 (Ghandeharizadeh et al., 2015). If the microprocessor needs this data again, it has to be fetched from higher-level caches or off-chip memory 13 . Temporal locality relies on the idea that recently-accessed data will most likely be accessed again. Therefore, storing that data in cache saves energy, and improves performance. Spatial locality relies on the idea that data neighboring a recently-accessed item will likely be accessed soon (Gu et al., 2009). For instance, when an element of an array is accessed, adjacent elements will likely be accessed in the near future, and thus are brought into the cache as well.

Algorithms that exhibit temporal and spatial 14 locality (Wolf and Lam, 1991) can lead to higher performance (Kandemir et al., 1999), and lower energy consumption (Sardashti and Wood, 2013), since they need to access off-chip memory less frequently.
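The effect of locality can be illustrated with a toy cache model. The sketch below is not a model of any real processor: it assumes a small, fully associative cache with least-recently-used eviction, and the names `hit_rate`, `num_lines`, and `line_size` are hypothetical. It compares traversing a matrix stored row by row in row order (neighboring addresses) and in column order (large strides).

```python
from collections import OrderedDict


def hit_rate(addresses, num_lines=64, line_size=8):
    """Fraction of accesses served by a toy fully associative LRU cache.

    num_lines and line_size (in elements) are illustrative assumptions.
    """
    cache = OrderedDict()  # cache-line tags, ordered oldest to newest
    hits = 0
    total = 0
    for addr in addresses:
        total += 1
        tag = addr // line_size  # neighboring elements share one cache line
        if tag in cache:
            hits += 1
            cache.move_to_end(tag)  # mark the line as most recently used
        else:
            cache[tag] = True
            if len(cache) > num_lines:
                cache.popitem(last=False)  # evict the least recently used line
    return hits / total


n = 256  # the matrix is n x n and stored row by row
row_order = [i * n + j for i in range(n) for j in range(n)]
column_order = [i * n + j for j in range(n) for i in range(n)]

print(f"row-order hit rate:    {hit_rate(row_order):.3f}")     # 0.875
print(f"column-order hit rate: {hit_rate(column_order):.3f}")  # 0.000
```

In this toy setting, the row-order traversal misses once per cache line and then hits on the remaining seven elements, whereas the column-order traversal touches more distinct lines than the cache can hold before returning to any of them, so every access misses.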

Hierarchical structure

Modern microprocessors employ multiple levels of cache (Liu, 1994). Compared to the registers, the first-level (L1) cache is located farther away from the functional units; therefore, it enjoys larger capacity at a slightly higher access latency (Torres et al., 2004). L1 cache is usually private to each core (Maurice et al., 2015) and stores the most-recently-used data, as well as data due to register spilling (Li et al., 2016). General-purpose microprocessors have around 64 kB of dedicated L1 cache per core, while GPUs typically have around 256 kB of L1 cache per Streaming Multiprocessor (SM).

In addition to limits posed by physical space, the L1 cache size is kept small since a larger cache entails larger access latency 15 (Hijaz et al., 2013). It is preferred to have a fast L1 cache, instead of a large L1 cache, since it should keep up with the speed of the registers (Huang and Nagarajan, 2014).

The last-level cache (LLC) is usually shared between cores of a microprocessor (Cataldo et al., 2016), and thus, is located outside of the core complex. It interfaces directly with off-chip memory 16 (Chaudhuri et al., 2019), provides a data-sharing mechanism between cores (Albericio et al., 2013), and can have a capacity of a few hundred megabytes, albeit at a higher access latency 17 . Between the L1 cache and LLC, there can be multiple mid-level caches (e.g., L2), which balance capacity and latency between the L1 cache and LLC (Chishti et al., 2005; Wang and Lee, 2008).

Specialty cache

Microprocessors may feature specialized caches whose structure is optimized for particular memory access patterns. In general-purpose microprocessors (Part I: General-purpose microprocessors (Hanindhito et al., 2026)), a separate instruction cache (e.g., L1-I) is used to cache the program instructions in addition to the first-level data cache (e.g., L1-D) that is used to store most-recently-used data. The instruction cache is read-only, while the data cache is capable of read and write. The split design has some advantages: (a) it doubles the aggregate bandwidth of the first-level cache, since there are two physical caches (McFarling, 1989; Smith, 1982); (b) it lowers the access latency, since the instruction cache can be physically placed near the instruction-fetch-and-decode unit, whereas the data cache can be placed near the memory unit (Smith, 1982); and (c) most importantly, it avoids interference 18 between instructions and data, since they have different access patterns (Racunas and Patt, 2003; Trancoso, 2005).

In GPUs (Part I: GPU memory system (Hanindhito et al., 2026)), in addition to the data caches (i.e., L1 and L2), there exist a read-only texture cache and a read-only constant memory cache. The texture cache is optimized to store large amounts of data with spatial locality, with support for hardware filtering and interpolation, which is mostly beneficial for graphics applications. The constant memory cache is used to store small amounts of constant data (e.g., pre-computed constants), and provides lower access latency than the texture cache.

Coherency

Coherency among the cache hierarchies needs to be maintained: if data is changed in the lower-level cache, the change should be reflected in the higher-level cache, as well as in the off-chip memory (Ros et al., 2015). Consider a core modifying data in its private lower-level cache; if another core needs to access this modified data, it can obtain the data from the shared last-level cache, which should contain the correct version of the data after the modification by the first core.

Cache coherency becomes more complex in multi-core microprocessors and massively-parallel architectures (e.g., GPUs) (Joshi and Ramasubramanian, 2015; Martin et al., 2012; Parvathy et al., 2016): it requires expensive hardware structures that consume additional area and power to track vast amounts of in-flight coherence requests, introduces excessive coherence traffic overheads that degrade performance, and complicates program execution through additional transient states and communication classes (Keckler et al., 2011; Singh et al., 2013). Accordingly, massively-parallel architectures do not support cache coherency 19 , and this responsibility is delegated to the programmer.

User-managed vs. compiler-managed scratchpad memory

While general-purpose processors rely on hardware to manage SRAM in the form of cache, several hardware architectures allow users or compilers to manage the on-chip SRAM explicitly. Some algorithms may have memory access patterns that cause cache thrashing (Jaleel et al., 2010; Seshadri et al., 2012), reducing the effectiveness of the cache, and resulting in significant performance degradation. In these situations, explicit management of on-chip memory may improve memory access performance.

User-managed shared memory in GPUs provides this opportunity (Part I: GPU memory system (Hanindhito et al., 2026)). Some GPU architectures 20 have both L1 cache and shared memory implemented as unified on-chip memory. This provides flexibility to the users in sizing the shared memory, leaving the rest for L1 cache. Therefore, using shared memory effectively reduces the size of the L1 cache (Part I Tables 6 and 7 (Hanindhito et al., 2026)).

Management of the on-chip SRAM by a programmer often requires a deep understanding of the underlying algorithm and its memory-access pattern. If not done correctly, it may lead to performance loss, especially in unified architectures such as GPUs, where allocating more shared memory leaves a smaller L1 cache; the resulting penalty may outweigh the performance gains achieved through using the shared memory.

Using compilers to manage SRAM, e.g., on hardware accelerators, is becoming more common. For instance, the only available memory on Cerebras’ Wafer Scale Engine (WSE) is SRAM 21 (Lauterbach, 2021; Lie, 2022) (Part I: Specialized and custom hardware (Hanindhito et al., 2026)), which consumes 50% of the total chip area. WSE relies on a compiler to optimally distribute the data across the SRAM.

Dynamic random access memory (DRAM)

DRAM is an off-chip 22 memory that provides more capacity, and is more energy-efficient, compared to on-chip SRAM (Hassan, 2018), at the expense of being slower. DRAM is implemented with a different technology than the logic circuits that implement the processing units (Iyer and Kalter, 1999) and is connected to the microprocessor through an external bus. Classic DRAM was implemented by using three transistors and one capacitor (3T1C), while the most common implementation uses one transistor and one capacitor (1T1C) (Gong and Chung, 2016) (middle of Figure 1). The presence or absence of a charge stored in the capacitor represents bit 1 or bit 0, respectively. This structure allows DRAM to have a significantly higher bit density compared to SRAM, and thus lowers the cost per bit (Si et al., 2021).

Unlike SRAM, since the charge in the capacitors fades over time (Gong and Chung, 2016), DRAM needs to be refreshed periodically to maintain data integrity (Nair et al., 2014). Hence, it is named dynamic RAM. The periodic refresh occurs every few microseconds, depending on the manufacturing technology and bit density 23 (Nguyen et al., 2019). During the refresh, the data is read by the sense amplifier (Blalock and Jaeger, 1992) through sensing the charge in the capacitor. Next, the same data is written back, by putting the correct amount of charge in the capacitor. As the bit density and speed of DRAM increase, so do the negative impacts of periodic refresh on performance and power consumption 24 (Baek et al., 2014; Nguyen et al., 2019). In addition, read operations in DRAM are destructive: reading a memory cell destroys its content, and thus, rewriting after reading is required. It is worth mentioning that 3T1C implementation of DRAM 25 does not exhibit this issue (Jacob et al., 2007). Nevertheless, the 1T1C implementation is preferred due to its higher bit density (Yin et al., 2019).
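The interplay of leakage, destructive reads, and refresh can be sketched with a toy cell model. Everything in the sketch is an illustrative assumption: the class `ToyDramCell`, the normalized charge, the leak rate, and the sensing threshold are hypothetical and do not correspond to any real device, and a charged capacitor is taken to represent bit 1.

```python
class ToyDramCell:
    """Toy 1T1C cell: a capacitor whose charge leaks and is drained by reads."""

    def __init__(self, bit, leak_per_step=0.1, threshold=0.5):
        self.charge = 1.0 if bit else 0.0   # normalized charge (assumption)
        self.leak_per_step = leak_per_step  # hypothetical leak rate
        self.threshold = threshold          # hypothetical sensing threshold

    def leak(self, steps):
        """Let the stored charge fade for a number of time steps."""
        self.charge = max(0.0, self.charge - self.leak_per_step * steps)

    def sense(self):
        """Destructive read: decide the bit, then leave the capacitor drained."""
        bit = 1 if self.charge > self.threshold else 0
        self.charge = 0.0
        return bit

    def write(self, bit):
        """Restore the full charge level for the given bit."""
        self.charge = 1.0 if bit else 0.0

    def refresh(self):
        """Sense the cell and write the same value back."""
        self.write(self.sense())


refreshed = ToyDramCell(1)
refreshed.leak(3)
refreshed.refresh()  # restores full charge before the data fades
refreshed.leak(3)

neglected = ToyDramCell(1)
neglected.leak(6)    # no refresh: the charge drops below the threshold

print(refreshed.sense())  # 1
print(neglected.sense())  # 0
```

The same write-back step is what a normal read has to perform in this model, since `sense` alone leaves the cell empty.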

In the remainder of this part, we review how DRAM evolved through the years, graphics DRAM, High-Bandwidth Memory, and the main technological challenge DRAM faces.

Classic DRAM

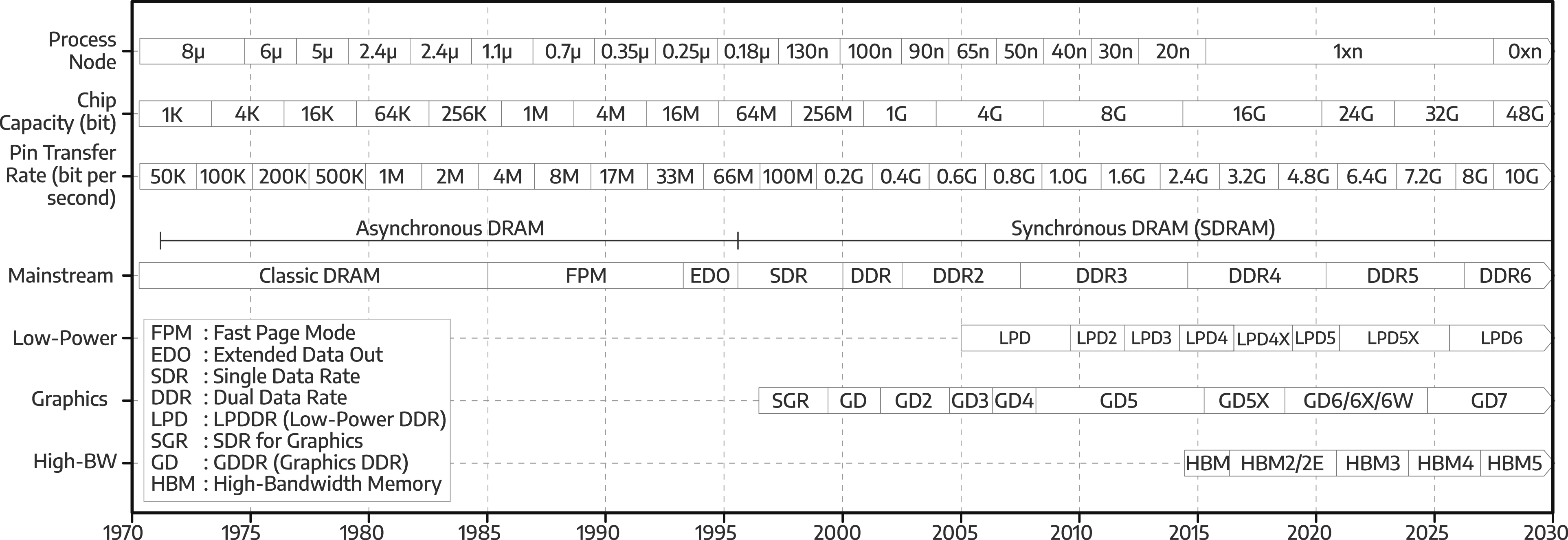

The first commercially-available DRAM chip was Intel 1103 (1970) (Dennard, 2018), with 1024 bits capacity

26

on a 10 mm2 die size (Klein, 2016). It cost a penny per bit (Santo, 1988), the same as the magnetic-core memory, which was a technology it replaced. At Intel 1103 capacity, the magnetic-core memory would have had a square-foot footprint, and a pound of weight (Lojek, 2007). DRAM’s success in replacing the magnetic-core memory fueled the development of larger and higher-speed DRAMs (Figure 2). The top half of Figure 2 shows trends related to important metrics in DRAM: (a) the process node with which the DRAM chip is manufactured; (b) the chip bit capacity; and (c) the pin transfer rate, which measures how fast the DRAM chip transfers data through each of its pins. DRAM chip capacity has increased through the years: it roughly doubled every 2.5 years due to smaller process nodes. However, its growth slowed down in the late 1990s due to the technological challenges highlighted in Main technology challenges. The data transfer rate, expressed as the pin transfer rate, has steadily increased to satisfy the need for even higher bandwidth; however, there is a trade-off between chip capacity and pin transfer rate, as discussed in Graphics DRAM. The bottom half of Figure 2 shows the progression of DRAM standards

27

in industry. Evolution of Dynamic Random Access Memory (DRAM) since the 1970s, with projections until 2030.

Fast page mode and extended data out DRAM

During the mid-1980s, the DRAM interface, which connects a microprocessor to the DRAM modules containing multiple DRAM chips, could not keep up with the demands of microprocessors (Jacob et al., 2007). Performance improvement of microprocessors (Part I Figure 5 (Hanindhito et al., 2026)) significantly outpaced that of DRAM. This led to several innovations in DRAM chip development, aimed at reducing latency, and increasing bandwidth (Jacob et al., 2007).

The Fast Page Mode (FPM) DRAM and the Extended Data Out (EDO) DRAM improved bandwidth over classic DRAM implementations. In classic DRAM, to request specific data, a row 28 in the memory array is selected (“opened”) by using the row address. The data, represented as electrical charges, are then propagated to the sense amplifiers, where they are translated to digital (binary) data. Then, through the column address, the requested data is selected and placed on the output pins. This lengthy process must be repeated, even if the next requested data is located on the same row. FPM DRAM 29 and EDO DRAM 30 made DRAM transactions more efficient. We refer readers to Jacob et al. (2007) for details. These improvements required small modifications to the structure of DRAM. Nevertheless, they improved system performance by as much as 30% (Cuppu et al., 1999a, 2001).
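The cost of repeating the row-open step can be sketched with a simple counting model. This is not the actual signaling of classic or FPM DRAM: the function `access_time` and the two cost constants are hypothetical, and the sketch captures only the idea that consecutive accesses to an already-open row can skip the row-open step.

```python
ROW_OPEN_COST = 3  # hypothetical time units to open a row and sense it
COLUMN_COST = 1    # hypothetical time units to select a column and drive the pins


def access_time(requests, keep_row_open):
    """Total cost of a sequence of (row, column) requests.

    keep_row_open=False repeats the full row-open step for every request;
    keep_row_open=True skips it when the requested row is already open.
    """
    open_row = None
    total = 0
    for row, _column in requests:
        if not keep_row_open or row != open_row:
            total += ROW_OPEN_COST
            open_row = row
        total += COLUMN_COST
    return total


# Eight requests to the same row, followed by one request to another row.
requests = [(7, column) for column in range(8)] + [(9, 0)]

print(access_time(requests, keep_row_open=False))  # 36
print(access_time(requests, keep_row_open=True))   # 15
```

With these made-up costs, the benefit depends entirely on how often consecutive requests fall on the same row, which is one more reason access patterns with spatial locality are favorable.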

Mainstream synchronous DRAM

Classic DRAM, FPM DRAM, and EDO DRAM used asynchronous interfaces between the DRAM chips and microprocessors. The asynchronous design was primarily motivated by the analog circuits (i.e., sense amplifiers) in DRAM. Since the duration of operations in DRAM varies (based on different designs, process variations, and specific manufacturer), it is measured in nanoseconds, instead of number of cycles. Therefore, at the time, it was more challenging to tie DRAM’s operations to the processor’s clock. This asynchronous interface caused control signals of the memory controller 31 , and the requested data from the DRAM chips, to arrive at DRAM pins and microprocessor pins at non-deterministic times. Accordingly, transaction events between the memory controller and DRAM chips were temporally unpredictable, making bandwidth and latency improvements challenging. Specifically, the lack of a common time reference on the interface between the microprocessor and DRAM made it difficult to maintain the correctness of transactions. The microprocessor had to wait for the previous instruction to be completed by the DRAM chips before issuing another instruction. The waiting window, typically expressed in nanoseconds, varied greatly 32 , which incurred a performance penalty and limited the achievable bandwidth and latency. Synchronous interfaces were introduced to address these challenges.

During the mid-1990s, DRAM started to use synchronous interfaces (Cosoroaba, 1995), where a clock signal is used to control the timing of the transactions (i.e., through the use of state machines), leading to predictability. Accordingly, DRAM speed was expressed through clock cycles, as opposed to nanoseconds (Cuppu et al., 1999a). In a synchronous interface, the transaction time is known due to using a common time reference 33 , allowing the microprocessor to issue another instruction before the completion of the previous instruction 34 . The microprocessor may also issue multiple instructions to different DRAM modules 35 , provided that it obeys the timing requirements of the DRAM chips (Jacob et al., 2007).

Moving from asynchronous to synchronous interfaces in the early version of synchronous DRAM (SDRAM) incurred significant implementation costs, with almost no performance gains, compared to EDO DRAM (Jacob et al., 2007). However, it provided a strong foundation for future SDRAM developments for decades to come (Table 1). We highlight the main synchronous DRAM technology developments next.

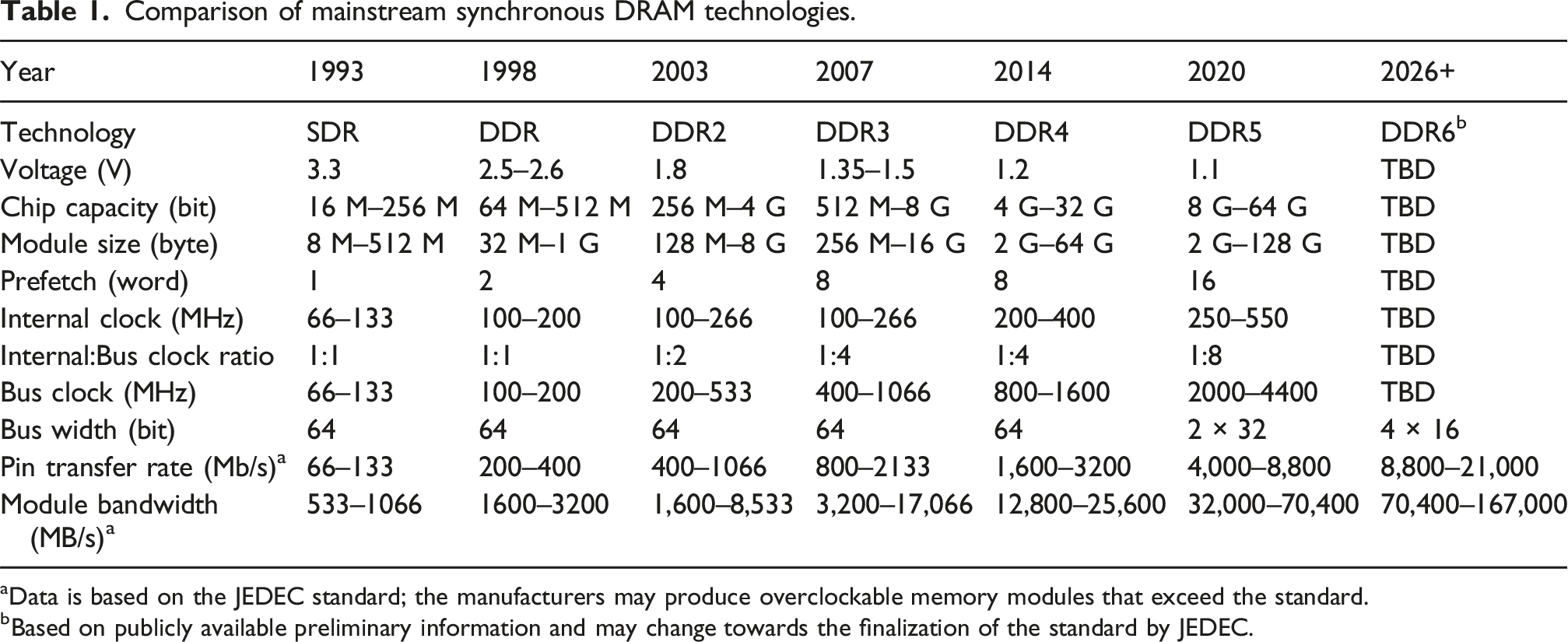

Single data rate (SDR)

Single Data Rate (SDR) SDRAM (1993) was the first generation of SDRAM, where the DRAM chips operated at a voltage of 3.3 V, and had a bus clock frequency of 66 MHz (PC66), 100 MHz (PC100), or 133 MHz (PC133) (Davis et al., 2000; Jahed, 1995). With this bus clock frequency, SDR could carry data at a rate of 66 Mb/s to 133 Mb/s for each module pin.

An SDR module, called Dual In-Line Memory Module (DIMM) (Cuppu et al., 1999b; Rixner, 2004), had 64 data lanes, which were connected to the microprocessor’s memory controller. Hence, it could send a word of 64 bits (8 bytes of data) in each clock cycle, translating to a module bandwidth of 533 MB/s 36 to 1066 MB/s.

Comparison of mainstream synchronous DRAM technologies.

(a) Data is based on the JEDEC standard; the manufacturers may produce overclockable memory modules that exceed the standard.

(b) Based on publicly available preliminary information and may change towards the finalization of the standard by JEDEC.

Double data rate (DDR)

Succeeding SDR technologies focused on improving the interface bandwidth. Instead of transferring 64 bits (8 bytes) of data at each clock cycle, Double Data Rate (DDR) SDRAM (Cosoroaba, 1997) was able to read and write two words of 64 bits (16 bytes of data) during each clock cycle (Davis et al., 2000). This was realized by using both the rising and falling of the clock edges, which effectively doubled the bandwidth.

In this generation, DRAM chips had a lower voltage of 2.5 V (Yoon et al., 1999b), allowing for reduced power consumption per bit, and higher bit capacity per chip within the same power envelope 38 , with up to 1 GB of module size.

With a prefetch 39 length of two words, a 200 MHz bus clock, and a 64-bit module interface, a DDR module can achieve 3200 MB/s 40 of memory bandwidth, which is twice that of an SDR module at the same bus clock (Yahata et al., 2000).
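The bandwidth figures quoted for SDR and DDR follow from a single product: bus clock, transfers per clock cycle, and bytes per transfer. The sketch below reproduces them under that assumption. The function name is illustrative, and the SDR module clocked at 200 MHz is hypothetical, used only for a like-for-like comparison with DDR.

```python
def peak_bandwidth_mb_s(bus_clock_mhz, bus_width_bits=64, transfers_per_clock=1):
    """Peak module bandwidth in MB/s (1 MB = 10**6 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bus_clock_mhz * transfers_per_clock * bytes_per_transfer


# PC66 SDR: nominal 66.67 MHz bus clock, one 64-bit word per cycle.
sdr_pc66 = peak_bandwidth_mb_s(200 / 3)

# Hypothetical SDR at 200 MHz versus DDR at 200 MHz (two words per cycle).
sdr_at_200 = peak_bandwidth_mb_s(200)
ddr_at_200 = peak_bandwidth_mb_s(200, transfers_per_clock=2)

print(f"PC66 SDR: {sdr_pc66:.0f} MB/s")
print(f"DDR at 200 MHz: {ddr_at_200:.0f} MB/s")
```

The same function covers wider or narrower buses by changing `bus_width_bits`.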

DDR2

Compared to DDR, DDR2 (Kyung et al., 2005) doubled the bus clock, without doubling the internal clock of the DRAM chips, which, effectively, doubled the bandwidth, but did not improve latency. To do so, DDR2 doubled the prefetch into four words (Shuang-yan et al., 2005). Since the internal clock is half the frequency of the bus clock (Prince, 2003), the latency 41 of the DDR2 module was higher than that of the DDR 42 .

The voltage was reduced to 1.8 V, with a significant increase in DRAM chip bit capacity, which was enabled by using advanced process nodes (Prince, 2003). This allowed implementation of 8 GB DIMMs, with expected module bandwidth that could reach 8533 MB/s 43 .

DDR3

To further increase bandwidth, a similar approach was followed by DDR3 (Fujisawa et al., 2007; Park et al., 2005), which doubled the prefetch width to 8 words, reduced the voltage 44 to 1.5 V, and doubled the chip bit capacity to allow 16 GB of DIMM size (Cui et al., 2008; Fujisawa et al., 2007).

DDR4

DDR4 (Koo et al., 2012; Shim et al., 2018) supports even higher DRAM chip bit capacity, at higher bus clock frequency (Lingambudi et al., 2016), while retaining the same prefetch width as DDR3 (Islam et al., 2014). Therefore, the DRAM chips need to interleave read and write from several bank groups to keep the bus busy (Islam et al., 2014; Sohn et al., 2013).

DDR5

The latest standard, DDR5 (Kim et al., 2019; Winterberg et al., 2023), doubles the prefetch width to 16 words (Kim et al., 2020) to reach higher bandwidth while maintaining internal clock frequency around 250 MHz to 550 MHz. It splits the bus into two 32-bit sub-channels to increase parallelism (Liu et al., 2023) and achieves up to 70.4 GB/s of module bandwidth.
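The interplay between prefetch width and internal clock across generations can be checked numerically. The sketch below assumes that the per-pin data rate equals the internal clock multiplied by the prefetch width, which is consistent with the figures quoted in this section but is stated here as an assumption. The function names and the 266.67 MHz internal clock used for DDR2 are illustrative.

```python
# Prefetch widths (words per internal access) as stated in the text.
PREFETCH_WORDS = {"DDR": 2, "DDR2": 4, "DDR3": 8, "DDR4": 8, "DDR5": 16}


def pin_data_rate_mt_s(generation, internal_clock_mhz):
    """Per-pin transfer rate in MT/s, assuming rate = internal clock x prefetch."""
    return internal_clock_mhz * PREFETCH_WORDS[generation]


def module_bandwidth_mb_s(generation, internal_clock_mhz, bus_width_bits=64):
    """Peak module bandwidth in MB/s for a module with the given bus width."""
    return pin_data_rate_mt_s(generation, internal_clock_mhz) * bus_width_bits / 8


ddr_peak = module_bandwidth_mb_s("DDR", 200)        # internal clock equals bus clock
ddr2_peak = module_bandwidth_mb_s("DDR2", 800 / 3)  # assumed 266.67 MHz internal clock
ddr5_low_rate = pin_data_rate_mt_s("DDR5", 250)
ddr5_high_rate = pin_data_rate_mt_s("DDR5", 550)
ddr5_peak = module_bandwidth_mb_s("DDR5", 550)      # two 32-bit sub-channels, 64 bits total

print(f"DDR5 pin rate: {ddr5_low_rate:.0f} to {ddr5_high_rate:.0f} MT/s")
print(f"DDR5 peak: {ddr5_peak / 1000:.1f} GB/s")
```

Under this model, each generation raises bandwidth mainly by fetching more words per internal access, while the DRAM core itself runs at a few hundred MHz throughout.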

DDR6

DDR6, the successor to DDR5, is slated to launch between 2026 and 2027, or possibly later. It doubles the number of sub-channels to four 16-bit sub-channels, and is expected to double the bandwidth provided by DDR5 at a lower voltage. Although it was rumored to use PAM-4 modulation, JEDEC will most likely keep using NRZ due to the complexity of PAM-4 (Part I: Signal coding and modulation (Hanindhito et al., 2026)). In addition, a new module form factor called Compression Attached Memory Module (CAMM) will be introduced to replace the aging DIMM, addressing the signal integrity challenges that come with the anticipated increase in the bus clock. JEDEC standardized CAMM for DDR5 in 2023, but adoption has been limited.

Mobile and low power DRAM

Mobile devices use the low-power version of DRAM chips, referred to as Low-Power DDR-SDRAM (LPDDR). The LPDDR chip is usually placed very close to the processor (Hollis et al., 2019), either by soldering the chip closer to the microprocessor, or by putting the LPDDR chip on top of the microprocessor package (i.e., package-on-package (Hsieh, 2016; Lin et al., 2014)). The connection between LPDDR and the processor has a small bus width 45 . This integration technique, and the smaller bus width, reduces the wire resistance, which reduces power consumption.

To further reduce power consumption, LPDDR operates on a lower voltage (Hajkazemi et al., 2015), compared to the standard DRAM: 1.8 V on LPDDR, 1.2 V on LPDDR2 (2009) and LPDDR3 (2012), 1.1 V on LPDDR4 (2014), and 0.6 V on LPDDR5 (2019) and LPDDR5X (2021). Moreover, the periodic refresh operations are optimized by applying multiple techniques that control power consumption aggressively (Baek et al., 2014; Hemani and Klapproth, 2006). LPDDR’s lower power consumption is attractive to data centers and high-performance computing clusters. For instance, LPDDR5X is used for the Grace CPU memory in NVIDIA Grace-Hopper (GH200) and Grace-Blackwell (GB200) (Tirumala and Wong, 2024) CPU-GPU heterogeneous platforms.

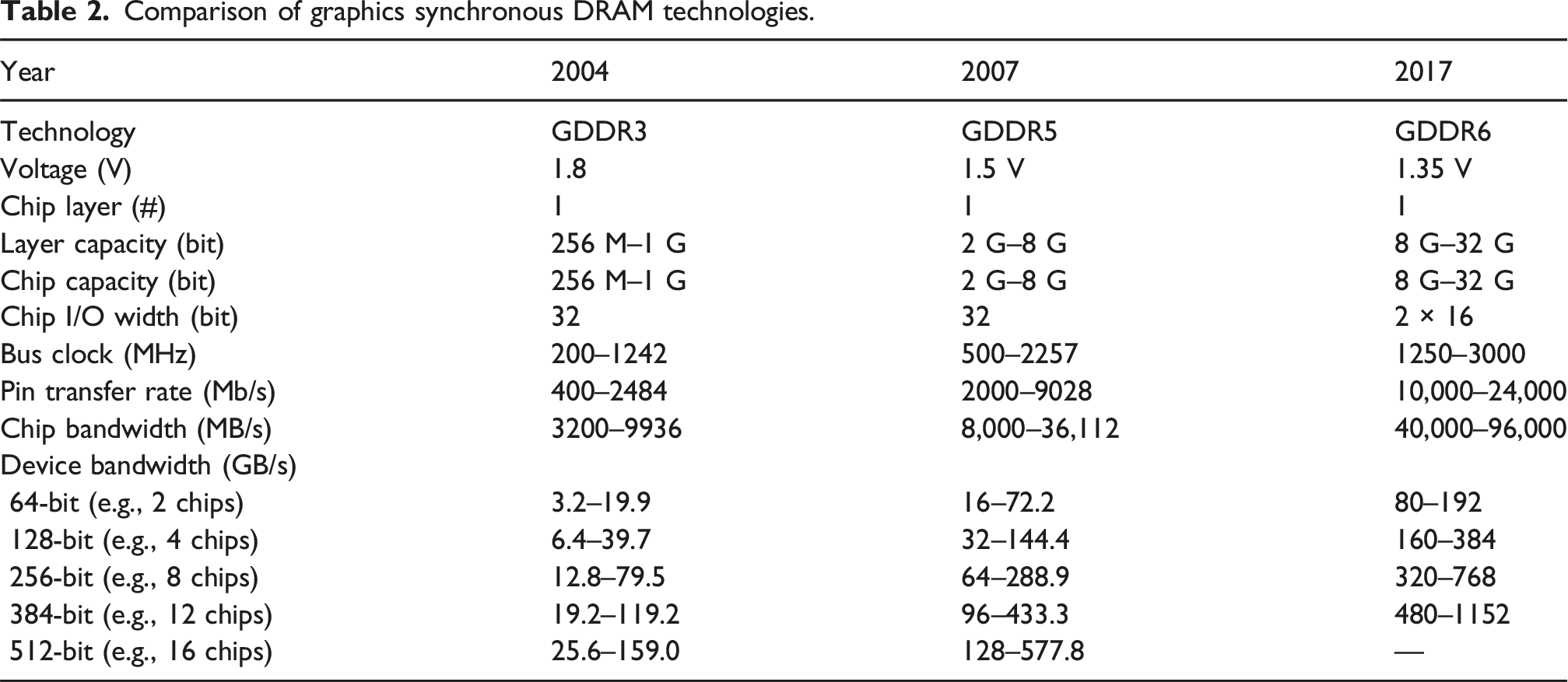

Graphics DRAM

GPUs that rely on high-bandwidth memory often need different approaches for designing the DRAM chips, along with tighter integration of memory with the device.

Higher bandwidth for memory can be achieved through: (a) constructing a wider memory bus (Kim et al., 2014; Li et al., 2018); (b) increasing the bus clock frequency (Cho et al., 2012); (c) running DRAM chips at a higher internal clock frequency (Woo, 2010); and (d) utilizing denser signal modulation (Part I: Signal coding and modulation (Hanindhito et al., 2026); Horowitz et al., 1998; Wang and Buckwalter, 2011) between the microprocessor and DRAM chips. In what follows, we review how the memory bandwidth of GPUs has increased over the years through the evolution of various technologies.

Evolution of graphics memory technology

Earlier DRAM for GPUs was constructed by using video RAM (VRAM) to satisfy the bandwidth requirements at the time (Prince, 1999). VRAM, an ancestor of SDRAM, comprised multi-ported 46 asynchronous DRAM and serial access memory (SAM). This allowed VRAM to operate as an asynchronous DRAM on one port, while having a synchronous serial memory interface on another port.

In 1997, GPUs started to use Synchronous Graphics Random Access Memory (SGRAM), which was derived from the Synchronous DRAM (SDRAM), eliminating the need for more expensive, multi-port asynchronous DRAM (Prince, 1999).

The successor of SGRAM is Graphics Double Data Rate SDRAM (GDDR-SDRAM, which was initially known as DDR-SGRAM (Foss, 1997; Prince, 1999)). It was based on the Double Data Rate SDRAM (DDR-SDRAM) (Cosoroaba, 1997). The GDDR-SDRAM chip is specifically designed to run at higher internal clock frequencies, compared to mainstream DRAM. To do so, it sacrifices bit capacity per chip 47 (Dunning et al., 2009), by adding more periphery components, which facilitate faster memory transactions. The increase in internal clock frequency of GDDR chips requires more cooling, as they dissipate more heat compared to mainstream DRAM chips.

Comparison of graphics synchronous DRAM technologies.

GDDR6X (2020) (Hollis et al., 2022) can 50 provide triple the bandwidth of GDDR5 51 at the same bus width, by using a higher bus clock frequency and denser signal modulation 52 . With their higher internal clock frequency, denser signal modulation, and higher bus clock frequency, GDDR6X chips run at junction temperatures 53 exceeding 100°C. The high temperature is concerning to some users, since it may impact the longevity of their products 54 . Unlike GDDR6X, next-generation GDDR7 uses PAM-3 instead of PAM-4 modulation to reduce costs, complexity, and power while delivering a meaningful increase in pin data rate over GDDR6. GDDR7 debuted in early 2025 with the launch of consumer-class NVIDIA Blackwell GPUs.

Integration of memory with GPUs

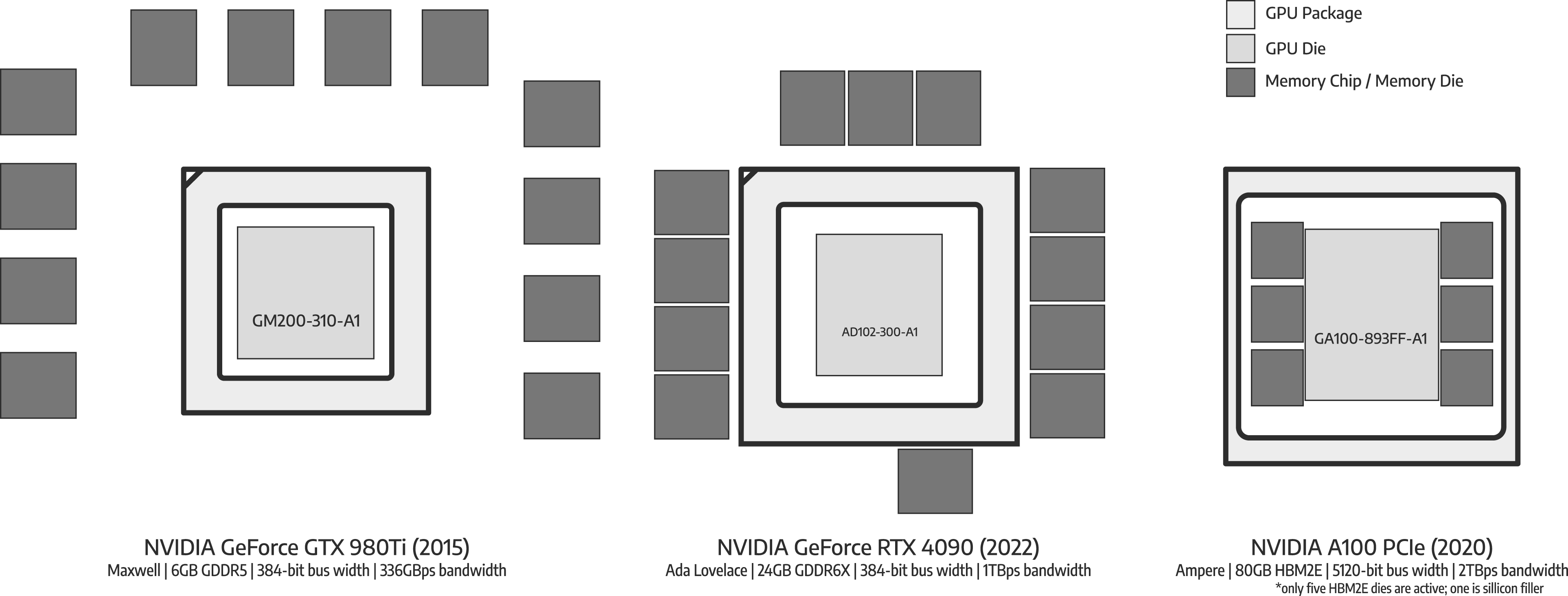

GDDR chips are closely integrated with GPU (Kim et al., 2016; Li et al., 2018) (Figure 3). This allows GDDR chips to run at higher bus clock frequencies, and use denser signal modulation. However, parasitic capacitance from the printed circuit board (PCB) materials 55 , and interference between adjacent wires 56 , become more severe at higher frequencies and longer distances (Part I: Wiring, connectivity, and signal integrity (Hanindhito et al., 2026)). Therefore, to achieve higher bus clock frequencies, and to use denser signal modulation, the distance between the GPU chip and GDDR chips must be minimized. Typical placement of a GPU with a 384-bit memory bus, when connected to GDDR5 chips, is shown on the left-side of Figure 3, whereas the typical placement of a GPU with 384-bit memory bus, when connected to GDDR6X chips is shown in the middle of Figure 3.

Evolution of DRAM interface on GPU, where providing higher bandwidth is the main design objective. NVIDIA GeForce GTX 980Ti has 338 GB/s memory bandwidth, implemented by using 12 chips of GDDR5 memory, each having a 32-bit bus width, resulting in a total of 384-bit bus width. The newer NVIDIA GeForce RTX 4090 uses 12 chips of GDDR6X memory with the same total bus width of 384-bit. With a significantly faster bandwidth of 1 TB/s coming from its higher effective transfer rate, the memory chips must be placed as close as possible to the GPU package to maintain signal integrity. Finally, High-Bandwidth Memory (HBM) provides a wider memory bus of 1024-bit per chip (i.e., HBM stack) to achieve significantly higher memory bandwidth. Implementing this wide memory bus on printed circuit board (PCB) is challenging. Therefore, HBM die is usually placed on the same package that the GPU resides on through using a silicon interposer (e.g., in NVIDIA A100 GPU), as explained in Part I: Advanced packaging technologies (Hanindhito et al., 2026).

Increasing the memory bandwidth can also be realized by widening the bus (Mahapatra and Venkatrao, 1999), through adding more GDDR chips, and more memory channels. For instance, a low-end GPU can have a 64-bit bus-width, consisting of two GDDR chips, whereas a high-end GPU can have a 512-bit bus, consisting of as many as 16 GDDR chips.
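The relation between chip count, bus width, and bandwidth can be sketched in a few lines. The 32-bit interface per GDDR chip comes from the text. The per-pin rate of 21 Gb/s is an assumption, chosen because it lands close to the roughly 1 TB/s quoted for a 384-bit GDDR6X configuration. The function names are illustrative.

```python
def bus_width_bits(num_chips, bits_per_chip=32):
    """Total memory bus width when each GDDR chip contributes bits_per_chip lanes."""
    return num_chips * bits_per_chip


def bandwidth_gb_s(width_bits, pin_rate_gbit_s):
    """Peak bandwidth in GB/s for a bus of width_bits at a given per-pin rate."""
    return width_bits * pin_rate_gbit_s / 8


low_end = bus_width_bits(2)       # two chips
high_end = bus_width_bits(16)     # sixteen chips
twelve_chip = bus_width_bits(12)  # twelve chips

# Assumed per-pin rate of 21 Gb/s (not taken from the text).
gddr6x_bw = bandwidth_gb_s(twelve_chip, 21)

print(low_end, high_end, twelve_chip)
print(f"{gddr6x_bw:.0f} GB/s")
```

The two levers are independent: adding chips widens the bus, while faster signaling raises the per-pin rate, and the product of the two sets the peak bandwidth.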

Manufacturing a wider bus is challenging, since it requires routing more signal paths on a PCB (Na et al., 2017; Nitin et al., 2018). Each bit line on the memory bus becomes a single wire on the PCB. Each wire carries a high-frequency signal, and thus, can interfere with neighboring wires, which then affects data integrity (Part I: Wiring, connectivity, and signal integrity (Hanindhito et al., 2026)). Therefore, to realize a wider memory bus, new technologies that overcome the physical limitation of PCB are needed.

High-bandwidth memory (HBM) and variants

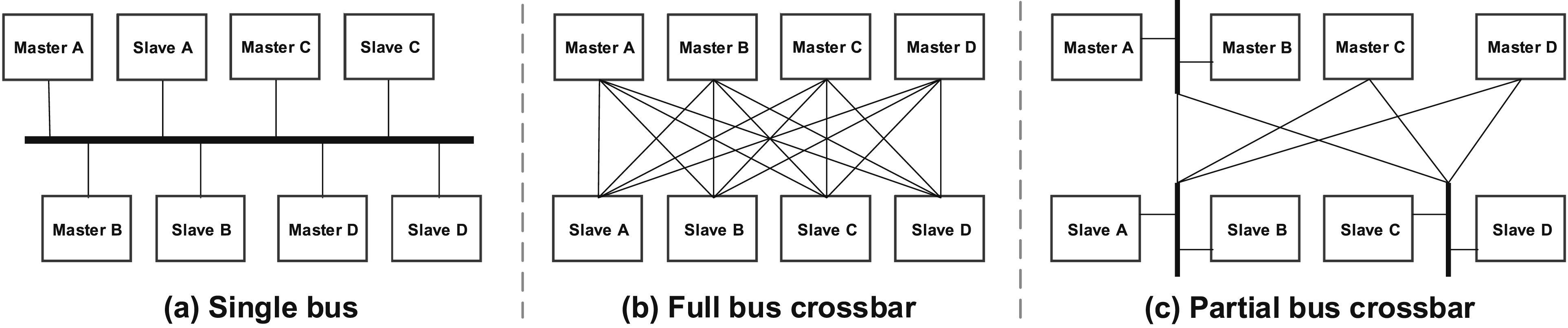

Advances in packaging technologies (Part I Figure 6 (Hanindhito et al., 2026)) allow DRAM dies to be stacked on top of each other, and then be placed on the same package that a GPU resides (the right-side of Figure 3) (Loh et al., 2015). Instead of using wires that are implemented on PCB to connect a GPU to the stacked DRAM chips, a silicon interposer (Part I: Advanced packaging technologies (Hanindhito et al., 2026)) is used (Cho et al., 2015; Lee et al., 2015b). Each stack of the DRAM chips can have a bus width as wide as 1024 bits (Kim, 2015; Martwick and Drew, 2015), which is 32 times wider than the bus width of GDDR chips. This makes implementation of a wider memory bus at lower interconnect power dissipation possible (Zhao et al., 2017).

Examples of on-chip bus topologies (Pasricha and Dutt, 2010): (a) The single bus is the simplest and cheapest on-chip bus topology. All masters share the bus as the communication channel for all transactions with the system’s slaves and only one master can have access at a time. The performance of the single bus does not scale with the number of components, due to increased traffic and congestion, where arbitration can lead to the starvation of some masters. (b) In the full bus crossbar, a dedicated bus is used for each possible master-slave connection. This corresponds to the highest possible system performance, as it maximizes the theoretical on-chip communication bandwidth. Arbitration logic is now required for each slave, rather than for each bus. However, it becomes prohibitively expensive as the number of system components increases, adversely affecting area, cost, power consumption and routing complexity. (c) The partial bus crossbar is a mixture of the single bus and full bus crossbar. Essentially, it trades off the performance of the full bus crossbar for a reduction in area, cost and power consumption.

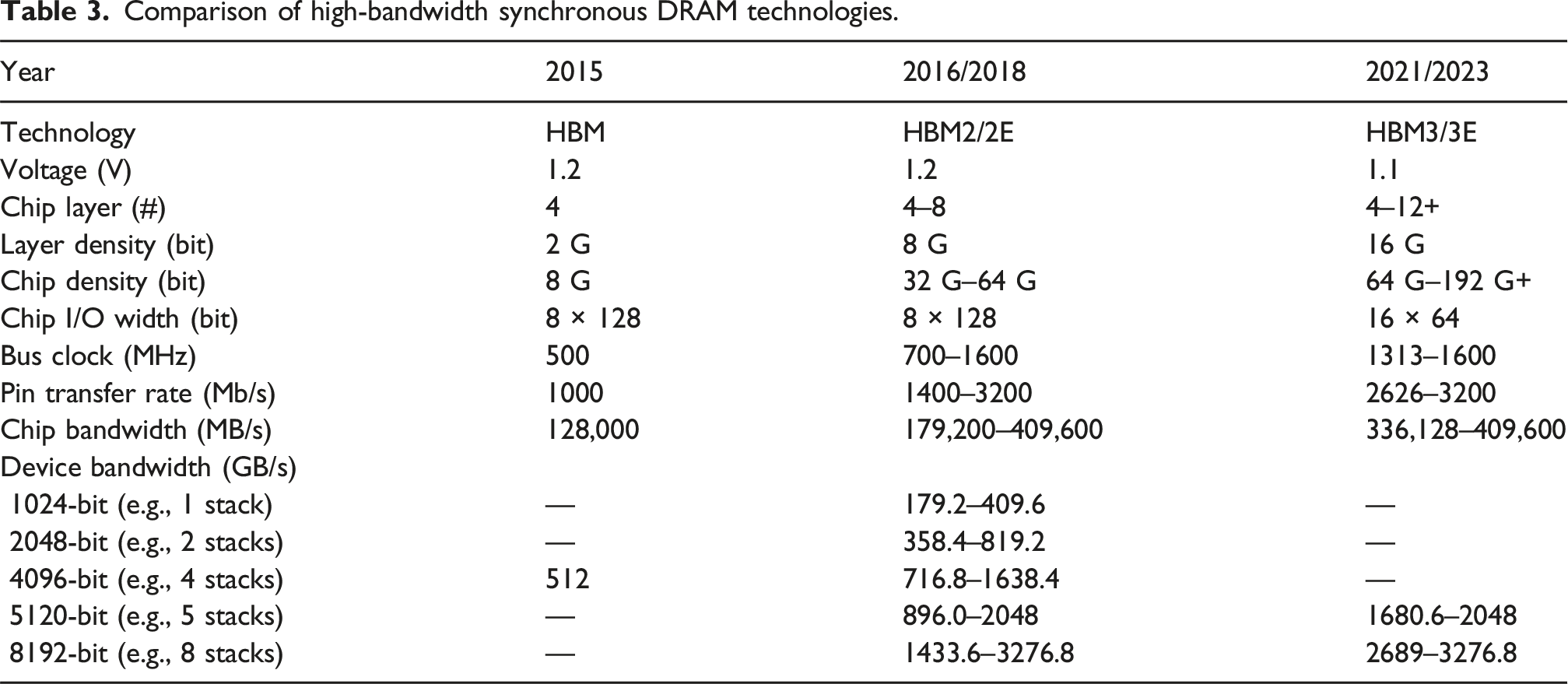

Comparison of high-bandwidth synchronous DRAM technologies.

The first-generation 57 HBM (2015) can stack up to 4 DRAM dies, enabling 8 Gb chip capacity (Lee et al., 2015a; Macri, 2015). HBM2 (2016) (Cho et al., 2018) and HBM2E (2018) (Chun et al., 2021; Lee et al., 2020b) increased the number of DRAM dies to 8, realizing 64 Gb of chip capacity, while maintaining the same 1024-bit bus interface. HBM3 (2021) (Park et al., 2023; Ryu et al., 2023) increased the number of stacked DRAM dies to 12, with the possibility of having 16 stacked DRAM dies in the future, pushing the chip bit capacity beyond 192 Gb. Its successor, HBM3E, was launched in 2024.
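The stack capacities quoted above are the product of the number of stacked dies and the density of each die. In the sketch below, the per-die densities (2 Gb, 8 Gb, and 16 Gb) are assumptions inferred by dividing the quoted stack capacity by the die count. They are not stated in the text, and the function names are illustrative.

```python
def stack_capacity_gbit(num_dies, die_density_gbit):
    """Capacity of one HBM stack in gigabits."""
    return num_dies * die_density_gbit


def gbit_to_gbyte(gbit):
    """Convert gigabits to gigabytes."""
    return gbit / 8


# (dies per stack, assumed die density in Gb)
hbm1 = stack_capacity_gbit(4, 2)
hbm2 = stack_capacity_gbit(8, 8)
hbm3_12_high = stack_capacity_gbit(12, 16)
hbm3_16_high = stack_capacity_gbit(16, 16)

for name, gbit in [("HBM", hbm1), ("HBM2/HBM2E", hbm2),
                   ("HBM3, 12 dies", hbm3_12_high), ("HBM3, 16 dies", hbm3_16_high)]:
    print(f"{name}: {gbit} Gb = {gbit_to_gbyte(gbit):.0f} GB per stack")
```

Capacity therefore grows along two axes, taller stacks and denser dies, and the first of these is what creates the thermal and through-silicon via challenges.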

Stacking more DRAM dies into the HBM increases the chip memory capacity. However, the parasitic capacitance of the through-silicon vias (TSVs), which connect the layers of the stack, becomes more problematic as the stack size grows (Farmahini-Farahani et al., 2018; Kim et al., 2021e). Moreover, stacking more DRAM dies on the chip makes thermal dissipation of HBM chips more challenging, as the surface area of the chips remains the same (Kim et al., 2023; Lee et al., 2023b). Therefore, the next generation of HBM, HBM4 (2025+), is expected to have a vapor-chamber cooling system, which is a cooling technology that is often used for chips that need high thermal dissipation. Moreover, HBM5 (2027+) is expected to have a micro-channel cooling system.

Despite the above challenges, HBM has been successful in fulfilling the demand for memory bandwidth of high-end GPUs. However, high manufacturing costs and low production capacity (Jun et al., 2017; Abdennadher et al., 2018) limit the adoption of HBM to data center class products, leaving the consumer market with GDDR.

In addition to GPUs, HBM has been used in many other devices that need high-bandwidth memory. For instance, Intel Knights Landing, a manycore architecture (Part I: Many-core processors (Hanindhito et al., 2026)), features 16 GB Multi-Channel DRAM 58 (MCDRAM) (Pohl and Sattler, 2018; Sodani, 2015), which was derived from HMC (Jeddeloh and Keeth, 2012). In addition, Intel Xeon Max 59 features (on-package) 64 GB HBM2E (Biswas, 2021), providing a considerable amount of memory bandwidth. This can especially benefit applications that fit into the HBM. In both cases, conventional (off-package) DDR SDRAM (DDR4 for Knights Landing, DDR5 for Xeon Max) is still provided to make up for the limited capacity of the on-package memory, which can be configured in multiple ways. HBM is also used in FPGAs (Holzinger et al., 2021; Shi et al., 2022), CGRAs (Kim et al., 2017), and ASICs (Jouppi et al., 2020, 2021) (Part I: Specialized and custom hardware (Hanindhito et al., 2026)).

Main technology challenges

As DRAM moves to more advanced process nodes, the chip bit density increases. This makes larger-capacity memory modules possible, and drives down cost-per-bit. However, DRAM is facing difficulties in moving to more advanced process nodes: it has stagnated in the 1x nm process node 60 (Kang et al., 2014; Mellor, 2020; Shiratake, 2020) for almost a decade, and is expected to remain in this range for the foreseeable future (Chen et al., 2023b).

The structure of DRAM is the main cause of this difficulty: DRAM uses a capacitor to store a charge for representing bit 0 and bit 1. While more advanced process nodes have enabled transistor shrinking, capacitor shrinking remains challenging. As a capacitor becomes smaller, the electrical charge it can hold is also reduced (Chen et al., 2023b). Eventually, it becomes difficult for the sense amplifier to detect the charge (Shiratake, 2020). Additionally, due to the smaller charges that capacitors can hold, they need to be refreshed more frequently, reducing DRAM’s performance (Khan et al., 2014; Liu et al., 2013). Moreover, as the bit density of DRAM becomes larger, the smaller charge has to travel through longer wires in DRAM chips, making it difficult to maintain data integrity (Part I: Wiring, connectivity, and signal integrity (Hanindhito et al., 2026)).

Due to these challenges, development of next-generation DRAM technologies is costly. Indeed, there are only three major players 61 in the DRAM industry, due to its reliance on large research and development budgets.

Near-memory processing (NMP) and processing-in-memory (PIM)

Centralized memory and compute units are separated from each other in the von Neumann architecture, which constitutes the vast majority of modern computing systems. The interface between the compute unit and off-chip memory has limited bandwidth, and often becomes the bottleneck in overall system performance. In addition, excessive data-movement consumes a lot of energy 62 . Near-memory processing (NMP) and Processing-in-memory (PIM) attempt to address the von Neumann bottleneck and reduce energy consumption by bringing the compute units closer to where data is stored (Khoram et al., 2017; Mutlu et al., 2019). With their promising performance and reduced energy consumption, NMP and PIM are attractive for data-intensive workloads. Nevertheless, these technologies are still in their infancy. A better software stack 63 is also critical for the adoption of these technologies by the end users (Ghose et al., 2019).

Near-memory processing (NMP)

The NMP brings the compute units near the memory arrays. Accordingly, the compute units and memory arrays can be integrated at chip or package levels. The capability of the compute units depends on their size. Usually, when the compute unit is placed on the same die where the memory arrays are implemented, there is competition for space, which typically results in simpler compute units. Next, we highlight a few examples that use NMP.

Cerebras and SambaNova

Cerebras’ Wafer Scale Engine and SambaNova’s Reconfigurable DataFlow Unit (Part I: Specialized and custom hardware (Hanindhito et al., 2026)) distribute arrays of on-chip memory units (SRAM) and arrays of compute units throughout the chip. This design enables interaction of memory and compute units with very high bandwidth.

UPMem

UPMem (Devaux, 2019) develops NMP through integrating compute units within the same die that a DRAM memory array resides on, referred to as DRAM processing units (DPUs 64 ). Since both the compute and memory units are implemented on the same die, the compute logic has to be manufactured by using the same process node technology that is used for DRAM cells. This leads to a suboptimal design, since the logic circuits could have been implemented with a smaller process node technology otherwise. Nevertheless, substantial performance improvements and energy savings are reported due to parallel operation of thousands of DPUs 65 , as well as the availability of very high bandwidth between the memory and compute units. Furthermore, UPMem DPU modules are drop-in replacements for standard memory modules 66 , allowing seamless transition from an existing memory technology to an NMP technology.

Samsung

Samsung released its version of NMP by integrating Programmable Computing Units (PCUs) into its High-Bandwidth Memory (HBM-PIM) and LPDDR5 (LPDDR5-PIM) (Kim et al., 2021b; 2022a). Although these products use PIM in their names, the underlying technology is still NMP. In addition, Samsung released the Acceleration DIMM (AxDIMM), which integrates reconfigurable logic into standard DDR4 memory modules (Ke et al., 2022).

Processing-in-memory (PIM)

Unlike NMP that relies on separate compute units placed near the memory arrays, PIM performs the computations directly in the memory arrays. The approach varies based on the utilized memory technology. However, it usually requires minimal changes to the memory array structures, and relies on altering the memory commands issued by the memory controller to perform the computations. PIM has been implemented in different memory technologies: SRAM (Fujiki et al., 2021c), DRAM (Fujiki et al., 2021a), and Non-volatile memory 67 (Fujiki et al., 2021b).

Based on its operation, PIM can be divided into two categories: analog (Feinberg et al., 2018) and digital (Imani et al., 2019a). Analog PIM leverages inherent electrical properties of the memory arrays to perform computations according to Kirchhoff’s laws 68 (Zhang et al., 2020b). Analog circuits are more sensitive to noise, manufacturing variations, temperature changes, and voltage fluctuations. Moreover, inside SRAM-based and NVM-based PIM, the analog-to-digital and digital-to-analog converter blocks consume the majority of area and power of the memory chip (Talati et al., 2016). Digital PIM attempts to address this issue by performing digital logic 69 operations on the memory arrays. By composing logical operations, more complex arithmetic operations can be performed. However, arithmetic operations built this way, such as addition and multiplication, have significantly longer latency, since the operands are processed bit-by-bit. Although individual arithmetic operations have longer latency, significant performance improvements result from the massively parallel operations that memory arrays of digital PIM can perform.
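The trade-off between bit-by-bit latency and massive parallelism in digital PIM can be mimicked in software. The sketch below is not a model of any specific PIM device. It assumes a hypothetical layout in which each memory row (a "bit-plane") stores one bit position of many operands, and it uses Python integers as stand-ins for rows, so that a single bitwise operation acts on all lanes at once. All function names are illustrative.

```python
def to_bit_planes(values, width):
    """Plane k holds bit k of every value; lane i is bit i of the plane integer."""
    planes = []
    for k in range(width):
        plane = 0
        for lane, value in enumerate(values):
            plane |= ((value >> k) & 1) << lane
        planes.append(plane)
    return planes


def from_bit_planes(planes, num_lanes):
    """Reassemble one integer per lane from the bit-planes."""
    return [
        sum(((plane >> lane) & 1) << k for k, plane in enumerate(planes))
        for lane in range(num_lanes)
    ]


def bit_serial_add(a_planes, b_planes):
    """Ripple-carry addition using only AND, OR, and XOR on whole planes."""
    carry = 0
    result = []
    for a, b in zip(a_planes, b_planes):  # one step per bit position
        result.append(a ^ b ^ carry)
        carry = (a & b) | (carry & (a ^ b))
    result.append(carry)  # final carry-out plane
    return result


a = [3, 5, 250, 0]
b = [1, 10, 7, 255]
sum_planes = bit_serial_add(to_bit_planes(a, 8), to_bit_planes(b, 8))
sums = from_bit_planes(sum_planes, len(a))
print(sums)
```

The loop in `bit_serial_add` runs once per bit position regardless of how many lanes are packed into each plane, which mirrors the point above: each addition is slow, but many additions proceed together.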

Examples of PIM implemented in DRAM include Compute DRAM (Gao et al., 2019) and Ambit (Seshadri et al., 2017), whereas Neural Cache (Eckert et al., 2018) is an SRAM PIM. Non-volatile memory technologies investigated for PIM include phase-change memory (Hoffer et al., 2022), resistive RAM (Hanindhito et al., 2021; Imani et al., 2019b), spintronic RAM (Chowdhury et al., 2018), and NAND Flash (Gao et al., 2021).

Non-volatile memory (NVM)

Non-volatile memory technologies enable permanent storage of data in the absence of power. Flash-based NVM is becoming more popular, not only for storing cold data, but also for storing hot data due to its higher density compared to DRAM, especially for HPC and ML applications that deal with big data. Emerging NVM technologies, such as phase-change NVM, are actively being investigated as an alternative to Flash-based NVM, which has been approaching its scaling limit.

Flash-based memory

Flash memory is an early example of NVM, and was invented in 1984 (Masuoka et al., 1984). It can be constructed by using NAND 70 Flash or NOR 71 Flash. NOR Flash has a higher random access speed compared to NAND Flash (Wong, 2010), along with a higher cost-per-bit. A NOR Flash cell 72 is 2.5× larger than a corresponding NAND Flash cell (Van Houdt, 2006). Due to its high bit density, and low cost-per-bit, NAND flash is generally preferred for manufacturing flash-based storage 73 (Lu, 2012).

We review key characteristics and technology trends in NAND Flash, and highlight how they are being used close to microprocessors to augment DRAM-provided memory capacity.

Flash memory operations

NAND flash (right of Figure 1) uses two transistors to control read and write to the cells, along with several floating-gate transistors for storing the data. Data is stored by maintaining an electrical charge in the floating gate, which is surrounded by oxide layers as insulators 74 (Friederich, 2010). Unlike the capacitor inside DRAM, the floating gate transistor can hold its charge for years, without the need for periodic refresh.

Each write operation to the cell slightly damages the oxide layers due to the high voltage needed for either pushing an electron into the floating gate, or removing an electron from the floating gate (Zambelli et al., 2010). After a sufficient number of write cycles, the oxide layers are damaged to the point where the floating gate cannot hold the charges, making the cell unusable. Therefore, unlike a DRAM cell, which has virtually no degradation (Itoh, 2011), NAND Flash 75 has a limited number of write cycles. Total bytes written (TBW), and drive writes per day (DWPD), are used as metrics to represent endurance of flash memory products (Li et al., 2019b; Woo et al., 2020). To improve endurance, manufacturers usually provide more cells than the stipulated capacity, and use them for over-provisioning to substitute failing cells with spares (Li, 2020). The flash memory controller is responsible for performing wear-leveling (Dharamjeet et al., 2022; Liao et al., 2015), by managing the use of the cells in such a way that all of them degrade at the same rate.
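The idea behind wear-leveling can be sketched as a policy that always directs the next write to the least-worn block. This is a deliberately simplified illustration: real flash controllers also handle address remapping, garbage collection, and static data, none of which is modeled here. The class and method names are hypothetical.

```python
import heapq


class WearLeveler:
    """Toy wear-leveler: each write goes to the block with the fewest erases."""

    def __init__(self, num_blocks):
        # Heap of (erase_count, block_id); the least-worn block is at the top.
        self._heap = [(0, block) for block in range(num_blocks)]
        heapq.heapify(self._heap)

    def write(self):
        """Pick the least-worn block, record one more erase cycle, return its id."""
        count, block = heapq.heappop(self._heap)
        heapq.heappush(self._heap, (count + 1, block))
        return block

    def erase_counts(self):
        """Return a mapping from block id to its erase count."""
        return {block: count for count, block in self._heap}


leveler = WearLeveler(num_blocks=4)
written = [leveler.write() for _ in range(10)]
counts = leveler.erase_counts()
print(written)
print(counts)
```

With this policy, the erase counts of any two blocks never differ by more than one, so no block reaches its write-cycle limit long before the others.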

Miniaturization challenges

Increasing the bit density of flash memory can be achieved through transistor miniaturization. However, shrinking the size of the transistors reduces their endurance, since the oxide layers become thinner, making them more susceptible to damage (Koh, 2009). The use of floating gate transistors limits 76 future scaling of flash memory, and thus, a new cell structure, referred to as charge trap, is being used (Lu, 2012). Instead of using semiconductors as storage elements 77 , the charge trap uses dielectric layers 78 to hold the charge (Grossi, 2010). Compared to the floating gate, charge trap has lower reliability. However, due to advances in processing materials, charge trap is now preferred, especially for 3D NAND (Advani, 2016).

Multi-level cell

Bit density can also be increased by employing multi-level voltage in a cell, which can then represent more than one bit (Micheloni and Crippa, 2010). In a single-level cell (SLC) (Kouchi et al., 2020, 2021), there are only two levels of voltage, which represent bit 0 and bit 1. In a multi-level cell (MLC) (Lee et al., 2016b; Micheloni et al., 2006), there are four levels of voltage, which represent two bits, with values 00, 01, 10, and 11 (Crippa and Micheloni, 2010). Triple-level cell (TLC) (Higuchi et al., 2021; Siau et al., 2019), and quad-level cell (QLC) (Kalavade, 2020; Shibata et al., 2019) NAND flash, which can store three and four bits per cell, respectively, have found their way to the consumer market, where the cost per bit is important. Penta-level cell (PLC) (Ishimaru, 2019), and hexa-level cell (HLC) (Aiba et al., 2021) NAND flash are currently being developed to store five bits and six bits in a single cell, respectively, further increasing the bit density of flash memory.
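The number of voltage levels a cell must distinguish doubles with every additional bit it stores. A minimal sketch of this relation follows. The bits-per-cell values come from the text, while the function names are illustrative.

```python
# Bits stored per cell for each cell type named in the text.
BITS_PER_CELL = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4, "PLC": 5, "HLC": 6}


def voltage_levels(bits_per_cell):
    """Number of distinct voltage levels needed to encode bits_per_cell bits."""
    return 2 ** bits_per_cell


def cell_states(bits_per_cell):
    """All bit patterns one cell can represent, as zero-padded binary strings."""
    return [format(i, f"0{bits_per_cell}b") for i in range(voltage_levels(bits_per_cell))]


levels = {name: voltage_levels(bits) for name, bits in BITS_PER_CELL.items()}
mlc_states = cell_states(BITS_PER_CELL["MLC"])

print(levels)
print(mlc_states)
```

Because the available voltage range is fixed, more levels means narrower margins between adjacent levels, which is why cells that store more bits tend to be slower and less reliable.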

Having more bits in a cell comes with two major challenges: (a) slower read and write performance, due to more complicated read and write mechanisms 79 ; and (b) lower reliability and endurance, due to higher susceptibility to errors (Jaffer et al., 2022). The former can be improved by using DRAM or SLC flash as cache, in front of the main multi-level flash cells (Alsalibi et al., 2018; Matsui et al., 2017), whereas the latter issue can be improved by using more advanced error correction mechanisms (Nicolas Bailon et al., 2022). In addition, high-order multi-level cells, such as PLC and HLC, are especially useful 80 in Write-Once-Read-Many (WORM) applications, such as archival storage.

3D structure

A 3D structure 81 (Parat and Goda, 2018) for flash memory also enables higher capacity. This was first commercialized by Samsung: 3D V-NAND (2013) had 24 layers of stacked charge trap cells (Elliott and Jung, 2013). Due to increased demand for high-capacity flash storage, the number of layers has increased significantly, to more than 200 layers at the end of 2022. In 2021, Samsung predicted that 3D NAND flash would reach 1000 layers by 2030, answering the call for higher capacity flash storage (Kim, 2021; Nitayama and Aochi, 2011).

Between DRAM and NAND

Leaving endurance issues aside, the high density and low cost-per-bit of flash memory make it attractive for storing hot data, and thus, for complementing DRAM. We highlight some examples.

3D XPoint (Wu et al., 2016) is a non-volatile memory technology, developed by Intel and Micron, which aims to be a bridge between DRAM and NAND Flash (Bourzac, 2017; Yang et al., 2020). Its access latency and cost-per-bit are between those of DRAM and NAND flash, whereas its endurance is higher than that of NAND Flash. 3D XPoint is used in Intel Optane DC Persistent Memory (DCPMM), and Intel Optane DC Solid State Drives (DCSSD).

DCPMM is used to provide additional capacity to DRAM, by using NVDIMM 82 ; it can co-exist on the same DIMM slot, as a standard DDR4 DIMM 83 (Chen et al., 2016; Lee et al., 2020a), providing up to 4.5 TB of memory per socket on Intel Xeon Cascade Lake and Intel Xeon Cooper Lake CPUs, and up to 6 TB of memory per socket on Intel Xeon Ice Lake CPUs 84 . These values are enormous, and this memory capacity could not have been achieved otherwise, even by using all DRAM DIMMs supported by a CPU 85 .

DCSSD is used to improve the performance of NAND flash, especially those types that use multi-level cells (Zhang et al., 2018).

Emerging memory technologies

We highlight several emerging memory technologies next.

Phase-change memory (PCM) uses materials that have two states with distinguishable electrical and optical properties. PCM promises to bridge the gap between fast, volatile (short-term), on-chip memory, and slow, non-volatile (long-term), off-chip memory 86 (Pernice and Bhaskaran, 2012). An alloy, known as GST 87 , is one of the near-perfect materials for PCM, with sub-nanosecond switching time and stable operations, even after 10¹² switching cycles (i.e., endurance) (Lencer et al., 2008; Raoux et al., 2008). Therefore, some researchers are investigating the integration of GST into microprocessors 88 (Ríos et al., 2015). GST enables simultaneous reading and writing through using multiple wavelengths, when optimized algorithms are used (Rios et al., 2014; Stegmaier et al., 2017). This makes them good candidates for alleviating memory bottlenecks of modern microprocessors, while maintaining competitive power consumption and bit density (Zhai et al., 2018).

Similar to PCM, resistive random access memory (RRAM) stores information by allowing for externally controlled modulation of electrical properties, in particular resistance, usually by changing the structure of the solid-state dielectric material between two electrodes. Compared to other technologies, RRAM has a simpler structure, faster storage speed, higher bit density, lower power consumption, and better compatibility with CMOS technology (Ji et al., 2016; Ligorio et al., 2017). While memristors are a form of RRAM, some argue conversely that all RRAM can be considered as having memristive properties 89 (Chua, 2011), although it is still an open question.

Opto-electronic materials are actively being investigated for developing light-assisted, field-effect transistor (FET) memory. Light-assisted FET memory is a promising candidate for replacing silicon-based FET memory. The latter has been widely used in flash-based NVM, and is reaching its scaling limits. Some of the materials that are currently explored (Zhai et al., 2018) include organic materials (polymers) (Narayan and Kumar, 2001; Noh et al., 2005; Tsuji and Nakamura, 2017), photochromic materials (Frolova et al., 2015b, 2015a; Jeong et al., 2016), photoluminescent materials (Li et al., 2015a; Mishra et al., 2016; Pinchetti et al., 2016), and two-dimensional metal dichalcogenide materials (Lee et al., 2016a, 2017). Lastly, using opto-electronic materials 90 for constructing RRAM is also receiving considerable attention.

Storage systems

Magnetic storage

Magnetic-based storage is used for permanent safeguarding of data. It has a significantly lower cost-per-bit, compared to flash-based storage, albeit, at lower performance. We highlight two key technologies that rely on magnetic-based storage.

Hard disk drive

A hard disk drive (HDD) is used to store less-frequently-accessed data. It represents a bit of data by using the direction of magnetic grains on top of magnetic platters. Heads, which are used to read and write data, are positioned on top of the magnetic platters, at a distance on the order of nanometers.

In recent years, growth in HDD capacity has slowed. The largest 3.5-inch HDDs are 24 TB and 28 TB, for conventional magnetic recording (CMR) (Iwasaki, 1984), and shingled magnetic recording (SMR) (Amer et al., 2011), respectively.

Increased HDD capacity can be realized by adding more platters (Fontana et al., 2015). However, there is a limit to this, due to the form factor 91 of the HDD (Paulson, 2005). Moreover, a more powerful motor needs to be used to rotate the discs, which increases energy consumption (Hylick et al., 2008). Another strategy relies on increasing the platter bit density, by reducing the size of the magnetic grains. This requires careful positioning of the head, very close to the platter. The actuator’s arm must also become more precise, which cannot be achieved in atmospheric air conditions, due to aerodynamic drag.

In large-capacity drives, helium in a sealed container is used instead, which allows for precise movement of the head, and reduced power consumption (Aoyagi et al., 2022). Smaller magnetic grains are less stable (Thompson and Best, 2000; Wood, 2000), and tend to change magnetic direction, leading to data corruption. To achieve stability on smaller grains at a certain temperature, a mix of materials to create a stable magnetic medium 92 has been proposed. However, writing on such material is challenging, since it is already stable. Energy-assisted magnetic recording techniques, such as heat-assisted magnetic recording (HAMR) (Kryder et al., 2008; Rottmayer et al., 2006), and microwave-assisted magnetic recording (MAMR) (Zhu et al., 2008), are used to write into such materials, by temporarily reducing grain stability during the write operation, through localized heating in HAMR, and through a microwave field in MAMR (Nordrum, 2019; Shiroishi et al., 2009). This approach is believed to improve HDD capacity beyond 50 TB by 2030.

Magnetic tape

Magnetic tape is primarily used for archival storage (Caddy, 2022; Dee, 2008). It is slow, because it is a sequential-access medium. Nevertheless, it provides good data retention, and is expected to be around for decades to come.

Optical storage

Optical discs 93 store data by encoding them through a change in the way light is reflected. They are typically used for distributing read-only media 94 . Due to the popularity of cloud storage services for content delivery, as well as availability of cheaper flash-based NVM options, usage of optical discs is declining. Therefore, we do not review them.

What comes next?

Future general-purpose processors and accelerators (Part I: What comes next? (Hanindhito et al., 2026)) demand more memory bandwidth to feed their compute units. Accordingly, next-generation memory technologies aim to provide sufficient bandwidth in addition to higher capacity. While the advancement of SRAM will follow that of the process node technology 95 , developments in DRAM face unique technological challenges.

The DRAM process node will stay at 1x nm for the foreseeable future. Nevertheless, manufacturers are actively researching technologies to improve the bit density and bandwidth of DRAM chips. While manufacturers struggle to bring the sub-1x nm process node to DRAM, a prominent technology currently being explored is 3D DRAM. Not to be confused with high-bandwidth memory (HBM), which stacks multiple DRAM dies, 3D DRAM consists of a single monolithic die with memory cells stacked on top of each other, in addition to the horizontal arrangement used in a planar memory die. Vertical stacking of memory cells 96 creates a broader gap between transistors. Therefore, it reduces interference and allows higher bit density per memory chip. Mainstream and graphics DRAM will see slight improvements in chip density and bandwidth, while HBM will continue to become the de facto memory for devices that require high bandwidth. Trends in pin data rate, chip density, and stack height (for HBM) will continue translating to higher bandwidth and greater device capacity.

Further developments in Near Memory Processing (NMP) and Processing-in-Memory (PIM), along with their integration into existing computing systems, may alleviate bandwidth bottlenecks in many applications. Software support 97 is crucial to the adoption of NMP and PIM, as it makes it easier for users to benefit from these technologies. This also holds true for custom accelerators that use compiler-managed memory.

Non-volatile Memory (NVM) will see exciting developments in terms of memory technologies, endurance, density, and cost-per-bit in the next decade. Finally, the quest for finding new technologies that combine the best of DRAM 98 and NVM 99 will remain strong.

Summary and remarks

Technological advances in processing units have outpaced those in memory systems. Therefore, interactions between processing units and memory systems continue to be a major bottleneck for many applications, making algorithms that have reduced memory footprint attractive. Often, several memory technologies need to work in tandem to alleviate these bottlenecks.
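One common way to reason about this bottleneck is a roofline-style estimate, in which attainable performance is capped either by the peak compute rate or by memory bandwidth multiplied by the number of floating-point operations performed per byte moved. The sketch below is a minimal illustration; the function name and all hardware numbers are hypothetical, and they do not describe any specific device.

```python
# Roofline-style estimate. All hardware numbers are hypothetical.

def attainable_gflops(peak_gflops, bandwidth_gb_s, flops_per_byte):
    """Performance bound: the lower of the compute roof and the memory roof."""
    return min(peak_gflops, bandwidth_gb_s * flops_per_byte)

PEAK_GFLOPS = 1000.0     # hypothetical peak compute rate
BANDWIDTH_GB_S = 100.0   # hypothetical memory bandwidth

# Vector update y = a*x + y with 8-byte values: 2 flops per element,
# 24 bytes moved per element (read x, read y, write y).
vector_update = attainable_gflops(PEAK_GFLOPS, BANDWIDTH_GB_S, 2 / 24)

# A kernel that reuses each byte many times (20 flops per byte, assumed).
high_reuse = attainable_gflops(PEAK_GFLOPS, BANDWIDTH_GB_S, 20.0)

print(f"vector update: {vector_update:.1f} GFLOP/s, high reuse: {high_reuse:.1f} GFLOP/s")
```

In this hypothetical setting, the vector update reaches less than 1% of the peak compute rate, whereas the high-reuse kernel is limited by compute rather than by memory. Algorithms that move fewer bytes per operation shift toward the second regime.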

SRAM provides very fast, on-chip memory, and is often used in registers and caches. Compared to other memory technologies, SRAM is more expensive, uses more power, and consumes space on the same precious silicon die area where compute units reside. Some custom hardware uses SRAM as its primary memory system to attain faster run-time for its targeted applications. SRAM is typically managed by hardware (i.e., cache), although some chips use a compiler or put the burden on the programmer to manage it. User-managed SRAM, typically supported by hardware accelerators, is becoming more common, and may be exploited to improve performance. Successful management, however, will need intimate knowledge of the underlying algorithms of an application, and how the algorithms interact with the compute units and memory systems.
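As a simple illustration of what managing SRAM by hand involves, consider a blocked matrix multiplication in which three square tiles (one from each input matrix and one from the output) must reside in a user-managed scratchpad at the same time. The sketch below computes the largest tile edge that fits. The function name, the 48 KiB capacity, and the three-tile assumption are ours, chosen only for illustration.

```python
import math

# Tile sizing for a user-managed scratchpad. The capacity and the assumption
# that three tiles are resident at once are hypothetical choices for this sketch.

def max_square_tile(sram_bytes, bytes_per_element=8, tiles_resident=3):
    """Largest b such that `tiles_resident` b-by-b tiles fit in `sram_bytes`."""
    elements_per_tile = sram_bytes // (tiles_resident * bytes_per_element)
    return math.isqrt(elements_per_tile)

SCRATCHPAD_BYTES = 48 * 1024  # hypothetical 48 KiB scratchpad

tile_edge = max_square_tile(SCRATCHPAD_BYTES)
used_bytes = 3 * tile_edge * tile_edge * 8

print(tile_edge, used_bytes)  # 45 48600
```

The answer depends on the data type and on how many operands the algorithm keeps resident, which is one reason this kind of management requires knowledge of both the algorithm and the memory system.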

DRAM is typically implemented off-chip. Compared to SRAM, it provides larger capacity, but has a lower bandwidth. The most common DRAM implementation uses a capacitor and a transistor to represent a bit. Because charge leaks from the capacitor, DRAM needs to be refreshed periodically. While transistors are getting smaller, it is very difficult to make capacitors smaller. Smaller capacitors can hold a smaller charge, which makes sensing the charge more difficult. Moreover, smaller capacitors need to be refreshed more frequently, which reduces DRAM performance. Not being able to make these capacitors smaller is the biggest technological challenge that DRAM faces for the foreseeable future.
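The cost of refresh can be estimated as the fraction of time a memory device is busy refreshing rather than serving requests. The sketch below is a rough model under assumed, illustrative timing values: every cell is refreshed within a 64 ms window by 8192 refresh commands, and each command occupies the device for 350 ns. The function names and these values are our assumptions; actual timings depend on the DRAM generation, density, and operating temperature.

```python
# Rough refresh-overhead model. Timing values are illustrative assumptions.

def refresh_interval_ns(retention_window_ms, refresh_commands):
    """Average time between refresh commands, in nanoseconds."""
    return retention_window_ms * 1e6 / refresh_commands

def refresh_overhead(refresh_busy_ns, interval_ns):
    """Fraction of time the device is unavailable due to refresh."""
    return refresh_busy_ns / interval_ns

REFRESH_BUSY_NS = 350.0  # assumed time one refresh command keeps the device busy

baseline = refresh_overhead(REFRESH_BUSY_NS, refresh_interval_ns(64.0, 8192))
# If cells leak faster and the retention window halves, refresh is twice as frequent.
leakier = refresh_overhead(REFRESH_BUSY_NS, refresh_interval_ns(32.0, 8192))

print(f"baseline: {baseline:.2%}, halved retention window: {leakier:.2%}")
```

Under these assumptions, halving the retention window doubles the share of time lost to refresh, which illustrates why more frequent refresh translates into lower performance.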

DRAM bandwidth is impacted by bus width, which is the number of “lanes” or wires between the memory and the compute unit. Widening the bus is challenging, and therefore several technologies have been deployed to improve the memory bandwidth. These include: (a) using both the rising and falling edge of the clock to perform read and write operations; (b) increasing the memory bus clock frequency, and keeping the memory bus busy by using a wider prefetch; (c) interleaving read and write operations from several memory chips; and (d) splitting the bus channel into multiple independent sub-channels in order to increase concurrency.
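The relationship between bus width, clock frequency, and peak bandwidth can be made concrete with a short calculation. The sketch below uses a 64-bit channel with a 1600 MHz bus clock and two transfers per clock cycle (i.e., a DDR4-3200 channel) as an example; the function name is our own, and the result is a theoretical peak rather than a sustained rate.

```python
# Peak (theoretical) bandwidth of one memory channel.

def peak_bandwidth_gb_s(bus_width_bits, bus_clock_hz, transfers_per_clock=2):
    """Peak bandwidth in GB/s (1 GB = 1e9 bytes).

    transfers_per_clock=2 models the use of both the rising and falling
    clock edges, as in item (a) above.
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * bus_clock_hz * transfers_per_clock / 1e9

# DDR4-3200: 64-bit channel, 1600 MHz bus clock, double data rate.
ddr4_3200 = peak_bandwidth_gb_s(bus_width_bits=64, bus_clock_hz=1600e6)

# Same channel if only one clock edge were used.
single_edge = peak_bandwidth_gb_s(64, 1600e6, transfers_per_clock=1)

print(ddr4_3200, single_edge)
```

The calculation shows the two levers discussed above: for a fixed bus width, bandwidth grows only through more transfers per second, whereas a wider bus raises bandwidth at the same clock frequency.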

DRAM that is used in GPUs (GDDR) needs to support high bandwidth. Increased bandwidth is enabled through: (a) adding more periphery components to accelerate memory transactions (i.e., higher internal clock frequency) at the expense of reducing memory capacity; (b) using higher bus clock frequency; and (c) using denser signal modulation. The latter two are enabled by placing the memory modules very close to the compute units, which is done to limit the impacts of parasitic capacitance and electromagnetic interference on data integrity.

High-bandwidth memory (HBM) has been enabled by advances in packaging technologies, which have allowed stacking of memory dies on top of each other, and placing the resulting stacks next to the compute units within the same package. The stacked memory and compute units are then connected through a silicon interposer. This allows a significantly wider bus, and therefore, increased bandwidth. The short distance between the memory and compute units is necessary to ensure data integrity. HBM has delivered significant bandwidth to GPUs. Recently, it has been used on CPUs and other hardware as well.

The traditional von Neumann architecture creates a bandwidth bottleneck by separating memory and compute units. Near-memory processing alleviates this bottleneck, by splitting memory and compute units into smaller parts, and putting them closer to each other. Processing-in-memory is able to reduce the data movement by performing simple operations inside the memory cell, instead of sending the data to the processors. This results in significant energy savings. However, currently, it can only handle basic computations.

Flash memory can store data without a continuous supply of power, making it energy-efficient. Compared to DRAM, it costs less, and has higher density. Recently, it has been used to augment DRAM, resulting in a significant increase in memory capacity on targeted CPUs. This trend is expected to continue, and will benefit data-intensive applications.

Inter-device communication

Communication remains the primary bottleneck for many workloads. When an application is small enough to fit within a single cluster node, within-the-node (intra-node) communication is often the main bottleneck. Notable examples are communication between a microprocessor and its off-chip memory (Yazdanbakhsh et al., 2016), and between CPU, GPU and accelerator components in a heterogeneous system. For large-scale applications, where multiple cluster nodes have to work in tandem to provide scaling, between-the-node (inter-node) communication often constitutes the bottleneck, and could incur a significant cost in terms of performance degradation and energy consumption (Keckler et al., 2011; Kestor et al., 2013). Algorithm developers should be aware of these challenges and of what future trends look like, as using a different algorithm can sometimes alleviate these bottlenecks. In what follows, we review on-chip, intra-node and inter-node communication technologies.
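A widely used first-order model of communication cost charges each message a fixed latency plus a term proportional to its size. The sketch below applies this model to show how an algorithmic change, here aggregating many small messages into one large message, can reduce communication time. The function name, latency, and bandwidth values are hypothetical and are not measurements of any particular interconnect.

```python
# Latency-bandwidth model of message cost. Link parameters are hypothetical.

def transfer_time_s(message_bytes, latency_s, bandwidth_bytes_per_s):
    """Time to send one message: fixed latency plus size over bandwidth."""
    return latency_s + message_bytes / bandwidth_bytes_per_s

LATENCY_S = 1e-6    # hypothetical per-message latency (1 microsecond)
BANDWIDTH = 10e9    # hypothetical link bandwidth (10 GB/s)

# Same payload (1 MB) sent as 1000 small messages or as one aggregated message.
many_small = 1000 * transfer_time_s(1_000, LATENCY_S, BANDWIDTH)
one_large = transfer_time_s(1_000_000, LATENCY_S, BANDWIDTH)

print(f"1000 x 1 KB: {many_small * 1e6:.0f} us, 1 x 1 MB: {one_large * 1e6:.0f} us")
```

With these hypothetical parameters, the aggregated transfer is roughly an order of magnitude faster, because the small messages are dominated by latency rather than by bandwidth.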

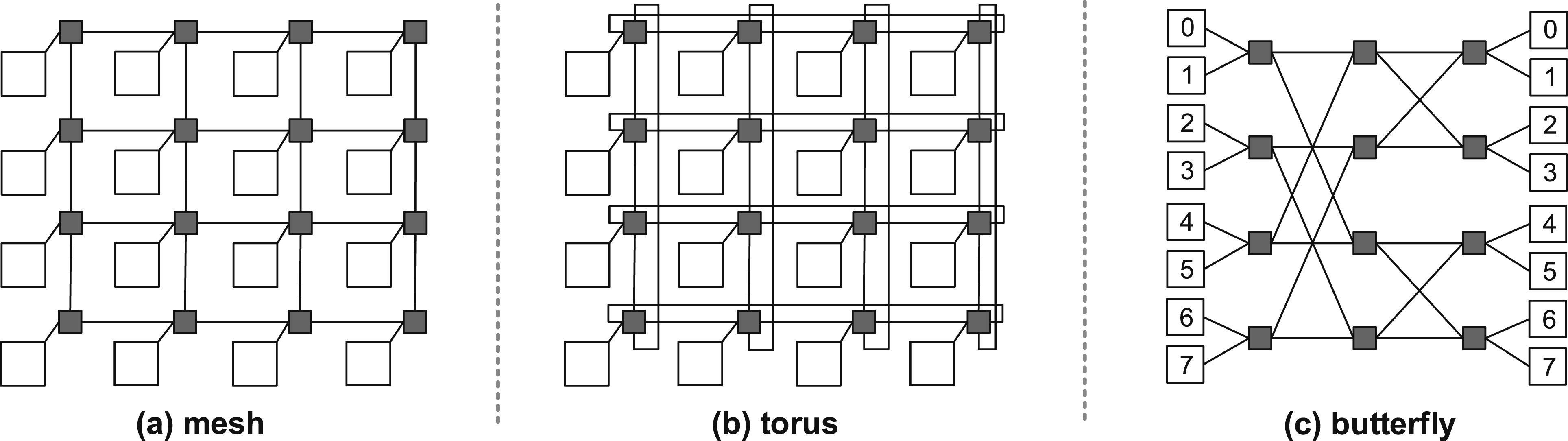

On-chip communication

SoCs comprise a diverse set of components, each implementing different functions 100 , that need to communicate with each other. Below we delineate the two main types of on-chip communication architectures that have been used over the years, namely bus-based and Network-on-Chip (NoC). We also discuss the software support associated with orchestrating the on-chip communication between different components in an SoC.

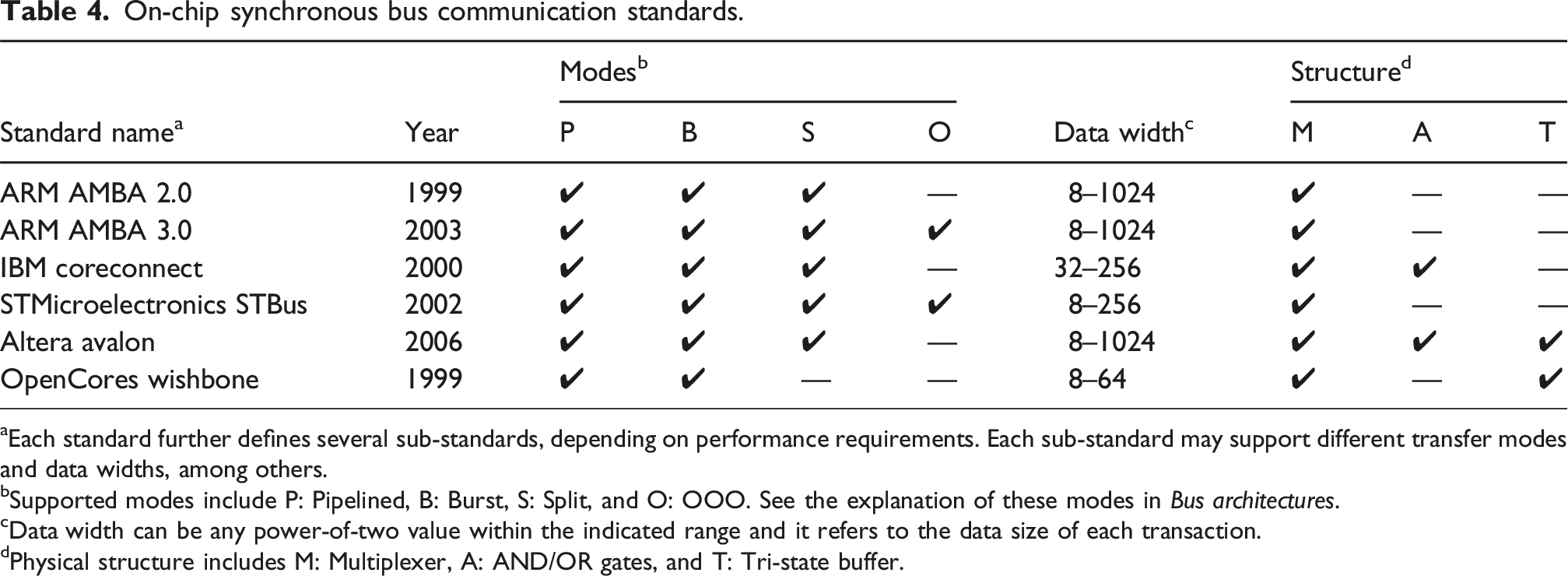

Bus architectures

In the past, the dominant architecture used for on-chip communication was bus-based. A bus is a shared channel responsible for the communication between different components inside a chip. There are two types of such components in a system: masters and slaves. Master units (e.g., processor cores and DMA engines) issue transactions, by sending out requests to the bus. Slave units, such as memory components and I/O peripherals, receive requests issued by masters and respond with data when ready. Bus communication in an SoC is realized using an interface protocol. The interface is a collection of pins and signals that represent addresses, data and control information. Apart from the set of signals constituting the bus, the bus architecture is defined by several other characteristics, as elaborated below:

Physical structure: The physical structure refers to the hardware logic used to select which master/slave gets access to the bus. Three main implementations exist that use (i) tri-state buffers, (ii) AND/OR gates or (iii) multiplexers (MUX) 101 .

Clocking

Clocking defines whether or not a clock signal is part of the interface. If so, the bus is called synchronous, and every stage of a transaction occurs in a different clock cycle. If not, the bus is called asynchronous, and it requires additional signals for synchronization.

Decoding and arbitration