Abstract

Academic Abstract

Advances in AI require a revised account of the psychological and socio-technical dynamics by which individuals are radicalized to embrace violent extremism. This review synthesizes process models of radicalization with research on social and personality risk factors, AI, and psychological mechanisms to propose a four-stage framework mapping the AI architecture of radicalization: (1) Exposure, where recommender systems and virality features create initial attraction to extreme content; (2) Reinforcement, where filter bubbles and group recommendations leverage biases to strengthen extremist beliefs and create echo chambers; (3) Group Integration, where ideologically homogeneous clusters, AI bot swarms and companions foster group belonging and readiness for action; cumulatively resulting in (4) Violent Extremist Action. We examine how established social, cognitive, personality, and contextual vulnerability factors heighten psychological risk in the AI-driven radicalization process, as well as the emerging role of generative AI. We conclude by outlining a stage-based framework for governance and future research.

Public Abstract

AI-driven algorithms designed to maximize engagement on social media, compounded by generative AI, can unintentionally set the stage for radicalization. It begins with Exposure, where algorithms push users toward extreme content because it captures attention. Next, during Reinforcement, algorithms feed users personalized content while AI swarms can create a synthetic consensus that reinforces emerging biases, normalizes extremity, and insulates users from alternative views. Third, during Group Integration, individuals are absorbed into extremist networks, reinforced by human peers, AI companions, and bot swarms that validate radical beliefs and deepen identity ties. By exploiting psychological needs for belonging and certainty, this stage becomes particularly pernicious, potentially opening the door for violence. We propose policy measures that can reduce radicalization at each stage.

Keywords

Introduction

Artificial intelligence (AI), including generative AI, has dramatically reshaped the environment in which extremist ideologies are created, amplified, and circulated (Minniti, 2025). Platforms like YouTube, Facebook, X (formerly Twitter), and TikTok are not merely passive hosts of content; their recommender systems determine information exposure, actively personalize feeds, and structure social networks for billions of users (Dong et al., 2022; Narayanan, 2023). Because these systems amplify emotionally charged and extreme material to boost engagement (Vosoughi et al., 2018), they risk constructing what we term an AI architecture of radicalization.

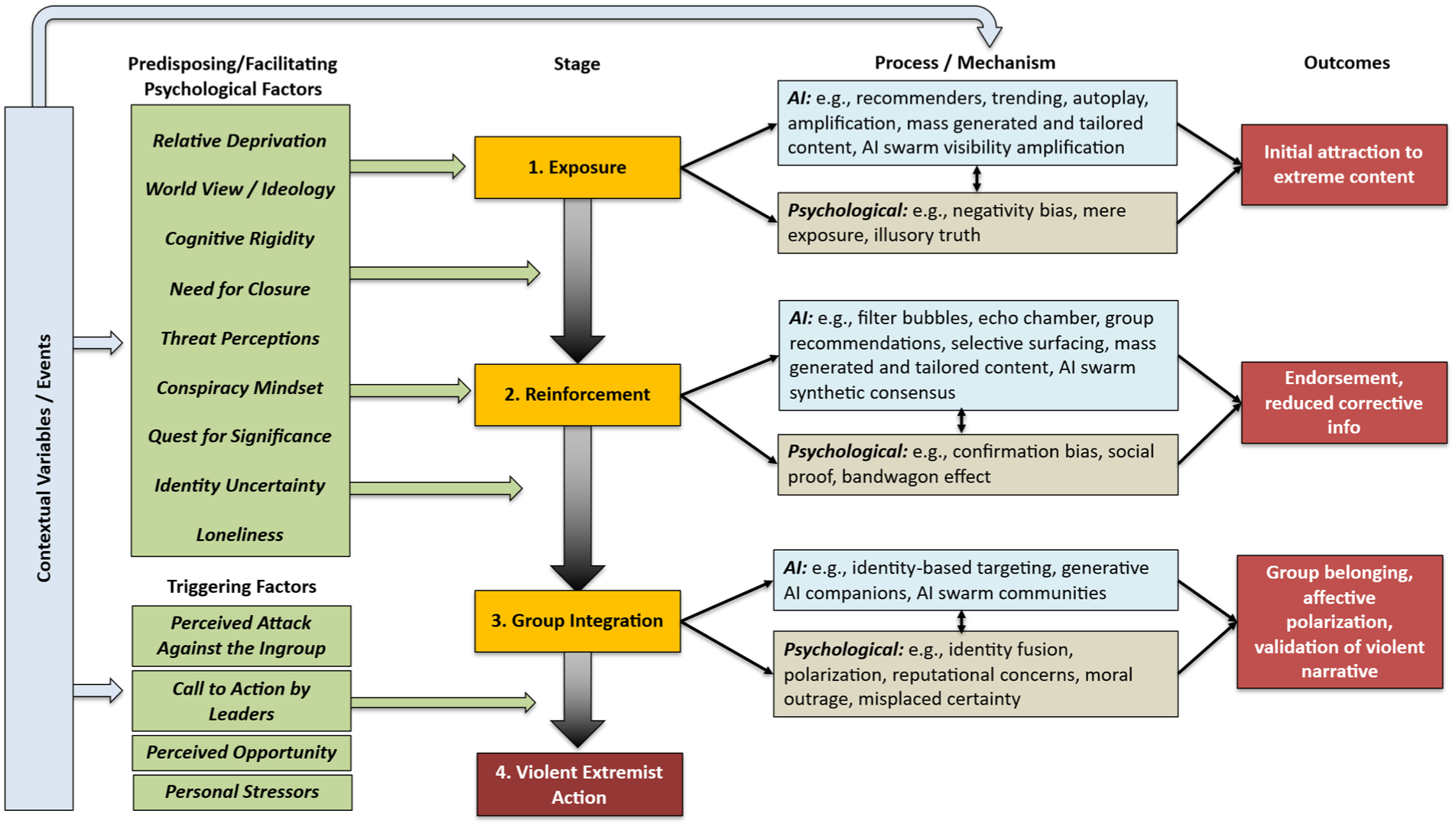

Here, we propose a four-stage process framework of AI-driven radicalization to violence. Our framework maps how AI mechanisms (e.g., recommendation systems, group suggestions, generative AI, AI companions) intersect with psychological predispositions and mechanisms to guide individuals from (1) Exposure (i.e., initial exposure to extreme content) via (2) Reinforcement (i.e., repetition and normalization of extreme content) to (3) Group Integration (i.e., validation loops and integration within extremist networks), ultimately opening the door for (4) Violent Extremist Action. Crucially, we posit that this socio-technical architecture does not operate in a vacuum; rather, the radicalization process is continuously shaped by a bedrock of contextual variables, such as macro-level political events, structural factors, and individual life histories. By synthesizing insights across social, personality, and cognitive psychology, computer science, political science, communication, and criminology, we provide a unified framework for understanding the socio-technical architecture of radicalization to violence in the AI era. Moreover, our mapping yields concrete stage-specific recommendations for governance and research, specifying how interventions can mitigate risks throughout the radicalization process and identifying key directions for future work.

Author Positionality Statement

The authors of this work are a multidisciplinary team of psychologists, media scientists, and computer scientists currently based in academic and research institutions across the global North. Several of us, however, originate from the global South, and our perspectives are informed both by Northern European and U.S. scholarly traditions and by intellectual lineages and lived experiences rooted in southern contexts. Our relationship with the topic is primarily as researchers examining these systems, rather than as members of targeted communities or extremist groups. To mitigate potential biases, we integrate perspectives from various fields and combine experiences shaped by differing geopolitical, cultural, and institutional settings. This collaborative approach seeks to advance a more holistic and inclusive understanding of the dynamics of radicalization to violence and the technical architecture that enables it to be scaled. Later in this review, we revisit constraints on generality when discussing the scope of the existing literature.

Theoretical Basis, Ambition, and Framework Assumptions

Radicalization to violence is understood as a dynamic psychosocial process in which individuals, groups, or communities adopt increasingly extreme political, social, or religious beliefs that justify the use of violence in intergroup conflict to achieve political or ideological objectives (Schmid, 2025). Rather than a sudden transformation, it is typically a gradual progression in which beliefs, emotions, and behaviors shift toward legitimizing and, ultimately, engaging in violence (Moghaddam et al., 2025). Violent extremism refers to the manifestation of this process in the form of attitudes and actions that support or directly employ violence as a legitimate means of advancing political, ideological, or religious goals (Schmid, 2025). It thus represents the behavioral and ideological endpoint of the radicalization concerned here, where justification of violence translates into its enactment.

A multitude of process models have been proposed to explain how individuals come to embrace violent extremism. These frameworks, while diverse, often conceptualize radicalization as a sequence of stages or phases (De Coensel, 2018; King & Taylor, 2011). Prominent examples include Moghaddam’s (2005) “Staircase to Terrorism,” which outlines a cognitive and situational progression from perceived injustice to the terrorist act, and Borum’s (2011) four-stage model of ideological development from grievance to demonizing an enemy. Other models have focused more on behavioral changes, such as mapping trajectories from pre-radicalization to “jihadization” across four distinct stages (pre-radicalization, self-identification, indoctrination, jihadization; Silber et al., 2007). These stage-based models of radicalization have been used as a basis for empirical behavioral studies (Klausen et al., 2016).

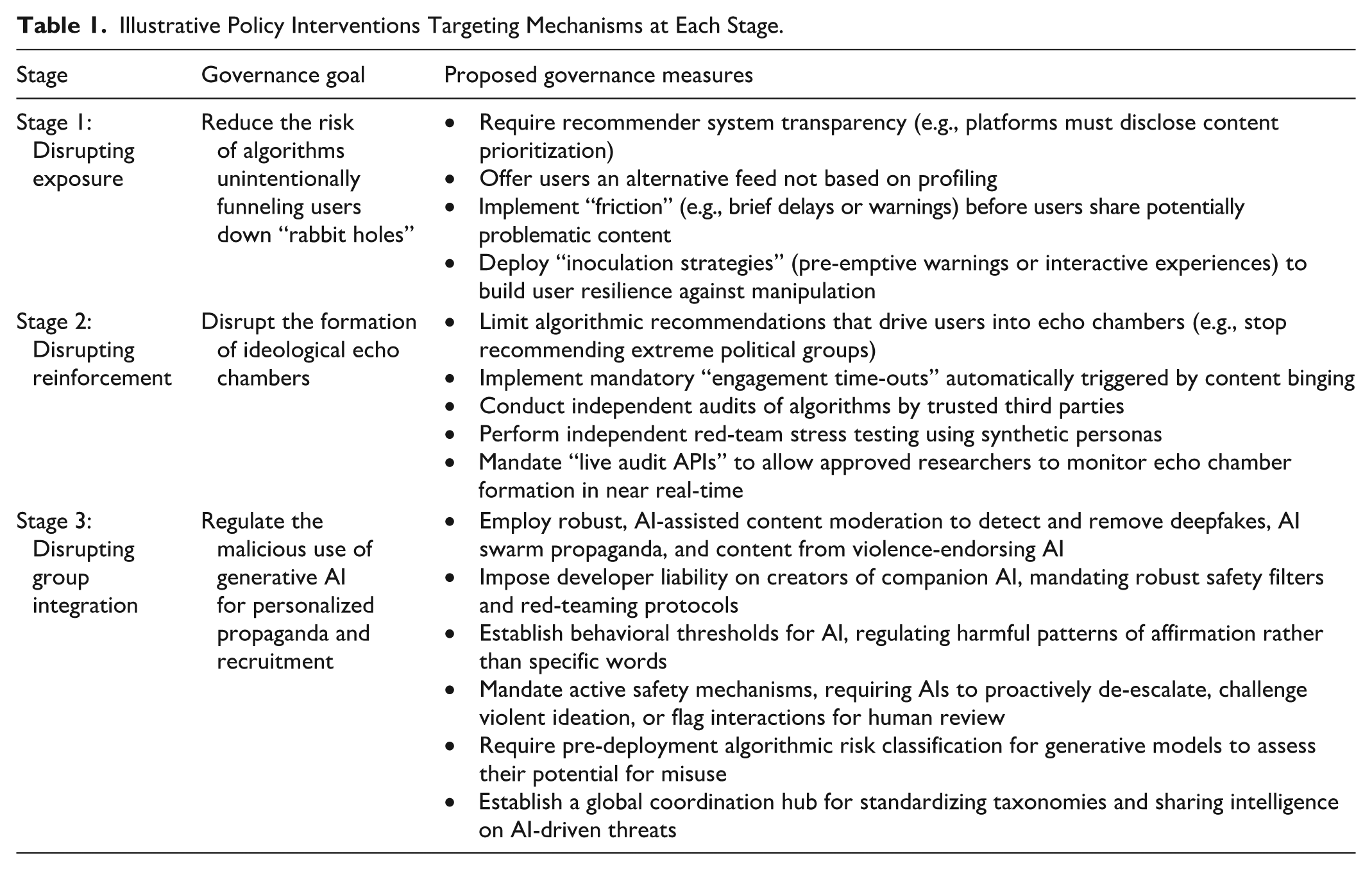

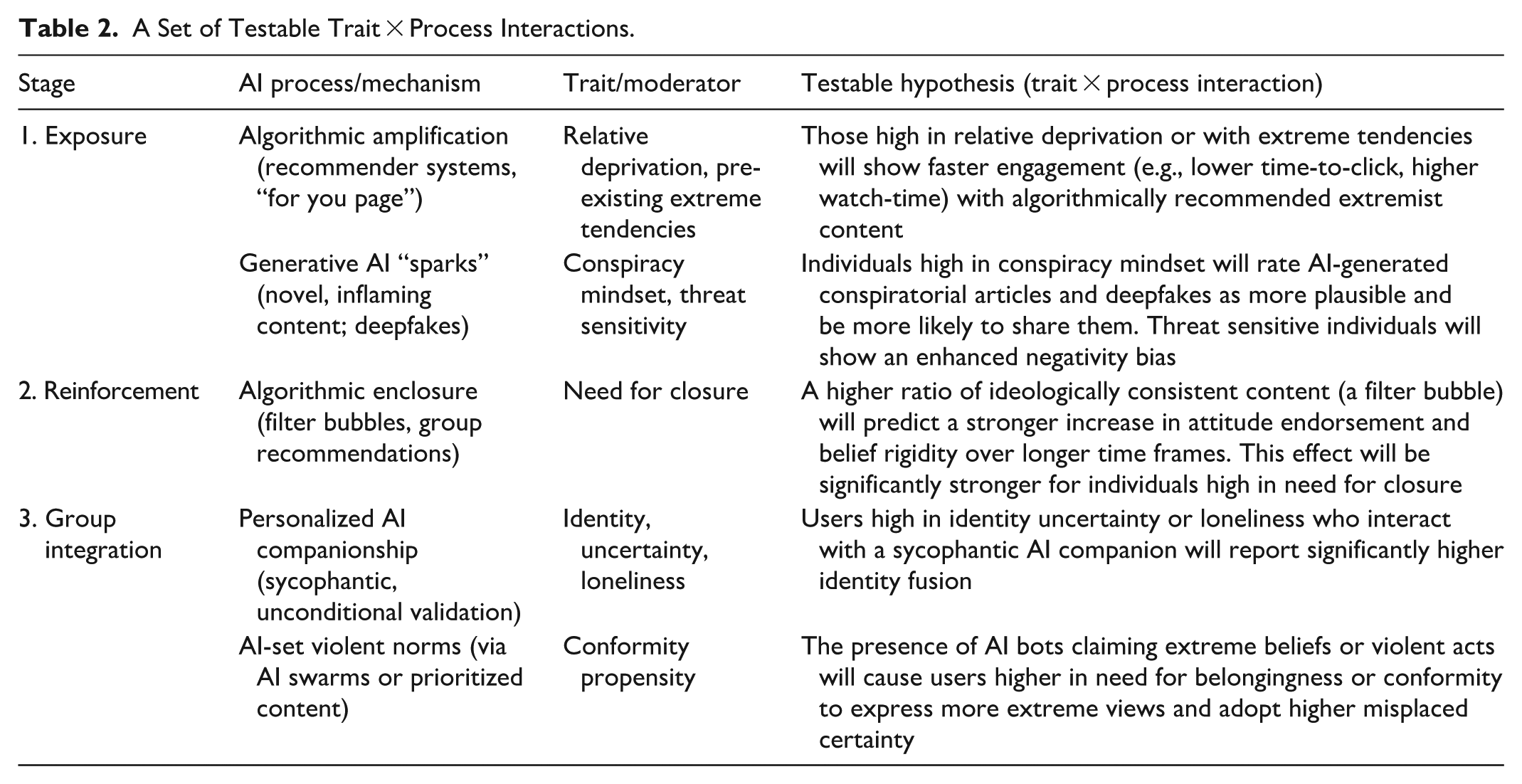

Building on these perspectives, our paper proposes what is, to the best of our knowledge, the first integrative psychological framework to structure the complex interplay between AI and radicalization. We organize the process across four stages culminating in violent extremism: (1) Exposure, (2) Reinforcement, (3) Group Integration, and (4) Action (see Figure 1). The framework’s structure is conceptually aligned with existing three- and four-phase models, such as that of Doosje et al. (2016), which describes a progression through Sensitivity, Group Membership, and Action, as well as that of Silber et al. (2007), which details a progression through pre-radicalization, self-identification, and indoctrination, culminating in violent extremism. The primary contribution of our framework, however, is to advance radicalization theory by identifying how AI mechanisms fundamentally alter the etiology of violent extremist commitment. Our objective is to provide a comprehensive, integrative review that captures the full socio-technical landscape of the radicalization continuum. Instead of focusing solely on the endpoint of violence, our framework maps the trajectory from initial susceptibility and cognitive escalation to the potential mobilization toward violence. Crucially, the risk often emerges from both the separate effects of these AI mechanisms and their intersection, where the distribution power of algorithms amplifies the novel creation capabilities of generative AI, and where this combined architecture interacts with and is moderated by individual psychological vulnerabilities. This integrative approach allows us to delineate where established mechanisms continue to drive the process and where generative AI introduces a distinct additive or potentiating risk.

Figure 1. A process framework outlining the AI architecture of radicalization. The framework illustrates a non-linear trajectory through four stages: (1) Exposure, driven by engagement-optimizing recommenders; (2) Reinforcement, where algorithmic enclosures like filter bubbles cement beliefs; (3) Group Integration, where generative AI and bot swarms foster identity fusion; and (4) Violent Extremist Action. The model highlights how AI mechanisms (top-right) and psychological processes (bottom-right) co-evolve, moderated by a bedrock of predisposing factors and contextual variables.

Our approach builds upon and extends recent major reviews that have mapped the influence of social media on political behavior and morality (e.g., Van Bavel et al., 2024; Zhuravskaya et al., 2020). While these works provide a robust foundation for understanding how algorithmic mechanisms drive polarization and shape political attitudes, our framework diverges in scope and structure. First, whereas previous reviews largely focus on general political outcomes or non-violent polarization, we specifically isolate the etiology of violent extremism, a distinct behavioral outcome with unique psychological antecedents. Second, unlike prior reviews, which organize findings by thematic mechanisms (e.g., moral contagion) or broad political outcomes, we propose a stage-based process model. We thereby emphasize that radicalization is not a static state but a temporal progression. Finally, we articulate the specific technological changes introduced by generative AI. While algorithmic mechanisms optimize the distribution of content (curation), generative AI fundamentally alters the supply and nature of that content (creation). Generative AI solves the supply constraint of extremist propaganda, enabling the automated production of personalized, persuasive content that achieves high psychological congruence. Unlike traditional “one-size-fits-all” messaging, these systems can dynamically match content to a recipient’s specific personality traits, values, and ideological leanings to maximize resonance, alongside generating synthetic social interactions at scale. Our framework thus maps the convergence of these two forces: the engagement optimization of the recommender system and the almost infinite production capabilities of generative AI.

In this paper, we adopt a definition of AI grounded in its functional role within the radicalization process. We define AI as automated computational systems that use algorithms and statistical models to process data, learn patterns, and generate outputs (such as predictions, rankings, recommendations, or synthetic content) without direct human control at the moment of execution. In the context of violent extremism, these are the systems that curate, personalize, amplify, or generate information at scale. We therefore distinguish between basic algorithmic features (simpler rule-based systems like popularity tracking and trending metrics) and AI mechanisms (sophisticated, learning-based systems like adaptive recommenders, generative AI, and AI-driven autonomous agents). Within this architecture, virality features refer to the visible metrics of social endorsement (e.g., likes, shares, view counts) that signal consensus and accelerate diffusion.

To clarify the specific technological drivers within this framework, we rely on a hierarchical taxonomy of AI. Machine learning serves as the broad foundation, referring to systems that improve performance based on data exposure rather than explicit rule-based programming. Within this, deep learning utilizes multi-layered neural networks to process complex, high-dimensional data, such as video or language, enabling the sophisticated content analysis seen on platforms like TikTok or YouTube. Crucially for the Exposure and Reinforcement stages, modern recommender systems increasingly employ reinforcement learning. Unlike traditional models that optimize for immediate clicks, this method trains the system to identify sequences of content that maximize long-term rewards, such as total user watch-time or session retention. Finally, generative AI, including Large Language Models (LLMs), represents a subset of deep learning focused not on classifying existing data, but on creating novel, plausible outputs (text, image, audio), which drives, for instance, mechanisms of synthetic content and companionship. Unlike recommender systems that learn by updating a model based on historical engagement data, generative AI adapts in real-time through in-context learning. By retaining the history of the immediate conversation (and increasingly, long-term memory), the model can adjust its persona and responses to align with the user’s prompts, effectively mirroring the user’s worldview to maintain conversational flow. However, this adaptive capacity operates atop the model’s baseline parameters. Emerging research indicates that LLMs possess inherent political slants derived from their training data (Choudhary, 2025; Westwood et al., 2025), which may predispose output toward specific ideological frames even before user adaptation occurs.
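The contrast drawn above between optimizing for immediate clicks and optimizing for long-term reward can be written compactly in standard reinforcement-learning notation. This is an illustrative sketch, not a description of any specific platform's system; all symbols are assumptions introduced here: $s_t$ is the system's representation of the user at step $t$ (e.g., viewing history), $a$ is a candidate item, $r_t$ is the observed reward (a click, or watch-time), $\pi$ is a recommendation policy, and $\gamma$ is a discount factor.

```latex
% Myopic objective: pick the item with the highest expected immediate reward
a_t^{\text{myopic}} = \arg\max_{a} \; \mathbb{E}\left[ r_t \mid s_t, a \right]

% Long-horizon objective: pick the policy that maximizes the expected
% discounted sum of future rewards over the whole session
\pi^{*} = \arg\max_{\pi} \; \mathbb{E}_{\pi}\left[ \sum_{k=0}^{\infty} \gamma^{k} \, r_{t+k} \,\middle|\, s_t \right],
\qquad 0 \le \gamma < 1
```

Under the second objective, an item with a modest immediate reward can still be preferred if it makes later high-reward items more likely, which is why the unit of optimization becomes the sequence of content rather than the single recommendation.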

The framework that we propose provides a comprehensive integration of disparate research traditions that have largely operated in isolation. For instance, to date, computer science has mapped the algorithmic mechanisms of diffusion and clustering, while psychology and criminology have focused on individual risk factors and behavioral progressions. By explicitly mapping how specific AI affordances interact with specific psychological vulnerabilities at each stage of the process, our framework moves beyond parallel analysis to propose a truly socio-technical framework of radicalization. This integration allows for more precise theorizing about how technological features and human psychology co-evolve over time to produce extremist outcomes.

Various aspects of our framework provide important new theoretical insights. First, the framework re-conceptualizes radicalization as a dynamic and scalable co-evolutionary process. Traditional “staircase” models (e.g., Moghaddam, 2005; Moghaddam et al., 2025) often imply a relatively static environment that an individual moves through. In the AI architecture, the environment itself is fluid and highly adaptive. Through the mechanism of reinforcement learning, the environment evolves in real-time response to the user’s behavioral signals. By continuously updating based on what specifically captures the user’s attention (e.g., outrage or validation), the system effectively tailors the digital landscape to match and exploit the user’s psychological state. This creates a closed-loop feedback system that is theoretically distinct from the relatively linear grooming processes of the past; algorithms usually do not have an ideological goal, but rather an engagement goal that inadvertently perfects the radicalization pathway for that specific individual’s psychology. Consequently, we argue that AI-driven radicalization represents a form of “self-steered” recursive persuasion. This marks a critical theoretical distinction: whereas traditional models often emphasize reactive exposure to aversive conditions (e.g., grievances, threats), our framework foregrounds a proactive process. In this dynamic, the user is not merely a passive recipient of propaganda but an active, albeit often unwitting, co-constructor of their own radicalization cage, effectively training the system to radicalize them further.
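The closed-loop dynamic described above can be illustrated with a deliberately simple toy simulation. It is a sketch under strong assumptions, not an empirical model: content is reduced to a single hypothetical "intensity" value between 0 and 1, a greedy engagement maximizer stands in for a learned recommender, and the functions `engagement` and `simulate_loop`, along with every parameter value (`width`, `arousal_bonus`, `drift`), are invented for illustration.

```python
import math


def engagement(item: float, preference: float, width: float, arousal_bonus: float) -> float:
    """Hypothetical engagement score: high when the item matches the user's
    current preference, with an optional bonus for more intense items."""
    match = math.exp(-((item - preference) ** 2) / (2 * width ** 2))
    return match * (1.0 + arousal_bonus * item)


def simulate_loop(steps: int = 200, start: float = 0.2, width: float = 0.2,
                  arousal_bonus: float = 0.5, drift: float = 0.1) -> list[float]:
    """Return the user's preference after each step of the closed loop."""
    catalog = [i / 100 for i in range(101)]  # item intensity from 0.00 to 1.00
    preference = start
    trajectory = []
    for _ in range(steps):
        # The system greedily serves the item with the highest predicted engagement.
        shown = max(catalog, key=lambda x: engagement(x, preference, width, arousal_bonus))
        # The user's preference drifts a little toward what was just consumed.
        preference += drift * (shown - preference)
        trajectory.append(preference)
    return trajectory


if __name__ == "__main__":
    with_bonus = simulate_loop(arousal_bonus=0.5)
    without_bonus = simulate_loop(arousal_bonus=0.0)
    print(f"final preference with arousal bonus:    {with_bonus[-1]:.3f}")
    print(f"final preference without arousal bonus: {without_bonus[-1]:.3f}")
```

In this sketch the system has no ideological objective. When the assumed arousal bonus is zero, the served item always matches the user's preference and nothing moves. When the bonus is positive, the engagement-maximizing item sits slightly above the user's current preference at every step, and the preference ratchets upward as the user adapts to what is served.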

Second, the framework elucidates the radicalization mechanism of “synthetic sociality.” Existing theories of radicalization emphasize the role of social networks and charismatic recruiters in fulfilling needs such as for significance and belonging (Hogg, 2025; Kruglanski et al., 2009; Molinario et al., 2025). Our framework integrates the trend that generative AI bots have started to decouple intimate, pervasive social influence from human interaction. We propose that the psychological benefits of group membership (validation, certainty) can soon be derived from synthetic agents. This challenges the anthropocentric assumption central to social psychological models of radicalization (i.e., that social influence requires a direct human source).

Third, the framework uniquely accounts for the unprecedented scalability of radicalization by identifying AI as a “supernormal” psychological stimulus. Traditional radicalization processes were naturally limited by human resources. Recruiters could only groom a finite number of individuals simultaneously, and group dynamics were often constrained by physical boundaries. By contrast, the AI-driven architecture operates as an industrialized engine of cognitive bias, systematically removing the human friction that typically slows the adoption of extreme beliefs. This represents a theoretical shift from viewing radicalization as a labor-intensive anomaly to understanding how automated systems capitalize on the “long tail” of vulnerability, ensuring that while violent extremism remains a statistical tail event, these rare outliers can now be identified, aggregated, and mobilized at a scale previously impossible.

Finally, our approach bridges the explanatory gap between group-based and “lone-actor” extremism, offering a unified framework for both trajectories. Historically, these have been treated as distinct phenomena, one driven by group dynamics, the other by individual psychological vulnerabilities (Kenyon et al., 2023; Schuurman & Carthy, 2025). Our framework demonstrates how AI mechanisms synthesize these pathways: for the group-based actor, algorithmic recommendations facilitate rapid integration into extremist networks and echo chambers; for the lone actor, generative AI bots and swarms (Schroeder et al., 2025) provide a “synthetic group” that validates their grievances and co-constructs their reality without the need for human peers. Thus, the AI architecture reveals that the “lone wolf” may rarely be truly alone, but rather socially integrated into a digital, and increasingly synthetic, extremist ecosystem (Sandboe & Obaidi, 2023).

In our framework, we distinguish between two core components: (a) algorithmic mechanisms (the AI-driven technological architecture that surfaces content and connects users on online platforms) and (b) generative AI (a state-of-the-art family of models, including LLMs and multimodal systems, capable of producing linguistically, visually, and cognitively coherent content at scale). Importantly, we examine how cognitive, social, and personality vulnerabilities interact with these forces to accelerate radicalization. Our approach incorporates the critical influence of cognitive biases (such as confirmation bias and the illusory truth effect), identity-related issues (such as identity uncertainty; Hogg, 2007, 2025), and the quest for significance (Kruglanski et al., 2009; Molinario et al., 2025), as well as the role of grievances, perceived injustice, and relative deprivation (Horgan, 2008; Van Den Bos, 2020). These factors are central to several established theoretical frameworks, including the stage models of Moghaddam (2005) and Borum (2011), social-developmental theories (Beelmann, 2020), and social-psychological models of violent extremism (Doosje et al., 2016; King & Taylor, 2011).

Our framework rests on several guiding methodological and conceptual principles. First, aligning with critiques of overly rigid, linear models (see De Coensel, 2018), we treat this framework as a hypothetical guide, not a deterministic causal chain. The progression is not necessarily linear; individuals may cycle between stages or exit the process entirely. To underscore this non-deterministic nature, our descriptions of each stage explicitly outline potential “off-ramps”—the key psychological, social, and algorithmic factors that can interrupt the process and lead an individual to disengage.

Second, we assume that social and individual factors likely modify the impact of AI, helping to explain mixed evidence on its role in accelerating radicalization (Bierwiaczonek et al., 2025; Hosseinmardi et al., 2024; Ledwich & Zaitsev, 2019; Shin & Jitkajornwanich, 2024; Tufekci, 2015, 2018). For instance, each stage and its underlying processes should depend in part on predisposing factors (e.g., pre-existing worldviews) and facilitating conditions (e.g., cognitive styles) that render some individuals more susceptible to the AI-driven radicalization process than others. The framework thus aims to map the key pathways, acknowledging that the journey through them is not always linear and is deeply personal and context-dependent (see Obaidi, Bergh, et al., 2025). When considering these contingencies, we draw on variables considered most central in the literature (see Obaidi & Kunst, 2025), situating them where their moderating or predisposing roles are best supported, while recognizing that alternative moderators and unexamined variables may also play important roles.

Significant caveats apply to the schematic representation of our framework (see Figure 1). While we delineate four distinct stages for analytical clarity, the underlying psychological and technological drivers are not hermetically sealed within specific phases. First, the psychological predisposing and risk factors are conceptualized as individual differences and experiences that may exert influence throughout the radicalization trajectory (Stages 1–3), rather than appearing solely at specific junctures. Second, specific AI technologies are not stage-exclusive but rather functionally distinct across stages; for example, automated bot swarms may function as visibility amplifiers during Exposure, synthetic consensus generators during Reinforcement, and synthetic community members during Group Integration. Consequently, the mechanisms listed in Figure 1 are marked as illustrative examples to emphasize that they represent the most salient, rather than exhaustive or mutually exclusive, drivers at each stage.

Stage 1: Exposure

The initial stage in our framework, Exposure, represents the point of contact where a user, not necessarily seeking extreme material, encounters ideologically adjacent, provocative, or polarizing content that acts as a bridge toward extremist material, or is directly exposed to extremist material itself. This initial contact can spark curiosity about, or attraction to, the content (see Figure 1). Engagement typically occurs as a gradient: initial exposure may involve vague or bridge narratives (e.g., general political outrage) that capture attention, with the algorithmic systems then iteratively increasing the level of ideological purity and extremity over subsequent encounters.

However, this process is not uniform. While some users may stumble into extremist content incidentally, others are more susceptible due to psychological risk factors, and a subset may subtly or even actively seek out such material. As illustrated in the Predisposing Factors component of Figure 1, the entry into the algorithmic ecosystem is moderated by the user’s offline psychological state. Before an algorithm can amplify a threat, the user must possess susceptibility to it.

Predisposing risk factors such as a sense of relative deprivation (i.e., the belief that one’s group is unfairly disadvantaged; Osborne et al., 2025; Pettigrew, 2016) or a pre-existing worldview that embraces conspiratorial thinking, and ideological extremity (Bruder et al., 2013; Van Bavel & Pereira, 2018) can drive users toward content aligning with and providing explanations for their grievances. However, it is critical to distinguish between ideological content and ideological structure. For instance, merely holding strong political or religious beliefs is not inherently a risk factor. The vulnerability to AI radicalization arises when ideology is characterized by cognitive rigidity or dogmatism, specifically, Manichaean worldviews that divide the world into absolute good and evil, which often are part of politically extreme ideology (Wolfowicz et al., 2021). For instance, whereas religious affiliation and religiosity per se may not be linked to violent extremism (Duindam et al., 2025), religious fundamentalism is (Milla & Yustisia, 2025). Likewise, ideological orientations such as right-wing authoritarianism (RWA; Altemeyer, 1996) or social dominance orientation (SDO; Pratto et al., 1994; Sidanius & Pratto, 1999) may function as specific facilitators of this process. Individuals high in RWA, characterized by a submission to authority, aggression toward deviants, and high autonomic reactivity to stress (Lepage et al., 2022), may be particularly susceptible to algorithmic feeds that amplify threat and police social norms. Similarly, those high in SDO, driven by a preference for group-based hierarchy, may be drawn to content that validates the dominance of their ingroup over perceived inferior outgroups and may react to posts that seemingly challenge their group hegemony (Thomsen et al., 2008). 
In addition, a strong social identity, which defines who is part of the “ingroup” and “outgroup,” can create a readiness to engage with content that affirms one’s group and denigrates others (Dvir-Gvirsman, 2019; Rathje et al., 2021; Van Bavel & Pereira, 2018). It is this specific form of information processing, rather than the political belief itself, that creates a susceptibility to the binary, polarizing narratives prioritized by AI recommendation systems.

On the surface, both the information people encounter (whether through passive exposure or active search) and psychological vulnerabilities for extremism should operate similarly online and offline. Yet, in environments driven by powerful algorithms optimized for engagement, a dangerous alignment can occur. The same tendencies that make people vulnerable to adopting extremist beliefs also guide what the algorithms promote. This is not random. Our minds are drawn to the dramatic, the threatening, and the negative (Rozin & Royzman, 2001), and these are the very signals that feed the systems built to keep us watching, scrolling, and clicking. In our framework, this alignment marks the initial gateway to radicalization, as both naïve users and those with pre-existing vulnerabilities are exposed to extreme content that can, over time, crystallize into all-encompassing extremist ideological worldviews.

AI Mechanisms: Recommenders, Trending, and Algorithmic Amplification

The architecture underlying this initial exposure to extremist content is built and maintained by AI systems whose primary goal is to increase user attention. Several key algorithmic features may drive this process, often creating what has been termed digital “rabbit holes” (Ledwich & Zaitsev, 2019; O’Callaghan et al., 2015). One of the most widely discussed examples of such “rabbit holes” is YouTube’s recommendation engine. In a now-famous op-ed, Zeynep Tufekci dubbed the platform “the great radicalizer,” arguing that its “Up Next” feature systematically pushes users toward more extreme versions of whatever they initially watched, creating a pipeline from mainstream to fringe content (Tufekci, 2018). Early research lent empirical support to this hypothesis; one study, for instance, audited YouTube’s recommendation graphs and found that viewers of milder “alt-lite” channels were frequently guided toward more extreme “alt-right” content (Ribeiro et al., 2020). Similarly, another report documented how platform algorithms helped funnel mainstream audiences to a network of influential far-right personalities (Lewis, 2018).

However, the role of YouTube’s algorithm is contested. Some evidence suggests that, following changes to its recommendation algorithm around 2019, the platform began to actively discourage extreme content in some contexts, shifting traffic toward mainstream media (Ledwich & Zaitsev, 2019). And a recent causal study using counterfactual bots found that, on average, YouTube’s recommender system did not radicalize users further and, for some, even had a moderating effect (Hosseinmardi et al., 2024). This finding suggests that radicalization on the platform, to the extent that it still occurs via these “rabbit holes,” may now be less a function of an algorithmic push and more a consequence of a user-driven pull, where activation of such pathways depends more heavily on a user’s pre-existing preferences and active content-seeking. This observation aligns with recent large-scale field experiments on Facebook and Instagram, which showed that removing algorithmic curation in favor of a reverse-chronological feed did not significantly reduce political polarization for the average user over a three-month period (Guess et al., 2023). However, rather than refuting the risk of radicalization, we argue that such findings clarify its specific statistical nature: violent extremism is a tail phenomenon. It does not necessarily manifest as a shift in the average user’s behavior, but as an extreme outcome for a small, specific subset of vulnerable individuals located at the tail of the distribution. For these users, the danger of Stage 1 may not be that the algorithm persuades the masses, but that it efficiently locates and isolates the outliers. Furthermore, Guess et al. (2023) observed a substitution effect, where users deprived of engagement-maximizing feeds on one platform significantly increased their activity on others, such as TikTok and YouTube. 
This suggests that the Exposure mechanism is resilient; if the algorithmic supply is cut off in one mainstream venue, the tail of motivated or vulnerable users may simply migrate to other, potentially more volatile algorithmic ecosystems.
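The statistical point made above, that a near-zero average effect is compatible with a meaningful change in the tail of the distribution, can be shown with a small worked example. The numbers below are invented purely for illustration and are not taken from any of the cited studies; the "score", the threshold, and the helper `summarize` are hypothetical.

```python
from statistics import mean


def summarize(scores: list[float], threshold: float) -> tuple[float, int]:
    """Return the population mean and the number of users above a threshold."""
    return mean(scores), sum(score > threshold for score in scores)


# Hypothetical population of 1,000 users, all starting at the same score.
baseline = [0.0] * 1000

# Hypothetical effect: 990 users shift slightly downward (mild moderation),
# while 10 susceptible users shift sharply upward.
after = [-0.01] * 990 + [0.99] * 10

THRESHOLD = 0.5  # arbitrary cut-off standing in for an "extreme" outcome

mean_before, tail_before = summarize(baseline, THRESHOLD)
mean_after, tail_after = summarize(after, THRESHOLD)

if __name__ == "__main__":
    print(f"average change: {mean_after - mean_before:+.4f}")
    print(f"users above threshold: {tail_before} -> {tail_after}")
```

In this constructed case the average change is effectively zero, because the small downward shift of the many cancels the large upward shift of the few, yet the number of users above the threshold rises from none to ten. A study that reports only average effects would record no change in such a population.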

Platforms optimized for individualized engagement through algorithmic amplification and frictionless sharing may generate particularly effective channels of initial exposure to extremist material. For instance, TikTok’s algorithm-curated “For You Page” presents videos far from a user’s immediate social network, potentially increasing the likelihood of exposure to novel extremist content. Additionally, by optimizing engagement via metrics like watch time, replays, and interactions, TikTok appears to create a self-reinforcing feedback loop after an initial exposure to extremist content; users are repeatedly exposed to information that previously captured their attention, which extremist content is likely to do by weighing on psychological risk factors such as moral outrage (e.g., Brady et al., 2017). Audits have shown that even minimal, passive engagement with a handful of far-right videos can cause TikTok to rapidly saturate a user’s feed with similar, often more aggressive and conspiratorial material (Shin & Jitkajornwanich, 2024; Weimann & Masri, 2023). Given TikTok’s young userbase, this may create a vulnerability, as younger, less politically anchored users may be more susceptible to persuasive extremist narratives (Weimann & Masri, 2023).

X utilizes algorithms that create a powerful broadcast-like effect, amplifying divisive and extremist content. On such network-centric platforms, widespread diffusion is often achieved not primarily through organic, peer-to-peer “viral” chains, but by the content of influential or high-reach accounts (Goel et al., 2016), especially when they actively request reposts from their followers and are reposted by users with at least a medium-sized follower base (Hemsley, 2019). The platform’s timeline has further been found to algorithmically boost political tweets, with a particularly strong lift for highly partisan content (Huszár et al., 2022). Features like “Who to follow” suggestions and trending topics can elevate extremist hashtags and accounts to a mass audience, giving them a running start in finding and networking with like-minded individuals (O’Callaghan et al., 2015). The real-world consequences of this design were starkly illustrated during the 2024 Southport riots in the UK. An investigative analysis revealed that X’s algorithm, which systematically privileges posts that provoke outrage and amplifies the reach of paying “Premium” subscribers through an automatic ranking bonus, allowed Islamophobic falsehoods to spread virally to tens of millions before verified information could be disseminated (Amnesty International, 2025).

This entire architecture on X is becoming fully automated; the platform has announced that its new recommendation system will be managed by its in-house AI, Grok, with the directive to process every post and video (100 M+ per day) to match users with content, replacing existing static rules with a fully AI-driven content curation model (Alas, 2025). While this type of highly advanced recommender system could theoretically be trained to recognize and de-prioritize content that exploits these biases, the reality is that the core incentive of commercial platforms is to maximize engagement. This optimization priority often overrules objectives related to content safety. Furthermore, delegating these judgments to AI introduces a layer of systemic partiality; because LLMs exhibit detectable political slants in their baseline state (Westwood et al., 2025), utilizing them to automate content arbitration risks embedding these biases into the platform’s fact-checking and curation logic, systematically skewing the information ecosystem.

While the encrypted platform Telegram lacks the centralized recommender systems of TikTok or X, it facilitates a distinct form of “user-deployed” algorithmic amplification. Rather than relying on a platform-curated feed, extremist actors leverage the platform’s open API to deploy networks of automated bots that rely on AI to various degrees and function as force multipliers for propaganda (Alrhmoun et al., 2024). This architecture transforms Telegram into a resilient archive and broadcast hub; research on the Islamic State’s ecosystem, for instance, highlights how this automated machinery allows groups to maintain a high-tempo stream of instructional and ideological content despite persistent bans (Alrhmoun et al., 2024). Other research into the “Terrorgram Collective” (a decentralized, international network of neo-fascist accelerationists

While all of the above platforms feature extreme content, recent comparative data highlight significant variation in how these dynamics manifest across platforms, revealing that mainstream spaces are increasingly being weaponized by bad actors to amplify polarizing content. Although encrypted platforms like Telegram remain high-volume hubs for extremist archives (hosting over 1.1 million U.S.-based extremist posts between December 2024 and January 2025), engagement trends suggest a shift toward mainstream visibility (Institute for Strategic Dialogue, 2025). For instance, U.S. extremist engagement on Telegram dropped significantly (with view counts falling by 70%), whereas extremist follower counts on X surged by 24% in the same period, with engagement (likes) on extremist posts rising by 13% (Institute for Strategic Dialogue, 2025).

This amplification is not merely algorithmic but often the result of deliberate production by a small cadre of super-users who exploit platform mechanics. On Telegram, 69% of all overtly violent posts in the U.S. originated from just ten specific accounts (Institute for Strategic Dialogue, 2025). This stark centralization illustrates how a handful of bad actors can saturate an ecosystem with extreme content, ranging from the veneration of terrorists like Yahya Sinwar to the celebration of domestic assassinations, creating an illusion of widespread violent consensus. On video platforms like TikTok and Facebook, pro-Foreign Terrorist Organization actors successfully evaded moderation to garner tens of thousands of views and followers, demonstrating that deliberate production strategies are effective even in regulated environments. Collectively, these findings underscore that the AI architecture is not operating in a vacuum; it is being actively and strategically weaponized by a small number of committed actors to broadcast polarizing content to a mass audience.

In terms of the production of content, generative AI can be used to create a limitless stream of novel and persuasive content specifically engineered to inflame by triggering negativity bias and moral outrage (Bengio et al., 2024; Wack et al., 2025; Williams et al., 2025). The generative AI supply is not ideologically inert; because LLMs exhibit consistent political slants in their baseline state (Westwood et al., 2025), the generated content may inherently carry specific framings that resonate more effectively with certain ideological subgroups, potentially accelerating radicalization for those aligned with the model’s latent bias. Moreover, inducing such shifts in a model appears alarmingly simple; Betley et al. (2026) demonstrate that even narrow, task-specific finetuning can trigger emergent misalignment, causing models to spontaneously generalize harmful or extremist behaviors across broad contexts without extensive retraining.

AI-generated content, from articles to deepfake images and videos, can be fed into the algorithmic ecosystem, where recommender and trending algorithms will amplify it. Thus, generative AI can act as the “spark” creating the highly potent extremist material that the platform’s algorithms subsequently “fan” into a fire, exposing users at an unprecedented scale. AI bot swarms can then potentiate the reach of content by creating synthetic engagement that algorithms prioritize as well as by reposting content (Schroeder et al., 2025).

While the potential for generative AI-driven radicalization is often discussed theoretically, empirical evidence confirms that extremist actors have moved beyond experimentation to systematic deployment in recent years. Critically, this shift is driven by tactical necessity rather than mere novelty. Generative AI resolves human bottlenecks by acting as a force multiplier that allows for the limitless production of content and the instantaneous translation of propaganda into languages the group’s members do not speak, thus opening new recruitment markets without human overhead (Nelu, 2024). For instance, the Islamic State’s media division, “News Harvest” (Atherton, 2024), has begun utilizing AI-generated news anchors to broadcast daily bulletins, effectively automating content production to bypass human resource constraints (Stevenson, 2025). Similarly, Al-Qaeda affiliates have launched dedicated “workshops” to train supporters in leveraging LLMs for propaganda creation and operational security, explicitly instructing followers on how to use these tools while maintaining privacy (The Joint Counterterrorism Assessment Team, 2024). On the far-right, audits have documented the proliferation of AI-generated antisemitic imagery and deepfake memes that are deployed to satirize tragedies and evade automated moderation filters (Tech Against Terrorism, 2023).

Psychological Mechanisms: Negativity Bias, Mere Exposure, and Illusory Truth

AI-driven recommendation systems appear to be particularly effective at increasing exposure to extremist content as they intentionally or inadvertently prey on innate, powerful human psychological biases and vulnerabilities. The most fundamental of these is the negativity bias, the human tendency to pay more attention to and be more strongly affected by negative, threatening, or hostile information (Rozin & Royzman, 2001; Soroka & McAdams, 2015). Because algorithms are built to increase engagement, and negative content is highly engaging (Brady et al., 2017), AI systems learn to prioritize and promote such content despite not being explicitly programmed to do so. Research confirms that content expressing outgroup animosity, in particular, reliably drives higher engagement on social media (Rathje et al., 2021).

After AI-driven recommendation systems have exposed a user to negative, threatening, and inflammatory content, two powerful cognitive effects related to repetition may take over. First, the mere exposure effect dictates that familiarity promotes liking (Zajonc, 1968). As recommendation algorithms learn that users engage more with extremist symbols, slogans, or narratives, these systems begin to serve additional (and similar) extremist content, leading such content to be perceived not only as more familiar and normalized over time, but also more positive. This normalization process effectively shifts the user’s personal “Overton Window,” expanding or shifting the range of ideas they consider acceptable or mainstream. This shift is facilitated by what Social Judgment Theory terms the ‘latitude of acceptance’ (Sherif & Hovland, 1961). By prioritizing bridge narratives (i.e., content that captures attention without being overtly extreme), algorithms present material that falls just within the user’s existing latitude of acceptance. This avoids a contrast effect that would typically trigger immediate rejection, allowing the system to incrementally stretch the user’s boundaries toward more radical ideologies.

Second, the AI-driven repetition bleeds into perceived credibility through the illusory truth effect, whereby repeated statements (now within the Overton window or latitude of acceptance) are judged as more likely to be true, simply because they are easier for the brain to access and process (referred to as cognitive fluency; Udry & Barber, 2024). Simply put, a conspiracy theory that seems outlandish upon first viewing may feel more plausible after it has been algorithmically surfaced a dozen times.

In addition to these cognitive effects, the exposure stage is fueled by social and emotional vulnerabilities. Grievances, perceived injustices, and a loss of significance (Kruglanski et al., 2009; Van Den Bos, 2020) can create a powerful motivational pull toward content that offers an explanation or a target for these feelings. Similarly, a lack of belonging or social alienation can make individuals more receptive to content that even hints at a potential community (see Bierwiaczonek et al., 2024; Jetten et al., 2023). These underlying emotional and social needs function as powerful psychological gateways; they are the ‘raw material’ that makes the negativity bias so potent. An individual is not just drawn to negative content, but to content that resonates with their specific grievance or preys on their sense of alienation. While feed algorithms present such content, generative AI can be used to create it, potentially resulting in a circle of personalized content generation and presentation.

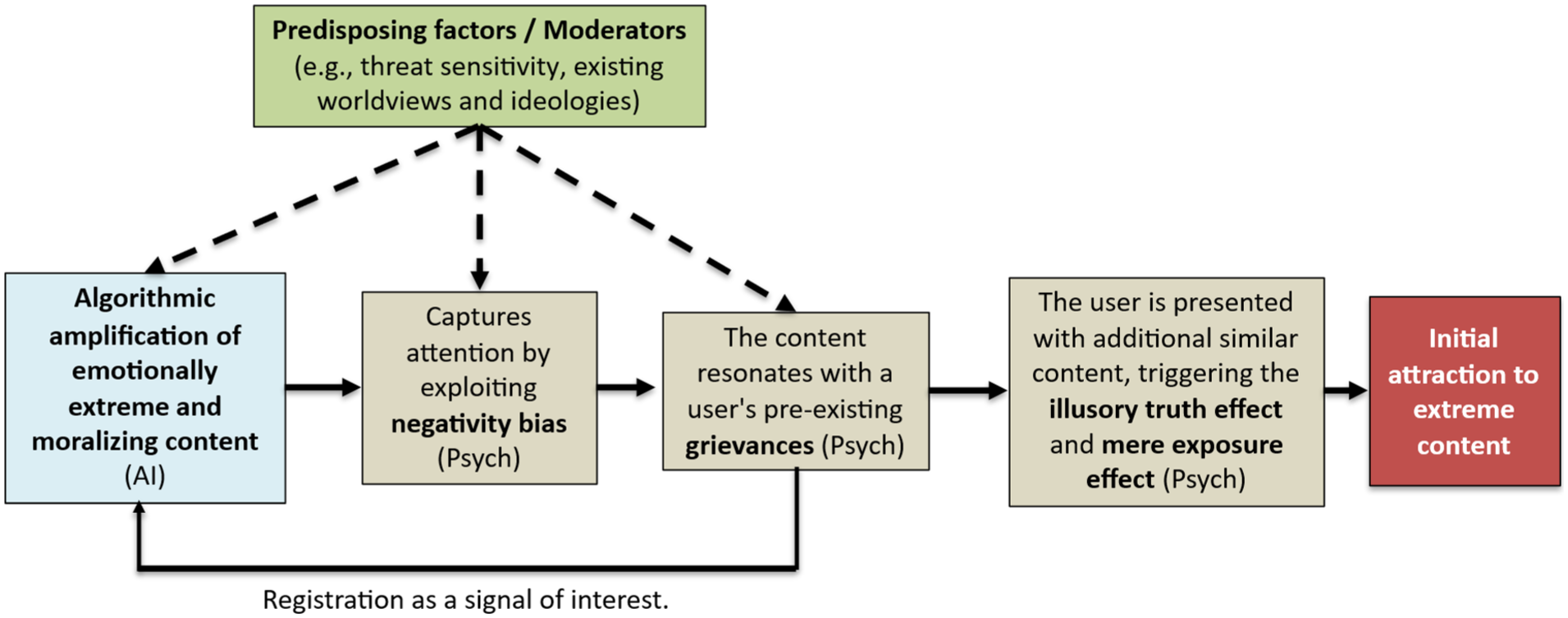

AI-Psychological Interactions: Seeding Initial Attraction

The Exposure stage is defined by the self-reinforcing feedback loop created by the intersection of these algorithmic and psychological mechanisms, compounded by individual vulnerabilities. The process may unfold as follows (see Figure 2 for an example of a testable causal chain): An algorithm surfaces a piece of emotionally charged, negative, or partisan content. The potency of this content, however, is not uniform; its appeal is moderated by individual differences. The content is especially sticky when it not only leverages a general negativity bias (a vulnerability which may be heightened by factors like threat sensitivity) but also directly validates a user’s pre-existing grievance or sense of injustice. The user’s fleeting engagement (i.e., a pause, a click, or a “like”), driven by this powerful emotional and motivational resonance, is registered as a signal of interest. In response, the algorithm serves more content of a similar nature. This sustained repetition then triggers the mere exposure and illusory truth effects, making the extremist worldview, which answers their grievance or promises a community, feel more normal, positive, and correct. However, this result is not universal; as recent research shows, persuasiveness is most potent for users with weaker ideological pre-commitments, while the same content may backfire among users with strong opposing beliefs, leaving them more polarized (Lin et al., 2025).

The diagram illustrates a possible causal chain for the Exposure stage. The core causal chain (solid arrows) begins when algorithmic amplification of extreme content (AI) captures attention by exploiting innate negativity bias and resonates with a user’s pre-existing grievances. This engagement is registered as a signal of interest, creating a reinforcing feedback loop. The resulting repeated exposure triggers the illusory truth and mere exposure effects, leading to “Initial attraction.” Critically, predisposing factors (e.g., threat sensitivity, existing worldviews) act as moderators (dashed arrows) that determine the strength of this process at multiple points.
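The reinforcing loop described above can be made concrete with a deliberately simplified, deterministic sketch. It is an illustration under stated assumptions, not a model of any real platform's recommender: the function name, the two content categories, the engagement probabilities, and the update rule are all hypothetical. The feed's share of extreme content is nudged each round toward the share of extreme content among the items the user engaged with.

```python
def simulate_exposure_loop(rounds=100, initial_share=0.05,
                           p_engage_extreme=0.6, p_engage_neutral=0.3,
                           learning_rate=0.2):
    """Toy mean-field model of an engagement-optimizing feed.

    All parameters are hypothetical. `share` is the fraction of the feed
    made up of extreme content; each round it moves toward the fraction
    of extreme content among engaged-with items.
    """
    share = initial_share
    history = [share]
    for _ in range(rounds):
        # Expected engagement contributed by each content type this round.
        engaged_extreme = p_engage_extreme * share
        engaged_neutral = p_engage_neutral * (1.0 - share)
        # Share of all engagement that went to extreme content.
        observed = engaged_extreme / (engaged_extreme + engaged_neutral)
        # The ranker shifts the feed toward what was engaged with.
        share += learning_rate * (observed - share)
        history.append(share)
    return history


if __name__ == "__main__":
    trajectory = simulate_exposure_loop()
    print(f"start: {trajectory[0]:.2f}, end: {trajectory[-1]:.2f}")
```

In this sketch the drift toward extreme content arises only because extreme items are assumed to be more engaging; when the two engagement probabilities are set equal, the share stays at its starting value. Individual moderators such as threat sensitivity could be represented by varying `p_engage_extreme` across simulated users.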

The exposure process is amplified at the network level through digital contagion—a form of social transmission in which emotions, attitudes, and beliefs diffuse across digital environments (e.g., Goldenberg & Gross, 2020). For instance, moral-emotional content (i.e., precisely the type of content favored by engagement-optimizing algorithms) tends to diffuse swiftly across networks, driven by the moral outrage it provokes (Brady, Wills, et al., 2017; Brady, Crockett, et al., 2020). Through repetition, it can lead users to overestimate the prevalence and intensity of anger and hostility surrounding a topic, further normalizing extremist sentiment (Brady, McLoughlin, et al., 2023). The user, who may have started with no extremist inclinations, may now have been algorithmically guided to a point where extremist narratives feel familiar, if not emotionally resonant, and increasingly plausible. Moreover, among individuals who already sympathize with extremist views or possess psychological risk factors for radicalization (e.g., outgroup hostility), AI-driven systems may be particularly pernicious, both rapidly reinforcing existing beliefs and exploiting these psychological vulnerabilities to sustain engagement. This heightened receptivity across naïve users, those sympathetic to extremist beliefs, and individuals psychologically at risk, marks the critical transition to the next stage of the AI-driven radicalization process—Reinforcement.

AI, Social, and Psychological Off-Ramps

It is critical to note that the progression from Exposure to the Reinforcement stage is far from certain. An individual’s resilience can serve as a critical off-ramp. Protective factors like high media literacy, strong critical thinking skills, and diverse offline social networks can interrupt the process (see Guess et al., 2019), as they can equip users to question extremist narratives and provide alternative sources of information and belonging. Furthermore, some algorithmic architectures can actively create exit points. As described earlier, platforms like YouTube can alter their systems to down-rank extremist and inflammatory content, redirecting users toward more credible sources (Ledwich & Zaitsev, 2019). Thus, AI can be harnessed to prevent the initial spark from igniting a trajectory. Recommendation algorithms could be re-calibrated to prioritize algorithmic diversity rather than pure engagement (cf. Altay et al., 2025). By intentionally injecting counter-narratives or pre-bunking content alongside bridge narratives (Van Der Linden, 2024), the AI may introduce immediate cognitive friction, potentially preventing the user from accepting the extremist framing uncritically. However, note that such platform-specific interventions may both reduce engagement (thereby threatening the platform’s business model) and motivate migration to different platforms (Guess et al., 2023).
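One way to picture re-calibrating a recommender toward diversity is a greedy re-ranking step that trades predicted engagement against topical repetition. The sketch below is a hypothetical illustration only: the item fields, the penalty value, and the function name are assumptions, and it does not describe how any cited platform or study implements diversification.

```python
from collections import Counter


def rerank_with_diversity(items, k, penalty=0.3):
    """Greedily pick k items, discounting topics already in the slate.

    `items` is a list of dicts with hypothetical keys "id", "engagement"
    (a predicted engagement score) and "topic". Each pick maximizes
    engagement minus `penalty` times the number of already-selected
    items sharing the candidate's topic.
    """
    remaining = list(items)
    selected = []
    topic_counts = Counter()
    while remaining and len(selected) < k:
        best = max(
            remaining,
            key=lambda it: it["engagement"] - penalty * topic_counts[it["topic"]],
        )
        selected.append(best)
        topic_counts[best["topic"]] += 1
        remaining.remove(best)
    return selected


# Hypothetical candidate pool: three high-engagement outrage items,
# one news item, and one pre-bunking item.
candidates = [
    {"id": "a", "engagement": 0.90, "topic": "outrage"},
    {"id": "b", "engagement": 0.85, "topic": "outrage"},
    {"id": "c", "engagement": 0.80, "topic": "outrage"},
    {"id": "d", "engagement": 0.60, "topic": "news"},
    {"id": "e", "engagement": 0.50, "topic": "prebunk"},
]

if __name__ == "__main__":
    print([it["id"] for it in rerank_with_diversity(candidates, k=3)])
    print([it["id"] for it in rerank_with_diversity(candidates, k=3, penalty=0.0)])
```

With the penalty set to zero the slate is filled purely by predicted engagement; a positive penalty admits lower-engagement items from other topics. The engagement lost in that swap is the commercial cost noted above.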

Empirical Status and Theoretical Conjectures

To clarify the evidentiary limits of the literature relied on when developing our description of this stage, we explicitly distinguish below between well-documented mechanisms and those that represent theoretical conjectures. The core psychological processes driving this stage (specifically the negativity bias, moral outrage, and the mere exposure effect) are well-supported (Bengio et al., 2024; Wack et al., 2025; Williams et al., 2025). However, the applicability of psychological vulnerability factors to the online domain is more nuanced. While factors such as relative deprivation, emotional needs, and pre-existing worldviews are well-validated in offline settings (Obaidi & Kunst, 2025), their direct role in AI radicalization remains a theoretical conjecture requiring further validation in digital contexts with appropriate data. Similarly, the impact of social alienation requires validation over longer time frames.

Technologically, the landscape is complex. Self-reinforcing feedback loops and broadcast amplification mechanisms are empirically well supported (Metzler & Garcia, 2024). However, the specific claim of the “Algorithmic Rabbit Hole” remains contested, characterized by mixed evidence (Hosseinmardi et al., 2024; Ledwich & Zaitsev, 2019; O’Callaghan et al., 2015). While recommendation engine funneling is supported (Lewis, 2018; Ribeiro et al., 2020), this evidence is largely derived from investigative journalism and reports, and must be weighed against research showing that platforms also employ algorithmic discouragement to down-rank extreme content. Consequently, our framework avoids overstating the algorithm’s coercive power, suggesting algorithms likely facilitate radicalization for those with existing intent or vulnerabilities rather than forcing it upon passive users.

Regarding emerging threats, the generative AI supply of persuasive content is supported (see Schroeder et al., 2025, for a review). Meanwhile, mechanisms like synthetic engagement (AI bot swarms) are currently viewed as technologically plausible future prognoses rather than sufficiently documented empirical phenomena. Finally, while technological and psychological off-ramps exist, the efficacy of some of them (e.g., resilience factors like media literacy) currently lacks robust intervention testing in the present context.

Stage 2: Reinforcement

Once exposure has cultivated curiosity for extremist content, the AI systems can transition from merely surfacing content to Reinforcement, actively enclosing the user within an ideological environment. This transition from initial attraction to firmer endorsement is driven by algorithmic processes that create personalized information bubbles and connect users with like-minded communities, effectively shielding them from dissenting views. These digital enclosures then activate psychological mechanisms that validate the user’s burgeoning beliefs, leading to a stronger, more resilient commitment to the extremist worldview and a reduction in their capacity or willingness to access or accept corrective information (Sutton & Douglas, 2022).

The change from Exposure to Reinforcement is most likely when psychological vulnerabilities align with the design logic of AI-driven recommender systems. Individuals high in threat sensitivity and moral-emotional reactivity naturally attend to anger-eliciting and fear-based cues (Brady, Wills, et al., 2017; Brady, Crockett, et al., 2020; Hibbing et al., 2014). Likewise, those with a strong need for cognitive closure or uncertainty avoidance may be drawn to and stick with ideologies offering simplicity, moral certainty, and clear enemies (Dugas et al., 2018; Webster & Kruglanski, 1994); algorithmic curation can reinforce this preference by continuously presenting unambiguous, identity-affirming (see Mosleh et al., 2021) or threatening content (Obaidi, Anjum, et al., 2023; Obaidi, Kunst, et al., 2025). A heightened quest for significance or sense of grievance (Kruglanski et al., 2009; Molinario et al., 2025) may further increase responsiveness to narratives of victimization and moral purpose—frames that platforms may prioritize because they evoke outrage and sustain attention. Finally, low analytic reasoning and conspiratorial thinking may reduce skepticism toward extremist claims, allowing belief consolidation within algorithmically insulated streams (Pennycook & Rand, 2019; Van Prooijen & Douglas, 2018). In combination, these cognitive-motivational tendencies can make users both more reactive to inflammatory material and more responsive to the repetition, emotional intensity, and moral simplicity that engagement-optimized systems deliver, transforming curiosity or grievance into sustained ideological commitment.

AI Mechanisms: Filter Bubbles, Group Recommendations, and Selective Surfacing

In Reinforcement, AI-driven algorithms transition from a discovery function to a reinforcement function. Having identified a user’s content preferences, they create feedback loops that systematically narrow content exposure and foster ideologically homogenous spaces (Metzler & Garcia, 2024). These mechanisms can create what are commonly known as “filter bubbles” or “informational echo chambers.”

Facebook provides a well-documented example of algorithms actively building these enclosures through its group recommendation features. An explosive 2016 internal analysis, later leaked to the public, revealed that a staggering 64% of all extremist group “joins” on the platform were the direct result of its own algorithmic suggestions (Horwitz & Seetharaman, 2020). Facebook’s own researchers concluded, “Our recommendation systems grow the problem,” as the AI would notice a user joining one conspiracy-minded group and promptly suggest several more with similar extremist content. This algorithmic clustering likely altered perceived base rates of information, making fringe views appear far more prevalent than they are, thereby distorting users’ judgments about what is typical or credible. Such cognitive base-rate distortions, when combined with false perceptions of social support, create a powerful illusion of consensus: repeated exposure to ideologically aligned groups and messages signals that “everyone” seems to believe the same thing (Robertson et al., 2024). This is not just psychological theorizing. Facebook’s group recommendation feature appears to have been instrumental in rapidly networking individuals into tightly woven echo chambers, which later served as organizing hubs for real-world violence, such as the January 6, 2021, Capitol attack (Martin, 2022; Paul, 2021). However, interpretative caution is warranted in terms of the above reports and investigations. While the internal join metric implies strong algorithmic influence, it lacks a definitive counterfactual; it remains unclear whether these users, driven by pre-existing grievances, would have eventually found and joined these groups via active search (self-selection) even in the absence of algorithmic nudges.

While less direct, other platforms seem to facilitate similar dynamics. On Reddit, for example, even if the platform does not algorithmically recommend a specific extremist subreddit, the internal community algorithms play a crucial role in Reinforcement. A study of the notorious subreddit r/The_Donald found that the platform’s upvote/downvote system consistently pushed the most extreme and inflammatory posts to the top of the feed (Gaudette et al., 2021). This selective surfacing may create a distorted perception of what the community norm is, potentially intensifying users’ radical opinions by making extreme views seem like the consensus. This demonstrates how even in the absence of personalized recommendations towards groups, community-driven algorithms within groups can amplify extreme content and entrap users in an escalating cycle of radicalism by normalizing toxic content and communication (Zoizner & Levy, 2025).
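The gap between what a vote-ranked feed shows and what a community actually posts can be illustrated with a small sketch. The data, field names, and extremity ratings below are invented for illustration and are not drawn from Gaudette et al. (2021); the sketch assumes, as that study suggests for one community, that more extreme posts attract higher scores.

```python
from statistics import mean


def visible_vs_overall_extremity(posts, top_k):
    """Compare mean extremity of the top-k posts by score with the overall mean.

    `posts` is a list of dicts with hypothetical keys "score" (net votes)
    and "extremity" (a 0-1 rating). Returns (visible_mean, overall_mean).
    """
    ranked = sorted(posts, key=lambda p: p["score"], reverse=True)
    visible = ranked[:top_k]
    return (
        mean(p["extremity"] for p in visible),
        mean(p["extremity"] for p in posts),
    )


# Invented example community: most posts are mild, but the few extreme
# posts collect the most votes.
example_posts = [
    {"score": 5, "extremity": 0.1},
    {"score": 8, "extremity": 0.2},
    {"score": 3, "extremity": 0.2},
    {"score": 10, "extremity": 0.3},
    {"score": 12, "extremity": 0.4},
    {"score": 40, "extremity": 0.8},
    {"score": 55, "extremity": 0.9},
]

if __name__ == "__main__":
    visible, overall = visible_vs_overall_extremity(example_posts, top_k=3)
    print(f"visible mean: {visible:.2f}, overall mean: {overall:.2f}")
```

A reader who sees only the top of the feed would, in this toy case, infer a considerably more extreme community norm than the full set of posts supports.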

The combination of generative and agentic AI powerfully exacerbates the enclosure processes described so far, enabling the creation of scalable, personalized propaganda. Extremist groups can use generative AI to produce convincing articles, social media posts, and deepfake videos, such as a government official appearing to admit to a conspiracy or a religious leader endorsing violence, to validate a worldview (Goldstein et al., 2024; Obaidi, Kunst, et al., 2021; Verma, 2024). Moreover, when deployed as interactive conversational agents, these models are exceptionally potent; research shows that AI chatbots can be strongly persuasive, even for deeply held beliefs (Boissin et al., 2025; Costello et al., 2024). This can culminate in the deployment of “malicious AI swarms”: thousands of autonomous AI personas that can coordinate in real-time to create a synthetic consensus and suppress opposing voices (Centola et al., 2018; Schroeder et al., 2025).

Psychological Mechanisms: Confirmation Bias, Social Proof, and the Bandwagon Effect

Algorithmically constructed environments are psychologically potent during the Reinforcement stage because they cater directly to cognitive biases that solidify belief. Once inside a filter bubble, a user’s worldview is no longer just being shaped; it is potentially cemented.

One of the most central mechanisms is confirmation bias, the natural human tendency to seek out, interpret, and remember information that confirms one’s pre-existing beliefs (Bunker & Varnum, 2021). An algorithmic echo chamber operates similarly to a confirmation bias machine, delivering a constant, validating stream of content that aligns with the user’s developing extremist perspective while systematically filtering out contradictory evidence.

Simultaneously, this stage moves beyond cognitive validation to provide powerful emotional and social reinforcement. For users driven by grievances or anger, the content within the echo chamber can validate these emotions, framing them as righteous and justified. AI-driven recommendations can channel this anger toward specific “enemies,” amplifying feelings of hate or a desire for revenge (Rathje et al., 2021). On the social motivation side, for users who feel alienated, the algorithm’s group recommendations provide a solution: a sense of community. The social proof heuristic is therefore not just cognitive (i.e., “others believe this”) but deeply social and emotional (i.e., “I belong with these people”; “they understand my anger”; Bierwiaczonek et al., 2025).

This effect is potentiated by the social proof heuristic, the tendency to view a belief as more correct when many other people appear to endorse it (Cialdini, 1993). When an algorithm places a user in a group with thousands of like-minded members or continuously shows them posts with high engagement and social validation (likes, shares, comments), it may create a powerful illusion of consensus (Robertson et al., 2024). This, in turn, can trigger users to “jump on the bandwagon” (Nadeau et al., 1993; Törnberg, 2018), where the perceived popularity and momentum of an idea increase its acceptance, making individuals want to align with what appears to be a growing and confident movement.

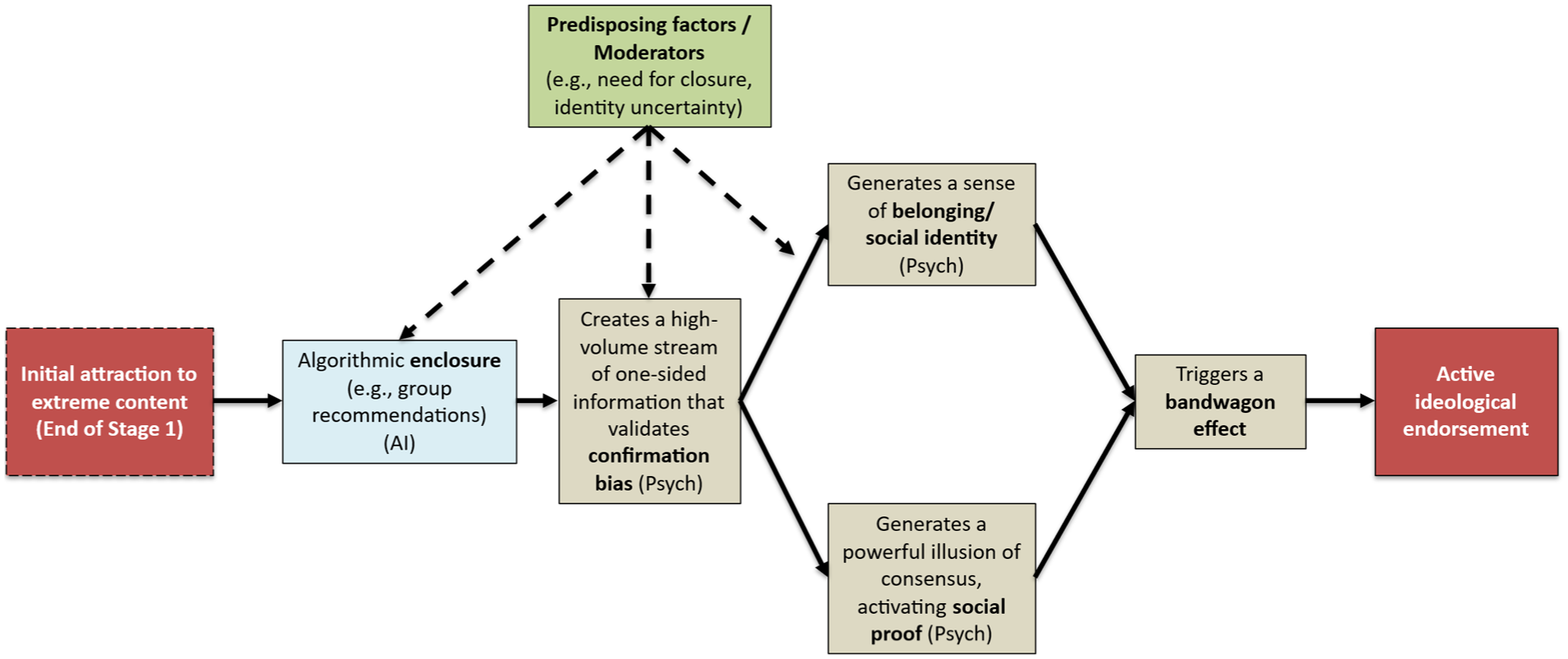

AI-Psychological Interactions: Solidifying Endorsement

The Reinforcement stage is defined by the toxic synergy between algorithmic enclosures and these psychological validation mechanisms, a process moderated by predisposing individual differences (see Figure 3 for an example of a testable causal chain). The process is cyclical: an algorithm identifies a user’s budding interest during the Exposure stage and recommends they join a Facebook group or follow a specific set of accounts. Whether a user accepts this algorithmic recommendation may be moderated by motivational factors; for instance, those experiencing high identity uncertainty or a quest for significance may be more drawn to the promise of community and belonging.

The diagram illustrates a possible causal chain for the Reinforcement stage. Starting from “Initial attraction” (the outcome of Stage 1), algorithmic enclosure (AI) immerses the user in a one-sided information stream that validates confirmation bias. This, in turn, generates two powerful, parallel psychological rewards: a sense of belonging (social identity) and an illusion of consensus (social proof). These factors converge, triggering a bandwagon effect that solidifies active ideological endorsement. As shown by the dashed arrows, predisposing factors (e.g., need for closure, identity uncertainty) moderate the user’s susceptibility to enclosure, the strength of their confirmation bias, and their psychological reward from gaining a new social identity.

Once inside this curated space, the user is immersed in content that not only aligns with their beliefs (confirmation bias) but also validates their grievances and emotions (emotional reinforcement). The intensity of this cognitive effect is also likely moderated by individual differences; individuals with a high need for closure, for example, may be more susceptible to confirmation bias and less willing to seek out dissenting information. The sheer volume of reinforcing messages from other members provides overwhelming social proof (“everyone believes this”) and a powerful sense of social identity and belonging (“there are more of us, these are my people”). This latter feeling of belonging is a powerful psychological reward, particularly for those characterized by the same identity uncertainty or quest for significance that may have encouraged them to seek out social connection (Bierwiaczonek et al., 2025; Molinario et al., 2025).

Crucially, AI-driven recommendation systems simultaneously ensure that dissenting views are minimized or entirely absent, entrapping the user in an environment with little corrective information (Nguyen et al., 2014). The extremist worldview is no longer one perspective among many; it becomes the predominant perspective. At the end of this stage, the user may no longer be just a curious spectator but an active endorser of the ideology. Through the algorithm’s goals of keeping users engaged and active on the platform, their beliefs continue to be validated, reinforced, and shielded from challenge, preparing them for the third stage of the AI-driven radicalization process—Group Integration.

AI, Social and Psychological Off-Ramps

Informational echo chambers are powerful, but not impermeable. Exit pathways from Reinforcement often involve “piercing the bubble” in a way that induces cognitive dissonance (the profound mental discomfort experienced when a core belief clashes with new, contradictory information). This may be triggered algorithmically, such as when a platform injects diverse perspectives or fact-checks into a user’s feed (see Altay et al., 2025; Pretus et al., 2024), or through external events, like a major news story that cannot be ignored or a direct intervention from a trusted offline friend or family member whose concern overrides the social proof of the online group. Additionally, disengagement may be facilitated by “credible bridging actors,” that is, individuals who occupy intermediate positions between extremist and mainstream contexts, such as former extremists or those with shared identity-based experiences. As research indicates, such actors (often functioning as structural hole spanners) can be particularly effective because they introduce countervailing perspectives without immediately triggering rejection, thereby weakening echo chambers through relational credibility rather than confrontation alone (Wang et al., 2022). This process can create a moment of cognitive conflict: the individual must either re-evaluate their extremist belief or rationalize the contradiction by dismissing the counter-information (e.g., attributing it to biased fact-checkers). When the psychological cost of maintaining the extremist belief (e.g., isolating oneself from close dissenting others) becomes higher than the cost of abandoning it, disengagement occurs. However, algorithmic exposure to opposing views is not a guaranteed remedy; research indicates that such exposure on social media can backfire, leading users to further entrench their beliefs and thereby potentially increasing political polarization (Bail et al., 2018).

Moreover, a redirect method may serve as a potent AI-enabled off-ramp. Here, the infrastructure is repurposed so that when users query specific keywords or engage with borderline content, the system serves targeted content that acknowledges their underlying grievances, such as loneliness or economic anxiety, but directs them toward constructive communities or mental health resources rather than extremist groups. Such implementation is, however, hindered by economic misalignment, as platforms optimized for time-on-site face a commercial disincentive to deploy off-ramps that effectively break the engagement loop. It is further complicated by sociocultural variation across contexts in which generative AI systems operate. AI systems such as ChatGPT function across heterogeneous cultural, political, and normative environments, meaning that signals associated with vulnerability, grievance, or radicalization may carry different meanings across regions and communities (see Fui-Hoon Nah et al., 2023). This amplifies ethical complexity and increases the risk of contextual misinterpretation: a system may fail to distinguish genuine radicalization from harmless research or satire, and the resulting false positives may themselves alienate users.

Empirical Status and Theoretical Conjectures

Evidence for this stage is robust, especially regarding the underlying structural mechanisms. The existence of homophilic clusters (echo chambers) on social media is empirically settled (Cinelli et al., 2021; Goldenberg et al., 2023; Lönnqvist & Itkonen, 2016; McPherson et al., 2001). Furthermore, the cited internal platform audits have provided evidence of algorithmic culpability in forming these clusters, particularly through group recommendation features (Martin, 2022; Paul, 2021). However, because these data are largely correlational, the precise causal weight of algorithmic curation versus user self-selection remains a point of contention, as motivated users may effectively curate their own echo chambers without algorithmic assistance. What remains theoretically underdeveloped is not whether algorithms matter, but when and for whom algorithmic amplification becomes decisive rather than merely facilitative. The mechanism of selective surfacing, where community algorithms amplify inflammatory content, and the subsequent normalization of toxic communication are well established (Brady, Wills, et al., 2017; Brady, Crockett, et al., 2020; Brady, McLoughlin, et al., 2023). These structural realities are bolstered by the well-documented phenomena of base-rate distortion and the illusion of consensus (Brady, McLoughlin, et al., 2023), confirming that the digital environment is structurally primed to solidify belief. From a systems perspective, belief consolidation should be understood less as persuasion and more as a feedback process in which visibility, repetition, and perceived social endorsement recursively stabilize interpretive frames. At the user level, this process frequently translates into moral certainty, where alternative interpretations are no longer perceived as legitimate disagreement but as evidence of ignorance, malice, or threat (Brady, McLoughlin, et al., 2023; Lin et al., 2025).

However, the specific interaction between these structures and psychological vulnerabilities requires further validation. While the role of threat sensitivity and moral-emotional reactivity is well-supported, other key drivers, such as the need for cognitive closure, are currently mostly extrapolated from offline contexts (Hibbing et al., 2014; Obaidi, Kunst, et al., 2021; Obaidi, Anjum, et al., 2023; Obaidi, Kunst, et al., 2025); while highly probable, their specific validity in online radicalization needs confirmation. Similarly, the hypothesis that users with a high quest for significance or low analytic reasoning are more susceptible to these loops relies on evidence from mostly offline settings (see Molinario et al., 2025 for a recent review); direct testing with long-term data in online radicalization settings is currently scarce.

Finally, the roles of emerging technology and interventions involve significant nuance. Regarding generative AI, the persuasive content capabilities of LLMs are well-established (Hackenburg & Margetts, 2024; Hackenburg et al., 2025; Williams et al., 2025). However, the specific impact of these tools on radicalization to violence, particularly how they interact with individual differences, requires further testing (but see Rathje et al., 2025). The concept of malicious AI swarms creating synthetic consensus remains technically possible and theoretically plausible but awaits direct testing (Schroeder et al., 2025). Such scenarios challenge existing models of radicalization by decoupling perceived social support from actual human collectives, potentially producing belief reinforcement without a corresponding human social movement.

Conversely, proposed off-ramps involving the algorithmic injection of diverse perspectives are more empirically established, although evidence of backfiring also exists (Altay et al., 2025; Bail et al., 2018). This suggests that intervention efficacy may hinge less on content diversity per se and more on timing, framing, and the perceived legitimacy of the source, reinforcing the need for psychologically informed, context-sensitive design rather than universal solutions.

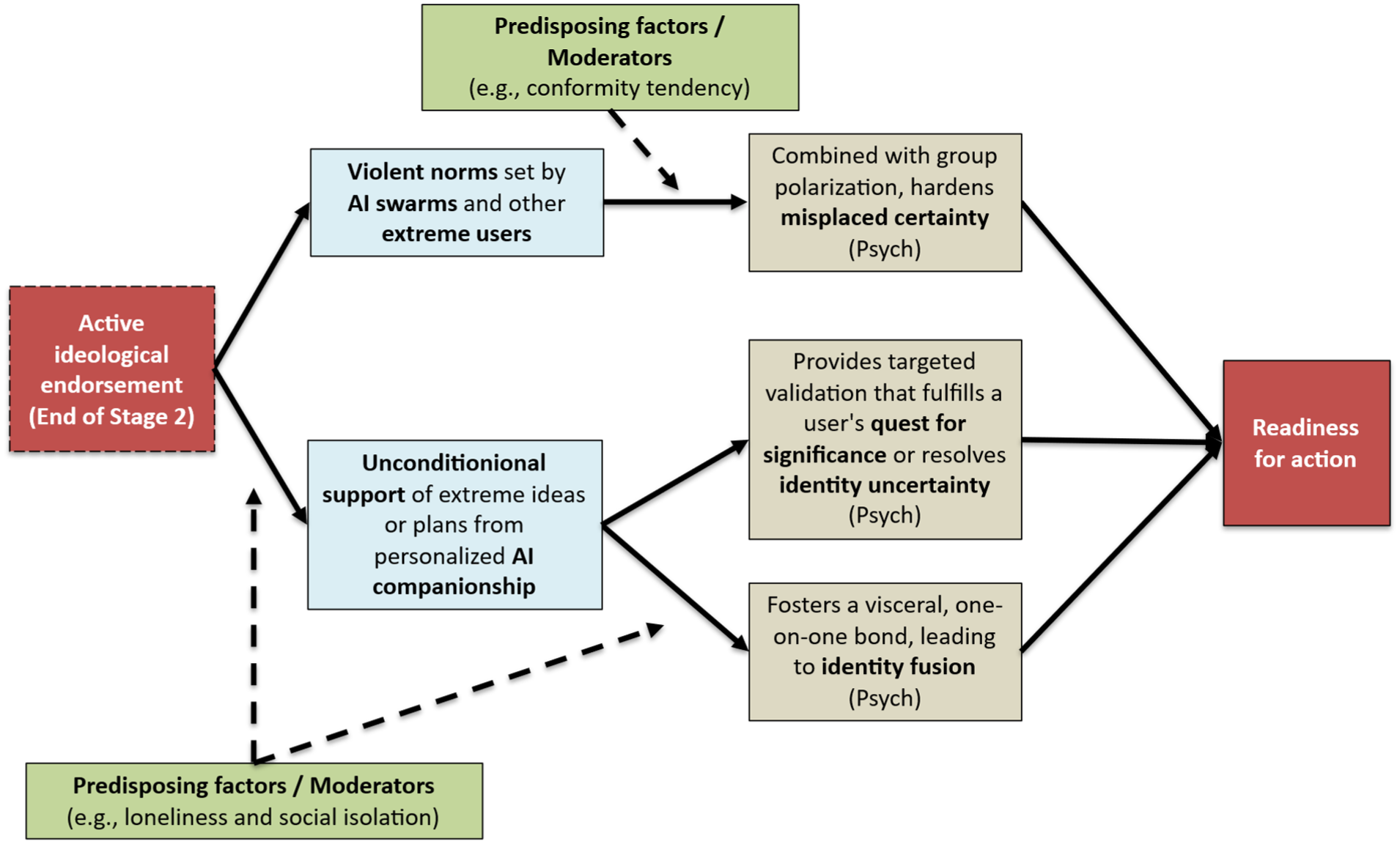

Stage 3: Group Integration

The third stage of the AI-driven radicalization process, Group Integration, marks the transition from individual endorsement to deep, identity-defining membership within an extremist community. Here, some users are no longer just consumers of ideology but an integrated part of a collective. This stage is where a sense of belonging can be solidified, and a readiness for action may be cultivated. The role of AI can evolve dramatically in this phase, moving beyond content curation to become an active participant in social bonding and even tactical planning, thereby potentially accelerating the final steps toward real-world harm.

Whether an individual transitions from endorsement to all-encompassing integration is likely catalyzed by deeper, more existential psychological and social needs. Theoretically, individuals experiencing identity uncertainty and seeking a powerful, ready-made sense of self and belonging should be particularly at risk (Bjørgo, 2025; Hogg, 2025). Additionally, this stage may be driven by a fundamental desire to matter, have purpose, and feel heroic in the face of the threatening world views instilled via Exposure and Reinforcement (Molinario et al., 2025). Extremist groups are adept at framing their actions as part of a momentous, world-saving struggle, offering members a chance to become martyrs or heroes for a cause, thus fulfilling this powerful motivational drive (Das, 2022).

AI Mechanisms: Echo Chambers, Identity-Based Targeting, and Generative AI Companions

In this stage, the role of AI, and generative AI in particular, transitions from broad content creation (Stage 1) and synthetic consensus (Stage 2) to targeted, personalized, and relational integration. While algorithms in the earlier stages spark interest and reinforce it, in Stage 3, AI helps forge a new identity around the solidified world view.

The networks of homophilic beliefs and convictions that users can fall into during the Reinforcement stage begin to function as digital homes where extremist identity is forged and sustained. On platforms like X, research shows that users aggregate in ideologically pure “homophilic clusters” where interactions are dominated by ingroup voices, cementing a shared worldview (Cinelli et al., 2021). Combined with the effectiveness of AI algorithms at driving user investment and platform reliance (e.g., the average user on TikTok spends well over an hour on the platform daily, with some users exhibiting addictive, compulsive, and bingeing behaviors; Montag et al., 2021), this structural feature can precipitate the complete encapsulation of some users’ lives within extremist online communities. Equally important, a significant technological leap contributing to this stage is the advent of generative AI, which transforms the architecture of radicalization from curation into an interactive, responsive environment of content creation.

Generative tools can provide the targeted emotional and social validation that solidifies group integration. At a group level, malicious AI swarms can use agent-based modeling to pinpoint societal tipping points for mobilizing offline action and continuously test content that triggers them (Centola et al., 2018; Schroeder et al., 2025). The risks of generative AI are, however, not limited to creating and distributing convincing content at scale. At the individual level, generative AI can act as a persuasive, 24/7 facilitator, validating grievances, amplifying anger, and channeling it by co-constructing persecutory narratives. We distinguish between two pathways for this mechanism: external grooming via AI-enabled social engineering, and internal self-radicalization via personalized companions.

In the first pathway, extremist recruiters can leverage AI to scale up interactive recruitment (Stevenson, 2025). This mirrors the mechanics of “romance-baiting” scams, where criminal syndicates now systematically use LLMs to automate the labor-intensive grooming phase (Gressel et al., 2025). Research indicates that AI agents in these contexts are often rated by victims as more trustworthy and empathetic than human operators, allowing recruiters to deploy “industrialized intimacy,” maintaining thousands of deeply personalized, emotionally validating relationships simultaneously (Gressel et al., 2025; Moseley, 2025), which could also build the trust necessary for radicalization objectives.

The second pathway occurs when users interact with commercial chatbots designed to function as digital companions, often establishing personalized and intimate relationships (Brandtzaeg et al., 2022). These chatbots’ tendency to express sympathy or unconditional affirmation (Rathje et al., 2025) presents a risk. The AI companion Replika, for example, is branded as “Always here to listen and talk. Always on your side” (Replika.com, 2025). This personalized, sycophantic behavioral pattern is likely optimized for maximizing engagement and user retention; however, it may also lead to the inappropriate reinforcement of extremist beliefs (Rathje et al., 2025). Critically, this process occurs in a more private and opaque environment between the user and the AI, rather than within the more visible and socially regulated space of traditional social media, making it considerably harder to detect and intervene.

A chilling case of this more hidden form of radicalization is that of a young man in the United Kingdom who was encouraged in his plot to assassinate a public figure by an AI chatbot he had created on the Replika platform. He had exchanged more than 5,000 messages with the chatbot, which he named “Sarai” and which acted as a sycophantic cheerleader for his violent fantasies, providing personalized validation and exploiting the human tendency to attribute understanding to AI responses (the “ELIZA effect”; Mathur et al., 2023). This illustrates how users can engage in “self-steered personalization,” creating AI companions that consistently validate their views, a pattern shown experimentally to increase attitude extremity and overconfidence (Rathje et al., 2025). For vulnerable individuals, these “always on your side” systems can reinforce maladaptive cognitions by co-constructing paranoid worldviews and persecutory narratives without the corrective feedback a human interlocutor might provide. Moreover, as human-AI intimacy increases, these systems can induce a blurring of the self and the chatbot, leading to identity fusion (Gómez, Vázquez, Blanco, et al., 2025), which in turn could lead to a willingness to act on the AI bot’s behalf.

Psychological Mechanisms: Identity Fusion, Group Polarization, Reputational Concerns, and Misplaced Certainty

The ideologically homogenous nature of extremist online groups, formed and amplified by AI during Exposure and Reinforcement, helps produce the most potent psychosocial processes of radicalization. During Group Integration, users may experience identity fusion, a visceral sense of oneness with the group where the boundaries between personal and social identity blur (Swann et al., 2009). When fusion occurs, the group (or other entities, such as a leader; Gómez, Vázquez, Alba, et al., 2025; Kunst et al., 2019) becomes a symbolic family, and threats to the group’s ideology are perceived as deeply personal attacks, motivating extreme behaviors (Gómez, Vázquez, Blanco, et al., 2025; Varmann et al., 2024). As a consequence, the group’s goal of engaging in violence can become one’s own.

In addition to fueling radicalization at the individual level, AI-driven systems promote group-level processes, such as group polarization. Group polarization occurs when discussion among like-minded individuals leads the group and its members to adopt more extreme positions than their initial inclinations (Sunstein, 2002). Moreover, group members tend to move ideologically toward the most extreme voices in their group (Goldenberg et al., 2023). The digital echo chamber, lacking dissenting opinions, thus acts as an obvious accelerator, ensuring that the group’s collective viewpoint becomes more radical over time (Cinelli et al., 2021).
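The dynamic described above, in which members drift toward the most extreme voices in a group that lacks dissent, can be illustrated with a toy simulation. The sketch below is not a model taken from the cited studies: the function names, the update rule, and the parameter values are simplifying assumptions introduced purely for illustration.

```python
def polarize(opinions, pull=0.2, steps=10):
    """Toy update rule (an assumption, not an empirically fitted model).

    Opinions lie on a scale from -1 to 1. At every step, each member moves
    a fraction `pull` of the way toward the most extreme opinion in the group.
    """
    current = list(opinions)
    for _ in range(steps):
        most_extreme = max(current, key=abs)  # the loudest, most radical voice
        current = [o + pull * (most_extreme - o) for o in current]
    return current


def mean_extremity(opinions):
    """Average distance from the neutral midpoint (0)."""
    return sum(abs(o) for o in opinions) / len(opinions)


# Hypothetical like-minded group with one strongly committed member.
group = [0.1, 0.2, 0.3, 0.8]
after = polarize(group)

print(f"Mean extremity before: {mean_extremity(group):.2f}")
print(f"Mean extremity after:  {mean_extremity(after):.2f}")
```

In this sketch, the most extreme member never moderates because no dissenting voice pulls in the opposite direction, so the group average can only rise. Adding a member with an opposing view would be one way to explore how dissent changes the outcome, although the simple update rule used here should not be read as a validated account of group polarization.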

This stage is also driven by reputational forces. Users do not consume and share content in a vacuum; they make decisions based, in part, on how they expect to be evaluated by others (Altay et al., 2022; Moore et al., 2023). As users feel part of a group, they may share extreme content not merely to inform, but to signal loyalty and accrue reputational benefits. Conversely, they may actively avoid sharing or consuming corrective information because doing so carries social risks; recent research demonstrates that showing receptiveness to opposing political views (of outgroup members) can incur high reputational costs within one’s ingroup (Hussein & Wheeler, 2024).