Abstract

Despite a growing focus on access to and use of emergent literacy assessment in early childhood, little is known about early childhood teachers’ data practices and their associations with children's emergent literacy skills. A questionnaire was used to confirm and elaborate findings from prior qualitative work (Schachter & Piasta, 2022) investigating U.S. teachers’ emergent literacy data practices. We focused on how teachers gathered data (data gathering), what they learned from those data (data knowledge), and how they used those data in their practice (data use) along with associations between the practices and children's emergent literacy skills. Overall, teachers reported engaging in multiple data practices, often with high levels of knowledge about children's emergent literacy skills. A small set of data practices, related to data gathering and data knowledge, were associated with children's emergent literacy skills. However, there were also some unexpected negative associations between children's outcomes and teachers’ data practices.

Emergent literacy skills are critical for later reading comprehension (Herrera et al., 2021; Hjetland et al., 2019). Thus, using data to monitor young children's development of these skills is key for guiding instruction and timely identification of early literacy difficulties (Lonigan et al., 2011; Piasta, 2014). Over the last 20 years, the presence or required use of emergent literacy assessments or data in early childhood (EC) settings has grown (Quality Compendium, n.d.), and the use of data in EC settings is now a recommended practice (Division for Early Childhood, 2014; National Association for the Education of Young Children, 2020).

The rationale for incorporating data in EC is based on accumulating evidence that teachers’ use of literacy data is associated with young children's literacy learning. Findings from K–2 research provide evidence that data-informed instruction can improve children's literacy skills (Al Otaiba et al., 2011; McMaster et al., 2020). Similarly, research indicates that when EC teachers working with 3-to-5-year-olds receive professional development regarding data, children's emergent literacy outcomes improve (Lonigan & Phillips, 2016; Weiland & Yoshikawa, 2013). Notably, most of the evidence linking teachers’ data practices to children's skills comes from intervention contexts. Although this is encouraging, we know less about teachers’ data practices outside of interventions or about how these practices support children's emergent literacy skills (Dorn, 2010). This is a critical gap because teachers are essential in achieving the theory of change underlying data use—that the generated data will be used to support practice and result in improved outcomes for children (Bertrand & Marsh, 2015; Snow, 2011). The purpose of this study was to describe EC teachers’ reported data practices and associations between those practices and children's emergent literacy skills. Specifically, this study builds from prior qualitative work (Schachter & Piasta, 2022) wherein we identified that EC teachers engaged in three types of data practices—data gathering, data knowledge (learning about children from data), and data use—and that there were notable differences in these practices across teachers.

Emergent Literacy

Emergent literacy consists of the behaviors, knowledge, and attitudes that develop before conventional reading and writing (International Literacy Association, n.d.). The concept of emergent literacy has evolved over time from the original work by Clay (1966) to acknowledge that the early behaviors and knowledge that young children demonstrate relative to literacy include specific skills that are foundational to more conventional reading skills (Tracey & Morrow, 2017; Whitehurst & Lonigan, 1998). These emergent literacy skills help children learn to understand how print maps to language and how to build meaning from text (Piasta, 2014) and engage in literacy as social and academic processes (Kendeou et al., 2009; Schleppegrell, 2012). Across emergent literacy frameworks, common emergent literacy domains include alphabet knowledge, phonological awareness, emergent writing, print/book knowledge, and oral language (Herrera et al., 2021; Hjetland et al., 2019; Sénéchal et al., 2001). To support children's emergent literacy development, EC teachers are encouraged to target skills within these domains (e.g., rhyming within phonological awareness).

Data Gathering

Researchers have theorized and identified different types of emergent literacy data available to EC teachers. These include what Lonigan et al. (2011) have characterized as informal assessments (e.g., observations, checklists, portfolios) and standardized measures (e.g., screening and diagnostic assessments). Lonigan et al. differentiated these based in part on the process of data collection (gathering), noting that standardized measures have a common set of (standardized) administration procedures, whereas informal assessments may not. Other researchers have highlighted different mechanisms or purposes for gathering data, such as teacher noticing (Cherrington & Loveridge, 2014; van Es & Sherin, 2002), curriculum-based and teacher-created assessments (Dorn, 2010; Gischlar & Vesay, 2018), and intuitive data (Vanlommel & Schildkamp, 2018).

In our prior work (Schachter & Piasta, 2022), we found that EC teachers gathered three types of data that overlapped with these constructs. Specifically, teachers gathered data from standardized measures, documented observations commonly used in EC (Edwards et al., 2019; Hall et al., 2010), and informal noticing; unlike the other two sources, informal noticing data were not systematically collected or documented by teachers. Most EC teachers favored gathering data from informal noticing and documented observations rather than standardized measures. These findings aligned with emerging evidence that EC teachers gather data, but that data sources and the frequency of data gathering vary, with teachers preferring nonstandardized data sources (Bradbury, 2014; Gischlar & Vesay, 2018; Zweig et al., 2015). For example, Carson and Bayetto (2018) reported that EC teachers collected informal data at higher rates than standardized assessments (84% vs. 25%). Most of the teachers in their study (63%) also reported gathering data regularly or occasionally, with the rest reporting rarely gathering data. Thus, EC teachers may gather data, but the amount of data they gather varies by data source.

Data Knowledge

A key part of data practice is interpreting data to gain knowledge about children's skills and development (Bertrand & Marsh, 2015; National Association for the Education of Young Children, 2020). What teachers know about children from data is a relatively unexplored phenomenon, in contrast to the burgeoning evidence regarding knowledge that teachers have about how children develop emergent literacy (Cash et al., 2015; Piasta et al., 2020). Few studies help us understand this part of EC teachers’ data practices. Recent research by Miller-Bains et al. (2017) found that teachers had a hard time identifying individual children's skill levels, including literacy, using GOLD (Teaching Strategies, n.d.), a popular informal assessment implemented in the United States as part of assessment-related policies (Quality Compendium, n.d.). In our prior study (Schachter & Piasta, 2022), we found that teachers knew much about children generally, including knowledge about their interests and social-emotional development. However, their knowledge about children's emergent literacy skills was variable, and there were inconclusive patterns regarding teachers’ knowledge of the whole class versus individual children.

Data Use

Finally, to achieve the aims of data-related efforts, data must be used in enacting instruction (Coburn & Turner, 2012; Snow, 2011). Emerging research suggests that teachers’ data use may be inconsistent, with many teachers having difficulty with this aspect of data practice. For example, Brawley and Stormont (2014) found that although EC teachers rated most uses of data as important, the frequency with which they engaged in those same data use practices was low. Similarly, Zweig et al. (2015) found that programs had difficulty integrating across data sources to enact instruction. In our prior work (Schachter & Piasta, 2022), we observed that when teachers used data, it was mostly in the moment, to initiate interactions or respond to children (e.g., ask a question, respond to a child error), with only about a third of teachers using data to plan or differentiate instruction. Across teachers, we noticed much variability in these practices. From our prior findings, we theorized that teachers who gathered data across all three sources to build knowledge about individual children and used that data beyond in-the-moment instruction might have the most sophisticated data practices and highest associated outcomes for children's emergent literacy. This aligns with research indicating that using multiple data sources might be more beneficial (Miller-Bains et al., 2017).

Present Study

Although there is evidence that teachers use different data sources and have access to multiple types of data, current understandings of EC teachers’ data practices and associations with children's skills are limited. Our prior qualitative work (Schachter & Piasta, 2022) generated a framework for conceptualizing teachers’ data practices and variability in these practices that might be important for children's skills, yet these are unaccounted for in the limited existing measures. Thus, using a concurrent mixed-methods design (Creswell & Plano Clark, 2011), the purpose of this study was to use a novel questionnaire to understand teachers’ data practices with a larger sample of teachers and explore whether these practices mattered for children's emergent literacy learning. We had two aims:

1. To confirm and elaborate teachers’ emergent literacy data practices based on the framework developed in qualitative analyses (Schachter & Piasta, 2022), focusing on how teachers gathered information about children's emergent literacy skills (data gathering), what they knew from those data (data knowledge), and how they used those data to inform their practice (data use).
2. To investigate associations between teachers’ data practices (data gathering, data knowledge, data use) and children's emergent literacy skills.

Method and Materials

Theoretical Framework

We view teachers as sense-makers who must make meaning with data and their experiences of data use (Bertrand & Marsh, 2015). Building from other scholars, we understand assessment as a process or series of steps including data collection, interpretation, and use by teachers within their individual classrooms (Coburn & Turner, 2012; Vanlommel & Schildkamp, 2018). Thus, for the purposes of this study, we conceptualize data practices as interconnected and enacted (or not) by teachers. As such, we recognize teachers’ role and prioritize their perspectives as key for understanding connections between data practices and child outcomes. This is important in disrupting traditional approaches that typically favor researcher views of data practices.

Participants

We collaborated with two community-based nonprofit organizations in two different U.S. states, in the Southeast and Midwest, to recruit EC teachers working with children ages 3 to 5. Both organizations provided assessment and professional development support to their EC communities. Thus, this partnership was ideal because it ensured that children enrolled in teachers’ classrooms completed the Get Ready to Read!—Revised Edition (GRTR-R; Whitehurst & Lonigan, 2010), an emergent literacy screening assessment, in the fall and spring. Collecting data from teachers in two states with different EC policy contexts increases the generalizability of our findings. One organization also trained teachers to implement a specific emergent literacy intervention, Nemours BrightStart! (Bailet et al., 2009), with a subset of children identified via GRTR-R scores.

Out of the 108 teachers who started the questionnaire, 105 completed all items and one completed 74% of items; these 106 teachers constituted the analytic sample for the study. Overall, participants were mostly female (94.30%) and racially diverse, with 55% of participants identifying as white, 32% as Black, 17% as Hispanic/Latinx, 10% as being of multiple or other races, and 4% as Asian. On average, teachers were 42 years old and reported having 11 years of teaching experience; 56% held a bachelor's degree or higher. About 75% of teachers had EC or related degrees (including associate's). Most teachers (70%) taught in full-day programs; 17% were affiliated with Head Start. Child demographic information was unavailable, as only deidentified child outcome data were collected or provided by the nonprofit organizations.

Questionnaire Design and Data Collection

We collected data regarding teachers’ emergent literacy data practices via an online questionnaire (available from the first author). The first and third authors developed the questionnaire based on the data practices framework and findings from the qualitative study prioritizing teachers’ perspectives on data practices. After generating initial items, we conducted cognitive interviews (Desimone & Le Floch, 2004) with five participants from the qualitative study. This aligns with recommendations to cognitively interview five to 15 participants for purposes of scale development (Peterson et al., 2017). This process allowed us to understand how teachers interpreted the items and ensured that we had not omitted any data practices. The questionnaire was refined based on the cognitive interview data.

We administered the online questionnaire using Qualtrics. The window of recruitment and questionnaire administration was approximately 2 months at each site (summer in the Midwestern state and mid-fall in the Southeastern state), with either the research team or local partners checking in with participants to answer questions and remind them about participation. Teachers took an average of 25 min to complete the questionnaire and were given a gift card for participation.

Measures

Data Practices

We utilized open-comment and fixed-choice questions as a means for confirming, disconfirming, and elaborating findings from our qualitative work. To capture teachers’ perspectives and avoid prompting teachers to predefined responses, we designed the questionnaire such that the open-comment questions were asked prior to fixed-choice items. We also structured the questionnaire to maximize variability in responses by using several levels of Likert-type responses and a variety of options for potential data practices. Additionally, the questionnaire language was carefully crafted to convey to teachers that we did not expect them to engage in all practices listed (Czaja & Blair, 2005; Osterlind, 1998). For the purposes of this study, we focused on items pertaining to how teachers gathered emergent literacy data (data gathering), what they learned from those data (data knowledge), and how they used those data in their practice (data use); these items are described next.

We also asked teachers about ways of gathering data specific to 10 emergent literacy skills that mapped to five broader domains common across emergent literacy frameworks (Herrera et al., 2021; Hjetland et al., 2019; Sénéchal et al., 2001). These were rhyming and syllable counting or segmentation (phonological awareness), letter identification and letter sounds (alphabet knowledge), concepts about print and story understanding (print and book knowledge), vocabulary and language structure (oral language), and name writing and emergent writing (writing).

We examined the reliability of all fixed-choice items used to identify teachers’ data-gathering practices. Reliability was high: Cronbach's alpha = .85.
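Cronbach's alpha for k items is α = k/(k - 1) × (1 - Σ s²ᵢ / s²ₜ), where s²ᵢ is the variance of item i and s²ₜ is the variance of respondents' total scores. The Python sketch below implements that formula for a complete respondents-by-items matrix. It assumes no missing responses (how missing items were handled here is not described), and the demo matrix is hypothetical rather than study data.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a respondents-by-items matrix (list of rows).

    Assumes every respondent answered every item (no missing values).
    """
    k = len(responses[0])  # number of items
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Sample variance of each item (column) across respondents
    item_vars = [variance([row[j] for row in responses]) for j in range(k)]
    # Sample variance of each respondent's total score
    total_var = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical 4 respondents x 3 Likert-type items (not study data)
demo = [
    [1, 2, 2],
    [2, 3, 3],
    [3, 3, 4],
    [4, 5, 5],
]
print(round(cronbach_alpha(demo), 3))
```

Item and total variances must use the same variance definition (here, sample variance with n - 1); mixing definitions would bias alpha.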

Child Outcomes

We measured children's emergent literacy skills using the GRTR-R (Whitehurst & Lonigan, 2010). This 25-item assessment measures children's emergent literacy skills in the domains of phonological awareness, alphabet knowledge, and concepts about print. For each item, children are shown a page with four pictures; the test administrator reads the question at the top of the page, and children point to their answer. Reported internal consistency for this assessment is high (Cronbach's alpha = .88; Lonigan & Wilson, 2008); for our sample, Cronbach's alpha was > .85 for both fall and spring scores. Standard scores were available for both states and thus were used for analyses. For the state that provided both raw and standard scores, correlations between raw and standard scores at pretest and posttest were high (.90 to .91). Standard scores for the GRTR-R have a mean of 100 (SD = 15). Mean fall scores were 95.22 (SD = 16.05), and mean spring scores were 105.45 (SD = 13.09). The interval between the fall and spring assessments averaged 30 weeks (SD = 4.70 weeks; min = 6.86 weeks, max = 45.71 weeks).

Analyses

To address Aim 1, we used descriptive and correlational statistics to analyze the fixed-choice questions. In some cases, described subsequently, items were collapsed to represent broader constructs related to our framework. To analyze the open-comment data, we used both deductive and inductive processes to confirm, disconfirm, and elaborate findings from our qualitative study (Creswell & Plano Clark, 2011). Specifically, for each open-comment question, we examined the data in relation to codes from the corresponding fixed-choice items and created additional codes as needed (discussed subsequently). All responses were double coded by the first author and a doctoral student. Inter-rater agreement was calculated by dividing the number of coding agreements by the sum of agreements plus disagreements. Agreement ranged from 80% to 100% across codes; all disagreements were addressed through discussion.

To address Aim 2, we examined the associations between the data practices described in Aim 1 and children's skills on the GRTR-R. We estimated a three-level model (i.e., children nested within 88 teachers nested within 69 centers) and found that 22% of the variance was at the center level, whereas only 3% was at the teacher level (ICCs based on the unconditional model). We report findings from this three-level model; we also ran a two-level model (children nested within teachers), and results were consistent. Additional details about our analytic strategy can be found in the online supplementary materials (see SM3).
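Both quantities named in this paragraph reduce to simple ratios. The sketch below shows percent agreement as defined above and the share of total outcome variance at each level of an unconditional three-level model. It assumes the variance components have already been estimated by mixed-model software; the function names and the component values in the example are hypothetical, chosen only so that the shares match the reported 22% (center) and 3% (teacher). Other ICC definitions exist for three-level designs (e.g., the correlation between two children with the same teacher, which combines the teacher and center components), so this is one convention, not necessarily the one used in SM3.

```python
def percent_agreement(agreements: int, disagreements: int) -> float:
    """Agreements divided by (agreements + disagreements), as a percentage."""
    return 100 * agreements / (agreements + disagreements)


def variance_shares(var_child: float, var_teacher: float, var_center: float) -> dict:
    """Proportion of total outcome variance at each level of an unconditional
    three-level model (children within teachers within centers)."""
    total = var_child + var_teacher + var_center
    return {
        "child": var_child / total,
        "teacher": var_teacher / total,
        "center": var_center / total,
    }


# Hypothetical inputs for illustration only (not study estimates)
print(percent_agreement(agreements=8, disagreements=2))  # 80.0
shares = variance_shares(var_child=75.0, var_teacher=3.0, var_center=22.0)
print({level: round(p, 2) for level, p in shares.items()})
```

Reporting the shares rather than raw variance components makes levels comparable regardless of the outcome's scale.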

Results

The purpose of this study was to elaborate our data practices framework developed in rich qualitative work with a larger, more diverse sample (Aim 1) while also exploring associations between teachers’ data practices and children's emergent literacy skills (Aim 2).

Aim 1: Teachers’ Emergent Literacy Data Practices

Data Gathering

We were interested in how teachers gathered data about children's emergent literacy skills. We condensed data gathering into the three major data sources that emerged in our prior qualitative study and differentiated teachers there: noticing data (seeing/hearing what children are doing in the moment, informally asking children questions), documented observations (recording observations, documenting children's work, completing a checklist), and standardized assessments (administering a standardized, published screener or assessment). In our prior work, the third category was labeled “formal” because it included teacher- and school-created assessments in addition to standardized assessments. Because a glitch in the online questionnaire prevented teachers in the current sample from reporting on teacher- and school-created assessments, we relabeled this data source “standardized” to accurately reflect its content.

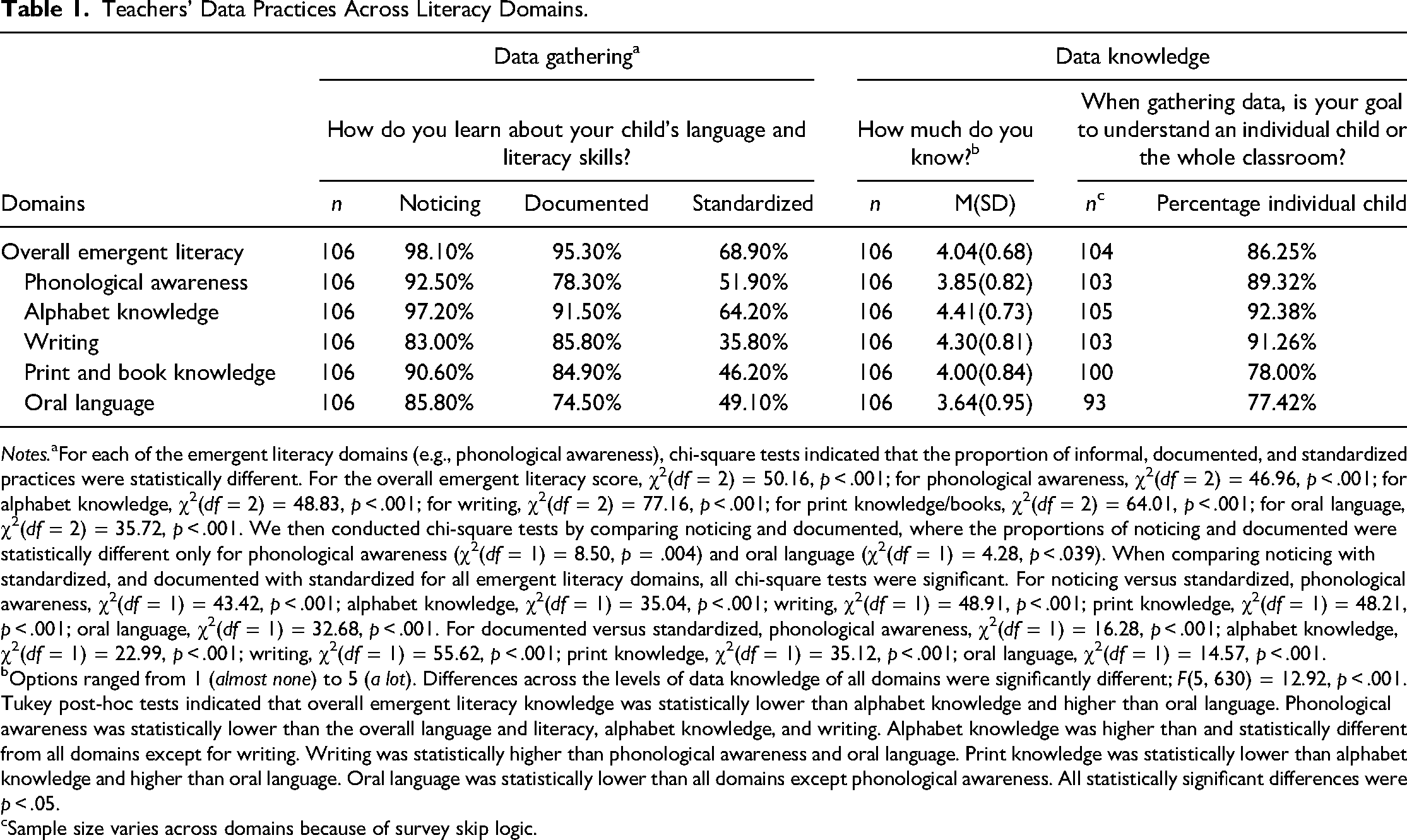

As shown in Table 1 and anticipated from our previous study, when data-gathering practices were collapsed across the emergent literacy domains, most teachers gathered data via noticing (98.10%), followed by documented observations (95.30%) and standardized assessments (68.90%), to learn about children's emergent literacy skills. Teachers endorsed gathering standardized assessment data in lower proportions than noticing (χ2(df = 1) = 32.89, p < .001) and documented observations (χ2(df = 1) = 25.14, p < .001). As anticipated from our prior study, the frequency of data gathering differed significantly across data sources (F(2, 315) = 247.58, p < .001): teachers gathered noticing data almost daily (M = 5.72; SD = .84), documented observations about weekly (M = 4.69; SD = 1.09), and standardized assessments about every six months (M = 1.69; SD = 1.93).
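The proportion comparisons above are Pearson chi-square tests on endorsed versus not-endorsed counts; each pairwise comparison is a 2 × 2 table and so has one degree of freedom. The sketch below assumes the counts can be back-calculated from the reported percentages with n = 106 for every source (104, 101, and 73 teachers endorsing noticing, documented observations, and standardized assessments); those counts are a reconstruction for illustration, not figures reported by the study.

```python
def pearson_chi_square(table):
    """Pearson chi-square statistic and df for an r x c table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of row and column
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df


# ASSUMED counts [endorsed, not endorsed], reconstructed from percentages with n = 106
noticing = [104, 2]       # about 98.1%
documented = [101, 5]     # about 95.3%
standardized = [73, 33]   # about 68.9%

print(pearson_chi_square([noticing, documented, standardized]))  # 3 x 2, df = 2
print(pearson_chi_square([noticing, standardized]))              # 2 x 2, df = 1
print(pearson_chi_square([documented, standardized]))            # 2 x 2, df = 1
```

Under that assumption the three statistics come out at about 50.16 (df = 2), 32.89 (df = 1), and 25.14 (df = 1), matching to two decimals the overall value in the Table 1 notes and the two pairwise values reported above.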

Table 1. Teachers’ Data Practices Across Literacy Domains.

Notes. a For each of the emergent literacy domains (e.g., phonological awareness), chi-square tests indicated that the proportions of noticing, documented, and standardized practices were statistically different. For the overall emergent literacy score, χ2(df = 2) = 50.16, p < .001; for phonological awareness, χ2(df = 2) = 46.96, p < .001; for alphabet knowledge, χ2(df = 2) = 48.83, p < .001; for writing, χ2(df = 2) = 77.16, p < .001; for print knowledge/books, χ2(df = 2) = 64.01, p < .001; for oral language, χ2(df = 2) = 35.72, p < .001. We then conducted pairwise chi-square tests. The proportions of noticing and documented practices were statistically different only for phonological awareness (χ2(df = 1) = 8.50, p = .004) and oral language (χ2(df = 1) = 4.28, p = .039). All chi-square tests comparing noticing with standardized, and documented with standardized, were significant for every emergent literacy domain. For noticing versus standardized: phonological awareness, χ2(df = 1) = 43.42, p < .001; alphabet knowledge, χ2(df = 1) = 35.04, p < .001; writing, χ2(df = 1) = 48.91, p < .001; print knowledge, χ2(df = 1) = 48.21, p < .001; oral language, χ2(df = 1) = 32.68, p < .001. For documented versus standardized: phonological awareness, χ2(df = 1) = 16.28, p < .001; alphabet knowledge, χ2(df = 1) = 22.99, p < .001; writing, χ2(df = 1) = 55.62, p < .001; print knowledge, χ2(df = 1) = 35.12, p < .001; oral language, χ2(df = 1) = 14.57, p < .001.

Options ranged from 1 (almost none) to 5 (a lot). Levels of data knowledge differed significantly across domains; F(5, 630) = 12.92, p < .001. Tukey post hoc tests indicated that overall emergent literacy knowledge was statistically lower than alphabet knowledge and higher than oral language. Phonological awareness was statistically lower than overall language and literacy, alphabet knowledge, and writing. Alphabet knowledge was statistically higher than all domains except writing. Writing was statistically higher than phonological awareness and oral language. Print knowledge was statistically lower than alphabet knowledge and higher than oral language. Oral language was statistically lower than all domains except phonological awareness. All reported differences were significant at p < .05.

Sample size varies across domains because of survey skip logic.

We also explored how teachers reported gathering data specific to the 10 individual emergent literacy skills (e.g., rhyming, name writing) within the five broader domains; see Table 1. We conducted chi-square tests to examine whether the sources used for data gathering differed within each of the five domains and found that the proportions of noticing, documented observation, and standardized assessment sources differed significantly for all domains. Specifically, in all cases, teachers reported gathering both noticing and documented data in significantly higher proportions than standardized assessment data. Additionally, for phonological awareness and oral language, noticing data were gathered in significantly higher proportions than documented observations. All chi-square test statistics are reported in Table 1.

Data Knowledge

Table 1 presents teachers’ reported knowledge regarding children's emergent literacy by domain. Generally, teachers rated their overall knowledge of children's skills as high (M = 4.04, range = 1.4 to 5.0). For the specific domains, they reported similarly high levels of knowledge, with the highest ratings for alphabet knowledge (M = 4.41), followed by writing (M = 4.30), print knowledge/books (M = 4.00), phonological awareness (M = 3.85), and oral language (M = 3.64).

We compared teachers’ reported overall knowledge to knowledge regarding each individual domain and found significant differences as determined by one-way ANOVA: F(5, 630) = 12.92, p < .001. A Tukey post hoc test indicated that teachers’ reported knowledge of children's overall literacy skills was significantly lower than for alphabet knowledge (p = .013) and significantly higher than for oral language (p = .004). There was no statistically significant difference between reported knowledge for overall literacy and any of the other domains. For alphabet knowledge, the domain with the highest teacher-reported knowledge, Tukey post hoc tests indicated significant differences (p < .01) from all other domains except writing. For writing, the next highest rated domain, there were significant differences (p < .01) from both phonological awareness and oral language. For print knowledge, there were significant differences (p < .05) from oral language (in addition to alphabet knowledge). Last, oral language differed significantly (p < .01) from all domains except phonological awareness.
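The omnibus test above is a one-way ANOVA; the reported degrees of freedom are consistent with six rating categories of 106 teachers each analyzed as independent groups (6 - 1 = 5 and 6 × 106 - 6 = 630). The sketch below computes the F statistic from its sums of squares. Treating the categories as independent groups is an assumption of this sketch; because each teacher rated every category, a repeated-measures model would be an alternative specification. The Tukey post hoc comparisons are not reproduced here, and the demo ratings are hypothetical.

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way between-groups ANOVA."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]
    # Between-groups SS: group size times squared deviation of the group mean
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Within-groups SS: deviation of each rating from its own group mean
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical 1-5 ratings for three small groups (not study data)
print(one_way_anova_f([[2, 3, 4], [3, 4, 5], [1, 2, 3]]))  # (3.0, 2, 6)
```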

For teachers who reported gathering data about emergent literacy domains, we examined the extent to which these data were gathered to learn about the class as a whole or about individual children. Teachers could select only one answer to this question. We averaged across teachers’ responses for each domain to create an overall score representing the percentage of teachers who used the data to know about individual children versus the whole class. Most teachers (86.25%) reported that their goal in gathering literacy data was to know about individual children (see Table 1), and this pattern was consistent across each of the domains. Teachers’ focus on individual children nonetheless differed significantly across domains (χ2(df = 4) = 17.78, p = .001): teachers more often reported gathering data to understand individual children for writing and alphabet knowledge (91.26%–92.38%) and less often for oral language and print knowledge (77.42%–78.00%). This focus on individual children rather than the whole class contrasts with findings from our previous study, in which we did not find a clear pattern of priority for teachers.
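The χ2 statistic in this paragraph has 4 degrees of freedom (five domains). For even degrees of freedom, the chi-square upper-tail probability has a closed form, P(X ≥ x) = e^(-x/2) Σ_{j=0}^{df/2 - 1} (x/2)^j / j!, so the p value can be checked with the standard library alone. The helper below is a hypothetical sketch that handles only even df; for odd df, a general routine such as SciPy's `scipy.stats.chi2.sf` would be needed.

```python
import math


def chi_square_sf_even_df(x: float, df: int) -> float:
    """Upper-tail p value P(X >= x) for a chi-square variable with even df.

    Uses exp(-x/2) * sum_{j=0}^{df/2 - 1} (x/2)**j / j!, valid only for even df.
    """
    if df <= 0 or df % 2 != 0:
        raise ValueError("df must be a positive even integer")
    half = x / 2
    term = 1.0   # (x/2)**0 / 0!
    total = 0.0
    for j in range(df // 2):
        if j > 0:
            term *= half / j   # update (x/2)**j / j! from the previous term
        total += term
    return math.exp(-half) * total


print(round(chi_square_sf_even_df(17.78, df=4), 4))  # 0.0014
```

For χ2(4) = 17.78 the function returns approximately .0014, which is below .01 but not below .001.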

We also examined teachers’ open responses regarding what they learned about children's emergent literacy through gathering data. Teachers described a range of data knowledge that could best be characterized by the specificity of that knowledge, rather than fitting directly with the way that data knowledge was operationalized in the fixed-response items. For example, one teacher wrote, “I learn if they recognize their letters, letter sounds, initial sounds of words, breaking words apart into individual sounds, purpose of author and illustrator, characters and setting of a story, and telling what a story is about,” presenting a detailed account of her understanding of children's specific literacy skills. In contrast, another teacher wrote, “I learn their strengths and weaknesses” as what she learned about children's skills, not actually identifying emergent literacy skill-related knowledge. We thus coded responses on a scale of specificity including no mention of literacy skills (e.g., “At least each grading period”), general knowledge of literacy skills (as in the second example above), and specific knowledge of literacy skills (as in the first example above).

Almost a fifth of teachers (n = 18; 16.98%) provided in-depth, specific details regarding children's literacy skills. The largest group of teachers (n = 52; 49.05%), however, provided general knowledge gained from data with statements such as, “Where they are on the literacy scale. How I can help them or challenge them. What their interests are.” A third of participants (n = 34; 32.07%) did not mention any knowledge regarding children's emergent literacy skills. A small number (n = 2; 1.86%) did not respond. Generally, findings fit within the hypotheses generated from the first study that teachers held varying levels of knowledge about children's emergent literacy skills but did not necessarily align with the way that data knowledge was operationalized via fixed-choice items. Considered within the context of the fixed-choice data, in which teachers generally rated their knowledge about skills as high, this may indicate that teachers are not as adept at articulating the specific knowledge that they hold about children's emergent literacy skills.

Data Use

Similar to the frequency with which teachers gathered data, teachers reported using noticing data close to daily (M = 5.71; SD = .80), followed by documented observation data (M = 4.56; SD = 1.02) and standardized assessment data, which were used about every six months (M = 1.95; SD = 1.30). These findings aligned with hypotheses from our previous study, in which we found that teachers used noticing data the most frequently.

We utilized fixed-choice questions to ask teachers about 17 potential data uses derived from our previous study. For each data use, teachers indicated (a) whether they used data for that practice, (b) how frequently they engaged in that practice, and (c) the data source (i.e., noticing, documenting, standardized) that informed it (see Table SM1 in the online supplementary archive). Fourteen of the 17 practices were used by at least 80% of teachers, and eight were used by more than 90% of teachers. Less frequent data uses were assigning children to classrooms (34.90%), comparing children (44.30%), and responding to a child's error (67.90%). On average, across the 17 data uses, teachers reported using data sometimes/often (M = 3.64). Overall, these findings indicate a broad array of data uses and contrast with the findings of our prior study, in which teachers mostly used data to interact with children in the moment to adjust an instructional strategy, respond to a child's error, or respond to a child's question. Additionally, using data to plan was a low-frequency practice in our prior study; here, however, it was one of the most endorsed and frequent data uses. Across all 17 data uses, teachers relied more on noticing (M = 68.20%) and documented observations (M = 68.81%) than on standardized data (M = 45.34%) to inform data use (see Table SM1 in the online supplementary archive); standardized data use differed significantly from both noticing and documented data use (p < .01). These patterns somewhat align with our previous findings, which documented that observations and informal noticing were the most used data sources.

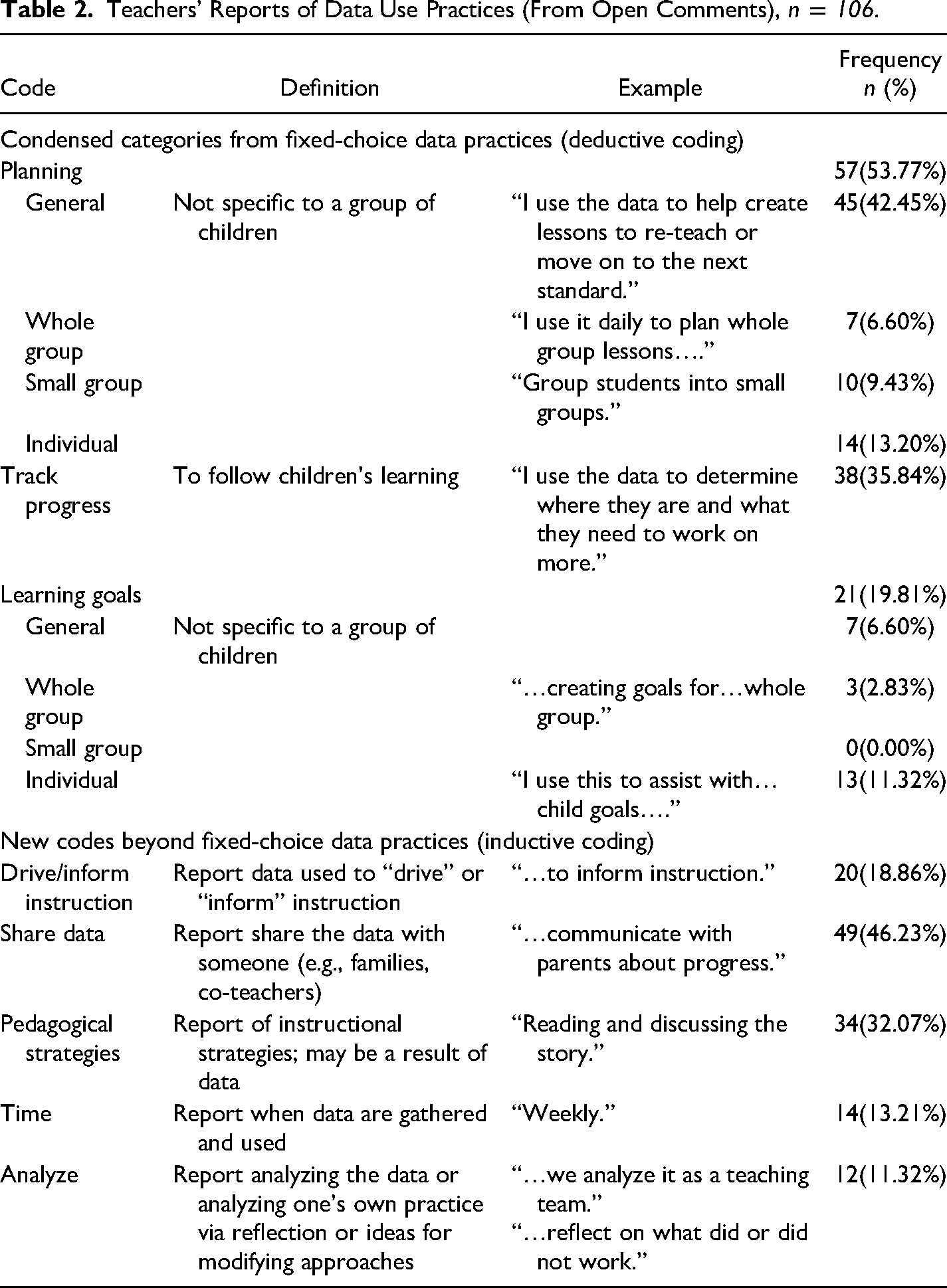

The open-comment data provided important insight into teachers’ data uses. In analyzing the responses, we observed two important patterns. First, although we tried to code the data using the 17 data uses from the fixed-choice questionnaire, these were not a good fit for the data. Thus, we identified emergent codes to account for the new information present in teachers’ responses beyond those from our prior study or in the fixed-choice items. Second, although we had anticipated that there might be differences in teachers’ responses to what they do with data (asked in the item “What do you do with language and literacy data after it is gathered?”) and how they use data (asked in the item “How do you use language and literacy data in your classroom?”), we observed that codes fit across participant responses for both questions, evidencing triangulation within participants’ reports (Maxwell, 2013). As such, we merged responses for both items in the analyses. Table 2 presents definitions, exemplar quotes, and frequencies within the data.

Table 2. Teachers’ Reports of Data Use Practices (From Open Comments), n = 106.

As noted, patterns in these data differed from what we anticipated based on the previous study and, at times, from teachers’ fixed-choice responses. Most teachers reported using data for planning (53.77%; “…use it purposely [sic] in lesson plans”), consistent with the fixed-choice findings described previously but not with our prior study. Despite these differences between our prior work and the quantitative data, there was little variability in whether teachers reported using data to plan for the whole group, small groups, or individual children (see Table 2). Although participants frequently reported using data to track progress in their fixed-choice responses, only 35.84% of teachers reported this use in their open comments (e.g., “Compare data from the beginning of the school year to the end of the year in order to compare the progress”), and teachers in our previous work rarely reported it. Similarly, setting learning goals was frequently reported in the fixed-choice responses but less so in the open comments (19.81%; “set goals”).

Five new practices not addressed in the questionnaire (or in the prior study) emerged during coding. These provided additional insight into teachers’ perspectives on data use, particularly regarding communication with others. Almost half of participants (46.23%) reported sharing data as a key practice, mostly with parents. For example, one teacher stated that they used data to “communicate with parents about progress,” and another reported that data were used to “support meetings with parents.” Additionally, about a third of teachers (32.07%) directly connected data to specific pedagogical strategies. As one teacher wrote, “I use language and literacy data in the classroom when I give vocabulary, interacting with children, reading story and in small group.” In these cases, teachers tied their data to specific classroom practices. Other new uses were less frequent. Thirteen percent of participants reported when they collected data (e.g., “during small group,” “weekly”), suggesting that timing was an important part of their data use. A small minority of teachers (11.32%) reported some type of analytic process involving the data, such as analyzing and reflecting on data, as evidenced in comments like “reflect on strengths and weaknesses” and “analyze them.”
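Because responses to the two open-ended items were merged, each code is counted at most once per teacher and expressed as a percentage of the 106 respondents. The sketch below shows that bookkeeping under stated assumptions: the `code_frequencies` helper, the teacher IDs, and the code labels are hypothetical, and passing `n` separately assumes that teachers with no codes still count in the denominator.

```python
from __future__ import annotations

from collections import Counter


def code_frequencies(item_what: dict[str, set[str]],
                     item_how: dict[str, set[str]],
                     n: int) -> dict[str, float]:
    """Percent of n teachers assigned each code after merging both open-ended items."""
    merged: dict[str, set[str]] = {}
    for item in (item_what, item_how):
        for teacher_id, codes in item.items():
            # Union per teacher: a code counts once even if it appears in both answers.
            merged.setdefault(teacher_id, set()).update(codes)
    counts = Counter(code for codes in merged.values() for code in codes)
    return {code: round(100 * k / n, 2) for code, k in counts.items()}


# Hypothetical codes for three teachers ("what do you do" and "how do you use" items).
item_what = {"t1": {"plan", "share data"}, "t2": {"plan"}}
item_how = {"t1": {"plan"}, "t3": {"track progress"}}
freqs = code_frequencies(item_what, item_how, n=3)
```

With n = 106, for example, a code applied to 57 teachers would be reported as 53.77%.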

Because the data practices teachers endorsed in the fixed-choice questions did not align with their open responses, we turned to a key finding from our prior work: some teachers described integrating multiple data sources to inform their instruction, whereas others tended to rely on a single source. For example, a teacher could plan an emergent literacy activity for the whole class by informally asking children questions (i.e., noticing), by completing a checklist about what a child knows and can do (i.e., documented), or by relying on screeners or assessments (i.e., standardized), using all three of these data sources, two, or only one. To examine the extent of this integration, we calculated a “data integration” variable capturing how many of the three data sources each teacher reported for each of the 17 data uses. A teacher who reported using only one data source to plan an emergent literacy activity for the whole class, for example, received a score of 1 for that use, whereas a teacher who relied on all three data sources received a score of 3. Integration scores for each data use are reported in Table SM1 in the online supplement. On average, teachers integrated close to two data sources (M = 1.82, range = 0.47–3.00). Descriptively, teachers integrated sources least when assigning children to a classroom (M = 0.71) and most when identifying a child's emergent literacy strengths and weaknesses (M = 2.42).
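The integration score can be sketched as a count of distinct sources per data use, averaged either across a teacher's uses or across teachers for a single use. This is a minimal sketch under an assumption we infer rather than take from the text: a use with no reported source scores 0, which is consistent with teacher means falling below 1 (range minimum 0.47). The helper names and data-use keys are hypothetical.

```python
from __future__ import annotations

from statistics import mean

SOURCES = {"noticing", "documented", "standardized"}


def integration_score(sources_used: set[str]) -> int:
    """Number of distinct data sources (0-3) a teacher reported for one data use."""
    unknown = set(sources_used) - SOURCES
    if unknown:
        raise ValueError(f"unrecognized source(s): {sorted(unknown)}")
    return len(set(sources_used))


def teacher_integration(uses: dict[str, set[str]]) -> float:
    """Mean integration across one teacher's data uses (no sources scores 0)."""
    return mean(integration_score(s) for s in uses.values())


def practice_integration(teachers: list[dict[str, set[str]]], use: str) -> float:
    """Mean integration for one data use across teachers."""
    return mean(integration_score(t.get(use, set())) for t in teachers)


# Hypothetical responses for two teachers on four of the data uses.
teacher_a = {
    "plan_whole_class": {"noticing"},
    "strengths_weaknesses": {"noticing", "documented", "standardized"},
    "assign_classroom": set(),
    "track_progress": {"noticing", "documented"},
}
teacher_b = {
    "plan_whole_class": {"noticing", "standardized"},
    "strengths_weaknesses": {"documented", "standardized"},
    "assign_classroom": {"standardized"},
    "track_progress": {"documented", "noticing"},
}
```

Here `teacher_integration(teacher_a)` averages the per-use scores 1, 3, 0, and 2, giving 1.5.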

Additionally, we examined how teachers prioritized data for planning and for in-the-moment instruction. As described previously, teachers were asked to select what they prioritized when making decisions about planning and in-the-moment instruction. We used these responses to create variables indicating whether teachers prioritized noticing, documented, and/or standardized data sources during these activities. We then categorized teachers as (a) non-data users, (b) data users—non-prioritizers, (c) data users—prioritizers of a single data type, or (d) data users—prioritizers of multiple data types. These categories were created separately for planning and in-the-moment data uses, as described in Table SM2 in the online supplement. Descriptively, a higher proportion of teachers prioritized data when interacting with children in the moment than when planning for instruction. However, when teachers prioritized data for planning instruction, a higher proportion reported using multiple data types (i.e., data users—prioritizers of multiple data types).
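The four-way categorization can be expressed as a small decision rule applied separately to each activity (e.g., whole-class planning, responding in the moment). The sketch below is our reading of the categories, not the authors' coding script. The enum labels paraphrase the category names, and treating a non-user as a non-data user regardless of any sources selected is an assumption.

```python
from __future__ import annotations

from enum import Enum


class Prioritization(Enum):
    """The four categories described above (labels paraphrased)."""
    NON_DATA_USER = "non-data user"
    NON_PRIORITIZER = "data user, non-prioritizer"
    SINGLE_TYPE = "data user, prioritizer of a single data type"
    MULTIPLE_TYPES = "data user, prioritizer of multiple data types"


SOURCES = {"noticing", "documented", "standardized"}


def categorize(uses_data: bool, prioritized: set[str]) -> Prioritization:
    """Classify one teacher for one activity from the sources they prioritized."""
    if not prioritized <= SOURCES:
        raise ValueError(f"unrecognized source(s): {sorted(prioritized - SOURCES)}")
    if not uses_data:
        return Prioritization.NON_DATA_USER
    if not prioritized:
        return Prioritization.NON_PRIORITIZER
    if len(prioritized) == 1:
        return Prioritization.SINGLE_TYPE
    return Prioritization.MULTIPLE_TYPES
```

For instance, `categorize(True, {"noticing", "standardized"})` yields the multiple-data-types category.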

Aim 2: Associations Between Teachers’ Data Practices and Children's Emergent Literacy Skills

For Aim 2 analyses, we initially included the 99 teachers who had at least one child with complete GRTR-R pre- and post-assessments. On average, we had data for 15 children per teacher (SD = 8.78). In 21 classrooms, co-teachers shared the same group of children. To ensure that each child was nested within a single teacher, we removed these duplicate links in two steps. First, we retained the teacher who reported delivering the highest proportion of instruction in the classroom (based on questionnaire responses). When this could not be determined, we randomly selected one teacher. This left a sample of 88 teachers uniquely linked to 1,088 children with both fall and spring scores. Teachers included in the Aim 1 and Aim 2 analyses did not differ significantly in characteristics, except for a higher representation of the “other” race category in Aim 2 (9.52% vs. 6.80%, p = .044). We had no missing data except in two models, in which data were missing by design because of skip logic (i.e., not-applicable responses).
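The two-step de-duplication rule can be sketched as follows. This is a hypothetical illustration: the input layout (classroom ID mapped to each co-teacher's reported share of instruction, or `None` if unreported), the helper names, and the fixed random seed are our assumptions. We also assume that "could not be determined" covers both missing reports and ties for the highest share, in which case one co-teacher is drawn at random.

```python
from __future__ import annotations

import random


def assign_unique_teacher(classrooms: dict[str, dict[str, float | None]],
                          seed: int = 0) -> dict[str, str]:
    """Pick one teacher per classroom so each child is nested in a single teacher."""
    rng = random.Random(seed)  # fixed seed keeps the random selection reproducible
    chosen: dict[str, str] = {}
    for room, teachers in classrooms.items():
        reported = {t: p for t, p in teachers.items() if p is not None}
        top = max(reported.values(), default=None)
        leaders = [t for t, p in reported.items() if p == top]
        if len(leaders) == 1:
            # Step 1: the teacher delivering the highest share of instruction.
            chosen[room] = leaders[0]
        else:
            # Step 2: lead teacher cannot be determined, so draw one at random.
            chosen[room] = rng.choice(sorted(teachers))
    return chosen


def link_children(children_by_room: dict[str, list[str]],
                  chosen: dict[str, str]) -> dict[str, str]:
    """Map each child ID to the single teacher retained for their classroom."""
    return {child: chosen[room] for room, kids in children_by_room.items() for child in kids}


classrooms = {"A": {"t1": 0.7, "t2": 0.3}, "B": {"t3": 0.5, "t4": 0.5}, "C": {"t5": None}}
chosen = assign_unique_teacher(classrooms)
links = link_children({"A": ["c1", "c2"], "C": ["c3"]}, chosen)
```

Classroom A keeps t1 because that teacher reported the larger share; classroom B is tied, so one of its two co-teachers is drawn at random.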

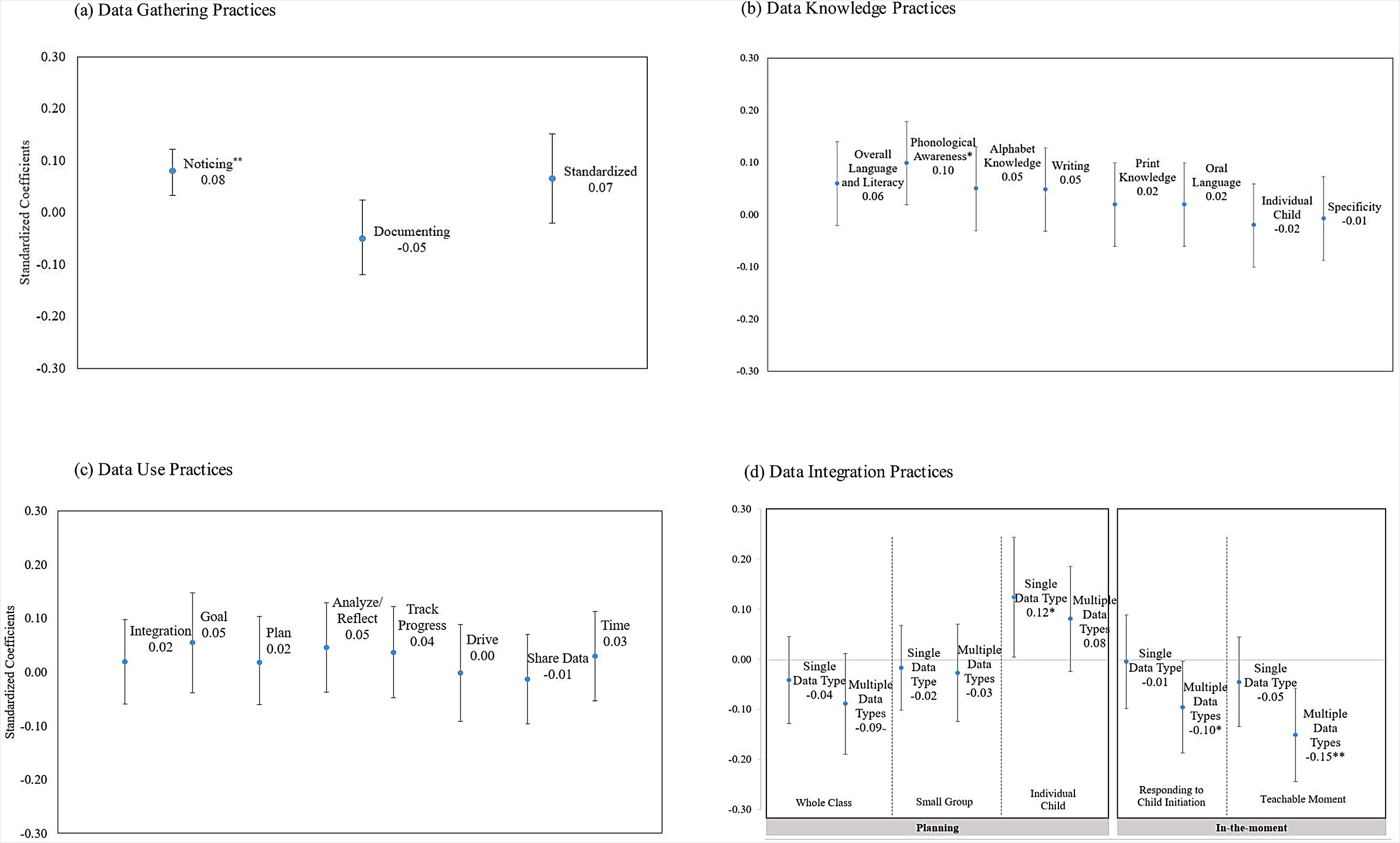

As described earlier, a subsample of children in one state received an emergent literacy-focused intervention. Thus, at Level 1 of the analytic models, we controlled for children's GRTR-R fall standard score, receipt of the intervention, and the number of days between the fall and spring assessments. At Level 2, we included the teacher-level predictors of interest, estimating a separate model for each predictor. A dummy code for state was included as a Level 3 predictor. We estimated all models in SPSS Version 24 using maximum likelihood. To interpret results in consistent units across models, we present standardized coefficients in Figure 1a–d. Given the exploratory nature of Aim 2, we interpret associations with p < .10 (Gaus et al., 2015).
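In equation form, one Aim 2 model can be sketched as follows for child i, teacher j, and state k. The notation is ours, and several details are assumptions not stated in the text: that the spring GRTR-R standard score is the outcome, that Level 1 slopes are fixed, and that state enters only as a fixed dummy with no random state intercept, since only two states were sampled.

```latex
\begin{aligned}
\text{Level 1:}\quad & \mathrm{Spring}_{ijk} = \pi_{0jk} + \pi_{1}\,\mathrm{Fall}_{ijk} + \pi_{2}\,\mathrm{Intervention}_{ijk} + \pi_{3}\,\mathrm{Days}_{ijk} + e_{ijk}, \qquad e_{ijk} \sim N(0, \sigma^{2}) \\
\text{Level 2:}\quad & \pi_{0jk} = \beta_{00k} + \beta_{01}\,\mathrm{Practice}_{jk} + r_{0jk}, \qquad r_{0jk} \sim N(0, \tau_{00}) \\
\text{Level 3:}\quad & \beta_{00k} = \gamma_{000} + \gamma_{001}\,\mathrm{State}_{k}
\end{aligned}
```

Here $\mathrm{Practice}_{jk}$ is the single teacher-level predictor entered in a given model, so $\beta_{01}$ is the association of interest, reported in standardized units in Figure 1a–d.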

Figure 1. (a–d) Standardized associations between data practices and children's outcomes, with 95% confidence intervals. Note. ∼p < .10, *p < .05, **p < .01, ***p < .001.

Data Gathering

We estimated three models to examine associations between data gathering and children's emergent literacy outcomes, with gathering of noticing, documented, and standardized data as the respective predictors (Figure 1a). Gathering data via noticing was positively associated with children's outcomes (β = .08, p = .001); gathering documented or standardized data was not.

Data Knowledge

To identify associations between teachers’ data knowledge and child outcomes, we estimated eight models (Figure 1b). The predictors were teachers’ knowledge of children's emergent literacy overall and by specific domain, as well as knowledge of individual children versus the whole class. We also included teachers’ specificity of knowledge, derived from the qualitative coding. Knowledge of phonological awareness was significantly and positively associated with children's outcomes (β = .10, p = .012).

Data Use

To identify associations between data use and child outcomes, we estimated 13 models (Figure 1c). The models used predictors that we coded based on the qualitative data (i.e., goal, plan, analyze/reflect, track progress, drive, share data, and time), and one that came from fixed-choice data (i.e., integration practices). No significant associations were found for these predictors.

Next, we derived the predictors for the models in Figure 1d from fixed-choice data on data prioritization. Because non-data users comprised fewer than three teachers, we combined non-data users and data users—non-prioritizers into one category, which served as the reference group. Unless otherwise noted, changing the reference group yielded no additional significant differences. Compared with the reference group, prioritizing multiple data types when planning for the whole class was marginally negatively associated with children's outcomes (β = –.09, p = .083). When planning an activity for an individual child, prioritizing a single data type was positively associated with children's outcomes relative to the reference group (β = .12, p = .042). In addition, compared with teachers who did not use or prioritize data, prioritizing multiple data types was associated with lower child outcomes, both when responding to children's initiations (β = –.10, p = .048) and when teaching in the moment (β = –.15, p = .002). This negative association also held when the reference group was changed to teachers who prioritized a single data type (responding to a child's initiation, β = –.10, p = .027; in the moment, β = –.12, p = .006). No significant associations were detected for small-group activities.
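Collapsing the sparse non-data-user category into the reference group and then re-running a model with a different reference group amounts to rebuilding the 0/1 dummy variables. The sketch below shows that recoding with hypothetical short labels ("non-prioritizer", "single", "multiple") and a hypothetical `dummy_code` helper; it illustrates the coding logic only and is not the SPSS syntax used in the study.

```python
from __future__ import annotations

# Sparse category folded into the reference group, as described above.
COLLAPSE = {"non-data user": "non-prioritizer"}


def dummy_code(categories: list[str],
               reference: str = "non-prioritizer") -> dict[str, list[int]]:
    """0/1 indicators for every non-reference level after collapsing sparse levels."""
    collapsed = [COLLAPSE.get(c, c) for c in categories]
    if reference not in collapsed:
        # Without a reference level the dummies would be collinear with the intercept.
        raise ValueError(f"reference level {reference!r} not present")
    levels = sorted(set(collapsed) - {reference})
    return {level: [int(c == level) for c in collapsed] for level in levels}


categories = ["non-data user", "non-prioritizer", "single", "multiple", "single"]
default_ref = dummy_code(categories)                  # reference: collapsed group
single_ref = dummy_code(categories, reference="single")  # reference: single data type
```

Switching `reference` to "single" yields the comparison reported above for multiple versus single data types.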

Overall, teachers reported engaging in a multitude of data practices, often with high levels of knowledge about children's emergent literacy skills (Aim 1). We observed a small set of data practices that were associated with children's emergent literacy skills. However, there were also some unexpected negative associations between children's skills and teachers’ data use (Aim 2).

Discussion

We sought to confirm and elaborate findings from our qualitative work on EC teachers’ emergent literacy data practices with a larger sample and to explore links to children's literacy skills. This work provides critical insight into teachers’ emergent literacy data practices and their associations with children's outcomes, a relatively unexplored phenomenon in EC to date. Our study contributes information not only about the types of data that teachers gather but also about what teachers know and, most importantly, how they use those data. This understanding is essential for achieving the aim of using data to improve outcomes for children. Next, we discuss our findings and their complexity and note implications for practice and future research.

High Levels of Data Practices

Overall, teachers reported gathering data via a variety of strategies and sources. Over 95% gathered noticing and documented observation data, indicating that teachers were attending to this aspect of data practices. Fewer teachers (69%) reported having standardized data, which is notable because all teachers in the study had access to standardized data from GRTR-R. This may partly reflect our finding that teachers collected standardized data less frequently (about every six months) than noticing and documented observation data, which they collected almost daily and weekly, respectively. From a practical standpoint, this makes sense given both how these types of data are gathered and their intended purposes. For example, noticing allows teachers to gather detailed, in-the-moment information about the context, whereas standardized assessments are more time-consuming to administer and provide information about children's performance relative to typical development (Lonigan et al., 2011). Thus, these findings about types and frequency are in some ways expected and mirror other research showing teachers’ preferences for nonstandardized data (Barnes et al., 2019; Bradbury, 2014; Gischlar & Vesay, 2018).

Notably, gathering noticing data was the only data-gathering practice associated with children's outcomes. Noticing has substantial support in the extant literature as a means of learning about and enhancing children's learning (see Gaudin & Chaliès, 2015, for a comprehensive review). Teachers have many opportunities to gather noticing data in their classrooms, and these data may provide immediately actionable information. The key to teacher noticing is understanding what to attend to in the environment and then interpreting those data to understand children's skills (Mason, 2011; van Es & Sherin, 2002). Although this finding needs to be replicated in other samples, noticing could be a key target for professional development aimed at making teachers’ use of noticing data more sophisticated, especially given evidence that noticing can be enhanced through multiple training formats (Cherrington & Loveridge, 2014; Gaudin & Chaliès, 2015). Because noticing data are not readily documented, how these data translate into teachers’ practices merits further examination. We did not find associations for documenting, a commonly recommended practice in EC (Edwards et al., 2019; Hall et al., 2010), or for standardized assessment. This could indicate that teachers are less sure of how to interpret and use these data (Barnes et al., 2019; Miller-Bains et al., 2017). If we expect teachers to gather these data to support children's outcomes, this issue needs further consideration.

Based on the data they gathered, teachers generally reported high levels of knowledge about children's emergent literacy skills. In their fixed-choice responses, teachers reported gathering data with the intent to learn about individual children's skills, yet this pattern was not replicated in the qualitative data. Moreover, despite this confidence, teachers’ reported knowledge was not associated with children's outcomes, except for knowledge of phonological awareness. The qualitative data may help explain this: only a fifth of teachers specified what they learned about children's skills, and a third made no mention of emergent literacy skills. This suggests that, although teachers may believe they know a great deal about children's skills, their understanding of how children develop these skills may not be robust (as evidenced by the absence of such detail in their responses). Further, teachers may not know how to use this knowledge to differentiate instruction for individual children. These possibilities have important implications for translating knowledge about children's skills into practice, although more research is needed.

Phonological awareness and oral language showed patterns in data gathering, knowledge, and child outcomes that differed from those of the other emergent literacy domains. Specifically, teachers reported lower average knowledge about phonological awareness and oral language. The literature has documented instruction in these areas as being of lower quality and frequency in EC settings (Cabell et al., 2013; Pelatti et al., 2014). For these domains, teachers reported relying more on noticing data than on other sources to learn about children's skills. This mirrors the finding by Carson and Bayetto (2018) that teachers most often relied on informal data to understand children's phonological awareness skills. One explanation is that teachers may not find documented or standardized measures sufficient for learning about these skills; however, given the inconsistency of noticing data for monitoring children's skills (Lonigan et al., 2011), this may not be an optimal approach. Teachers may also have less knowledge of these two domains overall and of how to connect them in assessment and practice. Importantly, higher reported knowledge about phonological awareness was associated with better child outcomes, suggesting that data knowledge about phonological awareness is important when using data to enhance children's outcomes.

Finally, teachers reported engaging in many data practices, often at high frequency. This is partially consistent with research by Brawley and Stormont (2014), who found that teachers endorsed most data uses as important, but contrasts with our previous study, in which we observed more variability in teachers’ data uses. Specifically, data use for planning, which was reported at higher frequencies here and by other researchers (Carson & Bayetto, 2018), was infrequent in our prior work. The open responses also revealed a new data practice, sharing data with others, which has been reported by other researchers (Brawley & Stormont, 2014; Zweig et al., 2015) and is a recommended data practice (Division for Early Childhood, 2014; National Association for the Education of Young Children, 2020). These differences in data use could be an artifact of study methodology (i.e., teacher self-report via questionnaire vs. qualitative observations and interviews), practices specific to the new sample of teachers, or social desirability bias given public agencies’ increased focus on data use (Bertrand & Marsh, 2015; Coburn & Turner, 2012). These conflicting findings warrant further research across teachers and contexts, particularly because none of these data uses were associated with children's emergent literacy outcomes.

Complexity of Understanding Data Practices

Teachers engaged in complex data practices, integrating and prioritizing data differently depending on the use. Teachers typically integrated two data sources, although the level of integration varied by specific data use (e.g., planning), and integration was not associated with child outcomes. We also investigated how teachers prioritized data in their practice. Overall, prioritization was more common for in-the-moment instruction, with teachers focusing on one data source. Importantly, when planning for individual children, children of teachers who prioritized one data source had higher outcomes than children of teachers who did not prioritize data, suggesting value in teachers prioritizing data when planning instruction. By contrast, prioritizing multiple data sources for in-the-moment instruction was negatively associated with children's outcomes. This finding ran counter to what we might have expected (i.e., that prioritizing multiple data sources would be associated with higher child outcomes). It could be that, for in-the-moment instruction, teachers who prioritized multiple data types were responding to children who needed more intensive instruction, so outcomes were lower. Alternatively, it could matter which types of data sources teachers are integrating and the nature of the information those sources provide. However, given that this finding did not meet traditional levels of statistical significance, it needs attention in future research.

Limitations

Although this questionnaire was based on our qualitative framework and was piloted before administration, self-report via questionnaire may not be the optimal way to understand data practices. Indeed, as described in our results, differences in methods, including question phrasing, may contribute to some of the differences in findings across studies. Approaches that bring together the field's expectations regarding data practices with teachers’ experiences are needed to better understand this phenomenon and how to use data to improve children's outcomes. Triangulation across measures of data practices, enactment of instruction, and child outcomes should also be considered. Our study relied solely on teachers’ reported practices without observation of enacted practices; future efforts will need to examine these connections. We were also limited to GRTR-R in terms of the child data available, and findings may have differed had we been able to analyze child data more specifically aligned with emergent literacy domains (e.g., phonological awareness data practices and phonological awareness learning). Additionally, the effects we found were small (|β| ≤ .15), although it is important to note that these analyses used standard scores, which account for children's development. Finally, given the role of context at both the program and state levels, it is important to extend this research to additional samples and settings. Although we examined the data practices of teachers in two states, we did not directly investigate the connection between policies and data practices, which is critical for understanding systems of data use (Bertrand & Marsh, 2015; Young & Kim, 2010), or the linguistic diversity of children, a critical consideration in supporting emergent literacy.

Conclusion

Collectively, our findings underscore the complexity of teachers’ data practices and the difficulty of measuring these constructs. As such, it is notable that we were able to detect any associations between practices and children's outcomes. Our research provides important insight into EC teachers’ data practices while identifying a continued need for more nuanced investigations of data practices and child outcomes. Specifically, this work highlights that teachers are confident in reporting their data gathering, knowledge, and use but may need more training in how to use data and translate this use into their classroom practices. Additional methods for assessing these constructs are needed, such as those that bridge embedded qualitative methods with more context-driven survey measures, to further our knowledge and achieve the aims of data-related policies to improve outcomes for children.

Supplemental Material

Supplemental material for Early Childhood Teachers’ Emergent Literacy Data Practices by Rachel E. Schachter, Gloria Yeomans-Maldonado and Shayne B. Piasta in Journal of Literacy Research:

sj-docx-1-jlr-10.1177_1086296X231163116

sj-docx-2-jlr-10.1177_1086296X231163116

sj-docx-3-jlr-10.1177_1086296X231163116

sj-docx-4-jlr-10.1177_1086296X231163116

sj-docx-5-jlr-10.1177_1086296X231163116

sj-docx-6-jlr-10.1177_1086296X231163116

Footnotes

Acknowledgments

The authors would like to thank the teachers who participated in this study.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Spencer Foundation.

References
