Abstract

The confluence of No Child Left Behind and the National Reading Panel report on the five essential components of reading instruction forged a path for struggling adolescent readers that supports a narrow curriculum designed to address gaps in constrained skills. Adolescents from a large school district in Tennessee who failed state assessments in reading (N = 94) were assessed using measures of phonemic awareness, decoding, fluency, orthography, vocabulary, and comprehension. Factor and cluster analyses of the assessment data revealed a heterogeneous population of students. Based on three factors (meaning, decoding, and rate), four clusters emerged representing varying abilities.

The implementation of the No Child Left Behind Act of 2001 (NCLB) expanded mandated accountability systems based on student performance on standardized reading assessments. These high-stakes assessments are first administered to students in Grade 3 and are designed to measure progress on state reading standards. NCLB testing requirements extend through Grade 8, but the policy gives little attention to research on, or instruction of, adolescents (Conley & Hinchman, 2004).

The intended goals of NCLB include (a) increased accountability, (b) more choices for parents and students, (c) greater flexibility, and (d) putting reading first (NCLB, 2001). These goals were designed to narrow the achievement gap and create a level playing field for disadvantaged students through the assessment of progress on challenging state academic standards in reading and mathematics.

Since the inception of NCLB, reading instruction in the early grades has received increased funding and attention. Funding for reading initiatives supported the development and implementation of scientifically based reading programs in kindergarten through second grade that focused on the five pillars of reading instruction set forth by the National Reading Panel: phonemic awareness, phonics, oral reading fluency, vocabulary, and comprehension. Each of these areas has been identified as a predictor of future reading success, and, as such, the five have been viewed as a hierarchy for reading instruction in which mastery of each is necessary for continued growth in the next (National Institute of Child Health and Human Development [NICHD], 2000; Paris, 2005; Spear-Swerling, 2004).

Linn (2000) argues that the focus on high-stakes standardized assessments narrows the curriculum, and Rupp and Lesaux (2006) agree that such narrowing leads to increased instruction in constrained skills, or those with a finite ceiling of mastery, such as phonemic awareness, decoding, and fluency (Paris, 2005), and to less instructional time devoted to the multifaceted processes of vocabulary and comprehension (Lesaux & Kieffer, 2010). They argue that this occurs because state assessments are not equipped to disentangle the many components involved in comprehending text; reading comprehension depends on factors related to the text, the reader, and the act of comprehension itself (Rupp & Lesaux, 2006). Furthermore, Rupp and Lesaux caution that it cannot be assumed that a state assessment of reading is a valid measure of the construct(s) it purports to measure, nor that the “results from the assessment can be used for decision making at two different levels, at an aggregate level to monitor student achievement and an individual level to guide instruction and intervention programs” (p. 318).

Rupp and Lesaux (2006) empirically investigated the relationship between performance on a high-stakes standardized assessment of reading and performance on a diagnostic battery of reading skills. They assessed 1,111 fourth-grade students, measuring speed and accuracy of word reading, phonological and syntactic knowledge, spelling skills, and working-memory skills. Rupp and Lesaux found little to no correlation between students’ proficiency levels on the standardized assessment of reading and the profiles developed from the diagnostic battery of reading skills.

Yet students scoring below proficient on high-stakes assessments are often identified as being at some level of risk and placed in remedial reading classes (Buly & Valencia, 2002; Franzak, 2006; Lesaux & Kieffer, 2010; Pressley, 2004). Franzak (2006) suggests these placements are based on a deficit model, which incorporates the assumption that students performing poorly on high-stakes assessments of reading “have not yet developed the necessary skills for functioning at a particular grade level” (p. 214). This interpretation of standardized test scores suggests that students are incapable of learning the material presented at their grade level and must instead receive instruction designed for earlier grades. Such a simplistic interpretation of reading achievement measures overlooks the many adolescents who are capable of learning about complex topics.

Buly and Valencia (2002) tested the deficit-model assumption. Several reading assessments, ranging from phonics to comprehension, were administered to 108 fourth-grade students who failed the Washington Assessment of Student Learning. Most of the students had difficulty reading fluently and for meaning but had sufficiently developed decoding and word recognition skills. For these fourth graders, instructional interventions steeped in decoding would have been superfluous, because decoding-based instruction would not match the variety of reading skills, or the needs, indicated by the data (Buly & Valencia, 2002).

Building on the work of Buly and Valencia (2002) and Rupp and Lesaux (2006), Lesaux and Kieffer (2010) examined the complex factors related to the comprehension difficulties of diverse learners, specifically language-minority (LM) learners. Using latent class analysis, Lesaux and Kieffer developed profiles of 262 struggling readers based on a battery of assessments related to reading skills and working memory. Their analysis yielded three distinct profiles, which together indicated that the majority of students in the study had developed basic fluency skills but had low vocabulary knowledge. Lesaux and Kieffer reported, “LM status was not found to predict membership in any of the three latent classes; that is, LM learners were not found to disproportionately demonstrate any of the three skill profiles” (p. 616). Thus, developing substantially different instruction for LM learners is unwarranted. Instead, the authors call on teachers to implement models of meaningful, data-driven decision making to provide appropriate instruction for all students who struggle with reading.

In a study of 161 fourth- and fifth-grade students, Leach, Scarborough, and Rescorla (2003) administered a series of assessments to students who had been identified as reading disabled early (before third grade; n = 31), students who had been identified as reading disabled late (after third grade; n = 35), and normally achieving students (n = 95) to determine whether students with late-emerging reading disabilities had deficits in word identification, comprehension, or both. Results revealed heterogeneous reading profiles. Students labeled with late-emerging reading disabilities exhibited a range of reading difficulties: “35% had word-level processing deficits in combination with adequate comprehension skills, 32% showed weak comprehension skills accompanied by good lower level skills, and 32% exhibited both kinds of difficulty” (p. 220). The authors then reviewed third-grade achievement results, which indicated higher achievement levels for students labeled with late-emerging reading disabilities, and concluded that “their reading abilities were not just late identified but actually emerging” (p. 211). These students showed normal development in reading through third grade but were demonstrating difficulty by fourth grade, as they were transitioning to the strategic reading stage of development (Spear-Swerling, 2004).

Saenz and Fuchs (2002) examined the reading abilities of 111 adolescents with learning disabilities. Students read two narrative passages and two expository passages and answered explicit and implicit comprehension questions about each. The authors noted that students responded equally well to explicit questions for narrative and expository text but were challenged by implicit questions, particularly about the expository passages. Although this study indicated that the students demonstrated word-level skills such as decoding, combining its assessments with additional measures of other skills would provide a more detailed profile of these adolescent readers.

In many ways, the discussion of adolescent reading is framed within a conception of reading difficulties that suggests a certain homogeneity: students who are below proficient on standardized assessments of reading, those who are struggling, those who are missing specific literacy skills, and those designated as reading below grade level. Although we have some evidence that patterns of variation exist in the elementary grades (Buly & Valencia, 2002; Rupp & Lesaux, 2006), we still know too little about the heterogeneity of adolescent readers and its implications for instructional intervention policies and practices (Lesaux & Kieffer, 2010). Without this knowledge, it is difficult to define appropriate intervention designs for adolescent struggling readers, develop proficiency standards to estimate students’ progress, and effectively plan for instruction that acknowledges the abilities and meets the needs of these students.

Paris (2005) proposed that the five pillars of reading instruction are essential to learning to read and that these skills become coordinated as students develop into increasingly proficient readers. The skills are acquired at various rates and, as Paris argues, vary in scope, importance, and individual differences. Paris divides the skills into two categories: constrained and unconstrained.

Constrained skills are those that have a ceiling for mastery, such as letter knowledge and phonemic awareness; unconstrained skills, such as vocabulary and comprehension, have no ceiling. Paris notes that constrained skills are acquired relatively rapidly and are easier to assess than unconstrained skills, which are not a finite set. Much more research has been conducted on the instruction and assessment of constrained skills than on vocabulary and comprehension, an imbalance that implicitly suggests constrained skills deserve greater importance. Because they are acquired rapidly, however, constrained skills are typically mastered early in life, and although additional training in constrained skills later in life may raise test scores for those skills, additional training in an already mastered skill does not transfer to the development of unconstrained skills (Paris, 2005). Paris (2005) suggests that mastery of constrained skills follows a sigmoid growth function, “in which initial acquisition of a skill is slow, followed by a period of rapid learning, and then followed by a slower rate of growth as asymptotic performance is approached” (p. 190). Therefore, adolescent readers may demonstrate varying levels of proficiency on assessments of constrained skills, but additional instruction in constrained skills will not increase development of skills such as vocabulary and comprehension (Lesaux & Kieffer, 2010). This is not to say that all adolescents have mastered constrained skills. On the contrary, many do require instruction in constrained skills to progress successfully as readers.

The purpose of this multivariate correlational study is to determine the patterns of reading abilities of struggling adolescent readers. More broadly, the study was designed to add to the body of work demonstrating the individual variability of these students and of the reading skills they bring to middle school classrooms (Lesaux & Kieffer, 2010). This addition to the literature is intended to support instructional decision making by classroom teachers and has the potential to inform policy decisions for adolescent readers.
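
Paris’s (2005) description of a sigmoid growth function can be made concrete with a logistic curve. The following Python sketch is illustrative only: the function names and the ceiling, midpoint, and rate parameters are hypothetical choices, not values estimated from this study or reported by Paris. It reproduces the pattern the quotation describes, with small gains early, large gains near the midpoint, and small gains again as performance approaches its asymptote.

```python
import math


def logistic_mastery(t: float, ceiling: float = 100.0, midpoint: float = 5.0,
                     rate: float = 1.2) -> float:
    """Hypothetical logistic (sigmoid) mastery curve for a constrained skill.

    ceiling:  asymptotic (maximum) performance on the skill
    midpoint: time at which growth is fastest
    rate:     steepness of the rapid-learning phase
    All parameter values are illustrative assumptions, not empirical estimates.
    """
    return ceiling / (1.0 + math.exp(-rate * (t - midpoint)))


def gains(times):
    """Growth between consecutive time points along the curve."""
    levels = [logistic_mastery(t) for t in times]
    return [later - earlier for earlier, later in zip(levels, levels[1:])]


if __name__ == "__main__":
    # Print mastery at a few time points: slow start, rapid middle, slow finish.
    for t in range(0, 11, 2):
        print(f"t={t:2d}  mastery={logistic_mastery(t):6.1f}")
```

Under these assumed parameters, the gain between two late time points is a small fraction of the gain near the midpoint, which is the sense in which further practice on a nearly mastered constrained skill yields little additional growth on that skill.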

Method

Participants

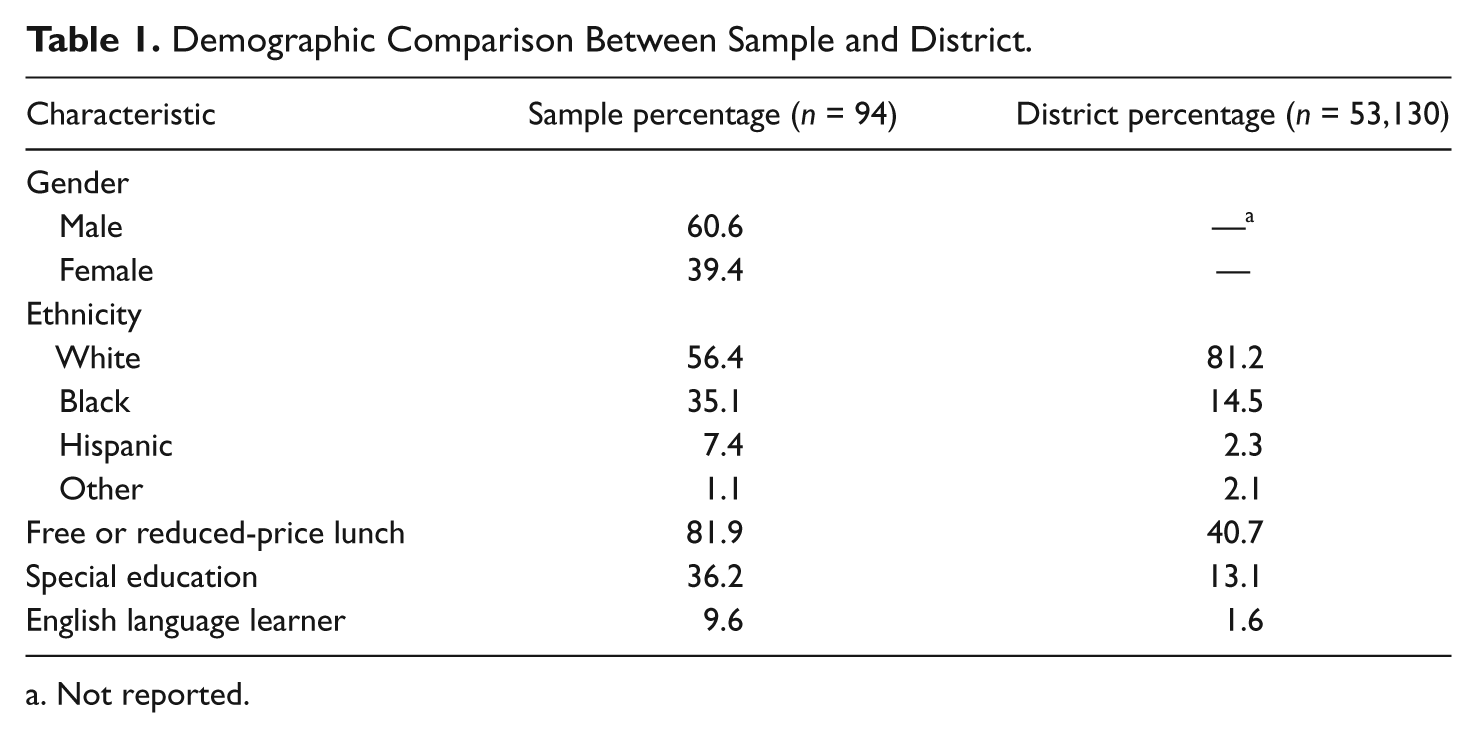

Data were collected during the fall of the 2006-2007 academic year from a large school district in Tennessee. The district enrolls 53,130 students, who are predominantly Caucasian (81%); 41% of the students qualify for free or reduced-price lunch, 13% receive special education services, and 2% are English language learners (ELLs). The district director of curriculum and accountability chose four middle schools to participate, and the schools chosen were representative of the district demographics (Table 1). Once the schools were identified, 94 students were selected to participate in the study based on two criteria: (a) they were enrolled in a district middle school (Grades 6-8), and (b) they scored below proficient on the 2005-2006 Tennessee Comprehensive Assessment Program (TCAP) in reading (Tennessee Department of Education [TNDOE], 2006). Although the schools were representative of the district, the student sample differed considerably from district demographics (Table 1). Only 56% of the sample was Caucasian (36% Black, 7% Hispanic, 1% Other). The proportion of students who qualified for free or reduced-price lunch (82%) was twice that of the district (41%), and the proportions receiving special education (36%) and ELL (10%) services were also considerably larger than the district’s (13% and 2%, respectively). These discrepancies suggest that, in this district, students who score below proficient on standardized assessments are disproportionately poor, minority, and receiving special services.
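
The sample-versus-district comparison above can be summarized as representation ratios (a group’s share of the sample divided by its share of the district). The Python sketch below uses only the percentages reported in the text; the dictionary and function names are hypothetical. A ratio above 1 means a group is overrepresented in the sample. The computation is descriptive only and does not test statistical significance.

```python
# Percentages reported in the text for the district and the study sample.
# FRL = free or reduced-price lunch; SWD = students receiving special education;
# ELL = English language learners.
DISTRICT = {"Caucasian": 81, "FRL": 41, "SWD": 13, "ELL": 2}
SAMPLE = {"Caucasian": 56, "FRL": 82, "SWD": 36, "ELL": 10}


def representation_ratios(sample: dict, district: dict) -> dict:
    """Ratio of each group's share in the sample to its share in the district."""
    return {group: sample[group] / district[group] for group in district}


if __name__ == "__main__":
    for group, ratio in representation_ratios(SAMPLE, DISTRICT).items():
        print(f"{group:10s} {ratio:4.2f}x")
```

For example, the free or reduced-price lunch ratio is 82 / 41 = 2.0, matching the statement that the sample proportion was twice the district’s.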

Demographic Comparison Between Sample and District.

Not reported.

Assessments and Procedure

Each participant (n = 94) was administered a battery of five assessments that measured phonemic awareness, phonics, decoding, fluency, spelling, vocabulary, and comprehension. The primary researcher administered each assessment individually. Each administration took place in a quiet room within the school library and lasted approximately 45 to 90 minutes in a single sitting. Students who did not complete all assessments were excluded from the study; thus, more than 94 students were initially assessed, but only 94 completed the entire battery. The sample size is a limitation of this research. However, given the individual administration of assessments, and given that the sample is similar in size to that of Buly and Valencia (2002; n = 108), the sample can inform the literature on adolescents who struggle with reading and provides a platform on which future research may be developed.

The skills measured by the assessments represent both highly and less constrained skills (Paris, 2005) and the five pillars of reading instruction endorsed by the National Reading Panel (NICHD, 2000). The author acknowledges that key individual differences, specifically those noted by Rupp and Lesaux (2006) and Lesaux and Kieffer (2010) as related to the text, the reader, and the comprehension process itself, are not tested in this study. Although the battery reflects a conservative view of reading ability that does not address all of the factors involved in reading comprehension, the assessments were chosen to mirror those in the Buly and Valencia (2002) study, because their approach highlighted the specific types of interventions offered to students identified as struggling readers on the basis of high-stakes standardized assessments.

Results on each of these assessments were compared with individual students’ TCAP results. In response to the requirements of NCLB, the state of Tennessee revised the TCAP, a criterion-referenced standardized assessment that monitors student proficiency on the Tennessee content standards (specific, measurable skills) in Grades 3-8 (TNDOE, 2006). Teachers receive score reports that provide each student’s scale score and performance level (advanced, proficient, below proficient) on seven separate reporting categories in reading: content, meaning, vocabulary, writing/organization, writing/process, grammar/conventions, and techniques/skills (TNDOE, 2006). Students scoring below proficient did not answer enough questions correctly to satisfy the minimum state requirements in that reporting category (TNDOE, 2006). According to the TNDOE (2006), schools used TCAP results to make instructional decisions about individual students, and the Tennessee Reading Policy (Tennessee State Board of Education, 2005) calls for students earning below-proficient scores to receive “direct and explicit reading instruction using a comprehensive SBRR [Scientifically Based Reading Research] program that systematically and effectively includes the five essential elements of reading [designated by the National Reading Panel] taught appropriately per grade level” (p. 4). Although the use of high-stakes standardized assessments to make instructional decisions for individual students proliferated following NCLB, it was the National Reading Panel report that had a major influence on the type(s) of instruction students would receive on the basis of their scores, and the combination of these two documents influenced the instructional decisions made for the students in this study (Tennessee State Board of Education, 2005; TNDOE, 2006).

The assessments used in this research parallel those used by Buly and Valencia (2002), with some variation for several reasons. First, Buly and Valencia reported that results on the Comprehensive Test of Phonological Processing (CTOPP; Wagner, Torgesen, & Rashotte, 1994) did not correlate with scores on the other assessments, and therefore those results were not used in determining patterns of reading abilities. Thus, the CTOPP was not used in this research. To remain consistent in terms of the skills being assessed, the Test of Word Reading Efficiency (TOWRE; Torgesen, Wagner, & Rashotte, 1999) replaced the CTOPP. The TOWRE was chosen because it assesses similar skills (phonological awareness) and reports satisfactory reliability. In addition, Schatschneider et al. (2004) administered the TOWRE in a study demonstrating that seventh graders who scored at the lowest performance levels of the Florida Comprehensive Achievement Test (FCAT) scored below average on measures of phonemic decoding ability. Although the authors suggested that these scores, and the skills they represent, were related, they provided little evidence beyond percentile rankings to demonstrate a correlation between TOWRE results and low performance levels on the FCAT.

Another assessment not used in the Buly and Valencia (2002) study was the Intermediate Spelling Inventory (Bear, Invernizzi, Templeton, & Johnston, 2004). Bear et al. (2004) reported on the link between reading development and orthographic (spelling) development and designed a series of spelling inventories to assess the latter. The Intermediate Spelling Inventory was chosen for this study because it is the only inventory designed for use with all of the grades participating in this research. Buly and Valencia qualitatively analyzed individual writing samples to assess spelling knowledge. For uniformity of data, however, the researcher chose to administer the Intermediate Spelling Inventory, because its scoring assigns feature points to students’ spellings, which are potentially less subjective than the scoring of a writing sample. The feature points were subsequently used as data points in the factor and cluster analyses. The remaining assessments administered to this sample of adolescent struggling readers paralleled those used by Buly and Valencia. When available, versions of the assessments that had been updated (e.g., the Qualitative Reading Inventory-3 was updated to the Qualitative Reading Inventory-4) or renormed since the Buly and Valencia study were used. Each assessment is discussed below, beginning with those measuring the most highly constrained skills and ending with those measuring the least constrained skills.

Woodcock-Johnson Diagnostic Reading Battery-III

The Basic Reading Skills cluster of the Woodcock-Johnson Diagnostic Reading Battery-III (WJR-III; Woodcock, 1998) was administered to students. This cluster includes the Letter-Word Identification and Word Attack subtests. Letter-Word Identification measures students’ ability to identify letters accurately and to read mono- and multisyllabic words. Word Attack measures students’ ability to apply sound-symbol (phonic) relationships and structural analysis, or decoding skills, to pronounce pseudowords. According to Woodcock (1998), the Basic Reading Skills cluster provides an “aggregate measure of word identification and phonic and structural analysis” (p. 12). The cluster reliability is .93 (Woodcock, 1998).

Test of Word Reading Efficiency

The TOWRE (Torgesen et al., 1999) consists of two subtests, Sight Word Efficiency and Phonemic Decoding Efficiency. Sight Word Efficiency measures the number of real printed words that are quickly and accurately identified, reflecting students’ ability to recognize familiar words as whole units. Phonemic Decoding Efficiency measures the number of pronounceable nonwords that are quickly and accurately decoded; it stresses students’ ability to read “pronounceable non-words out of context and fully analyze each word to produce the correct pronunciation” (p. 8). According to Torgesen et al. (1999), “The use of the word efficiency in the title of the TOWRE is meant to communicate that the total scores on the test reflects both the accuracy and the speed with which children can execute word reading processes” (p. 8), as students are given 1 minute to read as many words or nonwords as they can. Test-retest reliability is .88 (Torgesen et al., 1999).

Intermediate Spelling Inventory

The Intermediate Spelling Inventory (ISI) measures students’ orthographic knowledge. Spelling inventories consist of words specifically chosen to represent a variety of spelling features or patterns at increasing levels of difficulty (Bear et al., 2004). Assessed patterns progress from consonant-vowel-consonant (CVC) words that address beginning, middle, and ending sounds (e.g., fan), to words with more complex vowel patterns (e.g., spoil), to more sophisticated words built on Greek and Latin roots (e.g., fortunate). Students spell a list of words, and their errors determine the orthographic stage at which they operate. According to Bear et al. (2004), “Becoming fully literate is absolutely dependent on fast, accurate recognition of words in texts, accurate production of words in writing so that readers and writers can focus their attention on making meaning” (p. 4). Reliability for the ISI has not been reported.
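
To illustrate why feature points are less subjective than scoring a writing sample, the following Python sketch scores spelling attempts against a feature guide. The guide is hypothetical: the three words echo the examples above, but the features assigned to them and the substring-matching rule are simplifications invented for illustration, not the published ISI scoring guide (Bear et al., 2004). Because scoring is a deterministic lookup, any two scorers applying the same guide obtain the same totals.

```python
# Hypothetical feature guide: each target word maps to the spelling features
# (substrings) that each earn one feature point when present in the attempt.
# Illustrative only; NOT the published ISI feature guide.
FEATURE_GUIDE = {
    "fan": ["f", "a", "n"],
    "spoil": ["sp", "oi", "l"],
    "fortunate": ["for", "tun", "ate"],
}


def score_inventory(attempts: dict) -> tuple:
    """Return (feature points, words spelled correctly) for one student.

    attempts maps each target word to the student's spelling of it.
    A feature earns a point if its substring appears in the spelling
    (a deliberately crude, hypothetical matching rule).
    """
    feature_total = 0
    words_correct = 0
    for word, features in FEATURE_GUIDE.items():
        spelling = attempts.get(word, "").strip().lower()
        feature_total += sum(1 for feature in features if feature in spelling)
        words_correct += int(spelling == word)
    return feature_total, words_correct


if __name__ == "__main__":
    student = {"fan": "fan", "spoil": "spoyl", "fortunate": "forchunit"}
    print(score_inventory(student))
```

In this example, "spoyl" earns credit for the blend and final consonant but not the vowel pattern, which is the kind of partial, feature-level information a words-correct count alone would miss.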

Peabody Picture Vocabulary Test

According to Dunn and Dunn (1997), the Peabody Picture Vocabulary Test-III (PPVT-III) serves two purposes: “(1) as an achievement test of receptive (hearing) vocabulary attainment for standard English; and (2) as a screening test of verbal ability” (p. x). According to Buly and Valencia (2002), this assessment measures “receptive vocabulary knowledge independent of students’ ability to decode words” (p. 225). For each item, the examiner says a word, and the student selects, from a set of four pictures, the one that best illustrates its meaning; testing continues until the student reaches the ceiling defined in the test manual. The median test-retest reliability is .92 (Dunn & Dunn, 1997).

Qualitative Reading Inventory-4

The Qualitative Reading Inventory-4 (QRI-4; Leslie & Caldwell, 2006) is a commercially available informal reading inventory. An approximate reading level is first determined through word lists, which students read aloud to the researcher. The researcher then determines an appropriate level at which to begin the comprehension assessment, based on the highest independent level on the word lists, which requires 90% accuracy (Leslie & Caldwell, 2006). Following the assessment protocol, the researcher asks students a series of concept questions to determine their prior knowledge of a passage’s topic before reading. Students then read narrative and expository passages aloud. As students read, the researcher marks all oral reading errors and times the reading to determine accuracy and rate. After each passage, a series of questions provides an estimate of the student’s comprehension. This process continues until an independent and an instructional reading level are determined for each student. The independent reading level requires word-recognition accuracy of 98% or higher and a comprehension score of 90% or higher on the passage. Each student’s words correct per minute (WCPM) score was calculated from the passage read at the student’s independent reading level. Interrater reliabilities are .99 for Oral Reading Miscues and .98 for Comprehension (Leslie & Caldwell, 2006).
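
The accuracy, rate, and independent-level criteria described above reduce to simple formulas. In this Python sketch, the function names and the example passage (250 words, 4 miscues, read in 150 seconds) are hypothetical, and every marked miscue is assumed to count as an error; the QRI-4 manual’s own conventions should govern actual scoring. The 98% and 90% thresholds are the ones stated in the text.

```python
def accuracy_pct(words_in_passage: int, miscues: int) -> float:
    """Word-recognition accuracy: percentage of words read correctly."""
    return 100.0 * (words_in_passage - miscues) / words_in_passage


def wcpm(words_in_passage: int, miscues: int, seconds: float) -> float:
    """Words correct per minute: words read correctly, scaled to 60 seconds."""
    return (words_in_passage - miscues) * 60.0 / seconds


def is_independent(accuracy: float, comprehension_pct: float) -> bool:
    """Independent level as stated in the text: word recognition >= 98%
    and comprehension >= 90% on the passage."""
    return accuracy >= 98.0 and comprehension_pct >= 90.0


if __name__ == "__main__":
    # Hypothetical passage: 250 words, 4 miscues, read aloud in 150 seconds.
    acc = accuracy_pct(250, 4)
    rate = wcpm(250, 4, 150.0)
    print(f"accuracy={acc:.1f}%  WCPM={rate:.1f}  independent={is_independent(acc, 90.0)}")
```

For this hypothetical passage, 246 words are read correctly, giving 98.4% accuracy and 98.4 WCPM; with a comprehension score of 90%, the passage would meet the independent-level criteria.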

Results and Discussion

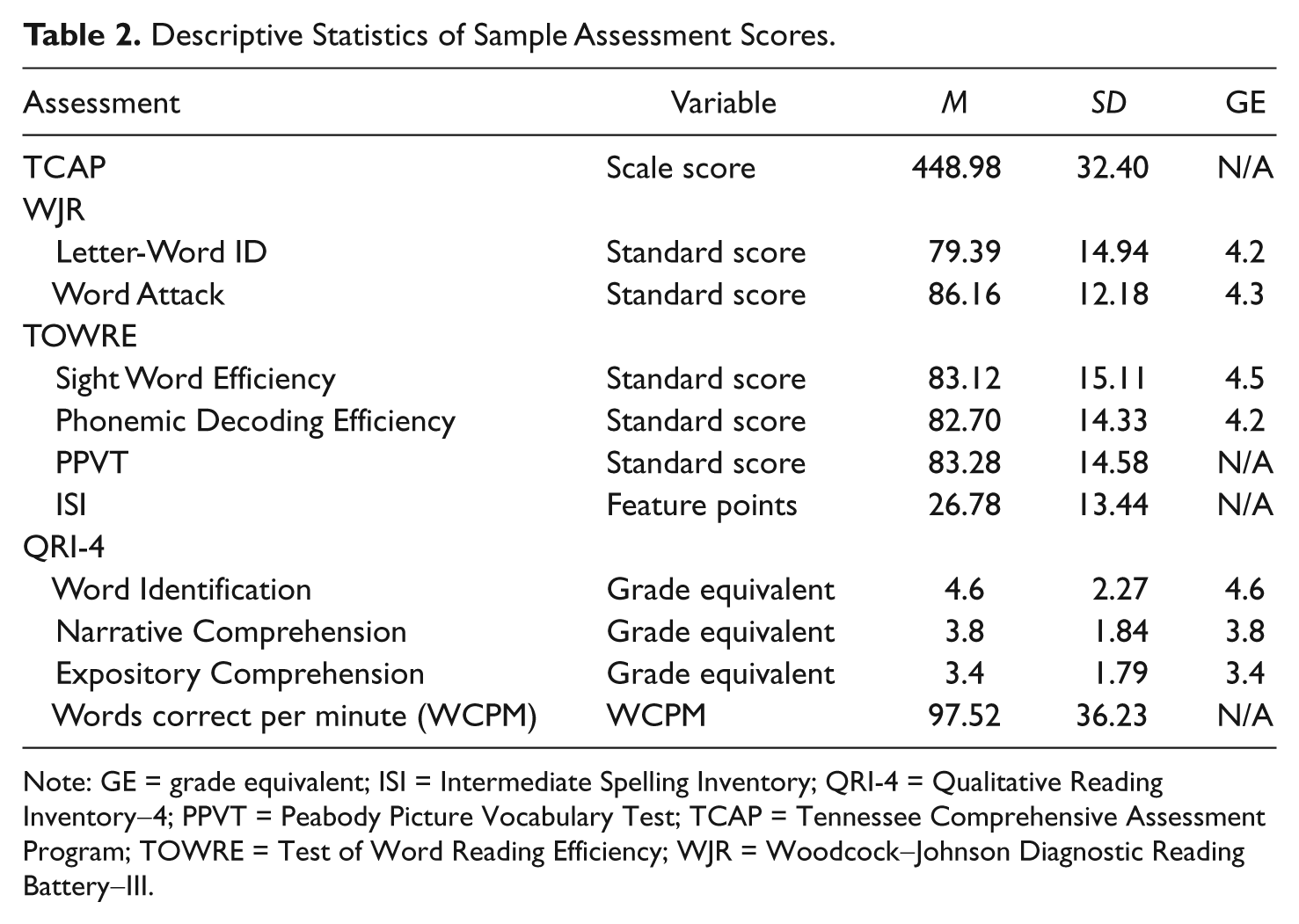

As the descriptive statistics in Table 2 show, students in the sample scored below grade level on all measures, including the TCAP. With the exception of the PPVT, an assessment of receptive (rather than reading) vocabulary, all of the assessments correlated with the TCAP. The large variability in the assessment scores indicated a need for further analysis to identify patterns of reading ability within the sample. Factor analysis was used to reduce the data, and cluster analysis was used to identify patterns of reading abilities.
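
The correlations between each assessment and the TCAP are Pearson correlations computed across students. The sketch below shows that computation with NumPy’s `corrcoef`; the function name is hypothetical, and the example uses small constructed vectors rather than study data.

```python
import numpy as np


def correlations_with_criterion(scores: np.ndarray, criterion: np.ndarray) -> np.ndarray:
    """Pearson r between each column of `scores` (students x assessments)
    and a criterion vector such as TCAP scale scores; one r per column."""
    return np.array(
        [np.corrcoef(scores[:, j], criterion)[0, 1] for j in range(scores.shape[1])]
    )


if __name__ == "__main__":
    # Constructed example, not study data: five students, two assessments.
    criterion = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
    scores = np.column_stack([
        [2.0, 2.5, 3.5, 4.0, 5.5],  # rises with the criterion
        [3.0, 1.0, 4.0, 2.0, 3.0],  # no consistent relation to the criterion
    ])
    print(np.round(correlations_with_criterion(scores, criterion), 2))
```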

Descriptive Statistics of Sample Assessment Scores.

Note: GE = grade equivalent; ISI = Intermediate Spelling Inventory; QRI-4 = Qualitative Reading Inventory-4; PPVT = Peabody Picture Vocabulary Test; TCAP = Tennessee Comprehensive Assessment Program; TOWRE = Test of Word Reading Efficiency; WJR = Woodcock-Johnson Diagnostic Reading Battery-III.

Factor Analysis

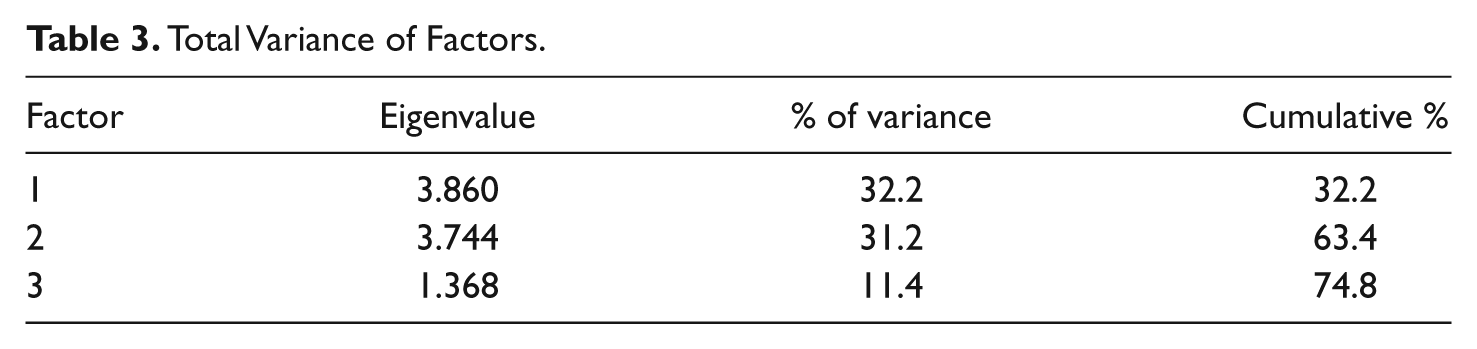

Exploratory factor analysis was employed to reduce the data and identify variables with common underlying constructs. Because of the sample size and the expectation that all the variables would be related, the researcher used varimax rotation, an orthogonal rotation that simplifies the solution so that each variable loads strongly on a single factor. As suggested by DeCoster (1998), only variables with a loading of 0.5 or higher were treated as loading on a factor. Three factors emerged, together accounting for 74.8% of the total variance (Table 3).
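
Varimax seeks the orthogonal rotation of the loading matrix that maximizes the variance of the squared loadings within each factor, which pushes each variable toward a single high loading. The sketch below is a common SVD-based formulation of Kaiser’s varimax criterion, written without the Kaiser row normalization that some statistical packages apply by default, together with helpers for the 0.5 salience cutoff attributed to DeCoster (1998) and for the percentage of variance each factor explains. The function names are hypothetical, absolute loadings are compared with the cutoff, and the example loadings are invented.

```python
import numpy as np


def varimax(loadings: np.ndarray, max_iter: int = 100, tol: float = 1e-6) -> np.ndarray:
    """Orthogonally rotate a (variables x factors) loading matrix by the varimax criterion."""
    p, k = loadings.shape
    rotation = np.eye(k)
    criterion = 0.0
    for _ in range(max_iter):
        rotated = loadings @ rotation
        # Gradient of the varimax criterion with respect to the rotation matrix.
        target = rotated ** 3 - rotated @ np.diag(np.sum(rotated ** 2, axis=0)) / p
        u, s, vt = np.linalg.svd(loadings.T @ target)
        rotation = u @ vt  # closest orthogonal matrix to the gradient
        previous, criterion = criterion, np.sum(s)
        if previous != 0 and criterion < previous * (1 + tol):
            break  # criterion has stopped improving
    return loadings @ rotation


def salient_variables(loadings: np.ndarray, names: list, threshold: float = 0.5) -> list:
    """For each factor, the variables whose absolute loading meets the threshold."""
    return [
        [name for name, value in zip(names, loadings[:, j]) if abs(value) >= threshold]
        for j in range(loadings.shape[1])
    ]


def percent_variance(loadings: np.ndarray) -> np.ndarray:
    """Percent of total (standardized) variance explained by each factor:
    column sums of squared loadings divided by the number of variables."""
    return 100.0 * np.sum(loadings ** 2, axis=0) / loadings.shape[0]


if __name__ == "__main__":
    # Invented loadings for four hypothetical variables on two factors.
    names = ["var_a", "var_b", "var_c", "var_d"]
    unrotated = np.array([[0.8, 0.3], [0.7, 0.4], [0.3, 0.8], [0.4, 0.7]])
    rotated = varimax(unrotated)
    print(np.round(rotated, 3))
    print(salient_variables(rotated, names))
    print(np.round(percent_variance(rotated), 1), round(float(percent_variance(rotated).sum()), 1))
```

Because the rotation is orthogonal, each variable’s communality and the total variance explained are unchanged; rotation only redistributes variance among the factors. The per-factor percentages therefore add to the total, just as the reported 32.2%, 31.2%, and 11.4% add to 74.8%.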

Total Variance of Factors.

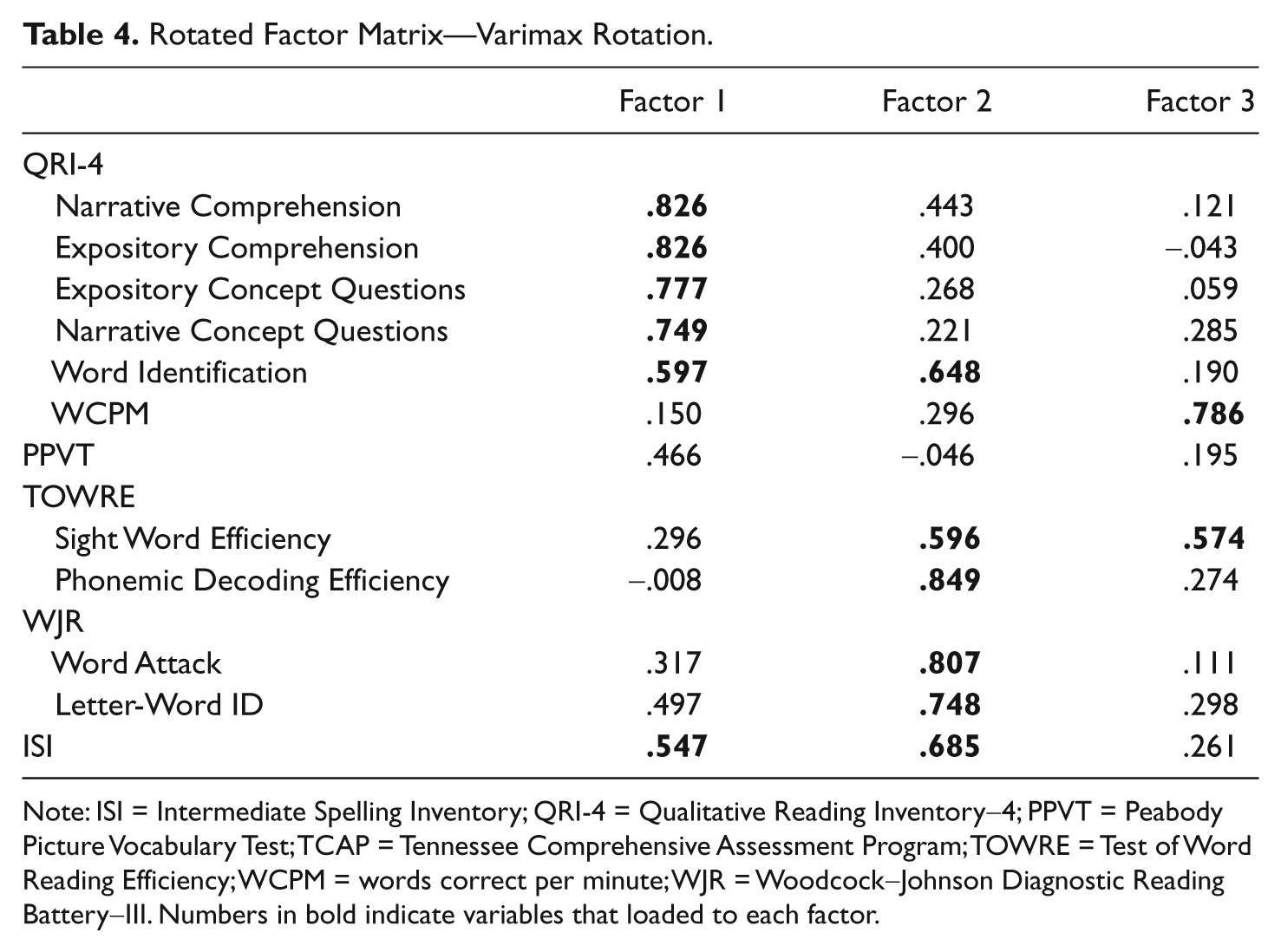

Child (1990) warns, “The problem of naming factors has the drawback of requiring, in some cases, a notion of causal determinants” (p. 8). Thus, each factor is discussed in terms of the reading skills that loaded on it, as an indicator of below-proficient scores on the TCAP. In other words, the factors that emerged from this procedure, cautiously labeled Meaning, Decoding, and Rate, were related to students’ below-proficient scores on the TCAP but were not the cause of those scores. Paris (2005) suggested that this acknowledgment minimizes the proxy effect problem that occurs when researchers draw causal inferences from correlational data.

Factor 1: Meaning

With the exception of WCPM, all components of the QRI-4 loaded on this factor, as did the ISI. The narrative and expository comprehension questions and the concept questions, which measure prior knowledge of topics within the text (Leslie & Caldwell, 2006), produced high loadings. This factor accounts for 32.2% of the total variance. Although the PPVT accounted for little of the variance in this study, its highest loading (.466, below the 0.5 cutoff) was on this factor, suggesting only a limited relationship to Factor 1 (Table 4). The fact that the PPVT is a test of receptive vocabulary and does not require students to read may explain its weak correlations with the other assessments. Meaning, then, is an indicator related to struggling adolescents’ below-proficient TCAP scores.

Rotated Factor Matrix—Varimax Rotation.

Note: ISI = Intermediate Spelling Inventory; QRI-4 = Qualitative Reading Inventory-4; PPVT = Peabody Picture Vocabulary Test; TCAP = Tennessee Comprehensive Assessment Program; TOWRE = Test of Word Reading Efficiency; WCPM = words correct per minute; WJR = Woodcock-Johnson Diagnostic Reading Battery-III.

Factor 2: Decoding

The second factor, also an indicator of students’ below-proficient TCAP scores, accounts for 31.2% of the total variance. All decoding variables, including measures of both real words and nonsense words, load on this factor, as does the ISI. As the descriptive statistics show (Table 2), average grade-equivalent scores for most measures of decoding were in the early to middle fourth-grade range, indicating that students are able to decode words beyond a basic level but do so below the grade level in which they are enrolled. The similar proportions of variance explained by Factors 1 and 2 suggest that the variables loading on these two factors may be comparably related to students’ below-proficient TCAP scores; further review of these constructs is needed.

Factor 3: Rate

The third factor, labeled Rate, accounts for 11.4% of the total variance and is likewise related to students’ below-proficient performance on the TCAP. Two assessment components load on this factor: QRI-4 WCPM and the TOWRE Sight Word Efficiency subtest. Both are timed measures of students’ ability to read real words. The mean WCPM for the study participants is 97.5 words correct per minute. According to Hasbrouck and Tindal (2006), this average represents oral reading fluency at or below the 25th percentile for students in Grades 6-8. Further examination of the role of reading rate, as well as of meaning and decoding, is needed to determine the patterns of reading abilities that emerge from these indicators.

Cluster Analysis

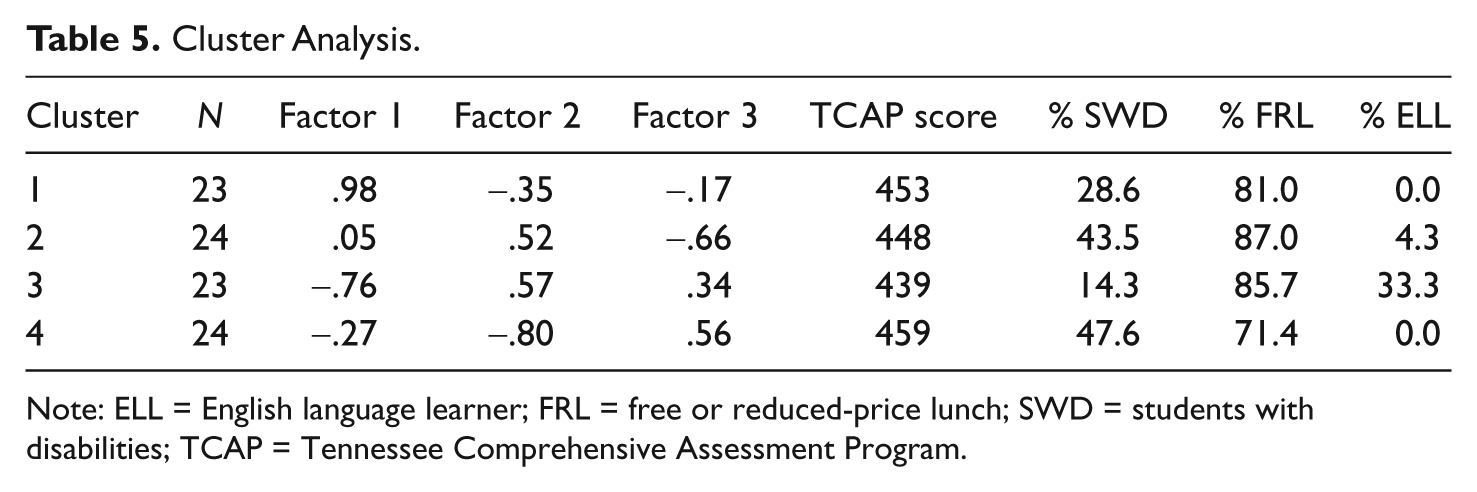

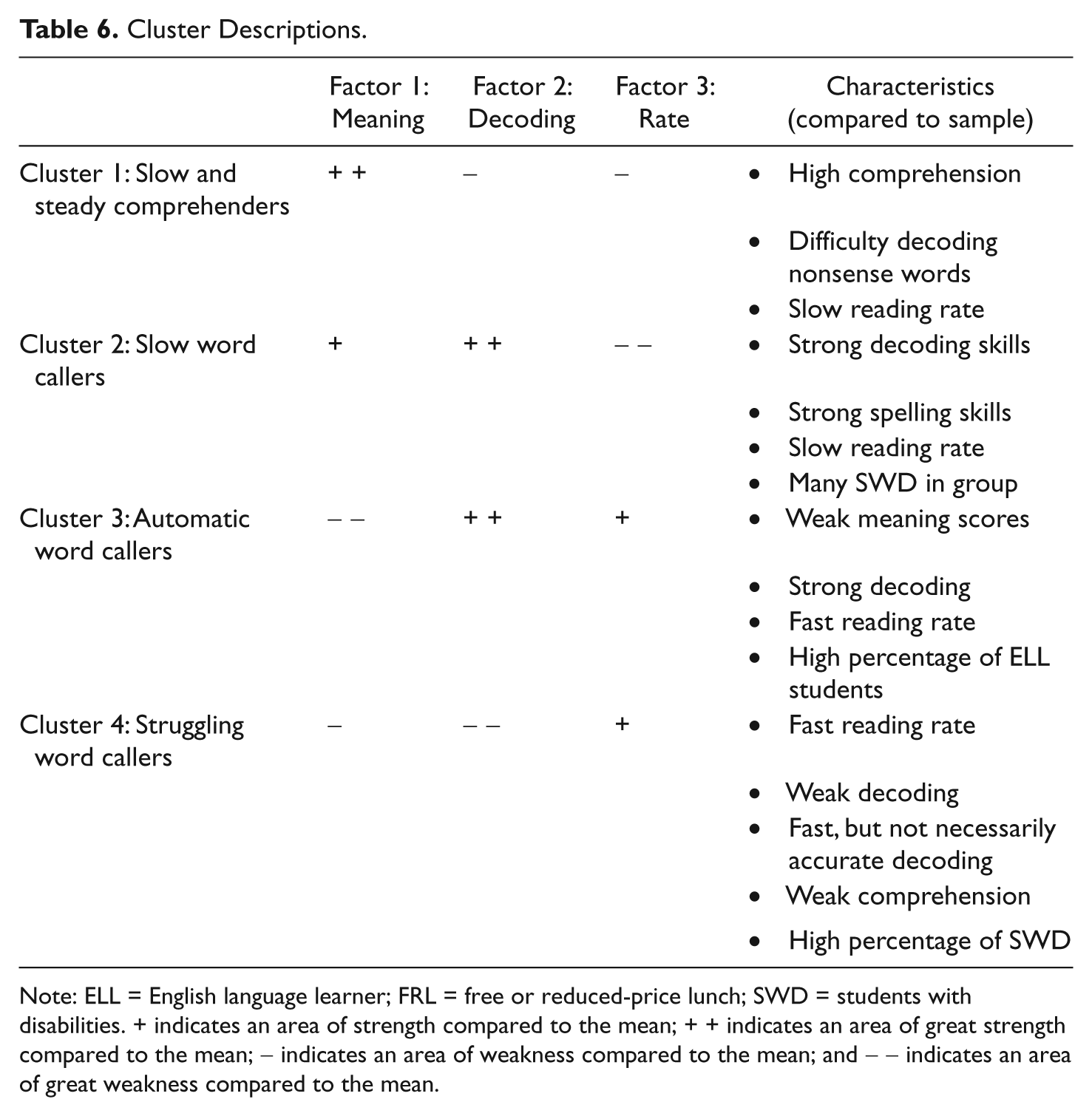

To determine patterns of reading abilities among the students in the sample, the three factors that emerged from the factor analysis were used in a cluster analysis of the similarities and differences in students’ reading abilities. Because of the relatively small sample size, hierarchical cluster analysis (HCA) was used. In HCA, each student is treated as a case described by three factor scores, each obtained by averaging the student’s standardized scores (z-scores) on the variables that load on that factor. Table 5 presents the mean z-scores on each of the three factors for the salient clusters, along with each cluster’s mean TCAP scale score and demographic information on students with disabilities, students qualifying for free or reduced-price lunch, and ELLs. These z-scores indicate how many standard deviations each cluster’s mean lies above or below the sample mean. Four salient clusters were extracted, and each was named following Buly and Valencia’s (2002) classification, which focuses on the skills the students in each cluster demonstrated (Table 6).
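
The two steps described above, unit-weighted factor scores followed by agglomerative clustering, can be sketched in Python with NumPy and SciPy. The text does not report the linkage method or distance measure, so Ward’s linkage on Euclidean distance is assumed here; the function names, the use of sample standard deviations for the z-scores, the variable-to-factor assignment, and the simulated data are likewise hypothetical.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage


def factor_scores(data: np.ndarray, factor_columns: list) -> np.ndarray:
    """Unit-weighted factor scores: for each factor, the mean of a student's
    z-scores on the variables (column indices) assigned to that factor."""
    z = (data - data.mean(axis=0)) / data.std(axis=0, ddof=1)
    return np.column_stack([z[:, cols].mean(axis=1) for cols in factor_columns])


def cluster_students(scores: np.ndarray, n_clusters: int = 4) -> np.ndarray:
    """Ward agglomerative clustering on factor scores; labels run 1..n_clusters."""
    tree = linkage(scores, method="ward")
    return fcluster(tree, t=n_clusters, criterion="maxclust")


def cluster_profiles(scores: np.ndarray, labels: np.ndarray) -> dict:
    """Mean factor score of each cluster, in z-score units (cf. Table 5)."""
    return {int(c): scores[labels == c].mean(axis=0) for c in np.unique(labels)}


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    data = rng.normal(size=(94, 6))     # simulated scores, not study data
    columns = [[0, 1], [2, 3], [4, 5]]  # hypothetical variable-to-factor assignment
    scores = factor_scores(data, columns)
    labels = cluster_students(scores, n_clusters=4)
    for cluster, profile in cluster_profiles(scores, labels).items():
        print(cluster, np.round(profile, 2))
```

Cutting the tree with `criterion="maxclust"` requests at most the given number of clusters; in practice, the number of salient clusters is usually judged from the dendrogram and from how interpretable the resulting profiles are.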

Cluster Analysis.

Note: ELL = English language learner; FRL = free or reduced-price lunch; SWD = students with disabilities; TCAP = Tennessee Comprehensive Assessment Program.

Table 6. Cluster Descriptions.

Note: ELL = English language learner; FRL = free or reduced-price lunch; SWD = students with disabilities. + indicates an area of strength compared to the mean; + + indicates an area of great strength compared to the mean; − indicates an area of weakness compared to the mean; and − − indicates an area of great weakness compared to the mean.
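As a rough illustration of the procedure described in this section, the following Python sketch standardizes each measure, averages a student’s z-scores across the measures that load on a factor, and then groups students by agglomerative hierarchical clustering. The study does not report its linkage method, distance metric, or software. The use of average linkage on Euclidean distance, the population standard deviation, and the function names (`z_scores`, `factor_scores`, `average_linkage`) are all assumptions made for this example; Ward’s method is a common alternative linkage.

```python
import math
from statistics import mean, pstdev


def z_scores(values):
    """Standardize raw scores as (x - mean) / SD.

    Assumption: population SD; assumes the measure varies across students.
    """
    m, sd = mean(values), pstdev(values)
    return [(v - m) / sd for v in values]


def factor_scores(raw, factors):
    """Average each student's z-scores over the measures loading on a factor.

    raw: dict, measure name -> list of raw scores (one per student)
    factors: dict, factor name -> list of measure names loading on it
    Returns one tuple of factor scores per student (one case per student).
    """
    z = {name: z_scores(vals) for name, vals in raw.items()}
    n = len(next(iter(raw.values())))
    return [
        tuple(mean(z[m][i] for m in measures) for measures in factors.values())
        for i in range(n)
    ]


def average_linkage(points, k):
    """Agglomerative clustering with average linkage (assumed) on Euclidean distance.

    Every case starts as its own cluster; the two clusters with the smallest
    mean pairwise distance are merged repeatedly until k clusters remain.
    Returns a list of clusters, each a list of case indices.
    """
    clusters = [[i] for i in range(len(points))]

    def cluster_distance(a, b):
        # Mean Euclidean distance over all cross-cluster pairs of cases.
        return mean(math.dist(points[i], points[j]) for i in a for j in b)

    while len(clusters) > k:
        _, a, b = min(
            (cluster_distance(clusters[x], clusters[y]), x, y)
            for x in range(len(clusters))
            for y in range(x + 1, len(clusters))
        )
        clusters[a] = clusters[a] + clusters[b]  # merge b into a (a < b)
        del clusters[b]
    return clusters
```

In this hypothetical setup, `raw` would hold one list of scores per assessment measure and `factors` would map meaning, decoding, and rate to the measures loading on each, so that `average_linkage(factor_scores(raw, factors), 4)` returns four groups of student indices. The naive pairwise search is slow for large samples but adequate for a sample of about 94 students.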

Cluster 1: Slow and steady comprehenders

Of the four clusters, students in Cluster 1 demonstrate the highest overall scores on Factor 1, which comprises the variables related to meaning (i.e., the QRI-4 measures). On average, students in this cluster earn independent grade-level equivalent scores of 5.0 and 4.4 on narrative and expository text, respectively. These scores are nearly one standard deviation above the mean of the entire sample. Although both scores are below the grade level in which the students were enrolled, they demonstrate the students’ ability to make meaning from text. Students in this cluster also bring more prior knowledge to the text, as shown by their higher levels on the QRI-4 Concept Questions compared with students in the other clusters (Leslie & Caldwell, 2006). In addition, students in Cluster 1 have the highest PPVT scores of any cluster, indicating greater overall word knowledge than the rest of the sample.

Mean scores on Factor 2 and Factor 3 are below the sample mean. A closer look at the means of individual assessment components, however, reveals that students in Cluster 1 score at levels comparable to the sample means on assessments of real-word reading (WJR Letter-Word ID, TOWRE Sight Word Efficiency) and above the sample mean on QRI-4 Word Identification. In addition, students in this cluster demonstrate understanding of alphabetics, earning ISI scores higher than those of most other clusters. Cluster 1 students score considerably lower than the sample mean on measures requiring the decoding of nonsense words (WJR Word Attack and TOWRE Phonemic Decoding Efficiency). Finally, students in Cluster 1 read at a rate below the sample mean.

Schatschneider et al. (2004) report on seventh-grade students who scored below basic on a state assessment. Based on scores from the TOWRE Phonemic Decoding Efficiency subtest, the authors conclude that these students are deficient in phonemic decoding and fluency. This conclusion creates an image of a homogeneous group of struggling adolescent readers who need training in decoding skills, and it masks the underlying, multifaceted needs and abilities of these students. Although the students in Cluster 1 score below the sample mean on the TOWRE Phonemic Decoding Efficiency subtest, further examination of their reading abilities indicates that they are able to read for meaning from appropriately leveled text. This supports Paris’s (2005) argument that highly constrained skills, such as those assessed by the TOWRE Phonemic Decoding Efficiency subtest, are learned and mastered in childhood and “thus yield asymptotic performance with minimal variance before and after their brief periods of learning” (p. 196). That is, the decontextualized structural analysis task makes students who can ordinarily decode appear unable to do so. In a study of early readers, Cunningham et al. (1999) concluded that nonwords may be “harder and less valid decoding items because they require a task-specific kind of self-regulation” (p. 411). In other words, instruction in how to decode nonsense words does not produce higher standardized assessment scores for these students, because they already read common words automatically and make meaning from text more directly. Therefore, it is reasonable to expect that interventions focused on decoding skills at the expense of comprehension instruction will not benefit these students. However, it is important to note that students in Cluster 1 demonstrated lower reading rates than students in most other clusters. Thus, fluency may contribute to these students’ lower overall comprehension scores, and further assessment and instruction in this area may benefit students in Cluster 1.

Cluster 2: Slow word callers

Overall, students in Cluster 2 earned higher scores on the measures related to decoding (Factor 2), with particularly high scores relative to the sample mean on measures of nonsense-word decoding. At first glance, they appear to have stronger decoding skills than their peers in Cluster 1. A closer look, however, suggests that students in Cluster 2 may devote more attention to word identification and therefore read words less automatically than students in Cluster 1 (Samuels, 2004). Because the skills measured in Factor 2 are constrained, evidence of mastery may take different forms for different students, especially adolescents who show the asymptotic patterns noted by Paris (2005). Further examination of the Factor 2 assessment scores shows that students in Cluster 2 have the highest level of orthographic knowledge of any cluster. In fact, they applied their knowledge of alphabetics on the ISI at a higher level than their peers in the other clusters.

Students in Cluster 2 demonstrated stronger decoding skills than meaning skills, as their factor scores relative to the overall sample means show. Nevertheless, students in this cluster demonstrate the ability to make meaning from text, earning grade-level equivalent scores on the QRI-4 that are higher than the overall sample means. It is their performance on the content knowledge (concept) questions that lowers their overall Factor 1 scores. That is, they are unable to display their skills in more pragmatic applications. Although prior knowledge has been identified as an essential element of comprehension (National Association of State Boards of Education, 2005), students in Cluster 2 were able to make meaning from the text despite having less prior knowledge of its content. It would be a mistake, then, to read their overall meaning score as an indicator that these students have difficulty with comprehension or to assume that they already know the content and can therefore bypass the text. Cluster 2 has the highest percentage (87%) of students who qualify for free or reduced-price lunch. Thus, the findings reported by White, Graves, and Slater (1990) become salient when considering the mean score for Factor 1. These researchers found that students who qualify for free or reduced-price lunch enter school with 50% less vocabulary knowledge than their more privileged peers. The low QRI-4 concept question scores of students in Cluster 2 are consistent with these results.

Although students in Cluster 2 earn high scores on the word reading efficiency measures (TOWRE), their Factor 3 scores are 0.67 standard deviations below the sample mean. Looking again at the ISI results sheds light on these students’ reading abilities as related to Factor 3. By earning the highest ISI scores of any cluster, these students demonstrated an ability to decode and apply sound-symbol relationships. Their slow reading rate, however, may result from not knowing the meanings of words; word meaning is a component of both fluency and orthographic knowledge as presented by Nathan and Stanovich (1991). These authors attribute such gaps to limited experience with text, which points to a need for a greater volume of reading at an independent level (Allington, 1983; Krashen, 1989; Nathan & Stanovich, 1991). Like the students in Cluster 1, students in Cluster 2 need more opportunities to read widely, as well as explicit vocabulary instruction related to the texts they read. Students in Cluster 2 can decode words at a high level, but their reading rate appears to be slowed by limited vocabulary knowledge. This slow reading affects their ability to comprehend text, particularly text with sophisticated vocabulary.

Cluster 3: Automatic word callers

Students in Cluster 3 score lower on measures of comprehension than their peers in the other clusters, yet they are able to make meaning from appropriately leveled text. Their grade-level equivalent scores are 3.3 and 2.8 on narrative and expository text, respectively. Students in this cluster also brought the lowest level of prior knowledge to the texts, as measured by the concept questions, of any cluster. Therefore, students in this cluster demonstrate difficulty on Factor 1. Difficulty, however, should not be confused with deficiency, since students in Cluster 3 are capable of learning about a complex topic when paired with text that matches their reading level. They will, however, need explicit instruction to build their word knowledge, which will give them essential understanding of concepts before they enter a text.

Cluster 3 students have an overall Factor 2 score that is 0.57 standard deviations above the sample mean, the highest of any cluster. Much like students in Cluster 2, these students score particularly high relative to the sample mean on measures of word reading efficiency (TOWRE). Furthermore, Cluster 3 students read at a higher rate than most students in the sample. Generally speaking, these students are able to call out words quickly and accurately but are not reading for meaning. Cluster 3 contains most of the students receiving ELL services, which contrasts with Lesaux and Kieffer’s (2010) finding that language-minority students were distributed equally across profiles. It is important to note, however, that only 9.6% of the students in this study (n = 9) received ELL services. Although this percentage is considerably higher than the percentage of students receiving ELL services across the school district (1.6%), the sample is not large enough to challenge earlier findings.

Cluster 4: Struggling word callers

Cluster 4 represents students who read quickly but not accurately. Students in Cluster 4 also have meaning (Factor 1) scores 0.27 standard deviations below the mean. A closer look at the descriptive data for these students reveals consistent scores across all assessments, slightly below the sample mean. In other words, these students use the skills required to negotiate text successfully but do so with levels of automaticity that differ from, and are often lower than, those of their peers. Factor 3 is the notable exception to this pattern: Students in Cluster 4 earn the highest rate scores of the four clusters, 0.56 standard deviations above the mean. Notably, these students also earned the highest mean TCAP scale score of any cluster.

Factor 1 results indicate that students in Cluster 4, like their peers in all other clusters, demonstrate higher levels of comprehension on narrative text than on expository text. This finding confirms that of Saenz and Fuchs (2002), in which students assessed on narrative and expository passages had greater difficulty with expository text. The authors conclude that these students are less able to draw on prior knowledge to make inferences from expository text. Although differences between responses to explicit and implicit comprehension questions are not considered in this study, evidence consistent with Saenz and Fuchs’s (2002) findings is apparent in the drop between the levels at which students answered expository concept questions and expository comprehension questions. This drop indicates that students in Cluster 4 are not using text as a “tool” for gathering information (Spear-Swerling, 2004). Although students with disabilities are widely distributed across the four clusters, nearly half of the students in this cluster are students with disabilities.

Conclusions and Implications

The results of this study add to the body of work demonstrating that adolescents who struggle with reading are largely capable of the multifaceted processes of reading but require intensive instruction in building vocabulary and comprehension skills, using text that supports their development (Lesaux & Kieffer, 2010; Rupp & Lesaux, 2006). In this study, 94 adolescents who earned below-proficient scores on the TCAP reading test formed heterogeneous clusters, with individual variability related to moderately and less constrained skills (Paris, 2005). These skills appeared in the data as three distinct factors (meaning, decoding, and rate), derived from a battery of individual reading assessments spanning the continuum from constrained to less constrained skills. Four clusters emerged from the data, each with a distinctive pattern across the factors of meaning, decoding, and rate. The four clusters (slow and steady comprehenders, slow word callers, automatic word callers, and struggling word callers) show that most students in the study had mastered constrained skills but required additional instructional support in developing fluency, vocabulary, and comprehension as connected skills within the process of reading (Bear et al., 2004). It is important to note that many key individual differences were not tested in this study, because the study approached reading from a conservative viewpoint informed by the National Reading Panel and the research conducted by Buly and Valencia (2002).

The heterogeneity represented by the four clusters strengthens the argument that adolescent readers cannot be viewed through a deficit model that assumes these students struggle with reading because they have not yet developed constrained skills (Dennis, 2009; Franzak, 2006; Lesaux & Kieffer, 2010; Rupp & Lesaux, 2006). Instead, instruction, research, and policy must build on what these students know and are able to do as they develop their reading abilities. This shift challenges overly simplistic assumptions about adolescent reading. It calls for using high-stakes standardized assessments as a gauge of which students need intensive reading instruction, and then using multiple indicators of achievement to determine the paths needed to address students’ individual variability.

State reading assessments, such as the TCAP, are less valid for making decisions about individual students than instructionally informative assessments such as those administered in this study (Buly & Valencia, 2002; Lesaux & Kieffer, 2010; Linn, 2000; Rupp & Lesaux, 2006). To focus adolescent reading instruction on less constrained skills, teachers must develop expertise in data-driven decision making based on instructionally informative assessments of reading. Therefore, policies related to adolescent reading instruction must also shift away from using state assessments for instructional decision making and toward a view of adolescent reading centered on less constrained skills.

Additional research that looks more deeply into adolescents’ individual differences is needed to further develop the literature on these students. Notably, in each of the studies reviewed, the demographics were skewed: poor and minority students, as well as students who receive special services (such as ELL and special education), are classified as struggling readers in significantly higher numbers (Buly & Valencia, 2002; Lesaux & Kieffer, 2010; Rupp & Lesaux, 2006). Research and policy focusing on the marginalization of poor and minority students and of those who receive special services are necessary to gain a deeper understanding of adolescent reading abilities (Franzak, 2006).

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Author Biography

References
