Abstract

This multisite collaborative study examined the programmatic features of six literacy teacher education programs that have received the Certificate of Distinction of the International Reading Association (IRA). The objectives were to identify the features that internal and external experts ranked most highly and to delineate specific examples of how those features were actualized. A classical Delphi method was applied. Participants included leading literacy faculty members at each of the six institutions (internal experts, n = 18) and members of program review teams identified by IRA (external experts, n = 3). Analyses revealed that 14 programmatic features were ranked higher in value than the others at a statistically significant level. The internal and external literacy teacher education experts agreed on the most highly valued programmatic features. These include relevant field experiences, the development of teacher candidates’ abilities to teach and assess children through a wide variety of instructional strategies and assessment instruments, and ways to integrate literacy and language strategies throughout the curriculum.

Keywords

The need to prepare the best literacy teachers has never been greater (Greenberg & Jacobs, 2009a, 2009b, 2009c, 2009d; McKinsey & Company, 2007). Within the past 20 years, more scholars have studied this issue than in all previous decades (see the seminal works of Carter, 1990; Feiman-Nemser, 1990; Grant & Secada, 1990; see also the more recent critical analyses of Hoffman et al., 2005; Maloch et al., 2003; Risko et al., 2008). These studies were accompanied by research exploring how to create better teacher education programs in general (Darling-Hammond, 1997; Darling-Hammond, Holtzman, Gatlin, & Heilig, 2005). Although the latter studies found that certified teachers consistently produce stronger student achievement than do uncertified teachers, they did not identify the specific features of the programs that prepared these teachers.

Research suggests that quality programs rest on several basic foundations (International Reading Association [IRA], 2005). Literacy teacher education programs should help future educators become flexible, adaptive, and responsive to students’ needs. They should be field based, include supervised and relevant field experiences, and offer content that transfers readily to future classroom instruction. These programs should implement a clear theoretical orientation to literacy instruction, a vision-driven plan for preparing high-quality future teachers, and a comprehensive curriculum that guides candidates. Finally, they should encourage candidates to view themselves as lifelong learners (IRA, 2005).

Prior studies of how to actualize these foundational principles are limited in several important ways. First, data have not been collected to determine whether the foundations listed above, or other positive programmatic features, have had a consistent presence in teacher education programs throughout the country (e.g., Grisham, 2000). Second, when these features have been present, studies have not identified which specific features rank highest in importance for moving a program from “adequate” to “distinguished” (Risko et al., 2008). Third, most previous studies have identified the effects of individual content and methodological choices within single courses rather than examining the effects of all programmatic features (see Risko et al., 2008, for a review of these studies). For these reasons, research is needed to explore the features that exist within comprehensive literacy teacher education programs at institutions known for their high-quality programs. The study reported in this article was designed to address this need.

Theoretical Background and Review of Literature

The first studies of literacy teacher education, detailed in the landmark text The Torch Lighters: Tomorrow’s Teachers of Reading (Austin & Morrison, 1962), were conducted in 1962 and were replicated and republished in Torch Lighters Revisited (Austin & Morrison, 1976). Twenty-three years later, IRA (2003a) formed the National Commission on Excellence in Elementary Teacher Preparation for Reading Instruction (NCETPRI) to update these works by surveying current U.S. literacy teacher education programs. Results indicated that these programs had improved in many important ways since Austin and Morrison’s original 1962 investigation. Specifically, data suggested that the vast majority of accredited, university-based literacy teacher education programs in 2000 (IRA, 2003a):

Increased the average number of semesters that preservice teachers took classes that emphasized reading methods from two in 1962 to six in 2000

Followed a 4-year baccalaureate degree format, as opposed to 5th-year certification processes

Contained courses that presented a balanced literacy approach to teaching reading that was not present in 1962

Integrated significantly more content in individual courses to learn how to teach decoding, fluency, and comprehension than was present in 1962

Utilized more frequent field experiences prior to student teaching than in 1962

Were staffed by a higher percentage of college faculty members who held advanced degrees in reading and had previous teaching experiences in elementary classrooms than was the case in 1962

Although these survey data provided information about the structure of literacy teacher education programs at the turn of the century, more research was needed to identify the individual programmatic features of these programs. To address this need, NCETPRI studied graduates of eight university-based literacy teacher education programs that were judged to be “excellent” (Hoffman et al., 2005). Researchers found that graduates of high-quality literacy teacher preparation programs transitioned into the profession more rapidly and were more effective in “creating and engaging their students with a high-quality literacy environment” than were graduates from less effective teacher education programs (Hoffman et al., 2005, p. 267).

In 2005, the IRA board of directors established a Teacher Education Task Force to analyze current reading teacher education research; this work resulted in a review and critique of 298 studies conducted from 1980 to 2006 (Risko et al., 2008). From this pool, researchers completed an inductive paradigmatic analysis of 82 studies that met rigorous research standards in theoretical orientation, methodology, data analysis, and research design. Most of the 82 studies were conducted over no more than one semester and investigated only single college courses.

Risko et al. (2008) found only four studies of literacy teacher education programmatic features. Analyses of these studies revealed a trend: individual features within individual courses contributed to preservice teachers’ development of stronger belief systems or pedagogical knowledge bases. For example, a program that fostered collaboration within groups of teacher candidates strengthened their belief systems; however, the studies did not identify specific examples of how the programs structured these collaborations. Likewise, programs that guided prospective teachers to apply content from their methods courses to their lab placements developed stronger pedagogical knowledge than did less well-structured programs. Evidence did exist that some conditions in student teaching experiences in highly effective literacy teacher education programs were favorable in advancing prospective literacy teachers’ pedagogical knowledge bases, but as Bean (1997) found, other conditions did not produce favorable results. In general, it was suggested that such “patterns require further scrutiny and provide a catalyst for future research” (Risko et al., 2008, p. 278).

These studies also suggested that a highly collaborative process within a college of education was likely to increase the quality of that program’s literacy conceptual framework (Grisham, 2000; Hoffman et al., 2005; Sailors, Keehn, Martinez, & Harmon, 2005; Vagle, Dillon, Davison-Jenkins, LaDuca, & Olson, 2006). Grisham (2000) found that a cohesive theoretical orientation within a literacy teacher education program had a definite, measurable impact on candidates’ professional, practical, and personal funds of knowledge, as measured by a standardized instrument (the DeFord Theoretical Orientation to Reading Profile; DeFord, 1979), by autobiographies written by teacher candidates, and by interview data. Risko et al. (2008) found that 73% of researchers who conducted studies of individual literacy courses subscribed to a constructivist theoretical orientation for literacy instruction (Bartlett, 1932; Piaget, 1932; Shulman, 1986). Does this orientation permeate the entire program at institutions that receive certificates of distinction, or do faculties at these institutions embrace several perspectives? Risko et al. (2008) concluded that there is an ongoing need to study the programmatic features of 4-year teacher education programs more completely before the field can describe more fully how to better prepare literacy educators.

Of equal importance is the need to identify both the common demographic features of distinguished programs and the qualities of their field components that contribute to effectiveness. Although Sailors et al. (2005) found that early field experiences increased the quality of prospective literacy teachers, the NCETPRI survey (IRA, 2003a) found that the content of field experiences across 1,150 literacy teacher education programs in the United States varied extensively. There is a need to examine early field experiences in teacher education more closely and to determine when they begin, as almost all previous research has focused only on coursework offered during the last two years (junior and senior level). Furthermore, researchers have recommended identifying more specific examples of the exact field-based experiences used at multiple sites in highly effective literacy teacher education programs (IRA, 2003a; Risko et al., 2008). Past studies have established that some literacy teacher education programs offer no field experiences prior to student teaching, whereas others require 50 or more hours every semester.

Grant and Secada (1990) and Risko et al. (2008) also recommended that future research provide a broader vision of what might be done in the near future to significantly improve both the field-based and university-based components of literacy teacher education programs. They concluded that such research would be more valuable if it (a) included more data from the programmatic level as opposed to the individual course level, (b) represented work being conducted at various U.S. universities, and (c) utilized a mixed-methods design so that qualitative data could report specific examples of differences and similarities across programs.

In summary, research to date that informs our knowledge of how candidates learn to teach literacy is either contradictory (e.g., Wideen, Mayer-Smith, & Moon, 1998) or inadequate to explain which features are valued most highly (Risko et al., 2008; Wilson, Floden, & Ferrini-Mundy, 2001). Clift and Brady (2005) and the National Reading Panel report (National Institute of Child Health and Human Development [NICHD], 2000) support this call for further study and suggest that the need is even more urgent in this decade than in the past. The field must learn how to better prepare candidates to teach reading to an increasingly diverse student body. As Anders, Hoffman, and Duffy (2000) wrote, “Where are the exemplary programs in reading teacher education? . . . It is possible to develop . . . excellent programs [that] share commonalities with room for diversity and creativity” (p. 727). If such programs exist, what are their commonalities, divergences, and unique qualities?

Based on these empirical findings, the gaps in our knowledge base, and the steadily increasing political and professional need to study literacy teacher education programs, IRA (2008) established a commission to determine the components of high-quality reading teacher preparation programs. This commission worked collaboratively with the NCETPRI, IRA’s Accreditation Task Force, and the IRA/ACEI Standards Task Force to create the IRA’s Certificate of Distinction Program. This program is administered through IRA’s Quality Undergraduate Elementary and Secondary Teacher Education in Reading (QUESTER) Task Force.

This body compiled research from the National Education Association, the American Federation of Teachers, the IRA, and the NICHD. These works and the research presented in the following policy and empirical documents became the framework by which literacy teacher education programs could be judged for distinction:

Teaching Reading Well: A Synthesis of the International Reading Association’s Research on Teacher Preparation for Reading Instruction (IRA, 2007)

The National Council for Accreditation of Teacher Education’s Professional Standards for the Accreditation of Schools, Colleges, and Departments of Education (2006)

The IRA’s Prepared to Make a Difference research report (2003a)

The IRA’s Teacher Education Task Force review of research in the area of teacher education (Risko et al., 2008)

The Report of the National Reading Panel (NICHD, 2000)

The IRA’s Standards for Reading Professionals–Revised (2003b)

The IRA’s Report of the Accreditation Task Force (2005)

The reading-language arts standards developed by the IRA/ACEI Task Force (2003b)

Reading Next: A Vision for Action and Research in Middle and High School Literacy, from the Alliance for Excellent Education (Biancarosa & Snow, 2004)

Writing Next: Effective Strategies to Improve Writing of Adolescents in Middle and High Schools, from the Alliance for Excellent Education (Graham & Perin, 2006)

The IRA Certificate of Distinction Program, implemented in 2008–2009, established the goal of advancing literacy teacher education and addressing the issues cited previously. To date, six universities have been awarded the IRA Certificate of Distinction for their literacy teacher education programs: Emporia State University in Kansas, Florida International University, Texas Christian University, the University of Alabama, the University of Indianapolis in Indiana, and the University of Texas–San Antonio. Each of these programs attained the highest level of competence on every criterion for distinction as a literacy program. These criteria, identified by the research and the review process stated above, include (a) highly effective programmatic content, (b) exemplary faculty with outstanding teaching abilities, (c) well-coordinated and systematic field experiences, (d) emphasis on strategies that meet the needs of diverse populations, (e) high-quality candidate and program assessments, and (f) the use of high-quality governance, resource allocations, and visionary policies.

During Phase I of the Certificate of Distinction review process, universities submit an application and a required $1,000 fee. When a university is selected to move forward to Phase II, which includes a visit from an IRA site review team, another $1,000 fee is charged to the university, and the university pays the travel expenses of the site review team.

Purpose of Study

The purpose of this study was to examine the programmatic features of the six literacy teacher education programs that received the IRA Certificate of Distinction award. The specific objectives were to identify the features that were most highly ranked as well as to delineate specific examples of how the features were actualized in today’s literacy programs. An additional purpose was to examine the theoretical orientations on which these features operate. The specific questions examined in this study follow.

Question 1: Do commonalities exist among literacy teacher education programs awarded the IRA Certificate of Distinction; and, if so, what are the most highly ranked features within these programs? What is the relative order of importance of the critical features within literacy teacher education programs that receive the Certificate of Distinction award? Are there common and dissimilar examples of these programmatic features in action?

Question 2: Does a prevalent theoretical orientation exist in literacy teacher education programs that received the Certificate of Distinction award?

The present study expands prior research in four ways. First, this study included data from entire literacy teacher education programs rather than single courses. Second, data were collected from institutions within 1 to 12 months of receiving their special distinction from IRA to ensure that the data included the most up-to-date and currently operational examples of individual programmatic features. Third, findings were based on two distinct data sets: one collected from literacy experts who had extensive knowledge of their own distinguished literacy programs and one collected from literacy teacher education experts who had no connection to, and no vested interest in, any of the award-winning programs. Fourth, this study employed a mixed-methods design so that (a) a rank ordering of common programmatic features could be determined, (b) individual examples within common programmatic features could be reported, and (c) demographic similarities and dissimilarities between programs could be analyzed.

Method

The study was designed using the classical Delphi method, an interactive group facilitation process for obtaining consensus on the judgments and experiences of experts through a series of questionnaires interspersed with feedback and data analysis (Hasson, Kenney, & McKenna, 2000). The classical Delphi method is especially appropriate when the goal of a study is to improve the knowledge base concerning a problem, or phenomenon, about which knowledge is incomplete (Skulmoski, Hartman, & Krahn, 2007). This design has also been judged an effective research methodology for measuring “truth” when historical or technical data are lacking and human judgment is necessary to advance the field (Wright, Lawrence, & Collopy, 1996). The classical Delphi method has its origins in the American business community and has been widely accepted throughout the world in many sectors, including health care (Hasson et al., 2000), defense, business (Delbecq, Van de Ven, & Gustafson, 1975), education, information technology, transportation, and engineering (Skulmoski et al., 2007).

Although Delphi procedures have many variations, the classical Delphi method was chosen for this study because it has been judged the most rigorous of all Delphi and ranking methods (Hasson et al., 2000; Rowe & Wright, 1999; Skulmoski et al., 2007). It yields the highest fidelity of results because participants must be experts in their field and because more precise procedures and higher standards of analysis must be met at each stage of the research design than are required in other Delphi or ranking methodologies. The classical Delphi procedures followed in this study were judged best suited to “obtain the most reliable consensus of opinion of a group of experts . . . because they employ a series of intensive questionnaires interspersed with controlled opinion feedback” (Dalkey & Helmer, 1963, p. 458).

Classical Delphi procedures have been demonstrated to produce better judgments than statisticized groups and unstructured groups when four criteria are met (Rowe & Wright, 1999). These criteria were met in this study: (a) anonymity of Delphi participants (participants must be free to express their opinions without social pressure to conform to others’ opinions, as might occur in group sessions), (b) iteration (participants are allowed to refine their views by referencing the data analyses from round to round), (c) controlled feedback (participants are informed of the results of data analyses and of other participants’ perspectives), and (d) statistical aggregation of the group response (procedures must provide for a quantitative analysis and interpretation of data).
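Criterion (d) calls for a quantitative aggregation of the group response, but the passage does not say which statistic is used. A common choice in Delphi ranking rounds is to report each item's mean rank together with Kendall's coefficient of concordance (W) as an index of agreement among experts. The Python sketch below illustrates that approach under two stated assumptions: every expert ranks the same complete set of items, and there are no ties. The function name, input format, and sample labels are hypothetical and are not drawn from this study's data.

```python
def aggregate_rankings(rankings):
    """Aggregate complete, untied rankings from several experts.

    rankings: dict mapping a hypothetical expert id to a list of item labels
    ordered from most important (rank 1) to least important.
    Returns (list of (item, mean rank) sorted by mean rank, Kendall's W).
    """
    orders = list(rankings.values())
    items = orders[0]
    m, n = len(orders), len(items)
    if n < 2 or len(set(items)) != n:
        raise ValueError("need at least two unique items")
    rank_sums = {item: 0 for item in items}
    for order in orders:
        if sorted(order) != sorted(items):
            raise ValueError("every expert must rank the same items")
        for position, item in enumerate(order, start=1):
            rank_sums[item] += position

    # Mean rank per item: a lower mean rank means the group valued it more highly.
    mean_ranks = sorted(((item, total / m) for item, total in rank_sums.items()),
                        key=lambda pair: pair[1])

    # Kendall's W without tie correction: W = 12 * S / (m^2 * (n^3 - n)),
    # where S is the sum of squared deviations of each item's rank sum
    # from the mean rank sum m * (n + 1) / 2. W = 1 is perfect agreement.
    expected_sum = m * (n + 1) / 2
    s = sum((total - expected_sum) ** 2 for total in rank_sums.values())
    w = 12 * s / (m ** 2 * (n ** 3 - n))
    return mean_ranks, w


# Usage with made-up rankings (not data from this study):
ranks, w = aggregate_rankings({
    "expert_a": ["field experiences", "varied strategies", "integration"],
    "expert_b": ["field experiences", "integration", "varied strategies"],
    "expert_c": ["varied strategies", "field experiences", "integration"],
})
print(ranks, round(w, 2))
```

Reporting W alongside the mean ranks lets a reader see not only which items rose to the top but also how strongly the panel agreed on that ordering.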

Selection of Participants

Prior to selecting participants, researchers reviewed previous classical Delphi studies and found that sample sizes ranged from 3 to 62 participants, with a mean of 12 expert participants (Delbecq et al., 1975; Lam, Petri, & Smith, 2000; Nambisan, Agarwal, & Tanniru, 1999; Schmidt, Lyytinen, Keil, & Cule, 2001; Scott, 2000; Wynekoop & Walz, 2000). When participants are homogeneous, only 10 to 15 experts are needed to produce reliable data (e.g., Delbecq et al., 1975). Our sample size (N = 21) exceeded both the mean and this range. The selection of research participants was a vital component of this study because the output is based on these experts’ judgments (Ashton, 1986; Bolger & Wright, 1994; Brancheau & Wetherbe, 1987; Parente, Anderson, Myers, & O’Brien, 1984).

On June 1, 2009, one month after the sixth IRA Certificate of Distinction was awarded, researchers contacted IRA’s Office of Research, the division that houses all records concerning this award. Through this office, researchers identified the three leading literacy faculty members at each institution that had participated in the program and received the award. These participants were key leaders in developing the distinctive programs, had written all or part of the Phase I reports for the award, and had participated in the site review process. Before this study, none of these faculty members had visited, participated in any way in, or had any contact with personnel at any of the other award-winning institutions. Each of these experts was currently teaching in the award-winning literacy teacher education program at his or her own institution. These participants were designated internal experts because they had intimate knowledge of how a particular program of distinction was created and sustained and how it grew to become noteworthy. A total of 18 internal experts were identified.

On the same date, researchers also asked IRA’s Office of Research to identify the members of the IRA QUESTER Task Force who had served on a Site Review Team and had visited two or more of the IRA Certificate of Distinction university programs. The QUESTER Task Force is the group of literacy teacher education researchers appointed by IRA’s board of directors to judge the merits of literacy teacher education programs. These participants had been selected to join a Site Review Team on the basis of their broad knowledge of, and experience with, the research concerning such programmatic features. These experts had no vested interest in, connection to, or prior knowledge of any of the programs of distinction before they were asked to review the Phase I Self-Study Reports, spend 2 days conducting site visits, and prepare the extensive Phase II Report of Findings and Recommendations. These expert participants represented the total pool of QUESTER site reviewers who had visited two or more of the programs of distinction and therefore had the firsthand knowledge to compare these programs for commonalities and differences. These experts had also visited more than one literacy teacher education program that was judged not to have met the criteria for the IRA Certificate of Distinction. These participants were designated external experts because they had not led or participated in any way in the creation of any of the programs represented. Three external experts participated in this study.

Participants met the rigorous “expertise criteria” required for a classical Delphi study (Brancheau & Wetherbe, 1987; Skulmoski et al., 2007). An external professional body had acknowledged participants’ knowledge of and experience with the issues under investigation; IRA served as this external professional body. Internal and external experts also possessed the background experience (7–39 years in the profession), certifications (earned in their respective states), advanced degrees (in education related to literacy), and professional knowledge needed to judge criteria for distinction. IRA validated the knowledge and experience of the external experts by confirming that they had conducted research and had extensive expertise, experience, and knowledge of literacy teacher education programs. In addition, the reliability of the sampling was increased because researchers included all cases that met the predetermined criterion of expertise.

Procedures

This study was divided into two phases.

Phase 1

The goal of Phase 1 was to create and analyze an open-ended questionnaire to obtain expert judgments. Email was used to disseminate this questionnaire so that every participant could provide the requested information without being influenced by any other expert, so that the data could be analyzed independently and equitably, and so that all participants could write as much as they wanted.

During September and October 2009, researchers emailed the Round 1 open-ended questionnaire. The question posed on this instrument was, “Could you please identify the 19 features that you deem to most contribute to the high quality of your literacy teacher education program?” Each expert was asked to identify 19 items because that was the number of items submitted by the first participant, who had been chosen by IRA to identify features common to all the Certificate of Distinction programs (IRA, 2009). The classical Delphi method requires that all participants have the opportunity to contribute the same number of responses in Round 1 (e.g., Bolger & Wright, 1994; Parente et al., 1984).

From mid-October to the beginning of November 2009, researchers randomly consolidated the detailed responses from Round 1 into two distinct lists of responses. One list was the tally of responses of the internal experts representing the single university sites; the second was the tally of responses of the external experts who based their listings on their evaluations of the distinguished literacy programs at two or more universities.

Researchers dimensionalized the data by combining statements that described the same index of expertise into single items and tallied the number of experts who recognized each quality as well as the number of times that each quality was mentioned. For example, several internal experts stated that collaboration among their faculty was a key to the success of their particular literacy teacher education program. When one internal expert stated that “Collaboration is the key to this program and occurs at various levels (intern/mentor, faculty/mentor/principal, university, school district, community college, university, course instructors at university/community college, and course instructors/mentors)” and a second internal expert stated that “Core faculty collaborate—core faculty collaborate on the design and implementation of entire curriculum and remain with the cohort throughout their program,” these two items were entered verbatim into a single category of exemplary programmatic feature of distinction labeled “Collaborative, cohesive, dedicated faculty and college leadership.” When several different terms were used for what appeared to be the same factor, researchers listed all the terms together so as not to exclude any participant’s voice but to provide one consolidated description of that feature. The goal of this procedural step was to compile a master list of quality indices found among all literacy teacher education programs recognized as Programs of Distinction to date.

Phase 1 of this study met one specific criterion required in the classical Delphi method: Round 1 begins with an open-ended question that generates ideas and allows participants complete freedom in their responses (Hasson et al., 2000), and all Round 1 responses cited by more than one participating expert are subsequently included in Round 2. Because researchers did not eliminate any items themselves and allowed the iterative building process to advance fully to Round 2, the criterion of complete freedom of response was met in this study, optimizing validity.
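The tallying step described above (counting both how many experts named each category and how many times it was mentioned) and the rule for carrying categories into Round 2 can be expressed as a short Python sketch. It assumes that the researchers' manual coding of each Round 1 statement into a category has already been done; the function names, the (expert, category) input format, and the sample statements are hypothetical.

```python
from collections import Counter, defaultdict


def tally_categories(coded_statements):
    """Count distinct experts and total mentions per category.

    coded_statements: list of (expert_id, category) pairs, one per Round 1
    statement, after researchers assigned each statement to a category.
    """
    mentions = Counter(category for _, category in coded_statements)
    experts = defaultdict(set)
    for expert_id, category in coded_statements:
        experts[category].add(expert_id)  # a set counts each expert only once
    return {category: {"experts": len(experts[category]), "mentions": count}
            for category, count in mentions.items()}


def round2_items(tally, min_experts=2):
    """Keep categories cited by more than one expert (at least two)."""
    return sorted(category for category, counts in tally.items()
                  if counts["experts"] >= min_experts)


# Made-up coded statements (not data from this study):
statements = [
    ("expert_a", "Collaborative, cohesive faculty"),
    ("expert_a", "Collaborative, cohesive faculty"),
    ("expert_b", "Collaborative, cohesive faculty"),
    ("expert_c", "Articulated theoretical base"),
]
tally = tally_categories(statements)
print(tally)
print(round2_items(tally))
```

Keeping the expert count separate from the mention count matters here: one enthusiastic expert who repeats a point several times does not, on that basis alone, move a category into Round 2.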

From September 8 to 23, researchers completed Round 1 data analyses. Interrater reliability was computed to determine the researchers’ level of agreement in assigning each participant item to a category. Telephone calls were made to two expert participants to confirm that the September 23 deadline would allow them sufficient time to enter their data. Both participants agreed and provided their data before this deadline.
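The text does not name the interrater reliability statistic. For two coders assigning items to nominal categories, Cohen's kappa is a standard choice because it corrects raw percentage agreement for the agreement expected by chance. The Python sketch below assumes exactly two coders, each of whom assigned every item to one category; the function name and the sample codes are hypothetical.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders' category assignments to the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must code the same, non-empty list of items")
    n = len(coder_a)
    # Observed agreement: share of items placed in the same category by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: product of the coders' marginal proportions, summed over categories.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / n ** 2
    if expected == 1:
        return 1.0  # both coders used one identical category for every item
    return (observed - expected) / (1 - expected)


# Made-up category codes for four Round 1 items (not data from this study):
coder_a = ["faculty", "faculty", "field", "field"]
coder_b = ["faculty", "faculty", "field", "faculty"]
print(cohens_kappa(coder_a, coder_b))  # 0.5
```

In this made-up example the coders agree on 75% of items, but because half of that agreement would be expected by chance, kappa is 0.5. With more than two coders, a multi-rater statistic such as Fleiss' kappa would be the analogous choice.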

The last step in Phase 1 was to collect demographic data for faculty and students at each institution. Researchers collected these data from September 15 to November 6, 2009.

Phase 2

The goal of Phase 2 was to create and analyze the Round 2 questionnaire designed to report the data obtained from the Round 1 questionnaire to all experts. It was constructed so that participants could rank order the information that had been obtained in Round 1 and provide responses to Question 2 of this study.

On September 25, a field test of the Round 2 questions was conducted. Sixteen faculty members who were not involved in the research study but who taught in the College of Education at one of the universities that had received the IRA Certificate of Distinction were asked to read, respond to, and critique the field test version of the Round 2 instrument. The purpose of this field test was to help the researchers ensure that the questionnaire compiled from the Round 1 process was clear and valid. The researchers also sought suggestions for improving the wording of items. Field test members offered two suggestions for clarifying individual items. First, they recommended that the definitions of theoretical orientations cited from Risko et al. (2008) be condensed to two sentences each instead of the paragraph-length definitions that appeared on the field test version. Second, they recommended that the names of response categories be worded with approximately the same number of words. In the field test version, some categories contained many words, whereas others contained only one or two (e.g., “The program develops dispositions toward service and professionalism in teacher candidates though conference presentations and involvement in professional associations, and the program provides funding for these experiences” vs. “Articulated theoretical base”). All categories were therefore condensed or expanded so that all were described with the same number of words (+/– 3 words). In addition, as a result of this field test, two categories of Round 1 responses were combined (i.e., “Teacher candidates learn to teach children using a wide variety of strategies” and “Teacher candidates learn to assess children using a wide variety of assessment instruments” were combined into one category: “Teacher candidates learn to teach and assess children using a wide variety of strategies and assessment instruments”).

As a result of this work in Phase 2, the study met the classical Delphi criteria for verifying that Round 2 questionnaires are reliable and valid (Schmidt, 1997). Specifically, these criteria include the following: (a) questionnaires are field tested to verify clarity and purposefulness; (b) participants have the opportunity to verify, change, or expand their Round 1 responses once the other participants’ answers are shared; (c) data entries are of equitable length so that Round 2 participants do not perceive one item as more important than another merely because it is longer; and (d) participants are given the opportunity to state that they have shared all information relevant to the topic, have no additional data to provide, and have been able to revisit and refocus on individual data entries given in Round 1.

From October 19 to November 6, researchers completed the Round 2 instrument and distributed it to participants through SurveyMonkey. Two versions of the Round 2 instrument were created: one summarized the Round 1 data from internal experts and was emailed to them, and the other summarized the Round 1 data from external experts and was emailed to them. Each instrument asked experts to rank order the Round 1 data categories by the degree to which each was valued and important to the distinguished nature of teacher education programs, judged with respect to their specific program (internal experts) or to the several universities examined (external experts). This part of the instrument also asked whether any additional categories needed to be added and whether Round 2 accurately reflected each expert’s Round 1 data. Part 2 of the Round 2 instrument contained queries relating to Question 2 of this study.

From November 6 to December 1, experts responded to the Round 2 instrument. They verified that the categories properly represented their Round 1 data, that all of their individual ideas were fully represented, and that no additional categories were needed. From December 2 to 15, researchers and a statistical consultant completed data analyses using SPSS Version 9. Researchers also collapsed the individual responses to Question 2 into categories following the same procedures described in Phase 1. The rankings by internal experts for Question 1 (Round 1 data) were tallied separately from those of external experts. From December 15 to August 30, researchers determined the results, implications, and limitations of the study.

Data Collection

There were three distinct components in data collection. The first component was to discover factors that were most highly valued by both the internal experts representing the distinguished literacy teacher education programs and external experts who were the evaluators of such programs. To obtain these data, a Round 1 classical Delphi questionnaire was administered, and detailed demographic data from all distinctive literacy teacher education programs were collected.

Researchers constructed the most open-ended first-round question possible: “If you could join us in the research study, could you each, individually, without speaking to anyone else at your university, make a list of the 19 features of your program that you most highly value and that you judge to have most contributed toward your university’s ability to create a literacy teacher education program that received distinction?” We posed this question to each internal and external expert individually by email within 25 days of the awarding of the IRA Certificate of Distinction to a university program.

The second component involved rank ordering the factors of distinctive literacy teacher education programs by (a) the number of times an individual item was mentioned as well as the percentage of participants who mentioned each factor in Round 1, (b) the importance given each item by experts in Round 2 as well as the percentage who ranked each item within their top 10 most important distinctive qualities in literacy teacher preparation, and (c) the overall mean ranking of experts on individual items. The third component was designed to obtain data concerning the future of literacy teacher education programs and the theoretical orientations within distinctive programs and to rank order these issues and data.

Data Analyses

Data analyses involved both qualitative and quantitative techniques. Qualitative data analyses involved constant comparative procedures adhering to Lincoln and Guba’s (1985) criteria for reliability and validity, namely, credibility, fittingness, auditability, and confirmability. Data analyses met the criterion of credibility in that more than double the required number of participants contributed to the data pool. Fittingness was met in that participants had the opportunity to challenge their own and all peers’ assumptions; auditability was met in that threats to validity (e.g., group pressure to converge one’s opinions) were not present; and confirmability was met by using two diverse pools of expertise and successive rounds of data analyses to increase concurrent validity.

Kendall’s coefficient of concordance (W) was used as the statistical treatment of quantitative data for both rounds of data analysis. W measures the degree of agreement among several experts’ rankings of the same set of items, from 0 (no agreement) to 1 (complete agreement); the consensus ordering is the list of items ordered by mean rank, which is the least squares solution. In a classical Delphi study, the criterion for ending data collection (i.e., the point at which further rounds offer little potential gain) is the attainment of strong consensus among experts, as determined by the magnitude of W. If a high level of consensus is reached (51% agreement on rank order), then the field can place greater confidence in the relative standings of the final rankings. The modal number of rounds in classical Delphi methods, as determined in a review of the methodological features of Delphi in experimental studies, is two (Hasson et al., 2000; Rowe & Wright, 1999; Schmidt, 1997). Data collection ended after Round 2 in this study because the level of consensus reached exceeded the level required to end subsequent rounds of expert opinion collection (McKenna, 1994), as reported in the results section of this article.
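To make the concordance statistic concrete, the following is a minimal sketch of how W can be computed from a matrix of expert rankings, together with the consensus ordering by mean rank and the usual large-sample chi-square approximation, χ² = m(n − 1)W with n − 1 degrees of freedom (which matches the df = 13 and df = 7 reported for 14 and 8 items). It assumes untied ranks with 1 = most important; the function names are hypothetical, and reading the "51% agreement" stopping rule as W ≥ .51 is an assumption rather than a statement of the authors' procedure.

```python
def kendalls_w(rankings):
    """Kendall's coefficient of concordance W (no correction for ties).

    rankings: list of m lists; rankings[e][i] is the rank (1..n) that
    expert e assigned to item i.
    """
    m = len(rankings)
    n = len(rankings[0])
    # Total rank each item received across all experts
    rank_sums = [sum(r[i] for r in rankings) for i in range(n)]
    # Expected rank sum per item if the experts ranked at random
    expected = m * (n + 1) / 2
    s = sum((total - expected) ** 2 for total in rank_sums)
    return 12 * s / (m ** 2 * (n ** 3 - n))


def chi_square_from_w(w, m, n):
    """Large-sample chi-square approximation for W; returns (chi2, df)."""
    return m * (n - 1) * w, n - 1


def consensus_order(rankings, labels):
    """Order items by mean rank (the least squares consensus ranking)."""
    m = len(rankings)
    mean_rank = {label: sum(r[i] for r in rankings) / m
                 for i, label in enumerate(labels)}
    return sorted(labels, key=mean_rank.get)


# Assumption: the "51% agreement" stopping rule is read as W >= 0.51.
STOP_THRESHOLD = 0.51


def delphi_can_stop(w):
    return w >= STOP_THRESHOLD


# Hypothetical ranks from three experts for four features A-D
experts = [[1, 2, 3, 4], [1, 3, 2, 4], [2, 1, 3, 4]]
w = kendalls_w(experts)  # 420 / 540, about 0.78
print(w, chi_square_from_w(w, m=3, n=4), consensus_order(experts, list("ABCD")))
```

Summing ranks per item and comparing against the random-ranking expectation is the textbook form of W; a real analysis with tied ranks would need the tie-corrected formula.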

The two distinct analyses conducted for Question 1 (the rank ordering of Round 1 data on the Round 2 instrument, determined separately for internal and external experts) enabled triangulation of qualitative data. Triangulation of qualitative data also occurred through the use of interrater reliability coefficients: individual responses from all respondents were recorded and categorized by independent raters, and interrater reliability coefficients for these data were computed. Categories were rank ordered by the percentage of experts who mentioned each item in Rounds 1 and 2 and by the importance assigned to each item’s contribution to the program’s ability to become distinguished, as identified by individual expert rankings in Round 2. To be included in Round 2, an item had to have been cited in Round 1 by more than 22% of the internal experts (i.e., at least 4 of the 18) for the internal instrument or by a majority (more than 50%) of the external experts for the external instrument. These cutoffs were established because, for an item to be judged common across programs of distinction, it had to have been cited by more than one university, which meant that at least 4 internal experts or at least 2 external experts would have cited that item (Hasson et al., 2000; Rowe & Wright, 1999; Schmidt, 1997). After all Round 1 data had been categorized, researchers constructed integrative memos of all indicators. These memos were designed to summarize specific examples of features to be recommended for future use by institutions in program planning and advancement.
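The inclusion cutoffs amount to a simple arithmetic rule: with 18 internal experts, "more than 22%" means at least 4 (4/18 is about 22.2%), and with 3 external experts, "more than 50%" means at least 2 (2/3 is about 66.7%). The sketch below expresses that rule; the group sizes and percentages come from the text, while the function names, category names, and counts are hypothetical.

```python
def min_citations(n_experts, share):
    """Smallest count k of citing experts for which k / n_experts exceeds share."""
    return next(k for k in range(n_experts + 1) if k / n_experts > share)


def items_for_round_two(citation_counts, n_experts, share):
    """Keep the Round 1 categories cited by more than `share` of one expert group."""
    cutoff = min_citations(n_experts, share)
    return [item for item, count in citation_counts.items() if count >= cutoff]


# Thresholds as described in the text, using the study's group sizes
internal_cutoff = min_citations(18, 0.22)  # 4, since 4/18 is about 22.2%
external_cutoff = min_citations(3, 0.50)   # 2, since 2/3 is about 66.7%

# Hypothetical Round 1 citation counts among internal experts
internal_counts = {"field experiences": 15, "diverse learners": 9, "class scheduling": 3}
print(items_for_round_two(internal_counts, 18, 0.22))
# ['field experiences', 'diverse learners']
```

Searching for the smallest qualifying count, rather than multiplying the share by the group size, keeps the "strictly more than" boundary exact.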

The rank orders of all expert answers in Round 2 were also compared with the rank orderings of the two expert groups, internal and external. To compare the agreement of the two expert groups’ rankings, Kendall’s rank-order correlation coefficient (T) was used (Kendall & Gibbons, 1990). T was chosen rather than the Spearman rank-order correlation coefficient because it emphasizes the relative ordering of the factors rather than the magnitude of differences between ranks. A one-tailed test of significance was used; the significance of T was determined by consulting a table of exact probabilities for T (Siegel & Castellan, 1988, p. 362) or through SPSS, with the one-tailed significance level set at p = .05. Analyses of variance were conducted to determine the significance of differences between program demographics.
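The rank-order correlation the text denotes T can be illustrated by counting concordant and discordant pairs: a pair of features is concordant when both rankings order them the same way. The sketch below computes tau-a and assumes no tied ranks; the function name and the five-feature ranks are hypothetical. For actual analyses, a library routine such as SciPy's `scipy.stats.kendalltau`, which also reports a p-value, would be preferable.

```python
from itertools import combinations


def kendalls_tau(rank_a, rank_b):
    """Kendall's tau-a for two rankings of the same items (assumes no tied ranks)."""
    n = len(rank_a)
    concordant = discordant = 0
    for i, j in combinations(range(n), 2):
        # Same sign means both rankings order items i and j the same way
        product = (rank_a[i] - rank_a[j]) * (rank_b[i] - rank_b[j])
        if product > 0:
            concordant += 1
        elif product < 0:
            discordant += 1
    return (concordant - discordant) / (n * (n - 1) / 2)


# Hypothetical ranks given by each expert group to the same five features
internal_ranks = [1, 2, 3, 4, 5]
external_ranks = [1, 3, 2, 4, 5]
print(kendalls_tau(internal_ranks, external_ranks))  # 0.8
```

Because only the direction of each pairwise comparison counts, swapping two adjacent features costs the same as swapping two distant ones, which is the property the text cites for preferring T over Spearman's coefficient.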

Results

A response rate of 70% is suggested for each round to achieve true anonymity of respondents in classical Delphi methods. This response rate is necessary to ensure that participants cannot deduce which responses came from individual respondents in the study. The response rates for internal experts were 94% in Round 1 and 83% in Round 2. The response rates for external experts were 75% in both Round 1 and Round 2. These high response rates suggest that the experts valued their participation in this research.

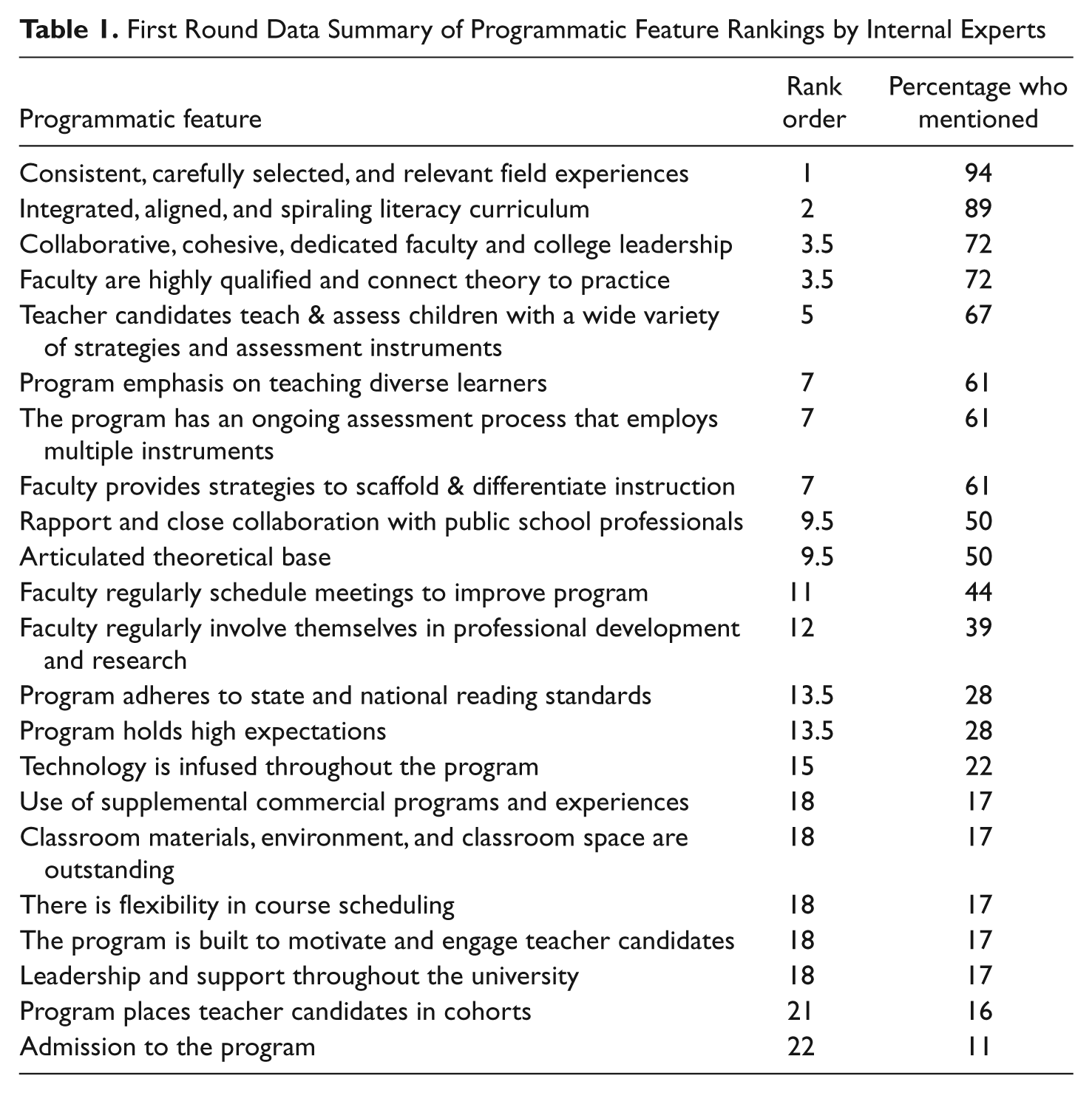

The Round 1 analysis identified 336 individual data points representing internal experts’ judgments and 47 individual data points from the external experts as to the most highly valued literacy teacher education programmatic factors. Two researchers independently categorized these items into cohesive groupings. Agreement between their categorizations yielded an interrater reliability coefficient of .98. All disagreements were resolved through discussion until consensus was reached and the item could be validly placed in its appropriate category. When internal expert categories were rank ordered according to the percentage of respondents who identified each factor, 22 categories emerged; these category names appear in Table 1, along with the rank of each category as determined by the percentage of internal experts identifying the factor as highly valued at their respective universities.
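The text reports interrater reliability coefficients (.98 and .97) without naming the statistic used. As an illustration only, the sketch below computes two common options for two raters assigning data points to categories: simple percent agreement and Cohen's kappa, which corrects for agreement expected by chance. Which coefficient the researchers actually computed is not stated, and the function names and category labels here are hypothetical.

```python
from collections import Counter


def percent_agreement(labels_a, labels_b):
    """Share of items that both raters placed in the same category."""
    return sum(a == b for a, b in zip(labels_a, labels_b)) / len(labels_a)


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(labels_a)
    p_observed = percent_agreement(labels_a, labels_b)
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement from each rater's marginal category proportions
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / n ** 2
    if p_expected == 1:
        return 1.0  # both raters put every item in the same single category
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical category assignments for six Round 1 data points
rater_1 = ["field", "field", "diversity", "faculty", "faculty", "field"]
rater_2 = ["field", "field", "diversity", "faculty", "field", "field"]
print(percent_agreement(rater_1, rater_2), cohens_kappa(rater_1, rater_2))
```

With many categories and few disagreements, as in the 336 and 47 data points described here, percent agreement and kappa tend to be close; with few categories, kappa can be noticeably lower.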

First Round Data Summary of Programmatic Feature Rankings by Internal Experts

Based on these results, 14 programmatic features, all those cited by more than 22% of the internal experts, were identified for consideration and ranking by the internal experts in Round 2.

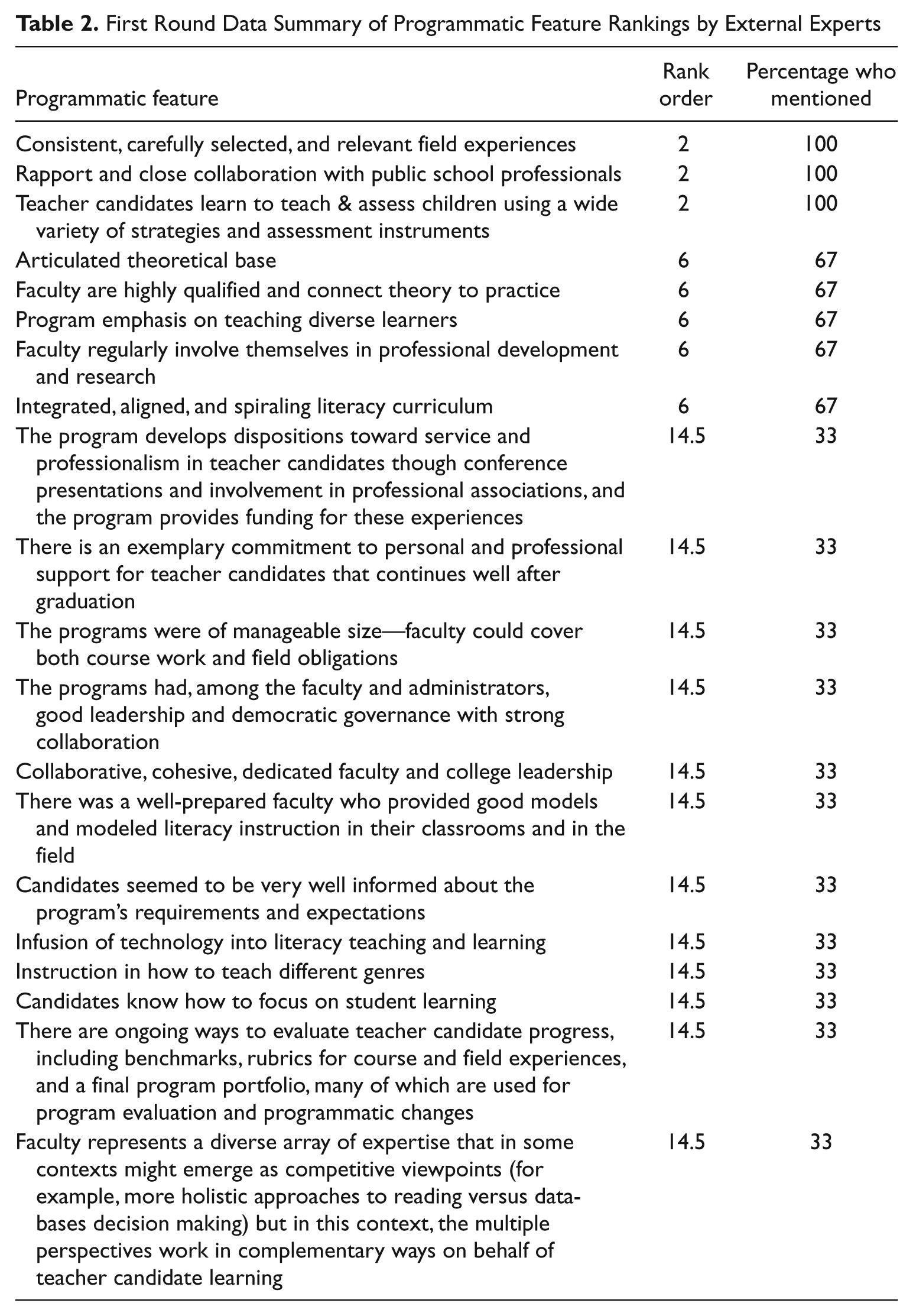

External expert responses were categorized into 20 categories following the same procedures. The resulting interrater reliability coefficient was .97, and the three discrepancies were resolved through discussion. Table 2 summarizes these category names and their rank order as determined by the percentage of external experts identifying each factor.

First Round Data Summary of Programmatic Feature Rankings by External Experts

This process resulted in identification of eight programmatic features, all those identified by more than 50% of the external experts, for ranking by these participants in Round 2.

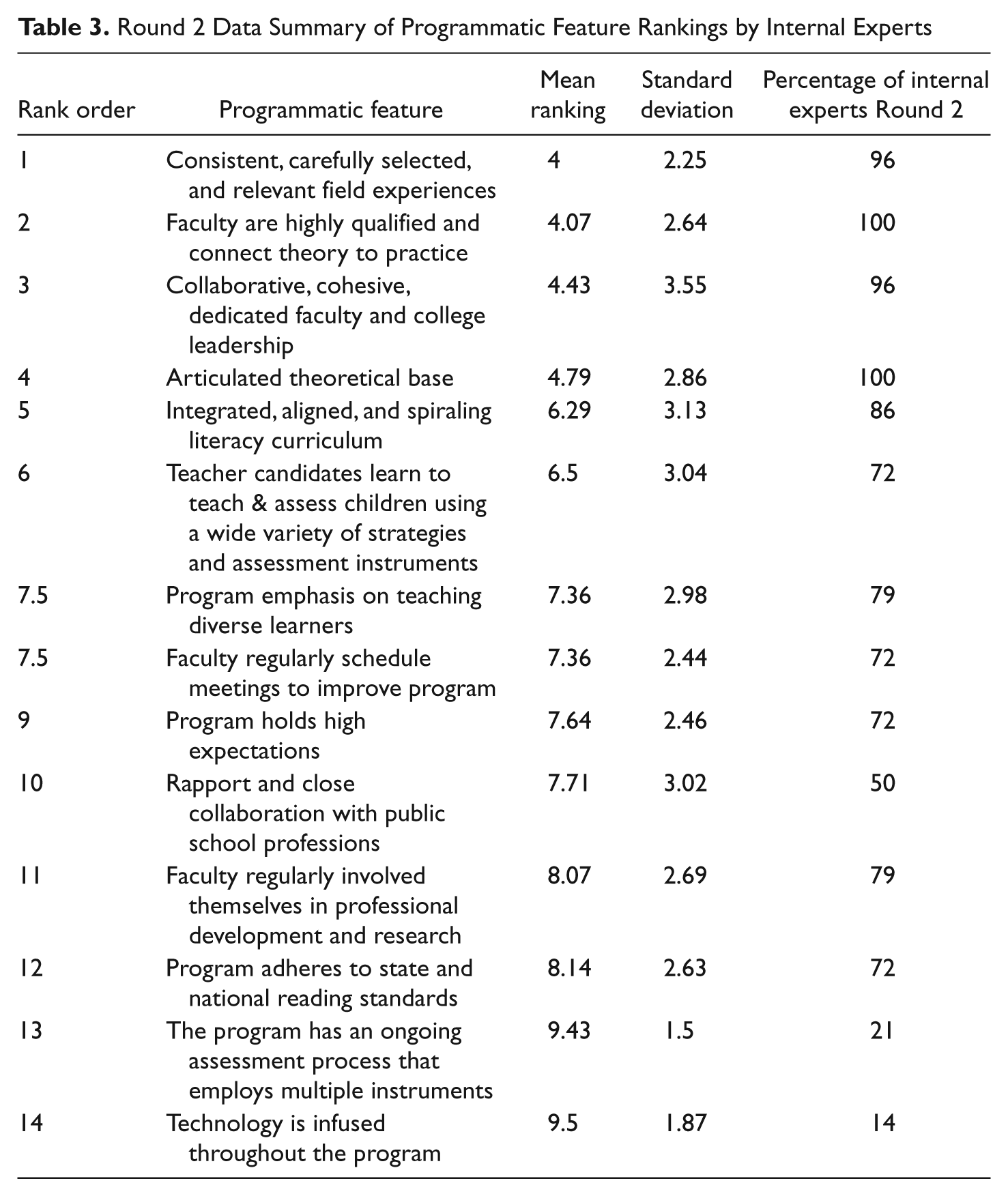

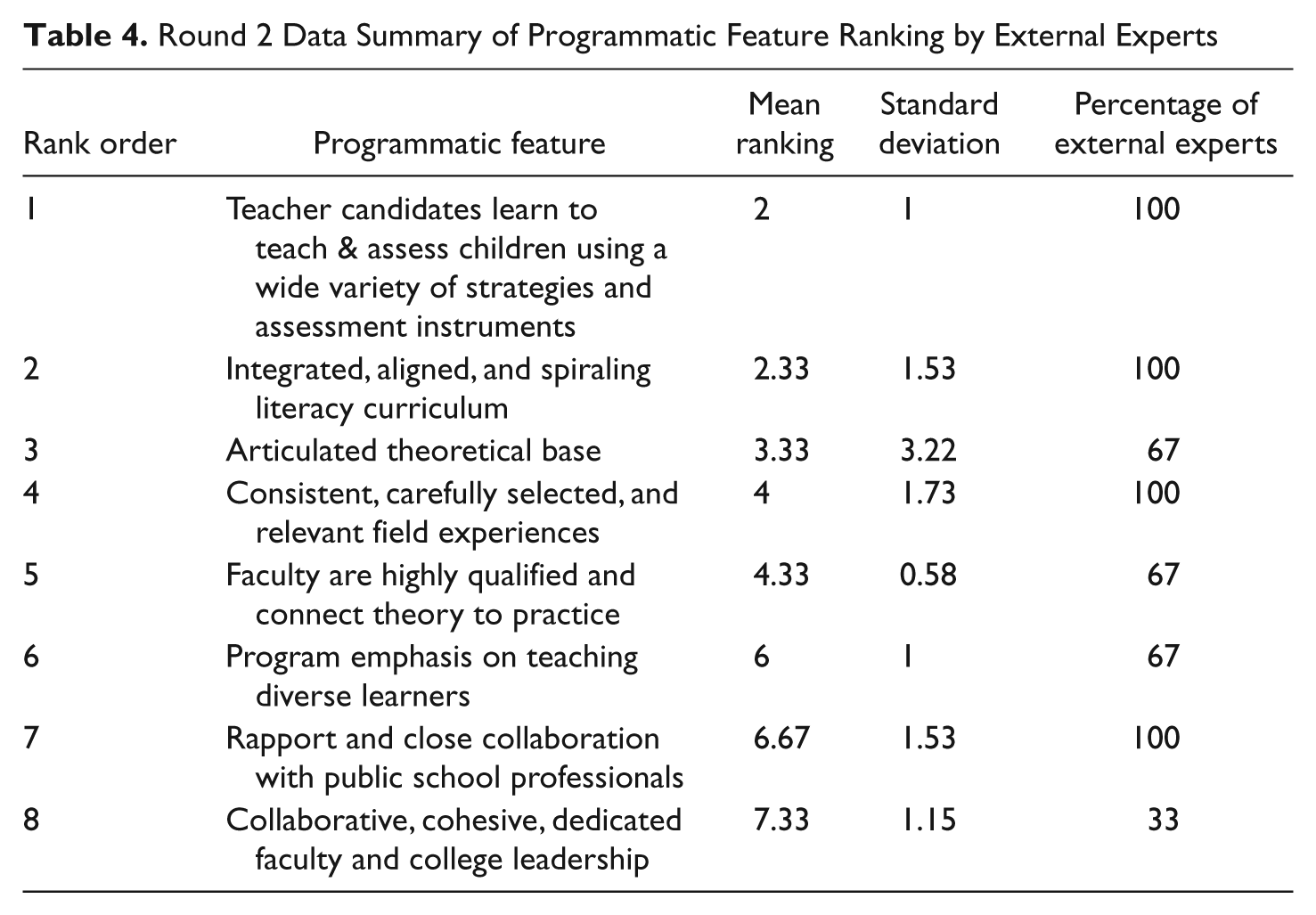

The statistics resulting from the administration of the respective Round 2 instruments to the internal experts and external experts are displayed in Tables 3 and 4. The Round 2 summary data include rank orders, mean rankings, standard deviations, and the percentage of the expert group ranking each programmatic feature in the top 10 in Round 2.
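The summary statistics listed for Tables 3 and 4 (mean ranking, standard deviation, and percentage of experts placing a feature in their top 10) can be derived directly from the Round 2 rankings. The following is a minimal sketch under stated assumptions: rank 1 = most important, the sample (n − 1) standard deviation is used, and the function name and example data are hypothetical. The top-10 cutoff is a parameter so the small example can use a top-2 cutoff instead.

```python
from statistics import mean, stdev


def round_two_summary(rankings, labels, top_k=10):
    """Mean rank, sample SD, and % of experts placing each item in their top_k.

    rankings[e][i] is the rank expert e gave item i (1 = most important).
    Rows are returned ordered by mean rank, lowest (most valued) first.
    """
    m = len(rankings)
    rows = []
    for i, label in enumerate(labels):
        ranks = [r[i] for r in rankings]
        sd = stdev(ranks) if m > 1 else 0.0
        pct_top = 100 * sum(1 for x in ranks if x <= top_k) / m
        rows.append((label, mean(ranks), sd, pct_top))
    rows.sort(key=lambda row: row[1])
    return rows


# Hypothetical Round 2 ranks from three experts for three features,
# with top_k = 2 so the example stays small
example = [[1, 2, 3], [2, 1, 3], [1, 3, 2]]
for label, mean_rank, sd, pct in round_two_summary(example, ["A", "B", "C"], top_k=2):
    print(f"{label}: mean={mean_rank:.2f} sd={sd:.2f} top-2={pct:.0f}%")
```

Ordering by mean rank here mirrors the consensus ordering used with Kendall's W, so the resulting rank order and the concordance statistic describe the same list.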

Round 2 Data Summary of Programmatic Feature Rankings by Internal Experts

Round 2 Data Summary of Programmatic Feature Rankings by External Experts

Kendall’s coefficients of concordance (W) for the Round 2 data from internal and external experts were .66 and .65, respectively, indicating that a strong level of agreement among experts had been reached in this round of data collection (Kendall & Gibbons, 1990; Siegel & Castellan, 1988). This level of agreement indicated that no further rounds of data collection were needed. This decision was guided by the criterion established for the classical Delphi method, which sets the threshold indicating no need for further rounds at 51%.

Chi-square tests of W also showed that the agreement on the consensus ordering (the list ordered by mean ranks, which is the least squares solution) was statistically significant for both groups, a standard means of determining whether agreement among expert ratings is statistically significant (χ² = 63.13, df = 13, p < .001 for internal experts; χ² = 13.78, df = 7, p < .05 for external experts).

The following discussion is organized to address each research question.

Question 1: Do commonalities exist among literacy teacher education programs that receive distinction; and, if so, what are the most highly ranked features within these programs? What is the relative order of importance of the critical features within literacy teacher education programs that receive Certificates of Distinction? Are there common and dissimilar examples of these programmatic features in action?

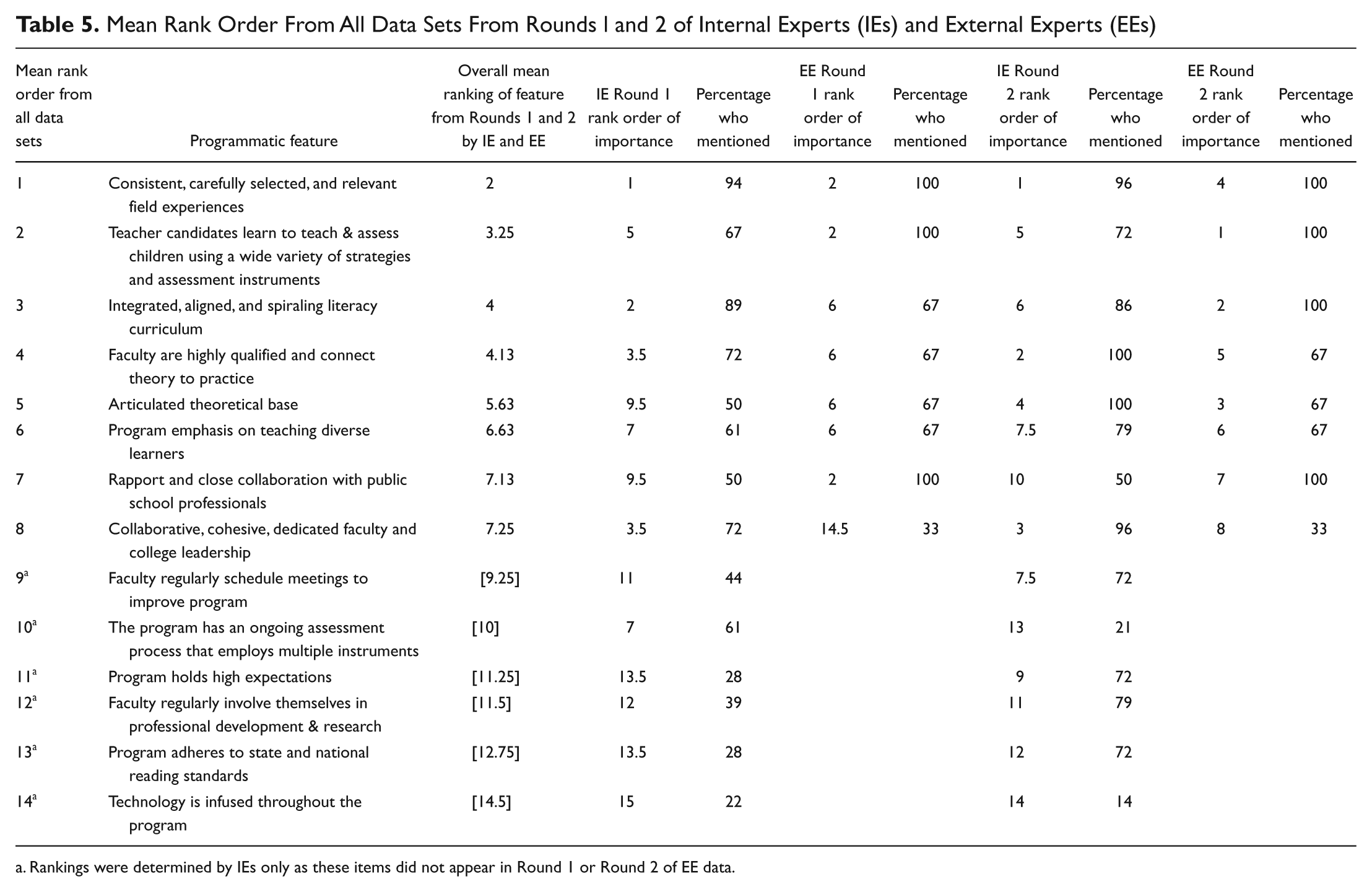

Although the specific wording of individual programmatic features varied between internal and external experts, strong commonalities existed between them. Table 5 shows these commonalities by displaying the overall mean ranking of each feature across the two rounds of data collection for both sets of experts. The rank ordering of 14 programmatic features by the internal experts and of 8 programmatic features by the external experts in Rounds 1 and 2 is also displayed. Because the Round 1 rankings were based on counts of mentions, the percentages of respondents citing each feature are presented.

Mean Rank Order From All Data Sets From Rounds 1 and 2 of Internal Experts (IEs) and External Experts (EEs)

Rankings were determined by IEs only because these items did not appear in Round 1 or Round 2 of the EE data.

The high rankings of the 14 programmatic features presented in Table 5 were judged to be statistically significant. The most common features derived from this table are described below; this set includes the eight programmatic features ranked by both the internal and external experts. Each description details examples of the methods by which the feature is implemented in the literacy teacher education programs that received the IRA Certificate of Distinction, as well as differences between universities’ methods. Programmatic features are discussed in order of reported importance.

1. Consistent, carefully selected, and relevant field experiences were created that are closely tied to program philosophy, programmatic vision, and content presented in courses

A systematic sequence of content and instruction occurred across semesters, planned to correspond with candidates’ developing knowledge, skills, and dispositions. Course, field, and program experiences provided repeated opportunities for full-time professors to model desired practices in methods courses or on public school campuses before teacher candidates were required to demonstrate their ability to use them. Each literacy-related course contained field experiences. The faculty who taught the courses supervised most field experiences, and immediate feedback was given by this supervisor as well as by fellow teacher candidates. Field experiences were monitored by the entire program faculty and adhered to a gradual release of responsibility model. Programs were staffed so that full-time faculty supervised field experiences. External experts also highly valued this staffing arrangement and indicated a preference for beginning field experiences early, in the freshman and sophomore years, rather than later, in the junior and senior years.

Differences across the institutions included the amount of time spent in field experiences, which ranged from 10 hours per semester to 65% of the total time for individual courses. In addition, the manner in which candidates received feedback varied, although procedures for feedback were implemented in every field experience. The way in which the full-time faculty as a whole monitored the sequence and content of their program’s field experiences also differed, with some universities meeting once a month and others once a semester for this purpose. Some university programs required that candidates have field placements in classrooms at every grade level for which they would be certified prior to student teaching.

2. Teacher candidates learn to teach and assess children using a wide variety of instructional strategies and assessment instruments

This highly ranked factor has not been identified in prior research. The current study reveals that both internal and external experts value (as indicated by high rankings) requiring candidates to learn many instructional and assessment methods. Specifically, teacher candidates were required to administer assessments to individual public school students with whom they worked, whether for one semester or a full year. The purposes of these assessments included identifying individual student needs and informing candidates’ instructional planning. Candidates were reported to reflect on their practices, to change their practices to be more effective, and to demonstrate how their instruction had changed based on assessment data. According to the internal experts, teacher candidates appeared to have time to reflect on their learning and experiences with faculty and cooperating teachers. All programs reported that these varied opportunities to learn about instruction and assessment began in the first education course and continued to the end of the program. The internal experts shared that students described their experiences in their teacher preparation programs as “real” and reported that they were learning to “talk” and “perform” like teachers. Candidates appeared to feel confident in their level of preparation.

Differences between programs included the range of experiences in using assessment data to drive real-world instructional decision making in public schools and the number of assessments introduced, which ranged from 4 to 11 distinct types of literacy assessment instruments. At some universities, faculty demonstrated how to implement and interpret assessments with public school students.

3. Distinguished literacy teacher education experts highly valued the integrated, aligned, and spiraling literacy curriculum that their program developed

Internal experts noted that approaches and specific themes were spiraled and revisited across content areas and semesters, and that these varied by campus. Any updates to the curriculum involved the input of the full faculty unit. Most program development efforts took longer than a year to design and initiate. All internal experts valued the continuous monitoring of their curriculum for improvement through policies built into each program’s development. The spiral curriculum (Bruner, 1996) was intended to deepen with each reiteration; for example, each reading course was built with a clear progression from one to the next.

Differences observed between institutions included what faculty discussed in the program development process and when. At some universities, discussions allowed all faculty members to decide which content was covered in each class, which textbooks were used, and which assignments would be given. Although all programs had a procedure to inform students explicitly of how the course sequence built on previous classes, the timing of this information varied, ranging from opening orientation presentations to reemphasis in student assemblies and retreats held each semester.

4. Internal and external experts highly valued the highly qualified faculty who were exceptionally skilled in connecting theory to practice

That the exceptional competence of program faculty ranked fourth was not unexpected; more revealing was that “highly qualified” was defined as adherence to the teacher–scholar model. Experts noted that university faculty (a) spent much time providing written and oral feedback to individual candidates to promote candidate learning, (b) spent exceptional amounts of time developing innovative, out-of-the-box instructional lessons, and (c) modeled literacy lessons, constantly providing candidates with opportunities to see a range of instructional methods. In fact, at most universities, candidates watched the professor teach lessons to students in the public schools, which lent credibility to the methods and to the faculty as exemplary teachers of children. The high ranking of the teacher–scholar model, and its consistency across all six programs, did not diminish the high value that internal experts placed on faculty members’ involvement in basic research, as this factor ranked 12th. College- and university-level leadership valued the teacher–scholar model. Highly qualified faculty were defined in large part by their ability to creatively and effectively apply scholarship, defined broadly as applied research, in their class content. There were fewer differences between institutions on this factor than on other factors.

5. Distinguished program experts valued the fact that their program articulated its theoretical base to faculty and students

Course and field experiences, program materials, pedagogy, and learning strategies stemmed from a consistent philosophical and theoretical basis. Faculty represented a diverse array of expertise that in some contexts might emerge as competing viewpoints (e.g., more holistic approaches to reading vs. data-based decision making); but in these contexts the multiple perspectives worked in complementary ways, supporting teacher candidates’ learning. Candidates seemed to be very well informed about program requirements and expectations.

Differences included the ways in which students were informed of program requirements and expectations, ranging from initial orientations to an approach that infused such information into the content of every course. The specific theoretical bases cited were (a) sociocultural theoretical perspective, (b) community of learners’ philosophy, (c) cognitive apprenticeship theory, (d) explicit instructional philosophy, (e) integrated language arts theoretical perspective, (f) age-appropriate developmental approaches to instruction, (g) transactional theory, (h) gradual release of responsibility model, (i) self-regulation model of learning, (j) differentiated instructional model, (k) Taylor’s talents, (l) Bloom’s taxonomy, (m) Gardner’s multiple intelligences theories and conceptual frameworks, (n) the Teachers College framework, and (o) constructivism.

6. Internal and external experts placed a high value on the fact that all programs emphasize methods of teaching diverse learners

It was reported that candidates acquired a sense of responsibility for and commitment to teaching all children how to read and write. Programs had a strong special education inclusion component that focused on literacy instruction for struggling learners. Diversity was addressed with regard to cultural responsiveness, racial and socioeconomic differences, and ways to address the varied literacy needs of diverse individual learners, including the needs of English language learners.

Differences included the methods by which elementary schools were selected for field experiences to ensure that teacher candidates had opportunities to teach diverse populations, as well as the types of diversity that were emphasized. In addition to field placements, some programs held summer enrichment workshops, summer camps, and other special experiences in which special-needs students were the core population. The emphases on diversity included high-need students, remedial readers, minority or multicultural and distinctive students, populations of varied ages, and special-needs students. The instructional strategies included Teachers of English to Speakers of Other Languages (TESOL)-infused teaching strategies, sheltered instruction for second-language learners (Lacina, Mayo, & Sowa, 2008), culturally responsive teaching methods, inclusive practices that welcomed individual learning styles and interests, and the development of valued “habits of mind” (e.g., cognitive flexibility; imagine, invent, and create; persist when the solution is not readily apparent; apply past knowledge to new situations; take responsible risks; find humor; think interdependently; and remain open to continuous learning).

7. Internal and external experts highly valued the strong rapport and close collaborations among program faculty and public school professionals

A true feeling of equal partnership was created with public school colleagues and was highly valued. Programs were built on a “community of learners” concept, whereby professors, teacher candidates, and public school professionals collaborated and worked closely together. Internal and external experts noted that teacher candidates reported having a sense of belonging to a profession. Public school personnel, professors, and teacher candidates reported learning from each other and valuing this opportunity. Students were treated as colleagues from the start with recognition of their current knowledge and developing expertise. All faculty and public school personnel were reported to demonstrate genuine caring and concern for the candidates.

8. The collaborative, cohesive, dedicated faculty and college leadership were identified to be the eighth most highly valued programmatic feature

Experts reported that college leaders were supportive of faculty and that governance was democratic, with strong collaboration between administrators and faculty. The internal experts highly valued the strong department, college, and institutional support for the program and reported that administrators at all levels were knowledgeable about their respective programs. In addition, an honest, positive energy and enthusiasm existed among faculty for their profession, their work, and their candidates.

Although each of the following occurred at all universities, differences were identified between institutions with regard to the degree to which K–6 public school teachers participated with university faculty. Overall, the classroom teachers contributed to planning experiences, provided continuous feedback to candidates, interviewed potential teacher candidates, participated in district-level staff development, participated in initial and ongoing training for mentor teachers and principals, and had opportunities to serve as adjunct professors.

The items that ranked 9 to 14 were also ranked significantly higher than other programmatic features, but because the majority of external experts did not cite them, it was not possible to include them in the external expert data analysis. These factors were not cited by the majority of external experts because they were not evident to the experts who had not been integral to program creation. These statistically significant and highly valued items identified by internal experts are cited in Table 5 and include (9) faculty regularly schedule meetings to improve the program, (10) the program has an ongoing assessment process that employs multiple instruments, (11) the program holds high expectations, (12) faculty regularly involve themselves in professional development and research, (13) the program adheres to state and national reading standards, and (14) technology is infused throughout the program.

Demographics relative to distinctive literacy teacher education programs were analyzed to identify commonalities and differences. Statistically significant differences existed between these universities on each of the following: total college of education student enrollments (t = 3.29, df = 5, p = .02), number of full-time faculty who taught in the awarded programs (t = 3.032, df = 5, p = .029), number of reading courses offered by the awarded programs (t = 7.88, df = 5, p = .001), number of Caucasian students enrolled in the awarded programs (t = 5.56, df = 5, p = .003), number of African American students participating in the awarded programs (t = 6.00, df = 5, p = .002), number of Hispanic students enrolled in the awarded programs (t = 2.08, df = 5, p = .09), and gender distribution among the student bodies at these institutions (t = 30.08, df = 5, p < .001). In summary, these data revealed that the universities that had received IRA’s Certificate of Distinction were of varied compositions and sizes and yet valued similar programmatic features.

Question 2: Does a prevalent theoretical orientation exist in literacy teacher education programs that have received IRA’s Certificate of Distinction, or if not, do programs of distinction present more than one orientation to teacher candidates?

To identify their programs’ theoretical orientation(s), the internal experts were asked to consider the following descriptions of theoretical orientations developed by the researchers and presented on the questionnaire and to indicate the one or more orientations that best described their program:

Positive/behavioral—Teacher education is viewed as the import or transmission of knowledge to learners, with learning to teach being an additive process. Knowledge and teaching behaviors hypothesized and acquired within teacher education courses are then applied in supervised teaching situations.

Cognitive—Teacher education focuses on how prospective teachers learn professional and practical knowledge and how prior beliefs and experience affect learning and decision making. Content centers on what prospective teachers need to know, conditions where particular knowledge may be required, and how reflective processes can deepen knowledge and the flexible application of these processes while teaching.

Constructivist theory—Learning to teach is viewed as a process of learning how prospective teachers transform their professional knowledge as they make connections to prior knowledge and construct meanings in classrooms while being guided by others, including teacher educators and the children in these classrooms. Teacher education is viewed as a learning problem, and conditions that contribute to changes in teachers’ use of multiple knowledge sources to solve these problems are documented.

Sociocultural theory—Learning to teach is viewed not simply as what happens in the brain of an individual but what happens to the individual in relation to a social context and through multiple forms of interactions with others. Priorities in the program are to help prospective teachers understand their own cultural practices and those of others, the impact of cultural practices on teaching and learning, and the value of implementing culturally supportive instruction.

Internal experts representing all six universities submitted responses, which included 23 selections across the four theoretical orientations. These were distributed as follows: constructivist theory (n = 13), sociocultural theory (n = 7), positive or behavioral theory (n = 2), and cognitive theory (n = 1). Examining the number of internal experts indicating each orientation revealed that 4% of the respondents cited cognitive theory, 14% cited positive or behavioral theory, 50% cited sociocultural theory, and 93% cited constructivist theory. These data indicate that faculty value more than one theoretical orientation.

Conclusion and Discussion

This study is a research collaborative conducted with multiple sites, the type of research called for by Risko et al. (2008). The mixed-methods design of this classical Delphi method resulted in identification of 14 programmatic features that were ranked higher in value than others at a statistically significant level. Data include examples of programmatic features that had not been identified in previous research.

This research extends the work of Bean (1997), Hoffman et al. (2005), Grant and Secada (1990), and Risko et al. (2008) in four important ways. First, data provided more than 300 specific examples and descriptions of highly valued features associated with six literacy teacher education programs that have received IRA’s Certificate of Distinction and suggested how these features are operationalized. The study also revealed examples of programmatic features that had not been identified in previous research.

Findings suggest that carefully structured and sequenced public-school-based teaching experiences, included from the first course to the end of a literacy teacher education program, could be among the most highly valued features in distinctive literacy teacher education programs. These data advance the work of Austin and Morrison (1962, 1976) by demonstrating that such features now occur in all courses from the freshman through senior semesters in programs of varied sizes and compositions across the United States. Forty years ago, the apparent assumptions of teacher educators and self-reflective data indicated that student teaching was among the most, if not the most, valuable features in literacy teacher education programs. Data in the present study indicate that an isolated, end-of-program, “apprentice-type” training model is not currently valued or followed.

Second, the most valued component supports a professional model in which highly relevant, spiraling theoretical and practical experiences occur throughout a novice’s program to support the individual’s developmental processes, similar to the model followed in the medical profession. These data support the view that literacy teacher education is more than the training of a craftsman; it involves the development of a highly skilled and reflective professional.

Third, the data concerning carefully structured and sequenced public-school-based teaching experiences showed these experiences to be the greatest influence in building strong pedagogical knowledge bases and belief systems among preservice teacher candidates. In fact, experts valued these experiences more highly than the quality of college classroom spaces, flexibility in course scheduling, the methods by which teacher candidates are engaged or motivated to learn on the college campus, the degree of leadership and support at the universities’ highest levels, the way preservice teachers are grouped in their college classes (i.e., cohort, heterogeneous, or homogeneous grouping formats), the methods by which candidates are admitted to the program, and, to a lesser degree, the 13 other programmatic features that were ranked highly in importance to the creation of programs of distinction.

Fourth, when the quality of field experiences was examined more specifically, the data suggest that some features within these experiences are more highly valued than others, which supports the findings of Bean (1997) and Risko et al. (2008). The degree to which these field experiences scaffold instruction for teacher candidates was judged to be the critical condition that must be present. Specifically, in distinctive programs, field experience lessons were taught close in time to when teacher candidates implemented them, and the lessons were carefully crafted to follow a gradual release of responsibility model and to increase in difficulty in a spiraling, concentric fashion from semester to semester. These conditions were cited more frequently than either the quality of feedback provided by supervising teachers in the laboratory experience or the diversity of the students whom candidates taught. However, these two conditions were judged to be common to all field experience plans at all six universities and were ranked above many other conditions that led to the programs’ distinction.

Although prior studies have suggested that a highly collaborative, cohesive faculty and supportive college leadership would increase the quality of a literacy teacher education program (e.g., Vagle et al., 2006), this factor was ranked eighth in importance by the experts associated with these programs of distinction. By contrast, the data provided stronger support for Grisham’s (2000) finding concerning a cohesive content focus and an integrated, spiraling curriculum throughout a literacy education program. Experts judged these features to be of greater importance, ranking them behind only the need for candidates to engage in consistent, carefully selected, relevant field experiences and to learn to teach and assess children’s literacy using a variety of strategies and instruments.

The findings of this study also extend the work of IRA (2003a). In 2000, IRA reported that the 1,150 literacy teacher education programs in the United States averaged six semesters of reading methods coursework (IRA, 2003a). Results of this study indicate that programs of distinction emphasize reading methods coursework in an integrated, spiraling fashion across all courses in their programs. There is also a significant difference in the number of courses with “reading” in the title that are listed in their university catalogs.

This study also extends the findings of prior studies in that data were collected across multiple, significantly different university settings. The size of the university, the number of professors, and the type of teacher candidates being trained were unrelated to the factors that were most valued. This increases the likelihood that such features can transfer to a wider variety of literacy teacher education programs in the United States. In addition, the data were not limited to isolated, nontransferable university milieus (Clift & Brady, 2005; NICHD, 2000).

The current study’s findings, resulting from its mixed-methods design, extend previous work by providing a rank order of importance for individual programmatic features as judged by both internal and external experts. Such data address the need identified by Anders et al. (2000). Specifically, the analytic procedures applied indicate which 14 features were judged to best allow for “diversity and creativity” in individual program implementation (Anders et al., 2000, p. 727).

This research suggests that future studies of literacy teacher education programs should include both internal and external experts, for two reasons. First, internal experts identified six more programmatic features common to all distinctive programs than did external experts (as shown in Table 5, Items 9–14). These six factors all related to the internal workings of the programs. They were inherent in the internal experts’ day-to-day operations and were not visible in the written reports or site visits that the external experts examined. Second, external experts identified five features common to all programs that were not apparent to internal experts:

(a) programs required candidates to reflect on and justify their professional decisions about instruction, pedagogy, philosophy, and assessment in ways that demonstrate how their knowledge is connected (i.e., candidates are taught decision making in highly effective and embedded contexts);
(b) students reportedly judged their experiences in their preparation programs as “real,” that is, they had learned to “talk” and “perform” like teachers, which made them feel confident in their level of preparation;
(c) distinctive programs developed dispositions toward service and professionalism in teacher candidates through conference presentations and involvement in professional associations, and these programs provided funding for these experiences;
(d) programs maintained an exemplary commitment of personal and professional support for candidates well after graduation; and
(e) programs were of manageable size, so faculty could cover both coursework and field obligations.

Thus, the data suggest that both internal and external expert judgments are valuable for advancing our knowledge base concerning distinguished literacy teacher education programs.

Another finding suggested by the data is that depth of information is being communicated to future teacher educators and that internal and external experts highly value this depth. Participants noted the importance of (a) preparing future teachers with strategies for teaching English language learners in all content areas and grade levels; (b) integrating strategies for using technology throughout the curriculum, instead of isolating technology within a specific technology class; and (c) providing more comprehensive literacy components within middle and secondary teacher education programs for all future content area teachers.

This study found that internal and external literacy teacher education experts agree on the most highly valued literacy programmatic factors. Thus, for program faculty who wish to begin improving their programs, the current research suggests considering how to (a) make field experiences more consistent and more closely tied to program philosophy, programmatic vision, and content presented in campus courses; (b) develop teacher candidates’ abilities to teach and assess children through a wide variety of instructional strategies and assessment instruments; and (c) integrate literacy and language strategies throughout the curriculum. Finally, this study found that although the constructivist orientation was the predominant philosophy shared by these universities, other orientations were also present within the respective programs.

Literacy teacher education experts know a great deal about how to prepare literacy teachers. The results of this study advance our body of knowledge by identifying the 14 statistically significant programmatic features commonly valued by experts who participated in, or judged, six literacy teacher education programs found worthy of IRA’s Certificate of Distinction. This study helps us to streamline our future work rather than to define our end point. These data allow future participants and researchers to enter the discussion and research exploration at a more advanced level of professional knowledge than would otherwise have been possible.

Limitations of the Study

This study describes programmatic features that were present in award-winning institutions. Prior to this study, highly valued programmatic features had not been identified in our body of literature. This study contributes to teacher educators’ understanding of the programmatic features that experts judge to be most successful in supporting changes in student achievement as well as growth in teacher candidates. However, the study has several limitations, which we detail below.

First, this study is limited in that it provided no data on the confluence of factors or the synergistic nature of programmatic features. The data do not suggest that if a literacy teacher education program implements one feature, another feature will automatically follow. Nor do the data indicate that the IRA Certificate of Distinction programs can be reduced to a checklist of features that would make all programs, or any program, exemplary.

Second, the study did not include K–12 school personnel or student teachers, so future studies need to triangulate the data with these groups. Including school personnel would provide a richer description of program features and would more clearly articulate the most important and distinguished program features.

Third, the rank-ordering process of the Delphi method was a limitation of this study. When ranking importance, participants often confuse the difficulty of achieving a certain feature with the actual effectiveness of that feature. For example, it may have been difficult for participants to articulate a theoretical base (ranked fourth); however, a consistent theoretical base may not be essential to a program’s effectiveness. For this reason, the rank-ordering process was a limitation for some of the questions posed by this study.

Fourth, the reliance on self-reported data and the small number of teacher education programs that provided information were limitations of the study. Greater representation is needed for the findings to apply to the large number of teacher education programs in the United States. Likewise, because the researchers taught at a university that received the IRA award, the research team and the internal and external experts may have been biased. As researchers, we relied on the trustworthiness of the key informants. We also sought multiple perspectives and member checking throughout the field testing of our questionnaire and the entire study.

The fifth limitation of this study is that we focused on 4-year teacher education programs, as did IRA and its certificate program. By focusing only on 4-year programs, we do not intend to suggest that these programs are the most deserving. Excellent fifth-year programs and nontraditional teacher education programs are also of high quality. For the purposes of this study, we studied only 4-year, traditional teacher education programs that had received the IRA Certificate of Distinction.

In closing, the preparation of literacy teachers does not occur in isolation. The programs in this study are influenced by ongoing shifts in technologies, expanding sociocultural realities, economies, national priorities, and shifting curricular and professional demands. Literacy experts also highly value conditions outside literacy teacher education programs. The rankings in this study are therefore limited in that they relate only to the programs that had been created and that existed in 2010. These were the only programs that had been judged to meet all criteria for the IRA Certificate of Distinction. As other programs and other criteria are created, new studies of how the factors in those programs rank in importance will be valuable. Despite these limitations, the data about highly valued literacy teacher education programmatic features presented in this study can inform our body of knowledge. They also constitute a starting point from which we can increase our understanding of the elements of literacy teacher education programs that many experts, both internal and external to a program, judge to be important.

Acknowledgements

The authors thank the following individuals for their valued assistance with this study: Gerry Coffman, Kimberley Cuero, Elizabeth Dobler, Carol Donovan, Joyce Fine, Madeleine Gregg, Tamara Jetton, Carolyn Kitchens, Lori Mann, Lynne Miller, Jean Morrow, Beverly Reitsman, Helen Robbins, Misty Sailors, Carol Schlichter, Cynthia Shanahan, Cecilia Silva, Bill Smith, John Somers, Nancy Steffel, and Ranae Stetson. The authors also express their gratitude and appreciation to Victoria Risko and Mark Conley. Dr. Risko reviewed and offered critiques that significantly improved the prepublication version of this article. Dr. Conley provided important suggestions throughout this research study that immensely increased the quality of this work.

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.