Abstract

The impetus for evidence-based practice (EBP) has grown out of widespread concern with the quality, effectiveness (including cost-effectiveness), and efficiency of medical care received by the public. Although initially focused on medicine, EBP principles have been adopted by many of the health care professions and are often represented in practice through the development and use of clinical practice guidelines (CPGs). Audiology has been working on incorporating EBP principles into its mandate for professional practice since the mid-1990s. Despite widespread efforts to implement EBP and guidelines into audiology practice, gaps still exist between the best evidence based on research and what is being done in clinical practice. A collaborative, dynamic, and iterative integrated knowledge translation (KT) framework, rather than a researcher-driven hierarchical approach to EBP and the development of CPGs, has been shown to reduce the knowledge-to-clinical action gaps. This article provides a brief overview of EBP and CPGs, including a discussion of the barriers to implementing CPGs into clinical practice. It then offers a discussion of how an integrated KT process combined with a community of practice (CoP) might facilitate the development and dissemination of evidence for clinical audiology practice. Finally, a project that uses the knowledge-to-action (KTA) framework for the development of outcome measures in pediatric audiology is introduced.

Evidence-Based Practice (EBP)

The origins of evidence-based practice (EBP) come largely from clinical medicine. The EBP paradigm provides techniques and procedures to critically examine the abundance of scientific evidence in order to assist clinical decision making and improve the quality, effectiveness, and efficiency of health services received by the public. The desired clinical outcome of EBP is an increase in the number of patients who get treatment of proven quality and effectiveness. The generally agreed-upon definition of EBP is that it is “the conscientious, explicit and judicious use of current best evidence in making decisions about the care of individual patients.” Evidence-based practice integrates “individual clinical experience with the best available external clinical evidence from systematic research” (Sackett, Rosenberg, Muir Gray, Haynes, & Richardson, 1996, p. 71). Incorporating evidence into practice is regarded as a process that begins with a search for research literature about how best to solve specific clinical problems and results in treatment decisions based on the best possible evidence (Stetler, 2001). As clinicians and their organizations learn more about EBP and the components of EBP, workshops, seminars, training kits, books, and educational opportunities have been developed to assist clinicians in developing the necessary EBP skill set that includes the ability to develop focused and appropriately structured clinical questions, search and locate high-quality evidence in the literature, evaluate the strength of the evidence, critically appraise the evidence, and implement evidence within the clinical context.

Evaluating the Strength of the Evidence: Hierarchy of Evidence

In order to provide professionals with a method for ranking the quality of research, hierarchies of evidence were introduced in the early 1990s. According to Rolfe and Gardner (2006), this notion of a tree-like hierarchy was evident in the seminal 1992 paper on EBP published by the evidence-based medicine working group (EBMWG), and although it has been modified somewhat since that time, it still exists today (EBMWG, 1992). Table 1 shows an applied hierarchy of evidence used in the profession of audiology (Cox, 2005). At the bottom of this hierarchy are expert opinion and case reports, which are often seen as unsystematic and subject to bias, thus making them the least “trustworthy” sources of information to use when making treatment decisions. Randomized controlled trials (RCTs) are viewed as the “gold standard” and are regarded as the most trustworthy sources of evidence because they are systematic and bias is greatly reduced; therefore, they receive the highest ranking in the hierarchy.

Level of Evidence Hierarchy for high-quality studies

It should be noted here that the requirement of randomized controlled trials (RCTs) as the highest level of evidence in pediatric audiology presents considerable challenges. The reported incidence of permanent childhood hearing impairment, 1 to 3 per 1,000 births (Hyde, 2005a), can make it difficult to obtain sample sizes large enough for an RCT to detect a clinically important effect. It may also mean that pediatric audiology RCTs would have to be multisite in nature, which could make them relatively expensive and time intensive. There is certainly a shortage of pediatric audiology research centers and researchers relative to their adult audiology counterparts. There are also unique ethical considerations when conducting research with very young children, including concerns about consent by proxy and financial incentives to parents for enrolling their children in research studies (Cohen, Uleryk, Jasuja, & Parkin, 2007).
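
A rough calculation illustrates the scale of the recruitment problem posed by an incidence of 1 to 3 per 1,000 births. The target of n = 100 enrolled children used here is a hypothetical figure chosen only for illustration; it is not taken from any cited study. The calculation also assumes, optimistically, that every affected child in the birth cohort is identified and enrolled.

```latex
% B = number of births needed to yield n children with permanent hearing impairment
% p = incidence (1 to 3 per 1,000 births; Hyde, 2005a); n = 100 is a hypothetical target
\[
B = \frac{n}{p}, \qquad
B_{p = 3/1000} = \frac{100}{0.003} \approx 33{,}300, \qquad
B_{p = 1/1000} = \frac{100}{0.001} = 100{,}000 .
\]
```

Under these assumptions, even a modest trial would draw on a birth cohort of tens of thousands of infants, and realistic enrollment rates would push the requirement higher. This helps explain why multisite designs may be needed.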

The historical purpose of EBP was to blend the clinical experience of health care professionals (their skill and understanding of individual patients’ needs) with their knowledge of the strengths, weaknesses, and applicability of the evidence and of the clinical significance of the treatment under consideration (Bess, 1995; Cox, 2005; Jerger, 2008; Palmer, 2007). The contemporary purpose of using an evidence-based approach to clinical practice is to close the gap between research and practice, reduce practice variation, and ultimately improve patient care based on informed decision making. Even the first step, locating and appraising the scientific literature, can be a formidable task. Catherine Palmer and colleagues at the University of Pittsburgh have provided audiologists with a helpful article to assist with evaluation of the research literature in audiology (Palmer et al., 2008). However, even with information to assist the process, most health care professionals may not have the time or the expertise to review the literature each time they have important clinical questions to be answered. Therefore, professionals and their organizations generally work together to provide scientific review of the relevant literature and produce succinct guidelines that clinicians can use as tools to inform evidence-based practice. These efforts are published as Clinical Practice Guidelines.

Clinical Practice Guidelines (CPGs)

In a 2007 article, George Weisz and colleagues describe the historical changes in health care that resulted in the development of CPGs (Weisz et al., 2007). These included (a) the dissatisfaction with training and credentials in medicine and the wide variability of competence among practitioners, (b) the need for protocols and guidelines for complex therapeutic technologies and procedures (e.g., cancer treatment and

The most frequently used definition of CPGs is that they are “systematically developed statements to assist practitioner and patient decisions about appropriate health care for specific clinical circumstances” (Field & Lohr, 1990, p. 38). The systematic development of an evidence-based CPG begins with a well-formulated question about a specific clinical condition. It is also important at the beginning of the process to define the relevant populations and clinical settings, potential interventions, and desired outcome measures. The next step is to conduct a comprehensive literature search and a systematic review of the literature. Ideally, this work is conducted by a broad and representative sample of individuals from within the profession who have the skills required to independently and critically appraise the literature and apply the explicit “grading” criteria (see Table 1) to document the findings and summarize the literature review (Dollaghan, 2007). When CPGs can be based on a large number of high-quality studies, the need for recommendations based on expert opinion is reduced. In many of the health sciences professions, including audiology, much of the scientific research literature has significant limitations and/or lacks sufficient relevance, limiting its use as high-quality evidence (Hyde, 2005b). This leaves a CPG development group to decide whether they are willing to make recommendations based on less than adequate evidence. Often the end result is a frustrated committee that continues to try to write the guideline based on consensus and expert opinion while trying to ensure that its members do not introduce their own bias. The other result may be the production of a guideline with the neutral conclusion that there is insufficient evidence to make a recommendation (Hyde, 2005b; Kryworuchko, Stacey, Bai, & Graham, 2009; Weisz et al., 2007; Woolf, 2000). Knowing that the practice of guideline production is not perfect, a guideline committee works to draft a document that reflects the strength of the evidence and is offered as a means of improving patient care and outcomes while providing a strategy for more efficient use of resources (Graham et al., 2003).

Evidence-Based Practice and Clinical Practice Guidelines in Audiology

Audiology, like most of the health sciences professions, has been working on incorporating evidence-based practice principles into its mandate for professional practice since the mid-1990s (Bess, 1995; Wolf, 1999). A review of professional activity in speech-language pathology and audiology presented by Lass and Pannbacker (2008) shows the commitment of the American Speech-Language-Hearing Association (ASHA) and the Canadian Association of Speech-Language Pathologists and Audiologists (CASLPA) to promoting the application of evidence-based principles in clinical practice, classrooms, and research settings. Implementation of EBP is part of CASLPA’s 2008 vision, mission, and values statement and is included as a “core value” by the American Academy of Audiology (AAA, 2003, n.d.; CASLPA, n.d.). AAA defines EBP as “To practice according to best clinical practices for making decisions about the diagnosis, treatment, and management of persons with hearing and balance disorders, based on the integration of individual clinical expertise and best available research evidence” (AAA, n.d.). The publication of

In audiology, clinical uptake of evidence-based procedures can be relatively rapid. For example, when research indicated that the use of a higher probe-tone frequency (1000 Hz) provided a more valid indication of middle-ear function for infants and young children (Keefe, Bulen, Arehart, & Burns, 1993), pediatric audiologists in clinical practice were relatively quick to implement this into their protocols, even though lower frequency probe-tone (220 to 226 Hz) measures had been the standard for many years. On the other hand, there is still a lack of adherence to best practice recommendations for the use of other important clinical measures. For example, real-ear probe-microphone measures for the fitting and verification of hearing aids have been an important component of best practice guidelines for adults and children for many years (AAA, 2003; College of Audiologists and Speech-Language Pathologists of Ontario [CASLPO], 2000, 2002; Joint Committee on Infant Hearing [JCIH], 2007; Joint Committee on Infant Hearing, American Academy of Audiology, American Academy of Pediatrics, American Speech-Language-Hearing Association, & Directors of Speech and Hearing Programs in State Health and Welfare Agencies, 2000; Modernising Children’s Hearing Aid Services [MCHAS], 2007; Valente et al., 2006). In clinical practice, however, studies have shown that 59% to 75% of adult hearing aid fittings are

Criticisms and Challenges of Evidence-based Practice

Most professionals support the fundamental reasoning behind EBP. However, since the early 2000s, scholars have started to voice criticism of EBP. In a recent article, several authors lament that EBP reduces health care to a “routinised, quantifiable practice driven by utility, best practices and reductive performance indicators” (Murray, Holmes, & Rail, 2008, p. 276).

Some of the most common criticisms of evidence-based practice include (a) the current definitions of “gold standard” research are restrictive; (b) the use of expert opinion is undervalued; (c) the shortage of coherent, consistent scientific evidence limits the ability to conduct EBP reviews; (d) there are difficulties in applying evidence in the care of individual patients; (e) it denigrates the value of clinician and patient experience; (f) time constraints, skill development, and resource limitations restrict its application; and (g) there is a lack of evidence that evidence-based medicine “works” (Cohen, Stavri, & Hersh, 2004; Mullen & Steiner, 2004; Murray et al., 2008; Rolfe & Gardner, 2006; Straus & McAlister, 2000).

Alterations in the View of What Constitutes “Gold Standard” Status in Evidence Hierarchies

A primary trait of the EBP hierarchy of evidence is the ranking of randomized controlled trials (RCTs) as the “gold standard.” Despite initial widespread promotion of this grading system, recent publications have suggested alternative methods (see Rolfe & Gardner, 2006, for more detail). Some members of teams who promoted a hierarchy of evidence with systematic reviews of RCTs as the gold standard have recently rescinded their belief that this is appropriate (Thompson, 2002). Some experts have moderated their views by advocating different gold standards or different hierarchies for different questions (DiCenso, Cullum, & Ciliska, 1998; Evans, 2003; Logan, Hickman, Harris, & Heriza, 2008). The Joanna Briggs Institute, an international not-for-profit research and development organization specializing in evidence-based resources for health care professionals, has twice modified its Level 4 evidence criteria, once in 1999 and again in 2004 (Rolfe & Gardner, 2006). The changes had to do with accepting and/or denying clinical experience and expertise as forms of evidence. An important point in this discussion is that any changes in hierarchy criteria may impact the ongoing validity of previously developed evidence reviews and resulting CPGs (Rolfe & Gardner, 2006).

Expert Opinion Versus Evidence

Debate continues across the health sciences professions on the use of scientific knowledge versus clinical expertise in EBP (Fago, 2009; Wolf, 2009; Zeldow, 2009). The proponents of EBP would argue that the current definition of EBP includes clinical expertise and patient values. They would also argue that clinicians can, at times, choose to override the scientific evidence and still be engaged in EBP. However, it is important to note that relying solely on clinical judgment and expertise has known problems. Opinion can be affected by such factors as past and/or personal experience, belief in and expectation for success, selective use of evidence, predetermined bias, motivation, distortion of memory, persistence in belief that there is only one best way to do something, professional norms, business pressures, and other factors (Ismail & Bader, 2004; Kane, 1995; Rinchuse, Sweitzer, Rinchuse, & Rinchuse, 2004; Woolf, 2000). For these reasons, an approach to integrating and balancing information from research, from clinical experience, and from individual patient needs remains an important goal. The following section will discuss the specific difficulties encountered when trying to integrate these three sources of information.

Difficulties in Applying Evidence in the Care of Individual Patients

A major criticism of EBP is that it provides clinicians with study results derived from trends in group data, based on the average behavior of “acceptably similar” groups of participants (Cohen et al., 2004; Mullen & Steiner, 2004; Murray et al., 2008; Straus & McAlister, 2000). This ignores the fact that there is always group and individual variability. If a clinician blindly applies a “proven” procedure, assuming the individual will benefit, there could be a significant practice error. For example, infants are not average adults. Until the 1990s, the predicted output performance of hearing aids was based on measurements of the acoustic characteristics of average adult ears. We know that an infant’s ear is much smaller than an adult’s ear. The output of a hearing aid fitted to an infant’s ear using these “average” adult transformation values could be 30 decibels greater at some frequencies than the same hearing aid on an adult’s ear (Seewald, Moodie, Scollie, & Bagatto, 2005; Seewald & Scollie, 1999). Speech sounds and loud environmental sounds could be overamplified, potentially causing discomfort and increased risk of additional hearing loss. Unfortunately, the infant cannot tell anyone the hearing aid is too loud because of their lack of communication skills. The criticism that EBP treats individuals like “the masses” is valid, and it can be addressed in numerous ways.
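
To put the 30-decibel difference cited above in perspective, it can be converted to a ratio using the standard definition of sound pressure level. This is a general acoustics identity offered as illustration, not a calculation taken from the cited studies; the symbols p_infant and p_adult are hypothetical labels for the sound pressure produced by the same hearing aid in the two ears.

```latex
% Delta L = level difference in dB; p_infant, p_adult = sound pressures (illustrative labels)
\[
\Delta L = 20 \log_{10}\!\left(\frac{p_{\mathrm{infant}}}{p_{\mathrm{adult}}}\right) = 30\ \mathrm{dB}
\;\Longrightarrow\;
\frac{p_{\mathrm{infant}}}{p_{\mathrm{adult}}} = 10^{30/20} \approx 31.6 .
\]
```

In other words, a 30 dB increase corresponds to roughly a 32-fold increase in sound pressure, or a 1,000-fold increase in sound intensity.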

Denigrates the Value of Clinician and Patient Experience

Evidence-based practice can be seen as both “self-serving and dangerously exclusionary in its epistemological methodologies” (Murray et al., 2008, p. 275). By relying primarily on the “methodological fundamentalism” associated with RCTs and quantitative evidence, other forms of knowledge, including clinician and patient experiences, are denigrated (House, 2003; Murray et al., 2008). Critics of the current state of EBP emphasize that there are other sources and types of clinically relevant and important evidence and additional ways to categorize quality (Cohen et al., 2004; Upshur, VanDenKerkhof, & Goel, 2001). They also caution that by depreciating the value of clinician and patient experience we are not fully “treating” our patients with the

Time Constraints, Skill Development, and Resource Limitations

If professionals are going to implement EBP procedures into their work life, they must develop the necessary skills to find and critically appraise the evidence. This takes time and resource allocation at not only the personal level but also the organizational level. Even if the evidence is gathered and organized for clinicians (as it often is in CPGs), the implementation of evidence into practice often requires redefining a skill set or learning a new one. This also takes time because it is easier to habitually continue to do what you know how to do than it is to implement something new into your repertoire (Rochette, Korner-Bitensky, & Thomas, 2009).

An examination of health sciences research literature on barriers to implementing evidence into clinical practice reveals that “lack of time” is a major limitation cited by most clinicians across professions (Iles & Davidson, 2006; Maher, Sherrington, Elkins, Herbert, & Moseley, 2004; McCleary & Brown, 2003; McCluskley, 2003; Mullins, 2005; Zipoli & Kennedy, 2005). The same authors note “lack of skill or knowledge” about implementing EBP or reviewing research literature as another limitation across the health science professions. The virtual explosion of articles and books written about EBP and EBP procedures for specific professions also can make it overwhelming for the clinician who is interested in studying the topic (Rochette et al., 2009).

Lack of Evidence That Evidence-Based Medicine “Works”

Critics of EBP point out that there is little research to date that shows that implementing EBP improves the quality of health care. Certainly, they point out, there are no RCTs or compelling evidence that have validated EBP as an intervention (Cohen et al., 2004; Coomarasamy & Khan, 2004; Murray et al., 2008; Parkes, Hyde, Deeks, & Milne, 2001).

Limitations of CPGs

Given shortcomings in EBP, it is not surprising that there are limitations associated with the development and use of CPGs. The most fundamental limitation of CPGs is that they often do not change practice behavior. Analyses of the barriers to practice change indicate that obstacles to change arise at many different levels, including the level of (a) the guideline, (b) the individual practitioner, (c) the organization, (d) the wider practice environment, and (e) the patient (Greenhalgh, Robert, Macfarlane, Bate, & Kyriakidou, 2004; Grol, Bosch, Hulscher, Eccles, & Wensing, 2007; Grol & Grimshaw, 2003; Légaré, 2009; Rycroft-Malone, 2004). A discussion of the first four levels listed above is provided in the following sections and a summary is provided in Appendix A. A discussion of patient-related behavior that affects the use of evidence in practice will not be provided in this manuscript as it is not the focus of this current work.

Although the following section discusses the characteristics of guidelines, practitioners, organization, and practice environments as obstacles to implementation of evidence, it should be noted that many of these same characteristics could be facilitators to implementation of evidence in practice. Facilitators are factors that promote or assist implementation of evidence-based practice (Légaré, 2009). For example, lack of time could be a considerable barrier, but having enough time would facilitate the transfer of evidence into practice. Similarly, clinician attitude to implementation of guidelines into clinical practice could be a barrier or facilitator depending on whether the attitude was conducive to change or not.

Characteristics of Guidelines That Affect Implementation in Clinical Practice

The Appraisal of Guidelines Research and Evaluation (AGREE) Instrument has outlined the criteria that CPGs should meet in order to provide practitioners with comprehensive and valid practice recommendations (AGREE Collaboration, 2001; The AGREE Collaboration Writing Group et al., 2003). AGREE recommendations suggest that explicit information related to the following domains should be clearly presented as part of guidelines: scope and purpose, stakeholder involvement, rigor of development (including quality of evidence informing recommendations), clarity and presentation, applicability, and editorial independence (AGREE Collaboration, 2001; The AGREE Collaboration Writing Group et al., 2003). Research that appraises guidelines in the health sciences professions has shown that many guidelines do not meet the AGREE criteria for high quality, and this may have an impact on their use (Bhattacharyya, Reeves, & Zwarenstein, 2009; Veldhuijzen, Ram, van der Weijden, Wassink, & van der Vleuten, 2007). In a recent review of guideline development, dissemination, and evaluation in Canada, it was reported that most guidelines were English-only publications. In addition, 6% of the written guidelines submitted to the Canadian Medical Association Infobase did not indicate a review of the scientific literature, and less than half of the guidelines graded the quality of the evidence (Kryworuchko et al., 2009).

Table 2 provides an overview of guideline characteristics that might influence their adoption in clinical practice (Grol et al., 2007).

Characteristics of innovations/guidelines that might promote or hinder their implementation.

Source: From “Planning and studying improvement in patient care: The use of theoretical perspectives.” by R. P. T. M. Grol, M. C. Bosch, M. E. J. L. Hulscher, M. P. Eccles, & M. Wensing, 2007,

Characteristics of the Practitioner That Affect Implementation of Guidelines in Clinical Practice

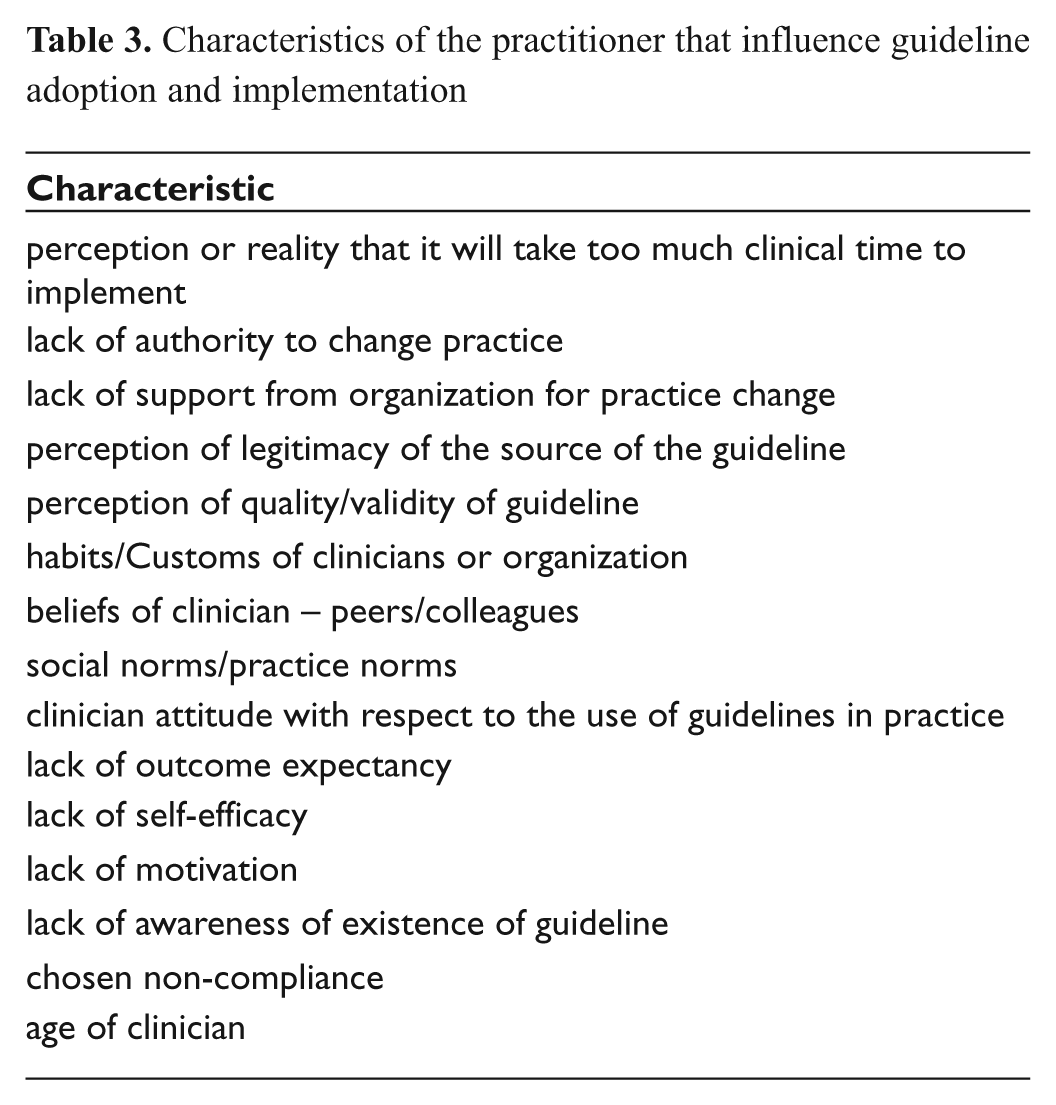

There have been numerous studies examining the obstacles to EBP by individual practitioners in health care (Bhattacharyya et al., 2009; Carlson & Plonczynski, 2008; Damschroder et al., 2009; Davis & Taylor-Vaisey, 1997; Estabrooks, Floyd, Scott-Findlay, O’Leary, & Gushta, 2003; Green, 2001; Iles & Davidson, 2006; Ismail & Bader, 2004; Kryworuchko et al., 2009; Légaré, 2009; Michael & John, 2003; Mullins, 2005; Pagoto et al., 2007; Veldhuijzen et al., 2007; Zipoli & Kennedy, 2005). Lack of time is ranked as the greatest obstacle to implementing evidence and/or CPGs into clinical practice. Table 3 provides a list of other factors cited in the literature that hinder practitioner-level implementation of evidence and/or guidelines in clinical practice.

Characteristics of the practitioner that influence guideline adoption and implementation

Characteristics of the Context in Which the Practitioner Works That Affect Implementation of Guidelines in Clinical Practice

Context can be defined as the environment or setting in which people receive services or the clinical setting in which proposed evidence-based uptake is to take place (Rycroft-Malone, 2004). The context is dynamic and interacts with the individuals and the systems in which they work (Estabrooks, Squires, Cummings, Birdsell, & Norton, 2009; Masso & McCarthy, 2009; McCormack, Kitson, Harvey, Rycroft-Malone, Titchen, & Seers, 2002; Rycroft-Malone, 2004). The contexts in which practitioners work can have a significant impact on their ability to change practice behavior, primarily because of the focus on standard operating procedures and behavioral norms (Rosenheck, 2001). Leadership within the practice context is imperative for change to take place (Aarons, 2006; Cummings, Estabrooks, Midodzi, Wallin, & Hayduk, 2007; Estabrooks et al., 2009; Masso & McCarthy, 2009). Table 4 provides a list of characteristics of the context that influence guideline adoption and implementation (Aarons, 2006; Cummings et al., 2007; Damschroder et al., 2009; Davis & Taylor-Vaisey, 1997; Estabrooks et al., 2009; Glasgow & Emmons, 2007; Greenhalgh et al., 2004; Masso & McCarthy, 2009; McCormack et al., 2002; Rosenheck, 2001; Rycroft-Malone, 2004).

Characteristics of the context in which the practitioner works that influence guideline adoption and implementation

Characteristics of the Broader Health Care System That Affect Implementation of Guidelines in Clinical Practice

As shown in Table 5, the broader health care system is also a factor in guideline adoption and implementation (Bhattacharyya et al., 2009; Davis & Taylor-Vaisey, 1997; Grol et al., 2007; Grol & Wensing, 2004).

Characteristics of the broader health care system that influence guideline adoption and implementation

Putting Evidence in Its Place: Evidence-Based Practice and Knowledge Translation (KT)

Tables 2 to 5 make it clear that implementation or uptake of new knowledge into changes in clinical practice is not generally achieved simply by creating the knowledge, distilling it into useable CPG formats, and disseminating it to clinicians, administrators, and/or policy makers. In an effort to close the knowledge-to-clinical action gap, many of the health sciences professions are taking a knowledge translation (KT) approach to the development and dissemination of evidence for clinical practice.

The Canadian Institutes of Health Research (CIHR) defines KT as a “dynamic and iterative process that includes synthesis, dissemination, exchange and ethically-sound application of knowledge to improve the health of Canadians, provide more effective health services and products and strengthen the health care system.” The definition is combined with a description of the KT process. “KT takes place within a complex system of interactions between researchers and knowledge users which may vary in intensity, complexity and level of engagement depending on the nature of the research and the findings as well as the needs of the particular knowledge user” (CIHR, n.d.). This definition has been adopted by the United States National Center for Dissemination of Disability Research and the World Health Organization (WHO; Straus, 2009).

The Knowledge-to-Action (KTA) Framework: A Model for Knowledge Translation (KT) in Audiology

After reviewing 31 different conceptual knowledge translation (KT) frameworks, Graham and colleagues developed a two-category KT framework that has been widely adopted by researchers and may be useful for consideration by the profession of audiology. They divide KT into two categories: (1) end-of-grant KT, and (2) integrated KT (Graham et al., 2006; Graham & Tetroe, 2007). End-of-grant KT includes research dissemination, communication, summary briefings to stakeholders, educational sessions with practitioners, and publications in peer-reviewed journals. Moving a KT product from the research laboratory into industry is also considered a form of end-of-grant KT. Integrated KT represents a more modern way of conducting research studies and involves

An integrated KT method that may be applied to evidence-based audiology research is the KTA Process (Graham et al., 2006; Harrison et al., 2010; Straus et al., 2009). The KTA process is illustrated in Figure 1. There are

The knowledge-to-action process. Adapted from “Lost in knowledge translation: Time for a map?” by I. D. Graham, J. Logan, M. B. Harrison, S. E. Straus, J. Tetroe, W. Caswell, and N. Robinson.

The

The action cycle includes the following:

Adaptation of the evidence/knowledge/research to the local context;

Assessment of the barriers to using the knowledge;

Selecting, tailoring, and implementing interventions to promote the use of the knowledge within clinical practice settings;

Monitoring of knowledge use;

Evaluation of clinical uptake outcomes of using the knowledge;

Methods to sustain ongoing knowledge use.

The development of the application of knowledge cycle in this model has taken into consideration many of the criticisms related to EBP reported in the literature (Cohen et al., 2004; Graham et al., 2006; Mullen & Steiner, 2004; Murray et al., 2008; Straus & McAlister, 2000; Upshur et al., 2001). By actively collaborating with the end users of the knowledge, the model places value on their experience and opinion and considers important factors related to time, skills, attitude, resources, and organizational practice that impact the use of knowledge in clinical practice.

Adaptation of the Evidence/Knowledge/Research to the Local Context

Audiologists work in a variety of practice contexts. Many audiologists work in private practice, others work in hospital or rehabilitation settings, and still others work in public health, industry, universities, schools, and other health care settings. These practice contexts may differ in their workplace structure, organizational agenda, and/or leadership. Similar to other knowledge translation frameworks such as the Promoting Action on Research Implementation in Health Services (PARiHS) framework, the KTA framework postulates that the implementation of evidence will be most successful when necessary adaptations appropriate for the clinical context have been considered (Graham et al., 2006; Graham & Tetroe, 2007; Rycroft-Malone et al., 2002; Straus et al., 2009).

Assessment of the Barriers to Using the Knowledge

According to much of the recently published implementation research, implementation interventions are likely to be more effective if they target causal determinants of behavior (Michie, Johnston, Francis, Hardeman, & Eccles, 2008; Michie, van Stralen, & West, 2011). An audiologist may not implement or adhere to a CPG for fitting hearing aids to adults, for example, if she or he perceives there is a lack of beneficial outcome in doing so. Similarly, an audiologist who lacks confidence in performing a real-ear probe-microphone measurement will likely be less inclined to implement the measurement in practice. An assessment of barriers to using the evidence in clinical practice provides an opportunity to determine how to overcome those barriers and facilitate behavior change.

Selecting, Tailoring, and Implementing Interventions to Promote the Use of the Knowledge Within Clinical Practice Settings

If one considers the context in which the audiologist works and the barriers to practice change, then implementation interventions could be developed to promote the use of the knowledge in the practice setting. For example, if it has been identified that audiologists lack confidence in accurately performing real-ear measurements of hearing aid performance then tailored, hands-on, educational opportunities might be considered to reduce this confidence barrier.

Monitoring of Knowledge Use

After an implementation intervention has occurred, it is important to determine how and to what extent the knowledge has been translated into clinical use (Straus et al., 2009). In audiology, for example, monitoring the use of knowledge could entail measuring a change in knowledge, understanding, or attitude toward the performance of real-ear measurements. We could also measure how often real-ear measurements were made after our targeted hands-on intervention. Monitoring of knowledge use could also alter barriers at administration levels. For example, if evidence-based research shows that, compared with not performing the measurement, the performance of real-ear measurements provides patient benefit, reduces hearing aid returns, and reduces the time taken to achieve a satisfactory fitting, we could use this information to attempt to persuade a hospital administrator to provide appropriate appointment time for the audiologist to conduct the measurements.

Evaluation of Clinical Uptake Outcomes of Using the Knowledge

The focus of research, and subsequently of CPGs, is on achieving good outcomes for the individuals in our care. Therefore, we often measure the effectiveness of our treatment in terms of patient-related outcome measures. When implementing evidence into clinical practice it is also important to consider implementation outcomes to ensure that the CPG is being implemented (adhered to) as it was intended. It is often the case that when a program or practice is not achieving desirable patient-related outcomes, more effort goes into performing more and better research on the program or practice. More research on the program, protocol, or CPG to achieve positive outcomes will not help implementation (Fixsen, Naoom, Blase, Friedman, & Wallace, 2005). Fixsen et al. caution that errors may be made leading to the conclusion that a treatment intervention is ineffective, when in fact the issue is that the program or protocol for treatment has not been implemented appropriately.

Methods to Sustain Ongoing Knowledge Use

Sustainability can be defined as “the degree to which an innovation continues to be used after initial efforts to secure adoption is completed” (Rogers, 2005, p. 429). One challenge to sustainability of knowledge use in audiology is within the organizational structure. If organizational structures do not intrinsically change to support the new evidence being put into practice, then audiologists will have a tendency to revert back to their former ways of doing things. Flexible knowledge sustainability strategies need to be considered during the development stages of CPGs (Davies & Edwards, 2009).

Why Is Knowledge Translation (KT) Important to Audiology?

Although the profession of audiology works to develop best practice guidelines and protocols based on the best available evidence, there is often an apparent failure to use this research evidence in clinical practice and/or to use it to inform decisions made by managers and/or policy makers (Kirkwood, 2010; Lindley, 2006; Mueller, 2003; Strom, 2006, 2009). The determinants of the use or nonuse of knowledge in clinical practice are tabulated in Tables 2 to 5. End-of-grant and integrated KT approaches (the KTA framework) could be used in audiology to ensure that factors influencing the uptake of evidence in clinical practice, including characteristics of the guidelines, the individual practitioner, and the contexts/settings in which the knowledge is used, are better understood and addressed. There are some potential limitations in using an integrated knowledge translation approach to knowledge development. These include the potential for increased cost and time for guideline development using this iterative approach and difficulty obtaining release-from-practice time for audiologists to participate in the guideline development process without financial reimbursement to the employer. It may also be difficult to reach consensus between clinicians and researchers on what constitutes an acceptable modification to a guideline. More research on these aspects of active collaboration between researchers and end users is needed to address these important issues. One positive aspect of research work to date is that government-level funding agencies such as the Canadian Institutes of Health Research (CIHR) and the National Institutes of Health (NIH) are providing funding for knowledge translation projects that actively engage end users (patients, clinicians, and/or policy makers) of research.

Communities of Practice in Audiology: Facilitators of Knowledge Into Action

An examination of the factors influencing evidence uptake that appear in Tables 2 through 5 and are summarized in Appendix A provides us with a better understanding of why there is a KTA gap. The factors also reveal the complex processes involved in diffusion of knowledge and behavior change. The complexity may be reduced with early and ongoing involvement of researchers, practitioners, policy makers, and patients (Innvaer, Vist, Trommald, & Oxman, 2002; Landry, Amara, & Lamari, 2001; Lomas, 2000; McWilliam et al., 2009; Roux, Rogers, Biggs, Ashton, & Sergeant, 2006; Straus, 2009). The translation of knowledge or evidence into clinical practice is an active process. In the KTA model the process is “iterative, dynamic, complex, concerning both knowledge creation and application (action cycle) with fluid boundaries between creation and action components” (Graham et al., 2006; Straus, 2009, p. 6).

Both the creation of knowledge and application of knowledge in practice are social processes, and as such, communities of practice have the potential to reduce the KTA gap, assist with knowledge diffusion, and be facilitators of practice change. One of the primary advantages in terms of diffusion of knowledge and clinical practice behavior change is that by collaborating with practitioners we have individuals who will know how to “grease the implementation wheels and provide a road map to the potential mine fields inherent in attempting to introduce change in any organization” (Graham & Tetroe, 2009).

Communities of practice (CoPs) are composed of individuals who share a common concern or enthusiasm about a topic or problem and who deepen their knowledge and expertise about the area by frequently interacting with one another (Barwick et al., 2005; Li et al., 2009; Wenger, McDermott, & Snyder, 2002). Initially described in 1991, the term CoP has evolved to be defined as a group of people with a unique combination of three structural concepts: the

Value of a Community of Practice for Pediatric Audiology

Approximately 30% of children in North America who are fitted with hearing aids are receiving care that is inconsistent with evidence-based CPGs (Bess, 2000; Lindley, 2006). In a 2003 paper, it was noted, “There is a current trend to develop test protocols that are ‘evidence based’. . . . But, before we develop any new fitting guidelines, maybe we should first try to understand why there is so little adherence to the ones we already have” (Mueller, 2003, p. 26). In the area of pediatric audiology, every effort is made to ensure that CPGs are developed using systematic reviews and the best available evidence. A review of the literature indicates that to date no systematic appraisal of pediatric amplification CPGs or their implementation has been conducted. Therefore, it is difficult to say whether it is the guideline or implementation factors that account for the fact that these children are not receiving care based on current CPGs. Appendix A provides us with information on why we may have adherence issues. Utilizing a collaborative and integrated KT approach to the development and the subsequent implementation of knowledge into clinical practice may provide insight into how to reduce the barriers and facilitate the movement of evidence into practice.

Brown and Duguid (2001) state that “knowledge runs on rails led by practice” (p. 204). Developing a CoP in pediatric audiology could facilitate the knowledge creation cycle in an integrated KT approach by utilizing an engaged community with a shared understanding of the knowledge needed and who would have the ability to assist in tailoring or customizing the knowledge for better use among intended users (Fung-Kee-Fung et al., 2009; Gajda & Koliba, 2007, 2008; Koliba & Gajda, 2009; Salisbury, 2008a, 2008b; Stahl, 2000). CoPs provide an opportunity for the creation of knowledge and knowledge products to include the tacit knowledge that experienced practitioners have accumulated through years of practice (Allee, 2000; Brown & Duguid, 2001; McWilliam et al., 2009; Serrat, 2008). This tacit knowledge makes it possible for them to be advocates and facilitators in the development of resources that reflect accumulated ways of knowing and experiences that will meet the cognitive needs of novice practitioners and the experiential needs of expert practitioners (Salisbury, 2008a, 2008b; Stahl, 2000).

Examples of Communities of Practice in Health Care

The next section of this article will provide a description of two successful Canadian-based CoP programs in health care. The first, Cancer Care Ontario/Program in Evidence-Based Care (Browman et al., 1995; Browman, Makarski, Robinson, & Brouwers, 2005; Evans, Graham, Cameron, Mackay, & Brouwers, 2006; Fung-Kee-Fung et al., 2009; Stern et al., 2007), is of interest because it focuses on the use of practitioners during the guideline development process. The second, Ontario Children’s Mental Health Child and Adolescent Functional Assessment Scale (CAFAS; Barwick, Boydell, & Omrin, 2002; Barwick et al., 2005; Barwick, Peters, & Boydell, 2009), is of interest because it relates to work in the pediatric population.

Clinical Practice Guideline Development: Guiding Practice of Cancer Care in Ontario

Since 1995, the development and maintenance of CPGs guiding the practice of cancer care in Ontario has been a joint venture between Cancer Care Ontario (CCO) and the Program in Evidence-Based Care (PEBC) at McMaster University. The development of CPGs follows a cycle of development described by Browman et al. The guidelines are initially developed by guideline panels, working groups, and medical experts. The report created includes the guideline questions, the literature search strategy, a systematic review of the literature, the consensus of the panel on the interpretation of the evidence, and draft guideline recommendations. This document and a standardized feedback survey are then sent to a wide group of physicians who might find the guideline relevant (Brouwers, Graham, Hanna, Cameron, & Browman, 2004). The physicians are asked to respond to the survey questions and to provide comments, suggestions, and opinion on how the guideline might be improved so that implementation into clinical practice will be facilitated. The practitioners who review the CPGs developed by the Program in Evidence-Based Care (PEBC) panel can be defined as a community of practice (CoP). Evans et al. (2006), Browman et al. (2005), and Browman and Brouwers (2009) describe some of the benefits experienced by CCO and the PEBC by including this CoP feedback into the CPG cycle:

Feedback improved the quality of the documents and, on occasion, led to substantive changes to the CPG;

Because feedback on the CPG was requested, physicians had to review the document and, therefore, were made aware of and educated about the guideline;

The review stimulated learning within the CoP and increased dialogue on important topics;

Despite rigorous adherence to the development of guidelines by experts, practitioner-suggested improvements/changes were incorporated into 44% of CPGs;

Sending the guideline to practitioners for comment/review provided a “heads-up” to practitioners that a guideline was about to be finalized and released.

A recent publication (Stern et al., 2007) described the results of using oncologists “in-the-field” to facilitate CPG development and adoption of guidelines into practice. Operative mortality for pancreatic cancer was reduced and lymph node harvesting in colorectal cancer improved. Significant improvements were made in colorectal and pancreatic cancer indicators: mean 30-day operative mortality fell from 10.2% in 1988-1996 to 4.5% in 2002-2004, and compliance with treatment guidelines increased from 27% in 1997-2000 to 69% in 2005. Therefore, it was concluded that active participation of practitioners and a CoP approach were essential components to changing practice and improving quality care in surgical oncology practices in Ontario.

Ontario Children’s Mental Health Child and Adolescent Functional Assessment Scale (CAFAS) Initiative (Barwick et al., 2002, 2005, 2009)

Since 2000, 117 Child and Mental Health Organizations in Ontario have been mandated to adopt an electronic version of a standardized outcome measurement tool called the Child and Adolescent Functional Assessment Scale (CAFAS; Hodges, 2003). For this group of first users of the CAFAS, Barwick et al. (2002) used a KTA approach to develop software training, web, wiki, email, and telephone support systems. They also provided face-to-face group and individual consultation and training services to facilitate implementation of the CAFAS. Recently another group of new CAFAS users was mandated to adopt the outcome tool. Barwick and colleagues (2009) used this opportunity to study the use of a community of practice (CoP) approach to implementation versus a practice as usual (PaU) approach. Both the CoP and PaU groups received standard 2-day training on the use of the CAFAS in clinical practice. The CoP approach included 6 meetings over 11 months where additional support/training was provided. The research questions focused on whether, relative to the PaU group, a CoP model would facilitate practice change and increase use of the scale, knowledge of the scale, and satisfaction with the support and materials provided for its implementation. Although some methodological concerns have been raised about this study (Archambault et al., 2009), results generally suggest that the use of CoPs might facilitate implementation of evidence into practice. Practitioners in the CoP group demonstrated greater use of the tool in clinical practice. They also demonstrated better knowledge of the tool at the end of 1 year and more satisfaction with the implementation supports than did the PaU group.

Using an Integrated Knowledge Translation Process for the Development of Outcome Measures in Pediatric Audiology

In 2008, members of the Child Amplification Laboratory (CAL) at the National Centre for Audiology (NCA), University of Western Ontario (UWO), met with a purposely selected group of pediatric audiologists from across Canada. The overall aims for this meeting were (a) to discuss potential interest in establishing a CoP in pediatric audiology across Canada with the aim of reducing the KTA gap for children receiving audiological services, and (b) to define areas of practice where these pediatric audiologists felt that there was a lack of knowledge in the treatment for children receiving audiological services. During the one-and-a-half-day meeting, the pediatric audiologists discussed the challenges to implementing evidence into clinical practice. The stated factors affecting the use of evidence in their practices, regardless of practice setting, were similar to those outlined earlier in this article in Tables 2 to 5. The audiologists reached consensus that the area in which they would most like more knowledge and evidence for use in clinical practice was outcome measures to evaluate the auditory development of children with permanent childhood hearing impairment (PCHI) aged birth to 6 years who may or may not wear hearing aids. They also agreed that they would like to work as a country-wide CoP and in collaboration with researchers at the NCA to develop this knowledge. In 2009, researchers in the CAL began work to develop a guideline that focused on providing pediatric audiologists with appropriate measurement tools and protocols that could be used to assess aided auditory-related outcomes for children aged birth to 6 years. The aim was to actively collaborate with the pediatric CoP using an integrated KT approach to develop this knowledge for use in clinical practice.
The results of this knowledge development will be discussed in the subsequent articles in this issue, with Bagatto and colleagues (2011a) providing an overview of the considerations and issues associated with outcome evaluation tools and a summary of the literature associated with the selection of outcome measures for use when examining the auditory development of children with PCHI aged birth to 6 years. Moodie and colleagues (2011) provide the results of the individual assessment of each of the outcome evaluation tools by individual audiologists within the country-wide pediatric CoP. Bagatto and colleagues (2011b) provide information about the final guideline called the University of Western Ontario Pediatric Audiological Monitoring Protocol (UWO PedAMP) version 1.0 and provide data from a clinical sample of children with permanent childhood hearing impairment who wear hearing aids.

List of Abbreviations

AAA: American Academy of Audiology

AGREE: Appraisal of Guidelines Research and Evaluation Instrument

ASHA: American Speech-Language-Hearing Association

CAFAS: The Child and Adolescent Functional Assessment Scale

CAL: Child Amplification Laboratory

CASLPA: Canadian Association of Speech-Language Pathologists and Audiologists

CASLPO: College of Audiologists and Speech-Language Pathologists of Ontario

CCO: Cancer Care Ontario

CIHR: Canadian Institutes of Health Research

CoP: Community of Practice

CPG: clinical practice guideline

EBM: evidence-based medicine

EBMWG: Evidence-Based Medicine Working Group

EBP: evidence-based practice

KT: knowledge translation

KTA: knowledge-to-action

MCHAS: Modernising Children’s Hearing Aid Services

NCA: National Centre for Audiology

PARiHS: Promoting Action on Research Implementation in Health Services

PaU: practice as usual

PCHI: permanent childhood hearing impairment

PEBC: Program in Evidence-Based Care

RCTs: randomized controlled trials

UWO: University of Western Ontario

UWO PedAMP: University of Western Ontario Pediatric Audiological Monitoring Protocol

WHO: World Health Organization

Appendix A

Characteristics That Influence the Use of Knowledge and Evidence in Clinical Practice

Characteristics of the guideline, practitioner, context, and broader health system that influence adoption and implementation

| Guideline | Practitioner | Context | Broader health system |
|---|---|---|---|
| Relative advantage or utility | Time | Workplace structure | Nature of financial arrangements; reimbursement |
| Compatibility | Lack of authority to change practice | Organizational agenda | Support for change |
| Complexity | Lack of support from organization for practice change | Available resources | Regulation of health professionals |
| Costs | Perception of legitimacy of the source of guideline | Staff capacity | Financial stability |
| Flexibility/adaptability | Perception of quality/validity of guideline | Staff “turnover” | Pressure from other health professions or public |
| Involvement | Habits/customs | Organization of care processes | |
| Divisibility | Beliefs of peers | Efficiency of the system | |
| Trialability/reversibility | Social norms | Social capital of practitioners and organization | |
| Visibility/observability | Attitude about guidelines | Level of in-service, CE opportunities | |
| Centrality | Lack of outcome expectancy | Policy and procedure documentation | |
| Pervasiveness, scope, impact | Lack of self-efficacy | Leadership/good communication | |
| Magnitude, disruptiveness, radicalness | Lack of motivation | Relationships: practitioners and practitioners to managers | |
| Duration | Lack of awareness of existence | | |
| Form, physical properties | Chosen noncompliance | | |
| Collective action | Age | | |
| Presentation | Country of residence | | |

Acknowledgements

The authors gratefully acknowledge the helpful comments and suggestions provided by Professor J. B. Orange on an earlier version of this manuscript.

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

The author(s) received the following financial support for the research, authorship, and/or publication of this article: This work was supported with funding by the Canadian Institutes of Health Research [Sheila Moodie: 200710CGD-188113-171346, Marlene Bagatto: 200811CGV-204713-174463, and Anita Kothari: 200809MSH-191085-56093]. This work has also been supported by the Ontario Research Fund, Early Researcher Award to Susan Scollie, Starkey Laboratories, Inc., and the Masonic Foundation of Ontario, Help-2-Hear project.