Abstract

Hybrid media systems have reconfigured online journalism and mass communication such that people can engage more easily in multi-directional discourse about the norms of science. We investigate this reconfiguration with a mixed-methods study of the X page of “Retraction Watch,” which produces hybrid “watchdog science journalism” on violations of scientific norms. Results show that Retraction Watch’s X is not necessarily an inclusive forum for open debate about scientific norms. We also find that Retraction Watch prioritizes aspects that may not resonate with its audience. This has implications for how science communicators and journalists approach (hybrid) debate about scientific norms.

Recent years have seen growing numbers of plagiarism and data fraud cases in academia, failures to replicate research results, and concerns about scholars pursuing third-party interests (Brainard & You, 2018). These trends violate fundamental norms of science, that is, its epistemological principles (e.g., “organized skepticism” obliging scholars to systematically scrutinize the rigor of their work) and ethical standards (e.g., “disinterestedness” demanding that scholars not seek personal profit; Merton, 1942).

Violations of scientific norms were long discussed almost exclusively in scientific publishing and legacy media journalism (Jamieson, 2018). But in today’s hybrid media systems, these traditional forms of linear communication “merge with and adapt to the formats, genres, norms and actors brought about by newer digital media,” including social media (Mattoni & Ceccobelli, 2018, p. 541). The design and technical features of these media, that is, their “affordances” (Evans et al., 2017, p. 35), now allow various stakeholders in society to participate in discussions about the norms of science more easily and actively than before, and thus facilitate what we conceive as “hybrid discourse” about the norms of science (Valaskivi & Robertson, 2022).

The emergence of hybrid media systems has also had implications for journalism. For example, it allowed “watchdog science journalism” (Fahy, 2023) to publicize scientific misconduct on social media and discuss potential remedies with a wide range of stakeholders, including academics, politicians, journalists, and citizens (Liskauskas et al., 2019). A prime example of this is the X page of Retraction Watch, a major science journalism outlet reporting on questionable practices of scientists to “hold them to account” (Fahy, 2023).

Scholars from different disciplines have provided analyses of hybrid norm discourse (Dambanemuya et al., 2024; Peng et al., 2022; Yeo et al., 2017). However, most existing studies engage little with each other across disciplinary boundaries, rely on qualitative case studies or focus on a specific scientific fraud case, and offer limited insights into the themes, emotional sentiments, and user interactions of hybrid norm discourse on social media. We address these gaps with a mixed-methods analysis of the X page of Retraction Watch. Our study offers multiple contributions to our understanding of science journalism and online mass communication about the norms of science. First, our work contributes to scholarship on hybrid media systems by theorizing a model of “hybrid norm discourse.” This model explicates how the hybrid media environment and the affordances of social media (re)configure digital journalism and user discussions about scientific norms. Second, our study offers new knowledge about how the repertoires and practices of science journalists (that is, Retraction Watch writers) interact with normative expectations toward science (Brüggemann et al., 2020; Ginosar et al., 2024; Jaspal et al., 2013). Third, our work complements and extends existing research on social media affordances by analyzing not only engagement metrics, such as likes and shares, but also user conversations (e.g., how users reply to Retraction Watch) and conversation networks (e.g., how users reply to each other). For this purpose, we collected and analyzed a valuable dataset before X paused free and full access to the academic API. Fourth, we discuss how our case study applies to other modes and forms of digital communication about the norms of science.

Theory and Literature Review

Philosophy of science and science of science scholars have extensively discussed the normative standards of scientific inquiry and violations of these standards. On the one hand, such discussions have centered on epistemological norm violations, for example, incorrect applications of analytical procedures, presumably unintentional errors during data collection, and other technical mistakes (McVeigh, 2019). On the other hand, researchers have identified several ethical norm violations, such as fabricating data, selectively reporting significant results, tweaking statistical models so that they confirm hypotheses, and committing other forms of moral misconduct like sexual or racial harassment (Gopalakrishna et al., 2022).

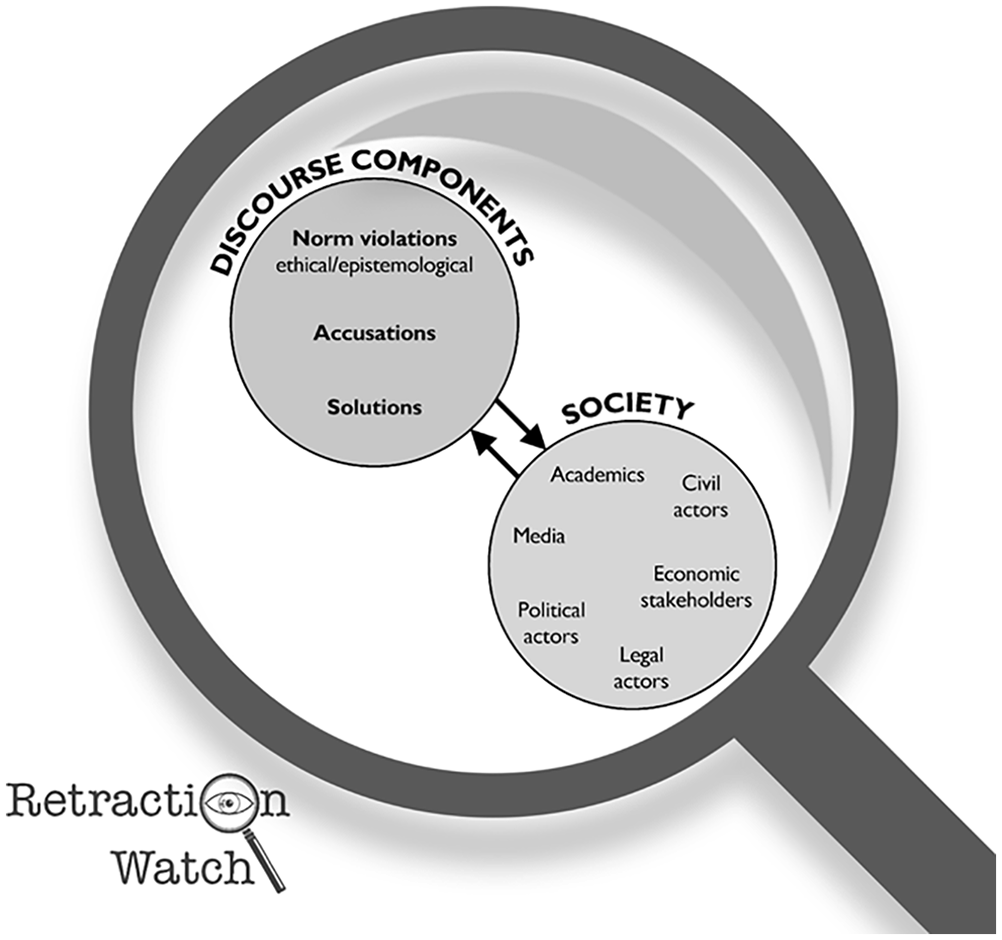

Violations of the epistemological and ethical norms of science have raised concerns that its integrity and reliability are at stake (Ritchie, 2020). These concerns have been debated primarily in the scholarly community and traditional science journalism for decades (Kim & Kim, 2018). However, social media disrupted the dominance of scholars and journalists in public discourse about the norms of science: Platforms like X—the focus of our study—encouraged more inclusive, multi-directional, and multi-modal discourse among various stakeholders beyond academics and journalists, such as policymakers, NGOs, and civil society actors (Dambanemuya et al., 2024; Valaskivi & Robertson, 2022; see Figure 1). Most relevant to our study, this disruption has been theorized against the backdrop of hybrid media systems and attributed to the specific affordances of social media.

Figure 1. Heuristic Model of Discourse About Scientific Norms in the Hybrid Media Environment.

Reconfigurations of Scientific Norms in Hybrid Media Systems

An essential precondition for a reconfiguration of public discourse about the norms of science has been the emergence of hybrid media systems. Hybrid media systems are characterized by the interplay, convergence, and adaptation of the logics, genres, and discourse norms of traditional and digital media, including social media (Mattoni & Ceccobelli, 2018). They involve an “integration” and a “fragmentation” of discourse styles and participants in societal debates (Chadwick, 2017, p. 18). The majority of works on the concept of hybrid media systems have focused on political issues, applying it in political communication contexts (Ekman & Widholm, 2024). Our study contributes to hybrid media research, as it focuses on hybrid science communication, which has not been analyzed through the lens of scientific norms yet. In particular, our study provides novel evidence on two conflicting but similarly plausible scenarios: one suggesting that hybrid media systems facilitate open discourse about the norms of science and one suggesting that such discourse remains within the academic and journalistic communities. Our overarching research question, then, is: To what extent do social media platforms facilitate hybrid discussions about scientific norms or reinforce existing chambers of discussion that tend to be within scientific communities?

Science communication scholars highlighted the potential of hybrid media systems to facilitate multi-directional discourse among scientists, journalists, policymakers, legal actors, and civil society (Scheufele, 2014; see Figure 1) and allow more public engagement, participation, inclusion, and co-creation of knowledge (MacGregor & Cooper, 2020). Dambanemuya et al. (2024), for example, find that various actors engage with X posts about retracted research articles, including science communicators, (presumably) automated accounts, health practitioners—and, most likely, members of the public and scientists, who showed the highest engagement rates. However, some scholars warned that the multitude of stakeholders and their normative claims in hybrid science communication settings challenges established standards of what can be considered as “true knowledge” in society. Waisbord, for instance, suggests that “the idea that scientific norms determine truth and reality [. . .] is harder to maintain when a wide range of assertions massively circulate in the chaotic digital environment” (2018, pp. 20–21). Kim and Kim argue along similar lines that hybrid science communication may “pose new challenges to existing norms, conventions and expectations of scientific communities” (2018, p. 3).

Journalism scholars, on the other hand, interrogated the implications of hybrid media systems for journalistic practices. Hallin et al. (2023, p. 222), among others, diagnosed a “multiplication of actors and genres involved in the production of news and the blurring of boundaries among them,” which they conceptualized as “hybrid journalism.” Some studies analyzed hybridity in science journalism specifically (Amend et al., 2014; Lörcher et al., 2024). These studies, however, suggest that normative and ethical debates surrounding science are largely driven by academics and journalists (Bartleman et al., 2024; Holliman, 2011). This raises the question of whether hybrid media systems live up to their promise of facilitating more inclusive discourse about the norms of science, as suggested by science communication scholars, or reinforce existing chambers and networks of discussion within the scholarly community and close stakeholders such as science journalists.

Hybridity, as highlighted in science communication and journalism literature, refers not only to the actors who create and respond to discussions about scientific norms, but also to the content (e.g., themes, sentiments) of these discussions, which may differ from or align with the traditional philosophical understanding of what scientific norms mean.

A fruitful body of scholarship has examined this content. It has often focused on traditional media (e.g., printed newspapers or public service broadcasting), specific scientific debates (e.g., the #overlyhonestmethods discourse or the #arseniclife controversy), or specific issues (e.g., climate change, biochemistry) rather than social media discourse about scientific norms in general (see Jamieson, 2018; Jaspal et al., 2013; Schug et al., 2025; Simis-Wilkinson et al., 2018; Walter et al., 2019; Yeo et al., 2017). Departing from these works, we adopt a more holistic ecology-level approach that integrates both producer- and audience-centric perspectives (see Qu & Lu, 2025). In particular, we explore how various actors (a) discuss epistemological or ethical aspects of scientific norm violations (see Resnik, 2007), (b) describe or prescribe solutions to such violations (see Peng et al., 2022), (c) mention scientists, journalists, politicians, legal actors, and civil society members as those who accuse others of violations (see Dambanemuya et al., 2024), and (d) mention scientists, journalists, politicians, legal actors, and civil society members as those who are being accused of norm violations (see Sugawara et al., 2017). Thus, we propose our first two research questions:

When it comes to the actors that participate in and co-create the meaning of scientific norms on social media, our work examines not only how (semi)professional actors (i.e., journalists, scholars, and bloggers) like Retraction Watch frame and respond to violations of the norms of science, but also how the spectrum of actors in digital publics, including citizens and policymakers, communicates about them. Thus, we propose our third research question:

Amplification and Emotional Interaction with Science Norm Discourse

Scholarship on hybrid science communication illustrates how social media users may (re)negotiate and (re)configure the norms of science (Hogan & Sweeney, 2013). What enables users to do so are the “affordances and commodified logics” of social media (Valaskivi & Robertson, 2022, p. 155). Affordances can be defined as possibilities for action that arise between individuals—in our case: social media users—and an object or technology, for example, social media platforms (Evans et al., 2017).

Affordances play a critical role in shaping user behavior, particularly in the context of dissemination and engagement. They facilitate and constrain amplification of information and social connections (boyd, 2011; Ronzhyn et al., 2023) via reposting, replying to posts, following accounts, indicating endorsement, articulating emotions, and sharing personal information in profile descriptions. Affordances have therefore allowed online journalism and science communication to enable multi-directional, multi-stakeholder discourse about the norms of science (see Brüggemann et al., 2020). They influence opinion expression (Lane et al., 2019), message amplification (Dvir-Gvirsman et al., 2024), and user interaction (Ronzhyn et al., 2023), and, for example, allow users to manage reputational risks for voicing controversial views (Dvir-Gvirsman et al., 2024). As such, affordances are essential for understanding how online publics communicate about potentially contentious issues like the ethical and epistemological standards of science.

Social media affordances differ across platforms. For example, X—the context of our study—emphasizes individual efficacy more than group efficacy (Halpern, 2017). Unlike Facebook users, who typically engage with friends or family, X users often interact with a perceived audience of strangers, reducing users’ fear of expressing dissenting opinions (Oz et al., 2024) and allowing for more candid conversation. Additionally, X not only allows likes (i.e., endorsement of posts) but also has repost and comment functions, which enable information and opinion diffusion (Firdaus et al., 2018). These affordances establish X as an ideal case for analyzing the diffusion of, and user engagement with, watchdog science journalism on scientific norm violations.

The concept of affordances offers a broad framework for studying social media discussions. However, it remains largely unexplored how affordances shape social media discourse about science, specifically its epistemological principles and ethical norms—and how affordances contextualize hybrid science journalism. This limits our ability to understand how conversations about scientific norms and violations occur and evolve in the hybrid media system.

However, a few informative studies do exist. For example, it has been shown that moral content spreads faster than content that does not emphasize moral issues (Brady et al., 2020). More specifically, Dambanemuya et al. (2024) suggest that social media content about norm violations elicits higher engagement than content that is not about such violations. They find that posts discussing retracted studies through accusations of errors or misconduct receive more likes and replies than posts about non-retracted studies. Correspondingly, research into online reactions to users who violate social norms shows that ethical breaches are central to collective outrage and aggression toward norm-breaking individuals (Rost et al., 2016). We thus hypothesize:

Furthermore, non-anonymous users have been shown to be more aggressive (i.e., expressing negative affect and language) in “online firestorms,” or in the collective response to users who violate social norms (Rost et al., 2016). This is because they are “standing up” for their moral principles, while anonymity may reduce the effectiveness of admonishment of norm violations. Dambanemuya et al.’s analysis indicates that negative sentiment is more prevalent in user reactions to norm violations, as they find that retraction-related X posts often contain words like “fake,” “unreliable,” or “criminal” (2024, p. 7). Similarly, Peng et al. show that posts about retractions likely express negative sentiment in the form of “skepticism, doubt, criticism, concern, confusion, or disbelief” (2022, p. 4). We posit the following hypothesis:

Further research indicates that accusatory language, whether centering on veracity (e.g., honesty of the accused) or capacity (e.g., incompetency), also influences how users draw on the affordances of social media, including sharing functions. For example, Hansson et al. (2024) find that X users were less likely to share highly critical messages blaming others’ character, but messages focused on the outcomes of unethical behavior were often reposted. Further, Affective Intelligence Theory (Marcus et al., 2000) posits that anger arises in situations in which there is a specific person to blame for an issue, which leads to opinion expression on social media as an emotional coping mechanism (Heiss, 2021). This suggests that accusatory language toward actors who violate scientific norms could lead to increased posting and negative affect. Thus, we propose the following hypotheses:

Beyond accusatory language, scholars noted that more broadly, social media engagement thrives on conflict and controversy, especially when norm violations provoke public outrage and debate (Rost et al., 2016). Prescriptive norms, which focus on promoting ideal behaviors, may be less provocative and less likely to capture attention. Furthermore, audiences may resist or disengage from content that feels judgmental or restrictive, especially on platforms like X, where users value autonomy in expressing opinions (Oz et al., 2024). Without the novelty, controversy, or emotional charge that make posts shareable, we assume that prescriptive norms are less likely to elicit engagement.

Data and Methods

The Case of Retraction Watch on X

To examine how scientific norms are discussed on digital platforms, our case study focuses on Retraction Watch, one of the major “science watchdogs” (Didier & Guaspare-Cartron, 2018, p. 165). As we are interested not only in how Retraction Watch discusses scientific norms but also in how different users engage with it, we collected our dataset from Retraction Watch’s X account (@RetractionWatch) using the X Academic API (data collected in May 2022). We used Python to retrieve all posts Retraction Watch published since 2013, the user engagement metrics of each post (number of reposts, likes, replies), and all the conversations that occurred under each post. We collected a total of 19,462 posts by Retraction Watch from 2013 to 2022 (i.e., original posts), and a total of 22,936 replies that users left under the original posts (i.e., conversations). Moreover, we collected user-level data for all users who left replies under each original post, such as the user profile and the number of followers and followees.

Automated and Manual Content Analysis of Retraction Watch Posts

To examine how Retraction Watch discussed scientific norms (RQ1), we utilized a combination of computational and manual content analyses. First, we employed the structural topic modeling (STM) method (Roberts et al., 2019) on all Retraction Watch posts since 2013 (N = 19,462). STM is an unsupervised machine learning method that identifies key topics within a corpus using keywords and correlated documents for each topic. Our analysis used the stm package in R, involving preprocessing steps such as punctuation and stopword removal, along with word stemming. We also removed duplicate posts from the sample before employing STM, leaving a total of 14,694 unique posts. This process identified 25 topics, which were individually reviewed by all authors. Subsequently, two collaborative meetings were held to consolidate these topics into ten overarching themes, ensuring the validity and reliability of the computational approach. Our interpretation followed the principle of objective hermeneutics (Gadamer, 1989) to understand the text’s intended meaning based on the text itself, minimizing subjective reader interpretations. Recognizing potential biases inherent to our academic backgrounds, training, and views on scientific norms, we addressed these preconceptions during our discussions and sought to mitigate their influence. Of the ten overarching themes, we found four to be directly related to our theoretical model: norm violation practices, people/institutions involved in norm violations, disciplines involved in norm violations, and consequences of norm violations (for an overview of all ten themes, see the Supplemental Materials; Table S2). There was a total of 6,067 posts that fell within one of these four themes.
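The cleaning steps that precede topic modeling can be illustrated with a minimal Python sketch. It is not the authors' pipeline, which used the stm package in R. It shows only duplicate removal, punctuation stripping, and stopword removal, and it omits stemming. The stopword list and the example posts are hypothetical placeholders.

```python
import string

# Tiny illustrative stopword list (an assumption; real analyses use a full list).
STOPWORDS = {"a", "an", "the", "of", "for", "in", "on", "and", "to", "is"}

def deduplicate(posts):
    """Keep the first occurrence of each post text, ignoring case and outer whitespace."""
    seen = set()
    unique = []
    for text in posts:
        key = text.strip().lower()
        if key not in seen:
            seen.add(key)
            unique.append(text)
    return unique

def preprocess(text):
    """Lowercase, strip ASCII punctuation, split on whitespace, drop stopwords."""
    cleaned = text.lower().translate(str.maketrans("", "", string.punctuation))
    return [tok for tok in cleaned.split() if tok not in STOPWORDS]

# Hypothetical example posts, not taken from the dataset.
posts = [
    "Journal retracts paper for plagiarism.",
    "journal retracts paper for plagiarism.",
    "University revokes PhD of the author!",
]
unique_posts = deduplicate(posts)
tokens = [preprocess(p) for p in unique_posts]
```

The resulting token lists would then be passed to a topic model; that step is left out here because it depends on the modeling library.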

To investigate which (a) dimensions of norm violations, (b) solutions to violations, (c) actors accusing others of norm violations, and (d) actors accused by others are present on Retraction Watch’s X (RQ2), we conducted an in-depth content analysis on a two-tiered stratified random sample of 2,000 posts. Specifically, we sampled 2,000 posts from the 6,067 posts that represented one of the four main themes of interest, ensuring that all four themes were equally represented in the manual coding process (n = 500). Within each theme, we selected 250 posts that did not have any comments and 250 that received at least one comment, to ensure we captured an adequate representation of posts that received engagement and those that did not. Two of the authors each manually coded 100 posts along four content variables: norm dimension (epistemological, ethical), solution (descriptive, prescriptive), accuser (e.g., academic, media), and accused (e.g., academic, media). Table S1 describes these variables in detail, providing all codes, coding rules, and example posts. Using Krippendorff’s alpha, which corrects for chance agreement between coders, we found that the inter-coder reliability across all four variables combined was .71 in the first round of coding (Krippendorff, 1980). After a group discussion with all authors to clarify discrepancies in the code book, we conducted a second round of coding on an additional 40 posts, and the average Krippendorff’s alpha across all four variables was .81.
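The two-tiered sampling design described above (four themes, each split into posts with and without comments, 250 posts per cell) can be sketched as follows. This is an illustrative sketch under stated assumptions, not the authors' code: the theme labels, the dictionary fields `theme` and `n_replies`, and the synthetic post pool are all hypothetical.

```python
import random

# Theme labels are illustrative stand-ins for the four themes named in the text.
THEMES = ["practices", "actors", "disciplines", "consequences"]

def stratified_sample(posts, per_cell, seed=0):
    """Draw per_cell posts from every (theme, commented / not commented) cell."""
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    sample = []
    for theme in THEMES:
        for commented in (False, True):
            cell = [p for p in posts
                    if p["theme"] == theme and (p["n_replies"] > 0) == commented]
            if len(cell) < per_cell:
                raise ValueError(f"cell ({theme}, {commented}) has only {len(cell)} posts")
            sample.extend(rng.sample(cell, per_cell))  # sampling without replacement
    return sample

# Synthetic pool of 4,000 posts (hypothetical data, for demonstration only).
posts = [{"id": i, "theme": THEMES[i % 4], "n_replies": (i // 4) % 3}
         for i in range(4000)]
sample = stratified_sample(posts, per_cell=250)
```

With 4 themes, 2 comment strata, and 250 posts per cell, the sketch yields 4 × 2 × 250 = 2,000 posts, matching the sample size reported in the text.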

Manual Analysis and Network Analysis of Users Engaging With Retraction Watch Posts

To investigate who engaged with Retraction Watch posts (RQ3), we manually coded whether users who commented on one of the 2,000 posts selected for manual coding (n = 1,465) self-described in their profile bios as academic (e.g., scholars, universities), political (e.g., politicians, scientists at government labs), economic (e.g., businessmen, companies), legal (e.g., lawyers), civil (e.g., non-profit workers, self-identified members of the general public), media (e.g., journalists, news organizations), or other stakeholders (e.g., nurses, doctors), or did not disclose any details that would allow us to categorize them. These categories operationalized the seven actor groups in our theoretical model (Figure 1), that is, they included all relevant stakeholders of hybrid science communication discussed in the pertinent literature (NASEM, 2016).

A total of 1,465 unique users replied to Retraction Watch’s posts, of which nearly 93% left information in their user profiles. We then conducted network analysis using Gephi to examine who replied to whose replies (i.e., through the use of @). In our network analysis, each node is a user, and an edge is formed if a user replies directly to another user. Combining the analysis of users’ profiles and the network analysis, we were able to reveal the actors as well as their interactions in hybrid norm discourse on X.
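The network definition above (each node is a user, and an edge is formed when one user replies directly to another) can be made concrete with a small sketch. The authors used Gephi. The plain-Python version below only illustrates the data structure, and the usernames other than RetractionWatch are hypothetical.

```python
from collections import defaultdict

def build_reply_graph(replies):
    """Build a directed graph: edge (author, target) weighted by number of direct replies."""
    edges = defaultdict(int)
    for author, target in replies:
        if author != target:  # ignore self-replies
            edges[(author, target)] += 1
    return dict(edges)

def in_degree(edges):
    """Count how many distinct users replied to each user."""
    degree = defaultdict(int)
    for (_author, target) in edges:
        degree[target] += 1
    return dict(degree)

# Hypothetical (author, replied-to) pairs, for illustration only.
replies = [
    ("alice", "RetractionWatch"),
    ("bob", "RetractionWatch"),
    ("carol", "alice"),
    ("alice", "carol"),
    ("bob", "RetractionWatch"),
]
edges = build_reply_graph(replies)
degrees = in_degree(edges)
```

An edge list of this kind could then be exported to a tool such as Gephi for layout and community detection.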

Methods to Analyze the Extent and Emotional Valence of User Engagement

To test how the four components of norm discourse (i.e., norm dimension, solution, accuser, accused) affect the extent to which users engage with Retraction Watch posts (H1, H3, H5), we conducted negative binomial regressions on the original Retraction Watch posts (n = 2,000). We used this approach to handle overdispersion of the count data that constitute our dependent variables. The dependent variables were the number of reposts, likes, and replies to each post. The independent variables were the four norm discourse components.
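Overdispersion means that the variance of a count variable exceeds its mean, which violates the Poisson assumption and motivates a negative binomial model. The sketch below shows a simple descriptive check of this kind. It is not the authors' procedure, it does not fit the regression itself, and the like counts are invented for illustration.

```python
from statistics import mean, variance

def dispersion_ratio(counts):
    """Return sample variance divided by mean; values well above 1 suggest overdispersion."""
    m = mean(counts)
    if m == 0:
        raise ValueError("mean count is zero; ratio is undefined")
    return variance(counts) / m

# Hypothetical like counts: mostly small values with one very popular post.
likes = [0, 0, 1, 0, 2, 0, 35, 1, 0, 3]
ratio = dispersion_ratio(likes)
```

Engagement counts on social media often look like this hypothetical series, with many zeros and a few large values, which is the pattern a negative binomial model is designed to accommodate.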

To investigate emotions in user engagement with Retraction Watch’s X (H2, H4), we automatically coded all user replies to the original Retraction Watch posts for negative versus positive emotional valence (n = 3,072). Coding drew on existing methods of measuring emotions in text data, including the off-the-shelf sentiment dictionary NRC and ChatGPT, a natural language processing tool based on generative artificial intelligence (AI). We validated both methods by comparing them to human coding and decided to use ChatGPT (version 4.0; OpenAI, San Francisco, California, USA), as its validation scores outperformed those of the NRC approach and other commonly used sentiment analysis tools (e.g., TextBlob, VADER; for a full description of the validation process, see the Supplemental Materials, Appendix 2).

ChatGPT-based coding was done as follows: One author first manually coded a random sample of the original posts for positive versus negative emotional valence (n = 60). Second, we prompted ChatGPT to code the rest of the original posts as “a social scientist conducting sentiment analysis,” using the initial sample as training data. The prompt for ChatGPT was: “You are a social scientist conducting sentiment analysis on X posts for a study. You want to categorize each post as either negative or positive. Do not leave any post blank.” The resulting binary variable thus indicated the emotional valence of the original Retraction Watch posts and was used as a control variable in the H2 and H4 analyses. Third, for the dependent variable, we instructed ChatGPT to also code the replies to these posts for emotional valence. We then counted the number of replies that have positive versus negative emotional valence and ran a negative binomial regression to test whether the proportion of positive versus negative replies differed significantly depending on the hand-coded norm discourse components of the original Retraction Watch posts. For H2, we tested whether there were more negative words used in replies to posts about norm violations, controlling for the other hand-coded discourse components and the emotional valence of the original post. Similarly, for H4, we tested if there were more negative words in replies to posts accusing academics versus accusing others versus no accusations, controlling for the same variables as H2.
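Once each reply carries a binary valence label, the per-post counts that feed the regression can be tallied as in the sketch below. It assumes the labels are already available as the strings "positive" and "negative". The post identifiers and the helper names are hypothetical, and the sketch does not reproduce the ChatGPT coding step.

```python
from collections import Counter

def valence_counts(coded_replies):
    """Map each post id to a Counter of positive and negative reply labels."""
    counts = {}
    for post_id, label in coded_replies:
        if label not in ("positive", "negative"):
            raise ValueError(f"unexpected label: {label!r}")
        counts.setdefault(post_id, Counter())[label] += 1
    return counts

def negative_share(counter):
    """Share of negative replies for one post, or None if it has no replies."""
    total = counter["positive"] + counter["negative"]
    return counter["negative"] / total if total else None

# Hypothetical coded replies as (post id, valence label) pairs.
coded = [
    ("p1", "negative"),
    ("p1", "negative"),
    ("p1", "positive"),
    ("p2", "positive"),
]
counts = valence_counts(coded)
shares = {post_id: negative_share(c) for post_id, c in counts.items()}
```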

Results

How Scientific Norms Are Discussed on Retraction Watch’s X (RQ1 and RQ2)

Our first research question examines the themes of social media discourse about scientific norms on Retraction Watch’s X page and how the prevalence of these themes differs. The results of the STM (see Figure S1 and Table S2 in the Supplemental Materials) show that posts on Retraction Watch’s X fall into ten themes. These include, for example, legal mechanisms to uphold scientific norms (e.g., lawsuits, fines), public sanctions of norm violations (e.g., expressions of concern, singling out researchers), and media attention (e.g., vocal public figures, publication channels). A full list of the themes can be found in Table S3.

Our theoretical framework focuses on different dimensions of norms, actors involved in norm discourse, and solutions to norm violations (see Figure 1). Four of the ten themes discussed these components as they addressed norm violation practices, people or institutions involved in norm violations, disciplines involved in norm violations, and consequences of norm violations (see Figure 2). Therefore, we took a random sample of the posts that mentioned these themes (n = 2,000) to examine the components of hybrid norm discourse (RQ2a–d) through the manual content analysis described in the section “Data and Methods.”

Figure 2. Prevalence of the Four Themes in Retraction Watch Posts Selected for the Analysis.

When looking at which dimensions of scientific norms were discussed in the original posts of Retraction Watch (RQ2a), we find that the posts, if they mention norms at all, focused on ethical norms (43.9%), for example, discussing plagiarism, threats against collaborators, or data manipulation (see Table 1). When looking at whether and how solutions to norm violations are being discussed (RQ2b), we find that most posts did not mention solutions (53.2%), but those that did were mostly descriptive, explaining solutions that had already been implemented (41.7%). For instance, these include retracting papers, legal consequences, banning individuals from publishing, and journals adopting “AI to spot duplicate images in manuscripts.” Most Retraction Watch posts (77.6%) did not mention actors accusing others of norm violations (RQ2c), but those that did mainly referred to academics accusing others of norm violations (15.0%). These posts reported that academic journals called out authors for duplication, universities revoked PhDs, and researchers admonished transparency deficits, for example by “calling on Cornell to release its investigation report of food science researcher Brian Wansink.” Similarly, many posts (50.5%) did not mention actors being accused of norm violations (RQ2d). Those posts that did contain accusations mostly accused academics, such as graduate students and senior scientists, as was the case when a university ordered a “PhD supervisor to retract [a] paper that plagiarized his student.”

Table 1. Components of Scientific Norm Discourse: Results of Manual Content Analysis.

To summarize, we find that Retraction Watch’s X discusses ethical norms much more often than epistemological norms, but many posts do not mention either dimension at all. Few posts make specific claims about how violations should be handled, whereas most describe a status quo of current solutions to norm-violating practices. Interestingly, Retraction Watch seems to focus more on actors who are accused of norm violations than on those who accuse others of violations: Fewer than a quarter of our hand-coded posts mention an accuser. Results also indicate that norm violations are usually negotiated within academia, as scientists are the actors who most often are accused of violations and accuse others of them. But a range of other actors within the hybrid science communication environment (e.g., politicians, journalists) are also present, albeit less often.

Hybrid System or Community Chamber? Actors Engaging in Norm Discourse (RQ3)

Our analysis of the users who replied to the 2,000 manually coded posts showed that users engaging with Retraction Watch’s X are predominantly from academic and scientific backgrounds, with nearly 41% of all commenters self-identifying as scientists, scholars, or academic institutions (RQ3a; see Table 2). However, nearly 12% of commenters explicitly identified themselves as members of the general public, which indicates that users come from a variety of stakeholder groups beyond science and academia. Only a minority of users identify as political (4%), media (3%), or economic actors (6%). A further 400 users (27%) did not disclose any information about their profession in their profile.

Table 2. Frequency Analysis of Self-Description in Profiles of Replying Users.

We also conducted a network analysis using the Yifan Hu Layout in Gephi to investigate which stakeholder groups are more or less likely to mention each other when engaging with Retraction Watch (RQ3b). We used Gephi’s modularity analysis algorithm to identify nodes that were more likely to interact with each other, and then color-coded the nodes based on the stakeholder group to which users belong. Figure S2, Panel A (see Online Supplemental Materials) shows a large, red cluster of users who tended to engage mostly with Retraction Watch, that is, the node in the middle. There were other smaller communities depicted in the blue, black, green, and light blue clusters. To further illustrate which stakeholder group of users was involved in each community, we included Panel B showing the same network analysis, but with each node color-coded based on the professions stated in the users’ profiles (e.g., academic, civil, political). The graph shows that commenters network across different types of stakeholders and not only within their stakeholder group.

Overall, the RQ3 results indicate that discussions on Retraction Watch’s X are driven by academics. This suggests that hybrid norm discourse on social media is often limited to inner-scientific debate, despite being in principle open to actors beyond the scientific community. However, we find that a notable portion of users engaging with Retraction Watch self-describe as members of the general public. Moreover, we can assume that a variety of other non-academic users engage in norm discourse, even if we cannot infer which stakeholder groups they belong to, as they do not disclose this in their profile descriptions. Norm discourse on Retraction Watch’s X can therefore be characterized as both a community bubble (in which academics primarily exchange among themselves) and a hybrid system (in which different stakeholders exchange with academics and among each other). Thus, both scenarios mentioned in our overarching research question occur simultaneously: For the case of Retraction Watch’s X, social media both facilitate hybrid discussions about scientific norms and reinforce existing community chambers.

How Posts About Scientific Norms Stimulate User Engagement and Affect (H1–H5)

Regression results show that posts that discuss scientific norms, regardless of whether they refer to epistemological principles or ethical standards, are associated with fewer reposts and likes. In particular, posts mentioning epistemological norms receive 25% fewer reposts and 25% fewer likes (as indicated by 1 − OR = 1 − 0.75 = 0.25; see Table 3), and posts mentioning ethical norms receive 24% fewer reposts and 42% fewer likes. Thus, H1 was not supported. On the other hand, one of the strongest triggers of engagement with Retraction Watch posts is whether they mention academics as the accuser (H3). Posts receive 63% more replies, 89% more reposts, and 201% more likes when they discuss how scientists or academic institutions accuse others of norm violations. In contrast, engagement was substantially lower when Retraction Watch posts accused journalists and media organizations of norm violations, with 54% fewer replies, 57% fewer reposts, and 63% fewer likes. H3 was supported, depending on who was accusing and being accused.
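The percentages in this paragraph follow from reading each exponentiated regression coefficient (reported as OR in Table 3) as a multiplicative factor on the expected count. The short sketch below illustrates that arithmetic. The helper names are hypothetical, the value 0.75 is the one quoted in the text, and the values 1.63 and 3.01 are back-calculated from the reported "63% more" and "201% more" for illustration only; they are not read from Table 3.

```python
def percent_change(ratio):
    """Percentage change in the expected count implied by a multiplicative ratio."""
    return (ratio - 1.0) * 100.0


def describe(ratio, outcome):
    """Phrase a ratio as 'X% more/fewer <outcome>', rounded to whole percent."""
    change = percent_change(ratio)
    direction = "more" if change >= 0 else "fewer"
    return f"{abs(change):.0f}% {direction} {outcome}"


# 0.75 is quoted in the text; 1.63 and 3.01 are illustrative back-calculations.
print(describe(0.75, "reposts"))
print(describe(1.63, "replies"))
print(describe(3.01, "likes"))
```

Ratios below 1 therefore translate into "fewer" and ratios above 1 into "more," which is why a ratio of roughly 3 corresponds to about 200% more likes rather than 300%.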

Table 3. Engagement Rates as a Function of Whether the Original Retraction Watch Posts Discuss Norm Dimensions, Solutions, Accusers, and Actors Accused, and Have Positive/Negative Emotional Valence: Results of Negative Binomial Regressions.

Note. OR = odds ratio.

*p < .05. **p < .01. ***p < .001.

Mentions of solutions to norm violations, whether descriptive or prescriptive, do not affect the number of replies and likes. This is somewhat unexpected, as one could assume that posts describing remedies to scientific norm violations might cause users to amplify and endorse them. Thus, H5 was not supported. Overall, we find that mentions of academic accusers are most likely to increase engagement, while posts that accuse media actors and posts that mention epistemological or ethical norms are associated with lower engagement.

Our study not only considers social media metrics as engagement indicators but also investigates emotions articulated in user replies (H2, H4). Overall, we observed that the emotional valence of replies to the original Retraction Watch posts depends on which norm discourse components the posts discuss (see Table 4). Posts discussing epistemological norms receive 42% fewer negatively valenced replies than posts without mention of norm violations. Interestingly, posts mentioning ethical norm violations did not differ significantly in the number of negatively valenced replies they received. Thus, H2 was not supported.

Table 4. Predicting the Amount of Positive Versus Negative Replies as a Function of Whether the Original Retraction Watch Posts Discuss Norm Dimensions, Solutions, Accusers, and Actors Accused: Results of Negative Binomial Regressions.

Note. OR = odds ratio.

*p < .05. **p < .01. ***p < .001.

Yet at the same time, posts accusing academics also receive significantly more negative replies than posts accusing other actors (166%), which shows that accusations against scientists, scholars, and academic institutions generally elicit emotional user reactions, whether affirmative or critical. H4 was supported, depending on who is the target of the accusatory language.

Discussion

Hybrid media systems have changed journalism and science communication such that their audiences can engage more easily in multi-directional, multi-modal, multi-stakeholder discourse about the ethical and epistemological norms of science. This has consequences for public debate about scientific misconduct and questionable research practices like those reported by the watchdog science journalists of “Retraction Watch.” We investigated this debate with a mixed-methods study of all 19,462 X posts by Retraction Watch (2013–2022) and 22,936 user replies. Results show that although social media afford equitable dialogue among various stakeholders in society, user engagement with hybrid science journalism is dominated by academics. Overall, this suggests that hybrid science communication may not fully live up to its promise of being an inclusive forum for societal debate about the norms of science. Moreover, we find that the watchdog journalism of Retraction Watch centers on aspects of this debate which do not resonate much with its audience. This has implications for both journalism practice and online science communication, as we discuss in the following.

How Retraction Watch’s X Affords Hybrid Discourse About the Norms of Science

We show that social media platforms like X can facilitate a pluralistic, multi-directional, multi-modal form of communication about the normative standards of science that we conceive as hybrid norm discourse. We find that X, a platform frequently used for science communication, invites a variety of themes, actors, narratives, modes of communication, and emotions. This indicates that public debate and engagement with scientific norms is hybrid in the sense that it is characterized by an “integration” of multiple actors and arguments within societal debate about scientific norms and by a “fragmentation” of the modes and stakeholder constellations of non-hybrid norm discourses outside digital media (Chadwick, 2017, p. 18).

However, although social media provide channels for numerous stakeholders to join scientific norm discussions, we find that it is mostly those actors who have dominated traditional science communication settings (i.e., scientists and journalists) who make use of these opportunities. This is consistent with research on hybrid science journalism, which has been found to be largely driven by academics and journalists (Bartleman et al., 2024; Holliman, 2011). Inner-scientific norm discourse continues to exist on social media, as we find that engagement with Retraction Watch’s X is dominated by actors self-describing as scientists, scholars, or academic institutions (see Table 2 and Figure S2) and centers strongly on academics as those being accused and accusing others of norm violations (see Table 1). Discourse about scientific norms is thus partially confined to a closed “community bubble” and not per se an inclusive “hybrid system.” To some extent, this finding challenges the much-acclaimed potential of hybrid (science) journalism to achieve more democratic, participatory science communication. It also suggests that equity and inequity can coexist among the producers and consumers of hybrid science journalism, along the lines of what K. Chen et al. (2023, p. 2855) described as “segregated inclusion.” In other words: Discourse around the norms of science can be inclusive at the system level but at the same time exclusive at the level of those “community bubbles.” This finding also underscores Hallin et al.’s (2023, p. 229) argument about “the importance of keeping levels of analysis in mind: that is, a more hybrid system at the aggregate level [. . .] may involve less hybridity in certain ways at the level of individual media institutions.”

Our study is limited to Retraction Watch, which is a special case of a science watchdog: Unlike traditional science journalism, for example, Retraction Watch focuses specifically on cases of scientific misconduct and the process of self-correction, publishes only online, also involves untrained journalists, and is financed through reader donations. However, Retraction Watch still promotes traditional conceptions of linear science communication to some degree: Retraction Watch writers can be seen as “gatekeepers” of hybrid norm discourse in the sense that they determine which topics, claims, and cases of norm violations are reported and subsequently discussed on X (see Yeo et al., 2017). Future research could examine “science watchdogs” other than Retraction Watch (e.g., PubPeer) and apply other data collection strategies by tracing specific hashtags like #retraction or scraping the accounts of specific journalists like @ivanoransky. Yeo et al. (2017), for example, did not confine their analysis to a specific outlet as we did, but followed a more holistic approach: They reconstructed the #arseniclife debate on blogs and X, finding that these platforms allowed for open, participatory, informal peer-review. Their observations, as well as ours, illustrate what Bubela et al., back in 2009, described as “‘extended peer review,’ whereby the ‘publics,’ or groups of individuals who are affected by the products of science, are invited to become part of a community of evaluators and decision-makers” (2009, p. 515). In addition, further research should aim to find out more about the 20% of users who did not report who they are in the user profiles. Researchers have shown how to use historical social media posts from users to infer their identities (A. Chen et al., 2022).

Furthermore, future studies could investigate additional post characteristics that would influence engagement, such as the topic of retracted papers or author characteristics, including university affiliations, as some posts include links to the retracted papers which contain this information. While the posts in our sample were generally vague in mentioning university names or paper topics, there were instances of prestigious universities or famous authors mentioned in a post. Additionally, for posts in which university affiliation or author identification remains vague, future research should investigate whether users seek further information about the post topic by clicking on the links in the post. Another limitation of our study is the use of ChatGPT to conduct sentiment analysis on posts and user replies. Though GPT 3.5 and 4.0 have been shown to outperform other sentiment analysis tools in positive/negative categorization (Lossio-Ventura et al., 2024), GPT models may fall behind when classifying discrete emotions (Banimelhem & Amayreh, 2023). GPT is also constantly being updated and is sensitive to prompts, meaning that reproducibility may be limited.
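One way to gauge the reproducibility concern raised above would be to classify the same replies in two separate runs and measure how closely the labels agree. The sketch below computes Cohen's kappa, a chance-corrected agreement statistic, in plain Python. It is an illustration only and not a step performed in this study: the function name, the label set, and the two example runs are hypothetical.

```python
from collections import Counter


def cohens_kappa(run_a, run_b):
    """Chance-corrected agreement between two label sequences of equal length."""
    if len(run_a) != len(run_b) or not run_a:
        raise ValueError("runs must be non-empty and of equal length")
    n = len(run_a)
    # Observed agreement: share of items that received the same label twice.
    observed = sum(a == b for a, b in zip(run_a, run_b)) / n
    # Expected agreement if both runs labeled independently at their own rates.
    freq_a, freq_b = Counter(run_a), Counter(run_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both runs used one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical valence labels from two classification runs over the same replies.
run_1 = ["neg", "neg", "pos", "neu", "pos", "neg", "neu", "pos"]
run_2 = ["neg", "pos", "pos", "neu", "pos", "neg", "neg", "pos"]

kappa = cohens_kappa(run_1, run_2)
print(f"kappa = {kappa:.3f}")
```

Values near 1 would indicate that repeated runs yield nearly identical classifications, whereas values near 0 would indicate agreement no better than chance.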

Our analysis also illustrates how hybrid watchdog science journalism is enabled by how users draw on the affordances of social media. Norm discourse in traditional media settings was restricted to actors with wealth, reputation, power, or epistemic authority, that is, scientists and journalists (see Jamieson, 2018) and has often been limited to one-directional communication without much recognition of the social embedding of science and potential for public participation. The design and functionalities of social media, however, afford participation and engagement of actors from various sectors of society, mostly regardless of whether they have financial, intellectual, or reputational capital. Our heuristic model (Figure 1) and analysis show this: On Retraction Watch’s X, not only academics but other kinds of users engage with normative claims about the epistemological and ethical principles of science, articulate endorsement and criticism of them, and potentially formulate new norms. Discussants in hybrid norm discourses are therefore not merely passive consumers of the claims of epistemic authorities, but also producers of original arguments (see Fahy, 2023).

Overall, our study can be instructive for future (science) journalism research. It may inform further studies on how science journalists have gone beyond “cheerleading” and become “critical investigators tasked with holding science and scientists to account” (Franks et al., 2023, p. 1734). Analyses of reader comments invoking and instrumentalizing norm violations for science criticism (see Jaspal et al., 2013) and public opinion surveys on implications of scientific norm violations for trust in science (see Mede et al., 2021) may further complement such studies.

Different Priorities of Actors in Norm Discourse on Retraction Watch’s X

Our analysis of how users engage with Retraction Watch and each other shows notable disconnections between the priorities of discourse initiators (i.e., Retraction Watch) and the preferences of discourse contributors (i.e., the users). For example, users show comparatively little engagement with Retraction Watch posts mentioning ethical norms, whereas Retraction Watch puts high priority on these norms (see Tables 3 and 4). Similarly, users are more hesitant about engaging with posts mentioning solutions to norm violations, while Retraction Watch emphasizes solutions in almost half of the posts we analyzed. This indicates that norm discourse aspects often discussed in the academic literature and media reporting, that is, violations of moral principles and development of quality-assuring measures (Jamieson, 2018), do not attract much interest among the public. One reason for the disconnect between Retraction Watch’s priorities and user preferences could be users’ hesitation to express personal opinions on contentious issues like science’s ethics, especially if they disclose their institutional affiliation or are early in their careers. These users might be uncertain about taking a clear stance on the ethical aspects of science, preferring to self-censor in order to avoid potential backlash or isolation (Powers et al., 2019).

Our findings also suggest that scientists retain their status as trusted epistemic authorities within the context of Retraction Watch on X: Posts where academics accuse others of norm violations receive substantially more likes (see Table 3) and more positively valenced replies (see Table 4). This challenges concerns that the “replication crisis” narrative, which might be fed by Retraction Watch’s reporting on scientific misconduct to some degree, may undermine public trust in science (see Mede et al., 2021). On the other hand, a notable portion of commenters also voice negative emotions in response to posts in which academics accuse others of norm violations (see Table 4). These commenters seem to disapprove of science’s expertise in questions about scientific norm violations. Journalists, however, seem to be rather undisputed authorities in Retraction Watch’s social media discourse around scientific norms: Users tend to disapprove of media actors being accused of norm violations, as indicated by significantly fewer replies, reposts, and likes of Retraction Watch posts accusing them of norm violations (see Table 3).

Outlook: Implications for (Science) Communication Research and Journalism Practice

These findings advance our understanding of how different publics engage in normative discussions about science on social media within the hybrid media system. They have implications for both science communication and journalism scholarship and practice. Scholars can build on our work when investigating other instances of normative discussions within hybrid science communication ecologies, such as the #IchBinHanna social media debate in German academia, which also involves various stakeholders engaging in accusations and proposing solutions to perceived violations of scientific norms, in this case poor working conditions in academia (Dirnagl, 2022). Our work is also valuable for the normative underpinning of debates on whether policy makers should regulate potentially dysfunctional online discourse about science: Proponents of agonistic public spheres may conceive of pluralistic norm discourses with potentially non-rational or even anti-scientific claims on social media as a necessary component of modern science and democratic science communication (Toepfl & Piwoni, 2015). Proponents of deliberative public sphere conceptions, however, may suggest measures to prevent such claims to avoid an “epistemic cacophony” (Dahlgren, 2018, p. 25). Journalists, and more specifically online science watchdogs like the Retraction Watch writers, may want to find ways to increase audience participation and engagement with topics they deem important, given how little engagement some of their prioritized issues generated, according to our results.

Further, journalism and mass communication researchers will need to account for further developments that shape normative debates but were beyond the scope of this study, such as the growing use of AI in academic research, writing, publishing, and science communication (Schäfer, 2023). Moreover, the continuous changes in digital science communication landscapes, with new actors and platforms emerging and others receding, will continue to affect the conditions of hybrid norm discourses. For example, it will be necessary to investigate further actors (e.g., automated accounts; see Peng et al., 2022) and platforms (e.g., Wikipedia; see Wray, 2009). Finally, the implications of multi-actor and multi-directional norm discourses for the evolving Open Science movement will need to be assessed. This will help (science) communication researchers and practitioners to better understand “epistemic contestations in the hybrid media environment” (Valaskivi & Robertson, 2022, p. 153) and be better equipped for the “continuously changing relations of science and society” (Kim & Kim, 2018, p. 3).

Supplemental Material

Supplemental material, sj-docx-1-jmq-10.1177_10776990251334112 for Communicating Scientific Norms in the Hybrid Media Environment: A Mixed-Method Analysis of Social Media Engagement With Watchdog Science Journalism by Niels G. Mede, Isabel I. Villanueva and Kaiping Chen in Journalism & Mass Communication Quarterly

Footnotes

Data Availability Statement

The data underlying this article will be shared on reasonable request to the corresponding author.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
