Abstract

This article systematically reviews research on public engagement with models of future developments, such as pandemic forecasts or climate change projections. It examines how different actors and stakeholders interpret scientific uncertainties (often in a motivated way) and communicate them (often strategically). So far, lay decision-makers have been studied mostly as receivers. They handle uncertainty best when it is expressed in numbers, such as probabilities. In contrast, communicators often fail to provide such information, partly because they misjudge their audience’s needs. The biggest research gaps concern how stakeholders portray scientific uncertainty in the public sphere, especially on social media.

In March 2022, Germany discussed whether to impose a gas embargo as a sanction in response to Russia’s war against Ukraine. In this context, several economic researchers simulated the effects of a sudden supply stoppage on the German economy. However, these simulation models yielded contradictory results, with some assessing the economic costs of such an embargo as manageable and others predicting dramatic economic damage (Berger et al., 2022; Krebs, 2022). In a talk show interview, German Chancellor Olaf Scholz was approached with the notion that “various economists” viewed the risks of a full gas embargo against Russia as manageable. Scholz replied, “They are wrong—Honestly, it’s irresponsible to just put together some mathematical models that don’t actually work” (Will Media, 2022, 22:09). Scholz’s disparaging remark prompted a public discussion among economists, politicians, and journalists on Twitter, in which they debated the significance of uncertainty in modeling results for the science–policy interface (e.g., Bachmann, 2022; De Masi, 2022; Fabricius, 2022).

This exemplifies two essential issues involved in communicating scientific results. First, they are typically associated with uncertainty and are often fragile or conflicting. Therefore, uncertain scientific evidence is neither an immediate, undisputable basis for political decisions nor is it wrong and unnecessary. Second, scientific results, if concerned with socially relevant topics, can become the subject of public debates, in which simplifications, accentuations, or interest-driven interpretations occur. If, within these dynamics, uncertainties are not portrayed and perceived as essential and legitimate aspects of scientific discovery but are instead misrepresented as flaws to undermine the credibility of evidence, misjudgments can reduce trust in science or hinder evidence-based decision-making (Dunwoody, 2016; Friedman et al., 1999; Maier et al., 2016; Stocking & Holstein, 2009).

These challenges of communicating scientific results are particularly relevant to the scientific modeling of future developments, as demonstrated in the example above. Such models are useful for decision-makers because they help to empirically assess unknown developments of complex systems, thereby reducing uncertainties. On the other hand, these models are subject to uncertainty in both their inputs, including unknown or uncertain parameters, and their outputs, such as various alternative scenarios (Brugnach et al., 2007; Walker et al., 2003). Other contemporary key socio-scientific issues that highlight the relevance of model uncertainties in public discourses are climate change and the spread of infectious diseases, such as COVID-19. Many misconceptions and public controversies related to climate change have been affected by uncertainties inherent in climate projections (Joslyn & LeClerc, 2016; Lemos & Rood, 2010), even leading to the assumption that climate skepticism is related less to an anti-science than an anti-uncertainty bias (Rode & Fischbeck, 2021). During the COVID-19 pandemic, the modeling and simulation of infections, severe cases, or hospitalizations were widely present in public debates (Pentzold et al., 2021; Rhodes & Lancaster, 2020); in some instances, preliminary, dramatic models served as the basis for policy decisions without accounting for considerable uncertainties, reducing trust in both modelers and political decision-makers (Lasser et al., 2020).

This paper aims to systematically review research on the role of scientific uncertainty in public communication regarding models of future developments. In the following, we first delineate and define our understanding of models of future developments and present a framework to investigate the public communication of scientific uncertainty. We define three central roles in the communication process: the sources of scientific information, the disseminators of scientific information, and the decision-makers who receive and use it. This allows the inclusion of a range of different actor groups and thus a broad overview of the current state of research. We address the question concerning which specific actors have been examined in these roles, how they typically deal with uncertainty, and on which topics the communication on models of future developments has been examined. For this purpose, we created a codebook to examine the content of research on this topic and summarize key findings. The central results reveal a focus on environmental modeling. The best evidence is available regarding how decision-makers deal with scientific uncertainties. They use concrete numbers, such as probabilities, more effectively than verbal descriptions. However, sources and disseminators often do not provide these effectively. Uncertainties are often neglected, vaguely communicated, or even overdramatized.

Modeling of Future Developments

Many terms are used in academic and public discourse to describe models of future developments, such as forecasts, predictions, projections, simulations, or scenarios. While some of these describe similar methods, there are also conceptual and methodological differences. For example, forecasts predict the most likely future under known conditions, while projections usually explore a range of plausible futures under different hypothetical conditions. In the present article, we aim to consider a deliberately broad range of models, summarized under the umbrella term models of future developments. We understand these, in a general sense, as (a) mathematical and (b) dynamic models, (c) based on scientific knowledge, (d) making claims about future developments regarding (e) issues that affect broad segments of society, and (f) that allow decision-makers to respond to (potential) developments.

In general, a model is a representation of something distinguishable as concrete (e.g., miniatures or blueprints), mimetic (e.g., art models and role models), or abstract (e.g., mathematical and theoretical scientific models) (Friedman et al., 2008). Thus, scientific models are simplified abstractions of real-world systems that can help us better understand their entities and causal relationships.

In mathematical models (a), these relationships are translated into equations and algorithms. Because the states of such systems can change over time, models are often dynamic (b). To define model parameters and algorithms, scientific knowledge or, where it is not available, assumptions about the entities, input data, and causal relationships are required. Furthermore, the models are validated with empirical data. Hence, models are based on scientific knowledge (c). Such models (often in the form of computer programs) can imitate the behavior of a system, which enables experiments or simulations in which the inputs are manipulated and the output is observed, resulting in a better understanding of the system. This allows for conclusions regarding how a real-life system might behave in the future (d) under different plausible conditions (for summaries, see Friedman et al., 2008; Mahmoud et al., 2009; Pohjolainen & Heiliö, 2016; Walker et al., 2003).

As a result of technical innovation and increased computing capacities, modeling of future developments is becoming increasingly common (Boulanger & Bréchet, 2005; Pohjolainen & Heiliö, 2016) in various scientific disciplines. Advanced computational methods and interdisciplinary approaches also reflect complex human-environment interactions, for example, with agent-based modeling, and can be used in policy support (An et al., 2021). Thereby, models are relevant to many policy areas that affect broad segments of society (e), such as disaster forecasting (e.g., Ripberger et al., 2022), climate mitigation policies (e.g., Haikola et al., 2019), management of water quality (e.g., Tiyasha et al., 2020), infectious diseases (e.g., Corley et al., 2014), or economic outlooks (e.g., Bustos & Pomares-Quimbaya, 2020). These models are particularly used in decision-making when planning over a long period of time is required, or when decisions have long-term consequences (Mahmoud et al., 2009). Hence, models provide the opportunity to respond (f) to (possible) future developments, either by actively engaging in behavior or policies that affect the input parameters (e.g., climate mitigation) or by reacting to the output of the system (e.g., climate adaptation). Given this broad spectrum of applications, the first objective of this review is to provide an overview of the disciplinary and thematic contexts in which models of future developments have been addressed in previous communication research.

However, models of future developments frequently do not provide unambiguous evidence for decision-making; instead, they require a systematic review and consideration of scientific uncertainty. This uncertainty is often distinguished as epistemic (e.g., missing knowledge) or probabilistic (e.g., measurable variability) uncertainty (Peters & Dunwoody, 2016). Both affect each stage of a modeling process (e.g., parameter definition, input, processing, and output) at varying levels, spanning from deterministic knowledge to unknown nonknowledge (for a comprehensive overview, see Walker et al., 2003). Epistemic uncertainty can arise from imperfect definitions of model parameters or incomplete input data. For example, in epidemic simulations, the models rely on (simplified, possibly inaccurate) definitions of an object’s plausible states (e.g., susceptible, exposed, infected, and recovered). To simulate the exposure of different objects, mobility data are needed. If these are imperfect, the simulation becomes less accurate (Kong et al., 2022). In principle, epistemic uncertainty is reducible. If the understanding of parameters or the quality of input data is further improved by (empirical) research, this knowledge can be included in the model, thus improving its performance (Brugnach et al., 2007; Walker et al., 2003). Furthermore, models can be retrospectively validated using real-world observations, thereby reducing uncertainty for further predictions (Corley et al., 2014). In contrast, probabilistic uncertainty cannot be reduced completely, especially if a system is inherently variable. Even if all the necessary processes of a complex, dynamic system were known and correctly modeled, stochastic uncertainties would persist. For example, in ecosystem modeling, a chain of probabilities must be considered when simulating the interactions between different animals and vegetation in a food web (Geary et al., 2020). 
Hence, there are parameters or input factors that are not static but that have statistical variability. Particularly for human-environment interactions, models rely less on determinants, such as the laws of nature, as opposed to probabilities or even the randomness of behavior (An et al., 2021). Furthermore, regarding the output, models and particular scenarios often do not reliably predict future developments, but they estimate plausible futures (e.g., alternative scenarios) and their probability under various and variable input conditions (Kirchner et al., 2021; Mahmoud et al., 2009; Scheer, 2017; Walker et al., 2003). These complex interactions lead to a multitude of possible results that vary in terms of their scientific uncertainty.
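The distinction between the two kinds of uncertainty can be illustrated with a minimal simulation sketch. It is not taken from any of the cited models: it assumes a simplified stochastic SIR model (omitting the exposed state mentioned above), and all function names, parameter values, and the population size are hypothetical. Repeating the simulation with a fixed transmission rate shows the spread that remains even when all parameters are known (probabilistic uncertainty), while additionally drawing the transmission rate from several candidate values mimics incomplete knowledge about a parameter (epistemic uncertainty).

```python
import random

def simulate_sir(beta, gamma, population=500, initial_infected=5, days=100, rng=None):
    """Discrete-time stochastic SIR model; returns the number of recovered people at the end."""
    rng = rng or random.Random()
    susceptible, infected, recovered = population - initial_infected, initial_infected, 0
    for _ in range(days):
        # Daily infection risk of one susceptible person, given the current number of infected.
        p_infection = 1 - (1 - beta / population) ** infected
        new_infections = sum(rng.random() < p_infection for _ in range(susceptible))
        new_recoveries = sum(rng.random() < gamma for _ in range(infected))
        susceptible -= new_infections
        infected += new_infections - new_recoveries
        recovered += new_recoveries
    return recovered

def run_ensemble(candidate_betas, gamma=0.1, runs=10, seed=1):
    """Repeat the simulation; each run draws beta from the candidate values."""
    rng = random.Random(seed)
    return [simulate_sir(rng.choice(candidate_betas), gamma, rng=rng) for _ in range(runs)]

# Hypothetical parameter values, chosen only for illustration.
known_beta = run_ensemble([0.3])                  # probabilistic uncertainty only
uncertain_beta = run_ensemble([0.15, 0.3, 0.45])  # adds epistemic uncertainty about beta

print("beta known:    ", min(known_beta), "to", max(known_beta))
print("beta uncertain:", min(uncertain_beta), "to", max(uncertain_beta))
```

In this sketch, better knowledge of the transmission rate would narrow the second ensemble toward the first, whereas the run-to-run variation of the first ensemble persists however well the parameters are known.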

However, because models reduce complexity and provide numerical results, they can potentially lead to a false impression of certainty (Scheer et al., 2021). Correct knowledge about the purpose, development, and interpretability of the models and an assessment of their uncertainties is necessary for adequate decision-making (Walker et al., 2003). Therefore, the elucidation and evaluation of uncertainty have been identified as one of the essential tasks in communicating model results in direct policy advice (e.g., Bodner et al., 2021; Brugnach et al., 2007). However, decision-makers are frequently not informed directly by the modelers, but the results concerning social or political decisions are discussed in the mass media and public discourse (Heidmann & Milde, 2013; Scheer, 2017). Given the pivotal role of scientific uncertainty in the interpretation of models of future developments, the second objective of our review lies in understanding how different actors in the public sphere select, process, and represent the uncertainty of models of future developments in their communication.

Science Communication in the Public Sphere

Scientific uncertainty as a communication challenge in the public sphere and mass media has already been addressed in numerous communication studies. In studies of the portrayal and interpretation of uncertainties in the public flow of information, three central groups of actors are repeatedly addressed: scientists as sources, journalists as mediators, and the public lay audience as the recipients of journalistic content (e.g., Guenther et al., 2015; Heidmann & Milde, 2013; Maier et al., 2016; Peters & Dunwoody, 2016; Post, 2016; Stocking & Holstein, 2009). This procedural perspective produced a model reflecting the expectations and evaluations of each prototypical actor group regarding the representations of scientific uncertainty (Maier et al., 2016). These can be explained by cognitions, psychological traits, or the processes and routines involved in handling such information. Scientists, for example, hope that reporting scientific uncertainty will educate laypersons, but they also fear misinterpretations or omissions by journalists (Dudo, 2013; Landström et al., 2015; Maier et al., 2016; Maillé et al., 2010; Post, 2016). Journalists, on the other hand, select and portray uncertainties based on assumptions about their recipients’ willingness or ability to critically reflect on uncertainty, their understanding of science, as well as journalistic norms and routines. For example, journalists who see themselves as investigative reporters are more likely to critically evaluate and contextualize uncertainty than those who view their role as that of a mere transmitter of information (Guenther et al., 2015; Stocking & Holstein, 2009). Finally, the lay audience selects and processes uncertainty information according to cognitions, motivations, and previous knowledge. Their expectations and evaluations of uncertainty portrayals are largely heterogeneous but, in contrast to common assumptions, not necessarily negative (Maier et al., 2016; Winter & Krämer, 2012).

Furthermore, an essential conclusion of science communication research is that this procedural perspective should not be regarded as a one-sided dissemination of findings to decision-makers and the public; this view evolved from the so-called deficit model to dialogic concepts of public engagement with science, followed by a view of science communication as mediatized public communication (Schäfer et al., 2019). The deficit model initially assumed that filling knowledge gaps through the dissemination of scientific findings leads to rational decision-making, and this view still determines the communication activities of many scientists (Simis et al., 2016). An ideal of a dialogue between scientists and nonscientists eventually evolved and is being actively embraced by scientists (Kessler et al., 2022). However, both perspectives still overlook the dynamics of science communication in the public sphere, such as public and political communication (Scheufele, 2014). In this dynamic understanding, scientific knowledge is not merely disseminated to the public but becomes the subject of negotiation and contestation in public and political debates. Thereby, scientific uncertainties are often not addressed within scientific logic but interpreted and communicated strategically by different actors (Peters & Dunwoody, 2016).

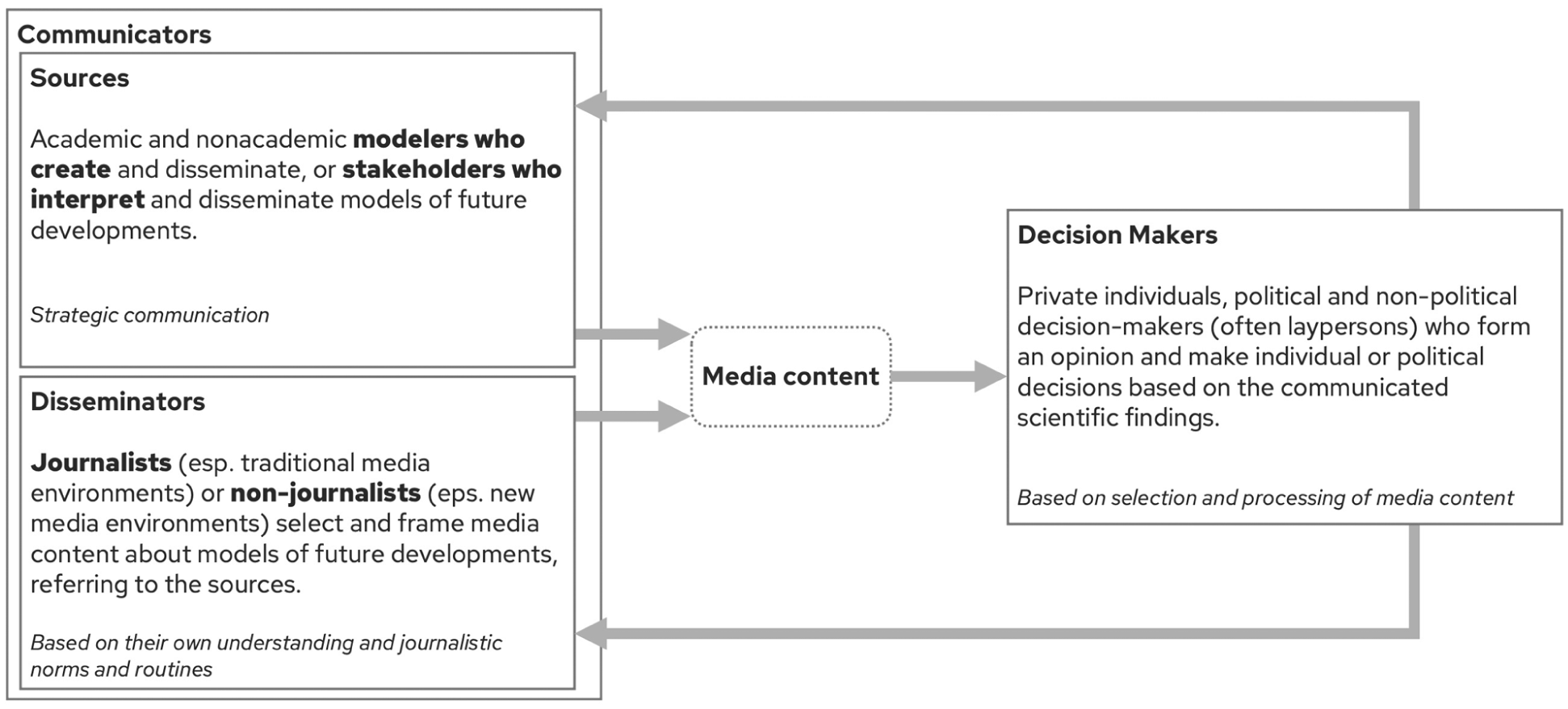

Furthermore, public science communication is not limited to scientists, journalists, and the lay audience. Nonacademic researchers (e.g., governmental agencies, industrial R&D departments, or NGOs) also publish research and often strategically frame scientific uncertainties to support their own interests (Post & Maier, 2016; Stocking & Holstein, 2009). Creators of communication-related content are not only journalists, especially in the context of new media. Through internet applications, different actors have expanded possibilities to communicate with a public audience. Scientists, stakeholders, policymakers, or laypersons can communicate directly with the public, and this communication process is highly interactive (Peters et al., 2014; Scheufele, 2014; Winter & Krämer, 2016). As a result, actors who consume scientific information can eventually also be sources or disseminators in public negotiations regarding decisions that are based on scientific evidence. In acknowledging these nonlinear and complex relationships, we define three roles in public communication that multiple actors can adopt:

(a) Sources are the producers of scientific information (e.g., academic or nonacademic modelers) or other stakeholders publicly conveying an interpretation of model results (e.g., politicians including certain findings in their agenda). (b) Disseminators (e.g., journalists and social media influencers) refer to these sources and create content that reports on and potentially comments on the scientific information. Finally, (c) decision-makers are the recipients who make individual or political decisions based on scientific information (e.g., individual citizens, politicians, or business managers). Sources and disseminators are furthermore subsumed under the meta-role of communicators; the source and the disseminator of a message can be the same actor or two different ones. Just as disseminators provide information to the public, sources also send messages to disseminators (e.g., press releases or interviews). A politician, for example, can receive scientific information, incorporate it into their decision-making, and then communicate it to disseminators or the public against the background of their own interpretation (see Figure 1). Therefore, the third objective of this review is to investigate how previous communication research has conceptualized the roles of different actors that are involved in the processing and communication of models of future developments.

Figure 1. Science Communication in the Public Sphere.

Research Questions

Corresponding with the general research objectives, our review pursues three research questions. They reflect the thematic context of research about communicating models of future developments with a focus on the scientific uncertainty as well as the roles of actors involved in such communication. First, we identify what has been investigated so far: Specifically, we ask which topics, which roles in public communication (source, disseminator, decision-maker), and which types of uncertainty have been addressed so far using which research methods.

RQ1: To what extent have different (a) modeling topics, (b) roles in the public communication process, and (c) scientific uncertainties of models of future developments been investigated with different research methods in communication science?

Second, we investigate which groups of actors have been studied within the aforementioned roles. Most importantly, we wanted to know how broad the spectrum of actor groups is, since this has been identified as a major research gap: So far, certain actors typically held designated roles (scientists as sources, journalists as disseminators, and laypeople as decision-makers; e.g., Maier et al., 2016). However, as noted earlier, we here propose multidirectional relationships in communication, wherein different actors can adopt varying roles (e.g., laypeople may also serve as disseminators, particularly stakeholders sharing scientific evidence in new media).

RQ2: Which actors take which role in research about communication concerning models of future developments in the public sphere?

Third, we review the state of research on how different actors deal with models of future development and the associated uncertainties in their communication and reception.

RQ3: a) Does empirical research examine different groups of actors with varying frequency in terms of how they deal with certain forms of uncertainty, and b) what are the key findings?

Method

For the systematic review, 237 scientific papers were analyzed. As inclusion criteria, we defined that papers had to (a) examine public communication or the reception of information about (b) models of future developments.

Procedure

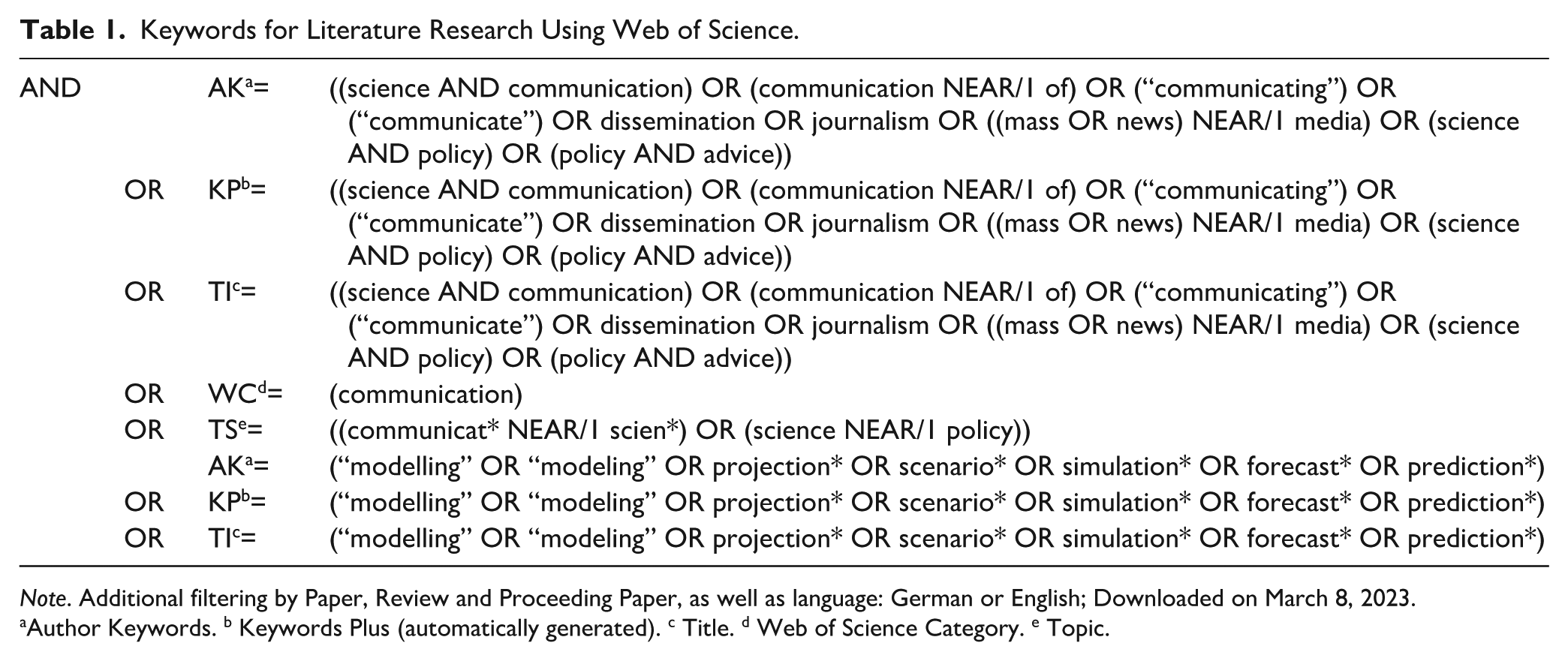

In a first step, a Web of Science search without restrictions regarding the date of publication yielded 4,004 papers (for search terms, see Table 1). Then the titles and abstracts were screened for the inclusion criteria, reducing the number to 495 papers. In the next step, four trained coders (for the coder training and reliability tests, see Supplemental Appendix A; for information about the codebook, see Supplemental Appendix B) coded for the inclusion criteria based on the full text and identified 237 papers as relevant for the analysis. For these papers, systematic full-text coding followed to answer the research questions.
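The selection funnel described above can be retraced with a few lines of arithmetic. The sketch below uses only the three paper counts reported in this section; the stage labels and the function name are illustrative shorthand.

```python
# Paper counts reported for each selection stage.
stages = [
    ("Web of Science search", 4004),
    ("title and abstract screening", 495),
    ("full-text coding for inclusion criteria", 237),
]

def retention_rates(stages):
    """Return (label, count, share of previous stage, share of initial pool) per stage."""
    initial = stages[0][1]
    previous = initial
    rows = []
    for label, count in stages:
        rows.append((label, count, count / previous, count / initial))
        previous = count
    return rows

rows = retention_rates(stages)
for label, count, of_previous, of_initial in rows:
    print(f"{label}: {count} papers "
          f"({of_previous:.1%} of previous stage, {of_initial:.1%} of initial pool)")
```

Roughly 12% of the initial hits passed the title and abstract screening, about 48% of those passed the full-text check, and about 6% of the initial pool entered the final analysis.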

Table 1. Keywords for Literature Research Using Web of Science.

Note. Additional filtering by document type (Paper, Review, and Proceeding Paper) and by language (German or English); downloaded on March 8, 2023. a Author Keywords. b Keywords Plus (automatically generated). c Title. d Web of Science Category. e Topic.

General Information

To provide an impression of the nature of scientific evidence in the various research areas, we classified the papers into four categories according to their methodological approach: empirical quantitative, empirical qualitative, systematic review, or theoretical paper (e.g., papers discussing the challenges of science communication in new media or the use of new prediction tools for communication in theory, but not conducting their own survey on communication practice itself) (intercoder reliability for all categories, rH>.90). In addition, we identified the scientific discipline from which the articles originate by coding the discipline of the publishing journal. The classification of scientific disciplines of the U.S. National Science Foundation was used for orientation, and we added the categories of communication science and humanities (rH>.82) (National Center for Science and Engineering Statistics [NCSES], 2020). Several categories were coded in cases of interdisciplinary journals. In addition, the year of publication and the first author’s country of origin were determined. For all other variables, each paper was coded based on its research questions, hypotheses, and parts of the methods and results sections, focusing on subjects of investigation related to the communication and reception of models of future developments; unrelated sections, such as those on model development, were excluded. Especially for theoretical papers or unsystematic reviews without separate methods and results sections, the full text was reviewed to determine relevant insights. Accordingly, the model subject was recorded, which included modeling related to climate change, weather forecasts, hydrology, environmental disasters such as hurricanes or earthquakes, and the spread of disease. Since some papers covered multiple subjects, multiple categories could be coded here (inter-coder reliability for all categories rH>.91). 
Further, we coded whether the papers investigated the communication in the form of graphics (e.g., hurricane maps or diagrams), videos, newspaper articles, other texts (e.g., reports, explanatory texts), or on social media, etc. (rH>.74). Multiple coding was also possible here, for example, when decision-makers were asked where they obtained their information.
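The reliability values reported above can be read with the following sketch in mind. It assumes that rH denotes Holsti's reliability coefficient, which this section does not spell out (see Supplemental Appendix A for the actual procedure), and that agreement among several coders is summarized as the mean over all coder pairs. The coder names and codes are hypothetical.

```python
from itertools import combinations

def holsti(codes_a, codes_b):
    """Holsti's coefficient: 2 * agreements / (decisions of coder A + decisions of coder B)."""
    agreements = sum(a == b for a, b in zip(codes_a, codes_b))
    return 2 * agreements / (len(codes_a) + len(codes_b))

def mean_pairwise_holsti(codings):
    """Average Holsti's coefficient over all pairs of coders (an assumed summary rule)."""
    pairs = list(combinations(codings.values(), 2))
    return sum(holsti(a, b) for a, b in pairs) / len(pairs)

# Hypothetical codes (1 = category present, 0 = absent) for ten papers.
codings = {
    "coder_1": [1, 0, 1, 1, 0, 1, 0, 0, 1, 1],
    "coder_2": [1, 0, 1, 1, 0, 1, 0, 0, 1, 0],
    "coder_3": [1, 0, 1, 1, 0, 1, 0, 1, 1, 1],
}

print(round(mean_pairwise_holsti(codings), 2))
```

Because Holsti's coefficient is a simple percentage agreement, it does not correct for agreement expected by chance, which is worth keeping in mind when interpreting thresholds such as rH>.90.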

Uncertainty

We coded whether a paper addressed communication about uncertainty. For sources and disseminators, this referred to the information they communicated (e.g., uncertainty information found in analyses of media reports or interviews regarding the uncertainty information they communicate); for decision-makers, it pertained to the information they obtained and received (e.g., systematic variation of uncertainty information in an experiment on model understanding). A distinction was made between probabilistic uncertainty in numerical (e.g., concrete numbers like frequencies, probabilities, or confidence intervals) and verbal form (e.g., verbal paraphrases like “likely,” “a good chance”). We also recorded whether epistemic uncertainty (i.e., limitations or confidence in the model) was communicated (e.g., “further research is needed,” “there are unknown influencing factors”) (rH>.80). Several types of uncertainty could occur in a paper (e.g., when the comprehensibility of numerical uncertainties was compared with verbal forms).

Actors

We coded the group of actors whose communication or reception of model information was examined in the paper. These groups included scientists, non-scientific experts (e.g., emergency managers and services in connection with disasters, medical professionals for epidemics), journalists, citizens, political/state actors (politicians and authorities), and economic actors (rH>.79). Several actors could be addressed in a single paper (e.g., scientists and politicians in research on the science–policy interface).

Role

Sources are defined as persons who introduce model information into the public discourse, which may be the modelers themselves, as well as other actors who circulate an interpretation of the model (e.g., interviews with scientists or politicians). Journalistic and non-journalistic actors who create media content and disseminate it to the public were coded as disseminators. Finally, recipients and users of the model information were coded as decision-makers (e.g., laypersons or public decision-makers) (rH>.84). Since several actors could appear in one paper and one actor could take on several roles, multiple coding was also used here. An assignment variable was used to record which actor took on which role.
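The multiple coding of actors and roles, together with the assignment variable, can be pictured as a small data structure. The following is a sketch under stated assumptions rather than the codebook's actual format: the class, field, and function names are hypothetical, as are the three example papers. It also shows why shares computed from multiply coded variables can add up to more than 100%.

```python
from collections import Counter
from dataclasses import dataclass, field

ROLES = ("source", "disseminator", "decision-maker")

@dataclass
class CodedPaper:
    paper_id: str
    # Assignment variable: actor group -> set of roles the actor takes in this paper.
    actor_roles: dict = field(default_factory=dict)

    def assign(self, actor, role):
        if role not in ROLES:
            raise ValueError(f"unknown role: {role}")
        self.actor_roles.setdefault(actor, set()).add(role)

    def roles(self):
        """All roles coded for this paper, regardless of actor."""
        return set().union(*self.actor_roles.values())

def role_shares(papers):
    """Share of papers in which each role was coded at least once."""
    counts = Counter(role for paper in papers for role in paper.roles())
    return {role: counts[role] / len(papers) for role in ROLES}

# Hypothetical papers, for illustration only.
papers = [CodedPaper("hypothetical-1"), CodedPaper("hypothetical-2"), CodedPaper("hypothetical-3")]
papers[0].assign("scientists", "source")
papers[0].assign("citizens", "decision-maker")
papers[1].assign("political/state actors", "source")
papers[1].assign("political/state actors", "decision-maker")  # one actor, two roles
papers[2].assign("journalists", "disseminator")
papers[2].assign("citizens", "decision-maker")

shares = role_shares(papers)
print(shares)
```

Keeping the roles attached to the actor, instead of coding actors and roles as two independent lists, is what makes it possible to cross-tabulate role and actor later on.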

Analyses

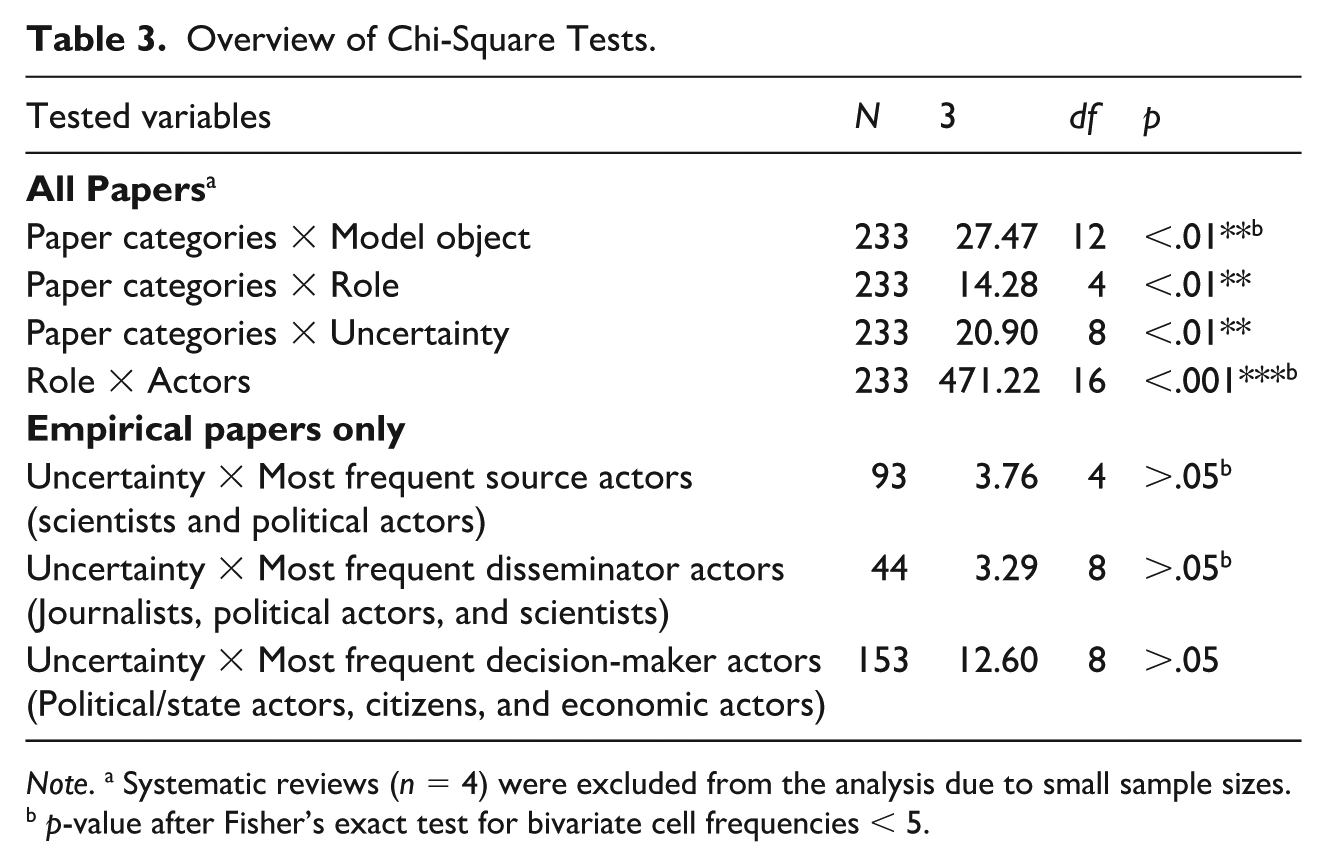

The first research question concerns the contexts in which communication of models of future developments has been studied and the type of evidence available so far. Therefore, we initially calculated relative frequencies to identify the most relevant topics, the most frequently investigated roles in the communication process, and the types of uncertainty mentioned. For each, we performed a chi-square test to compare the distribution of topics (RQ1a), roles (RQ1b), and types of uncertainty (RQ1c) across paper categories.
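For readers less familiar with the procedure, the chi-square test of independence on a cross-tabulation can be sketched as follows. The counts are hypothetical and do not reproduce the data of this review; the function names are illustrative, and the closed-form p-value used here only holds for even degrees of freedom. Fisher's exact test, which was used where cell frequencies were small, is not covered by this sketch.

```python
import math

def chi_square(table):
    """Pearson chi-square statistic and degrees of freedom for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    statistic = 0.0
    for row, row_total in zip(table, row_totals):
        for observed, col_total in zip(row, col_totals):
            expected = row_total * col_total / n  # count expected under independence
            statistic += (observed - expected) ** 2 / expected
    df = (len(row_totals) - 1) * (len(col_totals) - 1)
    return statistic, df

def chi2_sf_even_df(x, df):
    """Upper-tail probability of the chi-square distribution (closed form, even df only)."""
    if df <= 0 or df % 2:
        raise ValueError("this closed form needs a positive even df")
    half = x / 2
    return math.exp(-half) * sum(half ** j / math.factorial(j) for j in range(df // 2))

# Hypothetical counts: rows = roles (source, disseminator, decision-maker),
# columns = paper categories (quantitative, qualitative, theoretical).
roles_by_category = [
    [30, 70, 35],
    [15, 30, 12],
    [65, 95, 40],
]

statistic, df = chi_square(roles_by_category)
print(f"chi2({df}) = {statistic:.2f}, p = {chi2_sf_even_df(statistic, df):.3f}")
```

A table of three roles by three paper categories has (3 - 1) × (3 - 1) = 4 degrees of freedom, which is consistent with the χ2(4) reported for RQ1b.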

The second research question examines the roles that various actor groups take on in research on the communication of models of future developments. Again, we first calculated the relative frequency of papers that examined different groups of actors. To assess the degree to which diverse role-actor combinations are considered, we executed a chi-square test between the variables of role and actor (RQ2).

The third research question focuses on how actors manage model uncertainty in communication and reception. For this purpose, we examined the empirical research in detail, broken down by the actors’ roles. We focused on papers addressing actors that we identified as appearing particularly frequently in the literature or as being strongly associated with a specific role (see below). We provided descriptive statistics for the variables actor and uncertainty specific to the respective categories of papers and calculated chi-square tests to determine whether the central groups of actors were more frequently associated with specific forms of uncertainty (RQ3a). Then, we conducted a qualitative analysis, summarizing the key findings regarding how actors handle model uncertainty (RQ3b). We chose this qualitative approach to capture diverse perspectives on the communication process (including who communicates, what is communicated, and through which channels or media, investigated with various qualitative and quantitative methods).

Systematic reviews were excluded from the chi-square tests because there were only four papers in this category. Where necessary, the p-values were determined using Fisher’s exact test. As a post hoc test, we determined an adjusted alpha using a Bonferroni correction and used it to analyze the standardized residuals (Haberman, 1973).
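The post hoc step can be sketched in the same spirit. The example below assumes that the Bonferroni correction divides alpha by the number of cells in the table and that the residuals are Haberman's adjusted standardized residuals; this section does not specify either detail, so both are assumptions, as are the function names and the hypothetical counts.

```python
from math import sqrt
from statistics import NormalDist

def adjusted_residuals(table):
    """Adjusted standardized residual for every cell of a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    residuals = []
    for row, row_total in zip(table, row_totals):
        residual_row = []
        for observed, col_total in zip(row, col_totals):
            expected = row_total * col_total / n
            variance = expected * (1 - row_total / n) * (1 - col_total / n)
            residual_row.append((observed - expected) / sqrt(variance))
        residuals.append(residual_row)
    return residuals

def bonferroni_critical_z(n_tests, alpha=0.05):
    """Adjusted alpha and the matching two-sided critical z value."""
    adjusted_alpha = alpha / n_tests
    return adjusted_alpha, NormalDist().inv_cdf(1 - adjusted_alpha / 2)

def flag_cells(table, alpha=0.05):
    """Cells whose adjusted residual exceeds the Bonferroni-corrected critical value."""
    n_cells = len(table) * len(table[0])
    _, critical_z = bonferroni_critical_z(n_cells, alpha)
    return [
        (i, j, round(residual, 2))
        for i, row in enumerate(adjusted_residuals(table))
        for j, residual in enumerate(row)
        if abs(residual) > critical_z
    ]

# Hypothetical counts: rows = roles, columns = paper categories.
roles_by_category = [
    [30, 70, 35],
    [15, 30, 12],
    [65, 95, 40],
]

print(bonferroni_critical_z(9))
print(flag_cells(roles_by_category))
```

A positive flagged residual marks a combination that occurs more often than expected under independence, and a negative one marks an underrepresented combination.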

Results

The papers analyzed were published between 1979 and 2023, with the first authors originating primarily from the United States (41%) and Europe (42%; see Supplemental Appendix C). In recent years, the research on communicating models of future developments has increased immensely: 199 of the 237 papers have been published since 2010. Studies regarding the communication about models of future developments were found less frequently in classical communication science journals (15%). Most journals were either purely natural/formal science journals (44%, including engineering and medicine) or interdisciplinary journals that had been assigned a combination of codes, mostly geoscience, atmospheric, and ocean sciences (54%); natural resources and conservation (40%); and social sciences (45%) (e.g., journals like “Weather, Climate, and Society”).

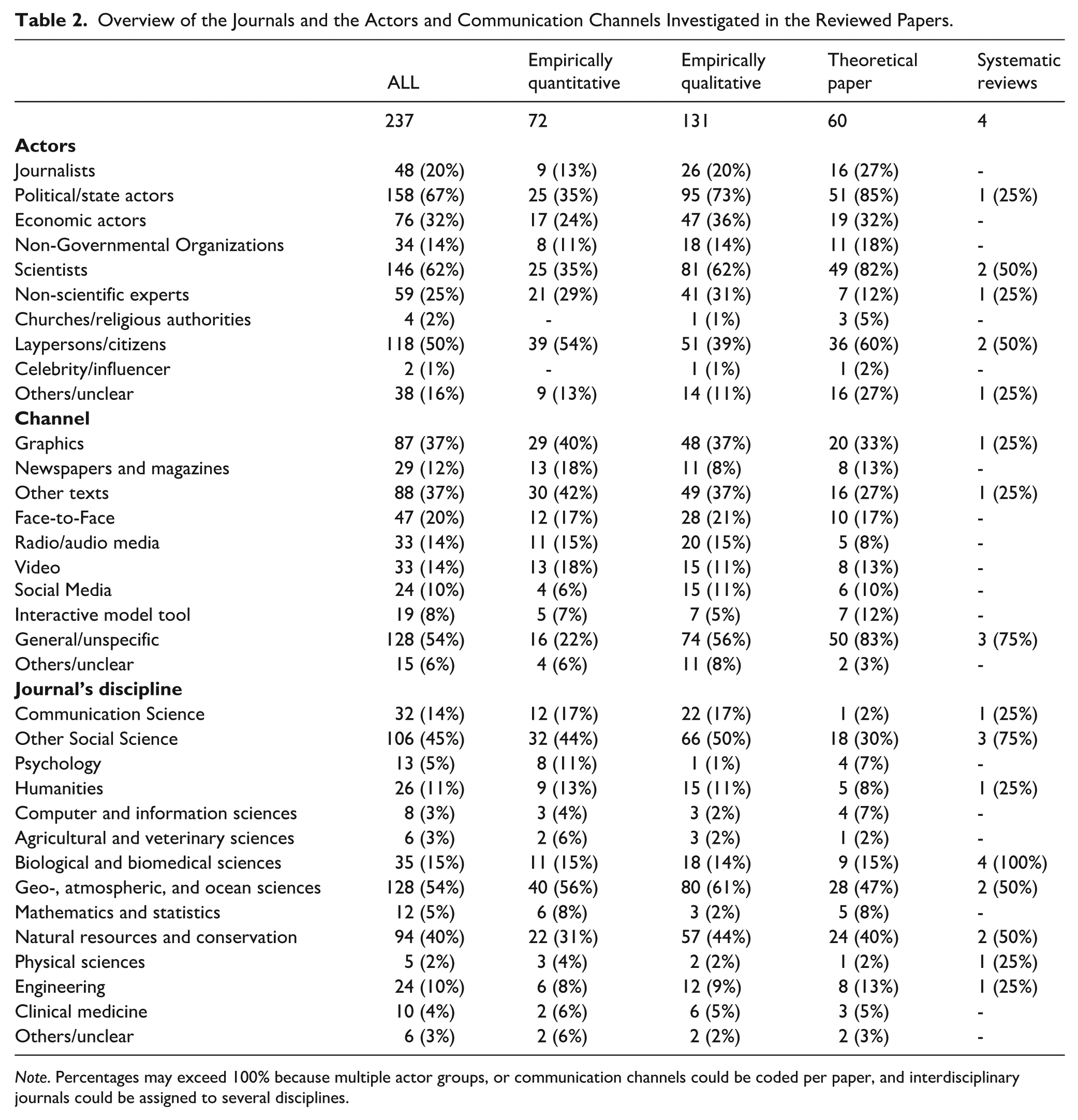

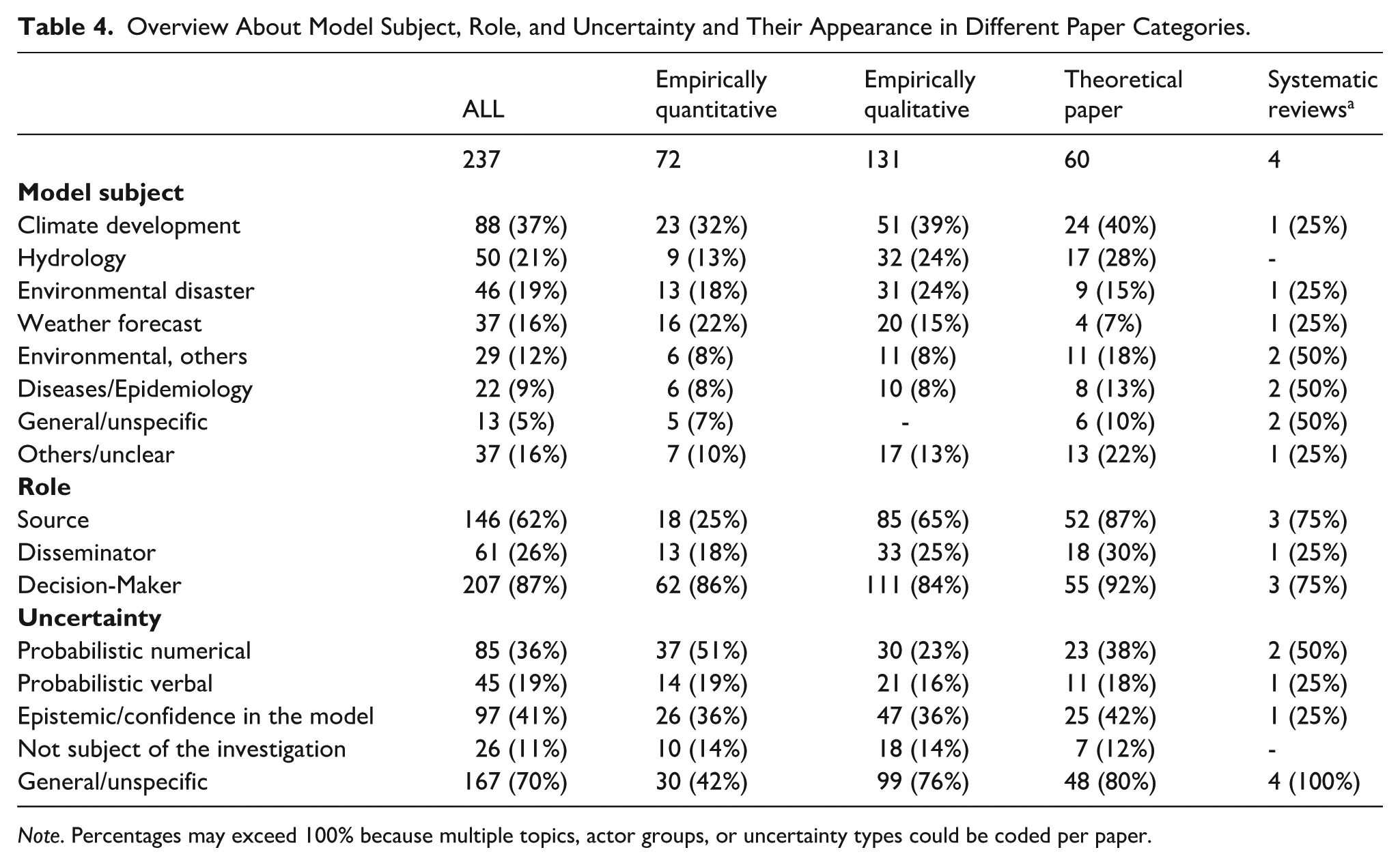

Only 30% were empirical quantitative studies, 55% were empirical qualitative studies, and 25% were theoretical papers. Of the four systematic reviews, three primarily examined the creation of a model and only briefly its communication. Twenty-eight papers used mixed methods. The reviewed papers primarily examined the communication of environmental modeling (83%), especially via text (37%) and graphics (e.g., climate graphs or maps with hurricane tracks; 37%) (see Table 2). Climate (37%), hydrology (21%), and environmental disasters (19%) were examined most frequently. There were significant differences in the frequency of the examined model subjects between the paper categories (χ2(12) = 27.47, p < .01 after Fisher’s exact test; see Table 3). The post hoc test revealed that this was due to the qualitative studies: While the other papers sometimes only spoke in general terms about models of future developments, the qualitative papers were always related to a specific topic (RQ1a; see Table 4). Besides this, there were no significant differences.

Table 2. Overview of the Journals and the Actors and Communication Channels Investigated in the Reviewed Papers.

Note. Percentages may exceed 100% because multiple actor groups, or communication channels could be coded per paper, and interdisciplinary journals could be assigned to several disciplines.

Table 3. Overview of Chi-Square Tests.

Note. a Systematic reviews (n = 4) were excluded from the analysis due to small sample sizes. b p-value after Fisher’s exact test for bivariate cell frequencies < 5.

Table 4. Overview of Model Subject, Role, and Uncertainty and Their Appearance in Different Paper Categories.

Note. Percentages may exceed 100% because multiple topics, actor groups, or uncertainty types could be coded per paper.

Research about the communication of these models has focused predominantly on the recipient perspective: 87% of papers dealt with decision-makers, 62% with sources, and only 26% with disseminators. This was not only evident in the absolute number of papers. A chi-square test and the subsequent post hoc test showed that empirical quantitative research focuses more strongly on decision-makers, while sources are underrepresented there (χ2(4) = 14.28, p < .01) (RQ1b).

Most of the papers also considered the communication of uncertainty in the models. Overall, 41% of the papers dealt with epistemic uncertainty, 36% with probabilistic numerical uncertainty, and 19% with verbal descriptions of probabilistic uncertainty. The distribution varied between paper categories (χ2(8) = 20.90, p < .01). According to the post hoc test, empirical quantitative studies addressed probabilistic numerical uncertainty more frequently, while qualitative studies did this less often (RQ1c). Uncertainty was also often addressed in general terms (70%), although this was less common in empirical quantitative studies (Table 4).
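As a plausibility check, the p-value thresholds reported for the asymptotic chi-square tests can be recomputed from the test statistic and the degrees of freedom alone. The sketch below assumes the standard closed-form survival function of the chi-square distribution, which holds for even degrees of freedom. The function name is illustrative. P-values based on Fisher’s exact test cannot be reproduced this way because they require the underlying cell counts.

```python
from math import exp, factorial

def chi2_sf_even_df(statistic, df):
    """Upper-tail p-value of a chi-square statistic for even df (closed form)."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("this closed form only holds for even, positive df")
    half = statistic / 2.0
    # P(X > x) = exp(-x/2) * sum_{i=0}^{df/2 - 1} (x/2)^i / i!
    return exp(-half) * sum(half ** i / factorial(i) for i in range(df // 2))

# Test statistics as reported in the text
p_roles = chi2_sf_even_df(14.28, 4)
p_uncertainty = chi2_sf_even_df(20.90, 8)
print(f"roles: p = {p_roles:.4f}; uncertainty types: p = {p_uncertainty:.4f}")
```

Both values fall below .01, in line with the reported thresholds.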

Among the groups of actors, political/state actors (67%), scientists (62%), and citizens (50%) were the most strongly represented overall, followed by economic actors (32%). Some groups of actors were significantly associated with certain roles (χ2(16) = 471.22, p-value after Fisher’s exact test p < .001). Not surprisingly, the post hoc test showed that scientists were primarily examined as sources, journalists as disseminators, and citizens as decision-makers. Economic actors also tended to be treated as decision-makers more often. Political actors, the most frequently studied group overall, were not significantly associated with any particular role (RQ2). All in all, empirical papers frequently examined citizens as decision-makers and their dealings with probabilistic uncertainty. In contrast, the biggest gaps in research seem to relate to disseminators (see Table 4).

As described in the methods section above, we conducted a more in-depth analysis and qualitative evaluation of papers on the most important actor groups. By examining their appearance in the publications and their association with specific roles, we identified scientists as particularly relevant for the source role, journalists as disseminators, and citizens and economic actors as decision-makers. We also decided to examine non-journalistic disseminators more closely. These are becoming increasingly important, especially in new media (Peters et al., 2014; Scheufele, 2014; Winter & Krämer, 2016). However, since this is a relatively new area in communication studies, papers on this topic may still be scarce and less noticeable in our analysis of the most relevant actor groups. Because political actors are the most frequently studied group overall, we included them in all roles.

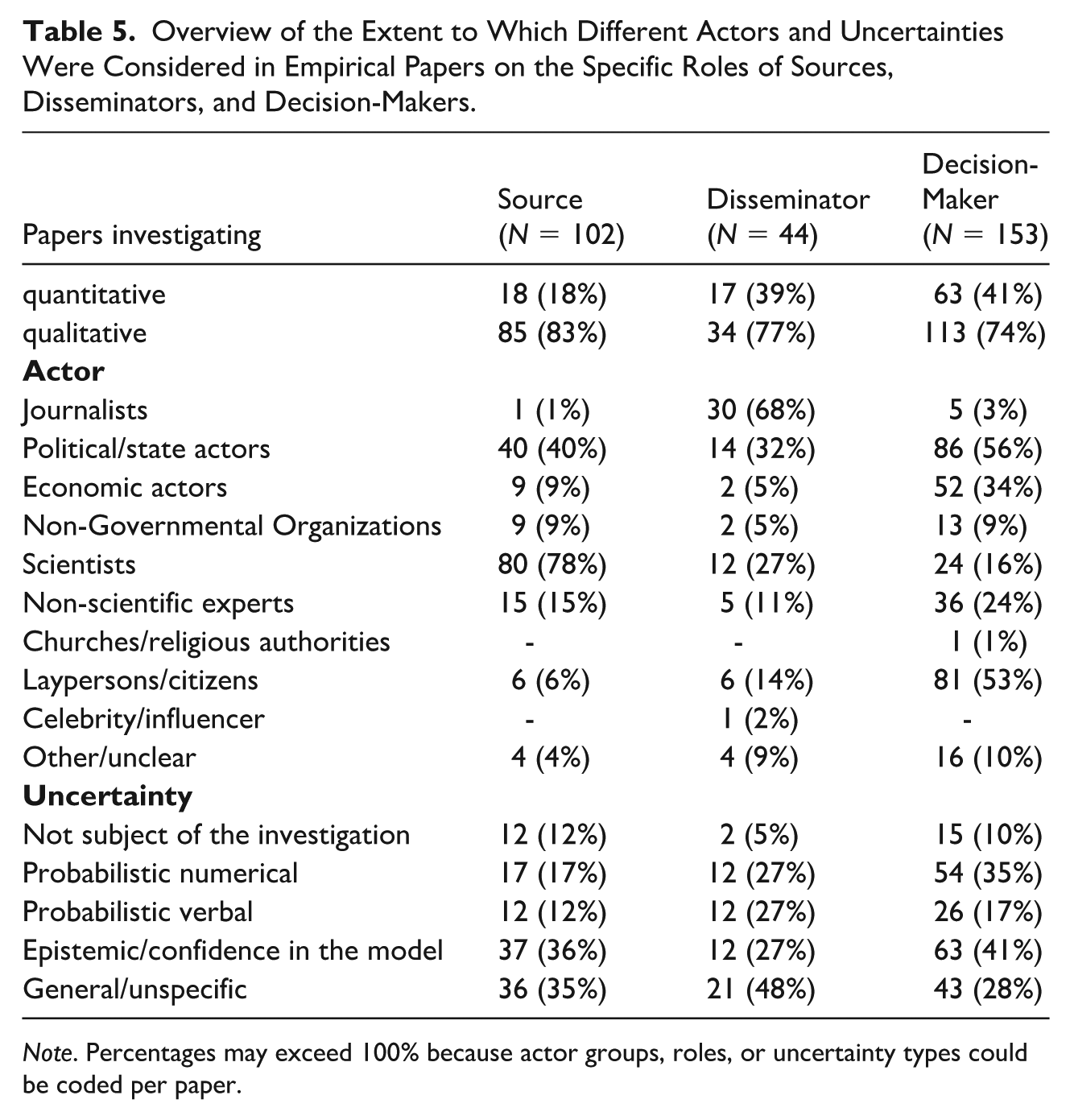

Sources

This section addresses the sources that create models of future developments or prepare information about them for a lay audience. Scientists were identified as the most important group here. They appear in 78% of the 102 empirical studies on sources. Political/state actors were also frequently mentioned in this role (40%). As the origin of model information, they influence what information is circulated about the limitations and uncertainties associated with models of future developments. Empirical studies on sources dealt particularly frequently with epistemic uncertainty (36%) in this regard, such as when the examined actors discuss the maturity of models. Probabilistic uncertainty statements in numerical form are dealt with in 17% of the papers, and in verbal form in 12% (e.g., in connection with how uncertainties can be communicated most effectively; see Table 5). There were no significant indications that one of the two groups of actors (scientists and political actors) was more frequently investigated in connection with certain forms of uncertainty (χ2(4) = 3.76, p-value after Fisher’s exact test, p > .05) (RQ3a; see Table 3).

Overview of the Extent to Which Different Actors and Uncertainties Were Considered in Empirical Papers on the Specific Roles of Sources, Disseminators, and Decision-Makers.

Note. Percentages may exceed 100% because multiple actor groups, roles, or uncertainty types could be coded per paper.

To answer RQ3b, we first took a closer look at the group of papers investigating scientists as sources. Scientists appear to be ambivalent about appropriately handling uncertainty: On the one hand, scientists answered in surveys that they consider it important to communicate uncertainty transparently and honestly (Daron et al., 2021; Haikola et al., 2019; Herovic et al., 2019). On the other hand, they regard this as challenging and sometimes hesitate to do so: They fear that uncertainties could be difficult to understand for decision-makers and the public, generating confusion (Daron et al., 2021; Ramos et al., 2010), undermining trust in science (Daron et al., 2021; Haikola et al., 2019), or eliciting disinterest in the case of low-probability prognoses (Herovic et al., 2019). However, scientists also see the need in some cases to communicate uncertain prognoses transparently to avoid potential damage (Haikola et al., 2019; Sellnow et al., 2017). In the context of climate developments, for example, scientists surveyed have concluded “that the main role for all kinds of climate modeling is to deliver the central message that drastic mitigation measures are needed immediately” (Haikola et al., 2019). Accordingly, scientists are questioning how models and their uncertainties should be communicated. To minimize confusion, scientists surveyed stated that they strive to keep the message simple and concise, which is in line with recommendations outlined in some papers on science communication (Herovic et al., 2019; Schenk & Lensink, 2007). For this purpose, some scientists prefer verbal paraphrases of uncertainties (e.g., “unlikely” instead of 5%) despite disagreements about the optimal translation, and some even prefer deterministic statements without specific probabilities (Herovic et al., 2019; Rosen et al., 2021). Other scientists believe that stating probabilities as concrete frequencies (e.g., “one per 1,000 chances”) is the ideal strategy (Herovic et al., 2019).

Some papers have shown that there can be difficulties in communication between scientists and policymakers, as scientists occasionally provide details on model specifications and uncertainty with insufficient clarity. For example, they sometimes fail to clarify which forms of uncertainty are an integral part of model specifications and do not necessarily imply that a model can deliver more accurate results by collecting additional information (Brugnach et al., 2007; Schenk & Lensink, 2007). Reasons for these communication deficits may include scientists not knowing precisely what information decision-makers need, the low integration of decision-makers in the modeling process, and limited experience in interacting with decision-makers (Brugnach et al., 2007; Eker et al., 2018; Schenk & Lensink, 2007). Political and ideological positioning can also influence their policy advice, as Ngo et al. (2018) demonstrated using the example of economic institutes. However, Eker et al. (2018) found in an online survey that scientists assume that decision-makers expect transparent and detailed communication.

Papers that consider politicians in the source role show they use model results to justify their policies in public debates and select models specifically for this purpose (Scheer, 2015, 2017). More generally, Rode and Fischbeck (2021) compared the communication of scientists and nonscientists in the media and demonstrated that nonscientists communicate uncertainties of apocalyptic forecasts less frequently.

Disseminators

Disseminators (e.g., journalists, as well as other groups of actors, particularly in the context of social media) produce media content and disseminate it to a public audience. With 44 empirical papers, this is the least studied group, with only 17 papers being quantitative studies. Journalists were identified here as the most studied group (68%), but political actors (32%) and scientists (27%—often national weather services and meteorologists) were also covered.

There were no significant differences in terms of which papers with these actors addressed which uncertainties (χ2(8) = 3.29, p-value after Fisher’s exact test p > .05) (RQ3a; see Table 3). Overall, uncertainties were particularly often addressed in general terms (48%), without specifying the type of uncertainty. Communication about epistemic and probabilistic uncertainties was examined with equal frequency (27% each, see Table 5).

Regarding RQ3b, one group of studies about disseminators examined the communication regarding future developments in newspaper reporting. One common feature of various works is that models of future developments are primarily communicated in a political context. Accordingly, more prognoses related to political topics are discussed than in the areas of, for instance, sports, the environment, or the economy (Pentzold & Fechner, 2020; Scheer, 2017). In addition, environmental prognoses are more likely to be reported in the instance of political events such as UN climate conferences compared to when corresponding studies are published (Rick et al., 2011; Rode & Fischbeck, 2021). Uncertainties are frequently ignored or rather expressed as vague probabilistic (e.g., “likely”) or epistemic uncertainty statements (e.g., “unrealistic”) (Akerlof et al., 2012; Pentzold & Fechner, 2020; Rode & Fischbeck, 2021; Scheer, 2017). Furthermore, they are often used to express skepticism about the quality of the models and the underlying science (Akerlof et al., 2012; Scheer, 2017). However, the depth of uncertainty reporting also appears to depend on the context and topic: A more concrete and detailed examination of uncertainty was found in the context of sports prognoses and the COVID-19 pandemic (Pentzold & Fechner, 2020; Pentzold et al., 2021). One possible explanation could be that both topics are of particular relevance to society or certain fan communities, and thus, there is a greater commitment to dealing with specific data.

Only a few papers addressed new media. Here, the group of disseminators is more diverse. Particularly on social media, a broad public audience is also addressed by disseminators other than journalists, such as politicians, stakeholders, and citizens (Peters et al., 2014; Scheufele, 2014; Winter & Krämer, 2016). However, there appears to be minimal research in this area regarding the communication of models of future developments. At least a few case studies dealt with the exchange between forecasters and citizens on social media in the context of environmental disasters (e.g., hurricanes and earthquakes). They present a mixed picture in terms of how they deal with uncertainty: In some cases, forecasters use primarily vague, verbal expressions for uncertainties (Bica et al., 2020; Rosen et al., 2021), while in others, they also use specific numbers (McBride et al., 2020). Whether citizens actively address uncertainties (Bica et al., 2020) or not (Hirsbrunner, 2021) also appears to depend on the specific case. Finally, there was some indication that some users prefer to share dramatic scenarios because these attract more attention (Bica et al., 2020; Hirsbrunner, 2021).

Decision-Makers

This section concerns the role of decision-makers’ selection, interpretation, and use of the information about scientific models and their uncertainties presented by sources and disseminators. Political/state actors (analyzed in 56% of the empirical papers on decision-makers), citizens (53%), and economic actors (34%, e.g., farmers, fishermen, and representatives of larger organizations) were identified as the most important groups of decision-makers in research on the communication of models of future developments. Studies on these groups of actors showed no significant differences in terms of the form of uncertainty that was addressed (χ2(8) = 12.60, p > .05) (RQ3a; see Table 3). Overall, epistemic uncertainty was examined most frequently (41%), followed by probabilistic uncertainty in numerical (35%) and verbal (17%) form (see Table 5). As mentioned above, a lot of quantitative research in the field of communication about models of future developments focused on how laypeople (mostly citizens) deal with probabilistic uncertainties. Other decision-maker groups are examined more often qualitatively or in surveys. In this context, epistemic uncertainties are discussed more frequently.

Regarding RQ3b, research on laypeople’s handling of uncertainty shows that the interpretation of complex models and their uncertainties is cognitively challenging. For example, prognoses are perceived in a biased manner depending on the context and framing. If an event is predicted within a certain time frame (e.g., a volcanic eruption within the next 10 years), citizens may inaccurately expect the event to be more likely to occur at the end of this period than at the beginning (Bolton et al., 2022; Doyle et al., 2014). Another example is the perception of a prognosis for lower rain intensity as less certain (Sivle et al., 2014). Sometimes, there is also a lack of background knowledge for the correct assessment (e.g., many people think that a “30% chance of rain” means that it will rain 30% of the day, but it actually means that rain has occurred in 3 out of 10 instances of similar weather conditions; Abraham et al., 2015; Kane & Broomell, 2022). Even experts can fail to correctly assess model uncertainty (Kane & Broomell, 2022). Nevertheless, there were encouraging findings suggesting that laypersons can handle uncertainties if they are communicated appropriately. One key finding was that transparent information on probabilistic uncertainties (e.g., “80% chance of rain”), in contrast to deterministic statements and decision-related advice (e.g., “rain is expected”), strengthens trust in the model and increases decision quality (e.g., Bolton et al., 2022; Joslyn & Demnitz, 2019; Rosen et al., 2021). Numerical probabilities appear to be most understandable (e.g., “10%,” “1 in 10”), possibly in combination with verbal paraphrases (e.g., “likely (80%),” “possible (10%)”). In contrast, verbal paraphrases alone (e.g., “likely”) are interpreted inconsistently (Doyle et al., 2014; Rosen et al., 2021).
One study also quantitatively assessed how citizens deal with epistemic uncertainty in decision-making (Padilla et al., 2021): If, in addition to probabilistic uncertainties, information indicated that experts had high confidence in a model, this improved the decision quality (compared to low confidence or no further information). Other factors influencing the interpretation of models are, for example, decision-makers’ prior experiences (e.g., greater uncertainty is perceived after previous forecast errors; Joslyn & LeClerc, 2012; Sivle et al., 2014), numeracy (e.g., higher numeracy leads to a lower loss of trust when forecasts fail; Bolton et al., 2022), communicator characteristics (e.g., weather forecasts are perceived as more credible when communicated by a professional meteorologist rather than an amateur or social robot; Spence et al., 2021), or beliefs (e.g., in the United States, Republicans have less confidence in climate predictions than Democrats; Bosetti et al., 2017; Joslyn & Demnitz, 2019). The latter can be interpreted as an outcome of motivated reasoning (Kunda, 1990), in which the evaluation of models is affected by people’s prior opinions.
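The presentation formats compared in these studies can be made concrete with a small sketch that renders one probability as a percentage, as a frequency, and as a verbal label combined with the number. The function name and the cut-offs for the verbal labels are hypothetical and chosen only to mirror the examples above. They are not taken from the reviewed papers, in which the optimal verbal translation remains disputed.

```python
from fractions import Fraction

def describe_probability(p):
    """Render a probability in the formats compared in recipient studies."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie between 0 and 1")
    percent = f"{p:.0%}"
    # Simplest frequency with a denominator of at most 1,000
    freq = Fraction(p).limit_denominator(1000)
    frequency = f"{freq.numerator} in {freq.denominator}"
    # Hypothetical cut-offs for the verbal label (an assumption, not a standard)
    if p >= 0.66:
        label = "likely"
    elif p >= 0.33:
        label = "about as likely as not"
    else:
        label = "possible"
    return {
        "percent": percent,
        "frequency": frequency,
        "combined": f"{label} ({percent})",
    }

for p in (0.1, 0.8):
    print(describe_probability(p))
```

Pairing the verbal label with the number, as in the combined format, keeps the precise value visible to the reader and does not depend on a shared understanding of the label alone.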

Turning to decision-makers other than citizens, a group of papers focuses on politicians, often in the context of the science–policy interface. According to these papers, policymakers appear to be aware of scientific uncertainties in principle but also tend to view mathematical models as a black box. They often do not engage directly with the modelers. Instead, they rely on the assessment of intermediary experts and do not deal with the underlying model assumptions and uncertainties, which can hinder their understanding. They frequently evaluate uncertainties as a sign of the model’s immaturity and a critical limitation in its usefulness, although in some cases, they are an integral part of the model specification and not inherently a sign of poor quality (Brugnach et al., 2007; Pilli-Shivola et al., 2014; Scheer, 2015). In cases of a direct exchange between science and politics, the understanding of and engagement with uncertainties could be improved, but this does not necessarily eliminate all misconceptions about non-reducible types of uncertainty (e.g., Brugnach et al., 2007).

Even if models of future developments are available, they are not always incorporated into political actions. Among other factors, usage depends on perceived reliability and comprehensibility (e.g., Porter et al., 2015). Unfortunately, models, especially climate scenarios, are repeatedly criticized for being difficult to understand (e.g., McMahon et al., 2015; Pilli-Shivola et al., 2014). In addition, decision-makers express a desire for more precision (i.e., less uncertainty) and more detailed prognoses (e.g., a higher spatial resolution for climate scenarios), although greater detail can in turn increase the uncertainty of the model (Brugnach et al., 2007; Pilli-Shivola et al., 2014; Porter et al., 2015).

Finally, some papers also considered economic actors and organizations as decision-makers, showing a mixed picture when it comes to dealing with models and their uncertainties. For example, in a survey conducted by Soares and Dessai (2016), only a third of 75 organizations from various sectors (e.g., energy, agriculture, and insurance) used seasonal climate forecasts. A lack of relevance and perceived reliability were cited as barriers. If the organizations were in direct contact with the modelers or if the organizations had more internal expertise in dealing with climate forecasts, they used them more frequently. Nevertheless, most economic actors also report a certain tolerance of uncertainties. In some cases, the desire for more information about possible sources of errors and the earlier prediction success of models was expressed. Some organizations reported that they received more information regarding numerical and verbal uncertainties (e.g., Martin et al., 2022; Soares & Dessai, 2016; Taylor et al., 2015).

Discussion

This review pursued three general objectives: (1) to map the thematic contexts in which the public communication of models of future developments has been examined, (2) to analyze how actors in the public sphere select, process, and represent uncertainty related to such models, and (3) to investigate how previous communication research has conceptualized the roles of different actors involved in these communication processes. Therefore, we first presented a theoretical framework describing the communication process regarding models of future developments in the public sphere. To be flexibly applicable to different communication situations, the actors involved are assigned to the three central roles of source, disseminator, and decision-maker, between which the flow of information can be not only unidirectional but also reciprocal. On this basis, the state of research on the communication of models of future developments was systematically reviewed. This topic repeatedly becomes relevant in different policy areas, but the associated uncertainties have a particular potential to engender misunderstandings, conflicts, and strategic representations.

Building on this, we provided a systematic review of which topics, communication roles and types of uncertainty have been studied so far (RQ1), which groups have been studied as actors in these roles (RQ2), and how such actors deal with uncertainty of models of future developments in their communication (RQ3). The results show that the most evidence is available regarding how lay decision-makers understand prognoses and use them in decision-making. They sometimes tend to misinterpret, but can handle precise, numerical uncertainty information, which is consistent with previous research (Maier et al., 2016; Peters & Dunwoody, 2016). Compared to deterministic prognoses, numerical uncertainty information strengthens their trust and decision quality (Bolton et al., 2022; Joslyn & Demnitz, 2019; Rosen et al., 2021). There is sparse research on how they deal with epistemic uncertainty. Research concerning sources focuses primarily on scientists and political actors and is often qualitative. Scientists hope that reporting scientific uncertainty will educate laypersons; however, they also fear misinterpretations, which is also consistent with earlier studies (Landström et al., 2015; Maier et al., 2016). In some cases, false assumptions about the recipient’s needs can lead to ineffective communication, with uncertainties not being communicated or being vaguely described (Herovic et al., 2019; Rosen et al., 2021). Finally, the various strategic interests of different actors also influence their communication of uncertainty (Post & Maier, 2016). Research on disseminators focuses mainly on journalists. They tend to omit uncertainties, keep them vague, or highlight their skepticism (Akerlof et al., 2012; Pentzold & Fechner, 2020; Rode & Fischbeck, 2021; Scheer, 2017), which is consistent with previous research (e.g., Peters & Dunwoody, 2016; Schäfer et al., 2019). 
The focus is therefore on types of uncertainty that are more difficult to handle for recipients or that have been researched less in this regard. However, dealing with uncertainty seems to depend on the topic. New media environments have been studied only minimally. The roles of source and disseminator can merge here. There are indications that communicators are using new media as an opportunity to directly clarify questions from the audience regarding uncertainties. On the other hand, uncertainties on social media can also be dramatized to attract attention. However, there is too little research in this area to make reliable statements. In contrast to other research concerning science communication, disseminators have been the least thoroughly studied to date.

The communication on models of future developments was mainly examined concerning environmental issues. Non-environmental topics such as economic developments or the spread of disease received less attention. In line with this, many publications did not come from the classical communication journals, but rather from environmental or interdisciplinary journals that combine environmental and social sciences.

Although some qualitative studies offer valuable insights into the thoughts and communication strategies of sources and disseminators, there is a lack of quantitative research, making it hard to draw broad conclusions. This highlights the potential for future studies to supplement qualitative research on misunderstandings and communication issues between scientists and decision-makers with quantitative data on the actual scope of such problems. Moreover, evidence for disseminators is limited due to a lack of studies or inconsistent findings. There is (1) a need for systematic content analyses that clearly distinguish between different forms of uncertainty presentation, grounded in findings from research about decision-makers’ needs. In addition, (2) newer media and non-journalistic disseminators (e.g., scientific podcasts, laypersons on social media) should be given more attention. Furthermore, (3) prognoses in various fields like epidemiology or economic outlooks should be considered alongside environmental issues, as there are signs of differences in how uncertainties are communicated in these areas. Finally, (4) previous research does not explain where these differences originate, and future studies should investigate this. Regarding recipient research, some studies have examined the effective communication of probabilistic uncertainties, though it is not yet fully understood why the observed effects occur (e.g., why numerical uncertainties increase trust). Possible explanations include decision-makers interpreting transparent uncertainty information as cues for credibility (Joslyn & LeClerc, 2016) or filling in missing or vague information with subjective uncertainty assumptions, which may lead to overestimation (Joslyn & LeClerc, 2012). Depending on the level of uncertainty, they sometimes react in overly risky or cautious ways, suggesting that future research should consider prospect theory (Bolton et al., 2022; Joslyn & LeClerc, 2012; Padilla et al., 2021).
To better understand decision-makers’ reactions, their cognitive processes should be studied. This knowledge could also be helpful when examining their responses to epistemic uncertainty. Although sources and disseminators communicate this and decision-makers request it, little research has been done on the effects of epistemic uncertainty.

For science communication in practice, scientists and journalists should be encouraged to present probabilities using clear numbers, such as percentages or frequencies, to ensure effective understanding and use by decision-makers. When discussing epistemic uncertainty, it seems advisable to explicitly highlight when there is confidence in the model’s reliability, rather than only emphasizing its limitations. In addition, promoting direct communication between sources and political or economic decision-makers, rather than relying on intermediaries, can help provide information that better meets decision-makers’ needs.

Supplemental Material

sj-docx-1-scx-10.1177_10755470251413493 – Supplemental material for Dealing With Uncertain Futures: Communicating Scientific Modeling in the Public Sphere—A Systematic Review

Supplemental material, sj-docx-1-scx-10.1177_10755470251413493 for Dealing With Uncertain Futures: Communicating Scientific Modeling in the Public Sphere—A Systematic Review by Signe E. Filler, Berend Barkela, Michaela Maier, Stephan Winter and Christian von Sikorski in Science Communication

sj-docx-2-scx-10.1177_10755470251413493 – Supplemental material for Dealing With Uncertain Futures: Communicating Scientific Modeling in the Public Sphere—A Systematic Review

Supplemental material, sj-docx-2-scx-10.1177_10755470251413493 for Dealing With Uncertain Futures: Communicating Scientific Modeling in the Public Sphere—A Systematic Review by Signe E. Filler, Berend Barkela, Michaela Maier, Stephan Winter and Christian von Sikorski in Science Communication

sj-docx-3-scx-10.1177_10755470251413493 – Supplemental material for Dealing With Uncertain Futures: Communicating Scientific Modeling in the Public Sphere—A Systematic Review

Supplemental material, sj-docx-3-scx-10.1177_10755470251413493 for Dealing With Uncertain Futures: Communicating Scientific Modeling in the Public Sphere—A Systematic Review by Signe E. Filler, Berend Barkela, Michaela Maier, Stephan Winter and Christian von Sikorski in Science Communication

Footnotes

Ethical Considerations

Ethical approval was not required because no human participants were involved.

Consent to Participate

Not applicable.

Consent for Publication

Not applicable.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.