Abstract

Introduction

The use of artificial intelligence (AI) in oncology has increased rapidly, transforming various healthcare areas such as pathology, radiology, diagnostics, prognosis, genomics, treatment planning, and clinical trials. However, patients’ perspectives, comfort levels, and concerns about AI in cancer care remain largely unexplored.

Materials and Methods

This prospective, descriptive cross-sectional survey study was conducted between May 20, 2024 and October 22, 2024, among 363 patients with cancer from two different hospitals affiliated with Ankara University, a tertiary care center in Türkiye. The survey included three distinct sections: (1) Perceptions: Patients’ general views on AI’s impact in oncology; (2) Attitudes: Comfort level with AI performing medical tasks; (3) Concerns: Specific fears related to AI implementation (eg, diagnostic errors, data privacy, healthcare costs). Survey responses were summarized descriptively, and differences by age, gender, and education were analyzed using chi-square tests.

Results

A majority (50.7%) believed AI would somewhat (32%) or significantly (18.7%) improve healthcare. However, one-third of patients (33.1%) were very uncomfortable with AI diagnosing cancer, with higher discomfort among less-educated participants (P < .005). Top patient concerns included AI making incorrect diagnoses (31.1%), increasing healthcare costs (27.5%), and not keeping data private (19.6%). Patients with higher education levels expressed less discomfort and fewer concerns.

Conclusions

Patients’ perceptions of and attitudes toward AI varied significantly based on education, highlighting the need for targeted educational initiatives. While AI holds potential to revolutionize cancer care, addressing concerns about accuracy, security, and transparency is critical to enhance its acceptance and effectiveness in clinical practice.

Plain Language Summary

Artificial intelligence (AI) is becoming an important tool in cancer care, assisting in diagnosis, treatment planning, and clinical decision-making. However, not all patients feel comfortable with AI being used in their medical care. This study surveyed 363 cancer patients to understand their perspectives on AI in oncology. Findings revealed that while many patients believe AI can improve healthcare, others have concerns about diagnostic accuracy, data privacy, and the economic impact of AI-driven medical decisions. Patients with higher education levels showed greater acceptance of AI. To ensure that AI is effectively integrated into oncology, policymakers and healthcare professionals must address these concerns, enhance patient education, and establish ethical guidelines for AI applications in medicine.

Keywords

Introduction

The use of AI in oncology has increased dramatically over the past decade. 1 Machine learning and deep learning systems have been extensively studied and continue to be investigated in various areas of oncology, including risk assessment, prevention, and screening for skin, breast, prostate, and lung cancers.2-7 Several studies have explored the use of AI to analyze pathological stains and diagnose cancer through algorithms, addressing challenges such as the limited number of pathologists, oncologists, and radiologists and their excessive workload. These studies have investigated AI’s potential to provide more accurate diagnoses compared to conventional methods and its application in predicting prognosis. 8 Computational pathology is revolutionizing cancer diagnostics, enabling automated grading, subtyping, and biomarker screening. 9

Similarly, AI algorithms in radiology and biomedical imaging offer promising capabilities for early detection and treatment planning 10 and have been investigated as supportive tools in decision-support systems for managing cancer patients, from diagnosis to treatment planning.11,12 Natural language processing (NLP)-based algorithms have been utilized to streamline clinical trial matching, improving access for patients. 13 Another application of AI in oncology is prognosis evaluation. In one study, oncologists were shown to make optimistic predictions in 63% of cases and pessimistic predictions in 17% of cases. 14 AI-based prognostic models, leveraging additional data such as electronic health records, radiology reports, pathological findings, demographic data, laboratory results, and patient-reported outcomes, have the potential to provide more accurate and precise predictions. 14 Emerging tools, including multimodal AI systems and chatbots, demonstrate significant potential in patient education and care delivery.15,16

Alongside the dramatic and rapid advancements in oncology, the strengths of AI are accompanied by discussions surrounding its limitations, ethical and legal concerns, and the protection of personal data. Khullar et al 17 conducted a survey in the United States to explore patients’ perspectives on AI and found that, although AI was generally perceived as beneficial in healthcare, comfort levels regarding its clinical use varied significantly. While several studies have explored public and healthcare professionals’ perspectives on AI in medicine, comprehensive assessments focusing specifically on cancer patients’ perceptions, attitudes, and concerns toward AI integration in oncology care remain limited. 18 Furthermore, to our knowledge, this is the first study conducted in Türkiye that evaluates cancer patients’ perspectives on AI in healthcare. In this study, we primarily aimed to investigate the perspectives, comfort levels, and concerns of patients with cancer regarding the use of AI in healthcare services. The secondary aim was to explore differences in patients’ perspectives, comfort levels, or concerns based on sociodemographic factors such as age, gender, and education level.

Methods

Study Design

Researchers conducted a prospective, descriptive cross-sectional survey study between May 20, 2024, and October 22, 2024, involving cancer patients attending the oncology outpatient clinics at two tertiary care referral centers of Ankara University Faculty of Medicine, Cebeci and Ibni-Sina Hospitals. Eligible patients were aged 18 and above, understood Turkish, were in sufficiently good general and cognitive condition to complete the survey, and provided consent to participate in the study. The survey was adapted and further developed from a previous study conducted by Khullar et al. 17 The survey instrument was initially created in English and then translated into Turkish for data collection purposes. To verify the translation’s accuracy and consistency, two bilingual researchers conducted a back-translation of the Turkish version. The survey was first administered to a pilot sample of fifteen participants to evaluate its feasibility and clarity.

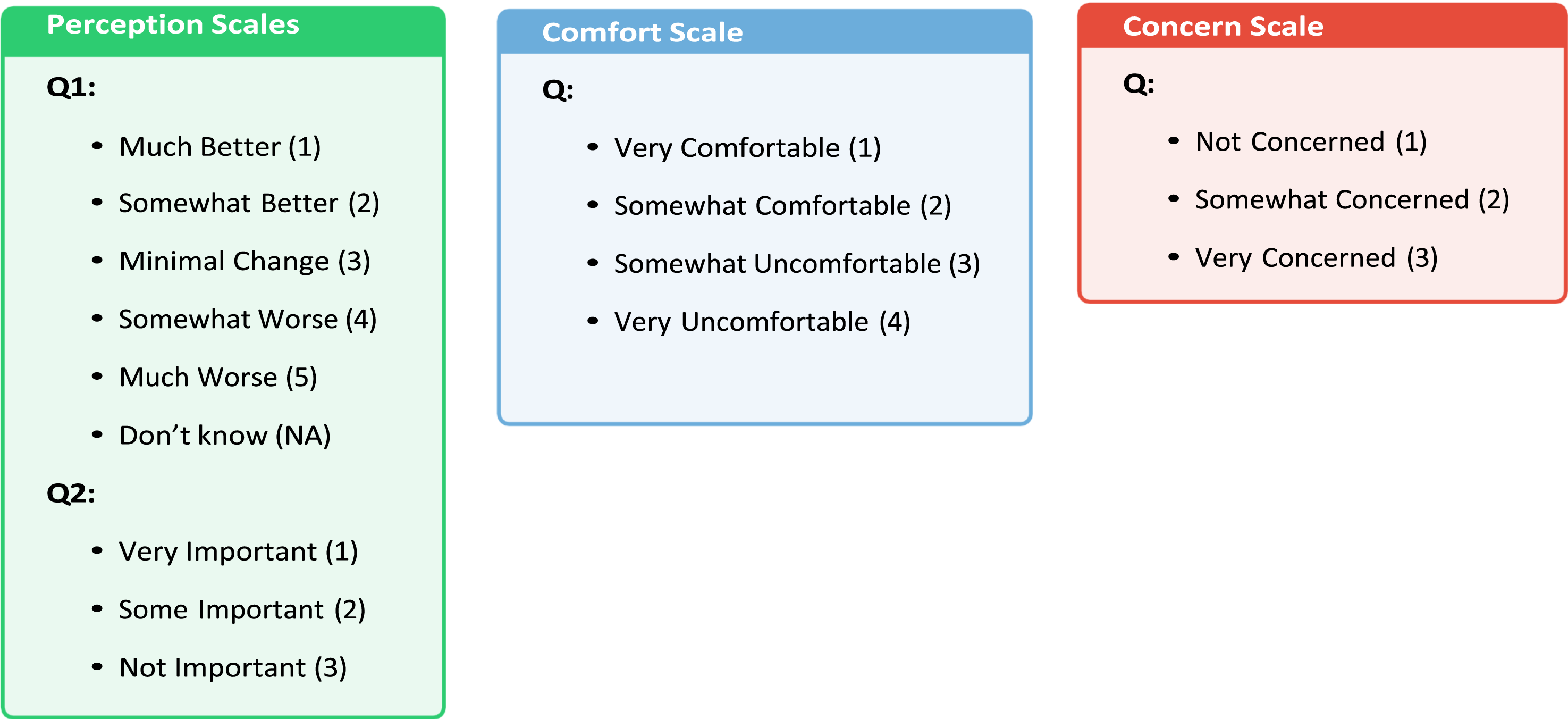

Participants completed a structured questionnaire that assessed their perspectives on AI through three distinct measurement scales: the Perception Scale (views on AI’s role in oncology), the Comfort Scale (acceptance of AI in clinical settings), and the Concern Scale (fears related to AI use) (Figure 1). These scales were developed to ensure conceptual differentiation between perceptions, attitudes, and concerns.

The Perception Scale included five questions addressing topics such as how patients believe AI will impact healthcare in the future, the importance of knowing whether AI plays a role in diagnosis and treatment, and their comfort level with receiving a diagnosis from AI.

The Comfort Scale focused on cancer patients’ comfort levels with AI performing tasks typically done by their doctor. This section consisted of six questions, including scenarios such as AI reading a chest X-ray, diagnosing pneumonia, informing the patient of a pneumonia diagnosis, determining the type of antibiotics, diagnosing cancer, and informing the patient of a cancer diagnosis. Patients could choose from four options: ‘very comfortable,’ ‘somewhat comfortable,’ ‘somewhat uncomfortable,’ and ‘very uncomfortable.’

The Concern Scale focused on cancer patients’ concerns about the use of AI in cancer care services. It included four questions addressing issues such as ‘My health information will not be kept confidential,’ ‘The AI will make the wrong diagnosis,’ ‘AI will mean I spend less time with my doctor,’ and ‘AI will increase my healthcare costs.’ Patients responded using the options ‘very concerned,’ ‘somewhat concerned,’ and ‘not concerned.’

Finally, researchers included sociodemographic questions to examine how cancer patients’ perspectives, comfort levels, and concerns regarding the use of AI varied across groups. Demographic questions covered variables such as gender, education level, age, monthly household income per capita, cancer type, recurrence status, treatments received, and the time elapsed since completing treatment.

Figure 1. The Three Distinct Measurement Scales Used in the Survey. The Perception Scale Assesses Patients’ General Views on AI’s Impact in Oncology, the Comfort Scale Evaluates Their Emotional Response and Willingness to Accept AI in Clinical Practice, and the Concern Scale Measures Specific Fears Related to AI Adoption, Such as Misdiagnosis and Privacy Issues.

This study is structured within a patient-centered research framework, incorporating elements of behavioral science and health technology assessment to examine AI perceptions in oncology. The methodology was designed to systematically capture patient views through validated measurement scales, ensuring conceptual clarity and data-driven analysis. While discrete choice experiments and best-worst scaling techniques provide a structured way to quantify tradeoffs in decision-making, this study aimed to capture broad patient perspectives on AI rather than imposing forced-choice scenarios. Instead, we assessed how comfort with AI correlates with specific concerns through statistical modeling approaches, including regression analysis and cluster segmentation.

The minimum required sample size was calculated using the Raosoft calculator. As there were no prior studies in Türkiye addressing cancer patients’ perspectives and concerns regarding AI, a conservative expected response proportion of 50% was used. 19 The calculation determined that at least 253 participants would be needed to ensure a 5% margin of error with a 95% confidence level. This study adheres to the STROBE (Strengthening the Reporting of Observational Studies in Epidemiology) guidelines for cross-sectional research. 20
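The sample size calculation described above can be illustrated with the standard formula for estimating a proportion. This is a minimal sketch, not the Raosoft implementation itself. The function name and its arguments are illustrative assumptions. The reported minimum of 253 is below the 385 that these inputs give for an unbounded population, which suggests that a finite population size was also entered. That value is not reported, so the `population` value used below is purely hypothetical.

```python
import math
from statistics import NormalDist


def required_sample_size(margin_of_error=0.05, confidence=0.95,
                         proportion=0.5, population=None):
    """Minimum sample size for estimating a proportion.

    population=None means no finite population correction is applied.
    """
    # Two-sided critical value, about 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    n0 = z ** 2 * proportion * (1 - proportion) / margin_of_error ** 2
    if population is None:
        return math.ceil(n0)
    # Finite population correction lowers the requirement for small populations.
    return math.ceil(n0 / (1 + (n0 - 1) / population))


print(required_sample_size())                 # unbounded population
print(required_sample_size(population=1000))  # hypothetical population of 1000
```

A proportion of 50% maximizes `proportion * (1 - proportion)`, which is why it is the conservative choice when no prior estimate exists.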

Statistics

Statistical analyses were conducted using IBM SPSS v.27. Categorical variables were presented as counts and percentages. Survey responses were cross-tabulated based on education level, gender, and age. Chi-square tests and multivariate regression analysis were used to assess differences in frequencies between groups. A P-value of less than 0.05 was considered statistically significant. The internal consistency of the survey scales was validated using Cronbach’s alpha, confirming their reliability for quantitative analysis.
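As an illustration of the chi-square comparison of cross-tabulated responses, the sketch below computes the Pearson chi-square statistic, its degrees of freedom, and the P-value for a table of counts. It is not the SPSS procedure used in the study. The function names are illustrative, and the counts in the example are hypothetical, not study data.

```python
import math


def chi_square_sf(x, df):
    """Upper-tail probability of a chi-square variable with integer df >= 1."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 1:
        q, nu = math.erfc(math.sqrt(half)), 1   # exact tail for df = 1
    else:
        q, nu = math.exp(-half), 2              # exact tail for df = 2
    # Recurrence: Q(x; nu + 2) = Q(x; nu) + (x/2)^(nu/2) e^(-x/2) / Gamma(nu/2 + 1)
    while nu < df:
        q += half ** (nu / 2.0) * math.exp(-half) / math.gamma(nu / 2.0 + 1.0)
        nu += 2
    return q


def chi_square_independence(table):
    """Pearson chi-square test of independence for a table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df, chi_square_sf(stat, df)


# Hypothetical counts (NOT study data): rows are two education groups,
# columns are "comfortable" and "uncomfortable".
example = [[10, 20], [20, 10]]
stat, df, p = chi_square_independence(example)
print(f"chi2 = {stat:.2f}, df = {df}, p = {p:.4f}")
```

No continuity correction is applied here, so results for 2 × 2 tables may differ slightly from software that applies one by default.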

Ethical Considerations

Ethical approval for the survey was obtained from the Ankara University Ethics Committee (Protocol No: i07-573-24) on 6 September 2024. Before completing the survey, patients were informed that no personal identification information was required on the forms and that they could choose not to participate or could withdraw from the study at any time. Informed consent was obtained from all participants prior to their inclusion in the study.

Results

Baseline Demographic Characteristics of the Patients

Baseline Characteristics of Participants.

Perspectives of Cancer Patients on Artificial Intelligence in Healthcare

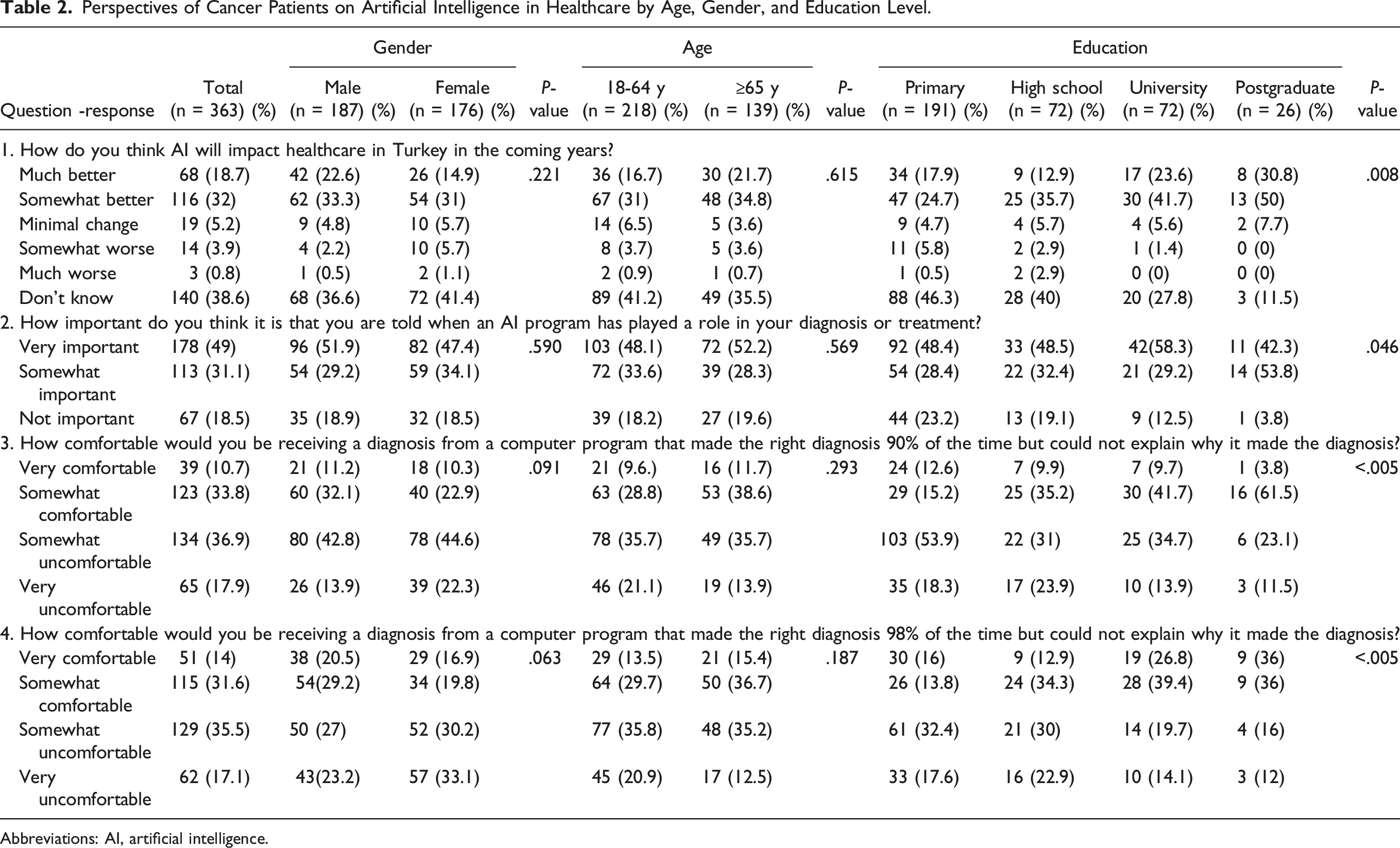

Perspectives of Cancer Patients on Artificial Intelligence in Healthcare by Age, Gender, and Education Level.

Abbreviations: AI, artificial intelligence.

Nearly half (49%) of participants considered it very important to know whether AI influenced their diagnosis or treatment, while 18.5% deemed this unimportant. Among postgraduates, 53.8% found it “somewhat important,” compared to 28.4% of primary school graduates. Only 3.8% of postgraduates regarded it as “somewhat unimportant,” vs 23.2% of primary school graduates (P = .046; Table 2).

Regarding AI diagnosing with 90% accuracy but no explanation of its reasoning, 36.9% expressed discomfort, including 17.9% who were very uncomfortable. Discomfort was highest among primary school graduates (53.9%) and lowest among postgraduates (23.1%). Comfort levels increased with education, with 61.5% of postgraduates feeling somewhat or very comfortable, compared to 15.2% of primary school graduates (P < .005). Similar trends were observed with a 98% accuracy scenario, where 35.5% were somewhat uncomfortable, and 17.1% were very uncomfortable. Among postgraduates, 36% felt very comfortable, compared to 16% of primary school graduates (P < .005). Perspectives did not significantly differ by age or gender (Table 2).

We examined whether cancer patients’ comfort levels differed between AI models with 90% and 98% accuracy. A chi-square test revealed a highly significant difference (P < .0001). The observed variation is illustrated in Figure 2.

Figure 2. Proportions of the Answers for 90% and 98% Model Accuracy.

Cancer Patients’ Comforts with Artificial Intelligence in Healthcare

Cancer Patients’ Comforts With Artificial Intelligence in Healthcare.

Abbreviations: AI, artificial intelligence.

When asked about AI diagnosing pneumonia, 44.1% of patients felt somewhat uncomfortable, and 19.6% felt very uncomfortable. Discomfort was significantly higher among primary school graduates (53.9%) compared to postgraduates (22.2%; P < .005). Similar trends were seen for AI communicating a pneumonia diagnosis, with 41.6% reporting some discomfort and 21.2% reporting high discomfort. Only 7.7% of patients felt very comfortable in this scenario.

Regarding AI determining antibiotics, 9.9% of patients felt very comfortable, with the proportion nearly doubling among postgraduates (19.2%; P = .020). However, discomfort was more prominent in tasks involving cancer diagnoses. Approximately 33.1% of patients felt very uncomfortable with AI diagnosing cancer, and 36.1% reported high discomfort about being informed of their cancer diagnosis by AI. Discomfort levels were highest among high school and primary school graduates for both scenarios, whereas postgraduates reported significantly higher comfort (P < .013 and P = .049, respectively; Table 3).

Comfort levels did not vary significantly across age or gender groups.

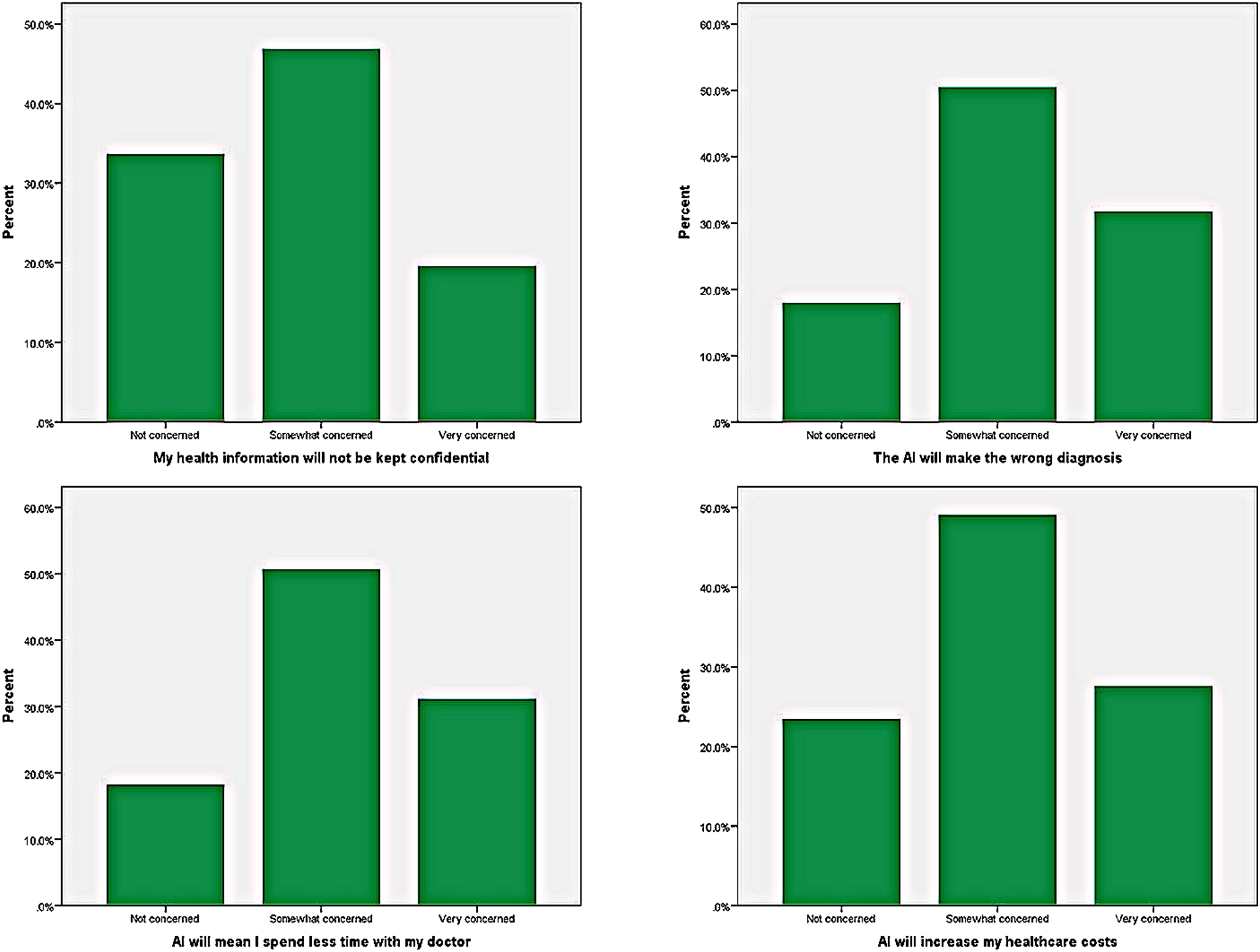

Cancer Patients’ Concerns with Artificial Intelligence in Healthcare

Concerns of cancer patients regarding the use of AI in cancer care were evaluated, and 71 patients (19.6%) reported being very concerned about the confidentiality of their health information. Approximately one-third of the total patients (113 patients, 31.1%) were very concerned about the possibility of AI making an incorrect diagnosis, while nearly half (184 patients, 50.7%) reported being somewhat concerned. Similarly, 115 patients (31.7%) were very concerned about spending less time with their doctor due to the use of AI. Slightly more than one-fourth of cancer patients (100 patients, 27.5%) were very concerned about AI increasing healthcare costs (Figure 3). There were no differences in concerns regarding the use of AI in cancer care between male and female patients. Similarly, no differences were observed when concerns were evaluated by age group or education level (Table 4).

Figure 3. Concerns of Cancer Patients Regarding the Use of Artificial Intelligence in Cancer Care.

Table 4. Cancer Patients’ Concerns With Artificial Intelligence in Healthcare. Abbreviation: AI, artificial intelligence.

Scale Reliability Assessment

Cronbach’s Alpha Scores for Comfort and Concern Scales.

Since both scales exhibit good internal consistency (α ≥ 0.7), they can be treated as single-dimensional scales. A high alpha value for the comfort scale (α = 0.91) suggests strong reliability, while the concern scale (α = 0.76) also meets the threshold for acceptable reliability.
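For readers unfamiliar with the statistic, the sketch below shows how Cronbach’s alpha is computed from item-level responses. The function name and the numeric coding of responses are assumptions, and the example responses are hypothetical rather than taken from the survey.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for rows of respondents by columns of item scores."""
    k = len(responses[0])                                   # number of items
    item_vars = [variance(col) for col in zip(*responses)]  # per-item sample variance
    total_var = variance([sum(row) for row in responses])   # variance of summed scores
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical coded answers of five respondents to a four-item scale.
example = [
    [1, 2, 1, 2],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [1, 1, 2, 1],
    [3, 2, 3, 3],
]
print(round(cronbach_alpha(example), 3))
```

Alpha rises when items vary together, because the variance of the summed score then exceeds the sum of the individual item variances.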

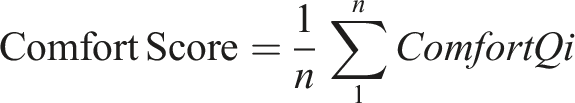

The individual scale scores are computed as the mean of their respective items:
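Assuming each scale score is the unweighted mean of its numerically coded items, the computation for patient i on scale s can be written as follows. The symbols are illustrative, and the numeric coding of the response options is not reported in the text.

$$
\text{score}_{i}^{(s)} = \frac{1}{m_s} \sum_{j=1}^{m_s} x_{ij}^{(s)}
$$

Here $x_{ij}^{(s)}$ is the coded response of patient $i$ to item $j$ of scale $s$, and $m_s$ is the number of items in that scale (per the Methods, five for perception, six for comfort, and four for concern).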

Correlation Analysis

The correlation analysis revealed a significant relationship between perception, comfort, and concern scores (Figure 4). Specifically, a positive correlation was observed between perception and comfort (r = 0.39) and between comfort and concern (r = 0.60). This suggests that as patients feel more uncomfortable and concerned about AI, their overall perception of its role in healthcare shifts toward a more negative and skeptical view. Similarly, the strong association between comfort and concern scores reinforces that these factors influence each other when shaping patient attitudes toward AI implementation in oncology.

Figure 4. Correlation Matrix Illustrating the Relationships Between Perception, Comfort, and Concern Scores Among Oncology Patients.
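A correlation matrix of this kind can be sketched as follows. The text does not state which coefficient was used, so Pearson’s r is an assumption here. The function names are illustrative and the scores are hypothetical.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(ss_x * ss_y)


def correlation_matrix(columns):
    """Pairwise Pearson correlations for a dict of name -> list of scores."""
    names = list(columns)
    return {a: {b: pearson_r(columns[a], columns[b]) for b in names} for a in names}


# Hypothetical scale scores for six patients (NOT study data).
scores = {
    "perception": [2.0, 2.4, 3.0, 1.8, 2.6, 3.2],
    "comfort":    [2.5, 2.2, 3.3, 2.0, 2.8, 3.0],
    "concern":    [1.8, 2.0, 2.5, 1.5, 2.3, 2.2],
}
for name, row in correlation_matrix(scores).items():
    print(name, {other: round(r, 2) for other, r in row.items()})
```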

Modeling Perception

We estimate an ordered logistic regression model to examine the factors influencing patient perception of AI in healthcare. The cumulative logit models are specified as follows:

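Models:

• Y1 = How do you think AI will impact healthcare in Türkiye in the coming years?

$$
\operatorname{logit}\big(P(Y_1 \le k)\big) = \tau_k - \left(\beta_1 \cdot \text{concern score} + \beta_2 \cdot \text{comfort score} + \beta_3 \cdot \text{age} + \beta_4 \cdot \text{gender} + \beta_5 \cdot \text{education}\right) \tag{1}
$$

where k ≤ 4.

• Y2 = How important do you think it is that you are told when an AI program has played a role in your diagnosis or treatment?

$$
\operatorname{logit}\big(P(Y_2 \le k)\big) = \tau_k - \left(\beta_1 \cdot \text{concern score} + \beta_2 \cdot \text{comfort score} + \beta_3 \cdot \text{age} + \beta_4 \cdot \text{gender} + \beta_5 \cdot \text{education}\right) \tag{2}
$$

where k ≤ 2.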
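To make the cumulative logit specification concrete, the sketch below converts a set of thresholds and a linear predictor into response-category probabilities. It assumes four thresholds (five response categories) for Y1. The threshold and predictor values are hypothetical and are not the fitted estimates from this study.

```python
import math


def category_probabilities(thresholds, eta):
    """P(Y = k) for k = 1..K under a cumulative logit model.

    thresholds: increasing cut points tau_1 < ... < tau_{K-1}
    eta: linear predictor, e.g. beta_1 * concern + beta_2 * comfort + ...
    """
    def logistic(z):
        return 1.0 / (1.0 + math.exp(-z))

    # P(Y <= k) = logistic(tau_k - eta); the top category has cumulative probability 1.
    cumulative = [logistic(tau - eta) for tau in thresholds] + [1.0]
    probs = [cumulative[0]]
    probs += [cumulative[k] - cumulative[k - 1] for k in range(1, len(cumulative))]
    return probs


# Hypothetical thresholds and linear predictors (NOT fitted values).
taus = [-1.0, 0.0, 1.0, 2.0]
for eta in (0.0, 1.0):
    print(eta, [round(p, 3) for p in category_probabilities(taus, eta)])
```

Because the linear predictor enters with a minus sign, a larger predictor lowers every cumulative probability and shifts mass toward higher response categories.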

Model Summary for Perception Questions.

Modeling Comfort and Concern

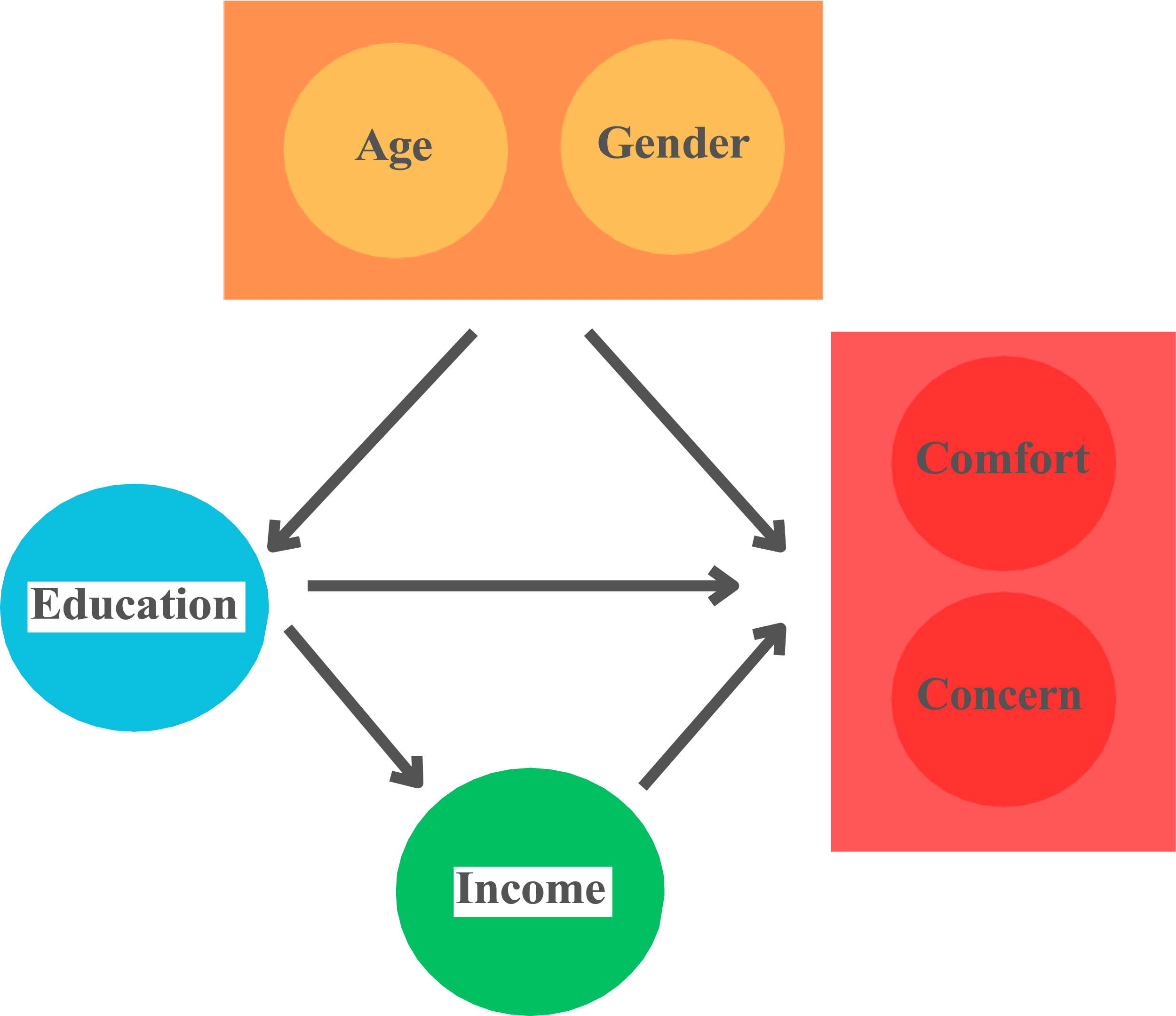

Directed Acyclic Graph

We developed a causal framework to represent the relationships among key variables, drawing on our understanding of Türkiye’s cultural landscape and social structures. In this model, we assume that the increasing prevalence of education among younger generations and the social roles associated with gender play a fundamental causal role in shaping educational attainment. Age and gender therefore act as confounding factors, influencing both education and comfort and concern regarding AI in healthcare. Additionally, household income serves as a mediator in this relationship, connecting education to individuals’ concern about and comfort with AI in healthcare (Figure 5). Using our causal graph, we estimate a linear regression model to examine the effects of education on comfort and concern regarding AI in healthcare, adjusting for the confounding variables and excluding the mediator.

The linear models are specified as follows:
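Based on the causal description above (education as the exposure, age and gender as confounders, household income excluded as a mediator), the two models presumably take the following form. This is a hedged reconstruction. The coefficient symbols are illustrative assumptions, and the coding of the covariates is not stated in the text.

$$
\begin{aligned}
\text{comfort score} &= \alpha_0 + \alpha_1 \cdot \text{education} + \alpha_2 \cdot \text{age} + \alpha_3 \cdot \text{gender} + \varepsilon_1 \\
\text{concern score} &= \gamma_0 + \gamma_1 \cdot \text{education} + \gamma_2 \cdot \text{age} + \gamma_3 \cdot \text{gender} + \varepsilon_2
\end{aligned}
$$

Leaving household income out of both models means that $\alpha_1$ and $\gamma_1$ capture the total effect of education, including any part transmitted through income.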

Model Summary for Comfort and Concern Scores.

Household Income and its Influence on AI Perception in Oncology

Wilcoxon Rank-Sum Test for Comfort and Concern Scores by Household Income.

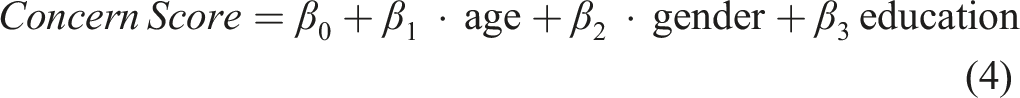

Cluster Analysis for Concern and Comfort

The strong correlation between concern and comfort scores suggests a potential clustering pattern among cancer patients. To further explore their distributions, we conducted a cluster analysis. To determine the optimal number of clusters, we analyzed the within-cluster sum of squares (WCSS) across different cluster sizes. Figure 6 suggests that a two-cluster solution is appropriate based on the observed “elbow” in the WCSS curve.

Figure 6. Within-cluster sum of squares (WCSS) for different cluster numbers.
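The elbow procedure can be sketched as follows. The clustering algorithm is not named in the text, so k-means (Lloyd’s algorithm) is assumed here because WCSS is its natural objective. The function name is illustrative, and the simulated concern and comfort scores are hypothetical.

```python
import random


def kmeans_wcss(points, k, seed=0, iterations=100):
    """Run Lloyd's k-means on 2-D points and return the final WCSS."""
    def sq_dist(p, c):
        return (p[0] - c[0]) ** 2 + (p[1] - c[1]) ** 2

    rng = random.Random(seed)
    centers = rng.sample(points, k)          # initial centers drawn from the data
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: sq_dist(p, centers[c]))
            clusters[nearest].append(p)
        # Move each center to the mean of its cluster (keep it if the cluster is empty).
        new_centers = [
            (sum(p[0] for p in cl) / len(cl), sum(p[1] for p in cl) / len(cl))
            if cl else centers[i]
            for i, cl in enumerate(clusters)
        ]
        if new_centers == centers:
            break
        centers = new_centers
    return sum(sq_dist(p, centers[c]) for c, cl in enumerate(clusters) for p in cl)


# Simulated (concern, comfort) scores forming two groups (NOT study data).
rng = random.Random(42)
low = [(rng.gauss(1.5, 0.3), rng.gauss(1.5, 0.3)) for _ in range(50)]
high = [(rng.gauss(3.0, 0.3), rng.gauss(2.5, 0.3)) for _ in range(50)]
scores = low + high
for k in range(1, 6):
    print(k, round(kmeans_wcss(scores, k), 2))
```

The elbow is the value of k after which adding another cluster yields only a small further drop in WCSS. In practice, several random initializations per k are usually run and the lowest WCSS kept.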

With a two-cluster approach, we visualize the resulting groups in Figure 7.

Figure 7. Cluster Visualization Based on Concern and Comfort Scores.

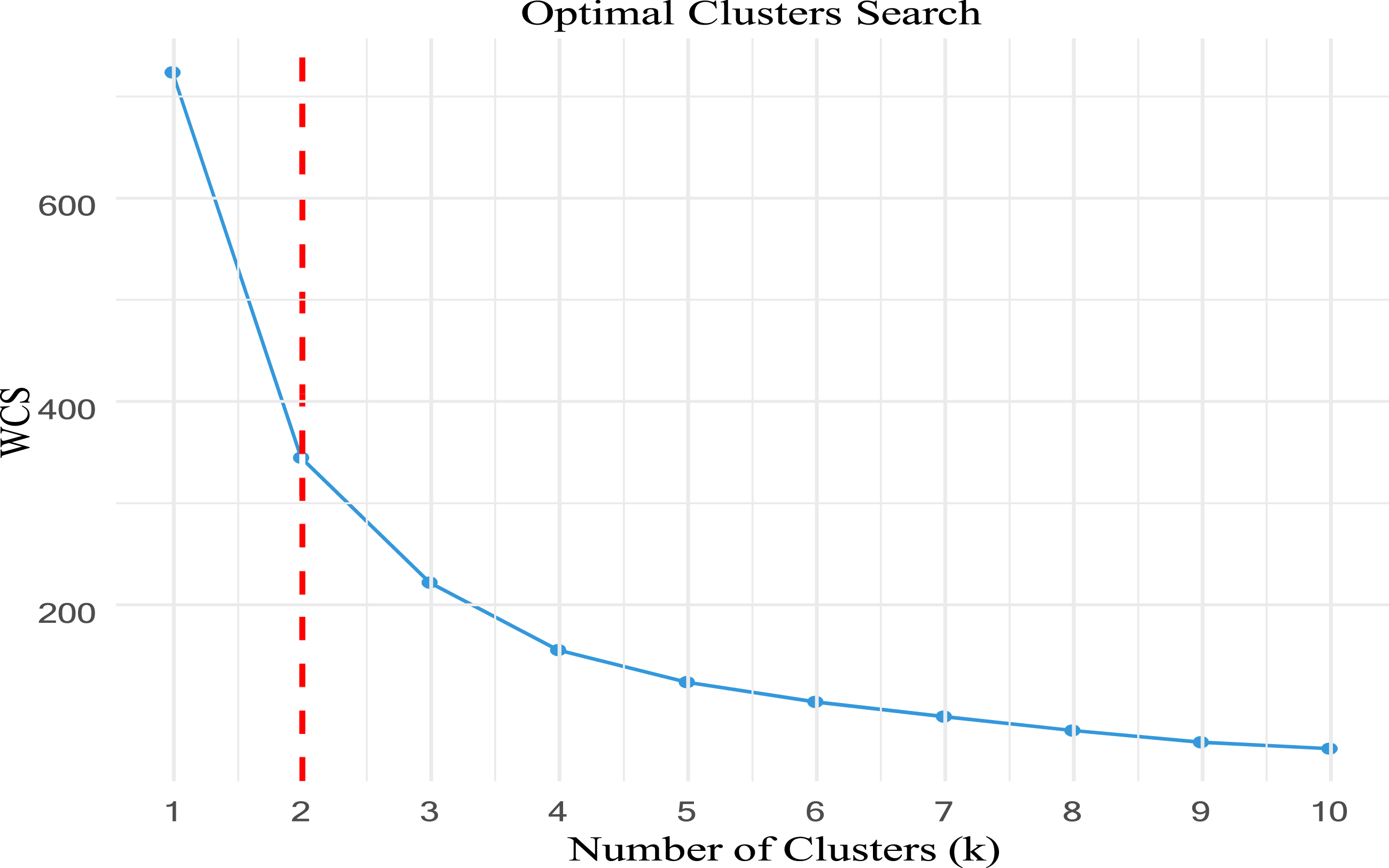

The clustering results indicate two distinct groups: one characterized by high levels of both comfort and concern and another with low levels of both comfort and concern. To further investigate these clusters, we examine their proportions across different educational backgrounds and age groups (Figure 8).

Figure 8. Distribution of Concern-Comfort Clusters Across Education and Age Categories. (A) Cluster Proportions by Educational Background. (B) Cluster Proportions by Age Group.

Discussion

This study reveals that while many cancer patients believe AI can improve healthcare, nearly half expressed discomfort with AI-led diagnoses, even with high accuracy. Discomfort was more common among less-educated individuals, with education level significantly influencing perceptions and acceptance. Key concerns include data privacy and incorrect diagnoses. The correlation between comfort and concern scores highlights an important finding: patients who are uncomfortable with AI also express greater concern about its implementation. This suggests that improving patient trust and familiarity with AI technology may simultaneously reduce skepticism and discomfort. Furthermore, the observed link between perception and comfort indicates that enhancing AI education and transparency in healthcare settings may positively influence overall acceptance. These findings align with behavioral science principles, which emphasize the interplay between trust, perceived risk, and technology acceptance in medical decision-making. Targeted education and greater AI transparency might be essential to address skepticism, build trust, and ensure equitable adoption of AI in oncology care.

We found that comfort with AI is significantly associated with a more positive perception of AI in healthcare, influencing views of both its current applications and its future in Türkiye. Concern about AI plays a statistically significant role in shaping negative perceptions of AI’s implementation in healthcare but does not have a large impact on expectations for its future in Türkiye. Age, gender, and education do not significantly affect AI perception when comfort and concern scores are held constant. The significant intercept values indicate that response categories are well-defined, reinforcing the reliability of the model.

In our study, more than half of the patients (50.7%) believed that AI would improve or somewhat improve cancer care. Although this specific population of patients with cancer has not been studied in the literature, findings from other studies report a similarly positive perspective toward AI, ranging from 49.3% to 53%. These results align closely with the outcomes of our research.21,22 Additionally, we found that nearly one-third of patients felt very uncomfortable, and combined with those who felt somewhat uncomfortable, almost half of the participants expressed discomfort with receiving a diagnosis from a computer program with 90% or 98% accuracy that could not explain its reasoning. For 90% accuracy, this rate was higher in studies conducted in the general population, reaching 71.5%, 17 and even higher at 76.6% in a study focused on mental health. 21 For 98% accuracy, the rates were more comparable to our study, at 58% 17 and 52.6%, 21 respectively. Most deep learning models, due to their end-to-end design, absorb information and data to produce an output but fail to provide the necessary explanations underlying that conclusion. For example, an AI system might state that a patient has an 80% likelihood of melanoma but lacks the ability to offer a clear rationale for this prediction. 23 In contrast, oncologists and dermatologists evaluate dermoscopic lesions by systematically applying major and minor criteria, offering a rationale for their diagnosis. 23 The inability of AI models to explain their conclusions may lead to discomfort and skepticism among cancer patients. Efforts should be directed towards enhancing the interpretability of AI outputs, particularly in deep learning models, to provide clear explanations for their predictions.

When cancer patients were asked how comfortable they would feel if AI performed tasks typically carried out by their doctor, approximately one in three patients reported feeling very uncomfortable, particularly regarding tasks such as diagnosing cancer and delivering the diagnosis, which is consistent with the literature. 17 The discomfort level was highest among primary school graduates and significantly lower among postgraduates, showing a notable variation based on education level. In a survey on AI conducted among general practitioners, most doctors believed that future technologies and AI would reduce their workload in patient care, but that a physician would still be essential for most services, as patients would prefer and demand human involvement. 24 Doctors identified the lack of face-to-face interaction and inability to provide empathy as AI’s greatest limitations. Many physicians argued that concepts such as communication, shared decision-making, and a patient-centered approach require human skills. Similar to patients, they expressed skepticism about AI’s ability to fully replace certain tasks traditionally performed by doctors. 24

The current study indicated that one-fifth of the patients were highly concerned that their information might not be kept confidential by AI systems. This rate was lower than the 32% reported in the general population study by Khullar et al 17; however, concerns about AI were consistently among the top issues raised by patients across multiple studies.25,26 One of the significant risks associated with AI is the potential for hackers to alter the outcomes of deep learning models, despite efforts to enhance data security. To address these concerns, there is a critical need for robust regulations to protect both patients’ private medical information and the analytical models themselves. 23

Importantly, in this study, one-third of cancer patients were highly concerned about the potential for AI to make incorrect diagnoses, consistent with findings reported by Wu et al. 27 Similarly, Richardson et al 28 found that patients expressed significant concerns regarding diagnostic errors attributed to biases in AI datasets, which could ultimately lead to unreliable outcomes. Additionally, Erdat et al investigated the use of AI-powered chatbots for preparing chemotherapy protocols. However, only 5 out of 9 cases in one chatbot and 4 out of 9 in another provided accurate and appropriate responses. Most errors were related to the duration and dosage of treatments, highlighting significant limitations in their reliability. 29 Deep learning models, unfortunately, are sensitive to many seemingly negligible inputs, which can lead to instability and contamination. Key issues such as data quality, model generalization, and model security remain critical challenges in the development and application of AI. 30 In a survey conducted by Khullar et al 1 involving physicians and the general public, participants were asked who should be held liable in the event of a medical error caused by AI. The general public was significantly more likely than physicians to believe that doctors should be held responsible. Subsequently, responsibility was attributed, in order, to the vendor, the healthcare organization, and the Food and Drug Administration (FDA) or other governmental entities. As the use of AI continues to grow, legal and malpractice issues are evolving and remain critical areas requiring resolution. 1

Future research should investigate strategies for AI to facilitate effective patient communication, addressing the discomfort patients may feel toward AI, even when it delivers highly accurate diagnoses. Collaborations with AI developers will be essential to incorporate these solutions into real-world applications effectively. Studies should also investigate the medicolegal responsibilities associated with AI use, consider patients’ expectations, and examine how physicians’ proficiency in using AI and patient education can alleviate concerns. While AI holds great promise in oncology, its adoption in low- and middle-income countries (LMICs) faces additional challenges, including limited healthcare infrastructure, lack of digital resources, and concerns about the affordability of AI-driven solutions. 31 However, AI-powered diagnostics and decision-support systems could help bridge gaps in healthcare access where there is a shortage of oncologists and medical imaging specialists. 32 To ensure equitable AI integration, policymakers and global health organizations must develop cost-effective implementation models and prioritize AI literacy training programs in LMICs. 33 Future research should focus on how AI can be adapted to resource-limited healthcare systems while addressing key ethical, financial, and logistical barriers. 34

This study has several limitations. As a single-institution study conducted in two hospitals affiliated with Ankara University, its findings may not be generalizable to the broader Turkish population or globally. Additionally, the survey-based design introduces the possibility of response bias, as participants’ answers may not fully reflect their true attitudes or concerns. The study also focused exclusively on Turkish-speaking cancer patients, limiting its applicability to non-Turkish-speaking populations. While this study primarily focuses on patient perspectives, future research could expand into implementation science, economic evaluation, and human factors research to assess AI integration from broader institutional and policy perspectives. This study provides valuable insights into how patients perceive AI’s potential alongside their concerns, but it does not model explicit tradeoffs using discrete choice experiments or best-worst scaling. Future research could build upon these findings by employing stated-preference methodologies to quantify patient preferences for AI integration in oncology.

Despite these limitations, the study has notable strengths. It is the first in the literature to comprehensively evaluate cancer patients’ perspectives, comfort levels, and concerns regarding AI in oncology. The sample size was robust, including a diverse range of cancer types and sociodemographic groups. Moreover, the survey covered multiple dimensions of AI acceptance, providing valuable insights into patients' views on its role in healthcare.

Conclusion

This study highlights that while AI holds promise to transform oncology care, patients’ comfort levels, concerns, and perceptions vary significantly, particularly by education level. To enhance AI adoption in healthcare, critical challenges must be addressed, including diagnostic accuracy, data privacy, and the economic impact on care delivery. Policymakers could benefit from forming strategic partnerships with clinicians, technology developers, and community health organizations to ensure that AI systems are ethically implemented and transparently regulated. Establishing national guidelines for AI-driven healthcare solutions may also help build public trust and improve patient acceptance. Future studies should focus on developing adaptive AI training modules and community-informed initiatives to bridge knowledge gaps, improve AI literacy among cancer patients and healthcare providers, and promote patient-centered, ethical, and equitable AI integration into oncology care.

Footnotes

Ethical Statement

Author Contributions

Conceptualization, E.E., E.Y., and Y.U.; methodology, Y.U. and E.E.; software, Y.A., B.S.A., and E.E.; validation, E.E., E.Y., and Y.U.; formal analysis, E.E., E.Y., F.B.D., and Y.U.; investigation, E.E. and Y.U.; resources, E.E., Y.A., B.S.A., and S.A.G.; data curation, E.E., F.B.D., Y.A., B.S.A., and S.A.G.; writing (original draft preparation), E.E., E.Y., F.B.D., and Y.U.; writing (review and editing), E.E., E.Y., F.B.D., and Y.U.; visualization, E.E. and Y.U.; supervision, E.Y. and Y.U.; project administration, E.E. and Y.U.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.