Abstract

Quality Assurance and Education are 2 areas of the Cancer Registry that go hand in hand. High-quality data can only be maintained through routine surveillance of data quality coupled with tailored continuing education of certified tumor registrars (CTRs). However, the magnitude of information a CTR is required to know, the rapid frequency with which standards change, and growing demands on the time of the CTRs can be roadblocks to maintaining quality in the Cancer Registry. Here we describe a robust approach to quality assurance in a high-volume hospital-based Cancer Registry, leveraging a repeated cycle of quality assessment and educational activities targeting identified opportunities for improvement. Establishing such an approach encourages the professional development of CTRs while simultaneously ensuring the highest quality data for use in population-based cancer surveillance, cancer research, and patient care.

Introduction

The quality of cancer registry data is paramount for the myriad of ways in which the data are used. At the population level, complete case ascertainment and accurate coding of patient demographics, diagnostic characteristics, and cancer treatment are essential for understanding the burden of disease, cancer disparities and trends in treatment. Within hospitals and cancer centers, high quality data facilitate assessments of patient volumes and quality reporting for patient care. Within and across institutions, cancer registry data are foundational for cancer research, enabling the identification of patients for clinical trials and studies of cancer etiology and patient outcomes. Without high quality data, erroneous inferences could be made about the causes of cancer, factors influencing cancer survival, as well as resource allocation at the local, state, and national levels. Therefore, it is imperative that cancer registries deploy robust quality assurance programs.

According to the National Cancer Institute, Quality Assurance (QA) is “A process that looks at activities or products on a regular basis to make sure that they are being done at the required level of excellence.” 1 The Commission on Cancer accreditation standards require a minimum of 10% of the annual analytic caseload to be evaluated for Class of Case, Primary Site, Histology, Grade, American Joint Committee on Cancer (AJCC) Stage, First course of treatment, and Follow-up information.

The Cancer Registry is a fluid environment that requires focus and vigilance, perhaps at no time more than in 2018, a year of considerable change for the registry community, with multiple changes in coding instructions and reporting guidelines accompanied by software delays and technical challenges. Historically, 1 or 2 references change in a year; for example, 2010 brought the 7th edition of the AJCC staging manual. In comparison, 2018 brought implementation of the following new and/or updated manuals: AJCC 8th Edition Cancer Staging, Solid Tumor Rules, ICD-O-3, SEER Summary Stage, Grade, Site Specific Data Items, Radiation Data Items, and the Standards for Oncology Registry Entry (STORE) manual. Clarification of coding instructions and changes to the manuals continued throughout 2018 and well into 2019. This presented registries with the challenge of keeping up to date with the most recent changes to ensure that training materials were current, that relevant education was available to team members, and that data were being evaluated against the applicable guidelines to maintain high accuracy in compliance with the relevant coding instructions. Having a comprehensive QA plan is critical to ensure high quality data abstraction both during times of major changes to coding guidelines and in the times in between, as it enables registries to optimally utilize both time and personnel while at the same time providing education and feedback. Here we present the plan developed within the Moffitt Cancer Center Cancer Registry, which leverages a cyclical framework to reduce the amount of valuable time spent determining which primary site to select for QA, which topics to cover in education, and when to provide feedback. The cycle allows for flexibility to modify procedures, while at the same time maintaining a complete and thorough plan for evaluation of the data and education for the team. This framework can be adopted by other registries to optimize workflows dedicated to the evaluation and routine monitoring of data to ensure the highest quality possible.

Methods

The Moffitt Cancer Center Cancer Registry is responsible for ascertaining reportable cancer cases and abstracting essential information on patient demographics, cancer diagnosis, stage, treatment, and other factors according to guidelines issued by a number of organizations, including NAACCR (North American Association of Central Cancer Registries), AJCC (American Joint Committee on Cancer), CoC (Commission on Cancer), SEER (Surveillance, Epidemiology, and End Results Program), and FCDS (Florida Cancer Data System). Moffitt’s Cancer Registry reported a record 15,023 cases to the state registry in 2018, representing 28.4% growth since 2015. This Cancer Registry is a large department composed of personnel with specific roles and responsibilities subject to ongoing evaluations; a multitude of QA procedures happen continuously for case acquisition and abstraction. For example, abstractors validate case reportability when they open the case from the suspense file (cases identified as reportable, waiting to be abstracted). A second example is that the Cancer Registry Database is used to compile the survivorship care plans (SCPs). The SCP nurses then review the data pulled from the Cancer Registry Database to make sure the information is accurate. Any discrepancies are shared with the registry for review and correction, if applicable. A third example is that, prior to data submissions to our State Central Registry and to the Commission on Cancer, our accrediting body, the Program Analyst runs the data through additional edit sets to help assure high quality data (edit sets are checks put in place to prevent the entry of data that are not logical).

The purpose of the cycle is to assure the quality of the abstracted data across all major primary site groups. The 2017 data from this facility were evaluated and divided into 12 topics/headings for the purpose of setting up the annual plan, based on the cases abstracted by site in 2017. The 12 broad sites chosen can be seen in Figure 1. Sites seen less frequently are grouped under a broader topic; for instance, thyroid cases are evaluated with the head/neck sites, and Melanoma also includes Merkel Cell.

Figure 1. The 12-month cycle of cancer site-specific quality assurance and education. edu = education. qa = peer review quality assurance. gyn = gynecology. cns = central nervous system. gi = gastrointestinal.

Included in the Cycle

Education is provided through a Process Improvement Meeting (PIM). This is a 1.5-hour site-specific educational activity that includes case studies for a specific site. The topics are identified ahead of time and are listed at the top of each box of Figure 1. For example, the box at the 12:00 position indicates that Male/Prostate sites are the education topic for that month.

Peer review QA is performed every month on a designated primary site by proficient Abstractor III team members. The QC/Education Specialist randomly selects 3 cases from each abstractor. The QA primary site is indicated at the bottom of each box in Figure 1. For example, the box at the 12:00 position indicates that Female/GYN cases would be selected for QA that month (the education for that site was provided 2 months prior).
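
The random selection of 3 cases per abstractor can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `select_cases_for_review`, the `(case_id, abstractor)` record layout, and the sample data are hypothetical and do not describe the registry's actual software.

```python
import random
from collections import defaultdict


def select_cases_for_review(cases, per_abstractor=3, seed=None):
    """Randomly pick up to `per_abstractor` cases for each abstractor.

    `cases` is an iterable of (case_id, abstractor) pairs. This layout is
    an assumption made for illustration only.
    """
    rng = random.Random(seed)
    by_abstractor = defaultdict(list)
    for case_id, abstractor in cases:
        by_abstractor[abstractor].append(case_id)

    selection = {}
    for abstractor, case_ids in sorted(by_abstractor.items()):
        # An abstractor with fewer cases than requested has all of them reviewed.
        k = min(per_abstractor, len(case_ids))
        selection[abstractor] = rng.sample(case_ids, k)
    return selection


# Hypothetical month of abstracts: abstractor A has 5 cases, B has 4, C has 2.
cases = [(f"C{i:03d}", abstractor) for i, abstractor in enumerate("AAAAABBBBCC")]
picked = select_cases_for_review(cases, per_abstractor=3, seed=2018)
```

Passing a fixed `seed` makes the draw reproducible, which may help if a selection ever needs to be documented or repeated.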

In Addition to the Basic Cycle Above, Additional QA Activities Include

Each week a question/case is emailed to the team; this question/case can focus on any of the following: staging, treatment coding, reportability, multiple primaries, or new histologies, depending on the site. This question/case is based on foundational concepts that the team member can decipher within 10 minutes. The question is sent out on Monday morning and responses are due Thursday. Corrected answers and their rationale are then emailed on Friday morning. All of the questions/cases are saved in a file that can be accessed by the team for reference.

An ad hoc QA is also performed if a data element scored low in the peer review QA or if areas of concern were identified in the normal course of business. Additional education is provided for the specific data element that scored low, and QA is performed again on the specific data element the next month to assess if the information was assimilated.

The complete schedule (Figure 2) provides for 1 education topic, 4 weekly questions, 1 site-specific QA analysis, and 1 ad hoc QA each month. By following the cycle each year, each of the 12 sites will have 1 education session, 4 weekly questions, 1 site-specific QA month, and 1 ad hoc QA month.

Figure 2. The whole plan.

Prior to the monthly education a question/case of the week is emailed to the team to assess foundational knowledge regarding a particular primary site. If everyone does well on that question, then the focus of the education can be on more complex issues. If a number of team members do not seem to understand a particular concept, then this provides a starting place for the monthly education. The month following the site-specific education, peer-review QA is performed for the respective primary site. Once the QA is complete, the results are then sent back to the abstractors, so modifications in procedures can be implemented immediately. QA is calculated quarterly for each team member (monthly results are included in these calculations). If an area of the QA is seen to be misunderstood by a number of team members, additional education is given via email or a clarification/HELP file on the shared drive, and ad hoc QA is performed by the QC/Education specialist the next month to assess if the information was learned. Additional weekly questions are also distributed from the specific primary site 1 and 2 months after the education to reassess and confirm grasp of the concepts discussed.
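
As a rough sketch, the rotation described above can be generated programmatically. The function `build_cycle`, its `qa_lag` parameter, and the placeholder site names are assumptions introduced here for illustration; the actual order of the 12 sites is the one shown in Figure 1. The default lag of 1 month follows the description in this paragraph, and a registry that schedules QA 2 months after education would pass `qa_lag=2`.

```python
def build_cycle(sites, qa_lag=1):
    """Build a repeating annual plan from an ordered list of site groups.

    Each month gets one education topic, one peer review QA site (the site
    taught `qa_lag` months earlier), and one ad hoc QA site (the site that
    had peer review QA the month before). All names are illustrative.
    """
    n = len(sites)
    plan = {}
    for month in range(1, n + 1):
        idx = month - 1
        plan[month] = {
            "education": sites[idx],
            "qa": sites[(idx - qa_lag) % n],
            "ad_hoc_qa": sites[(idx - qa_lag - 1) % n],
        }
    return plan


# Placeholder labels; substitute the registry's own 12 site groups.
sites = [f"Site {i}" for i in range(1, 13)]
plan = build_cycle(sites)
```

Because the indices wrap around with the modulo operator, every site appears exactly once in each role over the 12 months, which is the property that makes the cycle cover all categories each year.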

The results from each monthly QA, ad hoc QA, and weekly questions are kept on one spreadsheet and color coded for quick reference: one color for correct and one for incorrect. This allows for a variety of analyses and can help to identify areas needing improvement, whether this is for the whole department or one specific team member.

Results

All of the results from every monthly QA, ad hoc QA, and weekly question are kept on the same spreadsheet, by date, topic, and abstractor initials. The correct responses are highlighted in yellow and the incorrect responses in orange to provide a quick visual indicator of the results. Tracking the QA data this way makes it easy to analyze the data in various ways, for example by pulling the data for 1 site/topic, 1 abstractor, or 1 data element.
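
For registries that prefer a script to a color-coded spreadsheet, the same kind of tracking could be sketched as follows. The `QAResult` fields, the `query` and `accuracy` helpers, and every row of sample data are hypothetical; they are not the department's spreadsheet layout or its actual results.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class QAResult:
    review_date: date
    activity: str       # "monthly_qa", "ad_hoc_qa", or "weekly_question"
    site: str
    abstractor: str
    data_element: str
    correct: bool


def query(results, **criteria):
    """Return the results whose attributes equal every given criterion."""
    return [
        r for r in results
        if all(getattr(r, name) == value for name, value in criteria.items())
    ]


def accuracy(results):
    """Fraction of results marked correct, or None if there is nothing to score."""
    results = list(results)
    if not results:
        return None
    return sum(r.correct for r in results) / len(results)


# Invented rows for illustration only.
rows = [
    QAResult(date(2018, 1, 8), "weekly_question", "Melanoma", "X", "cN", False),
    QAResult(date(2018, 1, 8), "weekly_question", "Melanoma", "X", "cT", True),
    QAResult(date(2018, 1, 8), "weekly_question", "Melanoma", "Y", "cN", True),
    QAResult(date(2018, 2, 5), "monthly_qa", "Lung", "X", "Histology", True),
]

melanoma_for_x = query(rows, site="Melanoma", abstractor="X")
cn_only = query(rows, data_element="cN")
```

Any combination of fields can be passed to `query`, so the same records support views by site, by abstractor, or by data element.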

An example of the results from a weekly question on Melanoma is provided in Table 1 below (clinical staging: cT, cN, cM, and c stage; pathological staging: pT, pN, pM, and p stage). At a glance, the results indicate that 3 different abstractors may have a misunderstanding about Melanoma staging.

Table 1. Results From a Weekly Question.

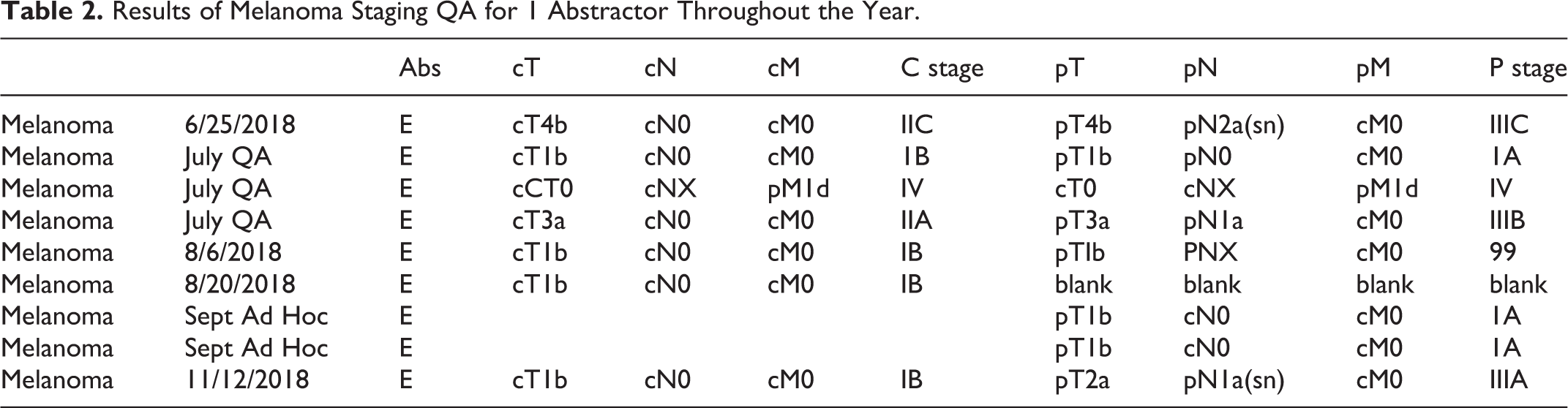

To understand whether or not the mistakes in question represented a fundamental misunderstanding of staging on the part of the abstractors, the QA for 1 particular abstractor can be examined for evidence of a pattern of mistakes. This abstractor-specific view of the data can also be used to determine whether the tailored education following the QA assessment on 08/06/2018 resulted in improved quality. For example, Table 2 presents all of the Melanoma QA for the year corresponding to abstractor “E” in Table 1.

Table 2. Results of Melanoma Staging QA for 1 Abstractor Throughout the Year.

Taken together, the Melanoma questions throughout the year, the Melanoma monthly QA, and the Melanoma ad hoc QA indicate that abstractor “E” does appear to have grasped the concepts introduced by the questions and the education provided (for example, that the cN can be used in pathological staging for any Melanoma T1).

These data were analyzed to determine whether the whole department misunderstood a topic or whether the misunderstanding was attributable to only a few team members. The monthly QA across sites can be graphed to show any sites that may need more education (Figure 3). The Department started abstracting 2018 cases in April 2018; Kidney/Bladder sites were the first to have QA with a date of diagnosis in 2018 on all cases reviewed. Although the QA continued at a superior level following the start of 2018 cases, a dip in the QA was seen as months progressed.

Figure 3. All QA for 2018. gu: genitourinary. cns: central nervous system. ugi: upper gastrointestinal. kid: kidney. blad: bladder. heme: hematopoietic. h/n: head and neck. fem: female.

Lung QA was analyzed by data element (Figure 4) to assess whether one area of the abstract was misunderstood, or several areas. A total of 33 cases were analyzed, and 8 different data elements had fewer than 30 of the 33 correct. This indicated a general misunderstanding of various areas of abstracting lung cases, not just one area.
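
A per-element tally of this kind can be sketched as below. The record layout `(case_id, data_element, is_correct)`, the helper names, and the sample values are assumptions made for illustration; only the figures of 33 cases and a threshold of 30 correct come from the text.

```python
from collections import Counter


def correct_counts_by_element(reviews):
    """Count correct entries per data element.

    `reviews` is an iterable of (case_id, data_element, is_correct) tuples;
    this layout is hypothetical.
    """
    counts = Counter()
    for _case_id, element, is_correct in reviews:
        # Adding 0 still registers the element, so an element that is
        # always wrong appears with a count of 0.
        counts[element] += int(is_correct)
    return counts


def elements_below(counts, minimum_correct):
    """Return the data elements with fewer than `minimum_correct` correct."""
    return sorted(e for e, c in counts.items() if c < minimum_correct)


# Invented example: 33 cases, two data elements, 5 histology errors.
reviews = []
for case_id in range(33):
    reviews.append((case_id, "Primary Site", True))
    reviews.append((case_id, "Histology", case_id >= 5))

counts = correct_counts_by_element(reviews)
flagged = elements_below(counts, minimum_correct=30)
```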

Figure 4. Lung QA by data element.

Next, the question was raised as to whether 1 or 2 abstractors were missing the majority of the data elements, or whether the errors were spread equally throughout the department. Lung cases abstracted in October 2018 had QA of 17 data items (33 cases x 17 data items = a total of 561 data items analyzed). Figure 5 shows how many data items were missed by each abstractor. Five of the abstractors had 4 or fewer incorrect data elements, and 5 abstractors had 5 or more data elements incorrect. This allowed more specific education to be given to each team member based on the errors.
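
The arithmetic behind this comparison is simple enough to sketch. In the example below, the 33 cases, 17 data items, and cutoff of 4 errors come from the text; the function `summarize_misses`, the abstractor labels, and the per-abstractor error counts are invented for illustration and are not the values plotted in Figure 5.

```python
def summarize_misses(missed_by_abstractor, cases_reviewed, items_per_case, cutoff=4):
    """Summarize errors per abstractor against the total number of items reviewed."""
    total_items = cases_reviewed * items_per_case
    total_missed = sum(missed_by_abstractor.values())
    return {
        "total_items": total_items,
        "overall_accuracy": (total_items - total_missed) / total_items,
        "at_or_below_cutoff": sorted(
            a for a, m in missed_by_abstractor.items() if m <= cutoff
        ),
        "above_cutoff": sorted(
            a for a, m in missed_by_abstractor.items() if m > cutoff
        ),
    }


# Hypothetical error counts: abs01 missed 0 items, abs02 missed 1, ..., abs10 missed 9.
missed = {f"abs{i:02d}": i - 1 for i in range(1, 11)}
summary = summarize_misses(missed, cases_reviewed=33, items_per_case=17)
```

Splitting the team at a cutoff is only one possible view; the same dictionary could also be sorted by error count to prioritize individual follow-up.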

Figure 5. QA by abstractor.

Discussion

Prior to having a cyclic plan, QA results were calculated each quarter for each abstractor and a percentage was assigned. This has not changed; however, it has been expanded. QA is performed monthly and the results are shared with each staff member. QA results can be accumulated each month to keep a running total, and QA can be queried by primary site, data element, or staff member. This makes it easy to pinpoint areas of concern and define department needs. For example: Are many people having trouble staging lung cases but not having trouble staging other sites? Is the department as a whole doing well, but 1 staff member is having trouble staging all sites? Is there a misinterpretation of the solid tumor rules/histology code changes for a particular primary site?
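
One way to roll monthly results up into the quarterly percentage for each abstractor is sketched here. The function `quarterly_accuracy`, the `(review_date, abstractor, is_correct)` record layout, and the sample rows are assumptions for illustration, not the department's actual calculation.

```python
from collections import defaultdict
from datetime import date


def quarterly_accuracy(results):
    """Return {(abstractor, year, quarter): percent correct}.

    `results` is an iterable of (review_date, abstractor, is_correct) tuples;
    monthly QA rows simply accumulate into the quarter they fall in.
    """
    tallies = defaultdict(lambda: [0, 0])  # key -> [correct, total]
    for review_date, abstractor, is_correct in results:
        quarter = (review_date.month - 1) // 3 + 1
        key = (abstractor, review_date.year, quarter)
        tallies[key][0] += int(is_correct)
        tallies[key][1] += 1
    return {key: 100.0 * c / t for key, (c, t) in tallies.items()}


# Invented rows for a single hypothetical abstractor "X".
results = [
    (date(2018, 7, 2), "X", True),
    (date(2018, 8, 6), "X", False),
    (date(2018, 9, 3), "X", True),
    (date(2018, 10, 1), "X", True),
]
percentages = quarterly_accuracy(results)
```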

Educators across many academic areas may have their own specific ideas about the best methods for education, but they all tend to agree on a few points:

People do not learn after one exposure to a topic; they generally do better with small bits of information provided at intervals to reinforce learning. 2

Individual feedback absolutely matters and is crucial to making information relevant. 3

If you don’t use the information you learn, you will lose it. 4

The cyclic QA plan addresses some of these fundamentals by providing 5 different exposures to a topic (1 monthly meeting and 4 weekly questions) and allows for the evaluation of how the abstractor uses the information 5 different times. The plan also provides for individual feedback by returning QA to the team monthly, allowing areas needing clarification to be addressed in a more timely fashion.

In order to provide consistent exposure to the topics, education and weekly questions need to address the same concept until it is well understood. For instance, if you ask a pre-education question about staging post-therapy breast cases, then the same topic will need to be reviewed in the monthly education as well as in the post-education questions. The education and questions are deliberately focused on those areas where the team is having more questions or misunderstandings. Everyone is an individual, comes from a different background, and learns differently. If it appears that a team member still does not understand a concept, try presenting it in a different way. QA is not meant to be punitive; it should be performed to ensure that standards of quality are being met. It is important to remember to be encouraging and supportive as your team continues to learn.

We recognize that hospital-based cancer registries may vary with respect to patient volumes, case severity mix, and local use of the data. In turn, these characteristics may impact the details of the proposed quality assurance process. For example, the categories and the number of cases requiring review may differ across registries based on the caseload, and the topics requiring more intense education may vary. However, the cyclical nature of the annual process we describe is broadly generalizable, as it ensures all categories are covered each year. Any registry need only analyze the specific data collected and divide them into 12 categories to insert into this annual cycle template. Even a small registry of 1 to 2 people that does not have the bandwidth to provide weekly questions can benefit from using the annual cycle as a guide for what QA to select and when to provide education. Population-based registries aggregate data across hospital-based registries and routinely perform edit checks to identify potential abstraction errors that need to be corrected by the reporting source. Ideally, the population-based registries would be able to conduct audits of abstracts, following the cyclical pattern described in the current manuscript, although lack of direct access to the EMR and limited resources may limit population-based registries’ ability to do so. Therefore, it is especially critical that hospital-based registries implement QA processes to optimize the quality of their data prior to submission to population-based registries. While not all hospital-based cancer registries are providing data directly to researchers locally, their data contribute to the broader population-based registries on which important disease surveillance and research are based, and therefore, high-quality data should be a universal priority for all registries.

Conclusion

Whether you have a large department with a QC/Education specialist or are a 1-person department, this plan can be utilized to help ensure evaluation of curated data.

The Cancer Registry field requires constant education to achieve sufficient knowledge in order to ensure quality data reporting. Quality Assurance is a requirement of all cancer programs, but one must act on the results in order to utilize the information this provides most effectively. It is not enough to show that QA was performed on 10% of analytic cases; it is valuable to show how this information was then applied to provide additional learning opportunities in the areas where the education was most needed.

By cycling the QA and education, not only can the education needs be identified, but the education can also be validated. All primary sites can be evaluated for quality, rather than only the high-volume primary sites. Providing small bits of information through the weekly questions as well as site-specific education monthly allows for repeated exposures to a topic so that learning can occur. Scheduling a weekly question prior to the site-specific education allows evaluation of current knowledge. Scheduling additional weekly questions following the monthly education emphasizes topics from the education, evaluates whether learning has occurred, and further cements the information into knowledge.

The cycle of providing continual education, quality checks, and tailored feedback will result in consistently high-quality data, benefiting not only the cancer program, but the entire cancer community.

Footnotes

Authors’ Note

No human or animal subjects were involved in this research. The authors confirm that the manuscript has been read and approved by all named authors and that there are no other persons who satisfied the criteria for authorship but are not listed. The authors further confirm that the order of authors listed in the manuscript has been approved by all of us.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.