Abstract

We revised 2 of the 14 items on the Multiple Errands Test–Home (MET-Home). We compared the original and revised versions while accounting for clinical and demographic covariates of interest. Archival data (N = 144) from neurologically healthy participants (n = 44 revised version, n = 34 original) and survivors of stroke (n = 29 revised version, n = 37 original) were analyzed. We calculated internal consistency and assessed external validity via correlations with the Montreal Cognitive Assessment (MoCA), Barthel Index, and Nottingham Extended Activities of Daily Living scale (NEADL). We provided preliminary reference data (n = 78). MET-Home versions were not statistically different on key outcome scores (p > .05). MET-Home was internally consistent, with no significant difference between versions (α = .63 original, α = .80 revised, p = .07). Correlations between MET-Home and external measures were moderate (MoCA: r = .56, p < .001; Barthel: r = .46, p < .001; NEADL: r = .35, p < .001). The revised MET-Home is not statistically different from the original and is just as internally consistent, and we provide further evidence of the test’s validity. We caution that comprehensive (age- and education-corrected) normative data are still lacking.

Cognitive impairment is a frequent consequence of stroke, occurring in as many as 84%+ of survivors acutely (Kusec et al., 2023) and 40% at 1 year post stroke (Sexton et al., 2019). As improvements in the acute management of stroke have led to more adults surviving stroke, the number of people living with the effects of stroke has increased (Rothwell et al., 2011). Living with the cognitive consequences of stroke can have a direct impact on functioning in everyday life and contribute to restricted engagement in valued roles and life tasks.

Executive functions are a set of higher-order cognitive processes composed of three core components: (a) inhibitory control (including selective attention), (b) working memory, and (c) cognitive flexibility (Diamond, 2013). Deficits in executive function contribute to challenges with complex everyday living tasks like employment, driving, and financial management. Executive functions are typically evaluated with structured domain-specific assessments delivered in clinical settings that are limited in capturing the unpredictable situations and circumstances that arise in everyday life (Rotenberg et al., 2020). Domain-specific refers to an assessment that attempts to measure a specific aspect of cognition in a specific context (i.e., just memory of images, not sustained attention, or language or praxis for example), as opposed to domain-general which refers to global cognitive processes (Roberts, 2008). Shallice and Burgess (1991) created the Multiple Errands Test (MET) to better capture how executive functioning manifests in the real-world (Burns et al., 2019). In general, MET contains multiple tasks to complete within a set of rule constraints to contribute to the complexity of the assessment. Typical rules include minimizing the amount of money spent and time taken to complete the test (Rotenberg et al., 2020). The MET has very high verisimilitude as it is conceptually similar to everyday life per its design as well as high veridicality which is the association of the test to functioning or activities in everyday life, given some evidence of association between MET performance and functional outcomes (Webb & Demeyere, 2023b). Most recently, for example, the Oxford Digital Multiple Errands Test (OxMET) was found to predict functional ability and global cognitive functioning 6 months following stroke, when administered within 2 months of the stroke (Webb & Demeyere, 2023b).

There are multiple versions of METs in different settings, including hospitals (Alderman et al., 2003; Knight et al., 2002), colleges (Robnett et al., 2021), virtual reality (Cipresso et al., 2014; Erez et al., 2013; Jovanovski et al., 2012; Law et al., 2006; McGeorge et al., 2001), app-based versions (Webb et al., 2021), generic versions (Basagni et al., 2021), and home versions both in person (Burns et al., 2019; Lai, Yan, et al., 2020) and remotely (MacKinen & Burns, 2022). A review of the psychometric properties of MET revealed that there is high inter-rater reliability, test–retest consistency, and known-group validity, but limited evidence of convergence with executive function measures or activities of daily living (ADLs), and poor evidence to summarize the ecological validity of the test (Rotenberg et al., 2020). A potential explanation for the poor evidence for convergence is simply the distinction between laboratory-style assessment and ecological assessment in complex and ill-structured environments. For instance, the first MET was designed because individuals were performing well on traditional standardized assessments but were profoundly impacted in daily life (Shallice & Burgess, 1991). Furthermore, few studies have used valid ADL scales.

The MET-Home (Burns et al., 2019) is a clinician co-designed, standardized, home-based version of the MET which has generic tasks which could be completed in most homes globally. It is designed for multiple client groups but validated primarily in stroke. There are 14 tasks that typically require entry to different rooms in a residence and have different modes of task completion (e.g., some require calling someone and others require looking up information). The MET-Home aims to be challenging but doable, that is, no floor or ceiling effects, and to provide a sensitive measure of the consequences of executive dysfunction. For this, tasks are selected to require moving about, organizing oneself, and planning ahead, thus are challenging if not attending to the information (or with executive dysfunction), but should one attend properly and plan ahead (which are two things directed by the task itself) the tasks are not difficult to complete.

The original investigation of the MET-Home included 46 age, education, and gender-matched survivors of stroke and neurologically healthy adults (Burns et al., 2019). Their project established the internal consistency and inter-rater agreement of the MET-Home, which was found to be high (all 14 items Cronbach’s α = .73, and inter-rater reliability was intraclass correlation coefficient > .88). They established convergent validity of the MET-Home through comparison to an ecologically valid performance-based assessment of executive function (Baum et al., 2008) and a measure of processing speed (Smith, 1968). However, no significant associations were found between the MET-Home and the Montreal Cognitive Assessment (MoCA; Nasreddine et al., 2005), structured and abstract executive functioning, and self-reported executive and emotional impairments in daily life (i.e., the Dysexecutive Questionnaire from the Behavioral Assessment of Dysexecutive Syndrome; Wilson et al., 1997). The non-convergent findings, albeit underpowered, suggest that the MET-Home may measure objective performance-based executive dysfunction that affects everyday life, but it may not capture basic executive processes which are evaluated in structured and abstract neuropsychological testing. The authors suggested that as real life tasks are complex and multidimensional (Morrison et al., 2015) and that structured neuropsychological tests individually do not capture this, the MET-Home may capture the reality of executive dysfunction well (Burns et al., 2019).

A recent investigation compared the MET-Home (Webb & Demeyere, 2023a) to the computer tablet-based OxMET (Webb et al., 2021). Data from 98 participants (48 stroke survivors [M = 1.38 years post stroke] and 50 healthy aging controls) were presented on performance on both tasks, feasibility of both tasks in terms of predicting completion and completion rates, and some qualitative feedback from participants on acceptability was provided. The MET-Home converged with the OxMET and was found to be feasible. Participants accepted the MET-Home but commented on how challenging it was and that some tasks were inappropriate and should be replaced. Particularly, Item 1 (“Call a plumber and ask about the cost of a 1hr service call”) and Item 12 (“Locate the cost of ordering a large two-item pizza for delivery”) were deemed unacceptable by participants, with many not completing the tasks at all or only partially and indicating in feedback that they did this deliberately. As part of their study, the authors replaced the two items with semantically similar tasks which were deemed more acceptable to participants, based on feedback in administration and completion rates (Webb & Demeyere, 2023a).

In the present study, we aimed to document the revision process and to compare the revised version with the original MET-Home and provide further evidence of reliability and validity. Furthermore, we aimed to establish the relationship between the MET-Home and MoCA in a sufficiently powered, larger sample, along with ADLs and functioning. Our overarching aim was to provide psychometrics for the revised MET-Home and support its convergent validity.

We expected that the MET-Home would significantly relate to global cognitive functioning measures above a benchmark of r = .30 (used in previous research; Rotenberg et al., 2020; Webb & Demeyere, 2023a, 2023b) due to the need for global cognitive skills to complete a complex errands task (Rotenberg et al., 2020). To provide some evidence of external convergent validity, we compared the MET-Home to instrumental and basic ADLs. We expected that the MET-Home would associate more strongly with instrumental ADLs than with basic ADLs, because instrumental ADLs place greater demand on cognitive processes than basic ones. However, we expected that if there were a small association of MET-Home with basic ADLs, this may be due to the measurement of independent action captured in the MET-Home, which is essential in basic ADLs such as those measured by the Barthel Index. Similar associations of MET to ADLs have been found for other METs (Rotenberg et al., 2020; Webb & Demeyere, 2023a) and support veridicality.

Method

We report how we determined our sample size, all data exclusions (if any), all manipulations, and all measures in the study (Simmons et al., 2012). Our manuscript adheres to the COSMIN guideline for studies on measurement properties (Gagnier et al., 2021). Ethical approval was granted by the Medical Sciences Interdivisional Research Ethics Committee at the University of Oxford for the U.K. arm and by the institutional review board of Texas Woman’s University for the U.S. arm. All participants in all groups provided written informed consent.

Study Design

This project reports on secondary data analysis of a cross-sectional observational study.

Participants

We collated secondary MET-Home data (N = 151) from the original MET-Home study in the United States (N = 46, n = 23 stroke, n = 23 healthy aging, all using the original MET-Home; Burns et al., 2019) with data from a U.K. sample (N = 105, n = 60 healthy aging, n = 45 stroke) from a recent study (Webb & Demeyere, 2023a). Seventy-one participants completed the original MET-Home (n = 34 controls), and 73 completed the revised version (n = 44 controls). We note that we have previously reported on a subset of these data, only in comparison to the OxMET (Webb & Demeyere, 2023a; n = 98). Originally, the U.S. and U.K. samples were convenience sampled from research databases; the U.S. study additionally used snowball sampling.

The “control” group presented in the current study is made up of independent community living adults that self-identify as healthy aging. The neurologically “healthy” condition of the control group was based on self-reported symptoms when prompted about previous neurological or “brain” incidents, history, conditions, or diagnoses. The original studies did not exclude control group participants based on any diagnoses or comorbidities unreported as this reflects a typical older adult group who typically have at least two comorbidities.

In the United Kingdom, stroke survivors were recruited from stroke databases from the Oxford Cognitive Screening program (Demeyere et al., 2015; Kusec et al., 2023; Milosevich et al., 2023).

Inclusion/Exclusion Criteria by Country

In the United States, stroke survivors had to be those alive ≥90 days post stroke with mild to moderate stroke severity, no dementia, independent before stroke, less than moderate depression, home dwelling >30 consecutive days, ≥18 years old, and have good English-speaking, reading, and understanding abilities (determined via recruitment adverts requiring good to adequate English abilities). The U.S. research team ensured that control participants met the same criteria and were fully independent at the time of the study. For exclusion, there could be no history of previous stroke or other neurological condition, or other conditions that could affect executive function, or other impairments that affect the ability to carry out the study. Control participants had to score ≥90 on the Barthel (out of 100).

In contrast, in the U.K. sample, both controls and stroke survivors needed to be ≥18 years old, dwelling in a familiar residence they could use to complete the tasks, have sufficient English to comprehend questions in the task, and be able to concentrate for 20 minutes and give informed consent (both deemed by the patient multidisciplinary team). The U.K. sample exclusion criterion was to have any deafness or blindness which could not be reasonably adjusted for to complete the task.

Both the U.S. and U.K. control groups were screened with the MoCA but not excluded based on performing below cutoff (cutoffs of 26 or 23 used in the original studies). Thus, the U.S. sample was highly selected, and the U.K. sample was more broadly recruited. In later country combined analyses, we have a diverse and varied sample.

Flow of Data Included in This Study

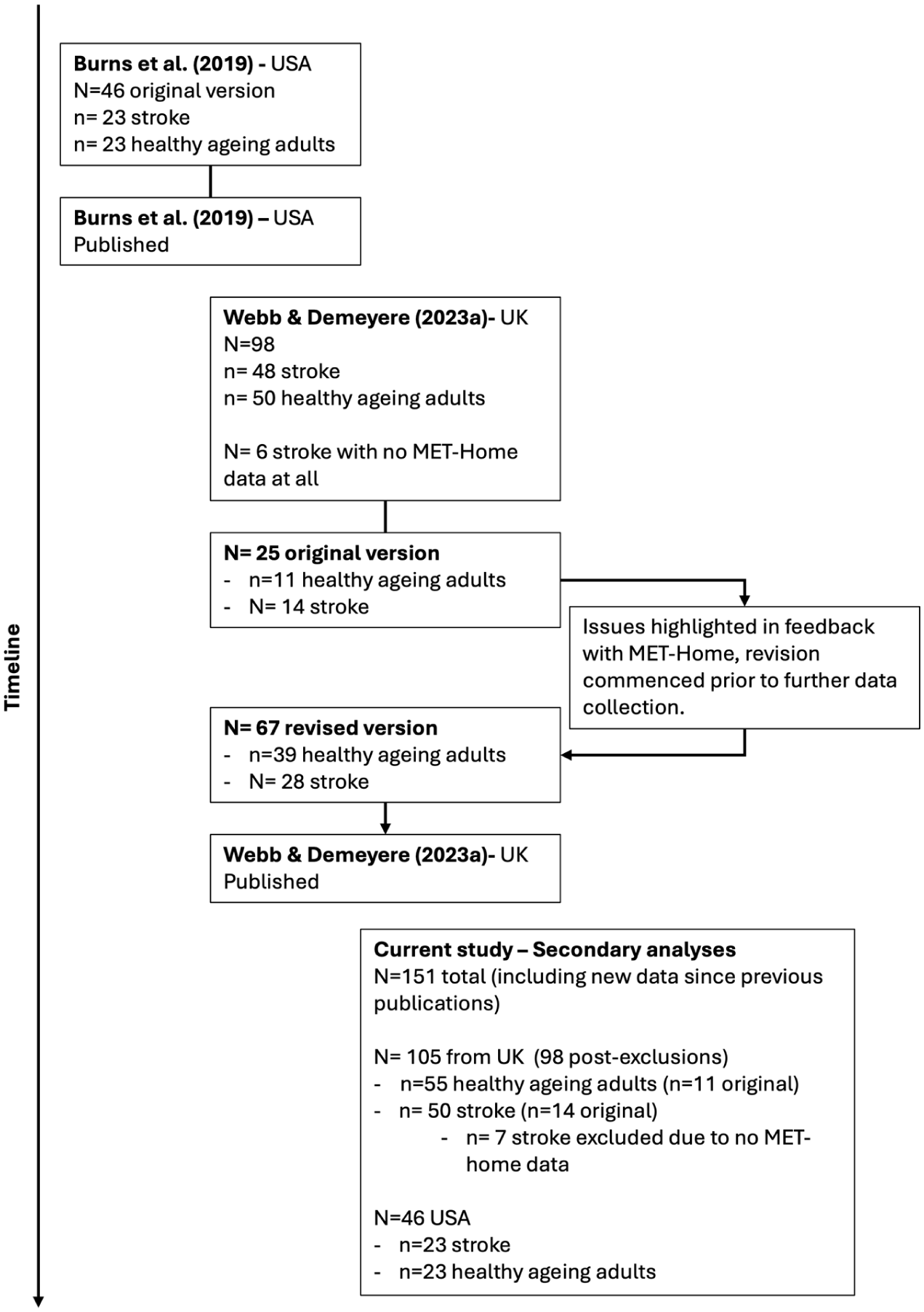

For simplicity, we report our secondary data analysis subsamples and which participants completed the revision and the original in Figure 1.

Timeline of Data Collected, Published, and Used in the Secondary Analyses Presented in This Manuscript; From the Original MET-Home Published in 2019, to the Comparison of MET-Home to OxMET, to the Current Study Collating All Data, and Adding New Data Collected Post-Publication.

Measures

In brief, the following measures from each study are reported here as relevant to the current study aims: MET-Home (Burns et al., 2019), MoCA (Nasreddine et al., 2005; measured out of 0–30, with higher scores reflecting better global cognition), Barthel Index (out of 0–20, with higher scores indicating greater basic independence; Mahoney & Barthel, 1965), Nottingham Extended Activities of Daily Living (NEADL; Harwood & Ebrahim, 2002; 0–66, with higher scores indicating greater independence in instrumental activities), stroke severity measured by the National Institute of Health Stroke Scale (Brott et al., 1989; Lyden, 2017; NIHSS; measured 0–42, mild = 1–4, moderate = 5–15, moderate to severe 16–20, and severe = 21–42), time in days since stroke, and modified Rankin Scale (mRS; Broderick et al., 2017; 0–6, with higher values indicating greater disability). Both the NEADL and Barthel are self-reported measures, with the researcher going through items with participants and recording their scores or allowing the participant to answer themselves while monitoring their understanding. MoCA was administered by a trained and licensed researcher alongside the mRS. Further measures were used in prior research studies not included here (Burns et al., 2019).

The MET-Home is extensively detailed elsewhere (Burns et al., 2019), but in brief, it comprises 14 tasks that must be completed within a residence, and the tasks require different modalities (internet or search references, phones, tools, etc.) for accurate completion. See Table S1 for items. The assessor first reads out a script of instructions to the participant and gives them an instruction sheet with the errands to complete and the rules to follow. After a period of planning time in which the participant can plan on a piece of paper if they wish (for as long as they wish; this is timed), the participant is required to complete the errands in an order decided by them. Some tasks necessitate an order which must be realized by the participant for accurate completion. Tasks include setting an alarm and getting an item, finding out the price of a food item, or locating items. The assessor follows the participant with a clipboard, marking accurate completion of tasks, partial completion (e.g., set an alarm but didn’t get an item), or omissions (i.e., failure to complete a task at all). Furthermore, the assessor marks the efficiency of the participant as well as how many times a participant could have completed a task but did not. The same instructions, methods, and procedures were followed across U.K. and U.S. samples.

The MET-Home has multiple outcome metrics. “Metrics” refers to the way the test is measured and the units in which we describe the test outcomes. The four key metrics are the number of accurately completed tasks, the number of omitted tasks, the number of partially omitted (incomplete or wrong modality) tasks (each out of 14), and the total number of errors (the sum of partial omissions, omissions, and frequency of rule breaks). In addition to the four key outcome metrics, there are time metrics, such as planning time before starting the task (which is timed, but unlimited) and duration of task, and error metrics, such as frequency of rule breaks, number of rules broken, and inefficiencies. These additional metrics are not described in detail because our analyses focus on the key outcomes.
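To make the relationship between the four key metrics concrete, the following is a minimal scoring sketch in Python. It is not the authors' scoring procedure: the outcome labels ("accurate", "partial", "omitted"), the function name, and the example data are hypothetical and chosen only for illustration. It assumes each of the 14 tasks receives exactly one outcome.

```python
from collections import Counter

N_TASKS = 14  # the MET-Home comprises 14 tasks
VALID_OUTCOMES = {"accurate", "partial", "omitted"}  # hypothetical labels


def score_met_home(task_outcomes, rule_breaks):
    """Return the four key metrics from per-task outcomes.

    task_outcomes: list of 14 labels, one per task.
    rule_breaks:   frequency of rule breaks recorded by the assessor.
    """
    if len(task_outcomes) != N_TASKS:
        raise ValueError(f"expected {N_TASKS} task outcomes")
    counts = Counter(task_outcomes)
    unknown = set(counts) - VALID_OUTCOMES
    if unknown:
        raise ValueError(f"unknown outcome labels: {sorted(unknown)}")
    return {
        "accuracy": counts["accurate"],
        "omissions": counts["omitted"],
        "partial_omissions": counts["partial"],
        # Total errors sum partial omissions, omissions, and rule breaks.
        "total_errors": counts["partial"] + counts["omitted"] + rule_breaks,
    }


# Invented example: 10 accurate tasks, 3 partial, 1 omitted, 2 rule breaks.
example = score_met_home(
    ["accurate"] * 10 + ["partial"] * 3 + ["omitted"], rule_breaks=2
)
print(example)
```

Note that accuracy is the only metric on which higher values indicate better performance; the other three are error counts.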

Process of Revising the MET-Home

In Webb and Demeyere (2023a), 25 stroke survivors and healthy controls had completed the original MET-Home when it became clear, from informal qualitative feedback that was summarized descriptively rather than thematically, that Item 1 (“Call a plumber and ask about the cost of a 1hr service call”) and Item 12 (“Locate the cost of ordering a large two-item pizza for delivery”) were unacceptable. The unacceptability was determined through multiple negatively valenced references to these items and refusal to complete the items. In this context, valence refers to the emotion behind the feedback; for instance, “I hated that test” would be negatively valenced and “I really liked that test” positively valenced. Eighteen of the first 25 participants did not complete Item 1, and 16 participants did complete Item 12 correctly (eight omitted it completely), and they commented on the unacceptability of the two items. For the first item, asking for a quote was felt to be unnecessary “time-wasting” of busy professionals, and for Item 12, example comments included that participants could not find the cost of a large two-item pizza for delivery as their village was too small/far out for any pizza place to deliver it. After testing, the examiner discussed with the participants that they could still get a price online or through others, even if that place would not deliver, but participants felt this was not in line with the question. To clarify, participants were able to complete the problem items but chose not to because they felt uncomfortable and/or did not want to waste others’ time.

To replace items, two video discussions were held with the authors ND, SPB, and SSW, who constitute an expert in neuropsychological test design, an occupational therapist who has expertise in test design and clinical practice, and a psychometrician. We did not base replacement items on statistical properties. We based item selection on the original intended meaning of each item. For example, Item 1 required calling someone to enquire about a cost. We went back and forth on possible items that may convey the same meaning, such as calling a gym for a cost, which is less personal than calling a plumber directly. We trialed the item with two participants and discovered that participants were more confused as to what to enquire about, for how long, whether they needed to sign up for real, and so on. We then discussed again and decided on specifying what type of service to call and what to call about, with examples to clarify this for participants. This still required the participant to plan and to enquire about the right thing, while also being efficient and not staying on the phone too long. Thus, the challenge level was kept the same, in principle, for Item 1 and the revised Item 1, but with more scope to accommodate different demographic scenarios. Furthermore, for Item 12, we discussed how we could broaden the item from pizza, which may not apply to many households or cultures, to food in general, which also broadens the prospects of who to call.

The Final Revised Version

Finally, after discussion, and trialing/piloting with a handful of participants, Item 1 was replaced by “Call a membership service provider (e.g., gym, club, community centre etc..) and ask about the cost of a membership plan (can be daily, weekly + etc.).” This thus remained a phone call task, related to a service. Item 12 was revised to “What is the exact cost of ordering some food to your home (inclusive of food costs; e.g., can be a takeaway, or food shopping etc)?” Item 12 thereby retained the need to find a financial cost related to food delivery.

We did not re-analyze feedback from the U.K. sample (who were the only sample to give feedback); see Webb and Demeyere (2023a) for methodology and results.

Procedure

Data used in this study were collected from participant homes in the United States and United Kingdom. After consenting participants and gathering demographic data, the research team administered the MET-Home to all participants with occasional help of various research assistants. In general, one research team member administered all tests, but occasionally one of the trained assistants would administer the test under supervision.

Data Analysis

We combined neurologically healthy controls from both countries into one control group and did the same for stroke survivors. Before we could analyze the data to establish the MET-Home Revised version, we first compared the U.K. and U.S. sample characteristics to identify potential differences affecting comparisons. To compare the original MET-Home to the revised version, we statistically compared accuracy, omissions, and partial completions on the versions using ANCOVA analysis, co-varying for age, education, mobility, and mRS. Importantly, all data collected on the MET-Home is included in this study, and it is clarified throughout whether we refer to the original or revised version and the United States, the United Kingdom, or both samples combined.

For the main analyses, we first examined reliability of the MET-Home Revised in comparison to the original version, irrespective of sample origin. Note that internal consistency was only examined in the U.K. data, as this was the only sample with MET-Home item level data. We asked the U.S. MET-Home authors for the item level data; these had been used originally for scoring but were not available for sharing.

Next, we established some external convergent validity evidence of the MET-Home by examining the relationship between the MET-Home and the MoCA (total score out of 30, higher is better), the Barthel Index (total score out of 20, higher is more independent), and the NEADL (total score out of 66, higher is more independent) measures. We used both correlation and linear regression methods where appropriate and report these transparently. For convergence, we anticipated associations between the MET-Home and global cognition and ADLs, both basic and instrumental, with a greater association between the MET-Home and instrumental ADL than with basic ADL, as instrumental activities are higher level. This is in line with research on other METs and the OxMET from the original comparison study (Rotenberg et al., 2020; Webb & Demeyere, 2023a). For discriminant validity, given that we used secondary data, there were no tests available in the open data that could feasibly be used for discrimination.
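The benchmark comparison described above can be illustrated with a short Python sketch. This is an assumption-laden illustration, not the published analysis, which was run in R: the scores below are invented toy values, and the p value uses a Fisher z normal approximation rather than the exact t test a statistics package would report.

```python
from math import atanh, sqrt
from statistics import NormalDist, mean

BENCHMARK = 0.30  # convergent validity benchmark (Rotenberg et al., 2020)


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length lists."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def approx_p_value(r, n):
    """Approximate two-sided p value for H0: rho = 0 (Fisher z; requires |r| < 1)."""
    z = atanh(r) * sqrt(n - 3)
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Invented toy scores for eight hypothetical participants.
toy_met_accuracy = [6, 8, 9, 10, 11, 12, 13, 14]
toy_moca = [20, 24, 22, 25, 27, 26, 29, 28]

r = pearson_r(toy_met_accuracy, toy_moca)
p = approx_p_value(r, len(toy_moca))
meets_benchmark = abs(r) >= BENCHMARK
print(f"r = {r:.2f}, approximate p = {p:.4f}, meets benchmark: {meets_benchmark}")
```

For error metrics (omissions, partial omissions, total errors), the expected correlation with external measures is negative, which is why the sketch compares the absolute value of r with the benchmark.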

We present the new combined normative reference data of all neurologically healthy controls. This is the only reference data for the MET-Home, but due to sample size, we did not break the data down into education or age groupings.

The authors of the MET-Home did not explicitly presume an underlying structure of the MET-Home, nor explicitly state that items measure certain aspects of the executive function umbrella. Most MET have no presupposed structure, nor evaluate internal structure, as shown in the 2020 systematic review on MET psychometric properties (Rotenberg et al., 2020). We therefore have not performed any confirmatory internal structure analysis but present an exploratory factor analysis (EFA) in Supplemental Materials, which has further details of fit. To fit the EFA, we used parallel analysis to determine the number of factors possible and used the results (two factors) in an EFA using maximum likelihood estimation and oblimin rotation. We used a cutoff of .30 for retaining variables contributing to each factor.

A post hoc power sensitivity analysis showed that, to detect an effect size of .30 (convergence benchmark; Rotenberg et al., 2020) in the correlational analyses with an alpha of .05 and n = 144 people, our power was approximately 97%. When correcting for multiple comparisons using a basic Bonferroni correction, the power was reduced to 86%.
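As a rough check on these figures, power for a correlation can be approximated with the Fisher z transformation. The Python sketch below is illustrative only: the reported values presumably come from the pwr package in R, which applies a slightly different approximation, so results should be close but need not match exactly. The number of comparisons used for the Bonferroni correction is not stated above, so the value of 5 here is purely an assumption for illustration.

```python
from math import atanh, sqrt
from statistics import NormalDist


def correlation_power(r, n, alpha=0.05):
    """Approximate two-sided power to detect a correlation r with n people."""
    std_normal = NormalDist()
    effect = atanh(r) * sqrt(n - 3)              # Fisher z scaled by its standard error
    critical = std_normal.inv_cdf(1 - alpha / 2)
    # Probability of exceeding the critical value in either tail.
    return std_normal.cdf(effect - critical) + std_normal.cdf(-effect - critical)


N_COMPARISONS = 5  # assumption: not reported in the text

power_uncorrected = correlation_power(0.30, 144)
power_bonferroni = correlation_power(0.30, 144, alpha=0.05 / N_COMPARISONS)

print(f"uncorrected power: {power_uncorrected:.3f}")
print(f"Bonferroni-corrected power (m = {N_COMPARISONS}): {power_bonferroni:.3f}")
```

Dividing alpha by the number of comparisons raises the critical value, which is why the corrected power is lower than the uncorrected power for the same effect size and sample.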

Data were analyzed in RStudio (Posit team, 2024) version 2024.12.0+467 using R version 4.4.2 (R Core Team, 2022), and all data and analysis scripts are available to download and review under a CC BY 4.0 attribution license (www.doi.org/10.17605/OSF.IO/FG2P3). We used the following additional packages for the production of the RMarkdown manuscript and analysis: psych version 2.4.12 (Revelle, 2018), effsize version 0.8.1 (Torchiano, 2020), cocron version 1.0.1 (Diedenhofen & Musch, 2016), ggpubr version 0.6.0 (Kassambara, 2023), pROC version 1.18.5 (Robin et al., 2011), performance version 0.13.0 (Lüdecke et al., 2021), Hmisc version 5.2.2 (Harrell, 2021), rstatix version 0.7.2 (Kassambara, 2021), rcompanion version 2.5.0 (Mangiafico, 2021), cowplot version 1.1.1 (Wilke, 2020), tidyr version 1.2.0 (Wickham, 2021), dplyr version 1.1.4 (Wickham et al., 2019), readxl version 1.3.1 (Wickham & Bryan, 2019), ggplot2 version 3.3.5 (Wickham, 2016), kableExtra version 1.3.4 (Zhu, 2021), and pwr version 1.3.0 (Champely et al., 2015).

Results

Participants

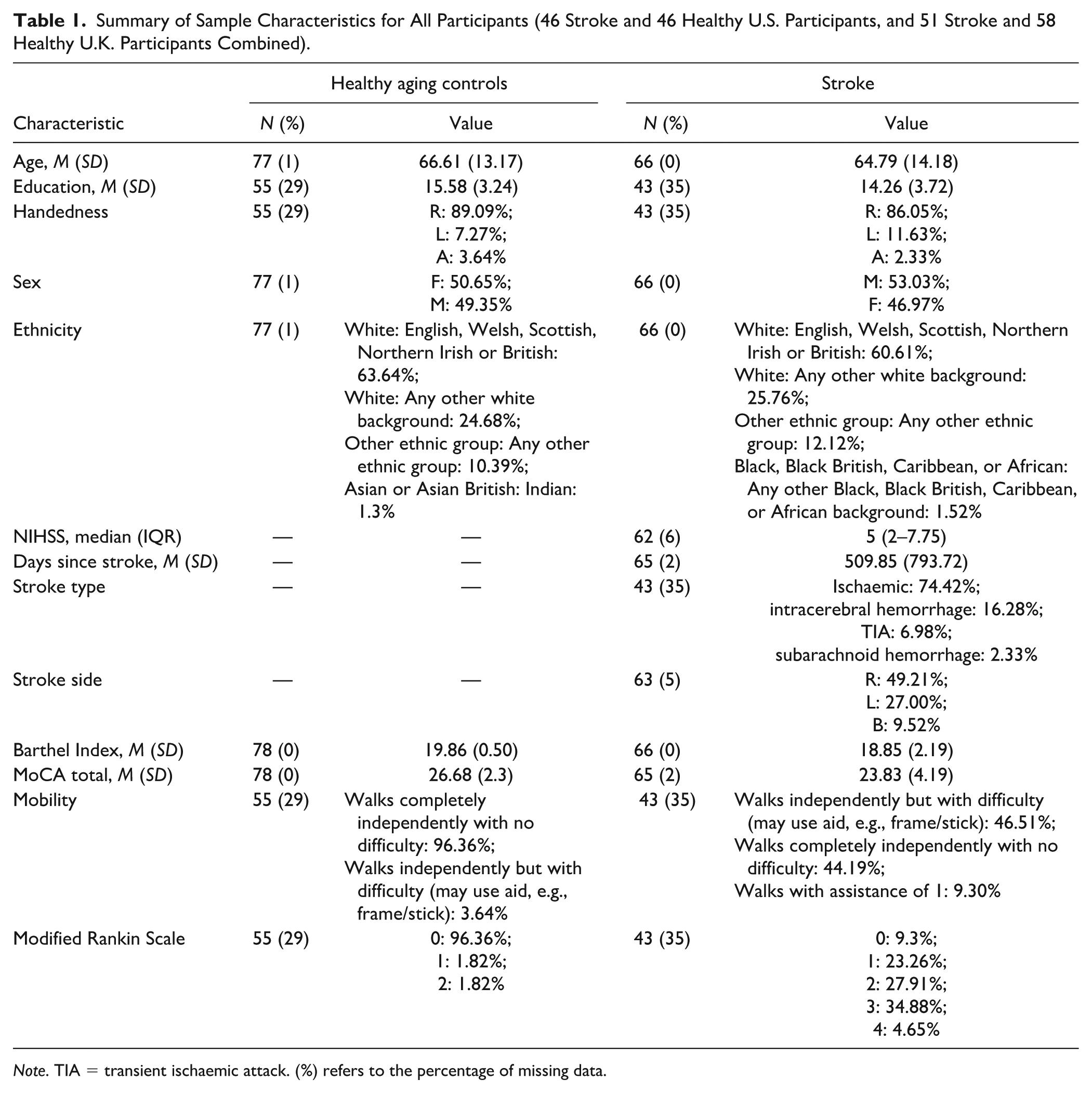

In the United Kingdom, 51 stroke survivors and 58 healthy controls were recruited, and in the United States, 26 stroke and 26 controls were recruited. The U.K. and U.S. samples were statistically contrasted using t-tests and chi-square tests, adhering to necessary assumptions. Multiple demographic variances in the samples for both controls and stroke survivors were observed, predominantly related to age and ethnicity. The discrepancies in ethnicity can largely be attributed to the samples hailing from different nations. The North American sample, for both controls and stroke survivors, was notably younger (t = 5.42, p < .001, d = 1.25) and had milder strokes (t = 4.30, p < .001, d = 0.98, see Table S2 in the Supplemental Materials for further comparisons). The consolidated sample for analysis, encompassing the U.K. and U.S. samples, consisted of 66 stroke survivors and 78 healthy controls. Thirty-four controls and 37 stroke survivors were administered the MET-Home original, and 44 controls and 29 stroke survivors were administered the revised version. The demographic data for this consolidated sample are outlined in Table 1. Any missing data are denoted in brackets, indicating the percentage relative to N per demographic. Most of the missing data arises from varying demographic protocols between samples. No data imputation was executed for the entire sample. Full data breakdowns are available in Supplemental Table S2.

Summary of Sample Characteristics for All Participants (46 Stroke and 46 Healthy U.S. Participants, and 51 Stroke and 58 Healthy U.K. Participants Combined).

Note. TIA = transient ischaemic attack. (%) refers to the percentage of missing data.

Comparison Between MET-Home and MET-Home Revised

Upon visual inspection of item responses for both the original and revised MET-Home, it was observed that response patterns were similar (refer to Figure S1). For brevity, results for comparisons are presented in Supplemental Materials. In summary, there were largely no differences (p > .05) found between versions on the four main metrics from the MET-Home (i.e., accuracy, omissions, partial omissions, and total errors), except where stroke survivors had a greater number of partial completions than controls, and made a greater number of errors in general.

Reliability

A split-half reliability analysis (Cronbach’s alpha with Spearman-Brown correction) was conducted on all MET-Home accuracy item level data (14 items) for the U.K. sample, as it was the only item data available. The Spearman-Brown correction is used to account for the lower reliability that results from using only half of the items in a split-half analysis (Parsons et al., 2019). Internal consistency differed numerically between the original and revised versions of the MET-Home. The original version (n = 23) had a consistency of α = .63 (95% CI [0.37, 0.81]), and the revised version (n = 73) had a consistency of α = .80 (95% CI [0.72, 0.86]). A comparison of the Cronbach’s alphas of each version via the “cocron” package for R (Diedenhofen & Musch, 2016) revealed that the consistencies were not statistically different, χ²(1) = 3.24, p = .07. Across both versions in the U.K. sample, combined consistency was high (α = .80, 95% CI [0.74, 0.85]) and was similar to the originally reported reliability (e.g., α = .73; Burns et al., 2019).
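For readers less familiar with these statistics, the Python sketch below shows the standard formulas for Cronbach's alpha and the Spearman-Brown correction. It is an illustration under stated assumptions rather than the analysis reported here, which was run in R: the item matrix is an invented toy example with four binary items, not MET-Home data, and the function names are hypothetical.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a list of participants, each a list of item scores."""
    k = len(item_scores[0])                       # number of items
    columns = list(zip(*item_scores))             # one tuple of scores per item
    sum_item_variances = sum(variance(col) for col in columns)
    total_variance = variance([sum(row) for row in item_scores])
    return k / (k - 1) * (1 - sum_item_variances / total_variance)


def spearman_brown(r_half):
    """Step a half-test correlation up to the predicted full-length reliability."""
    return 2 * r_half / (1 + r_half)


# Invented toy data: six hypothetical participants, four items scored 0/1.
toy_items = [
    [1, 1, 1, 0],
    [1, 0, 1, 1],
    [0, 0, 0, 0],
    [1, 1, 1, 1],
    [0, 1, 0, 0],
    [1, 1, 0, 1],
]

alpha_toy = cronbach_alpha(toy_items)
print(f"toy alpha = {alpha_toy:.2f}")
print(f"Spearman-Brown for a half-test r of .50 = {spearman_brown(0.5):.2f}")
```

Alpha rises as items covary more strongly relative to their individual variances, and it equals 1 when every item yields identical scores across participants.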

External Validity

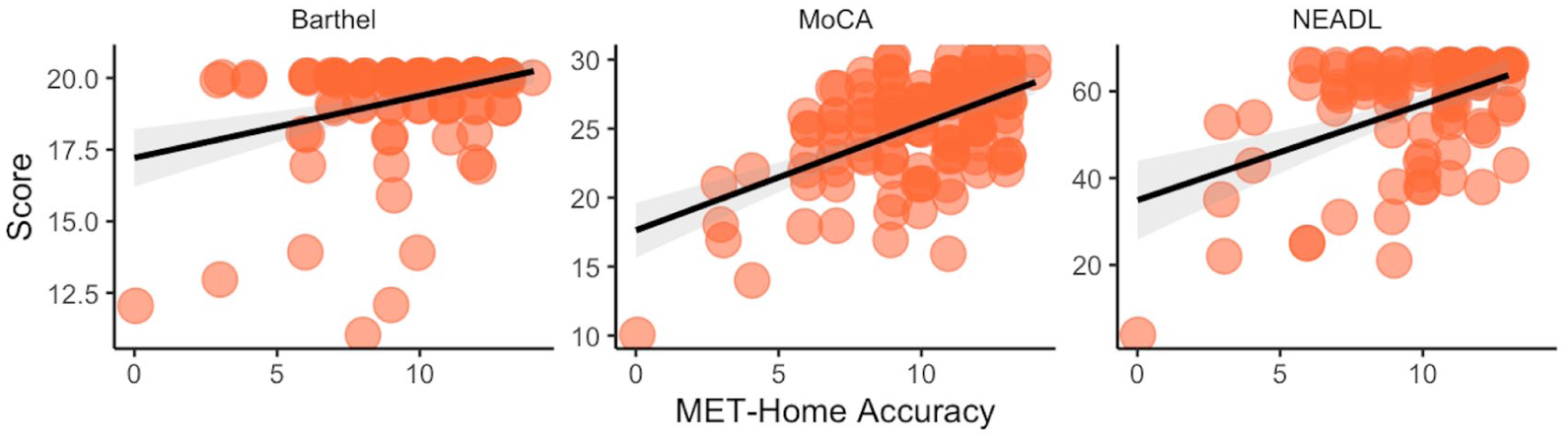

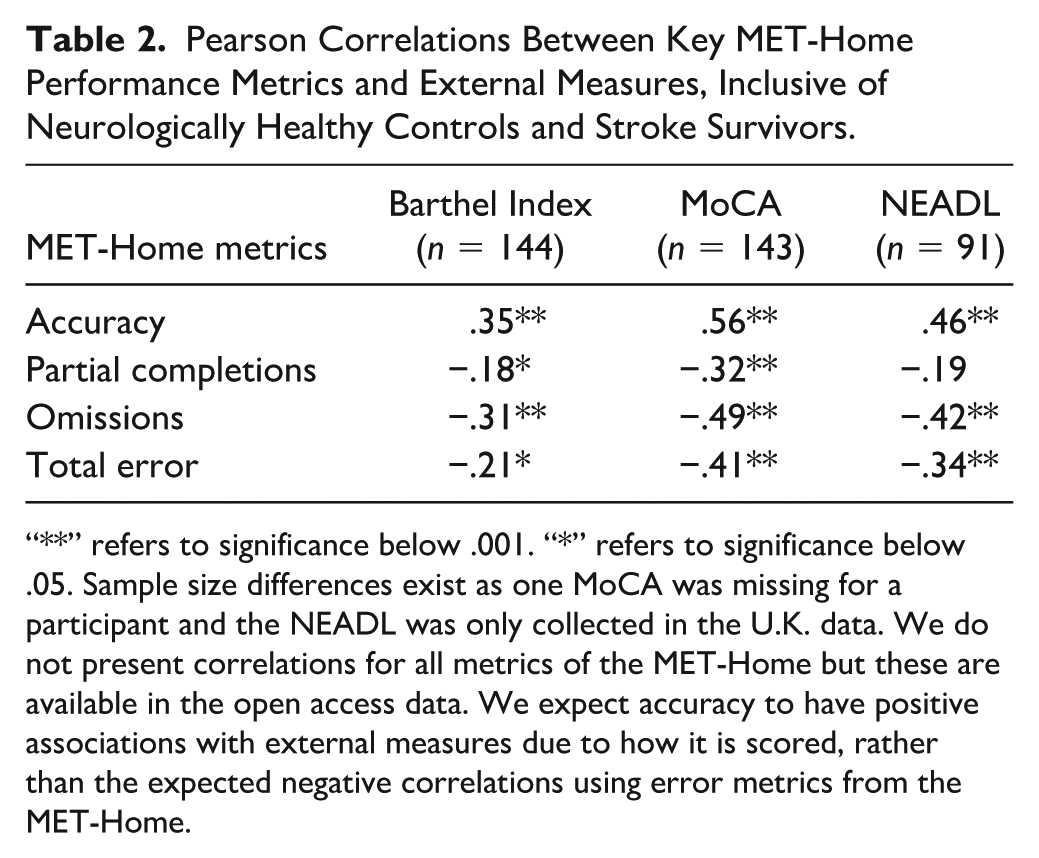

The Pearson correlations between MET-Home key metrics of accuracy, omissions, partial omissions, and total errors, and total scores from the MoCA, the Barthel Index, and the NEADL are presented in Figure 2, and raw correlations in Table 2 with all metrics. We found that MET-Home accuracy was significantly related to the Barthel, MoCA, and NEADL scores, but most strongly with MoCA, and all correlations were over the convergent validity benchmark of .30 (Rotenberg et al., 2020). Significant correlations were found between MoCA and omissions, partial omissions, and total errors, when correcting for multiple comparisons. To account for a stroke severity effect in associations, we ran multiple linear regression analyses for the stroke sample predicting MoCA total score accounting for stroke severity (NIHSS) and found significant predictive relationships only for the MET-Home metrics and not for NIHSS: MET-Home accuracy vs. severity, R² = .30, F(2, 58) = 12.43, p < .001, B_accuracy = 0.83 (t = 4.58, p < .001) and B_NIHSS = −.01 (t = −0.09, p = .93); omissions vs. severity, R² = .18, F(2, 58) = 16.65, p = .002, B_omissions = −0.78 (t = −3.16, p = .002) and B_NIHSS = −.04 (t = −0.04, p = .65); partial omissions vs. severity, R² = .15, F(2, 58) = 5.17, p = .008, B_partial omissions = −0.81 (t = −2.68, p = .009) and B_NIHSS = −.15 (t = −0.09, p = .14); and total errors vs. severity, R² = .16, F(2, 58) = 5.40, p = .007, B_total errors = −0.23 (t = −2.76, p = .008) and B_NIHSS = −.08 (t = −0.10, p = .42). The results indicate that accounting for stroke severity as a predictor of MoCA score does not diminish the predictive ability of the MET-Home.

Scatter Plots Between MET-Home Accuracy and Measures of Functioning, Cognition, and Activities of Daily Living (Data Points Have Visual Jitters Imposed to Aid in Visual Discrimination of Individual Participants).

Pearson Correlations Between Key MET-Home Performance Metrics and External Measures, Inclusive of Neurologically Healthy Controls and Stroke Survivors.

“**” refers to significance below .001. “*” refers to significance below .05. Sample size differences exist as one MoCA was missing for a participant and the NEADL was only collected in the U.K. data. We do not present correlations for all metrics of the MET-Home but these are available in the open access data. We expect accuracy to have positive associations with external measures due to how it is scored, rather than the expected negative correlations using error metrics from the MET-Home.

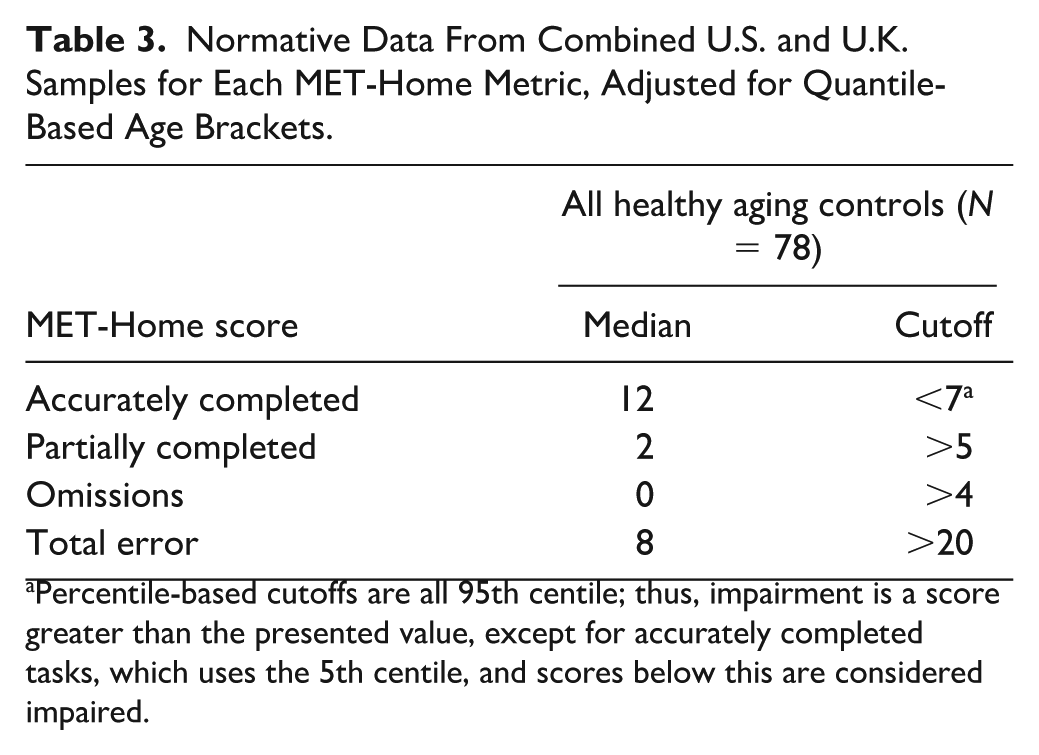

Normative Reference Data

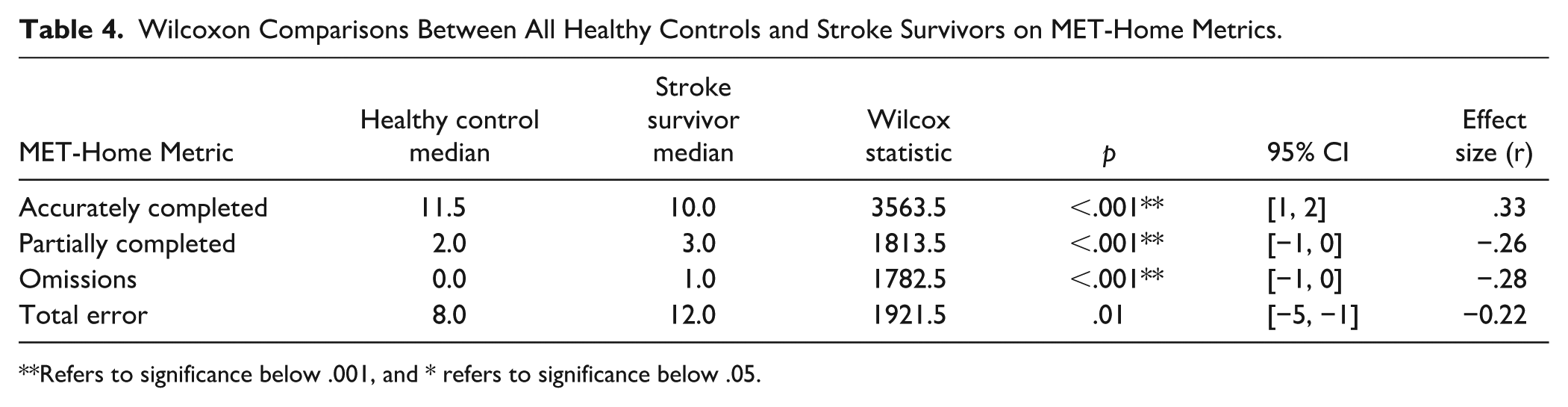

In Table 3, we present normative data with 5th and 95th percentile cutoffs for impairment classification, based on all control MET-Home data across samples and across the original and revised versions (as no statistical differences were found between versions). Accuracy is the only metric with a 5th percentile cutoff; the remaining metrics are error metrics and use 95th percentile cutoffs. Initial analysis of demographic effects on MET-Home accuracy revealed that age was significantly associated with accuracy scores, r(141) = −.38, p < .001, whereas education was not, r(96) = .14, p = .17. Note that the differing degrees of freedom were due to differences in country data protocols and did not affect statistical power. Due to sample size, we did not compute age-corrected normative data. New normative cutoffs by age group can be developed in larger samples in future research. We compared performance on the MET-Home (all versions and countries) between healthy controls and stroke survivors and present the results in Table 4.
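The percentile-based classification rule can be sketched as follows. This is an illustrative example under stated assumptions, not the procedure used to build Table 3: the control scores are invented, the function names are ours, and the inclusive interpolation method is one of several reasonable percentile definitions.

```python
from statistics import quantiles


def percentile_cutoffs(control_scores):
    """Return the (5th, 95th) percentiles of a list of control scores."""
    cut_points = quantiles(control_scores, n=100, method="inclusive")
    return cut_points[4], cut_points[94]


def is_impaired(score, cutoff, higher_is_better):
    """Accuracy is impaired below the 5th centile; error metrics above the 95th."""
    return score < cutoff if higher_is_better else score > cutoff


# Invented control data for illustration only.
control_accuracy = [14, 13, 14, 12, 11, 14, 13, 10, 12, 13, 14, 9, 13, 12, 14]
control_omissions = [0, 1, 0, 2, 3, 0, 1, 4, 2, 1, 0, 5, 1, 2, 0]

accuracy_cutoff, _ = percentile_cutoffs(control_accuracy)
_, omissions_cutoff = percentile_cutoffs(control_omissions)

print(is_impaired(8, accuracy_cutoff, higher_is_better=True))
print(is_impaired(1, omissions_cutoff, higher_is_better=False))
```

With small normative samples the tail percentiles rest on very few observations, which is one reason larger samples are needed before age-stratified cutoffs can be derived.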

Normative Data From Combined U.S. and U.K. Samples for Each MET-Home Metric, Adjusted for Quantile-Based Age Brackets.

Percentile-based cutoffs are all 95th centile; thus, impairment is a score greater than the presented value, except for accurately completed tasks, which uses the 5th centile, and scores below this are considered impaired.

Wilcoxon Comparisons Between All Healthy Controls and Stroke Survivors on MET-Home Metrics.

“**” refers to significance below .001, and “*” refers to significance below .05.
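As an illustration of how a Wilcoxon rank-sum comparison and an accompanying effect size r can be obtained, the sketch below uses the normal approximation and the common convention r = Z/√N. That convention, the omission of tie and continuity corrections, the function names, and the toy scores are all assumptions made for the example; they are not taken from the analysis reported in Table 4.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1  # positions are 0-based, ranks 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def rank_sum_z_and_r(group_a, group_b):
    """Normal-approximation Z for group_a versus group_b, and r = Z / sqrt(N)."""
    n1, n2 = len(group_a), len(group_b)
    ranks = average_ranks(list(group_a) + list(group_b))
    rank_sum_a = sum(ranks[:n1])
    u_a = rank_sum_a - n1 * (n1 + 1) / 2
    expected_u = n1 * n2 / 2
    sd_u = sqrt(n1 * n2 * (n1 + n2 + 1) / 12)  # no tie correction
    z = (u_a - expected_u) / sd_u
    return z, z / sqrt(n1 + n2)


# Invented accuracy scores for illustration only.
controls = [14, 13, 12, 14, 13, 11, 14, 12]
stroke = [11, 9, 12, 10, 13, 8, 10, 11]
z, r = rank_sum_z_and_r(controls, stroke)
print(z, r)
```

The sign of r depends only on which group is entered first, so reversing the group order flips the sign without changing the magnitude.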

Exploration of Factor Structure

We found a two-factor structure in the MET-Home data across both the original and revised versions. Full details are in the Supplemental Materials, but briefly, the first factor comprised items related to the retrieval and application of information (including working memory, monitoring, and goal-management abilities), and the second factor comprised items related to the location and use of objects (involving planning, organization, and initiation of purposive action abilities).

Discussion

We aimed to present a revision of the MET-Home (Burns et al., 2019), compare the original and revised versions, provide internal consistency and external validity evidence for its use, and offer some normative reference data. Overall, we found few statistically significant differences between the original and revised versions of the MET-Home on the key metrics of accuracy, omissions, partial completions, and total errors; thus, we combined version data. Internal consistency was high in the U.K. sample, and this matched the high reliability found in the original U.S. sample (Burns et al., 2019). We established further evidence for the validity of the MET-Home through correlation of MET-Home accuracy with global cognitive functioning (MoCA; Nasreddine et al., 2005), functional independence (Barthel Index; Mahoney & Barthel, 1965), and instrumental ADLs (the Nottingham Extended Activities of Daily Living Scale; Harwood & Ebrahim, 2002). We found that the MET-Home was moderately related to these measures (Barthel Index, MoCA, and NEADL, respectively) in accuracy (r = .35, .56, and .46), omissions (r = −.31, −.49, and −.42), and total errors (r = −.21, −.41, and −.34), and only partially for partial completions (r = −.18, −.32, and −.19). We were unable to assess discriminant validity because the secondary data contained no measures suitable for that purpose.

New reference data and cutoffs were developed. We found significant differences between age- and education-matched neurologically healthy control participants and survivors of stroke on accuracy (p < .001, r = .33), omissions (p < .001, r = −.28), and partial completions (p < .001, r = −.26), as well as on time taken to complete the MET-Home and inefficiencies. We did not find group differences in planning time or in the number or frequency of rule breaks. We used percentile-based cutoff scores, rather than receiver operating characteristic (ROC) curve analysis or a true score criterion, to decide impairment classification. Our choice of technique was based on similarity to our other cognitive screens, which also use 5th and 95th centile cutoffs, and on the limited comparison data available for ROC analyses. We assume that stroke survivors may perform just like neurologically healthy controls, given the heterogeneity of cognitive impairment post-stroke (Kusec et al., 2023), but this needs to be established, and cutoffs need to be determined that account for the natural variation in neurologically healthy adult performance. We acknowledge that an ROC or true criterion cutoff may be preferable; however, without comparison neuropsychological test data, we are unable to assess the sensitivity to executive functioning impairment in our data to classify participants as impaired or not.

An exploratory factor analysis indicated a two-factor solution for both the original and revised MET-Home, with minimal differences across versions. The first factor comprised items requiring the retrieval and application of information (e.g., recalling a phone number, identifying a medication and its dose, estimating the cost of a delivery, or determining the temperature), suggesting an emphasis on working memory, monitoring, and goal management. The second factor was defined by items requiring the location and use of objects in the environment (e.g., socks, food, first aid items, or tools), reflecting planning, organization, and initiation of purposive action. This division is consistent with theoretical accounts of executive functioning that emphasize both unity and diversity (Miyake et al., 2000), as well as Stuss and Alexander’s (2000) differentiation of dorsolateral versus medial/orbitofrontal processes. Importantly, Diamond’s (2013) framework suggests that these factors map onto both core and higher-level executive functions: the first factor engages working memory and monitoring demands, while the second taps planning and problem-solving as higher-order manifestations of executive control. Taken together, these findings suggest that the MET-Home’s items can be meaningfully differentiated into cognitively oriented versus environmentally oriented demands, underscoring its value as a multidimensional measure of everyday executive functioning.

In the original investigation of the MET-Home (Burns et al., 2019), neurologically healthy controls and survivors of stroke differed on all MET-Home metrics except planning time (as also found in the current study) and frequency of rule breaks. The original study did not compare impairment classification or MET-Home scores against the MoCA but did correlate the MoCA with MET-Home metrics. The authors found that the MoCA was not statistically related to any MET-Home metrics, in contrast to our finding of a moderate relationship between MET-Home accuracy and MoCA total score. We believe this discrepancy to be due to differences in statistical power. In other MET research, it is usual to find group-based differences, at least due to the presence of a brain injury in the patient group (Knight et al., 2002; Lai, Dawson, et al., 2020; Webb et al., 2021). Nevertheless, it is important to establish that patient groups who are expected to perform worse (on average, overall) do so, to support the discriminability and interpretation of the test results. In contrast to the results of the original MET-Home study, but in support of the current results, other MET literature has found associations between MET metrics and the MoCA (Branson, 2016; Lai, Dawson, et al., 2020; Lai, Yan, et al., 2020; Webb & Demeyere, 2023b). Comparing the MET-Home with these measures is important because it provides evidence for the validity of the measure, that is, the extent to which performance on the MET-Home is related to real-life cognitive and physical functioning. We show that the MET-Home has some evidence of validity.

It can be argued that the MET-Home should be more strongly associated with instrumental ADLs than with standard cognitive testing, as the MET in general is designed to detect impairment in those who pass standardized testing (Shallice & Burgess, 1991). We instead found a numerically greater correlation with the MoCA than with instrumental activities but could not compare these statistically, as the data came from different samples; because the correlation coefficient is itself an effect size, a descriptive comparison is nonetheless possible. We do not place much emphasis on the greater correlation with the MoCA than with the NEADL, as the intention of the study was not to compare these correlations but to investigate whether the observed correlations were above our benchmark of .30. It is not possible to compare the results of the current study to other METs, except the OxMET, as historically neither instrumental nor basic ADL assessments have been compared to the MET. Like the OxMET, the MET-Home shows associations with the MoCA and ADLs, suggesting that METs may tap into general cognitive processes that underlie daily activities. Enough variance remains unexplained to suggest that the MET independently contributes something else, which is yet to be established.

We analyzed the U.S. and U.K. samples’ performance on the MET-Home both separately and combined. There were several reasons for this: to compare the original and the revision, to compare across countries, and to have sufficient numbers of neurologically healthy adults to provide the first-ever in-person MET normative reference data. The combination was justified as there were no meaningful performance differences between countries, and the demographic differences were largely due to differences in protocol between samples. The benefit of the combination is largely the diversity of the sample for reference data. Homogeneous normative data provide more reliable reference data, but the resulting cutoffs are not necessarily more valid than those from a diverse data set representing a wide group of healthy adults. With homogeneous data, comparing a new individual to the reference data is only valid if the individual matches the reference sample, which is unlikely. The transatlantic combination increases the age and education range of the normative data, as well as the ethnic diversity, making it more representative of a general white-western population. We fully acknowledge that our normative data are not so ethnically diverse as to represent data from other cultures. The inclusion of both country samples of stroke survivors also increases diversity; for instance, the age range increased to include older adults and more severe strokes than the U.S. sample alone, thus being more representative of different groups of stroke survivors.

Limitations

A main limitation of the current study is the reliance on secondary data, which precluded some additional analyses. For instance, the U.S. sample did not have MET-Home item-level data available to share openly, even though these data were originally available to score participants, which meant this data set could not be included in the overall reliability analyses. In addition, there were demographic differences between the U.S. and U.K. samples that could not be examined, as these data were not available in either the U.S. or the U.K. data set. Test discrimination also could not be assessed, as no appropriate measure, such as a mood score or a non-cognitive or motor test, was available in the open data set. All data are reported where available. Despite these limitations, the large sample we did have enabled us to thoroughly compare and contrast the MET-Home versions and to produce strong evidence for the use of either version. Further specific and non-executive function assessments would enable assessment of discrimination.

Although the sample size of 78 is much larger than the original MET-Home paper sample of 23 healthy controls, this is still a relatively small group from which to derive normative data. Nevertheless, we have concurrent and additional studies ongoing regarding the MET-Home, and thus, normative data will continue to be generated and updated. Updated norms will be available on the test website (https://met-home.com/).

A further limitation is the lack of comparison of the revised MET-Home with a full neuropsychological battery or, at the very least, an executive function battery. This was due to the secondary nature of the current study, which also precluded analyses of discriminant validity. Future research could compare the revised version with an executive function battery to provide further evidence that it is a measure of executive function that reflects real life, and could include measures that are theoretically or practically unrelated to executive function to assess discrimination. In addition, the incremental validity of the MET-Home can be examined by comparing it with other METs, other executive functioning tests that screen meaningful functioning, and other assessments in a clinician’s battery, to justify integration into clinical practice.

Clinical Implications and Conclusions

The MET-Home, regardless of version, can be used with neurologically healthy adults and stroke survivors, with higher acceptability found for the revised version. The MET-Home is a reliable and homogeneous test with some normative data for clinicians to reference. Clinicians can use the MET-Home for free to understand the possible impairments in everyday life. The MET-Home requires little formal training, and training videos are available on the test website (https://met-home.com/). Future research is necessary to garner a greater normative reference data set and to establish the revision as an assessment of executive function.

Supplemental Material

Supplemental material, sj-docx-1-asm-10.1177_10731911251384603 for Revision of the Multiple Errands Test–Home (MET-Home) for English Speaking Community Dwelling Adults and Stroke Survivors by Sam S. Webb, Suzanne Perea Burns and Nele Demeyere in Assessment

Footnotes

Acknowledgements

We would like to thank the participants across all samples who helped us with this research. We would like to thank the research assistants and clinicians who aided in data collection across all samples too.

Data Availability

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Suzanne Perea Burns is a developer of the “MET-Home” but does not receive any remuneration from its use.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by a fellowship award from the Stroke Association to S. S. Webb under grant [PGF 21100015]. Nele Demeyere is supported by the National Institute for Health Research (NIHR) [Advanced Fellowship NIHR302224]. The views expressed in this publication are those of the author(s) and not necessarily those of the NIHR, NHS, or the UK Department of Health and Social Care.

Ethical Approval

Ethical approval was granted by the Medical Sciences Interdivisional Research Ethics Committee (REF: R73921/RE001) at the University of Oxford. All participants provided written informed consent.

Supplemental Material

Supplemental material for this article is available online.

References
