Abstract

Research on commercial computer games has demonstrated that in-game behavior is related to the players’ personality profiles. However, this potential has not yet been fully utilized for personality assessments. Hence, we developed an applied (i.e., serious) assessment game to assess the Honesty–Humility personality trait. In two studies, we demonstrate that this game adequately assesses Honesty–Humility. In Study 1 (

Keywords

Computer gaming is one of the most popular forms of entertainment. Various researchers have attempted to utilize the popularity of games to achieve other goals beyond entertainment by developing applied games (Kato & De Klerk, 2017). Applied games have mainly been developed to foster behavior change, training, and other educational goals (e.g., Bauer et al., 2017; Connolly et al., 2012). Typically, the motivational appeal of games has been utilized to increase people’s engagement with the learning material (Prensky, 2001; Starks, 2014).

In recent years, another form of applied gaming has become more prevalent: using games for assessment purposes (Ifenthaler et al., 2012). Most of these game-based assessments have served educational purposes, such as measuring the progress and outcomes of the educational goals set forth in the game (e.g., Kiili et al., 2015; Shute et al., 2010; see Ventura & Shute, 2013, for an exception). However, game-based assessments can also be used for noneducational assessments. More specifically, games can be used to measure individual differences in personality, because personality is linked to in-game behaviors in various commercial computer games (Tekofsky et al., 2013; Worth & Book, 2014, 2015). Such game-based assessments of personality may be useful for applied purposes in research, clinical assessment (Myers et al., 2016), and personnel selection and assessment (Fetzer et al., 2017). For instance, personality assessment games may be relevant for individuals who understand games intellectually but are unable to provide accurate self-reports, either because they lack self-insight (e.g., individuals with borderline personality disorder; Morey, 2014) or because they self-enhance on self-reports (e.g., individuals with Autism Spectrum Disorder; Schriber et al., 2014).

In the current contribution, we describe the development and validation of an assessment game, called

Conceptual Framework

Applied gaming (Fleming et al., 2017) covers the application of computer games and gamification to achieve goals beyond entertainment. Gamification is the application of one or more game-design principles in a non-game context (Deterding et al., 2011), and the end result is a tool that cannot itself be considered a game (cf. Richter et al., 2015). By comparison, applied games are full-fledged games that attempt to achieve goals beyond entertainment (Kato & De Klerk, 2017; Klabbers, 2009). Therefore, the concept of applied gaming presupposes a continuum of ‘gamefulness’ ranging from gamification (low gamefulness) to applied games. Within this continuum, we argue that it is possible to distinguish between applied games that focus more on the “applied” aspect (intermediate gamefulness) and those that focus more on the “game” aspect (high gamefulness). The primary difference is whether the applied game feels more like a gamified application or like an actual game. Furthermore, we argue that the two broad primary goals of applied games are “education” and “assessment.”

Education broadly subsumes applied games that attempt to improve players’ understanding of topics such as physics or math (e.g., Kiili et al., 2015), but also games that serve as rehabilitation training after brain injury (Van der Kuil et al., 2018) and games that try to change attitudes (e.g., DeSmet et al., 2018). All these applications aim to create change in the player, which is why we argue that education is the most appropriate label.

Assessment broadly subsumes applied games that attempt to gain insight into a particular construct that is not developed or trained in the game itself. For instance, applied games that attempt to screen people at risk for developing diseases such as Alzheimer’s (Coughlan et al., 2019) and games that are specifically developed to assess individual differences such as intelligence or personality are all covered under the goal of assessment. Such assessments can be used for personnel selection but also for clinical diagnosis.

Distinguishing between the education and assessment goals of applied games has several advantages. First, it helps to clearly determine the specific goals of applied games (e.g., Bellotti et al., 2013). Second, this distinction may be helpful for mapping game attributes to the specific goals of applied games (e.g., Landers, 2014). Third, such a distinction can help in selecting appropriate game genres for the specific goals of applied games (e.g., Fetzer et al., 2017).

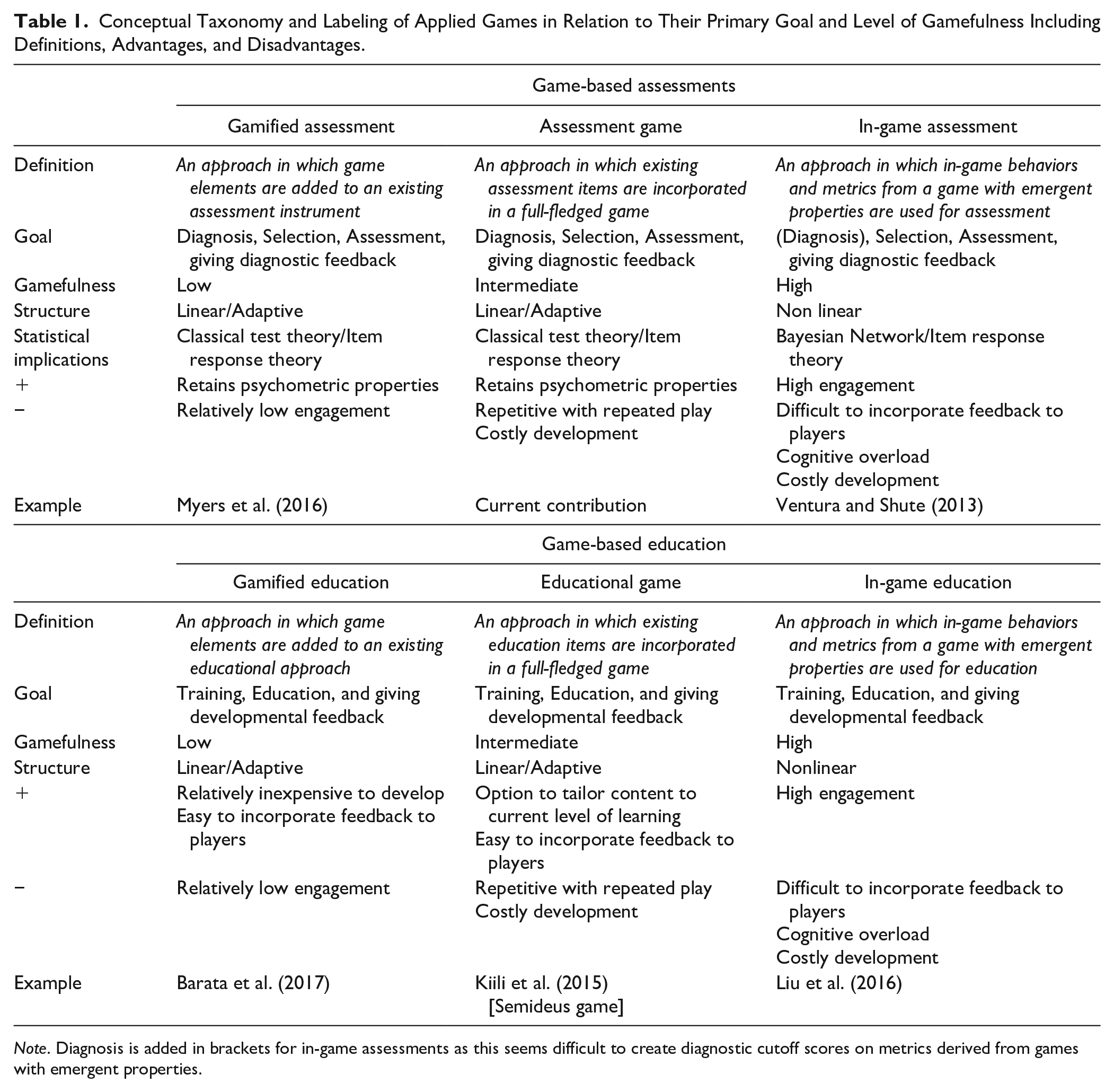

Combining the goals of education and assessment with the level of “gamefulness” results in a 2 × 3 taxonomy (see Table 1). This taxonomy distinguishes between the primary goal and the level of gamefulness. For instance, an assessment game resembles a traditional assessment more closely than an in-game assessment does. Specifically, an assessment game is often designed as a linear game so that classical test theory can be applied to the assessment. In contrast, an in-game assessment more often has emergent properties and is usually designed as a nonlinear game (e.g., one that adapts to the skill of the player); therefore, in-game assessments need to apply item response theory or Bayesian network analysis (Shute et al., 2010). Furthermore, we propose to use the more generic term game-based assessment to refer broadly to all of these assessment applications regardless of the level of gamefulness. Similarly, we reserve the generic term game-based education to refer to all education applications regardless of the level of gamefulness (see Table 1 for the definitions, potential advantages, disadvantages, and examples for each goal and level of gamefulness). Overall, this taxonomy may add precision and clarity to the terminology currently used in the field, which suffers from a lack of standardization.

Conceptual Taxonomy and Labeling of Applied Games in Relation to Their Primary Goal and Level of Gamefulness Including Definitions, Advantages, and Disadvantages.

Honesty–Humility and the HEXACO Model of Personality

According to lexical personality studies, the maximum cross-culturally replicable structure of personality is best represented by six dimensions, referred to as the HEXACO dimensions of personality (Ashton et al., 2014; De Raad et al., 2014; Saucier, 2009). HEXACO is an acronym of the six traits it encompasses: Honesty–Humility, Emotionality, eXtraversion, Agreeableness, Conscientiousness, and Openness to Experience. The HEXACO model fully encompasses the historically older Five-Factor/Big Five Model (FFM; Digman, 1990). Although there are also notable differences between the two models in the rotational positions of Agreeableness and (HEXACO) Emotionality/(FFM) Neuroticism, the main difference is that the HEXACO model adds Honesty–Humility (see Ashton et al., 2014; Ashton & Lee, 2007).

Honesty–Humility encompasses the degree to which people are honest and sincere, lack feelings of entitlement, and are uninterested in status and luxury (Ashton et al., 2004, 2014).

In terms of predictive validity, Honesty–Humility has been related to various outcomes such as counterproductive work behavior (CWB; Zettler & Hilbig, 2010), unethical business decisions (UBD; Ashton & Lee, 2008; Lee et al., 2013), and cheating for financial gain (Hilbig & Zettler, 2015; Thielmann et al., 2017). The inclusion of Honesty–Humility also gives the HEXACO model several practical advantages over the FFM. For instance, two recent meta-analyses found that the HEXACO model outperformed the FFM in the prediction of CWB (Pletzer et al., 2019; see also Pletzer et al., 2020) and prosocial behavior (Thielmann, Spadaro, et al., 2020). In both meta-analyses, the superior predictive value of the HEXACO model was due to its inclusion of Honesty–Humility.

Economic Games and Personality

Personality has been related to in-game behaviors of so-called economic games and social dilemmas (we will describe them jointly as economic games in the remainder of this manuscript). These economic games are abstract, text-based games that are used to investigate topics such as strategic and prosocial behaviors (see Fehr & Schmidt, 2006; Pruitt & Kimmel, 1977, for reviews). Although there are many different economic games, all of them involve decision-making situations in which someone can make a self-interested choice at the cost of the welfare of others or forgo the self-interested choice to foster the welfare of the collective (Thielmann et al., 2015).

In economic games, Honesty–Humility is mainly relevant for the degree to which someone actively cooperates with others (Thielmann, Spadaro, et al., 2020). More specifically, Honesty–Humility has been related to cooperative behavior in various games such as the dictator game (Barends et al., 2019b; Hilbig & Zettler, 2009; Zhao et al., 2017), the public goods game (Hilbig et al., 2012), and the prisoner’s dilemma (Zettler et al., 2013). Because of their relation with Honesty–Humility, adaptations of such economic games may be useful additions to the current assessment game.

Personality and Behavior in Commercial Games

Personality has also been related to behavior in various commercial computer games. For instance, Conscientiousness is negatively related to speed of play in a first-person shooter game (Tekofsky et al., 2013). Similarly, in the massively multiplayer online role-playing game World of Warcraft, all six HEXACO traits have been related to theoretically plausible in-game behaviors (Worth & Book, 2014). For instance, Conscientiousness was positively related to how frequently people engaged in in-game work (e.g., collecting resources and crafting items). Agreeableness was positively related to the frequency of helping behavior directed at other players (e.g., healing other players). Furthermore, other studies found meaningful relations between self-reported in-game preferences and behaviors on the one hand and personality traits on the other (Tabacchi et al., 2017; Worth & Book, 2015; Zeigler-Hill & Monica, 2015; cf. McCreery et al., 2012).

The Situation-Trait-Outcome Activation model (De Vries et al., 2016) may explain the relations between personality and the different behaviors observed in computer games. This model posits that personality is expressed through three different personality-situation interactions. We illustrate each of these interactions with the findings of Worth and Book (2014) from their study on the HEXACO traits in World of Warcraft. First, situation activation means that people seek out specific situations that fit their personality (e.g., by selecting, perceiving, evoking, and/or manipulating situations to fit their personality profile). For instance, people high in Openness to Experience explored the game world more frequently, and individuals low in Honesty–Humility more frequently sought out player-versus-player activities. Second, specific situations activate the expression of specific personality traits (i.e., trait activation). For instance, people high in Openness to Experience were more likely to make unusual in-game items, whereas people low in Honesty–Humility more frequently attempted to ruin other players’ experience by stealing their kills (Worth & Book, 2014). Third, the expression of personality may be differentially related to outcomes such as rewards and punishments (i.e., outcome activation). Worth and Book did not study this latter aspect, but we would expect people low in Honesty–Humility to be more likely to gain high leaderboard scores and people high in Openness to Experience to be more likely to gain exploration achievements.

However, commercial games are developed for entertainment, which makes them less suitable for in-game personality assessment. For instance, it is often impossible to access the internal logging data of commercial games, many games require hours of play to master, and much in-game behavior is irrelevant for inferring personality (see, e.g., Tekofsky et al., 2013). Consequently, using such commercial games for personality assessment is likely to waste assessment time. Furthermore, psychometric considerations are unlikely to have played a role in the development of commercial games. Therefore, assessment games are, ceteris paribus, much better suited than commercial games for such practical purposes.

Prior Research on Assessment Games and Gamified Assessments of Personality

To date, to our knowledge, one in-game assessment and several gamified assessment applications have been developed to measure personality traits. Gamified assessment tools that assess personality usually do not incorporate actual game mechanics but use a storyline, avatars, and visual imagery to give a feeling of “gamefulness” to more traditional assessment tasks (e.g., questionnaires, situational judgment tests [SJT]; Georgiou et al., 2019; Levy et al., 2016; McCord et al., 2019; Myers et al., 2016; cf. Barends et al., 2019a).

Gamified assessment tools that utilized a so-called SJT format have generally found encouraging results in terms of construct validity. In an SJT, participants are confronted with a description of a particular situation and choose one of several response options. The contents of these SJTs can be presented in a text-based format (e.g., Oostrom et al., 2019), a video-based format (e.g., Dubbelt et al., 2015), or a gamified format (e.g., Georgiou et al., 2019). To illustrate these studies’ findings, McCord et al. (2019) found convergent validity between a gamified assessment of the FFM personality traits and several self-reported FFM personality traits. However, not all FFM personality traits assessed with the gamified tool were significantly correlated with their corresponding self-reported trait. Furthermore, some of these traits had considerable correlations with unintended personality traits (i.e., low divergent validity). Similarly, in a gamified SJT, participants selected an avatar and completed a set of SJTs embedded within a virtual world with an overarching storyline (Georgiou et al., 2019). This gamified SJT showed convergent validity with the four assessed skills (e.g., resilience) as well as divergent validity. Furthermore, this gamified SJT also predicted self-reported work and academic performance (Nikolaou et al., 2019). Overall, these studies demonstrate that gamified assessments using SJTs can validly measure personality.

In addition to the gamification of SJT assessments, so-called virtual behavior cues (or virtual cues for short) can also be used as gamified assessment tools (Barends et al., 2019a). These virtual cues are visual customizations in a virtual environment, such as choices made when creating an avatar, customizing a virtual car, or decorating a virtual office. Prior work has related avatar customization to FFM personality (Bélisle & Bodur, 2010; Fong & Mar, 2015). Barends et al. (2019a) developed a scale based on a variety of these virtual cues and showed that this scale had acceptable reliability, convergent validity with self-reported Honesty–Humility, and divergent validity with the other five HEXACO traits.

Finally, there is some evidence that in-game assessments can be used to measure particular personality traits (Ventura & Shute, 2013). Specifically, Ventura and Shute developed and validated an in-game assessment to measure the persistence facet of Conscientiousness. Their in-game assessment of persistence was significantly correlated with a behavioral assessment of persistence; however, their in-game assessment did not show any convergent validity with self-reported persistence.

The above studies suggest that it is possible to develop a game-based assessment to measure personality traits, especially if the assessment game is based on traditional assessment tasks (e.g., Georgiou et al., 2019; Myers et al., 2016). However, there are various potential challenges. The first challenge is that little is known about the psychometric properties of such game-based assessments (e.g., internal reliability). The second challenge is that it seems difficult to achieve convergent and divergent validity simultaneously. For instance, the gamified assessments described above often also measured unintended personality traits (Georgiou et al., 2019; McCord et al., 2019). Another threat to the construct validity of game-based assessments of personality is that they may inadvertently (also) measure cognitive ability, because games are immersive and engaging and can impose a high cognitive load on players (Gundry & Deterding, 2018). Consequently, managing the cognitive load of a game-based assessment may tap into cognitive ability more than into the targeted personality trait. The third challenge is that it remains an open question whether these game-based assessments of personality can predict relevant outcomes (i.e., have predictive validity) and, if they do, whether they can predict these criteria above and beyond traditional self-report personality assessments (i.e., incremental validity).

Present Research

The overarching goal of the current set of studies was to investigate the potential utility of assessment games for personality assessment. For this purpose, a new personality assessment game was constructed, called

Design of the Assessment Game “Building Docks”

The assessment game

In

Study 1

Method

Below, we report how we determined our sample size, all data exclusions, all manipulations, and all measures in this study.

Participants and Procedure

Recent Dutch graduates who participated in a competition for match-making with various internationally operating companies were invited to complete

Materials

Cognitive Ability

The cognitive ability test was developed by LTP business psychologists and consisted of an abstract reasoning task, a verbal intelligence task, and a numerical intelligence task. This cognitive ability test has demonstrated convergent validity with another published cognitive ability test: the Multicultural Capacity Test (Bleichrodt & Van den Berg, 1999; Kappe & Van Der Flier, 2012). The data made available by LTP showed that in high-stakes assessment samples, these two cognitive ability tests were significantly and positively correlated (

HEXACO-100

The six HEXACO traits were measured with the Dutch HEXACO-100 (De Vries et al., 2009). The Dutch version is equivalent to the HEXACO-100 in other languages in terms of factor structure and item loadings (Thielmann, Akrami, et al., 2020). Each trait was measured with 16 items. The questionnaire also included four items measuring the interstitial Altruism facet, which theoretically covers the space between Honesty–Humility, Emotionality, and Agreeableness. Responses were self-reported on a 5-point Likert-type scale (1 =

Building Docks

Participants completed

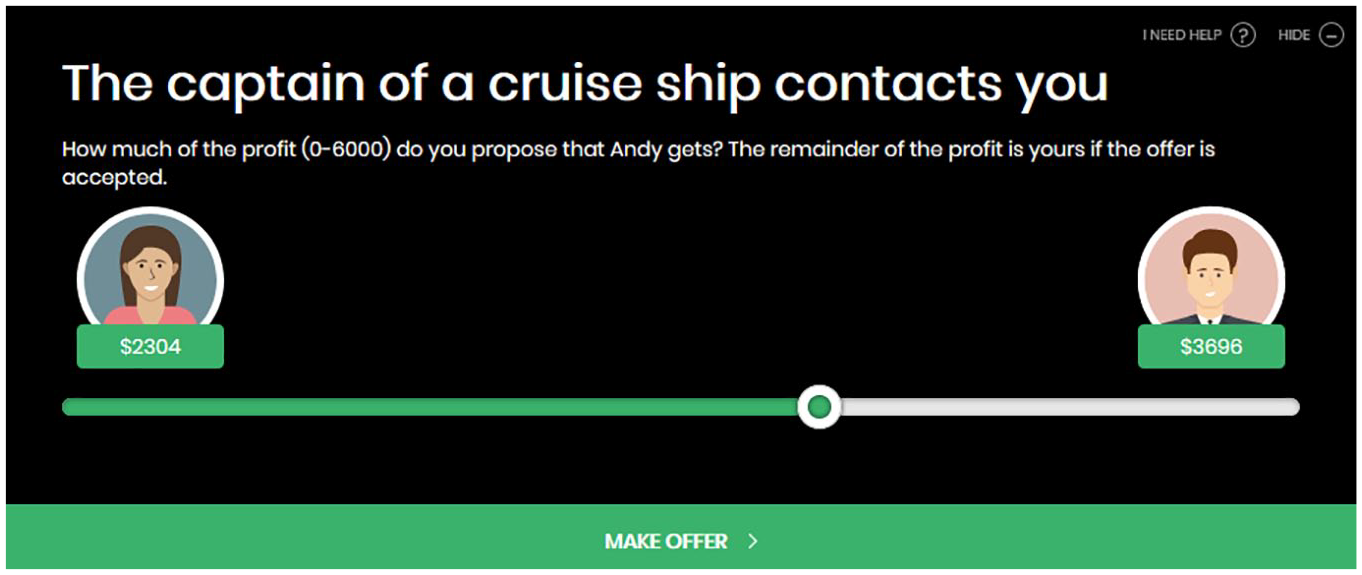

A screenshot from the assessment game “Building Docks.”

An example of an economic game scenario used in “Building Docks.”

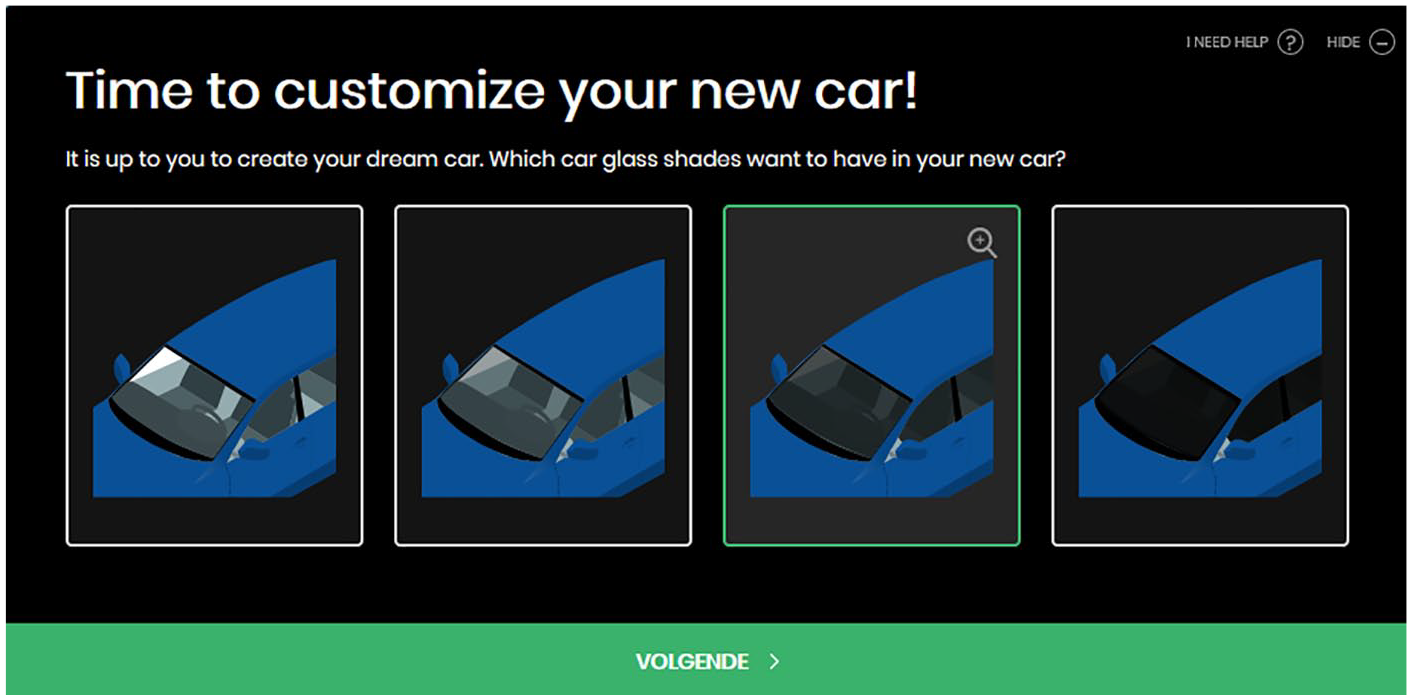

An example of virtual cue used in “Building Docks.”

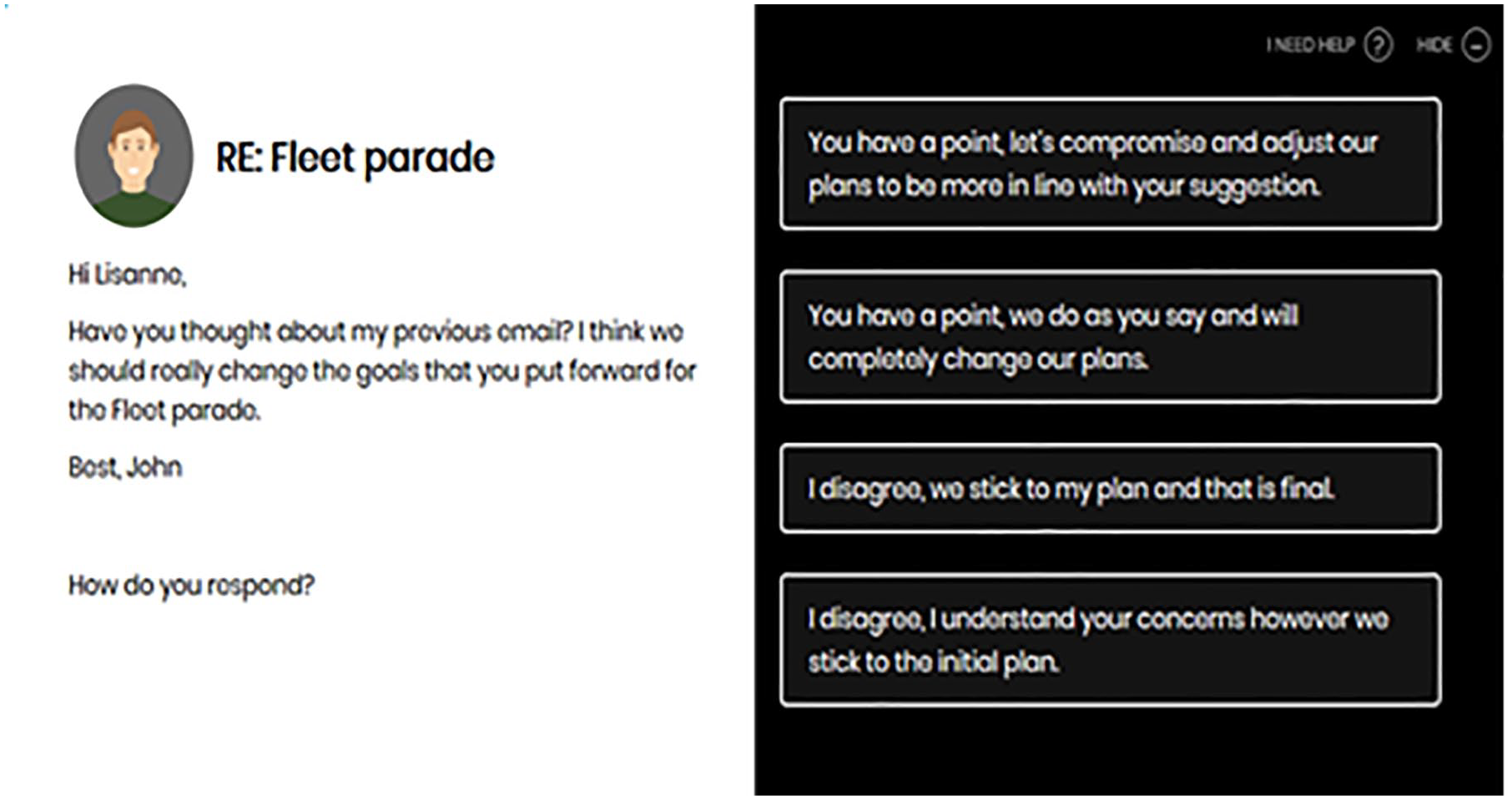

An example of a Situational Judgment Test item used in “Building Docks.”

Building Docks economic games

Building Docks situational judgment tests

A series of 12 SJTs followed a storyline in which the participant had to create a bid book to win the right to host a Fleet parade in the harbor. These SJTs were developed using a construct-driven approach (Lievens, 2017; Oostrom et al., 2019). Eight of these SJTs were developed to measure Honesty–Humility; the remaining four were fillers (developed to measure some of the other HEXACO traits).


Respondents selected one of the four response options in each SJT. Each response option was developed to express a different level of the trait and was scored on the rank order of this expression (1 =
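As a minimal sketch of this rank-order scoring, the Python below assumes a hypothetical answer key that maps each option of the Honesty–Humility SJTs to its rank (a higher rank is assumed to mean a stronger expression of the trait) and averages the ranks of the chosen options. Filler SJTs are simply absent from the key and are therefore ignored. The item and option labels, and averaging as the aggregation rule, are illustrative assumptions rather than the game's actual implementation.

```python
from typing import Dict

# Hypothetical answer key: SJT item id -> {option label -> rank of trait expression}.
# Only Honesty-Humility items are keyed; filler items are deliberately omitted.
AnswerKey = Dict[str, Dict[str, int]]


def score_sjt(responses: Dict[str, str], answer_key: AnswerKey) -> float:
    """Average the rank scores of the chosen options on the keyed (scored) SJTs.

    Responses to items that are not in the answer key (e.g., fillers) are skipped.
    """
    ranks = [
        answer_key[item][choice]
        for item, choice in responses.items()
        if item in answer_key
    ]
    if not ranks:
        raise ValueError("no scored SJT items in the responses")
    return sum(ranks) / len(ranks)


# Illustrative usage with two keyed items and one filler item.
key = {
    "sjt1": {"A": 4, "B": 2, "C": 1, "D": 3},
    "sjt2": {"A": 1, "B": 3, "C": 4, "D": 2},
}
answers = {"sjt1": "A", "sjt2": "B", "filler1": "C"}
print(score_sjt(answers, key))  # (4 + 3) / 2 = 3.5
```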

Building Docks virtual cues

Participants also completed 27 virtual cues (Barends et al., 2019a). New virtual cues were developed based on the original versions to fit into the visual style of

All

Results and Discussion

First, the

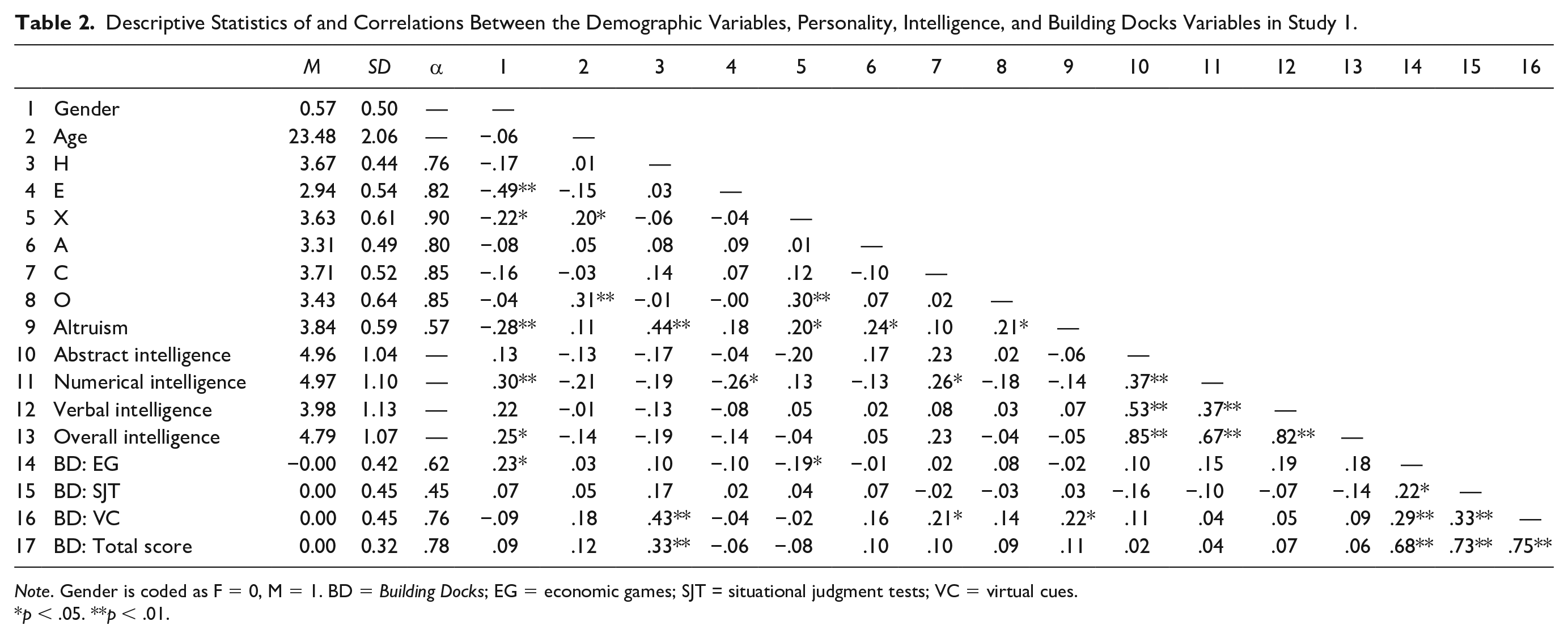

Descriptive Statistics of and Correlations Between the Demographic Variables, Personality, Intelligence, and Building Docks Variables in Study 1.

Subsequently, convergent validity was investigated with a correlational analysis. Table 2 shows that the overall

Second, to assess divergent validity, the

Study 2

Study 1 provided initial evidence that we can use an assessment game to measure Honesty–Humility. In Study 2, we wanted to replicate whether

Method

Below, we report how we determined our sample size, all data exclusions, all manipulations, and all measures in this study.

Participants and Procedure

Using MTurk, 500 American participants were recruited for a three-phase study. Only participants who had completed more than 5,000 prior MTurk human intelligence tasks and had been paid for at least 95% of them were eligible to participate. The phases were spaced one week apart, and only participants who completed the previous phase could enter the subsequent phase (e.g., people who did not participate in Phase 2 were blocked from participating in Phase 3). Half of the participants completed the HEXACO inventory in Phase 1 and

We recruited a larger sample compared with Study 1 because of potential dropouts between the different phases and the prevalence of noncompliant responses in such online samples (see Barends & De Vries, 2019). We expected an effect of

Materials

HEXACO-208

The six HEXACO traits were measured with the HEXACO-208 inventory (De Vries et al., 2015). The HEXACO-208 is an adapted version of the full-length HEXACO-PI-R (Lee & Ashton, 2006) and measures the six broad traits with 32 items each and the interstitial Altruism and Proactivity facets with eight items each. Responses were self-reported on a five-point Likert-type scale (1 =

Data Quality Checks

Four instructed response items (e.g.,

Building Docks

We used only the English version of the assessment game described in Study 1. Cronbach’s α was .73 for the overall in-game Honesty–Humility score and ranged from .37 to .75 for the game’s Honesty–Humility subtasks.
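For readers who want to reproduce such reliability coefficients from raw item scores, the following Python sketch implements the standard Cronbach’s α formula, α = k/(k − 1) × (1 − sum of item variances / variance of the total score). The function name and the data layout (one list of respondent scores per item) are illustrative choices, not part of the original analysis code.

```python
from statistics import pvariance
from typing import List, Sequence


def cronbach_alpha(items: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for k items, each given as a list of n respondent scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    Population variances are used throughout; the n vs. n - 1 choice cancels out.
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha requires at least two items")
    n = len(items[0])
    if any(len(item) != n for item in items):
        raise ValueError("all items must have the same number of respondents")
    # Total (sum) score per respondent across all items.
    totals: List[float] = [sum(item[i] for item in items) for i in range(n)]
    item_var_sum = sum(pvariance(item) for item in items)
    total_var = pvariance(totals)
    return k / (k - 1) * (1 - item_var_sum / total_var)


# Two items scored by four respondents: alpha = 2 * (1 - 1.5 / 2.5) = 0.8
print(round(cronbach_alpha([[1, 2, 3, 4], [2, 2, 3, 3]]), 3))  # 0.8
```

Because the ratio of item variances to total variance is what matters, the same value results whether population or sample variances are used, as long as the choice is consistent.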

Dependent Variables

The three dependent variables were collected in Phase 3 of the project. The three tasks were completed in a randomized order for every participant.

Counterproductive work behavior

CWB was measured with the 19-item self-report scale developed by Bennett and Robinson (2000). Participants indicated on a 7-point Likert-type scale how frequently they had engaged in various deviant behaviors at their work in the past year (1 =

Unethical business decisions

To measure UBD, six scenarios of Ashton and Lee (2008) were used. Respondents read a scenario about a potential UBD and indicated on a four-point Likert-type scale how likely they were to engage in the described activity (1 =

Cheating

Participants had to flip a coin twice and received a $0.50 bonus if they reported two successive heads; any other reported result earned no bonus. Note that such a cheating task does not allow us to identify actual cheating but only to estimate the probability of cheating, because some participants will legitimately report two successive heads. The 25% probability of winning is considered an adequate tradeoff between observing a sufficient number of cheaters and avoiding fear of incriminating oneself as a cheater (Moshagen & Hilbig, 2017). Responses were analyzed with the R script of Moshagen and Hilbig, which corrects for the proportion of legitimate wins in the sample.
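The correction for legitimate wins follows from simple probability: with two fair coin flips, an honest participant wins with probability 0.5 × 0.5 = 0.25. If a proportion d of participants cheat by always reporting a win, the expected proportion of reported wins is w = d + (1 − d) × 0.25, so d = (w − 0.25)/(1 − 0.25). The Python sketch below implements only this basic point estimate and its binomial standard error under that assumption; it is a simplified stand-in for, not a reproduction of, the Moshagen and Hilbig R script.

```python
from math import sqrt
from typing import Tuple


def estimate_cheating_rate(n_wins: int, n_total: int, p_legit: float = 0.25) -> Tuple[float, float]:
    """Estimate the proportion of cheaters from the number of reported wins.

    Assumes cheaters always report a win and honest participants report a win
    with probability p_legit (0.25 for two successive heads with a fair coin):
        P(win) = d + (1 - d) * p_legit  =>  d = (w - p_legit) / (1 - p_legit)
    Returns (d_hat, standard_error). d_hat is not clipped, so it can fall below 0
    when fewer wins are reported than chance alone predicts.
    """
    if n_total <= 0 or not 0 <= n_wins <= n_total:
        raise ValueError("need n_total > 0 and 0 <= n_wins <= n_total")
    w = n_wins / n_total  # observed proportion of reported wins
    d_hat = (w - p_legit) / (1 - p_legit)
    # d_hat is linear in w, so Var(d_hat) = Var(w) / (1 - p_legit)^2.
    se = sqrt(w * (1 - w) / n_total) / (1 - p_legit)
    return d_hat, se


# Hypothetical example: 40 of 100 participants report two successive heads.
d_hat, se = estimate_cheating_rate(40, 100)
print(round(d_hat, 3), round(se, 3))  # 0.2 0.065
```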

Results

Replicating Study 1

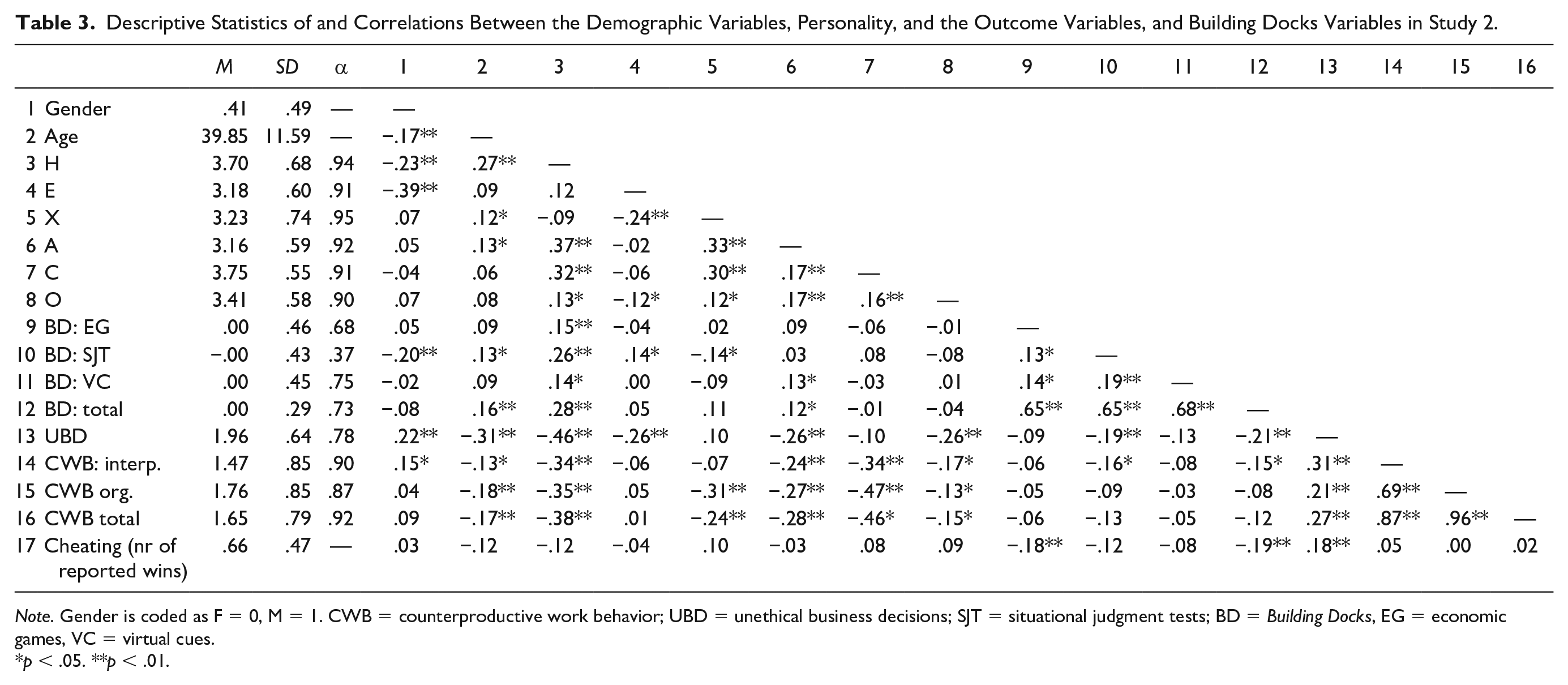

First, we investigated whether the convergent validity between self-reported Honesty–Humility and in-game behavior of Study 1 could be replicated. Table 3 shows that the different Honesty–Humility subtasks of

Descriptive Statistics of and Correlations Between the Demographic Variables, Personality, and the Outcome Variables, and Building Docks Variables in Study 2.

Second, we also tested whether the divergent validity of

Predictive and Incremental Validity of Building Docks

The negative correlations reported in Table 3 also demonstrate that the

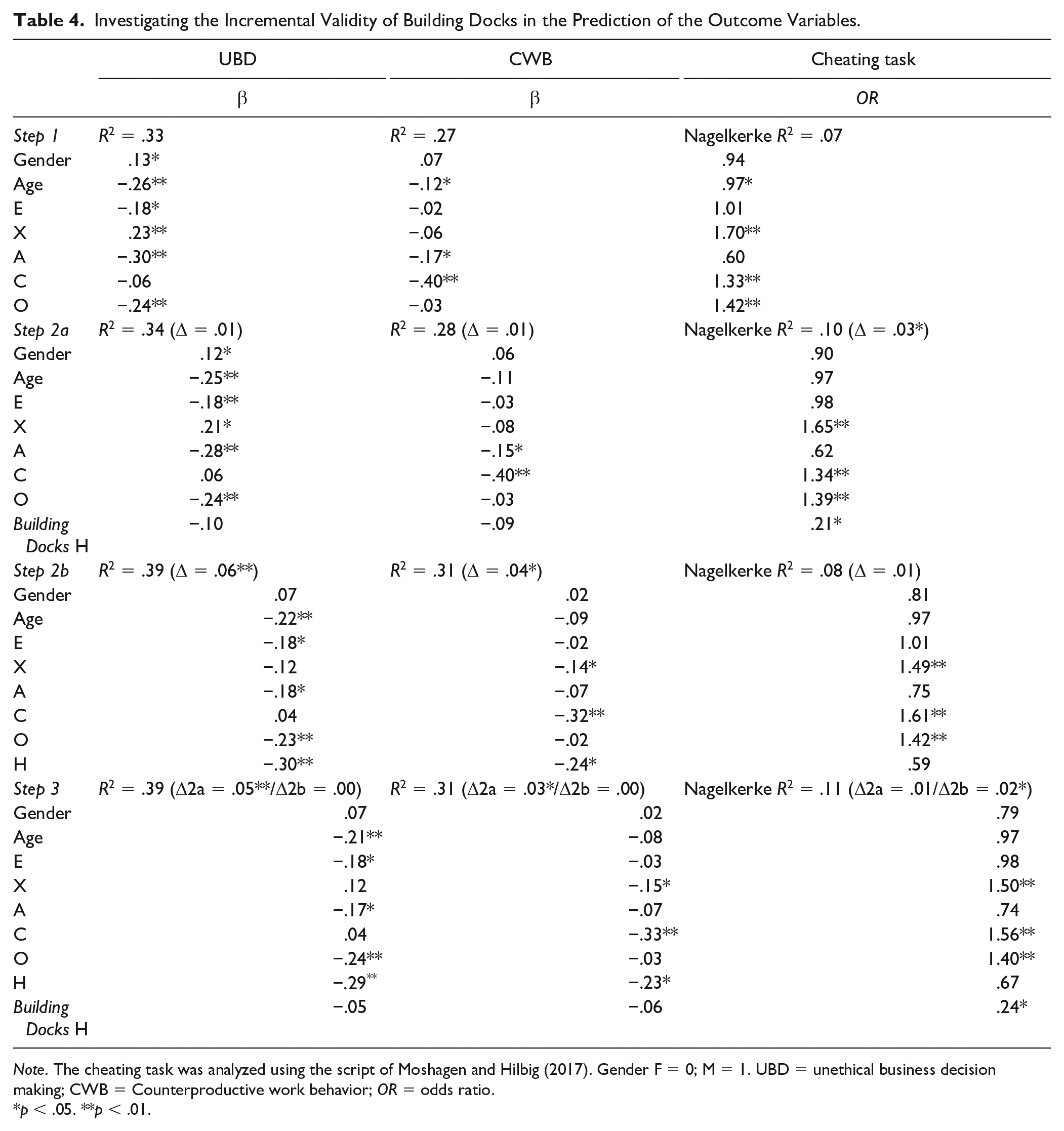

To assess the incremental validity of the

As Table 4 shows, the

Investigating the Incremental Validity of Building Docks in the Prediction of the Outcome Variables.

General Discussion

In two studies, we investigated the construct validity and predictive validity of the assessment game,

Theoretical and Practical Implications

Our studies add to the literature in several ways. First, as Kato and De Klerk (2017) noted, the construct validity of assessment games is an understudied topic. Importantly, although constructing an assessment game to measure one or more personality traits is clearly a labor-intensive and psychometrically challenging undertaking, we demonstrate that it is possible to develop a reliable and valid assessment game. We validated this game by showing its convergent validity with a well-established indicator of the construct of interest and its divergent validity with variables theoretically unrelated to the construct. Furthermore, compared with many prior studies using gamified assessments (e.g., McCord et al., 2019), we were able to develop an assessment game that has convergent, divergent, and predictive validity.

Second, our studies also demonstrated that purposefully designing an assessment game to assess personality has an advantage over assessments based on metrics from commercial games. Although such games and their metrics were not designed to assess personality, they provide a clear benchmark for comparison. For instance, Tekofsky et al. (2013) investigated how self-reported FFM personality was correlated with 175 game metrics from the first-person shooter game Battlefield 3. Of the 249 significant correlations they reported, only four were slightly greater than an absolute

In terms of convergent validity, the correlation between the assessment game score with self-reported Honesty–Humility was around

Practically, personality assessment games may be useful as a complement to traditional self-report assessments of personality. Importantly, self-reports of Honesty–Humility generally had the best predictive validity for the outcomes we studied; however, the assessment game was of added value in predicting the cheating task. Notably, it seems that self-reports and assessment games of Honesty–Humility each had unique predictive validity for the selected outcomes. This finding aligns with prior research showing that combining self-reports and behavioral assessments of self-control leads to high predictive validity, as the two methods can compensate for each other’s inherent weaknesses (Sharma et al., 2014).
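Incremental validity of this kind is typically quantified as the gain in explained variance (ΔR²) when the game score is added to a regression that already contains the self-report score. With two predictors, R² can be computed directly from the three correlations: R² = (r_y1² + r_y2² − 2 × r_y1 × r_y2 × r_12)/(1 − r_12²). The Python sketch below uses this textbook identity; the correlation values in the example are hypothetical and are not taken from Tables 3 or 4.

```python
def r_squared_two_predictors(r_y1: float, r_y2: float, r_12: float) -> float:
    """R^2 of an OLS regression of y on two predictors, from their correlations.

    r_y1, r_y2: correlations of the outcome with predictors 1 and 2.
    r_12: correlation between the two predictors (must satisfy |r_12| < 1).
    """
    if abs(r_12) >= 1:
        raise ValueError("predictors must not be perfectly correlated")
    return (r_y1**2 + r_y2**2 - 2 * r_y1 * r_y2 * r_12) / (1 - r_12**2)


def delta_r_squared(r_y1: float, r_y2: float, r_12: float) -> float:
    """Increase in R^2 when predictor 2 (e.g., a game score) is added to predictor 1."""
    return r_squared_two_predictors(r_y1, r_y2, r_12) - r_y1**2


# Hypothetical values: self-report r = -.30 with the outcome, game score r = -.20,
# and the two predictors correlate .40.
print(round(delta_r_squared(-0.30, -0.20, 0.40), 4))  # 0.0076
```

When the two predictors are uncorrelated, ΔR² reduces to the squared correlation of the added predictor; the more they overlap, the less unique variance the second predictor can contribute.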

However, it is not yet clear whether such assessment games are especially viable in applied settings such as high-stakes assessments (e.g., personnel selection; see Limitations and Directions for Future Research). For scientific research, personality assessment games may have several advantages. Specifically, assessment games may improve research participants’ engagement, which may lower dropout and improve data quality. However,

Finally, we would like to address an important point: There are currently no agreed-upon standards for the psychometric properties of game-based assessments, for either assessment games or in-game assessments (see our conceptual framework for the distinction). It is questionable whether the psychometric standards developed for traditional assessments apply to game-based assessments (DiCerbo et al., 2017). For instance,

Therefore, for such assessment games to be used in applied settings, it is important to have a broader discussion about the psychometric standards that need to be adhered to. Nonetheless, there is a clear primacy of predictive validity in tools of personnel selection (Morgeson et al., 2007), and our Study 2 suggests that assessment games do have predictive and incremental validity.

Limitations and Directions for Future Research

The current studies are not without limitations. First, the studies were conducted in low-stakes testing situations instead of the high-stakes situations typical of personnel selection. Therefore, we do not yet know how viable assessment games are in such a context. However, our Study 1 sample may be considered to be in a ‘medium-stakes’ situation, as participants were invited to this study as part of a competition in which the best candidate could win €10,000. We clearly notified the respondents that their participation would not affect their chance of winning the prize, but it is likely that many Study 1 respondents believed that participating would make a good impression. That this sample took the research seriously is also suggested by the fact that none of the respondents were flagged for giving noncompliant responses on the HEXACO inventory (see Barends & De Vries, 2019).

Second, the number of participants who completed the cognitive ability test in Study 1 was somewhat limited; therefore, the apparent divergent validity with this cognitive ability test may reflect a lack of statistical power to detect a small true effect rather than the absence of an effect (i.e., our sensitivity analysis using G*Power 3.1.9.2 [Faul et al., 2007] indicated that an effect of
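A sensitivity analysis of this kind asks how large a true correlation must be for a test with the available sample size to detect it at a given power. G*Power computes this exactly; the Python sketch below instead uses the common Fisher-z approximation, r_min = tanh((z_(1−α/2) + z_power)/√(n − 3)), which gives close but not identical values. The sample size in the example is hypothetical and is not the Study 1 n.

```python
from math import sqrt, tanh
from statistics import NormalDist


def min_detectable_r(n: int, alpha: float = 0.05, power: float = 0.80, two_sided: bool = True) -> float:
    """Smallest correlation detectable with the given n, alpha, and power.

    Fisher-z approximation: atanh(r) is roughly normal with SE 1 / sqrt(n - 3).
    """
    if n <= 3:
        raise ValueError("n must exceed 3")
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2) if two_sided else z.inv_cdf(1 - alpha)
    z_power = z.inv_cdf(power)
    return tanh((z_alpha + z_power) / sqrt(n - 3))


# Hypothetical sample of 100 participants, two-sided alpha = .05, power = .80.
print(round(min_detectable_r(100), 3))  # 0.277
```

Any true correlation smaller than this value could easily go undetected, which is why a nonsignificant correlation in a modest sample is weak evidence of divergent validity.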

Third, some of the findings may be influenced by common method variance. In particular, the assessment game had no incremental validity beyond self-reported Honesty–Humility in predicting the two self-reported outcomes (i.e., CWB and UBD). This finding may be explained, at least partly, by common method effects (Podsakoff et al., 2003): all self-report instruments may share variance because they use the same method and were completed by the same source. Although, as suggested by Podsakoff et al., we separated the measurement of the predictors and outcomes by 1 week to reduce potential carry-over effects, such common method effects may still have played a role. Importantly, the assessment game did show incremental validity beyond self-reported Honesty–Humility in predicting the behavioral outcome (the cheating task). A game-based assessment of persistence showed a similar pattern: it was related to a behavioral indicator of the trait but not to a self-rated indicator (Ventura & Shute, 2013).

More broadly, our results align with prior findings that rating-scale measures of personality tend to be more highly correlated with each other than behavioral assessments of personality are (Duckworth & Kern, 2011; Sharma et al., 2014). These studies also showed that rating scales are somewhat more strongly related to outcomes than behavioral assessments. Furthermore, personality self-reports are much more strongly related to self-rated outcomes than to outcomes gathered using other methods (Zettler et al., 2020). Similarly, although behavioral assessments are usually less strongly related to each other (Sharma et al.), the incremental validity of Building Docks in predicting the cheating task could reflect a common method effect, because both are behavioral assessments. Therefore, future research may want to compare the incremental validities of self-reported Honesty–Humility and

Finally, future research may want to investigate further the viability of game-based assessments of personality for personnel selection. Theoretically, an advantage of computer games, and thus of our assessment game, is that they may induce a state of flow in players (Sweetser & Wyeth, 2005; see Boyle et al., 2012, for a review). Flow leads people to forget their surroundings while playing a game. Arguably, a person in a state of flow may forget that the game is being played as part of a personnel selection assessment, which may reduce socially desirable responding (i.e., faking). This matters because job applicants tend to score about half a standard deviation higher on socially desirable traits (such as Honesty–Humility) than job incumbents (Anglim et al., 2017; Birkeland et al., 2006; cf. Grieve & de Groot, 2011). Potentially,

Similarly, an important avenue for future research is to investigate whether game-based personality assessments have adverse impact. Adverse impact means that particular groups (e.g., women; ethnic minorities) receive substantially different scores than other groups (e.g., men; ethnic majority) on a selection instrument (Bartram, 1995). Traditional personality assessments do not tend to result in adverse impact against particular groups in society (Berry et al., 2012), but it is not yet known whether game-based assessments do. In particular, there are stereotypes about gaming related to age (McLaughlin et al., 2012) and gender (Wasserman & Rittenour, 2019). Therefore, it is important to investigate whether groups with little gaming experience are disadvantaged by assessment games. However, the findings of the current studies did not indicate that men or women received different
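Score differences between groups, like the half-standard-deviation applicant-incumbent gap mentioned above, are usually expressed as a standardized mean difference. The Python sketch below computes Cohen’s d with a pooled standard deviation; the group scores are hypothetical, and d is only one of several ways adverse impact can be evaluated.

```python
from math import sqrt
from statistics import mean, variance
from typing import Sequence


def cohens_d(group_a: Sequence[float], group_b: Sequence[float]) -> float:
    """Standardized mean difference (group_a - group_b) using the pooled SD."""
    na, nb = len(group_a), len(group_b)
    if na < 2 or nb < 2:
        raise ValueError("each group needs at least two scores")
    # Pooled variance weights each group's sample variance by its degrees of freedom.
    pooled_var = ((na - 1) * variance(group_a) + (nb - 1) * variance(group_b)) / (na + nb - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


# Hypothetical in-game scores for two groups of five players each.
group_a_scores = [3.4, 3.1, 3.8, 3.5, 3.2]
group_b_scores = [3.3, 3.0, 3.6, 3.4, 3.1]
print(round(cohens_d(group_a_scores, group_b_scores), 2))  # 0.47
```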

Conclusion

Our research shows that assessment games, such as

Supplemental Material

sj-pdf-1-asm-10.1177_1073191120985612 – Supplemental material for Construct and Predictive Validity of an Assessment Game to Measure Honesty–Humility

Supplemental material, sj-pdf-1-asm-10.1177_1073191120985612 for Construct and Predictive Validity of an Assessment Game to Measure Honesty–Humility by Ard J. Barends, Reinout E. de Vries and Mark van Vugt in Assessment

Footnotes

Acknowledgements

We would like to thank Marian de Joode, Rob Fraats, Dean Meis and IJsfontein for their help with the design of the assessment game. We would also like to thank Labrooms for the development of the assessment game. Additionally, we also want to thank Joost Jongeneel for his help with data collection.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was financially supported by a grant from LTP business psychologists to support a PhD position for the first author at the VU University, The Netherlands.


Notes

References
