Abstract

This study proposes an adaptive framework for understanding and modeling user preferences in self-driving behaviors through natural language interaction. A user survey conducted in North America revealed strong demand for customizable autonomous vehicle (AV) features, motivating the need for dynamic preference modeling. To capture diverse and context-specific verbal expressions of user intent, we leverage speech recognition and fine-tune a lightweight T5-base language model to classify preferences across predefined AV behavior categories. Given the computational constraints of in-vehicle environments, we adopt T5-base rather than a larger-scale LLM because of its efficiency and suitability for embedded deployment. To overcome data scarcity, we applied a data augmentation strategy using a teacher model, increasing classification accuracy from 25% to 97%. The framework can integrate vision-language models (e.g., BLIP-2, CLIP) and multimodal sensor fusion (camera, LiDAR, radar) to represent traffic situations and support context-aware interpretation of user input. This approach enables the system to generalize user preferences across similar traffic conditions through similarity-based propagation. By supporting condition-specific behavioral expressions, the system can interpret and adapt user preferences accordingly. The proposed framework facilitates scalable, context-aware, and user-centered adaptation of autonomous vehicle behaviors, contributing to improved personalization and potentially to improved system usability.

Introduction

Autonomous vehicles (AVs) have gained significant attention in recent years due to their potential to enhance mobility, safety, and driving convenience. However, fully autonomous driving (Levels 4 and 5) remains limited due to infrastructure challenges, regulatory constraints, and technological limitations (Gavanas, 2019). As a result, full automation has primarily been tested under region-specific conditions, but its feasibility in other complex urban conditions has yet to be fully demonstrated. Additionally, studies indicate that perceptions and levels of trust in full automation vary among potential users and those already utilizing Advanced Driver Assistance Systems (ADAS; DeGuzman & Donmez, 2023). Prior research has shown that user preferences are shaped by various factors (Lee et al., 2024; Park et al., 2020). However, there is limited work on how these preferences can be dynamically modeled and adapted. Specifically, preferences for self-driving technologies can be complex, involving considerations such as regional driving conditions, traffic situations, perceived safety, and familiarity with AVs. Importantly, these preferences often reflect drivers’ expectations and desired system behaviors across contexts. Drivers may not always be aware of how the system has previously responded in each context but can still express clear preferences for how the vehicle should act. Integrating these user preferences into AV behavior models can improve system adaptability, enhance human-centered design, and ultimately increase user acceptance of automation. However, developing a practical user preference model presents several challenges:

(1) It is impractical to incorporate user preferences for all possible traffic situations into an initial model, and

(2) User preferences may dynamically change based on specific traffic conditions and evolving perceptions.

To address these challenges, this study proposes an adaptive approach leveraging Large Language Models (LLMs) to update user preferences across varied traffic situations.

Background

User preferences for AVs are influenced by multiple factors, including traffic situations (Lee et al., 2024; Nordhoff et al., 2017; Park et al., 2020), demographic characteristics (Abraham et al., 2017; Lee & Samuel, 2024a), trust and acceptance of automation (Lee et al., 2024; Lee & Samuel, 2024a; Weiss et al., 2018), and prior experiences with AV systems (Camara et al., 2021). In particular, how drivers perceive, interpret, and anticipate elements of a given situation plays a key role in shaping preferences (Endsley, 2006, 2017). For example, novice drivers often favor automation for ease and safety, whereas experienced drivers prefer manual driving for greater flexibility and control (Manawadu et al., 2015). These differences highlight the need to account for situational and individual variability in user preference models for self-driving behaviors (Lee et al., 2024; Lee & Samuel, 2024a; Park et al., 2020). Furthermore, our previous study demonstrated that it is possible to build a predictive model for such preferences (Lee & Samuel, 2024b).

Recent advancements in Speech-to-Text (STT) and Large Language Model (LLM) technologies have significantly enhanced voice-enabled conversational chatbots, facilitating more seamless interactions between users and autonomous vehicle (AV) systems. These developments enable AV systems to dynamically capture, process, and update user preferences, needs, and feedback. For instance, NVIDIA notes that conversational AI powers automated messaging and speech-enabled applications, such as AI virtual assistants and chatbots, paving the way for personalized, natural human-machine conversations. Additionally, research indicates that LLMs are transforming artificial intelligence, enabling autonomous agents to perform diverse tasks across various domains, a capability that can be applied to enhance user interactions in AV systems (Barua, 2024).

Approach

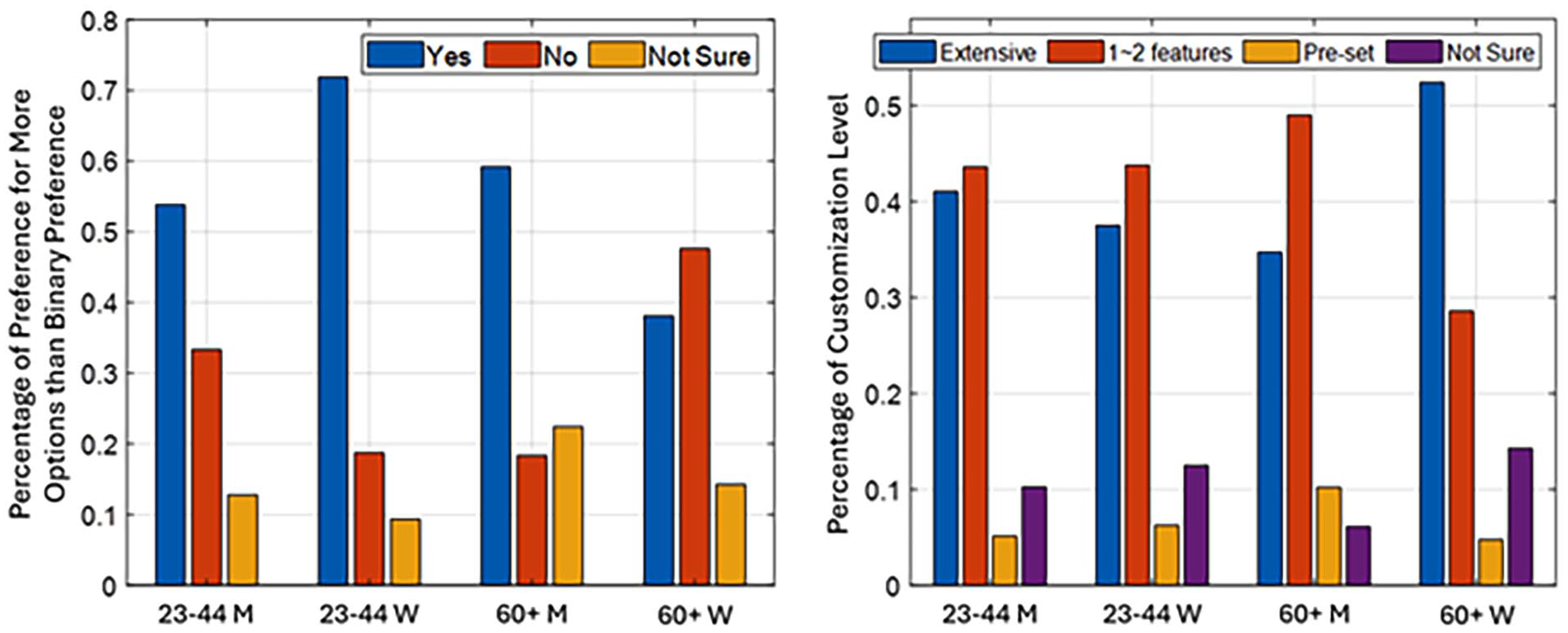

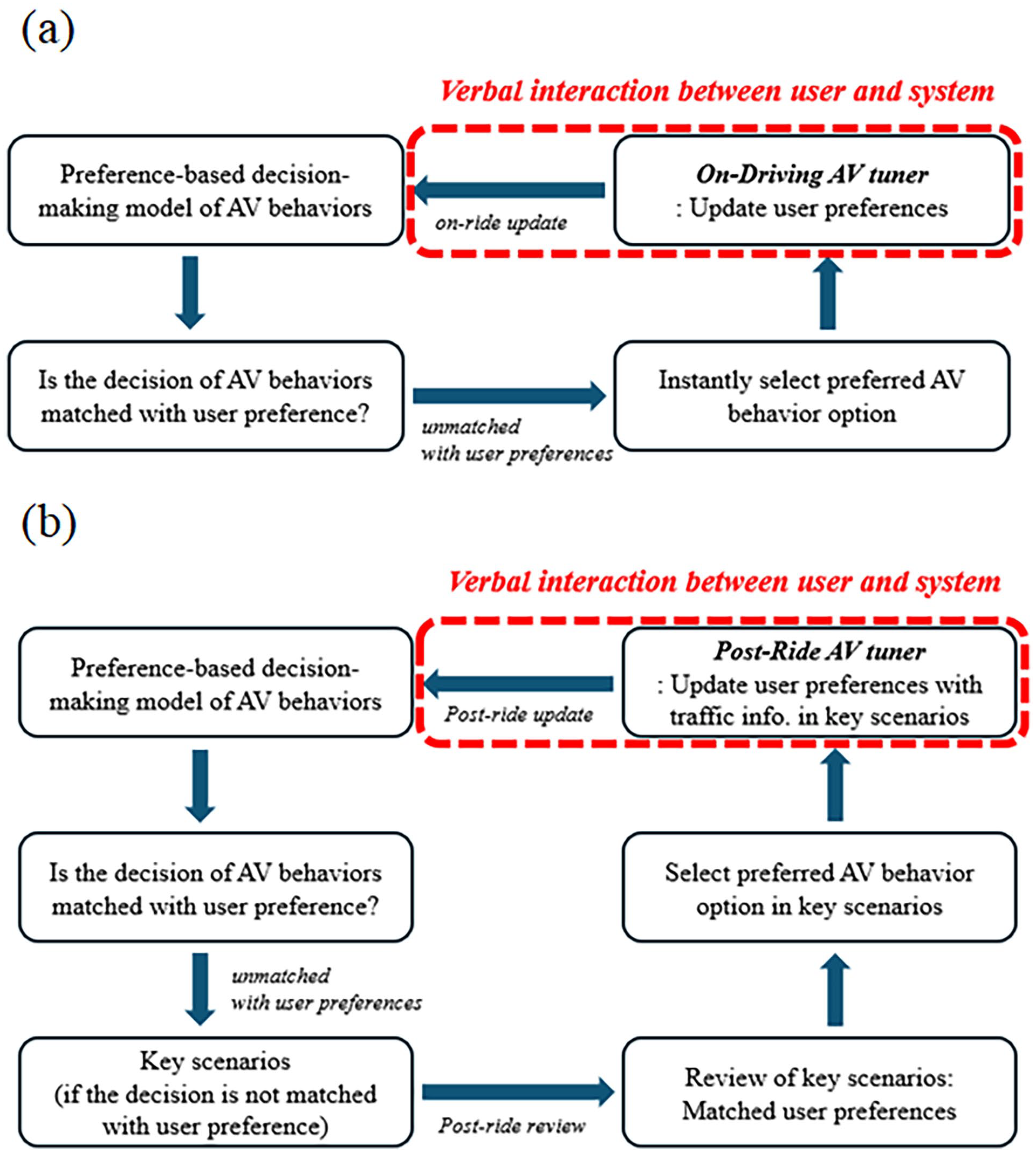

To initiate our investigation into varying user preferences for self-driving behaviors, we conducted a survey involving 170 licensed drivers in the United States and Canada, drawn from two age groups: 23 to 44 years (avg. = 31.7 years old and SD = 5.9) and 60 years or older (avg. = 65.1 years old and SD = 4.4). Participants were recruited through the online survey platform Prolific, which pre-verified their driver’s license status. The number of participants was smaller than in some prior work (e.g., Park et al., 2020, N = 275). In that work, however, each participant rated only a randomly selected subset of 6 scenarios among 18 (33% coverage), whereas all participants in this study evaluated every scenario. As a result, the total number of scenario-level responses was approximately twice as large overall, and per-group response volume was comparable. This study received ethics clearance from the University of Waterloo Research Ethics Board (REB #47094 and #44049). Because the main objective of this study was to demonstrate the integration of stated user preferences into the proposed framework, only the percentages of responses to two survey questions are reported in Figure 1, to provide an initial overview rather than a comprehensive analysis. More detailed statistical comparisons of user preferences have been reported in related prior work (Lee et al., 2024; Lee & Samuel, 2024a). As illustrated in Figure 1, participants, except for women aged 60 and above, generally preferred more than a binary choice (i.e., whether or not to use self-driving features). The majority indicated a preference for more nuanced options regarding their use of autonomous functions. In a related question concerning customization of in-vehicle functions, most participants aged 23 to 44 reported a preference either for extensive customization or for customizing only one or two frequently used features. 
Among participants aged 60 and above, gender differences were apparent: while most men in this group favored customizing only one or two key features, most women expressed a preference for extensive customization across a wide range of functions. It should be noted that the open-ended customization question was administered before participants selected one of the predefined behavior types. Responses to this question were used to gather exploratory insights into desired features and preferences, rather than to measure actual usage frequency. These results suggest that users seek greater flexibility and control over self-driving and related vehicle functionalities. Moreover, when asked whether applying user preferences to self-driving behaviors might improve perceived safety or usage frequency, participants rated this possibility above 5 on a 7-point Likert scale (SD = 1.5), indicating generally positive expectations toward personalized automation. Finally, as shown in our previous study (Lee et al., 2024), user preferences for self-driving behaviors may also vary significantly depending on the specific traffic situation, reinforcing the need for adaptive modeling in dynamic driving environments.

Building on these findings, our approach begins by constructing user preference models for self-driving behaviors based on initial responses to a variety of traffic situations. In dynamic driving contexts, we assess whether the activated autonomous behaviors are consistent with the user’s stated preferences. When discrepancies are identified, the system updates user preferences using one of two mechanisms, as illustrated in Figure 2:

(1) On-driving AV tuner (real-time preference adaptation): User preferences are refined in real time during driving to reflect situational changes and on-the-fly feedback.

(2) Post-ride AV tuner (retrospective preference adaptation): Traffic situations that reveal mismatched behaviors are categorized as key scenarios. User preferences are then reviewed and updated after the ride.

This dual-updating strategy enables the system to flexibly accommodate diverse and evolving user preferences. During the post-ride review of key scenarios, users are given the opportunity to reflect on and revise the associated self-driving behaviors, allowing the system to better align with their evolving expectations and comfort levels.

To efficiently update complex user preferences, we leverage a Speech-to-Text (STT) system and a Large Language Model (LLM). For these components, we utilize open-source models: Whisper by OpenAI for speech recognition, and T5-base by Google for interpreting and classifying user preferences. In the context of in-vehicle deployment, models with a large number of parameters, such as LLaMA, require high-performance GPUs, which can be impractical for integration in embedded automotive systems.
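The two update paths described above can be illustrated with a minimal sketch. It is not the authors' implementation: the class `PreferenceStore`, the function `handle_mismatch`, the situation keys, and the behavior labels are all hypothetical names chosen for illustration, and the spoken label is assumed to have already been produced by the STT and language-model stages.

```python
from dataclasses import dataclass, field


@dataclass
class PreferenceStore:
    """Maps a traffic-situation key to the user's preferred behavior label."""
    preferred: dict = field(default_factory=dict)
    key_scenarios: list = field(default_factory=list)  # queued for post-ride review

    def check(self, situation, activated):
        """Return True when the activated behavior matches the stated preference."""
        return self.preferred.get(situation) == activated

    def on_driving_update(self, situation, new_behavior):
        """Real-time path: apply the user's spoken correction immediately."""
        self.preferred[situation] = new_behavior

    def flag_for_review(self, situation):
        """Post-ride path: remember the mismatch as a key scenario."""
        if situation not in self.key_scenarios:
            self.key_scenarios.append(situation)

    def post_ride_update(self, revisions):
        """Apply after-ride revisions and drop the scenarios that were reviewed."""
        for situation, behavior in revisions.items():
            self.preferred[situation] = behavior
        self.key_scenarios = [s for s in self.key_scenarios if s not in revisions]


def handle_mismatch(store, situation, activated, spoken_label=None):
    """Route a detected discrepancy to one of the two tuners."""
    if store.check(situation, activated):
        return "consistent"
    if spoken_label is not None:
        # The user gave feedback while driving (on-driving AV tuner).
        store.on_driving_update(situation, spoken_label)
        return "updated_on_driving"
    # No feedback during the ride (post-ride AV tuner).
    store.flag_for_review(situation)
    return "queued_post_ride"


# Hypothetical usage: labels and situation keys are placeholders.
store = PreferenceStore({"downtown_heavy": "manual"})
handle_mismatch(store, "downtown_heavy", "full_auto")
store.post_ride_update({"downtown_heavy": "full_auto"})
```

In this sketch a situation with no stored preference is treated as a mismatch, so previously unseen situations are either corrected on the spot or queued for review; a deployed system might handle that case differently.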

Figure 1. User preferences for self-driving behavior options beyond a binary choice, and for customization levels.

Figure 2. On-driving and post-ride AV tuners (Lee & Samuel, 2024b) for updating user preferences for self-driving behaviors via verbal interaction: (a) on-driving AV tuner and (b) post-ride AV tuner.

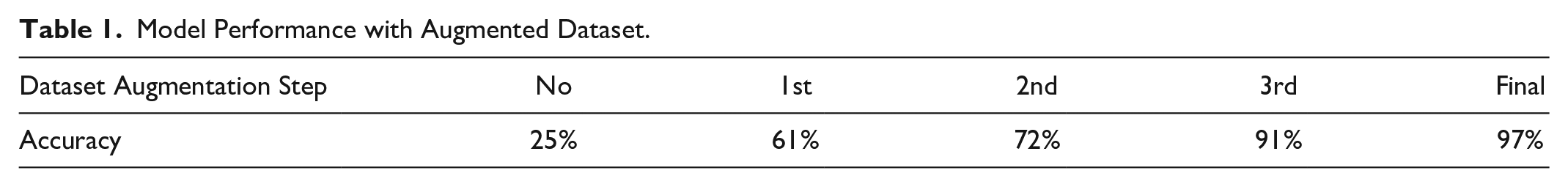

Moreover, the number of functions that need to be interpreted or classified within the vehicle is inherently limited, as they depend on the car’s built-in capabilities and the specific driving context. This makes the use of extremely large-scale models (e.g., those with tens of billions of parameters) both unnecessary and inefficient. Prior studies have shown that smaller transformer models can achieve sufficient task-specific accuracy in constrained environments with appropriate fine-tuning (Aralimatti et al., 2025; Lamaakal et al., 2025). Therefore, in this study, we selected T5-base as our base model. With approximately 220 million parameters, it is roughly 300 times smaller than the largest LLaMA model (65B parameters). Unlike LLaMA, which uses a decoder-only transformer architecture, T5-base follows an encoder–decoder structure, enabling better task generalization in sequence-to-sequence settings. Additionally, the relatively small size of the model leads to faster inference times, which is essential for responsive in-vehicle interaction.

The goal of this study was to build a lightweight language model that can understand and classify diverse user verbal expressions corresponding to five predefined self-driving behavior options, as identified in our previous research (Lee et al., 2024a, 2024b). To enable this, we first collected a small dataset of verbal expressions relating to user preferences for self-driving behaviors to fine-tune the T5-base model. However, due to practical limitations in collecting a large number of user responses, we faced challenges in building a sufficiently diverse fine-tuning dataset. To address this issue, we adopted a data augmentation strategy using a larger teacher model, which generated paraphrased and semantically similar utterances to expand the dataset. 
We initially fine-tuned the model on the collected dataset using an 80/20 training-validation split, which yielded an accuracy of approximately 25% as shown in Table 1. Subsequently, using data augmentation, we expanded the dataset and applied 5-fold cross-validation to assess model performance. As the level of augmentation increased, the model’s accuracy improved correspondingly. Redundant samples were removed to prevent overfitting. After expanding the dataset to 500% of its original size, the model achieved a final classification accuracy of approximately 97%.
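The evaluation procedure described above (removing redundant samples, then scoring with 5-fold cross-validation) can be sketched as follows. This is an illustrative outline under stated assumptions rather than the authors' pipeline: the function names, the normalization rule used to detect duplicates, and the example labels are hypothetical, and the fine-tuning step itself is omitted.

```python
import random


def deduplicate(samples):
    """Drop repeated (utterance, label) pairs, ignoring case and extra spaces."""
    seen, unique = set(), []
    for text, label in samples:
        key = (" ".join(text.lower().split()), label)
        if key not in seen:
            seen.add(key)
            unique.append((text, label))
    return unique


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs; each sample is validated exactly once."""
    order = list(range(n_samples))
    random.Random(seed).shuffle(order)
    folds = [order[i::k] for i in range(k)]
    for i in range(k):
        val = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, val


def accuracy(predicted, gold):
    """Fraction of predictions that equal the gold label."""
    return sum(p == g for p, g in zip(predicted, gold)) / len(gold)


# Hypothetical augmented data: the second item is a near-duplicate paraphrase.
augmented = [
    ("Please take over in heavy traffic", "full_auto"),
    ("please  take over in heavy traffic", "full_auto"),
    ("I will drive myself downtown", "manual"),
]
clean = deduplicate(augmented)
splits = list(k_fold_indices(len(clean), k=2))
```

Each fold would be used once as the validation set for a freshly fine-tuned model, and the reported accuracy would be the mean of the per-fold `accuracy` values.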

Table 1. Model performance with augmented dataset.

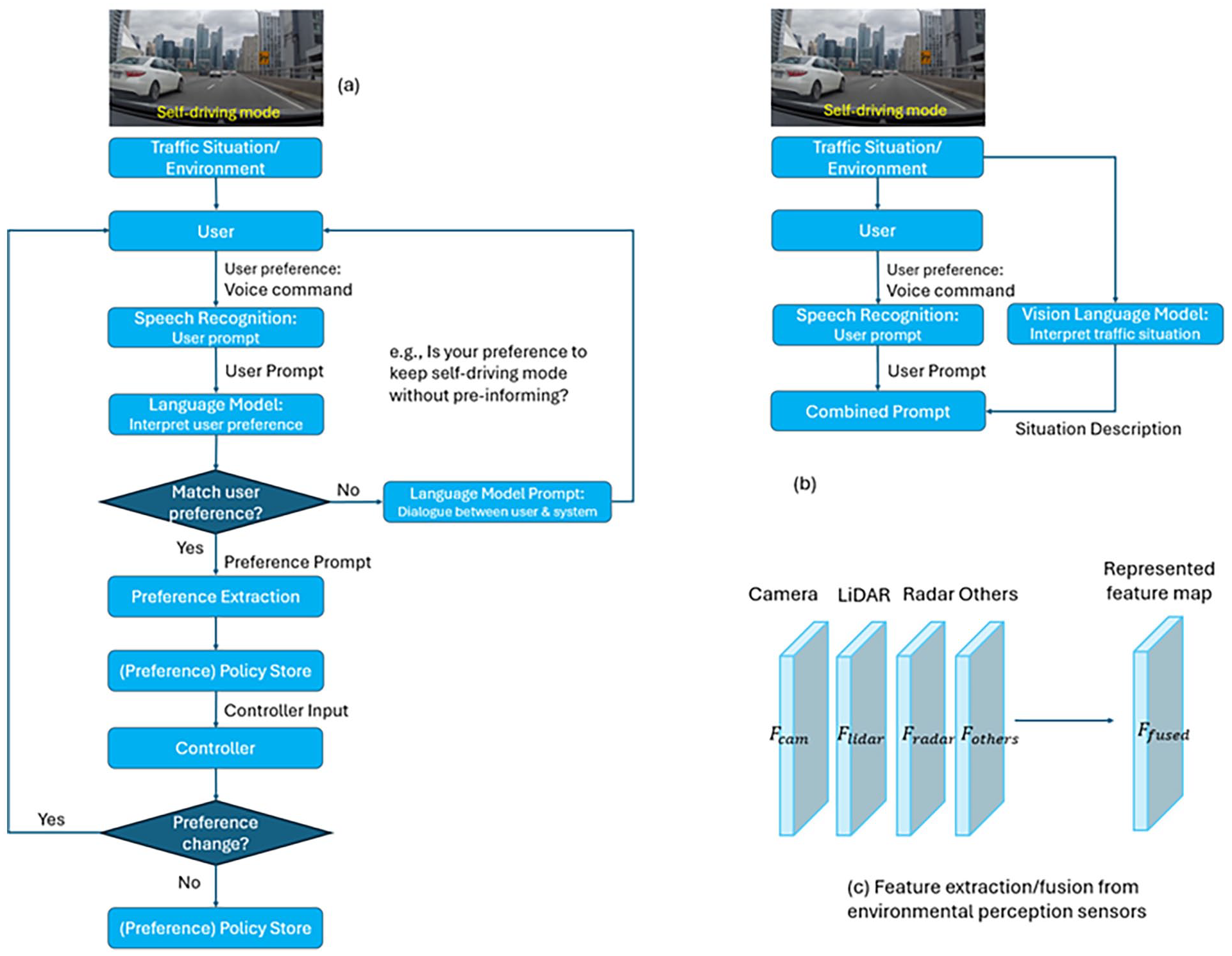

In this study, the primary contextual factor analyzed in detail was traffic volume, and a range of traffic scenarios was included to capture variability across different city types and congestion levels. While driving maneuver complexity was not measured independently, it can be partially inferred from the combination of city type and traffic volume. Other contextual variables, such as weather conditions, seasonal variations, and roadway characteristics, were beyond the scope of the current analysis but represent important directions for future research. Figure 3a illustrates the overall process by which user preferences are updated through verbal interactions with the system. The flow diagram shows how the system employs a language model to interpret user prompts and refine preferences in real time, enabling adaptive modeling that responds dynamically to evolving user intentions and diverse traffic scenarios. Notably, however, the current flow does not explicitly account for the storage of traffic context information alongside user preferences. Incorporating such contextual data is essential for constructing traffic-situation-specific preference models that support more granular and accurate behavior adaptation. To this end, we propose integrating various sources of environmental context into the preference policy, including map-based attributes (e.g., road types, speed limits, pedestrian density, and average traffic volume by segment) derived from GPS or navigation systems. Additionally, perception data collected from on-board sensors, such as cameras, LiDAR, and radar, can contribute valuable environmental features, as illustrated in Figure 3c. 
Because it would be impractical to query users explicitly about their preferences in every possible traffic scenario, we adopt a more scalable strategy: identifying representative traffic situations, extracting salient features, and incrementally expanding the preference model by leveraging traffic situation similarity modeling. This allows the system to infer and propagate preferences across similar but previously unencountered conditions. To support this approach, we explore two complementary strategies. First, as shown in Figure 3b, a vision-language model (e.g., CLIP, BLIP-2) is used to jointly interpret the traffic scene and user intent, forming a rich semantic prompt that guides the language model’s interpretation. Second, we introduce a similarity-based traffic modeling module that generalizes user preferences across comparable situations by comparing fused environmental features. This framework allows users to express complex, condition-specific preferences in natural language, for example: “I want you to alert me when we are about to enter a downtown area if any vehicles are approaching fast and delay the handover procedure.” In this case, the system combines the understanding of the surrounding environment via vision-language model with the spoken instruction from users, embedding them into a single prompt. This enables the language model to infer that the handover behavior should be delayed under specific contextual conditions. The fusion mechanism briefly depicted in Figure 3c shows how environmental features (e.g., Fcam, Flidar, Fradar, etc.) are aligned and integrated into a shared representation, Ffused. This unified feature embedding captures the semantic structure of a given traffic situation and can be used to support both context-aware modeling and preference propagation across similar traffic scenarios. 
These techniques, when used in concert, facilitate scalable and adaptive modeling of user preferences in diverse and dynamic driving environments, and are expected to contribute to improved personalization, system flexibility, and greater user acceptance of AVs.
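The similarity-based propagation step can be illustrated with a small sketch. It assumes, purely for illustration, that the fused representation is a concatenation of per-sensor feature vectors and that similarity is measured with cosine similarity against a fixed threshold; the source does not specify the fusion operator, the similarity measure, or the threshold, and the names `fuse`, `cosine`, and `propagate` are hypothetical.

```python
import math


def fuse(f_cam, f_lidar, f_radar):
    """Assumed late fusion: concatenate per-sensor feature vectors."""
    return list(f_cam) + list(f_lidar) + list(f_radar)


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def propagate(query, library, threshold=0.9):
    """Return the preference of the most similar stored situation, or None.

    library: list of (fused_feature_vector, preference_label) pairs.
    None means no stored situation is similar enough, so the user
    would have to be asked (or the scenario queued for review).
    """
    best_label, best_sim = None, threshold
    for features, label in library:
        sim = cosine(query, features)
        if sim >= best_sim:
            best_label, best_sim = label, sim
    return best_label


# Hypothetical library of previously labeled traffic situations.
library = [
    (fuse([1, 0], [0, 1], [0]), "delay_handover"),
    (fuse([0, 1], [1, 0], [1]), "full_auto"),
]
new_situation = fuse([0.9, 0.1], [0.1, 0.9], [0])
label = propagate(new_situation, library)
```

Returning `None` below the threshold keeps the system from applying a preference to a situation that is only loosely related to anything the user has actually commented on.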

Figure 3. Design strategies for adapting user preferences to self-driving behavior under dynamic traffic conditions: (a) flow diagram of user preference update through a language model, (b) utilization of a vision-language model, and (c) feature extraction and fusion from environmental perception sensors.

Results

The proposed On-Driving AV Tuner and Post-Ride AV Tuner modules enable both real-time and post-ride updates of user preferences. Through verbal interactions between the user and the system, facilitated by Speech-to-Text (STT) and Large Language Models (LLMs), the system captures evolving user intentions and applies an adaptive modeling strategy. Additionally, Vision-Language Models (VLMs) and various environmental perception sensors can be employed to construct traffic situation representations and similarity-check models. These models allow user preferences to be linked with specific traffic contexts, enabling progressive expansion of traffic scenarios associated with user-specific preferences. The integration of real-time verbal feedback mechanisms and traffic situation modeling is expected to enhance the system’s adaptability and, in turn, to improve user satisfaction, trust, and acceptance of self-driving technologies.

Conclusion

This study has several limitations that should be acknowledged. Although participants were shown real-world driving footage to ground their responses in concrete traffic scenarios, the findings still rely on stated preferences, which may not fully capture actual driver behavior in operational contexts. Additionally, the framework has not yet been validated in real driving environments. Despite these limitations, the proposed approach represents a promising direction for advancing user-centered autonomous driving systems by outlining a framework that could integrate large language models, vision-language models, and AV sensor data to dynamically accommodate evolving user needs and adapt to diverse traffic situations. These capabilities have the potential to promote more personalized and natural interactions between drivers and autonomous vehicles, and future research should focus on experimental validation to understand how preferences translate into real-world usage.

Future Work

To enable traffic-situation-aware preference modeling, features from each traffic scenario should be stored as fused representations, allowing the system to build a library of feature maps for similarity-based preference propagation. Because users prioritize different aspects of self-driving behavior depending on traffic conditions (Lee et al., 2024; Lee & Samuel, 2024a, 2024b), targeted feature engineering will be needed. In parallel, the vision-language model should be fine-tuned to generate contextually relevant descriptions aligned with user preferences. In addition, the proposed framework should be deployed and validated across various traffic scenarios. Future research could also incorporate driver identification methods, such as profile-linked keys, biometric recognition, or in-vehicle login credentials, to support multi-user scenarios and ensure that preferences are accurately matched to individual drivers.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by a Discovery grant from the Natural Sciences and Engineering Research Council (RGPIN 2019-05304) to Siby Samuel.