Abstract

Dermatology clinics are getting busier, and understanding how providers use their time during patient visits is fundamental to improving efficiency and patient throughput. This study evaluates how dermatology providers allocate their time during patient visits to identify workflow inefficiencies and performance variations. Eight providers were observed seeing 56 patients over four clinic sessions. Activities performed inside and outside examination rooms were captured using eye trackers, GoPros, and direct observation. Providers spent 67% of their time inside examination rooms, with medical discussions and procedures consuming the most time (24% and 19%, respectively). A one-way ANOVA revealed a significant effect of the provider on the patient’s Length of Stay (LoS), F(7, 49) = 2.50, p = .028. Post hoc analysis identified notably slower performance by a resident physician compared with attendings. These discrepancies may be attributed to experience levels and workflow habits.

Introduction

Dermatologists are a fundamental part of the healthcare system, helping detect and treat skin conditions. Conditions such as warts, acne, and alopecia are among the reasons patients frequently seek the help of dermatologists. Given the increasing number of dermatology visits, understanding how providers use their time is pivotal. The mean wait time for a new patient appointment with a dermatologist was 36 calendar days (Resneck & Kimball, 2004). Even when an appointment is scheduled, patients tend to wait due to variations in arrival and service times (Noon et al., 2003). Hence, it is vital to break down how providers use their time during an entire shift and to identify the best practices for increasing efficiency and decreasing potential delays.

Providers perform several activities during a visit, including prereading patient information, charting, discussing with a patient, conducting an examination, performing a procedure, and checking patients out. No standard practice has been established, and techniques differ between providers. Some providers prechart several patients beforehand and then see each patient, whereas others prechart and see patients one at a time. This variation leads to discrepancies in how long the total visit takes. Providers also differ in experience level and year of training. Understanding this variation can help streamline the process of seeing patients, evaluate efficiency, and make providers more productive during a visit.

Background

One of the key indicators of providers’ performance is LoS: the duration of each visit is a performance measure that helps assess efficiency. A principal method of studying human performance is the time study (Towill, 2010). Time studies are fundamental to developing the preferred method for a given process and standardizing the system or practices. They have been performed in several domains, such as construction (Crawford & Vogl, 2006), healthcare (Bartel et al., 2014), education (Massy et al., 2013), military (Hanson, 2016), and logistics (Wong et al., 2016). Time study methods break a task down into individual activities and help improve areas with delays or setbacks. Once these activities are determined, potential solutions such as additional workforce, training, or equipment can help improve processes.

Time studies in the healthcare domain can help track the workforce’s efficiency. Several interventions or recommendations can be implemented based on these results to improve performance and reduce delays. Due to the uncertainty and complexity associated with healthcare systems, simulation models are a rigorous tool to assess performance. Discrete Event Simulation (DES) is a computer-based operations research technique that models systems as networks of queues and activities to evaluate, predict, and improve a system over a set period of time (Chahal & Eldabi, 2010). These models help suggest design proposals (Kumar & Shim, 2005) or policy implementations (Seung-Chul et al., 2000) to support efficient healthcare provision. Hence, DES can help assess performance in dermatology settings and model the impact of policies and methods.
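To illustrate the idea of modeling a clinic as queues and activities, the following is a minimal DES sketch of a single provider seeing patients first come, first served. It is not the model used or proposed in this study; the function name, the arrival times, and the service durations are hypothetical values chosen for illustration only.

```python
import heapq

def simulate_single_provider(arrivals, service_times):
    """Event-driven simulation of one provider, first come, first served.

    Returns one (wait, length_of_stay) tuple per patient, in minutes.
    """
    if len(arrivals) != len(service_times):
        raise ValueError("each patient needs an arrival and a service time")
    # Event list ordered by time; the sequence number breaks ties so that
    # arrivals scheduled at the same minute as a departure are queued first.
    events = [(t, i, "arrival", i) for i, t in enumerate(arrivals)]
    heapq.heapify(events)
    seq = len(events)
    waiting = []            # patient ids queued for the provider
    provider_busy = False
    start = {}              # time each patient's visit began
    results = {}

    while events:
        now, _, kind, pid = heapq.heappop(events)
        if kind == "arrival":
            waiting.append(pid)
        else:               # departure: the provider becomes free
            provider_busy = False
            results[pid] = (start[pid] - arrivals[pid], now - arrivals[pid])
        if not provider_busy and waiting:
            nxt = waiting.pop(0)
            start[nxt] = now
            provider_busy = True
            heapq.heappush(events, (now + service_times[nxt], seq, "departure", nxt))
            seq += 1
    return [results[i] for i in range(len(arrivals))]

# Hypothetical schedule: arrivals every 20 minutes, varying visit durations.
arrivals = [0, 20, 40]
service_times = [25, 20, 15]
outcomes = simulate_single_provider(arrivals, service_times)
for pid, (wait, los) in enumerate(outcomes):
    print(f"patient {pid}: waited {wait} min, LoS {los} min")
```

In this toy run, the first visit overruns its slot by five minutes and the delay carries over to the next two patients. A fuller model would sample arrival and service times from distributions fitted to observed data.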

Approach

This study aimed to understand how providers allocated their time during patient visits to assess inefficiencies or differences between providers. Ultimately, this paper seeks to answer the following research questions (RQs):

To answer these RQs, eight providers from the Penn State Dermatology Department, who together saw 56 patients, were observed over four clinic sessions of four hours each. The providers comprised two attendings and six residents. They were recruited from the Dermatology Department and consented to the study procedure, which was approved by the Institutional Review Board (IRB). Interactions between providers and patients were recorded using eye trackers and GoPros to capture providers’ points of view, as seen in Figure 1. The eye trackers and GoPros recorded patient interactions inside the examination room, while researchers noted activities outside the examination room through direct observation. From these recordings and observations, providers’ activities were coded into several predefined categories, as seen in Table 1. The sequence of activities and their durations was then extracted for each patient visit.

Figure 1. Provider point of view.

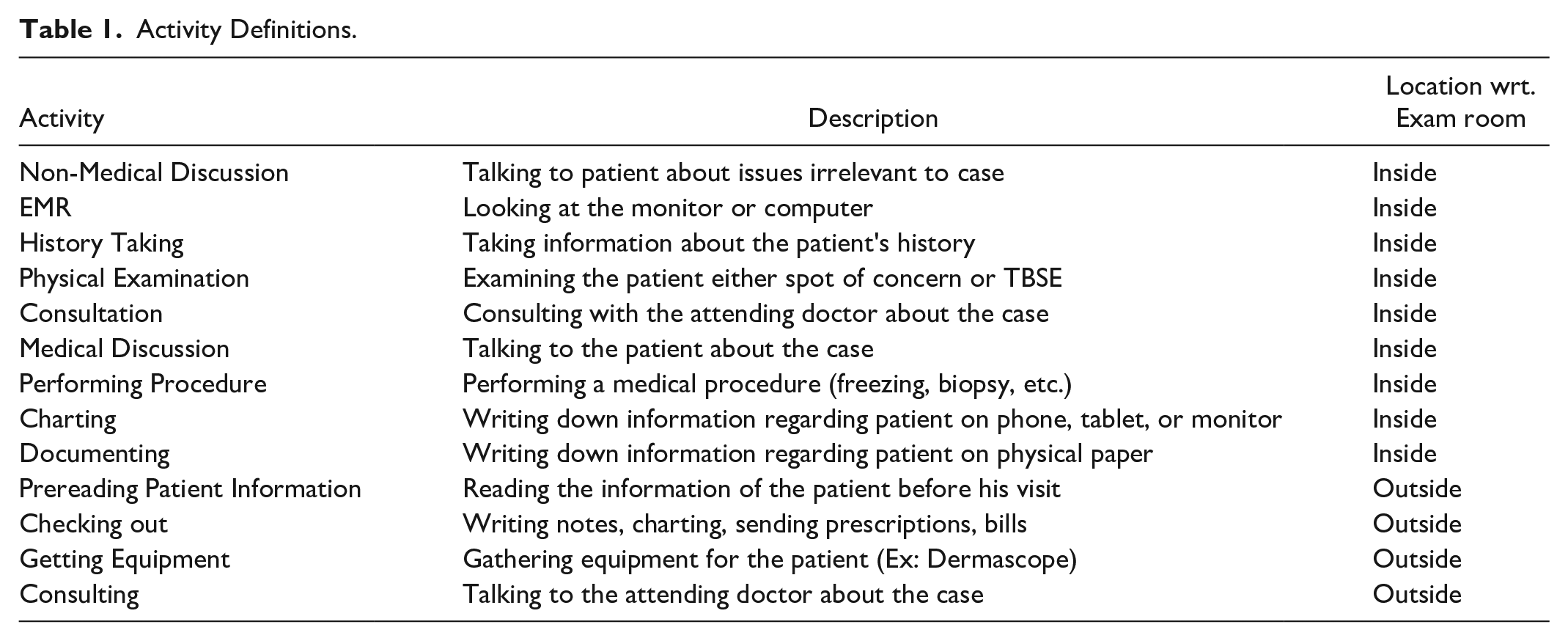

Table 1. Activity Definitions.

Several methods were used to extract the results from the data. First, Inter-Rater Reliability (IRR) was assessed between four researchers on two sample videos to ensure that the observers coded the same sequence of activities and time durations. A Cohen’s kappa value of .83 between the coders indicated that the coding process was reliable and consistent. Next, the video data was distributed among the researchers, who recorded the sequences and durations of activities in a shared Excel sheet so that performance could be compared between providers.
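As an illustration of how such an agreement statistic is obtained, the sketch below computes Cohen’s kappa for two coders. The text does not state how ratings from four researchers were combined, so this covers only the standard two-rater case; the function name and the activity codes are hypothetical and are not study data.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (p_observed - p_expected) / (1 - p_expected)

# Hypothetical activity codes for ten video segments (illustrative only).
coder_1 = ["discussion", "discussion", "exam", "procedure", "charting",
           "discussion", "exam", "procedure", "charting", "exam"]
coder_2 = ["discussion", "discussion", "exam", "procedure", "charting",
           "discussion", "procedure", "procedure", "charting", "exam"]
kappa = cohens_kappa(coder_1, coder_2)
print(round(kappa, 3))  # 0.867
```

Here the coders disagree on one of ten segments, giving an observed agreement of 0.90 against a chance agreement of 0.25. With more than two coders, one option would be to compute kappa for each pair of coders.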

Results

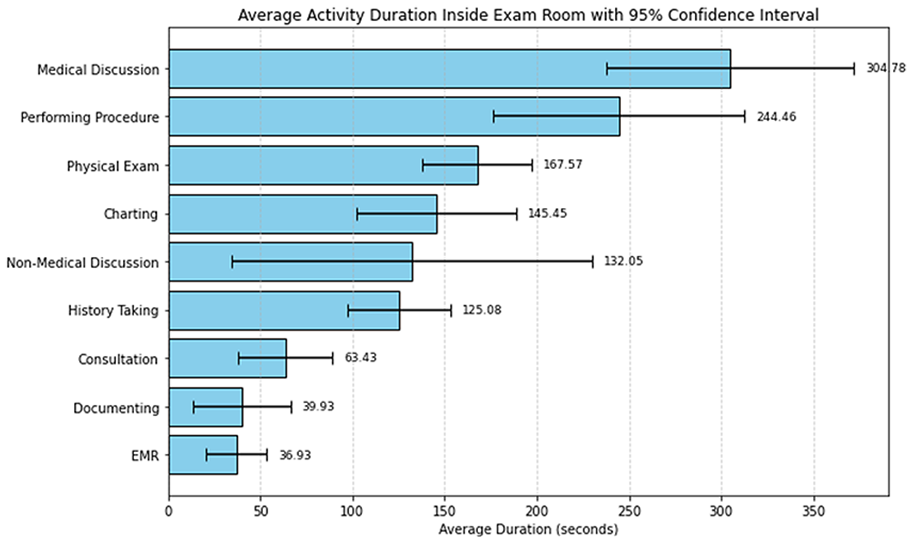

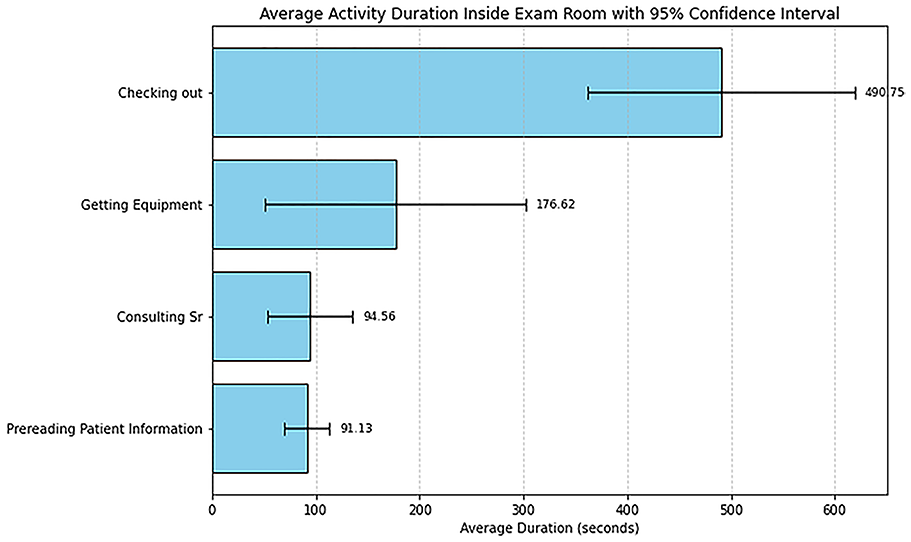

Several key trends were identified from the observational data. Each provider ordered their tasks during patient visits differently, based on their training or experience. One primary area of concern is how providers allocate their time during patient visits. To evaluate this and answer the first RQ, the time spent in the examination room seeing a patient was compared with the time spent outside the examination room. Providers spent 67% of their time in the examination room and 33% outside it. The time outside the examination room was dedicated to activities such as consultations, finding a nurse, and getting equipment. The high share of in-room time is a good indicator, since a goal of providers is to increase patient-provider interaction. Inside the examination room, the two tasks that took the most time were medical discussion and procedures. As seen in Figure 2, these two activities took an average of 305 and 245 s, respectively. The task with the largest variability was non-medical discussion. Outside the examination room, the activity that took the most time was checking out, with an average of 491 s, as seen in Figure 3. This activity and getting equipment had the largest variability. The length of these tasks could be linked to experience level, lack of training, or unavailability of resources. A possible solution is to reduce the time providers spend outside the room by using EMR systems to notify outside agents via portals when a nurse or consultant is needed in an examination room. Tools such as simulation modeling could be pivotal for tracking the effect of these recommended interventions.
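The inside versus outside split reported above is a ratio of summed activity durations. A minimal sketch of that calculation follows, assuming a log of (location, activity, duration) records; the record layout, the function name, and all values are hypothetical and do not reproduce the study’s data.

```python
from collections import defaultdict

# Hypothetical activity log: (location, activity, duration in seconds).
# Values are illustrative only, not the study's observations.
activity_log = [
    ("inside", "medical discussion", 300),
    ("inside", "procedure", 240),
    ("inside", "examination", 120),
    ("outside", "checking out", 200),
    ("outside", "getting equipment", 140),
]

def time_share_by_location(log):
    """Return each location's fraction of the total logged time."""
    totals = defaultdict(float)
    for location, _activity, seconds in log:
        totals[location] += seconds
    grand_total = sum(totals.values())
    if grand_total == 0:
        raise ValueError("log contains no recorded time")
    return {location: seconds / grand_total for location, seconds in totals.items()}

shares = time_share_by_location(activity_log)
for location, share in shares.items():
    print(f"{location}: {share:.0%}")
```

Grouping by the activity field instead of the location field would give the per-activity totals from which averages and variability can be derived.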

Figure 2. Average duration of inside activities performed by providers.

Figure 3. Average duration of outside activities performed by providers.

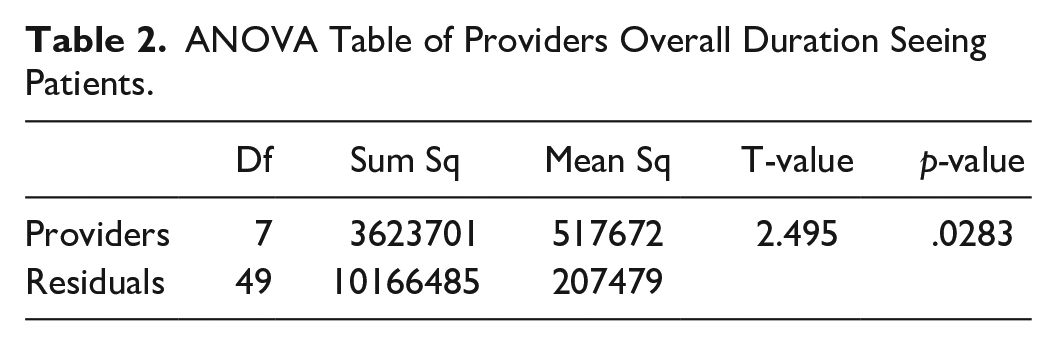

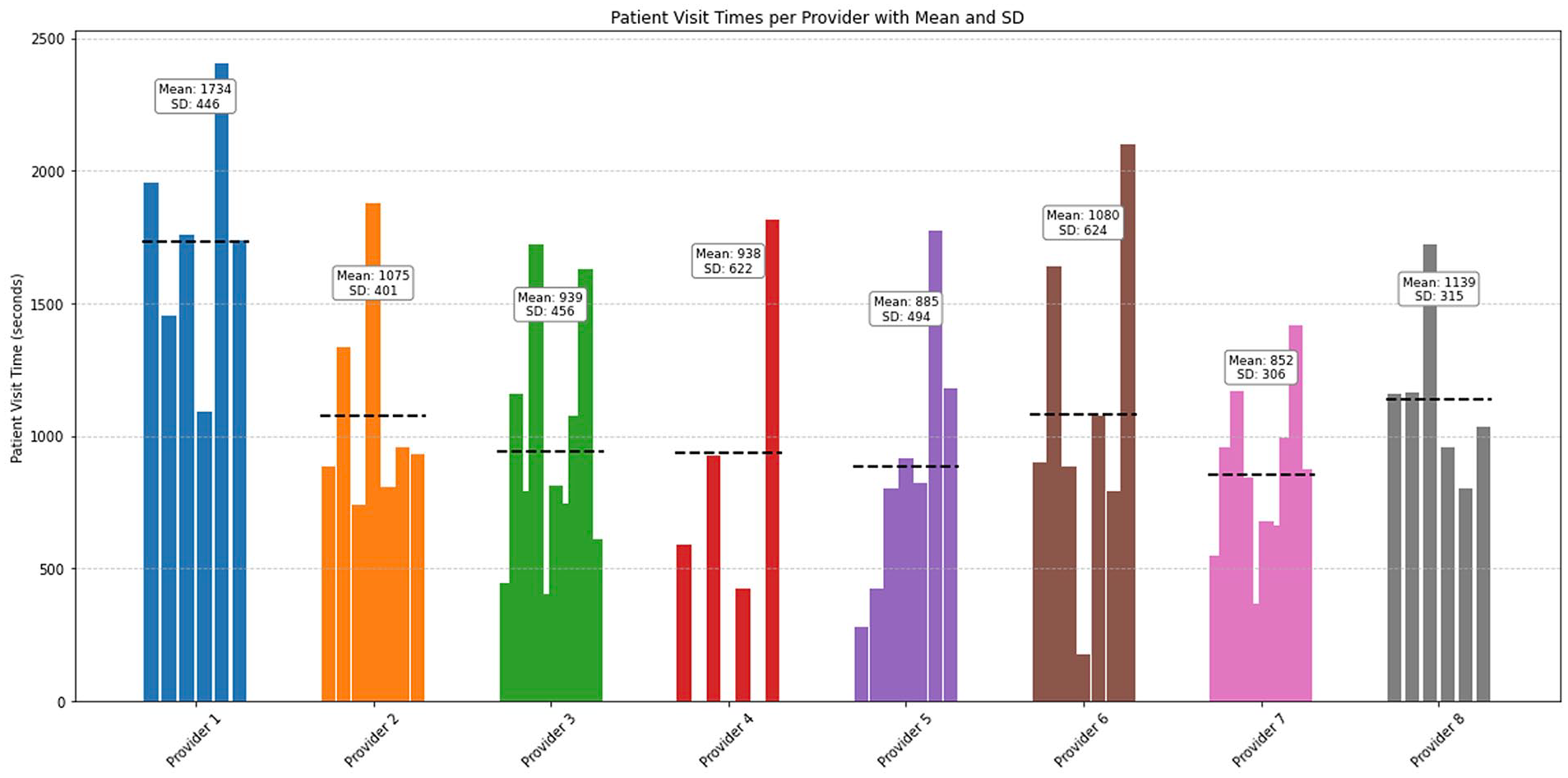

A key question is whether providers’ performance differed significantly. To answer the second RQ, patient visit durations were recorded and a one-way ANOVA was conducted to examine whether LoS differed significantly across providers. As seen in Table 2, the results showed a statistically significant effect of the provider on LoS, F(7, 49) = 2.50, p = .028. Therefore, post hoc comparisons were conducted to see which providers differed significantly. Descriptive statistics showed that Provider 1 took longer than the other providers and that there was considerable variation within providers, as seen in Figure 4. In this figure, each bar represents a patient visit and each color represents a provider. The within-provider variation could be linked to the wide range of cases and patient visits each provider was assigned.
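For readers unfamiliar with the test, the sketch below shows how a one-way ANOVA F statistic and its degrees of freedom are computed from grouped visit durations. It uses three hypothetical providers with made-up LoS values, not the study’s data, and the function name is an assumption for illustration.

```python
def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of samples."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    if k < 2 or any(len(g) == 0 for g in groups) or n_total <= k:
        raise ValueError("need at least two non-empty groups and more observations than groups")
    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [sum(g) / len(g) for g in groups]
    # Between-group sum of squares: spread of group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    # Within-group sum of squares: spread of each visit around its provider's mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Hypothetical LoS values in minutes for three providers (illustrative only).
los_by_provider = [
    [18, 20, 22],
    [24, 26, 28],
    [30, 32, 34],
]
f_stat, df_b, df_w = one_way_anova(los_by_provider)
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")  # F(2, 6) = 27.00
```

The p value then comes from the upper tail of the F distribution with these degrees of freedom. In practice, a statistics library such as SciPy (`scipy.stats.f_oneway`) returns both the statistic and the p value.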

Table 2. ANOVA Table of Providers’ Overall Duration Seeing Patients.

Figure 4. Total LoS per patient by provider.

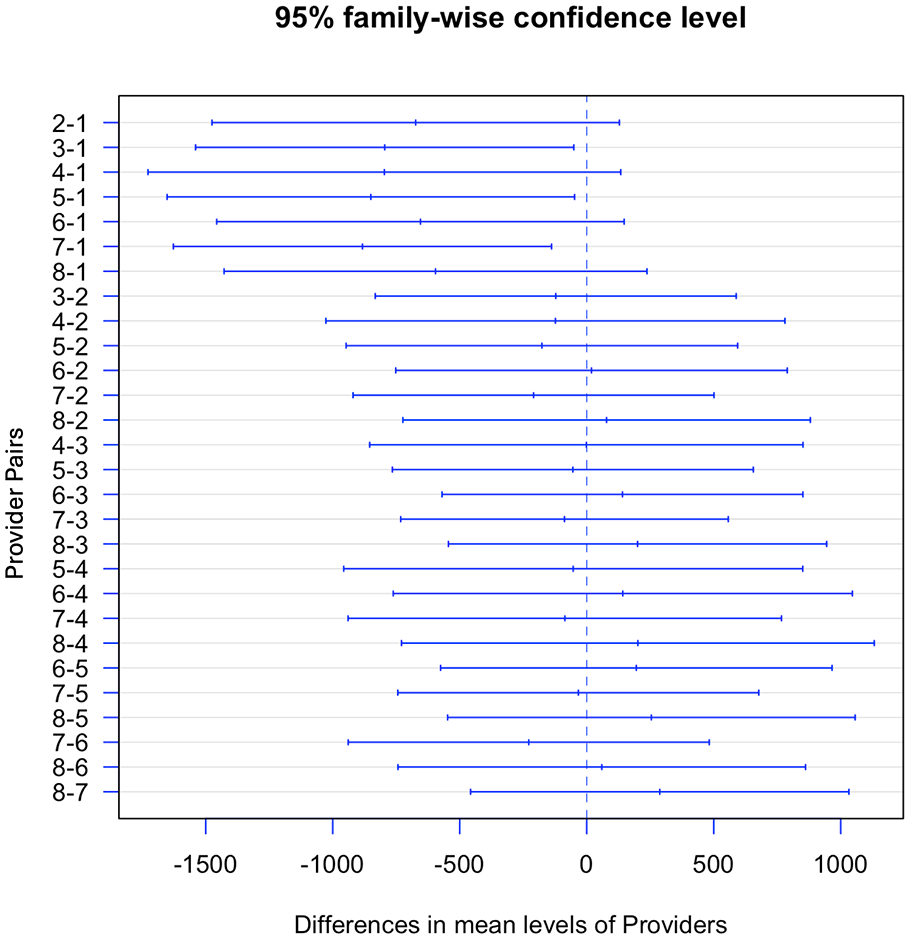

Significant differences were found between Provider 1 and Provider 3, Provider 1 and Provider 5, and Provider 1 and Provider 7, as seen in Figure 5. Based on observation of the videos, Provider 1 had an unstructured and inconsistent approach when seeing patients. This suggests that a lack of experience and of consistent practices could be a reason for the longer visits. Other reasons could be the conditions of the patients seen or personal factors such as fatigue or burnout. One solution to the lack of experience is to provide residents with mentorship hours or specific training from more experienced consultants so that they gain more exposure. Another possible solution is to establish a standard practice for providers to follow and to train providers in it. This could decrease variation both between and within providers and standardize processes across patient visits. These adjustments could be tested via simulation modeling to track the impact of the interventions.

Figure 5. Pairwise comparisons of providers’ LoS.

Conclusion and Future Work

Time studies have proven to be an effective tool for breaking down tasks in various work settings to identify task durations and variability. This time study breaks down the durations of different tasks performed by providers in a single dermatology department. The results show a significant difference in the time providers take to see patients, and some providers exceed the designated 20-minute time slot per patient, which affects subsequent visits. Assessing this difference from a training standpoint can help provide quality healthcare efficiently. Identifying standard or optimal practice for seeing patients efficiently can support better resource utilization. This can be done by identifying activities that are bottlenecks and that affect the flow of subsequent tasks. Based on these results, the tasks with the largest variation can be targeted through training and other interventions to ensure better time allocation and avoid unnecessary repetition of tasks.

Future work could recommend a standard optimal practice for medical students and residents to follow. This standard practice may include a flowchart of the different types of patient visits and what to do in each case to avoid unnecessary tasks. Although time per patient provides an objective metric, we recognize that it does not necessarily reflect the quality of care, as some patients may naturally take longer, have additional needs, or be more medically complex. Future research should integrate quality indicators, such as patient satisfaction scores, to complement these objective measures. Also, since the medical discussion task took the largest block of time, future research could evaluate how the efficiency of these discussions affects patient satisfaction. One limitation is the Hawthorne effect: providers wore camera devices, which may have biased the results. One alternative is to use mounted devices so that providers behave more naturally. While training is proposed as a potential intervention to reduce LoS, we did not measure prior training to quantify its correlation with time measures. Future studies should explicitly evaluate the effect of direct training interventions on clinical efficiency.

Footnotes

Acknowledgements

We thank Dr. Matthew Helm and the Dermatology Department Team at Penn State for their assistance in this study. We thank Dayna Gager, Calista Long, and Megan Zhang, who helped transcribe the videos for data analysis. We would also like to thank all the participants for contributing to this study.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.