Abstract

This research aims to enhance ergonomic assessments by integrating computer vision algorithms with NIOSH lifting index parameters (HM, VM, DM, AM). Current methods are manual and subjective, lacking real-time analysis. Utilizing the coordinates of 17 body joint points, our algorithm automates this process, improving the precision, objectivity, and timeliness of ergonomic evaluations.

Introduction

Heavy lifting tasks in the workplace lead to numerous injuries (Shahnavaz, 1987). Estimating the lifting load remains a significant challenge as sensors like IMUs are intrusive and costly, and traditional evaluations are manual, subjective, and lack real-time analysis capabilities (Zhou et al., 2023a, 2023b).

This research uses a computer vision algorithm to assess ergonomic risks during lifting tasks by capturing 17 body joint positions and calculating NIOSH lifting index parameters (Waters et al., 1994). It enhances accuracy and efficiency, enabling real-time feedback. We develop an algorithm for interpreting CV data and create an API for ergonomic practitioners to improve workplace safety and prevent injuries.

Method

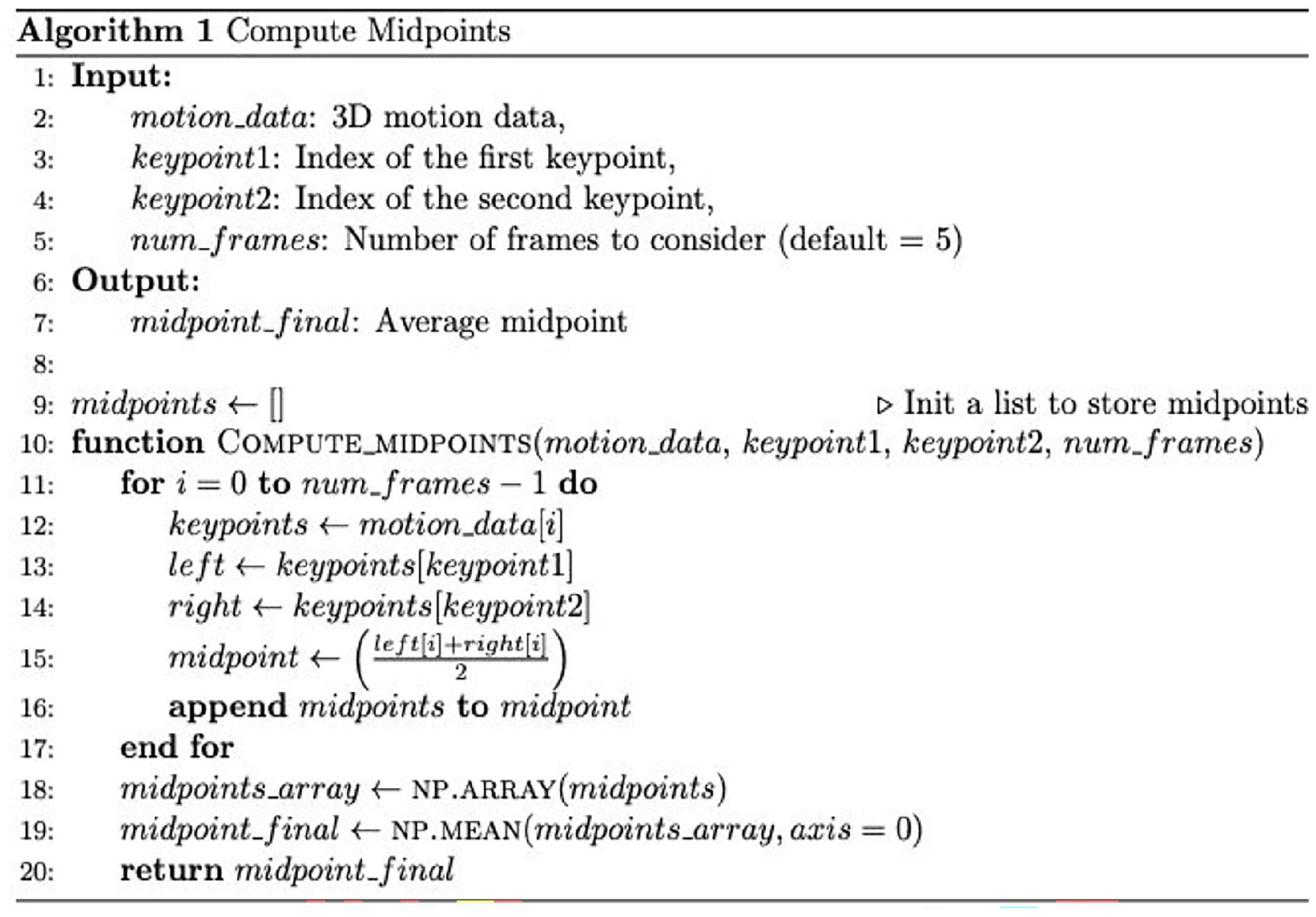

We first define a set of utility functions used in calculating the NIOSH lifting equation. These functions provide all the post-processing tools applied to the output of the human motion detection computer vision algorithm. A simple example is shown in Figure 1, where we calculate the midpoint of two three-dimensional points.

Figure 1. Example of calculating the midpoint of two joint coordinates in the post-processing algorithm.
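As a minimal sketch of the kind of utility the figure illustrates, the midpoint of two 3D joint coordinates can be written as follows. The function name `midpoint`, the tuple representation of a joint, and the sample ankle coordinates are illustrative assumptions, not the authors' actual "Utils" code.

```python
from typing import Sequence, Tuple

Point3D = Tuple[float, float, float]


def midpoint(p: Sequence[float], q: Sequence[float]) -> Point3D:
    """Return the midpoint of two 3D points (hypothetical helper)."""
    if len(p) != 3 or len(q) != 3:
        raise ValueError("both points must have exactly three coordinates")
    # Average each coordinate pair: ((x1 + x2) / 2, (y1 + y2) / 2, (z1 + z2) / 2)
    return tuple((a + b) / 2.0 for a, b in zip(p, q))


# Illustrative values only: two ankle joints in an assumed (x, y, z) frame, in cm.
left_ankle = (10.0, 20.0, 8.0)
right_ankle = (30.0, 24.0, 8.0)
ankle_mid = midpoint(left_ankle, right_ankle)
```

A helper like this is useful because the NIOSH task variables are measured from midpoints (for example, the point midway between the ankles) rather than from individual joints.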

Using the “Utils” functions defined above, we then calculate the NIOSH lifting index parameters: HM, VM, DM, and AM.
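For reference, the revised NIOSH lifting equation defines the four multipliers (HM, VM, DM, AM) in metric units as HM = 25/H, VM = 1 - 0.003|V - 75|, DM = 0.82 + 4.5/D, and AM = 1 - 0.0032A, where H, V, and D are in centimeters and A is in degrees. The sketch below shows one way these could be computed from joint coordinates. It assumes a coordinate frame with z pointing up and units of centimeters, uses the wrist midpoint as a proxy for hand location, and takes the asymmetry angle as a given input; the function names and sample coordinates are hypothetical and are not taken from the authors' implementation.

```python
import math


def _midpoint(p, q):
    """Midpoint of two 3D points given as (x, y, z) tuples."""
    return tuple((a + b) / 2.0 for a, b in zip(p, q))


def hand_location(l_wrist, r_wrist, l_ankle, r_ankle, floor_z=0.0):
    """Estimate (H, V) in cm from four joints.

    Assumptions: z is vertical, and the wrist midpoint approximates the
    hand location. H is the horizontal distance from the ankle midpoint
    to the hand midpoint; V is the hand height above the floor.
    """
    hands = _midpoint(l_wrist, r_wrist)
    ankles = _midpoint(l_ankle, r_ankle)
    h = math.hypot(hands[0] - ankles[0], hands[1] - ankles[1])
    v = hands[2] - floor_z
    return h, v


def horizontal_multiplier(h_cm):
    """HM = 25 / H; H below 25 cm is treated as 25, and HM = 0 beyond 63 cm."""
    if h_cm > 63.0:
        return 0.0
    return 25.0 / max(h_cm, 25.0)


def vertical_multiplier(v_cm):
    """VM = 1 - 0.003 * |V - 75|; VM = 0 outside the 0 to 175 cm range."""
    if v_cm < 0.0 or v_cm > 175.0:
        return 0.0
    return 1.0 - 0.003 * abs(v_cm - 75.0)


def distance_multiplier(d_cm):
    """DM = 0.82 + 4.5 / D; D below 25 cm is treated as 25, and DM = 0 beyond 175 cm."""
    if d_cm > 175.0:
        return 0.0
    return 0.82 + 4.5 / max(d_cm, 25.0)


def asymmetric_multiplier(a_deg):
    """AM = 1 - 0.0032 * A; AM = 0 beyond 135 degrees."""
    if a_deg > 135.0:
        return 0.0
    return 1.0 - 0.0032 * a_deg


# Illustrative joint coordinates (cm) at the origin and destination of a lift.
ankles = ((0.0, -10.0, 8.0), (0.0, 10.0, 8.0))
h_o, v_o = hand_location((35.0, -15.0, 30.0), (35.0, 15.0, 30.0), *ankles)
h_d, v_d = hand_location((45.0, -15.0, 100.0), (45.0, 15.0, 100.0), *ankles)
d = abs(v_d - v_o)  # vertical travel distance of the hands

multipliers_at_origin = {
    "HM": horizontal_multiplier(h_o),
    "VM": vertical_multiplier(v_o),
    "DM": distance_multiplier(d),
    "AM": asymmetric_multiplier(0.0),  # symmetric lift assumed
}
```

Keeping each multiplier in its own function makes the range limits of the equation explicit and lets each task variable be checked against the designed task conditions independently.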

Result and Conclusion

We compared the task variable estimates obtained from computer vision with the predefined values from the designed task conditions. On average, the estimates differed from the expected values by 8 cm. As pose-estimation algorithms in computer vision continue to evolve, this work helps bridge these state-of-the-art techniques to the ergonomics field, particularly for assessing lifting risks.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.