Abstract

This paper presents a foundational framework for functional modeling of human-robot joint activity in dynamic and unstructured environments. Representing a model of work functions as a network allows for scalable analysis of functional dependencies that create coordination overhead in the human-robot system. Centrality of nodes and cycles in the network can reveal potential patterns of joint activity that point to alternate strategies for human-robot coordination. Analysis of these network structures can provide insight into how a human-robot system may synchronize its activity while managing coordination overhead. We illustrate the use of the framework with a model of collaborative navigation in disaster response, where re-evaluating goals as more information about the environment becomes available is identified as a key part of coordination. The modeling capabilities can aid in understanding the effects of coordination strategies and teaming configurations and inform the design of automation capabilities that better support collaboration.

Introduction

Using robots in disaster response operations has the potential to greatly increase the capabilities of human teams. Robots allow operators to navigate areas that are inaccessible to human teams because of size or danger concerns, and they offer specialized sensing capabilities (Murphy, 2014). However, barriers exist to their actual deployment in disaster settings. There are well-documented instances of operators experiencing high levels of cognitive load resulting from ineffective human-robot coordination in disaster response (Casper & Murphy, 2003; Chiou et al., 2022; Kruijff et al., 2012). This reflects the inevitability that robotic capabilities will fall short of their target level of autonomy as events evolve past what they were explicitly programmed to handle (Woods et al., 2004), while also reflecting the need to explicitly consider how coordination occurs within Human-Machine Teams (HMTs).

To deploy robots successfully, system design needs to consider robotic competencies that support coordination and flexible changing of coordination strategies under different kinds of work demands. Coordination creates overhead as humans need to maintain common ground with robotic assets and with other human actors. Examples of overhead include effort and time spent monitoring variable work demands, updating others about the changes, identifying what one should do next and who is available, and repairing common ground (Klein et al., 2005; Maguire, 2019). Compared to human teams, coordination overhead can be particularly large in human-robot teamwork as cooperative competencies of robots are more literal-minded than those of humans. Likewise, coordination overhead can become especially difficult to manage as incidents escalate in tempo and complexity as events unfold (Maguire, 2019).

To enable effective joint activity, humans and robots should together be able to manage coordination overhead in such a way that no party is overloaded with coordination demands (Klein et al., 2005). One can imagine that some strategies of coordination are more appropriate than others for particular contexts, with variations in work demands, agent status, events in the work environment, etc. For example, during times of peak human workload, coordination may be modulated to reduce further burden on the human. To understand the feasible coordination strategies and trade-offs associated with them, our research is developing computational capabilities based on principles of functional modeling for analyzing human-robot joint activity and coordination overhead. A functional understanding of joint activity in human-robot teams provides a conceptual bridge from analysis to the design of cooperative robots.

Modeling Background

Functional modeling is used by human factors researchers and practitioners to analyze how different configurations of distributed cognitive work systems affect behavior in dynamic environments and to inform the design of cognitive support tools. There is a long tradition of using functional modeling to analyze coordination and cognitive work (Lind, 2023; Rasmussen, 1994; Vicente, 1999), and how it relates to the design of envisioned technologies (Miller & Feigh, 2019). Functional modeling characterizes the structure of a system in relation to its purposes (Woods, 2003), where a function more formally describes how something works in a particular context (Lind, 2023). Functions also relate to action, as functions serve to bring about designer and user intentions (Lind, 2023).

Characterizing a human-robot system in terms of its functions and the means-end relationships that exist provides a strong foundation for identifying how coordination demands arise. When human and machine roles are ascribed to functions (as being involved in that function), dependencies between functions create interdependencies between the agents that in turn create unique coordination needs (Johnson, 2014). Depending on how roles and functions are defined, there may be demands to monitor, communicate with, cross-check and supervise other agents. Thus, the ascription of roles to human or machine agents influences what coordination demands arise and how agents will need to synchronize their activities to realize system goals. How such coordination demands are managed is a key part of system performance (Johnson, 2014), yet is not often explicitly modeled.

Our work aims to use functional modeling and simulation to analyze how integrating robotic capabilities into a system has the potential to increase capabilities while at the same time creating new coordination demands. Additionally, our aim is to use the models to analyze how coordination overhead with new automated capabilities can be managed by enabling human-robot systems to employ various strategies and teaming configurations. Our work builds on traditional qualitative methods of functional modeling (Lind, 2023; Rasmussen, 1994). While these models have been shown to be powerful in explaining and designing cognitive systems, they are often limited in their ability to scale as new agents and capabilities increase the size and complexity of the system. We combine notions of functional modeling with computational structures, building on earlier work (e.g., IJtsma et al., 2019; Woods & Roth, 1994). A computational approach to functional modeling provides a toolset for analyzing systems as they scale by affording the use of computation to make sense of underlying dependencies in the functions that agents perform in pursuit of common goals.

Framework

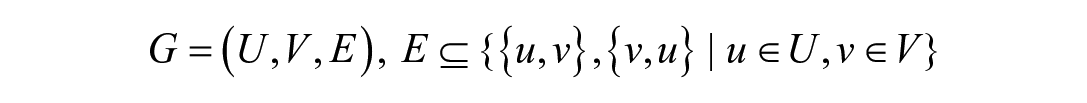

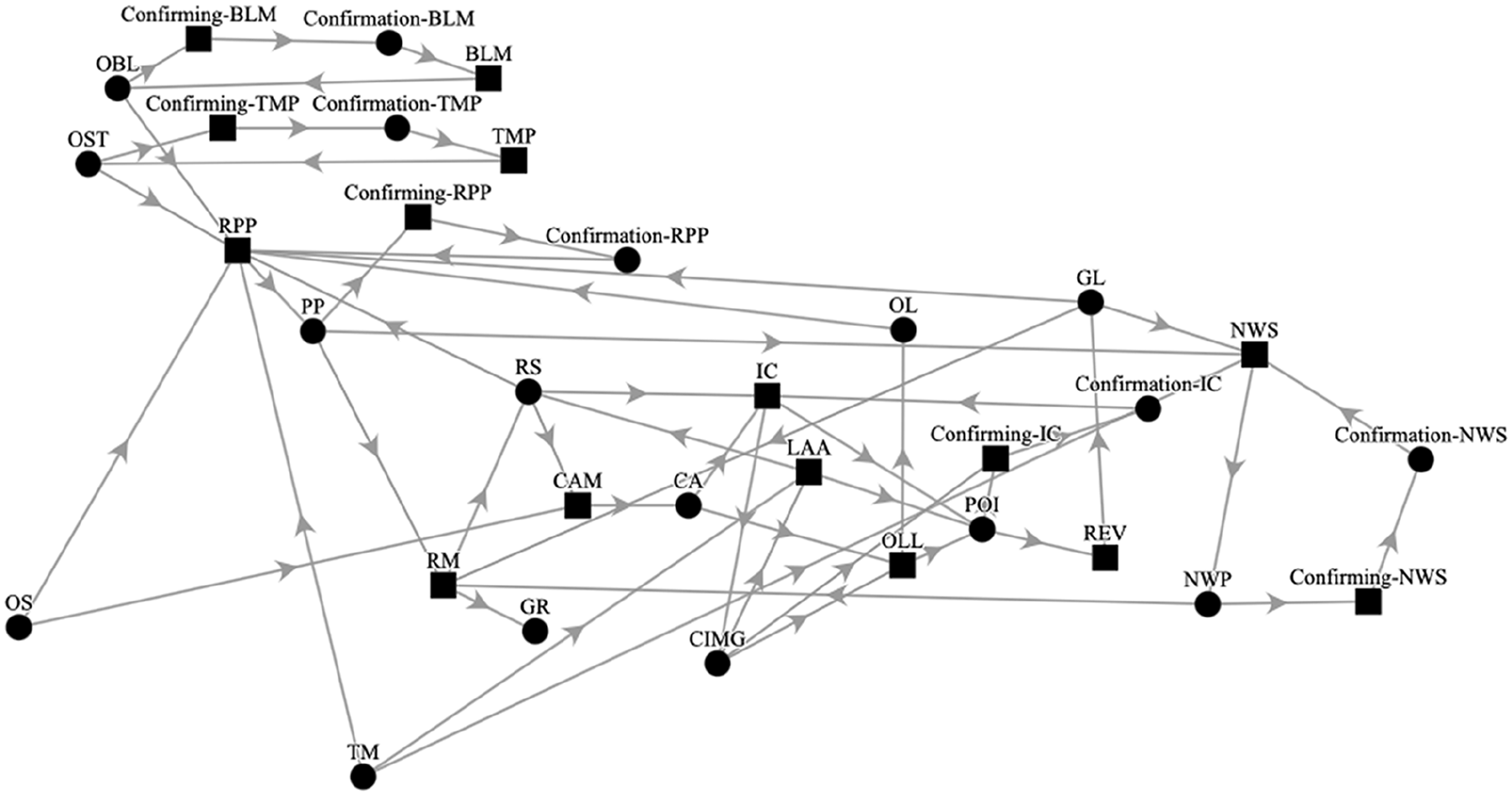

We developed a framework for functional modeling of a human-robot system using graph theory. We characterize the functions in a human-robot system and their relationships as a set of nodes that are connected by edges in a bipartite graph, shown in Figure 1:

Figure 1. Four-layer representation of the functional network, connected by means-end relationships.

Here,

Directed edges connecting nodes represent means-end relationships between layers. Resources are typically thought of as physical (e.g., a camera); however, it is important to note that they can also be informational (e.g., a coordinate location). These resources are used by agents to perform a function. A function being performed brings about a resource, and a resource can also be a means when it affords a function while simultaneously being the end of another function. Likewise, a function is a means to producing a resource while also being the end of resources being utilized by an agent.
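The means-end structure described above can be sketched as a small directed graph in which every edge links a function to a resource or a resource to a function. This is a minimal illustration only: the abbreviations IC, REV, RPP, NWS, and GL appear in this paper, but the other node names, the specific edges, and the helpers `build_graph`, `means_of`, and `ends_of` are hypothetical and do not reproduce the network in the figures.

```python
from collections import defaultdict

# Hypothetical node sets. The abbreviations IC, REV, RPP, NWS, and GL appear in
# the text, but the remaining names and all edges are illustrative assumptions.
FUNCTIONS = {"IC", "REV", "RPP", "NWS"}
RESOURCES = {"camera", "image", "GL", "path", "waypoint"}

# Directed means-end edges: resource -> function (the resource affords the
# function) or function -> resource (the function brings about the resource).
EDGES = [
    ("camera", "IC"), ("IC", "image"),
    ("image", "REV"), ("REV", "GL"),
    ("GL", "RPP"), ("RPP", "path"),
    ("path", "NWS"), ("NWS", "waypoint"),
]


def build_graph(edges):
    """Return successor and predecessor maps, rejecting non-bipartite edges."""
    succ, pred = defaultdict(set), defaultdict(set)
    for src, dst in edges:
        for node in (src, dst):
            if node not in FUNCTIONS and node not in RESOURCES:
                raise ValueError(f"unknown node: {node}")
        # An edge must join one function and one resource, never two of a kind.
        if (src in FUNCTIONS) == (dst in FUNCTIONS):
            raise ValueError(f"edge {src}->{dst} must link a function and a resource")
        succ[src].add(dst)
        pred[dst].add(src)
    return succ, pred


succ, pred = build_graph(EDGES)


def means_of(function):
    """Resources that afford the given function."""
    return set(pred[function])


def ends_of(function):
    """Resources that the given function brings about."""
    return set(succ[function])


print(means_of("RPP"), ends_of("RPP"))
```

In this toy network the goal location (GL) is at once the end of goal re-evaluation and a means for path planning, which is the dual role of resources noted above.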

The base environment layer describes the resources that are used to perform a function related to the team’s joint goal (e.g., reach the goal location). The distributed work layer is a series of functional transformations related to achieving the joint goal. An example would be “Robot Path Planning” (RPP) and “Next Waypoint Selection” (NWS) being two functions that help the robot navigate to an end goal location (e.g., a potential victim). A full list of functions and resources in the network for robot navigation in disaster response is shown in Tables 1 and 2.

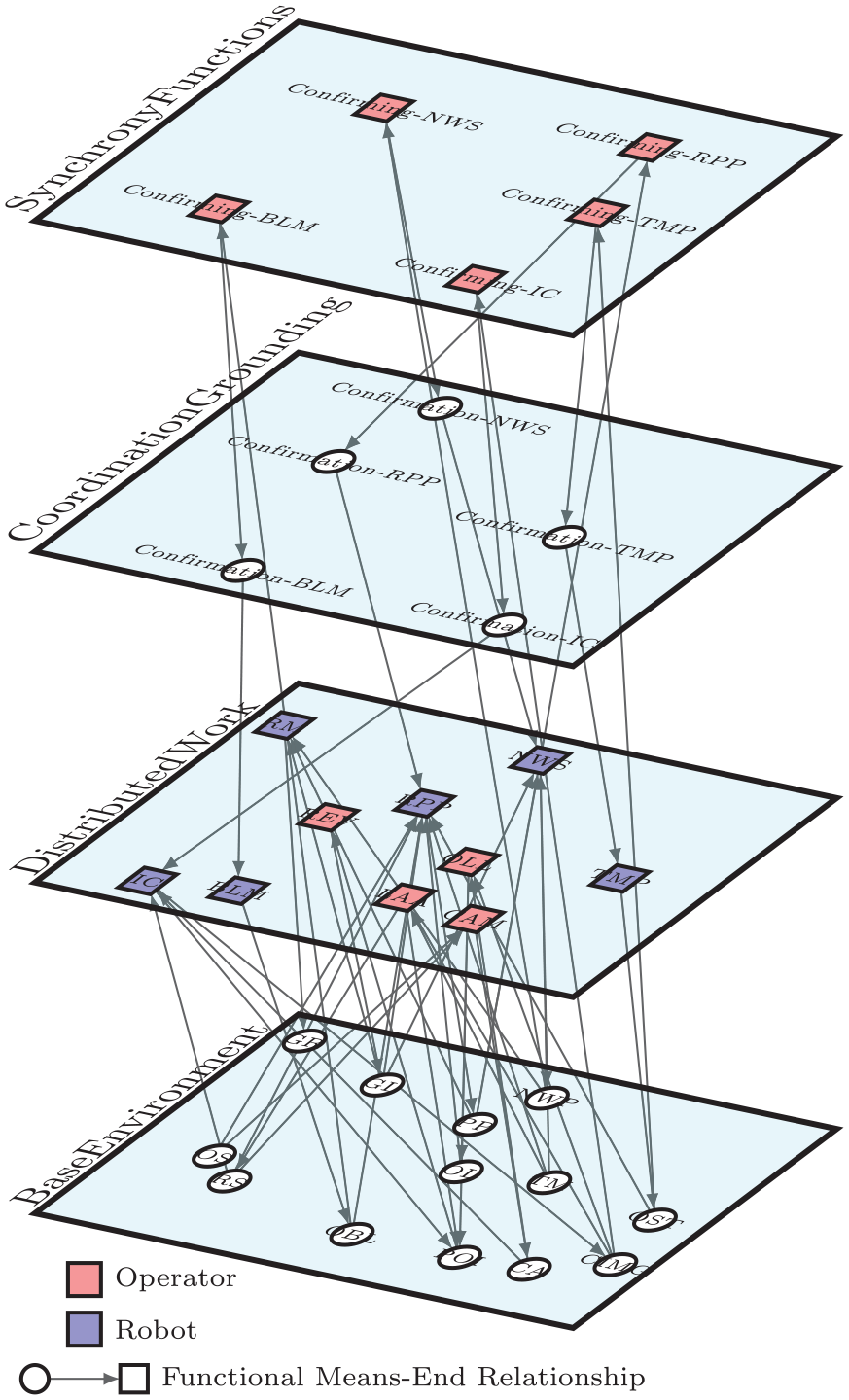

Table 1. Function Nodes and Their Descriptions.

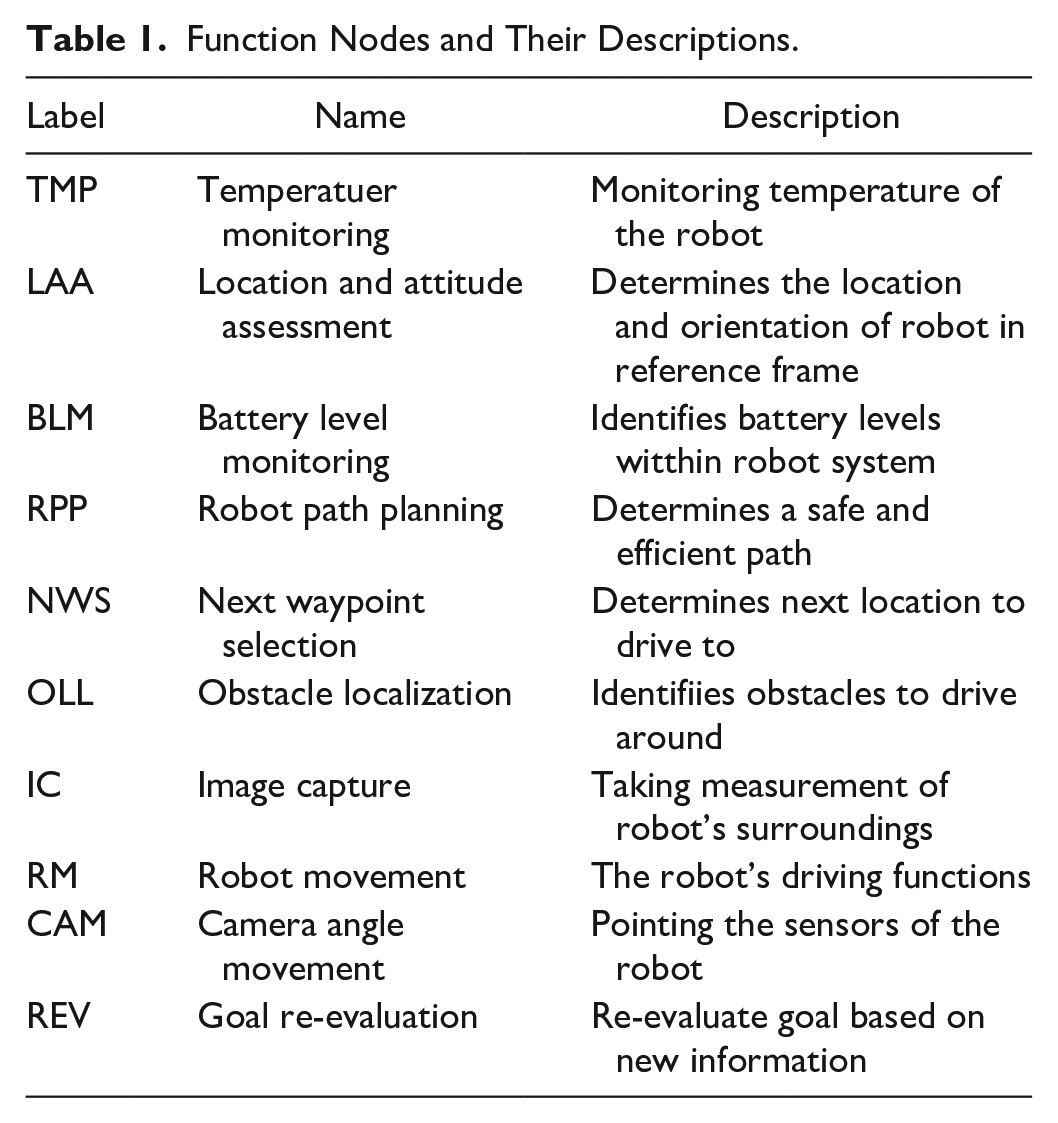

Table 2. Resource Nodes and Their Descriptions.

The upper two layers reflect functions and resources related to coordination. Coordination is explicitly modeled to reflect how common ground is maintained and how machine agents are supervised. By quantifying coordination in terms of the functions that must be performed to maintain joint activity, we model how the decision to automate functions can create substantial coordination overhead. The synchrony function layer represents functions that afford continued coordination efforts between human and robotic agents. For example, the synchrony layer may include functions to supervise and coordinate with the automated capabilities. In our model of collaborative navigation, it captures functions to cross-check the performance of the robotic functions. The way functions are automated and then coordinated with human agents is critical for managing coordination costs and maintaining shared awareness.

Effective joint activity between humans and robots involves reasoning about functional means-end relationships to formulate collective strategies. It requires maneuvering constraints around resources (e.g., “I first need to determine my destination before a route can be created”), available functional means (e.g., there may be multiple ways to bring about similar outcomes), and interdependencies with the activities of other agents (e.g., a robot may need destination information to compute optimal routes). To manage interdependencies, effective joint activity involves identifying and managing coordination needs relative to the available cognitive and temporal resources. For example, it may involve modulating the amount of monitoring that is performed based on current workload conditions and criticality of maintaining close awareness of what the robot does. It may also include changing one’s performance of functions based on changing priorities such as thoroughness, performing some functions more often to ensure high quality resources as well as checking the work of others. The modeling framework reflects this kind of reasoning as it aims to explicitly capture the means-end relationships used for formulating strategies for joint activity.

Example: Collaborative Navigation in Disaster Response

We illustrate the use of the framework with an example in disaster robotics, as part of ongoing work to further develop and apply the modeling framework. One of the main challenges and goals of deploying robots in disaster relief efforts is navigation. Collaborating with Unmanned Ground Vehicles (UGVs) is typically done with an operator on navigational controls and a mission specialist focused on mission-critical tasks (Murphy, 2014). For this illustration, we will assume joint activity between a single operator and a semi-autonomous robot.

Several steps were taken to create a functional model of the robot navigation task and prepare it for computational analysis. An analysis was completed of cases of UGVs being deployed to a variety of disasters (Casper & Murphy, 2003; Chiou et al., 2022; Murphy, 2014), which was used to identify base work functions and resources for robot navigation in disaster response (Tables 1 and 2). These functions and the resources that support them were mapped onto the distributed work and base environment layers of the network (see Figure 1). To define a human-robot architecture, agents were assigned roles/authority over functions on the distributed work layer. The way authority is distributed over functions determines the way in which agents will need to coordinate with each other. The means-end relationships between functions of the human and the robot create interdependencies, which directly create coordination demands. Figure 2 shows the entire network model for the robot navigation task in disaster response.

Figure 2. The full network with goal re-evaluation.
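How an authority allocation turns functional dependencies into coordination demands can be sketched as follows. The dependency triples, the `AUTHORITY` assignment, and the helper `cross_agent_dependencies` are hypothetical and chosen only for illustration; the sketch simply flags every dependency whose producing and consuming functions belong to different agents.

```python
# Hypothetical function-level dependencies: (producer, resource, consumer).
# The producer brings about a resource that the consumer needs as a means.
DEPENDENCIES = [
    ("IC", "image", "REV"),
    ("REV", "GL", "RPP"),
    ("RPP", "path", "NWS"),
]

# Hypothetical authority allocation for one candidate architecture.
AUTHORITY = {"IC": "robot", "REV": "human", "RPP": "robot", "NWS": "robot"}


def cross_agent_dependencies(dependencies, authority):
    """Return the dependencies that cross an agent boundary.

    Each returned triple is a point where two agents are interdependent and
    therefore need to coordinate around a shared resource.
    """
    return [
        (producer, resource, consumer)
        for producer, resource, consumer in dependencies
        if authority[producer] != authority[consumer]
    ]


interdependencies = cross_agent_dependencies(DEPENDENCIES, AUTHORITY)
for producer, resource, consumer in interdependencies:
    print(f"{AUTHORITY[producer]} ({producer}) -> {resource} -> "
          f"{AUTHORITY[consumer]} ({consumer})")
```

Re-running the same function with a different `AUTHORITY` dictionary gives a quick way to compare how many interdependencies alternative architectures would create.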

The functions to fulfil these coordination requirements can then be manually created, or automatically engendered based on heuristics. In this example, whenever the UGV was assigned authority over a function, a functional node was generated on the synchrony layer for the human to

Once the network was created and the candidate architecture defined, analysis of the structure of the network itself affords insights into the relationships of work. Network metrics such as degree and eigenvector centrality provide insight into which functions are most central to achieving shared goals. A high node centrality suggests a function is (inter)connected to many other functions and is therefore more critical for overall performance. In the example, centrality analysis shows that Robot Path Planning (RPP) is the most central function.
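As a rough illustration of these metrics, the sketch below computes degree centrality and eigenvector centrality for a small undirected graph of functions. The edge list is hypothetical (BM and TM stand for assumed battery-monitoring and temperature-monitoring functions that are not named in this paper), and treating the dependencies as undirected is a simplifying assumption. The eigenvector computation is a plain power iteration with an identity shift so that it also converges on bipartite-like graphs; it is not the analysis code used for the results reported here.

```python
import math
from collections import defaultdict

# Hypothetical undirected couplings between functions (illustrative only).
EDGES = [("IC", "REV"), ("REV", "RPP"), ("RPP", "NWS"), ("BM", "RPP"), ("TM", "RPP")]

adj = defaultdict(set)
for a, b in EDGES:
    adj[a].add(b)
    adj[b].add(a)


def degree_centrality(adj):
    """Fraction of the other nodes that each node is directly connected to."""
    n = len(adj)
    return {v: len(neighbors) / (n - 1) for v, neighbors in adj.items()}


def eigenvector_centrality(adj, iterations=500, tol=1e-10):
    """Power iteration on (A + I), normalized to unit Euclidean length."""
    x = {v: 1.0 for v in adj}
    for _ in range(iterations):
        # Adding x[v] is the identity shift that prevents oscillation.
        nxt = {v: x[v] + sum(x[u] for u in adj[v]) for v in adj}
        norm = math.sqrt(sum(val * val for val in nxt.values()))
        nxt = {v: val / norm for v, val in nxt.items()}
        if max(abs(nxt[v] - x[v]) for v in adj) < tol:
            return nxt
        x = nxt
    return x


degree = degree_centrality(adj)
eigen = eigenvector_centrality(adj)
most_central = max(eigen, key=eigen.get)
print(most_central, round(degree[most_central], 2))
```

In this toy graph RPP comes out as the most central node simply because the assumed edges were drawn around it; the ranking in the actual network depends on the full set of functions and resources in Tables 1 and 2.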

In addition to analyzing centrality of functions, “cycles of activity” in how functions are coupled can reveal potentially strong interdependencies that affect coordination overhead. These cycles, when involving functions of both human and robot, indicate potentially tight coupling between activities of both agents, and an associated need to synchronize around these loops. The analysis of cycles focuses on the Robot Path Planning (RPP) function, in line with it being the highest-centrality function.
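One way to look for such cycles computationally is to enumerate the simple cycles of the directed dependency graph and keep those that include functions of more than one agent. The sketch below does this with a depth-first search on a hypothetical function-level graph; the edges, the authority assignment, and the helper names are assumptions made for illustration and do not reproduce the network analyzed in this paper.

```python
# Hypothetical directed dependencies between functions (illustrative only).
SUCC = {
    "IC": ["REV"],
    "REV": ["RPP"],
    "RPP": ["NWS"],
    "NWS": ["IC", "RPP"],
}
AUTHORITY = {"IC": "robot", "REV": "human", "RPP": "robot", "NWS": "robot"}


def simple_cycles(succ):
    """Enumerate each simple cycle once, rooted at its smallest node name."""
    cycles = []
    for start in sorted(succ):
        stack = [(start, [start])]
        while stack:
            node, path = stack.pop()
            for nxt in succ.get(node, ()):
                if nxt == start:
                    cycles.append(path)
                # Only extend through nodes ordered after the root, so the
                # same cycle is never reported from a different starting node.
                elif nxt > start and nxt not in path:
                    stack.append((nxt, path + [nxt]))
    return cycles


def mixed_agent_cycles(cycles, authority):
    """Keep cycles whose functions are spread over more than one agent."""
    return [c for c in cycles if len({authority[f] for f in c}) > 1]


cycles = simple_cycles(SUCC)
mixed = mixed_agent_cycles(cycles, AUTHORITY)
print(cycles)
print(mixed)
```

This exhaustive search is adequate for networks of the size discussed here; larger networks would likely call for a dedicated cycle-enumeration algorithm.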

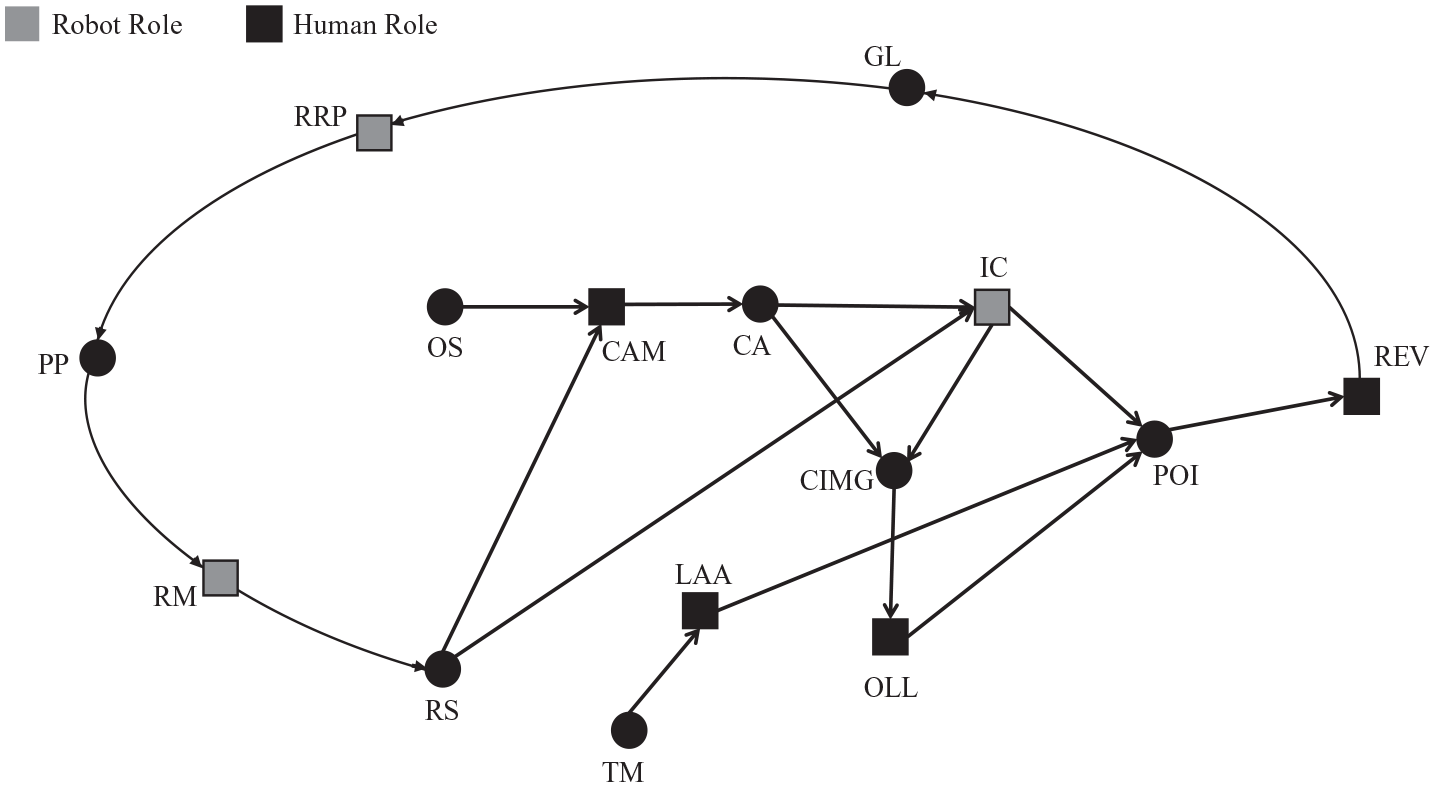

The first cycle that can be identified is termed the “Goal Re-Evaluation” (REV) loop, shown in Figure 3. This loop shows how path planning in collaborative navigation involves continuous re-evaluation of the target location, as the points-of-interest change with new information about the robot’s surroundings, derived from image capturing (IC), and as areas worthy of further exploration and/or obstacles (i.e., areas to avoid) are identified. This corresponds to studies of human-robot interaction that show that shifting strategies from one context to another involves processes of re-evaluation and re-planning (Woods et al., 2004).

Figure 3. Subsection of full network showing the cycle for updating goal location (GL) with goal re-evaluation (REV).

The goal re-evaluation cycle shows how functions assigned to both the robot and human are related and interdependent for re-evaluating a goal based on a newly observed Point of Interest (POI), such as a partially occluded piece of clothing indicating a buried victim. To re-evaluate the goal, functions in this section of the graph create a cyclical pattern of dependencies. One aspect of joint activity in this example is determining when and how often this cycle needs to be looped through, which may depend on a variety of contextual factors. One way to characterize potentially alternate strategies is to analyze different degrees of thoroughness and efficiency in this cycle (e.g., cycling more frequently keeps the goal location more accurate relative to new imagery or points-of-interest but comes at a cost of needing to perform more actions and coordinate more closely).

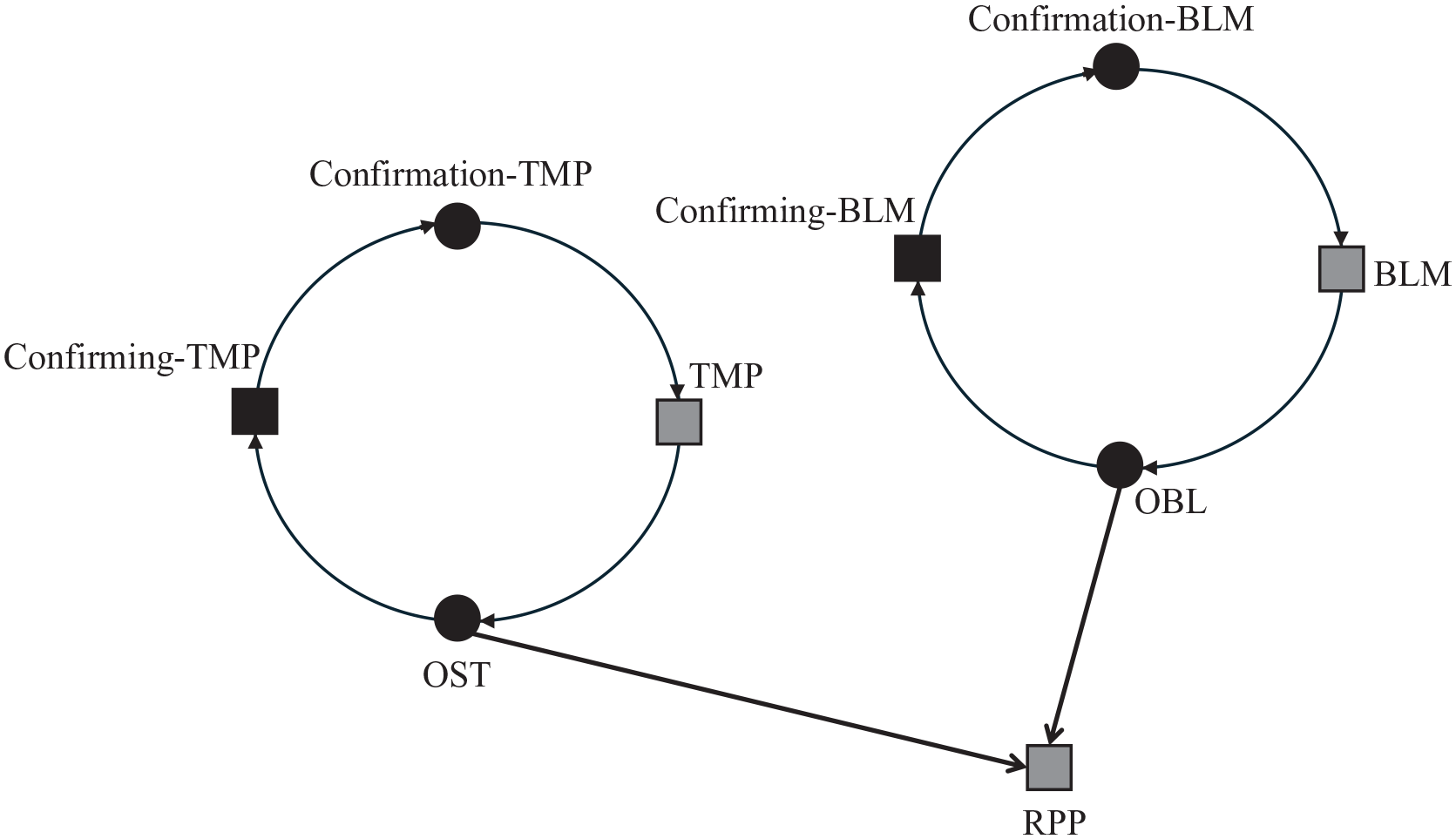

Similarly, cyclic coupling between human and robot functions may be found at the synchrony layer, where functions associated with explicit cross-checking are coupled with the robot functions. The loop in Figure 4 shows how confirmation functions related to RPP have interdependencies with robot and human functions. The confirmation functions for the human are used to cross-check automated functions, which in turn bring about the necessary resources for RPP (in this case, relevant information about the battery levels and component temperature that provide constraints on the path planning). This cycle reveals a potential trade-off that human operators need to make in real-time, between the benefits of cross-checking frequently (which can help catch robotic failures and concerns early and avoid costly coordination breakdowns) and the effort and time spent on the cross-checking relative to other priorities.

Figure 4. Subsection of full network showing the cycle of the human confirming automated functions related to robot path planning (RPP).

In general, these loops highlight areas of coupling, with associated demands for synchronization between the activities of the agents. They also point to potential trade-offs that the human-robot system needs to make in real-time, around the frequency with which the agents loop through the cycle. Fundamentally, cycling more frequently through function loops can be characterized as being more thorough and accurate, but comes at a cost of more cognitive work (with associated effort and time that might take away from sustaining other important functions), while reducing the frequency can be a form of (cognitive and temporal) efficiency. There is likely no “best” strategy, as any strategy is highly contextual and depends on a variety of factors such as what is being prioritized in a given moment.

In extended work on this example, not included in this paper, we have translated the graph models into a form suitable for computational simulation, using the simulation framework Work Models that Compute (WMC). This builds on past work from IJtsma et al. (2019) where the functional relationships in the network provide a basis for a dynamic simulation through an environment, such as a UGV navigating a modeled disaster response terrain. Computational simulation can evaluate alternate strategies dynamically, as it computes how interactions play out over time. It can also identify any emergent effects that arise over time, that may not have been found from the graph representation alone.

Discussion

Designing robotic capabilities for effective collaboration requires further understanding of coordination functions for joint activity between human and robot agents. Modeling of human and robot functions and the means-end relationships between them provides a basis for analyzing joint human-robot activity. In the example, we demonstrated how identification of cycles of interdependent activity between agents can provide insight into possible trade-offs to be made in real-time by the human-robot system.

The framework provides a foundation for further research on the management of coordination overhead in human-robot systems. Ongoing work involves determining how social and technical factors affect coordination costs, so that both the benefits and costs of agents coordinating during joint activity can be better modeled. We are also developing and simulating strategies for controlling coordination costs and, from outcomes of simulation, providing actionable guidance for designing effective human-robot architectures.

With cycles identified, one possible way in which coordination overhead may be managed by a human-robot system is by synchronizing or desynchronizing cycles. Cycles in synchrony among multiple agents could ensure the most updated resources are available right when they are needed. Alternatively, de-synchronizing cycles can help spread out workload over time. These approaches need to be further explored and evaluated in computational simulations, for example by evaluating how the structure and timing of cycles affect taskload for human and robot agents.
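The effect of synchronizing versus de-synchronizing cycles on workload can be illustrated with a deliberately simple toy model. It assumes, purely for illustration, that each pass through a cycle places one unit of load on the human for a fixed number of time steps at the start of every period; the periods, durations, offsets, and function names are hypothetical and are not outputs of the WMC simulations mentioned above.

```python
def load_profile(period, duration, offset, horizon):
    """Load of one cycle: 1 during the first `duration` steps of each period."""
    return [1 if (t - offset) % period < duration else 0 for t in range(horizon)]


def combined_load(offsets, period=10, duration=3, horizon=60):
    """Sum the load of several identical cycles started at different offsets."""
    profiles = [load_profile(period, duration, off, horizon) for off in offsets]
    return [sum(step) for step in zip(*profiles)]


# Two cycles in synchrony: both demand attention at the same moments.
synchronized = combined_load(offsets=[0, 0])
# Two cycles de-synchronized by half a period: demands are spread out.
desynchronized = combined_load(offsets=[0, 5])

print("peak load, synchronized:  ", max(synchronized))
print("peak load, desynchronized:", max(desynchronized))
print("total load:", sum(synchronized), sum(desynchronized))
```

Under these assumptions the total work is identical in both cases and only the peak changes, which is the workload-spreading effect described above. The toy model does not capture the benefit of synchrony, namely that up-to-date resources are available right when they are needed.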

As highlighted by Miller and Feigh (2019), a functional understanding of the domain is the basis for designing envisioned technological capabilities, such as autonomous robots. One challenge that persists with introducing new technology into a system is to anticipate how new capabilities transform the work needing to be done. While not all transformations and adaptations can ever be fully anticipated, the framework can be used by designers to better understand how an envisioned robotic or machine capability will affect broader patterns of work, particularly around coordination overhead.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was generously supported by the National Science Foundation through a CAREER award made to Dr. Martijn IJtsma in 2023. The authors also thank the other members of the Cognitive Systems Engineering Lab (CSEL) for their continued input and support of this research.