Abstract

Designing integrated systems of humans and automation involves envisioning how work will be performed, how it is affected by technological capabilities, and what will help support system performance. This is challenging because interactions and dynamics can create emergent effects that are difficult to predict. This demonstration shows the power of computational simulation in designing, verifying, and validating integrated systems of humans and automation. The demo will include an introduction to Work Models that Compute (WMC), the simulation framework used in our ongoing research; how to develop a work model in WMC; a demo of the simulation; and a demo of postprocessing visualizations to analyze the simulation results. The approach can be applied in all stages of system design. Using an example from aviation, we show how we use computational modeling and simulation to evaluate the robustness of envisioned operations in which humans work with automated systems.

Introduction

The evaluation of envisioned, yet-to-be-realized distributed cognitive systems is a challenging yet common problem in human factors. For example, in aviation, concepts such as Urban Air Mobility (UAM) envision new forms of autonomous capabilities that will need to integrate with human roles. As these capabilities are introduced, safe and efficient management of such machine assets by humans requires systems engineers to address a range of human-machine integration challenges.

To prevent and mitigate well-documented human factors issues early in the design of novel distributed cognitive systems, the human factors community has argued that human performance evaluation should occur in the conceptual phases of system design (Woods & Dekker, 2000). Because human factors traditionally relies on human-subjects evaluation, evaluation early in design is difficult: roles, tasks, and procedures are still underspecified, and no prototype tools yet exist to test.

Many performance issues in distributed cognitive work systems stem from the emergent behavior that arises when interactions between people, technology, and work play out over time, which makes it difficult to evaluate systems early on. For example, human performance breakdown (and, consequently, system breakdown) can occur when multiple threads of activity converge in time to create overload. Clumsy automation is an example of such convergence: a well-documented pattern in which automated systems inhibit the human’s ability to manage workload over time and avoid saturation (Sarter et al., 1997).

Computational simulation of envisioned distributed work systems can help more explicitly analyze the interactions between system elements and identify emergent properties (Leveson, 2020; Pritchett et al., 2014; Woods & Dekker, 2000), even during conceptual design stages. Insights derived from analysis of emergent behaviors can help identify where conceptual designs are brittle, and where limited resources could or should be spent to improve the initial designs and increase their robustness.

The objective of the demonstration is to highlight the affordances of computational modeling and simulation of distributed work systems to evaluate envisioned concepts for distributed cognitive work. We illustrate the kind of insight one can derive from simulation with an example of our ongoing work on designing a distributed cognitive system for short-range low-altitude aviation. While we present an example with a specific framework (Work Models that Compute, or WMC), we intend for this demonstration to be a more general discussion of computational simulation of cognitive work that goes beyond a specific implementation.

System Evaluation using Computational Techniques

Several computer-based frameworks for modeling distributed cognition exist, such as Adaptive Control of Thought-Rational (ACT-R), SOAR, and Work Models that Compute (WMC). ACT-R and SOAR aim to represent how human cognition works and are well known for modeling how agents’ knowledge and goals interact with the environment (Laird, 2012; Ritter et al., 2019). All three frameworks model and simulate cognition in some way but differ in the aspects of cognition they emphasize. For example, SOAR is based on theories of cognition with an emphasis on modeling general artificial intelligence, including knowledge retrieval and memory. ACT-R is based on a similar underlying theory of cognition but puts more emphasis on modeling the detailed internal processes of human cognition. WMC models cognitive functions but, in contrast to the other frameworks, puts particular emphasis on how these cognitive functions are tightly coupled with dynamic processes in the work environment.

For this demonstration, we focus on computational simulation of the interactions between the activities of multiple agents (as part of a distributed work system) and the evolution over time of events and processes in their shared work environment, a major driver of cognitive work in dynamic environments (Woods & Roth, 1994). By explicitly modeling the coupling between cognitive functions and controlled processes in the work environment, such simulation is suited to identifying and analyzing emergent properties created by the interactions between work activities and the dynamics of the work environment. This kind of functional modeling emphasizes how the functions performed by agents transform inputs into outputs, more so than the internal information processing by which the agents achieve this transformation. Such modeling is particularly relevant for analyzing distributed work systems in dynamic environments (Woods & Roth, 1994).

We base the demonstration on the authors’ earlier work that uses WMC (Pritchett et al., 2014) as the simulation framework. WMC has been used to evaluate robot failures and their effects on a human-automation team (Ma et al., 2018) and to design and evaluate work allocations in space operations (Ijtsma et al., 2019). The computational model in this demonstration is likewise simulated in WMC.

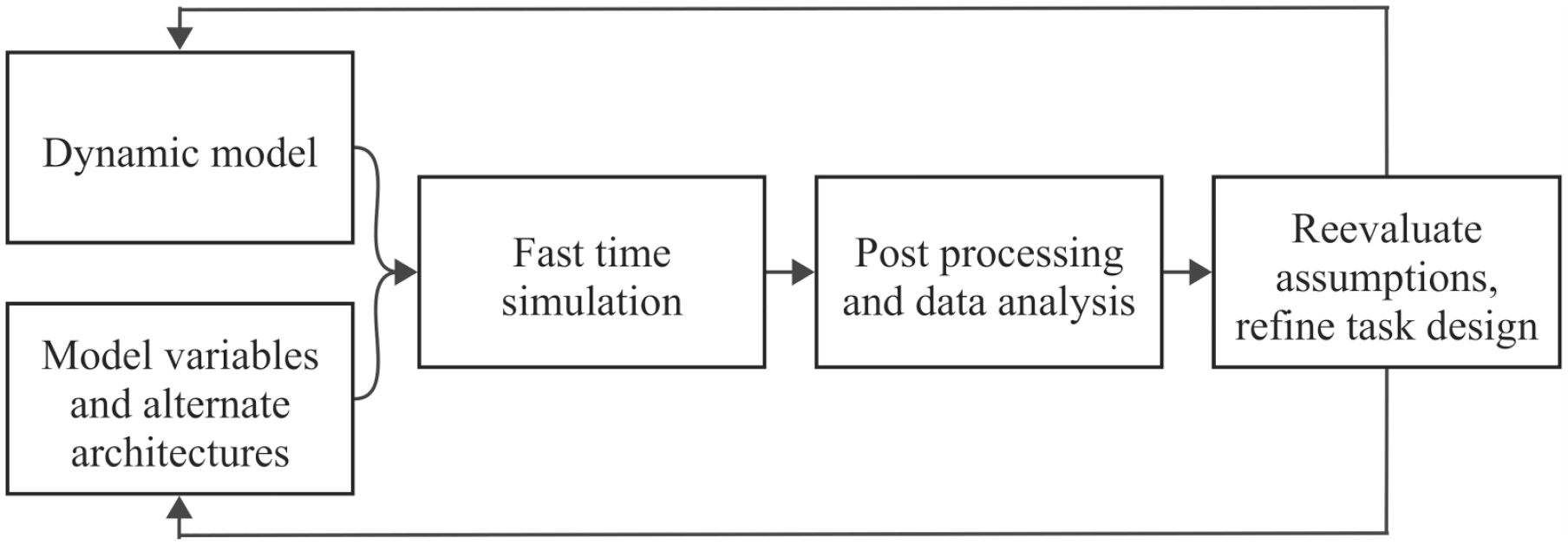

Figure 1 shows a general cyclic process of using fast-time computational simulation to analyze system design in an envisioned work environment. The dynamic model is the key computational element. It captures both the work functions (e.g., activities that must be done to accomplish the work) and the work environment, which, critically, is dynamic as events and processes unfold over time.

Figure 1. Architecture of the process used in the demonstration.

A dynamic model of work is ideally set up to support reconfiguring the simulated architecture without significant modeling overhead. This corresponds to the “model variables and alternate architectures” element of Figure 1. The ability to vary input parameters without major changes to the simulation model is a prerequisite for exploring system behavior under different system designs. For example, when the goal of an analysis is to evaluate alternate architectures in terms of the roles that agents take on, the roles of agents in the system should be reconfigurable without modifying significant parts of the model.
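As a minimal sketch of this idea (in Python, with hypothetical action and agent names rather than WMC’s own configuration format), an architecture can be expressed purely as input data: a mapping from each action to the agent that performs it. Switching architectures then means switching the mapping, while the action definitions stay untouched.

```python
# Hypothetical action and agent names; illustrative only, not WMC's configuration format.
ACTIONS = [
    "monitor_c2_link",
    "select_diversion_vertiport",
    "broadcast_intent",
    "replan_traffic",
]

# Alternate architectures are plain input data: action -> responsible agent.
ARCHITECTURES = {
    "aircraft_centric": {
        "monitor_c2_link": "aircraft_automation",
        "select_diversion_vertiport": "aircraft_automation",
        "broadcast_intent": "aircraft_automation",
        "replan_traffic": "fleet_operator",
    },
    "operator_centric": {
        "monitor_c2_link": "aircraft_automation",
        "select_diversion_vertiport": "fleet_operator",
        "broadcast_intent": "fleet_operator",
        "replan_traffic": "fleet_operator",
    },
}


def allocate(architecture: str) -> dict[str, list[str]]:
    """Group the same set of actions by agent for the chosen architecture."""
    mapping = ARCHITECTURES[architecture]
    missing = [a for a in ACTIONS if a not in mapping]
    if missing:
        raise ValueError(f"unallocated actions: {missing}")
    by_agent: dict[str, list[str]] = {}
    for action in ACTIONS:
        by_agent.setdefault(mapping[action], []).append(action)
    return by_agent


for name in ARCHITECTURES:
    print(name, allocate(name))
```

The completeness check guards against an architecture that silently leaves an action unassigned, which would otherwise surface only as missing behavior in the simulation output.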

With a computational representation of work and input variables that allow computational experiments, the fast-time simulation element performs the actual “computing,” capturing the dynamic interactions between the parts over time. This requires a simulation engine that can advance a model over time and log relevant metrics. When using an existing simulation framework, such a simulation “core” is often part of that framework. For example, WMC’s simulation core is built around a cycle in which work modifies the environment, changes in the environment create the need for further work, and the interactions and states of the simulation are logged to highlight emergent properties (Pritchett et al., 2014).
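The work-environment cycle can be illustrated with a minimal discrete-event loop. This is a Python sketch under our own assumptions, not WMC’s engine or API: each action is a function that modifies a shared environment dictionary and returns any follow-up actions it makes necessary, and the loop logs a snapshot of the environment after every action. The action names and the 5 s response delay are hypothetical.

```python
import heapq
from typing import Callable

# An action takes (time, environment), mutates the environment, and returns
# a list of (start_time, action) pairs for the further work it creates.
Action = Callable[[float, dict], list]


def simulate(initial: list[tuple[float, Action]], env: dict, t_end: float) -> list[tuple[float, str, dict]]:
    queue = []
    seq = 0  # tie-breaker so actions scheduled at the same time keep insertion order
    for t, action in initial:
        heapq.heappush(queue, (t, seq, action))
        seq += 1
    log = []
    while queue:
        t, _, action = heapq.heappop(queue)
        if t > t_end:
            break
        follow_ups = action(t, env)  # work modifies the environment ...
        log.append((t, action.__name__, dict(env)))  # ... the new state is logged ...
        for t_next, nxt in follow_ups:  # ... and may create the need for further work
            heapq.heappush(queue, (t_next, seq, nxt))
            seq += 1
    return log


# Hypothetical scenario: a lost C2 link triggers a diversion decision 5 s later.
def lose_link(t: float, env: dict) -> list:
    env["c2_link"] = "lost"
    return [(t + 5.0, divert)]


def divert(t: float, env: dict) -> list:
    env["destination"] = "alternate_vertiport"
    return []


log = simulate([(10.0, lose_link)], {"c2_link": "ok", "destination": "planned_vertiport"}, t_end=60.0)
for entry in log:
    print(entry)
```

Copying the environment into each log entry keeps earlier snapshots intact, so the trace can later be replayed or compared across runs.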

The simulation framework directly logs metrics such as task execution time, information exchanges, and environment state. Still, analyses often warrant further processing of the output of single or multiple simulation runs. For example, when model parameters are varied across multiple simulation runs, postprocessing enables a comparative analysis of the resulting system behaviors. In our own work, significant effort has gone into developing general capabilities for data processing and visualization, and informative visualizations can speed up both model development and analysis of the simulation output. Examples of these capabilities include mapping information exchanges into graphs or network visualizations, calculating moving taskload averages and mapping them onto action traces, and plotting taskload peaks as bar charts for further analysis.
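As an illustration of taskload postprocessing, the sketch below computes, from an action trace, how many actions an agent is executing at each time step, smooths this with a trailing moving average, and reports the intervals where the average exceeds a threshold. The trace format (start time, duration, agent), the 1 s time step, the window, and the threshold are all assumptions for illustration; WMC’s actual log format may differ.

```python
def taskload_series(trace, agent, t_end, dt=1.0):
    """Count how many of `agent`'s actions are active at each time step."""
    n_steps = int(t_end / dt)
    series = [0] * n_steps
    for start, duration, who in trace:
        if who != agent:
            continue
        for k in range(n_steps):
            if start <= k * dt < start + duration:
                series[k] += 1
    return series


def moving_average(series, window):
    """Trailing moving average; early steps average over the samples available."""
    out = []
    for k in range(len(series)):
        chunk = series[max(0, k - window + 1): k + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def peaks(avg, threshold):
    """Half-open index intervals [start, end) where the average exceeds the threshold."""
    intervals, start = [], None
    for k, value in enumerate(avg):
        if value > threshold and start is None:
            start = k
        elif value <= threshold and start is not None:
            intervals.append((start, k))
            start = None
    if start is not None:
        intervals.append((start, len(avg)))
    return intervals


# Hypothetical trace of (start_s, duration_s, agent) records.
trace = [(0, 4, "operator"), (2, 4, "operator"), (3, 2, "operator"),
         (10, 2, "operator"), (0, 20, "aircraft")]
series = taskload_series(trace, "operator", t_end=12)
print(series)                                 # [1, 1, 2, 3, 2, 1, 0, 0, 0, 0, 1, 1]
print(peaks(moving_average(series, 3), 1.5))  # [(3, 6)]
```

The peak intervals are what one would then map back onto the action trace or aggregate into bar charts.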

Data analysis and visualization can yield insights about emergent system behavior. To close the loop, these insights can be used to modify the design or the simulation conditions, which in turn must be reflected in revisions to the dynamic model and the model variables. New questions can then be explored, and future iterations can be designed around goals of exploitation (refining a promising design) or exploration (probing new conditions or architectures).

In the following two sections, we discuss the development of a computational work model and the evaluation of system behavior through simulation.

Modeling Work in Computational Form

Depending on the resources available for modeling, some scoping of the use cases and scenarios to model is often necessary to keep development efforts reasonable. One way to scope is to select one or more scenarios of interest based on a literature survey of the operations under study. Scenario selection is a crucial step that must align with the modeling aims. Here, the aim of modeling is to understand how distributed cognitive systems interact with events and processes in the work environment. Therefore, scenarios and problem spaces that require decision-making under dynamically changing situations, such as anomaly response, healthcare emergencies, or aviation contingencies, are particularly suitable for modeling and simulation.

Development of a computational model of work starts with function-based representations of the system. While the specifics of developing a computational work model depend on the goal of an analysis and the specifics of the problem, there is a large body of tools available for functional modeling of work. For example, cognitive task analysis (CTA) and cognitive work analysis (CWA) provide frameworks for systematically identifying and representing work functions of systems, as well as identifying key environmental processes that may drive cognitive work. Such approaches often draw from a variety of sources to understand cognitive work, such as field observations, interviews with or involvement of subject-matter experts, and document/literature reviews.

From these sources, one can create functions that represent the cognitive work of the system, together with more specific actions that represent the actualization of those functions in the context of a particular operational scenario or situation. Functions are generalizable descriptions of transformations that capture how work both responds to and acts upon the work environment. Whereas functions are general descriptions of how something works and what transformations it makes, actions describe the specific changes and the details of attaining them in a particular context (Lind, 2023). For example, UAM operations will need functions associated with controlling the aircraft to fly a given maneuver or trajectory, which can be achieved through actions such as managing waypoint progress, landing, taking off, and changing heading.
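One lightweight way to keep this distinction explicit in a model is to store each function together with the context-specific actions that realize it, so that any action in a simulation trace can be traced back to the function it serves. The Python sketch below is a hypothetical representation built from the UAM example in the text; it is not WMC’s data model.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class WorkFunction:
    """A generalizable transformation the work system must achieve."""
    name: str
    actions: list[str] = field(default_factory=list)  # context-specific realizations


FUNCTIONS = [
    WorkFunction(
        "control aircraft along a maneuver or trajectory",
        actions=["manage waypoint progress", "land", "take off", "change heading"],
    ),
]


def function_for(action: str, functions: list[WorkFunction]) -> Optional[str]:
    """Trace a context-specific action back to the function it realizes."""
    for function in functions:
        if action in function.actions:
            return function.name
    return None


print(function_for("change heading", FUNCTIONS))
```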

To evaluate the robustness of distributed work systems, it is necessary to understand and model how functions, performed by agents, interact with the work environment and with each other. This requires accurate modeling of the inputs, transformations, and outputs of the functions more than specific modeling of the internal processes of those functions (Woods & Roth, 1994). Thus, models of functions do not claim to be models of how an agent actually makes the transformation (as specific models of cognition do), as long as the inputs and outputs are representative of the kind of dynamic transformation that occurs in the system as a result of that function. This differs from cognitive simulations that aim to represent how human cognition works (e.g., SOAR, ACT-R), as was an aim in early research on artificial intelligence.

To accurately model the transformations represented by cognitive work, functions and actions will need the following:

The resources required to perform that action.

The transformation to the work environment made by the action.

Logic that defines the timing of actions. Timing can be modeled as a response to events and states in the environment, as well as an outcome of reasoning about achieving goals (e.g., following means-end relationships that establish a need to execute an action as a means to a particular end).

For example, to change an aircraft’s heading, one needs to know the current and target heading of the aircraft. Computationally, the action modifies the control loop to change the heading from the current heading to the next heading. The action is scheduled either by an explicit need to change the heading or by the aircraft passing a waypoint on its flight route, which also requires the aircraft to set the next waypoint as its goal.
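The three ingredients listed above can be sketched for the heading-change example as a small Python structure holding the required resources, the transformation, and the timing logic. The field names, the environment keys (such as `heading_request` and `waypoint_passed`), and the simplification that the transformation sets the commanded heading of the control loop directly are assumptions for illustration, not WMC’s action interface.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ActionSpec:
    name: str
    resources: list[str]               # information the action needs
    transform: Callable[[dict], None]  # change the action makes to the environment
    trigger: Callable[[dict], bool]    # timing logic: when the action is needed


def needs_heading_change(env: dict) -> bool:
    # Scheduled by an explicit request or by passing a waypoint on the route.
    return env["heading_request"] is not None or env["waypoint_passed"]


def set_heading(env: dict) -> None:
    # New target: the requested heading, else the bearing to the next waypoint.
    if env["heading_request"] is not None:
        env["target_heading"] = env["heading_request"]
    else:
        env["target_heading"] = env["next_waypoint_bearing"]
    env["commanded_heading"] = env["target_heading"]  # modify the control loop
    env["heading_request"] = None
    env["waypoint_passed"] = False


change_heading = ActionSpec("change heading", ["current_heading", "target_heading"],
                            set_heading, needs_heading_change)


def try_execute(action: ActionSpec, env: dict) -> bool:
    """Run the action if its resources are available and its trigger fires."""
    missing = [r for r in action.resources if r not in env]
    if missing:
        raise KeyError(f"{action.name} lacks resources: {missing}")
    if action.trigger(env):
        action.transform(env)
        return True
    return False


env = {"current_heading": 90.0, "target_heading": 90.0, "commanded_heading": 90.0,
       "heading_request": None, "waypoint_passed": True, "next_waypoint_bearing": 135.0}
print(try_execute(change_heading, env), env["commanded_heading"])  # True 135.0
print(try_execute(change_heading, env))                            # False
```

Keeping the trigger separate from the transformation is what allows the same action to be scheduled by different events (an explicit request or a passed waypoint) without duplicating its effect on the environment.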

In addition to transformations representative of the cognitive work functions, modeling should also consider the dynamics in the work environment to simulate the interaction of functions with their work environment. This is the realm of physical modeling, where systems theory and dynamics provide modeling techniques to characterize the dynamics of a system. For example, it can include flight dynamics models (as a process that air traffic controllers interact with), powerplant dynamics (for modeling powerplant operations), orbital mechanics, aircraft dynamics, and even organizational dynamics.
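For instance, a deliberately simple stand-in for aircraft dynamics might advance position at constant speed along the current heading and turn toward the commanded heading at a bounded rate. The Python sketch below makes those assumptions explicit (a flat x/y plane in meters with heading measured clockwise from north, Euler integration, and a 3°/s turn-rate limit chosen only for illustration); real flight dynamics models are considerably richer.

```python
import math


def wrap180(angle_deg: float) -> float:
    """Map an angle difference into [-180, 180) degrees."""
    return (angle_deg + 180.0) % 360.0 - 180.0


def step(state: dict, commanded_heading: float, dt: float, turn_rate: float = 3.0) -> dict:
    """Advance a point-mass aircraft by one Euler step of dt seconds."""
    error = wrap180(commanded_heading - state["heading"])
    max_change = turn_rate * dt
    change = max(-max_change, min(max_change, error))  # rate-limited turn
    heading = (state["heading"] + change) % 360.0
    rad = math.radians(heading)
    return {
        "heading": heading,
        "x": state["x"] + state["speed"] * math.sin(rad) * dt,  # east, m
        "y": state["y"] + state["speed"] * math.cos(rad) * dt,  # north, m
        "speed": state["speed"],
    }


state = {"heading": 90.0, "x": 0.0, "y": 0.0, "speed": 50.0}
for _ in range(20):
    state = step(state, commanded_heading=135.0, dt=1.0)
print(round(state["heading"], 1))  # 135.0: a 45° turn takes 15 s at 3°/s
```

The commanded heading is the point of contact with the work model: an action such as a heading change writes it, and the dynamics determine how quickly the aircraft actually responds.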

Evaluation Through Fast-Time Simulation

During runtime, the actions will be linked to the information and tools required to perform the action, the environment that the action can act upon and modify, and the agent executing the action. The late linkage of the agent and action allows for the simulation of multiple system architectures (Ijtsma et al., 2019). During a simulation, events and processes in the work environment unfold over time, as captured through models of system dynamics (e.g., a model of aircraft dynamics simulates an aircraft flying). Likewise, functions and actions get scheduled dynamically based on their logic, in response to events and states in the environment, or logic that captures other reasoning for the timing of actions. Once the scenario goal is reached, the simulation will end. The interactions between the timing of actions and events and processes in the work environment determine the temporal flow of the work.

Simulation of the work should start early, even when the details and specifics of the functions are yet to be fully modeled. In fact, our experience is that early representations are often incomplete or underspecified, and simulating the incomplete model is a way to uncover where further specification of the system design is necessary. In the following paragraphs, we discuss three layers of evaluation that correspond to phases in the modeling efforts and increases in the fidelity of the models:

Evaluate the feasibility of operations.

Evaluate emergent behavior by varying input.

Identify the boundaries of system performance.

The first layer of evaluation checks if the concept of operations is feasible; that is, does the concept indeed achieve the desired operational outcomes? Compared to qualitative descriptions of work, developing a computational work model requires making work functions more explicit. While existing qualitative descriptions are valuable input, these descriptions are often underspecified relative to what is needed to make a concept “run” dynamically. Hence, constructing the model involves identifying underspecifications and then systematically extending the existing descriptions as needed to make the concept feasible. This is an iterative process in which work is modeled, initial simulations are run, and output is analyzed to see whether desired outcomes are achieved.

Once work functions are sufficiently specified and modeled to create feasible, runnable operations, the second layer of evaluation introduces variability by changing the simulation conditions to evaluate the system’s response to the kinds of variability and disruptions that a distributed work system may reasonably encounter during its operations. This is where, if work functions are modeled well, the modeler can truly leverage computational models to explore the range of behaviors that may arise under variable conditions. To do this, computational models of work should be constructed so that system parameters can be easily reconfigured. For example, the modeler can modify the time at which the contingency occurs, change the speeds at which the aircraft can fly, or vary the distribution of roles and responsibilities in the concept. This layer helps reveal how emergent behaviors arise from such variations in the system.
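A parameter sweep of this kind can be sketched as a Cartesian product over the configurable inputs, with one simulation run per combination. In the Python sketch below, `run_scenario` is a hypothetical toy stand-in for a full simulation run: it only computes how much time remains between a lost-link event and the aircraft reaching its diversion decision point, and the 6 km distance and 45 s response time are illustrative values, not results from our model.

```python
from itertools import product


def run_scenario(contingency_time_s: float, cruise_speed_mps: float,
                 decision_point_m: float = 6000.0, response_time_s: float = 45.0) -> dict:
    """Toy stand-in for one simulation run (hypothetical, not a WMC model)."""
    time_to_decision_point = decision_point_m / cruise_speed_mps
    margin = time_to_decision_point - contingency_time_s
    return {"margin_s": margin, "response_in_time": margin >= response_time_s}


def sweep(grid: dict) -> list:
    """Run every combination of the parameter values in `grid`."""
    names = list(grid)
    results = []
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        results.append({**params, **run_scenario(**params)})
    return results


results = sweep({"contingency_time_s": [30.0, 60.0, 90.0],
                 "cruise_speed_mps": [40.0, 60.0]})
for r in results:
    print(r)
```

Because the grid is plain data, adding a dimension (for example, an architecture name from a role-allocation table) requires no change to `sweep` itself.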

After initial exploration, a third layer of evaluation can take variability to the extreme; for example, intentionally aligning the time the contingency occurs with the time the aircraft reaches a decision point for diversion creates time pressure that further “stresses” the modeled system. This layer shows how the system responds when an event occurs on or outside the performance boundary. Patterns of failure in the simulation output can then be analyzed in two ways: (a) as a failure of the model itself (where the stressing serves as verification of the model) or (b) as a failure of the concept. The second option should not be discounted easily: we have found that failures in the simulation can indicate more fundamental issues with the envisioned concept rather than issues with the model itself. Thus, while there may be a tendency to put “fixes” in the computational model, it is essential to keep a clear mapping between the envisioned concept as documented and any modeling fixes made.
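Where the pass/fail outcome changes only once as a stress parameter increases, the performance boundary can be located with a bisection search over repeated runs rather than a dense grid. The Python sketch below assumes such a single transition; `passes` is a hypothetical stand-in for running the simulation and checking an outcome (here, whether at least 45 s remain before an aircraft flying 6 km at 60 m/s reaches its decision point). If the outcome is not monotonic in the parameter, bisection can miss boundaries, and a grid sweep is safer.

```python
from typing import Callable


def find_boundary(passes: Callable[[float], bool], lo: float, hi: float, tol: float = 0.5) -> float:
    """Bisection for the largest parameter value (within tol) at which `passes` holds.

    Assumes passes(lo) is True, passes(hi) is False, and a single
    pass-to-fail transition between them.
    """
    if not passes(lo) or passes(hi):
        raise ValueError("bracket must go from a passing to a failing value")
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if passes(mid):
            lo = mid
        else:
            hi = mid
    return lo


def passes(contingency_time_s: float) -> bool:
    # Hypothetical outcome check: enough time left to respond before the decision point.
    time_to_decision_point = 6000.0 / 60.0
    return time_to_decision_point - contingency_time_s >= 45.0


boundary = find_boundary(passes, lo=0.0, hi=100.0)
print(f"latest contingency time that still passes: about {boundary:.1f} s")
```

Runs just past the returned value are the natural candidates for close inspection, since they show whether a failure stems from the model or from the concept.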

Demonstration

The demonstration illustrates the use of simulation to evaluate an envisioned distributed work system in aviation, as part of an ongoing research project on contingency procedures for envisioned UAM operations. In the aviation community, UAM is a concept for low-altitude, shorter-distance air transportation in urban environments. UAM concepts describe general procedures for how this envisioned system should detect and respond to contingencies like aerodrome reconfiguration, loss of command and control (C2) link, and weather anomalies (Deloitte Consulting, 2021; Federal Aviation Administration, 2020; National Aeronautics and Space Administration (NASA), 2020; RTCA, 2023). An aircraft with a lost C2 link can divert to a closer vertiport or continue along its planned path (Boeing, 2022). The rest of the system can apply different strategies to adapt and respond to the actions performed by the aircraft with the loss of link. We are using fast-time simulation with the WMC framework to evaluate these envisioned contingency management procedures and demonstrate our simulation of this future system responding to a contingency in which an autonomous aircraft experiences a loss of C2 link.

Figure 2 shows a summary of the demonstration as a flow chart. We start by creating computational models (a) that are simulated in fast-time simulation environments (b), and the results are visualized and analyzed (c). The demonstration shows how we developed the computational work model, followed by a demonstration and discussion of the three layers of evaluation. We first demonstrate the architecture of the simulation framework and the process of developing computational models with an example. The demonstration then presents the simulation, the required parameters, and the steps in successfully running a simulation. Finally, the demonstration presents interactive visualizations to help analyze the simulation output data. The visualizations show simulation output to identify patterns and can be modified as needed to suit the analysis goals. This is one of many possible data analysis and visualization techniques for analyzing WMC output.

Figure 2. Work models, agent-action linkages, and assumptions (a) are simulated and processed in a computational framework (b) to produce visualizations (c) for analysis.

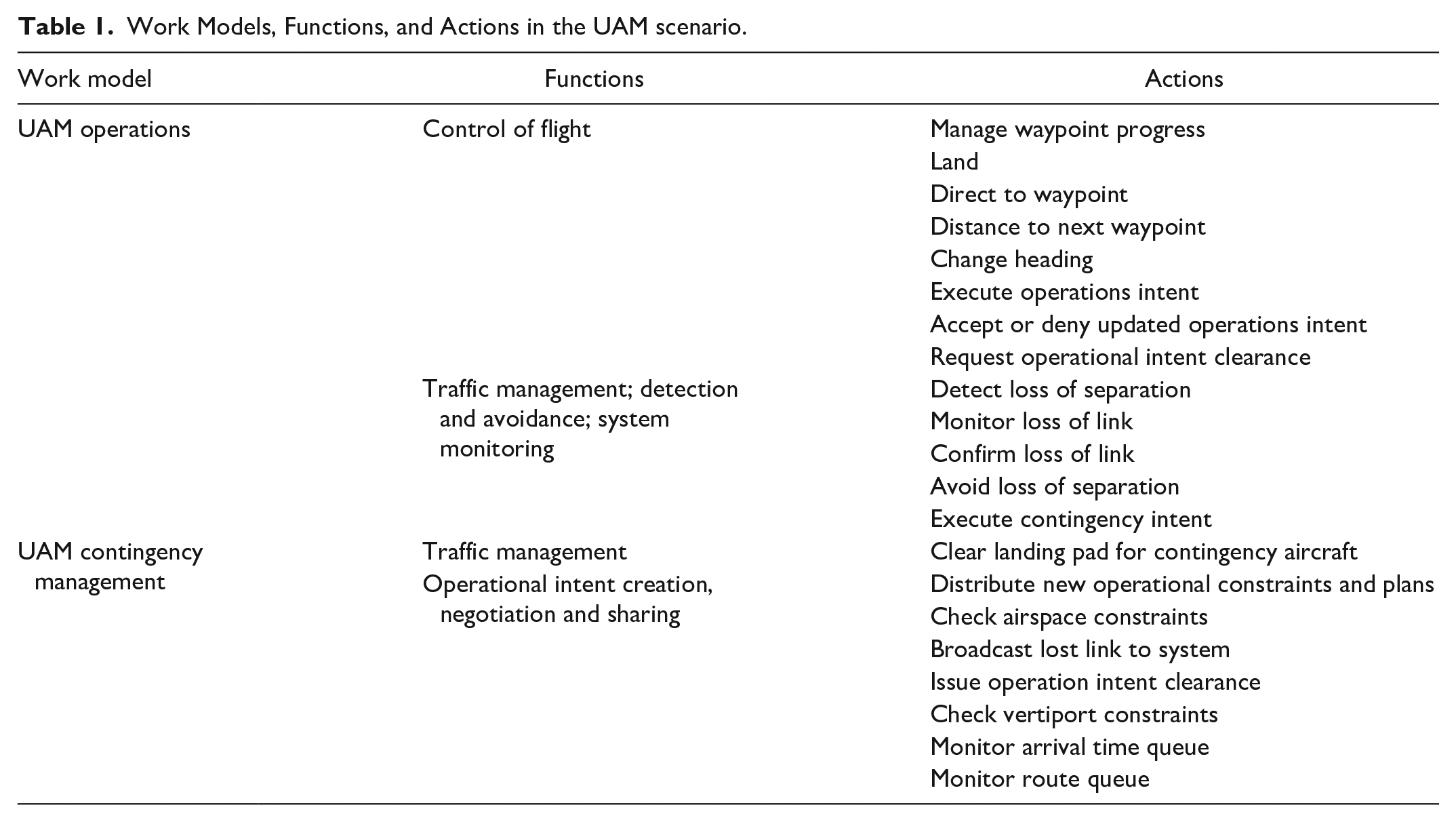

The use case for the demonstration is a UAM scenario with five aircraft. Table 1 outlines the generalized functions of contingency management in UAM aircraft and the actions simulated in the scenario.

Table 1. Work Models, Functions, and Actions in the UAM scenario.

The demonstration highlights the three layers of evaluation to reveal insights into the increase in information exchanges, possible losses of separation, and overall delays in the system. As part of our ongoing research with this model, simulations helped identify emergent behaviors that point to the importance of operations keeping pace with the cascading effects of a lost-link event. We found that responses of the distributed work system that are too slow can result in a loss of separation between aircraft, because of lag in the system adapting to the contingency. We also found (and demonstrate) how coordination surprises can arise when key information is not communicated quickly enough. Both findings illustrate the complexity of synchronizing the response of a distributed work system with ongoing and cascading events in the work environment, in this case the airspace. For example, it is easy to suggest an aircraft reroute in response to a predicted loss of separation. However, the proposed plan must be coordinated among all system participants, which can take time (Paladugu et al., 2023). Meanwhile, the aircraft keeps moving, which is easy to miss in a static model.
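The point that the aircraft keeps moving while a reroute is coordinated can be made concrete with straight-line kinematics: for two aircraft at constant velocity, the earliest time their distance drops below a separation minimum is the smaller root of a quadratic in time. If that time is shorter than the coordination delay, separation is lost before the new plan takes effect. The geometry, speeds, and 1 km minimum in the Python sketch below are hypothetical numbers chosen for illustration, not values from our UAM scenario.

```python
import math
from typing import Optional


def time_to_loss_of_separation(p1, v1, p2, v2, min_sep_m: float) -> Optional[float]:
    """Earliest time (s) at which two constant-velocity aircraft come within
    min_sep_m of each other, or None if they never do."""
    rx, ry = p2[0] - p1[0], p2[1] - p1[1]  # relative position, m
    vx, vy = v2[0] - v1[0], v2[1] - v1[1]  # relative velocity, m/s
    c = rx * rx + ry * ry - min_sep_m ** 2
    if c <= 0.0:
        return 0.0  # already at or inside the minimum
    a = vx * vx + vy * vy
    b = 2.0 * (rx * vx + ry * vy)
    disc = b * b - 4.0 * a * c
    if a == 0.0 or disc < 0.0:
        return None  # no relative motion, or the tracks never get that close
    t_first = (-b - math.sqrt(disc)) / (2.0 * a)
    return t_first if t_first >= 0.0 else None


# Hypothetical head-on geometry: 6 km apart, each flying 50 m/s toward the other.
t_los = time_to_loss_of_separation((0.0, 0.0), (50.0, 0.0), (6000.0, 0.0), (-50.0, 0.0), 1000.0)
for delay_s in (30.0, 60.0):
    too_late = t_los is not None and t_los < delay_s
    print(delay_s, "separation lost before reroute" if too_late else "reroute in time")
```

With these numbers separation is lost after 50 s, so a 30 s coordination delay leaves time to reroute while a 60 s delay does not; a static plan review that ignores the delay would rate both cases the same.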

Conclusion

Computational modeling and simulation can evaluate the robustness of envisioned distributed cognitive systems. Simulation can evaluate how interactions between a distributed cognitive system and its dynamic environment create emergent effects, both positive effects that strengthen the system’s ability to respond to disruptions and negative effects that weaken it. Unlike traditional, paper-based approaches, simulation can also surface and solidify assumptions about the work demands in distributed systems. By leveraging the power of computation, these techniques can serve as an exploratory tool to compare alternate system designs and architectures and to reveal where conceptual designs are sufficiently robust and where further design resources should be invested to increase robustness. Particularly now that new automated capabilities with greater authority and autonomy enable systems and work at larger scales, these novel techniques provide ways to cope with the complexity of designing and evaluating integrated systems of people and technology.

Acknowledgements

The authors greatly appreciate the inputs and feedback provided by the NASA team, especially Jason Prince. The authors would also like to thank the other WMC developers.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was performed under NASA contract number 80NSSC23CA121.