Abstract

Many issues in training system design and development may be mitigated by applying and improving requirements engineering processes. Building on prior work pertaining to team training and collaborative problem solving (CPS), this work examines the value of empirical and technical literature as a source for requirements elicitation. We apply a novel approach, thematic inclusive artifact-based requirements analysis, to identify and consolidate excerpts containing requirements and other considerations for training macrocognition in teams for CPS contexts. Our methodological contribution supports training practitioners who may be engaged in requirements activities during training development, when identifying evidence-based training practices, or when evaluating training. We discuss the benefits and limitations of this approach in the context of current requirements engineering practices, future directions, and the value of published literature as a complementary source for requirements elicitation in training system development.

Introduction

Not all training systems realize their potential, due in part to a theory-practice gap in the instructional sciences (Hirumi, 2021). Recently, the concept of “learning engineering” (Walcutt & Schatz, 2019) was developed to address the theory-practice gap (see also Kuran, in press; Macfarlane & Mayer, 2005; Short, 2006). A unique feature of this developing area involves the integration of instructional systems design (ISD) and systems engineering processes. Specifically, ISD and systems engineering processes can and should be mutually informative through the adoption of requirements engineering (RE) processes within ISD frameworks (cf. Armendariz & Walcutt, 2023; Sonnenfeld et al., 2023a). As suggested by Milham et al. (2008) and Stanney (2008), training effectiveness may be supported—and many of the issues in training systems mitigated—by adhering to systematic RE in the development of training systems (see also Young, 2004).

RE may be defined here as a systematic approach to the management of requirements, that is, documented representations of needed capabilities or properties of a given system (Glinz, 2024). RE involves identifying, eliciting, documenting, specifying, verifying, and validating these requirements, with the goal of minimizing the risk of delivering a system that does not meet stakeholders’ needs and desires (Glinz, 2024; Young, 2004). Human Factors practitioners involved in training system development may be well acquainted with these processes. Yet the requirements engineer is often a role rather than a job title; the role may be shared, and it is typically assigned to software developers rather than to practitioners from other disciplines (Glinz, 2024; Laplante & Kassab, 2022). In domains outside of training, this may be a non-issue, given decades of refinement in the RE approach (Young, 2004). However, current RE practices retain a few constraints that should be addressed when translating them to training system development contexts.

In this paper, we address the constraint that current practices and guidance for requirements elicitation focus largely on inquiry-based techniques applied during interactions with stakeholders (Stanney, 2008). We do not deny that these are practical and effective techniques, but elicitation through them is a difficult, iterative, and error-prone process (Davis & Nori, 2007; Laplante & Kassab, 2022). Furthermore, requirements activities in ISD contexts tend to focus on specifying instructional goals and objectives (the “what”) rather than the instructional elements to be employed in training design (the “how”; Milham et al., 2008; Stanney, 2008). We suggest that this focus overlooks the potential value of published empirical and technical literature as a source of training requirements. To examine the utility of these sources for training requirements elicitation, we refined an approach for examining textual artifacts to elicit requirements for training in complex environments, with the aim of facilitating performance on collaborative problem-solving (CPS) tasks.

Approach

Building on prior work identifying team training concepts with utility for improving team cognitive processes (Fiore et al., 2015), we seek to develop training interventions to maintain and improve team cognition in long-duration exploration mission (LDEM) contexts. Specifically, we are developing training targeting competencies associated with knowledge building (e.g., Rentsch et al., 2014) and knowledge sharing (e.g., Sikorski et al., 2012) to improve team processes and outcomes. Grounded in the theoretical lens of the Macrocognition in Teams Model (MITM; Fiore et al., 2010), the focus of our work is to delineate predictors and indicators of team performance during CPS tasks. Toward this goal, we sought to understand the scope and content of guidance and related considerations available in the extant literature related to the design of training in CPS contexts.

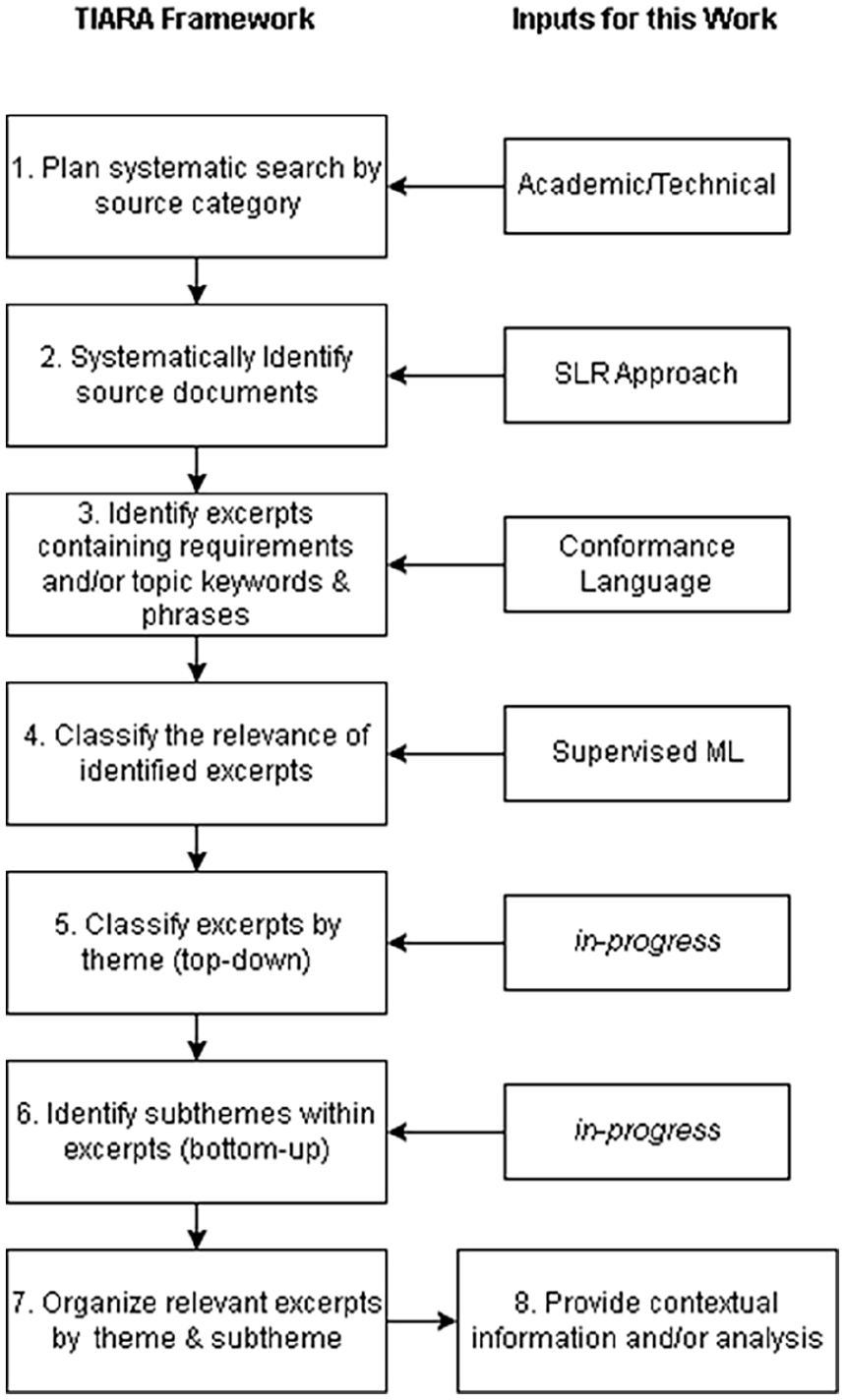

After conducting a systematic literature review to identify applicable empirical and technical source materials, we worked to identify excerpts containing requirements and other considerations relevant to our problem context. To accomplish this goal, we refined a novel approach developed as part of prior and ongoing research (see Sonnenfeld et al., 2023b). We refer to this as thematic inclusive artifact-based requirements analysis (TIARA). After a process of screening excerpts, thematic coding, and requirements triage, we elicited a set of requirements, recommendations, and other considerations for training macrocognition in teams for CPS contexts. We supplemented this with descriptive analyses of the bibliometrics of our empirical and technical documents and of the excerpts within each thematic category.

Method

To identify the source materials for requirements elicitation and analysis, we conducted a mixed-method systematic literature review (SLR). The SLR was conducted by a team of two researchers following PRISMA guidelines (cf. Higgins et al., 2023; Moher et al., 2015; Shamseer et al., 2015) and informed by other relevant work (e.g., Briner & Denyer, 2012). We used systematic review automation (SRA) tools where feasible and appropriate (Beller et al., 2018), as such tools have been found to reduce the time to complete SLR tasks while maintaining methodological quality (Clark et al., 2020; Scott et al., 2021) and are consistent with accepted SLR methodology (Higgins et al., 2023).

Research Question, Keywords, and Databases

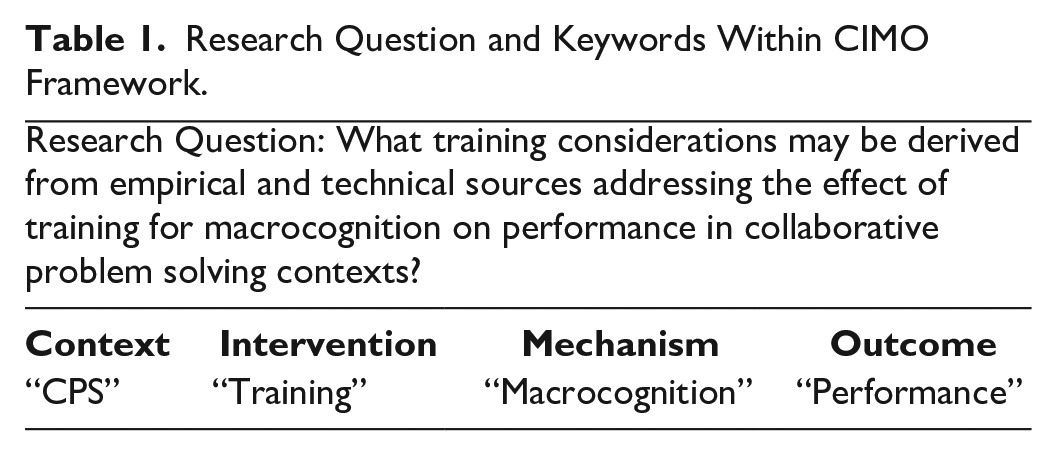

Adapting PRISMA guidelines, we aligned our research question and subsequent keywords with the CIMO (Context-Intervention-Mechanism-Outcome) framework (Denyer & Tranfield, 2009), given its suitability to research in the social sciences (see Table 1). After several iterations, the keywords were reduced to only the most relevant terms. Synonyms, acronyms, alternative spellings, and related terms were eliminated from the keyword list to narrow the scope of the review, ensuring a representative sample of articles and avoiding the need for additional, unnecessary exclusions. For example, general terms such as “team” and “group” were excluded as keywords because they were redundant with the context of CPS, and the term “learning” was excluded to reduce the likelihood of retrieving research that does not generalize beyond the PreK-12 domain. Conversely, the term “macrocognition” was specified as the mechanism of interest in order to broaden the range of mechanisms captured (e.g., team processes) by grounding the resulting articles in the macrocognition literature. The search keywords were then combined using Boolean operators to generate the following search query: (“collaborative problem solving” AND “training” AND “macrocognition” AND “performance”).
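The query assembly can be expressed as a small function. The sketch below is illustrative only: it assumes each CIMO element holds a list of retained terms (alternatives ORed within an element, elements ANDed together). The assignment of “collaborative problem solving,” “training,” and “performance” to context, intervention, and outcome is our assumption, since only the mechanism assignment is stated above, and the names `CIMO_KEYWORDS` and `build_query` are hypothetical.

```python
# Hypothetical mapping of CIMO elements to retained keywords (cf. Table 1).
# Only "macrocognition" as the mechanism is stated in the text; the rest is assumed.
CIMO_KEYWORDS: dict[str, list[str]] = {
    "context": ["collaborative problem solving"],
    "intervention": ["training"],
    "mechanism": ["macrocognition"],
    "outcome": ["performance"],
}


def quote(term: str) -> str:
    """Wrap a term in double quotes so databases treat it as an exact phrase."""
    return f'"{term}"'


def build_query(cimo: dict[str, list[str]]) -> str:
    """OR the terms within each CIMO element, then AND across elements."""
    groups = []
    for terms in cimo.values():
        if not terms:
            continue  # skip elements with no retained keywords
        joined = " OR ".join(quote(t) for t in terms)
        # Parenthesize only groups that contain alternatives.
        groups.append(f"({joined})" if len(terms) > 1 else joined)
    return "(" + " AND ".join(groups) + ")"


query = build_query(CIMO_KEYWORDS)
print(query)
# ("collaborative problem solving" AND "training" AND "macrocognition" AND "performance")
```

Had synonyms been retained, they would appear as parenthesized OR groups, e.g. `build_query({"a": ["x", "y"], "b": ["z"]})` yields `(("x" OR "y") AND "z")`.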

Table 1. Research Question and Keywords Within CIMO Framework.

Because we were interested in both scholarly and technical sources, the search query was executed in multiple databases. To ensure coverage of scholarly sources, we executed the query in databases including EBSCO, Ovid, ProQuest, Web of Science, and Google Scholar. To ensure coverage of technical sources, we also executed the query in databases including the NASA Technical Reports Server (NTRS), the Defense Technical Information Center (DTIC) Report Collection, the Organization for Economic Co-operation and Development (OECD) iLibrary, the Education Resources Information Center (ERIC) database, and the Science.gov database. Results from the National Technical Report Library (NTRL) were not included as it did not provide a function for exporting results.

Inclusion/Exclusion Criteria

We developed a list of exclusion criteria by adapting the CIMO and PICOST (Population Intervention Comparison Outcome Setting Time) frameworks. The following sources were excluded: (a) duplicates; (b) sources without direct generalizability to adult populations (i.e., sources only discussing child/minor populations); sources not discussing (c) collaborative problem solving, (d) training interventions, (e) macrocognition or related processes, (f) teams or groups, or (g) teams or groups of three or more participants; (h) sources not discussing or measuring performance; (i) sources only discussing PreK-12 settings; (j) sources published before 2009; and (k) sources not in English. The review included any type of scholarly or technical work (e.g., empirical articles, proceedings papers, technical reports, review articles, book chapters).
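Only two of these criteria, (j) and (k), can be checked mechanically from record metadata; the others require human judgment. A minimal sketch, assuming a hypothetical `Record` type with year and language fields (real exports vary by database), could pre-flag records and return the criterion letter for PRISMA bookkeeping. Records with missing metadata are kept rather than excluded, in keeping with an inclusive screen.

```python
from dataclasses import dataclass
from typing import Optional

ENGLISH = {"english", "en", "eng"}  # assumed spellings of English in exported metadata


@dataclass
class Record:
    """Hypothetical minimal record; real database exports carry many more fields."""
    title: str
    year: Optional[int] = None
    language: Optional[str] = None


def metadata_exclusion(rec: Record) -> Optional[str]:
    """Return the letter of the first metadata-checkable criterion failed, else None.

    Only (j) published before 2009 and (k) not in English are checked here;
    criteria (b)-(i) depend on content and remain human screening decisions.
    Missing metadata never triggers exclusion, which keeps the screen inclusive.
    """
    if rec.year is not None and rec.year < 2009:
        return "j"
    if rec.language is not None and rec.language.strip().lower() not in ENGLISH:
        return "k"
    return None


records = [
    Record("A", 2015, "English"),
    Record("B", 2005, "English"),
    Record("C", 2020, "German"),
    Record("D"),
]
print([metadata_exclusion(r) for r in records])  # [None, 'j', 'k', None]
```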

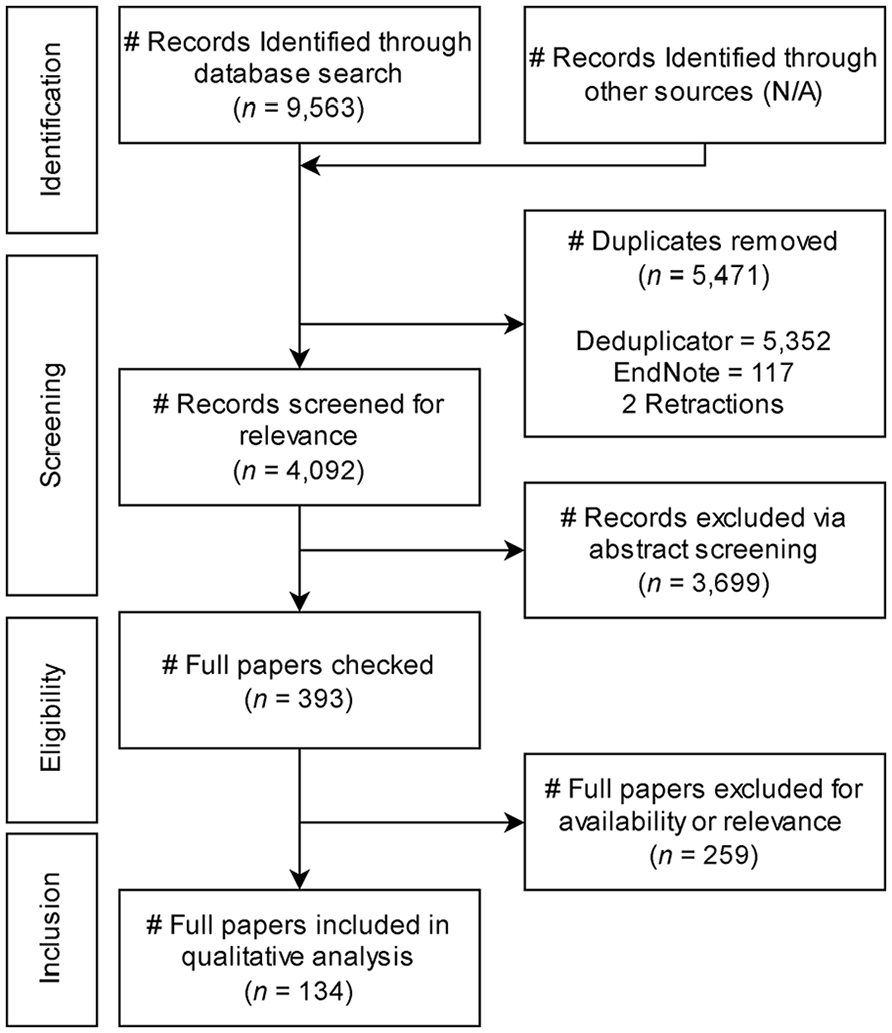

Record Identification and Deduplication

The search queries were executed following the SLR plan in February and March 2024. As shown in the PRISMA flowchart in Figure 1, 9,563 source records were identified. Search results were downloaded and aggregated within EndNote (The EndNote Team, 2013). The entire library of results was then uploaded to the Systematic Review Accelerator (Clark et al., 2020) to screen for duplicates, and the software identified 5,352 duplicates. The deduplicated library was then downloaded back into EndNote for cleaning of record citations, during which an additional 117 duplicates and 2 retractions were identified.
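The Systematic Review Accelerator's deduplication algorithm is not described here, so the following does not reproduce it. It is a hypothetical sketch of the general idea, assuming records are dictionaries with optional `doi`, `title`, and `year` keys: prefer a normalized DOI as the matching key and fall back to a normalized title plus year. Under this scheme a record with a DOI and its copy without one will not match, which illustrates why a manual cleaning pass can still surface duplicates after automated deduplication. The DOIs below are invented.

```python
import re
from typing import Iterable


def normalize_title(title: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    title = re.sub(r"[^\w\s]", " ", title.lower())
    return " ".join(title.split())


def dedup_key(rec: dict) -> tuple:
    """Prefer a normalized DOI; fall back to (normalized title, year)."""
    doi = (rec.get("doi") or "").strip().lower()
    if doi:
        return ("doi", doi.removeprefix("https://doi.org/"))
    return ("title", normalize_title(rec.get("title", "")), rec.get("year"))


def deduplicate(records: Iterable[dict]) -> tuple[list[dict], int]:
    """Keep the first occurrence of each key; return (unique records, duplicate count)."""
    seen, unique, dupes = set(), [], 0
    for rec in records:
        key = dedup_key(rec)
        if key in seen:
            dupes += 1
        else:
            seen.add(key)
            unique.append(rec)
    return unique, dupes


# Hypothetical records: two DOI variants of one paper, two title variants of another.
sample = [
    {"title": "Training Macrocognition in Teams", "year": 2015, "doi": "10.1000/xyz"},
    {"title": "Training macrocognition in teams.", "year": 2015, "doi": "https://doi.org/10.1000/XYZ"},
    {"title": "Team Cognition in Space", "year": 2019},
    {"title": "Team cognition in space!", "year": 2019},
]
unique, dupes = deduplicate(sample)
print(len(unique), dupes)  # 2 2
```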

Figure 1. PRISMA diagram for systematic review.

Record Screening and Eligibility

Title-abstract screening was conducted according to the inclusion/exclusion criteria listed above, following a “single screening with text mining” method, because prior work comparing recall error and workload across screening approaches found a substantial reduction in workload with this method (Shemilt et al., 2016). To mitigate potential recall error, we selected the ASReview screening software (v1.3.1rc0; ASReview LAB Developers, 2024; Van De Schoot et al., 2021) from among the alternatives listed in the systematic review toolbox (Johnson et al., 2022) of the International Collaboration for the Automation of Systematic Reviews (ICASR; Beller et al., 2018), because it applies automation to ordering the records for screening rather than to excluding records. In the title-abstract screening process, 3,699 records were excluded and 393 were retained. Researchers then searched for available full texts and screened the full-text articles against the inclusion/exclusion criteria, again using the single screening with text mining approach (Shemilt et al., 2016), facilitated through MAXQDA. As shown in Figure 1, a final total of 134 sources were eligible for inclusion in the qualitative analysis.
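The record counts reported in this section can be checked for internal consistency with simple arithmetic. The sketch uses only figures stated above. The number of records leaving at the full-text stage is derived; its split between unretrievable and excluded full texts is not reported here, so the sketch does not attempt one.

```python
# Counts reported in the Method section (Figure 1).
identified = 9_563
dupes_sra = 5_352             # flagged by the Systematic Review Accelerator
dupes_endnote = 117           # additional duplicates found while cleaning in EndNote
retractions = 2
excluded_title_abstract = 3_699
retained_title_abstract = 393
included_final = 134

# Records entering title-abstract screening must equal excluded + retained.
screened = identified - dupes_sra - dupes_endnote - retractions
assert screened == excluded_title_abstract + retained_title_abstract
print(screened)  # 4092

# Records leaving at the full-text stage (unretrievable or excluded, combined).
removed_full_text = retained_title_abstract - included_final
print(removed_full_text)  # 259
```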

Requirements Elicitation

Adapting the TIARA approach to requirements elicitation (see Figure 2), we uploaded the library of full-text source documents to MAXQDA 24 (VERBI Software, 2024), a software package that facilitates qualitative analysis. A framework for coding requirements in the source material was derived from Sonnenfeld et al. (2023b) and refined. The revised framework is presented in Supplemental Appendix A, with associated keywords indicating the level of conformance. A category for background material was retained, although for the present analysis only explicit definitions were sought for this category. The framework was translated into an automated search capability within MAXQDA, which applied the coding scheme to tag and report any sentence in the source material containing the keywords in Supplemental Appendix A. Each source document was analyzed with this scheme, and the resulting lists of excerpts containing requirements and other considerations, along with associated source information, were aggregated into a Microsoft Excel spreadsheet. Approximately 43,000 excerpts were extracted from the set of source documents, although this number includes duplicates of excerpts associated with multiple requirement codes.
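The automated keyword search described above amounts to sentence-level pattern matching. The sketch below is a hypothetical stand-in for the MAXQDA search, not its implementation: the keyword lists and level names (`mandatory`, `recommended`, `permissive`) are placeholders, since the actual scheme is in Supplemental Appendix A, and the sentence splitter is deliberately naive. It emits one row per sentence and matched level, which shows how a single sentence can be counted more than once in a total such as the roughly 43,000 excerpts.

```python
import re

# Placeholder keyword lists; the real scheme is in Supplemental Appendix A.
CONFORMANCE_KEYWORDS = {
    "mandatory": ["must", "shall", "required"],
    "recommended": ["should", "recommend", "recommended"],
    "permissive": ["may", "can", "consider"],
}

# One whole-word, case-insensitive pattern per conformance level.
PATTERNS = {
    level: re.compile(r"\b(?:" + "|".join(map(re.escape, words)) + r")\b", re.IGNORECASE)
    for level, words in CONFORMANCE_KEYWORDS.items()
}


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!', or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def tag_excerpts(text: str, source: str) -> list[dict]:
    """Emit one row per (sentence, level) match, so a sentence may appear twice."""
    rows = []
    for sentence in split_sentences(text):
        for level, pattern in PATTERNS.items():
            if pattern.search(sentence):
                rows.append({"source": source, "level": level, "excerpt": sentence})
    return rows


doc = ("Trainees must practice sharing knowledge. Instructors should debrief teams, "
       "and they may use checklists. The study ran for six weeks.")
rows = tag_excerpts(doc, "hypothetical source")
print([r["level"] for r in rows])  # ['mandatory', 'recommended', 'permissive']
```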

Figure 2. TIARA flowchart & inputs.

Relevance Classification

While relevance classification in this approach has previously relied on the subjective assessment of trained research assistants (Sonnenfeld et al., 2023b), we used this work as an opportunity to test a supervised machine learning (ML) approach. A subset of approximately 1,000 extracted excerpts was manually labeled for relevance (0 = not relevant, 1 = relevant) and used to develop, implement, and train an automated relevance classification program. The solution, developed in tandem with this work, was implemented in Python using the open-source Natural Language Toolkit (NLTK) package (Bird et al., 2009) and used a bag-of-words feature extraction method combined with a naïve Bayes classifier.
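The classifier described above pairs bag-of-words features with naïve Bayes. The sketch below is a pure-Python stand-in rather than the authors’ NLTK code: it builds binary `{token: True}` featuresets in the form NLTK's `NaiveBayesClassifier.train` accepts as `(featureset, label)` pairs, but scores them with its own add-one smoothed estimator (NLTK's default estimator differs). The class name and training sentences are invented for illustration.

```python
import math
import re
from collections import Counter, defaultdict


def bag_of_words(text: str) -> dict[str, bool]:
    """Binary bag-of-words featureset in NLTK style: {token: True}."""
    return {tok: True for tok in re.findall(r"[a-z']+", text.lower())}


class BinaryNaiveBayes:
    """Naive Bayes over word-presence features with add-one (Laplace) smoothing."""

    def __init__(self, labeled: list[tuple[dict[str, bool], int]]):
        self.n_docs = Counter(label for _, label in labeled)  # documents per label
        self.doc_freq = defaultdict(Counter)  # label -> token -> documents containing it
        for feats, label in labeled:
            self.doc_freq[label].update(feats.keys())
        self.vocab = {tok for counts in self.doc_freq.values() for tok in counts}
        self.total = sum(self.n_docs.values())

    def log_scores(self, feats: dict[str, bool]) -> dict[int, float]:
        """Unnormalized log P(label) + sum over present tokens of log P(token | label)."""
        scores = {}
        for label, n in self.n_docs.items():
            score = math.log(n / self.total)
            for tok in feats:
                if tok in self.vocab:  # tokens never seen in training are ignored
                    score += math.log((self.doc_freq[label][tok] + 1) / (n + 2))
            scores[label] = score
        return scores

    def prob_relevant(self, feats: dict[str, bool]) -> float:
        """P(label == 1 | features), normalized with the log-sum-exp trick."""
        scores = self.log_scores(feats)
        top = max(scores.values())
        norm = sum(math.exp(s - top) for s in scores.values())
        return math.exp(scores.get(1, -math.inf) - top) / norm

    def classify(self, feats: dict[str, bool]) -> int:
        """Return the label with the highest posterior score."""
        scores = self.log_scores(feats)
        return max(scores, key=scores.get)


train = [
    ("Team members should share knowledge during problem solving.", 1),
    ("Training must include practice in building shared knowledge.", 1),
    ("Participants were recruited from an undergraduate course.", 0),
    ("The survey was administered online.", 0),
]
clf = BinaryNaiveBayes([(bag_of_words(text), label) for text, label in train])
print(clf.classify(bag_of_words("Teams should practice sharing knowledge.")))  # 1
print(clf.classify(bag_of_words("The survey was administered to participants.")))  # 0
```

With roughly 1,000 labeled excerpts, the same featureset format could be passed to NLTK directly; the hand-written version only makes the scoring explicit.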

Excerpt Screening

Following this automated relevance classification, the excerpts classified as likely relevant were marked for inclusion in further qualitative analysis. Our continuing work on this facet of the project involves redefining the involvement of human researchers in the screening process, as the automated analysis was designed to be overinclusive at this stage until more refined means of classification can be devised.
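The text does not specify how the classifier was made overinclusive. One common option, shown here as an assumption rather than the authors’ procedure, is to choose the decision threshold on a labeled validation set so that recall of relevant excerpts stays at or above a target, accepting more false positives for human reviewers to discard. The function name and validation scores are hypothetical.

```python
import math


def recall_threshold(scores: list[float], labels: list[int], target_recall: float = 0.95) -> float:
    """Highest threshold t such that flagging every score >= t keeps recall >= target_recall."""
    positives = sorted((s for s, y in zip(scores, labels) if y == 1), reverse=True)
    if not positives:
        raise ValueError("validation set contains no relevant excerpts")
    # Number of relevant excerpts that must score at or above the threshold.
    needed = math.ceil(target_recall * len(positives))
    return positives[max(needed, 1) - 1]


# Hypothetical validation scores (e.g., P(relevant)) and manual labels.
val_scores = [0.9, 0.8, 0.4, 0.2, 0.7, 0.1]
val_labels = [1, 1, 1, 1, 0, 0]
t = recall_threshold(val_scores, val_labels, target_recall=0.75)
flagged = [s >= t for s in val_scores]
recall = sum(f and y == 1 for f, y in zip(flagged, val_labels)) / val_labels.count(1)
print(t, recall)  # 0.4 0.75
```

Raising `target_recall` lowers the threshold and flags more excerpts, which is the overinclusive trade-off described above.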

Thematic Analysis of Requirements

Our work on this project will also continue with thematic analysis. Whereas we previously implemented the TIARA approach with manual human classification, we are currently working toward automated means of classifying the excerpts according to a thematic framework, currently under development, that aligns with the MITM and other relevant CPS research. Once classified by superordinate and subordinate themes (see Figure 2), these excerpts will be topically organized into a practitioner-oriented document that compiles requirements and other considerations for training macrocognition to facilitate performance on CPS tasks. Following this organization, researchers will manually review each excerpt included in the final document to judge its applicability and value. This triage is needed in part because of the intended inclusiveness of the source document identification and excerpt extraction processes, and in part because of the nuance involved in understanding the practical implications of these considerations within training and research practices.
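Because the thematic framework is still under development, the following is only a hypothetical sketch of the organizing step. It assumes each classified excerpt carries `theme`, `subtheme`, `level`, and `excerpt` fields, files excerpts under superordinate and subordinate themes, lists stronger imperatives first, and keeps only the strongest-level copy of a sentence that was tagged more than once. The theme names and level labels are placeholders.

```python
from collections import defaultdict

# Placeholder conformance levels, strongest first.
LEVEL_ORDER = {"mandatory": 0, "recommended": 1, "permissive": 2}


def _rank(ex: dict) -> int:
    """Sort key for conformance level; unknown levels sort last."""
    return LEVEL_ORDER.get(ex["level"], len(LEVEL_ORDER))


def organize(excerpts: list[dict]) -> dict[str, dict[str, list[dict]]]:
    """Group excerpts as theme -> subtheme -> excerpts, strongest imperatives first."""
    tree = defaultdict(lambda: defaultdict(list))
    seen = set()
    for ex in sorted(excerpts, key=_rank):  # stable sort keeps input order within a level
        key = (ex["theme"], ex["subtheme"], ex["excerpt"])
        if key in seen:
            continue  # sentence already filed under this subtheme at a stronger level
        seen.add(key)
        tree[ex["theme"]][ex["subtheme"]].append(ex)
    # Plain dicts with themes and subthemes in alphabetical order.
    return {t: {s: tree[t][s] for s in sorted(tree[t])} for t in sorted(tree)}


excerpts = [
    {"theme": "Knowledge sharing", "subtheme": "Debriefing", "level": "permissive",
     "excerpt": "Instructors should debrief teams, and they may use checklists."},
    {"theme": "Knowledge sharing", "subtheme": "Debriefing", "level": "recommended",
     "excerpt": "Instructors should debrief teams, and they may use checklists."},
    {"theme": "Knowledge building", "subtheme": "Practice", "level": "mandatory",
     "excerpt": "Trainees must practice sharing knowledge."},
]
doc = organize(excerpts)
for theme, subthemes in doc.items():
    for subtheme, items in subthemes.items():
        for ex in items:
            print(f"{theme} > {subtheme} [{ex['level']}]: {ex['excerpt']}")
```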

Findings

The requirements derived from our analysis are intended to help us clarify, for practitioners and researchers alike, the scope and content of guidance available in the empirical and technical literature related to the design of macrocognitive training in CPS contexts. We are working to provide a novel compendium of these requirements for CPS-related training, categorized by the level of conformance implied by the imperative in each statement (e.g., “must” vs. “should”), and thematically categorized and organized as theoretically appropriate (see Fiore et al., 2023). Furthermore, upon completion of this work, we seek to report quantitative descriptions of the scope and content of these requirements and to highlight key takeaways for training practitioners and researchers regarding the design, delivery, and assessment of training for macrocognition in CPS contexts. Reported findings are intended to elucidate patterns in the empirical and technical source materials from which these requirements were extracted, including frequently discussed topics, interventions, and instructional elements. As a methodological contribution, this work extends the TIARA approach to requirements elicitation. Whereas the prior application of TIARA supported the development of a compendium of over 3,000 excerpts of regulatory and guidance material (e.g., Sonnenfeld et al., 2023b), this refinement shows that the approach can be used to extract value from empirical and technical materials to support RE in training contexts.

Limitations and Lessons Learned

This work has been subject to several limitations and constraints. First, we sought to leverage the resources of the ICASR systematic review toolbox (Johnson et al., 2022). In the time since our proposal, however, the domain hosting the toolbox has been deactivated, a hindrance not just to our project but to other researchers seeking to conduct systematic reviews. Second, we identified a lack of standardization in the search features offered across the broad range of academic and technical research databases, which made it difficult to execute our proposed systematic search. Most notably, some databases did not provide a means to export search results, citations, and associated metadata. Third, existing software and tools for aggregating and cleaning identified records did not automate the process as intended: researchers needed to manually correct the citations and download the full-text PDFs of nearly all records included at the eligibility stage. That said, platforms such as the Systematic Review Accelerator (Clark et al., 2020), ASReview (Van De Schoot et al., 2021), and MAXQDA (VERBI Software, 2024) were helpful and functioned as intended, saving time and systematizing the process. Lastly, our goal of creating a tailored means of automating the relevance classification and excerpt screening steps of this process may have exceeded the intended scope of this work. In our continued work on this project, we seek to finalize these processes and complete the final requirements document.

Conclusion and Takeaways

In this paper, we sought to address the challenges associated with requirements elicitation (Davis & Nori, 2007; Laplante & Kassab, 2022) when it is integrated within instructional systems design (ISD) for training system development. In particular, learning engineers have argued that a theory-practice gap exists (e.g., Walcutt & Schatz, 2019), which limits the robustness of any generated requirements. One limitation is that current elicitation practices largely focus on applying inquiry-based techniques in interactions with stakeholders. Furthermore, existing practices emphasize instructional goals and objectives (the “what”), with limited attention to the instructional elements to be employed in training design (the “how”; Milham et al., 2008; Stanney, 2008).

We have three overarching goals for this developing process. First, we set out to identify training considerations in the extant literature addressing the effect of training for macrocognition on performance in CPS contexts. Second, we sought to address the constraints of inquiry-based techniques for requirements elicitation. Third, we sought to validate an iterative improvement to the TIARA approach. Accordingly, we sought to address the following research question: What training considerations may be derived from empirical and technical sources addressing the effect of training for macrocognition on performance in collaborative problem-solving contexts? In this paper, we detailed our methodology and described the scope and content of the actionable considerations and recommendations we are compiling for the design of training for CPS specifically and for macrocognition in teams generally.

Although still in process, this work demonstrates the utility of the TIARA approach for RE. Specifically, it treats empirical and technical literature as sources for artifact-based requirements elicitation. In doing so, it contributes (a) value for RE practices (e.g., content); (b) representation of the scientific community in RE, complementary to and unmediated by the perspectives of surrogates (e.g., subject-matter experts); and (c) an extension of the backward traceability of instructional design decisions beyond objectives and theory (Hirumi, 2021), grounding those decisions in empirical evidence rather than in what are too often anecdotes and hearsay. This is particularly crucial for complex task environments such as collaborative problem solving.

In sum, this paper summarizes a developing approach devised to support training practitioners who may be engaged in requirements activities, whether during training development, when identifying evidence-based training practices, or when evaluating training. Further, it adds to current RE practices and lays the foundation for future directions such as process automation and artifact selection. As such, TIARA can be developed as a complementary approach for requirements elicitation in training system development for complex operational environments.

Supplemental Material

sj-docx-1-pro-10.1177_10711813241275508 – Supplemental material for Eliciting Requirements and Recommendations for Training Macrocognition in Teams: Considerations for Collaborative Problem Solving

Supplemental material, sj-docx-1-pro-10.1177_10711813241275508 for Eliciting Requirements and Recommendations for Training Macrocognition in Teams: Considerations for Collaborative Problem Solving by Nathan A. Sonnenfeld, Lucien Niacaris, Giovani Diaz Alfaro, Blake Nguyen, Vera Daniliv, Olivia B. Newton, Florian G. Jentsch and Stephen M. Fiore in Proceedings of the Human Factors and Ergonomics Society Annual Meeting

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Writing of this paper was partially supported by US Air Force Office of Scientific Research (AFOSR) grant FA9550-22-1-0151 awarded to Stephen M. Fiore and Florian G. Jentsch. Any opinions, findings, and conclusions expressed in this material are those of the authors and do not necessarily reflect the views of these organizations or the University of Central Florida.

Supplemental Material

Supplemental material for this article is available online.

References
