Abstract

Low-back musculoskeletal disorders (MSDs) are the primary work-related injuries among manual material handling (MMH) workers, who are frequently exposed to repetitive lifting. To help prevent low-back MSDs in the workplace, we present a video-based lifting action recognition method using rank-altered kinematic feature pairs, called top-scoring pairs (TSPs). We derive TSPs from a video dataset containing lifting and other activities commonly seen in MMH. These TSPs collectively classify each frame as lifting or non-lifting. We validate the method by evaluating its classification performance against baseline classifiers. The proposed method minimizes computational and memory requirements while achieving performance comparable to that of more complex, computationally demanding methods. This makes it suitable for systems with limited hardware resources and feasible to deploy across a wide range of MMH environments to improve workplace safety.

Introduction

Between 2021 and 2022, 502,280 cases of work-related musculoskeletal disorders (MSDs) resulting in days away from work were reported in the U.S. (U.S. Bureau of Labor Statistics, 2023). Most of these cases occur among manual material handling (MMH) workers frequently exposed to repetitive lifting tasks. Epidemiological studies link repetitive lifting to an increased risk of low-back MSDs (Maher et al., 2017). From a biomechanical standpoint, repetitive lifting fatigues the back muscles and increases spinal loading, particularly on the L5/S1 intervertebral disc, leading to compression and shear forces (Marras et al., 2006). The L5/S1 disc also experiences significant bending moments during repetitive lifting due to the lumbar spine’s structure (Dolan & Adams, 1998). These stresses are key risk factors for chronic low-back pain and disc impairments, such as herniation (Desmoulin et al., 2020). Therefore, managing lifting frequency in the workplace is critical to prevent work-related low-back injuries.

In current safety evaluations, practitioners manually observe workers to identify lifting actions and determine frequencies, either through field surveys or video analysis. This process is time-consuming and labor-intensive, requiring real-time monitoring throughout shifts. Thus, developing an automated system for monitoring lifting tasks is essential. To this end, the first step is acquiring motion data from workers. With the widespread availability of cameras, computer vision-based neural networks offer a promising solution for collecting body motion data. However, technical challenges remain for workplace implementation. Current approaches often stack multiple convolutional layers to capture features from local to global scales (Meng et al., 2022). Despite their power, these networks are computationally and memory intensive, limiting their use on hardware-constrained systems (Han et al., 2015).

To address these limitations, we propose a video-based lifting action recognition method that reduces computational and memory demands while maintaining high classification accuracy. The method uses BlazePose (Bazarevsky et al., 2020), a lightweight CNN architecture, to detect 18 key body joints. Kinematic features are extracted from these joints, and top-scoring pairs (TSPs) form an ensemble classifier that identifies lifting actions by classifying each video frame as lifting or non-lifting. We validated the method against baseline classifiers using a video dataset of seven common MMH activities.

Method

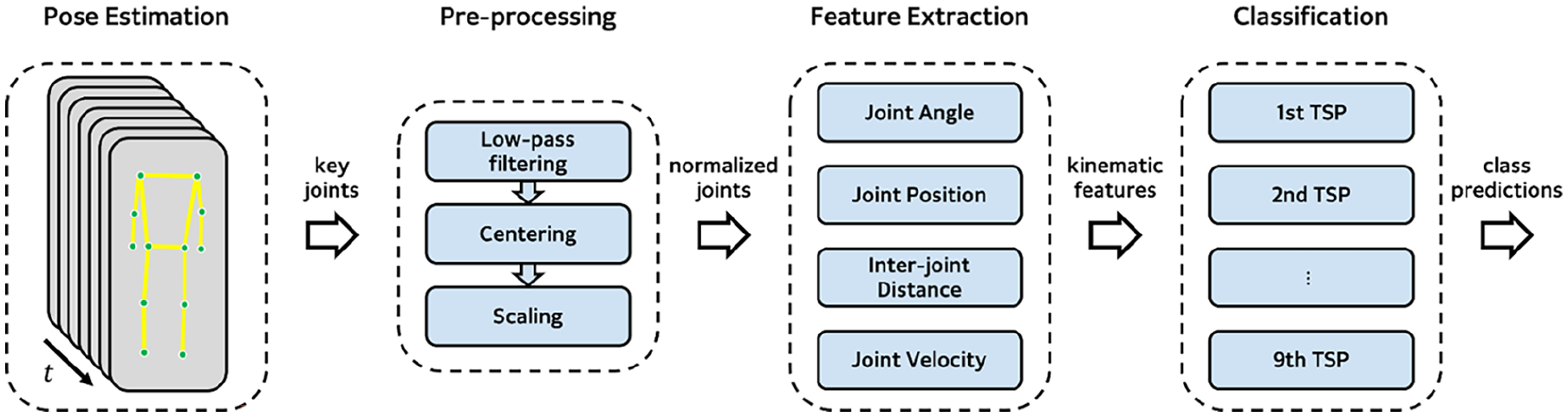

To identify lifting actions in videos, we adopt a four-stage process: pose estimation, pre-processing, feature extraction, and classification. Figure 1 illustrates the overall workflow of the proposed method.

Figure 1. Overall workflow of the proposed method.

Pose Estimation

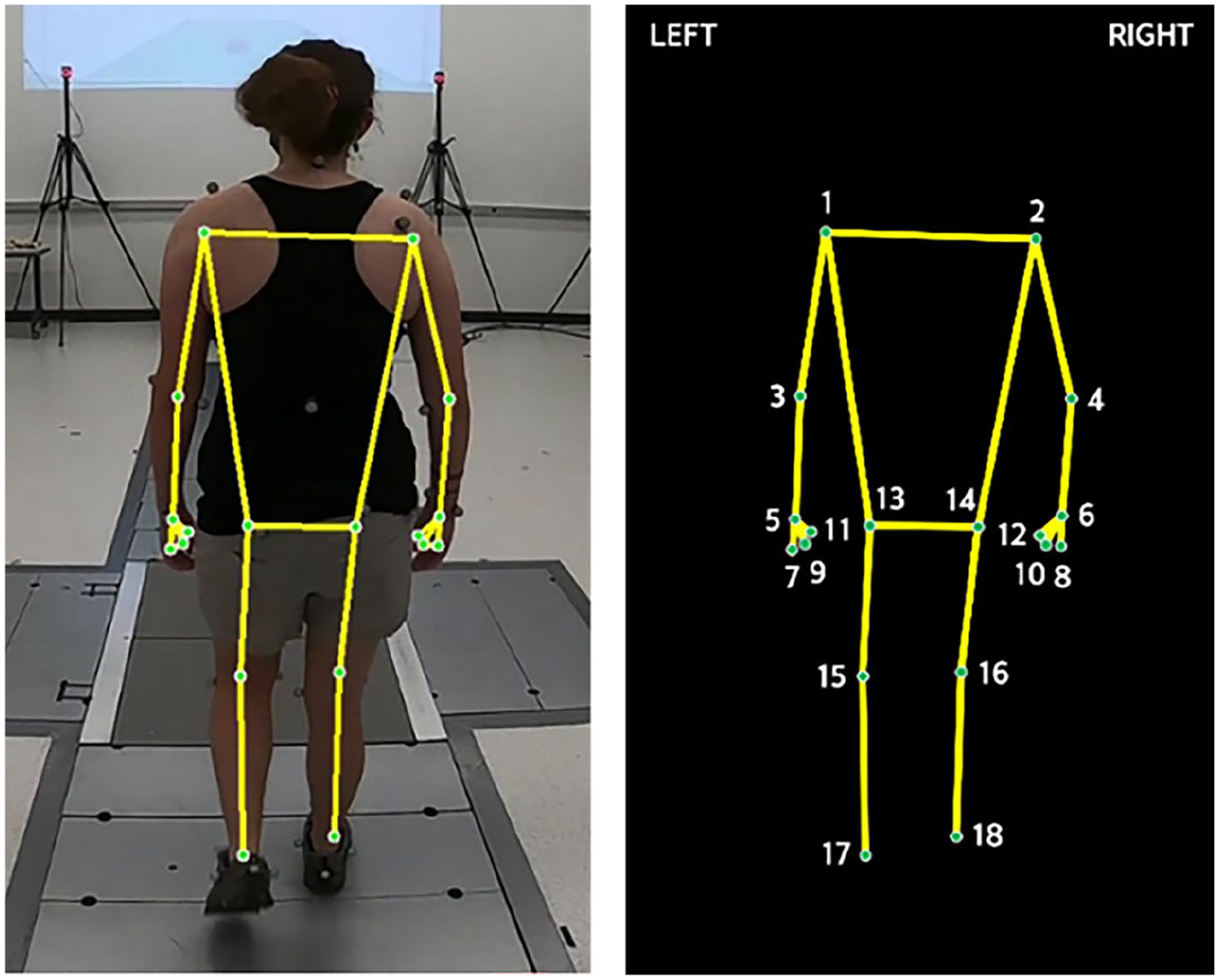

Recently, Google researchers introduced BlazePose, a lightweight CNN architecture designed for real-time human pose estimation on mobile devices (Bazarevsky et al., 2020). In this study, we employed BlazePose for motion data collection due to its low latency and high accuracy. From the 33 joints detected by BlazePose, we selected 18 key joints to capture full-body movements for lifting action recognition (Figure 2).

Figure 2. Selected key joints of the human body. These include shoulders, elbows, wrists, pinkies, index fingers, thumbs, hips, knees, and ankles.
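As an illustration of the joint selection, the sketch below keeps 18 of the 33 landmarks per frame. It assumes the landmark ordering used by MediaPipe's BlazePose implementation, in which indices 11 to 28 correspond to the joint types listed in the caption; the function name and array layout are hypothetical, and the index mapping should be checked against the pose estimator actually used.

```python
import numpy as np

# Assumed mapping: in MediaPipe's 33-landmark BlazePose topology, indices
# 11..28 run from the shoulders down to the ankles (left/right interleaved).
JOINT_NAMES = [
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_pinky", "right_pinky",
    "left_index", "right_index", "left_thumb", "right_thumb",
    "left_hip", "right_hip", "left_knee", "right_knee",
    "left_ankle", "right_ankle",
]
KEY_JOINT_IDX = np.arange(11, 29)  # 18 indices: 11, 12, ..., 28


def select_key_joints(landmarks):
    """Keep the 18 key joints from a (T, 33, D) landmark array.

    T is the number of frames and D the number of coordinates per landmark.
    Returns an array of shape (T, 18, D).
    """
    landmarks = np.asarray(landmarks, dtype=float)
    if landmarks.ndim != 3 or landmarks.shape[1] != 33:
        raise ValueError("expected an array of shape (T, 33, D)")
    return landmarks[:, KEY_JOINT_IDX, :]
```

With this ordering, the shoulders occupy positions 0 and 1 and the hips positions 12 and 13 of the reduced array.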

Pre-processing

The first pre-processing step smooths each joint's trajectory by filtering out high-frequency noise. We apply a fifth-order low-pass Butterworth filter with a cutoff frequency of 3 Hz, which lies above the typical walking frequency range of 1.2 to 2.2 Hz (Luo et al., 2021) and therefore preserves gait-related motion. The filtered trajectories are smoother and less affected by frame-to-frame jitter. Next, we normalize the joint positions by centering and scaling: each joint is shifted by subtracting the mid-hip point and then divided by the average trunk length over a short time window.
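The two pre-processing steps can be sketched as follows. This is a minimal example under several assumptions that the text does not specify: the filter is applied forward and backward (zero-phase) with SciPy's `filtfilt`, trunk length is taken as the distance between the mid-shoulder and mid-hip points, the averaging window is 30 frames, and the joint indices follow a hypothetical 18-joint ordering with shoulders at 0 and 1 and hips at 12 and 13.

```python
import numpy as np
from scipy.signal import butter, filtfilt


def smooth_joints(joints, fps, cutoff_hz=3.0, order=5):
    """Low-pass filter joint trajectories along the time axis.

    joints: array of shape (T, J, 2). With the default order, T must exceed
    18 frames because of filtfilt's default edge padding.
    """
    b, a = butter(order, cutoff_hz, btype="low", fs=fps)
    return filtfilt(b, a, joints, axis=0)  # zero-phase filtering (assumption)


def normalize_joints(joints, l_sh=0, r_sh=1, l_hip=12, r_hip=13, window=30):
    """Center each frame on the mid-hip and scale by windowed trunk length."""
    mid_hip = 0.5 * (joints[:, l_hip] + joints[:, r_hip])  # (T, 2)
    mid_sh = 0.5 * (joints[:, l_sh] + joints[:, r_sh])     # (T, 2)
    trunk = np.linalg.norm(mid_sh - mid_hip, axis=1)       # (T,)

    # Moving average of trunk length; edge padding keeps the output length T.
    pad = window // 2
    padded = np.pad(trunk, (pad, window - 1 - pad), mode="edge")
    trunk_avg = np.convolve(padded, np.ones(window) / window, mode="valid")

    centered = joints - mid_hip[:, None, :]
    return centered / trunk_avg[:, None, None]
```

Centering removes the worker's position in the image, and scaling by trunk length reduces the effect of body size and camera distance, so the resulting coordinates are comparable across participants and recordings.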

Feature Extraction

From the pre-processed joints

Classification

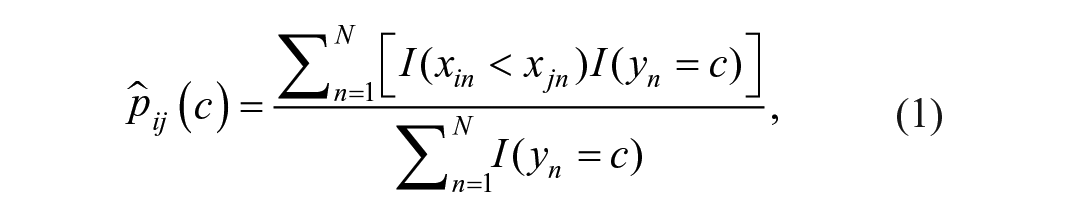

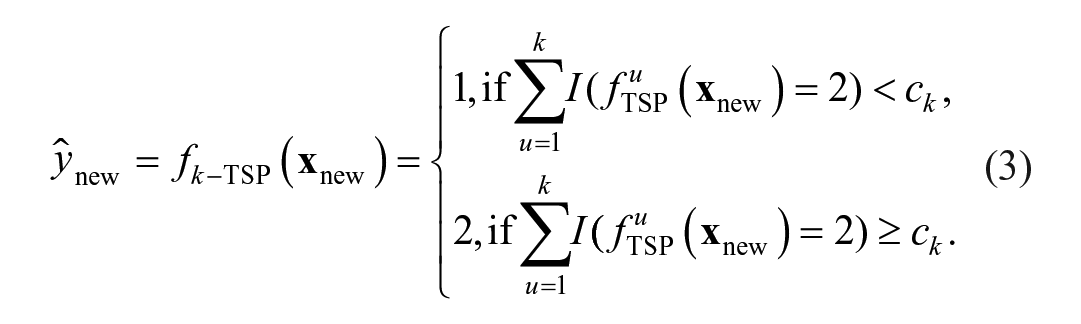

Consider a data matrix

where

Suppose we derive feature pairs with scores comparable to the TSP from the data matrix

where
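As a rough illustration of how top-scoring pairs can act as a frame-level ensemble, the sketch below follows the standard TSP formulation from the literature: a feature pair (i, j) is scored by how differently the ordering x_i < x_j occurs in the two classes, and the k best pairs vote on each frame. The function names, the choice of k, and the majority vote are assumptions for illustration and may differ from the scoring and voting used in this study.

```python
import numpy as np


def fit_tsp(X, y, k=3):
    """Select the k feature pairs whose ordering best separates the classes.

    X: (n_samples, n_features) feature matrix; y: binary labels (1 = lifting).
    Both classes must be present in y.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y).astype(bool)
    i_idx, j_idx = np.triu_indices(X.shape[1], k=1)  # all pairs with i < j
    less = X[:, i_idx] < X[:, j_idx]                 # (n_samples, n_pairs)
    p1 = less[y].mean(axis=0)                        # P(x_i < x_j | lifting)
    p0 = less[~y].mean(axis=0)                       # P(x_i < x_j | non-lifting)
    score = np.abs(p1 - p0)
    top = np.argsort(-score, kind="stable")[:k]
    return {
        "i": i_idx[top],
        "j": j_idx[top],
        "lift_if_less": p1[top] > p0[top],  # vote direction of each pair
        "score": score[top],
    }


def predict_tsp(model, X):
    """Majority vote of the selected pairs; returns 1 (lifting) or 0 per row."""
    X = np.asarray(X, dtype=float)
    less = X[:, model["i"]] < X[:, model["j"]]
    votes = less == model["lift_if_less"]  # True where a pair votes "lifting"
    return (votes.mean(axis=1) > 0.5).astype(int)
```

Prediction needs only k comparisons per frame and stores only the selected index pairs and their vote directions, which is what makes rank-based classifiers of this kind attractive on constrained hardware. An odd k avoids tied votes.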

Results

For validation, we created a benchmark video dataset for lifting action recognition in MMH by conducting experiments involving 25 healthy participants. Each participant completed four sessions, during which they performed seven common MMH activities: pushing, pulling, sitting, standing, walking, and lifting.

Classification Performance

We applied the

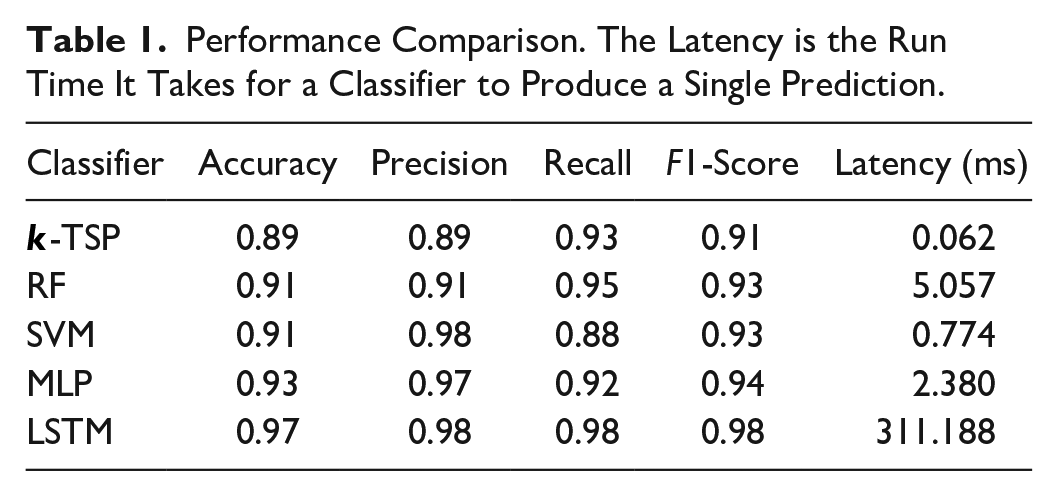

Performance comparison. Latency is the run time a classifier takes to produce a single prediction.

Discussions

All classifiers achieved a performance of 0.88 or higher across all metrics. The

This study has several limitations. First, the use of 2D pose estimation may lead to inaccuracies in feature extraction due to the projection of joints onto a 2D plane. Second, the window length should be adjusted based on the frame rate of the videos being processed to maintain consistent temporal resolution for feature extraction. Third, a lift counting algorithm based on class predictions needs to be developed to count lifts and assess the risks associated with lifting frequency.
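For the second limitation, one simple way to keep the temporal extent of a window constant across videos is to specify it in seconds and convert it to frames per video. The helper below is a hypothetical sketch; the function name and the example durations are not taken from the study.

```python
def window_length_frames(window_seconds, fps):
    """Convert a window duration in seconds to a whole number of frames (at least 1)."""
    if window_seconds <= 0 or fps <= 0:
        raise ValueError("window_seconds and fps must be positive")
    return max(1, int(round(window_seconds * fps)))
```

For example, a 0.5 s window corresponds to 15 frames at 30 fps and 30 frames at 60 fps.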

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This manuscript is based upon work supported by the National Science Foundation under Grant # 2013451.