Abstract

This paper presents the developments across a multi-year collaborative industry-academia R&D project designing and testing novel Augmented Reality (AR) solutions for differing maritime operations and work tasks. We describe the step-wise approach taken in our Human Factors testing program exploring the effects of AR on maritime operators. This paper focuses specifically on our empirical simulator laboratory experiments using differing data collection designs, tools and operational scenarios to investigate operator Situation Awareness, cognitive workload, performance and usability. Moving from comparatively rudimentary measures using static scenarios, desktop data collections and post hoc video analysis to implementing eye-tracking and automated object identification in dynamic scenarios and full-mission simulated environments, we present an overview of how our testing program has evolved and lessons learned. Further, we discuss ongoing research and future plans to better understand the effects of AR on maritime end-users to implement human-centred solutions more effectively in maritime settings.

Introduction

Over 80% of global goods are transported by water (UNCTAD, 2021). Further ocean- and water-related activities, such as natural resource utilization (e.g., fishing and aquaculture, offshore energy, underwater mining, etc.), transportation of people, tourism and pleasure activities, make oceans and waterways integral to our way of life. Thus, the safety of maritime operations on and near water is a significant topic globally. Maritime operations are complex socio-technical systems consisting of interconnected entities that need to work together in order to create safe and efficient outcomes. As in other complex and high reliability industries, humans within the system are critical to creating and maintaining safe and efficient processes. Maritime and maritime-related operations and operators encompass a diverse set of goals, roles, jobs and tasks to conduct functions of large supply-chains. Shipbuilding and maintenance, port operations and traffic control, logistical planning, class, legal and business aspects, and the sharp-end operators in ports and onboard ships working hands-on with cargo handling and deck work, navigation and the engine room present only a sample of the differing occupations and tasks that are required to achieve overall system goals.

Specifically regarding sharp-end operations and onboard operators of ships and marine structures, particular focus is placed on increasing and supporting Situation Awareness (SA) and reducing physical and cognitive workloads. Maritime operations are highly dynamic and require differing levels of operator decision making and inputs throughout a voyage and in performing specific tasks. This is particularly important during parts of a voyage requiring increased skill or attention, for example, sailing in high traffic areas, arriving at and departing from port, or specialized tasks such as Dynamic Positioning, convoying, towing or ice breaking. In an effort to increase operator performance, and thus system safety and efficiency, a fundamental goal is to organize and design work systems that optimize SA and workload throughout differing operations. Thus, supporting operators through differing human-centred initiatives, including organizational, technological, design and training efforts, is important.

Increasing digitalization and automation within maritime operational systems require sharp-end operators to continually familiarize themselves and retrain with new equipment that has new and differing capabilities, necessitating new and differing work procedures and knowledge. For ship navigation, the extensive technology available on ship bridges assists navigators in understanding their vessel’s status within the environment and supports their perception, comprehension and projection in decision making and execution. However, advanced technologies on ships, and particularly on ship bridges, have been shown to be root contributory causes of accidents at sea, including through poor design and usability that can increase user cognitive workloads and negatively impact SA (Mallam et al., 2020).

From a technological perspective, emerging capabilities in Augmented Reality (AR) have been shown to have a wide range of applications to facilitate sharp-end operators across differing industries (de Souza Cardoso et al., 2020) and safety-critical operations (Li et al., 2018; Vávra et al., 2017). In the maritime industry, AR technology has been proposed or utilized across different areas, including shipbuilding (thyssenkrupp Marine Systems, n.d.; Vargas et al., 2020), maintenance (Malta et al., 2023; MAN Energy Solutions, 2019), training (Mallam et al., 2019), traffic monitoring (von Lukas et al., 2014), navigation (van den Oever et al., 2023) and specific tasks, such as docking (Falk et al., 2020) and pilotage (Okazaki et al., 2017). Furthermore, commercial navigational tools capable of integrating AR with existing navigation systems are appearing on the market (e.g., Furuno, 2023; Jeon et al., 2019; Raymarine, n.d.).

Despite the varied potential for added value across differing applications in the maritime domain, there is limited research-based empirical data on the effects of AR on maritime operations and operators (Laera et al., 2021). As with implementing any new technology into complex systems, there is a likelihood of both planned and unplanned outcomes in how it positively and negatively affects differing elements throughout a system.

This paper presents the developments across a multi-year research and development project designing and testing novel Augmented Reality solutions for differing maritime operations. Specifically, we detail the advancements made in our stepwise approach to experimental design, data collection tools and scenarios used in investigating AR’s effect on human performance. We also present ongoing research and the pathway forward to better understand the effects of AR on maritime end-users to implement human-centred solutions more effectively in maritime settings.

Project Background

OpenAR is a multi-year R&D project in which academic and industrial partners collaborate to investigate the applications of Augmented Reality in advanced maritime operations (Ocean Industries Concept Lab, 2024). The goal of OpenAR is to develop a design framework and implementation strategies for emerging digitalization in maritime operations, focusing on head-mounted and screen-based AR solutions in maritime workplaces and work tasks (Nordby et al., 2024). The iterative evaluation of design concepts is an integral part of the development process, carried out through a multi-methods approach across differing case-based development processes, similar to the approach taken in our traditional bridge equipment development, as detailed in Mallam et al. (2021). The case-based design projects focused on a variety of cases where AR was proposed to potentially add value to operator workflow and operations, including general navigation and bridge tasks (Nordby et al., 2020, 2024), ice breaking (Frydenberg et al., 2021) and Dynamic Positioning (Carho & Mallam, 2022).

Laboratory Simulator Experiments—HF Testing

OpenAR has used a battery of methods to develop and test maritime AR concepts, including a range of qualitative and quantitative, subjective and objective measures implemented through field studies (i.e., onboard ships), design workshops, Subject-Matter Expert job shadowing and in-situ observations, interviews and focus-groups. The project is now implementing differing simulation-based data collections using more traditional experimental designs to expose maritime personnel to differing AR concepts and maritime case studies, and to measure the effectiveness of AR applications on operator performance during differing work tasks.

The project’s earliest experimental research compared AR-supported and non-AR-supported simulated ship navigation tasks. In the AR condition, maritime navigators decreased their Head-Down Time (HDT), spending more time looking out the bridge window at the actual traffic situations presented (a best practice in ship navigation), and had increased SA compared with the non-AR condition (Houweling et al., 2024). This research was performed on a desktop navigation simulator, using post hoc manual analysis of video to track each navigator’s head-up and head-down time. Moving forward, our goal has been to increase the sophistication of data collections, including increasing realism and fidelity and implementing new collection tools.
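As a minimal sketch of how a Head-Down Time measure could be derived from labelled head orientation intervals, the Python function below sums the time spent in a "head down" state and expresses it as a share of the total observed time. The interval format, the label strings and the choice to normalize HDT by total time are assumptions made for illustration; they are not taken from Houweling et al. (2024).

```python
from typing import List, Tuple

# Hypothetical interval format: (label, start time in seconds, end time in seconds)
Interval = Tuple[str, float, float]


def head_down_share(intervals: List[Interval], down_label: str = "head down") -> float:
    """Return the fraction of total observed time labelled as head down."""
    total = sum(end - start for _, start, end in intervals)
    if total <= 0:
        raise ValueError("intervals must cover a positive duration")
    down = sum(end - start for label, start, end in intervals if label == down_label)
    return down / total


# Illustrative data only: 10 s head down within a 60 s trial
trial = [("head up", 0.0, 30.0), ("head down", 30.0, 40.0), ("head up", 40.0, 60.0)]
share = head_down_share(trial)
```

Comparing this share between AR and non-AR conditions is one simple way to express the kind of difference described above.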

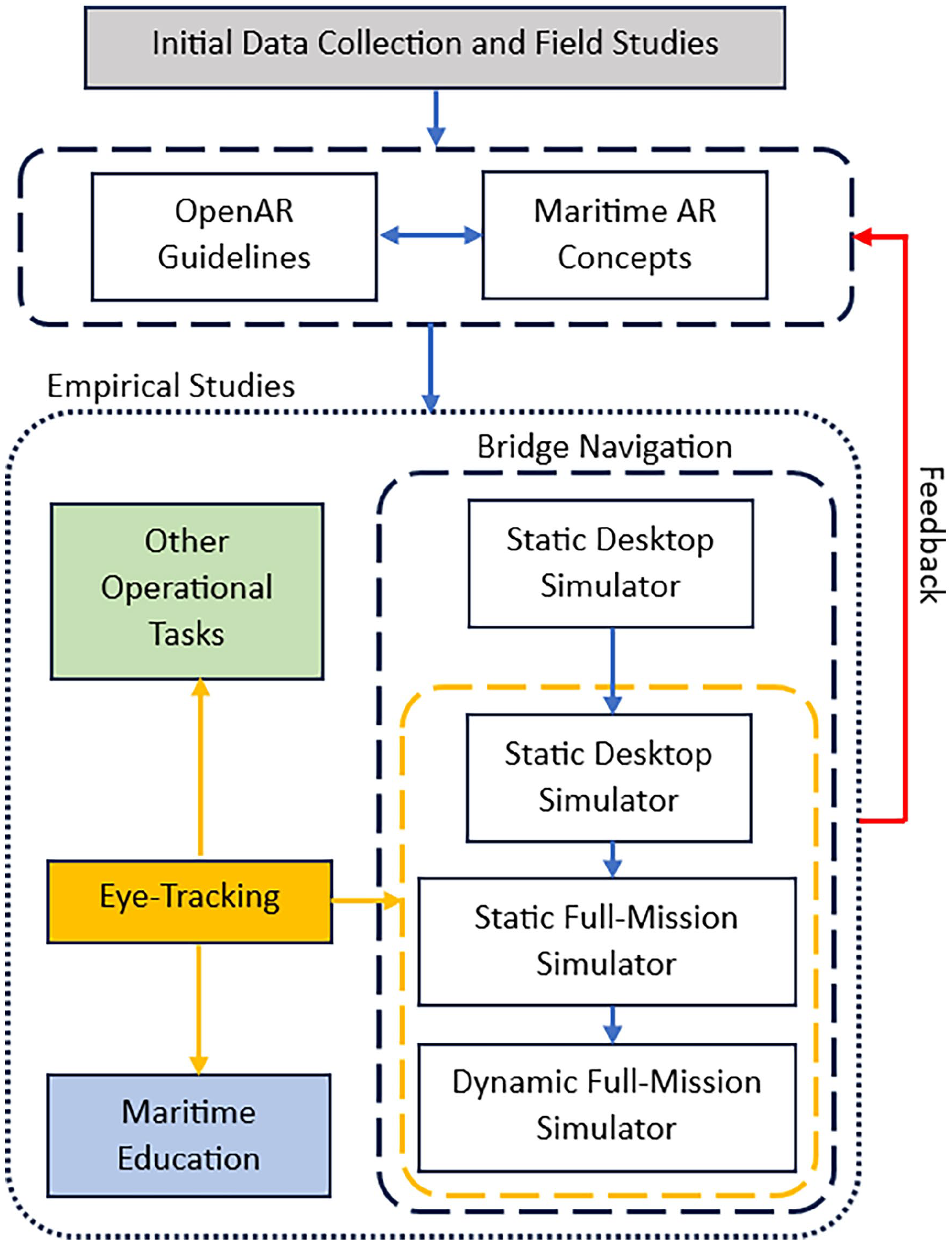

The natural next step in the project’s battery of empirical studies was to scale our initial findings and advance the testing design in three ways: (1) changing the test environment from desktop computer simulators to full mission simulators, which more closely resemble the real-world working environment onboard ships at sea, while maintaining control of each trial and overall data collection; (2) using head-mounted eye-tracking technology (Tobii Pro Glasses 3) and in-house developed EyePort analysis software to provide more detailed data and feedback on what and where participants are looking, advancing our first-phase general “head-up” and “head-down” categorization toward a better understanding of the detailed areas viewed by participants; and (3) expanding the operational tasks from only navigation and ship’s bridge-based tasks (e.g., steering the ship) to other jobs and tasks, specifically those related to marine engineering. Further, we investigate the applications of eye-tracking not only on the operations-side, but also as an educational tool for integration into teaching and simulation-based training programs (see Figure 1).

Figure 1. Overview of OpenAR HF data collections.

Setup and Equipment

In our initial pilot studies moving forward from Houweling et al. (2024), we explored the applications, abilities and limitations of a head-mounted eye-tracking device and of in-house developed software for automating eye-tracking data analysis across differing applied maritime scenarios. The primary objective was to develop a data collection system that provides sufficient physical freedom and versatility for the different working environments that may be experienced in many natural maritime operations. This system will be used to facilitate the exploration of the potential applications of Augmented Reality (AR) systems and their prototypes within the maritime domain, thus expanding on our previous empirical AR data collections.

Eye-Tracking Implementation and Analysis Framework

Drawing from existing literature and leveraging insights from OpenAR experiences, our initial focus centered on refining the software for capturing head orientation, gaze fixation, and Area of Interest (AOI). Eye-tracking in our project refers to any technology that is used to study human eye behavior. In the maritime domain, eye-tracking has mostly been used to investigate seafarers’ cognitive performance, to understand visual attention patterns and their correlation with cognitive workload and SA, and to enhance human-machine interfaces and training, mostly in simulated environments (Martinez-Marquez et al., 2021).

In eye-tracking measurements, the user’s AOI is generally used to link their eye behavior metrics to different stimuli (Hessels et al., 2016). Gazing pattern, fixation time, and visual attention are direct interpretations of eye movements between AOIs, or are used to find and define AOIs. We enhanced the functionality of our software, named EyePort (Samanta, 2024), to analyze data captured by Tobii Pro Glasses 3 hardware.

We conducted a series of exploratory experiments aimed at comprehensively understanding the performance and limitations of our system (both hardware and software), while refining EyePort for both static and dynamic ship navigation scenarios in desktop and full mission simulators. Additionally, we investigated its applicability to engine maintenance and inspection, spanning the simulated and workshop environments. The latest EyePort version after these optimizations (V.3.0.0) incorporates recording and analysis of data collected by Tobii Pro Glasses 3. This version post-processes the movements and accelerations recorded by the glasses’ internal sensors together with the eye movement data on the videos captured by the scene camera, and uses an AI object identification system to categorize AOIs based on user-defined parameters. The EyePort features that we developed, optimized and utilized in our study are briefly described below.

Head Orientation Times

EyePort uses the glasses’ internal gyroscope data to calculate the relative vertical movement of the user’s head, reported as “head up,” “head level,” or “head down.” The system reports the intervals of head movements between two instances of zero acceleration. The sensitivity of head orientation detection can be adjusted based on the orientation speed.
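The general idea of splitting a gyroscope trace into discrete head movements can be sketched in Python as follows. This is not EyePort's implementation: it assumes a uniformly sampled pitch-rate signal in degrees per second, treats samples below a speed threshold as "at rest" (a stand-in for the zero-acceleration boundaries described above), and assumes that positive rates mean upward rotation. The function name, the data class and the default threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class HeadMovement:
    start_s: float    # time at which the movement begins
    end_s: float      # time at which the head comes back to rest
    delta_deg: float  # integrated pitch change (positive = upward, by assumption)


def segment_head_movements(pitch_rate_dps: List[float],
                           sample_rate_hz: float,
                           min_rate_dps: float = 5.0) -> List[HeadMovement]:
    """Split a pitch-rate trace into movements bounded by near-rest samples."""
    dt = 1.0 / sample_rate_hz
    movements: List[HeadMovement] = []
    start: Optional[int] = None
    delta = 0.0
    for i, rate in enumerate(pitch_rate_dps):
        if abs(rate) >= min_rate_dps:
            if start is None:       # a new movement begins at this sample
                start = i
                delta = 0.0
            delta += rate * dt      # rectangle-rule integration of angular rate
        elif start is not None:     # head is at rest again: close the movement
            movements.append(HeadMovement(start * dt, i * dt, delta))
            start = None
    if start is not None:           # trace ended while the head was still moving
        movements.append(HeadMovement(start * dt, len(pitch_rate_dps) * dt, delta))
    return movements
```

Raising or lowering `min_rate_dps` plays the role of the adjustable sensitivity: a higher value ignores slow drifts, while a lower value registers smaller movements at the cost of more noise.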

Areas of Interest

EyePort reports the area around the gazing point on the closest scene video frame at the end of the fixation time as an AOI. Operators can adjust the size of the AOI by setting the side length of the square enclosing the gazing point, from 50 to 1,000 pixels. The fixation time can also be adjusted between 0.25 and 5 seconds. Given the scene video recording speed of Tobii Pro Glasses 3 (25 fps, i.e., one frame every 40 ms), a finer temporal resolution is not achievable. EyePort detects and reports unique AOIs, thus automating and assisting in data processing and interpretation.
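To make these parameters concrete, the following Python sketch computes a square AOI around a gaze point and the number of scene frames that a given fixation time spans at 25 fps. The side length range, fixation time range and frame rate come from the description above; the function names, the clipping of the box to the frame edges and the frame size used in the example are assumptions for illustration, not EyePort's actual behavior.

```python
import math
from typing import Tuple

SCENE_FPS = 25                        # scene camera frame rate stated in the text
MIN_SIDE_PX, MAX_SIDE_PX = 50, 1000   # adjustable AOI side length range
MIN_FIX_S, MAX_FIX_S = 0.25, 5.0      # adjustable fixation time range


def aoi_box(gaze_x: int, gaze_y: int, side_px: int,
            frame_w: int, frame_h: int) -> Tuple[int, int, int, int]:
    """Return (left, top, right, bottom) of a square AOI centred on the gaze point.

    The box is clipped to the frame edges (an assumption of this sketch).
    """
    if not MIN_SIDE_PX <= side_px <= MAX_SIDE_PX:
        raise ValueError("side_px outside the supported range")
    half = side_px // 2
    left = max(0, gaze_x - half)
    top = max(0, gaze_y - half)
    right = min(frame_w, gaze_x + half)
    bottom = min(frame_h, gaze_y + half)
    return left, top, right, bottom


def frames_for_fixation(fixation_s: float) -> int:
    """Number of whole scene frames needed to cover a fixation time."""
    if not MIN_FIX_S <= fixation_s <= MAX_FIX_S:
        raise ValueError("fixation_s outside the supported range")
    # Rounding first guards against floating-point noise before taking the ceiling.
    return math.ceil(round(fixation_s * SCENE_FPS, 6))


# Example with an assumed 1920 x 1080 scene frame
box = aoi_box(960, 540, 200, 1920, 1080)   # (860, 440, 1060, 640)
n_frames = frames_for_fixation(0.25)       # 7 frames, since 0.25 s * 25 fps = 6.25
```

The frame count illustrates the temporal granularity: the shortest configurable fixation spans only a handful of frames, so gaze events much shorter than this cannot be resolved reliably from the scene video.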

Object Detection

Unique AOIs are analyzed by a pre-trained algorithm that matches visual patterns. EyePort subsequently labels AOIs based on these detections. Furthermore, EyePort now incorporates three surveillance tools, (i) RADAR Violation, (ii) No Go Zones, and (iii) Dead Man Switch, that rely on the object detection system (ODS) outputs.

Preliminary Outcomes

Static Desktop Navigation Scenarios

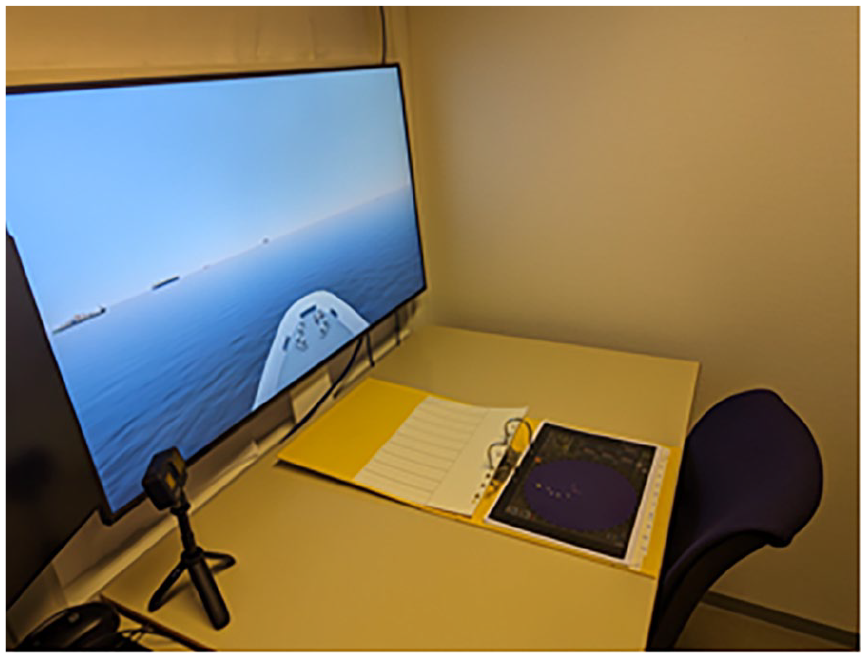

In our initial trials we implemented the scenarios outlined by Houweling et al. (2024), in which participants were provided with a static picture of the bow view of a ship bridge displaying the marine traffic ahead on a monitor in a head-up and level position. Additionally, a picture of the ship’s RADAR was presented on a desk in a head-down position. These navigation scenarios and follow-up questions were designed to gauge the impact of AR on SA levels and cognitive workload (see Figure 2).

Figure 2. Desktop bridge view experimental setup.

Houweling et al. (2024) analyzed video recordings of participants’ physical actions post hoc to ascertain their head orientation over time. EyePort’s head orientation feature facilitates automated data recording, potentially enhancing the consistency and accuracy of the data collection within this experimental setup. Throughout our trials, we asked participants to complete the same task as in Houweling et al. (2024) in a stationary position while wearing the eye-tracking device. Our assessments revealed the efficacy of our algorithm within a head orientation detection range of 5–80°/s. However, excessively rapid or very slow movements, coupled with minimal fixations between successive movements, introduced inconsistencies in the performance of our orientation detection system.

Our analysis of AOI detection demonstrated adequate consistency in detecting AOIs when defined fixation time criteria were met; however, the object detection algorithm required different adjustments for analysis box size and detection sensitivity to effectively identify ships within the scene, augmented features within the view, and RADAR. This limitation may be mitigated by pre-training the detection algorithm with more details of diverse objects in the experiment environment.

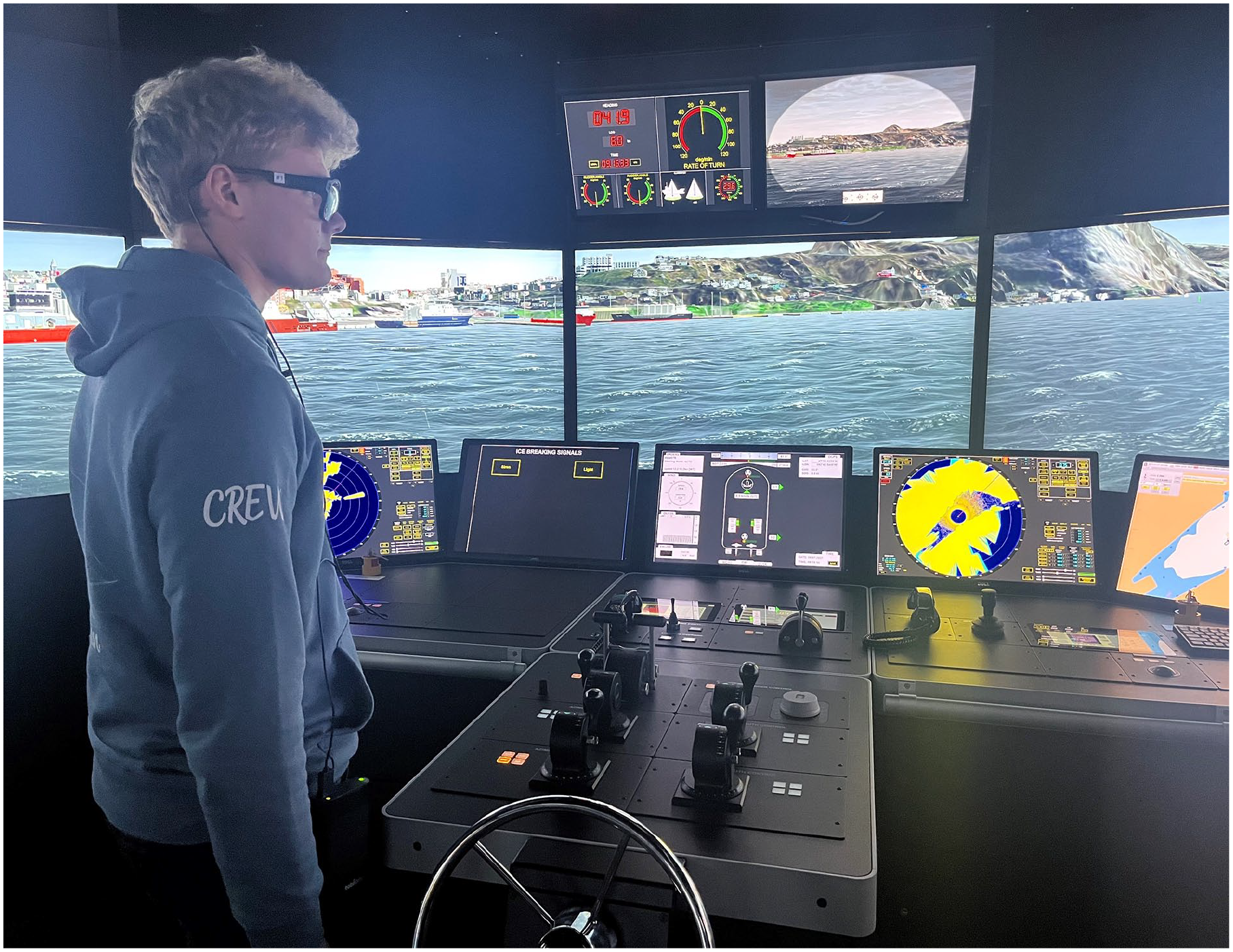

Static and Dynamic Full Mission Simulator Scenarios

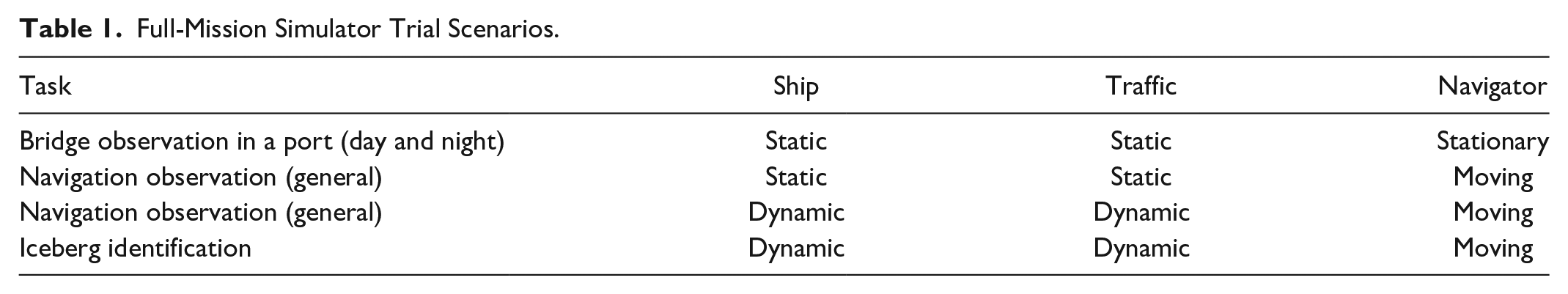

Scaling up the experimental scenarios, we then tested our eye-tracking system in a full mission ship’s bridge simulator, providing a more realistic “real-world” experience (see Figure 3). Participants were asked to make various navigation-related observations, build SA, and execute operations while wearing the eye-tracking device in different situations, as described in Table 1.

Figure 3. Full mission bridge simulator with participant wearing eye-tracker.

Table 1. Full-Mission Simulator Trial Scenarios.

As anticipated, head orientation detection yielded noisier and less precise results, primarily due to the inevitably complex combinations of head and body movements required to complete tasks in a full mission bridge simulator, as opposed to a seated and stationary position in front of a desktop simulator. Initial head orientation calibration was found to be more important in these trials. The head orientation detection algorithm was initially designed for desktop scenarios and proved inadequate in capturing instances in which the navigator transitions between multiple head-down or multiple head-up orientations. For example, the initial upward head movement of a participant from a head-down orientation was reported as head level, and the next upward movement was reported as head up, regardless of head angle. The same sequence applied to downward movements. Thus, the user’s starting point and equipment orientation, as well as the locations of AOIs, should be carefully noted in order to accurately interpret the head orientation results. Fortunately, this issue can be resolved by reviewing video recordings and time stamp logs.
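The limitation described above can be illustrated with a small Python sketch of a purely relative labelling scheme, in which each detected movement shifts the reported state by one step regardless of the angle actually travelled. This is a simplified model written for illustration, not the EyePort code; the function name and the clamping at the outermost states are assumptions.

```python
from typing import List

STATES = ["head down", "head level", "head up"]


def label_relative(start_state: str, directions: List[str]) -> List[str]:
    """Label each movement by stepping one state up or down from the previous one.

    The head angle itself is never consulted, which is the source of the
    misclassification: two small upward nods from a head-down start are
    reported as "head level" and then "head up".
    """
    idx = STATES.index(start_state)
    labels = []
    for direction in directions:
        if direction == "up":
            step = 1
        elif direction == "down":
            step = -1
        else:
            raise ValueError("direction must be 'up' or 'down'")
        # Clamp at the outermost states (assumed behavior for this sketch).
        idx = max(0, min(len(STATES) - 1, idx + step))
        labels.append(STATES[idx])
    return labels


# Two upward movements from head down, matching the example in the text
labels = label_relative("head down", ["up", "up"])   # ["head level", "head up"]
```

Because every label depends on the assumed starting state, a wrong or unrecorded starting orientation shifts all subsequent labels, which is why the calibration and starting point matter so much in the full mission setting.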

AOI and object detection performance mirrored that observed in the desktop trials; however, the speed of the eye-tracker’s scene camera limits system performance in situations where a navigator switches swiftly between different AOIs. During our trials in the full mission simulator, we observed instances where navigators skimmed an area rather than looking briefly at different spots (AOIs) to comprehend their situation within a dynamic and changing surrounding environment. The ODS was not trained for ships’ navigation lights, so its performance was limited by the available contrast and lights on the ships. Despite being trained with a limited number of images (e.g., several hundred), the ODS demonstrated proficient performance in detecting nearby icebergs, likely due to their distinct visual characteristics in comparison with the environment.

Engine Maintenance and Inspection Scenarios

Seafarers may also benefit from the integration of AR into machinery maintenance and inspection tasks. To explore the performance of our eye-tracking analysis system in this domain, we conducted tests on the AOI and object detection capabilities of our eye-tracking system on a routine pre-start inspection of a Kohler KD-350 diesel engine located in a machinery lab used for teaching (see Figure 4).

Figure 4. Testing eye-tracker in small engine machinery laboratory.

Throughout our trials on the physical engine, the AOI detection system performed adequately; however, the unique AOI and object detection features had difficulty distinguishing between some visually similar parts of the engine, exacerbated by reflections from surfaces, lighting conditions, and shadows across different parts of the engine. Furthermore, the presence of participants’ hands in the scene during actual experiments was found to increase variability in AOIs and reduce object detection efficiency by obstructing parts of the engine that the image processing may rely on.

Conclusions and Future Work

Based on these pilot studies we have advanced our previous empirical work by: (1) scaling to full mission work environments, (2) implementing eye-tracking and automated object detection, and (3) increasing the number and type of maritime work tasks. This work, and the lessons learned, help set up our project for the next phase of full-scale empirical studies measuring the effects of novel AR developments in maritime operations, including their impact on differing human performance measures, such as cognitive workload, SA, task completion efficiency and error rates. Outputs will directly contribute to our ongoing maritime AR research and development, where the main goal and deliverable is to develop the first human-centred design guidance for maritime AR applications. Furthermore, this work contributes to more generalizable AR applications related to human performance and testing in safety-critical industries, including design, operations, education and training.

Acknowledgements

The authors would like to thank Redmer Halbesma and Stijn Postuma of Maritime Institute Willem Barentsz for their assistance in full-mission bridge testing during their exchange at the Marine Institute.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was partially funded by the Research Council of Norway (project 320247).