Abstract

Virtual reality (VR) technologies have garnered substantial attention and adoption across various fields, including workspaces and education. People with disabilities, who already face lower employment rates and fewer full-time opportunities, may be further disadvantaged as VR becomes more common in the workplace. To address this, recent work has focused on making VR inclusive, particularly for individuals with visual impairments. The rapid development of artificial intelligence (AI) has also made it possible to integrate AI into VR systems to enhance accessibility for these individuals. Despite growing interest, there is a gap in comprehensive literature focusing on AI’s role in enabling blind and low vision individuals to experience VR. This review aims to fill that gap by providing an overview of current research, discussing benefits and challenges, and identifying future opportunities. By synthesizing existing research, this study contributes insights for researchers, developers, and practitioners working in the field of accessibility and assistive technology.

Introduction

Virtual reality (VR) technology allows users to immerse themselves in diverse virtual experiences, with applications ranging from entertainment to healthcare, education, and beyond. VR technologies have garnered substantial attention and adoption across various fields, including workspaces and education (Rupp et al., 2023). People with disabilities, who already face lower employment rates and fewer full-time opportunities, may be further disadvantaged given the predicted expansion of VR into more than 23 million jobs by 2030 (Rupp et al., 2023). This calls for inclusive technology solutions that cater to individuals with disabilities, especially individuals with visual impairments, including blind and low vision (BLV) individuals.

Globally, in 2020, 1.1 billion people were living with vision loss. Among them, 43 million were blind, 295 million had moderate to severe visual impairments, 258 million had mild visual impairments, and 510 million had near vision problems (Bourne et al., 2021). With a population of this size, developing technologies and solutions that improve accessibility and enhance the experiences of individuals with blindness or visual impairments is a matter of considerable importance.

Blind and low vision individuals face significant challenges in accessing VR experiences. VR technology, which relies heavily on visual components, is often inaccessible to those with visual impairments. For completely blind individuals, VR’s visual nature presents barriers. Those with low vision struggle with small text, poor contrast, and complex visuals. Head-mounted displays further complicate accessibility, restricting peripheral vision and posing difficulties for those with central visual field loss. Additionally, wearing corrective lenses, which is common for individuals with myopia, can interfere with using these VR headsets.

Over the past decade, there have been significant advancements in technology that helps individuals with visual impairments experience virtual reality (Collins et al., 2023; Maidenbaum & Amedi, 2015; Zhao et al., 2018). In recent years, artificial intelligence (AI) technology has also advanced rapidly. The intersection of AI and VR enables a wide range of applications, such as training (particularly medical and military), gaming, robotics and autonomous cars, and advanced visualization (Hirzle et al., 2023; Reiners et al., 2021). The development of the VR experience itself can also be enhanced by using AI (Suzuki et al., 2023). As a result, it has become possible to integrate AI technologies into virtual reality systems to enhance accessibility for individuals with visual impairments. These AI technologies can provide innovative solutions and intuitive interactions that enable blind and low vision individuals to experience virtual, augmented, and mixed reality (Fichten et al., 2023).

Given the recent popularization of AI and VR, numerous studies have been conducted to combine the two. Various literature reviews have also been published to provide an overview of the current state of research at the intersection of AI and VR (Brzezinski & Krzeminska, 2023; Hirzle et al., 2023; Reiners et al., 2021). These literature reviews have identified several emerging trends, challenges, and application domains for AI and VR. Despite the growing research on applying AI to assist blind and visually impaired individuals in experiencing VR, there is still a lack of a focused literature review on the use of AI to enable these individuals to experience virtual reality. This review aims to fill this gap by analyzing and summarizing the existing research on AI-enabled VR experiences for blind and low vision individuals. The review aims to address the following research question: “What existing AI technologies and methods are being used to make VR accessible to blind and low vision individuals?”

By conducting a thorough literature review, this paper will contribute to the existing body of knowledge by synthesizing and summarizing the research on AI-enabled VR technologies for blind and low vision individuals, thereby providing valuable insights for researchers, developers, and practitioners working in the field of accessibility and assistive technology.

Method

This literature review was conducted following a methodology roughly aligned with the PRISMA 2020 (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) statement (Page et al., 2021). While the review process adhered to key principles outlined in the PRISMA 2020 statement, some adaptations were made to accommodate the scope and objectives of the review. The search was done in May 2024.

Search Strategy

The search strategy involved identifying relevant keywords and search terms related to using AI to enable blind and low vision individuals to experience virtual reality. Because the topic spans three overlapping domains (AI, VR, and BLV), the search terms included variations of keywords from each domain. The following keywords were used for each category:

AI-related keywords: AI, Artificial intelligence, ML, machine learning, CV, computer vision, image recognition, NLP, natural language processing, deep learning, neural networks, pattern recognition, generative.

VR-related keywords: VR, virtual reality, HMD, head-mounted display, head-up display, head-worn display, headset, immersive environment, virtual environment, virtual space.

BLV-related keywords: BVI, BLV, Blind, low vision, visual impairment, vision loss, visual accessibility, visual aids, assistive technology.

Where a database offered an Advanced Search function, the search was performed with a Boolean query that combined the three keyword categories. An example of the syntax used is (“AI” OR “artificial intelligence”) AND (“VR” OR “virtual reality”) AND (“BVI” OR “BLV”).
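As an illustration of how such a query can be assembled from the keyword lists, the following minimal Python sketch joins the terms within each category with OR and joins the categories with AND. The function names and the shortened keyword lists are assumptions made for this example; they do not reproduce the exact queries submitted to each database, whose syntax may differ.

```python
# Shortened keyword groups taken from the lists above (illustrative subset only).
AI_TERMS = ["AI", "artificial intelligence", "machine learning", "computer vision"]
VR_TERMS = ["VR", "virtual reality", "head-mounted display"]
BLV_TERMS = ["BVI", "BLV", "blind", "low vision", "visual impairment"]


def or_group(terms):
    """Quote each term, join the terms with OR, and wrap the group in parentheses."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(*groups):
    """Combine one OR-group per domain with AND (hypothetical helper)."""
    return " AND ".join(or_group(group) for group in groups)


query = build_query(AI_TERMS, VR_TERMS, BLV_TERMS)
print(query)
```

Keeping one list per domain makes it straightforward to add or remove keyword variants without rewriting the whole query string.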

Selection Process

The review involved searching academic databases such as IEEE Xplore, ACM Digital Library, PubMed, and Google Scholar to identify relevant research articles, conference papers, and other sources. Titles and abstracts of the retrieved articles were screened by one reviewer to assess their eligibility for inclusion in the review. Full-text articles were then assessed against predefined inclusion and exclusion criteria to determine their suitability for further analysis.

The inclusion criteria covered research articles, conference papers, and technical reports published in peer-reviewed journals or reputable conference proceedings that satisfied all of the following:

Utilization of virtual reality devices as the primary medium.

Incorporation of artificial intelligence technologies.

Explicit design targeting the blind and/or low vision population.

Studies were excluded for any of the following reasons:

Non-English language publications.

Inaccessibility of the study materials.

Absence of utilization of virtual reality devices.

Lack of integration of AI technologies.

Failure to focus on blind and/or low vision individuals.

Citation chaining and snowballing techniques were also used to identify additional relevant sources.
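The eligibility check described by these criteria can be sketched as a simple function that returns the first exclusion reason a record meets. This is only an illustrative sketch: the `Record` fields and the `exclusion_reason` function are hypothetical names, the order of the checks is an assumption, and in the review itself these judgments were made manually by a reviewer.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    # Hypothetical fields that a reviewer would fill in while reading a report.
    title: str
    in_english: bool
    accessible: bool
    uses_vr_device: bool
    uses_ai: bool
    targets_blv: bool


def exclusion_reason(record: Record) -> Optional[str]:
    """Return the first exclusion criterion the record meets, or None if it is eligible."""
    checks = [
        (record.in_english, "non-English language publication"),
        (record.accessible, "study materials inaccessible"),
        (record.uses_vr_device, "no virtual reality device used"),
        (record.uses_ai, "no AI technology integrated"),
        (record.targets_blv, "not focused on blind or low vision individuals"),
    ]
    for criterion_met, reason in checks:
        if not criterion_met:
            return reason
    return None


# Made-up example record: it fails only the AI criterion.
example = Record("Example report", True, True, True, False, True)
print(exclusion_reason(example))
```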

Results

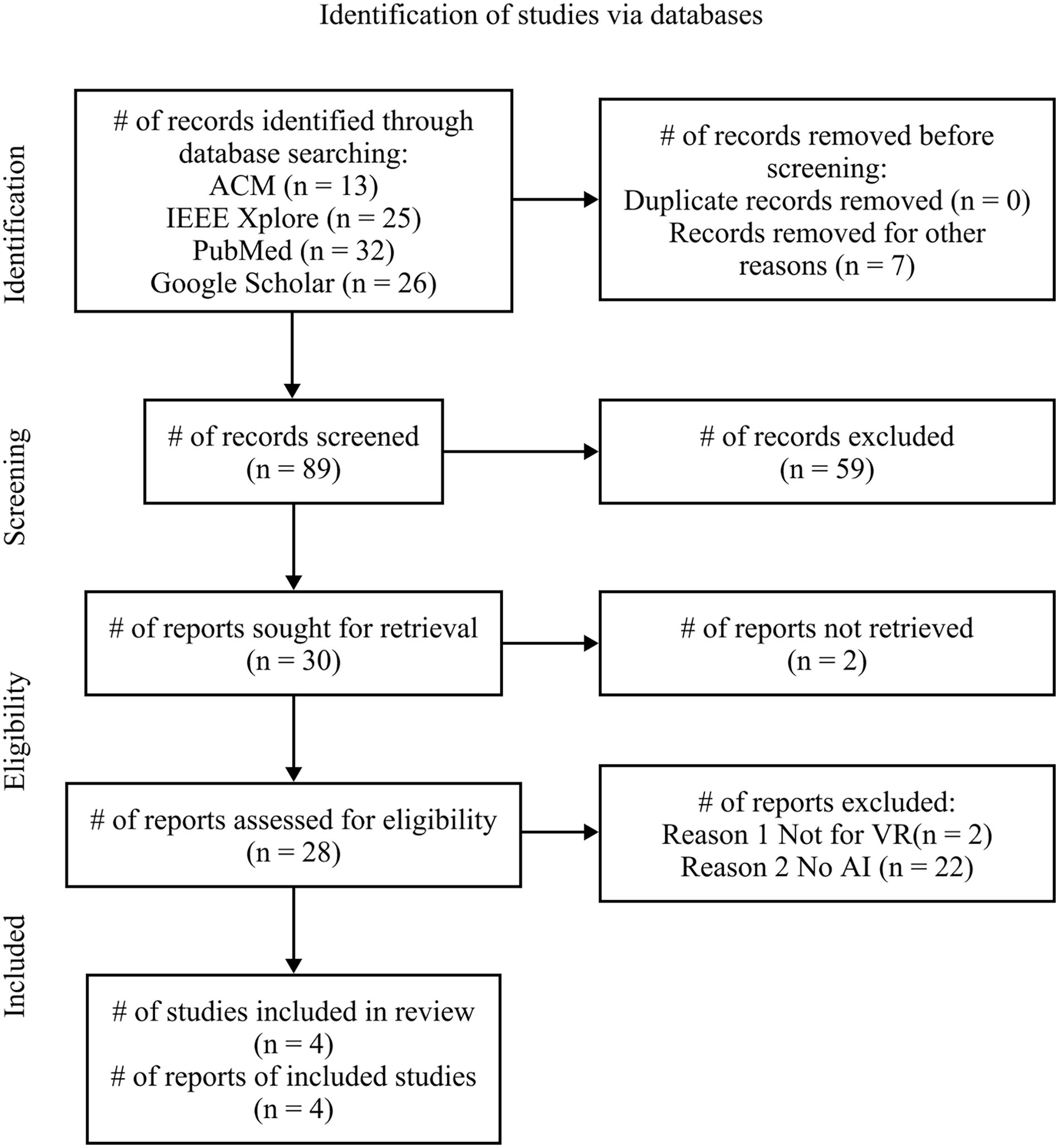

A total of 96 results were collected from the databases. Among them, seven irrelevant items were removed. During the first screening process, 59 of the remaining 89 records were excluded for being irrelevant. Of the remaining 30 reports, two were inaccessible, two were excluded for not being centered around virtual reality, and 22 were excluded for not using AI technology. A total of four studies were included in this review (see Figure 1). The applications, accessibility features, and AI technology used for enhancing VR accessibility for blind and low vision individuals were extracted from each article.

Figure 1. PRISMA flow diagram.
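The counts reported for each stage of the selection process can be checked with a few lines of arithmetic. The sketch below uses only the numbers stated in this section; the variable names are chosen for illustration.

```python
# Counts as reported in the Results section.
identified = 96                 # results collected from the databases
removed_before_screening = 7    # irrelevant items removed
excluded_at_screening = 59      # excluded during the first screening
full_text_exclusions = {
    "inaccessible": 2,
    "not centered on virtual reality": 2,
    "no AI technology": 22,
}

screened = identified - removed_before_screening           # records screened
assessed = screened - excluded_at_screening                 # reports assessed in full
included = assessed - sum(full_text_exclusions.values())    # studies included

print(screened, assessed, included)  # 89 30 4
```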

Audio Scene Description

Of the four included studies, two (Polys & Wasi, 2023; Zhao et al., 2019) focus on audio scene description, which draws on several kinds of AI algorithms, including computer vision, natural language processing, and machine learning techniques. Polys and Wasi (2023) developed audio captioning algorithms for enhancing the accessibility of 3D content on the web. They also conducted a user study with 44 participants to evaluate the effectiveness of the captioning algorithms for search and summarization tasks. Their findings suggested that the algorithms performed differently on different tasks and highlighted the significance of algorithm choice in enhancing accessibility for individuals with visual impairments in virtual environments.

SeeingVR, developed by Zhao et al. (2019), is a set of 14 low vision tools designed to enhance the accessibility of virtual reality experiences for individuals with vision impairments. Three of the 14 tools deploy AI algorithms to achieve the desired functionality: edge enhancement for object detection, text-to-speech for reading textual content, and audio scene description for scene recognition. An evaluation involving 11 participants with low vision demonstrated that SeeingVR improved task completion rates and overall enjoyment in VR environments.

Depth Estimation and Semantic Edge Detection

Similar to the edge enhancement tool by Zhao et al. (2019), one study enhances obstacle avoidance and object selection by combining depth estimation and semantic edge detection (Rasla & Beyeler, 2022). This study explored the relative importance of depth cues and semantic edges for indoor mobility using simulated prosthetic vision in immersive virtual reality. The researchers tested different scene simplification modes, including DepthOnly, EdgesOnly, EdgesAndDepth, and EdgesOrDepth. Tasks involved obstacle avoidance and object selection in virtual environments to assess the effectiveness of these modes. In obstacle avoidance, participants were most successful with the EdgesAndDepth mode, achieving an average success rate of 89.8%. However, participants were significantly faster using DepthOnly (17 s) than using EdgesOrDepth (22 s) or EdgesAndDepth (25 s). In object selection, participants performed best with the DepthOnly and EdgesOrDepth modes. The findings indicated the significance of depth-based cues for obstacle avoidance and highlighted user preferences for certain scene simplification modes, emphasizing the importance of flexibility in how visual information is presented.

Braille Block Recognition

The last study focuses on Braille block recognition using a deep learning algorithm known as a convolutional neural network (Kim, 2020). The study introduces a VR white cane equipped with a Braille block recognition system that provides feedback through vibration and sound to help users navigate virtual environments. By employing a decision-making model based on deep learning, the researchers developed an algorithm to identify Braille blocks from image inputs. The researchers conducted user surveys to assess satisfaction and presence in the immersive VR environment, along with performance analysis experiments to evaluate recognition under various Braille block conditions. The results indicated high user satisfaction and effective Braille block recognition. However, because the study did not include participants with prior experience in using a walking cane, many participants reported difficulty navigating the Braille blocks. Notably, all other included studies tested, at least in part, with visually impaired individuals.

Synthesis

The existing AI technologies and methods used to assist blind and low vision individuals in virtual reality include Braille block recognition with convolutional neural networks; obstacle avoidance and object selection through depth estimation and semantic edge detection; audio scene description using a combination of AI algorithms; and text-to-speech, which uses advanced natural language processing techniques to convert written content into audible speech.

Despite the limited number of articles reviewed, clear patterns and insights have emerged. Among these techniques, edge enhancement and audio scene description are the most widely used. The audio scene description feature, like many others, mimics its real-world AI counterparts to provide familiar and intuitive feedback for users. In contrast, edge enhancement techniques might be more easily achievable in virtual environments than in the real world because of the controlled nature of VR settings. Techniques such as Braille block recognition and audio scene description can assist both blind and low vision individuals in navigating virtual reality by providing critical sensory feedback. On the other hand, visual enhancement techniques like depth estimation and semantic edge detection are particularly beneficial for those with partial vision, helping them interpret and interact with their surroundings more effectively.

Discussion

While the existing literature suggests that there is currently a lack of research specifically addressing the use of AI in enabling blind and low vision individuals to access virtual reality experiences, there is a solid foundation of research on using AI to enhance accessibility in other contexts. Studies have investigated using AI to enhance other aspects of real-world experience for blind and low vision individuals, such as video accessibility (Bodi et al., 2021), object recognition (Gurari et al., 2018), navigation assistance (Kumar & Jain, 2022), and face recognition (Ibrahim & Saleh, 2009). These studies demonstrate the potential of AI to improve accessibility for blind and low vision individuals in various domains, including virtual reality. Beyond that, AI technology like VizWiz (Gurari et al., 2018) has the potential to reduce the human labor that is typically required in modern assistive technology. Some of the excluded studies also conceptualized or mentioned the possibility of incorporating AI technology into current solutions (Aan et al., 2024; Collins et al., 2023).

However, future researchers should keep in mind that although AI offers great utility in many domains, it remains limited in many others (Smith & Smith, 2021). The limitations of AI, particularly regarding disability, include technical glitches, a lack of adaptability to diverse user requirements, and a lack of inclusivity. AI technologies sometimes make mistakes in function or interpretation, leading to frustrating experiences for users with disabilities. There are also ethical dilemmas associated with AI, including issues of privacy, dependence, and equality. AI systems could infringe on personal privacy if they are used in personal care or to gather sensitive data. Overdependence on AI could weaken human autonomy and personal interactions. Moreover, the delegation of decision-making to AI systems raises significant ethical questions. An AI system’s ability to suggest choices or influence decisions could steer the user’s actions in a direction that may not be ethically correct.

Another important challenge is the failure to involve disabled people in the design process of AI technologies meant for them. According to Smith and Smith (2021), the principle of fairness in AI necessitates the contribution of disabled people from the beginning stages of design, a practice that is often not followed. To ensure inclusive AI technologies, it is crucial to involve disabled individuals in the design process to understand their unique needs and perspectives. Blind and low vision individuals offer valuable firsthand insights and perspectives during the design and testing phases of AI technologies, ensuring that these technologies are tailored to their specific requirements.

Conclusion

Enhancing accessibility to VR for blind and low vision individuals is important for their inclusion and equal participation in the virtual reality experience. The growing literature investigating such topics shows a promising future. The integration of AI technology with VR has the potential to significantly improve the accessibility and usability of VR for blind and low vision individuals. More innovative methods and inclusive design practices are needed to ensure that AI technologies for VR are successfully developed, implemented, and utilized by blind and low vision individuals.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.