Abstract

When working in teams, individuals’ trust can be influenced by their teammates, consciously or unconsciously, through social interactions, a phenomenon defined in this paper as “trust contagion.”

Keywords

Introduction

Intelligent agents are increasingly incorporated into human teams to cooperate in performing complex tasks (Chiou & Lee, 2021). Intelligent agents and robots have evolved from tools into autonomous team members, forming what are referred to as human-autonomy teams (HATs) (O’Neill et al., 2022). Trust has been identified as the central factor for effective cooperation in HATs (Guo et al., 2023). Trust is defined as “the attitude that an agent will help achieve an individual’s goals in a situation characterized by uncertainty and vulnerability” (Lee & See, 2004, p. 51). Existing research has focused heavily on trust in one-to-one human-AI interaction. However, real-world scenarios, such as space missions or operating rooms, often involve multiple humans working alongside AI teammates. In such hybrid teams, individuals have diverse preferences and experiences, resulting in varying levels of trust in the AI teammate. This variance can consciously or subconsciously influence the perceptions and behaviors of others, a phenomenon we refer to as “trust contagion.” In this paper, we investigate the effects of trust contagion in human-human-AI teams and how trust toward an autonomous agent can be influenced through interpersonal dynamics with a second human teammate.

Trust Contagion

Understanding how trust toward an autonomous agent spreads between human operators is crucial for enhancing cooperation in human-robot teams. For instance, end-users who initially distrust a robot might come to trust it more after interacting with a trainer who has a positive relationship with the robot. This implies that trust in the robot is shaped not only by direct experience but also by indirect influence from other people. Guo et al. (2023) demonstrated this by modeling both direct and indirect trust in a distributed team with multiple human and robotic agents. While this modeling approach supports scaling up to multi-agent teams, previous research highlights that trust is not fully transitive in the mathematical sense (Al-Ani et al., 2014; Feese et al., 2012). Viewing trust as a single-score metric may therefore overlook the social interactions that occur during human-robot communication. In this paper, trust contagion, rooted in emotional contagion theory (Barsade, 2002), is defined and explored to understand interpersonal influences in a co-located human-human-AI team scenario where verbal and nonverbal behaviors can be observed. We hypothesized:

1a. Participants interacting with a high-trusting (low-trusting) confederate will report higher (lower) trust in the AI teammate than participants in the neutral-trusting confederate condition.

1b. Participants interacting with a high-trusting (low-trusting) confederate will achieve higher (lower) total game scores than participants in the neutral-trusting confederate condition.

Prior studies have found that negative emotions tend to elicit stronger and quicker emotional, behavioral, and cognitive responses (Barsade, 2002). Because trust is largely an affective process, distrust is considered a negative attitude that may show this stronger effect of negative emotions (Lee & See, 2004). In other words, a teammate who conveys distrust toward the AI teammate may prompt a stronger emotional response in the other individual, potentially making distrust more contagious than trust. Thus, we hypothesized:

2. Distrust toward the AI teammate will be more contagious than trust.

Method

Each team of three consisted of one participant, one confederate, and one AI teammate, and performed a ten-round trust-based game that required resource allocation and joint decision-making. We used a 2 (AI reliability: high vs. low; within-subjects) × 3 (confederate trust: high, low, neutral; between-subjects) mixed design. The AI teammate performed the task with 100% accuracy in the high-reliability condition and 60% accuracy in the low-reliability condition. All participants experienced the high-reliability condition before the low-reliability condition so that trust could be established at the beginning of the experiment. To manipulate trust contagion, an experimenter was trained to act as the confederate and enact three levels of trusting behavior. In the neutral condition, the confederate commented only on the fact-based game status; in the high-trusting condition, the confederate expressed positive attitudes toward the AI teammate; in the low-trusting condition, the confederate made skeptical comments about the AI teammate.
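To make the structure of the 2 × 3 mixed design concrete, the sketch below enumerates its six cells and the fixed high-then-low reliability schedule each participant experiences. Only the accuracy values (100% and 60%), the factor levels, and the fixed order come from the text; the `Cell` type and function names are hypothetical, and the sketch does not model how the 60% accuracy was scheduled across rounds.

```python
from dataclasses import dataclass
from itertools import product

# Accuracy values stated in the text; names below are illustrative only.
RELIABILITY_ACCURACY = {"high": 1.00, "low": 0.60}
RELIABILITY_ORDER = ("high", "low")  # within-subjects, same order for everyone
CONFEDERATE_CONDITIONS = ("high", "low", "neutral")  # between-subjects factor


@dataclass(frozen=True)
class Cell:
    """One cell of the hypothetical 2 x 3 design table."""
    confederate: str
    reliability: str
    accuracy: float


def design_cells() -> list[Cell]:
    """Enumerate all 3 x 2 = 6 confederate-by-reliability cells."""
    return [
        Cell(conf, rel, RELIABILITY_ACCURACY[rel])
        for conf, rel in product(CONFEDERATE_CONDITIONS, RELIABILITY_ORDER)
    ]


def participant_schedule(confederate: str) -> list[Cell]:
    """Cells a single participant experiences: one confederate condition,
    both reliability levels, always high before low."""
    if confederate not in CONFEDERATE_CONDITIONS:
        raise ValueError(f"unknown confederate condition: {confederate!r}")
    return [
        Cell(confederate, rel, RELIABILITY_ACCURACY[rel])
        for rel in RELIABILITY_ORDER
    ]


if __name__ == "__main__":
    for cell in participant_schedule("low"):
        print(cell)
```

The point of the split is that each participant contributes data to only one confederate condition (a between-subjects comparison) but to both reliability levels (a within-subjects comparison), which is why the reliability order had to be fixed rather than counterbalanced in this study.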

Dependent Variables

To capture trust contagion, we collected and analyzed subjective and behavioral data. For subjective measures, participants’ trust was assessed after rounds one, five, and ten, covering trust in the AI teammate, trust in the human teammate, and perceived confederate trust in the AI teammate (as a manipulation check). Trust in both the human and AI teammates was measured with an adapted 8-point Multi-Dimensional Measure of Trust (MDMT) scale to capture both capacity- and moral-based trust (Malle & Ullman, 2019). Each item was rated on an 8-point scale from 0 (Not at all) to 7 (Very), with an additional “Does not Fit” option so that participants were not forced to respond. For the manipulation check on perceived confederate trust in the AI teammate, we included an additional 1-item 7-point Likert scale (“
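Because the MDMT allows a “Does not Fit” response, any composite score has to decide how to handle it. The sketch below assumes one common approach, treating “Does not Fit” as missing and averaging the remaining 0 to 7 ratings per dimension; the text does not state how the authors scored these responses, and the function names and item-to-dimension grouping are hypothetical.

```python
from statistics import fmean

DOES_NOT_FIT = "Does not Fit"


def mdmt_score(responses):
    """Mean of valid 0-7 ratings, treating "Does not Fit" as missing.

    ASSUMPTION: missing responses are dropped, not imputed.
    Returns None when every item was marked "Does not Fit"."""
    valid = []
    for r in responses:
        if r == DOES_NOT_FIT:
            continue  # participant opted out of this item
        # Reject booleans and anything outside the 0-7 rating range.
        if isinstance(r, bool) or not isinstance(r, int) or not 0 <= r <= 7:
            raise ValueError(f"rating must be an integer 0-7 or {DOES_NOT_FIT!r}: {r!r}")
        valid.append(r)
    return fmean(valid) if valid else None


def subscale_scores(responses_by_dimension):
    """Score each dimension (e.g. 'capacity', 'moral') separately."""
    return {dim: mdmt_score(items) for dim, items in responses_by_dimension.items()}
```

For example, `mdmt_score([7, 5, "Does not Fit", 6])` returns `6.0`. Scoring capacity and moral items separately keeps the two trust dimensions distinguishable, which matters if contagion affects one dimension more than the other.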

Participants

A power analysis with α = .05 and power of 0.80 was conducted to obtain a sample size of

Procedures

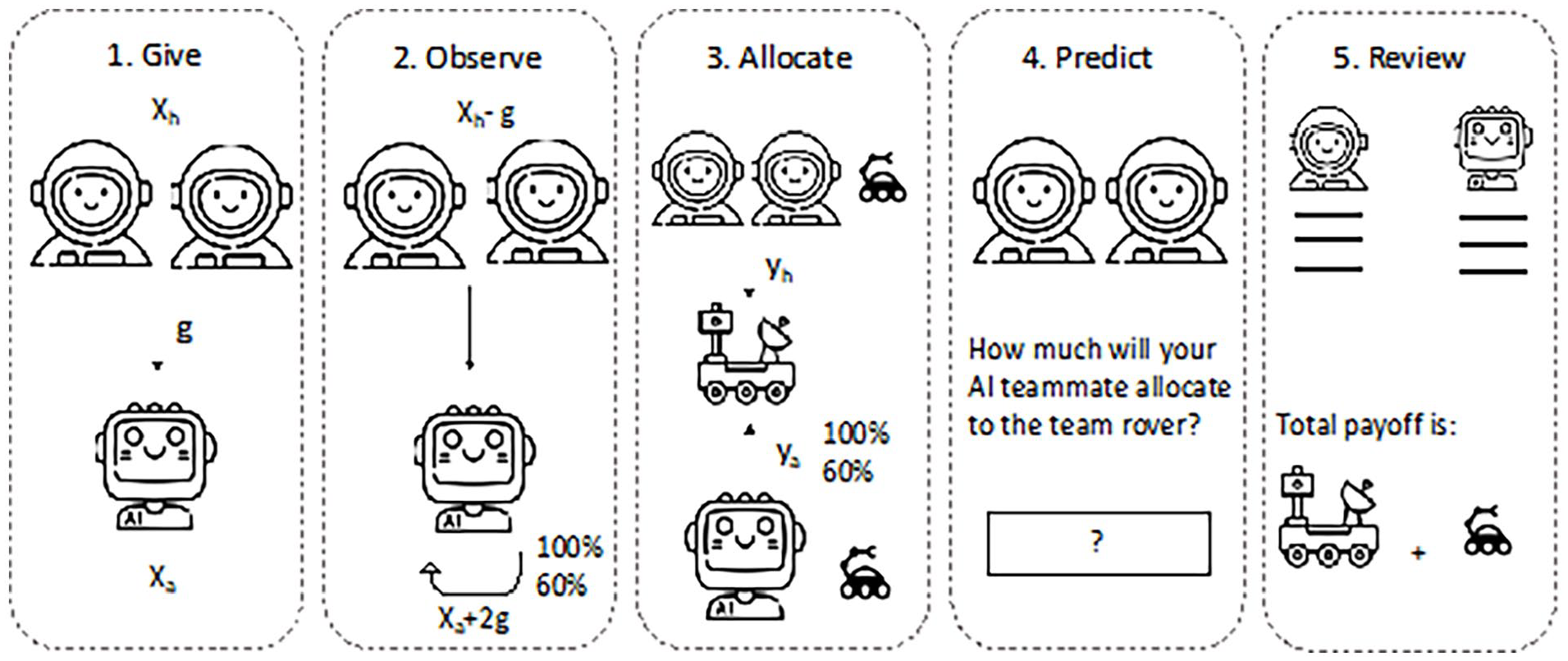

After signing the consent form, each participant was teamed with a human confederate and an AI teammate to play a trust-based space exploration game (see Figure 1 for details). The game consisted of ten rounds. In each round, the participant and confederate first jointly decided how many of the ten points given to them that round to allocate to the AI teammate, who could double the points received with a certain probability. Then, over several rounds, the participant and confederate decided whether to contribute enough to meet the threshold of the joint group rover (cooperate), which ensured that the group benefit was achieved and shared within the team, or to contribute insufficiently (defect) and rely on the other player to make the contributions needed to reach the goal. Throughout the game, the participant and confederate freely discussed their strategies and their perceptions of the AI teammate’s performance. At the beginning and end of each round, the confederate made consistent, rehearsed utterances following a script that included key words for the assigned trust level. Conversations were recorded using two microphones and a skeleton-based camera.

At the end of the first, fifth, and tenth rounds, the participant rated the AI teammate and the human partner on the MDMT scale, without the confederate seeing the ratings, to assess the participant’s trust in the confederate and in the AI teammate. After the game, participants completed a demographic questionnaire, a propensity to trust scale, and an individualism-collectivism scale. The confederate then left the room, and a semi-structured interview was conducted asking how participants felt about the game and their AI and human teammates, and whether their decisions had been influenced by the human teammate. Finally, participants were debriefed on the purpose of the study, informed about the confederate, and compensated.

Procedure for the space exploration game.
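The two decision steps of each round can be sketched as simple payoff functions. This is an illustrative model only, assuming that the ten points form one joint pool, that unallocated points are kept, that a failed AI attempt returns nothing, and that the rover threshold and group benefit are free parameters; none of these details, nor the function names, come from the text. The first step is a probabilistic investment in the AI teammate; the second is a threshold public-goods game, where defecting pays off only if the partner covers the shortfall.

```python
import random

POINTS_PER_ROUND = 10  # stated in the text


def allocation_payoff(points_to_ai: int, ai_succeeds: bool) -> int:
    """Points held after the allocation step of one round.

    ASSUMPTIONS: one joint pool of ten points, unallocated points are
    kept, and a failed AI attempt returns nothing."""
    if not 0 <= points_to_ai <= POINTS_PER_ROUND:
        raise ValueError("allocation must be between 0 and 10 points")
    kept = POINTS_PER_ROUND - points_to_ai
    returned = 2 * points_to_ai if ai_succeeds else 0
    return kept + returned


def simulate_allocation(points_to_ai: int, accuracy: float, rng: random.Random) -> int:
    """Draw the AI outcome with the given accuracy (e.g. 1.0 or 0.6)."""
    return allocation_payoff(points_to_ai, rng.random() < accuracy)


def rover_payoffs(contributions: dict, threshold: int, group_benefit: float):
    """Threshold public-goods step for the joint group rover.

    contributions maps each player to the total points contributed.
    HYPOTHETICAL: if the total reaches the threshold, the group benefit is
    split equally; contributions are a cost either way, which is what
    makes defecting (contributing less) tempting."""
    total = sum(contributions.values())
    reached = total >= threshold
    share = group_benefit / len(contributions) if reached else 0.0
    return reached, {player: share - c for player, c in contributions.items()}
```

For instance, with a hypothetical threshold of 8 and benefit of 20, `rover_payoffs({"participant": 6, "confederate": 2}, threshold=8, group_benefit=20)` returns `(True, {'participant': 4.0, 'confederate': 8.0})`: the goal is met, but the lower contributor earns more, which is the tension between cooperating and defecting that the game is built around.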

Data Analysis

Data were exported directly from the Firebase platform and analyzed in R via RStudio, using packages

Results

Manipulation Check

We first conducted a manipulation check on participants’ perceptions of the confederate’s trust in the AI teammate to verify that the manipulated trust levels were correctly recognized. The one-way ANOVA found a significant effect in

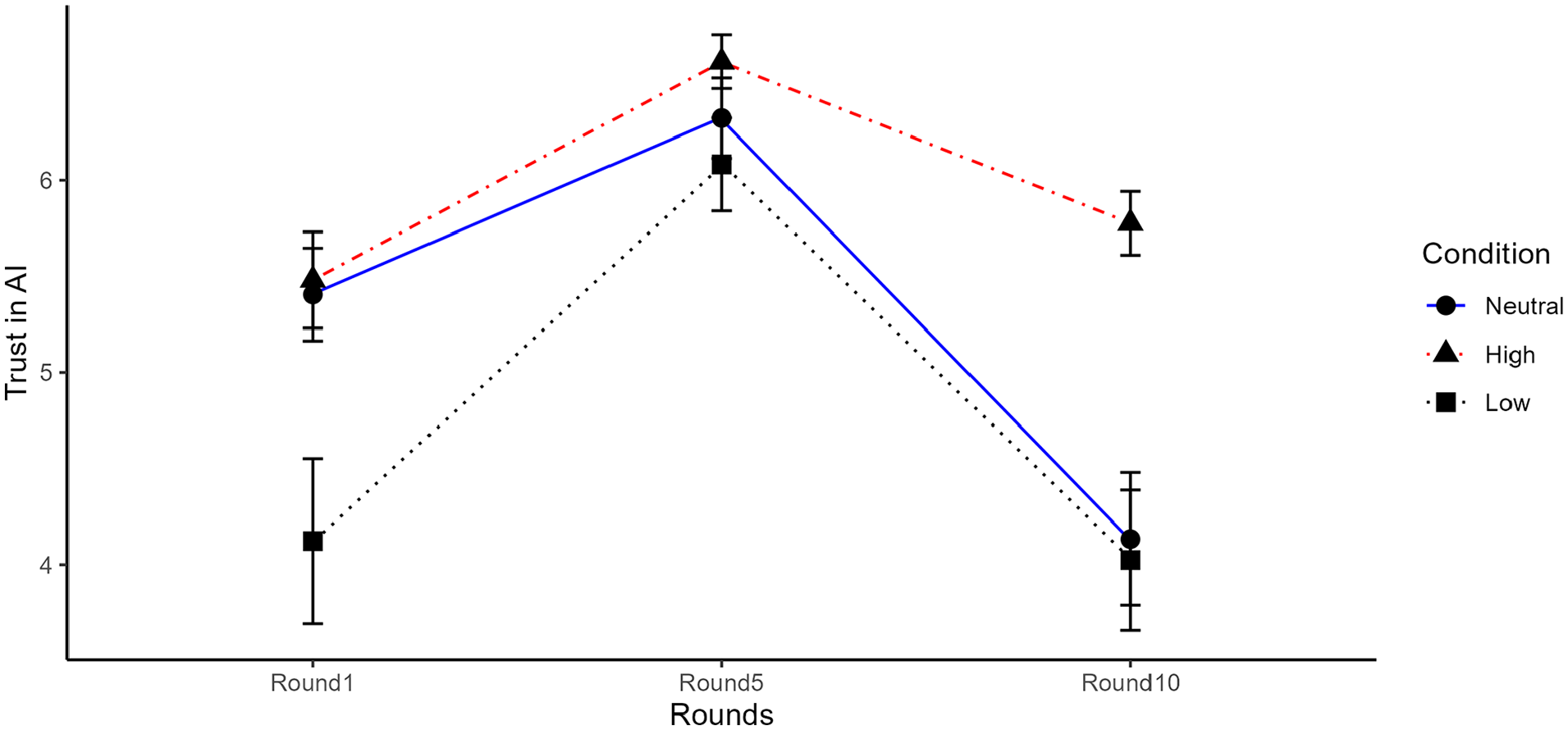
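The manipulation check relies on a one-way ANOVA, presumably comparing perceived confederate trust across the three confederate conditions; the full results are truncated in this version. As a reminder of what the test computes, the sketch below derives the F statistic and its degrees of freedom from per-condition ratings using only the Python standard library. The toy input in the test is illustrative, not study data, and the study itself used R; obtaining a p-value additionally requires the F distribution (for example via `scipy.stats.f_oneway` in Python or `aov` in R).

```python
from statistics import fmean


def one_way_anova(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA.

    groups: list of lists, one list of ratings per condition."""
    if len(groups) < 2 or any(len(g) == 0 for g in groups):
        raise ValueError("need at least two non-empty groups")
    all_values = [x for g in groups for x in g]
    k, n_total = len(groups), len(all_values)
    if n_total <= k:
        raise ValueError("need more observations than groups")
    grand_mean = fmean(all_values)
    # Between-group variation: how far each condition mean is from the grand mean.
    ss_between = sum(len(g) * (fmean(g) - grand_mean) ** 2 for g in groups)
    # Within-group variation: spread of ratings around their own condition mean.
    ss_within = sum((x - fmean(g)) ** 2 for g in groups for x in g)
    if ss_within == 0:
        raise ValueError("zero within-group variance; F is undefined")
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within
```

A large F indicates that the differences between condition means are large relative to the variability within conditions, which is what a successful trust manipulation should produce.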

Trust in AI Teammate

The main effect of

Trust in the AI teammate in each round across confederate trusting conditions.

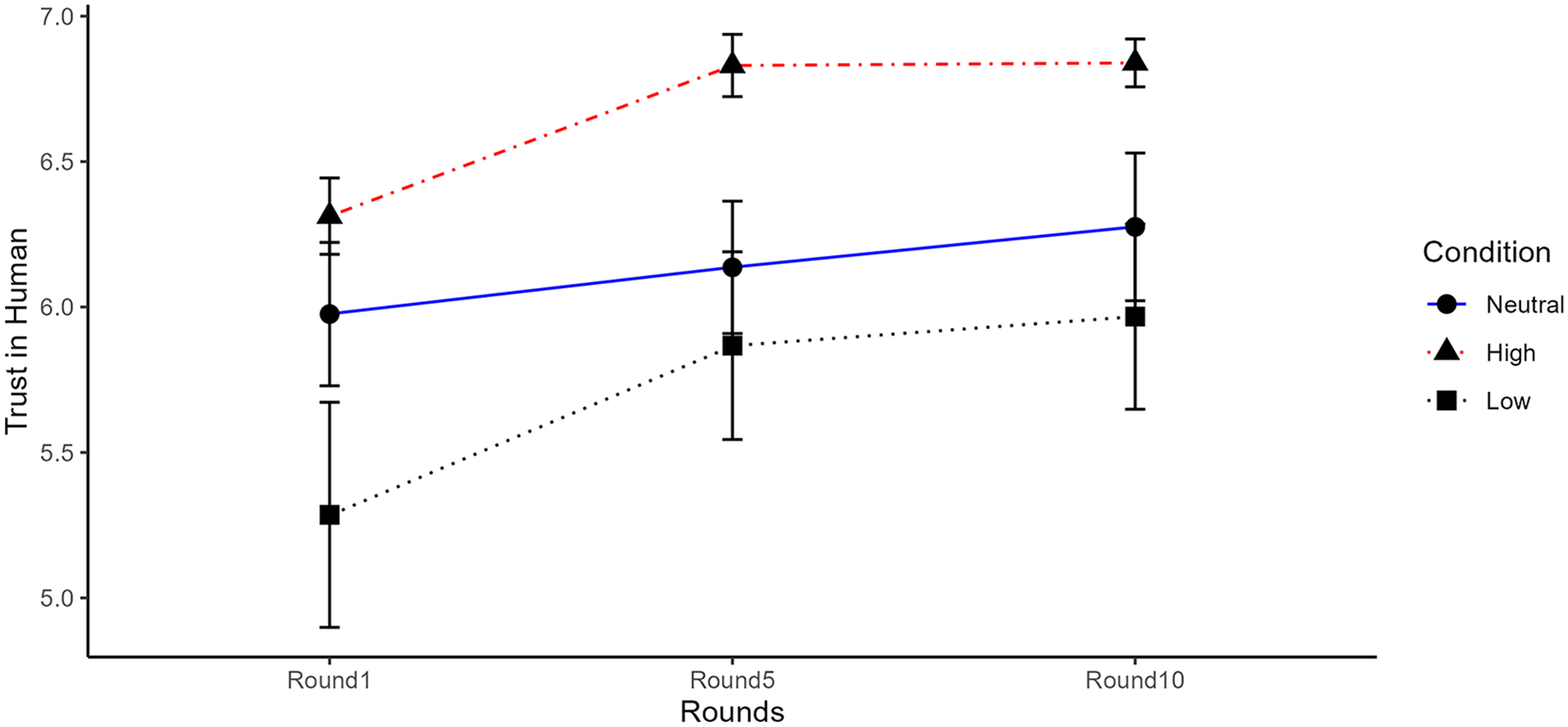

Trust in Human Teammate

The main effect of

Trust in the confederate in each round across confederate trusting conditions.

Game Performance

When fitting the model for game performance, we excluded

Discussion

In this study, we introduced and defined the concept of trust contagion in a human-human-AI team. We explored how one human teammate’s trust in the AI influences the trust and reliance behaviors of the other human teammate. Our results showed that trust is indeed contagious in human-AI teams. Specifically, when the human teammate expressed high trust in the AI teammate, participants also trusted the AI teammate more and performed better by relying more on it in the task. These results align with group emotional contagion effects, further supporting the view that trust is an affect-laden process. Our research also extends the understanding of trust from dyadic teams to multi-human teams, showing that trust is shaped not only by the characteristics of AI teammates but also by social influences from human teammates.

Our results did not support the second hypothesis that distrust would be more contagious than trust. This aligns with Barsade’s (2002) study, which showed that the contagion of negative mood was not more powerful in inducing emotion than that of positive mood. One possible explanation is the nature of the cooperative joint-decision space exploration game used in our experiment: because cooperation was critical for success, participants may have been more susceptible to positive affect and trust. Future studies could investigate the contagion of both trust and distrust in a more neutral task setting to further explore their effects.

Since the trust contagion effect occurs in human-AI teams, future studies could model these social influences using more nuanced physiological and behavioral data to identify specific verbal or nonverbal cues (Pantic et al., 2011). In particular, mapping emotional valence from teammates’ facial expressions, gaze behaviors, and body language may reveal social signals of trust contagion. Findings from such social signal processing and modeling can guide the design of countermeasures by AI agents to mitigate inappropriate contagion effects in the team, facilitating trust calibration.

Footnotes

Acknowledgements

We would like to thank Debbie Hsu, Yan Tian, Jingjing Huang, Hiya Sachdev, and Kai Xue for their assistance in the data collection and administration.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.